GitHub's New AI Training Policy: What It Means for Developers [2025]

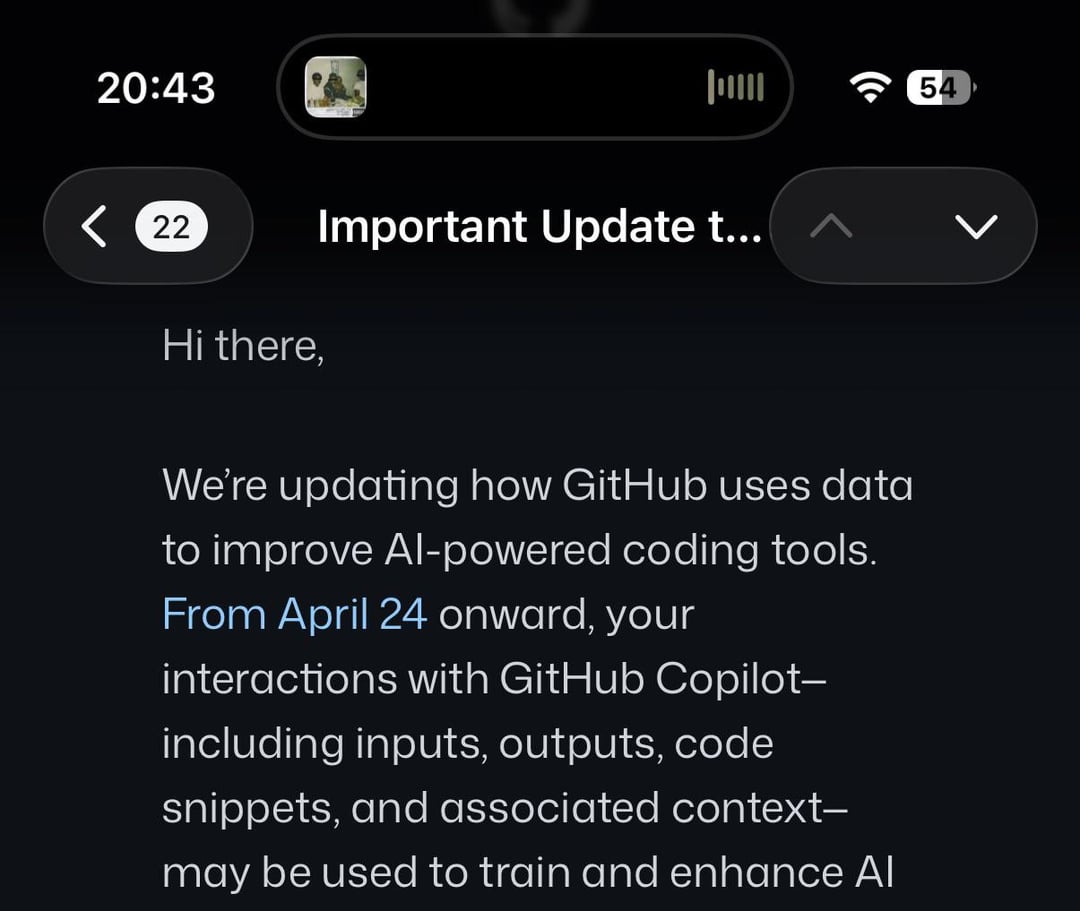

GitHub, the world's largest platform for open-source code collaboration, has recently announced a significant shift in its data policy. The company plans to utilize user data to train its AI models, a move that has sparked a mix of curiosity, concern, and outright skepticism among developers worldwide. While the platform promises enhanced AI capabilities, the trade-off between innovation and privacy is a complex issue that deserves a closer look.

TL;DR

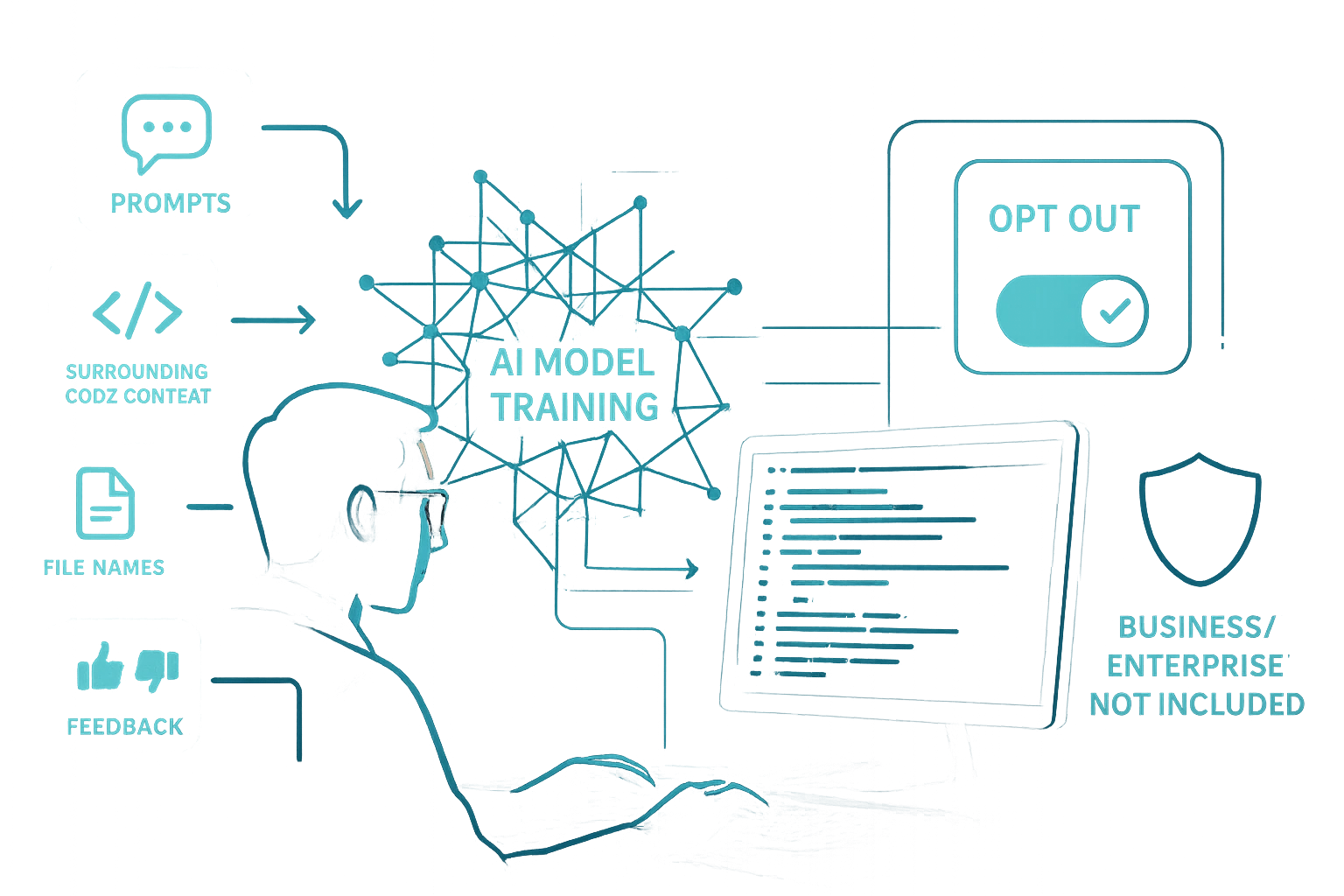

- GitHub's AI Training: User data will be used to enhance AI models, with an opt-out option available. This change is detailed in GitHub's updated data usage policy.

- Exclusions: Business, Enterprise, and educational accounts are exempt from automatic data usage, as noted in The Register's report.

- Privacy Concerns: Developers worry about the implications of data privacy and security, a concern highlighted in Newsweek's analysis.

- User Control: Opting out is possible, but not default, as discussed in GitHub's official announcement.

- Future Impact: This move could redefine AI development practices, as explored in The Atlantic's feature on AI trends.

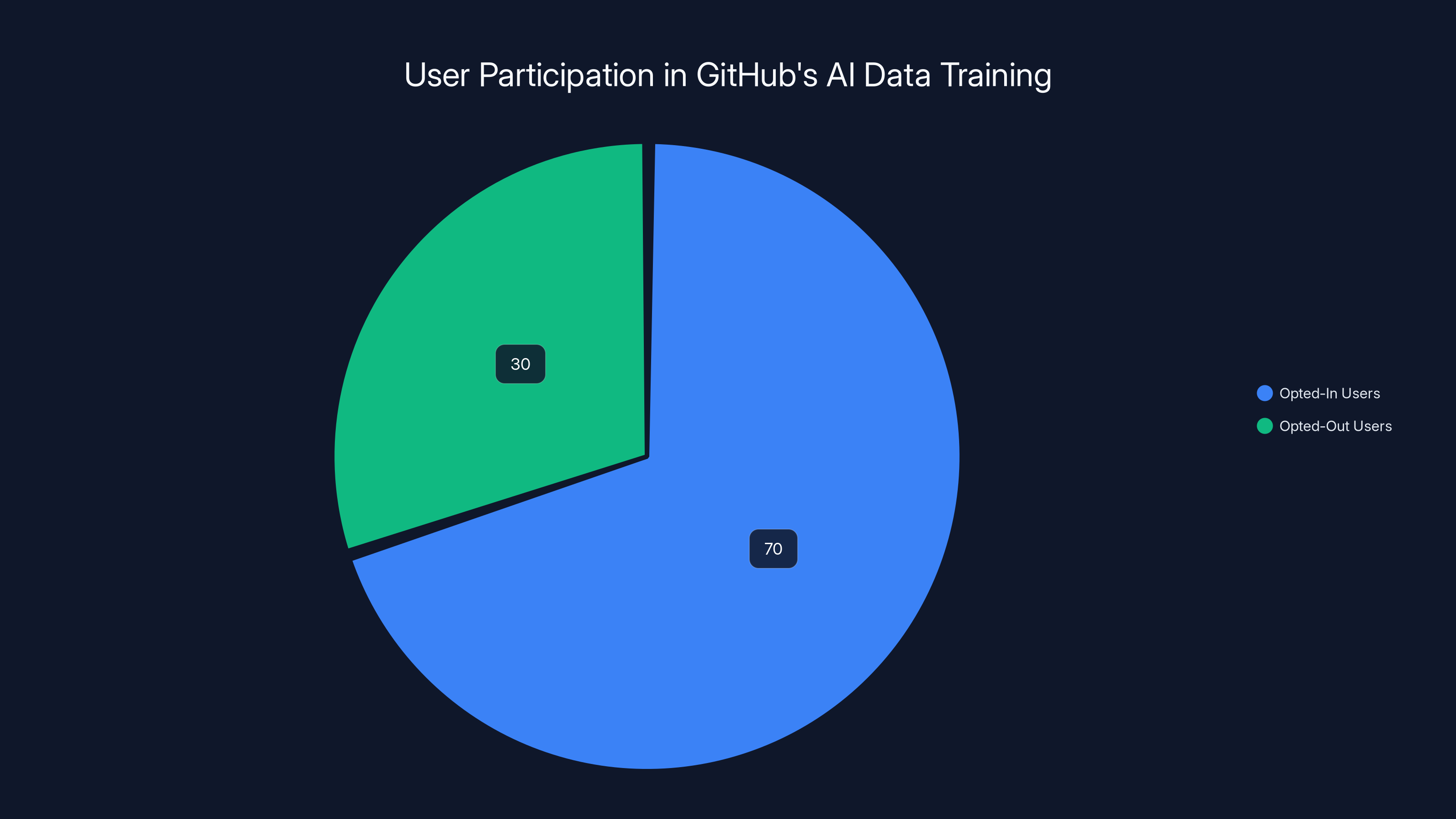

Estimated data suggests that most users remain opted in to GitHub's AI data training by default, with a smaller share actively choosing to opt out.

Understanding GitHub's Decision

GitHub's decision to use user data for AI training isn't entirely unexpected. As AI systems become more sophisticated, they require vast amounts of data to improve. GitHub's Chief Product Officer, Mario Rodriguez, emphasized the need for live, real-world data for effective AI model training. Opting users in by default has nonetheless raised eyebrows, especially given the sensitive nature of code repositories, as reported by GitHub's news insights.

Why User Data Matters

AI models thrive on data. The more diverse and extensive the dataset, the better the AI can learn and adapt. By leveraging user data, GitHub aims to enhance the capabilities of its AI tools, such as GitHub Copilot, which assists developers by suggesting code snippets and solutions based on context, as described in GitHub's AI and ML blog.

Key Benefits of Using Real-Time Data:

- Improved Accuracy: AI can provide more relevant suggestions by learning from actual user interactions.

- Contextual Understanding: Access to live data allows AI to understand and predict user needs more effectively.

- Innovation Acceleration: A more capable AI can lead to faster development cycles and innovative solutions, as noted by Oracle's insights on AI automation.

The Opt-Out Mechanism

While the default setting enrolls users in data collection, GitHub has provided an opt-out option, so users can choose not to participate in the training program. However, the onus is on users to actively make this choice, which raises questions about informed consent and user autonomy, as discussed in GitHub's policy update.

Steps to Opt Out:

- Navigate to your GitHub settings.

- Locate the 'Data Usage' section.

- Select 'Opt-Out' to prevent your data from being used for AI training.

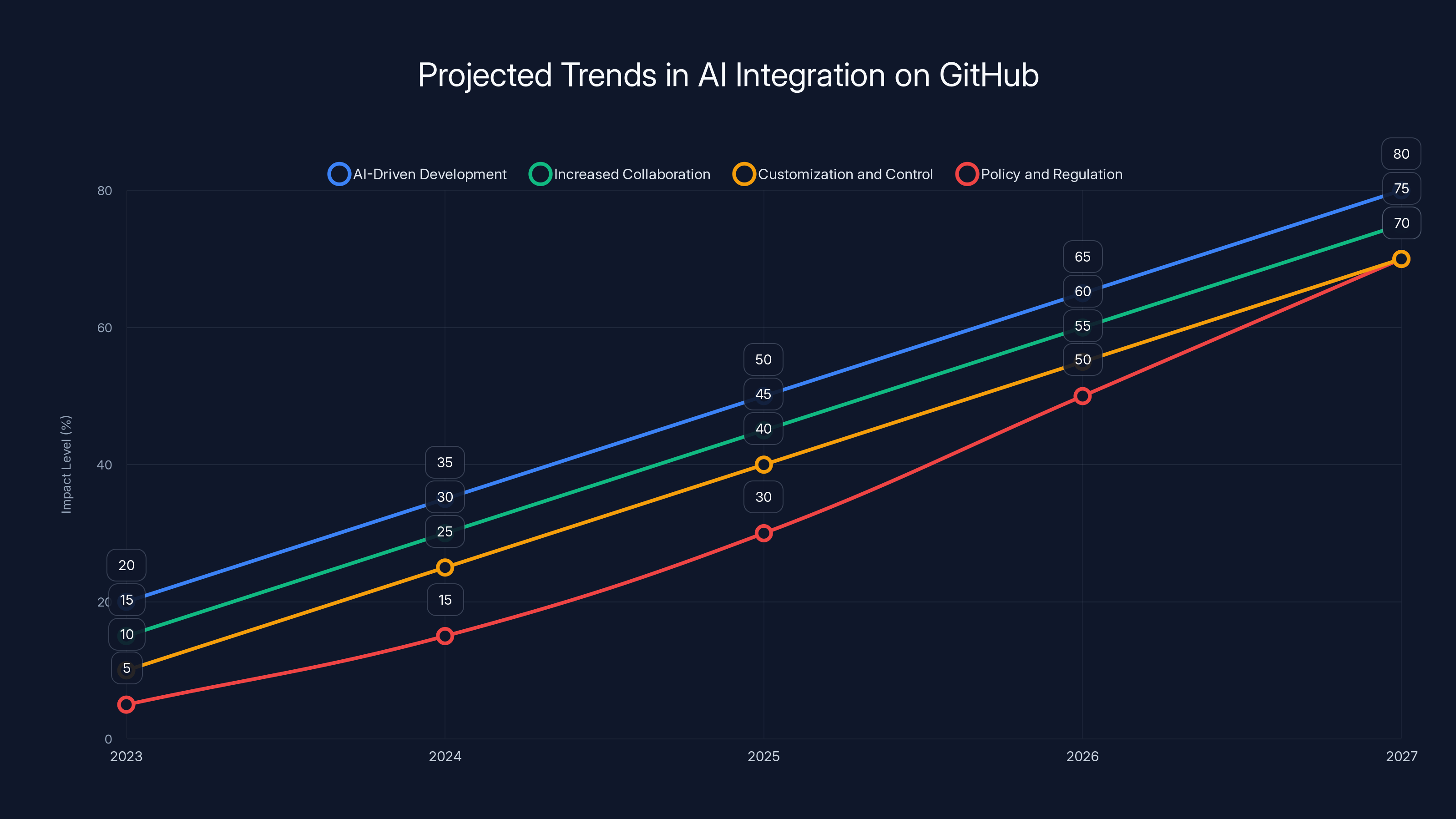

Estimated data shows a steady increase in AI-driven development, collaboration, customization, and regulatory impact on GitHub from 2023 to 2027.

Implications for Developers

The announcement has prompted a variety of reactions from the developer community. While some see the potential benefits of enhanced AI tools, others are concerned about privacy and data security, as highlighted in Maximus's insights on AI accuracy.

Privacy Concerns

The use of personal and project data for AI training brings significant privacy concerns. Developers often work with sensitive information, and the idea of this data being used outside the intended project scope is unsettling for many, as noted by Newsweek.

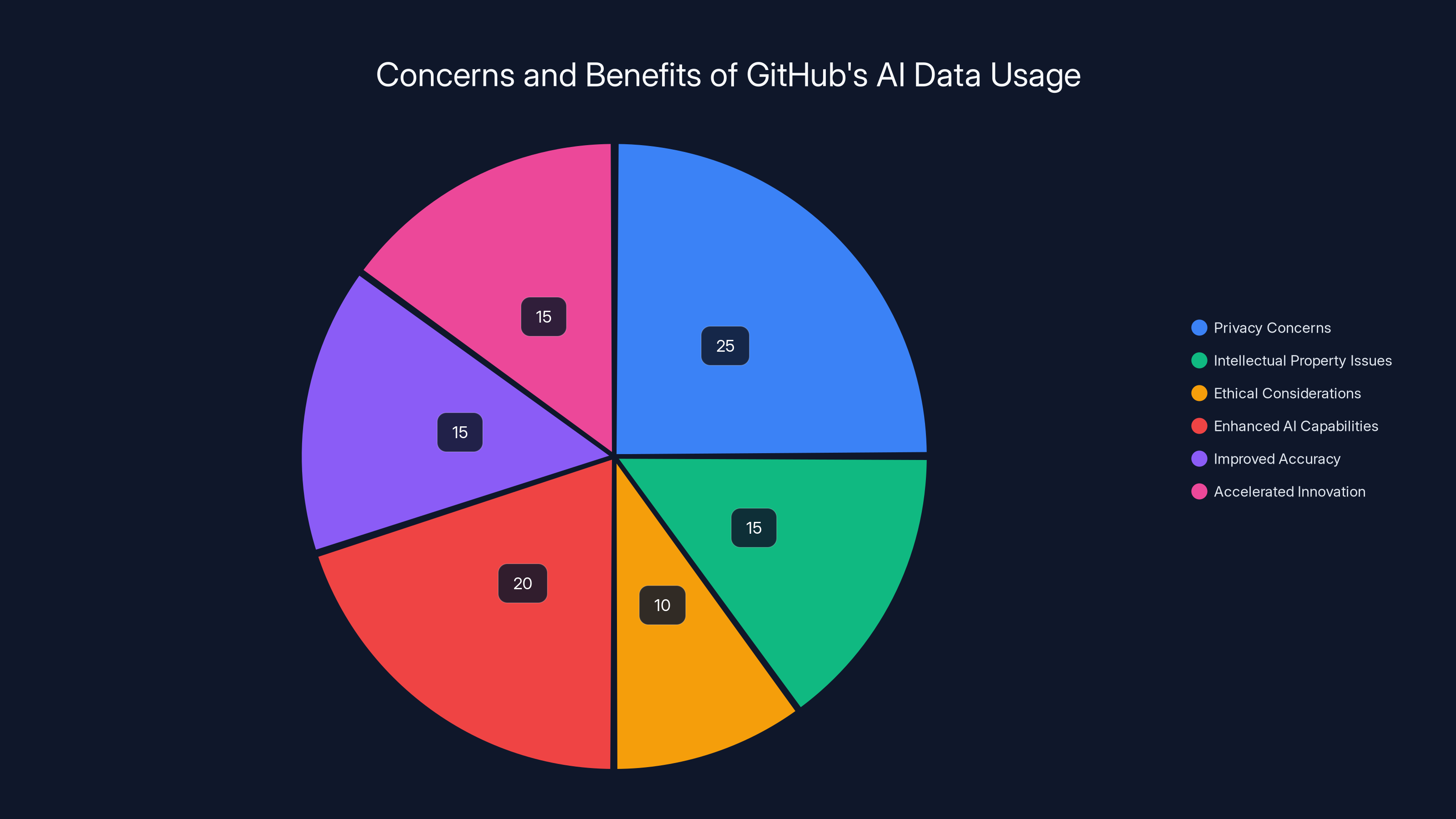

Common Privacy Concerns:

- Data Breach Risks: The more widely data is collected and processed, the more places it could be exposed.

- Intellectual Property: Developers are concerned about ownership of and control over their code.

- Ethical Considerations: Using user data without explicit consent raises ethical questions.

Potential Solutions

To mitigate these concerns, developers and organizations can implement a few best practices.

Best Practices for Data Privacy:

- Regular Audits: Conduct regular audits of your GitHub account to ensure no sensitive data is being inadvertently shared.

- Use Private Repositories: Whenever possible, use private repositories to limit data exposure.

- Stay Informed: Keep up with GitHub's policy updates and adjust your settings accordingly, as advised by GitHub's official blog.
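As one way to make the audit and private-repository advice above concrete, here is a minimal Python sketch that separates an account's repositories into public and private. It assumes the GitHub REST API endpoint `GET /user/repos` and a personal access token you supply; the helper names `fetch_repos` and `split_by_visibility` are hypothetical, and only the first page of results is fetched. It says nothing about which repositories GitHub does or does not use for training; it only shows what is publicly visible.

```python
import json
import urllib.request

# Assumption: the authenticated "list repositories" REST endpoint; first page only.
API_URL = "https://api.github.com/user/repos?per_page=100"


def fetch_repos(token):
    """Fetch the first page (up to 100) of repositories for the authenticated user."""
    request = urllib.request.Request(
        API_URL,
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
        },
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def split_by_visibility(repos):
    """Return (public, private) lists of repository full names, each sorted."""
    public = sorted(r["full_name"] for r in repos if not r["private"])
    private = sorted(r["full_name"] for r in repos if r["private"])
    return public, private
```

A periodic run such as `public, private = split_by_visibility(fetch_repos(token))` gives a quick list of repositories to review for anything that should not be public.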

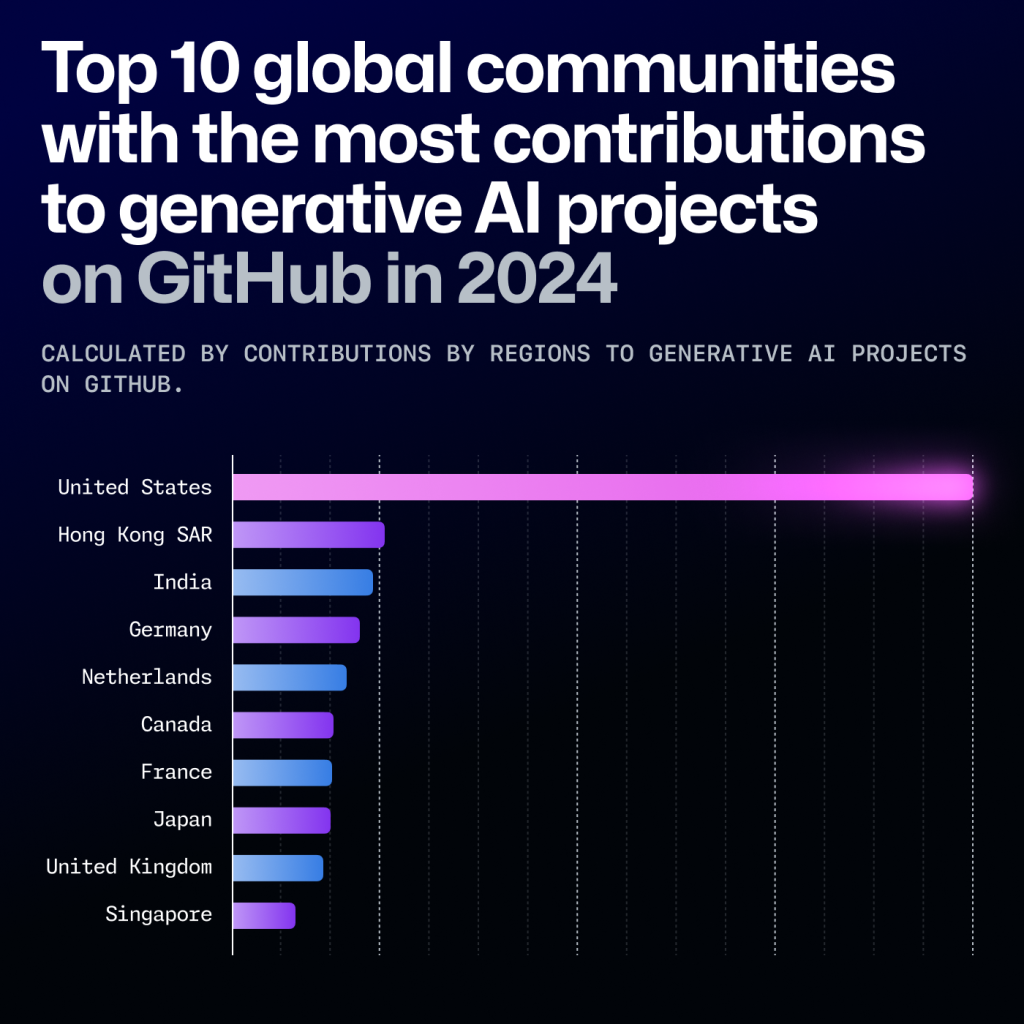

The Future of AI on GitHub

GitHub's decision marks a significant step in the evolution of AI tools in software development. As AI becomes more integrated into development workflows, understanding its potential and limitations will be crucial for developers, as explored in The Atlantic.

Future Trends

- AI-Driven Development: With AI tools becoming more sophisticated, they could take on more complex tasks, shifting the developer's role towards oversight and strategic decision-making.

- Increased Collaboration: AI tools can facilitate better collaboration by providing context and suggestions in real-time, bridging gaps between remote teams.

- Customization and Control: Developers will demand more control over how AI tools interact with their data, leading to customizable AI settings and preferences.

- Policy and Regulation: As AI usage grows, expect more stringent regulations around data privacy and AI ethics, as discussed in Newsweek's analysis.

Estimated data shows a balanced view of concerns and benefits regarding GitHub's use of user data for AI training, with privacy concerns and enhanced AI capabilities being the most prominent.

Common Pitfalls and Solutions

As developers navigate this new landscape, being aware of potential pitfalls is essential.

Pitfall 1: Ignoring Privacy Settings

Solution: Regularly review and adjust your GitHub privacy settings to ensure they align with your comfort level, as recommended in GitHub's policy update.

Pitfall 2: Over-Reliance on AI

Solution: Use AI as a tool to augment your skills, not replace them. Maintain a critical eye on AI-generated suggestions, as advised by Maximus.

Pitfall 3: Data Security Gaps

Solution: Implement strong security practices, such as encryption and access controls, to protect your repositories, as outlined in GitHub's official documentation.
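One practical complement to encryption and access controls is checking that credentials never reach a repository in the first place. The sketch below is a rough illustration, not a replacement for a dedicated secret scanner: the three patterns are illustrative assumptions (an AWS-style access key ID, a PEM private key header, and a quoted password-like assignment), and the function names are hypothetical.

```python
import re
from pathlib import Path

# Illustrative patterns only (assumptions); real scanners use far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "generic_assignment": re.compile(
        r"(?i)\b(?:password|secret|api_key|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text):
    """Return (line_number, pattern_name) pairs for lines that look like secrets."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


def scan_repo(root):
    """Scan every readable UTF-8 file under root, skipping the .git directory."""
    results = {}
    for path in Path(root).rglob("*"):
        if not path.is_file() or ".git" in path.parts:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        findings = scan_text(text)
        if findings:
            results[str(path)] = findings
    return results
```

Running `scan_repo(".")` before pushing returns a mapping from file path to suspicious lines; matches are heuristics and will include false positives.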

Best Practices for Using AI on GitHub

- Stay Educated: Continuously educate yourself about AI developments and implications.

- Engage with the Community: Participate in forums and discussions to share experiences and learn from others.

- Experiment and Iterate: Try different AI tools and settings to find what best suits your workflow, as suggested by GitHub's blog.

Conclusion

GitHub's move to utilize user data for AI training is a double-edged sword. While it promises enhanced AI capabilities and more efficient development processes, it also raises critical questions about privacy and ethical data use. By staying informed and proactive, developers can navigate these changes effectively, leveraging AI's benefits while minimizing its risks, as emphasized in GitHub's policy update.

FAQs

What is GitHub's new AI training policy?

GitHub's new policy involves using user data to train AI models, with an opt-out option available for users who do not wish to participate, as detailed in GitHub's announcement.

How can I opt out of GitHub's data usage for AI training?

You can opt out by going to your GitHub settings, finding the 'Data Usage' section, and selecting the opt-out option, as instructed in GitHub's policy update.

What are the privacy concerns with GitHub using user data?

Concerns include potential data breaches, intellectual property issues, and ethical considerations around data usage without explicit consent, as highlighted by Newsweek.

How can I protect my data on GitHub?

Use private repositories, conduct regular audits, and stay informed about GitHub's policy updates to protect your data, as advised by GitHub's blog.

What are the benefits of GitHub using user data for AI training?

Enhanced AI capabilities, improved accuracy, and accelerated innovation are some benefits of using real-time user data for AI training, as noted in Oracle's insights.

What future trends can we expect from AI in software development?

Expect AI-driven development, increased collaboration, and more stringent regulations around data privacy and AI ethics, as discussed in The Atlantic.

Key Takeaways

- GitHub will use user data to enhance AI models, with an opt-out option.

- Business, Enterprise, and educational accounts are not affected by default.

- Privacy concerns include data breaches and intellectual property issues.

- Developers can opt out by adjusting privacy settings on GitHub.

- AI tools are expected to become more sophisticated and collaborative.

- Future AI trends include increased customization and regulatory oversight.

- Best practices include using private repositories and conducting audits.

- Understanding AI's potential and limitations is crucial for developers.
