Understanding Anthropic’s Claude Code: Safer Auto Mode Revolution [2025]

Introduction

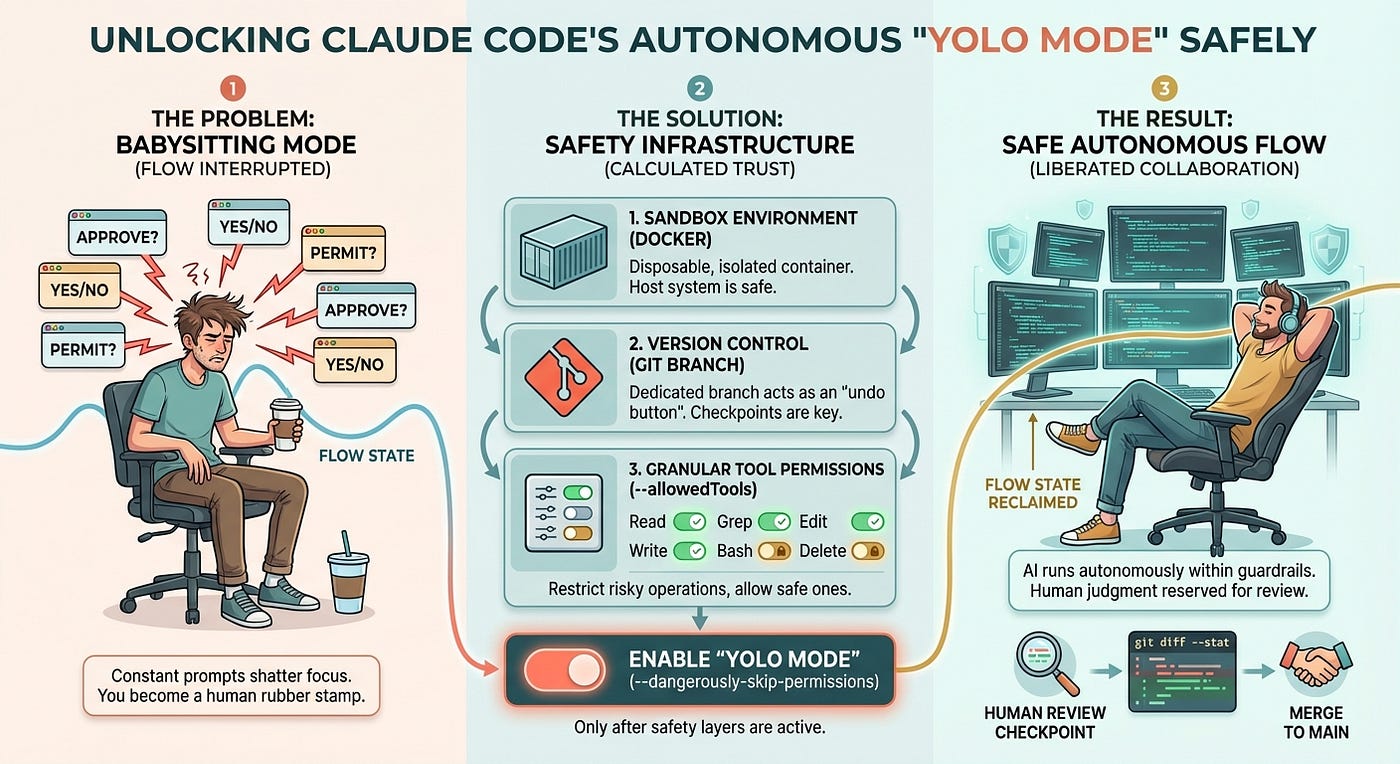

In AI systems, safety and functionality often pull in opposite directions. Anthropic, a company known for its focus on AI safety, has introduced a 'safer' auto mode in Claude Code. The feature aims to let the AI operate autonomously while upholding user safety and ethical standards. According to 9to5Mac, the mode gives developers a safer alternative by letting the AI work independently while adhering to strict permissions.


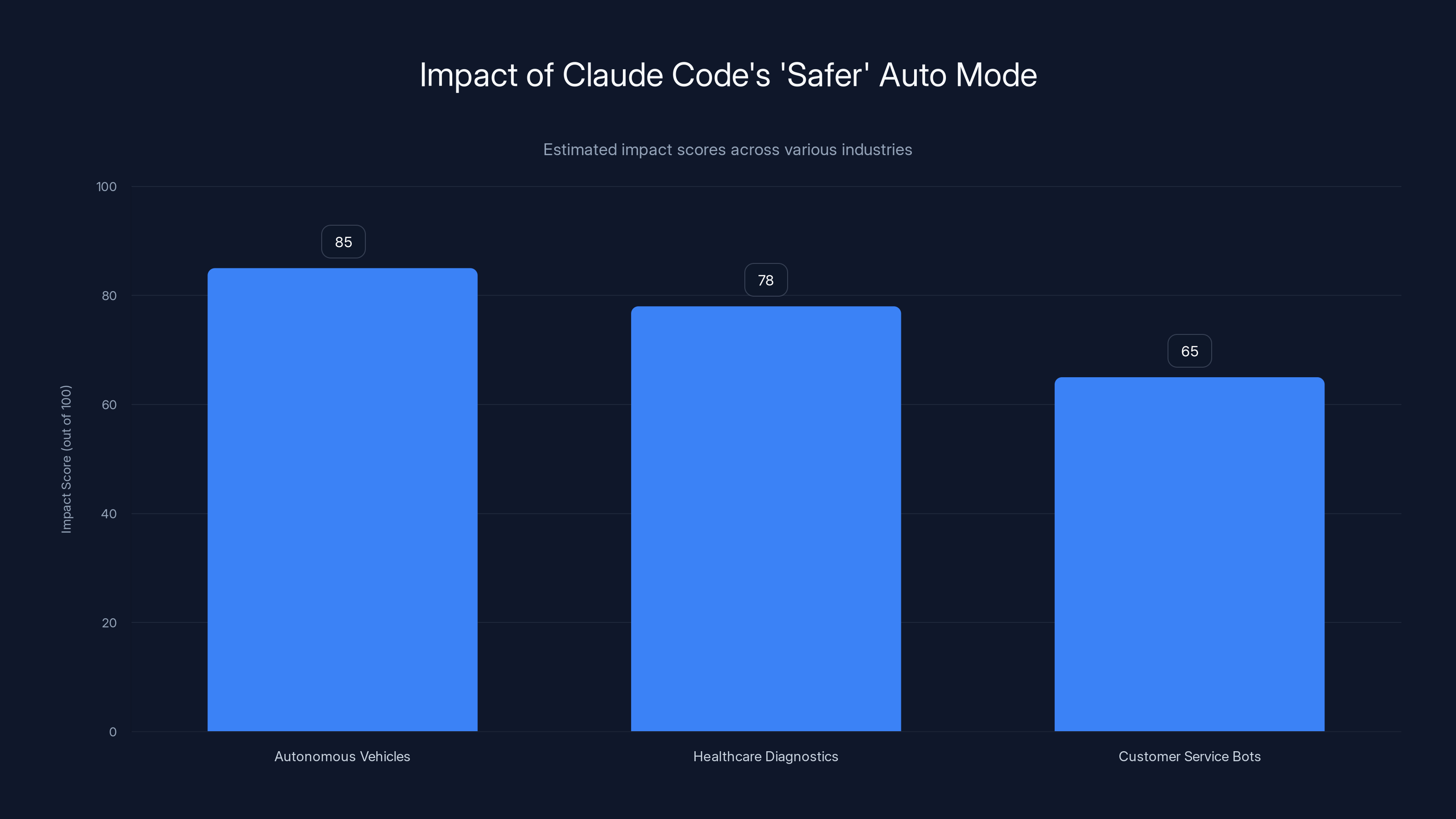

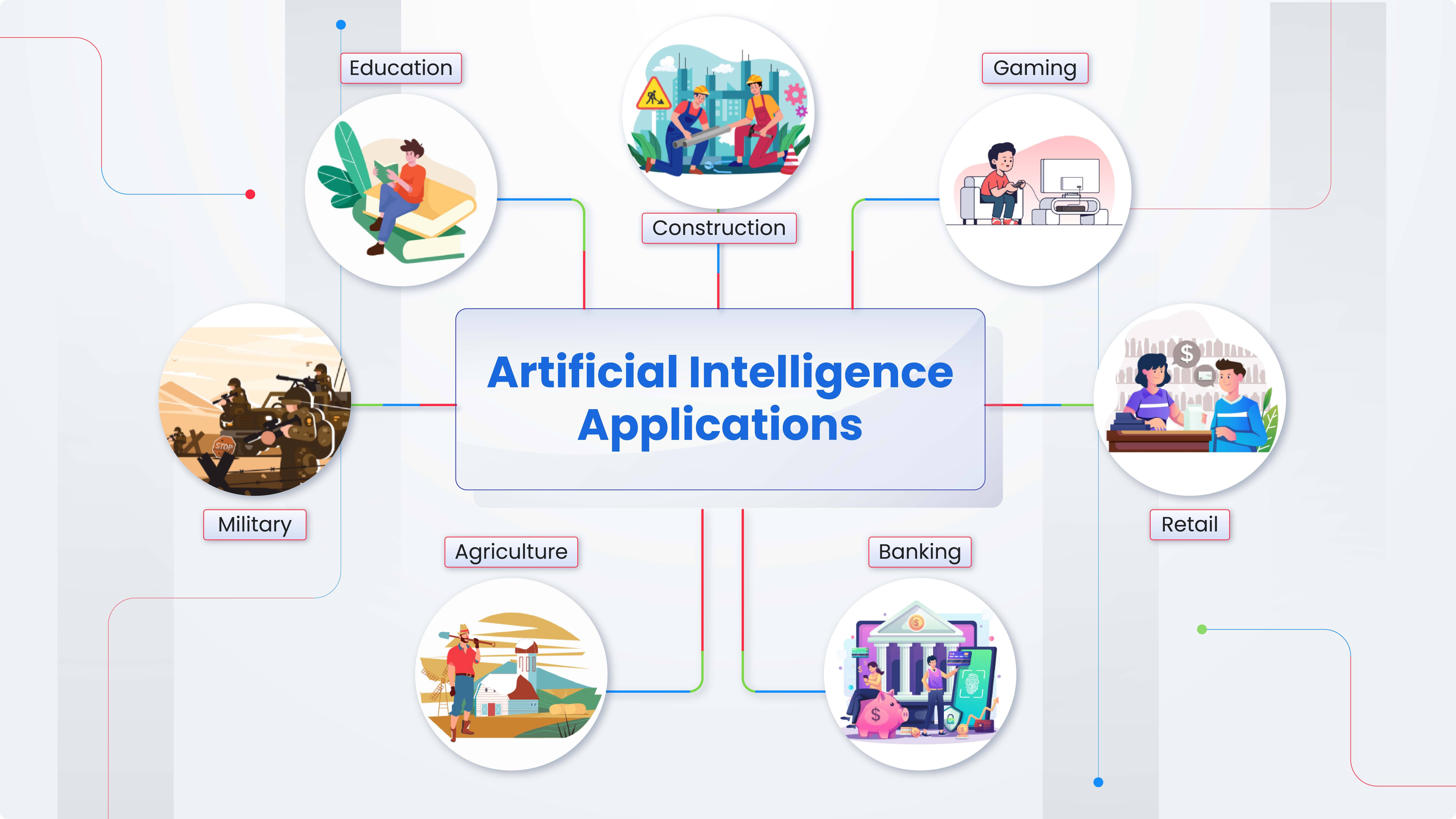

Estimated impact of Claude Code's 'safer' auto mode by application: highest for autonomous vehicles, followed by healthcare diagnostics and customer service bots (estimated data).

TL;DR

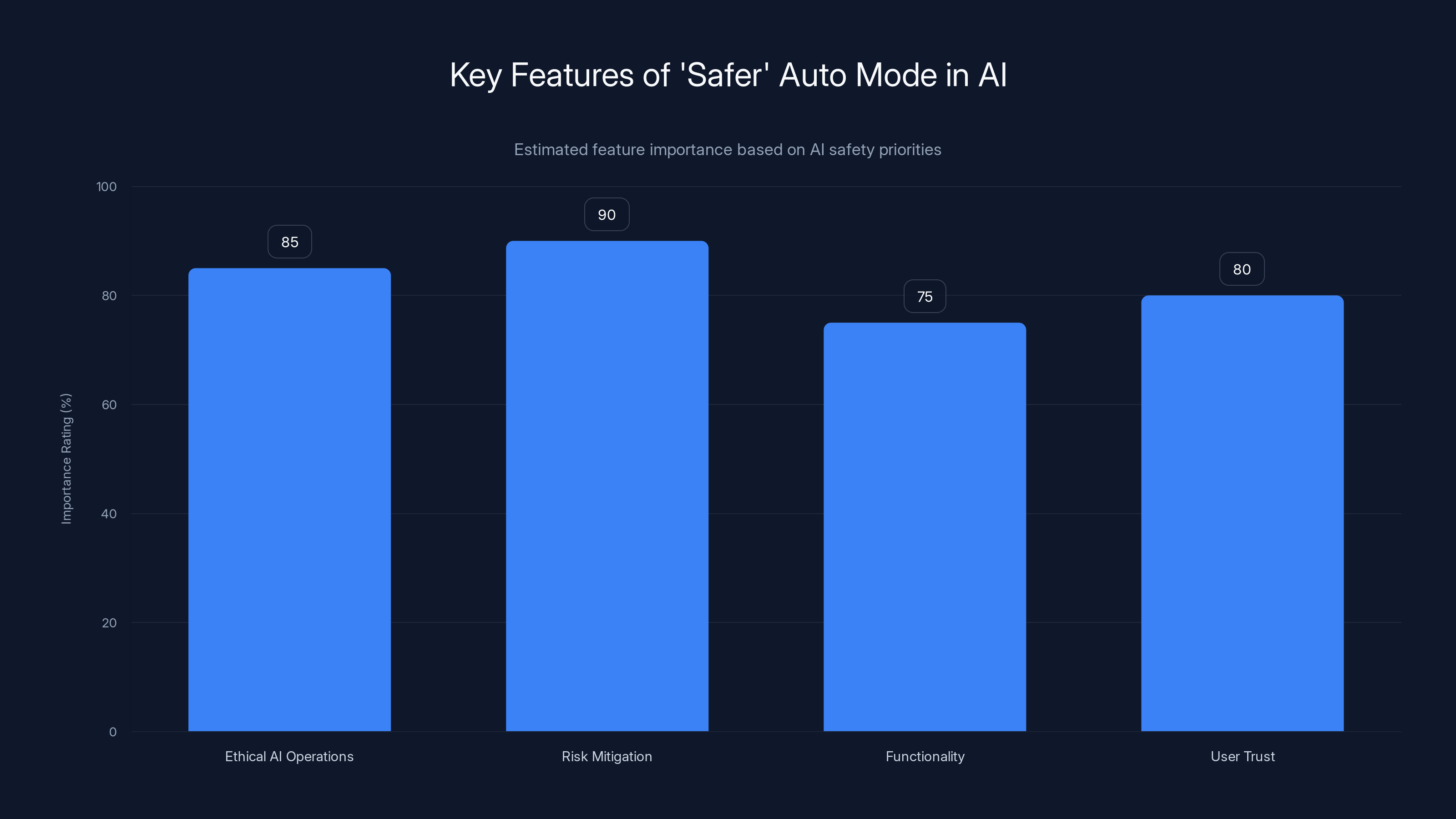

- Claude Code's 'safer' auto mode enhances AI safety by restricting autonomous operations to predefined ethical guidelines, a focus highlighted by The Hans India.

- New algorithms reduce the risk of AI behavior deviating from its intended function, as detailed in TechCrunch.

- Practical applications include autonomous vehicles, healthcare diagnostics, and customer service bots, as noted by SiliconANGLE.

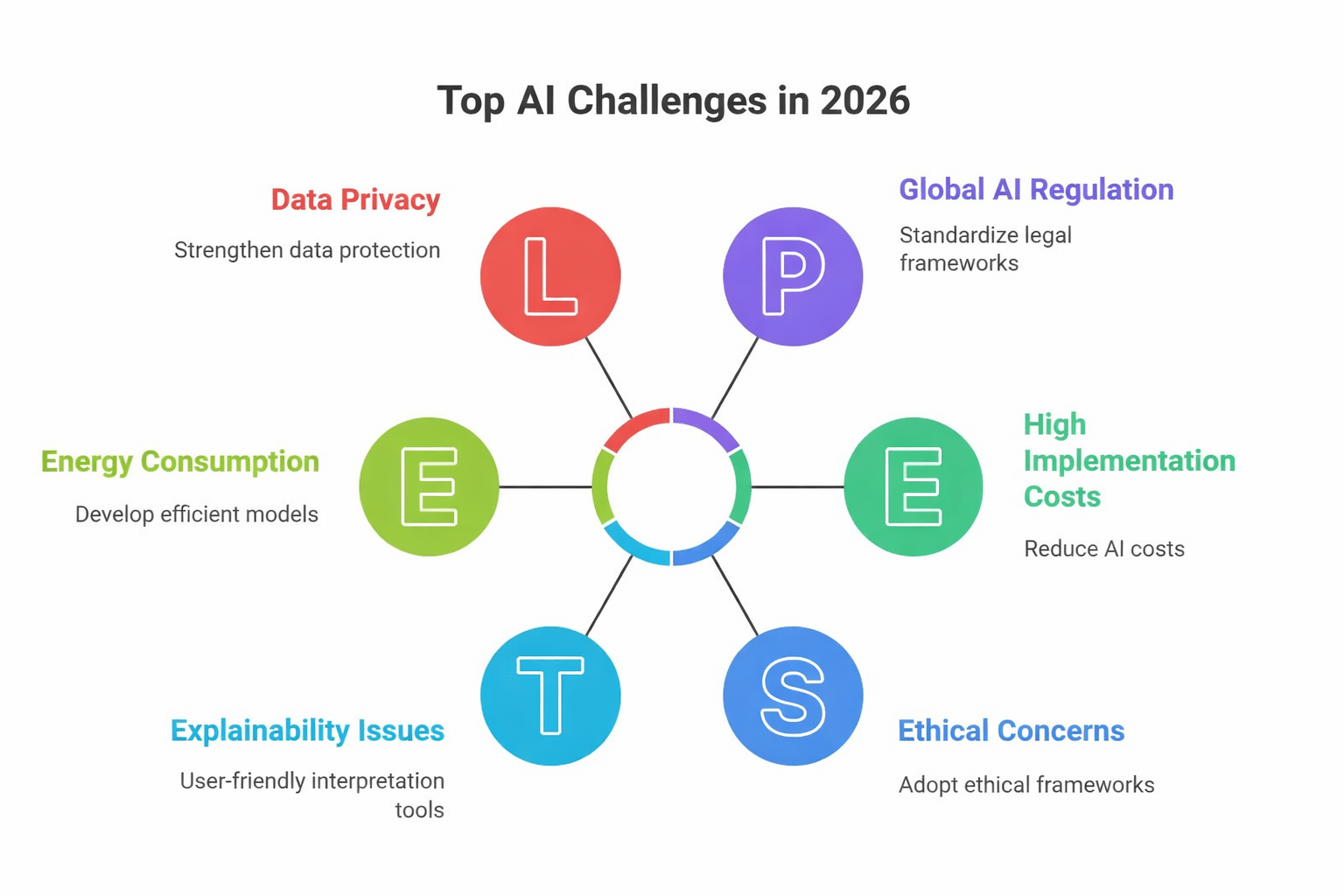

- Implementation challenges involve computational overhead and algorithmic bias, as explored in InfoWorld.

- Future prospects include broader adoption across industries with continued improvements in AI ethics, as discussed in Time.

Estimated importance ratings for the 'safer' auto mode place risk mitigation and ethical operations as the top priorities (estimated data).

The Genesis of 'Safer' Auto Mode

Anthropic's Claude Code has been at the forefront of AI development, prioritizing safety without compromising on functionality. The 'safer' auto mode reflects the company's ongoing effort to balance these two aspects. But what exactly does this mode entail, and why is it necessary?

The Need for Safer AI

As AI systems become more autonomous, the potential for misuse or unintended consequences grows, and AI safety protocols are drawing closer scrutiny. The 'safer' auto mode is designed to address these concerns by building safety checks and ethical guidelines directly into the AI's operational framework, as explained by Cornerstone OnDemand.

- Ethical AI Operations: Ensuring AI actions align with human values and societal norms.

- Risk Mitigation: Reducing the likelihood of AI systems causing harm, either through malfunction or misuse.

How Claude Code's 'Safer' Auto Mode Works

At its core, Claude Code's 'safer' auto mode combines constraint-based models, continuous monitoring, and feedback loops to keep AI systems operating within safe parameters, as described in Towards Data Science.

Algorithmic Framework

The framework is built on several key components:

- Constraint-Based Models: Define the permissible actions an AI can take, ensuring operations remain within safe boundaries.

- Real-Time Monitoring: Continuously assesses AI behavior against predefined safety criteria.

- Feedback Loops: Allow the system to learn from past actions and adapt to prevent future errors.

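```python
# Pseudocode for safety monitoring (illustrative sketch only)
import time


class SafeMode:
    def __init__(self, ai_system, poll_interval=1.0):
        self.ai_system = ai_system
        self.poll_interval = poll_interval  # seconds between safety checks
        self.constraints = self.load_constraints()
        self.running = True

    def load_constraints(self):
        # Load predefined safety constraints (placeholder: none defined).
        # Assumption: each constraint is a callable taking the AI system
        # and returning True when the system is in a safe state.
        return []

    def monitor(self):
        # Poll until a safety check fails, then shut down and stop looping
        while self.running:
            if not self.check_safety():
                self.shutdown()
                break
            time.sleep(self.poll_interval)

    def check_safety(self):
        # Check the current state against every constraint;
        # with no constraints loaded this always returns True
        return all(check(self.ai_system) for check in self.constraints)

    def shutdown(self):
        # Safely shut down the AI system (placeholder: only clears the flag)
        self.running = False
```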
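The pseudocode leaves the constraints themselves as a placeholder. As a minimal sketch of how a constraint-based model with a simple feedback log might look, assume each proposed action is described by a name and a target path. The `Action`, `ConstraintModel`, `only_allowed_names`, and `target_under` names are hypothetical and chosen for illustration; they are not part of Claude Code.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set


# Hypothetical description of an action the AI wants to take.
@dataclass(frozen=True)
class Action:
    name: str    # e.g. "read", "write", "delete"
    target: str  # e.g. a file path


# A constraint is any function that returns True when an action is permitted.
Constraint = Callable[[Action], bool]


def only_allowed_names(allowed: Set[str]) -> Constraint:
    # Permit only actions whose name is on an explicit allowlist.
    return lambda action: action.name in allowed


def target_under(prefix: str) -> Constraint:
    # Permit only targets that start with the given prefix.
    # A plain prefix check is naive; it is enough for illustration.
    return lambda action: action.target.startswith(prefix)


@dataclass
class ConstraintModel:
    constraints: List[Constraint]
    denied_log: List[Action] = field(default_factory=list)

    def permits(self, action: Action) -> bool:
        # An action is permitted only if every constraint accepts it.
        ok = all(constraint(action) for constraint in self.constraints)
        if not ok:
            # Feedback: keep denied actions so they can be reviewed later.
            self.denied_log.append(action)
        return ok


model = ConstraintModel([
    only_allowed_names({"read", "write"}),
    target_under("/workspace/"),
])
print(model.permits(Action("read", "/workspace/app.py")))    # True
print(model.permits(Action("delete", "/workspace/app.py")))  # False
```

Checking each action before it runs, rather than polling system state, keeps the decision next to the action it governs, and the denied-action log gives a feedback loop something concrete to learn from.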

Practical Applications

The implications of this technology are vast, affecting numerous industries:

- Autonomous Vehicles: Ensures that self-driving cars adhere to safety standards, reducing accidents and fatalities, as noted by Hacker Noon.

- Healthcare Diagnostics: Enhances the reliability of AI in diagnosing diseases, minimizing false positives and negatives, as discussed in Wiz.

- Customer Service Bots: Maintains respectful and ethical interactions with users, improving the user experience.

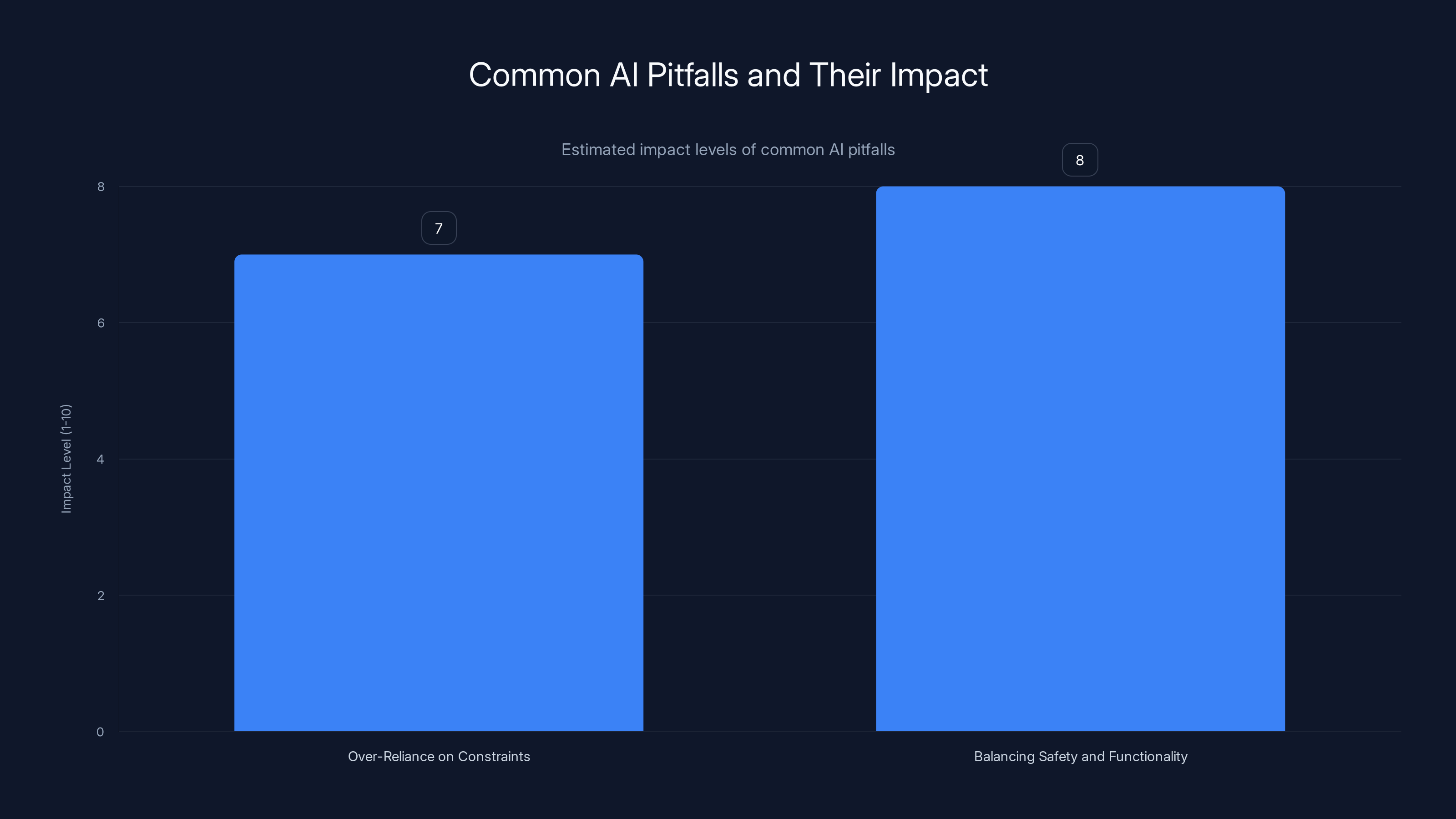

Balancing safety and functionality has a slightly higher estimated impact level than over-reliance on constraints (estimated data).

Implementation Challenges

Despite its potential, implementing 'safer' auto mode is not without challenges. These include:

- Computational Overhead: The continuous monitoring and evaluation of AI actions require significant computational resources, as highlighted by Find Articles.

- Algorithmic Bias: Ensuring that the safety constraints do not inadvertently introduce bias into AI operations, as explored in InfoWorld.
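The overhead claim can be checked empirically rather than assumed. The following is a rough sketch, assuming a trivial stand-in workload and a trivial stand-in safety check; `perform_action`, `is_safe`, `guarded_action`, and `measure` are hypothetical names, and real checks would cost far more than this one.

```python
import timeit


def perform_action(x: int) -> int:
    # Stand-in for the work the AI system would do.
    return x * 2


def is_safe(x: int) -> bool:
    # Stand-in safety check: hypothetical rule that inputs must be non-negative.
    return x >= 0


def guarded_action(x: int) -> int:
    # Run the safety check before every action and block unsafe ones.
    if not is_safe(x):
        raise PermissionError(f"action blocked for input {x}")
    return perform_action(x)


def measure(runs: int = 100_000) -> dict:
    # Time the same call with and without the safety check.
    bare = timeit.timeit(lambda: perform_action(21), number=runs)
    guarded = timeit.timeit(lambda: guarded_action(21), number=runs)
    return {"bare_s": bare, "guarded_s": guarded}


timings = measure()
print(f"bare: {timings['bare_s']:.4f}s, guarded: {timings['guarded_s']:.4f}s")
```

The absolute numbers depend on the machine and the check; the point is to compare the two timings for a realistic workload before deciding how often to run the checks.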

Common Pitfalls and Solutions

Over-Reliance on Predefined Constraints

A common pitfall is relying too heavily on predefined constraints, which can limit AI adaptability.

- Solution: Implement adaptive learning algorithms that allow AI to update its constraints based on new data and experiences, as suggested by 9to5Mac.
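As a minimal sketch of a constraint that adapts to experience, consider a numeric limit that tightens sharply after an incident and relaxes slowly after clean runs. The `AdaptiveLimit` class, its default values, and the halve-on-incident rule are assumptions made for illustration, not a description of how Claude Code updates its constraints.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveLimit:
    limit: float = 10.0      # current ceiling on some requested quantity
    min_limit: float = 1.0   # never tighten below this
    max_limit: float = 50.0  # never relax above this

    def allows(self, requested: float) -> bool:
        # A request is allowed if it does not exceed the current limit.
        return requested <= self.limit

    def update(self, incident: bool) -> None:
        # Halve the limit after an incident; raise it by one after a clean run.
        if incident:
            self.limit = max(self.min_limit, self.limit / 2)
        else:
            self.limit = min(self.max_limit, self.limit + 1)


limit = AdaptiveLimit()
for incident in [False, False, True, False]:
    limit.update(incident)
print(limit.limit)  # 7.0
```

The hard floor and ceiling keep the adaptive part bounded, so learning from new data cannot relax the constraint beyond what was predefined.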

Balancing Safety and Functionality

Another challenge is maintaining a balance between AI safety and functionality, as overly restrictive constraints can hinder AI performance.

- Solution: Utilize machine learning to identify optimal safety thresholds that maximize both safety and functionality, as detailed in TechCrunch.
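To make the trade-off concrete, here is a small sketch that picks a blocking threshold from labeled examples. It uses a plain sweep over candidate thresholds as a stand-in for a learned model, and the risk scores and labels in `SAMPLES` are made up for illustration; `evaluate` and `best_threshold` are hypothetical helpers.

```python
from typing import List, Tuple

# (risk_score, is_harmful) pairs; made-up data for illustration only.
SAMPLES: List[Tuple[float, bool]] = [
    (0.1, False), (0.2, False), (0.35, False), (0.4, True),
    (0.6, False), (0.7, True), (0.8, True), (0.95, True),
]


def evaluate(threshold: float, samples: List[Tuple[float, bool]]) -> Tuple[float, float]:
    # Policy under test: block any action whose risk score is >= threshold.
    harmful = [score for score, is_harmful in samples if is_harmful]
    benign = [score for score, is_harmful in samples if not is_harmful]
    # Safety: share of harmful actions that get blocked.
    safety = sum(score >= threshold for score in harmful) / len(harmful)
    # Functionality: share of benign actions that are still allowed.
    functionality = sum(score < threshold for score in benign) / len(benign)
    return safety, functionality


def best_threshold(samples: List[Tuple[float, bool]]) -> float:
    # Try every observed score as a threshold and keep the one that
    # maximizes the worse of the two rates, so neither is sacrificed.
    candidates = sorted({score for score, _ in samples})
    return max(candidates, key=lambda t: min(evaluate(t, samples)))


threshold = best_threshold(SAMPLES)
print(threshold, evaluate(threshold, SAMPLES))
```

Maximizing the minimum of the two rates is one possible objective; a weighted sum would favor whichever side the weights emphasize.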

Future Trends and Recommendations

As AI technology evolves, so too will the strategies for ensuring its safety. Here are some trends and recommendations for the future:

Increased Collaboration

Collaboration between AI developers, ethicists, and policymakers will be crucial in developing standards that ensure AI safety across industries, as emphasized by Cornerstone OnDemand.

Continuous Improvement

AI safety protocols should not be static. Continuous evaluation and improvement of safety measures are necessary to keep pace with technological advancements, as discussed in Time.

Wider Adoption Across Industries

As confidence in AI safety grows, we can expect to see broader adoption of AI technologies across various sectors, from finance to healthcare to transportation, as noted by SiliconANGLE.

Conclusion

The 'safer' auto mode in Anthropic's Claude Code represents a significant step forward in AI safety. By prioritizing ethical guidelines and implementing safety checks, this technology aims to make AI systems more reliable and trustworthy. As the industry continues to evolve, the lessons learned from this feature are likely to shape future AI development, as highlighted by 9to5Mac.

FAQ

What is Claude Code's 'safer' auto mode?

Claude Code's 'safer' auto mode is an enhancement that incorporates safety checks and ethical guidelines directly into AI systems, ensuring they operate within predefined safe parameters, as explained by The Hans India.

How does the 'safer' auto mode work?

The mode uses constraint-based models, continuous monitoring, and feedback loops to maintain AI operations within safe boundaries and adapt to prevent errors, as detailed in Towards Data Science.

What industries benefit from this technology?

Industries such as autonomous vehicles, healthcare diagnostics, and customer service will see significant improvements in safety and reliability, as noted by Hacker Noon.

What are the common challenges with implementing this mode?

Challenges include computational overhead and the risk of algorithmic bias, which can impact AI performance and fairness, as explored in InfoWorld.

How can AI safety protocols be improved?

Continuous collaboration between developers, ethicists, and policymakers, along with adaptive learning algorithms, can enhance AI safety measures, as emphasized by Cornerstone OnDemand.

What is the future of AI safety?

AI safety will continue to evolve with technology, requiring ongoing evaluation and improvement of safety protocols and wider industry adoption, as discussed in Time.

Key Takeaways

- Claude Code's 'safer' auto mode enhances AI safety with ethical guidelines, as highlighted by 9to5Mac.

- Advanced algorithms minimize AI behavior risks and ensure user trust, as detailed in TechCrunch.

- Practical applications span autonomous vehicles, healthcare, and customer service, as noted by SiliconANGLE.

- Key challenges include computational overhead and algorithmic bias, as explored in InfoWorld.

- Future trends point to broader AI adoption with improved safety measures, as discussed in Time.

