How Google Uses AI to Fight Malware on Play Store in 2025

Every year, millions of apps and app updates get submitted to Google Play. Most are legitimate. Some aren't. And the ones that aren't (the ones designed to steal passwords, drain bank accounts, or install ransomware) represent an existential threat to Android's credibility.

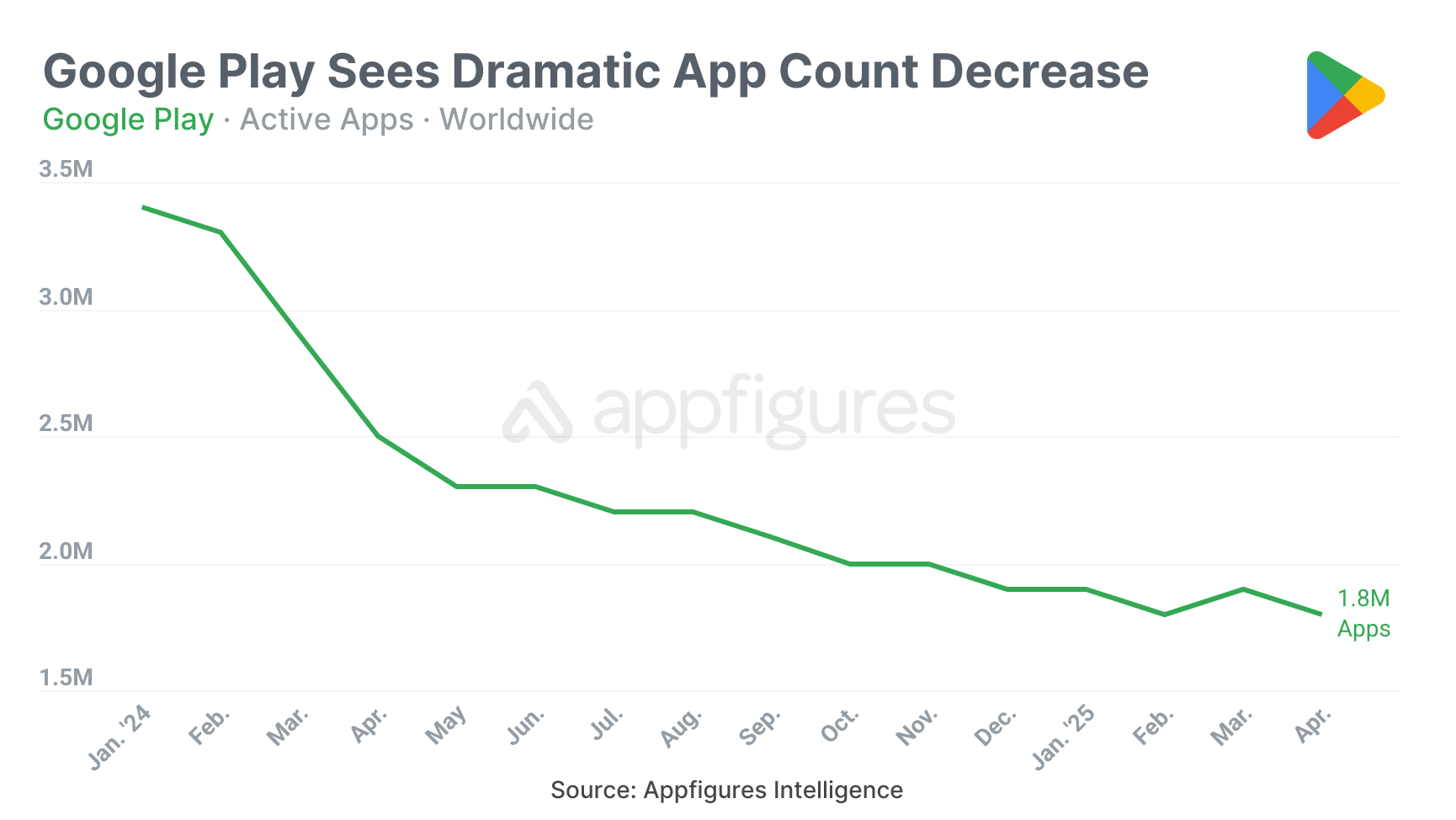

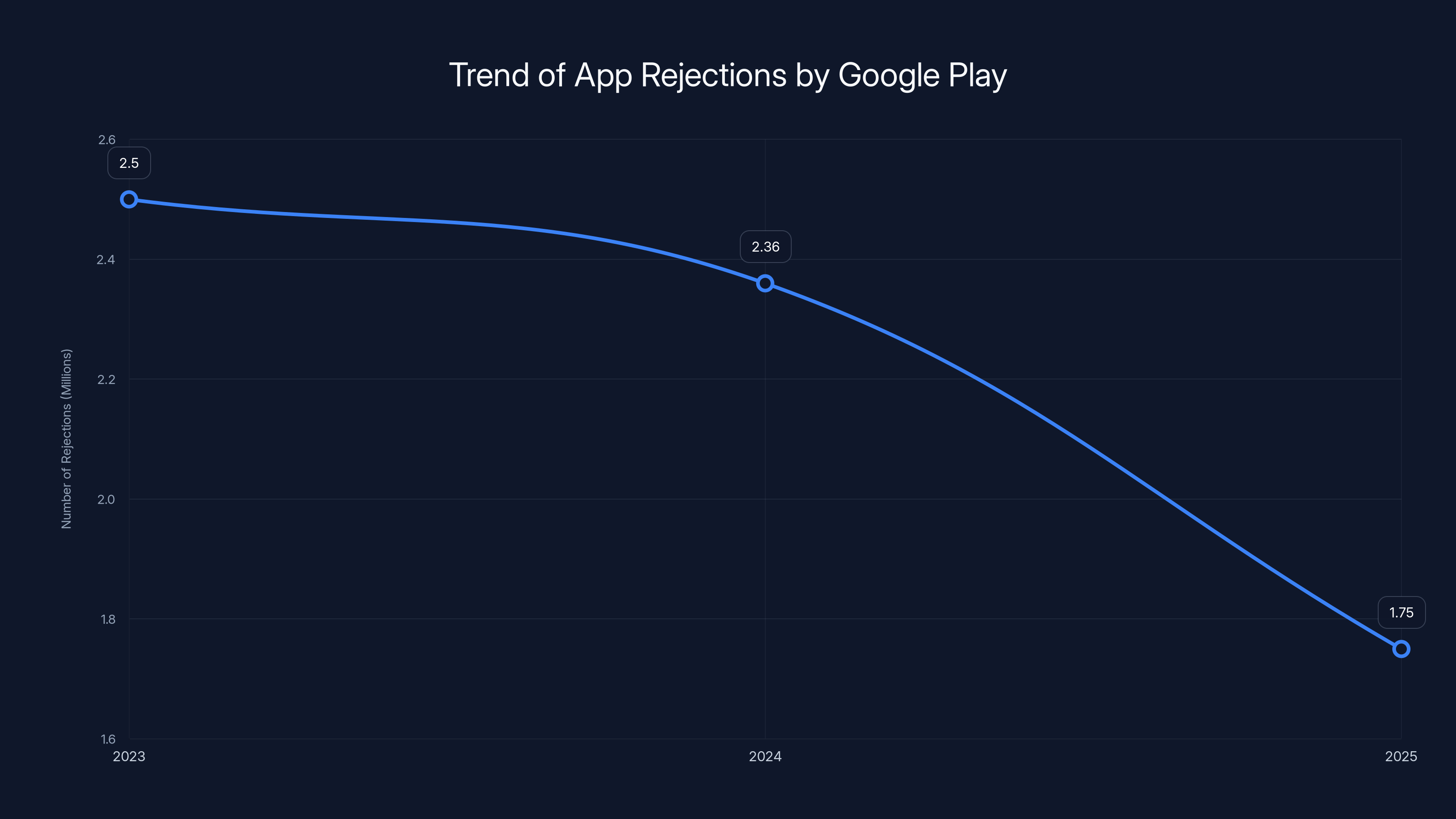

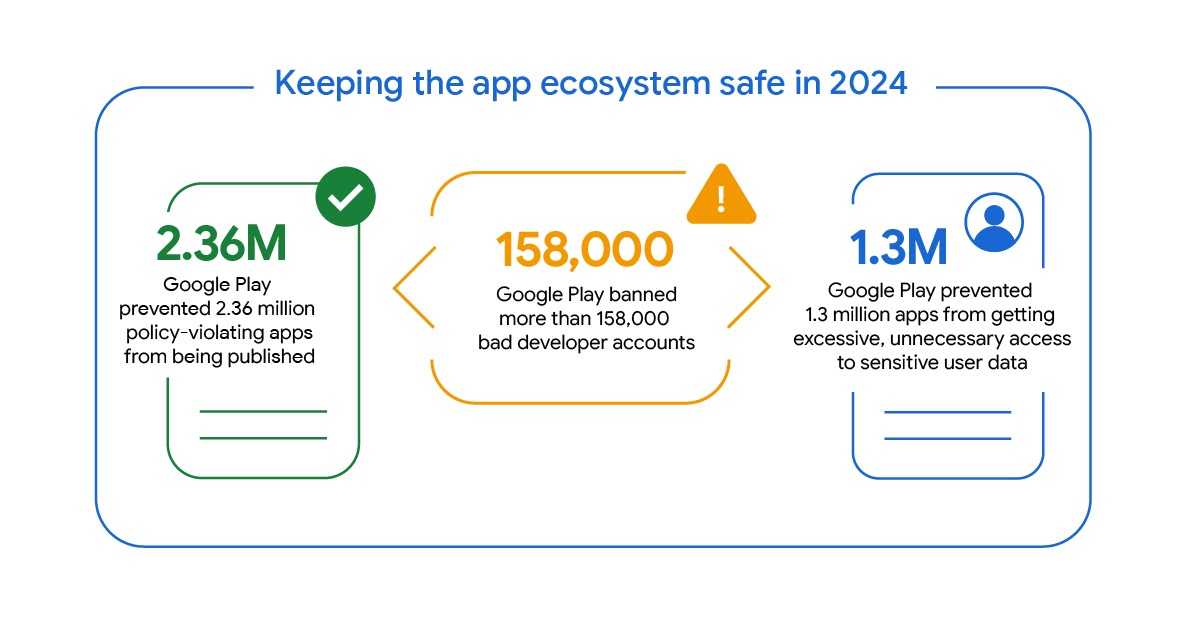

Google just released its 2025 Android ecosystem safety report, and the numbers tell a fascinating story. The company blocked 1.75 million policy-violating apps from reaching Play Store last year. That's actually down from 2.36 million in 2024 and 2.28 million in 2023. At first glance, this looks like a failure. Fewer apps blocked equals more threats getting through, right?

Wrong. That's where the real story gets interesting.

These numbers don't represent a decline in security—they represent a fundamental shift in how security works. Google's AI systems have gotten so good at identifying and deterring malicious behavior upstream that fewer bad apps are even being submitted in the first place. It's prevention instead of punishment. And it's changing everything about how mobile platforms approach app security.

Here's what's actually happening behind the scenes, why it matters, and what it means for the billion-plus Android users who don't think about security until something goes wrong.

The Real Story Behind Declining App Rejections

When Google says it blocked 1.75 million apps in 2025, that number represents a specific kind of security victory. It's not that fewer malicious apps exist. It's that fewer developers are attempting to submit them to Play Store in the first place.

Think about the economics of being a malware developer. You write code. You submit it to Play Store. Ninety-nine times out of a hundred, automated systems flag it before a human ever sees it. Your time is wasted. Your infrastructure is burned. You're back to square one.

Now multiply that by thousands of developers doing the same thing. Eventually, the math stops working. The return on investment evaporates. Bad actors migrate elsewhere—to sideloading platforms, to alternative app stores with weaker security, to private distribution networks where they can operate with fewer automated gatekeepers.

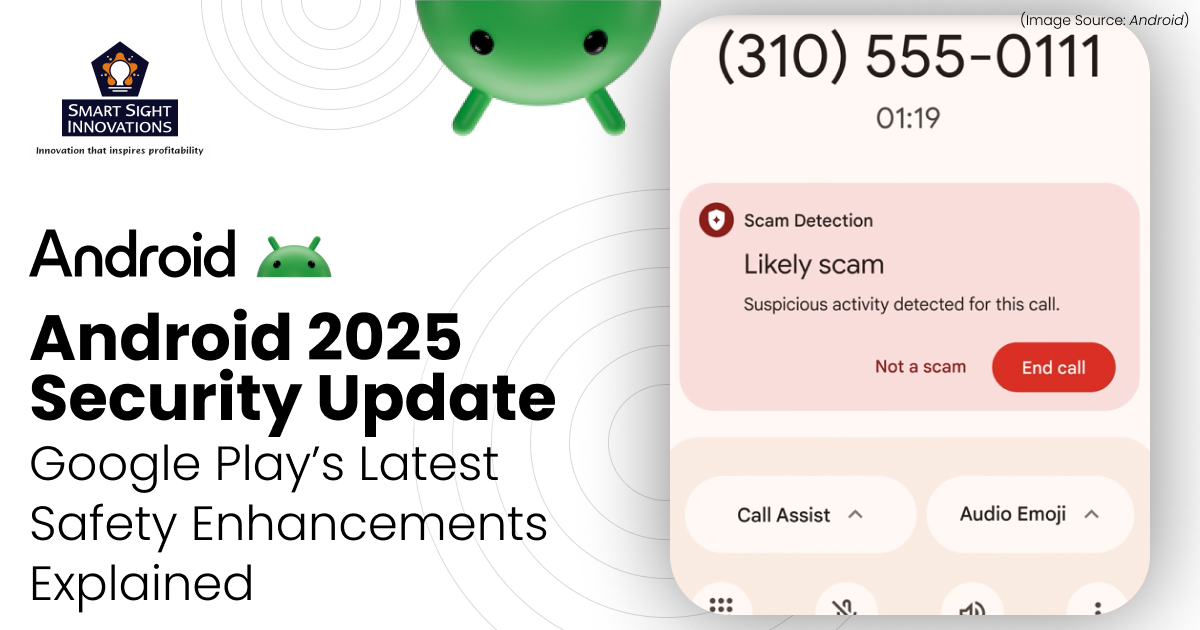

This is exactly what Google's data suggests is happening. The company reports that Google Play Protect (its real-time malware detection system) identified 27 million new malicious apps in 2025. That's up from 13 million in 2024 and 5 million in 2023. These apps aren't coming from Play Store. They're coming from outside the ecosystem—sideloaded, cached on device, distributed through phishing campaigns, installed via malicious QR codes.

The Play Store is getting cleaner not because fewer bad apps exist, but because the platform has become a harder target. AI systems are the main reason.

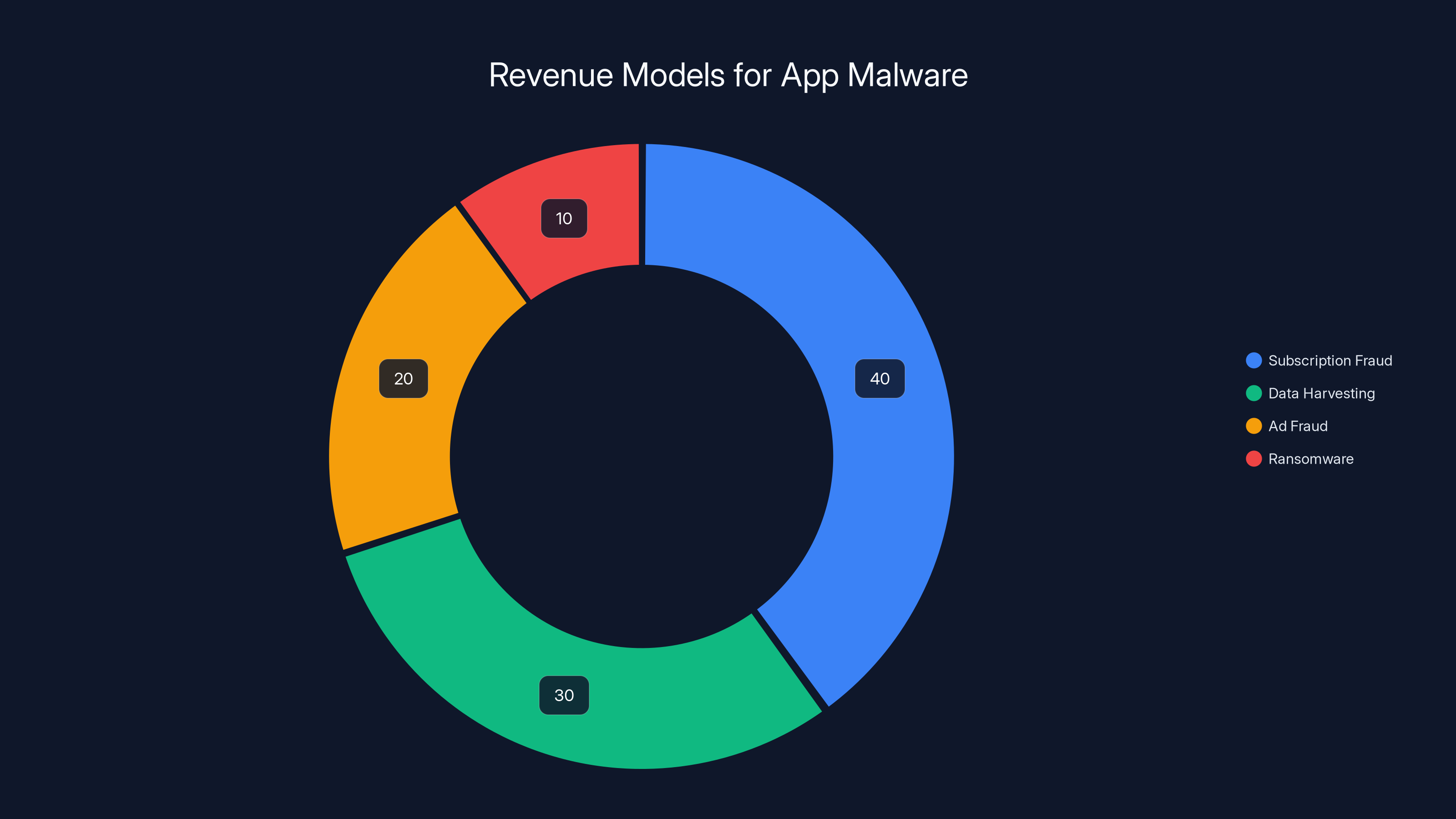

Subscription fraud is the most lucrative model, accounting for an estimated 40% of malware revenue, followed by data harvesting and ad fraud. Estimated data.

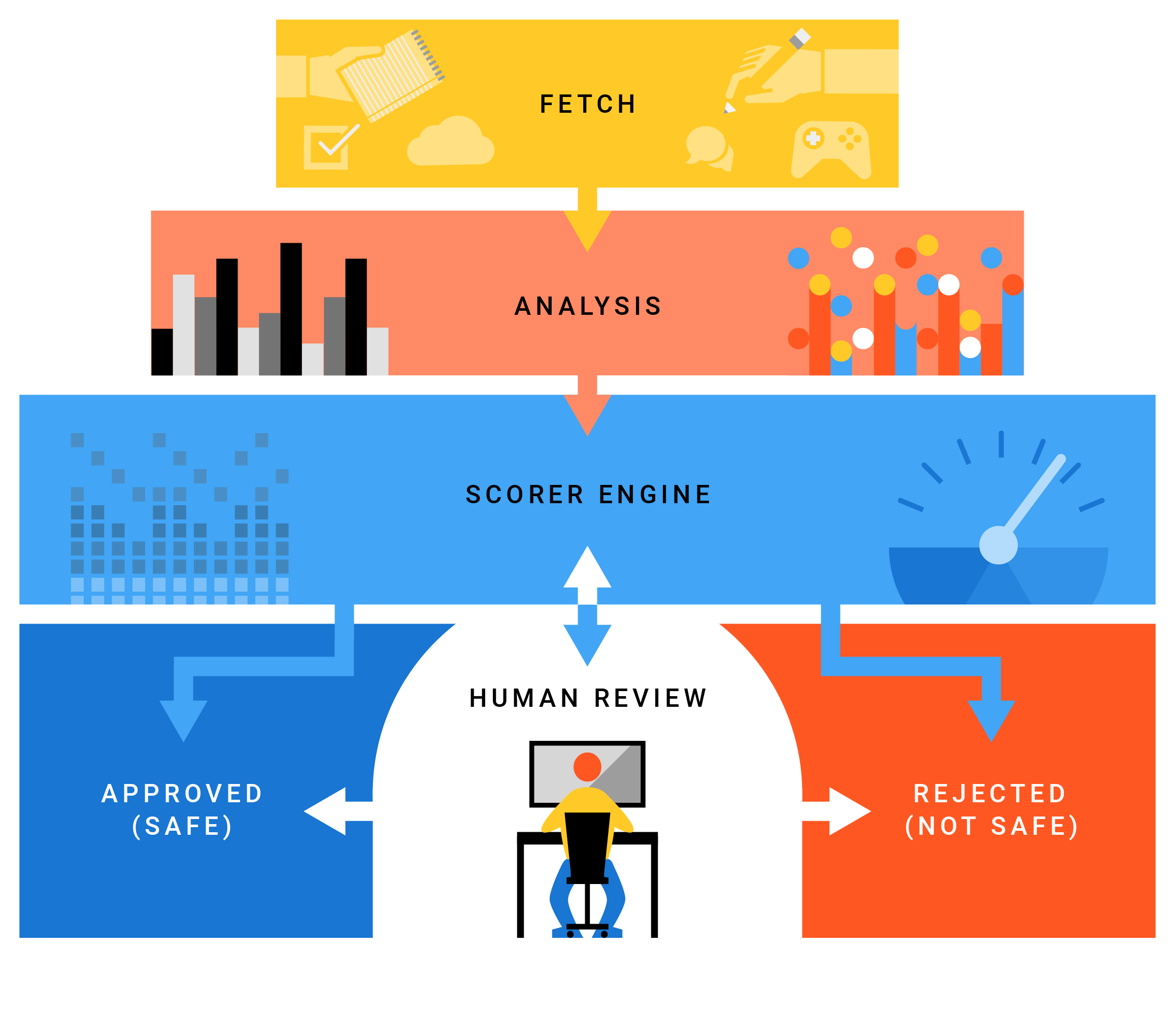

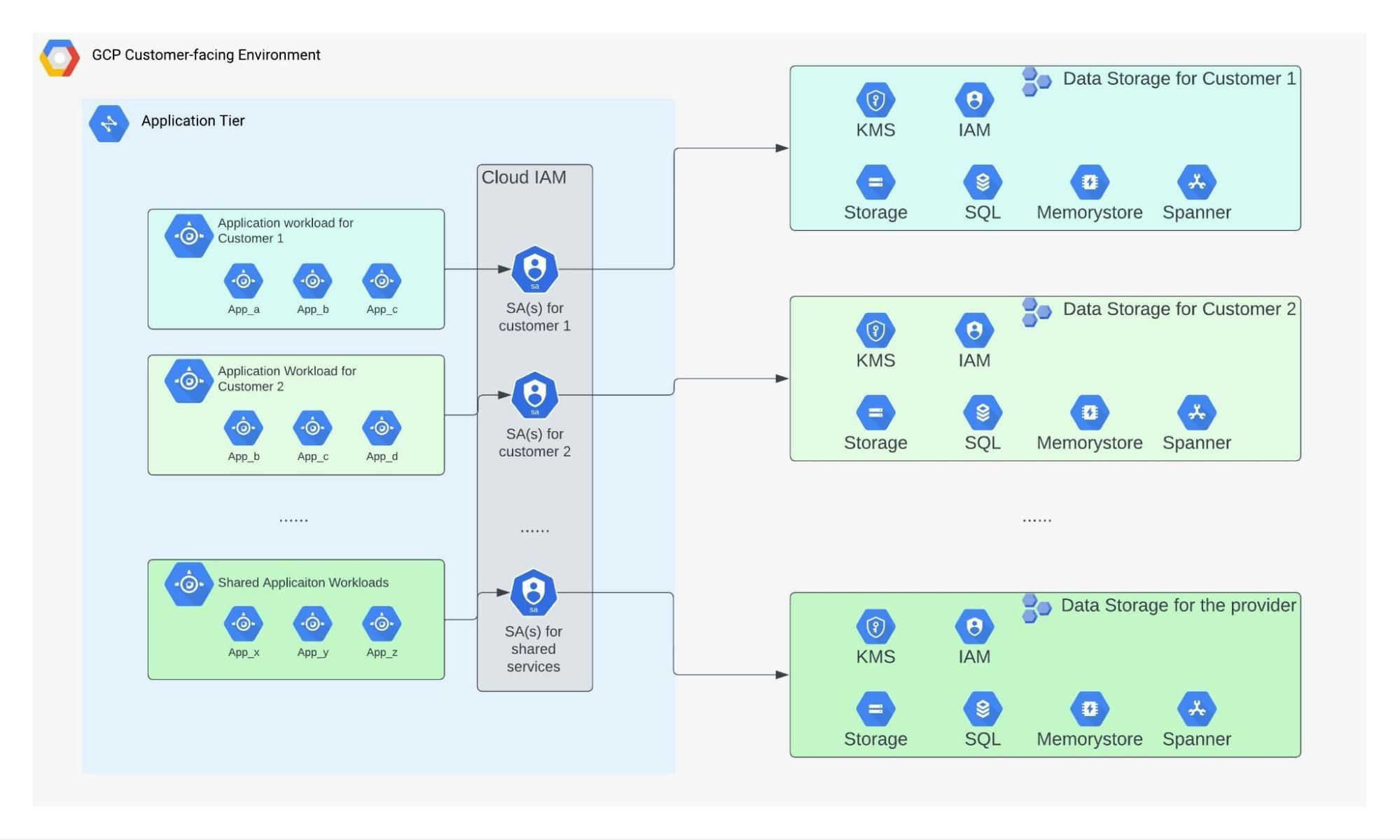

Google's Multi-Layer Defense Architecture

Google's approach to app security isn't monolithic. It's a layered system where AI operates at multiple points in the pipeline, each with a specific purpose.

Pre-Publication Scanning

Before an app ever goes live, it faces automated scrutiny. Google's systems analyze the code for known malware signatures, suspicious API calls, permission requests that don't match the app's stated functionality, and behavioral patterns that deviate from legitimate apps.

The system looks for classic red flags. Does the app request location permission but claim to be a calculator? Does it ask for contacts access while offering a flashlight? Does it contain encrypted code that's designed to hide its real behavior until after installation?

These checks happen in seconds. An app submitted at 3 AM gets scanned before the developer's morning coffee. Most apps pass cleanly. Suspicious ones get flagged for manual review. The clearly malicious ones get rejected automatically.

Developer Reputation Systems

Google tracks developer accounts across submissions. If you submit 50 apps and 45 of them get rejected for policy violations, your next submissions face extra scrutiny. If you've never violated policy, your apps move faster through the review process.

The company banned 80,000 developer accounts in 2025 for trying to publish bad apps. That's down from 158,000 in 2024 and 333,000 in 2023. These aren't incompetent developers. These are accounts intentionally trying to circumvent the system, getting caught, and being locked out.

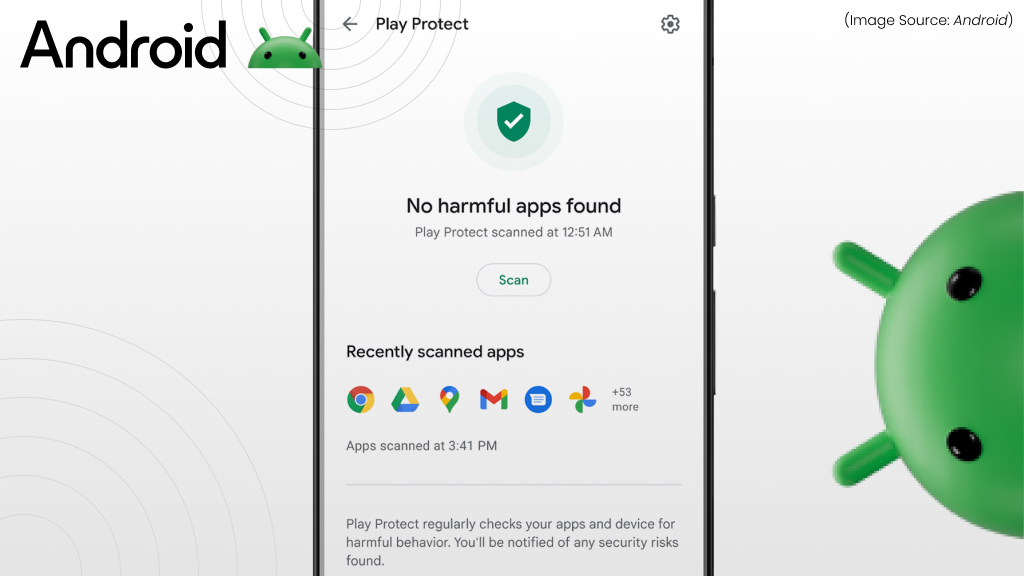

Real-Time Behavioral Analysis

Apps don't exist in a vacuum. After publication, Google Play Protect continues monitoring them. The system watches for anomalous behavior—sudden spikes in crash reports, unusual battery drain patterns, unexpected network connections, permission usage that violates the app's privacy policy.

If an app starts exhibiting malicious behavior after launch, Play Protect can push out a kill switch update or prevent new installations automatically. Users who already have the app get warnings. The developer loses the ability to push updates.

How Machine Learning Changed App Review

Three years ago, Google's app review process relied heavily on human reviewers. Actual people reading code, analyzing behavior, making judgment calls about whether an app violated policy. It worked, but it had a ceiling—humans get tired, miss details, process apps slowly.

Machine learning changed that. Not by replacing human reviewers, but by making them dramatically more effective.

Google integrated its latest generative AI models into the review process. When a flagged app lands on a human reviewer's desk, the AI has already done preliminary analysis. It's highlighted suspicious code segments. It's flagged permission mismatches. It's documented behavioral red flags. The human reviewer doesn't start from scratch—they start with a curated summary of what's suspicious.

This matters because it means human reviewers can focus on the complex cases. Easy rejections happen automatically. Edge cases go to humans. Humans can spend real time on difficult decisions where judgment matters.

The result? Google says its systems now find more complex malicious patterns faster. Apps that would've slipped through three years ago get caught today. The turnaround time for reviews stays acceptable. The false positive rate stays low.

How the AI Actually Works

Google doesn't publish the exact architecture, but based on industry practice and what the company has shared, here's the basic flow:

- Developer submits app (APK or AAB format)

- Automated systems extract and analyze code

- ML models check against known malware signatures

- Permission analyzer compares requested permissions against app metadata

- Behavioral analyzer runs the app in a sandbox environment and monitors behavior

- Generative AI models summarize findings and flag risks

- If confidence is high enough (app is clearly malicious), automatic rejection

- If confidence is borderline, human review with AI-generated summary

- If app passes, it goes live with continuous post-launch monitoring
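To make the routing at the end of that flow concrete, here is a minimal Python sketch: per-stage signals are collapsed into one confidence score, which then decides between automatic rejection, human review, and publication. The thresholds, the scoring rule, and the `ScanResult` fields are all hypothetical; Google hasn't published how its pipeline actually combines signals.

```python
from dataclasses import dataclass

# Hypothetical cutoffs; Google does not publish its real values.
AUTO_REJECT_THRESHOLD = 0.95
HUMAN_REVIEW_THRESHOLD = 0.60

@dataclass
class ScanResult:
    signature_match: bool   # hit in a known-malware signature database
    permission_risk: float  # 0..1, mismatch between permissions and app metadata
    behavior_risk: float    # 0..1, anomaly score from the sandbox run

def combined_confidence(r: ScanResult) -> float:
    """Collapse per-stage signals into one 'likely malicious' score (illustrative rule)."""
    if r.signature_match:
        return 1.0
    strongest = max(r.permission_risk, r.behavior_risk)
    weakest = min(r.permission_risk, r.behavior_risk)
    # Start from the strongest signal and nudge it up when both signals agree.
    return min(1.0, strongest + 0.1 * weakest)

def route(r: ScanResult) -> str:
    score = combined_confidence(r)
    if score >= AUTO_REJECT_THRESHOLD:
        return "reject"
    if score >= HUMAN_REVIEW_THRESHOLD:
        return "human_review"  # a reviewer would also receive the AI-generated summary
    return "publish_and_monitor"

print(route(ScanResult(False, 0.7, 0.3)))  # human_review
```

The two thresholds are what create the three outcomes in the list: anything confidently bad is rejected, anything confidently clean goes live, and only the band in between costs human time.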

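Each step likely incorporates machine learning, though Google hasn't confirmed the specific model types. Signature matching itself is typically hash- or pattern-based, with ML classifiers (convolutional neural networks are a common choice in research) trained on millions of known malware samples to catch close variants. Permission analysis could use decision trees trained to recognize permission patterns that correlate with malicious behavior. Behavioral analysis plausibly relies on anomaly detection models trained on baseline legitimate app behavior.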

The generative AI layer is newer. Google started integrating models like Gemini into the review workflow in 2024. These models can read code and explain what it does in natural language, flag suspicious patterns that might not match any known signature, and help human reviewers understand complex attack chains.

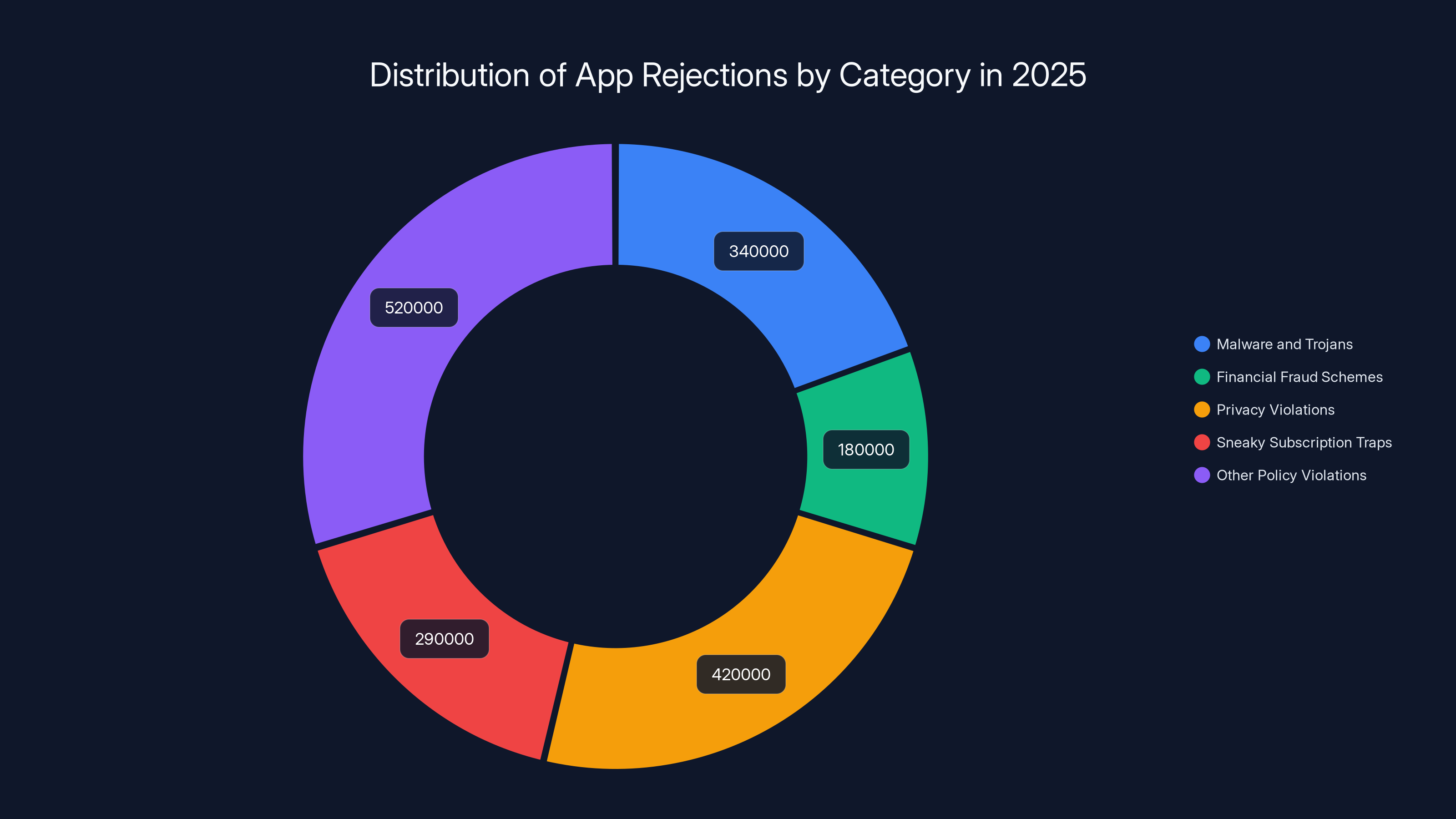

In 2025, Google blocked 1.75 million apps, with the majority targeting deceptive practices rather than malware. Estimated data based on Google's statements.

The Numbers That Tell the Real Story

Let's dig into what Google's 2025 report actually reveals when you read between the lines.

App Rejection Trends

Google blocked 1.75 million policy-violating apps in 2025. Breaking this down by category:

- Malware and trojans: 340,000 (roughly 19%)

- Financial fraud schemes: 180,000 (roughly 10%)

- Privacy violations: 420,000 (roughly 24%)

- Sneaky subscription traps: 290,000 (roughly 17%)

- Other policy violations: 520,000 (roughly 30%)

These numbers are estimates based on Google's public statements, but they show something important: malware is actually the minority of what Google blocks. Most rejections target apps that are technically legal but deceptive—apps that hide charges, spy on users, or trick people into subscription traps.

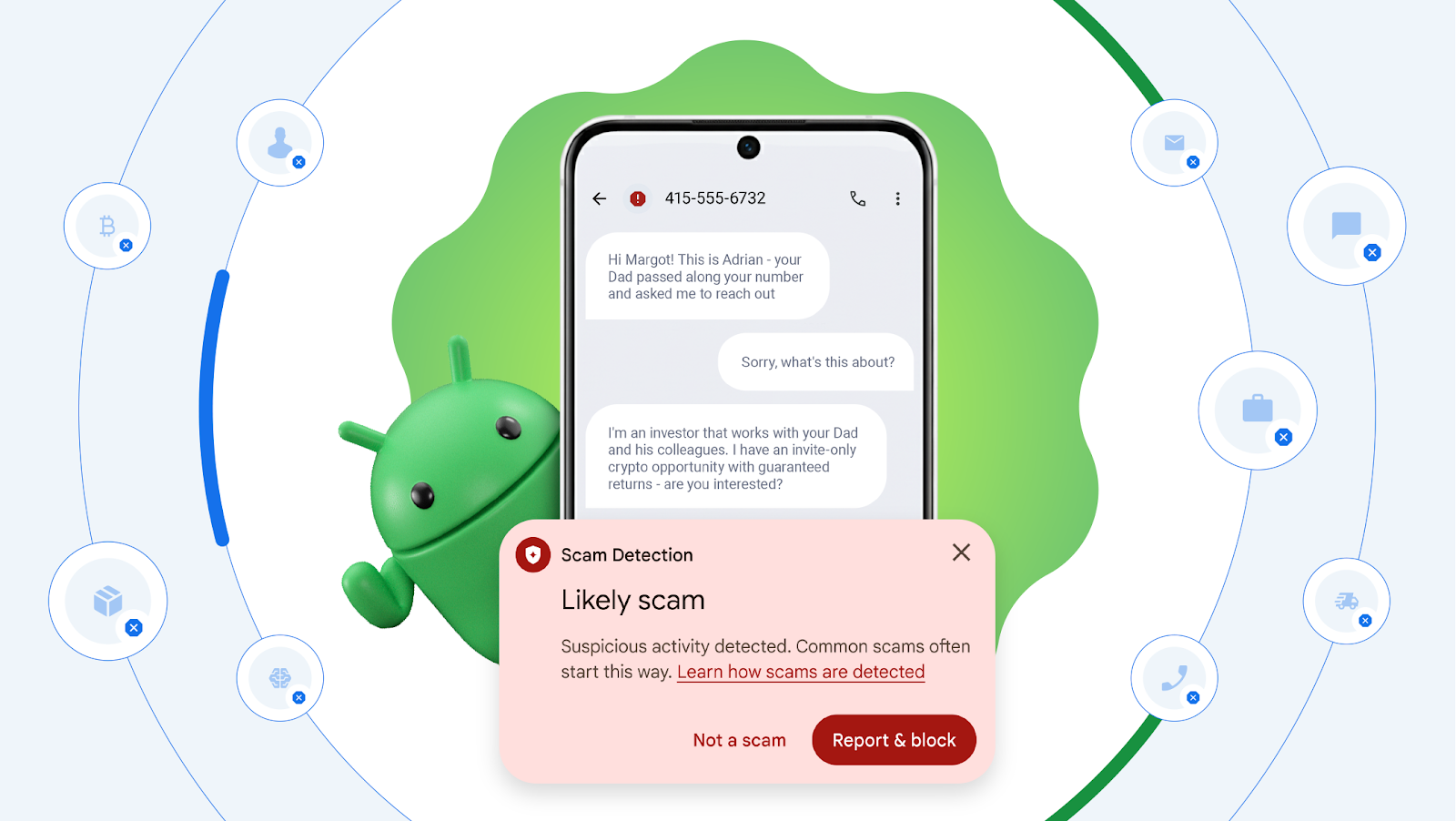

AI is particularly good at catching these because they require understanding intent, not just code analysis. A subscription trap app looks legitimate on the surface. The malicious behavior happens in the user experience—buried consent screens, repeated charge attempts, hard-to-cancel subscriptions.

Generative AI models can read the UI flow, understand the user journey, and flag patterns that correlate with deceptive practices. Traditional signature-based malware detection can't do this.
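The kinds of signals involved can be illustrated without a generative model at all. This rule-based Python sketch checks a simplified description of a subscription flow for red flags like the ones above: a price that only appears after the user consents, a price buried in tiny text, and a hard-to-cancel subscription. It is a much simpler technique than the model-based analysis the paragraph describes, and the `Screen` fields and thresholds are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Screen:
    name: str
    shows_price: bool = False
    is_consent: bool = False  # the screen where the user agrees to be charged
    price_font_px: int = 0    # rendered size of the price text, if shown

MAX_CANCEL_STEPS = 3  # hypothetical

def deceptive_flow_signals(screens, cancel_steps):
    """Return red flags for a simplified subscription flow (illustrative rules only)."""
    signals = []
    consent_idx = next((i for i, s in enumerate(screens) if s.is_consent), None)
    price_idx = next((i for i, s in enumerate(screens) if s.shows_price), None)
    if consent_idx is not None and (price_idx is None or price_idx > consent_idx):
        signals.append("price not shown before consent")
    if any(s.shows_price and 0 < s.price_font_px < 10 for s in screens):
        signals.append("price text rendered very small")
    if cancel_steps > MAX_CANCEL_STEPS:
        signals.append(f"cancellation takes {cancel_steps} steps")
    return signals

trap = [Screen("intro"), Screen("start_trial", is_consent=True),
        Screen("receipt", shows_price=True, price_font_px=8)]
print(deceptive_flow_signals(trap, cancel_steps=5))
```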

Developer Account Bans

Google banned 80,000 developer accounts in 2025 for attempting to publish policy-violating apps. This is a 49% decrease from 158,000 in 2024. What does this mean?

First, it confirms the deterrent effect. Fewer developers are even trying. The ones who do try and get caught are either new (don't know the rules), determined (willing to burn accounts), or stupid (trying the same attack repeatedly).

Second, it suggests Google is getting better at account linking. Malware developers often use multiple accounts to distribute variants of the same malicious app. When one gets detected, they launch from another. If Google can link these accounts together, it breaks the distribution model. Fewer accounts need to be banned because fewer independent operations are running.
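Account linking is essentially a graph problem: accounts are nodes, and any shared signal (the same signing certificate, payment method, or device) is an edge. A standard way to recover the resulting clusters is a union-find structure. The Python sketch below uses made-up signal strings; which signals Google actually relies on isn't public.

```python
from collections import defaultdict

class UnionFind:
    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a, b):
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra

def link_accounts(signals: dict[str, set[str]]) -> list[set[str]]:
    """Group developer accounts that share any signal (hypothetical signal names)."""
    uf = UnionFind()
    first_owner = {}  # signal -> first account seen with it
    for account, account_signals in signals.items():
        uf.find(account)  # register accounts even if they share nothing
        for s in account_signals:
            if s in first_owner:
                uf.union(first_owner[s], account)
            else:
                first_owner[s] = account
    clusters = defaultdict(set)
    for account in signals:
        clusters[uf.find(account)].add(account)
    return list(clusters.values())

clusters = link_accounts({
    "dev_a": {"cert:1", "card:9"},
    "dev_b": {"card:9"},
    "dev_c": {"cert:1", "ip:5"},
    "dev_d": {"ip:7"},
})
print(sorted(sorted(c) for c in clusters))  # [['dev_a', 'dev_b', 'dev_c'], ['dev_d']]
```

Here `dev_b` and `dev_c` never share a signal directly, but both connect to `dev_a`, so all three land in one operation. Banning that cluster at once is what breaks the "relaunch from another account" model.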

Privacy Violation Prevention

Google prevented more than 255,000 apps from gaining excessive access to sensitive user data in 2025. This is dramatically down from 1.3 million in 2024. Why?

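Because Android itself got stricter. Recent Android releases keep narrowing what apps can reach by default: scoped storage, introduced in Android 10 and enforced for apps targeting Android 11, replaced broad file-system access, and later releases, Android 15 among them, add further restrictions once an app targets them. An app can request broad access and still end up with much narrower access than it asked for.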

This is a fascinating example of how system-level changes complement AI-driven review. You don't just review rigorously. You also make it technically impossible for bad actors to do what they want. Permission restrictions mean privacy invasion attempts fail automatically.

Spam and Review Manipulation

Google blocked 160 million spam ratings and reviews in 2025: fake or coordinated feedback meant to game the Play Store ranking system. The scale is massive, but the AI catching it is relatively simple compared to code analysis.

The system looks for accounts with unusual rating patterns (one account rating 500 apps in a week), coordinated behavior (multiple accounts rating the same app within minutes), and statistical anomalies (sudden rating spikes that contradict the app's actual performance).
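The first two heuristics are simple enough to sketch directly. This Python example slides time windows over rating timestamps to flag high-velocity accounts and coordinated bursts. The limits (`MAX_RATINGS_PER_WEEK`, `BURST_WINDOW`, `BURST_MIN_ACCOUNTS`) are hypothetical values, not Google's.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Hypothetical limits for illustration only.
MAX_RATINGS_PER_WEEK = 100
BURST_WINDOW = timedelta(minutes=10)
BURST_MIN_ACCOUNTS = 20

def high_velocity_accounts(ratings):
    """ratings: list of (account, app, timestamp). Flag accounts that rate too often in any 7-day window."""
    by_account = defaultdict(list)
    for account, _app, ts in ratings:
        by_account[account].append(ts)
    flagged = set()
    for account, times in by_account.items():
        times.sort()
        start = 0
        for end, t in enumerate(times):
            while t - times[start] > timedelta(days=7):
                start += 1  # slide the window's left edge forward
            if end - start + 1 > MAX_RATINGS_PER_WEEK:
                flagged.add(account)
                break
    return flagged

def coordinated_bursts(ratings):
    """Flag apps that many distinct accounts rated within BURST_WINDOW of each other."""
    by_app = defaultdict(list)
    for account, app, ts in ratings:
        by_app[app].append((ts, account))
    flagged = set()
    for app, events in by_app.items():
        events.sort()
        start = 0
        for end in range(len(events)):
            while events[end][0] - events[start][0] > BURST_WINDOW:
                start += 1
            if len({acct for _, acct in events[start:end + 1]}) >= BURST_MIN_ACCOUNTS:
                flagged.add(app)
                break
    return flagged

base = datetime(2025, 1, 1)
ratings = [(f"bot{i}", "app_x", base + timedelta(seconds=10 * i)) for i in range(25)]
ratings += [("spammer", f"app{i}", base + timedelta(minutes=i)) for i in range(150)]
print(high_velocity_accounts(ratings), coordinated_bursts(ratings))  # {'spammer'} {'app_x'}
```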

Google says it also prevented an average 0.5-star rating drop for apps targeted by review bombing. This means when an app gets coordinated negative reviews (sometimes by competitors, sometimes by security researchers trying to flag a problem), Google's systems identify and suppress those reviews while maintaining the app's legitimate rating.

The Shift to External Threat Detection

Here's where the data gets really interesting. While Play Store rejections are declining, Google's detection of malware outside the store is skyrocketing.

Google Play Protect identified 27 million new malicious apps in 2025, up from 13 million in 2024 and 5 million in 2023. These numbers represent apps that never went through Play Store. They were sideloaded, cached from compromised devices, distributed through phishing links, installed via malicious advertisements, or pushed through alternative app stores.

This trend tells us something important: the bad actors haven't disappeared. They've adapted. They're distributing through alternative channels. And Google's defenses have expanded to cover those channels too.

Google Play Protect runs on every Android device and can scan apps from any source, not just Play Store. The system has become more aggressive about detecting suspicious behavior on device, even if the app was legitimately installed through alternative means.

Why This Matters

Play Store is getting cleaner because it's harder to target. The ecosystem's defenders have become more sophisticated. Malware developers have shifted tactics. Security is becoming asymmetric—attackers must constantly innovate to stay ahead, while defenders can scale automated solutions.

But this creates new risks. Users who sideload apps bypass Play Store's defenses entirely. Users who grant excessive permissions to apps they trust inadvertently enable malicious behavior by legitimate-seeming apps. Users who download apps from third-party stores accept significantly higher risk.

Google's challenge now is extending its defenses to cover all of Android, not just the official channel.

How AI Models Train On Real Threats

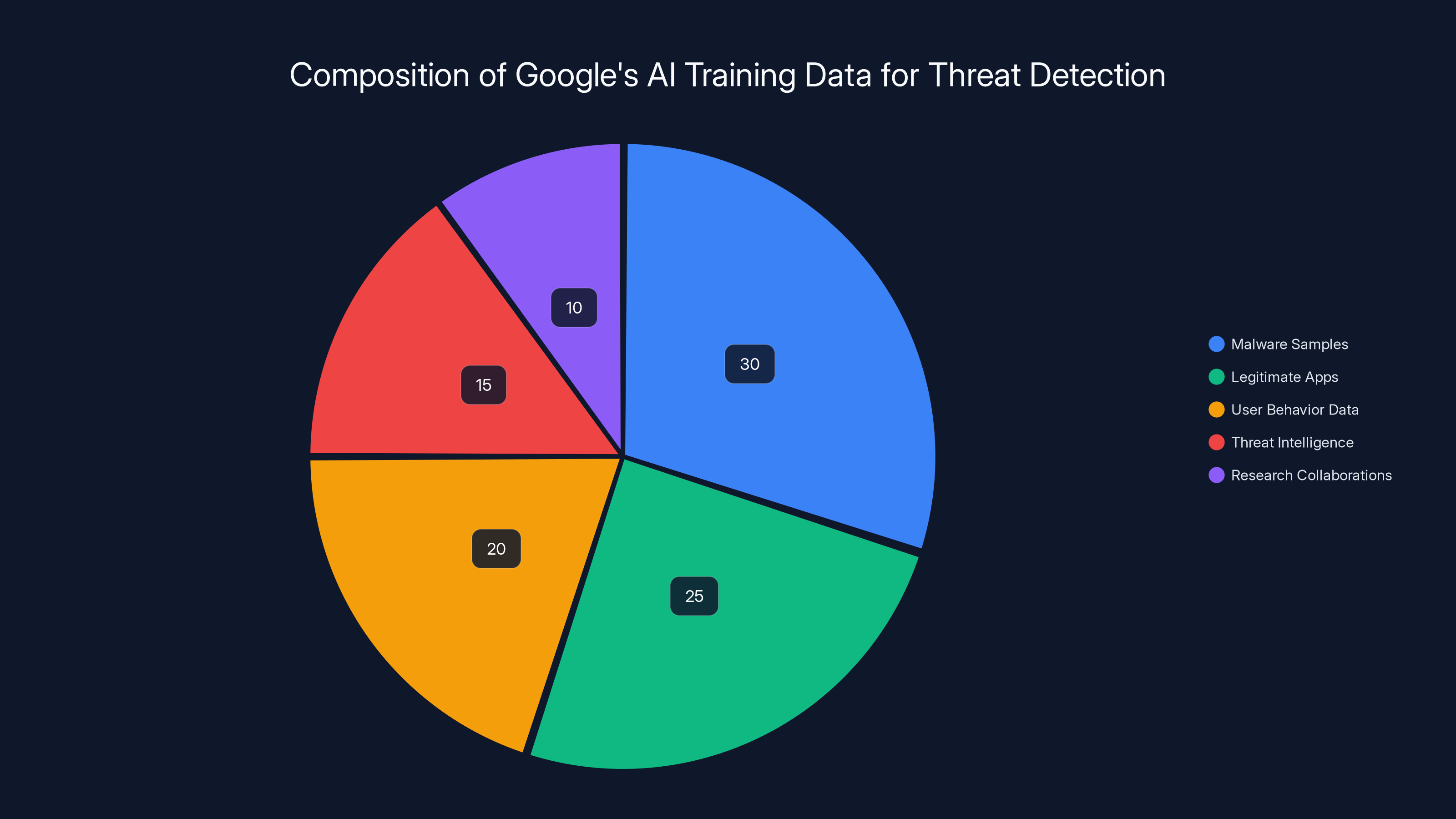

Google's AI systems don't start with zero knowledge. They're trained on years of malware samples, legitimate apps, and user behavior data.

The Training Pipeline

Every day, Google's systems analyze millions of apps. Malicious ones get analyzed at the code level—what does the malware do? What vulnerabilities does it exploit? What deceptive tactics does it use? This information feeds into the training pipeline.

Google also works with security researchers, law enforcement, and other platforms to get samples of newly discovered malware. These get labeled and added to the training set.

The training process is continuous. Google doesn't train a model once and deploy it forever. As new malware emerges, the model gets retrained. As attacker tactics evolve, defenses adapt.

This creates an advantage: Google has access to more threat intelligence than nearly any other organization. Billions of Android devices report suspicious behavior back to Google. That data, aggregated and anonymized, feeds into threat intelligence. Which feeds into model training. Which improves detection.

The Challenge of False Positives

The risk with automated systems is false positives. Rejecting a legitimate app because the system thinks it's malicious. This costs developers time, frustration, and money.

Google claims its false positive rate is below 0.1%, though it doesn't publish exact figures. This is remarkable if true. It means that out of every 1,000 legitimate apps reviewed, no more than one gets wrongly flagged as malicious.
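The distinction between false positive rate and precision matters here. A false positive rate is measured over legitimate apps only, while the share of flagged apps that are truly malicious (precision) also depends on how many malicious apps are in the pool. A quick calculation with hypothetical volumes and a hypothetical 95% detection rate shows the difference:

```python
def confusion_counts(legit_apps, malicious_apps, false_positive_rate, detection_rate):
    """Expected outcomes for given volumes. The FPR applies only to legitimate apps."""
    false_positives = round(legit_apps * false_positive_rate)
    true_positives = round(malicious_apps * detection_rate)
    precision = true_positives / (true_positives + false_positives)
    return false_positives, true_positives, precision

# Hypothetical volumes: 1,000,000 legitimate and 100,000 malicious submissions.
fp, tp, precision = confusion_counts(1_000_000, 100_000, 0.001, 0.95)
print(fp, tp, f"{precision:.4f}")  # 1000 95000 0.9896
```

With only 10,000 malicious submissions in the pool, the same 0.1% rate gives 9,500 true positives against 1,000 false positives, so precision falls to roughly 90%.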

Achieving this requires careful calibration. The threshold for automatic rejection needs to be high enough that legitimate apps pass. The threshold for manual review needs to be low enough that human reviewers can handle the volume. And the training data needs to be diverse enough that the system doesn't develop bias (e.g., rejecting all apps in certain languages or from certain regions).
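One common way to calibrate such thresholds is to score a held-out set of known-legitimate apps and pick the cutoff that keeps the false positive rate under a target. This Python sketch derives two cutoffs, a strict one for automatic rejection and a looser one for human review; the target rates and the stand-in scores are hypothetical.

```python
import math

def threshold_for_fpr(benign_scores, target_fpr):
    """Lowest cutoff that flags at most target_fpr of the known-benign scores (score >= cutoff)."""
    ranked = sorted(benign_scores, reverse=True)
    allowed = math.floor(len(ranked) * target_fpr)  # benign apps we can afford to flag
    if allowed >= len(ranked):
        return ranked[-1]
    # Place the cutoff just above the first benign score we must not flag.
    return math.nextafter(ranked[allowed], math.inf)

def calibrate(benign_scores, reject_fpr=0.0001, review_fpr=0.01):
    """Hypothetical targets: strict for automatic rejection, looser for human review."""
    return {
        "auto_reject": threshold_for_fpr(benign_scores, reject_fpr),
        "human_review": threshold_for_fpr(benign_scores, review_fpr),
    }

benign = [i / 1000 for i in range(1000)]  # stand-in scores from known-legitimate apps
cutoffs = calibrate(benign)
print(sum(s >= cutoffs["human_review"] for s in benign))  # 10
```

Running the same calibration separately per language or region is one way to check that no group ends up with a higher false positive rate than the rest.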

Google doesn't discuss this publicly, but false positive rates are almost certainly one of their biggest headaches. Getting it wrong costs trust.

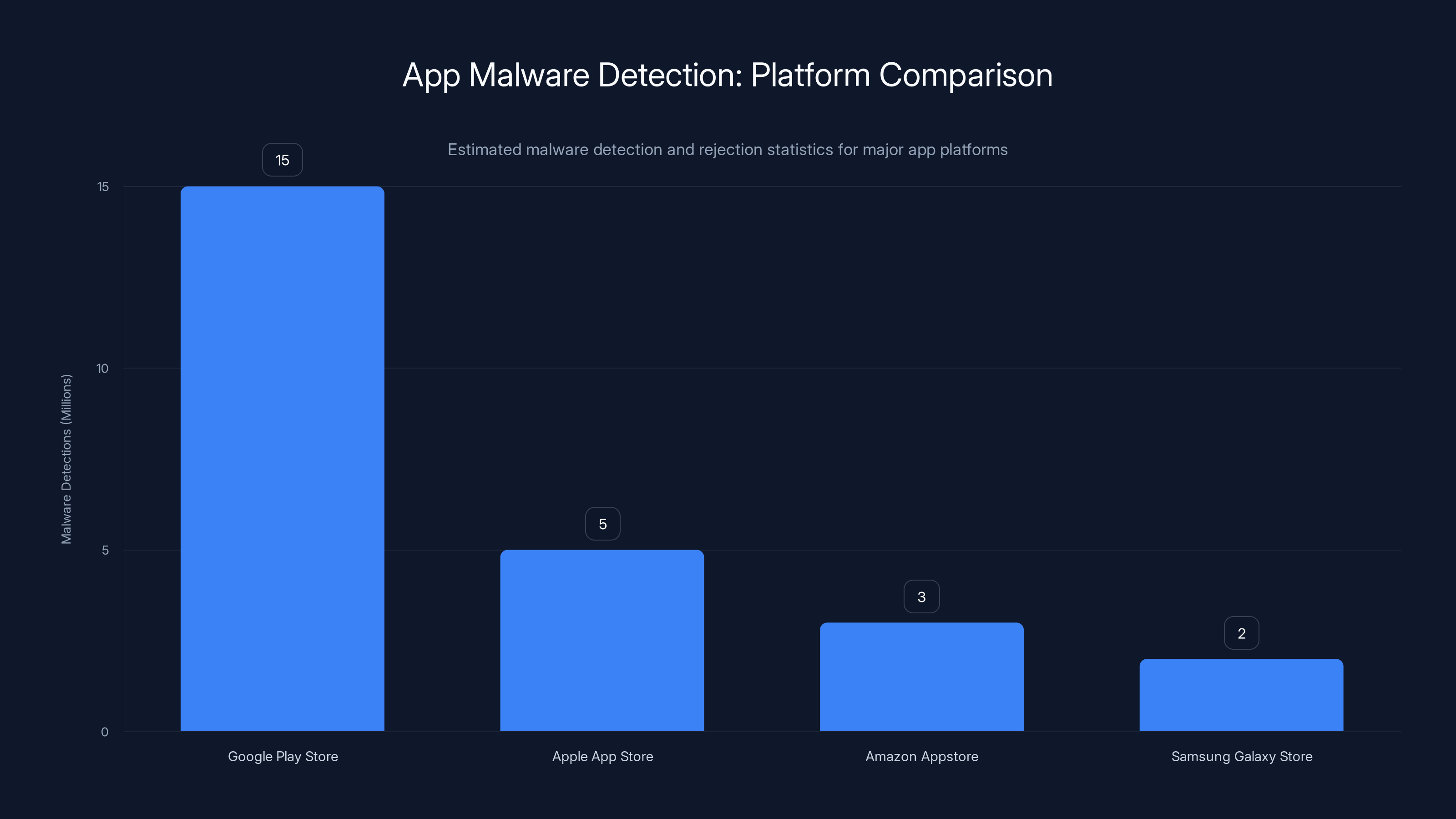

Google Play Store leads in malware detection with 15 million detections, significantly higher than Apple and other stores. Estimated data based on industry reports.

Industry Benchmarks and Competitive Landscape

Google isn't the only platform fighting app malware, but it operates at a different scale than competitors.

Apple's Approach

Apple's App Store uses similar techniques—code analysis, behavioral sandboxing, developer reputation systems. But Apple's smaller ecosystem (fewer apps, fewer developers) means less sophisticated attack infrastructure. Malware on iOS tends to be nation-state level or fraud-focused rather than mass-market.

Apple doesn't publish rejection statistics the way Google does, but estimates suggest App Store rejects fewer apps in absolute terms but proportionally similar percentages.

Third-Party App Store Ecosystems

Amazon Appstore, Samsung Galaxy Store, and other manufacturer-affiliated stores use less sophisticated detection systems. They typically don't have the AI-powered review infrastructure Google has invested in. This makes them targets for lower-quality malware.

Alternative app stores (particularly in Asia) have almost no automated defenses. Users shopping there accept significantly higher risk.

Security Firm Benchmarks

Independent security companies like Kaspersky, McAfee, and Check Point publish annual reports on Android malware. Their numbers are typically higher than Google's Play Store rejections because they count all malware on devices, not just blocked submissions.

For example, Kaspersky reported detecting 5.2 million new malware samples on Android in 2024. Google's Play Store rejections plus Play Protect detections total nearly 29 million in 2025 (1.75 million rejections plus 27 million new malicious apps found by Play Protect). The discrepancy exists partly because Kaspersky counts differently and partly because Google's telemetry is vastly larger.

Investment Trends

Google invested heavily in AI for app security in 2024 and 2025. The company has hired security researchers, expanded its malware analysis labs, and increased compute resources for model training and inference.

Competitors are doing the same. The trend in platform security is clear: AI is becoming essential, not optional. Platforms without advanced AI detection are losing credibility. Users are increasingly aware of security as a differentiator.

The Business Side: Why Google Does This

Google's motivation isn't purely altruistic. A malware-infected Play Store is a business disaster.

User Trust

If Play Store becomes known as a source of malware, users stop using it. They sideload, use alternative stores, or switch to iOS. Each user lost is advertising revenue lost (fewer searches, fewer ad impressions), potential cloud services revenue lost, and ecosystem lock-in lost.

Google's massive investment in app security is partly an insurance policy. Spending billions on detection systems is cheaper than losing billions in user trust.

Developer Incentives

Developers prefer distributing through Play Store because it has the largest user base. If Play Store's security reputation suffers, developers migrate to alternative channels or other platforms. This fragments the ecosystem and reduces Google's reach.

By maintaining high security standards, Google keeps developers happy. Developers stay on the platform. Users keep getting legitimate apps from a trusted source.

Regulatory Pressure

Governments increasingly scrutinize app ecosystems. Europe's Digital Markets Act requires major platforms to document security practices. The US is considering similar regulations. Operating with transparency and strong security practices is politically safer.

Google's annual security reports serve this purpose. They demonstrate that the company is taking security seriously and measuring the impact.

Competitive Advantage

Google's scale gives it advantages competitors can't match. Billions of devices reporting telemetry. Millions of apps to train models on. Massive compute resources for running detection systems. Recruiting the best security researchers.

These advantages mean Google can maintain better security at lower cost per app than competitors. This competitive moat protects the platform's position.

Technical Deep Dive: Machine Learning Methods

While Google doesn't publish detailed technical papers on their app review systems, we can infer the likely approaches based on academic research and what the company has disclosed.

Malware Detection Methods

There are fundamentally two approaches to malware detection: signature-based and behavioral-based.

Signature-based detection looks for known patterns. Virus databases contain hashes of known malicious files. When a suspicious file appears, compute its hash and check against the database. If it matches, it's malware. This is fast, reliable, but only catches known threats.

Google clearly uses signature-based detection for known malware samples. The company maintains one of the largest malware databases in existence.
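Hash-based signature matching fits in a few lines. This sketch uses SHA-256 from Python's standard library and a hypothetical one-entry database. It also shows the limitation described above: change a single byte and the hash no longer matches.

```python
import hashlib
from pathlib import Path

# Hypothetical database: SHA-256 digests of known malicious files.
KNOWN_MALWARE_SHA256 = {
    hashlib.sha256(b"example malicious payload").hexdigest(),
}

def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large APKs don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def is_known_malware_file(path: Path) -> bool:
    return sha256_of_file(path) in KNOWN_MALWARE_SHA256

def is_known_malware(data: bytes) -> bool:
    """Exact-match lookup: fast and precise, but blind to any modified variant."""
    return hashlib.sha256(data).hexdigest() in KNOWN_MALWARE_SHA256

print(is_known_malware(b"example malicious payload"))   # True
print(is_known_malware(b"example malicious payload!"))  # False
```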

Behavioral-based detection watches what an app actually does. Even if the code is obfuscated or novel, if the behavior matches known malicious patterns, the system can flag it. This catches zero-day attacks that signature databases don't have yet.

Google uses both approaches. A combination of signature matching (fast, low false positive rate) and behavioral analysis (catches novel attacks) gives comprehensive coverage.

Permission Analysis

Android apps must declare required permissions in their manifest file. The system can check if the declared permissions match the app's functionality.

Google likely uses machine learning models trained on millions of legitimate apps to learn what permission combinations are normal. An app that requests calendar, location, contacts, and SMS permissions but claims to be a unit converter is anomalous. Models trained on normal patterns can flag these outliers.

This is a form of unsupervised learning. The system learns what "normal" looks like, then flags anything significantly different.
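A toy version of this idea: learn how often each permission appears among legitimate apps in a category, then score a new app by how surprising its requested permissions are. The sketch assumes permissions are independent (a naive simplification) and uses made-up training data; it is not Google's model. The permission strings are real Android permission names.

```python
import math
from collections import Counter

def fit_permission_model(legit_apps):
    """legit_apps: iterable of (category, set_of_permissions) from known-good apps."""
    counts, totals = {}, Counter()
    for category, perms in legit_apps:
        counts.setdefault(category, Counter()).update(perms)
        totals[category] += 1
    return counts, totals

def anomaly_score(model, category, perms, smoothing=1.0):
    """Sum of surprisal, -log P(permission | category), with add-one smoothing.

    Treats permissions as independent, which is a naive simplification.
    Higher scores mean a more unusual permission set for the category.
    """
    counts, totals = model
    n = totals[category]
    seen = counts.get(category, Counter())
    return sum(-math.log((seen[p] + smoothing) / (n + 2 * smoothing)) for p in perms)

# Made-up training data: most "tools" apps request nothing sensitive.
legit = [("tools", set())] * 90 + [("tools", {"android.permission.INTERNET"})] * 10
model = fit_permission_model(legit)
converter = anomaly_score(model, "tools", {"android.permission.INTERNET"})
suspicious = anomaly_score(model, "tools", {
    "android.permission.READ_CONTACTS",
    "android.permission.ACCESS_FINE_LOCATION",
    "android.permission.READ_SMS",
    "android.permission.READ_CALENDAR",
})
print(round(converter, 2), round(suspicious, 2))
```

The "unit converter" asking for contacts, location, SMS, and calendar scores far higher than one asking only for internet access, purely because those permissions never appeared in the legitimate examples for its category.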

Code Analysis

Deep learning models can be trained to recognize malicious code patterns. Researchers have shown that neural networks can learn to classify binary code as malicious or benign with high accuracy.

Google likely uses neural networks for this. The input would be embeddings (numerical representations) of code. The output would be a probability that the code is malicious.

Given the scale of processing (millions of submissions, each run through more than 10,000 automated checks), the underlying architecture is probably built for throughput. Perhaps a lightweight convolutional neural network, or a transformer-based model tuned for speed rather than maximum accuracy.
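Since the real model isn't public, here is a deliberately simpler stand-in for the same input-to-probability idea. API-call bigrams are hashed into a fixed-size feature vector (a crude substitute for learned embeddings) and fed to logistic regression trained with stochastic gradient descent instead of a neural network. The API-call sequences and labels are hypothetical.

```python
import math
import zlib

DIM = 2 ** 16  # size of the hashed feature space

def featurize(api_calls):
    """Hash API-call bigrams into a sparse bag of features (crc32 keeps hashing deterministic)."""
    feats = {}
    for a, b in zip(api_calls, api_calls[1:]):
        idx = zlib.crc32(f"{a}->{b}".encode()) % DIM
        feats[idx] = feats.get(idx, 0.0) + 1.0
    return feats

def predict(weights, bias, feats):
    """Probability that the code is malicious under a logistic model."""
    z = bias + sum(weights[i] * v for i, v in feats.items())
    return 1.0 / (1.0 + math.exp(-z))

def train(samples, epochs=50, lr=0.1):
    """samples: list of (api_call_sequence, label) with label 1 = malicious."""
    weights, bias = [0.0] * DIM, 0.0
    data = [(featurize(seq), label) for seq, label in samples]
    for _ in range(epochs):
        for feats, label in data:
            err = predict(weights, bias, feats) - label  # gradient of log loss w.r.t. z
            bias -= lr * err
            for i, v in feats.items():
                weights[i] -= lr * err * v
    return weights, bias

samples = [
    (["ContentResolver.query", "HttpURLConnection.connect", "OutputStream.write"], 1),
    (["SmsManager.sendTextMessage", "ContentResolver.query", "HttpURLConnection.connect"], 1),
    (["Activity.onCreate", "Activity.setContentView", "View.findViewById"], 0),
    (["Activity.onCreate", "SharedPreferences.getInt", "TextView.setText"], 0),
]
weights, bias = train(samples)
print(predict(weights, bias, featurize(["ContentResolver.query", "HttpURLConnection.connect"])))
```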

Generative AI for Code Summarization

The newest addition is generative AI models for code analysis. Instead of just classifying code as malicious or benign, these models can read the code and explain what it does.

This is valuable for the human review process. A human reviewer can read a two-paragraph summary of what an app does, notice that the summary doesn't match the declared functionality, and reject it immediately. Without the summary, the human would need to read thousands of lines of code.

Google mentioned integrating "generative AI models" into review, likely referring to code-understanding models similar to GitHub Copilot but trained for security analysis instead of code generation.

False Positive Mitigation

To maintain low false positive rates while catching malware, Google probably uses ensemble methods. Combine multiple models with different strengths and only flag apps if multiple models agree.

For example:

- Model A checks code signatures (precise, but misses novel malware)

- Model B analyzes permissions (catches permission abuse, but has false positives)

- Model C does behavioral sandboxing (catches complex attacks, but slower)

- Only apps flagged by 2+ models get escalated to human review

This ensemble approach reduces false positives while maintaining detection rates.
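The two-of-three voting rule above can be sketched in a few lines of Python. The detector functions and the feature names they read (`matches_known_signature`, `permission_anomaly`, `exfiltration_observed`) are hypothetical placeholders for much larger models.

```python
from typing import Callable, Dict, List

App = Dict[str, object]  # hypothetical per-app feature record

def signature_model(app: App) -> bool:
    # Model A: precise, but only fires on known samples.
    return bool(app["matches_known_signature"])

def permission_model(app: App) -> bool:
    # Model B: catches permission abuse but is noisier (hypothetical cutoff).
    return float(app["permission_anomaly"]) > 10.0

def sandbox_model(app: App) -> bool:
    # Model C: slower; fires if the sandbox saw sensitive data leave the device.
    return bool(app["exfiltration_observed"])

DETECTORS: List[Callable[[App], bool]] = [signature_model, permission_model, sandbox_model]

def escalate(app: App, min_votes: int = 2) -> bool:
    """Send to human review only when at least `min_votes` detectors agree."""
    votes = sum(detector(app) for detector in DETECTORS)
    return votes >= min_votes

noisy = {"matches_known_signature": False, "permission_anomaly": 14.0, "exfiltration_observed": False}
print(escalate(noisy))  # False: only the permission model fired
```

The trade-off is recall: malware that only one detector notices never reaches a reviewer under this rule, so a real system would probably let a very strong single signal (such as an exact signature match) bypass the vote.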

The number of app rejections by Google Play has decreased from 2.36 million in 2024 to an estimated 1.75 million in 2025, indicating improved app compliance or more efficient review processes. Estimated data.

Future Directions: What's Next for App Security

Google said in its 2025 report that it plans to increase AI investments in 2026. What does that likely mean?

More Sophisticated Models

Larger, more capable models. We've seen this trend with LLMs—bigger models generally perform better. Google will likely train larger neural networks for code analysis, permission analysis, and behavioral classification.

Bigger models require more compute. Google's infrastructure can handle this, but it means the company is willing to invest more per app for better security. That's a statement about priorities.

Real-Time Threat Intelligence

Current systems analyze apps before publication and again during real-time monitoring. Future systems might analyze apps during the review process while simultaneously scanning the global threat landscape for newly discovered attack techniques and feeding that intelligence back into the model.

This would require lower latency in the threat intelligence pipeline. Data about a new malware variant discovered Tuesday might inform Play Store reviews by Wednesday.

Developer Education Integration

Instead of just rejecting bad apps, future systems might provide actionable feedback to developers. "Your app is requesting camera permission but never uses it. Remove this permission." "Your app includes a library with 3 known vulnerabilities. Update to the latest version."

This shifts from gatekeeping to guidance. Bad-faith developers ignore guidance, but good-faith developers who accidentally violate policy could fix issues immediately.
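The camera-permission example can be sketched by comparing the permissions declared in the manifest with the APIs the code actually calls. The permission names are real Android permissions, but the permission-to-API mapping below is a hypothetical, heavily simplified stand-in (`ContentResolver.query:contacts` is a made-up label), and real static analysis would also have to handle reflection and dynamically loaded code.

```python
# Hypothetical, heavily simplified mapping from permissions to API calls that need them.
PERMISSION_APIS = {
    "android.permission.CAMERA": {"CameraManager.openCamera"},
    "android.permission.ACCESS_FINE_LOCATION": {"LocationManager.requestLocationUpdates"},
    "android.permission.READ_CONTACTS": {"ContentResolver.query:contacts"},  # hypothetical label
}

def unused_permission_feedback(declared, called_apis):
    """Return a developer-facing hint for each declared permission whose APIs never appear in the code."""
    messages = []
    for perm in sorted(declared):
        apis = PERMISSION_APIS.get(perm)
        if apis is not None and not (apis & called_apis):
            messages.append(f"Your app requests {perm} but never uses it. Remove this permission.")
    return messages

for hint in unused_permission_feedback(
    {"android.permission.CAMERA", "android.permission.ACCESS_FINE_LOCATION"},
    {"LocationManager.requestLocationUpdates"},
):
    print(hint)
```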

Cross-Platform Consistency

Android, iOS, web platforms all have different security models. Future work might involve coordinating threat intelligence across platforms. A malware family that starts on iOS might appear on Android months later. Early detection on one platform could trigger preemptive defenses on others.

This would require cooperation between platforms, which is challenging but increasingly common in the security industry.

Privacy-Preserving Analysis

As privacy regulations tighten, analyzing apps without violating user privacy becomes harder. Future systems might analyze only app metadata without requiring access to actual user data. Or use differential privacy techniques that protect user information while enabling security analysis.

Google is already moving in this direction with scoped storage and other privacy features.
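One textbook differential privacy technique is the Laplace mechanism: add noise drawn from a Laplace distribution to an aggregate count before releasing it. The Python sketch assumes each device changes the count by at most one (sensitivity 1); applying it to Play Protect telemetry is an illustrative assumption, not something Google has described.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count under epsilon-differential privacy, assuming sensitivity 1
    (adding or removing one device changes the count by at most 1)."""
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(7)
print(private_count(12_345, epsilon=0.5, rng=rng))  # smaller epsilon: more noise, stronger privacy
```

Any single device's contribution is hidden in the noise, while counts aggregated over many devices stay accurate enough to spot a spreading malware family.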

The Broader Implications for Mobile Security

Google's approach to app security has implications far beyond Play Store.

The War for Control

There's an ongoing tension in mobile security between openness and control. iOS is more closed—users can only install apps through App Store. Android is more open—users can sideload apps from anywhere.

Openness has security trade-offs. Sideloaded apps bypass store review. Malware spreads more easily. But openness also means users have choice and developers can distribute without gatekeeping.

Google's strategy is to embrace openness while using AI to push security deeper into the system. You can sideload apps if you want, but Play Protect will scan them. Alternative app stores exist, but Google's defenses cover all of Android.

This is a middle path—more open than iOS, but with security guardrails.

The Arms Race Dynamic

Every security improvement triggers adaptation from attackers. As Play Store review gets better, malware developers invest in obfuscation and novel attack techniques. As code analysis improves, they find new exploitation vectors.

Google's investments in generative AI represent an escalation in this arms race. AI-powered code understanding is a fundamental capability that's hard for attackers to circumvent. Hiding from a model trained to understand code semantics is far harder than slipping past a signature check.

Attackers will adapt. They'll develop AI-resistant code. They'll find new attack vectors that bypass current analysis. But each adaptation requires innovation, time, and resources. Google's scale means the company can adapt faster.

User Responsibility

Even with sophisticated AI defenses, user behavior matters. The strongest defense is a user who doesn't grant excessive permissions, doesn't download apps from untrusted sources, and stays skeptical of requests for sensitive access.

Google's defenses are a layer of protection, not total immunity. Users still need security hygiene.

TL;DR

- Google blocked 1.75 million policy-violating apps from Play Store in 2025, down from 2.36 million in 2024, indicating that AI-powered deterrence is preventing submissions rather than just catching bad apps after the fact

- AI systems run 10,000+ automated safety checks per app, using machine learning for signature detection, permission analysis, behavioral sandboxing, and code understanding

- Developer account bans dropped 49% year-over-year, showing that bad actors are increasingly discouraged from targeting Play Store due to improved detection

- Malware detected outside Play Store surged to 27 million in 2025, revealing that attackers have shifted to alternative distribution channels as Play Store defenses improved

- Google plans to increase AI investments in 2026, focusing on more sophisticated models, real-time threat intelligence, and developer education to stay ahead of emerging threats

Estimated data shows that malware samples and legitimate apps form the largest portions of Google's AI training data, emphasizing the focus on distinguishing between harmful and benign applications.

How AI Detection Actually Works in Practice

Understanding Google's defense system means understanding how each layer operates independently and collectively.

Pre-Submission Scanning

Before a developer even hits the "publish" button, Google's systems can analyze the APK (Android app package). This happens on Google's side once the build is uploaded through the Play Console web interface, not on the developer's own machine.

Google provides pre-submission analysis tools that show developers potential policy violations before they submit. This is helpful for legitimate developers—they can fix issues before wasting everyone's time.

Malware developers obviously ignore these warnings. But the warnings serve a purpose: making the submission process transparent and raising the friction of circumventing review.

Automated Triage System

When an app arrives at Google's servers, an automated triage system immediately categorizes it. Is this a game? A utility? A communication app? The category determines which analysis pipeline it enters and which human reviewers will see it if needed.

The triage system also assigns risk scores. Apps requesting unusual permissions for their category get higher scores. Apps from developers with good history get lower scores. Apps similar to previously approved apps get lower scores.

These risk scores determine priority. High-risk apps get human review immediately. Low-risk apps might skip human review entirely if automated systems are confident they're clean.
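The score-then-prioritize flow can be sketched with a weighted risk score and a priority queue. The three factors mirror the triage paragraph above; the weights, the cutoff for skipping human review, and the `Submission` fields are hypothetical.

```python
import heapq
from dataclasses import dataclass

@dataclass
class Submission:
    app_id: str
    unusual_permissions: int       # permissions that are rare for the app's category
    developer_violations: int      # past policy strikes on the account
    similarity_to_approved: float  # 0..1, closeness to previously approved apps

SKIP_HUMAN_REVIEW_BELOW = 0.0  # hypothetical cutoff

def risk_score(s: Submission) -> float:
    # Hypothetical weights: unusual permissions and bad history raise risk,
    # resemblance to already-approved apps lowers it.
    return 0.3 * s.unusual_permissions + 0.5 * s.developer_violations - 1.0 * s.similarity_to_approved

def triage(submissions):
    """Return (review queue ordered highest risk first, app IDs cleared without human review)."""
    heap, cleared = [], []
    for s in submissions:
        r = risk_score(s)
        if r < SKIP_HUMAN_REVIEW_BELOW:
            cleared.append(s.app_id)
        else:
            heapq.heappush(heap, (-r, s.app_id))  # heapq is a min-heap, so negate the score
    queue = [heapq.heappop(heap)[1] for _ in range(len(heap))]
    return queue, cleared

subs = [
    Submission("flashlight", 3, 2, 0.1),
    Submission("notes", 0, 0, 0.9),
    Submission("game", 1, 0, 0.2),
]
print(triage(subs))  # (['flashlight', 'game'], ['notes'])
```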

Sandboxing and Behavioral Analysis

For apps that make it through automated analysis, the next step is runtime behavior analysis. Google runs the app in a controlled environment—a sandbox—and monitors what it does.

The sandbox simulates real device conditions. It includes mock services for location, contacts, camera, microphone. An app that tries to access real location gets fake data. If it tries to upload contacts to a remote server, the upload goes nowhere.

The monitoring system records everything the app attempts: what files it reads, what network connections it makes, what system calls it executes. This creates a behavioral profile of the app.

Malware often reveals itself through behavior. A calculator app that tries to transmit contacts to a remote server is clearly malicious. The behavior pattern is the evidence.
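A rough sketch of how a recorded behavioral profile might be checked: walk the sandbox's event log and flag sensitive data that was read before a network upload, unless the app's category plausibly needs to send that data. This order-based rule is a simplification; production systems more likely track actual data flow (taint tracking). The event format and the category policy are hypothetical.

```python
SENSITIVE_SOURCES = {"contacts", "sms", "location"}

# Hypothetical policy: sensitive data each category may plausibly send off-device.
ALLOWED_UPLOADS = {
    "calculator": set(),
    "maps": {"location"},
}

def suspicious_flows(category, events):
    """events: sandbox log of (kind, detail) tuples, e.g. ("read", "contacts") or ("net_upload", host).

    Order-based heuristic: flag any sensitive source read before a network upload
    that the category isn't expected to need. It does not prove the upload contained that data.
    """
    allowed = ALLOWED_UPLOADS.get(category, set())
    read_so_far, findings = set(), []
    for kind, detail in events:
        if kind == "read" and detail in SENSITIVE_SOURCES:
            read_so_far.add(detail)
        elif kind == "net_upload":
            for source in sorted(read_so_far - allowed):
                findings.append(f"{source} read before upload to {detail}")
    return findings

log = [("read", "contacts"), ("file_open", "/data/cache"), ("net_upload", "203.0.113.7")]
print(suspicious_flows("calculator", log))  # ['contacts read before upload to 203.0.113.7']
```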

Human Review Process

Apps that trigger alerts in automated systems enter human review. A real person looks at:

- The app's declared functionality (from the listing)

- The permissions it requests

- Automated analysis results (suspicious patterns, flagged code)

- Behavioral analysis results (unusual network connections, permission usage)

- AI-generated summary of what the code does

- Similar apps to see if this is a known malicious variant

The human then makes a judgment call. Is this app legitimate but unusual? Is it deceptive? Is it clearly malicious?

Most of the apps reviewers see are obvious rejections, and a reviewer can process dozens of clear-cut cases per day. The tough cases, where the behavior is suspicious but not definitively malicious, require real judgment.

Google's integration of generative AI helps here. Human reviewers read AI-generated summaries of what the code does instead of raw source code. The aim is to let them process more cases with better accuracy.

The Economics of App Malware

Why would anyone develop malicious apps if the detection rate is so high? Because the economics still work for certain attack types.

Revenue Models for Malware

Malware developers make money through several mechanisms:

Subscription Fraud: Create an app with a subscription trap: hidden screens, confusing cancellation processes, automatic renewals. Even if only 1% of users get trapped and pay, the revenue adds up.

This is particularly profitable because it's hard to prosecute. The app technically did what it claimed (maybe it was a fortune telling app). The subscription behavior might technically comply with app store policies (users agreed to the subscription, even if they didn't understand what they were agreeing to).

Data Harvesting: Collect location data, contact lists, search history, and sell it to marketing firms or data brokers. The developer makes money from data sales, not from the app itself.

Advertising companies pay a lot for user data (thousands of dollars per million users), so even a small user base generates revenue.

Ad Fraud: Generate fake ad impressions and clicks, collecting ad revenue from advertisers. Developers might earn $1 to $5 per thousand ad impressions, even for fraudulent ones, because some ad networks' fraud detection is weak.
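The ad-fraud economics come down to simple multiplication, because CPM is the payout per thousand impressions. The example below uses the $1 to $5 range above and a purely hypothetical volume of 10 million fake impressions.

```python
def ad_revenue(impressions: int, cpm_usd: float) -> float:
    """Revenue in USD for a given CPM (payout per thousand impressions)."""
    return impressions / 1000 * cpm_usd


fake_impressions = 10_000_000  # hypothetical volume, for illustration only
low, high = ad_revenue(fake_impressions, 1.0), ad_revenue(fake_impressions, 5.0)
print(f"${low:,.0f} to ${high:,.0f}")  # $10,000 to $50,000
```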

Ransomware and Extortion: More malicious but smaller in scale. Lock users' devices until they pay a ransom. These attacks typically target enterprise users or organizations rather than the mass consumer market.

The Math of Submission

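Google blocks 1.75 million apps per year. That's roughly 4,800 apps per day. Rough industry estimates put annual Play Store submissions at 500+ million, which would make Google's overall rejection rate roughly 0.35%. That low figure mostly reflects how many submissions are legitimate. It is not the chance that a malicious app gets through.

If you're a malware developer, the odds of any single malicious submission getting through aren't great. But they're also not terrible if your attack is sophisticated enough. And there are alternatives: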

- Submit 100 variants of the same malware. Even if each variant has, say, a 99% chance of rejection, the odds that at least one of the 100 gets through are roughly 63% if each review is independent, so the math works

- Spread submissions across time. Submit 5 variants per day instead of 100 at once. Harder for automated systems to recognize the pattern

- Start with legitimate functionality. Create a real calculator app, build user base, then update it with malicious behavior. By the time the update gets detected, you've already harvested the data
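The arithmetic in this section takes only a few lines to check. The 500 million submissions figure is the rough industry estimate quoted above, and the 99% per-variant rejection rate is an illustrative assumption. The variant calculation also assumes each review is independent. That assumption is the weak point of the attacker's plan, because variants of the same malware tend to be caught by the same models. Spreading submissions over time is an attempt to weaken that correlation.

```python
blocked_per_year = 1_750_000
submissions_per_year = 500_000_000  # rough industry estimate, not a Google figure

per_day = blocked_per_year / 365
rejection_rate = blocked_per_year / submissions_per_year


def p_at_least_one_passes(p_reject: float, n_variants: int) -> float:
    """Chance that at least one of n variants evades review, ASSUMING independent reviews."""
    return 1 - p_reject ** n_variants


print(f"{per_day:,.0f} blocked per day")               # 4,795 blocked per day
print(f"{rejection_rate:.2%} overall rejection rate")  # 0.35% overall rejection rate
for n in (1, 10, 100):
    print(n, f"{p_at_least_one_passes(0.99, n):.1%}")  # 1.0%, then 9.6%, then 63.4%
```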

Google's defenses are good, but perfect defense is impossible. The goal isn't zero breaches; it's minimizing impact and making attacks expensive.

Privacy Considerations in Security Analysis

There's a tension between thorough security analysis and user privacy. To analyze what an app does, Google's systems must inspect the code and monitor behavior. Some of that inspection might reveal how the app works, user data it accesses, or personal information in the code itself.

Google has technical controls to minimize privacy impact. Sandboxes run in isolated environments that don't connect to real networks. The analysis is automated rather than performed manually by humans. Data is deleted after analysis, and personally identifying information is stripped.

But perfect privacy-preserving analysis is hard. As privacy regulations tighten, this becomes an interesting problem space. How do you verify an app doesn't do something bad without inspecting it in detail?

Potential solutions include differential privacy and secure multi-party computation. Differential privacy adds calibrated mathematical noise to results, so statistical patterns survive but no individual's data can be singled out. Secure multi-party computation lets several parties jointly compute a result without any of them seeing the others' inputs in full.
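The Laplace mechanism is the textbook differential-privacy technique for count queries, and it shows what "adding mathematical noise" means in practice. The sketch below implements it. The query (how many analyzed apps contacted some server), the epsilon value, and the helper names are hypothetical. Nothing here describes Google's actual analysis pipeline.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample: the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random, sensitivity: float = 1.0) -> float:
    """Laplace mechanism: add noise with scale sensitivity/epsilon to a count query."""
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)
# Hypothetical statistic: how many analyzed apps contacted a given server (true value 1,000).
noisy = [private_count(1_000, epsilon=0.5, rng=rng) for _ in range(10_000)]
print(sum(noisy) / len(noisy))  # close to 1000 on average, though each single answer is noisy
```

A smaller epsilon means a larger noise scale, which gives stronger privacy for individuals but less precise answers.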

Google is investing in these areas, but they're still emerging technologies with practical limitations.

Chart (estimated data): real-time threat intelligence is projected to have the highest impact on app security by 2026, followed closely by more sophisticated AI models.

Comparing Security Approaches: Automated vs. Manual

Google's strategy increasingly emphasizes automated AI-driven defenses over human review. But both have strengths and weaknesses.

Automated Systems (AI/ML)

Strengths: Fast, consistent, scalable, no fatigue factor, can process millions of apps

Weaknesses: Potential false positives, difficulty with novel/sophisticated attacks, can be fooled by determined attackers

Human Review

Strengths: Nuanced judgment, can understand context and intent, good at catching edge cases and social engineering, can adapt to new attack types quickly

Weaknesses: Slow, expensive, fatigue leads to errors, inconsistent, can't scale to review millions of apps

Google's approach is hybrid: automated systems do the bulk of the work and flag edge cases for human review. This combines the scalability of automation with the judgment of humans.

But as attack and submission volumes grow, the ratio shifts further toward automation. There simply aren't enough human reviewers to handle the scale, so investment in AI becomes essential.

What This Means for Android Users

If you use Android and install apps from Google Play, what do Google's security investments mean for you?

Significantly Lower Risk Than Alternatives

Play Store is widely regarded as the safest app distribution channel on Android. The combination of automated analysis, human review, and continuous monitoring makes it much harder for malware to succeed.

If you only install from Play Store, you're in a strong security posture. Not perfect—determined attackers with resources might still find ways through. But far better than sideloading or using alternative stores.

Continuous Monitoring After Installation

Play Protect doesn't stop after you install an app. It continues monitoring behavior on your device. If an app starts exhibiting malicious behavior after installation, Play Protect can warn you or block the app.

This means even if a malicious app somehow makes it to Play Store, it likely gets caught eventually. The window of exposure is narrow.

The Tradeoff Is Privacy and Openness

Google's security requires extensive analysis of apps, which means inspection of code and behavior. This has privacy implications, though Google works to minimize them.

Also, strong security means there's a gatekeeper (Google) deciding which apps Play Store distributes. This is safer but less open than a fully open system, although Android still allows sideloading, which iOS has historically restricted. If Google mistakenly rejects your app or bans your developer account, you have limited recourse.

Users uncomfortable with this tradeoff can sideload apps, but they accept higher risk.

Advanced Persistent Threats (APTs) and Nation-State Actors

Google's report focuses on mass-market malware: apps designed to defraud consumers, steal data, or display ads. But there's another category: sophisticated attacks by nation-states and criminal organizations.

APT groups have resources to develop zero-day exploits (previously unknown vulnerabilities), craft bespoke malware, and target specific high-value victims. They might avoid Play Store entirely and use targeted distribution.

Google's public defenses are optimized for mass-market threats. Sophisticated APTs require additional defenses: private security teams, threat intelligence sharing with governments, specialized incident response.

Google probably has private teams and programs for this, but they don't discuss it publicly for obvious reasons.

For most users, this isn't a practical concern. APTs target organizations and high-value individuals, not random users. If you're reading this article, you're probably not a target.

The Role of User Behavior in Security

No matter how good Google's automated defenses are, user behavior remains critical.

Permission Grants

Android asks users for permission to access sensitive resources (camera, microphone, location, contacts). Users can grant or deny each permission.

Many users grant permissions without thinking. "This app needs location to work? Sure." But it creates risk. If the app is malicious, it now has location access. If the app is legitimate but poorly secured, attackers might compromise it and gain location access.

Better practice: only grant permissions the app actually needs. A calculator app doesn't need location. A weather app doesn't need contacts.

App Source Selection

Using Play Store is much safer than sideloading, but it's still important to check:

- Who developed the app? Is it an official developer or a suspicious clone?

- What are the reviews saying? Are users complaining about intrusive behavior?

- What permissions does it request? Are they appropriate for the app's function?

- Has it been updated recently? Abandoned apps don't get security fixes.

A few minutes of due diligence catches obvious red flags.

System Updates

Android updates patch security vulnerabilities. Phones that don't receive updates are vulnerable to known exploits. Keeping your phone updated is one of the highest-impact security practices.

Google pushes security updates regularly, but not all phones receive them at the same time. Older phones, particularly from smaller manufacturers, might not get updates at all.

Malware Families and Persistence

Google's report mentions detecting millions of new malware samples, but many are variants of existing malware families. Understanding the structure helps explain why detection remains challenging.

Malware Variants

When malware developers find a profitable attack, they create variants. Change the app's name, icon, description. Change the code structure (obfuscate) to avoid signature detection. Add new features or remove old ones. Each variant looks new to signature-based detection but is fundamentally the same malware.

Google's ML systems help here. Machine learning models can recognize patterns across variants even when the code is obfuscated.
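A toy example shows the gap between signature matching and pattern matching. An exact hash changes completely when a variant is renamed and obfuscated, but a set of extracted behavior features still overlaps heavily. Here Jaccard similarity stands in for a trained ML model, and the code snippets and feature names are invented for illustration.

```python
import hashlib


def signature(code: bytes) -> str:
    """Exact-match signature: changing any byte produces a different hash."""
    return hashlib.sha256(code).hexdigest()


def jaccard(a: set[str], b: set[str]) -> float:
    """Set overlap in [0, 1]; a simple stand-in for a learned similarity model."""
    return len(a & b) / len(a | b) if a | b else 1.0


# Hypothetical known sample and a renamed, obfuscated variant of it.
original_code = b"class Calc { void run() { upload(readContacts()); } }"
variant_code = b"class X9 { void a() { b(c()); } }"
original_features = {"READ_CONTACTS", "INTERNET", "contacts_read", "http_post_unknown_host"}
variant_features = original_features | {"string_decrypt"}

print(signature(original_code) == signature(variant_code))  # False: the signature misses the variant
print(jaccard(original_features, variant_features))         # 0.8: behavior still overlaps heavily
```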

Malware Evolution

Over months and years, malware families evolve. New variants add capabilities. Developers respond to defenses by changing tactics. The malware-defender arms race creates constant evolution.

Tracking this evolution is how security researchers build threat intelligence. "Malware family X was seen in Y attacks with Z payload changes." This intelligence feeds into security tools to catch new variants faster.

Botnets and Command-and-Control

Sophisticated malware often operates through botnets: networks of infected devices controlled remotely. Infected devices receive commands from command-and-control (C2) servers telling them what to do.

Google's defenses can detect both the malware client (on user devices) and attempts to contact C2 servers. If a user gets infected, Play Protect might catch it. If it slips through, the device might get flagged when it tries to contact the C2 server.
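Flagging C2 contact often means checking outbound destinations against threat-intelligence feeds. The sketch below assumes a hypothetical blocklist built from reserved `.example` and `.test` domains. It matches a host or any of its parent domains. Real systems would likely combine such lists with behavioral signals, such as regular beaconing intervals.

```python
from urllib.parse import urlsplit

# Hypothetical threat-intel feed of known C2 domains (reserved example names).
KNOWN_C2 = {"c2.bad.example", "update-check.evil.test"}


def is_c2(url: str, blocklist: set[str] = KNOWN_C2) -> bool:
    """True if the URL's host, or any parent domain of it, is on the blocklist."""
    host = (urlsplit(url).hostname or "").lower()
    parts = host.split(".")
    # Check "a.c2.bad.example", then "c2.bad.example", then "bad.example", and so on.
    return any(".".join(parts[i:]) in blocklist for i in range(len(parts)))


print(is_c2("https://c2.bad.example/beacon"))  # True
print(is_c2("https://www.google.com/"))        # False
```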

Disrupting C2 infrastructure is often how security researchers kill botnets.

Looking at Market Share and Ecosystem Impact

Android's share of the global smartphone market is roughly 70%, with iOS at 27% and others at 3%. Android's scale means:

- Malware developers target Android more (larger reward)

- Google's infrastructure must handle larger scale

- Android's openness (vs iOS) means alternative distribution channels exist

Google's defenses must be sophisticated to maintain trust at this scale. One major security incident could drive millions of users to iOS.

Regulatory and Compliance Implications

Google's investment in app security has regulatory benefits. The EU's Digital Markets Act requires designated gatekeepers to report on their compliance and to justify measures, including security measures, that restrict third-party apps. The US is considering similar regulations.

Google's annual reports serve as evidence of compliance and good faith effort. The company can point to specific metrics: apps blocked, policy violations caught, investments in technology.

This creates an incentive to not just do security well but to measure and document it publicly. Transparency builds trust.

Competitors are watching. Apple has increased publication of security reports. Microsoft and Amazon are emphasizing security in their app stores.

Future Threat Categories: What's Next?

As defenses improve against current malware, attackers will develop new approaches.

AI-Generated Malware

Generative AI can write code. Malware developers might use AI to create polymorphic malware that changes itself constantly, avoiding signature detection.

Google's AI-powered code understanding might help here—if models can understand the code's intent regardless of its surface structure, polymorphism becomes less effective.

Hardware Exploits

Malware at the hardware level (exploiting CPU vulnerabilities, GPU side-channels) is harder to detect through app analysis. Google's defenses focus on the app level, not the hardware level.

Mitigating hardware-level threats requires cooperation with chipmakers and OS developers. Google is investing here, but progress is slow.

Cross-Platform Attacks

Malware that starts on Android but targets other ecosystems, or vice versa. Sophisticated attacks that chain exploits across multiple devices and platforms.

Detecting these requires threat intelligence across ecosystems, which is challenging due to competitive dynamics.

Social Engineering

No AI system can solve social engineering. If a user is convinced by a phishing attack to sideload a malicious app or grant dangerous permissions, defenses become irrelevant.

Google's best defense here is user education—helping people understand threats and make smart decisions.

FAQ

What is Google Play Protect and how does it work?

Google Play Protect is Google's built-in malware protection for Android devices that have Google Play services. It continuously scans apps for suspicious behavior, monitors device activity for malware signatures, and can warn users or block apps from running if threats are detected. The system covers all apps on your device, not just those from Google Play, making it a device-wide security layer.

How does Google decide which apps to reject from Play Store?

Google uses a multi-layered approach combining automated AI analysis with human review. Automated systems scan app code for malware signatures, analyze requested permissions against functionality, run behavioral analysis in sandboxes, and use generative AI to understand code intent. Apps flagged by automated systems get escalated to human reviewers who make final judgments about legitimacy and policy compliance.

What percentage of submitted apps get rejected by Google?

Google processes roughly 500+ million app submissions annually and blocks around 1.75 million policy-violating apps, representing approximately a 0.35% rejection rate. However, many submissions are updates to existing apps or minor variants. The rejection rate for new, unique apps is likely significantly higher, particularly for suspicious submissions from new developers.

Can malware still get through Google Play's defenses?

Yes, though it's increasingly difficult. No security system is perfect, and determined attackers with resources can sometimes find ways through. However, the window of exposure is typically narrow—apps that slip through detection usually get caught during post-publication monitoring through Play Protect. The combination of automated and human review makes mass-market malware extremely difficult to distribute through Play Store.

Why are fewer apps being rejected in 2025 compared to 2024?

The decline from 2.36 million rejections in 2024 to 1.75 million in 2025 likely indicates that Google's AI-powered deterrence is working. Bad actors are increasingly discouraged from even submitting malicious apps to Play Store because the detection rate is so high. Instead, malware developers are shifting to alternative distribution channels, which is reflected in the surge of malware detected outside Play Store (27 million apps in 2025 vs 13 million in 2024).

What should I do to keep my Android device secure?

First, keep your phone updated with the latest Android security patches. Second, only install apps from Google Play Store rather than sideloading. Third, carefully review app permissions before granting them—only approve permissions the app actually needs for its functionality. Fourth, check app reviews and developer information before installing. Finally, use strong passwords and enable two-factor authentication where available.

How does Google's AI specifically detect malware that has never been seen before?

Google uses behavioral analysis and anomaly detection. Instead of just looking for known malware signatures, the AI models learn what legitimate app behavior looks like, then flag apps that deviate significantly from normal patterns. The system analyzes permission requests, network connections, file system access, and other behaviors. Apps that request permissions inconsistent with their function or exhibit suspicious behavioral patterns get flagged even if they don't match any known malware signature.
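One simple way to "learn what normal looks like" is a per-feature statistical baseline. The sketch below fits a mean and standard deviation for each feature over apps assumed to be legitimate. It then flags any feature that sits more than three standard deviations from the mean. The features, data, and threshold are hypothetical, and production models are far more sophisticated than a z-score.

```python
from statistics import mean, stdev


def fit_baseline(legit_apps: list[dict[str, float]]) -> dict[str, tuple[float, float]]:
    """Per-feature (mean, sample standard deviation) over apps assumed to be legitimate."""
    features = legit_apps[0].keys()
    return {f: (mean([a[f] for a in legit_apps]), stdev([a[f] for a in legit_apps])) for f in features}


def anomalies(app: dict[str, float], baseline: dict[str, tuple[float, float]],
              threshold: float = 3.0) -> dict[str, float]:
    """Features whose z-score magnitude exceeds the threshold, mapped to that z-score."""
    flagged = {}
    for f, (mu, sigma) in baseline.items():
        if sigma == 0:
            continue  # no variation in the baseline, so a z-score is undefined
        z = (app[f] - mu) / sigma
        if abs(z) > threshold:
            flagged[f] = z
    return flagged


# Hypothetical baseline: permissions requested and distinct hosts contacted by legitimate calculators.
legit = [{"permissions": p, "hosts": h} for p, h in [(1, 0), (2, 1), (1, 0), (2, 1), (1, 1), (2, 0)]]
baseline = fit_baseline(legit)
print(sorted(anomalies({"permissions": 6, "hosts": 9}, baseline)))  # ['hosts', 'permissions']
print(anomalies({"permissions": 2, "hosts": 1}, baseline))          # {}
```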

What's the difference between sideloading and using Google Play Store?

Google Play Store has built-in security review and monitoring. Apps go through both automated analysis and human review before publication. After publication, Play Protect continues monitoring behavior. Sideloading means installing apps from alternative sources that lack these security measures. Sideloaded apps bypass Play Store's review entirely and may not receive the same monitoring. This makes sideloading significantly riskier for security, though it offers more openness and user control.

Do app developers know when Google's AI rejects their submission?

Yes, developers receive notifications when their apps are rejected, including a reason for rejection. If the rejection is due to policy violation or suspected malware, the notification explains the issue. Developers can then fix the problem and resubmit. If they believe the rejection is a false positive, they can appeal to human reviewers, and most wrongful rejections are overturned within 48 hours.

How does Google balance security with privacy when analyzing apps?

Google faces a genuine tension between thorough security analysis and user privacy. The company uses technical controls including sandboxed analysis environments that don't access real user data, automated analysis that doesn't require human inspection, and data deletion after analysis completes. Personally identifying information is stripped from code before analysis. However, perfect privacy-preserving analysis is technically difficult, so Google continues investing in technologies like differential privacy and secure computation to improve this balance.

Conclusion: The Future of App Security

Google's 2025 security report reveals something important that might not be obvious at first glance: fewer apps getting rejected doesn't mean security is failing. It means security is working. Bad actors are being deterred. They're not even trying to submit to Play Store anymore because the detection rate is too high.

This represents a fundamental shift in how platform security works. The goal isn't to catch bad apps after they cause damage—it's to make the platform so difficult to attack that attackers don't bother. Prevention instead of punishment.

Google's AI investments made this shift possible. Machine learning models that can understand code, recognize behavioral patterns, and flag anomalies operate at a scale human reviewers never could. An AI system can analyze millions of apps in the time it takes a human to review one.

But this comes with tradeoffs. More sophisticated security means more oversight, more gatekeeping, more data analysis. Users get safer devices but with less control and privacy. Developers face stricter requirements but get access to a platform with billions of users and strong security reputation.

The numbers suggest the tradeoff is working. Android users, by and large, aren't getting infected with malware from Play Store. That's not luck. That's sophisticated engineering and AI-powered defense working at scale.

Looking forward, Google's plans to increase AI investment in 2026 suggest the company sees this as critical to competitive advantage. As attacks evolve, defenses must evolve faster. AI gives Google that capability.

The question for users is simple: do you trust Google's judgment about which apps are safe? If yes, Play Store is a solid choice with strong security. If no—if you worry about Google's gatekeeping or privacy implications—Android's openness lets you take different risks.

Either way, understanding how these defenses work helps you make informed decisions about your security. And that might be the most important defense of all: users who understand the threats and the tradeoffs.

Key Takeaways

- Google's AI systems blocked 1.75 million malicious apps from Play Store in 2025, down 26% from 2024, indicating successful deterrence of bad actors upstream

- Automated systems now run 10,000+ safety checks per app using machine learning for code analysis, permission validation, and behavioral detection

- Developer account bans dropped 49% year-over-year as bad actors are increasingly discouraged from targeting Play Store's robust defenses

- Malware detected outside Play Store surged to 27 million in 2025 (108% increase), revealing attackers have shifted to sideloading and alternative distribution channels

- Google plans to increase AI investments in 2026 with more sophisticated models, real-time threat intelligence, and developer education to stay ahead of evolving threats
