Government AI Security Breach: Inside the ChatGPT Incident [2025]

In early 2026, one of the most consequential national security lapses in recent memory unfolded quietly on a laptop in Washington. The acting head of the United States' premier cybersecurity agency uploaded sensitive government documents to a public AI tool, bypassing security protocols and potentially exposing internal government information to a commercial system trained on vast amounts of internet data.

This wasn't some low-level analyst making a mistake. This was Madhu Gottumukkala, appointed directly by the Trump administration to lead the Cybersecurity and Infrastructure Security Agency (CISA), the federal body responsible for protecting America's critical infrastructure from cyber threats.

What makes this incident genuinely alarming isn't just what happened, but what it reveals about the gap between government understanding of AI security risks and the reality of how these tools work. When you upload a document to ChatGPT, you're not just storing it privately in the cloud. You're handing it to a commercial provider that, depending on the account's settings, can retain it and use it to train future models, which means its contents could potentially surface in responses given to other users.

This incident exposes a critical vulnerability in how federal agencies are approaching artificial intelligence adoption. It shows that even the people tasked with protecting America's cybersecurity don't fully understand the security implications of the AI tools they're using. And if they don't understand it, what does that say about the thousands of government employees using similar tools without proper oversight?

Let's break down what happened, why it matters, and what comes next.

TL;DR

- The Incident: CISA's acting director uploaded sensitive, unclassified government documents marked "for official use only" to ChatGPT without authorization

- The Breach: The documents triggered multiple automated security warnings but were uploaded anyway, and the system potentially trained on them

- The Exposure: Unclassified but internal government documents may now be part of ChatGPT's training data, and fragments could potentially surface for other users of the platform

- The Accountability: The official reportedly failed a counterintelligence polygraph test and subsequently suspended six career security staff members

- The Risk: This incident highlights the massive gap between government AI security policy and actual AI security risks

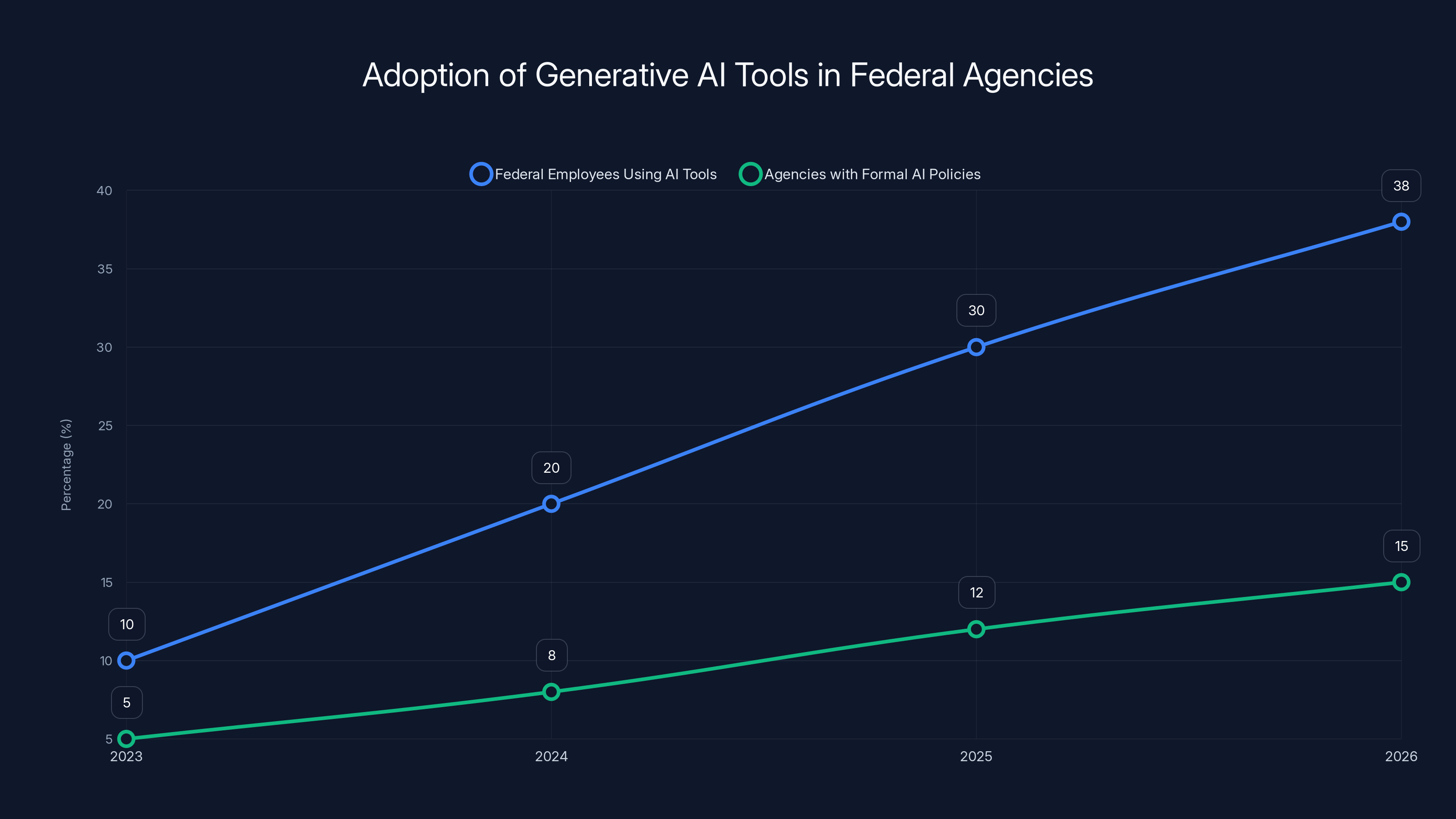

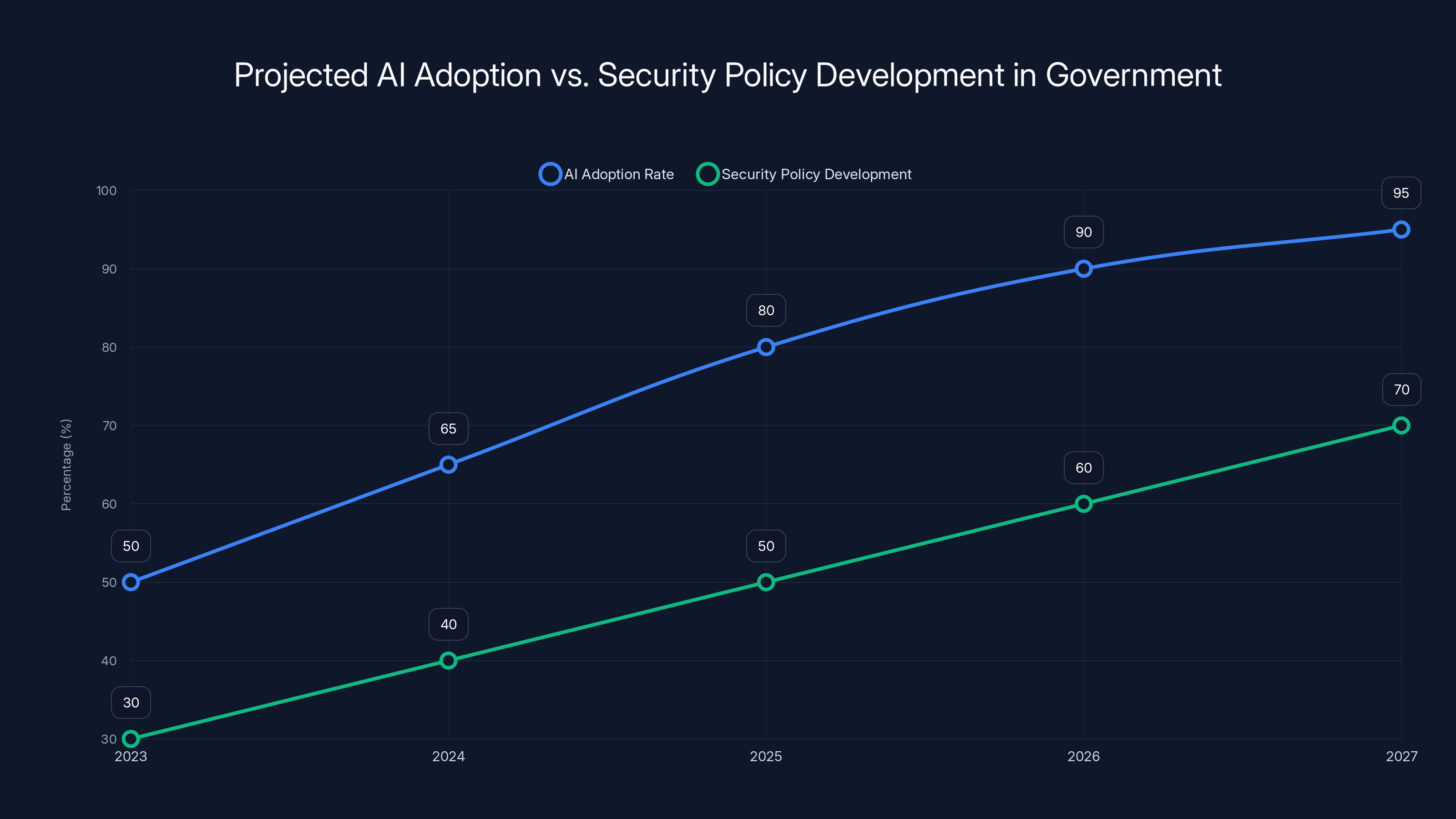

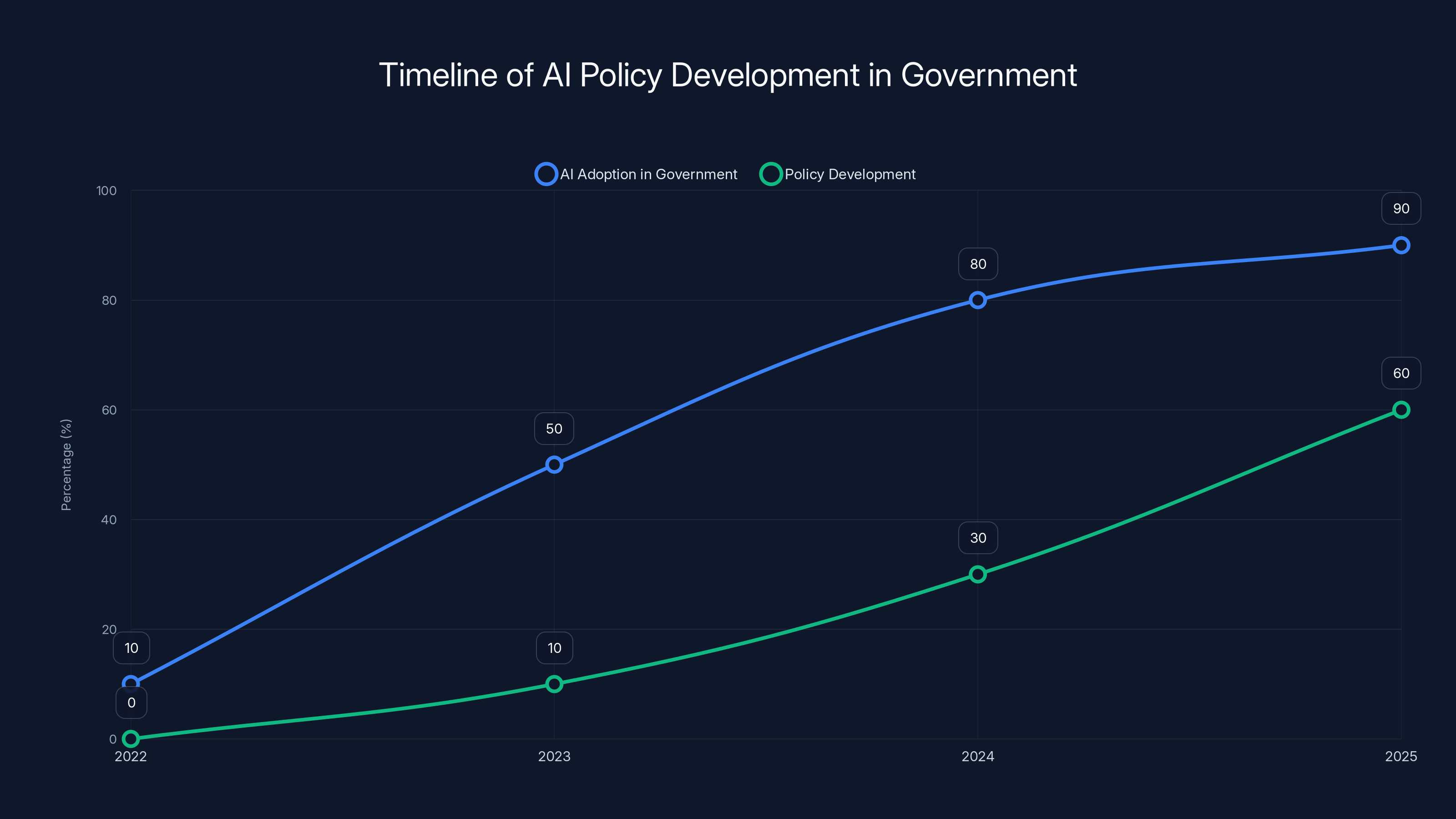

By 2026, it's projected that 38% of federal employees will use generative AI tools, yet only 15% of agencies will have formal policies. Estimated data highlights a significant policy gap.

What Exactly Happened That Day

In late January 2026, Madhu Gottumukkala, who had been appointed as the acting director of CISA just months earlier under the Trump administration, made a series of requests that would eventually trigger a major security investigation within the Department of Homeland Security.

He wanted to use ChatGPT.

This wasn't unusual in 2026. By that time, ChatGPT had become ubiquitous in government offices, much like email or word processors. Federal employees across dozens of agencies were using large language models for draft writing, brainstorming, research, and analysis. But CISA, the agency specifically tasked with cybersecurity, had restrictions in place.

Most CISA employees couldn't access ChatGPT from their government networks. The restriction was intentional, a security measure designed to prevent exactly what was about to happen. But Gottumukkala, as the acting director, apparently requested and received an exception.

Why did he get one? Officials have been cagey about this detail. Was it a formal security waiver? An informal blessing? A misunderstanding about what exceptions were allowed? The exact mechanics of how he was granted access have never been fully disclosed, but what we know is that he had it when others didn't.

Once he had access, he began uploading documents. We don't know exactly which documents or how many, but they were marked "for official use only" (FOUO), a handling designation for unclassified material that is still protected and not meant for public distribution. These weren't nuclear launch codes or classified intelligence. But they were sensitive contracting documents related to CISA operations.

Then the system did exactly what it was supposed to do. Multiple automated security warnings triggered, the kind of alerts designed to stop exactly this scenario. These aren't vague, easy-to-miss notices. They're specifically designed to catch people uploading government documents they shouldn't.

But the documents were uploaded anyway.

Once they were in ChatGPT, they were outside the government's control. Under the consumer product's default settings, OpenAI can use conversations to train future models, at which point the documents would become part of the statistical patterns the model uses to generate responses. In theory, fragments of them could show up in responses to other users. Gottumukkala's ChatGPT interactions weren't private, even if the interface made them feel that way.

It took time for the Department of Homeland Security to discover what had happened. When they did, the shock wasn't just about the documents themselves. It was about who had done it and what it said about the security culture at the nation's premier cybersecurity agency.

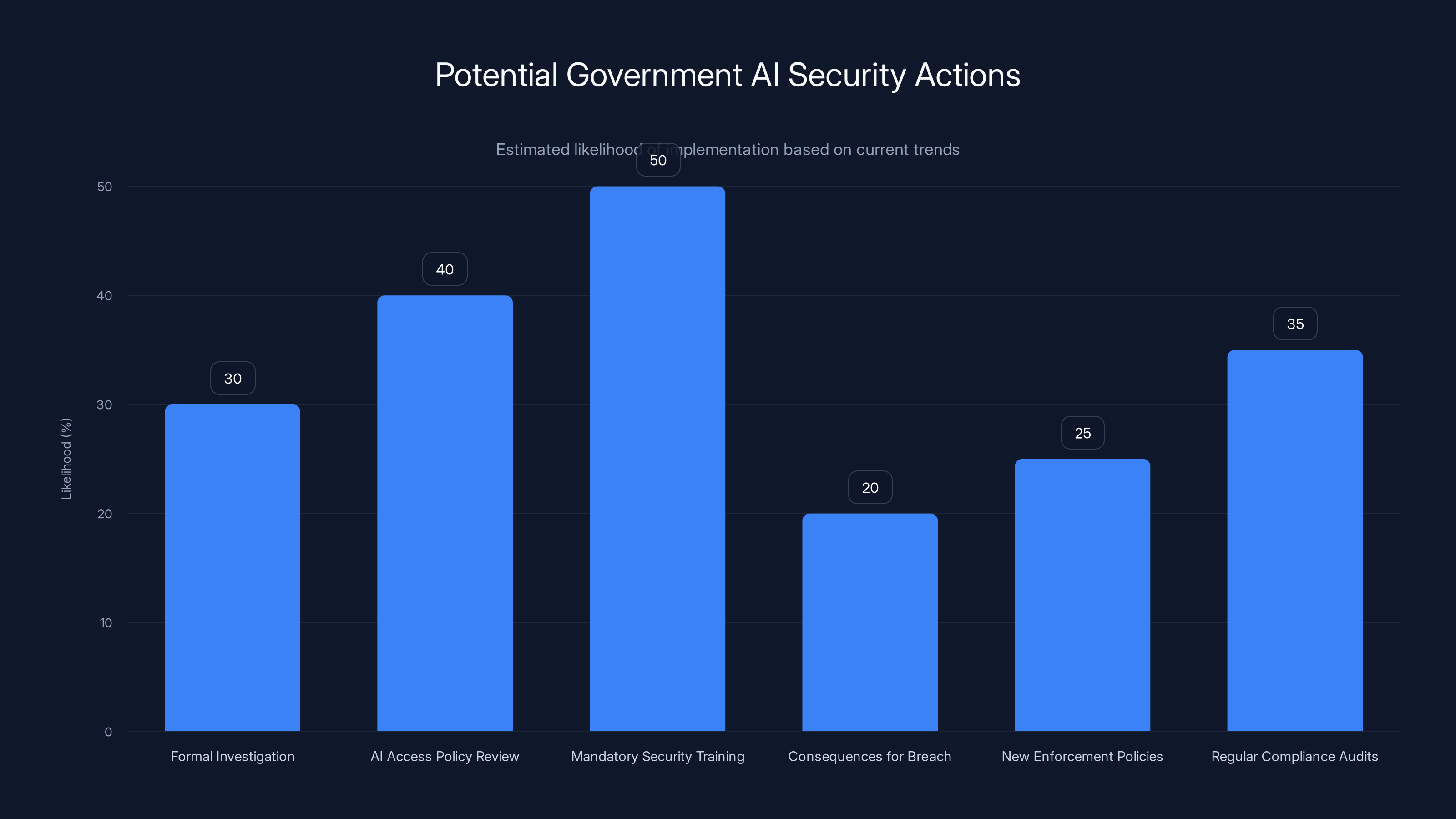

Estimated data suggests that while some actions like mandatory security training might have a moderate chance of implementation, others such as enforcing new policies and regular audits are less likely to occur.

The Counterintelligence Polygraph Failure That Nobody Wanted to Talk About

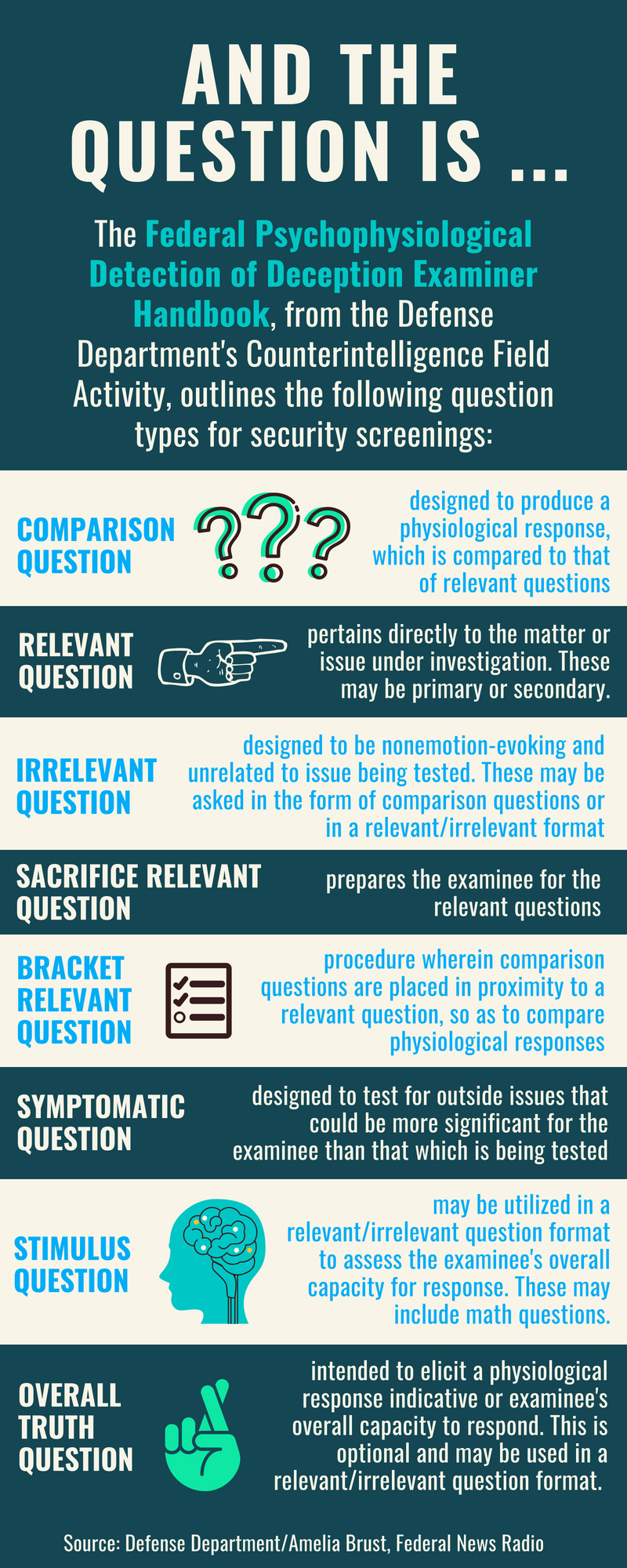

Here's where the story gets stranger. Before Gottumukkala's appointment to CISA, he underwent a counterintelligence polygraph test, a standard requirement for federal security positions. The test is designed to identify potential security risks, foreign influence, and past breaches of trust.

He failed it.

Not slightly. Significantly. The Department of Homeland Security later claimed this polygraph was "unsanctioned," suggesting it was administered improperly or without proper authorization. But that raises an immediate question: why was it unsanctioned? Who ordered it? Why wasn't a properly administered version done?

The timeline is important here. Gottumukkala served as the Chief Information Officer of South Dakota under Governor Kristi Noem. When Noem was selected for Trump's cabinet, Gottumukkala followed, eventually landing at CISA. But somewhere along the way, someone ordered a counterintelligence polygraph test, he failed it, and then the administration claimed the test itself was invalid.

This is the kind of bureaucratic maneuvering that usually gets buried in government reports. Somebody wanted him in the position badly enough to dismiss the failed polygraph as procedurally flawed. That somebody probably had power and didn't see the test results as disqualifying.

Then came the ChatGPT incident. Suddenly, the previously dismissed polygraph failure wasn't ancient history anymore. It was evidence of a pattern. Here was someone who had already failed a counterintelligence screening, and now they'd uploaded sensitive government documents to a public AI system.

What happened next is telling. After the uploads came to light, Gottumukkala suspended six career security staff members from accessing classified information. This sounds like a management decision addressing a security concern, but the timing and context suggest something else. Six people knew about the ChatGPT incident. Six people's clearances suddenly became restricted.

Was this a legitimate security decision? Or was it professional retaliation against people who couldn't be ignored because they had discovered something serious?

Why This Matters More Than A Simple Mistake

At first glance, this looks like a security oopsie. A busy executive uploads something sensitive to the wrong system. Happens all the time in the private sector. But this isn't the private sector. This is the agency literally responsible for protecting American critical infrastructure from cyberattacks.

The irony would be funny if it weren't so serious. CISA issues cybersecurity guidance to every other federal agency. They set standards. They publish best practices. They tell other organizations how to protect their data. And the head of their organization just violated basic security practices that any CISA guideline would have flagged.

But here's the deeper problem: Gottumukkala apparently didn't think what he was doing was that risky. That's the actual scary part. He had an exception to use ChatGPT. He uploaded documents. He presumably thought it was fine.

This reveals a fundamental disconnect between how government security leaders understand AI systems and how those systems actually work. Many people in government still think about data in pre-AI categories. You store it in a database. You encrypt it. You control access. Information stays where you put it.

That's not how large language models work. When you upload data to ChatGPT, you're not storing it the way you store a file. You're handing it to a service that can feed it into model training, where it gets incorporated into the model's weights and informs predictions on new queries. Once that happens, there's no taking it back. You can't unsend that data. You can't revoke its access.

If Gottumukkala understood that, he probably wouldn't have uploaded the documents. If he did understand it and uploaded them anyway, that's an even bigger problem.

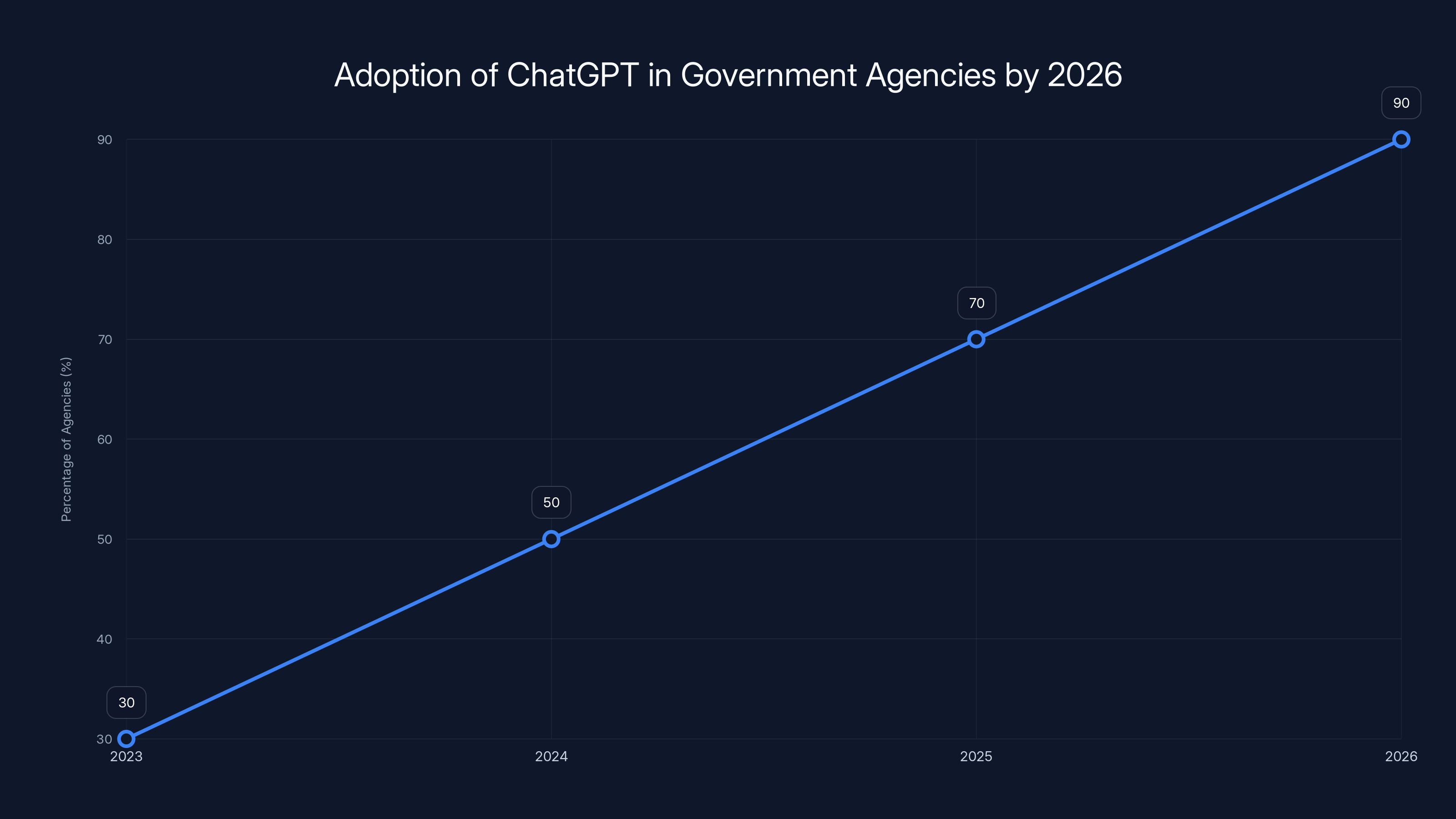

By 2026, ChatGPT was used by approximately 90% of government agencies, reflecting its integration into daily operations. (Estimated data)

The ChatGPT Exception That Shouldn't Have Existed

How did Gottumukkala get permission to use ChatGPT when other CISA employees couldn't? This is one of the most important unanswered questions from the incident.

Various explanations have been offered. Maybe he needed to evaluate ChatGPT for official use. Maybe the exception was intended to be limited. Maybe it was granted informally without proper documentation. Maybe someone assumed the acting director could be trusted not to upload sensitive documents.

That last one is particularly damning. If the assumption was that the head of the agency wouldn't violate basic security protocols, that's not a security policy. That's security theater.

Real security policies work on the principle that anyone could make a mistake, regardless of position. They assume honest people will accidentally do the wrong thing and that malicious actors might compromise even trusted individuals. Granting exceptions based on personal trust is exactly what leads to breaches.

Moreover, if the exception was granted for legitimate work purposes, there should have been guardrails. The exception should have been limited to specific documents. There should have been a process for reviewing what was uploaded. There should have been regular audits.

Instead, it seems like someone got access to ChatGPT and uploaded whatever they wanted. No oversight. No real-time monitoring. No review process.
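A guardrail of the kind described above could start with something as simple as a pre-upload check for handling markings. The sketch below is a minimal illustration, not CISA's actual tooling: the marking patterns, the function names `scan_for_markings` and `upload_allowed`, and the block-on-any-hit rule are all assumptions made for the example.

```python
import re

# Hypothetical list of handling markings to look for; a real deployment
# would take these from the agency's own marking guide.
MARKING_PATTERNS = [
    r"for\s+official\s+use\s+only",
    r"\bFOUO\b",
    r"controlled\s+unclassified\s+information",
    r"\bCUI\b",
]


def scan_for_markings(text: str) -> list[str]:
    """Return the patterns from MARKING_PATTERNS that appear in the text."""
    return [
        pattern
        for pattern in MARKING_PATTERNS
        if re.search(pattern, text, flags=re.IGNORECASE)
    ]


def upload_allowed(text: str) -> bool:
    """Allow the upload only if no handling marking is found (assumed rule)."""
    return not scan_for_markings(text)


if __name__ == "__main__":
    sample = "FOR OFFICIAL USE ONLY\nVendor contract summary"
    print("markings found:", scan_for_markings(sample))
    print("upload allowed:", upload_allowed(sample))
```

A check like this only catches documents that carry an explicit marking. It would miss sensitive text with the banner stripped, which is why the review and audit steps still matter.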

This is how sophisticated breaches happen. Not because people are intentionally malicious, but because the systems meant to prevent accidents are either too permissive or poorly enforced.

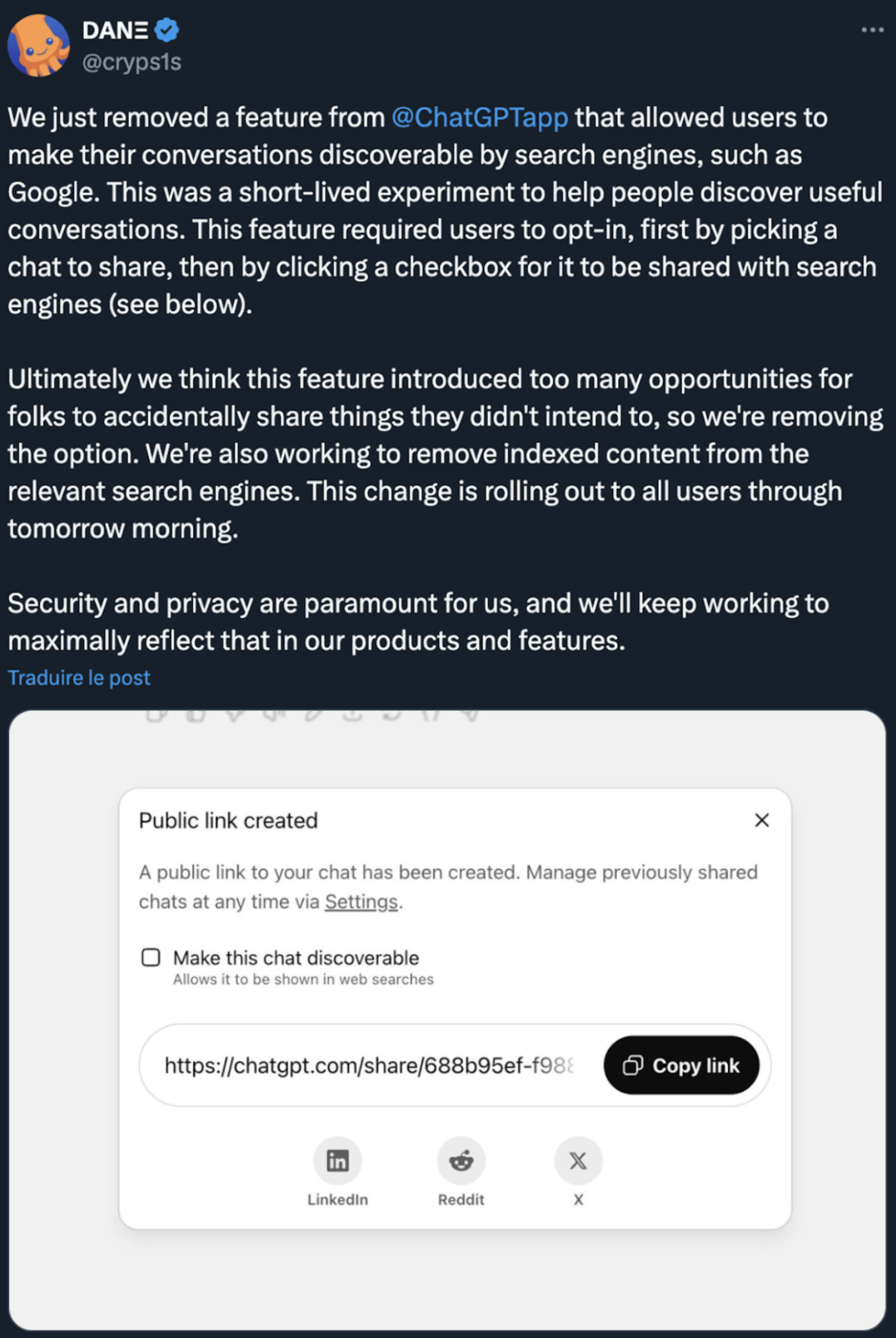

What OpenAI Did (And Didn't Do)

When sensitive government documents get uploaded to ChatGPT, what happens? OpenAI has to balance competing interests. On one hand, they want to be a good partner to government and sensitive to national security concerns. On the other hand, they built their business model partly on training AI models on vast amounts of data.

Their public position is that they log user data in case of abuse or illegal activity, but they don't generally delete training data from models after it's incorporated. This is a technical and business reality. Removing specific data from a trained neural network is extraordinarily difficult and computationally expensive.

So what actually happened to Gottumukkala's documents? That hasn't been publicly established. If they were used for training, they are now incorporated into models that millions of people use. Could another ChatGPT user craft a prompt that recreates fragments of those documents? Probably not deliberately, but AI systems can leak training data in unexpected ways.

OpenAI didn't publicly confirm any of this because they don't usually comment on specific user interactions. But the Department of Homeland Security sought to determine whether there was "harm to government security." That's bureaucratic language for "we're trying to figure out if sensitive information got compromised and to what extent."

The answer was almost certainly yes to the first part (harm occurred) and unclear on the second part (extent unknown).

What made the situation more complicated is that the documents were unclassified but marked FOUO. They're not at the level of state secrets, but they're not public either. Government contracting information. Internal processes. Operational details. The kind of thing that's useful to adversaries but not immediately catastrophic if disclosed.

Estimated data shows AI adoption in government is outpacing security policy development, highlighting a growing gap that could lead to more security breaches.

The Security Staff Suspensions That Changed Everything

What happened after Gottumukkala's uploads came to light tells us something important about how power works in government security agencies.

Six career security staff members, the kind of people who've spent decades protecting American secrets, suddenly found their access to classified information suspended. This is a massive career blow. These people's entire professional identity might revolve around security clearance work. A suspension means no intelligence briefings, no classified projects, no access to the secure facilities where real national security work happens.

Why were they suspended? The official reason was that they knew about the ChatGPT incident and hadn't reported it properly. But the timing and context suggest something different.

These six people found out about the breach. They probably flagged it. They probably said "this is a problem." And then the acting director of CISA suspended them.

This is classic retaliation, even if it's wrapped in security justification. In organizations with healthy security cultures, discovering a breach by a senior official and reporting it up the chain should result in investigation and potential consequences for the official. Instead, the people who discovered it lost their clearances.

What message does that send? Don't report problems. Keep quiet. If your boss does something dangerous, that's not your problem to solve.

This kind of culture is how you get massive breaches. When people stop reporting security issues because they're afraid of career consequences, the organization becomes vulnerable to exactly the kinds of attacks it's meant to prevent.

Eventually, after pressure from oversight bodies and the media, the suspensions were reconsidered. But the initial instinct was to silence the people who found the problem, not to fix the problem itself.

Understanding The AI Security Implications

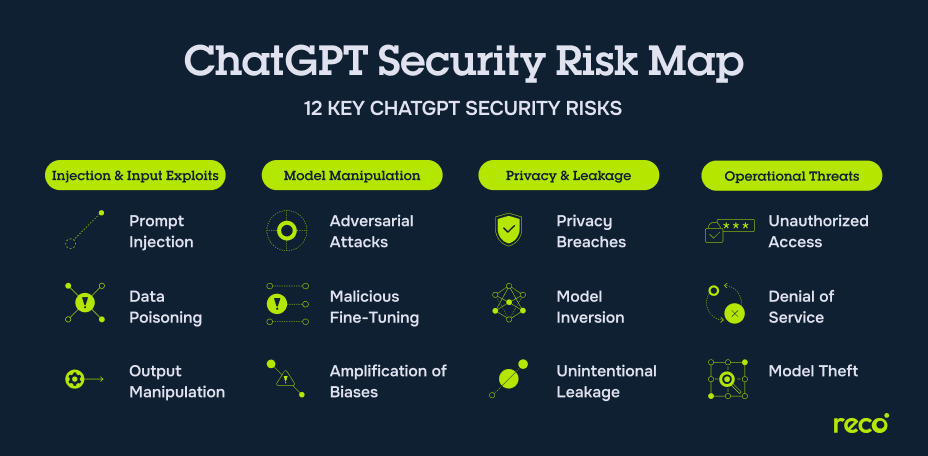

Let's talk about the actual technical security issues at play here, because they're more serious than most government officials seem to realize.

When you upload a document to ChatGPT, several things happen:

First, the document gets processed by the model. The text is broken down into tokens, analyzed for meaning and context, and incorporated into the calculation of the model's response. Anything you ask ChatGPT in that conversation can draw on the document.

Second, the document likely gets stored in OpenAI's systems as part of your conversation history. This is encrypted and supposedly subject to privacy policies, but it's accessible to OpenAI's employees and systems.

Third, and most importantly, the document can become training data. Unless training is turned off for the account, future versions of ChatGPT will potentially train on this data, meaning the patterns and information it contains become part of the model that millions of people use. This is probably the most permanent part of the process.

Now apply this to sensitive government documents. Contracting information. Operational procedures. Vendor relationships. Any of this could be valuable to someone trying to understand how government works, identify vulnerabilities, or plan attacks.

The documents Gottumukkala uploaded weren't nuclear secrets, but they were sensitive in ways that might not be obvious to someone not steeped in government operations. Which agencies work with which vendors? What are the specific security procedures CISA uses? What are the technical systems involved?

An adversary using ChatGPT might be able to infer some of this from Gottumukkala's documents. They might be able to ask the model questions that, by the way it responds, reveal that it has seen government documents. This is usually called "training data extraction." (It is sometimes confused with "prompt injection," a different attack in which crafted input overrides a model's instructions.) Clever prompts can sometimes get AI models to leak pieces of their training data.
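One way a defender might test for this kind of leakage is to compare a model's output against a known sensitive document and look for long verbatim overlaps. The sketch below is illustrative only: the function names, the five-word window, and both sample strings are assumptions, and it makes no call to any real model.

```python
def word_ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Return the set of n-word sequences in the text (lowercased)."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def shared_ngrams(document: str, model_output: str, n: int = 5) -> set[tuple[str, ...]]:
    """Return n-word sequences that appear verbatim in both texts."""
    return word_ngrams(document, n) & word_ngrams(model_output, n)


if __name__ == "__main__":
    # Both strings are made up for the example.
    document = "the vendor will deliver the monitoring system by the end of the quarter"
    output = "reports say the vendor will deliver the monitoring system next year"
    for gram in sorted(shared_ngrams(document, output)):
        print(" ".join(gram))
```

Short windows produce false positives on common phrases, so a longer window or a minimum number of hits would be a reasonable refinement. Paraphrased leakage would not be caught at all.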

The scary part is that the people responsible for protecting against exactly this kind of breach didn't seem to understand the risk. That's not a personal failing of Gottumukkala necessarily. It's a systemic failure of the government to really understand how modern AI systems work and what uploading data to them actually means.

The rapid adoption of AI in government outpaced policy development, leading to security vulnerabilities. Estimated data shows a lag in policy implementation compared to AI usage.

How This Compares To Other Government Data Breaches

Historically, government breaches come from a few categories: stolen credentials, unpatched vulnerabilities, insider threats, and accidents. Gottumukkala's incident is a new category: unforced AI adoption errors.

The OPM breach of 2015 involved stolen credentials and sophisticated hacking. The Equifax breach wasn't government but showed what happens when organizations don't patch known vulnerabilities. The Edward Snowden leaks involved an insider taking data.

But uploading unclassified sensitive documents to a public AI system? That's new. That's what happens when powerful people adopt new technology faster than their organizations can develop policies to govern it.

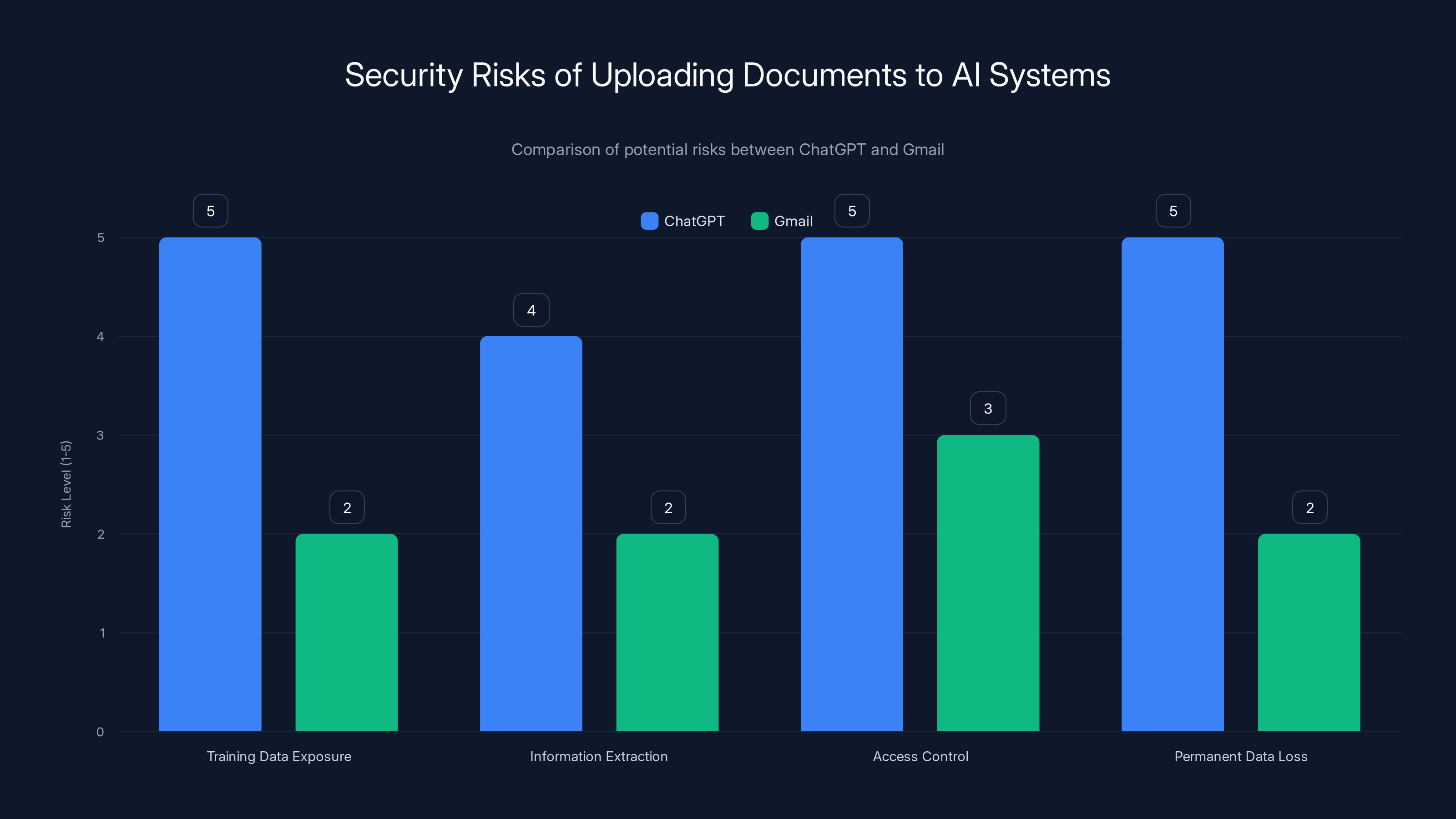

The scale is also different. If Gottumukkala had emailed the documents to a Gmail account, it would be a major breach. But that breach would be limited to Google and anyone who compromised his Gmail. When you upload to ChatGPT, you're also giving the data to OpenAI and, more importantly, potentially to everyone who will eventually use future versions of a model trained on that data.

It's not a one-time exposure. It's permanent incorporation into a tool used by tens of millions of people.

The Policy Vacuum Where This Breach Happened

Why doesn't the federal government have better policies about uploading data to public AI systems? Partly because the technology moves faster than policy, and partly because until recently, nobody in government was really thinking about it as a serious risk.

Compare this to how the government handled cloud computing in the early 2010s. Agencies had to figure out rules about what could go to Amazon Web Services, what needed to stay on-premises, who could approve cloud usage. It took time, but eventually, there were policies.

AI adoption skipped that process. ChatGPT launched in November 2022. By 2024, it was already widely used in government despite no formal policies. Agencies were just... using it. Employees liked it. Executives liked it. It was productive. The fact that uploading data to it had fundamental security implications seemed to escape notice.

The White House eventually issued guidelines about AI use in government, but by then, the damage was already done. Multiple agencies had already uploaded sensitive information to ChatGPT, Claude, and other systems. CISA had already restricted access to it for most employees while giving exceptions to leadership. Nobody had really thought through what the security implications were.

If the government had a policy in place before Gottumukkala arrived at CISA, it probably would have been something like:

- No unclassified but sensitive documents in public AI systems

- All AI usage requires documented approval

- Regular audits of what data is being uploaded

- Training for all staff on AI security risks

- Consequences for violations

But because there was no policy, just a vague restriction that applied to most people but not the acting director, disaster happened.
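The policy points listed above could be written down as a check that runs before any upload. This is a hedged sketch rather than any agency's real rule set: the `UploadRequest` fields and the `policy_violations` function are hypothetical names invented for the example, and the audit and consequences items are left out because they are processes, not per-upload checks.

```python
from dataclasses import dataclass


@dataclass
class UploadRequest:
    """Hypothetical record of one attempted upload to an AI tool."""
    user: str
    tool_is_public: bool           # True for a consumer/public AI service
    document_is_sensitive: bool    # e.g. marked FOUO
    has_documented_approval: bool
    user_completed_training: bool


def policy_violations(request: UploadRequest) -> list[str]:
    """Return the reasons this upload would violate the sketched policy."""
    violations = []
    if request.tool_is_public and request.document_is_sensitive:
        violations.append("sensitive document sent to a public AI system")
    if not request.has_documented_approval:
        violations.append("no documented approval for AI usage")
    if not request.user_completed_training:
        violations.append("user has not completed AI security training")
    return violations


if __name__ == "__main__":
    request = UploadRequest(
        user="example.user",
        tool_is_public=True,
        document_is_sensitive=True,
        has_documented_approval=False,
        user_completed_training=True,
    )
    for reason in policy_violations(request):
        print(reason)
```

Note that nothing in the check looks at the user's rank. An acting director and a junior analyst get the same answer, which is the point of having a written policy.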

Uploading documents to ChatGPT poses higher security risks compared to Gmail, especially in terms of data exposure and permanent loss of control. Estimated data based on typical security concerns.

Enterprise AI Alternatives That Actually Protect Data

Here's the thing: you don't have to choose between AI productivity and data security. But you do have to choose tools designed with that tradeoff in mind.

OpenAI offers ChatGPT Enterprise, where conversations aren't used for training and don't become public data. Microsoft has Azure OpenAI, which lets government agencies use powerful AI inside their own Azure environment. Anthropic's Claude has business versions with similar protections.

But these cost more and require more infrastructure to maintain. ChatGPT's free version is seductive precisely because it's free, easy, and powerful. Government agencies naturally gravitate toward it.

The tragedy of Gottumukkala's incident is that it could have been prevented with the kind of tool that costs more per user and requires slightly more setup. CISA could have signed up for ChatGPT Enterprise, made sure everyone using it understood the rules, and documented what they were doing.

But that costs budget. It requires approvals. It's more complicated than just having your executives use the free version.

So instead, we get an acting CISA director uploading sensitive documents to a public AI system, failing a counterintelligence polygraph test, and then suspending the people who reported the breach.

This is the pattern in government: everyone wants new technology, nobody wants to pay for the secure version, and the costs of that decision only become apparent after something goes wrong.

The Trump Administration's Track Record On Cybersecurity

Gottumukkala's appointment came from the Trump administration, and his behavior reflects something about how that administration approaches security appointments.

First, they prioritized loyalty over credentials. Gottumukkala was elevated partly because he worked with Kristi Noem in South Dakota. He was part of a political team, not necessarily selected because he was the world's foremost expert in cybersecurity.

Second, when problems emerged (the failed polygraph), instead of reconsidering the appointment, they dismissed the polygraph as "unsanctioned." That's not how security should work. If someone fails a counterintelligence test, you should investigate why, not claim the test was invalid.

Third, when his behavior created a breach, the first response was to punish the people reporting it, not to investigate the cause.

This isn't unique to Gottumukkala or the Trump administration. But it does suggest a particular approach to security appointments: put loyal people in positions of authority, don't let inconvenient vetting results stop you, and circle the wagons when problems emerge.

That's a recipe for more breaches, not fewer.

What Should Happen Next (But Probably Won't)

In a rational world, this incident would trigger a comprehensive review of government AI security practices. There would be:

- A formal investigation into what documents were uploaded, to what systems, and what information was exposed

- A review of AI access policies across all federal agencies

- Mandatory security training for all government employees on AI risks

- Consequences for the people responsible for the breach

- New policies with real enforcement mechanisms

- Regular audits to ensure compliance

Some of this is probably happening behind closed doors. But the public response suggests something less comprehensive.

Gottumukkala's role was "acting" director, which is a temporary appointment. There was speculation he might not be formally confirmed. If he's replaced, the incident might just be treated as a personnel issue rather than a systemic security failure.

The six security staff members whose clearances were suspended? That situation has been murky. Some were reportedly cleared, but the message was already sent: report security breaches and face consequences.

As for ChatGPT and other AI platforms? They'll probably add some warnings about government data. But fundamentally, their business model still involves training on whatever data people upload. That's not going to change because a government official was careless.

The policy vacuum will probably persist. Agencies will continue using public AI systems for sensitive work because there's no alternative in place and the productivity benefits are real. And there will be more breaches, probably many more, as more people discover that uploading sensitive information to public AI systems is trivially easy.

The Broader Implications For Government AI Adoption

This incident is really about the tension between innovation and security. Government wants to move faster. It wants to adopt tools that make employees more productive. It doesn't want to be seen as stuck in the past, unable to use modern technology.

But security exists for a reason. The documents Gottumukkala uploaded might have seemed like no big deal to him. But that's how breaches usually start. Nobody thinks their document is sensitive enough to cause a problem. Everyone thinks their exception is justified.

And then one day, someone reverse-engineers classified information from AI training data. Or an adversary crafts a prompt that extracts sensitive operational procedures. Or journalists find government documents in an AI system's training data. And everyone realizes that the decision to move fast broke things in ways that are really hard to fix.

The alternative is to move more carefully. Require documentation. Require approval. Require training. Require audits. This slows adoption, but it prevents catastrophic breaches.

Right now, the federal government isn't moving carefully with AI. It's moving fast and hoping nothing goes wrong. Eventually, hope won't be enough.

Lessons For Private Sector Organizations

If you work in the private sector, especially in regulated industries or with sensitive data, the Gottumukkala incident has lessons for you too.

First, your executives are probably uploading sensitive information to ChatGPT right now. Most don't realize the security implications. You can assume the person running your department just fed proprietary information to a service that, by default, may train on what you give it.

Second, if you're the person who discovers this and reports it, you might face retaliation, whether direct or subtle. The incident shows that leadership doesn't always respond well to news about their own breaches.

Third, your organization probably doesn't have a policy about this. And even if it does, the policy is probably vague and unenforced. "Don't upload sensitive data" isn't the same as "here's the secure AI tool you're authorized to use, and here's what you can use it for."

The fix is straightforward: evaluate your organization's AI usage. Document what tools people are using. Understand what data they're uploading. Implement policies before there's a breach. Consider enterprise versions of AI tools that keep your data private. Train people on the risks.
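The first step, documenting what tools people are using, can begin with data most organizations already have. The sketch below assumes a simplified proxy log where each line is `user host bytes_sent`; that format, the `AI_HOSTS` list, and the `count_ai_requests` function are illustrative assumptions, not a description of any particular proxy product.

```python
from collections import Counter

# Illustrative list of AI service hostnames to watch for; extend as needed.
AI_HOSTS = {"chatgpt.com", "chat.openai.com", "claude.ai"}


def count_ai_requests(log_lines: list[str]) -> Counter:
    """Count requests per user to hosts in AI_HOSTS.

    Assumes each log line is 'user host bytes_sent', space separated.
    Lines that do not fit that shape are skipped.
    """
    counts: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        user, host, _bytes_sent = parts
        if host.lower() in AI_HOSTS:
            counts[user] += 1
    return counts


if __name__ == "__main__":
    # Made-up log lines for the example.
    logs = [
        "alice chatgpt.com 5120",
        "bob example.gov 200",
        "alice claude.ai 900",
        "malformed line",
    ]
    for user, total in count_ai_requests(logs).most_common():
        print(user, total)
```

Counting requests shows who is using which tools, not what they uploaded. It is a starting point for the conversation about policy, not a substitute for it.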

Do this proactively. Don't wait until you've accidentally trained a public AI model on proprietary information.

How AI Security Is Actually Different From Traditional Security

For people who've spent their careers thinking about traditional cybersecurity, AI systems are genuinely different in ways that matter.

Traditional breaches are usually about unauthorized access. Someone gets credentials, exploits a vulnerability, or installs malware. The goal is to steal or encrypt data. Defenders focus on preventing access: firewalls, encryption, access controls.

AI security is different because once data is incorporated into a trained model, you can't practically remove it. It becomes part of the mathematical weights that define the system. Even if OpenAI wanted to remove Gottumukkala's documents from ChatGPT, they couldn't reliably do it without retraining the model, which would be prohibitively expensive.

Moreover, AI systems leak information in weird ways that traditional systems don't. You can craft prompts that make them reveal training data. You can use social engineering to trick them into revealing information. An adversary doesn't need access credentials; they just need an account and patience.

This is why the traditional security mindset of "control access" doesn't work as well with AI. The real issue isn't who can access the system. It's what data gets put into the system in the first place. And once it's in, it's there permanently.

Gottumukkala's incident is interesting because it shows someone in a security leadership position not understanding this. He was probably thinking like a traditional IT person: "I'm the director, I should be able to use tools other people can't." But with AI systems, your role doesn't give you more security; it gives you more responsibility.

The Role of Polygraph Tests In Modern Security Vetting

There's something worth examining here about Gottumukkala's failed polygraph test and what it tells us about government security procedures.

Counterintelligence polygraph tests are supposed to identify people who might be security risks. They ask about foreign contacts, financial problems, drug use, and past breaches of trust. The test's reliability is debated, but it's standard practice.

Gottumukkala failed his. Then the administration claimed the test was "unsanctioned" and proceeded with his appointment anyway.

This is problematic for several reasons. First, if a test is properly administered and someone fails it, you don't dismiss the results because they're inconvenient. You investigate why they failed.

Second, the decision to appoint him anyway sent a message: passing security vetting isn't actually required for this position. The loyalty matters more. This is backwards. Security positions should have the highest vetting standards, not the lowest.

Third, when his behavior later validated some of the concerns that polygraph tests are designed to catch (poor judgment, lack of sound security practices), there was no mechanism to hold him accountable based on the original vetting results.

This reflects a broader problem in government: security vetting is often treated as a checkbox rather than a genuine assessment of risk. If you have the right political connections, failed vetting can be overruled. If you don't, passing vetting might not matter if someone more powerful wants your position.

In a system where security actually mattered, failed vetting would end a candidacy. You'd have to investigate why it failed, address those issues, and then re-test. If you fail again, you don't get the position. It's not punishment; it's appropriate risk management.

What This Means For The Future Of AI In Government

If there's a silver lining to Gottumukkala's incident, it's that it forced government to think about AI security as a serious issue. Before this, AI in government was treated mostly as a productivity tool. Now it's becoming a security concern.

The National Security Council has been working on AI governance frameworks. The Department of Homeland Security is developing guidelines. Various agencies are figuring out AI policies.

But will this actually change behavior? Probably not as much as it should. Here's why:

First, AI is too useful. Even with security concerns, people will keep using it. The productivity gains are real and immediate. The security risks feel hypothetical and delayed.

Second, there's no enforcement mechanism with teeth. If someone uploads sensitive information to ChatGPT, what happens? A reprimand? Loss of clearance? In Gottumukkala's case, it was the people reporting the problem who had their access suspended, not him.

Third, the technology is moving faster than policy. By the time the government develops and implements policies about ChatGPT, there will be new tools with different risks that nobody has policies for.

What might actually work is a combination of technical controls and cultural change. Technical controls: give people access only to secure AI tools that don't train on your data. Cultural change: make security compliance part of how you evaluate leadership.
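One way to picture the technical-control half of that idea is an allowlist check at the point of upload. The sketch below is illustrative only: the host name, the `APPROVED_AI_HOSTS` set, and the `is_upload_allowed` function are hypothetical placeholders, not a description of any real agency system.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of AI endpoints an agency has approved for
# sensitive work. The host below is a placeholder, not a real service.
APPROVED_AI_HOSTS = frozenset({"ai.internal.example.gov"})


def is_upload_allowed(destination_url: str,
                      approved_hosts: frozenset = APPROVED_AI_HOSTS) -> bool:
    """Return True only when the destination host is on the approved list.

    Exact host matching is used on purpose: a look-alike such as
    'ai.internal.example.gov.evil.example' must not pass.
    """
    host = urlparse(destination_url).hostname  # None if no host can be parsed
    return host is not None and host in approved_hosts


if __name__ == "__main__":
    for url in (
        "https://ai.internal.example.gov/v1/chat",
        "https://chat.example.com/upload",
    ):
        verdict = "allow" if is_upload_allowed(url) else "block"
        print(f"{verdict}: {url}")
```

A check like this only makes the wrong thing harder. It does nothing about exceptions granted to senior officials, which is where the cultural half comes in.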

If you promoted people based on good security practices instead of just business results, you'd see different behavior. If leadership understood that a security breach reflects poorly on their judgment, not just their technical staff, priorities would shift.

But that requires the organization to decide that security matters more than speed. With AI, that's still an open question in most of government.

FAQ

What exactly did Madhu Gottumukkala upload to ChatGPT?

According to reports, Gottumukkala uploaded sensitive contracting documents marked "for official use only" to ChatGPT. The exact nature of the documents hasn't been fully disclosed, but they were related to CISA operations and not meant for public distribution. These weren't classified documents (which would be far more serious), but they were internal government information that had no business being in a public AI system.

Why is uploading documents to ChatGPT worse than uploading them to Gmail?

On the consumer version of ChatGPT, an uploaded document is stored on OpenAI's servers and, unless the user has opted out, may be used to help train future models. It does not instantly become part of the model, but once content has been used in training there is no clean way to pull it back out. With Gmail, your document stays in your account on Google's systems. With ChatGPT, the agency loses control over how long the document is retained and whether it shapes a model used by millions of people globally. The potential exposure is longer-lasting and broader.

What are the actual security risks of uploading government documents to AI systems?

The risks include: the documents sitting on a commercial vendor's servers outside government control; the documents potentially being used as training data and influencing later responses; adversaries crafting prompts designed to extract memorized content from a model trained on them; and the loss of control over how that information is retained and used. For government documents, even unclassified ones, this exposure can compromise operational security and provide adversaries with valuable intelligence.

Why did Gottumukkala get an exception to use ChatGPT when other CISA employees couldn't?

The exact reason has never been clearly stated. It may have been for evaluation purposes, it may have been based on his position as acting director, or it may have been informal approval without clear authorization. The point is that the exception existed without apparent oversight or controls, creating the conditions for misuse.

What happened to the six CISA staff members whose clearances were suspended?

The six career security staff members who discovered and reported Gottumukkala's uploads had their access to classified information suspended. To many observers, this looked like retaliation against people who had done their job by reporting a security breach. The situation was later reconsidered, but the original action sent a strong message about the risks of reporting problems up the chain.

Could the information Gottumukkala uploaded to ChatGPT be extracted by adversaries?

Potentially, yes, if the documents were used for training. ChatGPT isn't designed to recall specific training documents, but researchers have shown that training data extraction attacks can sometimes get language models to reproduce memorized text. (This is distinct from prompt injection, which manipulates a model's instructions rather than recovering its training data.) In that scenario, other users might also see related information surface in the AI's responses. The possibility of incorporation into training data, which cannot be cleanly undone, is the biggest concern.

What's the difference between classified and unclassified government documents?

Classified documents (Confidential, Secret, Top Secret) contain information whose unauthorized disclosure could reasonably be expected to damage national security, with the expected harm increasing at each level. Unclassified documents can still be sensitive and restricted (marked FOUO or similar). CISA's documents were unclassified but sensitive, making their exposure less immediately catastrophic than a leak of classified information but still a problem when uploaded to a public system.
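To make the distinction concrete, here is a minimal sketch of how an automated pre-upload check might sort text by its markings. It is an assumption-laden illustration: the pattern list, the tier names, and the `classify_markings` function are hypothetical, and real marking schemes are considerably broader than these few strings.

```python
import re

# Illustrative patterns only. Classification banners are matched in
# upper case so that ordinary prose ("the secret to...") does not trip them.
CLASSIFIED_PATTERN = re.compile(r"\b(TOP SECRET|SECRET|CONFIDENTIAL)\b")
RESTRICTED_PATTERN = re.compile(r"\b(FOR OFFICIAL USE ONLY|FOUO)\b", re.IGNORECASE)


def classify_markings(text: str) -> str:
    """Return a coarse tier: 'classified', 'restricted', or 'unmarked'."""
    if CLASSIFIED_PATTERN.search(text):
        return "classified"
    if RESTRICTED_PATTERN.search(text):
        return "restricted"
    return "unmarked"


if __name__ == "__main__":
    samples = [
        "SECRET//NOFORN\nBriefing notes",
        "Marked for official use only: contract draft",
        "The secret to good coffee is fresh beans",
    ]
    for sample in samples:
        print(classify_markings(sample), "<-", sample.splitlines()[0])
```

A string match like this catches only documents that are properly marked. Unmarked sensitive material passes straight through, so it can supplement policy and training but cannot replace them.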

Has the federal government developed policies to prevent this from happening again?

Various agencies have been working on AI governance frameworks, and the White House has issued guidelines about AI use in government. However, implementation varies by agency, and enforcement mechanisms are often weak. The policy framework is still evolving, and many agencies still lack clear, enforceable rules about uploading data to public AI systems.

What should federal employees do if they discover others uploading sensitive information to ChatGPT?

Report it through proper channels, but understand that reporting security breaches can carry a risk of retaliation, as Gottumukkala's incident showed. Ideally, there should be secure reporting mechanisms and whistleblower protections, but these vary by agency. Document what you observed and ensure your report goes to the right oversight authority, not just your immediate supervisor.

Are there AI tools that government agencies can use safely with sensitive information?

Yes. ChatGPT Enterprise, Azure OpenAI, and similar enterprise offerings are designed to keep customer data private and, by default, do not use it for training. They cost more and require more setup, but they let agencies gain AI productivity benefits while maintaining data security. Failure to use them often reflects budget constraints and a preference for free solutions over paid alternatives.

The Bottom Line

The Gottumukkala incident isn't just a security oopsie by one executive. It's a symptom of how government is approaching artificial intelligence adoption: quickly, enthusiastically, and without adequate security thinking.

We're at an inflection point where government agencies have embraced AI tools faster than they've developed policies to govern them. The person tasked with protecting America's cybersecurity uploaded sensitive documents to a public AI system, and when security staff reported it, they faced clearance suspensions.

This will happen again. More officials will upload sensitive data. More breaches will occur. And until there's real enforcement, real consequences for violations, and real investment in secure alternatives, it will keep happening.

The technology isn't going away. AI will become more embedded in government over time. But the security model needs to change: from "trust executives to do the right thing" to "enforce controls that make the wrong thing harder," and from "move fast and hope nothing goes wrong" to "move deliberately and assume things will go wrong."

Gottumukkala's incident is a warning. Whether government actually heeds it remains to be seen.

Key Takeaways

- CISA's acting director uploaded sensitive government documents marked 'for official use only' to ChatGPT without proper authorization

- Documents uploaded to public AI systems may be retained and used as training data, and once incorporated they cannot be cleanly deleted or controlled

- The official failed a counterintelligence polygraph test that was dismissed as 'unsanctioned,' raising questions about vetting procedures

- Six career security staff members who reported the breach had their classified information access suspended, suggesting retaliation against whistleblowers

- The incident reveals a critical gap between government understanding of AI security risks and the actual technical implications of uploading data to public systems

- Secure AI alternatives exist, but government agencies often default to free public tools despite serious security risks

- This represents an emerging category of government breach driven by rapid AI adoption without adequate policy or oversight frameworks
