Introduction

Last month, Instagram rolled out a feature aimed at safeguarding young users by alerting parents if their kids search for self-harm topics. This proactive approach is part of a broader initiative to increase digital safety and mental health awareness on social media platforms. But how exactly does this system work, and what implications does it have for parents, teens, and the tech industry?

In this comprehensive guide, we'll explore the technical underpinnings of Instagram's new feature, offer real-world examples and use cases, and discuss best practices for implementation. We'll also cover common pitfalls and their solutions and future trends in digital safety for social media, and we'll close with actionable recommendations for parents and developers alike.

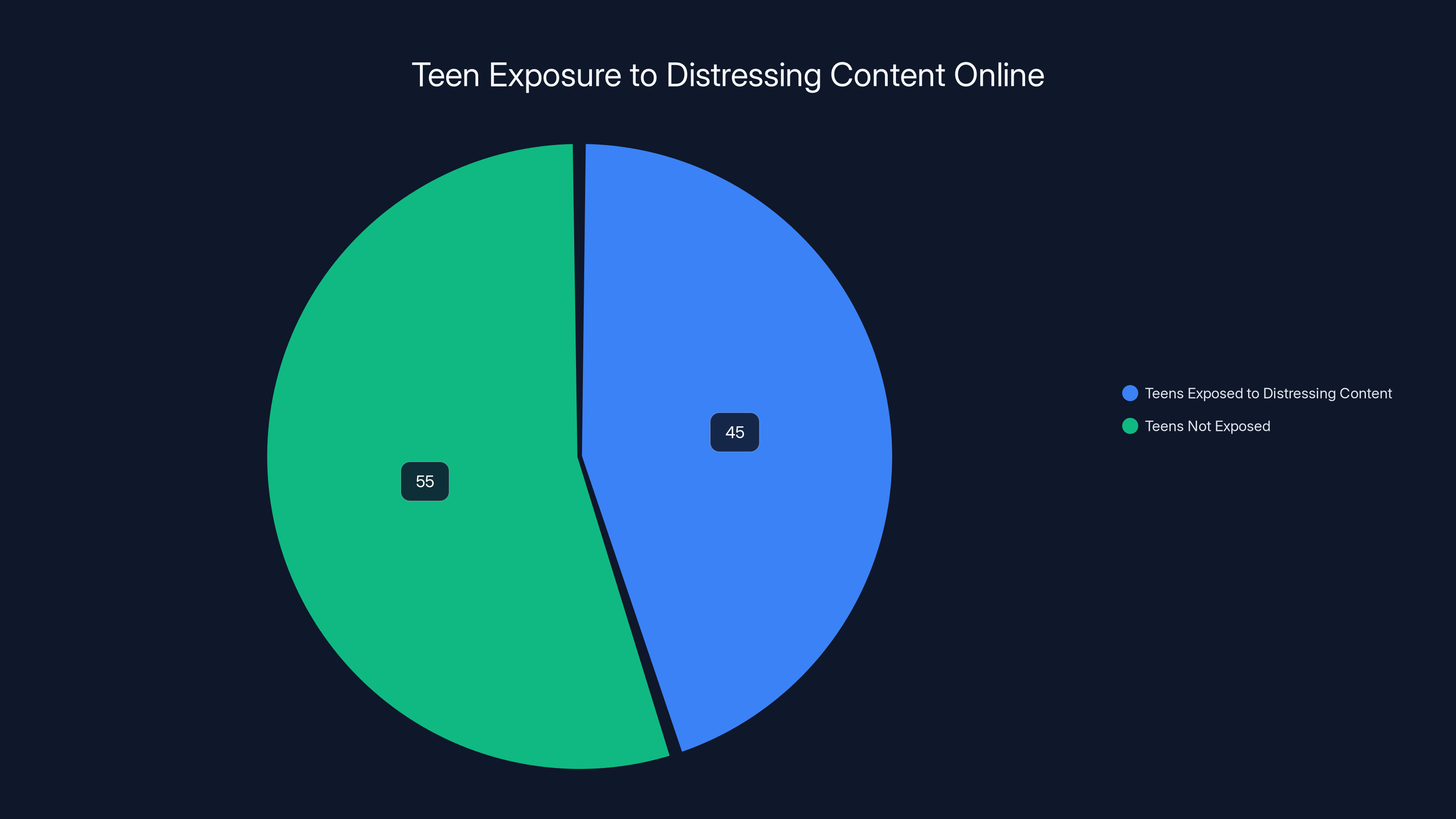

Over 45% of teens have encountered distressing content online, highlighting the importance of Instagram's alert system for enhancing digital safety.

TL;DR

- Key Point 1: Instagram's alert system uses AI algorithms to monitor searches related to self-harm.

- Key Point 2: Parents receive real-time notifications if concerning searches are detected.

- Key Point 3: The feature encourages open communication between parents and children about mental health.

- Key Point 4: Privacy concerns are addressed through encrypted notifications.

- Bottom Line: This feature can be a game-changer in preventing self-harm if used responsibly.

Why Instagram's Alert System Matters

In an era where digital interactions often surpass face-to-face conversations, social media platforms like Instagram have become crucial for social connectivity. However, they also expose young users to potentially harmful content. Instagram's alert system aims to mitigate these risks by providing a safety net for vulnerable users.

The Rise of Digital Safety

Social media's pervasive presence necessitates new safety measures. Recent studies highlight that over 45% of teens have encountered distressing content online. Instagram's alert system is a response to these statistics, aiming to create a safer virtual environment.

How the Alert System Works

Instagram's system uses machine learning algorithms to detect search terms associated with self-harm. If a match is found, an alert is sent to the parent's account, prompting them to engage in a conversation with their child.

Core Components of the System:

- Keyword Detection: Utilizes a database of flagged terms related to self-harm and mental health issues.

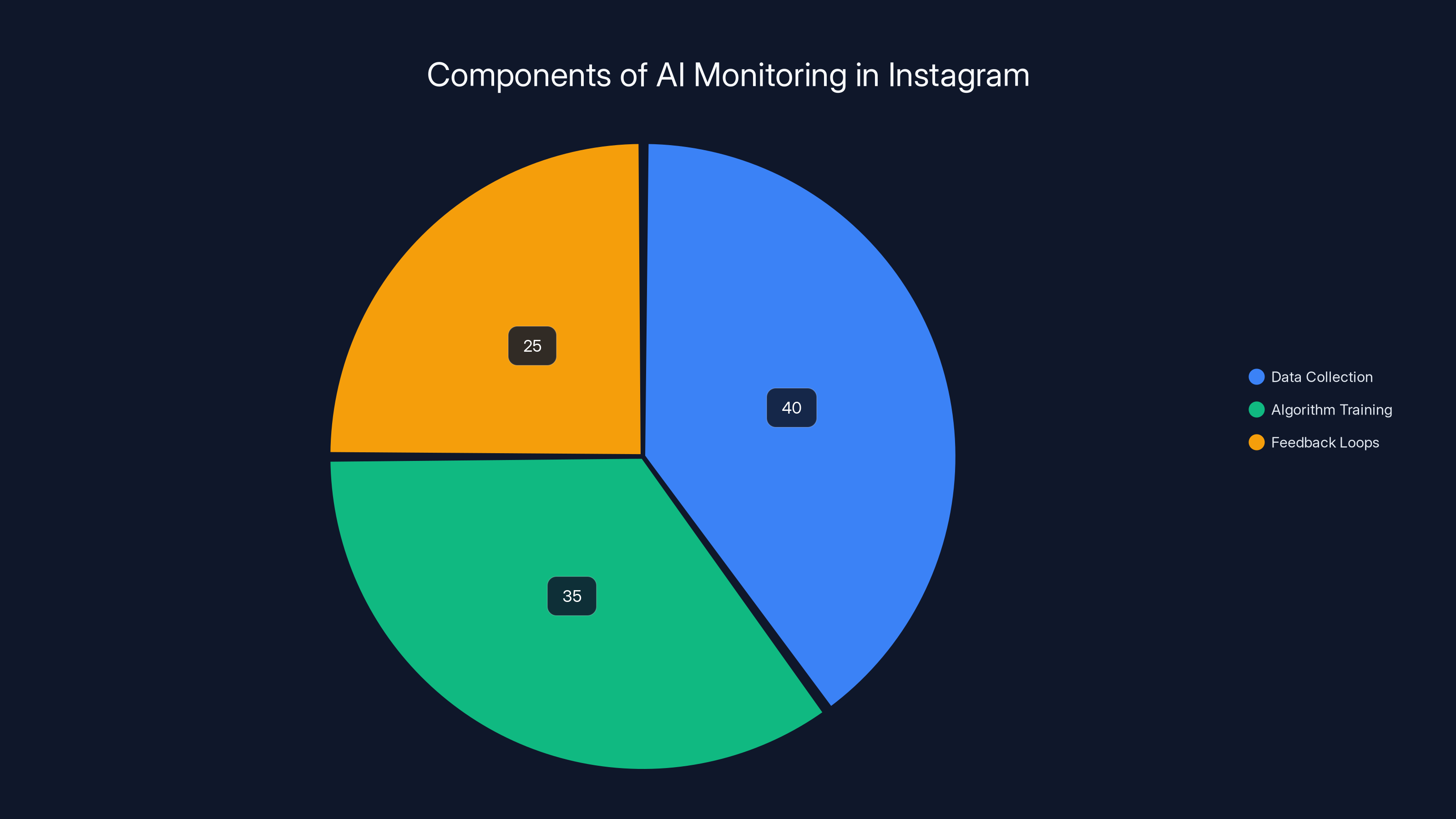

- AI Monitoring: Algorithms continuously scan user searches and content interactions.

- Parent Notifications: Real-time alerts sent via the app or email.

The pie chart illustrates the estimated distribution of focus areas in Instagram's AI monitoring system, with data collection being the primary focus. Estimated data.
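To make the three components concrete, here is a minimal sketch of how they might fit together. It assumes an in-memory set of flagged terms, a stream of search events tagged with a hypothetical teen account ID, and a `notify` callback standing in for the app or email channel. None of these names come from Instagram, whose actual implementation is not public.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

# Keyword Detection: an illustrative flagged-term database (hypothetical contents)
FLAGGED_TERMS = {"self-harm", "suicide"}

@dataclass
class SearchEvent:
    teen_id: str  # hypothetical account identifier
    query: str

@dataclass
class Alert:
    teen_id: str
    channel: str  # "app" or "email", matching the notification component above

def is_flagged(query: str) -> bool:
    """Keyword Detection: True if any flagged term appears in the lowercased query."""
    text = query.lower()
    return any(term in text for term in FLAGGED_TERMS)

def monitor(events: Iterable[SearchEvent],
            notify: Callable[[Alert], None],
            channels: List[str]) -> int:
    """Monitoring: scan each event and send one alert per channel on a match.

    Returns the number of alerts sent.
    """
    sent = 0
    for event in events:
        if is_flagged(event.query):
            for channel in channels:
                # The alert carries only the account and channel, never the query text
                notify(Alert(teen_id=event.teen_id, channel=channel))
                sent += 1
    return sent

# Usage: collect alerts in a list instead of delivering them
outbox: List[Alert] = []
events = [SearchEvent("teen-1", "best pizza nearby"),
          SearchEvent("teen-1", "Self-harm support")]
count = monitor(events, outbox.append, channels=["app", "email"])
print(count)  # 2
```

Passing `notify` in as a callback keeps detection separate from delivery, so the same monitoring loop could feed a push service, an email sender, or (as here) a test list.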

Real-World Use Cases

Case Study: A Parent's Perspective

Consider Sarah, a mother of a 14-year-old who frequently uses Instagram. When her daughter searched for "ways to cope with depression," Sarah received an alert. This prompted a heartfelt conversation about mental health resources, including online therapy and support groups.

Educational Institutions

Schools can use Instagram's alert feature to collaborate with parents and mental health professionals. By understanding the types of content students are exposed to, schools can tailor their mental health programs more effectively.

Technical Details and Best Practices

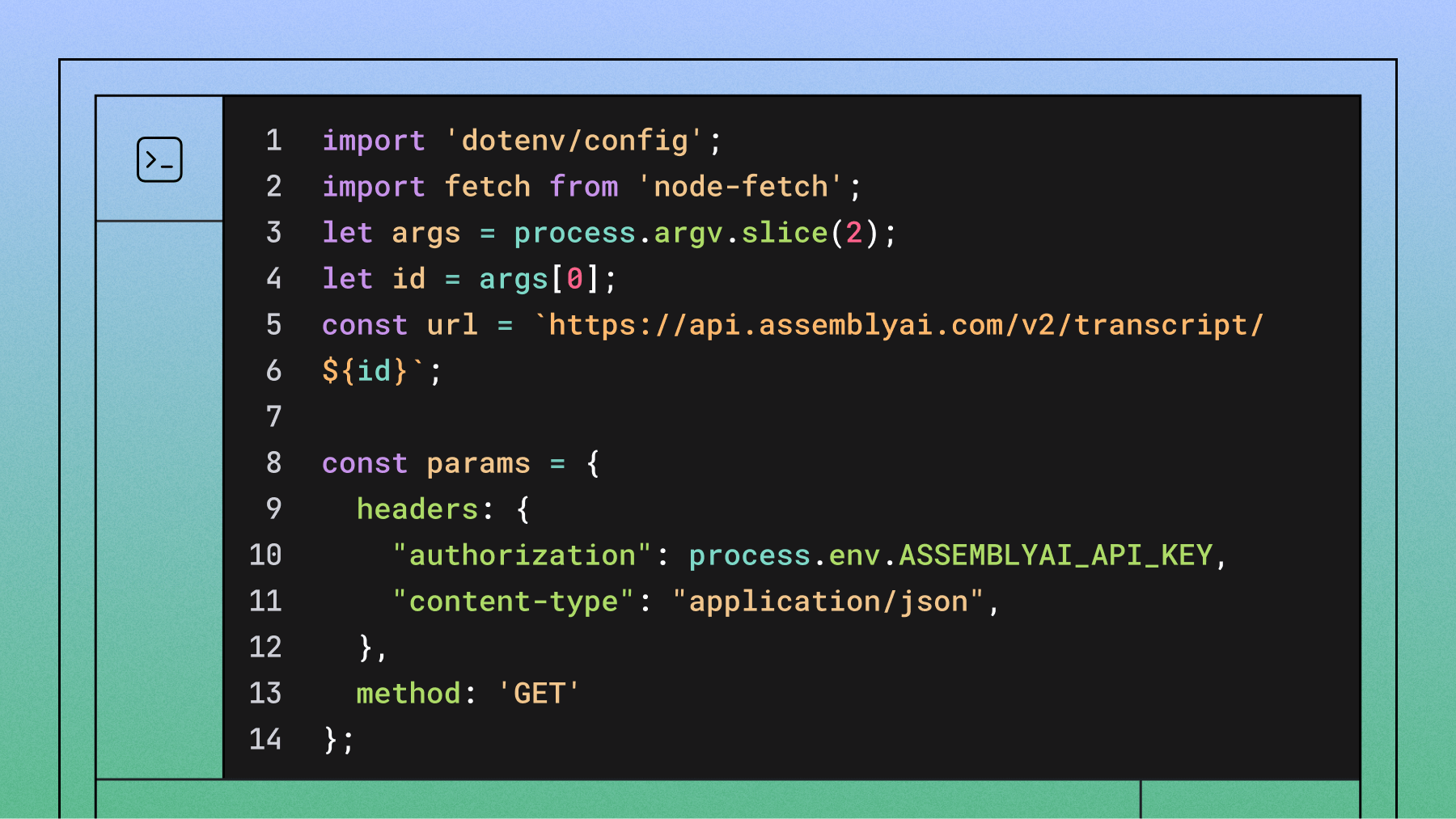

Implementing AI Monitoring

For developers interested in creating similar systems, understanding AI monitoring is crucial. Instagram's approach involves:

- Data Collection: Gathering anonymized search data to identify trends.

- Algorithm Training: Using supervised learning to improve detection accuracy.

- Feedback Loops: Incorporating user feedback to refine keyword lists.
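The feedback loop, in particular, can be sketched without any machine learning. The version below assumes that reviewers or parents label each alert as either useful or a false positive, and it drops a keyword once its false-positive rate over enough labelled alerts exceeds a threshold. The `min_reports` and `max_fp_rate` values are arbitrary assumptions chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

def refine_keywords(keywords: Set[str],
                    feedback: Iterable[Tuple[str, bool]],
                    min_reports: int = 5,
                    max_fp_rate: float = 0.8) -> Set[str]:
    """Return a new keyword set with noisy keywords removed.

    feedback: (keyword that triggered the alert, was_false_positive) pairs.
    A keyword is dropped only once it has at least `min_reports` labels and its
    false-positive rate is above `max_fp_rate` (both thresholds are arbitrary).
    """
    totals: Dict[str, int] = defaultdict(int)
    false_pos: Dict[str, int] = defaultdict(int)
    for keyword, was_false_positive in feedback:
        totals[keyword] += 1
        if was_false_positive:
            false_pos[keyword] += 1

    refined = set()
    for keyword in keywords:
        n = totals[keyword]
        if n >= min_reports and false_pos[keyword] / n > max_fp_rate:
            continue  # too noisy: exclude it (a real system might route it to human review)
        refined.add(keyword)
    return refined

# Usage: "depression" keeps firing on history homework about the Great Depression
feedback = [("depression", True)] * 9 + [("depression", False)] + [("self-harm", False)] * 3
print(sorted(refine_keywords({"self-harm", "depression", "suicide"}, feedback)))
# ['self-harm', 'suicide']
```

Requiring a minimum number of labels keeps a single mistaken report from silencing an important keyword; in practice a dropped term would more likely be reviewed by a person than removed automatically.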

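Code Example: Basic Keyword Detection

The example below uses plain pattern matching rather than machine learning, so it illustrates only the keyword-detection step.

```python
import re

# Sample list of flagged keywords (illustrative only)
keywords = ['self-harm', 'depression', 'suicide']

def check_keywords(search_query):
    """Return True if any flagged keyword appears in the query (case-insensitive)."""
    for keyword in keywords:
        # re.escape matches the keyword literally; \b stops matches inside longer words
        if re.search(r'\b' + re.escape(keyword) + r'\b', search_query, re.IGNORECASE):
            return True
    return False

# Test the function
search_query = "How to cope with depression"
print(check_keywords(search_query))  # Outputs: True
```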

Privacy and Security Considerations

Encryption and Data Protection: Instagram ensures that all alerts are encrypted to protect user privacy. Parents receive only the notification itself; they cannot see their child's specific search history.

Consent Mechanisms: Users must opt in to the alert system, which keeps the feature transparent and under user control.
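Opt-in consent and notifications that omit the search itself can be expressed as a small gate in front of each alert. This is a sketch under assumptions: the `consent` store and the `category` label are hypothetical, and encryption of the payload in transit is left to the transport layer and not shown here.

```python
import json
from datetime import datetime, timezone
from typing import Dict, Optional

# Hypothetical consent store: teen account ID -> whether the family opted in
consent: Dict[str, bool] = {"teen-1": True, "teen-2": False}

def build_parent_notification(teen_id: str, query: str, category: str) -> Optional[str]:
    """Return a JSON notification for the parent, or None if there is no opt-in.

    The raw query is deliberately NOT copied into the payload, so the parent learns
    that a concerning search happened without seeing the search history itself.
    """
    if not consent.get(teen_id, False):
        return None  # no opt-in on record: send nothing
    payload = {
        "teen_id": teen_id,
        "category": category,  # coarse label only, e.g. "self-harm"
        "sent_at": datetime.now(timezone.utc).isoformat(),
        "message": "Your teen searched for a sensitive topic. Consider checking in.",
    }
    return json.dumps(payload)

print(build_parent_notification("teen-2", "example query", "self-harm"))  # None
note = build_parent_notification("teen-1", "example query", "self-harm")
print("example query" in note)  # False
```

Checking consent before building the payload means nothing is assembled, logged, or sent for accounts that have not opted in.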

Common Pitfalls and Solutions

False Positives

One challenge is minimizing false positives, where benign searches trigger alerts. Solutions include refining keyword databases and incorporating context analysis.
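One simple form of context analysis is to look at the words around a match before alerting. The sketch below assumes a hand-maintained table of benign phrases per keyword (for example, "the Great Depression" in a history search). The phrase list is illustrative, and a production system would more likely rely on a trained classifier than on fixed phrases.

```python
import re
from typing import Dict, List

# Illustrative, hand-picked benign contexts per keyword (assumption, not a real list)
BENIGN_PHRASES: Dict[str, List[str]] = {
    "depression": ["great depression", "tropical depression", "economic depression"],
}

KEYWORDS = ["self-harm", "depression", "suicide"]

def should_alert(query: str) -> bool:
    """Alert only if a keyword occurs outside all of its known benign phrases."""
    text = query.lower()
    for keyword in KEYWORDS:
        # Blank out benign phrases so only unexplained occurrences remain
        cleaned = text
        for phrase in BENIGN_PHRASES.get(keyword, []):
            cleaned = cleaned.replace(phrase, " ")
        if re.search(r"\b" + re.escape(keyword) + r"\b", cleaned):
            return True
    return False

print(should_alert("causes of the Great Depression"))  # False
print(should_alert("how to cope with depression"))     # True
```

Because only the benign phrase is removed, a query that mentions both the history topic and the keyword on its own still triggers an alert.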

Privacy Concerns

Balancing privacy with safety is crucial. Instagram addresses this by encrypting notifications and limiting data access.

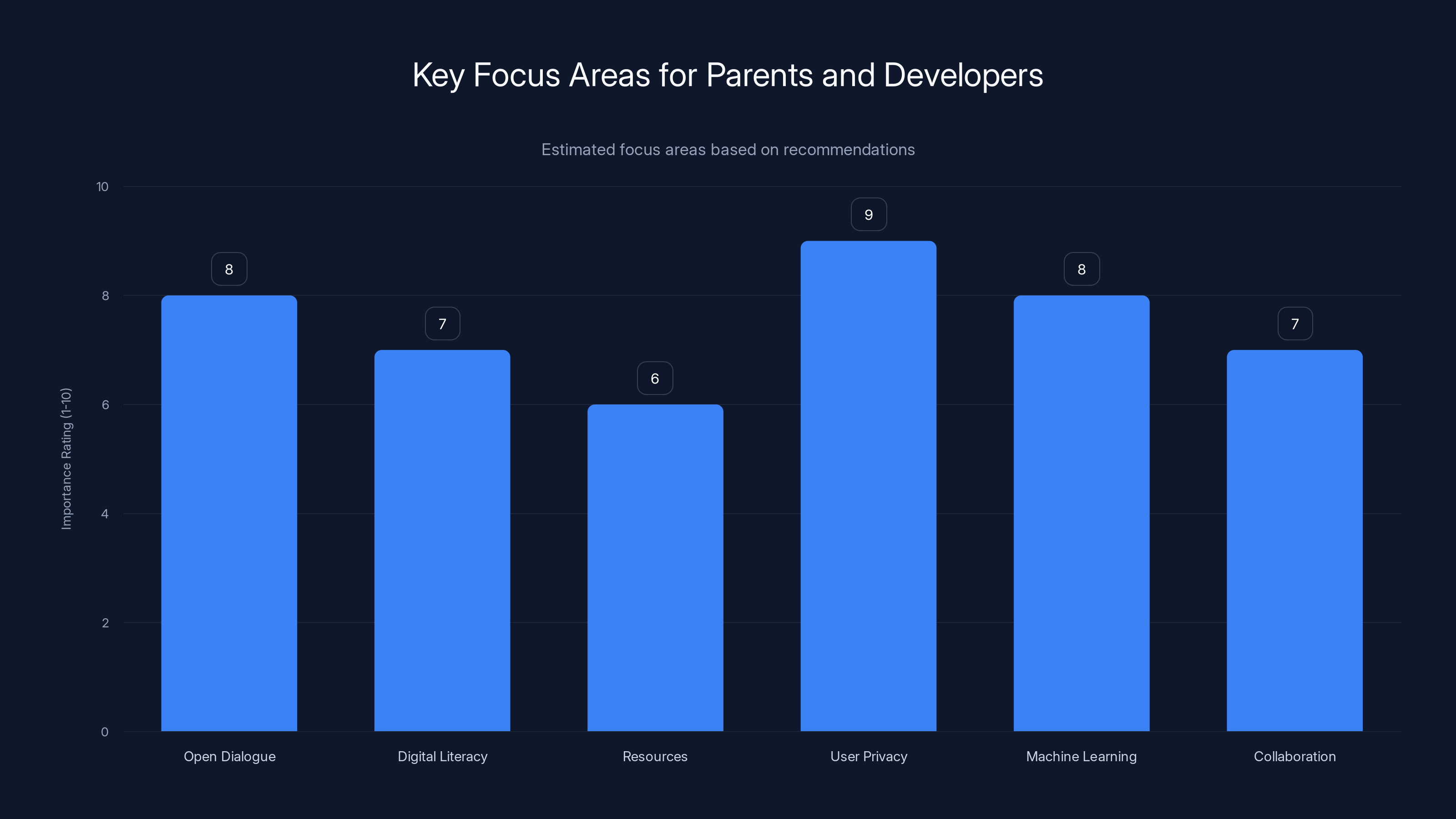

Estimated data: User privacy and open dialogue are top priorities for developers and parents respectively.

Future Trends in Digital Safety

Enhanced AI Capabilities

As AI technologies advance, expect more sophisticated detection systems. Improved natural language processing (NLP) will enable better understanding of context and intent behind searches.

Cross-Platform Safety Measures

Looking ahead, collaboration across platforms could enhance user safety. Unified systems would allow for consistent monitoring across different social media apps.

Legislative Changes

Regulation may play a significant role in shaping future digital safety measures. Governments worldwide are considering laws to mandate safety features on social media platforms.

Recommendations for Parents and Developers

For Parents

- Engage in Open Dialogue: Use alerts as a conversation starter about mental health.

- Educate on Digital Literacy: Teach children about online safety and critical thinking.

- Utilize Resources: Explore mental health resources and support groups.

For Developers

- Focus on User Privacy: Prioritize encryption and user consent in safety features.

- Leverage Machine Learning: Continuously refine algorithms with user feedback.

- Collaborate Across Platforms: Work with other tech companies to develop universal safety standards.

Conclusion

Instagram's alert system for self-harm searches is a promising step towards making social media safer for young users. By proactively notifying parents, it empowers them to address potential mental health issues before they escalate. While challenges like privacy and false positives exist, ongoing advancements in AI and legislative efforts can help overcome these hurdles. Ultimately, the success of such systems relies on collaboration between parents, developers, and policymakers.

FAQ

What is Instagram's alert system for self-harm searches?

Instagram's alert system detects searches related to self-harm and sends real-time notifications to parents, allowing them to intervene early and support their children.

How does Instagram's AI monitoring work?

Instagram uses machine learning algorithms to scan search queries for flagged keywords. When a match is found, an alert is sent to the parent's account.

What are the benefits of Instagram's alert system?

Benefits include early intervention in potential self-harm cases, improved mental health awareness, and fostering open communication between parents and children.

How does Instagram address privacy concerns?

Instagram encrypts notifications and requires user opt-in to ensure privacy. Parents receive alerts without access to detailed search histories.

What future trends can we expect in digital safety for social media?

Expect advancements in AI capabilities, cross-platform safety measures, and potential legislative changes mandating safety features on social media apps.

How can parents use Instagram's alert system effectively?

Parents should use alerts to start conversations about mental health, educate children on digital literacy, and explore available mental health resources.

Key Takeaways

- Instagram's alert system for self-harm searches empowers parents to intervene early.

- Machine learning algorithms enable real-time monitoring and notifications.

- Privacy is maintained through encryption and user consent mechanisms.

- Future trends include enhanced AI capabilities and potential legislative changes.

- Open communication and education are key to leveraging the alert system effectively.

