When a Lawyer Weaponizes AI—And Loses Everything

Imagine this: You're a federal judge sifting through legal briefs when you encounter a passage that reads like it was written by someone trying way too hard at a creative writing competition. There are extended quotes from Ray Bradbury's Fahrenheit 451, metaphors about gardening and clay, flowery references to ancient Mesopotamian libraries and Biblical scribes. Then you realize something is seriously wrong.

This isn't creative fiction. It's a federal court filing.

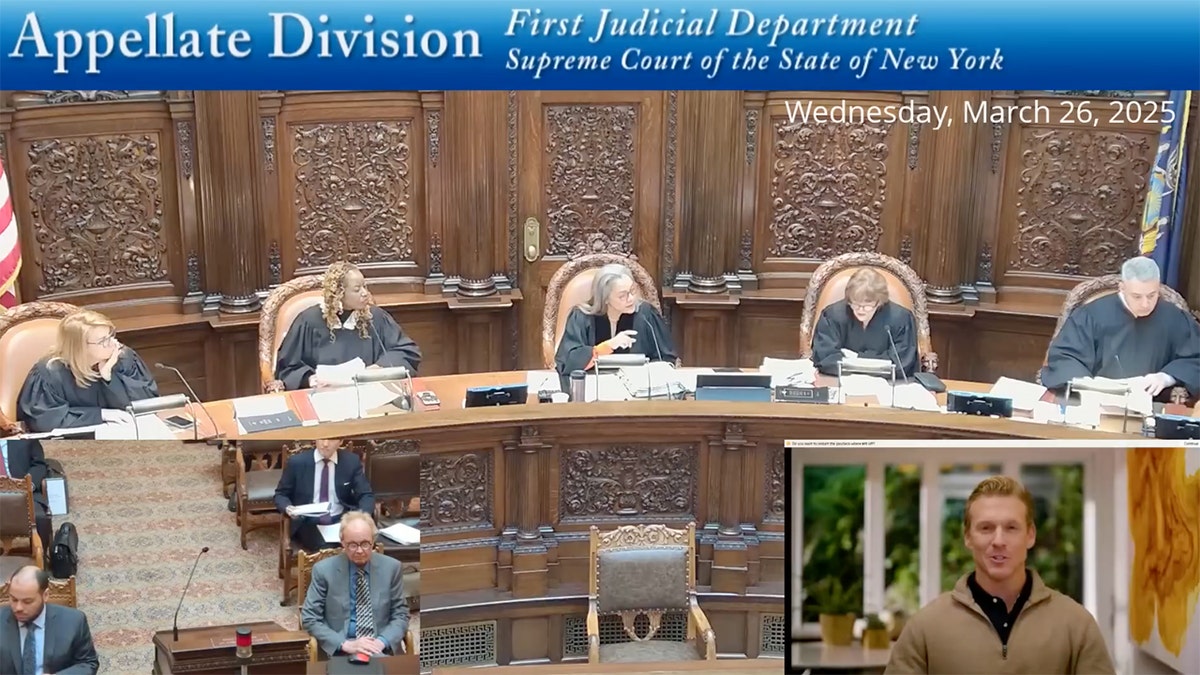

In February 2025, Judge Katherine Polk Failla of the U.S. District Court for the Southern District of New York made a decision that will likely echo through legal practice for years: she terminated an entire case due to a lawyer's egregious misuse of AI in drafting court documents. The attorney in question, Steven Feldman, had relied so heavily on AI tools to generate and review his filings that the documents became riddled with citations to cases that didn't exist, as reported by Law360.

But here's where it gets really interesting. When confronted about the fake citations and asked to correct his work, Feldman did something even worse: he submitted revised filings that were somehow even more problematic. These new documents contained the kind of overwrought, purple prose that immediately signaled AI generation to anyone with a trained eye. The judge noticed. And she was not impressed.

What makes the Feldman ruling historically significant isn't just the outcome—it's what it reveals about how lawyers are currently misusing artificial intelligence, often without understanding the technology they're relying on. This case has become the gold standard for documenting the dangers of AI in legal practice, and it's forcing the entire profession to confront uncomfortable truths about artificial intelligence, professional responsibility, and what happens when convenience meets negligence.

The ruling matters because it's the first time a federal judge has taken such dramatic action against AI misuse in legal practice. Previous cases involved isolated instances of fabricated citations. The Feldman case is different because it shows a pattern of escalating negligence combined with what appeared to be dishonesty about where the problematic content actually came from.

For anyone in the legal profession, this case is required reading. For everyone else, it's a cautionary tale about the risks of deploying advanced technology without understanding what you're doing. Let's break down what happened, why it matters, and what the legal profession is doing about it.

The Facts: How a Case Got Terminated

Steven Feldman was working on a federal case in the Southern District of New York when he made a decision that would ultimately destroy his case and raise serious questions about his professional judgment. Instead of personally reviewing the legal citations in his briefs and memoranda, he delegated the citation-checking process to AI tools. Not just one tool. Three of them.

He used Paxton AI to draft portions of his briefs. He used vLex's Vincent AI to review citations. He ran documents through Google's NotebookLM for additional verification. The idea was sound in theory: use multiple AI systems to cross-check each other's work, ensuring accuracy. In practice, it was a disaster.

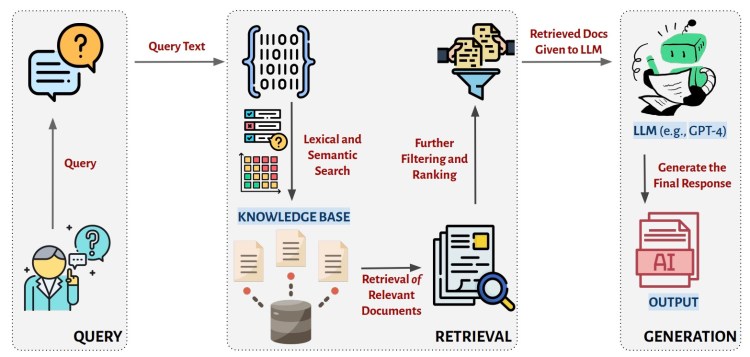

The problem with this approach is fundamental to how large language models work. These AI systems don't "know" facts the way humans do. They generate plausible-sounding text based on patterns in their training data. When it comes to legal citations, they can produce citations that look absolutely legitimate on the surface but don't actually exist. They can invent case numbers, court names, and holdings with complete confidence. In the world of AI, this is called "hallucination."

When Feldman submitted his initial filings, they contained multiple fabricated citations. These weren't typos or minor errors. They were entire cases that a reasonably careful lawyer should have discovered didn't exist by checking a legal database like Westlaw or LexisNexis. The judge's clerk apparently discovered the problem during routine verification of the citations in the brief, as noted by WWAY TV3.

When Judge Failla pointed out the problem and asked Feldman to correct his filings, she expected contrition and better work. Instead, she got something far more suspicious. The corrected filings didn't just fix the citations. They contained new passages that were stylistically bizarre—dramatically different from Feldman's normal writing. These passages featured elaborate metaphors, extended literary quotations, and vocabulary that seemed inconsistent with Feldman's demonstrated writing style in other parts of his submissions.

One problematic passage ran to approximately 200 words and consisted entirely of a quote from Ray Bradbury's Fahrenheit 451 about leaving a legacy and the sacred trust of craftsmanship. The passage talks about a grandfather telling his grandson that everyone must "leave something behind when he dies," and contrasts "the man who just cuts lawns" with "a real gardener" based on the quality of their touch and intentionality.

Another passage invoked ancient Mesopotamian libraries, Biblical references to the Book of Ezekiel, and obscure terminology about Ashurbanipal's scribes and the tav mark. It concluded with an elaborate apology that used the metaphor of ancient scribes carrying styluses as "both tool and sacred trust" to acknowledge Feldman's failure to catch the citation errors. The language was so purple, so flowery, so utterly unlike standard legal prose that Judge Failla essentially said in her ruling: no human lawyer would write like this.

Feldman, when questioned about the passages in a court hearing, insisted that he had written every word himself. He claimed he read Fahrenheit 451 years ago and wanted to include "personal things" in his filing as a way of acknowledging his mistakes. As for the Ashurbanipal references, those "came from me" as well, he said. When pressed about the unusual style and florid prose, Feldman couldn't provide a satisfactory explanation.

But the judge didn't believe him. She wrote in her order that it was "extremely difficult to believe" that an AI system did not generate these passages. She found Feldman's testimony unconvincing and suggested he was being evasive about the true extent to which he had relied on AI to draft his filings. The situation suggested to the judge that Feldman had used AI to generate content, then stripped out most of the citations when he realized they were fabricated, but left the AI-generated prose intact because he either didn't notice how bad it was or didn't want to admit he'd used AI, as highlighted by CBS News.

Faced with this evidence of potential dishonesty combined with repeated misuse of AI tools, Judge Failla decided that the appropriate sanction was severe: dismissal of the case with prejudice. This means Feldman can't simply refile the same case later. It's terminated.

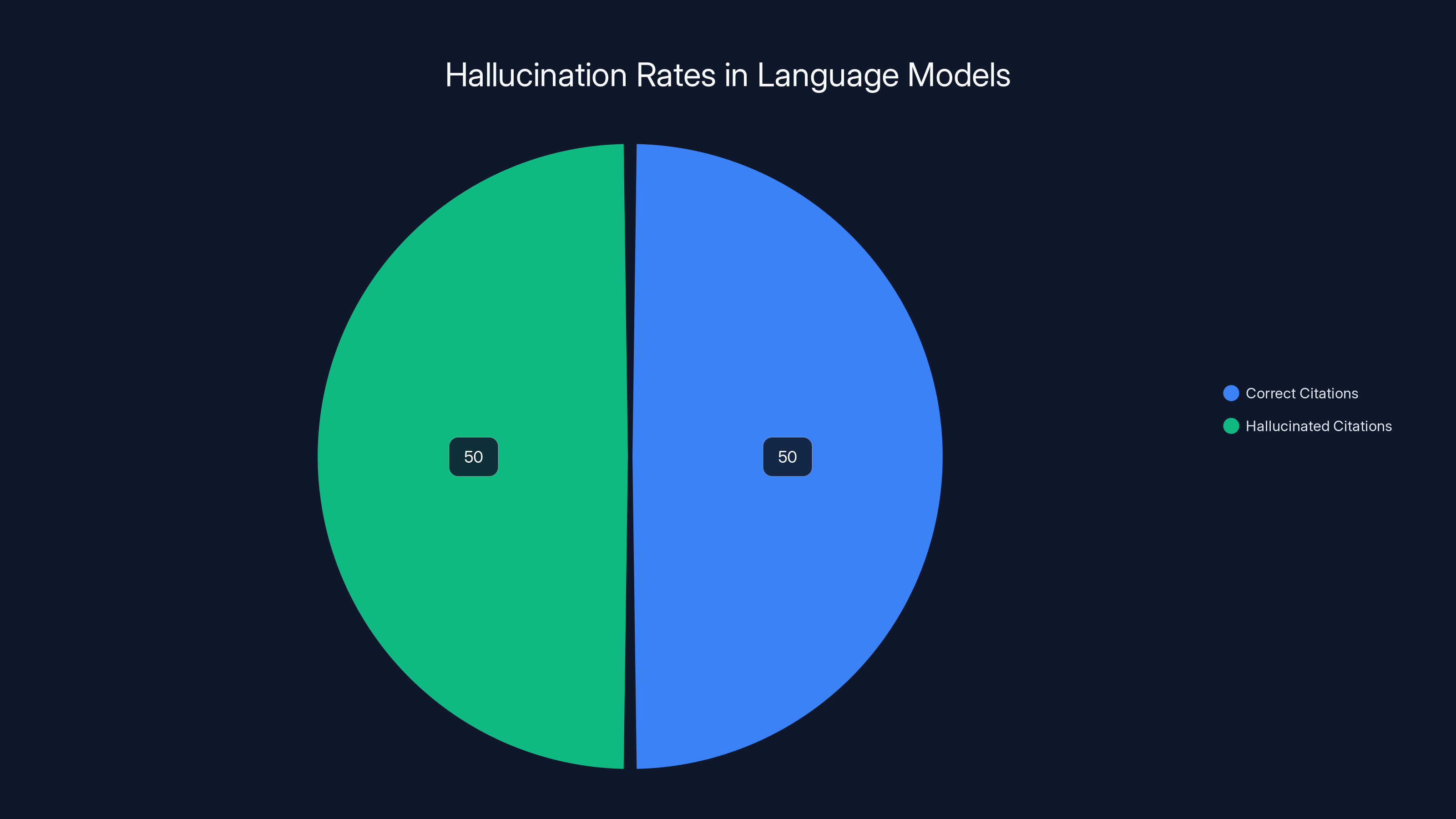

Research indicates that large language models have a hallucination rate of 40-60% when generating citations, meaning roughly half of the citations could be fabricated. Estimated data.

Why This Case Matters: The Broader Implications

The Feldman ruling isn't important just because one lawyer lost a case. It's important because it represents a watershed moment in how the legal profession is beginning to reckon with AI. For the first time, a federal judge has used harsh sanctions to send a message that lawyers cannot simply delegate critical thinking to machines and expect professional responsibility to vanish in the process.

The legal profession has professional responsibility rules that date back decades. Lawyers are supposed to review their own work. They're supposed to verify citations. They're supposed to personally ensure that filings comply with court rules and that all representations to the court are accurate. These aren't suggestions. They're fundamental ethical obligations.

The problem is that AI tools have made it tempting to violate these obligations in new ways. A lawyer can tell themselves they're "using technology to work more efficiently" when they're actually abdicating responsibility. They can use the word "tool" to distance themselves from what the tool produces. They can claim ignorance about how AI hallucinations work.

Judge Failla's ruling makes clear that ignorance is not a defense.

In her order, the judge noted that Feldman's case wasn't the first to feature AI-generated fake citations. But it was the first where the attorney apparently tried to cover it up by submitting additional filings that appeared to contain AI-generated prose while claiming the content was his own original work. This combination of negligence, evasion, and potential dishonesty pushed the judge to take the extraordinary step of terminating the case entirely.

The ruling also highlights a fundamental mismatch between how lawyers are trained and what modern AI can actually do. Law schools teach citation checking as a manual skill. Lawyers are trained to understand that when they cite a case, they're making a representation to the court that the case exists, that their description of the holding is accurate, and that the case actually supports the legal point they're making. These aren't small things. The entire legal system depends on citations being accurate because judges rely on them to find precedent and make decisions.

When a lawyer submits a fabricated citation, they're not just making a mistake. They're potentially misleading a court of law. The consequences can be severe, especially in cases that hinge on specific precedents or interpretations of law.

For in-house legal teams and small law firms that are looking to AI tools to reduce costs and improve efficiency, the Feldman ruling is a wake-up call. Using AI to draft initial versions of documents, to suggest research directions, or to help organize information might be fine. Using AI as your actual quality control mechanism, or delegating citation verification to AI, is not fine. It violates professional responsibility standards and can result in sanctions, disbarment, and the loss of cases.

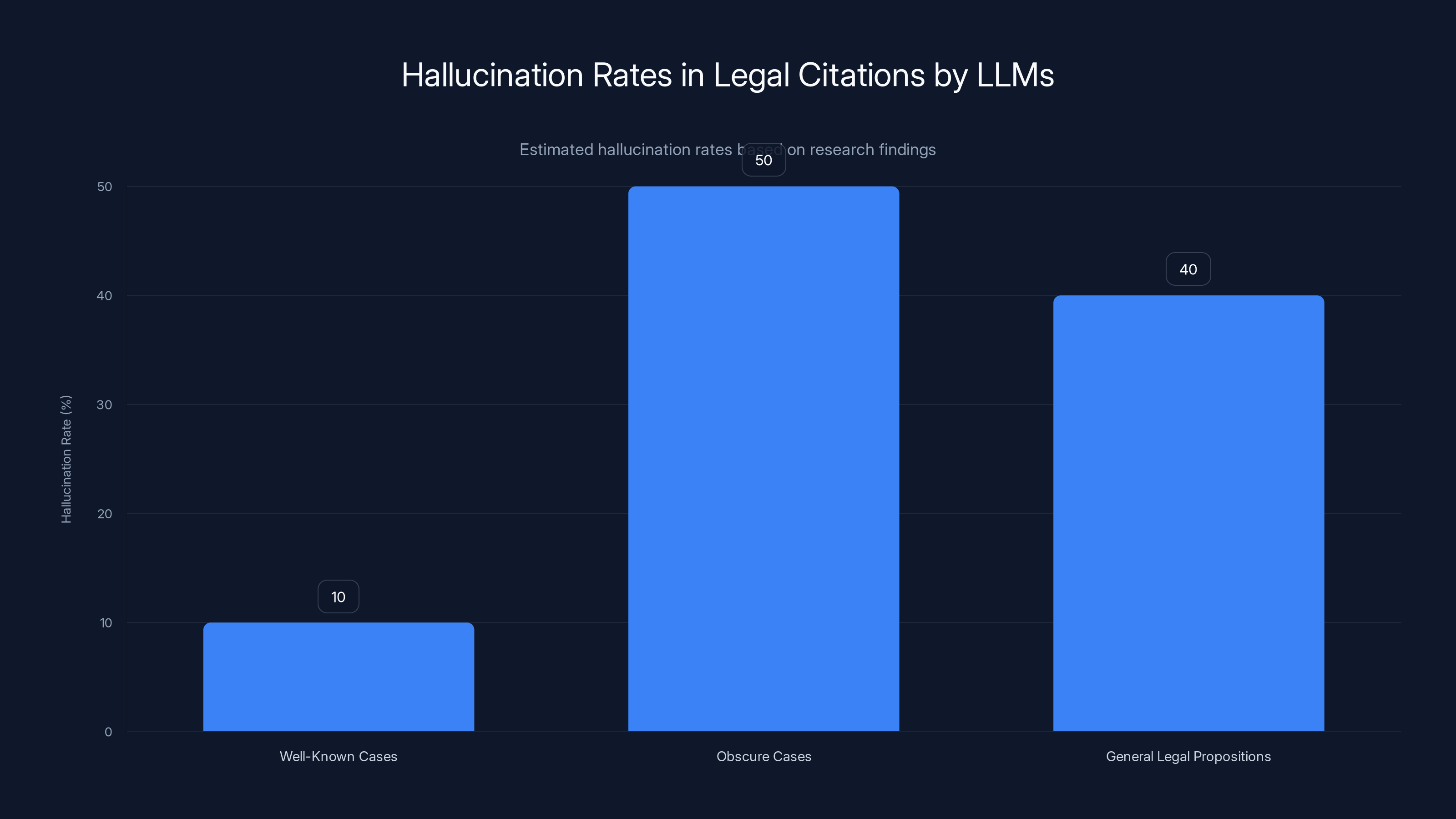

Large language models show higher hallucination rates when generating citations for obscure cases (50%) compared to well-known cases (10%). Estimated data based on research findings.

The AI Tools Feldman Relied On: What They Can and Can't Do

To understand what went wrong in the Feldman case, you need to understand what these AI tools actually do and where their limitations lie. Feldman used three different systems: Paxton AI, vLex's Vincent AI, and Google's NotebookLM. None of these tools are designed to be the sole arbiter of legal citation accuracy, yet that's essentially how Feldman used them.

Paxton AI is described as an AI-powered legal assistant designed to help lawyers draft documents and briefs. It can take general information about a case and help structure arguments, suggesting legal frameworks and organization. But it doesn't have real-time access to every case in existence, and it can generate plausible-sounding citations that don't actually exist. This is a known limitation of language models.

vLex's Vincent AI is designed as a research assistant that can help lawyers find relevant cases and synthesize legal research. In theory, it has access to vLex's database of legal documents and could potentially verify citations. But even tools with database access can generate hallucinations if the relevant information isn't in their training data or if they're not properly configured to distinguish between actual documents and generated text.

Google's NotebookLM is a note-taking and research organization tool that uses AI to help synthesize information from documents you provide. It's useful for organizing research, but it's not a legal research tool, and using it to verify citations is a misuse of the platform.

The core problem Feldman faced is that he stacked these tools on top of each other, assuming that using multiple AI systems to verify each other would eliminate errors. It's a reasonable assumption, but it's wrong. If all three systems make the same mistake in the same way (which is plausible if they share similar underlying training data), then multiple rounds of verification create a false sense of confidence rather than actual accuracy.
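The arithmetic behind that false confidence can be sketched in a few lines. This is an illustration only: the 30% miss rate is an assumed number, not a measured figure for any of the tools named above, and `miss_rate_independent` and `miss_rate_correlated` are hypothetical helper names.

```python
def miss_rate_independent(p_miss: float, n_checkers: int) -> float:
    """Chance that every checker misses a fake citation, if their errors are independent."""
    return p_miss ** n_checkers


def miss_rate_correlated(p_miss: float, n_checkers: int) -> float:
    """Chance that every checker misses it when all checkers fail on the same inputs.

    With perfectly correlated errors, extra checkers add nothing:
    if one misses the fake citation, they all do.
    """
    return p_miss


# Illustrative assumption: each AI checker fails to flag a fake citation 30% of the time.
P_MISS = 0.30

independent = miss_rate_independent(P_MISS, 3)
correlated = miss_rate_correlated(P_MISS, 3)

print(f"independent errors: {independent:.1%} of fakes slip through")
print(f"fully correlated errors: {correlated:.1%} of fakes slip through")
```

Real tools sit somewhere between these two extremes. The closer their failure modes are to each other, the less a second or third AI pass is worth compared with one human looking the case up.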

This is why the legal profession is moving toward stricter guidelines about AI use. Lawyers need to understand that AI tools are helpers, not replacements for human judgment and professional responsibility. You can use them to draft initial content. You can use them to suggest research directions. You cannot use them as your primary quality control mechanism for citations.

What's particularly interesting about Feldman's choice of tools is that none of them are marketed as citation verification systems. He was essentially using tools in ways they weren't designed to be used and then expecting them to perform functions they were never intended to perform. This is a cautionary tale about the gap between what AI can theoretically do and what it can actually do reliably in practice.

Professional Responsibility Rules and AI: The Legal Framework

The legal profession operates under rules of professional conduct that are remarkably consistent across different states. In New York, where the Feldman case occurred, lawyers are governed by the New York Rules of Professional Conduct. One of the most relevant rules is 8.4, which prohibits conduct that is prejudicial to the administration of justice or that brings dishonor to the legal profession.

But there are also more specific rules. Rule 3.3 requires lawyers not to make false statements of fact or law to a tribunal. Rule 3.4 prohibits conduct that obstructs the other party's access to evidence. And Rule 1.1 requires that lawyers provide competent representation, which necessarily includes understanding the tools they're using to practice law.

These rules predate AI by decades. They were written for a world where lawyers used typewriters and law libraries. But they still apply to lawyers using artificial intelligence, and Judge Failla's ruling makes clear that courts expect lawyers to understand that.

In her order, Judge Failla specifically referenced the concept of "candor toward the tribunal." This is a foundational principle in legal ethics. Lawyers have a duty to be honest with courts. When a lawyer submits a brief with fabricated citations, they're violating this duty. When they then submit revised briefs containing AI-generated prose while claiming the content is their own work, they're potentially violating it a second time.

The judge found Feldman's testimony that he had written the flowery passages himself to be unconvincing, and she suggested that Feldman's real sin wasn't just using AI—it was being dishonest about the extent to which he had used AI and potentially trying to hide the evidence of AI use by removing citations while keeping the AI-generated prose intact.

This touches on something deeper about professional responsibility and AI: lawyers have an obligation not just to avoid obvious errors, but to understand their tools well enough to know how they can go wrong. If you use an AI tool that's known to hallucinate citations, and you don't verify those citations yourself, you're violating your professional responsibility obligations. You're not just making a mistake. You're being negligent in ways that the rules of professional conduct explicitly prohibit.

The American Bar Association has begun issuing guidance on AI use in legal practice. In 2024, the ABA issued Formal Opinion 512, which addressed the use of generative AI in legal services. The opinion makes clear that lawyers have a duty to understand AI tools they use and to ensure that the output of those tools complies with their professional responsibility obligations.

Specifically, the opinion states that when using AI tools, lawyers must:

- Ensure the tool is appropriate for the task

- Understand the capabilities and limitations of the tool

- Maintain competence regarding the tool's use

- Verify the accuracy and quality of any output

- Maintain confidentiality of client information

- Disclose the use of AI if required by the client relationship

Feldman violated most of these principles. He used AI tools that aren't specifically designed for citation verification to perform citation verification. He apparently didn't understand the limitations of these tools regarding hallucinations. He didn't personally verify the output before submitting filings to the court. And he attempted to hide or downplay his reliance on AI when questioned about it.

The Feldman ruling essentially says that when you violate these principles, the consequences can be severe. You can lose your case. You can face bar discipline. Your professional reputation can be damaged. And none of this is negotiable.

Steven Feldman used multiple AI tools to cross-check legal citations, but the approach led to errors due to AI 'hallucinations'. Estimated data.

The Broader Landscape: How Common Is AI Misuse in Legal Practice?

The Feldman case made headlines because Judge Failla took such dramatic action, but AI misuse in legal practice is more common than most people realize. Law firms are under intense pressure to improve efficiency and reduce costs. AI tools promise to help with everything from legal research to document drafting to contract review. Many lawyers are adopting these tools without fully understanding what they do or how they can go wrong.

In 2023, a case involving the law firm Levidow, Levidow & Oberman came to light when its lawyers submitted a brief containing citations to cases that didn't exist. The lawyer involved said he had relied on ChatGPT to generate the citations. The case resulted in bar discipline and sanctions. In another case, lawyers at the firm Fardy & Partners submitted a brief with fabricated citations allegedly generated by ChatGPT.

These aren't isolated incidents. They reflect a broader pattern of lawyers adopting AI tools without understanding their limitations. Some firms have started implementing policies around AI use, requiring lawyers to disclose when they've used AI tools and to implement verification procedures. But there's no industry-wide standard yet, and many lawyers are still operating in a gray area.

The problem is compounded by the fact that AI tools are getting better at generating plausible-sounding text. Early AI systems were obviously AI-generated. Modern systems can produce text that's indistinguishable from human-generated text in many contexts. This makes it harder for judges and opposing counsel to identify AI-generated content, which in turn makes it more dangerous when lawyers don't properly verify AI output.

Some bar associations have started issuing guidance. The New York State Bar Association, for example, has issued ethics opinions addressing AI use. But there's no uniform approach, and the guidance often emphasizes lawyer responsibility more than tool capability. In other words, bar associations are saying "you are responsible for any AI output you submit to a court," which is correct, but they're often vague about what that responsibility actually entails in practice.

For small law firms and solo practitioners, the risks are particularly acute. These lawyers often use AI tools to compensate for limited staff resources, but they may not have the time or expertise to thoroughly vet AI output. They might use ChatGPT to draft a brief, assume it's accurate because it's plausible-sounding, and submit it without personally verifying citations or claims. The Feldman case suggests that this approach is legally dangerous.

Meanwhile, larger firms have the resources to implement more rigorous verification procedures. Some firms are hiring people whose job is specifically to audit AI-generated content before it goes to courts. Some are using multiple verification tools. Some have simply decided not to use AI for mission-critical tasks like citation verification.

The legal profession is in a period of transition. AI tools are becoming more sophisticated, more available, and more tempting to use. But the professional responsibility rules haven't caught up, and the consequences of misuse can be severe. The Feldman ruling will likely accelerate this transition, pushing the profession toward clearer standards and stricter practices around AI use.

The Citation Problem: Why Hallucinations Happen

One of the most troubling aspects of the Feldman case is that it highlights a fundamental limitation of large language models that's not going away anytime soon: these systems can generate citations that don't exist with absolute confidence. They don't generate them reluctantly or with hedging language. They just make them up, and they sound real.

This happens because large language models work by predicting the next word in a sequence based on patterns learned from their training data. When you ask an LLM to cite a case that's relevant to a legal argument, the model doesn't actually "look up" the case. It generates text that matches the pattern of how legal citations typically look.

If your training data contains millions of legal briefs and case citations, you learn what a typical citation looks like. It has a case name, like "Smith v. Jones." It has a volume number and a reporter abbreviation, like "123 F.3d." It has a page number. You learn the conventions and you can generate text that matches those conventions. But you don't actually know which specific combinations of those elements correspond to real cases that exist.
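A small sketch makes the gap between "looks like a citation" and "is a real case" concrete. The pattern below is a deliberately simplified assumption about citation shape (real formats vary far more), and `looks_like_citation` is a hypothetical helper, not part of any legal research product.

```python
import re

# Simplified shape of a reporter citation such as "123 F.3d 456":
# volume number, reporter abbreviation, first page.
CITATION_SHAPE = re.compile(r"\d{1,4} (F\.|F\.2d|F\.3d|F\.4th|U\.S\.) \d{1,5}")


def looks_like_citation(text: str) -> bool:
    """Return True if the text has the surface shape of a citation.

    This checks formatting only. It says nothing about whether
    the cited case exists.
    """
    return CITATION_SHAPE.fullmatch(text) is not None


# Arbitrary numbers picked for this example. No claim is made that
# they correspond to a real case.
made_up = "9999 F.3d 1"

print(looks_like_citation(made_up))        # the shape check passes
print(looks_like_citation("see page 12"))  # the shape check fails
```

A format check like this is roughly what a pattern-matching system has learned. Existence can only be confirmed by looking the citation up in an authoritative source.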

When researchers at organizations like Stanford have specifically tested large language models on legal research tasks, they've found hallucination rates that are genuinely alarming. In some cases, when asked to cite cases supporting particular legal propositions, the models produced plausible-sounding citations of which 40-60% were hallucinated. In other words, roughly half the citations didn't exist.
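To see what rates in that range would mean for a single filing, here is a back-of-the-envelope sketch. It assumes a hypothetical brief with 10 citations and treats each citation as failing independently, which is a simplification. The function names are illustrative.

```python
def expected_fabricated(n_citations: int, rate: float) -> float:
    """Expected number of fabricated citations at a given hallucination rate."""
    return n_citations * rate


def prob_at_least_one_fabricated(n_citations: int, rate: float) -> float:
    """Chance that at least one citation is fabricated, assuming independence."""
    return 1 - (1 - rate) ** n_citations


# Hypothetical brief length; the rates are the 40-60% range quoted above.
N_CITATIONS = 10

for rate in (0.40, 0.60):
    expected = expected_fabricated(N_CITATIONS, rate)
    at_least_one = prob_at_least_one_fabricated(N_CITATIONS, rate)
    print(f"rate {rate:.0%}: about {expected:.0f} fabricated, "
          f"P(at least one) = {at_least_one:.4f}")
```

Under these assumptions, an unverified brief is close to certain to contain at least one citation that does not exist.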

What's worse is that hallucinations often appear more frequently for more obscure or specific citations. If you ask an LLM to cite a well-known Supreme Court case, it's usually accurate. If you ask it to cite an obscure federal court decision from 2015, it's more likely to hallucinate. This creates an especially dangerous situation for lawyers working on cases involving specialized areas of law where citations might be less mainstream.

The reason this matters for the Feldman case is that it explains how his initial briefs came to contain fabricated citations in the first place. Feldman used Paxton AI to draft portions of his briefs. Paxton AI, like any language model-based tool, can generate plausible-sounding citations that don't actually exist. Then Feldman used other AI tools to verify the citations, which created a false sense of confidence because the verification tools might generate the same hallucinations.

Some AI researchers have proposed solutions to this problem. One approach is to have AI systems decline to cite cases when they're not confident about the citation, rather than generating plausible-sounding hallucinations. Another approach is to have AI systems with actual access to legal databases, so they're genuinely looking up cases rather than generating text. But these solutions require development effort and aren't widely implemented yet.
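The two ideas can be combined in a "look it up or decline" rule. The sketch below is a minimal illustration, not how any particular product works: `KNOWN_CASES` is a placeholder dictionary standing in for an authoritative legal database, and `cite_or_abstain` is a hypothetical function name.

```python
from typing import Dict, Optional

# Stand-in for an authoritative legal database. In practice this would be
# a query against a real research service; these entries are placeholders.
KNOWN_CASES: Dict[str, str] = {
    "PLACEHOLDER-CITATION-A": "Placeholder Case A",
    "PLACEHOLDER-CITATION-B": "Placeholder Case B",
}


def cite_or_abstain(candidate: str, database: Dict[str, str]) -> Optional[str]:
    """Return a formatted citation only if the database confirms it exists.

    If the lookup fails, return None so the caller can decline to cite
    rather than emit a plausible-looking guess.
    """
    if candidate in database:
        return f"{database[candidate]}, {candidate}"
    return None


for candidate in ("PLACEHOLDER-CITATION-A", "NOT-IN-DATABASE"):
    result = cite_or_abstain(candidate, KNOWN_CASES)
    print(result if result is not None else f"declined to cite: {candidate}")
```

The design choice that matters is the `None` branch: a system built this way produces no citation at all when it cannot confirm one.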

In the meantime, the safest approach for lawyers is simple: don't rely on AI for citation verification. Use AI to draft initial content if you want, but then verify every single citation yourself by checking an authoritative legal database. This is the approach that the Feldman ruling essentially mandates, and it's the approach that professional responsibility rules require.

Estimated data suggests Rule 1.1 and Rule 3.3 are highly relevant in the context of AI use in legal practice, emphasizing the need for competence and honesty.

Judge Failla's Reasoning: What the Ruling Actually Says

Judge Katherine Polk Failla's order in the Feldman case is worth reading carefully because it provides crucial guidance for how courts are likely to view AI misuse going forward. The judge was clearly frustrated by what she saw, but she was also systematic in her reasoning.

First, the judge established that fake citations existed in Feldman's initial filings. This was verifiable fact. The citations didn't exist when the judge's staff checked them. Feldman admitted this in his declaration and later testified about it. There was no dispute on this point.

Second, the judge noted that when asked to correct the filings, Feldman submitted revised versions. But these revised versions contained new problems: stylistically odd passages that appeared to be AI-generated. The judge specifically highlighted the Ray Bradbury quote and the Ashurbanipal passage as examples of text that didn't match Feldman's demonstrated writing style in other parts of his filings.

This is where the judge's reasoning becomes particularly important. The judge essentially said: I can recognize AI-generated prose when I see it. It has distinctive characteristics. It's overly flowery. It uses elaborate metaphors. It references obscure historical examples. It doesn't sound like a real lawyer writing in a professional context.

The judge noted that she found it "extremely difficult to believe" that Feldman had written these passages himself. His own testimony about reading Fahrenheit 451 years ago and wanting to include personal touches in the filing wasn't credible. The judge suggested that what had actually happened was that Feldman had used AI to draft the passages, then realized the citations were fake and removed them, but kept the AI-generated prose intact either because he didn't notice how bad it was or because he wanted to downplay the extent to which he'd relied on AI.

The judge also noted that Feldman's explanation for using multiple AI tools to verify citations was problematic. He couldn't explain why using three different AI systems would be better than one competent human being personally reviewing the citations. And when the judge raised this point, Feldman's answers were evasive.

Based on this reasoning, the judge concluded that Feldman had misused AI tools, violated his professional responsibility obligations, and then been less than candid about the extent of his reliance on AI when questioned about it. The combination of these factors warranted the extraordinary sanction of case dismissal.

It's worth noting that Judge Failla didn't just dismiss the case. She wrote a detailed opinion explaining her reasoning. This is significant because it means other judges now have guidance for how to handle similar situations. The opinion serves as a precedent not just for sanctions, but for how courts will evaluate allegations of AI misuse in legal practice.

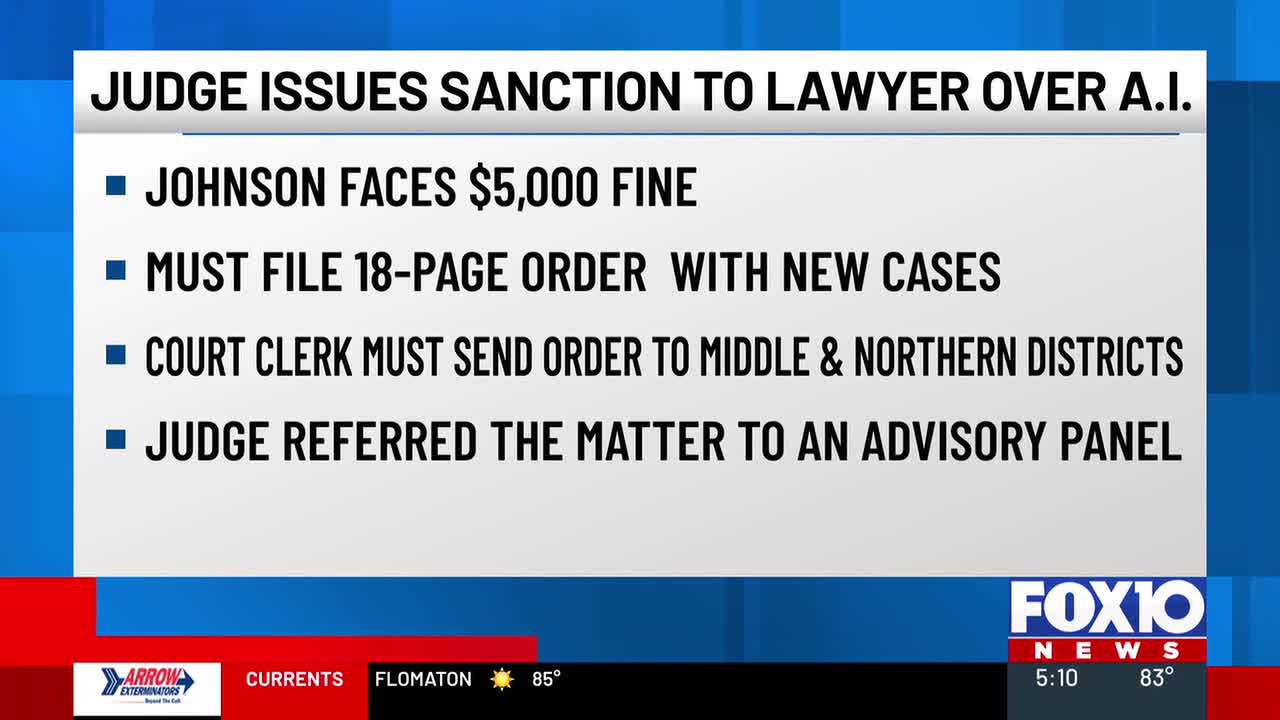

The judge also noted in her order that there were alternative sanctions that might have been appropriate in other circumstances. She could have imposed monetary sanctions. She could have referred the matter to the bar for discipline. She could have allowed the case to proceed but with restrictions on Feldman's ability to file further documents. The fact that she chose the harshest sanction available suggests that she saw something particularly egregious in Feldman's conduct beyond mere negligence.

The Question of Credibility: Did Feldman Actually Write Those Passages?

One of the most contentious aspects of the Feldman case is the question of whether Feldman actually wrote the problematic passages himself or whether AI wrote them and Feldman is simply being dishonest about it. Judge Failla clearly believed the latter, but Feldman testified to the contrary.

This raises an interesting question: how can you prove that someone used AI to write something when they deny it? Writing style analysis can be helpful, but it's not definitive. Feldman could genuinely write in an overly flowery style when he's in a reflective mood. He could have deliberately adopted a more literary tone to apologize for his mistakes.

But the judge's view was that the probability of this explanation was very low. The passages in question were so stylistically distinct from Feldman's other writing that the most plausible explanation was AI generation. The judge noted that the Bradbury passage seemed designed specifically to apologize for the citation errors while employing a metaphor about touching clay and leaving marks, which connects thematically to the issue of citations.

The judge also noted that the Ashurbanipal passage similarly seemed designed to acknowledge the scope of Feldman's failures while employing extensive literary and historical metaphors. Both passages seemed like they might be responses to a prompt like "write an apology for missing citations in a legal filing, using literary and historical references."

This is where AI-generated content becomes genuinely hard to defend. If you ask an AI system to write something, and then submit that text to a court, and then claim you wrote it yourself, you're potentially committing fraud. You're making a misrepresentation to the court about the origin of the document.

Feldman's position was that he wrote the passages himself. But the judge didn't believe him. The judge found his testimony unconvincing and his explanations inadequate. The judge essentially said: I'm not persuaded that you wrote this, and the most plausible explanation is that you used AI and are now being evasive about it.

This creates a kind of credibility paradox for lawyers who use AI. If you use AI to draft content and then submit it to a court, you need to do at least one of the following:

- Disclose the use of AI where disclosure is required

- Personally edit and modify the AI content so heavily that it becomes genuinely your own work

- Be honest when questioned about whether you used AI

Feldman apparently did none of these. He used AI, didn't modify the content significantly, and then claimed he wrote it himself when questioned. This combination of factors made his conduct particularly problematic in the judge's view.

The broader lesson here is that using AI in legal practice isn't necessarily wrong. But if you do use it, you need to be honest about it. You need to personally verify the output. And you absolutely cannot lie about whether you used it when asked directly by a court.

Law firms show the highest intensity in responding to AI use post-Feldman ruling, focusing on stricter policies and verification processes. Estimated data.

The Ripple Effects: How the Legal Profession Is Responding

The Feldman ruling has already begun to ripple through the legal profession, creating pressure for clearer standards and stricter policies around AI use. Law firms are responding in different ways, but the overall trend is toward more caution and more rigor in how AI is deployed in legal practice.

Some firms have implemented new policies requiring that any AI-generated content be reviewed by a partner before submission. Some firms have prohibited the use of generative AI for citation-critical tasks entirely. Some firms are using specialized legal AI tools that have database access rather than general-purpose language models that are more prone to hallucination.

Bar associations are also responding. Several state bars have issued ethics opinions addressing AI use. The consensus so far is that lawyers are responsible for the output of AI tools they use, and that they must verify the accuracy of AI-generated content before submitting it to courts. But bars are also beginning to discuss whether more specific guidance is needed.

Law schools are starting to incorporate AI ethics into their curricula. Students are learning about the risks of AI-generated hallucinations and the importance of verification. Some schools are explicitly teaching students about cases like Feldman as cautionary tales.

The broader technology sector is also responding. AI tool developers are working on systems that can identify when their own output might be unreliable. Some legal AI tools are adding verification features that check citations against legal databases before presenting them as verified. But these systems are still emerging and aren't universally available.

One interesting development is the emergence of "AI compliance" as a service within law firms. Some firms are hiring specialists whose job is specifically to audit AI-generated content before it goes to courts. This creates overhead, but it's cheaper than the alternative of losing cases or facing bar discipline.

The insurance industry is also paying attention. Legal malpractice insurers are starting to require proof that law firms have appropriate policies around AI use before offering coverage. Some insurers have explicitly excluded coverage for AI-related malpractice unless firms can demonstrate proper verification procedures.

All of this represents a maturation of how the legal profession thinks about AI. The early phase, where lawyers adopted AI tools without understanding their limitations, appears to be ending. The phase where the profession is establishing standards and requiring verification is beginning.

What Lawyers Need to Do Right Now

If you're a lawyer and you use AI tools in your practice, the Feldman ruling creates some clear imperatives. These aren't suggestions. They're lessons drawn from a case where the consequences were severe.

First, understand your tools. If you use AI to assist with legal work, you need to know what the tool is designed to do, what its limitations are, and what it's not designed to do. Read the documentation. Understand how the tool handles citations. Know whether it has access to legal databases or whether it's generating text based on patterns.

Second, implement verification procedures. Before submitting anything that contains citations to a court, personally verify those citations in an authoritative legal database. Don't rely on AI to verify citations. Don't assume that using multiple AI tools to verify each other creates reliable results. Do the verification yourself.

Third, maintain professional responsibility standards. Your obligation to be honest with courts and to provide competent representation doesn't change just because you're using AI. If anything, it becomes more important because you need to ensure the AI output meets these standards.

Fourth, be transparent about AI use if questioned. If a court or opposing counsel asks whether you used AI, answer honestly. Don't try to hide it. Don't claim you wrote content yourself if you used AI to generate it and then only minimally edited it. Transparency is safer than evasion.

Fifth, document your verification process. If you use AI to draft content, keep records of how you verified it. Keep records of when you checked citations. Keep records of what changes you made to the AI-generated content. This documentation can protect you if your verification process is ever questioned.

Sixth, consider the risks versus the benefits. AI tools can help with legal research, drafting, and organization. But the risks of relying on them for citation-critical tasks outweigh the efficiency gains. Be realistic about where AI is useful and where it creates liability.

These aren't complicated requirements, but they do require discipline and care. The Feldman ruling suggests that courts will not be forgiving of lawyers who cut corners on these requirements.
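The verification and documentation steps above could be tracked with something as simple as the following. This is a minimal sketch, not a prescribed procedure: the class names, fields, and placeholder citations are all hypothetical, and a real firm would adapt the idea to its own filing workflow. The point is only that a human check is recorded for every citation, and nothing is filed while any citation lacks one.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class CitationCheck:
    """One citation plus the record of a person verifying it (hypothetical structure)."""
    citation: str
    verified_by: Optional[str] = None       # who personally checked it
    source: Optional[str] = None            # database where it was confirmed
    checked_at: Optional[datetime] = None   # when the check happened

    def mark_verified(self, person: str, source: str) -> None:
        """Record that a human confirmed this citation in a named source."""
        self.verified_by = person
        self.source = source
        self.checked_at = datetime.now(timezone.utc)

    @property
    def is_verified(self) -> bool:
        return self.verified_by is not None and self.source is not None


@dataclass
class FilingChecklist:
    """All citations in one document, with a gate that blocks filing."""
    title: str
    checks: List[CitationCheck] = field(default_factory=list)

    def unverified(self) -> List[str]:
        """Citations that no human has confirmed yet."""
        return [c.citation for c in self.checks if not c.is_verified]

    def ready_to_file(self) -> bool:
        """True only when every citation has a recorded human check."""
        return not self.unverified()


# Usage with placeholder citations (not real cases).
brief = FilingChecklist("Reply brief (hypothetical)")
brief.checks.append(CitationCheck("Placeholder Case A"))
brief.checks.append(CitationCheck("Placeholder Case B"))
brief.checks[0].mark_verified("A. Attorney", "authoritative legal database")

print(brief.ready_to_file())  # False
print(brief.unverified())     # ['Placeholder Case B']
```

The record it leaves behind (who checked what, where, and when) is the kind of documentation the fifth point describes. It doesn't do the checking for you; a person still has to look up each case.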

Figure: Estimated influence of AI on legal responsibilities, most pronounced for representation accuracy and professional responsibility (estimated data).

The Broader Question: What Does AI Ethics Look Like in the Legal Profession?

The Feldman case raises a broader question about what responsible AI use looks like in a profession where accuracy and trust are fundamental. The legal profession is built on the principle that lawyers have a duty to be honest with courts and to provide competent representation. AI tools introduce new ways that lawyers can fail these duties, sometimes without even realizing it.

A key part of AI ethics in legal practice is understanding that good intentions aren't sufficient. Feldman may have genuinely believed he was using AI responsibly. He may have thought that using multiple AI tools to verify citations was a sound approach. But intention doesn't matter if the result is fake citations in a court filing.

AI ethics in legal practice also requires understanding the difference between using AI as a helper and using it as a decision-maker. Using AI to organize research or suggest relevant cases is different from using AI as your primary citation verification mechanism. The first is using AI as a tool. The second is abdicating professional responsibility.

There's also a question of transparency. If AI is going to be part of legal practice, courts and clients need to know about it. Some clients might prefer that AI not be used in their cases. Some courts might want to know about AI use. Transparency is part of maintaining trust in the legal system.

The Feldman ruling seems to set a marker for what responsible AI use looks like in the legal profession: it means understanding your tools, verifying output, maintaining professional standards, and being honest about what you've done. It means that AI can help you work more efficiently, but it cannot replace human judgment and professional responsibility.

Looking Forward: What Changes Are Coming?

The Feldman ruling is likely to accelerate several trends that were already underway in the legal profession.

Stricter bar guidelines on AI use. State bars are likely to issue more specific guidance about how lawyers can and cannot use AI. We'll probably see explicit requirements for citation verification, disclosure requirements for AI use in certain contexts, and clear standards for what constitutes malpractice when AI is involved.

Evolution of AI tools themselves. Developers of legal AI tools will likely invest in features that reduce hallucinations, that verify citations against databases before presenting them, and that make it easier for lawyers to audit AI output. The tools will get better at distinguishing between what they're confident about and what they're uncertain about.

Insurance and liability issues. Legal malpractice insurers will likely continue to tighten requirements around AI use. We may see a situation where firms can't get malpractice coverage unless they implement specific AI verification procedures. This creates strong financial incentives for doing things right.

Educational changes. Law schools will incorporate AI ethics into their curricula more systematically. Students will learn not just about the benefits of AI in legal practice, but about the risks and the ethical obligations that come with using it.

Technology solutions. Companies will emerge to provide AI auditing, verification, and compliance services. These services will check legal documents for AI-generated content, verify citations, and ensure compliance with emerging standards.

Regulation and standards. There may be broader regulatory efforts to establish standards for legal AI tools. Some jurisdictions might require certification or testing of AI tools before they can be used in legal practice. This would parallel how other professions regulate technology.

All of these changes reflect the legal profession's recognition that AI is here, it's powerful, and it needs to be managed carefully. The Feldman ruling is a turning point that accelerates this recognition and pushes the profession toward more responsible practices.

Lessons for Other Professionals Using AI

While the Feldman case is specifically about lawyers, the lessons extend to other professionals who use AI tools. Doctors, accountants, engineers, and consultants all face similar risks when they rely on AI without understanding its limitations.

The core lesson is this: AI is a tool that amplifies your capabilities, but it doesn't replace your professional judgment or your responsibility for the work you produce. If you're an accountant and you use AI to prepare financial statements, you're responsible for the accuracy of those statements. If you're a doctor and you use AI to assist with diagnosis, you're responsible for ensuring the diagnosis is correct. If you're an engineer and you use AI to check designs, you're responsible for ensuring the designs are safe.

This means that any professional using AI needs to understand the tool's limitations, implement verification procedures, maintain the standards of their profession, and be transparent about how they're using AI. These principles apply across professional boundaries.

It also means that professional ethics rules, licensing requirements, and liability standards will all need to evolve to address AI use. This process is just beginning, and the Feldman case is an important marker of how courts and regulators are likely to view AI misuse.

Conclusion: The Feldman Case as a Watershed Moment

The Feldman case is historically significant not because it's the first case involving AI-generated fake citations in legal practice, but because a federal judge took such dramatic action in response. Judge Failla essentially said: using AI carelessly in legal practice is not just a mistake. It's a violation of professional responsibility. It can result in the loss of cases, bar discipline, and damage to professional reputation.

For lawyers, the ruling creates clear expectations: if you use AI to assist with legal work, you are responsible for verifying the output. You cannot delegate citation verification to AI systems. You cannot submit documents containing fabricated citations to courts. You cannot lie about whether you used AI when questioned. These aren't suggestions. They're professional responsibility requirements.

The ruling also sends a message to the broader profession about the importance of understanding AI before deploying it. The legal profession, like other fields, is under pressure to embrace new technologies to improve efficiency and reduce costs. But this pressure cannot be allowed to override professional responsibility standards and ethical obligations.

What makes the Feldman case particularly important is that it provides a clear cautionary tale with real consequences. Feldman lost his case. His professional reputation was damaged. He faced the possibility of bar discipline. All because he made assumptions about what AI could do safely and then didn't implement appropriate verification procedures.

The legal profession is now in a period of adjustment. Bar associations are issuing guidance. Law firms are implementing new policies. Technology companies are improving their tools. Law schools are teaching AI ethics. All of this is happening in response to cases like Feldman, where things went wrong.

The broader lesson for the profession is that AI adoption needs to be thoughtful and careful. It's fine to use AI to improve efficiency. It's fine to use AI to assist with research, drafting, and organization. But it's not fine to use AI as a replacement for professional judgment. It's not fine to deploy AI without understanding what it can and cannot do reliably. And it's absolutely not fine to be dishonest about the extent to which you've relied on AI.

The Feldman ruling establishes a clear standard: professional responsibility doesn't disappear just because you're using artificial intelligence. In fact, it becomes more important, because you need to ensure that the technology you're using meets the standards your profession demands.

FAQ

What exactly did Steven Feldman do wrong in his legal filings?

Feldman submitted federal court briefs that contained multiple fabricated case citations. When asked to correct them, he submitted revised filings that contained AI-generated prose: overly flowery passages with literary quotations and historical metaphors that didn't match his normal writing style. When confronted about this, he claimed he had written the passages himself, which the judge found unconvincing. The combination of fake citations, AI-generated content, and dishonest explanations led to the case's dismissal.

How can large language models generate fake citations that sound real?

Large language models like ChatGPT generate text by predicting the next word in a sequence based on patterns learned from their training data. When asked to cite a legal case, the model doesn't actually look up the case. Instead, it generates text that matches the pattern of how legal citations typically look. If you ask for a citation supporting a particular legal principle, the model will generate plausible-sounding text with case names, volume numbers, and page numbers that match the format of real citations. But that doesn't mean the case actually exists. Researchers have found hallucination rates of 40-60% when testing language models on citation tasks.
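The gap between "looks like a citation" and "is a citation" is easy to see in code. Here's a minimal sketch, with several assumptions: the regular expression covers only a narrow slice of federal reporter formats, the `KNOWN_CITATIONS` set is a tiny stand-in for an authoritative legal database, and the second citation is invented for the example (note the impossible year). A format check passes both; only the lookup tells them apart.

```python
import re
from typing import Set

# Simplified pattern for citations such as "123 F.3d 456 (2d Cir. 1999)".
# An assumption for illustration only; real citation formats vary far more.
CITATION_PATTERN = re.compile(
    r"^\d{1,4} (U\.S\.|F\.(2d|3d|4th)?|F\. Supp\.( 2d| 3d)?) \d{1,4} \((.+ )?\d{4}\)$"
)

# Stand-in for an authoritative database. The single entry is the reporter
# citation for Brown v. Board of Education.
KNOWN_CITATIONS: Set[str] = {"347 U.S. 483 (1954)"}


def looks_like_citation(text: str) -> bool:
    """True if the text merely matches the *format* of a citation."""
    return CITATION_PATTERN.match(text) is not None


def exists_in_database(text: str, database: Set[str]) -> bool:
    """True only if the citation is actually found in the database."""
    return text in database


real = "347 U.S. 483 (1954)"
invented = "999 F.3d 999 (2d Cir. 2099)"  # made up for this example

for cite in (real, invented):
    print(cite, looks_like_citation(cite), exists_in_database(cite, KNOWN_CITATIONS))
# 347 U.S. 483 (1954) True True
# 999 F.3d 999 (2d Cir. 2099) True False
```

A language model's output is only ever held to the first test, the pattern. Whether the case exists is a separate question that has to be answered by a lookup.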

What are the professional responsibility rules that Feldman violated?

Feldman violated several rules of professional conduct (numbered here as in the ABA Model Rules of Professional Conduct). Rule 3.3 requires lawyers not to make false statements of fact or law to a tribunal. By submitting briefs with fabricated citations, he violated this rule. Rule 1.1 requires lawyers to provide competent representation, which includes understanding the tools they use. Rule 8.4 prohibits conduct prejudicial to the administration of justice. By submitting false citations to a federal court, Feldman violated all of these rules. The judge also emphasized the principle of "candor toward the tribunal," which requires lawyers to be honest with courts.

Can lawyers use AI tools in their legal practice?

Yes, lawyers can use AI tools to assist with legal work. They can use AI to help draft initial versions of documents, to suggest research directions, to organize information, and to identify potentially relevant case law. However, lawyers remain responsible for verifying the accuracy of any AI-generated content before submitting it to courts. Specifically, every citation must be manually verified in an authoritative legal database. AI cannot be used as the sole source of citation verification. Any use of AI that results in submitting false information to a court violates professional responsibility rules and can result in sanctions.

What should law firms do to ensure responsible AI use?

Law firms should implement clear policies about AI use that include several elements. First, establish which tools are approved for legal work and which are not. Second, require manual verification of all citations in briefs and motions using authoritative legal databases. Third, train lawyers on the limitations of AI tools, particularly the problem of hallucinations with citations. Fourth, consider implementing AI audits where documents are reviewed before filing to ensure they comply with professional standards. Fifth, keep documentation of how AI was used and how documents were verified. Sixth, maintain transparency about AI use if courts or clients ask about it.

How are state bar associations responding to the Feldman case?

State bars are issuing guidance about AI use in legal practice. The New York State Bar Association has issued ethics opinions clarifying that lawyers are responsible for AI-generated content they submit to courts. The American Bar Association issued Formal Opinion 512 in July 2024 addressing generative AI use, which emphasizes that lawyers must understand and verify AI output. Bar associations are also discussing whether additional guidance is needed regarding citation verification, disclosure requirements, and what constitutes malpractice when AI is involved. Some bars are considering mandatory CLE courses on AI ethics.

What does "hallucination" mean in the context of AI?

Hallucination refers to when an AI system generates false information with confidence. In the case of legal citations, this means the AI generates what looks like a legitimate case citation but the case doesn't actually exist. The AI isn't intentionally lying. Rather, it's generating plausible-sounding text based on patterns in its training data without actually verifying that what it's generating is factually accurate. Hallucinations are a known limitation of current AI systems and are particularly problematic in domains where accuracy is critical, like law.

Can judges tell if content was AI-generated?

Judges can often identify AI-generated content through stylistic analysis, particularly if the AI-generated content is inserted into otherwise normal legal prose. The AI-generated passages in Feldman's briefs were notable for being overly flowery, containing elaborate metaphors, referencing obscure historical examples, and generally sounding unnatural compared to standard legal writing. However, as AI systems improve, they may become harder to identify through stylistic analysis alone. This is why verification procedures are more important than relying on judges to catch AI misuse.

What are the potential consequences of misusing AI in legal practice?

The Feldman case demonstrates that consequences can be severe. Feldman lost his case entirely through dismissal with prejudice, meaning he cannot refile. He may face bar discipline, including possible suspension or disbarment. His professional reputation has been damaged. He may face malpractice liability to his client. Additionally, he may face sanctions and attorney fees. For other lawyers, consequences of AI misuse could include monetary sanctions, restrictions on filing documents, referral to bar disciplinary authorities, or dismissal of cases.

Are there specialized AI tools for legal research that are better than general-purpose AI?

Yes, some legal AI tools are specifically designed for legal research and have capabilities that general-purpose language models don't have. These tools often have access to legal databases, allowing them to actually verify citations rather than just generating plausible-sounding text. Tools like Lexis+ AI, Westlaw's AI-Assisted Research, and specialized legal platforms generally perform better on citation tasks than ChatGPT. However, even specialized legal tools should not be used as your only verification mechanism. Manual verification in an authoritative legal database remains the gold standard.

How might AI use in legal practice evolve over the next 5-10 years?

Experts expect several developments. AI tools will likely become more specialized and better at legal tasks. Bar associations will likely issue clearer, more specific guidance about AI use. Professional liability insurance will likely require proof of appropriate AI verification procedures. Law schools will increasingly teach AI ethics. There may be regulatory requirements for certification or testing of legal AI tools. Using AI without implementing appropriate verification procedures will likely come to be considered malpractice rather than just poor practice. The profession will likely move toward a state where AI is accepted and used widely, but within strict guardrails that protect against misuse.

TL;DR

- Fake Citations Cost Cases: Judge Katherine Polk Failla terminated an entire lawsuit after discovering attorney Steven Feldman had submitted briefs containing fabricated case citations generated by AI tools, making this the most severe sanction ever imposed for AI misuse in legal practice.

- AI Tools Can Hallucinate: Large language models like ChatGPT generate plausible-sounding citations that don't actually exist, with hallucination rates of 40-60% on citation tasks. Using multiple AI tools to verify citations doesn't eliminate this problem.

- Professional Responsibility Trumps Efficiency: Lawyers are responsible for the accuracy of everything they submit to courts, regardless of whether they used AI to help create it. Understanding AI limitations and implementing manual verification procedures is non-negotiable.

- Dishonesty Compounds the Problem: Feldman's initial mistake (relying on AI for citation verification) became catastrophic when he submitted revised briefs containing obviously AI-generated prose and then claimed he had written the passages himself.

- The Profession Is Responding: State bar associations are issuing guidance, law firms are implementing stricter AI policies, and malpractice insurers are requiring proof of appropriate verification procedures, signaling a shift toward more responsible AI use across the legal profession.

Key Takeaways

- Federal judge Katherine Polk Failla terminated a case entirely after lawyer Steven Feldman misused AI tools that generated fake citations and then failed to correct the problem when given the chance

- Large language models can generate plausible-sounding legal citations with 40-60% hallucination rates, making them dangerous for citation-critical legal tasks

- Feldman violated multiple professional responsibility rules: submitting false citations to court, failing to understand his tools, and being dishonest when questioned about AI use

- Using multiple AI tools to verify each other's work doesn't eliminate hallucinations because the tools share similar underlying patterns and limitations

- The legal profession is responding with stricter bar guidance, law firm policies requiring manual citation verification, and insurance requirements for AI compliance procedures

![Judge Tosses Case Over AI Misuse: The Feldman Ruling [2025]](https://tryrunable.com/blog/judge-tosses-case-over-ai-misuse-the-feldman-ruling-2025/image-1-1770419324873.jpg)