Introduction

In recent months, the LangChain framework has come under scrutiny for several high-severity security vulnerabilities that have raised concerns across the enterprise tech ecosystem. These vulnerabilities expose different classes of enterprise data (files, secrets, and conversations), putting organizations that rely on LangChain for AI-powered solutions at risk. As an expert in the field, I'm here to break down what these vulnerabilities mean for businesses and how they can safeguard their data and operations.

TL;DR

- Multiple Vulnerabilities Identified: LangChain has three key security flaws exposing files, secrets, and conversations.

- Data Exposed: Each vulnerability targets different types of enterprise data, increasing risks.

- Immediate Patches Required: Organizations must apply the latest patches to secure their systems.

- Implement Best Practices: Adopt security best practices to mitigate future risks.

- Future Trends: AI frameworks must prioritize security to maintain trust.

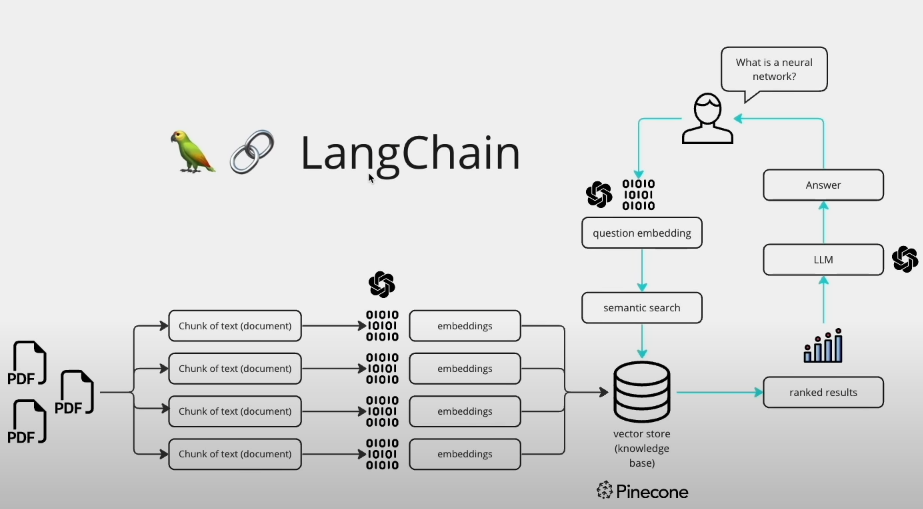

Understanding LangChain and Its Importance

LangChain is a framework designed to streamline the development of AI-driven applications. It provides tools and libraries that let developers integrate complex AI functionality into their applications without extensive expertise in AI or machine learning.

Key Features of LangChain

- Modular Design: Allows developers to customize and extend functionalities as needed.

- AI Integration: Simplifies the integration of AI capabilities into existing systems.

- Scalability: Designed to handle large datasets and high traffic with ease.

- Community Support: Backed by a strong community that contributes to its ongoing development and improvement.

The Security Vulnerabilities

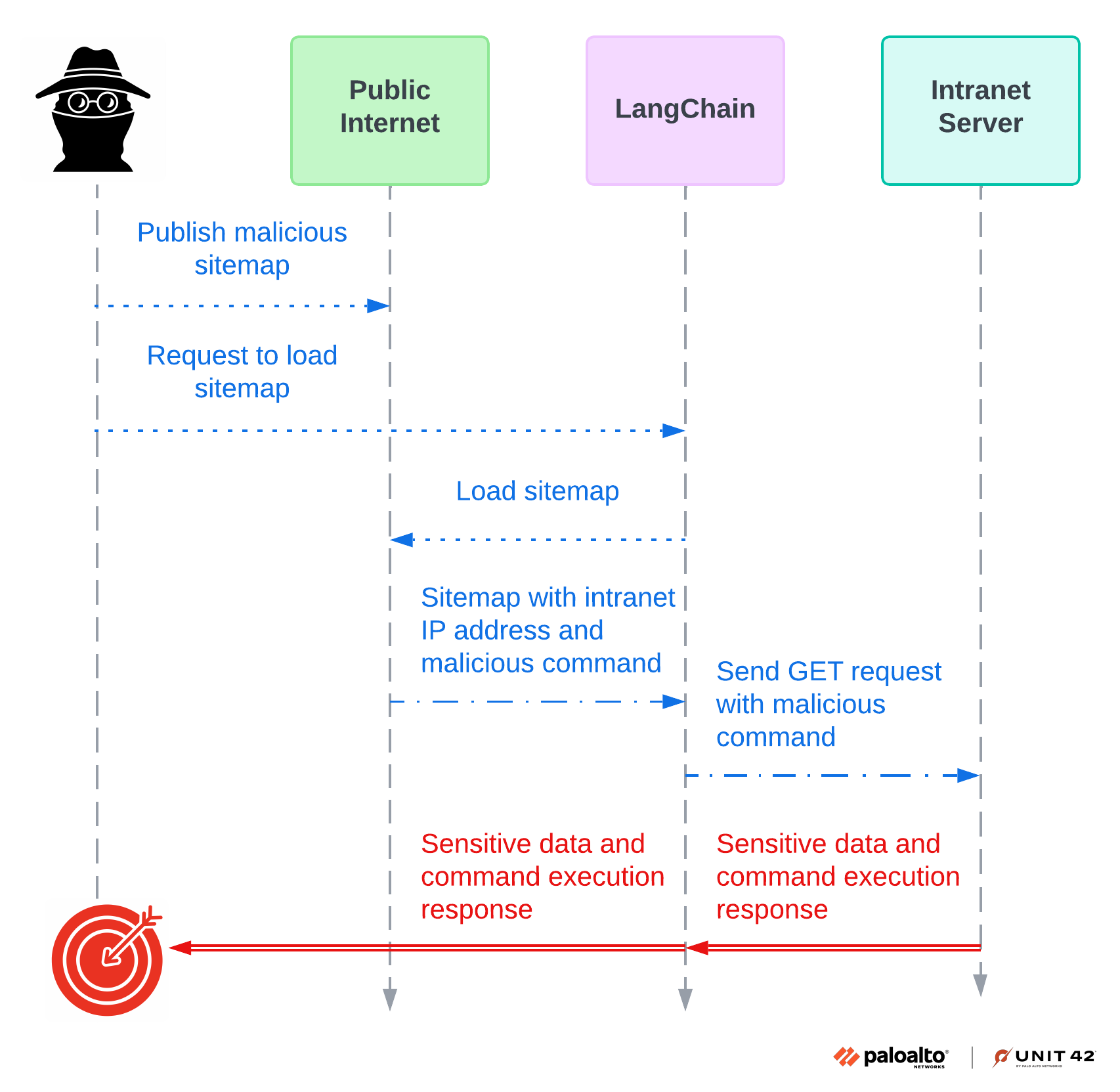

Vulnerability 1: Unauthorized File Access

The first vulnerability involves unauthorized file access. This flaw allows attackers to gain access to sensitive files stored within the system. File exposure can lead to data breaches, which can have severe implications for businesses, including loss of intellectual property and customer trust. According to Palo Alto Networks, unauthorized file access is a critical concern for enterprises using AI frameworks.

Example Scenario

Imagine a financial institution using LangChain to process customer transactions. An attacker exploiting this vulnerability could access confidential financial records, causing a major security incident.

Mitigation Steps

- Apply Patches: Ensure all updates and patches provided by LangChain are promptly applied.

- Implement Access Controls: Use role-based access controls (RBAC) to limit file access to authorized users only.

- Encryption: Encrypt sensitive data both at rest and in transit to prevent unauthorized access.
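The access-control step above can be sketched in a few lines. This is a minimal illustration, assuming the application only ever needs to read files under one allowed directory; `ALLOWED_ROOT` and `resolve_safe_path` are hypothetical names, not part of LangChain. It is a generic guard against paths that escape the allowed directory and does not describe how the reported flaw is triggered.

```python
from pathlib import Path

# Hypothetical directory the application is allowed to read from.
ALLOWED_ROOT = Path("/srv/app/data")


def resolve_safe_path(user_path: str, root: Path = ALLOWED_ROOT) -> Path:
    """Resolve user_path under root and reject anything that escapes it."""
    root = root.resolve()
    # Resolving collapses ".." segments and follows symlinks before the check.
    candidate = (root / user_path).resolve()
    # Path.is_relative_to requires Python 3.9 or newer.
    if not candidate.is_relative_to(root):
        raise PermissionError(f"Path escapes allowed directory: {user_path}")
    return candidate
```

Calling `resolve_safe_path` before every file read keeps the check in one place, so a request such as `../../etc/passwd` is rejected before any file is opened.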

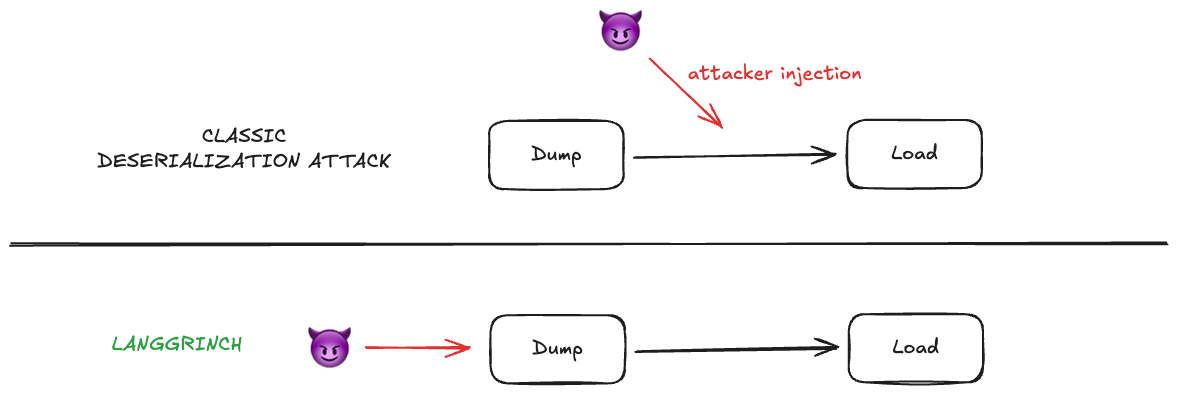

Vulnerability 2: Secret Leaks

The second vulnerability involves the leakage of secrets, such as API keys and passwords. Attackers can exploit this flaw to gain unauthorized access to systems or services, leading to potential data breaches or service disruptions. Research by Unit 42 highlights the dangers of secret leaks in AI systems.

Example Scenario

A company using LangChain for its cloud services might store API keys in the system. If these keys are exposed, an attacker could manipulate cloud resources, leading to unauthorized data access or even service outages.

Mitigation Steps

- Secret Management: Utilize secure secret management solutions, such as HashiCorp Vault, to store and manage sensitive information.

- Regular Audits: Conduct regular security audits to identify and address potential vulnerabilities.

- Environment Variables: Avoid hardcoding secrets and use environment variables instead.
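As a rough sketch of the environment-variable approach, the helpers below read a secret at startup, fail fast when it is missing, and produce a masked form that is safe to log. The names `load_secret` and `mask` and the variable `DEMO_API_KEY` are assumptions made for illustration.

```python
import os


def load_secret(name: str) -> str:
    """Read a secret from the environment and fail fast if it is missing."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Required secret {name} is not set")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Return a log-safe form of a secret showing only its last few characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

A typical call would be `api_key = load_secret("DEMO_API_KEY")`, with `mask(api_key)` used anywhere the value might otherwise reach a log line.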

Vulnerability 3: Conversation Exposure

The third vulnerability involves the exposure of conversations processed by LangChain. This flaw can lead to the leakage of sensitive information exchanged during AI-driven interactions, such as customer support chats or personal assistant commands. Unit 42's analysis underscores the risks associated with conversation exposure in AI frameworks.

Example Scenario

Consider a healthcare application using LangChain to manage patient interactions. If conversation data is exposed, sensitive patient information could be leaked, violating privacy regulations like HIPAA.

Mitigation Steps

- Data Anonymization: Anonymize conversation data to protect personally identifiable information (PII).

- Logging Controls: Implement strict logging controls to monitor and secure chat logs.

- Access Restrictions: Limit access to conversation data to authorized personnel only.
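The anonymization step can be approximated by redacting obvious identifiers before a conversation is stored or logged. The sketch below is an assumption-laden illustration: it covers only email addresses and US-style phone numbers with simple regular expressions, and `redact_pii` is a hypothetical helper. Production systems usually need a dedicated PII detection tool.

```python
import re

# Simple email pattern; good enough for a demonstration, not a full RFC 5322 parser.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Loose pattern for US-style numbers such as 555-123-4567 (an assumption for illustration).
PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact_pii(text: str) -> str:
    """Replace emails and phone numbers with placeholders before storage."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```

Applying `redact_pii` at the point where messages are written to chat logs means that a later log exposure reveals placeholders rather than the identifiers themselves.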

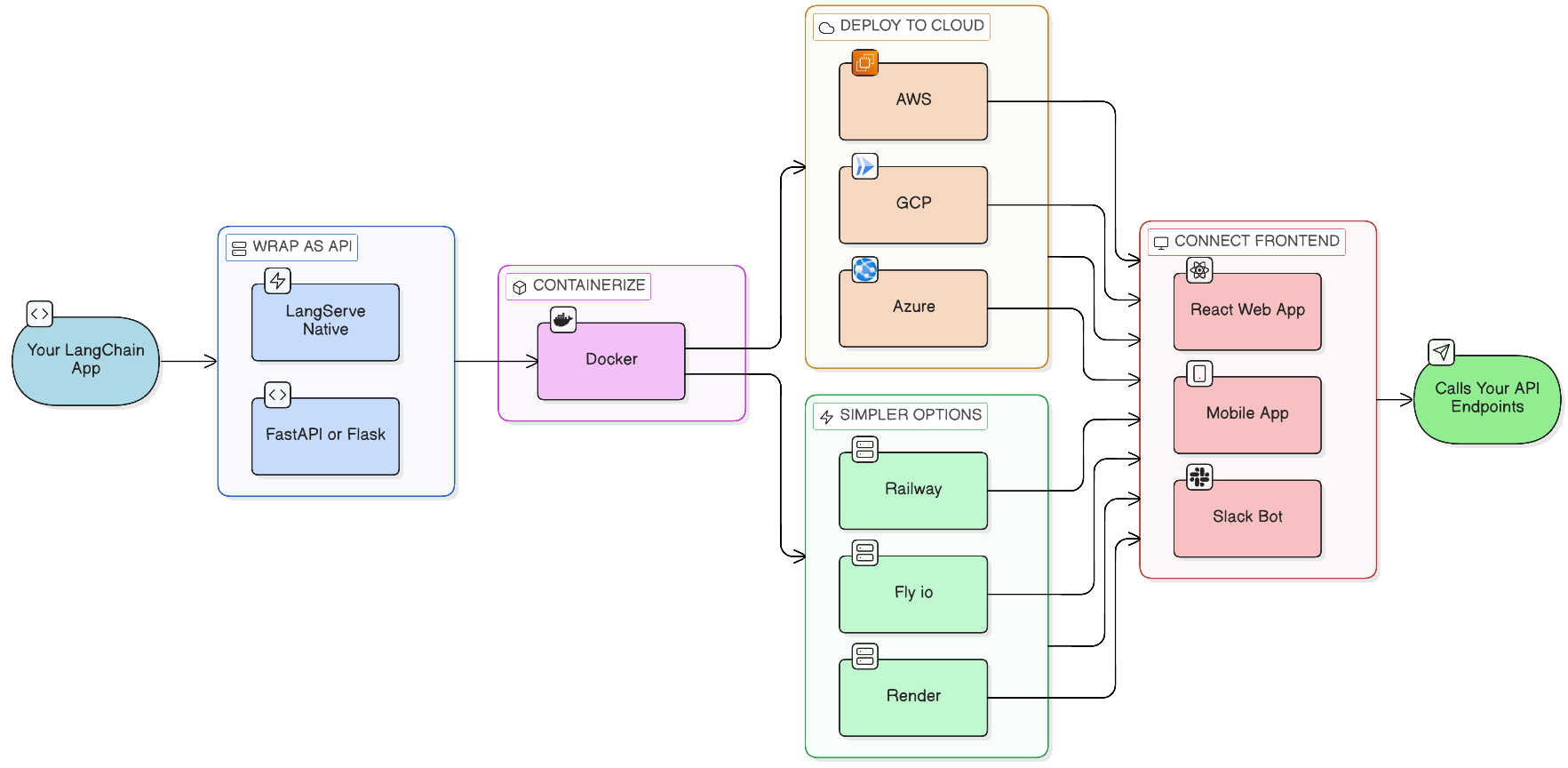

Practical Implementation Guides

Securing LangChain Deployments

Securing LangChain deployments involves a multi-layered approach that combines technology, processes, and people.

- Network Security: Implement firewalls and intrusion detection/prevention systems (IDPS) to monitor and protect network traffic.

- Regular Patching: Keep all software, including LangChain, up to date with the latest security patches.

- Monitoring and Logging: Utilize comprehensive monitoring and logging solutions to detect and respond to suspicious activities.

- Employee Training: Educate employees about security best practices and how to recognize potential threats.
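The patching item above lends itself to an automated check at startup or in CI. The following is a minimal sketch, assuming the minimum safe version is taken from the relevant security advisory; the functions are hypothetical helpers, and pre-release suffixes such as `rc1` are ignored for simplicity.

```python
import re
from importlib import metadata


def parse_version(version: str) -> tuple:
    """Extract the leading numeric components, e.g. '0.3.27rc1' -> (0, 3, 27)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"Unrecognized version string: {version}")
    return tuple(int(part) for part in match.group().split("."))


def is_patched(installed: str, minimum: str) -> bool:
    """Compare versions numerically, padding with zeros so '1.0' equals '1.0.0'."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_package(package: str, minimum: str) -> bool:
    """Return True only if the package is installed and meets the minimum version."""
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return False
    return is_patched(installed, minimum)
```

A deployment script could call `check_package("langchain", minimum)` with the version named in the advisory and refuse to start when it returns `False`.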

Code Example: Securing API Keys

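```python
import os

import hvac  # HashiCorp Vault client; stands in for the original's nonexistent `vault` module

# Initialize the Vault client from environment variables (never hardcode the token)
client = hvac.Client(url=os.environ["VAULT_ADDR"], token=os.environ["VAULT_TOKEN"])

# Retrieve the API key from the KV v2 engine; the path and the "value" field are assumptions
response = client.secrets.kv.v2.read_secret_version(path="my_api_key")
api_key = response["data"]["data"]["value"]

# Use the API key in the application, but never print or log the secret itself
print(f"API key loaded ({len(api_key)} characters)")
```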

Common Pitfalls and Solutions

Pitfall 1: Inadequate Access Controls

Many organizations fail to implement robust access controls, leading to unauthorized access to sensitive information.

Solution: Implement role-based access controls (RBAC) and regularly review access permissions to ensure they align with job responsibilities.
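A role-based check with a review helper might look like the sketch below. The roles, permissions, and function names are all hypothetical; the point is the deny-by-default lookup and the ability to list who holds a permission during an access review.

```python
from enum import Enum


class Permission(Enum):
    READ_FILES = "read_files"
    READ_CONVERSATIONS = "read_conversations"
    MANAGE_SECRETS = "manage_secrets"


# Hypothetical role-to-permission mapping; real roles should mirror job responsibilities.
ROLE_PERMISSIONS = {
    "support_agent": {Permission.READ_CONVERSATIONS},
    "developer": {Permission.READ_FILES},
    "security_admin": {
        Permission.READ_FILES,
        Permission.READ_CONVERSATIONS,
        Permission.MANAGE_SECRETS,
    },
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles receive no permissions."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def roles_with(permission: Permission) -> list:
    """List every role holding a permission, to support periodic access reviews."""
    return sorted(role for role, perms in ROLE_PERMISSIONS.items() if permission in perms)
```

Keeping the mapping in one structure makes the periodic review concrete: `roles_with(Permission.MANAGE_SECRETS)` shows exactly which roles need to be justified.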

Pitfall 2: Poor Data Encryption Practices

Data encryption is often overlooked, leaving sensitive information vulnerable to interception.

Solution: Use strong encryption algorithms to encrypt data both at rest and in transit. Regularly update encryption protocols to address new vulnerabilities.
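For the in-transit half of this advice, Python's standard `ssl` module can enforce certificate verification and a minimum protocol version. The sketch below covers only outbound client connections and uses a hypothetical helper name, `make_tls_context`; encryption at rest is a separate concern and typically relies on a vetted library or the storage layer's own features.

```python
import ssl


def make_tls_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies certificates and rejects old protocols."""
    # create_default_context enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

The resulting context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=make_tls_context())`.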

Pitfall 3: Insufficient Monitoring and Logging

Without adequate monitoring, suspicious activities can go unnoticed, leading to undetected breaches.

Solution: Deploy comprehensive monitoring solutions and establish alert mechanisms to notify security teams of potential threats.
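One simple alert mechanism is a sliding-window counter over failed access attempts. The class below is an illustrative sketch with hypothetical names and thresholds; it logs a warning and returns `True` once a user reaches the configured number of failures within the window.

```python
import logging
from collections import defaultdict, deque

logger = logging.getLogger("security.monitor")


class FailedAccessMonitor:
    """Flag a user who reaches `threshold` failed attempts within `window_seconds`."""

    def __init__(self, threshold: int = 5, window_seconds: float = 60.0):
        self.threshold = threshold
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)

    def record_failure(self, user: str, timestamp: float) -> bool:
        """Record one failure and return True if an alert should fire."""
        events = self._events[user]
        events.append(timestamp)
        # Drop events that have fallen out of the sliding window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        if len(events) >= self.threshold:
            logger.warning("Repeated failures: %d for user %s", len(events), user)
            return True
        return False
```

In practice the `True` result would be routed to whatever notifies the security team, such as a pager or a SIEM rule, rather than only written to a log.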

Future Trends and Recommendations

The Increasing Importance of AI Security

As AI becomes more integrated into business processes, securing AI frameworks like LangChain will be critical to maintaining trust and ensuring data integrity. Gartner's insights emphasize the growing importance of AI security in enterprise environments.

Emphasis on Zero Trust Security Models

Organizations are shifting towards zero trust security models, which assume that threats can exist both inside and outside the network. This approach requires verifying each access request regardless of its origin. Forrester's research highlights the benefits of zero trust models in enhancing security.
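Verifying every request regardless of origin can be illustrated with a signed-request check. This is a narrow sketch of one building block, assuming a shared secret key between caller and service; the function names are hypothetical, and a real zero trust design also involves identity, device posture, short-lived credentials, and replay protection, none of which are shown here.

```python
import hashlib
import hmac


def sign_request(key: bytes, method: str, path: str, body: bytes) -> str:
    """Compute an HMAC-SHA256 signature over the method, path, and body."""
    message = method.encode() + b"\n" + path.encode() + b"\n" + body
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_request(key: bytes, method: str, path: str, body: bytes, signature: str) -> bool:
    """Verify a request signature; applied to internal and external callers alike."""
    expected = sign_request(key, method, path, body)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected.encode(), signature.encode())
```

The service runs `verify_request` on every call, including those arriving from inside the network, and rejects any request whose signature does not match.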

The Role of AI in Enhancing Security

AI can play a significant role in identifying and mitigating security threats. Machine learning algorithms can analyze patterns and detect anomalies that may indicate a security breach. McKinsey's analysis discusses the potential of AI in transforming security practices.
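As a point of reference for anomaly detection, the sketch below uses a plain z-score rather than a machine learning model: it flags values that sit far from the mean of a series, such as requests per minute from one API key. The function name and threshold are assumptions, and real deployments would typically use more robust methods.

```python
from statistics import mean, stdev


def find_anomalies(values: list, threshold: float = 3.0) -> list:
    """Return indices of values more than `threshold` standard deviations from the mean."""
    if len(values) < 2:
        return []
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        # All values are identical, so nothing stands out.
        return []
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]
```

With short series a single outlier inflates the standard deviation, so a lower threshold (for example 2.5) may be needed before it is flagged.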

Conclusion

The security vulnerabilities in the LangChain framework highlight the need for robust security measures in AI-driven applications. By understanding these vulnerabilities and implementing best practices, organizations can protect their data and maintain trust with their stakeholders.

FAQ

What is LangChain?

LangChain is a framework designed to simplify the integration of AI capabilities into applications, enabling developers to build AI-driven solutions efficiently.

How do the identified vulnerabilities impact enterprises?

The vulnerabilities expose different types of enterprise data, increasing the risk of data breaches and unauthorized access.

What are the best practices for securing LangChain deployments?

Best practices include applying patches, implementing access controls, using encryption, and conducting regular security audits.

How can organizations protect their data from unauthorized access?

Organizations can protect their data by implementing role-based access controls, encrypting sensitive information, and using secure secret management solutions.

What is the future of AI security?

AI security will focus on zero trust models, integrating AI for threat detection, and ensuring data integrity in AI-driven applications.

Key Takeaways

- LangChain's vulnerabilities expose different classes of enterprise data.

- Implementing security patches is crucial to mitigate risks.

- Adopt best practices like encryption and access controls.

- AI security will increasingly rely on zero trust models.

- Regular security audits can identify and resolve vulnerabilities.

![LangChain Framework Faces Security Challenges: Key Vulnerabilities and Best Practices [2025]](https://tryrunable.com/blog/langchain-framework-faces-security-challenges-key-vulnerabil/image-1-1774632878823.jpg)