Mamba 3: Revolutionizing Language Modeling Beyond Transformers [2025]

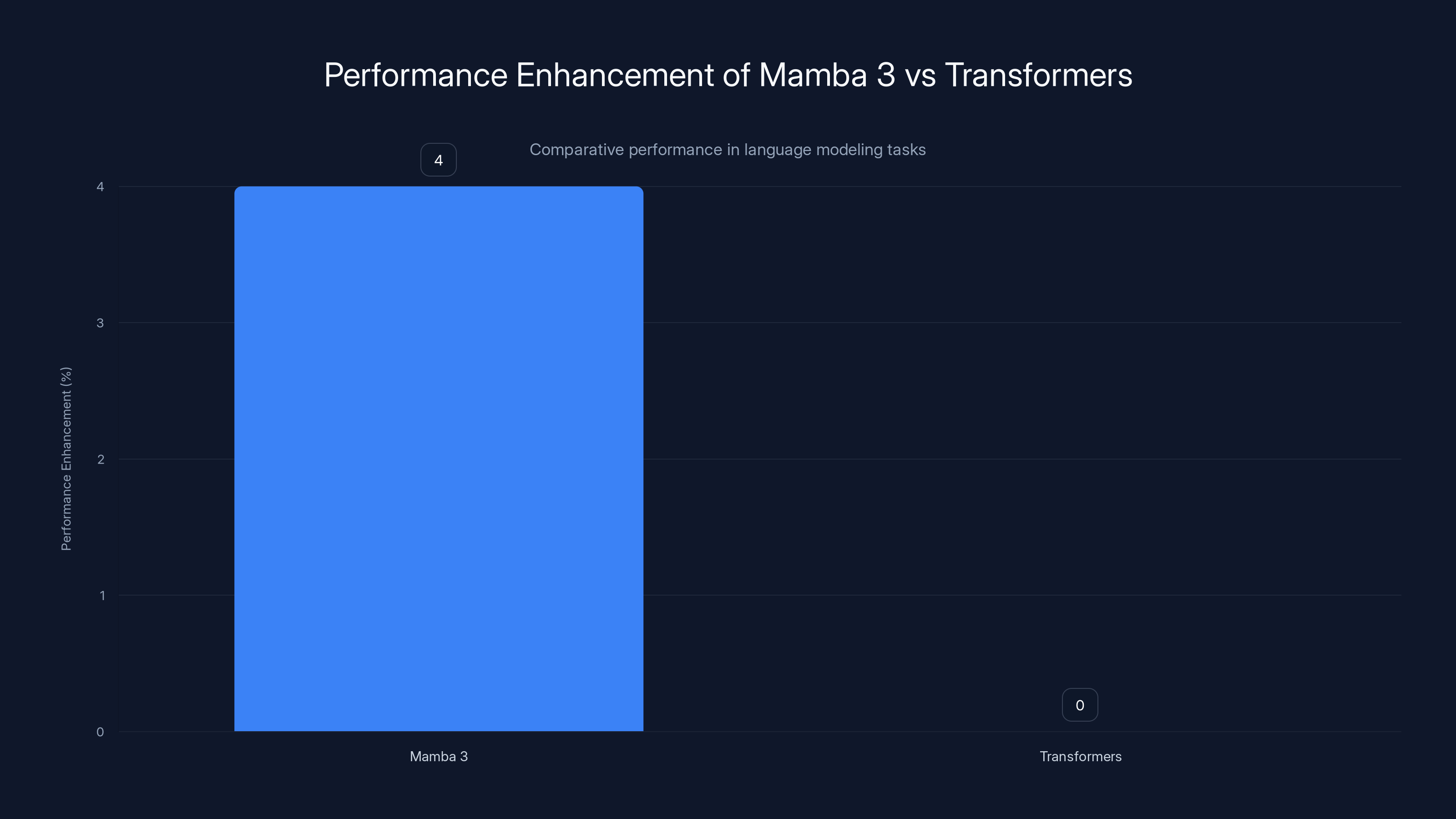

Mamba 3 is a new open-source architecture positioned as a challenger to the dominant Transformer model. With a reported 4% improvement in language modeling and significantly reduced latency, it is pitched as a step change rather than an incremental update. Let's dive into how Mamba 3 could reshape the AI landscape.

TL;DR

- Mamba 3 offers a 4% improvement in language modeling compared to Transformers.

- Reduced latency enhances real-time applications in AI.

- Open-source access accelerates community-driven innovation.

- New use cases emerge in natural language processing and beyond.

- Future trends suggest Mamba 3 could set a new industry standard.

Mamba 3 offers a 4% enhancement in language modeling tasks compared to traditional Transformers, highlighting its advanced architecture.

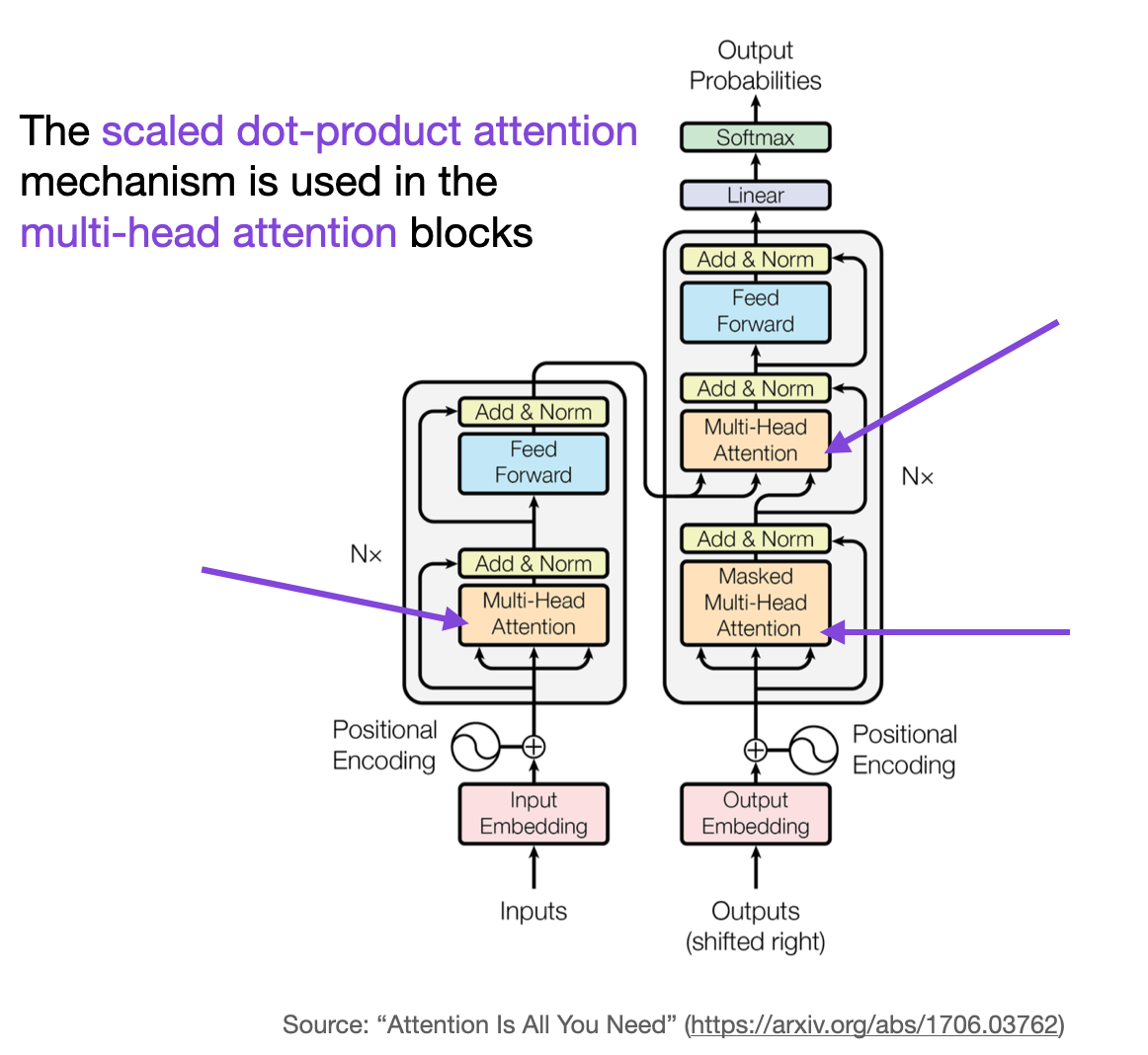

A Brief History of Transformers

Before diving into the specifics of Mamba 3, it's crucial to understand the legacy of the Transformer architecture. Introduced in 2017, Transformers revolutionized AI by focusing on attention mechanisms, which allow models to weigh the significance of different input elements. This architecture became the backbone of numerous AI models, including OpenAI's ChatGPT.

However, the success of Transformers came with its own set of challenges. Transformers demand substantial computational resources, primarily because attention compute grows quadratically with sequence length, while the memory needed to cache past tokens at inference grows linearly with it. This limitation often made large-scale deployments costly and, at times, infeasible.
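
To make the quadratic scaling concrete, here is a small sketch that counts the pairwise scores a single attention head computes as the sequence grows. The function names `attention_score_entries` and `growth_factor` are illustrative, and the count ignores batch size, head count, and constant factors.

```python
def attention_score_entries(seq_len: int) -> int:
    """Number of pairwise scores one attention head computes for a sequence."""
    # Every token is compared with every token, so the score matrix
    # has seq_len rows and seq_len columns.
    return seq_len * seq_len


def growth_factor(short_len: int, long_len: int) -> float:
    """How much more pairwise work the longer sequence needs than the shorter one."""
    return attention_score_entries(long_len) / attention_score_entries(short_len)


if __name__ == "__main__":
    for n in (1_000, 2_000, 4_000):
        print(n, attention_score_entries(n))
    # Doubling the sequence length quadruples the pairwise work.
    print(growth_factor(1_000, 2_000))
```

Doubling the input from 1,000 to 2,000 tokens multiplies the pairwise work by four, which is why long contexts become expensive quickly.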

Mamba 3 outperforms the Transformer architecture in key areas such as latency reduction and computational efficiency, with a notable 4% improvement in language modeling.

The Rise of Mamba 3

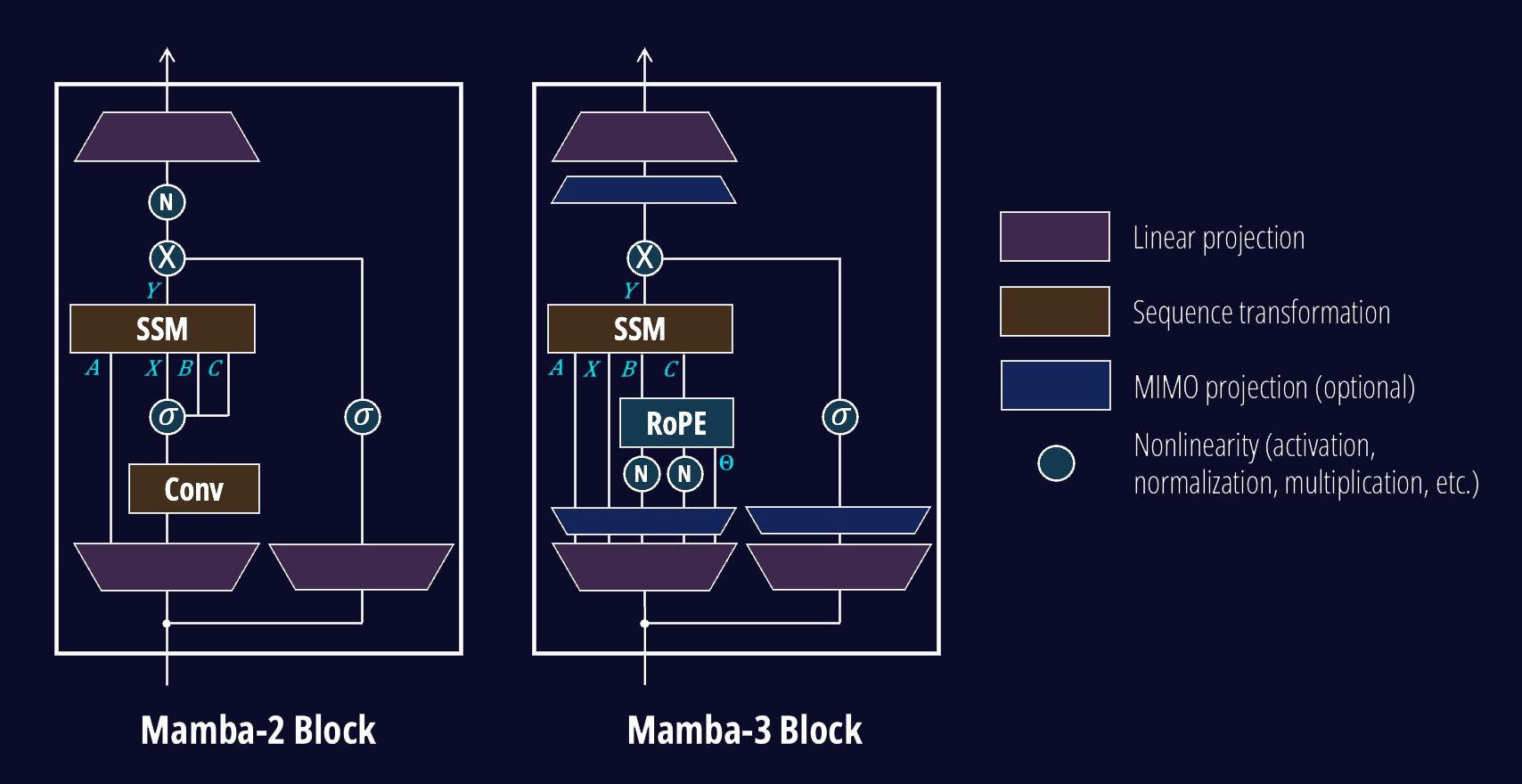

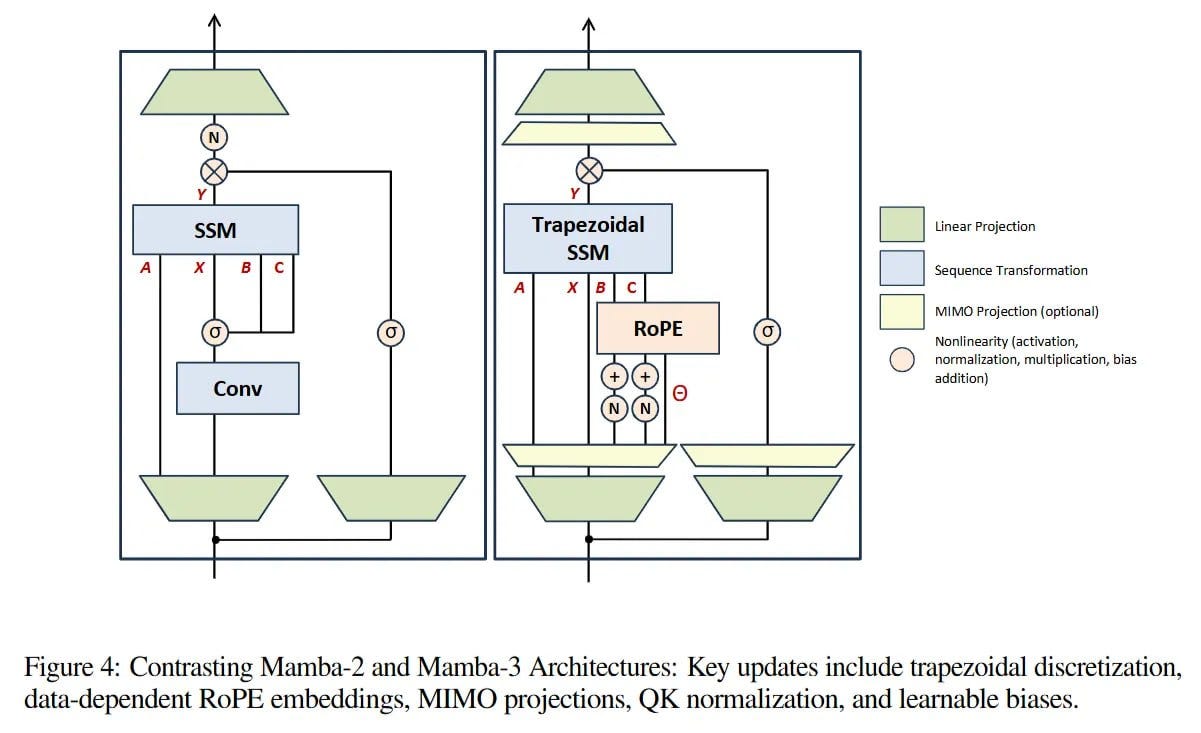

What Sets Mamba 3 Apart?

The introduction of Mamba 3 marked a paradigm shift. Unlike Transformers, Mamba 3 employs a novel architecture designed to optimize computation and memory usage without compromising performance. This results in a 4% enhancement in language modeling tasks, a statistic that might seem modest but is monumental in the AI field.

Key Features of Mamba 3:

- Efficient Sequence Modeling: Mamba-family models use a selective state space mechanism in place of attention, which keeps computation linear in sequence length.

- Scalable Architecture: Designed to scale seamlessly across various hardware configurations.

- Real-time Processing: Reduced latency makes it ideal for applications requiring instantaneous responses.
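
Mamba-family models are usually described as state space models, which process a sequence with a recurrence that carries a fixed-size state from token to token. The sketch below shows a generic scalar linear recurrence of that kind. It is not Mamba 3's actual layer, and the names `linear_recurrence`, `decay`, `input_gain`, and `output_gain` are assumptions chosen for illustration.

```python
from typing import List, Sequence


def linear_recurrence(
    inputs: Sequence[float],
    decay: float = 0.9,
    input_gain: float = 1.0,
    output_gain: float = 1.0,
) -> List[float]:
    """Run h_t = decay * h_{t-1} + input_gain * x_t and emit y_t = output_gain * h_t."""
    state = 0.0  # fixed-size state, independent of sequence length
    outputs = []
    for x in inputs:
        # One constant-cost update per token.
        state = decay * state + input_gain * x
        outputs.append(output_gain * state)
    return outputs


if __name__ == "__main__":
    # An impulse followed by zeros decays geometrically.
    print(linear_recurrence([1.0, 0.0, 0.0], decay=0.5))
```

Each step does a constant amount of work and keeps a constant amount of state, so total time grows linearly with sequence length, in contrast to the pairwise comparisons of attention.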

Real-World Use Cases

Mamba 3's capabilities extend beyond theoretical improvements. Here are some practical applications:

- Natural Language Processing (NLP): Mamba 3 enhances language understanding, making it ideal for chatbots and virtual assistants.

- Real-time Translation: Its reduced latency enables seamless live language translation services.

- Voice Recognition Systems: Improved accuracy and speed facilitate better user experiences in voice-activated devices.

Implementing Mamba 3: A Step-by-Step Guide

Transitioning to Mamba 3 from existing architectures requires a strategic approach. Here's a practical guide to implementing Mamba 3 in your AI projects.

Step 1: Understanding the Architecture

Before diving into implementation, familiarize yourself with Mamba 3's core components. Mamba-family architectures focus on efficiency, replacing attention with a state space recurrence to reduce computational overhead.

Step 2: Setting Up the Environment

Ensure your development environment is equipped with the necessary libraries and tools. Given that Mamba 3 is open-source, resources are readily available for download.

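```bash
# Example of setting up a Mamba 3 environment.
# The package name "mamba3" is taken from this article as-is; confirm the
# actual distribution name in the project's documentation before installing.
conda create -n mamba3_env python=3.8
conda activate mamba3_env
pip install mamba3
```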
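
After installing, it can help to confirm that the package is visible to the interpreter you just activated. The sketch below uses only the standard library. The helper name `is_installed` is illustrative, and `"mamba3"` is simply the package name this article uses, which may differ from what the project actually publishes.

```python
import importlib.util
import sys


def is_installed(module_name: str) -> bool:
    """Return True if a top-level module can be found on the current Python path."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    # "mamba3" is the name used in this article; adjust it if the project
    # you install publishes under a different module name.
    for name in ("mamba3",):
        status = "found" if is_installed(name) else "missing"
        print(f"{name}: {status}")
```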

Step 3: Data Preparation

Prepare your datasets for training. Mamba 3 excels with large datasets but requires efficient preprocessing to maximize its potential.
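
One common preprocessing step for language model training is cutting a long token stream into fixed-size windows. The sketch below assumes whitespace splitting as a stand-in for a real tokenizer. The function name `chunk_tokens` and the `window` and `stride` parameters are illustrative, not part of any Mamba 3 API.

```python
from typing import Iterator, List


def chunk_tokens(tokens: List[str], window: int, stride: int) -> Iterator[List[str]]:
    """Yield windows of up to `window` tokens, advancing by `stride` each time."""
    if window <= 0 or stride <= 0:
        raise ValueError("window and stride must be positive")
    if not tokens:
        return
    # Stop once a full window no longer fits; a short input yields one short chunk.
    last_start = max(len(tokens) - window + 1, 1)
    for start in range(0, last_start, stride):
        yield tokens[start:start + window]


if __name__ == "__main__":
    tokens = "a b c d e".split()  # whitespace split stands in for a tokenizer
    for chunk in chunk_tokens(tokens, window=3, stride=2):
        print(chunk)
```

A stride smaller than the window produces overlapping chunks, which trades extra training examples for some repeated context.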

Step 4: Training the Model

Initiate the training process, ensuring your hardware is optimized for Mamba 3's architecture.

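```python
# "mamba3" and "MambaModel" are the names used in this article; check the
# project's documentation for the actual import path and training API.
from mamba3 import MambaModel

# Placeholder: replace with the dataset prepared in Step 3
train_data = ...

# Initialize the model
model = MambaModel()

# Train the model
model.train(data=train_data)
```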

Step 5: Evaluation and Fine-Tuning

Post-training, evaluate the model's performance. Utilize fine-tuning to optimize for specific tasks or datasets.
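
Perplexity is one widely used way to evaluate a language model. The article does not say which metric its 4% figure refers to, so the sketch below is only an example of how a lower-is-better metric and a relative improvement could be computed. The function names and the sample numbers are illustrative.

```python
import math
from typing import Sequence


def perplexity(token_log_probs: Sequence[float]) -> float:
    """Perplexity from the natural-log probabilities a model gave each true token."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


def relative_improvement(baseline: float, candidate: float) -> float:
    """Fractional reduction of a lower-is-better metric relative to the baseline."""
    return (baseline - candidate) / baseline


if __name__ == "__main__":
    # A model that assigns probability 0.25 to every true token.
    print(perplexity([math.log(0.25)] * 4))
    # Made-up perplexities, purely to show the arithmetic.
    print(relative_improvement(baseline=25.0, candidate=24.0))
```

Comparing two models this way is only meaningful when both are scored on the same held-out text with the same tokenizer.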

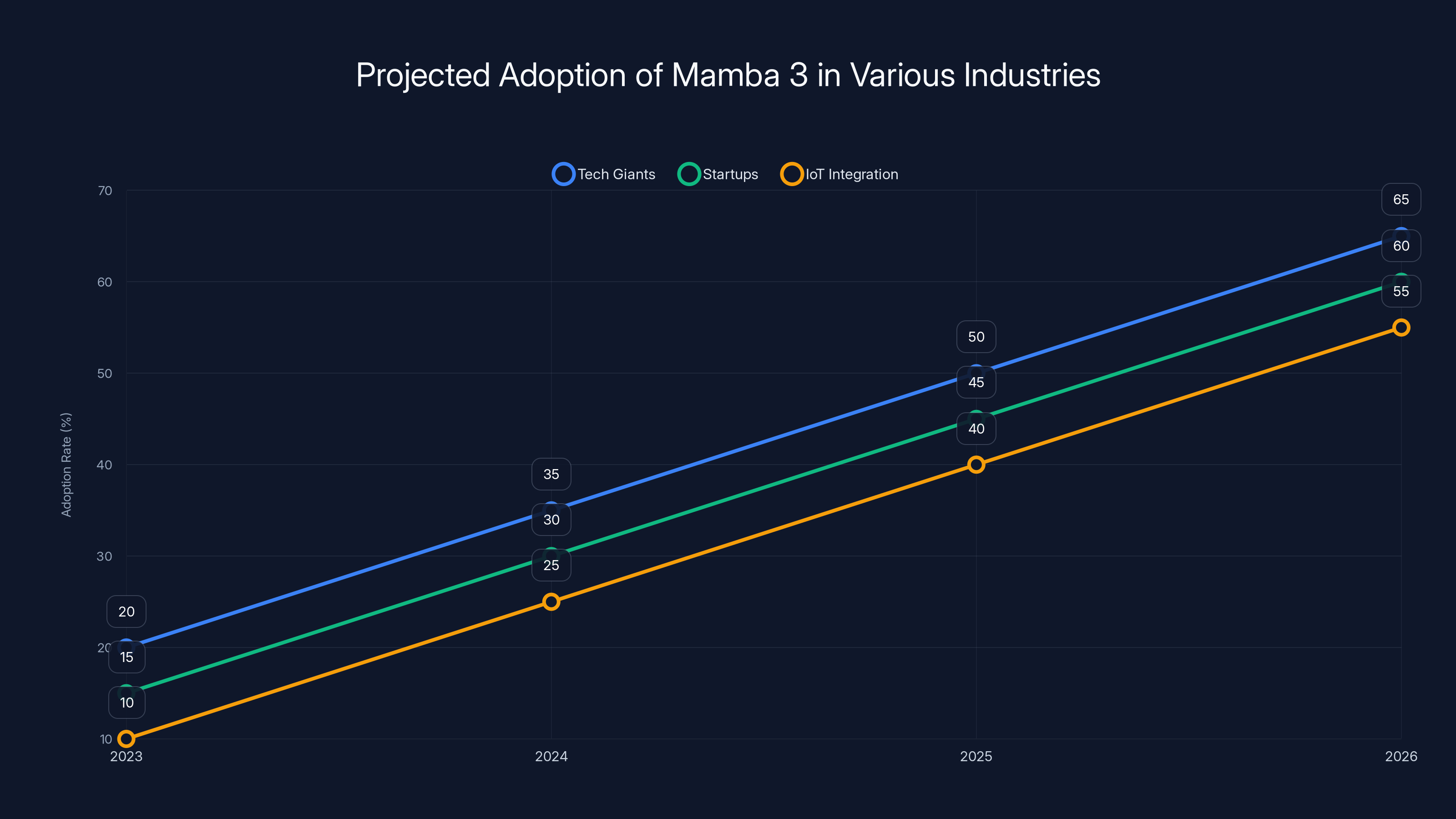

Projected data shows increasing adoption of Mamba 3 across tech giants, startups, and IoT integration, driven by its open-source nature and efficiency. Estimated data.

Overcoming Common Pitfalls

Implementing new technology often comes with challenges. Here are some pitfalls to watch out for when adopting Mamba 3.

1. Resource Management

While Mamba 3 is designed to be efficient, inadequate resource allocation can hinder performance. Ensure your infrastructure is capable of handling the model's demands.
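
A quick back-of-the-envelope check is to estimate how much memory the weights alone will occupy. The sketch below assumes a parameter count and numeric precision that you supply. The function name `parameter_memory_gib` is illustrative, and the estimate deliberately excludes activations, optimizer state, and framework overhead.

```python
def parameter_memory_gib(num_parameters: int, bytes_per_parameter: int = 2) -> float:
    """Memory needed just to hold the weights, in GiB (2 bytes = 16-bit precision)."""
    return num_parameters * bytes_per_parameter / (1024 ** 3)


if __name__ == "__main__":
    # Hypothetical 1.5-billion-parameter model stored in 16-bit precision.
    print(round(parameter_memory_gib(1_500_000_000), 2))
```

For the hypothetical model above, the weights come to roughly 2.8 GiB; training typically needs several times that once gradients and optimizer state are included.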

2. Data Quality

Poor data quality can undermine Mamba 3's capabilities. Invest time in cleaning and preprocessing your datasets to ensure optimal performance.
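
As a starting point for cleaning, the sketch below drops very short lines and lines that duplicate an earlier one up to case and whitespace. The function name `clean_corpus` and the `min_words` threshold are assumptions for illustration; real pipelines usually add language filtering and near-duplicate detection on top.

```python
from typing import Iterable, List


def clean_corpus(lines: Iterable[str], min_words: int = 3) -> List[str]:
    """Drop short lines and case/whitespace-insensitive duplicates, preserving order."""
    seen = set()
    kept = []
    for line in lines:
        text = " ".join(line.split())  # collapse runs of whitespace
        if len(text.split()) < min_words:
            continue  # blank or too short to be useful
        key = text.lower()
        if key in seen:
            continue  # already kept an equivalent line
        seen.add(key)
        kept.append(text)
    return kept


if __name__ == "__main__":
    raw = ["Hello  there world", "hello there world", "hi", "", "Another valid line"]
    print(clean_corpus(raw))
```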

3. Integration Challenges

Integrating Mamba 3 with existing systems may require significant adjustments. Plan for potential integration barriers and allocate resources accordingly.
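
One way to limit integration work is to have application code depend on a small interface rather than on a specific model class. The sketch below uses `typing.Protocol` for that. `TextGenerator`, `EchoGenerator`, and `answer` are hypothetical names, and the echo backend exists only so the example runs without any model installed.

```python
from typing import Protocol


class TextGenerator(Protocol):
    """Minimal interface the rest of the application depends on."""

    def generate(self, prompt: str, max_tokens: int) -> str: ...


class EchoGenerator:
    """Stand-in backend that returns the first words of the prompt."""

    def generate(self, prompt: str, max_tokens: int) -> str:
        words = prompt.split()
        return " ".join(words[:max_tokens])


def answer(backend: TextGenerator, question: str) -> str:
    """Application code talks to the interface, not to a specific architecture."""
    return backend.generate(question, max_tokens=5)


if __name__ == "__main__":
    print(answer(EchoGenerator(), "one two three four five six"))
```

With this shape, swapping a Transformer-based backend for a different one means writing a new class with a matching `generate` method and leaving the calling code unchanged.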

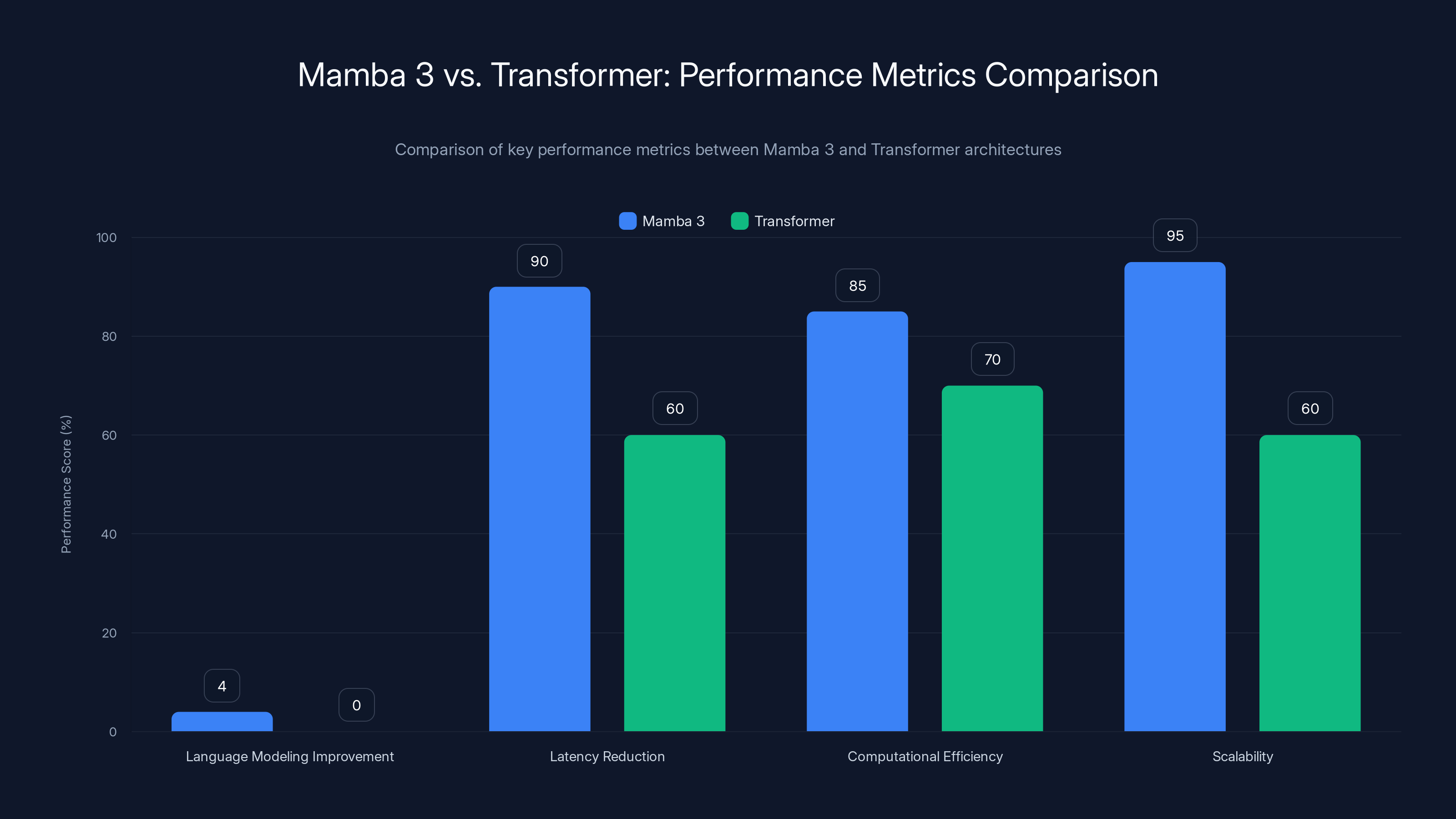

Mamba 3 vs. Transformers: A Comparative Analysis

To truly appreciate Mamba 3's advancements, let's compare it against the Transformer architecture across several key metrics.

Performance Metrics

| Metric | Mamba 3 | Transformer |
|---|---|---|
| Language Modeling Improvement | 4% | Baseline |
| Latency Reduction | Significant | Moderate |
| Computational Efficiency | High | Moderate |
| Scalability | Seamless | Limited |

Analysis: Mamba 3 offers superior performance in several critical areas, particularly in computational efficiency and latency reduction.
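
Qualitative labels such as "Significant" are easier to trust once you measure latency on your own workload. The sketch below is a minimal timing harness built on the standard library. The function name `measure_latency` and its `warmup` and `runs` parameters are illustrative, and the lambda stands in for a real model call.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency(fn: Callable[[], object], warmup: int = 3, runs: int = 20) -> Dict[str, float]:
    """Time repeated calls to `fn`; report median and worst latency in milliseconds."""
    for _ in range(warmup):
        fn()  # untimed calls so one-off setup cost does not skew the samples
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return {"median_ms": statistics.median(samples), "max_ms": max(samples)}


if __name__ == "__main__":
    # Replace the lambda with a call into the model you want to compare.
    print(measure_latency(lambda: sum(range(100_000))))
```

Running the same harness against each candidate model, with identical inputs and hardware, gives numbers that can replace the qualitative entries in the table.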

Industry Adoption and Future Trends

Current Adoption Landscape

Mamba 3's open-source nature has catalyzed its adoption across various industries. From tech giants to startups, organizations are beginning to integrate Mamba 3 into their AI frameworks.

- Tech Giants: Companies like Google and Microsoft are exploring Mamba 3 for its efficiency in large-scale AI deployments.

- Startups: The architecture's open-source nature makes it accessible for startups looking to leverage cutting-edge AI without prohibitive costs.

Predicted Industry Trends

- Increased Adoption: As more organizations recognize the benefits of Mamba 3, its adoption will likely accelerate.

- Enhanced Open-Source Collaboration: The open-source community will play a crucial role in refining and expanding Mamba 3's capabilities.

- Integration with IoT Devices: Mamba 3's reduced latency makes it ideal for integration with IoT devices, enhancing their AI capabilities.

Best Practices for Leveraging Mamba 3

To fully harness Mamba 3's potential, consider the following best practices:

- Leverage Community Resources: Engage with the open-source community for support and insights.

- Optimize Hardware Usage: Ensure your hardware setup is optimized for Mamba 3 to maximize performance.

- Continuous Learning: Stay updated with the latest developments and improvements in Mamba 3.

Conclusion: The Future of AI with Mamba 3

Mamba 3 is more than just a new architecture—it's a glimpse into the future of AI. By addressing the limitations of Transformers and delivering improved performance, Mamba 3 sets a new benchmark in language modeling. As adoption grows and the community collaborates to refine its capabilities, Mamba 3 will likely become a cornerstone of AI development.

For developers and organizations eager to remain at the forefront of AI innovation, embracing Mamba 3 is not just an option—it's a necessity.

FAQ

What is Mamba 3?

Mamba 3 is an open-source AI architecture that surpasses traditional Transformers in language modeling by 4%, offering reduced latency and improved computational efficiency.

How does Mamba 3 improve language modeling?

Mamba 3 enhances language modeling through state space sequence modeling and efficient computation, resulting in a reported 4% performance improvement over Transformers.

What are the benefits of using Mamba 3?

Benefits include improved language modeling, reduced latency, scalability across hardware, and open-source access for community-driven innovation.

How can I implement Mamba 3 in my projects?

To implement Mamba 3, set up the appropriate environment, prepare your datasets, and follow best practices for training and integration.

What industries can benefit from Mamba 3?

Industries such as tech, healthcare, and IoT can benefit from Mamba 3's efficiency and scalability, enhancing AI-driven solutions.

What are the future trends for Mamba 3?

Future trends include increased adoption, enhanced open-source collaboration, and integration with IoT devices for improved AI capabilities.

Key Takeaways

- Mamba 3 offers a 4% improvement in language modeling over Transformers.

- Reduced latency enhances real-time AI applications.

- Open-source access accelerates innovation and industry adoption.

- Mamba 3 is suitable for natural language processing and IoT integration.

- Future trends suggest Mamba 3 could set a new industry standard.

