AI and the Ethics of Mimicking Expertise: The Grammarly Controversy [2025]

Last month, a lawsuit put a spotlight on an emerging ethical dilemma in AI development. Grammarly's rollout of its 'Expert Review' feature, which mimics the editorial styles of well-known figures, has sparked a debate over consent, intellectual property, and the responsibilities of AI developers. Journalist Julia Angwin has filed a class action lawsuit against Grammarly's parent company, Superhuman, arguing that the tool violates the privacy and publicity rights of experts by impersonating their writing styles without consent, as reported by Law360.

TL;DR

- Grammarly's 'Expert Review' feature uses AI to mimic editorial feedback from notable figures without their consent.

- Journalist Julia Angwin has filed a class action lawsuit, highlighting privacy and intellectual property concerns.

- The case raises questions about AI ethics, transparency, and the future of content creation.

- AI's ability to replicate expertise poses both opportunities and risks for the creative industry.

- This controversy could set precedents for AI usage and intellectual property rights.

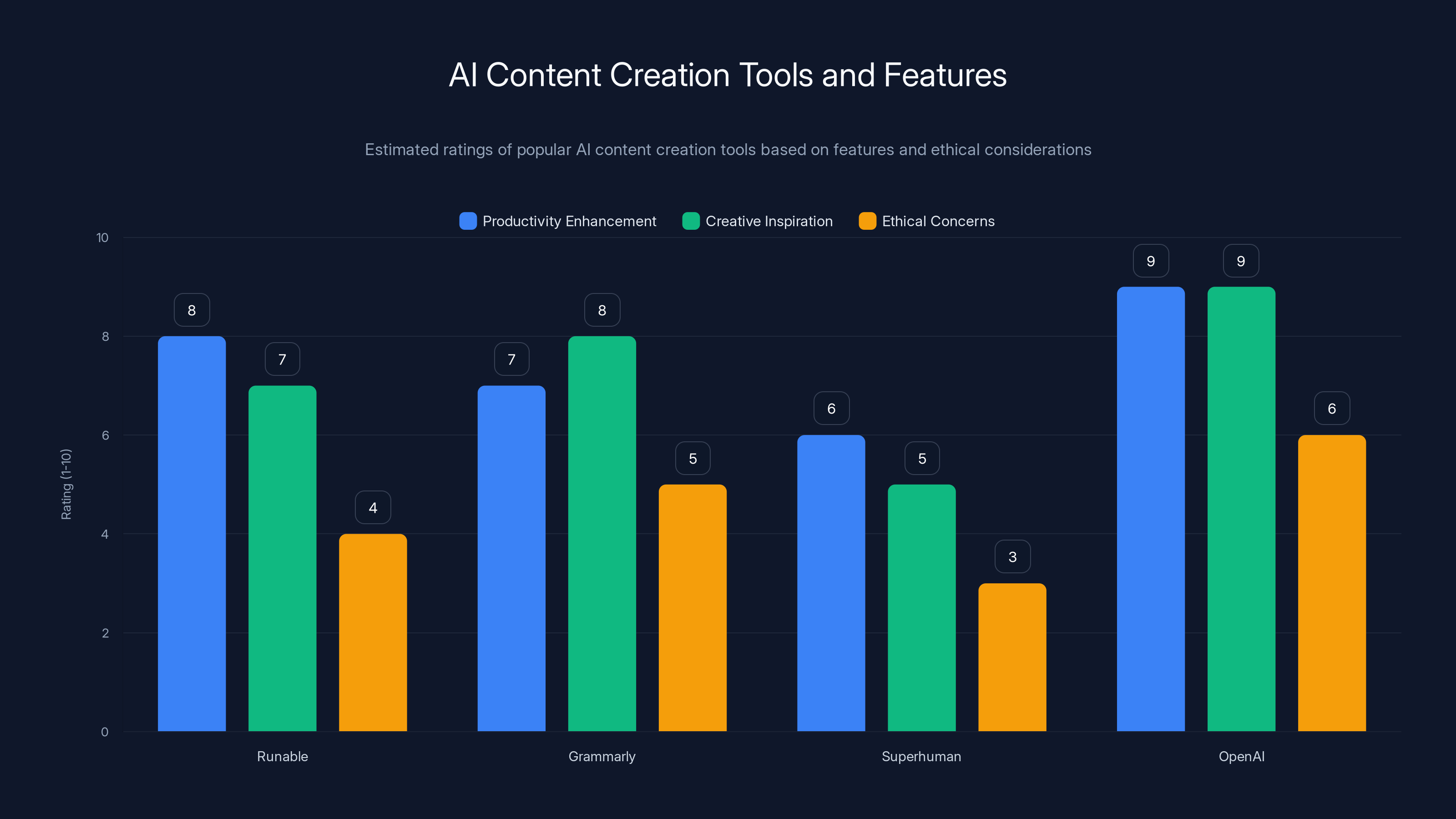

This bar chart compares AI content creation tools based on productivity, creativity, and ethical concerns. Runable and OpenAI score high in productivity and creativity, while ethical concerns remain notable across all tools. Estimated data.

The Rise of AI in Content Creation

Artificial intelligence has revolutionized content creation, offering tools that enhance productivity, provide creative inspiration, and automate tedious editing tasks. Platforms like Runable have harnessed AI to automate the generation of presentations, documents, and reports, making workflows more efficient. However, as AI's capabilities expand, so do the ethical challenges that accompany its use.

The 'Expert Review' Feature: A Double-Edged Sword

Grammarly's 'Expert Review' feature, at first glance, appears to be a groundbreaking tool for writers. By simulating the feedback styles of renowned figures like Stephen King or Carl Sagan, it promises users a taste of expert critique. But here's the thing: the people being imitated were neither consulted nor asked for consent to have their styles used this way, as detailed by TechBuzz.

This feature raises significant ethical questions:

- Consent and Attribution: Should AI developers obtain permission before mimicking an individual's style?

- Intellectual Property: Who owns the rights to a writing style?

- Transparency: How should companies disclose the capabilities and limitations of AI tools?

Case Study: Julia Angwin's Lawsuit

Julia Angwin's lawsuit against Superhuman exemplifies the broader concerns regarding AI and intellectual property. Angwin, who has spent decades honing her journalistic skills, argues that the 'Expert Review' feature diminishes her hard-earned expertise by offering a synthetic version without her consent. The potential financial implications of this lawsuit are significant, as it could encourage other affected writers to join the class action, as noted by TechTimes.

This bar chart estimates the importance of various ethical practices in AI development, highlighting 'Obtain Explicit Consent' and 'Develop Ethical Guidelines' as top priorities. (Estimated data)

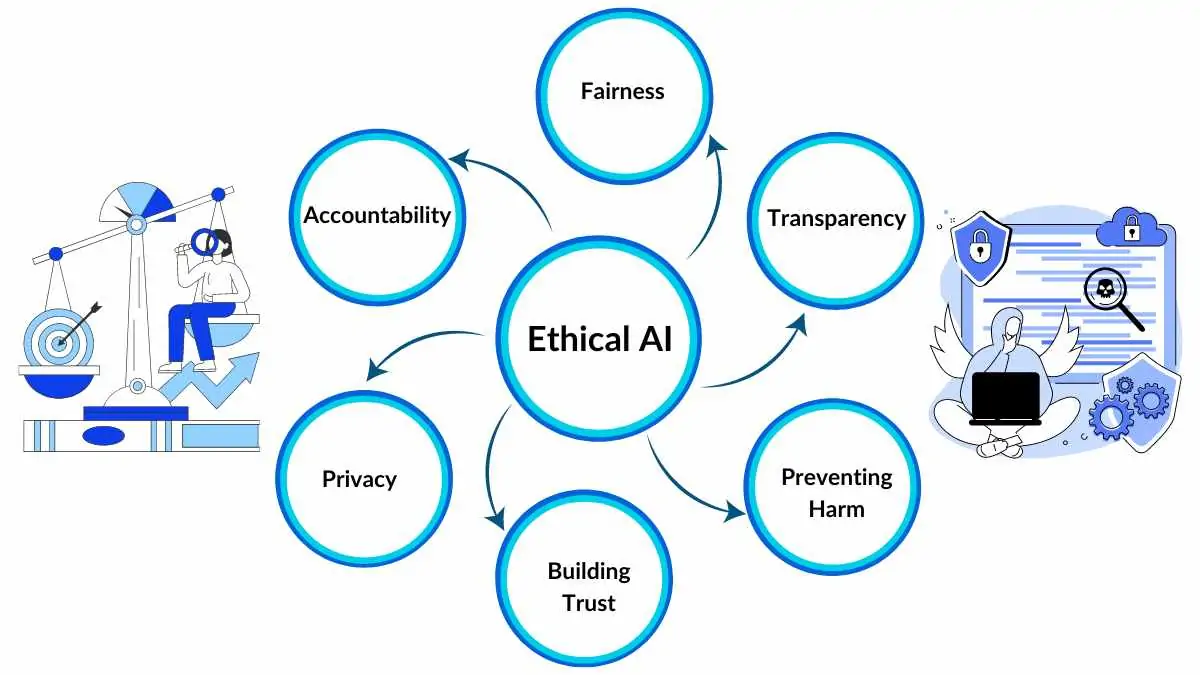

Ethical Implications of AI Imitation

AI's ability to replicate human expertise prompts a host of ethical considerations. These include the potential for AI to undermine the value of human creativity and expertise, as well as the need for clear guidelines governing the use of AI in content creation.

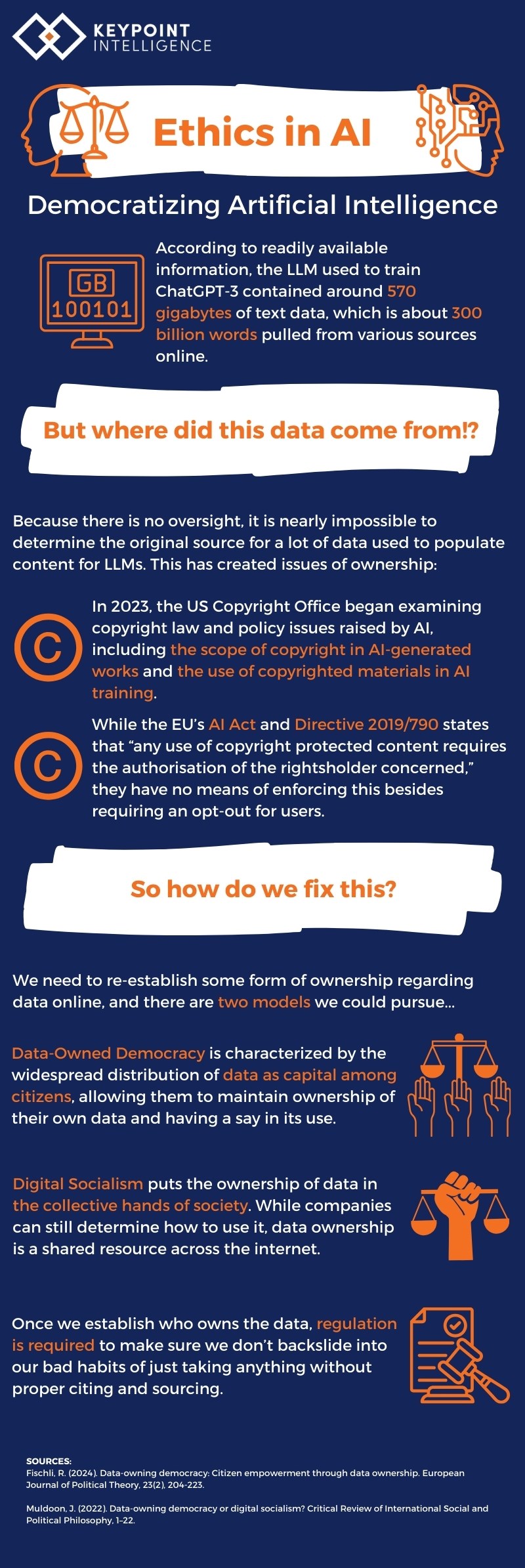

The Importance of Consent

Consent is a cornerstone of ethical AI development. Without it, companies risk exploiting individuals' intellectual property and infringing on their rights. As AI tools become more sophisticated, obtaining explicit consent should be a priority for developers to ensure the ethical use of technology, as emphasized by Britannica.

Intellectual Property Rights

The concept of intellectual property must evolve to address the unique challenges posed by AI. Copyright law protects specific expression fixed in a tangible form, not a style as such, so the mimicry of a writing style exists in a gray area. Establishing clear guidelines for the ownership of style and expertise is crucial to protecting creators' rights in the digital age, as discussed by Morgan Lewis.

Transparency and User Awareness

Transparency is key to building trust between AI developers and users. Companies should be upfront about the capabilities and limitations of their tools, enabling users to make informed decisions. This includes disclosing whether an AI tool uses simulated expert feedback and how it was developed, as highlighted by Platformer.
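To make the disclosure point concrete, here is a minimal Python sketch of a label a tool could attach to each piece of feedback. It is an illustration only, not how Grammarly or any other product works; the `FeedbackDisclosure` fields and the `disclosure_label` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackDisclosure:
    """Hypothetical facts a tool could surface alongside its feedback."""
    ai_generated: bool
    simulates_named_person: bool
    named_person_consented: bool
    method_summary: str  # short plain-language note on how the feature was built


def disclosure_label(d: FeedbackDisclosure) -> str:
    """Build a plain-language label from the disclosure facts."""
    parts = ["AI-generated feedback." if d.ai_generated else "Human-written feedback."]
    if d.simulates_named_person:
        if d.named_person_consented:
            parts.append("Simulates a named expert's style with that person's permission.")
        else:
            parts.append("Simulates a named expert's style; that person did not review or approve it.")
    parts.append(f"How it was built: {d.method_summary}")
    return " ".join(parts)


label = disclosure_label(
    FeedbackDisclosure(
        ai_generated=True,
        simulates_named_person=True,
        named_person_consented=False,
        method_summary="language model prompted with publicly available writing.",
    )
)
print(label)
```

Keeping the label as structured fields rather than free text means the same facts can be shown in the interface, logged, and audited later.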

Practical Implementation Guide for Ethical AI Use

For AI developers, navigating the ethical landscape requires a commitment to responsible practices. Here are some best practices for implementing AI tools ethically:

- Obtain Explicit Consent: Before using an individual's style or likeness, seek their permission and compensate them fairly.

- Implement Clear Disclosure: Clearly communicate the use of AI and its capabilities to users, ensuring they understand what the tool can and cannot do.

- Develop Ethical Guidelines: Establish internal guidelines that prioritize ethical considerations in AI development, focusing on consent, transparency, and user rights.

- Engage with Stakeholders: Involve affected individuals and industry experts in the development process to address potential ethical concerns early on.

- Monitor and Evaluate: Continuously assess the impact of AI tools on users and creators, making adjustments as necessary to align with ethical standards.
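The consent practice above can be sketched as a gate that runs before any style simulation. This is a minimal Python sketch under stated assumptions: `ConsentRecord`, `ConsentRegistry`, and `request_style_feedback` are hypothetical names, the registry is an in-memory stand-in for real storage, and the feedback itself is a placeholder string rather than a model call.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, Optional


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical record of one person's permission for style simulation."""
    person: str
    granted_on: date
    expires_on: Optional[date] = None  # None means no expiry was agreed
    compensated: bool = False


class ConsentRegistry:
    """In-memory stand-in for wherever consent records would really live."""

    def __init__(self) -> None:
        self._records: Dict[str, ConsentRecord] = {}

    def grant(self, record: ConsentRecord) -> None:
        self._records[record.person.lower()] = record

    def revoke(self, person: str) -> None:
        # Revocation takes effect immediately; a missing record is ignored.
        self._records.pop(person.lower(), None)

    def may_simulate(self, person: str, today: date) -> bool:
        """True only if consent exists, is compensated, and is in date."""
        record = self._records.get(person.lower())
        if record is None or not record.compensated:
            return False
        if today < record.granted_on:
            return False
        return record.expires_on is None or today <= record.expires_on


def request_style_feedback(
    registry: ConsentRegistry, person: str, draft: str, today: date
) -> str:
    """Refuse to simulate anyone without valid consent on file."""
    if not registry.may_simulate(person, today):
        raise PermissionError(f"No valid consent on file for {person}")
    # Placeholder output: real feedback generation is out of scope here.
    words = len(draft.split())
    return f"[AI-generated feedback in a style licensed by {person}] {words} words reviewed"


registry = ConsentRegistry()
registry.grant(
    ConsentRecord("Jane Example", date(2025, 1, 1), date(2025, 12, 31), compensated=True)
)
print(request_style_feedback(registry, "Jane Example", "A short draft.", date(2025, 6, 1)))
```

The design choice worth noting is that the check fails closed: a missing, expired, revoked, or uncompensated record all lead to the same refusal, so a feature cannot ship a simulated expert by default.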

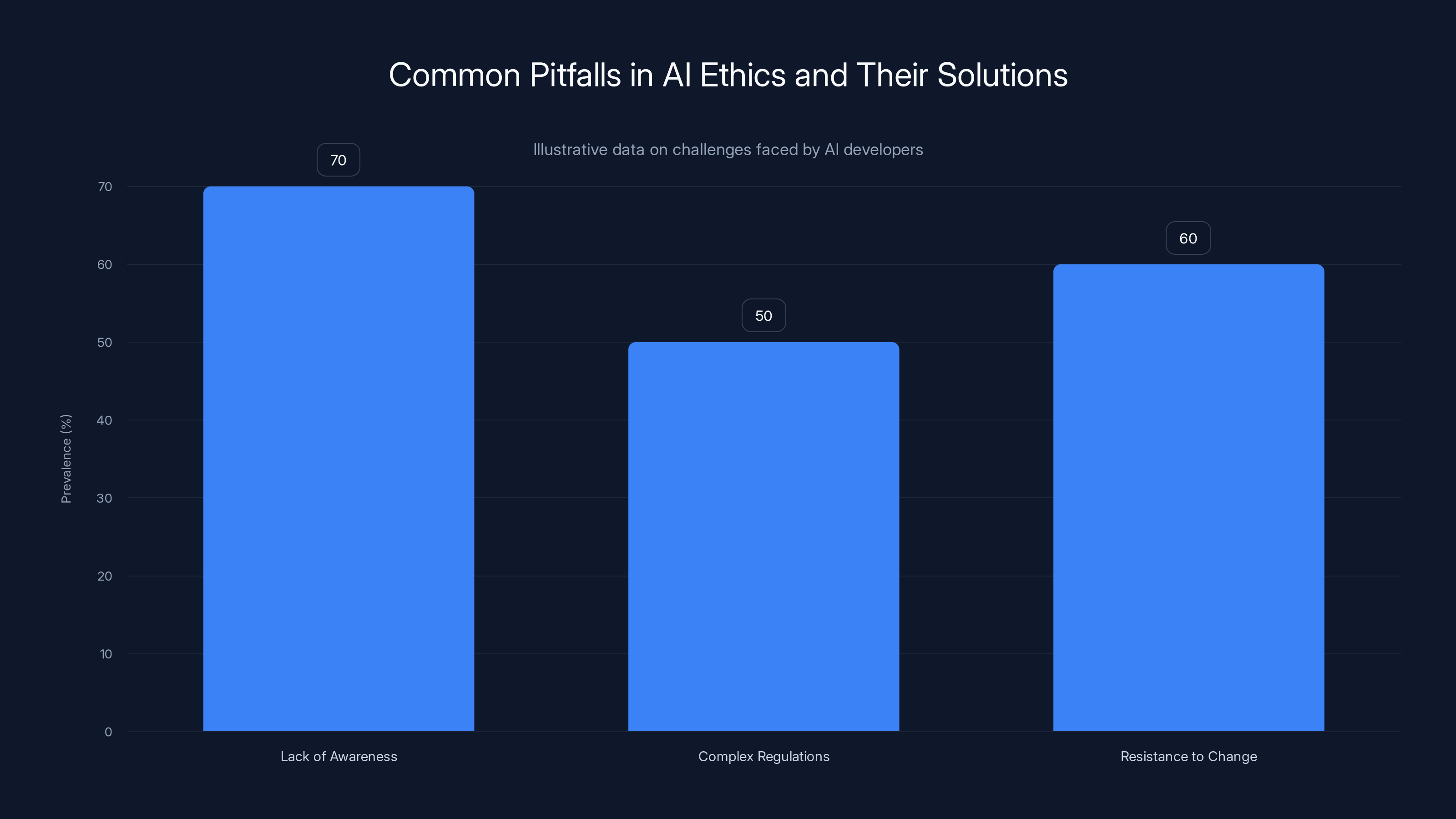

Estimated data shows that lack of awareness is the most prevalent pitfall in AI ethics, followed by resistance to change and complex regulations. Highlighting solutions can mitigate these challenges.

Common Pitfalls and Solutions

Despite best efforts, AI developers may encounter pitfalls when implementing ethical practices. Some common challenges include:

- Lack of Awareness: Developers may not fully understand the ethical implications of their tools. Solution: Provide training and resources to increase awareness.

- Complex Regulations: Navigating legal frameworks can be challenging. Solution: Consult with legal experts to ensure compliance with relevant laws and regulations.

- Resistance to Change: Some developers may resist implementing ethical practices due to perceived costs or complexity. Solution: Highlight the long-term benefits of ethical AI use, including increased trust and brand reputation.

Future Trends and Recommendations

As AI continues to evolve, so too will the ethical considerations surrounding its use. Here are some trends and recommendations for the future:

- Development of AI Ethics Frameworks: Industry-wide frameworks can provide standardized guidelines for ethical AI development, promoting consistency and accountability.

- Increased Focus on Transparency: Users will demand greater transparency in AI tools, pushing companies to disclose more information about their algorithms and data sources.

- Evolving Intellectual Property Laws: Legal systems will need to adapt to address the unique challenges posed by AI, including the ownership of style and expertise.

- Collaborative AI Development: Engaging with diverse stakeholders, including creators, ethicists, and policymakers, will be crucial for developing AI tools that align with societal values.

FAQ

What is the controversy surrounding Grammarly's 'Expert Review' feature?

Grammarly's 'Expert Review' feature simulates editorial feedback from notable figures without their consent, raising ethical concerns about privacy and intellectual property, as reported by Wired.

How does the 'Expert Review' feature work?

The feature uses AI to mimic the writing styles of experts, providing users with feedback that appears to come from well-known figures, as explained by EurekAlert.

What are the ethical implications of AI tools like 'Expert Review'?

These tools raise questions about consent, intellectual property rights, and transparency, and they can diminish the value of human expertise and creativity, as discussed in Corporate Compliance Insights.

How can AI developers implement ethical practices?

Developers should obtain explicit consent, implement clear disclosure, develop ethical guidelines, engage with stakeholders, and continuously monitor the impact of their tools, as recommended by Syracuse University.

What are some common pitfalls in ethical AI development?

Pitfalls include a lack of awareness, complex regulations, and resistance to change. Solutions include providing training, consulting with legal experts, and highlighting the benefits of ethical AI use, as outlined by Vocal Media.

What future trends are expected in AI ethics?

Trends include the development of AI ethics frameworks, increased transparency, evolving intellectual property laws, and collaborative AI development, as forecasted by Bloomberg.

Conclusion

The controversy surrounding Grammarly's 'Expert Review' feature highlights the complex ethical landscape of AI development. As AI continues to advance, developers must prioritize ethical practices to protect the rights of creators and maintain trust with users. By obtaining consent, ensuring transparency, and adapting to evolving legal standards, companies can harness the potential of AI while respecting the expertise and creativity of individuals.

Key Takeaways

- Grammarly's 'Expert Review' feature raises consent and intellectual property concerns.

- AI can replicate expertise, posing ethical challenges for developers.

- Ethical AI practices include obtaining consent and ensuring transparency.

- Future trends in AI ethics include evolving legal standards and transparency demands.

- Developers should engage stakeholders in ethical AI development.

- Julia Angwin's lawsuit highlights the importance of protecting creators' rights.
