Introduction: The Future of Wearable Communication Is Here

Imagine replying to a text message without pulling out your phone. No tapping, no typing, just your hand moving naturally through the air while AI translates the gesture into words. That's no longer science fiction. Meta has officially brought this capability to Ray-Ban Display glasses, and it's reshaping what we think wearable technology can do.

Back in 2021, when Meta (then still Facebook) first partnered with Ray-Ban parent company EssilorLuxottica to launch Ray-Ban Stories, the collaboration felt like a luxury novelty. Smart glasses for messages and calls? Cool, but niche. Fast forward to today, and Meta is pushing these devices into genuinely transformative territory. The company just announced three major features rolling out to Ray-Ban Display users: EMG handwriting recognition powered by the Meta Neural Band, a built-in teleprompter that overlays text in your field of view, and pedestrian navigation expanded to four new cities.

What makes this announcement significant isn't just the features themselves. It's the underlying shift in how we interact with technology. We're moving away from screens you hold toward intelligence you wear. We're trading keyboards for hand gestures. We're swapping phone notifications for text cards floating in your field of view.

For developers, creators, business professionals, and anyone who's tired of being tethered to their phone, this matters. A lot. This article breaks down everything you need to know about Ray-Ban Display's latest capabilities, how they actually work, what they mean for the broader AR landscape, and most importantly, why you should care.

Let's dive in.

TL;DR

- EMG Handwriting: Write messages by moving your hand in the air, powered by Meta's neural band detecting electrical muscle signals

- Teleprompter Feature: Copy notes to your phone and see them displayed as text cards on your glasses, navigable with neural band gestures

- Expanded Navigation: Pedestrian directions now available in Denver, Las Vegas, Portland, and Salt Lake City

- Early Access Rollout: Handwriting arrives first in WhatsApp and Messenger, with phased deployment of the new features through the first half of 2026

- Hardware Requirement: EMG handwriting and teleprompter navigation require the Meta Neural Band, which ships with Ray-Ban Display and is worn on your wrist

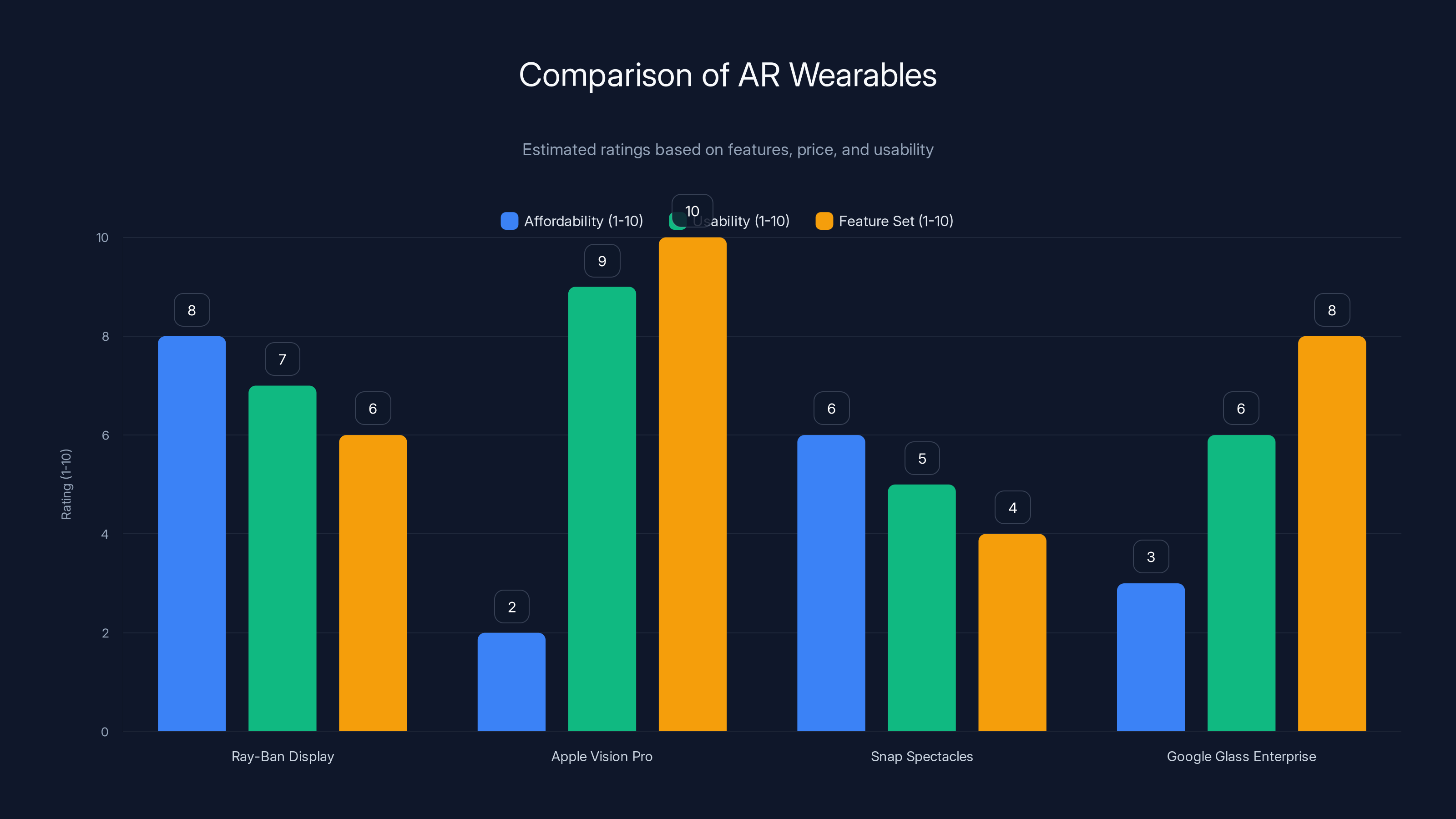

Ray-Ban Display offers a balanced mix of affordability and usability, while Apple Vision Pro excels in features but is less affordable. Estimated data.

Understanding Meta's Neural Band: The Invisible Interface

Before we talk about what the new features do, we need to understand the hardware making it possible. The Meta Neural Band is a wristband that reads electromyography (EMG) signals directly from your muscles. No cameras, no keyboards, no touchscreens. Just electrical sensors detecting the tiny impulses your muscles produce when you move.

Think of it like this: every time you move your hand, your muscles fire electrical signals. These signals are invisible to the naked eye, but the neural band's sensors detect them with remarkable precision. Meta's AI then processes these signals in real time, translating muscle movements into digital commands.

The technology isn't entirely new. Electromyography has existed in medical contexts for decades, primarily for diagnosing muscle disorders. What's new is miniaturizing it, making it reliable enough for consumer use, and pairing it with AI powerful enough to understand intent from muscle noise.
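
To make "sensors detecting muscle impulses" more concrete, here is a minimal Python sketch of a textbook surface-EMG preprocessing step. It removes the DC offset, squares the samples, takes a moving root-mean-square (RMS) envelope, and flags stretches where the envelope crosses a threshold. This is not Meta's pipeline, which hasn't been published in this form. The function names, the 20-sample window, and the 0.5 threshold are illustrative assumptions, and the signal is synthetic.

```python
import math

def rms_envelope(samples: list[float], window: int) -> list[float]:
    """Moving RMS envelope of one EMG channel (a textbook technique, not Meta's code)."""
    mean = sum(samples) / len(samples)
    squared = [(s - mean) ** 2 for s in samples]  # remove DC offset; squaring makes every value positive
    envelope = []
    running = 0.0
    for i, sq in enumerate(squared):
        running += sq
        if i >= window:
            running -= squared[i - window]  # drop the sample that just left the window
        count = min(i + 1, window)           # the first few windows are shorter
        envelope.append(math.sqrt(max(running, 0.0) / count))
    return envelope

def active_regions(envelope: list[float], threshold: float) -> list[tuple[int, int]]:
    """Return (start, end) index pairs where the envelope stays above the threshold."""
    regions = []
    start = None
    for i, value in enumerate(envelope):
        if value > threshold and start is None:
            start = i                        # activity begins
        elif value <= threshold and start is not None:
            regions.append((start, i))       # activity ends (end index is exclusive)
            start = None
    if start is not None:
        regions.append((start, len(envelope)))
    return regions

# Synthetic example: rest, a burst of muscle activity (samples 100-199), then rest again.
signal = [0.0] * 100 + [1.0, -1.0] * 50 + [0.0] * 100
print(active_regions(rms_envelope(signal, window=20), threshold=0.5))  # [(105, 214)]
```

The detected region starts a few samples after the burst begins and ends roughly one window length after it stops. That is the smoothing lag of the RMS window. A real wristband records several electrode channels and passes the data to a learned model rather than a fixed threshold, but this shows what "detecting muscle activity" means at the signal level.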

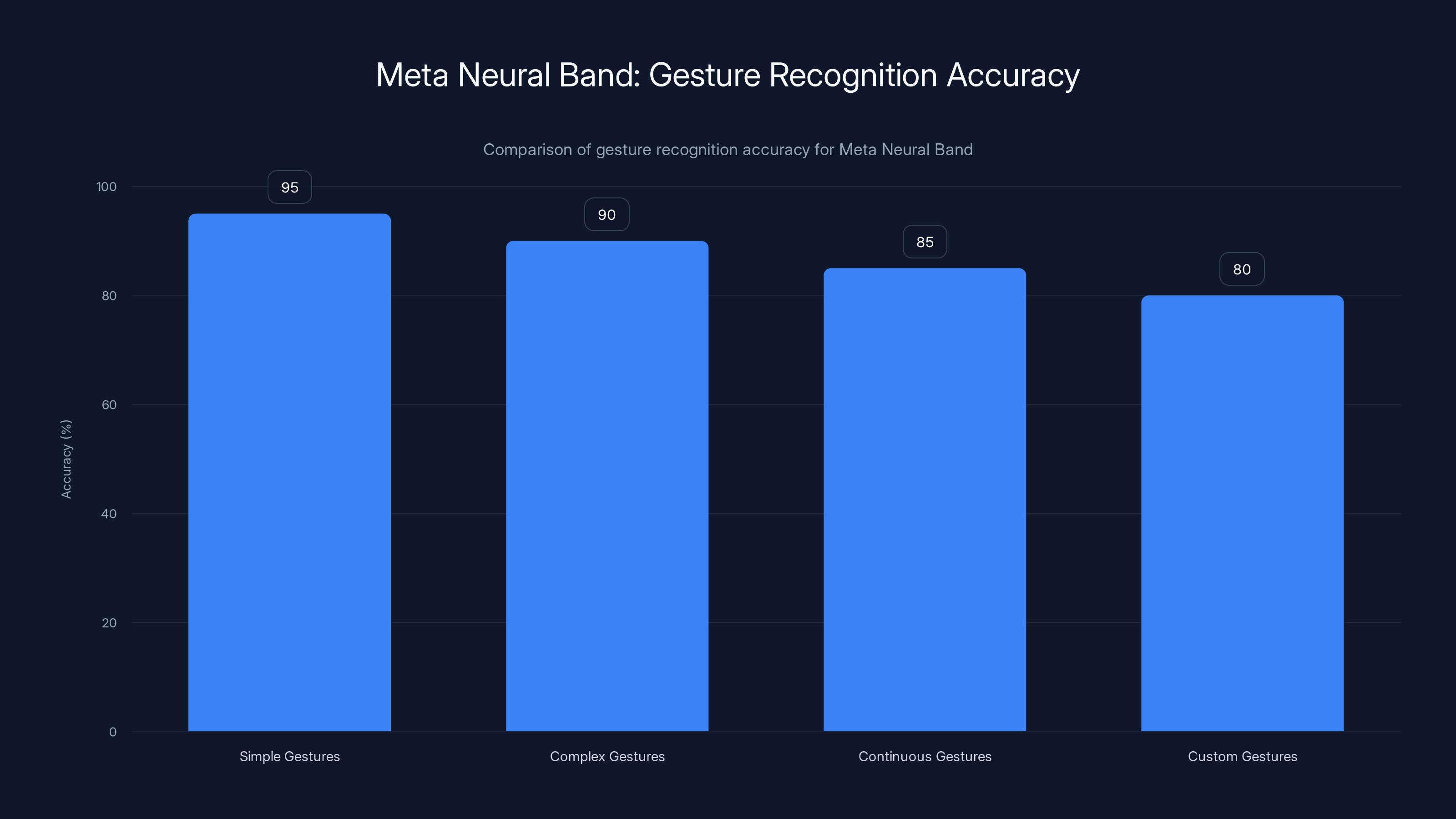

The neural band itself is relatively unobtrusive. It looks like a fitness tracker, sits on your wrist, and communicates wirelessly with your Ray-Ban Display glasses. The latency is low enough that interactions feel natural, not laggy. Meta claims the device can distinguish between dozens of different hand gestures with high accuracy.

One critical detail: this isn't a thought-reading device. Some headlines oversimplify the technology as "mind control." It's not. You're still making deliberate hand movements. The neural band just detects those movements through your skin instead of requiring a camera to see them. The privacy implications are actually better than computer vision in some respects, since the band doesn't record video of your surroundings.

The neural band requires charging. Meta rates it for up to 18 hours of battery life, though real-world results vary with usage patterns.

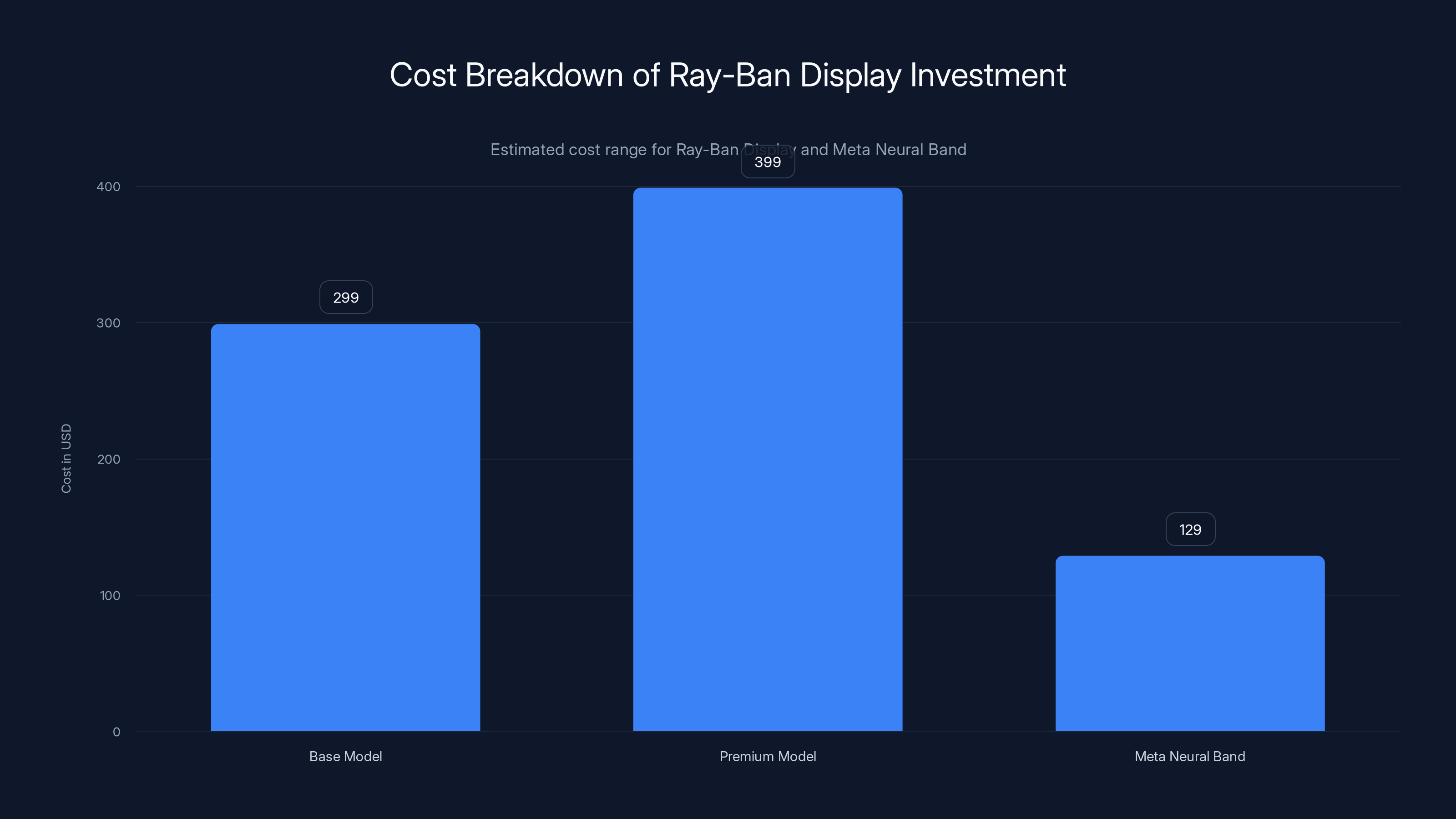

Chart: estimated total investment for Ray-Ban Display and Meta Neural Band.

EMG Handwriting: Writing Without a Keyboard

The marquee feature of this announcement is EMG handwriting. Here's how it works: you want to reply to a text. Instead of reaching for your phone or pulling up a virtual keyboard, you make writing motions in the air with your hand. The neural band detects your muscle patterns, Meta's AI recognizes the shape of your "handwriting," and the glasses display the recognized text in real time.

This is genuinely different from existing gesture systems. Your hand doesn't need to be in frame of any camera. You don't need to point at the glasses or make exaggerated motions. You're literally writing, the same way you'd write on paper, except the surface is invisible and the recognition happens via muscle signals instead of vision.

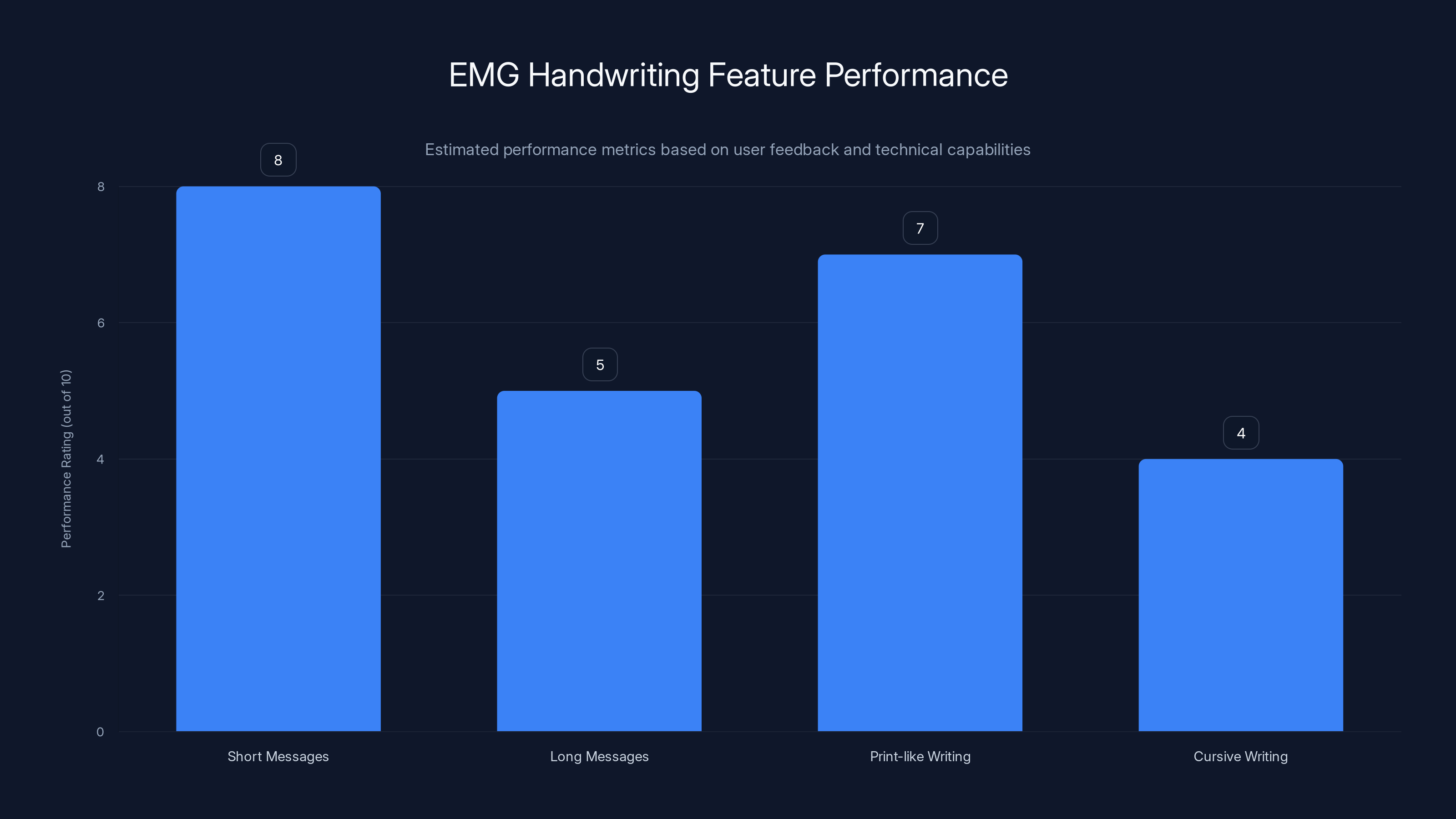

Meta demonstrated the feature at CES 2026, and early testers reported it worked surprisingly well for short messages. A user could write "Yes, I'll be there by 6" in roughly the time it takes to type the same message on a phone keyboard. For longer messages, writing becomes tedious (as it always has). For quick replies, though, it's genuinely faster than pulling out and unlocking your phone first.

The accuracy isn't 100%, especially for cursive or unusual handwriting styles. Meta's neural band works better with print-like writing. Messy or sloppy handwriting occasionally triggers misrecognitions. But here's the thing: you see the recognized text in real time on your glasses. If something is wrong, you can erase and rewrite instantly without sending a message you didn't intend.

This feature is rolling out first in WhatsApp and Messenger, which makes sense. These apps are where most people reply to quick texts anyway. The early access rollout started in Q4 2025 and is expanding throughout early 2026. Not all users will have access immediately, and Meta is controlling the rollout to monitor system performance and gather feedback.

The muscle recognition AI powering this feature is genuinely impressive from a technical standpoint. Meta's researchers trained the system on thousands of hours of handwriting data, capturing variations in how different people write the same letters. The system learned not just what letters look like, but the distinct muscle patterns each person uses. This personalization actually improves accuracy over time as the system learns your specific writing style.

The Teleprompter Feature: Reading from Your Glasses

The second major feature is the teleprompter, and this one deserves serious attention from creators, public speakers, and anyone who presents regularly.

Here's the use case: you're about to give a presentation, record a video, or conduct a video call. You've written your talking points. Instead of holding note cards, keeping notes on your phone, or trying to memorize everything, you copy your notes into the Ray-Ban Display app on your phone. The glasses then display these notes as customizable text cards in your field of view. You can navigate between cards using neural band gestures.
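
The article doesn't say how the app breaks notes into cards or which gestures move between them. The minimal sketch below assumes one card per paragraph, with long paragraphs split by a word budget. It also uses two hypothetical gesture names, "next" and "previous". None of these names come from Meta's app.

```python
from dataclasses import dataclass

def split_into_cards(notes: str, max_words: int = 12) -> list[str]:
    """One card per paragraph; paragraphs longer than max_words are split across cards."""
    cards = []
    for paragraph in notes.split("\n\n"):
        words = paragraph.split()
        for i in range(0, len(words), max_words):
            cards.append(" ".join(words[i:i + max_words]))
    return cards

@dataclass
class Teleprompter:
    cards: list[str]
    index: int = 0

    def current(self) -> str:
        return self.cards[self.index] if self.cards else ""

    def handle_gesture(self, gesture: str) -> str:
        """Move between cards for hypothetical "next"/"previous" gestures; stop at either end."""
        if gesture == "next" and self.index < len(self.cards) - 1:
            self.index += 1
        elif gesture == "previous" and self.index > 0:
            self.index -= 1
        return self.current()

notes = "Thank the host.\n\nBattery: mention charging habits and when the band needs a top-up during long shoots."
prompter = Teleprompter(split_into_cards(notes, max_words=8))
print(prompter.current())                 # Thank the host.
print(prompter.handle_gesture("next"))    # Battery: mention charging habits and when the band
```

Clamping at the first and last card means an accidental extra gesture never leaves you on a blank screen mid-talk.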

The implementation is deceptively clever. The text appears in your line of sight but doesn't obstruct your view completely. You can glance down to see a few words, then look back at your camera or audience naturally. It's like having an invisible note card floating in your peripheral vision.

Creators have been asking for this feature for years. YouTubers filming product reviews often need to reference specs without looking at their phones. Podcasters recording interviews want to keep questions visible without mounting a monitor. Video call participants want to remember talking points without looking distracted. The teleprompter solves all of these problems.

You can customize text size, color, and position on the glasses. Some users prefer white text on a dark background for maximum contrast. Others want semi-transparent text that doesn't interfere with their actual vision. Meta's implementation supports these customizations through the mobile app.

The feature is rolling out starting this week in a phased manner. Not every Ray-Ban Display user will have access immediately. Meta is prioritizing early access to verified creators and content professionals first, then expanding to the general user base. This approach helps Meta gather feedback and optimize performance before full rollout.

There's an interesting privacy angle here. Your notes stay on your phone and sync to your glasses. They're not stored on Meta's servers (you can control this in settings). For sensitive content, you're not broadcasting your notes anywhere. You can delete notes from your phone and they automatically clear from your glasses.

EMG Handwriting excels in short messages and print-like writing, but struggles with cursive and longer texts. Estimated data based on user feedback.

Pedestrian Navigation Expansion: More Cities, More Guidance

The third feature in this announcement is less flashy than the other two, but arguably more immediately useful: expanded pedestrian navigation.

Ray-Ban Display has had pedestrian navigation for a while, but it's been limited to a handful of cities. With this update, Meta is adding support for Denver, Las Vegas, Portland, and Salt Lake City. The feature lets you see walking directions overlaid on your real-world view, turn-by-turn, without looking at your phone.

This is maps, but worn on your face. You want to get from your hotel to a restaurant. Instead of staring at your phone while walking, potentially getting hit by a car or walking into a fountain, you look straight ahead and see the next turn highlighted in your field of view. The glasses show the street name, distance to the turn, and which direction to go.

The navigation system is smart enough to understand context. If you're approaching a turn, the glasses make it obvious with highlights and text. If you're walking straight, the information fades to the background so it doesn't obstruct your view of actual traffic.
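
The article doesn't describe how the glasses decide when to emphasize a turn. One plausible sketch computes the great-circle (haversine) distance to the next maneuver. It then switches the card between a "prominent" and a "faded" style at an assumed 50-meter threshold. The function names, threshold, coordinates, street name, and card fields are all hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters

def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def turn_card(position: tuple[float, float], next_turn: tuple[float, float],
              street: str, direction: str, highlight_within_m: float = 50.0) -> dict:
    """Hypothetical heads-up card: prominent near the turn, faded while walking straight."""
    distance = haversine_m(*position, *next_turn)
    return {
        "street": street,
        "direction": direction,
        "distance_m": round(distance),
        "style": "prominent" if distance <= highlight_within_m else "faded",
    }

turn = (39.7401, -104.9903)  # illustrative coordinates in downtown Denver
print(turn_card((39.7392, -104.9903), turn, "Example St", "left"))  # about 100 m away: faded
print(turn_card((39.7398, -104.9903), turn, "Example St", "left"))  # about 33 m away: prominent
```

A real system would likely add hysteresis so the card doesn't flicker when GPS noise hovers around the threshold.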

Pedestrian navigation is still in beta, which is why it's limited to certain cities. Meta is testing the system extensively to ensure accuracy and reliability. Walking directions are different from driving directions. You need to account for foot traffic, sidewalk congestion, time to cross streets, and places where pedestrians can legally walk but cars cannot.

Expanding to four new cities suggests the feature is performing well and that Meta is confident enough to expand beyond the original test markets. City selection likely depends on factors like population density, walkability, available mapping data, and beta tester feedback.

Comparing Ray-Ban Display to Competing Wearables

Ray-Ban Display isn't the only AR wearable on the market. It's worth understanding how it stacks up against alternatives and why Meta's approach is different.

Apple Vision Pro is the premium option. It's a full mixed-reality headset that can place apps in your surroundings or immerse you in virtual environments. It's powerful, expensive ($3,499 and up), and designed for immersive computing sessions. Ray-Ban Display, by contrast, is lightweight, social, and designed to be worn all day without fatigue.

Snap Spectacles are designed primarily for social sharing. They capture video and images, sync to Snapchat, and let you interact with Snapchat's augmented reality filters. They're great for content creators working within Snapchat's ecosystem, but limited outside of that.

Google Glass Enterprise Edition focused on enterprise use cases. Delivery drivers used it to see package information. Warehouse workers used it to locate items. Healthcare professionals used it in operating rooms. It was specialized, expensive, and never sold to consumers, and Google discontinued it in 2023.

Meta Ray-Ban Display lands in the middle ground. It's consumer-focused, priced at $799 with the Meta Neural Band included (far below Vision Pro), designed for all-day wear, and increasingly capable with each update. The glasses still look much like regular Ray-Bans, so most people won't realize you're wearing a computer.

The new features strengthen Ray-Ban Display's position by making it genuinely useful for communication, content creation, and navigation. EMG handwriting and teleprompter features solve real problems that consumers face daily.

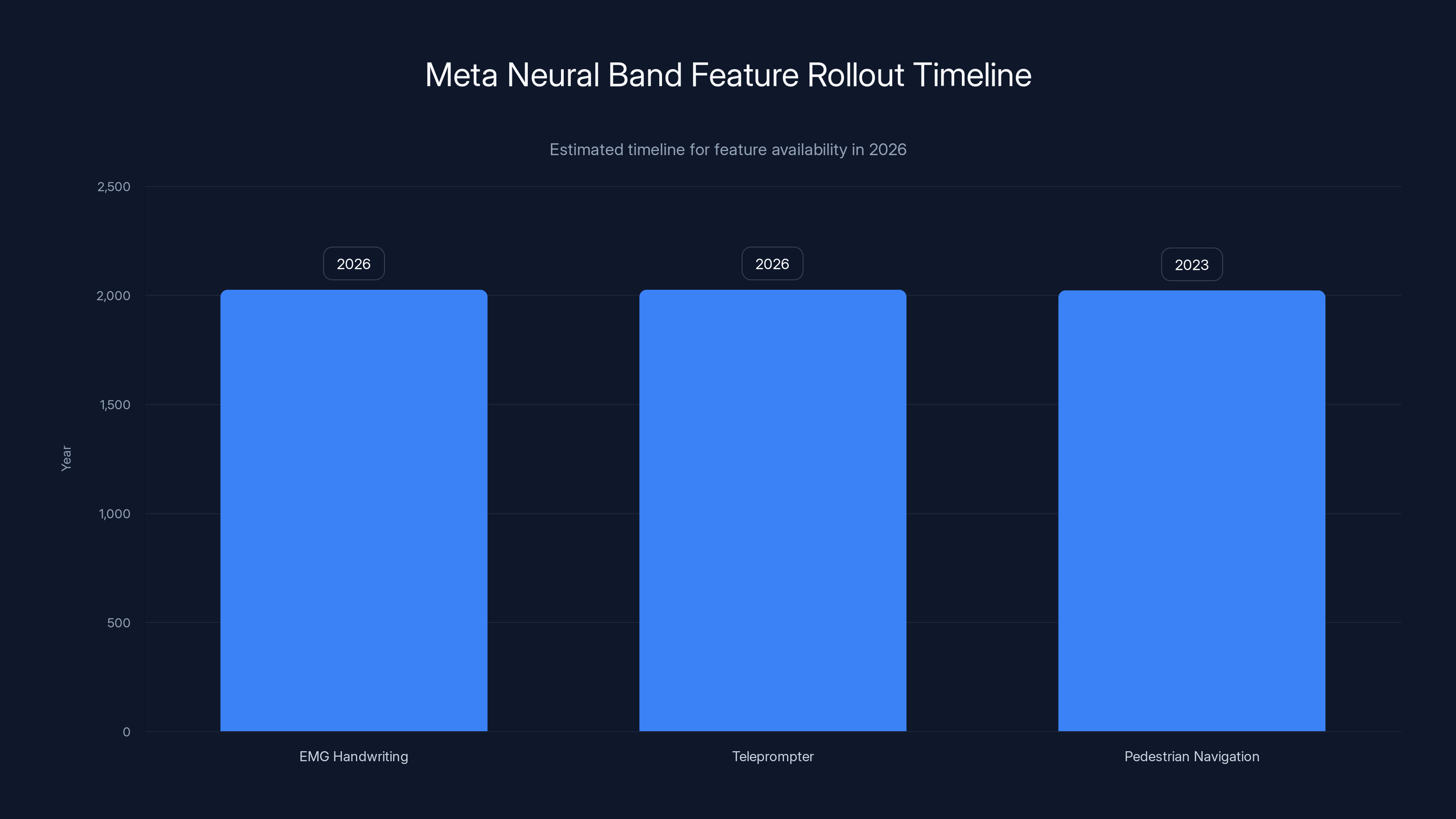

Estimated data shows EMG handwriting and teleprompter features are expected to be widely available in 2026, while pedestrian navigation is already available in supported cities.

The Technical Architecture Behind These Features

Understanding how these features actually work on a technical level helps explain why they're impressive and why they took years to develop.

The EMG handwriting system works like this: the neural band's sensors pick up electrical signals from your forearm muscles. This raw sensor data is noisy and ambiguous. Thousands of different muscle configurations can produce similar signals. The device doesn't just look for specific patterns. Instead, Meta uses a machine learning model that's been trained on handwriting from thousands of users.

When you write in the air, the neural band samples muscle activity hundreds of times per second. These samples are fed into a neural network that predicts what letter or stroke you're making. The system runs continuously, so it produces predictions as a stream rather than waiting for you to finish a whole letter.
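
Stream-style prediction can be sketched as a sliding window. The most recent frames sit in a fixed-size buffer, and the buffer goes to a classifier every few frames, without waiting for a letter to finish. In this minimal Python sketch, the window and hop sizes are assumptions. The `energy_classifier` function is a deliberately crude stand-in for Meta's unpublished on-device model.

```python
from collections import deque
from typing import Callable, Iterable, Iterator, Sequence

Frame = Sequence[float]  # one sample from every EMG channel at a single instant

def stream_predictions(frames: Iterable[Frame],
                       classify: Callable[[list[Frame]], str],
                       window: int = 200, hop: int = 50) -> Iterator[str]:
    """Yield a prediction every `hop` frames once a full `window` of frames is buffered."""
    buffer: deque = deque(maxlen=window)  # old frames fall off automatically
    since_last = 0
    for frame in frames:
        buffer.append(frame)
        since_last += 1
        if len(buffer) == window and since_last >= hop:
            since_last = 0
            yield classify(list(buffer))

def energy_classifier(window_frames: list[Frame]) -> str:
    """Placeholder for a trained network: labels a window by its mean absolute amplitude."""
    values = [abs(v) for frame in window_frames for v in frame]
    return "active" if sum(values) / len(values) > 0.2 else "rest"

# Two-channel synthetic stream: 300 quiet frames, then 100 active ones.
frames = [(0.0, 0.0)] * 300 + [(1.0, -1.0)] * 100
print(list(stream_predictions(frames, energy_classifier)))
# ['rest', 'rest', 'rest', 'active', 'active']
```

Smaller hops give fresher predictions at the cost of more model runs per second, a real trade-off on a battery-powered wristband.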

The approach is closer to continuous gesture recognition than to discrete character recognition. The system learns transitions between letters, understanding that the muscle pattern for writing "a" followed by "b" is different from writing them separately.
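
Turning a stream of per-frame guesses into text is a known problem in handwriting and speech recognition. One widely used approach is connectionist temporal classification (CTC). Its simplest decoder picks the most likely symbol in each frame, merges consecutive repeats, and drops a special "blank" symbol that separates genuinely doubled letters. The source doesn't say which decoder Meta uses, so treat this minimal Python sketch as an illustration of the technique. The blank symbol and the probabilities are made up.

```python
BLANK = "_"  # illustrative blank token; real systems reserve a dedicated output index

def ctc_greedy_decode(frame_probs: list[dict[str, float]]) -> str:
    """Greedy CTC decoding: best symbol per frame, collapse repeats, remove blanks."""
    best = [max(probs, key=probs.get) for probs in frame_probs]
    decoded = []
    previous = None
    for symbol in best:
        if symbol != previous and symbol != BLANK:
            decoded.append(symbol)  # a new, non-blank symbol starts a character
        previous = symbol
    return "".join(decoded)

# Frames for "hello": the blank between the two "l" runs keeps them as separate letters.
frames = [
    {"h": 0.9, BLANK: 0.1}, {"h": 0.6, "e": 0.4}, {"e": 0.8, BLANK: 0.2},
    {"l": 0.7, BLANK: 0.3}, {BLANK: 0.9, "l": 0.1}, {"l": 0.8, "o": 0.2},
    {"o": 0.9, BLANK: 0.1},
]
print(ctc_greedy_decode(frames))  # hello
```

Without the blank frame, the two "l" runs would merge into a single letter, which is why CTC needs the extra symbol.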

The teleprompter feature is simpler on the technical side. Your phone syncs text to the glasses via Bluetooth. The glasses render text in a specific area of the display, overlaid on your real-world view. Navigation between cards is handled by the neural band's gesture recognition system. It's straightforward, but the user experience design is what matters.
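
The article only says that text travels from the phone to the glasses over Bluetooth. Bluetooth Low Energy, a common choice for wearables, moves data in small packets. A sync of this kind therefore generally has to split longer notes and reassemble them on the other side. This minimal Python sketch assumes a 180-byte payload limit and a hypothetical 4-byte header (sequence number plus total count). It is not Meta's protocol.

```python
import struct

HEADER = struct.Struct(">HH")  # hypothetical header: sequence number, total packets (big-endian uint16)

def chunk_text(text: str, payload_size: int = 180) -> list[bytes]:
    """Encode text as UTF-8 and split it into numbered packets of at most payload_size bytes."""
    data = text.encode("utf-8")
    body = payload_size - HEADER.size
    pieces = [data[i:i + body] for i in range(0, len(data), body)] or [b""]
    return [HEADER.pack(seq, len(pieces)) + piece for seq, piece in enumerate(pieces)]

def reassemble(packets: list[bytes]) -> str:
    """Reorder packets by sequence number, check none are missing, then decode."""
    parts = {}
    total = 0
    for packet in packets:
        seq, total = HEADER.unpack_from(packet)
        parts[seq] = packet[HEADER.size:]
    if not packets or len(parts) != total:
        raise ValueError("incomplete transfer")
    # Splitting bytes can cut a multi-byte character in half; joining before decoding repairs it.
    return b"".join(parts[i] for i in range(total)).decode("utf-8")

notes = "Card 1: thank the café owners. " * 20
packets = chunk_text(notes)
print(len(packets), max(len(p) for p in packets))
print(reassemble(list(reversed(packets))) == notes)  # True, even out of order
```

Decoding only after every packet has arrived is the design choice that keeps non-ASCII notes intact.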

Both systems are running on-device, not in the cloud. Your handwriting data doesn't get sent to Meta's servers for processing. The AI models are deployed directly on the Ray-Ban Display's processor. This is important for latency (no waiting for a cloud response) and privacy (your data stays on your device).

Use Cases That Make Ray-Ban Display Essential

Let's talk about real scenarios where these features actually matter.

Content Creator Recording Product Videos: You're filming yourself demonstrating a new piece of software. You need to reference features, explain shortcuts, and describe functionality. With the teleprompter, you can see your talking points in your glasses without looking at a monitor or phone. Your hands stay free. Your eye contact stays natural. Your viewers never see note cards or a monitor in the background.

Professional Speaker at a Conference: You're about to present for 30 minutes. You've memorized your main points, but you want backup notes visible if your mind goes blank. The teleprompter displays your notes in outline form. You can glance down for a word or statistic without losing your train of thought or breaking eye contact with the audience.

Quick Text Replies: You're in a meeting, in a grocery store, or cooking dinner. Someone messages you. Instead of pulling out your phone and typing a response, you handwrite a quick reply using the neural band. It's faster than typing on a phone, doesn't interrupt your current activity as obviously, and feels more natural than pulling a phone out of your pocket.

Visitor Navigation in a New City: You're traveling to Denver for the first time. Instead of staring at your phone while walking, you see directions overlaid on your real-world view. You never look down. You never get distracted. You know exactly when and where to turn.

Fitness Coach Feedback: You're doing a workout in your home gym. Your fitness app is synced to your Ray-Ban Display. Form corrections appear in your vision. Reps counted. Time remaining shown. All without a separate screen or phone mount.

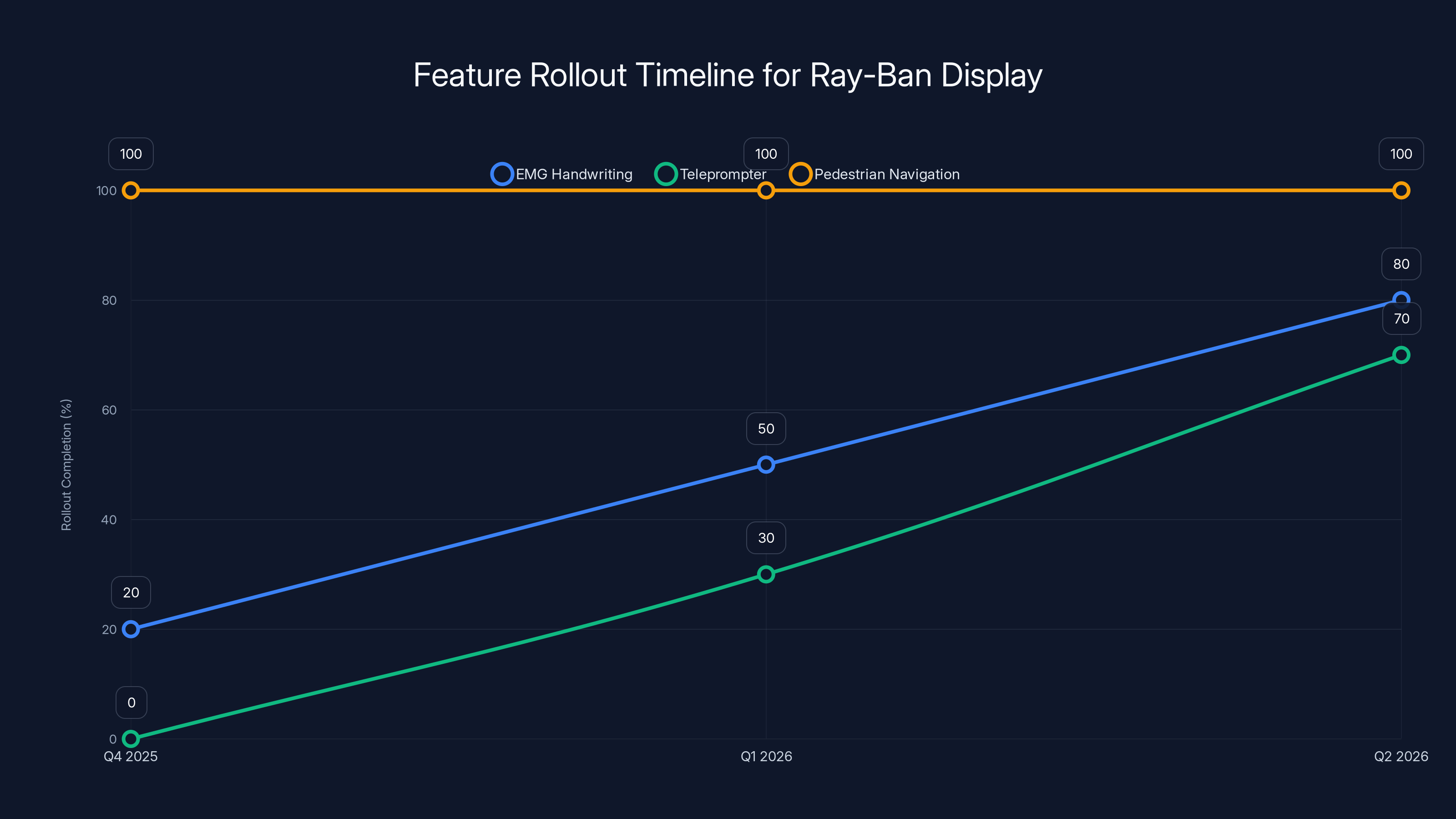

The EMG handwriting feature is gradually rolling out from Q4 2025 to early 2026, while the teleprompter feature starts its phased rollout in January 2026. Pedestrian navigation is already fully available in the new cities. Estimated data.

Privacy and Security Considerations

Wearing a computer on your face raises legitimate privacy questions. Let's address them directly.

The neural band doesn't use cameras, which actually improves privacy in some ways. It can't photograph people around you. It can't record video of your environment. It detects muscle signals, nothing else. Someone standing next to you has no way of knowing what gestures you're making or what you're seeing on your glasses.

That said, the data the neural band does collect is sensitive. Muscle signals are unique to individuals, similar to fingerprints. Theoretically, a sufficiently sophisticated system could identify you by your EMG patterns. Meta acknowledges this. The company has committed to not using muscle signal data for identification or tracking.

Your handwriting is also personal data. Meta stores this data locally on your device by default. You can control whether it's backed up to Meta's servers. You can delete your handwriting data on demand. You can opt out of using the feature entirely.

The teleprompter feature stores your notes where you tell it to store them. By default, notes sync between your phone and glasses but don't upload to Meta's cloud servers. You maintain ownership and control.

For enterprise deployments, Meta provides additional security options. Organizations can control what data syncs, where it's stored, and how long it's retained. This is important for companies dealing with sensitive information.

The Path Forward: What's Next for Ray-Ban Display

Meta hasn't announced what features are coming after this rollout, but we can make informed predictions based on the company's research roadmap and the capabilities of the hardware.

Advanced Gesture Recognition: The neural band currently distinguishes dozens of gestures. Expanding that vocabulary to a much larger set of nuanced interactions is a logical next step. Imagine being able to control multiple aspects of your environment with different hand movements, all without saying a word or looking at a screen.

Multilingual Handwriting: The current EMG handwriting system supports English. Expanding to other languages, from Spanish to scripts as different as Mandarin and Arabic, will be technically complex but valuable for global adoption.

Health and Fitness Integration: The neural band already measures muscle activation, so it could in principle infer movement types and provide real-time feedback about your workout form. Integration with fitness apps could turn Ray-Ban Display into a dedicated fitness coaching device.

Mental Health Applications: Research suggests that EMG patterns could eventually provide insights into stress, anxiety, and fatigue levels. This is early-stage research, but the potential is significant. Your glasses could eventually alert you when you're stressed and suggest a break.

Business and Enterprise Workflows: We'll likely see specialized versions of Ray-Ban Display for industries like healthcare, manufacturing, and logistics. Field technicians could pull up repair manuals in their vision. Surgeons could reference imaging during procedures. Warehouse workers could see inventory information in real time.

Meta is also investing heavily in smaller, lighter versions of the hardware. Future generations will be thinner, more aesthetically comparable to regular glasses, and potentially with better displays and longer battery life.

The Meta Neural Band achieves high accuracy in recognizing various hand gestures, with simple gestures being recognized at 95% accuracy. Estimated data.

Challenges and Limitations to Understand

Ray-Ban Display's new features are impressive, but they come with real limitations that potential users should understand.

Accuracy Isn't Perfect: EMG handwriting works well for most handwriting, but unusual styles or medical conditions affecting muscle function can reduce accuracy. The system learns your writing over time, but the initial learning curve exists.

Battery Life Is a Factor: Neural band battery life is measured in hours, not days. If you're using the handwriting feature extensively, you're probably charging daily. This isn't terrible, but it's a consideration if you plan to use the glasses all day.

Social Acceptance Varies: Some people are comfortable wearing visible electronics. Others feel self-conscious in AR glasses or find them intrusive. Ray-Ban Display looks more like regular sunglasses than other AR devices, but it's still visibly tech-forward.

Limited Content Ecosystem: The feature set is growing, but Ray-Ban Display still isn't as capable as a smartphone. You can't download and run arbitrary apps. You can't stream video. You can't do complex computing tasks. The glasses are purpose-built for specific interactions, not general computing.

Weather and Lighting Conditions: The display is bright, but bright sunlight still reduces visibility. Extreme cold affects battery life. These are manageable issues, but they exist.

Regulatory Questions Remain: Some jurisdictions have concerns about wearable cameras. Ray-Ban Display does include a camera, even though none of the new features use it. As the technology expands, regulatory frameworks will evolve, and early adopters might face restrictions that future users don't.

Comparing Ray-Ban Display to Smartphones: When to Use What

Ray-Ban Display isn't replacing your smartphone. It's complementary. Understanding when to use each device matters.

Use Ray-Ban Display when you need hands-free interaction, minimal visual obstruction, or don't want to pull out a phone. Quick text replies, navigation, quick reference information, and status notifications fit here.

Use smartphones for complex tasks requiring detailed input, browsing, watching video, or anything that benefits from a large screen. Your phone will remain your primary computing device.

The two devices work best together. Your phone syncs information to your glasses. The glasses handle quick interactions. The phone handles heavy lifting.

Implementation Timeline and Rollout Strategy

Understanding when these features actually arrive matters if you're considering purchasing Ray-Ban Display.

EMG handwriting is rolling out as an early access feature in WhatsApp and Messenger. The rollout started in Q4 2025 and is expanding throughout early 2026. Not all users get access immediately. Meta is staggering the rollout to monitor system performance and collect feedback before expanding further.

The teleprompter feature began its phased rollout this week (January 2026). Early access is available to verified creators and content professionals. General rollout to all Ray-Ban Display users will happen throughout Q1 and Q2 2026.

Pedestrian navigation expansion to the four new cities is already live. If you own Ray-Ban Display and are in Denver, Las Vegas, Portland, or Salt Lake City, the feature is available now.

Note that feature availability depends on geography, account status, and software version. You might need to update your Ray-Ban Display app to access new features. All updates are free.

The Bigger Picture: What This Means for AR

These feature announcements are important beyond just what they enable today. They represent a philosophical shift in how we think about augmented reality.

Early AR visions imagined glasses that overlay vast amounts of information on your view. Your eyes would be flooded with notifications, data, advertising. The devices would be bulky. They'd stand out. They'd create social awkwardness.

Meta's approach is different. It's introducing features deliberately. It's using the neural band to enable hands-free interaction without cameras watching your hands. It's keeping the glasses light and social. It's prioritizing features that genuinely solve problems versus features that impress people.

The handwriting feature is particularly symbolic. For thousands of years, humans have written things by hand. Then computers arrived and we typed instead. Ray-Ban Display is bringing handwriting back, but eliminating the paper. It's familiar and natural.

This approach requires the AI to be genuinely good. The teleprompter only works if gesture navigation between cards is reliable. The handwriting only works if muscle signal detection and recognition are accurate. Meta has invested billions in the research. The results are impressive enough to warrant this announcement.

Industry Response and Expert Perspective

AR industry analysts have responded positively to Ray-Ban Display's new capabilities. The consensus is that these features represent meaningful progress toward AR becoming truly practical for daily life.

Creators are particularly excited about the teleprompter. Independent content creators often struggle with the logistical challenge of reference information while filming. Solutions like this reduce friction and improve content quality.

Enterprise software companies are watching closely. If Ray-Ban Display can demonstrate success in consumer spaces, corporate applications will follow. Field service management companies, healthcare organizations, and manufacturing firms all see potential.

The EMG technology draws particular interest from accessibility researchers. The technology could eventually enable people with limited hand mobility to control devices through muscle signals. While the current implementation is for muscle-based handwriting in people with typical dexterity, the underlying technology has profound accessibility applications.

Critics note that Ray-Ban Display still faces an ecosystem challenge. The device is only as useful as the apps that support it, and right now that list is short. WhatsApp and Messenger are significant, but the glasses need dozens of compatible apps to become truly indispensable.

Cost Analysis: Is Ray-Ban Display Worth the Investment?

Ray-Ban Display sells for $799 in the US, and that price includes the Meta Neural Band. That's not insignificant, but it's no more than many flagship smartphones cost.

Return on investment depends on your use case. If you're a content creator using the teleprompter regularly, the investment likely pays for itself in improved content quality and efficiency gains. If you're someone who walks in unfamiliar cities frequently, the navigation feature is valuable. If you reply to texts constantly, the handwriting feature saves time.

For casual users just interested in AR gadgetry, the value is less clear. You might use the features occasionally but not frequently enough to justify the cost.

Ray-Ban Display also works as an ordinary pair of Ray-Bans, so you're not buying a device that's only useful for AR. You get functional eyewear whether or not you use the computing features.

Frequently Asked Questions

What is EMG handwriting on Ray-Ban Display?

EMG handwriting lets you write messages by moving your hand in the air. The Meta Neural Band detects electrical signals from your arm muscles and converts your handwriting motions into text displayed on your glasses. You can then send this text as messages in WhatsApp or Messenger without using a keyboard or phone.

How does the neural band detect handwriting?

The neural band contains sensors that read electromyography signals, which are tiny electrical impulses your muscles produce when they contract. As you move your hand to write letters and words, these muscle signals create a unique pattern that Meta's AI recognizes as specific handwriting. The system works continuously, recognizing strokes in real time as you write.

What is the teleprompter feature and how does it work?

The teleprompter feature displays text notes on your Ray-Ban Display glasses in your field of view. You copy notes from your phone into the Ray-Ban app, and they appear as customizable text cards on your glasses. You navigate between cards using neural band gestures, letting you reference talking points, scripts, or information without looking at a separate device.

Who has access to these new features?

EMG handwriting is rolling out as early access in WhatsApp and Messenger to Ray-Ban Display users, with access expanding through early 2026. The teleprompter is expanding from early access for creators to all users through Q1-Q2 2026. Pedestrian navigation expansion to four new cities is available now for Ray-Ban Display owners in those locations.

What is the Meta Neural Band and do I need it?

The Meta Neural Band is a wristband that reads muscle electrical signals. Yes, you need it to use EMG handwriting and to navigate teleprompter cards. It ships in the box with Ray-Ban Display glasses, so there's nothing extra to buy. Regular text input on Ray-Ban Display works without the neural band using voice commands or on-device keyboards.

How accurate is EMG handwriting recognition?

Accuracy is generally high for print-style handwriting but lower for unusual or very cursive writing. The system learns your writing patterns over time, so accuracy improves the more you use it. You see recognized text in real time and can correct errors before sending messages.

What's the battery life of the neural band?

Meta rates the neural band for up to 18 hours between charges, and real-world results depend on usage intensity. Heavy use of handwriting features will drain the battery faster than light use.

Can I use Ray-Ban Display without the neural band?

Yes, Ray-Ban Display works without the neural band. You can use voice commands, on-screen keyboards, and tapping for standard functions like messaging and navigation. The neural band is required only for EMG handwriting and teleprompter navigation.

Is my handwriting data private?

Your handwriting data is stored locally on your device by default and doesn't upload to Meta's servers without your permission. You can delete handwriting data at any time. You control whether data is backed up to Meta's cloud.

Where is pedestrian navigation available?

As of January 2026, pedestrian navigation is available in the original cities plus Denver, Las Vegas, Portland, and Salt Lake City. Meta indicates more cities will be added as the feature expands from beta to general availability.

How do I get access to these features?

Feature availability rolls out gradually. Check your Ray-Ban Display app for feature availability in your region. If a feature isn't available yet, you'll be able to see it listed as "coming soon" or "early access." You'll receive a notification when features become available for your account.

Can I use the teleprompter with any app or just Meta apps?

Currently, the teleprompter is a first-party Meta feature: you copy text from any source on your phone into the Ray-Ban Display app, and the glasses display it. Third-party developers may eventually be able to integrate teleprompter functionality into their apps through Meta's developer tools, but that isn't available yet.

Conclusion: The Wearable Computing Inflection Point

Ray-Ban Display's new features represent a meaningful inflection point for wearable computing. We're transitioning from "neat gadgets you can try out" to "devices that actually make your life easier." The teleprompter genuinely helps content creators. The handwriting feature genuinely saves time replying to texts. The navigation actually improves the walking experience in supported cities.

These aren't flashy features designed to impress people at parties. They're practical features designed to solve real problems. That's significant.

What's more significant is the underlying technology trajectory. The neural band is getting smarter every year. The AI recognizing handwriting is improving. The displays are becoming clearer. The battery life is extending. All of these trends point toward AR glasses becoming not just viable, but genuinely indispensable.

The roadblocks are shrinking. Privacy is being addressed seriously. Battery life is improving. The app ecosystem is growing. The form factor is becoming socially acceptable.

For early adopters, Ray-Ban Display is now compelling enough to justify the investment if your use case aligns with the available features. For mainstream users, we're probably 18-24 months away from the tipping point where these glasses become genuinely essential rather than optional.

Meta isn't alone in this space. Apple, Snap, and others are developing AR experiences. But Meta's strategy of prioritizing practical features over flashy experiences, and focusing on intelligent interactions rather than information overload, might just be the approach that finally makes AR stick.

The future of computing might not look like futuristic sci-fi movies. It might just look like better versions of the Ray-Bans sitting in your drawer.

Key Takeaways

- EMG handwriting on Ray-Ban Display uses neural band muscle signal detection to convert hand gestures into text messages without keyboards

- Teleprompter feature displays customizable text cards visible on glasses, helping creators, speakers, and professionals reference notes hands-free

- Pedestrian navigation expanded to Denver, Las Vegas, Portland, and Salt Lake City with turn-by-turn directions overlaid on user's view

- Features roll out gradually through the first half of 2026 in a phased approach, starting with WhatsApp and Messenger for handwriting and with verified creators for the teleprompter

- At $799 including the neural band, Ray-Ban Display offers compelling value for content creators, public speakers, and frequent communicators

![Meta Ray-Ban Display Glasses Get Teleprompter and EMG Handwriting [2025]](https://tryrunable.com/blog/meta-ray-ban-display-glasses-get-teleprompter-and-emg-handwr/image-1-1767713777938.png)