Meta's Path Forward: New Rules for AI-Generated Content [2025]

AI is now a routine part of content creation, which brings both benefits and new moderation problems. The Oversight Board has recently urged Meta to revise its policies on AI-generated content. The Board is asking for more than adjustments to existing rules: it wants a new framework for how AI content is managed, detected, and labeled. This guide covers the implications of those recommendations, the technical basics of AI content generation, and practical steps for stakeholders.

TL;DR

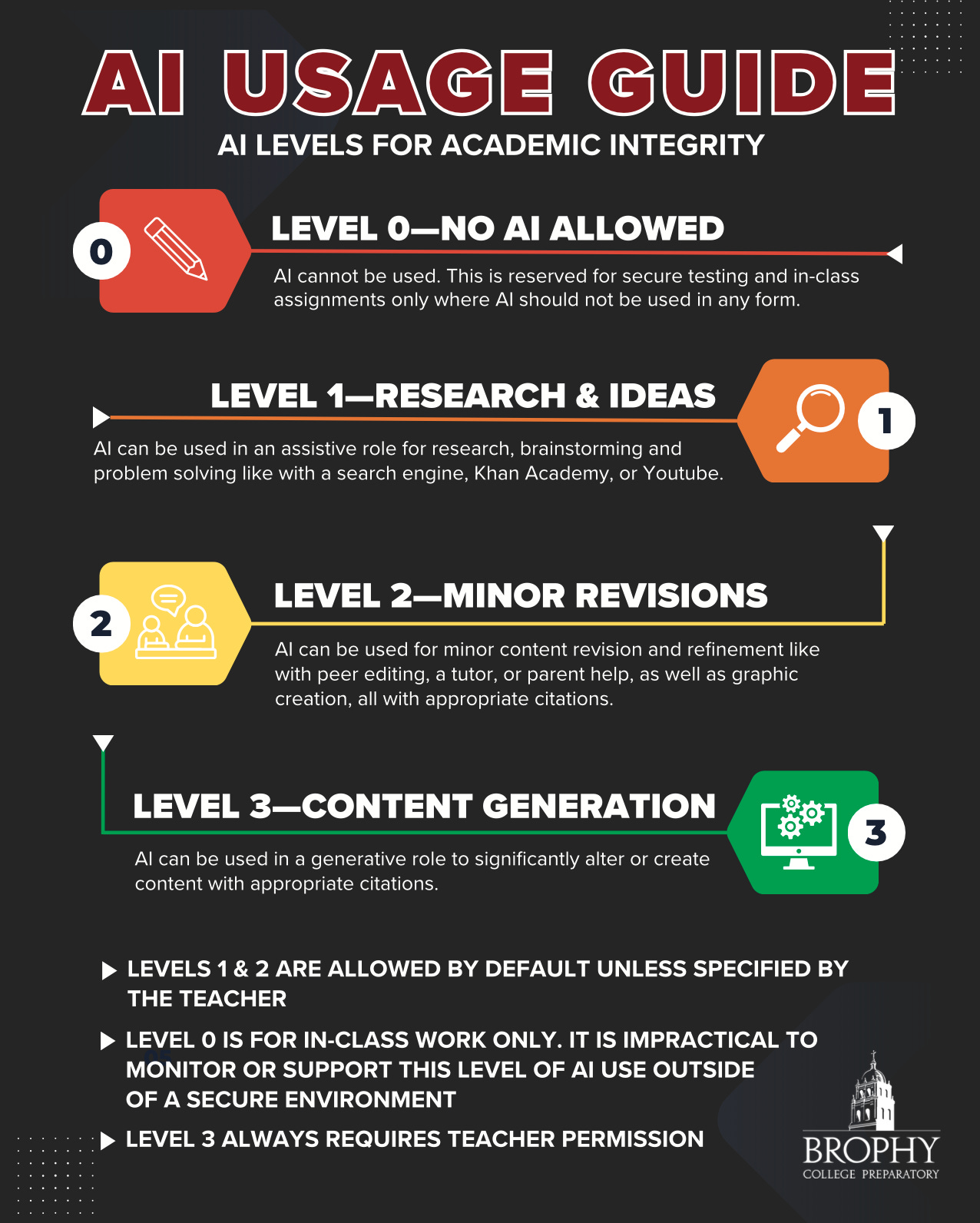

- New Rule Creation: The Oversight Board stresses the need for distinct policies for AI-generated content, separate from misinformation protocols.

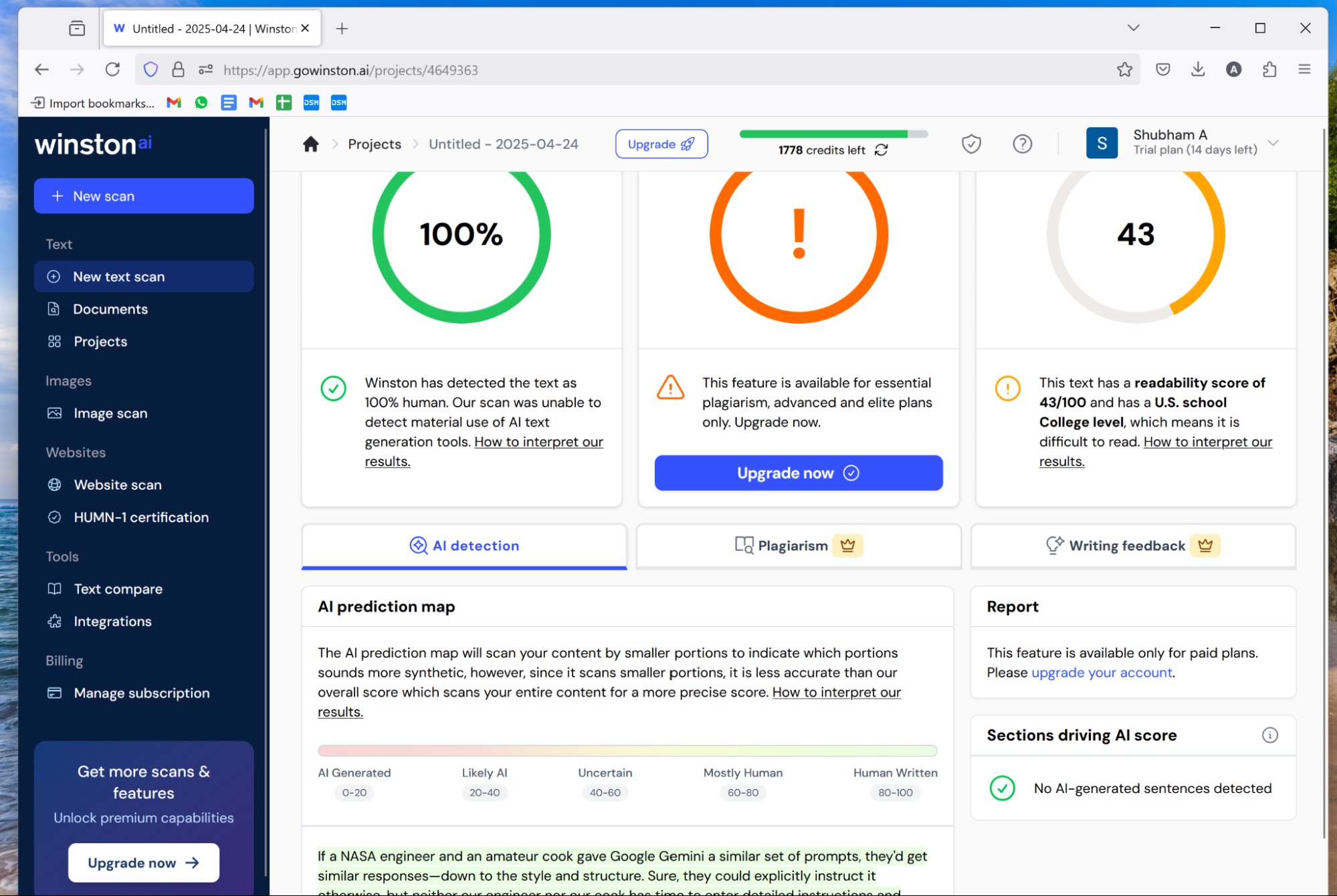

- Detection Tools: Investment in advanced detection mechanisms is crucial for identifying AI content accurately.

- Digital Watermarks: Implementing robust watermarking can aid in identifying the origins of AI-generated media.

- Transparency and User Education: Clear labeling and user education are vital to prevent misinformation.

- Future Trends: Anticipate AI's role in content creation to grow, necessitating ongoing policy adaptation.

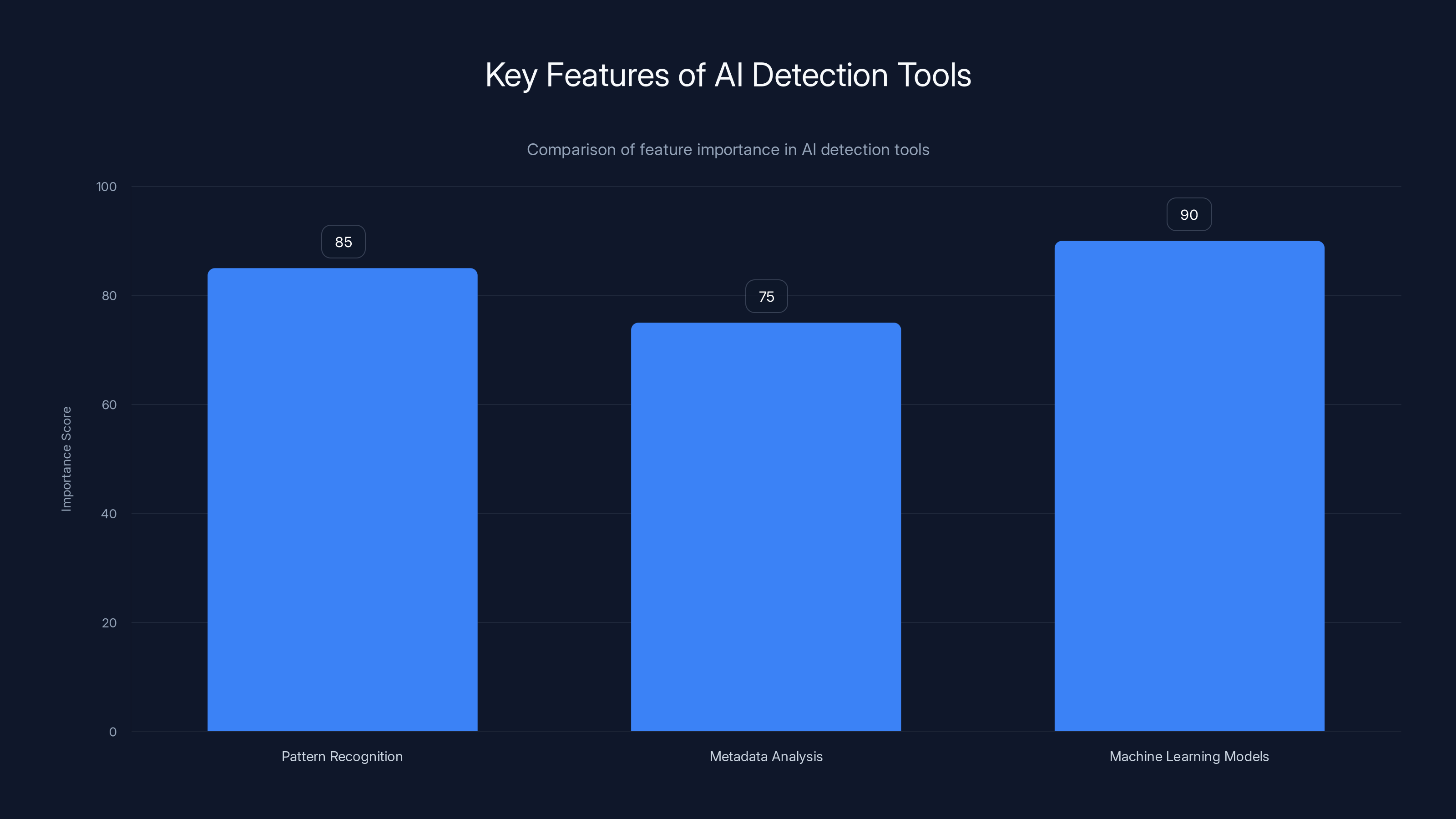

Machine Learning Models are rated highest in importance for AI detection, followed closely by Pattern Recognition. Estimated data based on typical feature evaluation.

The Oversight Board's Recommendations

The Oversight Board has been vocal about the necessity for Meta to establish new rules explicitly targeting AI-generated content. This move is essential due to the unique challenges AI content poses, which differ significantly from traditional misinformation. The board's recommendations include:

- Separate AI Content Rules: AI-generated content needs its own set of rules, distinct from those governing misinformation. The complexity and variety of AI content require tailored policies that address specific risks and opportunities.

- Enhanced Detection Tools: Meta should invest in state-of-the-art detection tools that can reliably identify AI-generated content, distinguishing it from human-created media.

- Use of Digital Watermarks: By embedding digital watermarks in AI-generated content, Meta can improve traceability and accountability, helping users identify the origins of such content.

- Improved Transparency: Labels like "AI Info" are insufficient. More detailed and accessible information about AI-generated content is necessary to educate users and prevent the spread of misinformation.

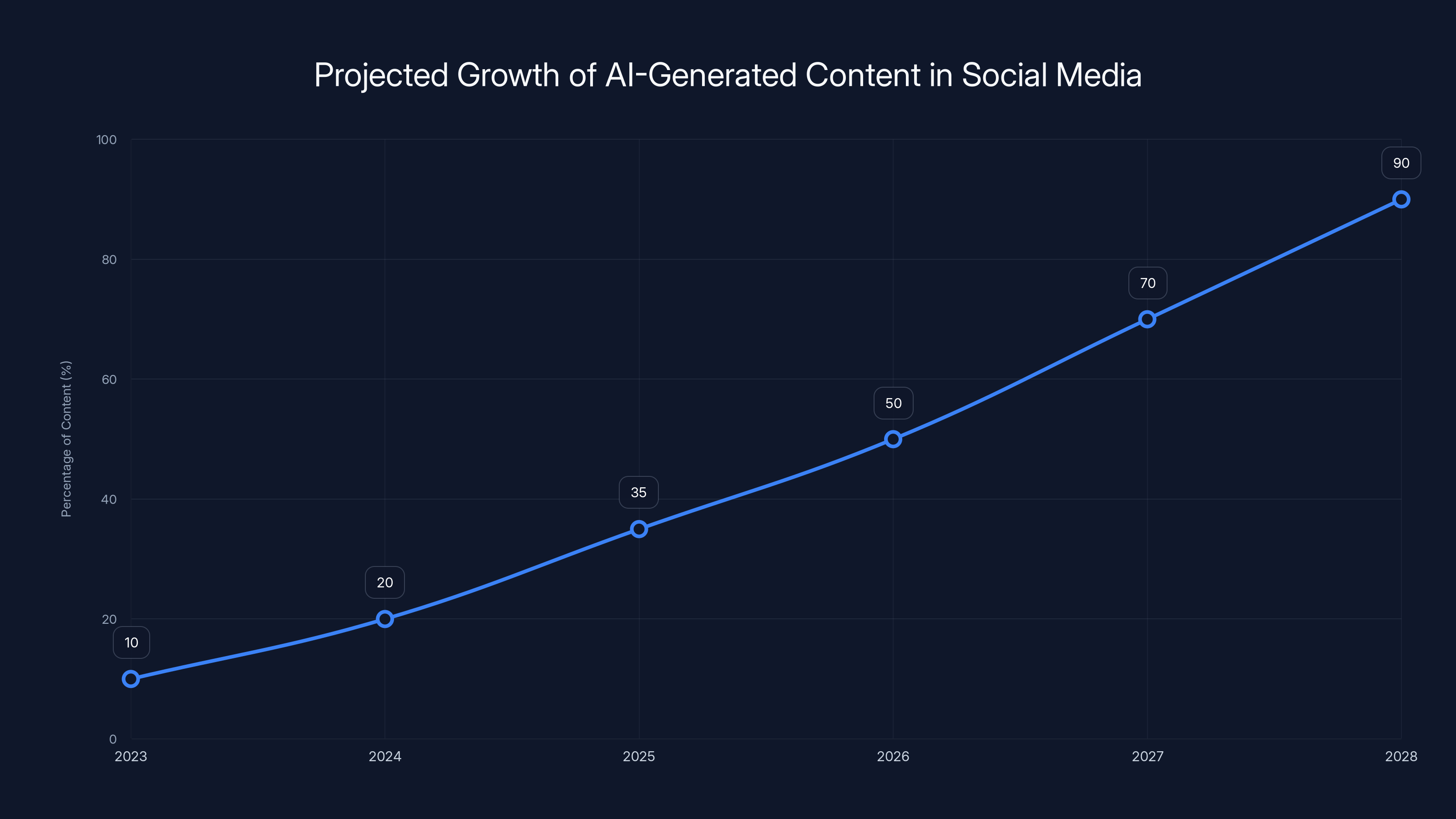

AI-generated content on social media platforms is projected to grow significantly, reaching 90% by 2028. Estimated data.

Understanding AI-Generated Content

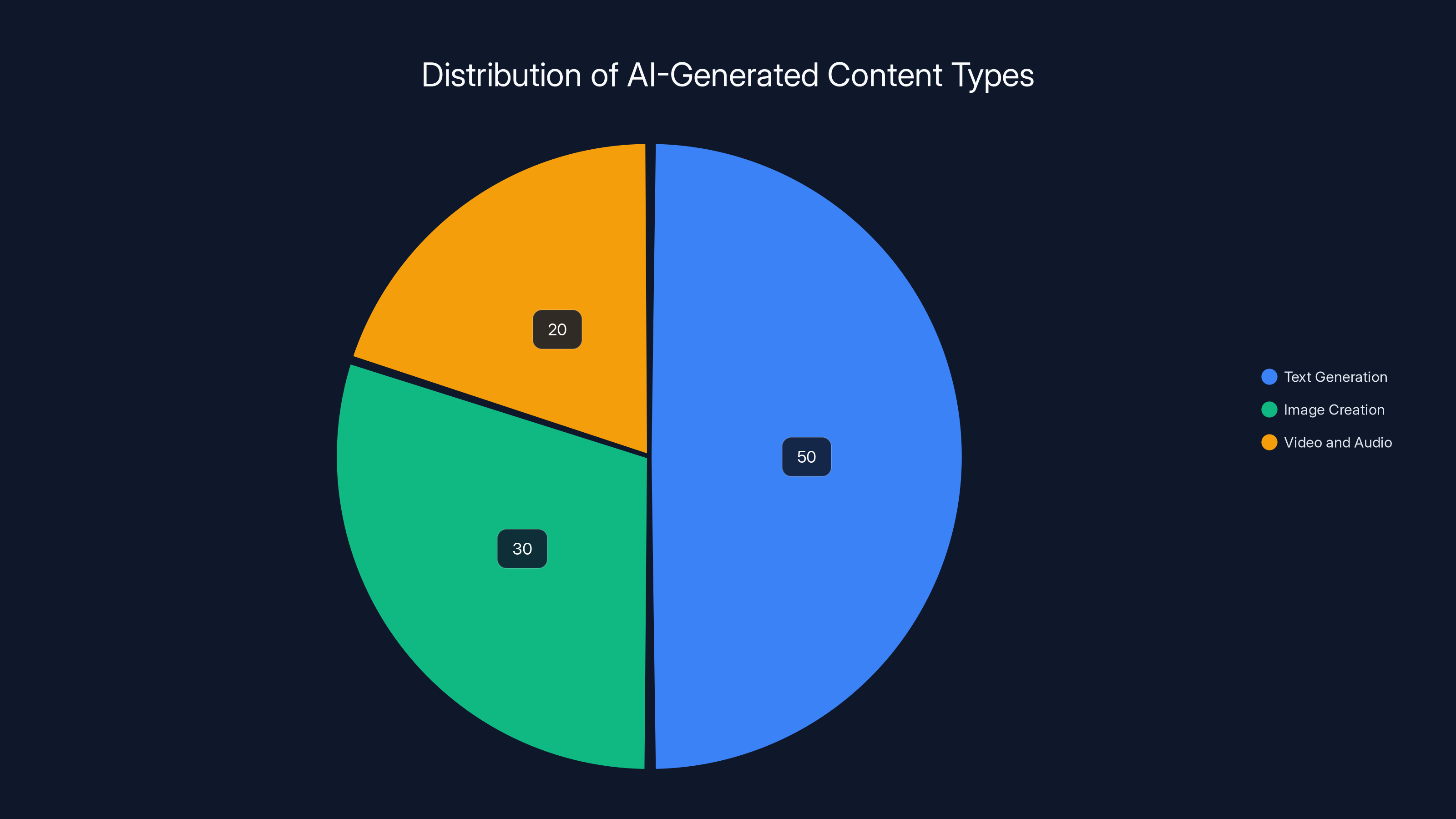

AI-generated content spans various forms, from text and images to video and audio. It leverages advanced machine learning models to create media that is often indistinguishable from human-created content.

How AI-Generated Content Works

AI content creation typically relies on generative models, such as GPT (Generative Pre-trained Transformer) models for text and GANs (Generative Adversarial Networks) or diffusion models for images and video. These models are trained on large datasets to learn patterns and structures, which lets them generate new content that resembles the training data.
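To make "learn patterns, then generate" concrete, here is a deliberately tiny sketch: a bigram (Markov chain) text generator. It is a toy stand-in, not how GPT or any production model works, and the corpus, function names, and seed are all assumptions made for illustration. The shared idea is only that the model records statistics from training text and then samples new sequences from them.

```python
import random
from collections import defaultdict


def train_bigram_model(text: str) -> dict[str, list[str]]:
    """Record, for every word, the words that followed it in the training text."""
    words = text.split()
    followers: dict[str, list[str]] = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return dict(followers)


def generate(model: dict[str, list[str]], start: str, length: int, seed: int = 0) -> list[str]:
    """Sample up to `length` words, each one chosen from words seen after the previous word."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    output = [start]
    while len(output) < length and output[-1] in model:
        output.append(rng.choice(model[output[-1]]))
    return output


# Hypothetical training text, invented for this example.
corpus = (
    "the board asked meta to label ai content "
    "and the board asked meta to detect ai content"
)
model = train_bigram_model(corpus)
sample = generate(model, start="the", length=8)
print(" ".join(sample))
```

Every adjacent word pair in the output also appears somewhere in the training text, which is why generated content tends to resemble its training data. Real generative models replace the lookup table with a neural network trained on far larger datasets.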

Example Use Cases:

- Text Generation: AI models can write articles, create social media posts, or draft emails, often requiring minimal human intervention.

- Image Creation: Tools like DALL-E generate images from textual descriptions, creating art or visual content for marketing.

- Video and Audio: AI can produce video clips or voiceovers, useful in advertising and content creation.

Practical Implementation of AI Detection Tools

For Meta to effectively manage AI-generated content, robust detection tools are essential. These tools must be capable of identifying subtle cues that distinguish AI content from human-generated media.

Key Features of AI Detection Tools

- Pattern Recognition: Advanced algorithms analyze content patterns to detect anomalies typical of AI-generated media.

- Metadata Analysis: By examining metadata, tools can identify fingerprints left by AI processes.

- Machine Learning Models: Continuously trained models improve detection accuracy over time.
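As a rough illustration of the metadata analysis feature, the sketch below scans already-extracted metadata for signs of AI generation. The dictionary keys, the generator names, and the function name are hypothetical. The one real-world value is `trainedAlgorithmicMedia`, a term from the IPTC Digital Source Type vocabulary, used here only as an example of a declared marker; the article does not say which fields Meta actually inspects.

```python
# Assumption: an upstream tool has already extracted metadata into a plain dict.
# The key names below are illustrative, not a real schema.
AI_SOURCE_MARKER = "trainedAlgorithmicMedia"  # IPTC digital source type term
HYPOTHETICAL_GENERATOR_NAMES = {"examplegen", "sampleimagemodel"}  # made-up tool names


def metadata_signals(metadata: dict[str, str]) -> list[str]:
    """Return the names of metadata signals suggesting the media may be AI-generated."""
    signals = []

    # Signal 1: the file declares an AI source type.
    source_type = metadata.get("digital_source_type", "")
    if AI_SOURCE_MARKER.lower() in source_type.lower():
        signals.append("declared_ai_source_type")

    # Signal 2: the recorded software matches a known generator.
    software = metadata.get("software", "").lower()
    if any(name in software for name in HYPOTHETICAL_GENERATOR_NAMES):
        signals.append("known_generator_software")

    # Signal 3: metadata was stripped entirely, which proves nothing by itself
    # but may justify falling back to other detection methods.
    if not metadata:
        signals.append("metadata_missing")

    return signals
```

Metadata is easy to strip or forge, so a check like this would most plausibly be one input alongside pattern recognition and trained classifiers rather than a verdict in its own right.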

Implementation Guide:

- Select Appropriate Tools: Choose tools that integrate seamlessly with existing platforms and can scale with content volume.

- Regular Updates: Keep detection models updated to handle new AI techniques and patterns.

- Cross-Platform Integration: Ensure tools work across various content types and platforms for consistent monitoring.

AI-generated content is predominantly text-based (50%), followed by image creation (30%) and video/audio (20%). Estimated data.

The Role of Digital Watermarks

Digital watermarks can play a critical role in authenticating and tracing AI-generated content. They function like a signature embedded in the media itself, carrying information about the content's origin and authenticity.

How Digital Watermarks Work

Digital watermarks are embedded into media files, often invisible to the naked eye but detectable by software. They can include data about the creator, creation date, and even the AI model used.
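The embedding idea can be sketched with least-significant-bit (LSB) watermarking, one of the simplest invisible schemes. This is an illustrative assumption, not the method Meta or any particular vendor uses; the function names and the flat list of grayscale values are invented for the example. Each message bit overwrites the lowest bit of one pixel, changing that pixel's value by at most 1.

```python
def embed_watermark(pixels: list[int], message: bytes) -> list[int]:
    """Hide `message` in the least significant bits of the first pixels."""
    # Expand each byte into 8 bits, most significant bit first.
    bits = [(byte >> shift) & 1 for byte in message for shift in range(7, -1, -1)]
    if len(bits) > len(pixels):
        raise ValueError("message is too long for this image")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # overwrite the least significant bit
    return marked


def extract_watermark(pixels: list[int], num_bytes: int) -> bytes:
    """Read `num_bytes` bytes back out of the least significant bits."""
    out = bytearray()
    for b in range(num_bytes):
        value = 0
        for pixel in pixels[b * 8:(b + 1) * 8]:
            value = (value << 1) | (pixel & 1)
        out.append(value)
    return bytes(out)


# 32 hypothetical grayscale values in the range 0-255.
pixels = [120, 121, 119, 200, 201, 198, 50, 52] * 4
marked = embed_watermark(pixels, b"AI")
recovered = extract_watermark(marked, 2)
```

Here `recovered` equals `b"AI"` and no pixel differs from the original by more than 1, so the mark is invisible to the eye. The scheme is fragile, though: recompression, resizing, or cropping would likely destroy it, which is why production watermarks need sturdier techniques.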

Best Practices:

- Robustness: Ensure watermarks are durable and resistant to tampering.

- Standardization: Adopt industry standards for watermarks, facilitating cross-platform recognition.

- Transparency: Clearly communicate the presence and purpose of watermarks to users.

Addressing Transparency and User Education

Transparency is crucial in managing AI-generated content. Users need clear, accessible information to make informed decisions about the content they consume.

Effective Labeling Strategies

- Detailed Labels: Move beyond simple "AI Info" labels to more comprehensive information about content origin and creation.

- User Education Campaigns: Launch initiatives to educate users on recognizing and understanding AI-generated content.

Common Pitfalls:

- Overly Technical Language: Avoid jargon that can confuse users; opt for clear, simple explanations.

- Inconsistent Labeling: Ensure all AI content is labeled uniformly across platforms.
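One way to keep labels both detailed and uniform is to store them as structured data and render every label through a single function. The sketch below assumes a hypothetical label schema; the field names, wording, and the tool name `ExampleGen` are invented for illustration and do not describe Meta's actual labels.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AIContentLabel:
    """Hypothetical structured label attached to a piece of content."""
    media_type: str                   # e.g. "image", "video"
    ai_involvement: str               # e.g. "fully generated", "edited"
    detection_basis: str              # e.g. "creator disclosure", "watermark"
    tool_name: Optional[str] = None   # generator name, if known


def render_label(label: AIContentLabel) -> str:
    """Produce plain-language label text, using the same template everywhere."""
    text = f"This {label.media_type} was {label.ai_involvement} using AI"
    if label.tool_name:
        text += f" ({label.tool_name})"
    return f"{text}. How we know: {label.detection_basis}."


print(render_label(AIContentLabel("image", "fully generated", "creator disclosure")))
print(render_label(AIContentLabel("video", "edited", "watermark", "ExampleGen")))
```

Because every surface calls the same `render_label` function, the wording cannot drift between platforms, and the template avoids jargon by stating what was done and how it was determined.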

Future Trends in AI Content Management

As AI technology evolves, so too will the challenges and opportunities it presents. Here are some future trends to watch:

- Increased AI Integration: Expect more platforms to integrate AI for content creation, necessitating ongoing policy updates.

- Enhanced Collaboration: Tools that allow users to collaborate with AI in content creation will become more prevalent.

- Real-Time Detection: Advances in AI will enable real-time detection and labeling of AI-generated content.

Recommendations:

- Continuous Monitoring: Regularly review and update policies to keep pace with technological advancements.

- Community Involvement: Engage with users and experts to gather feedback on AI content policies and practices.

Conclusion

The Oversight Board's call for new rules at Meta regarding AI-generated content is a necessary step towards addressing the unique challenges posed by this technology. By implementing separate rules, investing in detection tools, utilizing digital watermarks, and enhancing transparency, Meta can better manage the impact of AI on its platforms. As AI continues to advance, these strategies will be crucial in maintaining trust and integrity in digital content.

FAQ

What is AI-generated content?

AI-generated content refers to media, such as text, images, video, and audio, created using artificial intelligence technologies.

How does AI content detection work?

AI content detection involves using advanced algorithms to identify patterns and anomalies typical of AI-generated media, often through machine learning models and metadata analysis.

What are digital watermarks?

Digital watermarks are invisible markers embedded in media files, providing information about the content's origin and authenticity to help trace and verify AI-generated content.

Why is transparency important in AI content?

Transparency ensures users are informed about the origin and creation process of AI-generated content, helping prevent misinformation and fostering trust.

What future trends should we expect in AI content management?

Expect greater AI integration in content creation, real-time detection capabilities, and more collaborative tools that enable users to work alongside AI in creating media.

Key Takeaways

- New policies for AI content separate from misinformation rules are essential.

- Advanced detection tools are necessary to accurately identify AI-generated media.

- Digital watermarks enhance traceability and origin verification of AI content.

- Transparency and user education prevent misinformation and build trust.

- Ongoing policy updates are needed to keep pace with AI advancements.
