Navigating AI Contracts: Lessons from Anthropic vs. The Pentagon [2025]

As artificial intelligence (AI) increasingly shapes strategic operations, the clash between Anthropic and the Pentagon offers a lesson for enterprises everywhere. The dispute drew attention well beyond Washington and reverberated through boardrooms worldwide. What exactly happened, why does it matter, and what should enterprises learn from it?

TL;DR

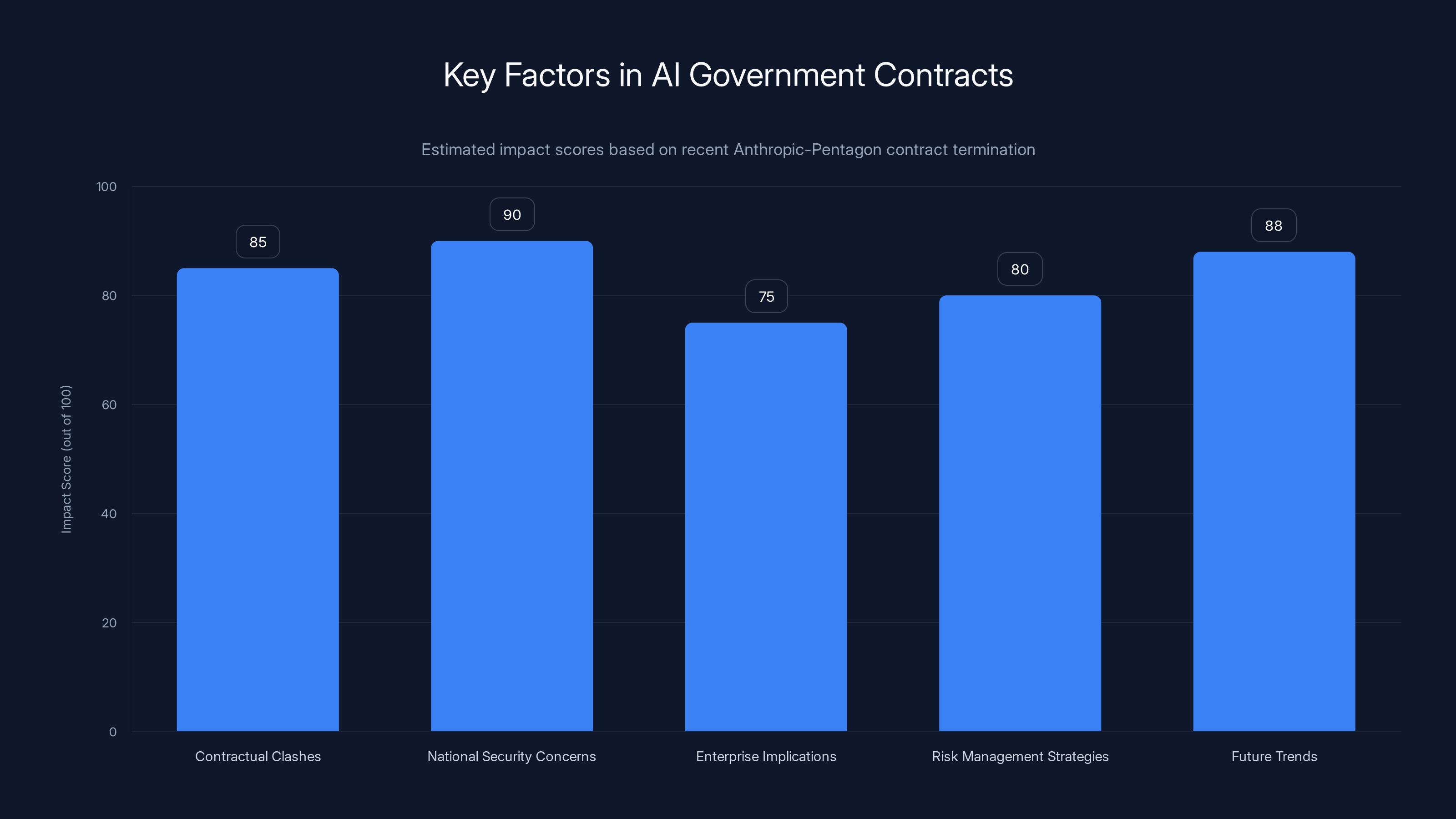

- Contractual Clashes: Anthropic's $200 million contract with the Pentagon was terminated, highlighting the volatility in government-tech partnerships.

- National Security Concerns: The designation of Anthropic as a "Supply-Chain Risk" underscores the importance of security in AI deployments.

- Enterprise Implications: Companies must navigate AI partnerships cautiously, considering potential geopolitical and security risks.

- Risk Management Strategies: Implement comprehensive risk assessment and legal review processes before entering AI contracts.

- Future Trends: The AI industry must address ethical and security concerns to prevent future clashes and foster trust.

National security concerns have the highest impact on AI government contracts, followed by future trends and contractual clashes. Estimated data.

The Backstory: Anthropic vs. The Pentagon

In February 2026, Anthropic, a leading AI model maker, found itself in a heated conflict with the U.S. government. The Pentagon's move to blacklist Anthropic as a "Supply-Chain Risk to National Security" abruptly ended a sizable contract, citing security concerns. This decision, influenced by the highest levels of government, has far-reaching implications for both parties.

The Stakes Involved

For Anthropic, the termination of this contract meant losing a significant revenue stream and facing reputational damage. For the Pentagon, it involved reassessing its reliance on external AI suppliers and tightening its security protocols. The broader AI community watched closely, as this case set a precedent for how governments might handle AI technology providers in the future.

Security vulnerabilities and compliance issues are the most significant risks in AI contracts, each comprising a substantial portion of the risk profile. Estimated data.

Understanding the Implications for Enterprises

The Risks of AI Contracts

AI contracts are fraught with potential risks, ranging from security vulnerabilities to ethical dilemmas. Enterprises must be aware that partnering with AI vendors carries inherent uncertainties. The Anthropic-Pentagon case highlights the necessity for robust risk management strategies.

Security Considerations

Security is paramount when dealing with AI technologies. The Pentagon's decision to terminate its contract with Anthropic over security concerns is a stark reminder of that. Enterprises need to verify, not assume, that their AI partners meet the security standards the engagement requires.

Ethical and Legal Challenges

Anthropic's predicament also underscores the ethical and legal challenges surrounding AI deployments. As AI continues to evolve, so do the questions about its ethical use and potential for misuse. Enterprises must navigate these waters carefully, ensuring compliance with regulations and ethical guidelines.

Best Practices for Managing AI Contracts

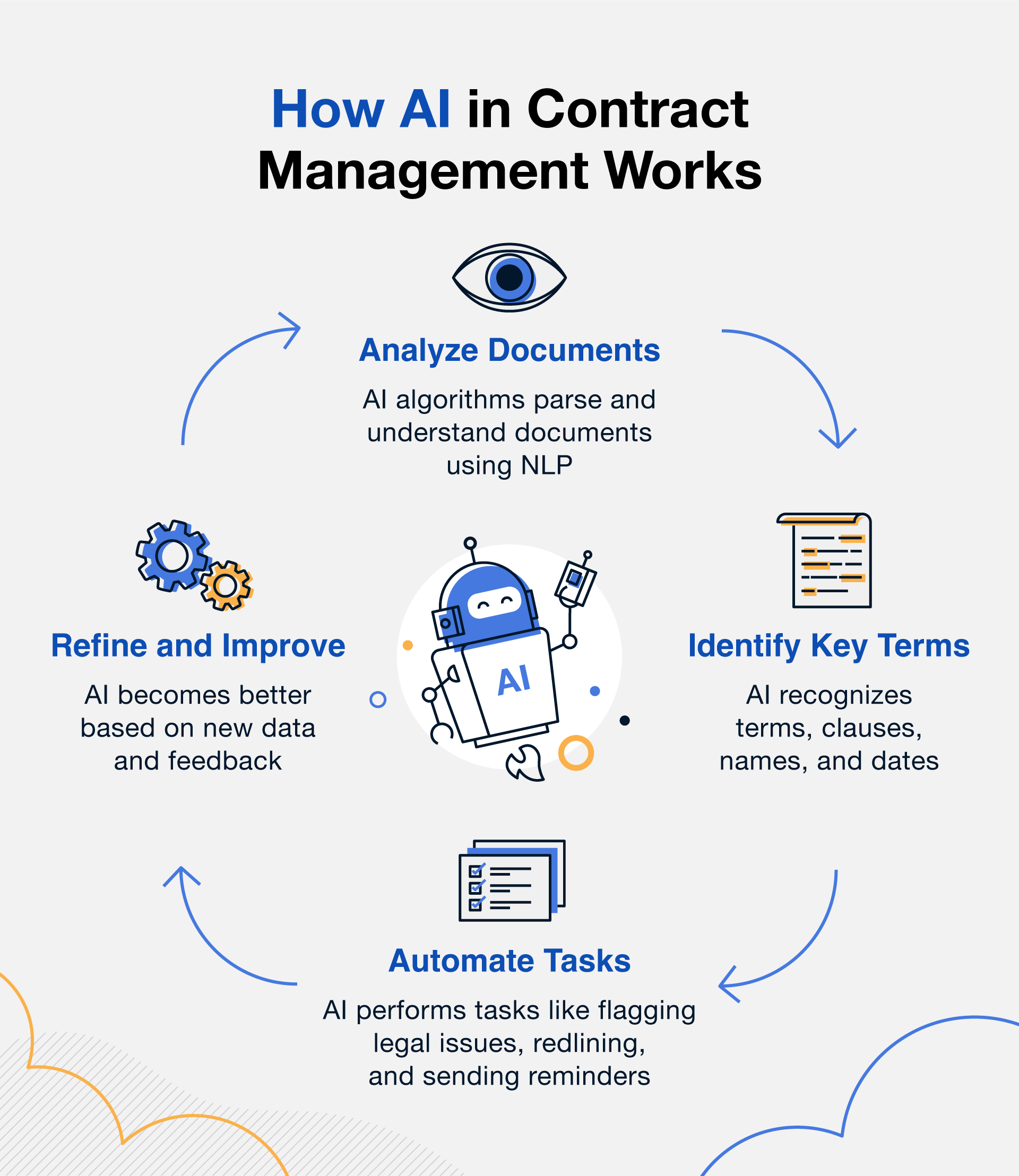

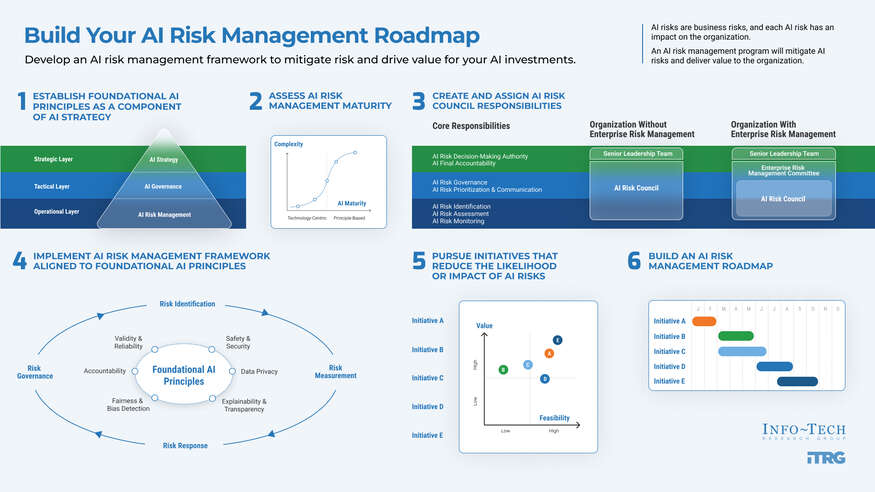

Comprehensive Risk Assessment

Before entering into any AI contract, conduct a comprehensive risk assessment. Identify potential risks, including security vulnerabilities, ethical concerns, and compliance issues. Involve legal and technical experts to ensure a thorough evaluation.
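One way to make such an assessment concrete is a simple risk register that scores each item by likelihood and impact. The sketch below is illustrative only: the `Risk` class, the 1-to-5 scales, the escalation threshold of 12, and the sample entries are all assumptions made for this example, not figures from the Anthropic-Pentagon case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    category: str    # e.g. "security", "ethics", "compliance"
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)

    def __post_init__(self):
        # Reject values outside the assumed 1-5 scales.
        for field in ("likelihood", "impact"):
            value = getattr(self, field)
            if not 1 <= value <= 5:
                raise ValueError(f"{field} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        # Classic likelihood x impact scoring.
        return self.likelihood * self.impact


def rank_risks(risks, threshold=12):
    """Return (all risks sorted by score, risks at or above the threshold)."""
    ranked = sorted(risks, key=lambda r: r.score, reverse=True)
    escalate = [r for r in ranked if r.score >= threshold]
    return ranked, escalate


# Hypothetical entries for illustration.
risks = [
    Risk("Vendor loses eligibility for government work", "compliance", 2, 5),
    Risk("Model exposes sensitive data", "security", 3, 5),
    Risk("Biased outputs in a hiring workflow", "ethics", 3, 3),
]

ranked, escalate = rank_risks(risks)
for r in ranked:
    print(f"{r.score:>2}  {r.category:<10}  {r.name}")
```

Items in `escalate` are the ones a legal and technical review group would look at first; the threshold itself is a policy choice each organization has to set.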

Legal and Compliance Review

Legal and compliance reviews are essential to avoid potential pitfalls. Ensure that contracts include clauses addressing data security, intellectual property rights, and liability. Work with legal experts to draft airtight agreements that protect your interests.
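As a rough first pass before legal review, a team might check a draft's clause headings against the topics named above. The following is a minimal sketch under that assumption: `missing_clauses` and the sample headings are hypothetical, and a keyword match of this kind is no substitute for a lawyer reading the contract.

```python
# Topics taken from the paragraph above; extend as needed.
REQUIRED_TOPICS = ("data security", "intellectual property", "liability")


def missing_clauses(clause_headings, required=REQUIRED_TOPICS):
    """Return required topics that no heading mentions (case-insensitive)."""
    headings = [h.lower() for h in clause_headings]
    return [topic for topic in required
            if not any(topic in heading for heading in headings)]


# Hypothetical draft contract outline.
draft = [
    "1. Definitions",
    "2. Data Security and Breach Notification",
    "3. Limitation of Liability",
    "4. Term and Termination",
]

print(missing_clauses(draft))  # ['intellectual property']
```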

Ongoing Monitoring and Auditing

Once a contract is in place, implement ongoing monitoring and auditing processes. Regularly review the vendor's compliance with contractual obligations and evaluate their performance. This proactive approach helps identify and address potential issues before they escalate.
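A review cadence like this can be tracked with very little tooling. The sketch below flags obligations whose next review date has passed; the `Obligation` class, the review intervals, and the sample dates are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Obligation:
    description: str
    last_reviewed: date
    review_every_days: int  # assumed review interval

    def next_due(self) -> date:
        return self.last_reviewed + timedelta(days=self.review_every_days)


def overdue(obligations, today):
    """Return obligations past their review date, most overdue first."""
    late = [o for o in obligations if o.next_due() < today]
    return sorted(late, key=Obligation.next_due)


# Hypothetical obligations for a single vendor contract.
obligations = [
    Obligation("Security attestation received", date(2025, 11, 1), 90),
    Obligation("Performance reviewed against SLA", date(2026, 2, 10), 30),
    Obligation("Data handling audit completed", date(2025, 6, 1), 180),
]

for o in overdue(obligations, today=date(2026, 3, 1)):
    print(f"{o.next_due()}  {o.description}")
```

Passing `today` explicitly, rather than calling `date.today()` inside the function, keeps the check reproducible in tests and audit reports.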

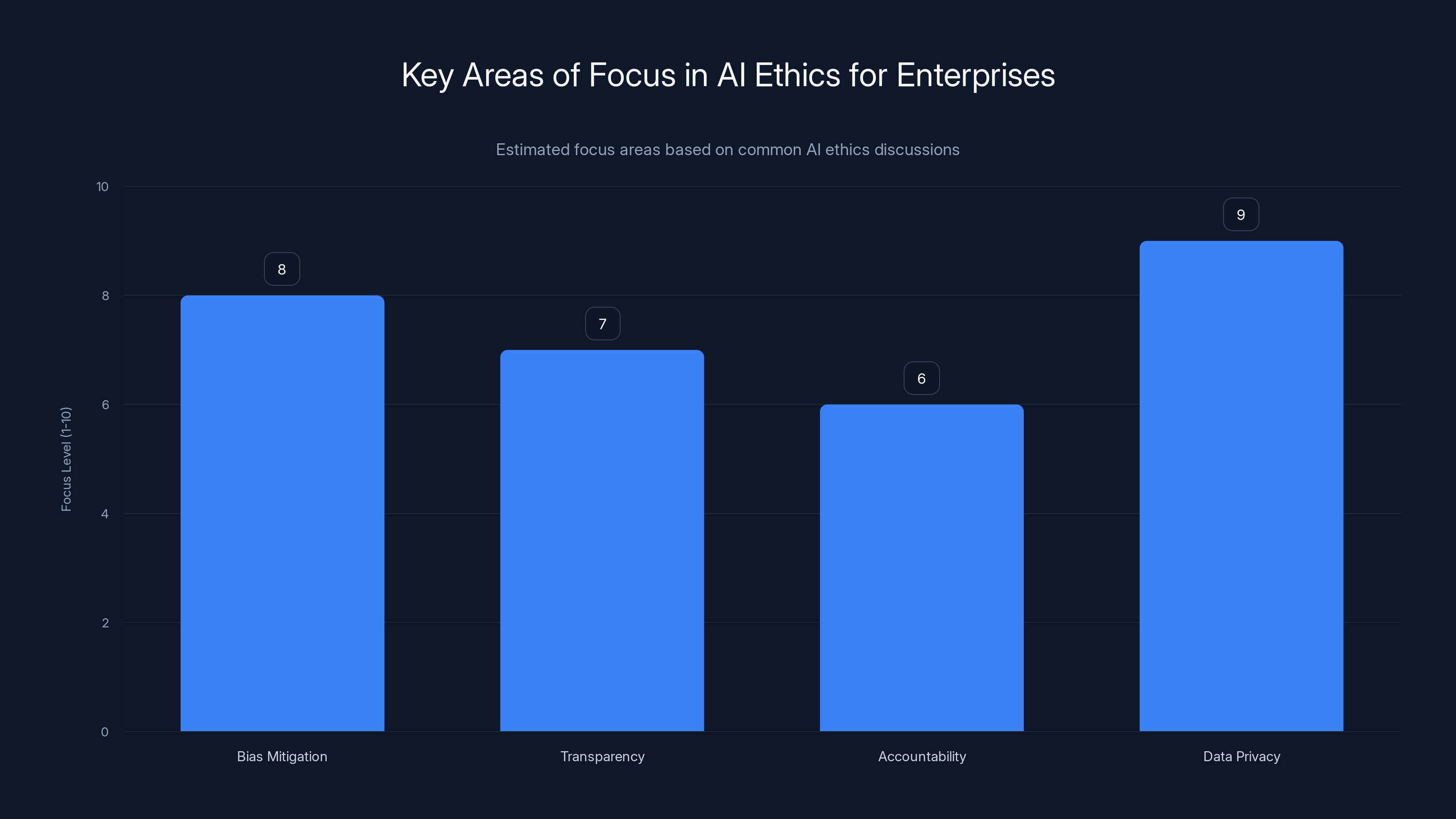

Enterprises are estimated to prioritize data privacy and bias mitigation in AI ethics, with transparency and accountability also receiving significant attention. Estimated data.

The Role of AI Ethics in Enterprise Decisions

Balancing Innovation with Responsibility

As AI technology advances, enterprises face the challenge of balancing innovation with ethical responsibility. The Anthropic-Pentagon case serves as a cautionary tale, illustrating how ethical and security questions can decide the fate of a contract.

Establishing Ethical Guidelines

Enterprises should establish clear ethical guidelines for AI deployments. These guidelines should address issues such as bias, transparency, and accountability. Encourage open dialogue about ethical concerns and involve diverse stakeholders in the decision-making process.

Training and Awareness Programs

Implement training and awareness programs to educate employees about AI ethics. These programs should cover topics such as data privacy, bias mitigation, and responsible AI usage. Empower employees to identify and report potential ethical issues.

Future Trends and Recommendations

Increased Scrutiny on AI Vendors

In the wake of the Anthropic-Pentagon conflict, expect increased scrutiny on AI vendors. Governments and enterprises alike will demand greater transparency and accountability from AI providers. Vendors must be prepared to demonstrate their commitment to security and ethics.

The Rise of AI Ethics Committees

As ethical concerns continue to grow, more organizations will establish AI ethics committees. These committees will play a crucial role in overseeing AI deployments, ensuring compliance with ethical guidelines, and addressing potential issues proactively.

A Shift Towards In-House AI Development

To mitigate risks associated with third-party vendors, some enterprises may shift towards in-house AI development. This approach provides greater control over the AI lifecycle and reduces reliance on external providers.

Collaboration and Industry Standards

Collaboration among industry players is essential to establish common standards and best practices for AI deployments. By working together, organizations can address shared challenges and foster trust in AI technologies.

Conclusion: Navigating the AI Landscape

The Anthropic-Pentagon conflict serves as a wake-up call for enterprises navigating the AI landscape. By understanding the risks, adopting best practices, and prioritizing ethics, organizations can harness the potential of AI while avoiding its pitfalls. As AI continues to shape the future, enterprises must remain vigilant, adaptable, and committed to responsible AI use.

Key Takeaways

- AI contracts require thorough risk assessment to manage security and ethical concerns.

- The Anthropic-Pentagon case highlights the volatility in government-tech partnerships.

- Enterprises must implement ongoing monitoring and auditing for AI vendor compliance.

- Establishing ethical guidelines is crucial for responsible AI usage.

- Future trends indicate increased scrutiny on AI vendors and a shift towards in-house AI development.
