The Perfect Storm: Why Your Next TV is Getting More Expensive

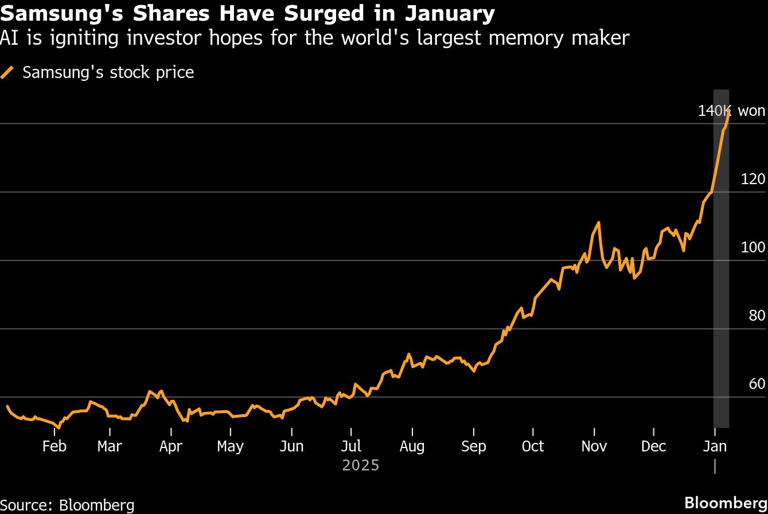

Last week, Samsung dropped a bombshell that didn't make headlines outside tech circles. The company quietly warned that TV prices are about to spike. Not a little. Significantly. The culprit? Artificial intelligence is eating up all the memory chips.

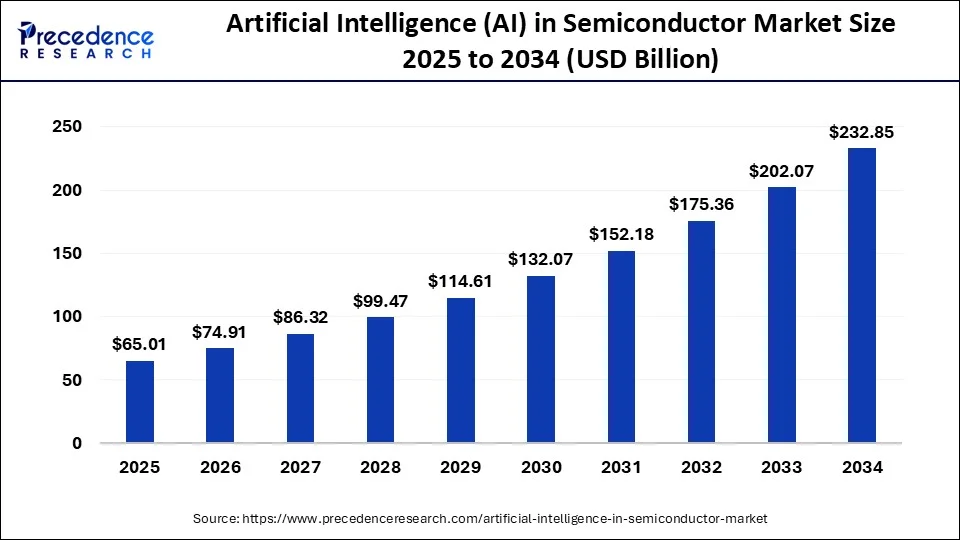

Here's what's happening. The semiconductor industry is experiencing a resource crunch. AI data centers are consuming massive quantities of high-bandwidth memory—the kind that powers everything from graphics processing to real-time data analysis. Meanwhile, TV manufacturers still need memory chips for their smart TV systems. And right now, AI's appetite is winning that competition.

This isn't speculation. Samsung Electronics explicitly stated that memory chip availability will tighten through 2025, directly impacting production costs. When supply contracts and demand stays constant, prices rise. Basic economics, but with real consequences for your wallet.

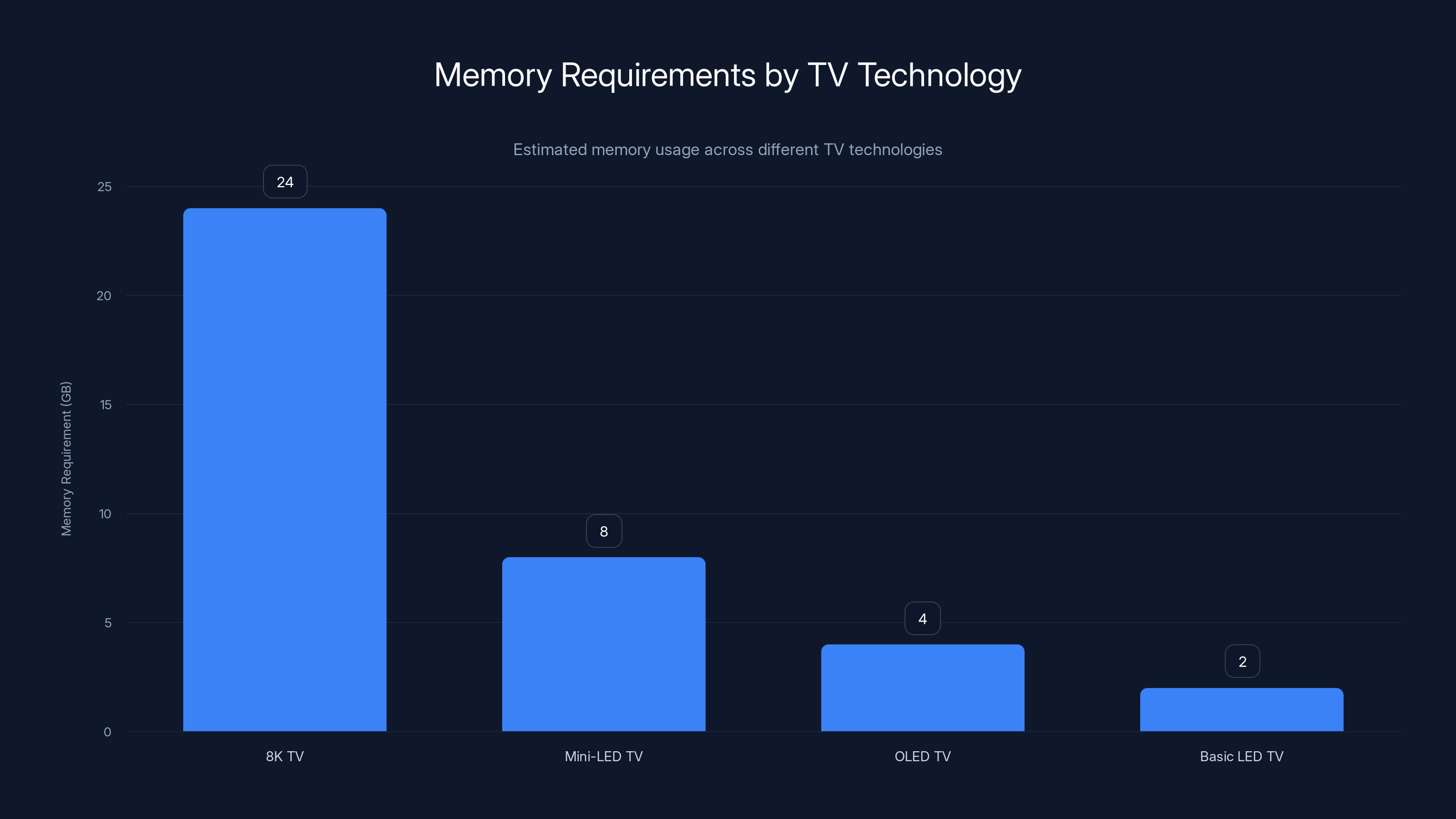

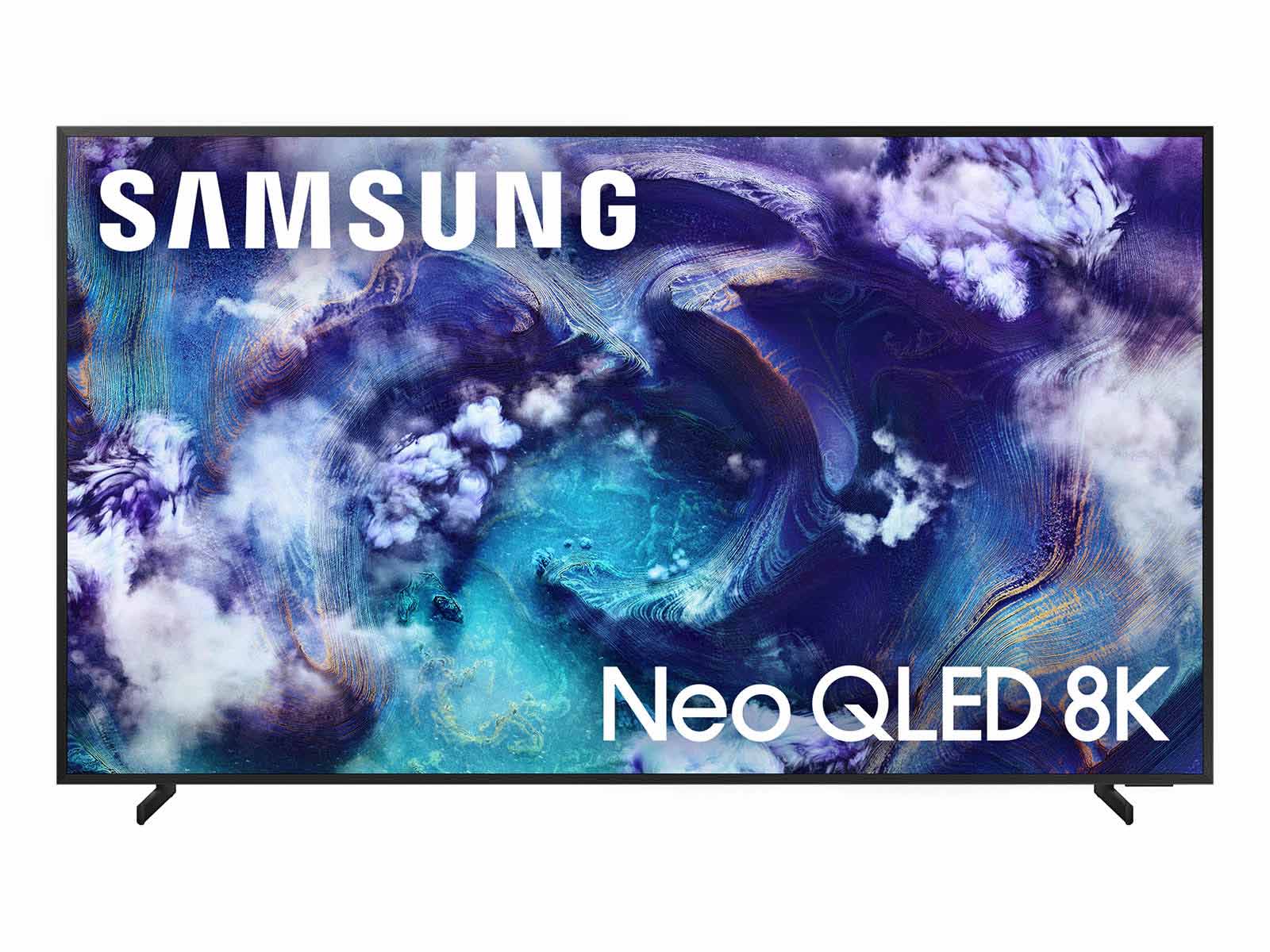

What makes this particularly frustrating is the timing. We're in a golden age of TV technology. Mini-LED backlighting, AI upscaling, and variable refresh rates for gaming have become standard on mid-range models, and 8K resolution is available at the high end. But these features all require sophisticated memory infrastructure. More AI features mean higher memory demands. Fewer available chips mean manufacturers can produce fewer TVs, which means prices climb.

The average smart TV today contains more processing power than a desktop computer from 2010. That's not hyperbole. A mid-range 65-inch Samsung TV running Tizen OS, AI picture enhancement, and real-time streaming optimization needs dedicated memory architecture. Add machine learning algorithms that upscale lower-resolution content to 4K in real-time, and you're looking at substantial memory requirements.

But here's where it gets interesting: this shortage reveals something deeper about modern electronics. Every device is becoming a mini-computer. TVs are no longer passive displays. They're computational devices. And computation requires silicon. The more sophisticated the feature set, the more silicon you need.

Understanding the Semiconductor Supply Chain Crisis

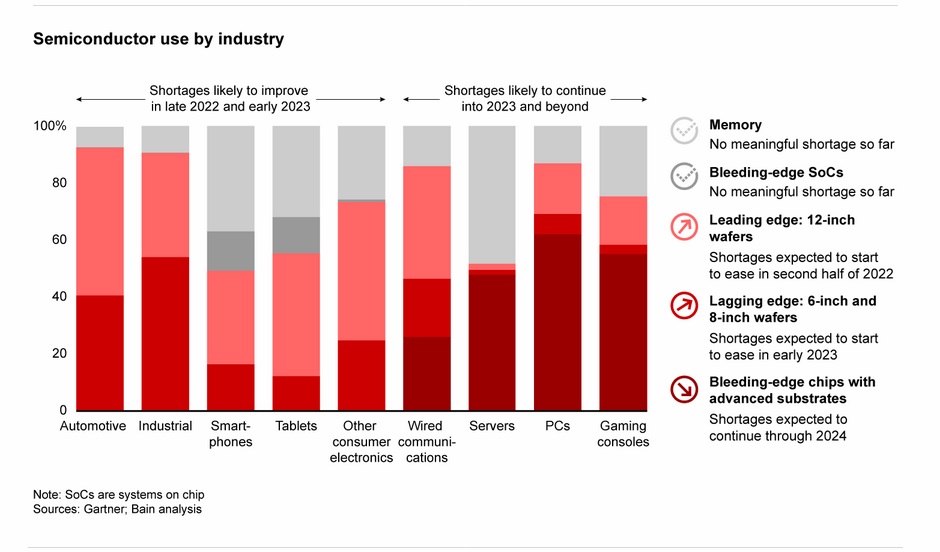

The semiconductor shortage isn't new, but its causes have shifted dramatically. In 2021-2023, we saw pandemic-related supply chain disruptions. Factories shut down. Shipping lanes backed up. Containers sat in ports. That was a logistics problem with a clear solution: reopen factories, restart shipping, restore normal operations.

What's happening now is fundamentally different. It's a demand problem, not a supply problem. And AI is the demand driver.

Data centers training large language models consume extraordinary amounts of memory bandwidth. A single AI training cluster might contain thousands of GPUs, each requiring high-speed memory connections. Each NVIDIA H200 GPU alone carries 141 gigabytes of HBM3e memory and moves data at roughly 4.8 terabytes per second. Multiply that across thousands of chips in hundreds of data centers worldwide, and the aggregate demand is enormous.

Memory manufacturers like Samsung, SK Hynix, and Micron face a choice: allocate production to AI infrastructure or consumer electronics? The margins are better on AI chips. The contracts are longer-term. The customers are tech giants with proven revenue streams. A contract with OpenAI or Meta is safer than selling to TV makers who face cyclical demand patterns.

Consumer electronics manufacturers didn't see this coming. Or rather, they saw it coming but miscalculated its speed. They assumed gradual AI adoption. Instead, they got a spike that resembles a hockey stick chart. Straight up.

The memory shortage manifests differently across semiconductor types. DRAM (dynamic random-access memory) is where the worst pressure sits. High-bandwidth memory designed for AI applications has become scarce. Standard DDR memory for consumer TVs doesn't face the same direct shortage, but production capacity is zero-sum. Fab space dedicated to high-end memory is fab space not dedicated to commodity memory.

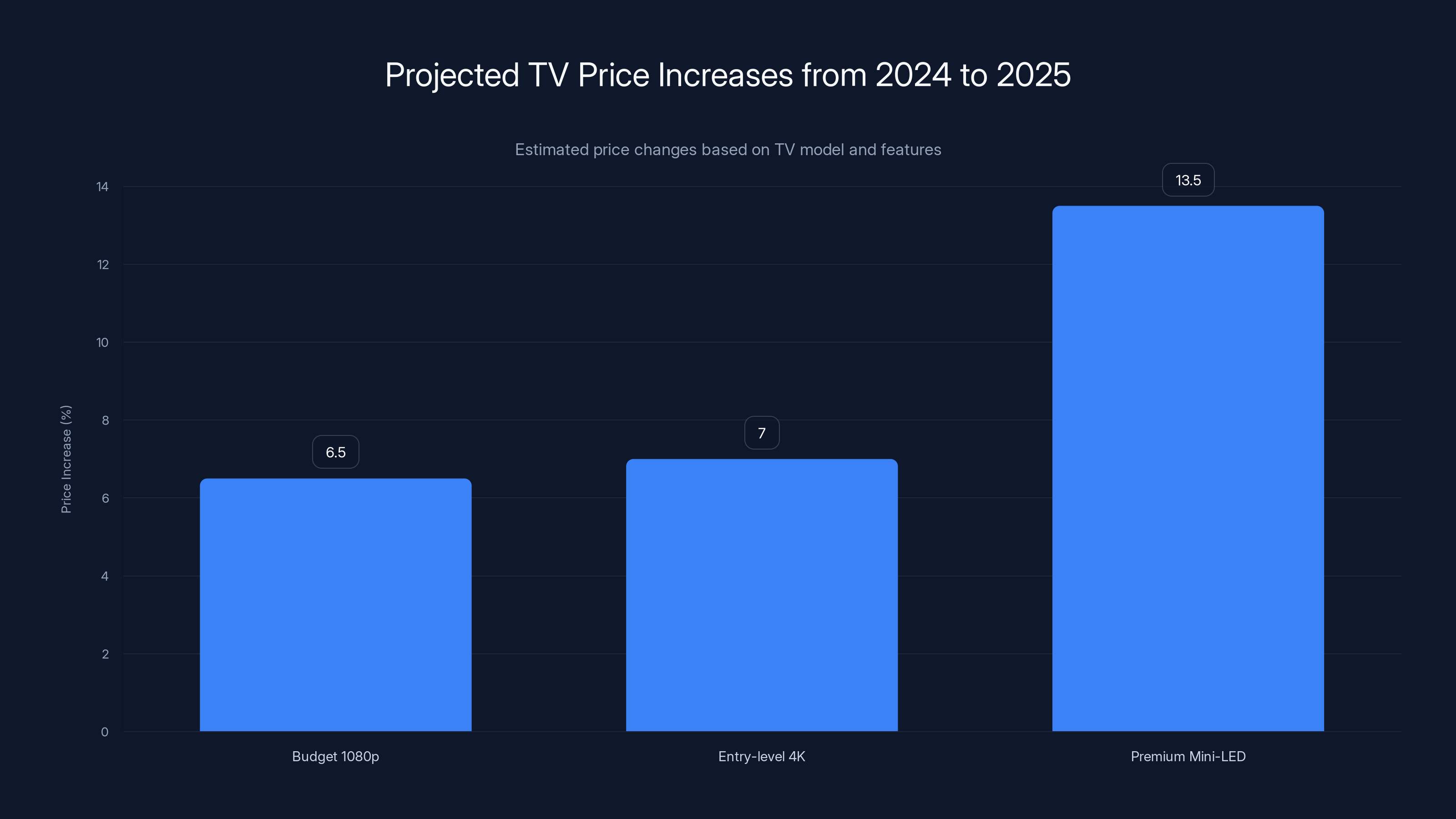

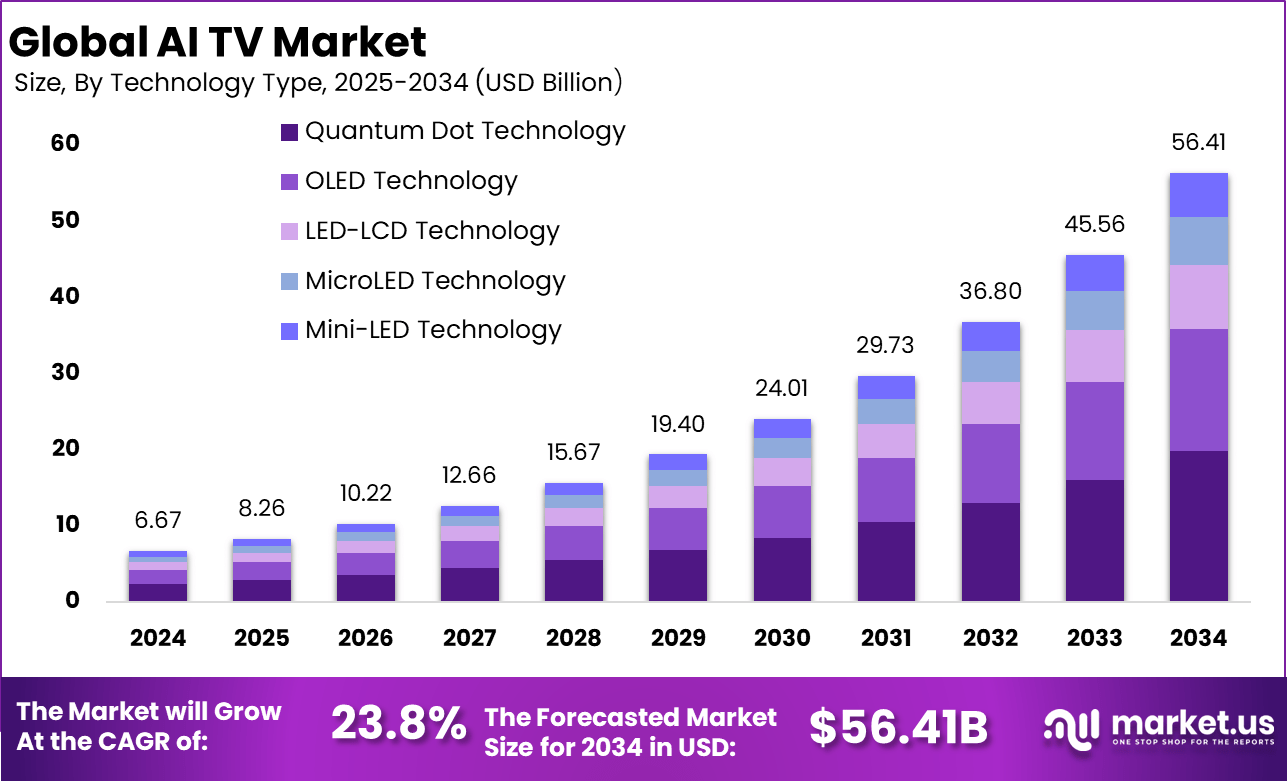

Estimated data shows that premium models with advanced features will see the highest price increases, up to 13.5%, due to higher memory chip costs.

How AI is Consuming Semiconductor Supply

Let's be concrete about this. A modern data center GPU cluster training an AI model might use 5-10 petabytes of working memory capacity across its system. That's 5-10 million gigabytes. A smart TV uses maybe 8 gigabytes of total memory, including flash storage. On those figures, one AI cluster consumes as much memory as roughly 600,000 to 1.25 million TVs.
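The comparison is easy to check by hand. The sketch below uses only the estimates quoted above (5-10 petabytes per cluster, about 8 gigabytes per TV) and assumes decimal units; the function name is illustrative, not taken from any source.

```python
# Rough check of the cluster-vs-TV comparison, using the article's own estimates.
GB_PER_PB = 1_000_000  # assumption: decimal units, 1 PB = 1,000,000 GB


def tv_equivalents(cluster_pb: float, tv_gb: float = 8.0) -> float:
    """Number of TVs whose total memory adds up to one cluster's working memory."""
    return cluster_pb * GB_PER_PB / tv_gb


for cluster_pb in (5, 10):
    print(f"{cluster_pb} PB cluster ~ {tv_equivalents(cluster_pb):,.0f} TVs")
```

With these inputs, a single cluster works out to somewhere between 625,000 and 1.25 million TVs' worth of memory. Both inputs are rough estimates, so only the order of magnitude is meaningful.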

Now multiply that across the major cloud providers. OpenAI, Google, Meta, Microsoft, Anthropic, and emerging Chinese competitors are all building massive AI infrastructure. Each company is deploying thousands of GPUs. Each GPU needs memory. The aggregate demand is staggering.

From a manufacturer's perspective, here's the calculus. A high-bandwidth memory chip for AI data centers sells for

It's not even close. The economics point toward AI every single time.

Samsung's HBM (the high-bandwidth memory used in NVIDIA-class AI accelerators) is flying off the shelves. Their standard DDR chips for TVs? Those are competing for production slots against more profitable alternatives. Samsung isn't being malicious. It's following basic business incentives.

This creates a cascading effect. TV manufacturers can't get the memory chips they need, so they reduce production. With fewer TVs manufactured, they need fewer chips from suppliers. But suppliers have already committed most of their fab capacity to high-margin AI chips. They can't easily reallocate capacity back to consumer electronics.

The result? Price increases are the market's way of restoring equilibrium. Higher prices reduce demand. Lower demand matches constrained supply. Eventually, the market balances.

8K TVs require the most memory, significantly more than other technologies, due to higher resolution and data throughput needs. Estimated data.

The Specifics of Memory Chip Types and TV Requirements

Not all memory is created equal. Understanding different chip types helps explain why the shortage is so painful for TV makers.

DRAM (Dynamic Random Access Memory) is the working memory in TVs. It holds data that the processor actively uses. When you're watching Netflix and the TV is running its AI upscaling algorithm, DRAM holds the frame buffer, the algorithm's state, and temporary processing data. Typical smart TVs need 2-4GB of DRAM today, up from 512MB a decade ago.

Flash memory stores the TV's operating system, apps, and user data. This is non-volatile—it persists when the TV powers off. As TVs add more features and apps, flash requirements have grown. Modern Tizen OS TVs might have 16-32GB of flash storage.

HBM (High-Bandwidth Memory) is what AI data centers absolutely need. HBM offers 10x the bandwidth of standard DRAM. For AI applications performing massive matrix multiplications, this bandwidth is non-negotiable. You simply can't train efficient large language models without it.

Here's the problem: HBM and high-end DRAM share some manufacturing processes. Some fab lines can produce either, depending on configuration. When AI demand explodes for HBM production, those shared fab lines get reallocated. Standard DRAM production capacity shrinks.

TV manufacturers designed their 2025 product roadmaps assuming steady DRAM availability. They planned 50 million units. They booked chip allocations based on historical patterns. Then AI exploded. Samsung, their chip supplier, suddenly couldn't deliver the quantities promised.

The contractual language matters here. Chip suppliers often include force majeure clauses allowing them to reduce deliveries if circumstances change. A sudden surge in AI demand might qualify. TV manufacturers are stuck: they either accept reduced shipments or pay significantly higher prices.

Most are choosing the latter. Paying more for chips means raising TV prices. Simple math.

Samsung's Position: Supplier and Competitor

Here's where it gets complicated. Samsung is simultaneously the world's largest TV manufacturer and one of the world's largest memory chip suppliers.

As a chip supplier, Samsung has strong incentives to maximize AI chip production. These are high-margin products. The gross margins on HBM might reach 50% or higher. Standard consumer DRAM margins sit around 20-30%.

As a TV manufacturer, Samsung has incentives to maximize consumer memory chip availability. Lower input costs mean more competitive TV pricing.

These incentives conflict. And Samsung's own announcement—warning of TV price increases—suggests the chip supplier incentives are winning internally.

This creates an interesting market dynamic. When Samsung raises TV prices due to chip shortages, competitors like LG and TCL face the same shortage. Everyone's prices are rising. But Samsung, controlling both its chip supply and TV manufacturing, might optimize its supply allocation to maximize overall profit. That could mean prioritizing high-margin HBM over lower-margin consumer DRAM.

It's not sinister. It's rational business optimization. But it does mean consumers of all brands are paying more.

Samsung's public warning serves a purpose too. By announcing price increases in advance, Samsung manages expectations. Retailers and consumers understand the shortage is industry-wide, not Samsung-specific. This prevents customers from switching to competitors in search of better prices. Everyone's raising prices because everyone faces the same chip shortage.

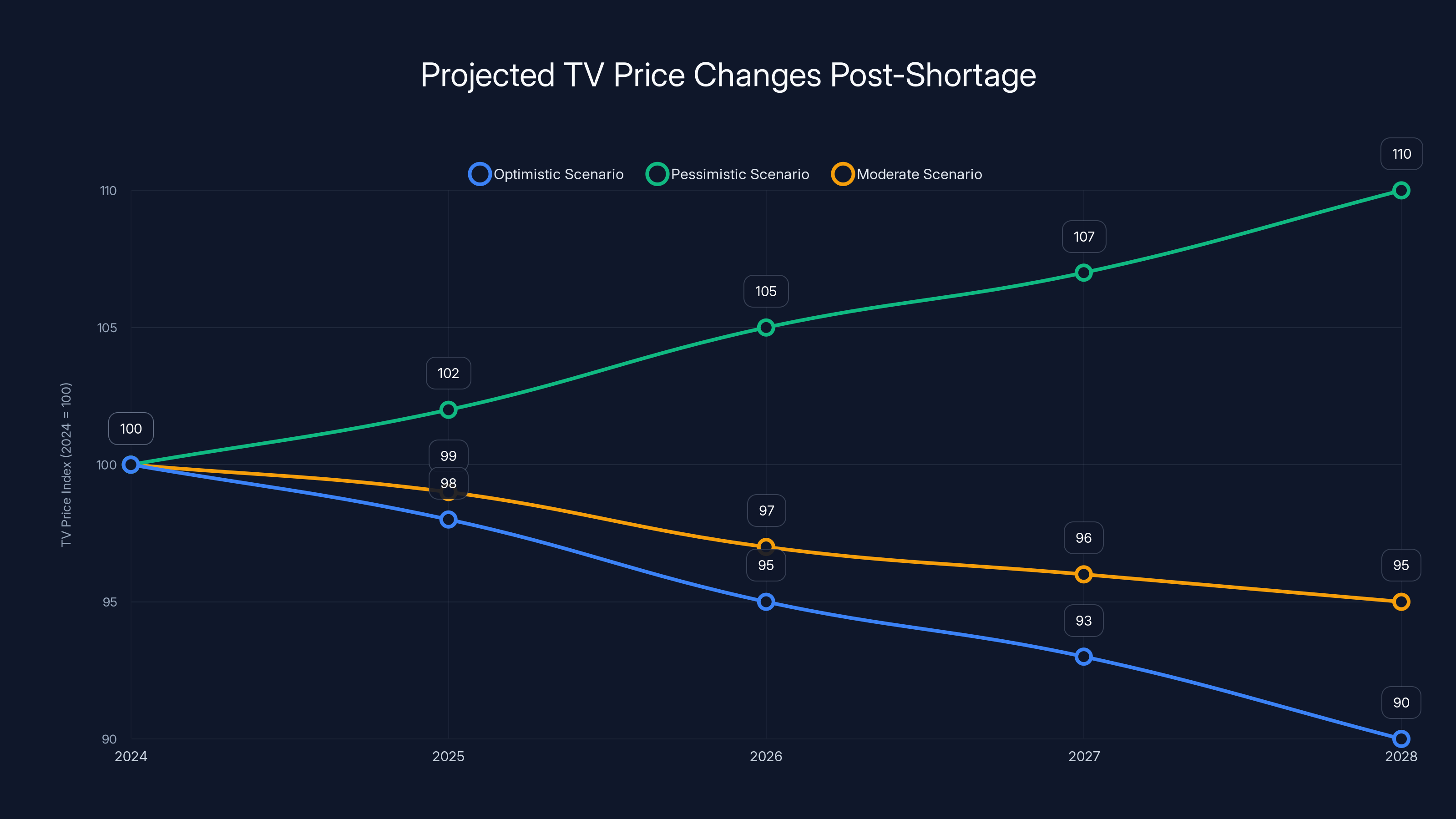

Under the optimistic scenario, TV prices could decrease slightly by 2028. However, the pessimistic scenario suggests continued price increases due to sustained AI chip demand. Estimated data based on historical trends.

Impact on TV Pricing: What Consumers Should Expect

How much are we talking? Industry estimates suggest 5-15% price increases across most TV segments through 2025. On a

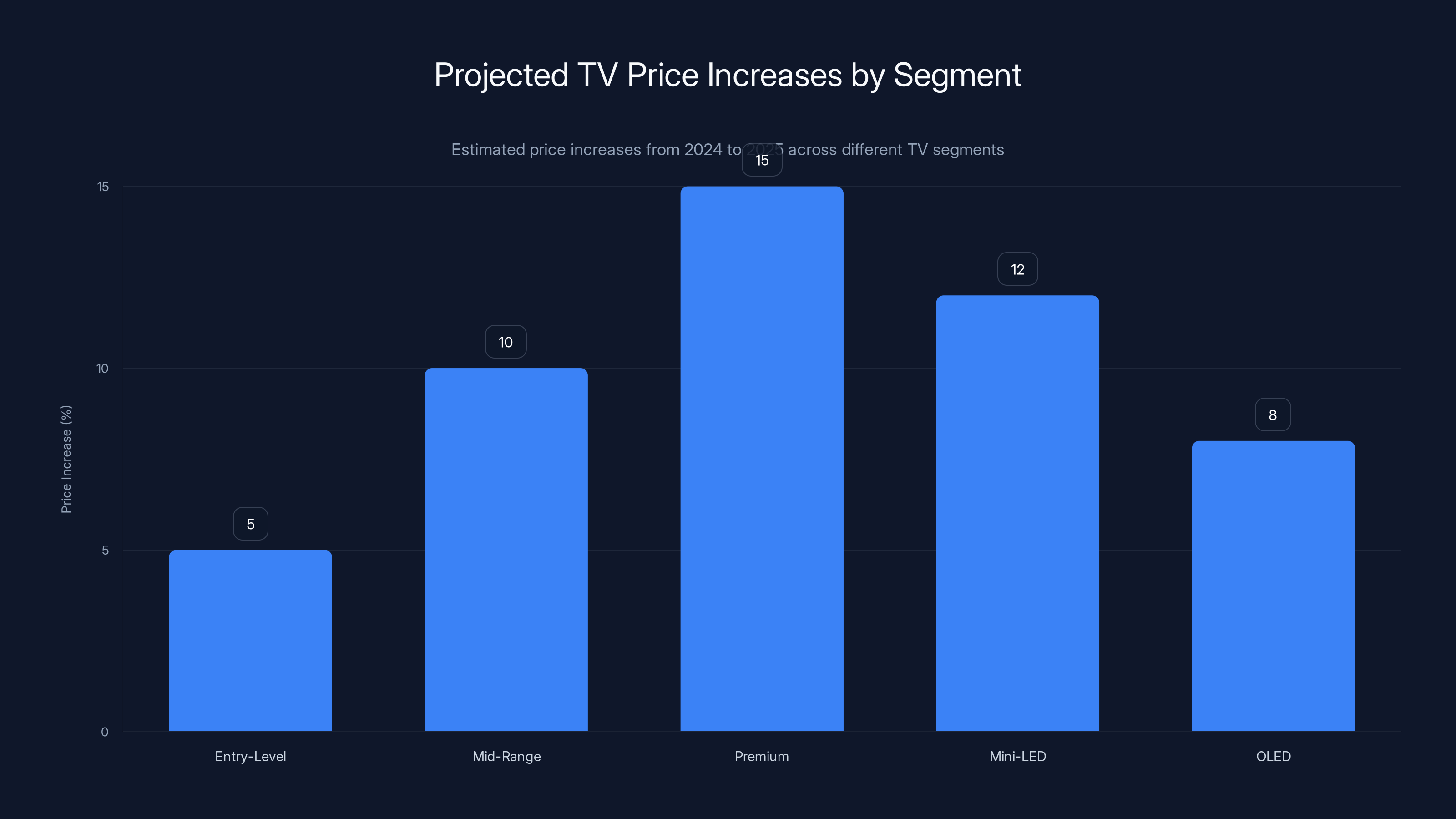

The increases won't be uniform. Premium models with more advanced features—those requiring more memory for AI upscaling, local processing, and edge AI features—will see larger price hikes. Budget models with fewer features will see modest increases.

Here's why: adding AI features costs manufacturers more in memory. But the premium they can charge for AI features is larger than the memory cost. So manufacturers are incentivized to push premium models harder, accepting tighter margins on budget options.

Historically, a 55-inch 4K Samsung TV cost around

LG and TCL will face identical pressures. TCL, which competes on price, might absorb more of the cost increase to protect that position, but it will still need to raise prices. LG, focused on premium models, will likely see its largest increases on high-end OLED TVs.

Mini-LED TVs, which require more memory for local dimming algorithms and backlighting control, will see steeper increases than standard LED models. OLED TVs, already premium-priced, might see smaller percentage increases but larger absolute increases.

The Cascade Effect: How Price Increases Ripple Through Markets

When TV prices rise, the impact extends far beyond the television itself. It's a cascade effect.

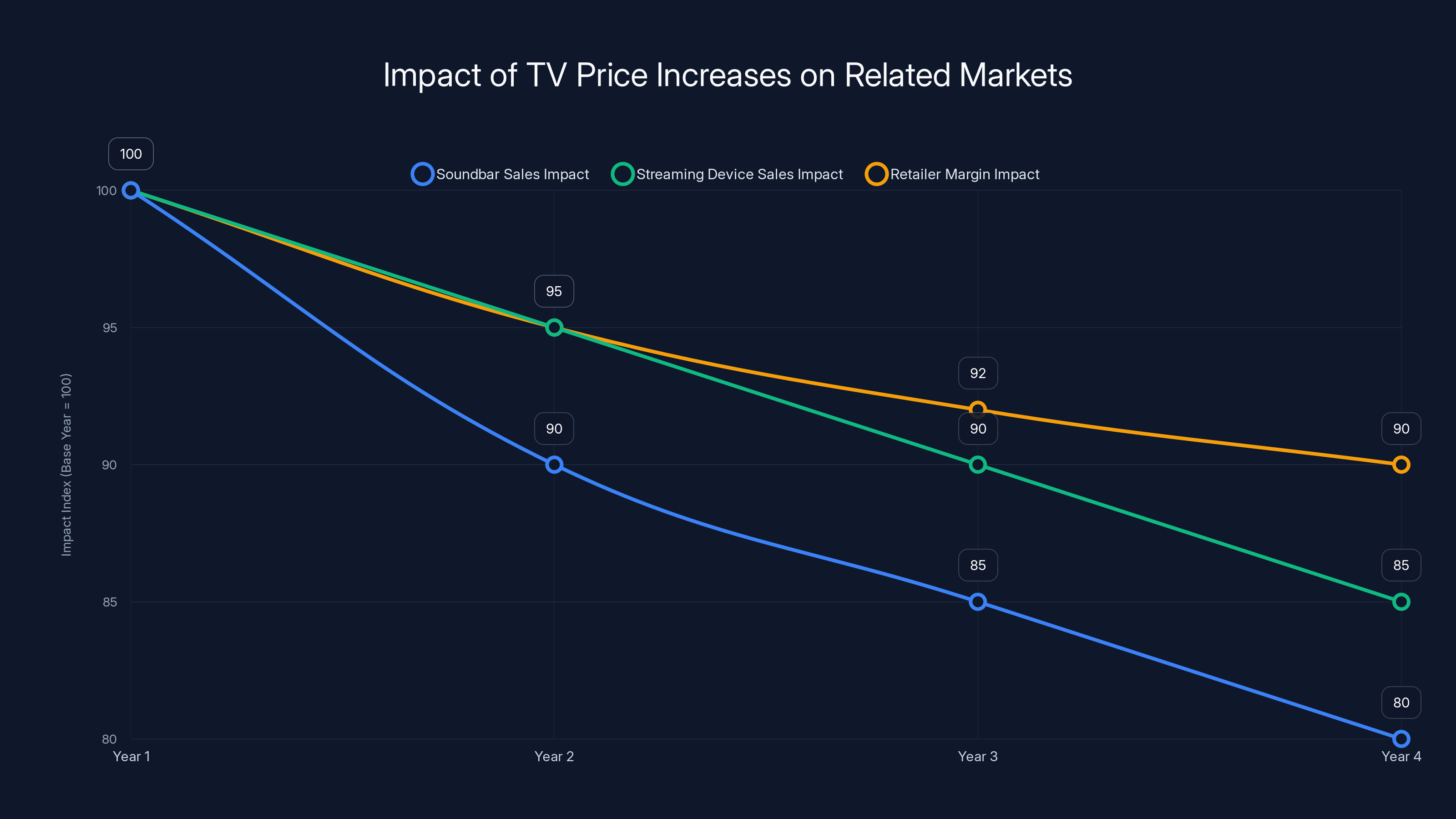

Soundbar manufacturers struggle. Consumers buying a

Streaming device manufacturers feel pressure. A Roku, Fire TV Stick, or Apple TV might seem discretionary when a TV itself costs more. Customers delay accessory purchases.

Retailers adjust margins. Best Buy or Costco typically mark up TVs 15-20%. With higher wholesale costs, they must choose: absorb the increased cost (reducing profit per unit) or pass it to consumers (risking sales volume). Most pass it through, accelerating the visible price increase.

Smaller retailers suffer more. A local electronics store can't negotiate volume discounts like Best Buy. They pay higher wholesale prices and have narrower margins to work with. Some exit the TV business entirely, focusing on services like installation and repair instead.

Consumers respond by delaying purchases. TV replacement cycles extend. Instead of upgrading every 5-7 years, customers keep older sets 7-10 years. This temporarily depresses overall market demand, which could theoretically create a future inventory surplus.

But here's the interesting part: while consumers delay purchasing, manufacturers continue reducing production. If demand drops 10% but supply drops 15%, prices stay elevated. The equilibrium shifts upward.

Estimated data indicates that premium and Mini-LED TVs will see the highest price increases, driven by advanced features and higher memory costs.

Which TV Technologies Are Most Affected?

Not all TVs face equal pressure. Technology complexity determines memory requirements.

8K TVs are getting hammered. They're already expensive and niche. Adding 10-15% to an $8,000 set is painful. The market for 8K is tiny anyway—less than 3% of global TV sales. But the cost structure matters. 8K TVs require triple the DRAM of 4K sets.

Mini-LED TVs see big increases. These displays require sophisticated memory for controlling thousands of individual dimming zones. A high-end Mini-LED TV from Samsung or Hisense might need 8GB of DRAM just for backlighting control logic. Standard LED models need 2-3GB total.

OLED TVs face moderate pressure. OLED technology doesn't require as much memory as Mini-LED. The pixel-level control is handled by the display driver hardware, not the main CPU. But premium features like AI upscaling add memory requirements.

Basic LED TVs see minimal increases. A $400 1080p LED TV might see just 3-5% price growth. These models use less memory—1-2GB total. Simpler processors. Lower manufacturing complexity overall.
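Percentages hide how different these increases are in dollar terms. As a quick illustration, the sketch below applies the ranges quoted above to the two price points mentioned in this section (a $400 1080p LED set at 3-5% and an $8,000 8K set at 10-15%). The helper name is hypothetical.

```python
def increase_range(price: float, low_pct: float, high_pct: float) -> tuple[float, float]:
    """Dollar increase at the low and high end of a percentage range."""
    return price * low_pct / 100, price * high_pct / 100


# (price in dollars, low %, high %), all taken from the figures quoted in the text
examples = {
    "1080p LED ($400, 3-5%)": (400, 3, 5),
    "8K ($8,000, 10-15%)": (8000, 10, 15),
}

for label, (price, low_pct, high_pct) in examples.items():
    low, high = increase_range(price, low_pct, high_pct)
    print(f"{label}: +${low:,.0f} to +${high:,.0f}")
```

The budget set goes up by $12 to $20, while the 8K set goes up by $800 to $1,200, which is why the high end feels the shortage far more sharply.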

Smart TV platforms matter too. Tizen OS (Samsung), webOS (LG), and Android TV (TCL, Hisense) all have memory footprints. But the real memory consumer isn't the OS; it's the AI features bolted on top. Upscaling algorithms. Frame interpolation. Noise reduction. These machine learning models live in memory, consuming significant resources.

A TV running aggressive local AI upscaling uses 2x the memory of an equivalent model relying on server-side upscaling. But consumers prefer local processing—it's faster, doesn't require internet, and feels snappier. So manufacturers add memory to enable local processing. Shortage hits. Price rises.

The Semiconductor Manufacturing Bottleneck

Underlying this crisis is a fundamental manufacturing limitation. Building a new semiconductor fab costs $10-20 billion and takes 3-4 years. You can't quickly add capacity.

Existing fabs operate at near-maximum capacity across the industry. Samsung operates fabrication plants in South Korea, the US, and China. TSMC in Taiwan runs multiple fabs. SK Hynix spreads production across South Korea and China. Micron spreads its production across the US, Japan, Taiwan, and Singapore.

They're all running hot. Utilization rates above 90%. When demand spikes for high-margin products like AI chips, there's no slack capacity. You can't instantly retool a fab line from producing consumer DRAM to HBM. Reconfiguration takes weeks. Validation testing takes months. So manufacturers make rational economic choices: dedicate available capacity to highest-margin products.

Geopolitical tensions complicate matters. Taiwan produces over 60% of the world's advanced semiconductors through TSMC. China, the US, and Taiwan are in a delicate dance around semiconductor trade. Some memory manufacturing is shifting to South Korea and the US for supply chain resilience. But existing plants already operate at capacity. New plants haven't come online yet.

Maturity of manufacturing processes matters too. Creating leading-edge chips requires sophisticated fabrication. Creating memory requires volume. Memory chips actually don't need cutting-edge process nodes. They benefit from mature, optimized processes that maximize yield and throughput. But these mature fabs are fully booked.

Estimated data shows a declining trend in soundbar and streaming device sales, alongside reduced retailer margins, as a result of increased TV prices.

AI's Exponential Memory Demands

Why is AI consuming so much memory so quickly? The answer is algorithmic complexity and model size.

GPT-3 has 175 billion parameters. GPT-4 likely exceeds 1 trillion parameters. Training these models requires holding vast amounts of data in working memory simultaneously. A single training run might process terabytes of data. The model's internal state—the weights being adjusted during training—consumes enormous memory.

Inference (running a trained model to generate responses) also requires substantial memory. When ChatGPT processes your prompt, it's holding the entire model in memory, plus your prompt, plus the tokens it's generated so far, plus working memory for the computation. A 1.4 trillion parameter model needs approximately 2.8 terabytes for its weights alone if they are stored in float16 format (2 bytes per parameter). Inference requires this in high-speed memory.

Quantization helps somewhat, reducing memory requirements by 50-75%. But even quantized, modern models are enormous.
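The arithmetic behind these figures is simple: parameter count times bytes per parameter. The sketch below reproduces the 2.8 terabyte figure and shows how the 50-75% savings fall out of narrower number formats. It assumes int8 and int4 as the quantized formats (the text does not name specific ones) and counts weights only, ignoring activations and caches.

```python
# Bytes needed to store one parameter at each precision.
# Assumption: int8 and int4 stand in for "quantized"; the article names no format.
BYTES_PER_PARAM = {"float16": 2.0, "int8": 1.0, "int4": 0.5}


def weights_terabytes(params: float, dtype: str) -> float:
    """Weight storage in decimal terabytes (weights only, no activations or caches)."""
    return params * BYTES_PER_PARAM[dtype] / 1e12


params = 1.4e12  # the 1.4 trillion parameter example from the text
baseline = weights_terabytes(params, "float16")

for dtype in BYTES_PER_PARAM:
    tb = weights_terabytes(params, dtype)
    saving = 1 - tb / baseline
    print(f"{dtype:>7}: {tb:.1f} TB ({saving:.0%} smaller than float16)")
```

Halving the bytes per parameter halves the footprint, so 8-bit weights give the 50% saving and 4-bit weights give the 75% saving. Even at 4 bits, this example still needs about 0.7 terabytes of fast memory for the weights.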

Compare this to traditional software. A smartphone app typically occupies megabytes. A desktop application uses megabytes to a few gigabytes. A web server application uses gigabytes. But AI models operate at a fundamentally different scale.

And it's accelerating. Scaling laws suggest that each 10x increase in parameter count provides meaningful capability improvements. We're seeing this with GPT-4, Claude 3, and emerging open-source models. Each generation is 2-10x larger than the previous one.

If memory demands double every 12-18 months while semiconductor production grows 5-10% annually, the shortage will persist for years.
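To see how quickly that gap opens, here is a minimal compounding sketch. It assumes demand and supply start out balanced (both normalized to 1.0) and that the growth rates quoted above hold steady, which is a simplification. The function and scenario labels are illustrative.

```python
def demand_supply_ratio(years: float, doubling_months: float, supply_growth: float) -> float:
    """Demand divided by supply after `years`, with both starting at 1.0."""
    demand = 2 ** (years * 12 / doubling_months)   # doubles every `doubling_months`
    supply = (1 + supply_growth) ** years          # compounds annually
    return demand / supply


# (doubling period in months, annual supply growth), from the ranges in the text
scenarios = {
    "doubling every 12 months, supply +10%/yr": (12, 0.10),
    "doubling every 18 months, supply +5%/yr": (18, 0.05),
}

for label, (doubling_months, supply_growth) in scenarios.items():
    for years in (1, 3):
        ratio = demand_supply_ratio(years, doubling_months, supply_growth)
        print(f"{label}, after {years} yr: demand is {ratio:.1f}x supply")
```

Even with the slower 18-month doubling, demand ends up more than three times supply after three years. Real demand would not compound this cleanly, but the sketch shows why single-digit capacity growth cannot close the gap on its own.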

Supply Chain Lessons: What the TV Shortage Tells Us

This crisis illuminates broader supply chain vulnerabilities in modern electronics.

First, single-commodity bottlenecks can devastate industries. Memory chips are commodity components. You'd think massive competition among suppliers would prevent shortages. But when demand spikes for a specific variant—HBM for AI—suppliers converge on it. Diversity of suppliers doesn't help if they're all responding to the same market signals.

Second, contracts with force majeure clauses create leverage asymmetries. Chip suppliers can reduce deliveries if circumstances warrant. TV manufacturers can't force delivery. When supply tightens, suppliers have all the power.

Third, globalization creates fragility. Semiconductors flow from Taiwan, South Korea, and Japan to China, Vietnam, and Mexico for TV assembly, then distribute globally. Disruption at any point cascades everywhere. No country has domestic chip supply meeting domestic demand.

Fourth, margin economics drive allocation decisions. High-margin products attract supply. Low-margin products get squeezed. If TV manufacturers could offer higher margins, they'd get allocation priority. But TV markets are competitive. LG, TCL, Hisense, and smaller Chinese makers prevent margins from staying high. So manufacturers end up rationed.

Fifth, speed to market matters enormously. OpenAI released ChatGPT and created AI urgency. Enterprises immediately started committing to AI infrastructure. Cloud providers rushed to deploy chips. The speed of enterprise AI adoption outpaced supply chain velocity. Traditional industries, used to 18-month planning cycles, got caught flat-footed.

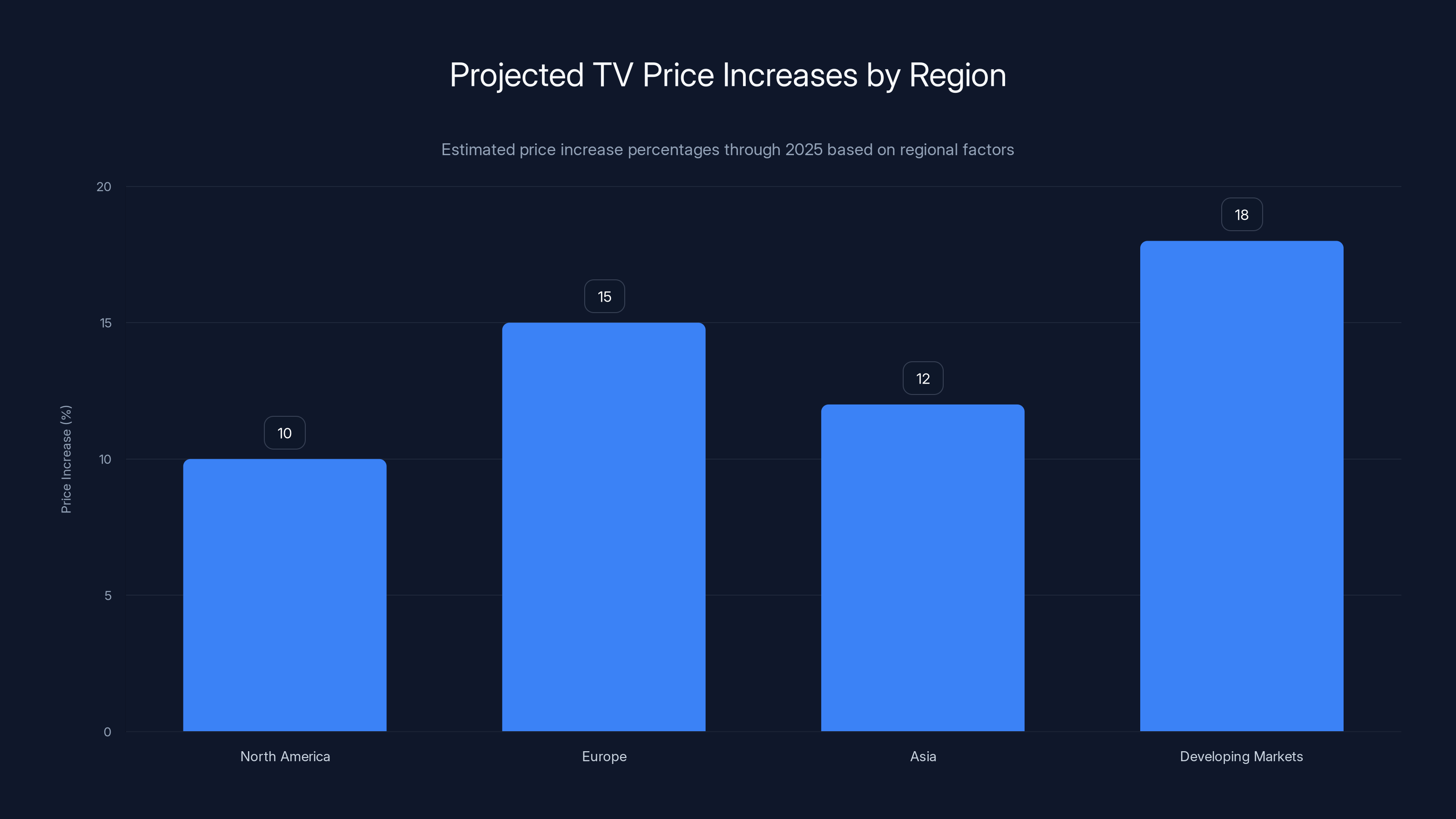

Estimated data shows Europe and Developing Markets facing the highest TV price increases, while North America and Asia experience moderate rises due to regional factors.

Global TV Market Response: Regional Variations

Price impacts won't be uniform globally. Regional supply chains, tariffs, and consumer preferences create different pressures.

North America faces moderate increases. US tariffs on Chinese electronics add complexity, but Samsung TV production in Mexico provides some regional supply. Prices might rise 8-12% through 2025.

Europe faces steeper increases. Most TVs sold in Europe are imported or assembled from imported components. Transportation costs and tariffs stack on top of higher memory costs. European consumers might see 12-18% increases. British consumers face additional Brexit-related complexities in supply chains.

Asia sees regional variation. South Korea and Japan, home to Samsung and other manufacturers, might see smaller increases than exported units. China might see larger increases if component availability is more constrained. India and Southeast Asia, dependent on imports, could see severe increases.

Developing markets often bear the worst impact. A

Brand responses vary too. Samsung, vertically integrated with chip production, has more control. LG depends more on external suppliers. TCL, with heavy China exposure and lower cost structure, might absorb costs more than Samsung. Smaller brands might exit markets where they can't maintain margin viability.

When Will Prices Drop Again?

This is the critical question: when does the shortage ease?

Two scenarios exist. Optimistic scenario: AI chip demand stabilizes as major cloud providers finish deploying infrastructure. They invested $100+ billion in AI capacity. Once deployed, the voracious appetite slows. More memory capacity gets freed for consumer electronics. By late 2025 or 2026, memory prices stabilize and TV prices return to historical levels.

Pessimistic scenario: AI adoption accelerates beyond infrastructure deployment. AI features proliferate. Every company wants edge AI, local inference, on-device processing. Demand for AI chips continues growing exponentially. Memory remains scarce. TV prices stay elevated through 2026, 2027, beyond.

Reality is probably somewhere between. Some markets will stabilize faster. AI chip demand will likely moderate after the initial infrastructure build-out, but continue growing at 30-40% annually for years. Memory capacity will increase, but not fast enough to fully satisfy demand. TV prices will drop 2-3% from peak increases, but stay permanently elevated versus 2024 levels.
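A small drop from the peak leaves most of the increase in place. The sketch below combines a rise to peak with a partial pullback, assuming the 2-3% drop applies to the peak price rather than to the 2024 price. That reading is an interpretation of the text, and the function name is hypothetical.

```python
def net_change_vs_2024(peak_rise_pct: float, drop_from_peak_pct: float) -> float:
    """Net % change versus the 2024 price after a rise to peak and a partial pullback."""
    factor = (1 + peak_rise_pct / 100) * (1 - drop_from_peak_pct / 100)
    return (factor - 1) * 100


for peak_rise_pct in (5, 10, 15):       # spans the 5-15% range of estimated increases
    for drop_pct in (2, 3):             # the 2-3% pullback discussed above
        net = net_change_vs_2024(peak_rise_pct, drop_pct)
        print(f"+{peak_rise_pct}% then -{drop_pct}%: {net:+.1f}% vs 2024")
```

For example, a 12% rise followed by a 3% pullback leaves prices about 8.6% above where they started.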

Consider pricing history. In 2012, the worst DRAM shortage in a decade hit. Prices tripled. When the shortage eased, prices fell sharply but stabilized 20-30% higher than pre-shortage levels. The new capacity built during the shortage remained, but margins normalized. TVs that cost

We should expect similar dynamics. Higher equilibrium prices, not a return to 2024 levels.

Manufacturer Strategies: How TV Makers Are Adapting

TV manufacturers aren't just accepting higher costs. They're actively adapting.

Some are simplifying feature sets. Instead of every model including AI upscaling, they're making it a premium feature. Entry-level models use less memory. This differentiates products, justifies price premiums on high-end models, and reduces memory needs in budget segments.

Others are shifting to server-side processing. Instead of local AI upscaling, stream the video to the cloud, upscale on servers, stream back. This offloads memory requirements to data centers. But it requires reliable internet and creates latency. Most consumers prefer local processing. So this is a secondary strategy.

Some manufacturers are increasing memory efficiency. More sophisticated algorithms fitting in less memory. Optimized code using fewer cycles. Better compression of model weights. But physics limits optimization. You can't squeeze modern AI models into 2GB of memory without losing functionality.

Others are negotiating longer-term supply contracts at fixed prices. If you commit to buy millions of units at a fixed price, suppliers will allocate capacity. This shifts risk—if prices fall, you're stuck at the higher price. But it guarantees supply and budget certainty.

Some are diversifying suppliers. Historically, a TV maker might have bought memory from Samsung, SK Hynix, and Micron. Now they're reaching out to secondary suppliers, Chinese domestic chipmakers, and smaller manufacturers. Quality consistency suffers somewhat, but it improves supply security.

The most sophisticated are reshoring production. Manufacturing closer to markets reduces logistics costs and supply chain risk. Samsung's Texas fab, TSMC's Arizona fab, and Micron's expansions in Idaho and New York are partly driven by supply security concerns. These U.S.-made chips cost more but provide American brands domestic supply.

The AI-Consumer Electronics Competition

Underlying this entire shortage is a fundamental competition for resources between AI infrastructure and consumer electronics.

AI is newer, more lucrative, and more exciting. Enterprises are in a gold rush mentality. They're committing enormous capital to AI infrastructure. Every company wants to own AI capability. The winners could be worth trillions. The losers get disrupted. The urgency is real and justified.

Consumer electronics is mature. TV markets grow maybe 2-3% annually. Margins are competitive. There's no gold rush energy. It's a steady business. Manufacturers optimize margins and volume, not disruption and dominance.

In this competition for semiconductor resources, AI wins every time. It's rational. But it illustrates a subtle shift in technology competition. The most important breakthroughs aren't in consumer devices anymore. They're in AI. The infrastructure that enables AI matters more than the devices consumers touch.

This matters for your TV not just through price increases. It matters because TV development slows while AI races ahead. The 65-inch TV you buy in 2025 won't be as advanced as it could have been, because engineers and resources went to AI chips instead of TV chips.

It's not a crisis, exactly. But it's a choice. A choice that prioritizes future economic value creation over current consumer happiness. History will judge whether it was worth it.

Outlook: The Next 18 Months

Here's what to expect through mid-2026.

Q4 2024 - Q1 2025: Price increases fully propagate. Retail prices reflect higher chip costs. Shoppers who didn't buy in 2024 face elevated prices. Demand responds by dropping 5-10% as price-sensitive consumers delay purchases.

Q2 - Q3 2025: Chip prices stabilize or drop slightly as AI infrastructure build-out moderates. TV manufacturers see modest memory cost relief. Prices might drop 2-3% from peak levels. But they don't return to 2024 pricing—that opportunity has passed.

Q4 2025 - Q1 2026: Supply-demand equilibrium settles at higher prices. Some chip capacity has expanded. AI demand has moderated post-infrastructure build. Consumer electronics gets increased allocation. But the new equilibrium sits 8-12% above 2024 levels.

Beyond 2026: If AI adoption accelerates beyond infrastructure deployment, shortages re-intensify. But more likely, supply catches up with demand. Memory prices stabilize at lower levels than 2025 peaks, but higher than 2024 bottoms.

The practical implication: if you need a TV, buy before prices fully increase. Once Q1 2025 hits, new inventory will be priced high. Older inventory depletes. You get squeezed between higher prices and limited selection.

Why This Matters Beyond Just TVs

This semiconductor shortage is happening across consumer electronics. It's not TV-specific.

Smartphones face similar pressures. The latest phones require increasingly sophisticated memory for local AI processing. Apple's A18 chips and their equivalents from Qualcomm demand more memory than previous generations. Smartphone prices will likely rise alongside TV prices.

Laptops and tablets face the same dynamics. Gaming devices require memory for AI-assisted rendering and real-time upscaling. IoT devices, smart home systems, wearables—all compete for constrained memory.

The lesson is broader: as AI becomes embedded in every device, resource competition intensifies. Not every device can have cutting-edge processing. Manufacturers have to choose. Those with scale and margins can prioritize memory. Others get squeezed.

Consumers should understand that device prices are in a transition period. Expect broader increases across consumer electronics through 2025, driven by semiconductor scarcity and AI-driven demand. Budget accordingly.

The TV price increase Samsung warned about isn't just about TVs. It's about resource allocation in a technology economy increasingly driven by AI. Understanding that context helps you make better purchasing decisions and understand why consumer electronics are getting more expensive.

FAQ

Why are TV prices increasing if memory chips are a commodity?

While memory chips are produced by multiple companies, semiconductor manufacturing capacity is the real constraint. When demand for high-margin AI chips spikes, manufacturers reallocate fab space from lower-margin consumer products to higher-margin AI products. It's not that memory chips aren't available globally—it's that the specific types and quantities needed for TV production are being allocated toward AI infrastructure instead. Additionally, long-term contracts and force majeure clauses in chip supply agreements allow suppliers to reduce consumer electronics allocation when circumstances change. This creates an imbalance where TV makers compete for the same capacity that AI data centers are now demanding.

How much will my TV cost more in 2025 compared to 2024?

Expect price increases ranging from 5-15% depending on the TV model and feature set. Budget 1080p and entry-level 4K TVs will see smaller increases (5-8%), while premium models with advanced features like Mini-LED backlighting and aggressive AI upscaling will see larger increases (12-15%). A TV that cost

Is the chip shortage affecting other electronics like phones and laptops too?

Yes, the memory chip shortage impacts all consumer electronics that require DRAM or advanced memory types. Smartphones are seeing increased prices as manufacturers demand more memory for on-device AI processing. Laptops with AI-capable processors face similar pressures. The shortage is industry-wide, affecting any device that needs memory chips. However, products with less complex memory requirements—basic smartwatches, simple IoT devices—see minimal impact. The shortage specifically affects devices with sophisticated computational requirements and memory-intensive AI features.

When will TV prices return to 2024 levels?

Prices likely won't return to 2024 levels, even when the shortage eases. Historical precedent from the 2012 DRAM shortage shows that prices dropped from peak shortage levels but settled 20-30% higher than pre-shortage baselines. Expect a similar pattern: TV prices will rise through 2025, potentially drop 2-3% in 2026, then stabilize at levels 8-12% higher than 2024. New semiconductor manufacturing capacity coming online in 2026-2027 will help, but the market equilibrium has shifted upward. Manufacturers have raised their cost structure expectations, and price competition won't fully erode these higher levels.

Which TV brands are most affected by the chip shortage?

All TV manufacturers face the same memory chip shortage since they rely on the same handful of memory suppliers: Samsung, SK Hynix, and Micron. However, impact varies. Samsung, which manufactures its own chips, has more control over allocation. LG and TCL, which depend heavily on external suppliers, face tighter allocation. Smaller brands with less negotiating leverage suffer more. Chinese brands like Hisense might have access to domestic Chinese memory suppliers, providing some insulation. However, all major brands will implement price increases due to the universal shortage of high-quality, advanced memory chips needed for modern smart TV features.

Will the price increases be the same in every country?

No, price increases will vary significantly by region. North American consumers might see 8-12% increases, moderated by Samsung's Mexico-based production and its negotiating power with U.S. retailers. European consumers could see 12-18% increases due to import dependencies and additional tariffs. Asian consumers face variation: South Korea and Japan might see smaller increases than developing markets. Emerging markets in India, Southeast Asia, and Africa might see 15-25% increases due to less retail competition, higher import costs, and weaker consumer bargaining power. Currency fluctuations and local tariffs will also affect regional pricing differently.

Should I buy a TV now or wait for prices to drop?

If you need a TV, buying before price increases fully propagate is your best option. Existing 2024 inventory priced at current levels will deplete as 2025 approaches. Once new stock arrives at higher prices, you'll face elevated pricing with limited selection. However, if you can wait until mid-2026, prices might drop 2-3% from peak shortage levels. The decision depends on your timeline and urgency. If you need a TV in the next 6 months, buy soon. If you can wait 18+ months, delaying until 2026 might save money compared with buying at the 2025 peak, though you will likely still pay more than 2024 prices.

How are manufacturers responding to the chip shortage?

TV manufacturers are adopting multiple strategies: simplifying feature sets to reduce memory requirements, negotiating longer-term supply contracts to guarantee allocation, diversifying suppliers to reduce dependence on single sources, implementing more efficient algorithms to reduce memory footprint, and in some cases reshoring production for domestic supply security. Some manufacturers are making AI features premium add-ons rather than standard features, allowing budget models to use less memory. Others are exploring server-side processing instead of local AI processing to offload memory requirements. These adaptations help manufacturers manage costs and improve supply security, but they also mean some consumers will get fewer features or less local processing capability than they expected.

Is the AI chip demand really that large?

Yes, the AI data center buildout is consuming extraordinary amounts of memory. A single advanced GPU cluster training large language models might use petabytes of working memory. OpenAI, Google, Meta, Microsoft, and other tech giants are collectively deploying millions of GPUs, each requiring high-speed memory. Because memory makers allocate output to the highest-margin applications first, this aggregate demand exceeds the production capacity left over for everything else. One AI training cluster consumes as much memory infrastructure as tens of thousands of TVs. As enterprises race to build AI capabilities, this demand will likely remain intense through 2025 at minimum, affecting memory allocation across all consumer electronics industries.

Will Samsung raise prices on its own chips because of the shortage?

Yes, Samsung has already begun raising memory chip prices for both consumer and data center applications. As a chip manufacturer, Samsung benefits from higher memory prices. As a TV manufacturer, Samsung is harmed. But the incentives favor raising prices since memory represents a larger profit center than TV manufacturing. Internally, Samsung's chip division has more pricing power than its consumer electronics division. This means Samsung will likely extract maximum value from the shortage through chip pricing before allowing consumer product prices to absorb the full impact. This benefits Samsung's overall profitability but accelerates TV price increases for consumers.

Key Takeaways

- Samsung warned of TV price increases through 2025 due to a memory chip shortage driven by AI infrastructure demand

- Memory manufacturers prioritize high-margin AI chips over lower-margin consumer electronics, squeezing TV production capacity

- Expect TV prices to rise 5-15% in 2025, with premium models seeing larger increases than budget options

- The shortage reflects broader competition for semiconductor resources between AI infrastructure and consumer devices

- Prices likely won't return to 2024 levels even after shortages ease, settling 8-12% higher due to shifted market equilibrium
