Introduction: When Student Data Becomes Public Knowledge

Imagine someone could access your child's school application, address, date of birth, and photo—just by tweaking a few numbers in a web browser. No password needed. No special hacking skills. Just basic math.

That's exactly what happened at Ravenna Hub, a platform used by families across thousands of schools to manage student applications. For an unknown period, any logged-in parent could view any other family's complete profile. Not through a breach. Not through stolen credentials. Through a simple, preventable coding mistake.

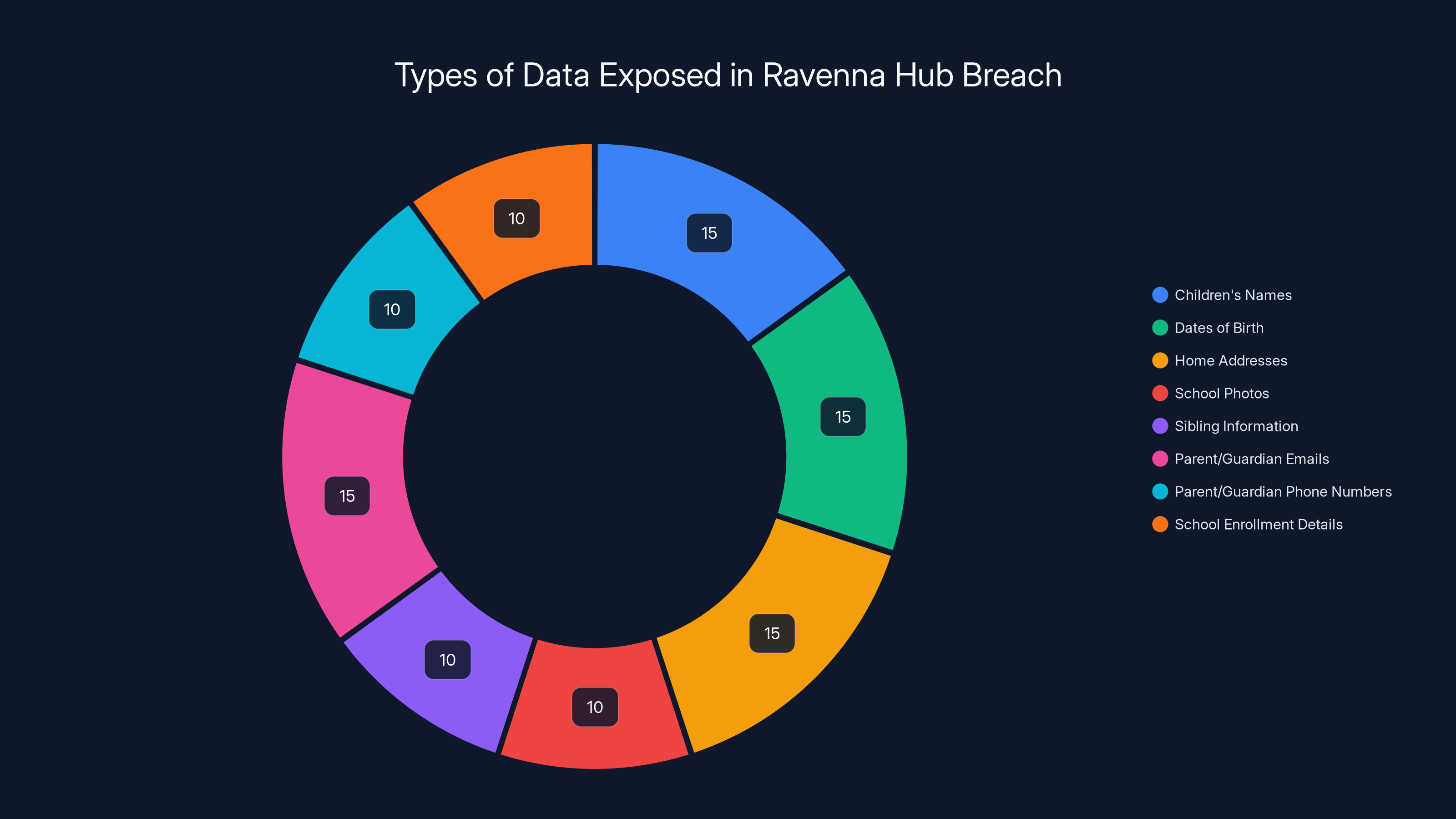

The vulnerability exposed approximately 1.63 million student records. Children's names, dates of birth, home addresses, school photos, and sibling information were all accessible. Parent email addresses and phone numbers? Exposed too. The company that built the platform, Venture Ed Solutions, didn't immediately notify users. The CEO wouldn't confirm whether unauthorized access actually occurred. No third-party security audit was mentioned.

This isn't a story about sophisticated hackers or zero-day exploits. It's a story about a fundamental security failure so basic that it shows a troubling pattern in edtech: companies handling sensitive children's data without implementing security controls that should be industry standard. And it raises urgent questions about oversight, accountability, and how we protect the most vulnerable users on the internet.

The incident revealed something darker than the technical vulnerability itself. When TechCrunch disclosed the flaw, the company fixed it within hours—proving the fix was trivial. But the fact that it existed in the first place, and that the company's response was defensive rather than transparent, suggests a broader problem in how educational technology platforms approach security.

This article breaks down what happened, why it matters, and what needs to change to prevent the next breach.

TL;DR

- The Breach: Ravenna Hub exposed 1.63 million student records through an IDOR vulnerability allowing unauthorized access to children's personal data

- What Was Exposed: Names, dates of birth, home addresses, school photos, sibling information, parent emails, and phone numbers

- The Company's Response: Venture Ed Solutions fixed the bug same-day but refused to confirm unauthorized access or commit to user notification

- The Root Cause: Insecure Direct Object Reference (IDOR)—a common, preventable security flaw affecting applications across industries

- Bottom Line: Companies handling children's data must implement mandatory security standards, third-party audits, and transparent incident reporting

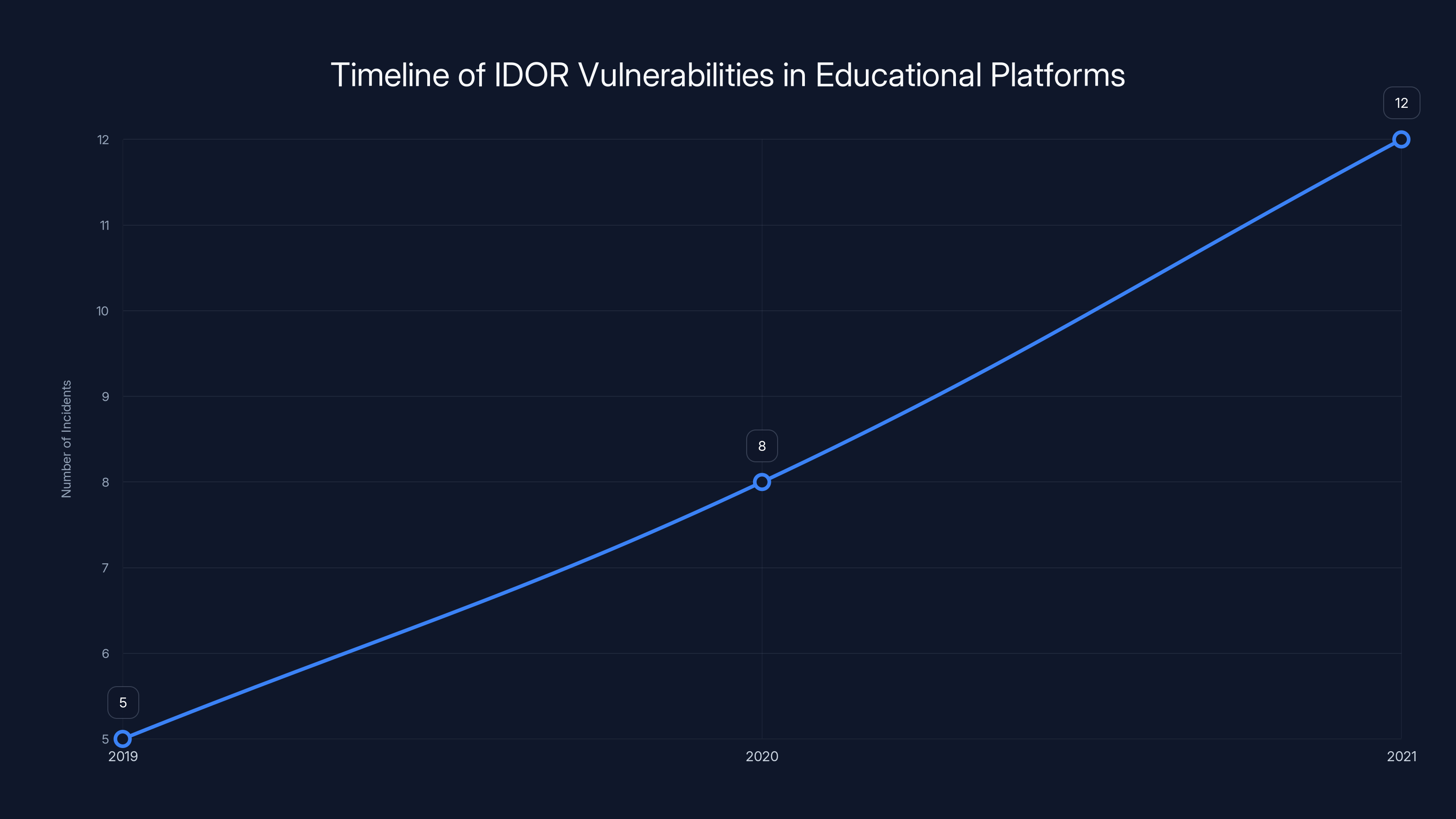

The number of reported IDOR vulnerabilities in educational platforms has increased from 2019 to 2021, highlighting a growing pattern of negligence. Estimated data based on reported incidents.

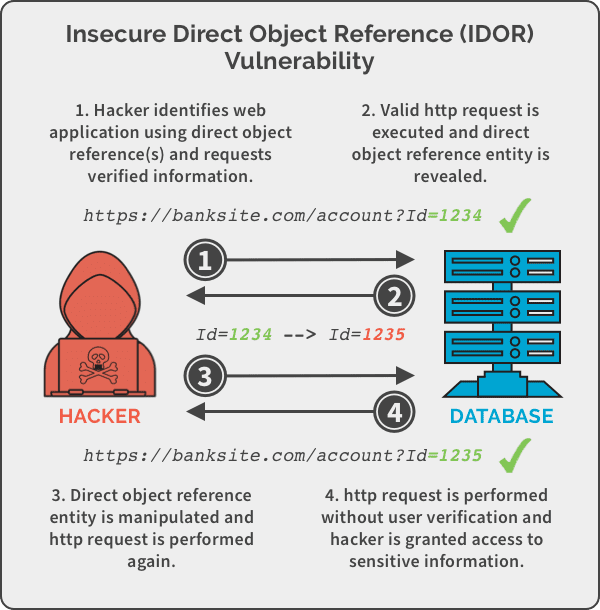

Understanding IDOR: The Most Overlooked Security Flaw

IDOR stands for Insecure Direct Object Reference. In theory, it's easy to explain. In practice, it's the kind of vulnerability that should never exist in production software, yet it shows up repeatedly across major platforms.

Here's how it works: Web applications need to identify resources—like user profiles, documents, or in this case, student records. They typically assign each resource a unique identifier, often a simple number. Your profile might be /user/12345. Your neighbor's is /user/12346. The system trusts that you'll only access your own profile. So it checks: "Is this person logged in?" If yes, it shows the resource.

The vulnerability happens when the system doesn't verify that you should have access to that specific resource. Any logged-in user can simply modify the number and access someone else's data.
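To make the flaw concrete, here is a minimal Python sketch of a handler with the problem described above. The `PROFILES` store, the `get_profile_vulnerable` function, and every record value are hypothetical and invented for illustration; nothing here reflects Ravenna Hub's actual code.

```python
from typing import Optional

# Hypothetical in-memory store: profile ID -> record. All values are invented.
PROFILES = {
    12345: {"owner": "parent_a", "child": "Child A"},
    12346: {"owner": "parent_b", "child": "Child B"},
}


def get_profile_vulnerable(session_user: Optional[str], profile_id: int) -> dict:
    """Return a profile to any logged-in user (the IDOR pattern)."""
    # Authentication: is somebody logged in?
    if session_user is None:
        raise PermissionError("login required")
    # Missing step: nothing checks that session_user may see profile_id.
    return PROFILES[profile_id]


# parent_a requests their own profile, then changes one digit in the ID.
own = get_profile_vulnerable("parent_a", 12345)
neighbor = get_profile_vulnerable("parent_a", 12346)
```

In this sketch `neighbor` holds a record owned by `parent_b`, even though `parent_a` made the request. The login check passed both times, which is why authentication alone is not enough.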

In Ravenna Hub's case, the student profiles used seven-digit numbers. Incrementing or decrementing by one exposed the next student's complete record. Since these numbers were sequential, attackers didn't even need to guess—they could simply iterate through every combination. A script could theoretically crawl through 1.63 million records in minutes.

The OWASP Top 10, a widely respected ranking of the most critical web application security risks, has listed broken access control (which includes IDOR) as the number one risk since its 2021 edition. Not number two. Number one. The security community has documented countless case studies showing the dangers.

Yet IDOR vulnerabilities continue appearing in major applications with millions of users. Why? Because preventing them requires a security mindset that isn't universally adopted in engineering teams. It's not about advanced cryptography or complex threat modeling. It's about asking: "Should this user be allowed to access this specific resource?"

For Ravenna Hub, the fix was likely adding a check: "Does this user own this student profile?" before displaying the data. This check should have been there from day one. The fact that it wasn't suggests security wasn't integrated into the development process—it was an afterthought.

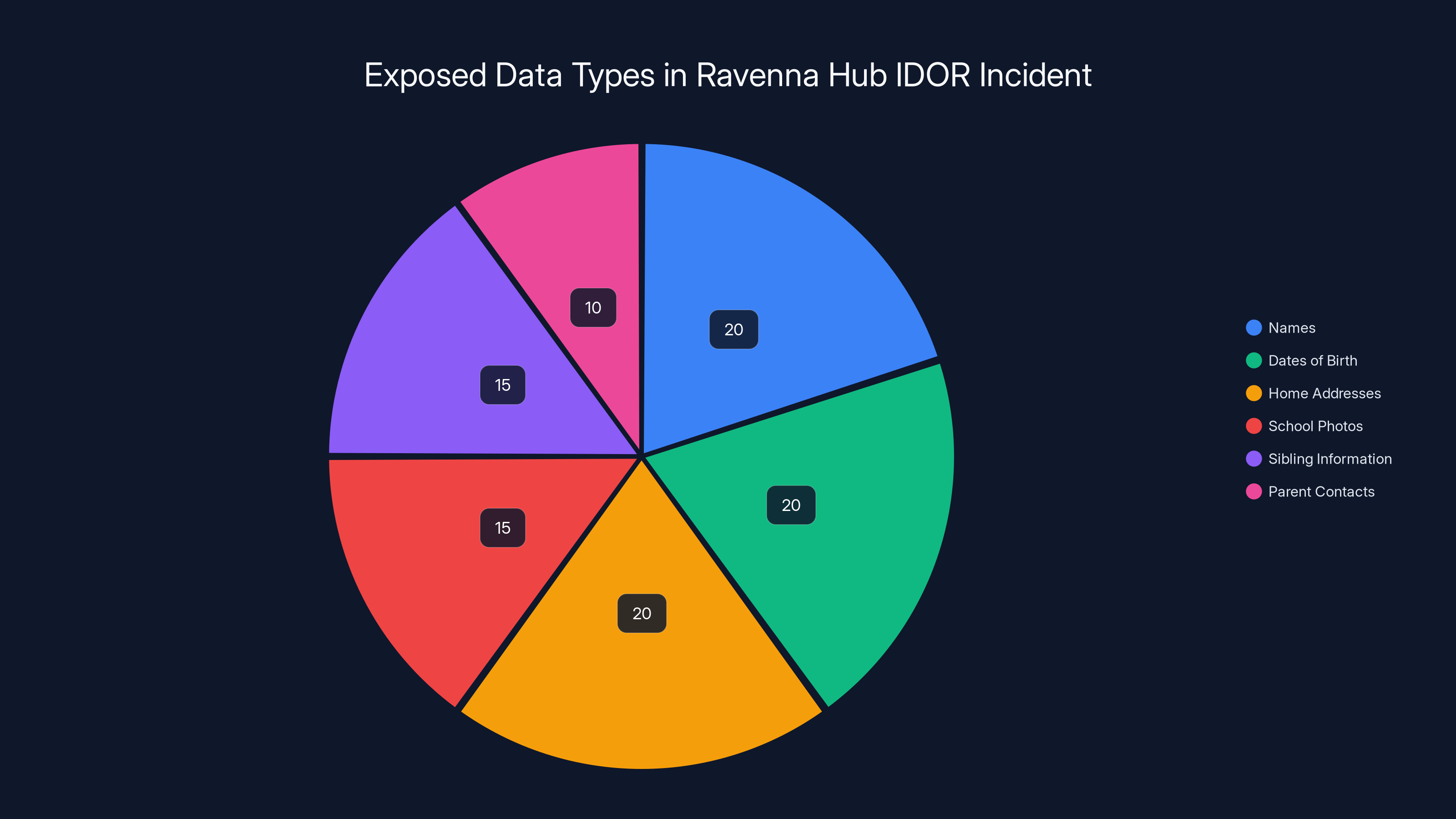

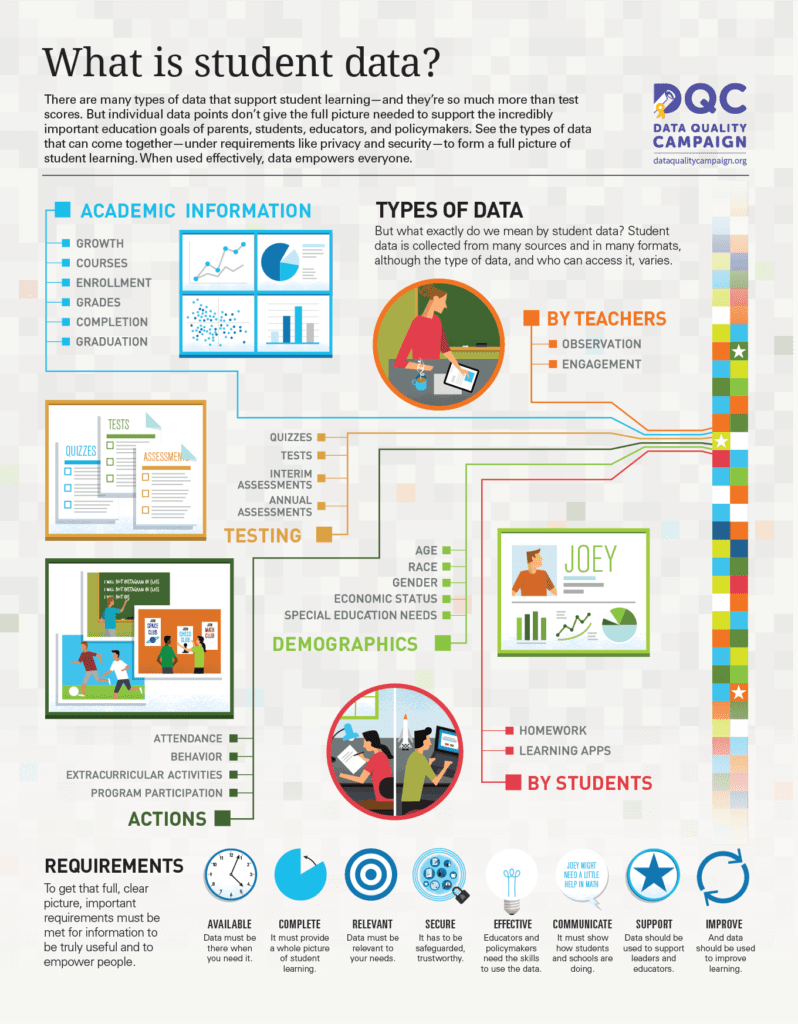

The Ravenna Hub IDOR vulnerability exposed a variety of sensitive data types, with names, dates of birth, and home addresses each comprising 20% of the exposed data, highlighting the comprehensive nature of the breach. Estimated data.

What Data Was Actually Exposed: The Full Scope

When security researchers and journalists discuss data breaches, they often list categories of information abstractly. "Personal identifiable information (PII)" sounds clinical. But we need to understand what that actually means when we're talking about children.

Ravenna Hub exposed:

- Children's names: The most basic identifying information

- Dates of birth: Combined with names and addresses, this uniquely identifies minors

- Home addresses: Physical location data for children

- School photos: Visual identification of minors

- Information about siblings: Family relationship data

- Parent/guardian email addresses: Primary contact information

- Parent/guardian phone numbers: Secondary contact information

- School enrollment details: Which institutions the children attended

This combination of data points creates what security researchers call a "super-identifier." Individually, some of this information might seem less sensitive. Combined, it paints a complete picture of a child's identity, location, and family structure.

Consider the potential misuses. A bad actor could:

- Impersonate the child in school communications using the parent's email

- Locate the family using the home address and identify when no one's home

- Target the child with scams or grooming attempts using accumulated knowledge

- Harvest data for identity theft years later when the child turns 18

- Create fake accounts using the child's full identity information

- Sell the data to marketers, scammers, or data brokers

The concerning part isn't just what was exposed—it's that we still don't know if anyone actually accessed it. Venture Ed Solutions declined to commit to investigating whether unauthorized access occurred. The CEO wouldn't answer whether the company could even detect such access through their logs. This is a massive oversight.

For comparison, when data breaches occur at financial institutions, the institutions are legally required to notify affected parties within specific timeframes, investigate the scope of the breach, and maintain forensic logs. Ravenna Hub operates in an industry, education technology, where regulations are far less stringent.

The Company Behind the Breach: Venture Ed Solutions and Ravenna Hub

Venture Ed Solutions, the Florida-based company that develops and maintains Ravenna Hub, describes itself as serving over one million students and processing hundreds of thousands of applications annually. That's significant scale. Those numbers suggest the company has substantial revenue and resources.

Despite that scale, the company's public posture following the vulnerability disclosure was troubling. The CEO acknowledged the vulnerability existed and confirmed it was fixed. But beyond that, the response became evasive.

When asked if the company would notify users about the security lapse, the CEO provided no commitment. When asked if the company could even check whether unauthorized access occurred, there was no clear answer. When asked if Ravenna Hub had undergone third-party security audits, the CEO declined to comment.

This pattern suggests one of several possibilities: Either the company lacks the infrastructure to detect unauthorized access (meaning their logging and monitoring systems are inadequate), or they didn't want to investigate (suggesting indifference to whether users were actually compromised), or they investigated and found evidence of unauthorized access and chose not to disclose it.

None of these scenarios inspire confidence.

Large education technology companies typically maintain security teams, conduct regular audits, and have incident response procedures. The fact that Ravenna Hub's leadership didn't immediately announce an investigation or commit to notifying users suggests either immaturity in their security practice or a deliberate choice to downplay the incident.

The education technology market operates with less regulatory pressure than fintech or healthcare. There's no federal mandate requiring breach notifications for student data like there is for health information under HIPAA. This creates a perverse incentive structure where companies can handle breaches quietly.

Ravenna Hub's parent company should be implementing:

- Annual third-party security assessments by reputable firms

- Automated logging of all data access with retention for legal investigation

- Penetration testing by independent security researchers

- Incident response procedures that include mandatory user notification

- Background checks and security training for all development staff

None of these are exotic or expensive. They're table stakes for any company touching children's data.

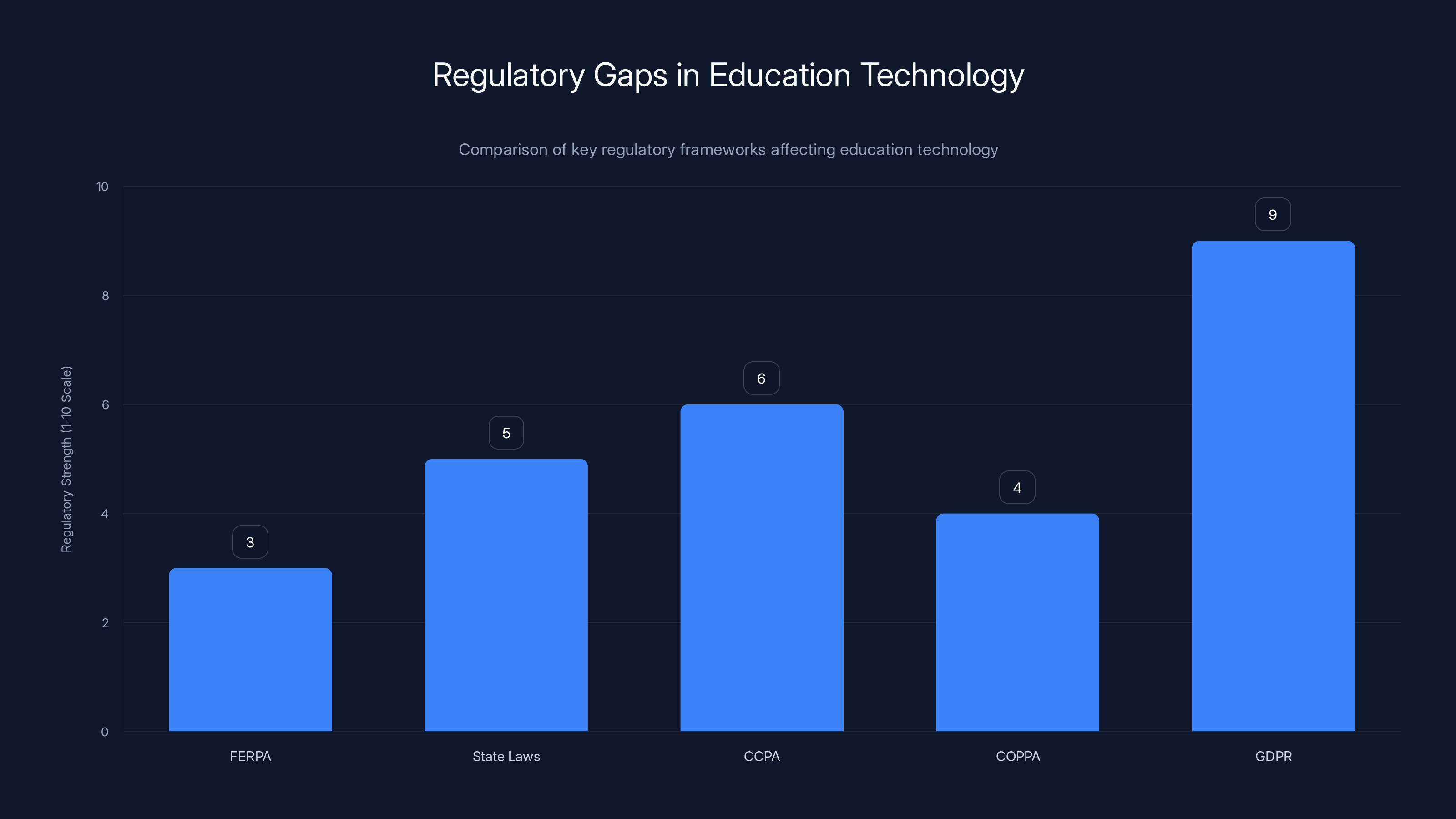

The chart illustrates the varying strength of regulatory frameworks affecting education technology. GDPR is the most stringent, while FERPA is less comprehensive. Estimated data.

IDOR Vulnerabilities in Educational Platforms: A Pattern of Negligence

The Ravenna Hub incident isn't isolated. Educational technology platforms have a documented history of IDOR and similar access control vulnerabilities.

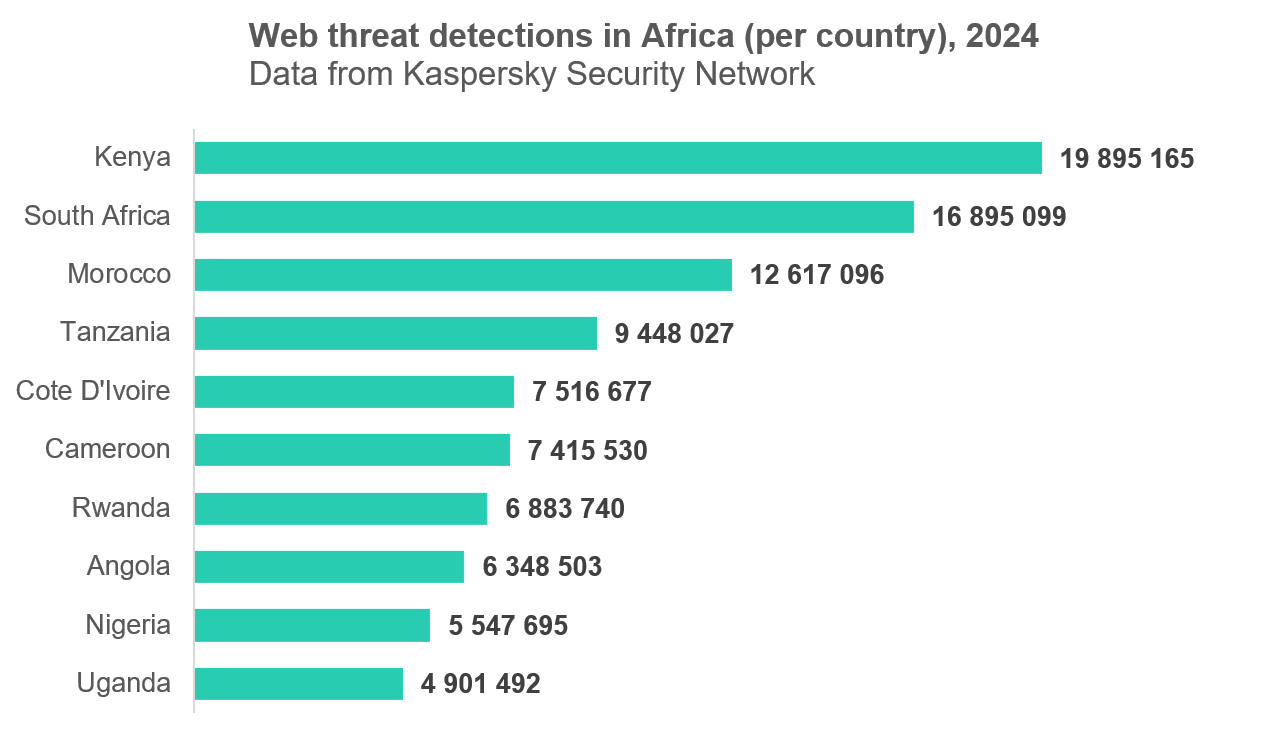

In 2019, researchers discovered IDOR vulnerabilities in multiple school district online portals, allowing unauthorized access to student transcripts, test scores, and disciplinary records. In 2020, a popular online tutoring platform exposed student progress records and payment information through similar sequential ID vulnerabilities. In 2021, a school management system used by thousands of institutions in Asia left student records accessible through simple ID manipulation.

What makes education technology particularly vulnerable?

Complexity and Scale: School districts and education platforms integrate multiple systems—admissions, grades, attendance, meal plans, special education services. Each integration point is a potential vulnerability. Ravenna Hub alone handles applications across thousands of schools. That's thousands of data sources, thousands of integration points, thousands of opportunities to miss security controls.

Limited Security Expertise: Many education technology companies are founded by educators or entrepreneurs who understand pedagogy better than cybersecurity. They build products with user experience in mind, often hiring developers based on full-stack capabilities rather than security expertise. The first security hire often comes after significant growth—if it comes at all.

Regulatory Arbitrage: Unlike healthcare (HIPAA), finance (SOX, PCI-DSS), or even general privacy (GDPR, CCPA), education data protection laws are fragmented and often toothless. FERPA provides some protection for school records, but it's focused on institutional liability rather than platform security standards. This creates an incentive for platforms to invest minimally in security because the legal risk is perceived as low.

Misplaced Trust: Schools and families assume platforms handle data securely because they're regulated or recommended by educational associations. They don't ask hard questions about security architecture. The sales process focuses on features and pricing, not audit reports and incident response procedures.

Legacy Systems: Many education platforms built in the 2010s were constructed before security matured as an industry concern. Retrofitting security into older code is harder and more expensive than building security from the start. Companies face a constant trade-off: invest in modernizing the security architecture, or invest in new features that attract customers.

Ravenna Hub's vulnerability suggests the company chose the latter.

The Technical Details: How Sequential IDs Enabled Mass Exposure

Let's dig into the technical specifics because they matter. Understanding exactly how the vulnerability worked helps explain why it's so preventable and so dangerous.

Ravenna Hub used seven-digit numbers to identify student profiles. These numbers were sequential, meaning:

- Student 1 = profile /student/1000000

- Student 2 = profile /student/1000001

- Student 3 = profile /student/1000002

And so on.

Sequential IDs are convenient for developers. They're easy to generate, easy to remember for debugging, easy to paginate through in databases. But they're a security nightmare because they eliminate the "guessing" component entirely. An attacker doesn't need to guess or brute-force IDs—they can simply count.

When TechCrunch's researchers created a test account, they received profile number 1,630,001 (approximately). This meant:

- 1,630,000 records existed before theirs

- All were accessible via sequential ID incrementation

- No authorization checks prevented access to "other" profiles

Here's the typical attack flow:

- Register an account with any fake student data

- Log in and navigate to your profile page

- Note the URL showing your student ID

- Increment the ID by one and hit Enter

- View another student's profile (and all their data)

- Automate the process with a simple script to iterate through all IDs

The time required? Minutes. The technical skill required? Minimal. Any moderately technical parent could have done this in an afternoon.
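A rough back-of-the-envelope check on that estimate, as a sketch. The request rates below are assumptions chosen for illustration, not measurements of Ravenna Hub's servers.

```python
RECORDS = 1_630_000  # approximate record count reported in the article


def minutes_to_enumerate(records: int, requests_per_second: float) -> float:
    """Time to request every sequential ID once at a steady rate."""
    return records / requests_per_second / 60


# Assumed rates: a single slow client, a modest script, a parallel script.
for rate in (10, 100, 1000):
    print(f"{rate:>5} req/s -> {minutes_to_enumerate(RECORDS, rate):,.0f} minutes")
```

Under these assumptions the full crawl takes about 27 minutes at 1,000 requests per second and roughly 45 hours at 10 requests per second. "Minutes" therefore presumes a server that does not throttle; viewing any single neighboring record takes seconds either way.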

The proper fix involves:

Option 1: Access Control Checks Before displaying student data, check: "Does this logged-in user have permission to access this specific student profile?" This requires tracking ownership relationships in the database—like "parent_id 5 owns student_id 1000042." Every request checks this relationship.
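One way the ownership relationship in Option 1 might be stored and checked, sketched with Python's built-in `sqlite3` module. The table names (`students`, `guardianships`), the function name, and the sample rows are assumptions made up for this example, not Ravenna Hub's schema.

```python
import sqlite3
from typing import Optional

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE students (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE guardianships (
        parent_id INTEGER NOT NULL,
        student_id INTEGER NOT NULL REFERENCES students(id),
        PRIMARY KEY (parent_id, student_id)
    );
    INSERT INTO students VALUES (1000042, 'Child A'), (1000043, 'Child B');
    INSERT INTO guardianships VALUES (5, 1000042), (6, 1000043);
    """
)


def fetch_student_for_parent(parent_id: int, student_id: int) -> Optional[dict]:
    """Return the student row only if parent_id is linked to it, else None."""
    row = conn.execute(
        """
        SELECT s.id, s.name
        FROM students AS s
        JOIN guardianships AS g ON g.student_id = s.id
        WHERE s.id = ? AND g.parent_id = ?
        """,
        (student_id, parent_id),
    ).fetchone()
    return {"id": row[0], "name": row[1]} if row else None
```

Putting the ownership condition inside the query itself means there is no code path that loads a student row without also proving the relationship. Here `fetch_student_for_parent(5, 1000043)` returns `None` because parent 5 has no link to that student.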

Option 2: Opaque IDs

Instead of sequential numbers, generate random identifiers for each profile. This makes enumeration impractical: instead of /student/1000001, you'd get /student/a7f3k9m2x8q6b1p9, and an attacker who sees one ID can't predict other valid ones. Opaque IDs are defense in depth, though. A leaked or shared ID still exposes the record unless the ownership check from Option 1 is also in place.
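A small sketch of how opaque identifiers could be issued, using Python's standard `secrets` module. The in-memory mapping and both function names are hypothetical; a real system would persist the token alongside the record.

```python
import secrets

# Hypothetical mapping from public token to internal sequential ID.
_token_to_internal = {}


def issue_public_id(internal_id: int) -> str:
    """Create an unguessable public identifier for an internal record ID."""
    token = secrets.token_urlsafe(16)  # 16 random bytes, 22 URL-safe characters
    _token_to_internal[token] = internal_id
    return token


def resolve_public_id(token: str):
    """Map a public token back to the internal ID, or None if unknown."""
    return _token_to_internal.get(token)
```

With 128 bits of randomness per token, counting up or down from a known ID no longer lands on a valid record. The internal sequential ID can stay in the database for joins and debugging as long as it never appears in a URL.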

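Option 3: Encrypted References Encrypt the student ID with authenticated encryption before sending it to the browser, then decrypt and verify it on the server. A tampered value fails verification, so users can't construct references to other records. It complements the ownership check in Option 1 rather than replacing it.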

Ravenna Hub likely needed only Option 1. A single database query to verify ownership before returning data. This is taught in basic web development courses. That it wasn't implemented suggests either inadequate code review, rushed development timelines, or insufficient security training.

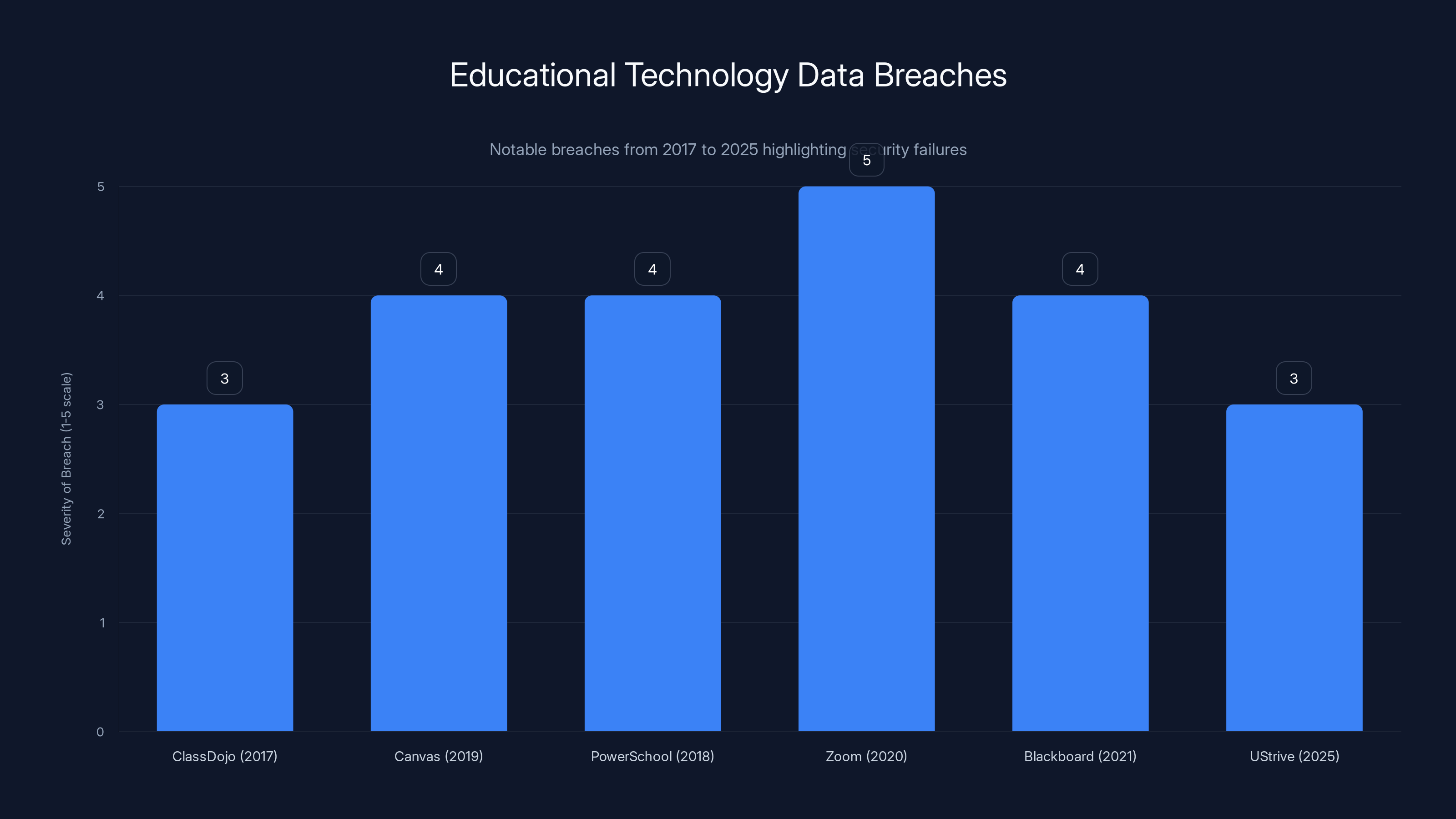

Educational technology platforms have repeatedly failed to secure student data, with breaches occurring across various platforms over the years. Estimated data highlights the severity of these breaches.

Regulatory Gaps: Why Education Technology Escapes Accountability

A critical question emerges from this incident: Why was there no federal enforcement action? Why no mandatory breach notification? Why did the company face no regulatory consequences?

The answer lies in regulatory fragmentation and industry-specific carve-outs that make education data less protected than other sensitive information.

FERPA (Family Educational Rights and Privacy Act) FERPA, passed in 1974, governs educational records. It's primarily a transparency law: it gives parents the right to access their child's records and know who else has accessed them. But FERPA doesn't mandate specific security practices. It doesn't require encryption, doesn't mandate security audits, doesn't establish data breach notification timelines. When a FERPA violation occurs, the U.S. Department of Education investigates, but consequences are rarely publicized and penalties are often small relative to the company's revenue.

State Data Breach Notification Laws Each state has its own data breach notification requirements. Some are comprehensive. Others are toothless. Florida (where Venture Ed Solutions is based) requires notification of data breaches affecting Florida residents, but the timeline is vague ("without unreasonable delay") and the definition of "personal information" is narrower than in other states.

CCPA and COPPA Limitations The California Consumer Privacy Act (CCPA) provides some protections for minors' data, requiring opt-in consent before a minor's personal information is sold (parental consent for children under 13). However, CCPA excludes student educational records covered by FERPA, creating a gap. COPPA (Children's Online Privacy Protection Act) applies to websites and online services directed to children under 13, but doesn't cover all educational platforms and carries minimal penalties.

International Gaps Unlike Europe's GDPR, which imposes strict data protection requirements and fines of up to €20 million or 4% of global annual revenue, whichever is higher, the United States has no comprehensive federal privacy law. Companies face different rules in different states, creating compliance challenges but also opportunities to minimize protection by choosing favorable jurisdictions.

Compare this to other industries:

Healthcare: HIPAA mandates specific security controls, annual audits, breach investigations, mandatory notification within 60 days, and fines up to $1.5 million per violation category per year.

Finance: PCI-DSS mandates encryption, access controls, regular penetration testing, and detailed logging. Penalties include transaction fees of $50-100 per compromised card, plus potential fines from regulatory bodies.

Online Privacy: GDPR mandates data protection by design, regular assessments, breach notification within 72 hours, and fines up to 4% of global revenue.

Education: FERPA requires transparency but not specific security practices. State laws vary wildly. COPPA applies only to services directed to under-13 children. Result: Companies are incentivized to spend minimal resources on security.

This is the regulatory arbitrage problem. Rational actors—companies seeking profit—allocate resources where regulations demand it. Education technology faces less regulatory pressure than healthcare or finance, so it receives less investment in security. Students and families bear the consequences.

The solution requires action at multiple levels:

Federal Level: A "HIPAA for Education" standard mandating security practices, audits, breach investigation, and meaningful penalties.

State Level: Alignment around strong privacy and security requirements for education platforms.

Industry Level: Self-regulation through common standards like SOC 2 Type II certifications, mandatory third-party audits, and public disclosure of security practices.

Institutional Level: Schools and districts demanding security compliance from vendors before adoption, and conducting annual security reviews.

None of this is happening at meaningful scale today.

Third-Party Audits: The Missing Safety Check

When Venture Ed Solutions was asked whether Ravenna Hub had undergone third-party security audits, the CEO declined to answer. This silence is deafening.

Third-party security audits serve several critical functions:

Verification: An independent organization reviews the company's systems against established standards (like SOC 2 Type II, ISO 27001, or HIPAA-equivalent frameworks). They verify that security controls actually exist and function as claimed.

Accountability: The audit report is typically shared with customers and stakeholders, creating transparency. A company can't claim strong security and then hide a lack of audits.

Continuous Assessment: Reputable audit frameworks require annual or biennial reassessment, forcing companies to maintain security standards over time rather than implementing them once and forgetting.

Remediation Tracking: Auditors identify vulnerabilities and require documented remediation plans. They follow up to ensure fixes are implemented.

Insurance and Compliance: Many insurance policies and compliance frameworks require documented third-party audits. Their absence signals risk.

SOC 2 Type II (Service Organization Control) is the most relevant standard for technology platforms handling customer data. It certifies that a company has implemented and maintained security, availability, and confidentiality controls over a period (usually 6 months or longer). Achieving SOC 2 certification requires:

- Security governance: Documented policies and procedures

- Access controls: Verification that users access only appropriate data

- Change management: Controlled deployment of software updates

- Logging and monitoring: Comprehensive records of system activity

- Incident response: Documented procedures for handling security incidents

- Risk assessment: Regular evaluation of threats and vulnerabilities

- Vendor management: Review of third-party services and risks

The fact that Ravenna Hub's CEO wouldn't mention audits suggests one of two scenarios:

Scenario 1: The company hasn't undergone SOC 2 or similar certification. This means no independent verification of security practices exists. Parents have no basis for trusting the company's security claims beyond the company's word.

Scenario 2: The company has undergone certification but found significant issues during the audit, and chose not to publicize results. This would be even more damning because it would indicate the company discovered problems and chose opacity over transparency.

Major education technology platforms absolutely should have current SOC 2 Type II reports. Vendors selling to healthcare and payments customers are routinely asked for them during procurement. The education sector's failure to demand them is a regulatory failure.

What should change:

- Schools and districts should require SOC 2 Type II certification (or equivalent) before adopting any new platform

- Parents should ask about certifications before uploading their children's data

- Industry associations (like ISTE or organizations representing school districts) should mandate security standards for member companies

- States should include security audit requirements in education technology procurement guidelines

Until this becomes standard practice, companies face no external pressure to invest in third-party verification.

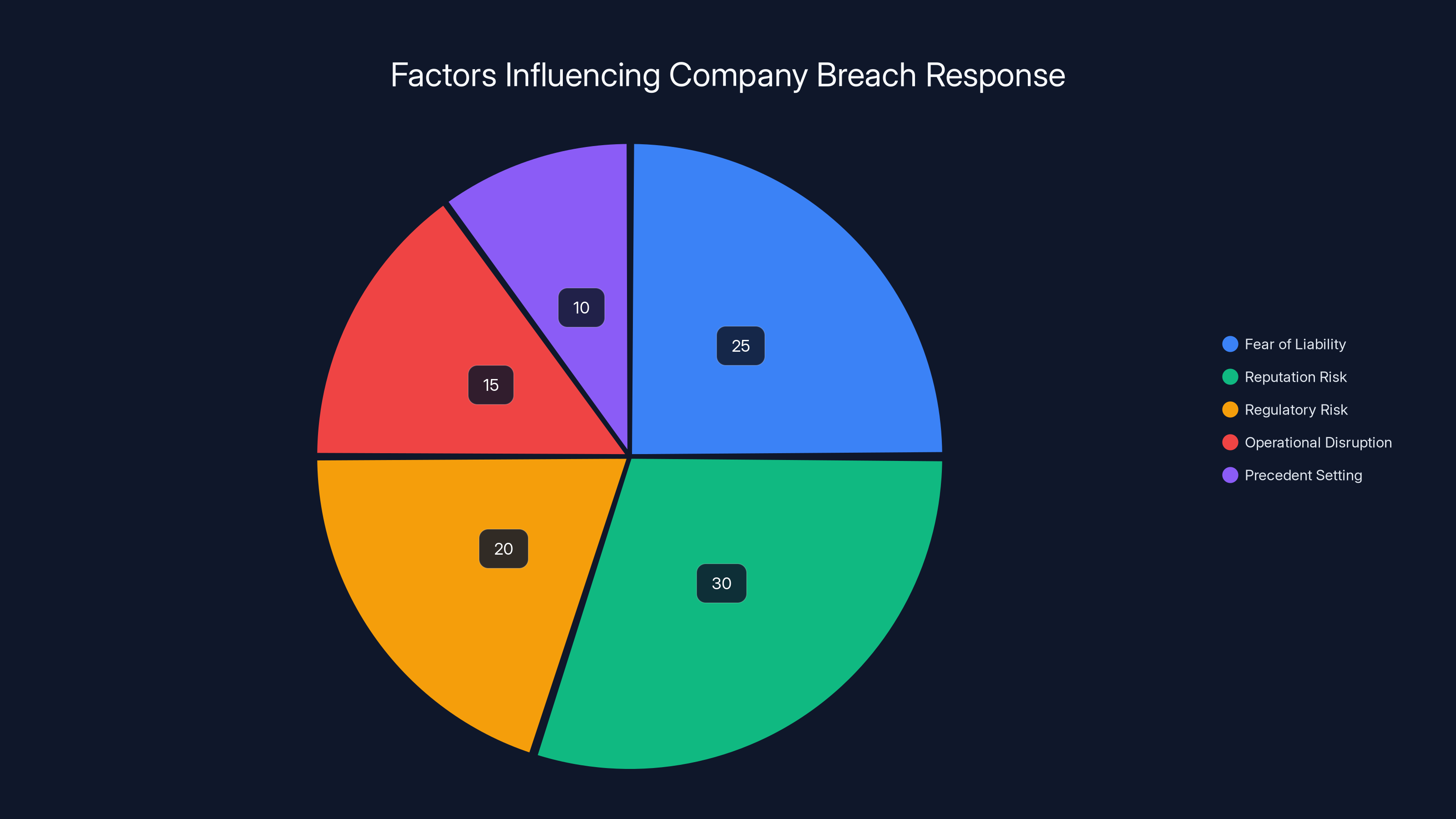

Estimated data shows Reputation Risk (30%) and Fear of Liability (25%) as the top factors influencing company breach responses, highlighting the psychological barriers to transparency.

The Vulnerability Disclosure Process: Speed and Silence

One aspect of the Ravenna Hub incident deserves scrutiny: the timeline and disclosure process.

Here's what happened:

Wednesday: TechCrunch discovered the vulnerability and immediately contacted Venture Ed Solutions

Same Day: The company fixed the bug and confirmed the fix to TechCrunch

Publication Delay: TechCrunch held the story for days while verifying the fix, then published

On its face, this looks good. The company fixed the bug quickly. But several aspects are troubling.

First, the speed of the fix tells us something important: the vulnerability was trivial to resolve. If it took one day to fix, it should have taken one day to prevent. The existence of the vulnerability suggests either inadequate code review or insufficient security testing before deployment.

Second, the company's refusal to commit to user notification is problematic. Responsible vulnerability disclosure has industry standards. When a security researcher discovers a vulnerability, the expected process is:

- Responsible disclosure: Give the company time to fix (typically 90 days)

- Good faith: Assume the company wants to do the right thing

- Investigation: After fix confirmation, investigate whether unauthorized access occurred

- Notification: Inform affected users of the vulnerability and any evidence of unauthorized access

- Transparency: Publish details so others can learn from the incident

Venture Ed Solutions fixed the flaw quickly, but refused to commit to investigation, notification, or transparency. This suggests a company unprepared for legitimate security incidents.

A mature company would have announced:

"We discovered a security vulnerability in our platform on [date]. We immediately patched the issue and launched an investigation into whether unauthorized access occurred. We are notifying all affected users and offering complimentary credit monitoring services. We have engaged [reputable security firm] to conduct a comprehensive audit of our systems. Full details are available at [URL]."

Instead, Venture Ed Solutions said nothing. The only information comes from journalists who happened to discover it.

This creates a moral hazard. Companies learn that if they remain silent and fix vulnerabilities quickly, they avoid public scrutiny. Responsible disclosure becomes unrewarded. Opacity becomes advantageous.

Better approaches include:

Bug bounty programs: Reward security researchers for finding vulnerabilities rather than forcing them to discover issues accidentally. Platforms like HackerOne and Bugcrowd connect researchers with companies.

Vulnerability disclosure policies: Public commitment to notification timelines and investigation procedures. This builds trust and ensures consistent handling.

Security incident reporting: Requirement for school districts to be notified of breaches within specific timeframes, triggering mandatory parent notification.

Public disclosure: Commitment to sharing post-incident analysis so others can learn from mistakes.

The absence of these practices at Ravenna Hub suggests the company operates in a reactive mode, fixing problems only when discovered externally.

Similar Breaches: Educational Technology's Pattern of Failure

Ravenna Hub isn't an anomaly. It's part of a broader pattern of educational technology platforms mishandling student data.

UStrive (2025): An online mentoring platform exposed personal information of students, including names, email addresses, and educational backgrounds, through inadequate access controls.

Blackboard Collaborate (2021): The widely-used virtual classroom platform leaked student information including unique identifiers, session recordings, and personal data through insecure authentication mechanisms.

Zoom (2020): "Zoombombing" incidents revealed that Zoom's default security settings were insufficient for classroom use, allowing unauthorized access to student-attended sessions.

Canvas Learning Management System (2019): Multiple instances of data leakage through insecure API endpoints that exposed student grades, submissions, and personal information.

ClassDojo (2017): A popular classroom communication app revealed vulnerabilities allowing unauthorized access to student behavioral data and communications.

PowerSchool (2018): The school information system used by thousands of districts exposed student grades, attendance records, and personal information through SQL injection vulnerabilities.

Why does education technology specifically suffer from these issues?

Market Structure: The education technology market consists of many small and mid-sized companies competing on features and price, not security. Larger companies (Microsoft, Google, Blackboard) have better security teams, but many school districts can't afford them.

Procurement Process: Schools typically evaluate software based on features and cost, not security. Administrators aren't trained to audit security. Procurement departments don't hire security consultants. Vendors aren't required to provide security certifications.

Urgency and Adoption: Education technology adoption often happens quickly in response to emergencies (like pandemic remote learning). Schools adopted Zoom, Google Classroom, and other platforms rapidly without security vetting. Speed trumped security.

Regulatory Vacuum: As discussed earlier, the lack of strong federal standards means companies can compete on features while minimizing security investment.

User Base Vulnerability: Students and families are generally less sophisticated about security than enterprise customers. They don't negotiate service agreements. They don't demand audits. They accept platform terms of service without reading them.

Developer Training Gaps: Many education technology developers lack security training. They focus on building features. Security is treated as a checkbox rather than an integral part of design.

The cumulative effect is an industry where security failures are common, accountability is minimal, and improvements are slow.

Estimated data distribution shows a variety of personal and sensitive information exposed, with names, dates of birth, and contact details being the most prevalent.

Forensic Investigation: What We'll Never Know

One of the most troubling aspects of the Ravenna Hub incident is what we'll never know.

When a security vulnerability is discovered, several critical questions demand investigation:

Was unauthorized access actually detected? Venture Ed Solutions hasn't indicated whether they reviewed server logs for evidence of unauthorized data access. Did they search for suspicious login patterns? Did they examine database queries that accessed student data belonging to other parents? We don't know.

How long did the vulnerability exist? TechCrunch discovered it, but was it exploited before discovery? Ravenna Hub hasn't said when this vulnerability was introduced or how long it was in production.

Did anyone exploit it? Without investigation, no one can say. Attackers might have silently accessed records for months before the flaw was patched. They might have sold the data to brokers. They might have used it for identity theft, fraud, or worse.

Who had access? If the company discovered unauthorized access, they would need to identify which specific student records were accessed and by whom. This requires detailed logging systems most startups don't have.

Proper forensic investigation would require:

Log Analysis: Comprehensive server logs showing every login, every API call, every database query. When did each user access what data, at what time?

Timeline Reconstruction: Determining exactly when the vulnerability was introduced and deployed to production.

Pattern Analysis: Looking for suspicious access patterns—single users accessing dozens of student records, or accesses from unusual geographic locations.

Data Broker Searches: Checking whether the stolen data appears on dark web markets or data broker sites.

Attribution: If unauthorized access is detected, determining whether it was an employee, a competitor, a criminal, or an accidental access.
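The pattern-analysis step can be sketched in a few lines of Python. This example looks for one specific signature of ID enumeration: a single account touching a run of consecutive student IDs. The log format, the `ACCESS_LOG` entries, and the `min_run` threshold are all assumptions for illustration; real logs would need parsing first, and the threshold would need tuning.

```python
from collections import defaultdict

# Hypothetical access-log entries: (user_id, student_id). Invented data.
ACCESS_LOG = [
    ("parent_a", 1000042),
    ("parent_b", 1000100),
    ("parent_c", 1000200),
    ("parent_c", 1000201),
    ("parent_c", 1000202),
    ("parent_c", 1000203),
]


def longest_sequential_run(ids):
    """Length of the longest run of consecutive integers among ids."""
    unique = sorted(set(ids))
    if not unique:
        return 0
    best = current = 1
    for prev, cur in zip(unique, unique[1:]):
        current = current + 1 if cur == prev + 1 else 1
        best = max(best, current)
    return best


def flag_enumeration(log, min_run=3):
    """Return {user: run_length} for users with a consecutive run >= min_run."""
    by_user = defaultdict(list)
    for user_id, student_id in log:
        by_user[user_id].append(student_id)
    flagged = {}
    for user_id, ids in by_user.items():
        run = longest_sequential_run(ids)
        if run >= min_run:
            flagged[user_id] = run
    return flagged
```

On the sample data only `parent_c` is flagged, with a run of four consecutive IDs. A check like this only works if per-request access logs exist in the first place, which is the open question in the Ravenna Hub case.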

This investigation should be conducted by independent forensic experts, documented in a detailed report, and shared with affected families. It should not be handled internally by the company.

But here's the uncomfortable truth: Companies have minimal incentive to investigate thoroughly if they discover unauthorized access, because that discovery triggers notification obligations and liability. There's a perverse incentive toward minimal investigation and documentation.

This is exactly why regulatory mandates exist. Companies shouldn't be trusted to police themselves. Independent enforcement is necessary.

Architectural Improvements: Building Secure Education Platforms

If you were tasked with redesigning Ravenna Hub to prevent similar vulnerabilities, what would change?

Secure architecture for education platforms requires multiple layers of security, not a single defense.

Layer 1: Authentication. Verify that users are who they claim to be. This typically involves usernames/passwords (plus two-factor authentication for sensitive operations), or single sign-on integration with school districts.

Layer 2: Authorization/Access Control. For every request, verify that the authenticated user has permission to access the specific resource. This is where IDOR is prevented. Before displaying student profile 12345, confirm that this request is from a parent/user who owns that profile.

Implementation in pseudocode:

GET /student/12345

1. Authenticate user → get user_id = 567

2. Check: Does user_567 own student_12345?

3. If yes: Return data. If no: Return 403 Forbidden.
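
The same three steps, written as a small runnable Python sketch. Everything here is hypothetical: the `GUARDIANS` and `PROFILES` dictionaries stand in for database tables, and `get_student` stands in for whatever request handler the real platform uses. It is meant to show the shape of the check, not Ravenna Hub's actual code.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical in-memory stand-ins for database tables.
GUARDIANS = {12345: {567}, 12346: {890}}  # student_id -> guardian user_ids
PROFILES = {12345: {"name": "Student A"}, 12346: {"name": "Student B"}}


@dataclass
class Response:
    status: int
    body: dict


def get_student(authenticated_user_id: Optional[int], student_id: int) -> Response:
    """Handle GET /student/<student_id> for an already-identified caller."""
    # Step 1: authentication. No session means no access at all.
    if authenticated_user_id is None:
        return Response(401, {"error": "authentication required"})
    # Step 2: authorization. The caller must be a guardian of this student.
    # Unknown student IDs fall through to the same 403, so the response
    # does not reveal which IDs exist.
    if authenticated_user_id not in GUARDIANS.get(student_id, set()):
        return Response(403, {"error": "forbidden"})
    # Step 3: only now is the record returned.
    return Response(200, PROFILES[student_id])
```

With this in place, user 567 requesting `/student/12345` gets the profile, while the same user requesting `/student/12346` gets a 403. The important design point is that the ownership check runs on the server for every request, rather than relying on the interface to show only the right links.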

Layer 3: Data Encryption. Encrypt sensitive data both in transit (HTTPS) and at rest (database encryption, encrypted backups). Even if logs or backups are compromised, the data is unreadable without encryption keys.

Layer 4: Logging and Monitoring. Maintain detailed logs of every access to sensitive data. Monitor for suspicious patterns automatically. Alert security teams when a user accesses multiple student profiles or accesses unusual amounts of data.

Layer 5: Rate Limiting. Prevent attackers from rapidly iterating through student IDs. After N requests within a time period (successful or not, since enumeration against an IDOR flaw produces successful responses), throttle or temporarily block the user.

Layer 6: Input Validation. Validate that student IDs conform to expected formats. If you're expecting a 7-digit number, reject requests with unexpected values.

Layer 7: Regular Testing. Conduct regular penetration testing to find vulnerabilities before attackers do. Include security testing in the development pipeline; don't treat it as an afterthought.

Layer 8: Incident Response. When vulnerabilities are discovered, have a documented process: immediate patch, investigation, notification, and transparency.

The key insight: Security isn't a single gate. It's layered defense. If one layer fails, others remain. This is called "defense in depth."
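
To make two of the supporting layers concrete, here is a rough Python sketch of input validation (Layer 6) and rate limiting (Layer 5). The 7-digit ID format comes from the example above; the `parse_student_id` helper, the `SlidingWindowLimiter` class, and the limits used are assumptions for illustration. Neither layer fixes an IDOR flaw on its own. They only slow an attacker down while the authorization check does the real work.

```python
import re
import time
from collections import defaultdict, deque
from typing import Optional

STUDENT_ID_PATTERN = re.compile(r"[0-9]{7}")  # assumed 7-digit ID format


def parse_student_id(raw: str) -> Optional[int]:
    """Return the ID as an int, or None if it is not exactly 7 digits."""
    return int(raw) if STUDENT_ID_PATTERN.fullmatch(raw) else None


class SlidingWindowLimiter:
    """Allow at most max_requests per user within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits: dict[int, deque[float]] = defaultdict(deque)

    def allow(self, user_id: int, now: Optional[float] = None) -> bool:
        # `now` is injectable so the limiter can be tested without sleeping.
        now = time.monotonic() if now is None else now
        hits = self._hits[user_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

A handler would call `parse_student_id` first, then `limiter.allow(user_id)`, and only then run the ownership check. This version keeps its counters in process memory, so a deployment with several servers would need a shared store instead.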

Ravenna Hub failed at Layer 2 (authorization). They likely had Layer 1 (authentication) working fine. But the combination was insufficient.

Building this infrastructure takes time and expertise. It requires hiring security engineers, investing in tools, conducting testing. It's more expensive than not doing it.

But for a company holding records on more than a million students? The investment is trivial relative to the risk. A single successful lawsuit from families whose children's data was compromised could cost millions. Insurance premiums for unaudited platforms serving sensitive data are substantial. The math favors investing in security.

The Psychology of Breach Response: Why Companies Behave This Way

One of the most revealing aspects of the Ravenna Hub incident is how the company responded to the vulnerability discovery.

The CEO acknowledged the flaw but became defensive about investigations and notifications. This behavior isn't unusual—it's the norm for companies facing security breaches. Understanding the psychology helps explain why we continue seeing poor responses.

Fear of Liability: The company worries that admitting an exploit occurred creates legal liability. Notification triggers lawsuits. Families sue for damages. Insurance carriers increase premiums. Better to remain silent.

Reputation Risk: Public admission of a security failure damages the company's brand. Parents might choose competitors. Schools might switch platforms. Market share could decline. Silence minimizes immediate damage.

Regulatory Risk: The company worries that admitting to a breach triggers regulatory investigation. If acknowledged, the breach might trigger mandatory breach notification laws in affected states. The company faces potential fines from the Department of Education or state attorneys general.

Operational Disruption: Investigation requires time and resources. The security team could be spending that time on feature development and product improvements that drive revenue growth.

Precedent Setting: Admitting one breach and investigating it thoroughly could signal to future users and regulators that more breaches exist. "See, the company does leak data. Why would we trust them?"

These incentives align toward silence and minimal action. A rational company (in game theory terms) behaves defensively.

This is exactly why regulation is necessary. When companies face only internal incentives, they optimize for company benefits at the expense of user protection. When regulators impose external incentives (fines, mandatory notifications, public enforcement actions), the optimization problem changes.

Consider the difference:

Without Regulation: Company discovers breach → internal calculus: "Investigating costs money and creates liability. Not investigating costs nothing and avoids liability." → Decision: Don't investigate, stay silent.

With Regulation: Company discovers breach → internal calculus: "Not investigating violates law and triggers massive fines. Investigating costs money but avoids criminal liability and regulatory penalties." → Decision: Investigate, notify, be transparent.

The healthcare industry illustrates this. HIPAA's mandatory breach notification and significant penalties mean that when health companies experience breaches, they investigate thoroughly and notify patients. The regulatory framework forced the appropriate behavior.

Education technology lacks this external pressure. Until it exists, expect companies to behave like Ravenna Hub did: fix the problem quietly and avoid transparency.

What Families Should Do: Practical Risk Reduction

Parents and students can't rely on companies to protect their data perfectly. Perfect security is impossible—every system has vulnerabilities. What families can do is reduce risk and prepare for potential breaches.

Evaluate Platforms Before Adoption

Before uploading your child's information to any platform:

- Ask the company about security certifications (SOC 2 Type II, ISO 27001)

- Request to see their vulnerability disclosure policy

- Ask whether they conduct regular security audits

- Check for history of security incidents (search the company name + "breach" or "vulnerability")

- Read reviews on sites like G2 or Capterra, filtering for security-related comments

Minimize Data Sharing

Share only information that's absolutely necessary. If a platform requests "optional" information, leave it blank. Do they really need your child's photograph? Their sibling's information? Their complete address including apartment number? Question every data request.

Use Strong, Unique Passwords

For education platforms (and every other service), use unique passwords. Never reuse passwords across platforms. If one platform is compromised, attackers will try the same email/password combination on others. A password manager like Bitwarden or 1Password makes this manageable.

Enable Two-Factor Authentication

If available, enable two-factor authentication. This prevents attackers from accessing accounts even if they steal passwords.

Monitor Credit Reports

Children's identities are frequently stolen. Once or twice yearly, check your child's credit reports using AnnualCreditReport.com. Freeze your child's credit with the three major bureaus (Equifax, Experian, TransUnion) to prevent fraudulent accounts being opened in their name.

Know What Was Exposed

If a breach occurs, understand what data was exposed. Names and emails? Less risky. Dates of birth, addresses, social security numbers, financial information? Much riskier. This determines what monitoring and protective steps are necessary.

Demand Transparency

If your child's school uses a platform that experiences a breach, demand answers from the school district and the platform company. Did unauthorized access occur? What specific data was exposed? What investigation was conducted? What remediation is offered? Schools and platforms should be required to provide these answers.

Advocate for Better Standards

Connect with parent groups at your school or district. Advocate for policies requiring security audits before platform adoption. Push for disclosure requirements when breaches occur. Help school administrators understand that security matters as much as convenient features and low cost.

These steps don't guarantee protection, but they meaningfully reduce risk. Perfect security isn't possible, but reasonable security is achievable and should be expected.

Looking Forward: Necessary Changes

The Ravenna Hub incident reveals a system in need of fundamental change. Incremental improvements won't be sufficient. We need systemic shifts at regulatory, institutional, and industry levels.

Federal Regulation

The U.S. needs a comprehensive federal privacy and security law specifically addressing education data. This should:

- Mandate specific security controls (encryption, access controls, logging)

- Require third-party security audits (SOC 2 Type II or equivalent) annually

- Mandate breach notification within 48 hours to affected users

- Establish clear fines for violations ($X per affected record, with caps on total fines based on company size)

- Require data impact assessments before processing students' information

- Allow private right of action so families can sue for breaches

- Create a federal education data privacy office to investigate complaints and enforce standards

State Coordination

States should align around strong privacy standards rather than creating a patchwork of different rules. Model legislation exists (like the proposed Digital Age Responsibility and Accountability Act). States should adopt it.

Industry Standards

Education technology associations should establish mandatory security standards for members, including:

- SOC 2 Type II certification requirement

- Annual penetration testing

- Vulnerability disclosure policy with specific notification timelines

- Data retention limits (don't keep data longer than necessary)

- Regular security training for employees

Procurement Standards

Schools and districts should establish procurement guidelines that include:

- Security audit review before platform adoption

- Service level agreements with security breach notification timelines

- Right to audit provisions allowing schools to verify security practices

- Vendor lock-in prevention (data portability, standard formats)

Transparency Requirements

When breaches occur, companies should be required to:

- Notify affected users within 48 hours (not weeks or months)

- Disclose exactly what data was compromised

- Publish a post-breach analysis explaining what happened and what changes were made

- Offer free credit monitoring for affected individuals

Developer Education

Universities should enhance computer science curriculum to include mandatory security training. Concepts like access control, authentication, encryption, and secure coding practices should be fundamental, not specialized electives.

Insurance Requirements

Cyber insurance policies should require SOC 2 certification and mandate breach investigations. Insurance carriers have leverage to enforce better practices.

None of these changes would eliminate security breaches—that's impossible. But they would dramatically reduce the likelihood and severity of breaches, and ensure that when they occur, companies respond transparently and victims are protected.

The question isn't whether these changes will happen, but when. And whether they'll happen through regulation (proactive) or litigation (reactive). Litigation is more expensive and creates more damage. Regulation is preferable.

Conclusion: Trust Requires Verification

The Ravenna Hub incident reveals a simple truth: When it comes to your child's personal information online, trust is not sufficient. Verification is necessary.

Companies serving millions of students claim to protect data responsibly. Many probably intend to. But intentions matter less than systems. A company with good intentions but poor security practices will leak data. A company with strong systems and bad intentions will be constrained by those systems.

Ravenna Hub had neither good intentions (they didn't notify users, they didn't investigate thoroughly) nor strong systems (IDOR vulnerabilities suggest basic security isn't implemented). The result was exposure of 1.63 million student records through a trivial security flaw.

What's most troubling is that this situation was entirely preventable. The vulnerability didn't require sophisticated attack vectors or zero-day exploits. It was a basic failure of access control—the same failure that's been documented in security textbooks for decades. That such a failure existed and persisted in production suggests the company simply didn't prioritize security as an engineering concern.

The path forward requires changes at every level:

For Families: Scrutinize platforms before adoption. Question what data is truly necessary. Monitor your child's identity proactively. Advocate for stronger standards in your school district.

For Schools: Make security a primary criterion in platform evaluation, not an afterthought. Demand SOC 2 certification and third-party audit results. Include security requirements in procurement RFPs. Condition platform adoption on transparent incident response procedures.

For Companies: Implement baseline security practices as a cost of doing business, not as a competitive differentiator. Conduct regular security audits. Maintain strong incident response procedures. Prioritize transparency over defensive opacity when breaches occur.

For Regulators: Create clear federal standards for education data protection, including mandatory security controls, audit requirements, breach notification timelines, and meaningful penalties. Stop allowing companies to regulate themselves in a space where failures harm children.

The education technology industry has tremendous potential to improve learning outcomes, expand access to quality instruction, and prepare students for future success. But that potential is undermined when platforms leak data, when companies respond defensively to breaches, and when families lack confidence that their children's information is secure.

Ravenna Hub serves as a case study in what happens when security isn't prioritized. The company's response (or lack thereof) demonstrates that transparency doesn't happen voluntarily. The regulatory vacuum suggests that change requires external pressure.

Parents didn't upload their children's information to Ravenna Hub because they trusted the company—they did it because the school used the platform, and they had no choice. That power dynamic, where families have limited options and companies face minimal accountability, creates the conditions for repeated failures.

Change is possible. But it requires families demanding better, schools insisting on verification, and regulators establishing clear standards. The question is whether we'll wait for that change to happen voluntarily, or whether we'll demand it now—before the next 1.63 million student records are exposed through vulnerabilities that should never exist.

FAQ

What is an IDOR vulnerability?

An Insecure Direct Object Reference (IDOR) is a security flaw where an application doesn't properly verify that a user has permission to access a specific resource. Attackers exploit this by accessing resources belonging to other users by modifying identifiers in URLs or API requests. In Ravenna Hub's case, attackers could access other students' profiles by incrementing a number in the URL.

How does IDOR differ from a data breach?

An IDOR vulnerability is a flaw in application security that makes data accessible. A data breach occurs when someone actually accesses and exfiltrates the data. Ravenna Hub had an IDOR vulnerability, but we don't know if anyone actually exploited it. The company has not said whether it investigated for unauthorized access, which is the key issue: the vulnerability existed for an unknown period before being discovered and fixed.

Why didn't Venture Ed Solutions notify families immediately?

The company likely weighed the costs and benefits of disclosure. Notification triggers legal liability, regulatory attention, reputational damage, and potential litigation. Companies facing only internal incentives often choose minimal disclosure. This is why regulation is necessary—it changes the cost-benefit analysis to make transparency advantageous rather than disadvantageous.

What data was exposed and how risky is it?

Ravenna Hub exposed names, dates of birth, home addresses, school photos, sibling information, parent email addresses, and phone numbers for 1.63 million students. This combination creates a complete identity profile for children, enabling identity theft, fraud, location tracking, and social engineering attacks. The risk is substantial and long-term—children's stolen identities may not be discovered until adulthood when they apply for credit.

How could this vulnerability have been prevented?

The fix required a simple access control check: before displaying a student profile, verify that the requesting user is the parent/guardian of that student. This is taught in basic web development courses. The vulnerability existed because the company either didn't implement this check or didn't review code to ensure it was present. Better development practices (code review, security testing, threat modeling) would have caught this before production deployment.

What should families do if their child's information was on Ravenna Hub?

Families should monitor their child's identity by checking credit reports annually at AnnualCreditReport.com, freezing credit with the three major bureaus to prevent fraudulent accounts being opened, and watching for suspicious activity. Consider credit monitoring services that alert you to suspicious activities. Request that Ravenna Hub provide details about what data was exposed and whether unauthorized access was detected.

Are other education platforms vulnerable to similar IDOR attacks?

Almost certainly yes. IDOR is a form of broken access control, the category OWASP ranked first in its 2021 Top 10 list of web application security risks. Many education platforms, especially smaller companies in rapid growth phases, haven't implemented strong access control frameworks. Parents should ask prospective platforms about their security certifications and testing practices before adoption.

What regulations would prevent incidents like this?

A federal privacy law specifically for education data (similar to HIPAA for healthcare) would require security controls, third-party audits, breach notifications within 48 hours, and meaningful penalties for violations. Current regulations (FERPA, COPPA, CCPA) provide some protection but have significant gaps and limited enforcement mechanisms. State-level data breach notification laws vary wildly, creating inconsistent protection depending on where families live.

How can schools evaluate the security of education platforms?

Schools should require vendors to provide SOC 2 Type II certifications (or equivalent standards like ISO 27001), which verify that security controls are implemented and maintained. Schools should request audit reports and independent penetration testing results. They should ask whether the company has a formal vulnerability disclosure policy and incident response procedure. Schools should also include security criteria in their procurement RFPs with weighted scoring that treats security as equally important to features and cost.

What is the long-term impact of this incident on the education technology industry?

The incident reveals that self-regulation hasn't worked. Companies serving education data without third-party oversight continue experiencing breaches and responding defensively. The long-term impact depends on whether the incident triggers regulatory change. If so, it could force the entire industry to adopt stronger security standards. If not, breaches will continue as normalized costs of doing business, with families bearing the risk.

Key Takeaways

- IDOR vulnerabilities enable unauthorized access through guessable identifiers such as sequential ID numbers, and they belong to broken access control, the #1 category in the OWASP Top 10 (2021)

- VentureEd Solutions exposed 1.63 million student records including names, birthdates, addresses, and photos through inadequate access control checks

- Education technology operates in a regulatory vacuum compared to healthcare (HIPAA) and finance (PCI-DSS), reducing security investment incentives

- Third-party security audits and SOC 2 Type II certification should be mandatory before schools adopt education platforms

- Companies lack external incentives to investigate breaches transparently; regulation is necessary to change the cost-benefit analysis toward user protection

![Student Admissions Data Breach: How 1.6M Children's Records Were Exposed [2025]](https://tryrunable.com/blog/student-admissions-data-breach-how-1-6m-children-s-records-w/image-1-1771515955930.jpg)