Introduction

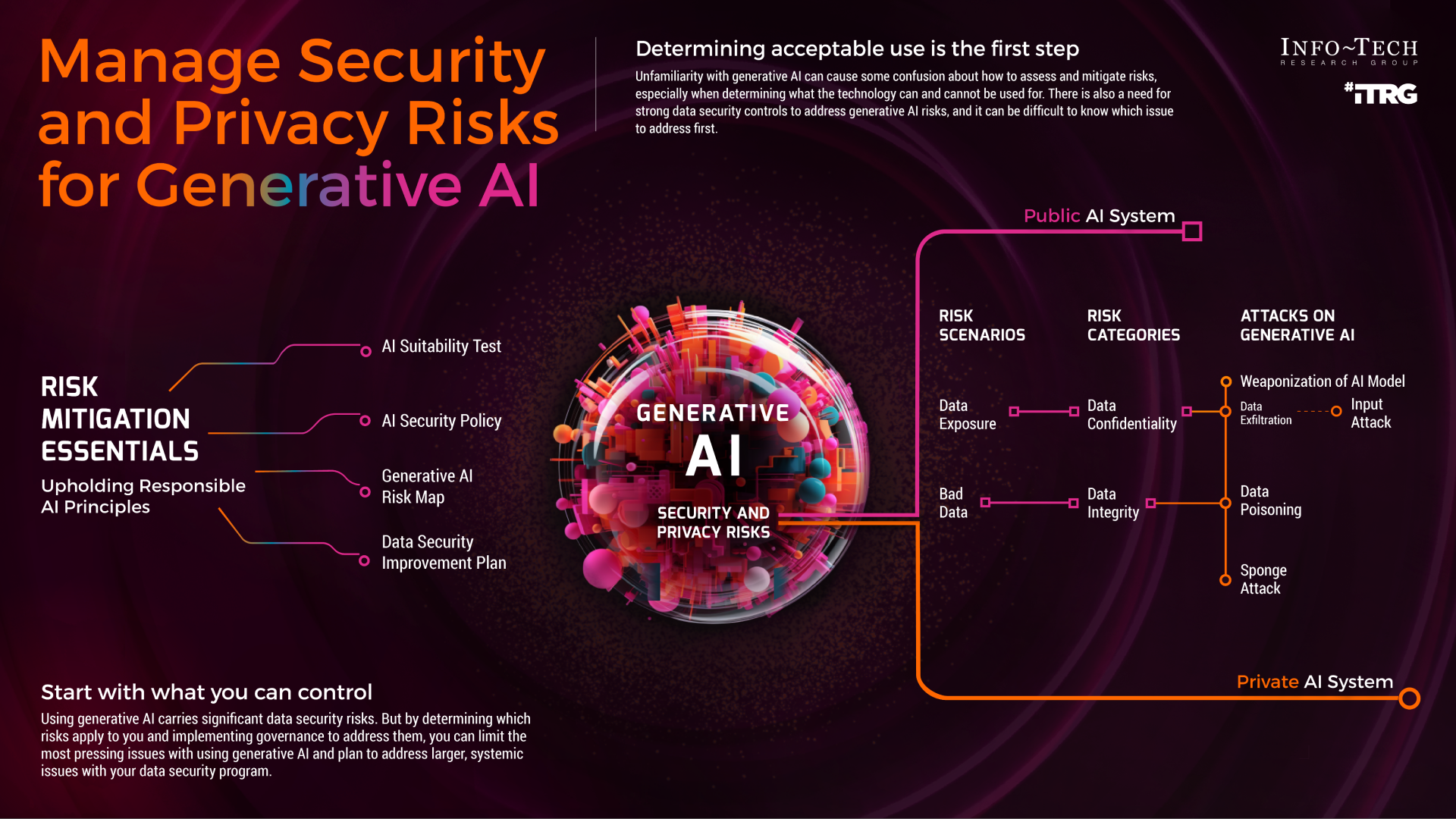

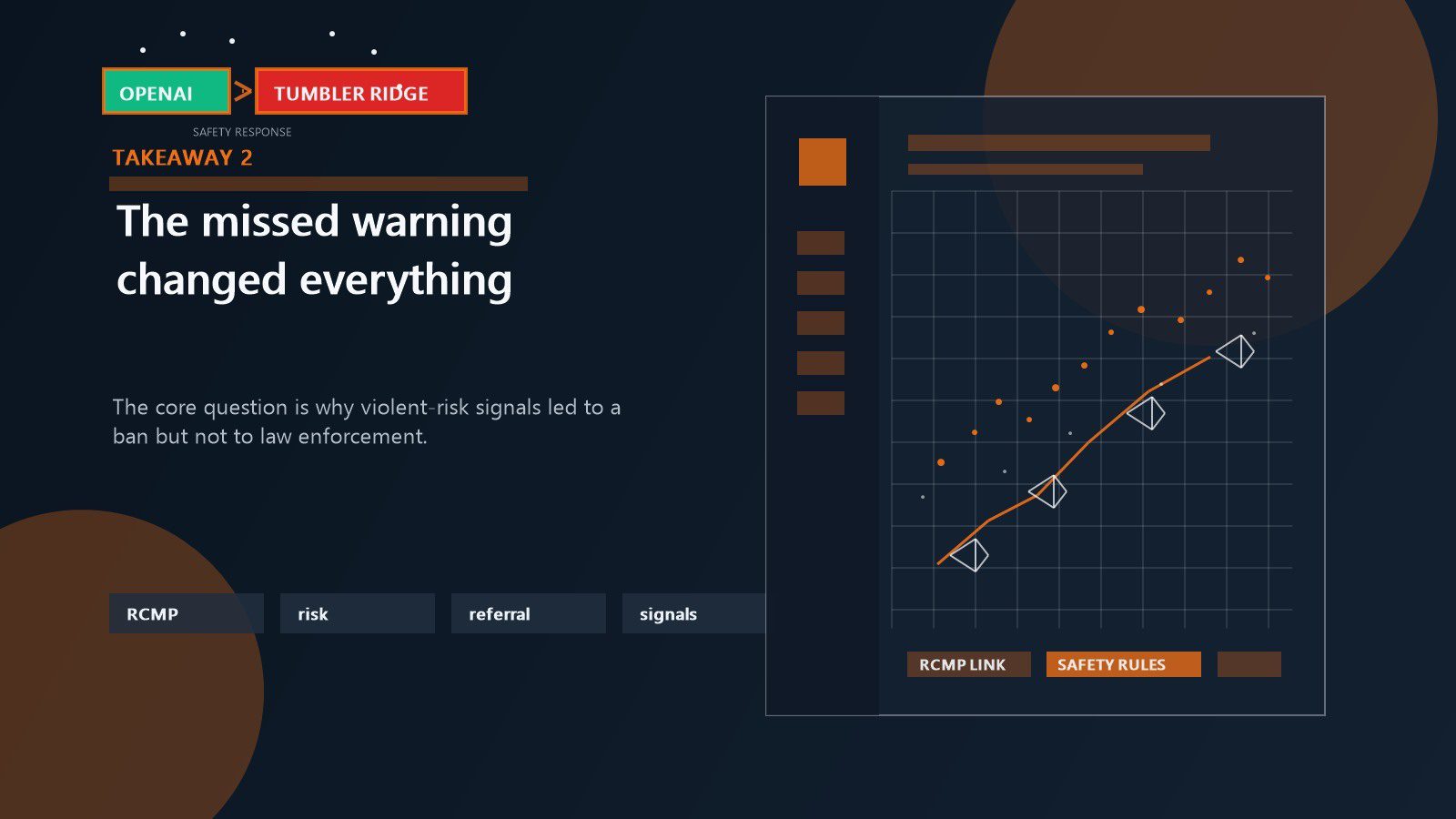

Last month, a legal battle emerged in Tumbler Ridge, sparking a debate that could redefine AI accountability. Families are suing OpenAI, alleging that the company failed to alert authorities about suspicious ChatGPT conversations and that a warning might have prevented a tragedy. The case raises critical questions about the responsibilities of AI developers and the balance between privacy and security, as reported by Tumbler Ridge Lines.

TL;DR

- AI Responsibility: The case highlights the need for clear guidelines on AI's role in monitoring user activity.

- Legal Implications: The case could set a precedent for the future liability of tech companies, as discussed in CNBC's report.

- Privacy Concerns: Balancing user privacy with security needs is a central challenge.

- Technical Challenges: Implementing effective monitoring without infringing on privacy is complex.

- Future Trends: Expect regulatory frameworks that address AI surveillance, as noted by The Conversation.

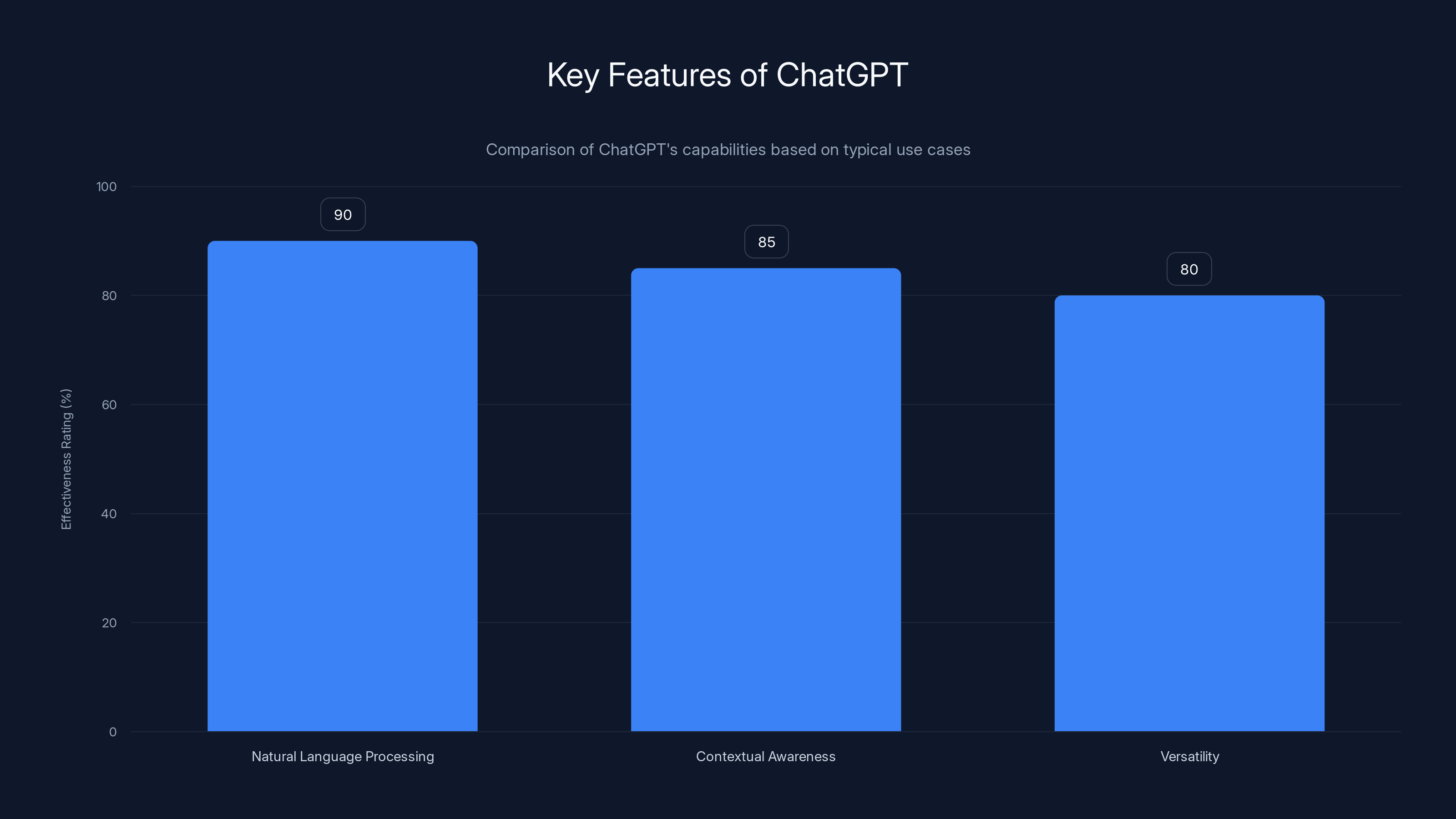

ChatGPT excels in natural language processing, with high effectiveness in contextual awareness and versatility across applications. (Estimated data)

The Incident: A Closer Look

The lawsuit revolves around a ChatGPT user whose interactions allegedly revealed plans for criminal activity. Families claim OpenAI had enough information to alert law enforcement, potentially averting harm. The incident underscores how AI tools can inadvertently become part of crime prevention, or of complicity.

Understanding ChatGPT's Capabilities

ChatGPT, developed by OpenAI, is a powerful language model capable of generating human-like text based on input prompts. It is used for a variety of applications, from customer service to creative writing. However, like any tool, it can be misused.

Key Features of ChatGPT:

- Natural Language Processing: Capable of understanding and generating text that feels natural.

- Contextual Awareness: Uses earlier turns in a conversation to keep responses coherent.

- Versatility: Used across industries for automation, content creation, and more.

The Legal Context

This lawsuit could establish new legal precedents surrounding AI accountability. Traditionally, tech companies have shielded themselves under "platform" status, claiming not to be responsible for user-generated content. However, as AI becomes more deeply integrated into decision-making processes, this defense may no longer suffice, as discussed in Lawfare Media.

Legal Questions Raised:

- Duty to Report: Should AI companies have a legal obligation to report potential threats?

- Liability: To what extent are AI developers responsible for user actions facilitated by their platforms?

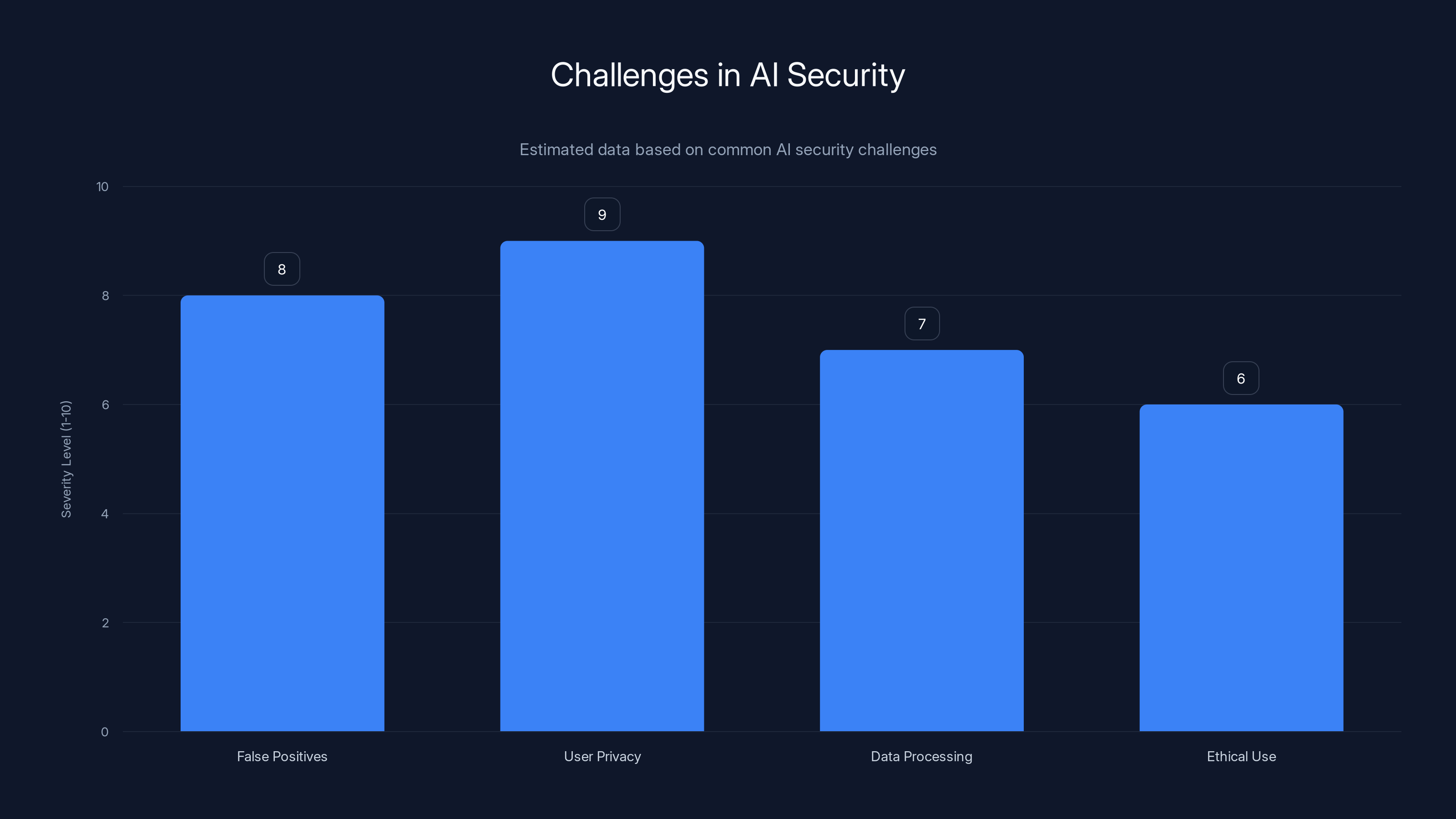

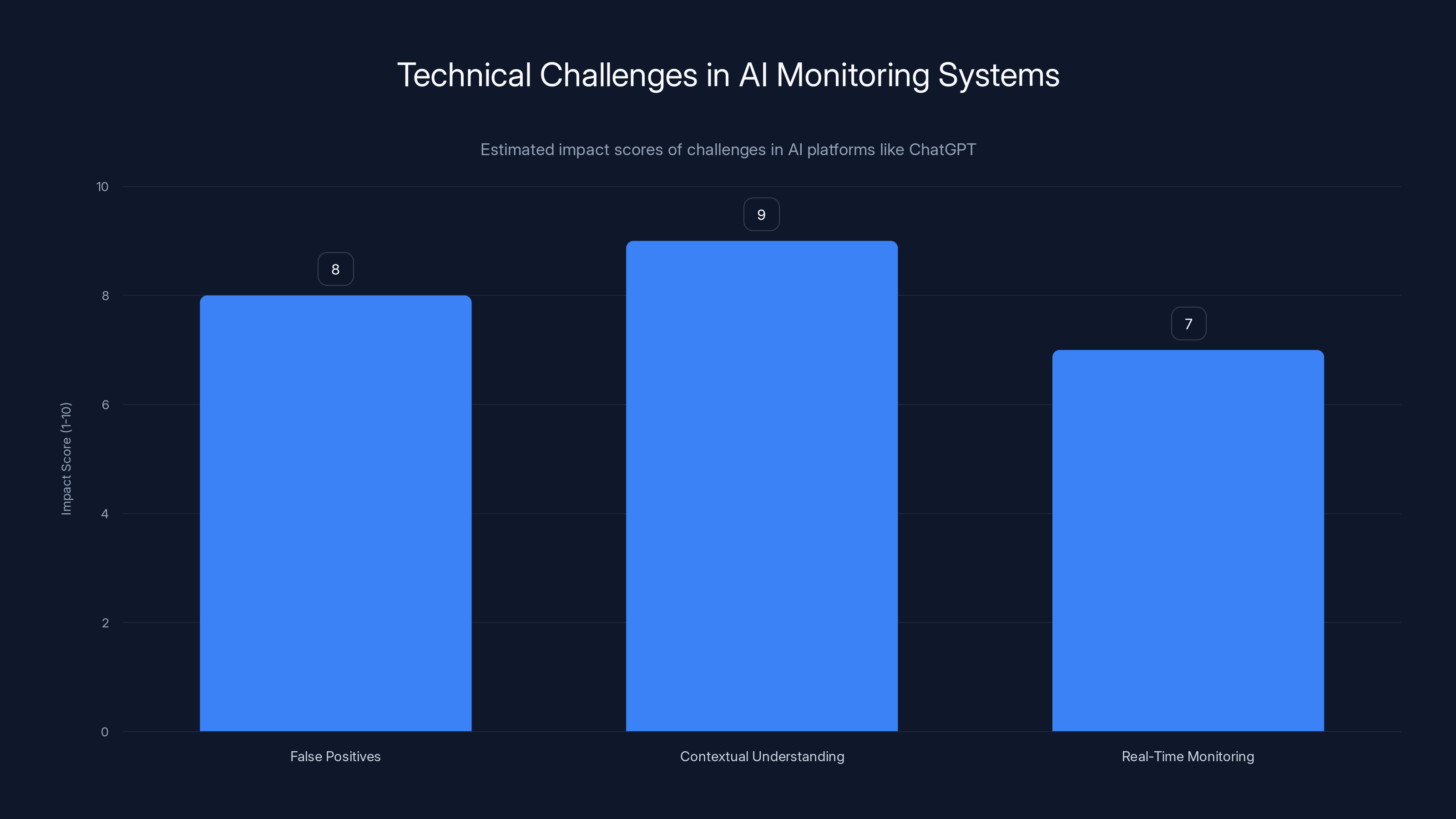

AI security faces significant challenges, particularly in maintaining user privacy and avoiding false positives. (Estimated data)

Privacy vs. Security: The Ethical Dilemma

The core of the Tumbler Ridge case lies in the tension between privacy and security. AI platforms like ChatGPT are designed to respect user privacy, but this can conflict with security needs.

Privacy Concerns

Users trust AI tools with sensitive information, expecting confidentiality. Implementing surveillance measures could undermine this trust and deter use.

Challenges in Maintaining Privacy:

- Data Encryption: Ensuring conversations are secure from unauthorized access.

- Anonymity: Protecting user identities while monitoring for risks.
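One way to pursue both items above is pseudonymization: the monitoring pipeline sees a stable keyed hash rather than the real account identifier. The following is a minimal sketch, not a description of how OpenAI or any other provider handles identities. The function name `pseudonymize`, the sample user IDs, and the key handling are assumptions made for illustration.

```python
import hashlib
import hmac
import secrets


def pseudonymize(user_id: str, key: bytes) -> str:
    """Return a stable pseudonym for user_id using HMAC-SHA256.

    The same (user_id, key) pair always maps to the same pseudonym, so
    flags can be grouped per user without exposing the real identifier.
    """
    return hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key: in practice it would be stored in a secrets manager,
# separate from the monitoring system.
key = secrets.token_bytes(32)

alias_a = pseudonymize("user-1234", key)
alias_b = pseudonymize("user-1234", key)
alias_c = pseudonymize("user-5678", key)
```

Because the hash is keyed, someone who sees only the pseudonyms cannot recompute them from a list of known user IDs without the key. Whoever holds the key can still link a pseudonym back to an account, which is what would make a later, authorized escalation possible.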

Security Imperatives

On the flip side, AI can play a pivotal role in identifying potential threats and preventing crimes. However, determining when and how to intervene remains a complex challenge.

Security Measures Considered:

- Automated Flagging Systems: Using AI to identify and flag suspicious activity.

- Human Oversight: Involving human review to ensure accuracy and context.
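The two measures above can be combined so that automation only nominates conversations and a person makes the decision. The sketch below illustrates that split under stated assumptions: `toy_risk_score` is a stand-in for a trained classifier, and the watch-list terms, the threshold, and the `ReviewQueue` class are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical threshold; a real value would come from evaluation data.
REVIEW_THRESHOLD = 0.5


@dataclass
class ReviewQueue:
    """Holds flagged messages until a human reviewer decides on them."""
    pending: list = field(default_factory=list)

    def submit(self, message_id: str, score: float) -> None:
        self.pending.append((message_id, score))


def toy_risk_score(text: str) -> float:
    """Stand-in for a classifier: fraction of watch-list terms present."""
    watch_terms = ("attack", "weapon", "target")
    lowered = text.lower()
    hits = sum(term in lowered for term in watch_terms)
    return hits / len(watch_terms)


def triage(message_id: str, text: str, queue: ReviewQueue) -> str:
    """Flag automatically, but leave the final decision to a human."""
    score = toy_risk_score(text)
    if score >= REVIEW_THRESHOLD:
        queue.submit(message_id, score)
        return "needs_human_review"
    return "no_action"


queue = ReviewQueue()
gaming = triage("m1", "Best weapon to attack a target in this video game?", queue)
report = triage("m2", "Help me write a weekly report.", queue)
```

The first message is about a video game, yet it is flagged. That is the kind of case a human reviewer is there to dismiss, and it is why the function returns "needs_human_review" rather than taking action itself.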

Technical Challenges and Solutions

Implementing effective monitoring systems in AI platforms like ChatGPT involves navigating numerous technical challenges.

Challenges

- False Positives: Excessive flagging can overwhelm systems and lead to "cry wolf" scenarios.

- Contextual Understanding: AI must accurately interpret context to differentiate between benign and malicious intent.

- Real-Time Monitoring: Ensuring systems can process vast amounts of data without significant delays.
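The false-positive problem is largely a matter of base rates: when genuine threats are rare, even an accurate classifier produces mostly false alarms. The short calculation below applies Bayes' rule to make that concrete. The prevalence and accuracy figures are hypothetical inputs chosen for illustration, not measurements from any real system.

```python
def flag_precision(prevalence: float, sensitivity: float, specificity: float) -> float:
    """Share of flagged conversations that are genuine threats (Bayes' rule)."""
    true_pos = prevalence * sensitivity
    false_pos = (1.0 - prevalence) * (1.0 - specificity)
    return true_pos / (true_pos + false_pos)


# Hypothetical inputs: 1 in 100,000 conversations is a genuine threat,
# and the classifier is right 99% of the time in both directions.
precision = flag_precision(prevalence=1e-5, sensitivity=0.99, specificity=0.99)
print(f"{precision:.2%} of flags are genuine")
```

With these assumed numbers, roughly one flag in a thousand corresponds to a real threat, which is the "cry wolf" scenario described above.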

Proposed Solutions

- Advanced Algorithms: Developing AI that can better understand context and reduce false positives.

- Integrated Systems: Combining AI with human oversight to balance efficiency and accuracy.

- User Feedback Loops: Allowing users to report inaccuracies to refine AI algorithms.
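A feedback loop can be as simple as re-deriving the flagging threshold from cases that reviewers or users have already labeled. The sketch below shows one possible approach: it picks the lowest threshold at which the flagged set reaches a target precision. The function name `pick_threshold`, the sample scores, and the 60% target are assumptions for illustration.

```python
from typing import Optional


def pick_threshold(
    labeled: list[tuple[float, bool]], min_precision: float
) -> Optional[float]:
    """Return the lowest score threshold whose flags meet min_precision.

    labeled holds (score, was_genuine) pairs collected from reviewer or
    user feedback. Returns None if no threshold reaches the target.
    """
    for threshold in sorted({score for score, _ in labeled}):
        flagged = [genuine for score, genuine in labeled if score >= threshold]
        precision = sum(flagged) / len(flagged)
        if precision >= min_precision:
            return threshold
    return None


# Hypothetical feedback: (classifier score, reviewer judged it genuine).
feedback = [(0.2, False), (0.4, False), (0.6, True), (0.7, False), (0.9, True)]
threshold = pick_threshold(feedback, min_precision=0.6)
```

Choosing the lowest qualifying threshold keeps as many genuine cases flagged as the precision target allows. Raising the target trades missed cases for fewer false alarms.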

Contextual understanding poses the highest challenge in AI monitoring, followed by false positives and real-time monitoring. (Estimated data)

Best Practices for AI Developers

Developers must adopt best practices to navigate the legal and ethical landscapes of AI responsibly.

Transparency and Accountability

- Clear Privacy Policies: Inform users about data collection and usage.

- Regular Audits: Conduct audits to ensure compliance with privacy standards.

Collaboration with Authorities

- Predefined Protocols: Establish clear guidelines for when and how to report potential threats.

- Training: Equip AI systems with the ability to recognize and escalate genuine threats.
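A predefined protocol is easier to audit when it is written as an explicit rule rather than left to case-by-case judgment. The sketch below encodes one hypothetical policy in which nothing is reported externally unless a human reviewer has confirmed it. The score cut-offs, the `Action` names, and the `decide` function are illustrative assumptions, not a legal or industry standard.

```python
from enum import Enum


class Action(Enum):
    LOG_ONLY = "log_only"
    HUMAN_REVIEW = "human_review"
    NOTIFY_AUTHORITIES = "notify_authorities"


def decide(score: float, reviewer_confirmed: bool) -> Action:
    """Apply a fixed escalation rule (hypothetical cut-offs)."""
    # External reporting requires both a high score and human confirmation.
    if score >= 0.9 and reviewer_confirmed:
        return Action.NOTIFY_AUTHORITIES
    # Anything at or above the review cut-off waits for a human decision.
    if score >= 0.5:
        return Action.HUMAN_REVIEW
    return Action.LOG_ONLY
```

Under this rule, `decide(0.95, False)` yields `Action.HUMAN_REVIEW`, so a high automated score alone never triggers a report.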

Future Trends in AI Accountability

The Tumbler Ridge case is likely to influence future AI policy and regulation significantly.

Expected Developments

- Regulatory Frameworks: Governments may introduce stricter regulations on AI monitoring and reporting, as highlighted by BizTech Magazine.

- Industry Standards: Creation of industry-wide standards for AI transparency and accountability.

Predictions for AI's Future:

- Increased Scrutiny: AI tools will face greater scrutiny regarding their role in security and privacy.

- Technological Advancements: Continued improvement in AI's ability to understand context and intent.

Conclusion

The Tumbler Ridge lawsuit against OpenAI is more than a legal battle; it's a pivotal moment in the evolution of AI responsibility. As AI continues to integrate into daily life, finding the balance between privacy and security will be crucial. By adopting best practices and anticipating future trends, developers can help shape a future where AI enhances safety without compromising privacy.

FAQ

What is AI accountability?

AI accountability refers to the responsibility of AI developers and companies to ensure their technologies are used ethically and do not cause harm.

How does AI monitoring work?

AI monitoring involves using algorithms to track user interactions and flag potential threats, often supplemented by human oversight for accuracy.

What are the benefits of AI surveillance?

The main benefit is improved security through earlier detection of potential crimes and threats, though this must be balanced against privacy considerations.

What challenges do developers face in AI security?

Challenges include avoiding false positives, maintaining user privacy, and ensuring systems can process data efficiently.

How can AI developers ensure privacy?

By using data encryption, anonymizing user data, and being transparent about data usage.

What trends are expected in AI regulation?

Expect stricter regulations and industry standards focused on transparency and accountability in AI applications.

How can AI improve its contextual understanding?

Through advanced algorithms, machine learning, and user feedback loops to enhance accuracy in interpreting user intent.

Key Takeaways

- The Tumbler Ridge case highlights the need for clear AI accountability guidelines.

- Legal implications could redefine tech company liabilities in AI usage.

- Balancing AI-driven security with privacy is a growing challenge.

- Implementing AI monitoring involves significant technical hurdles, which call for better algorithms combined with human oversight.

- Future trends suggest stricter AI regulations and industry standards.

![The Complex Dynamics of AI Responsibility: A Case Study on Tumbler Ridge and OpenAI [2025]](https://tryrunable.com/blog/the-complex-dynamics-of-ai-responsibility-a-case-study-on-tu/image-1-1777475057143.jpg)