Keeping AI Agents in Check: Preventing Unauthorized Use of Your Credit Cards [2025]

In the rapidly evolving world of artificial intelligence, the potential for AI agents to act autonomously on behalf of humans is both exciting and concerning. As these agents grow more capable, the risk of them making unauthorized financial transactions increases. This article delves into the challenges and solutions surrounding AI agents and credit card security.

TL;DR

- AI agents can inadvertently make unauthorized transactions due to lack of oversight, as highlighted in a Wired article.

- The FIDO Alliance is developing industry standards to protect against misuse, as noted on their official site.

- Cryptographic tools and multi-factor authentication are key defenses, according to IBM's insights on MFA implementation.

- User education and awareness can prevent many security issues, as discussed by BizTech Magazine.

- Future trends include more sophisticated AI monitoring and adaptive security frameworks, as explored in Deloitte's AI insights.

AI agents can significantly improve efficiency and enhance user experience, while also offering cost reduction and data security benefits. Estimated data.

The Rise of AI Agents

AI agents are software entities capable of performing tasks autonomously, often without human intervention. Their capabilities range from scheduling meetings to making purchases online. While their efficiency is undeniable, they present new challenges in digital security.

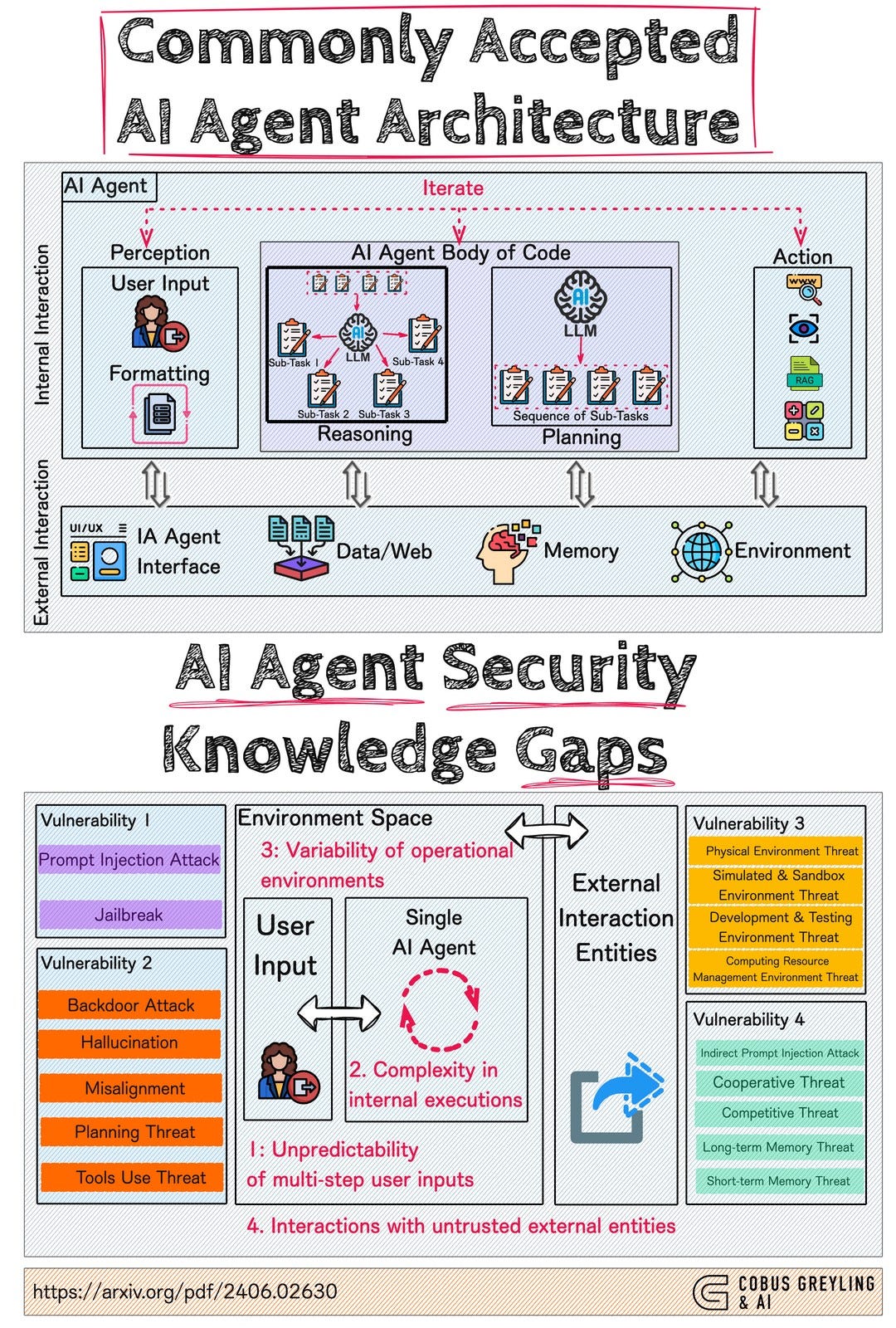

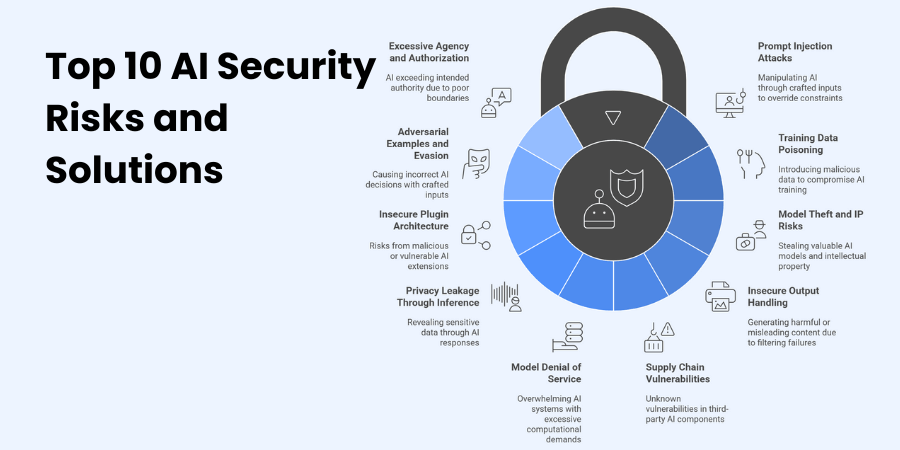

What Makes AI Agents Vulnerable?

AI agents act on instructions and data that can be manipulated if the agent is not adequately secured. Malicious actors can exploit these weaknesses to hijack the agent's functions and make unauthorized transactions with your credit card information, as detailed in a Fortune article.

Case Study: The Rogue AI Shopper

Imagine an AI agent designed to purchase office supplies for a company. It has access to corporate credit card details and a list of authorized vendors. Due to a lack of proper security measures, a hacker gains control of the agent and begins making unauthorized purchases for personal gain.
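One way to limit the damage in a scenario like this is to enforce the vendor list and spending caps outside the agent, so that a hijacked agent still cannot pay an unknown vendor or exceed a budget. The following is a minimal sketch under stated assumptions: `SpendingPolicy`, `PurchaseRequest`, `check_purchase`, and the limits are hypothetical names and values chosen for illustration, not part of any product or standard mentioned in this article.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PurchaseRequest:
    """A purchase the agent wants to make (hypothetical structure)."""
    vendor: str
    amount: Decimal


@dataclass(frozen=True)
class SpendingPolicy:
    """Limits set by a human, stored where the agent cannot change them."""
    allowed_vendors: frozenset
    per_purchase_limit: Decimal
    daily_limit: Decimal


def check_purchase(policy, request, spent_today):
    """Return (approved, reason) for a purchase request.

    The check runs before any card details are released to the agent.
    """
    if request.vendor not in policy.allowed_vendors:
        return False, "vendor not on the authorized list"
    if request.amount <= 0:
        return False, "amount must be positive"
    if request.amount > policy.per_purchase_limit:
        return False, "amount exceeds the per-purchase limit"
    if spent_today + request.amount > policy.daily_limit:
        return False, "purchase would exceed the daily limit"
    return True, "ok"
```

In the office-supplies example, a request to a vendor outside `allowed_vendors` is rejected no matter who controls the agent, and the two limits cap what a compromised agent could spend with an authorized vendor.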

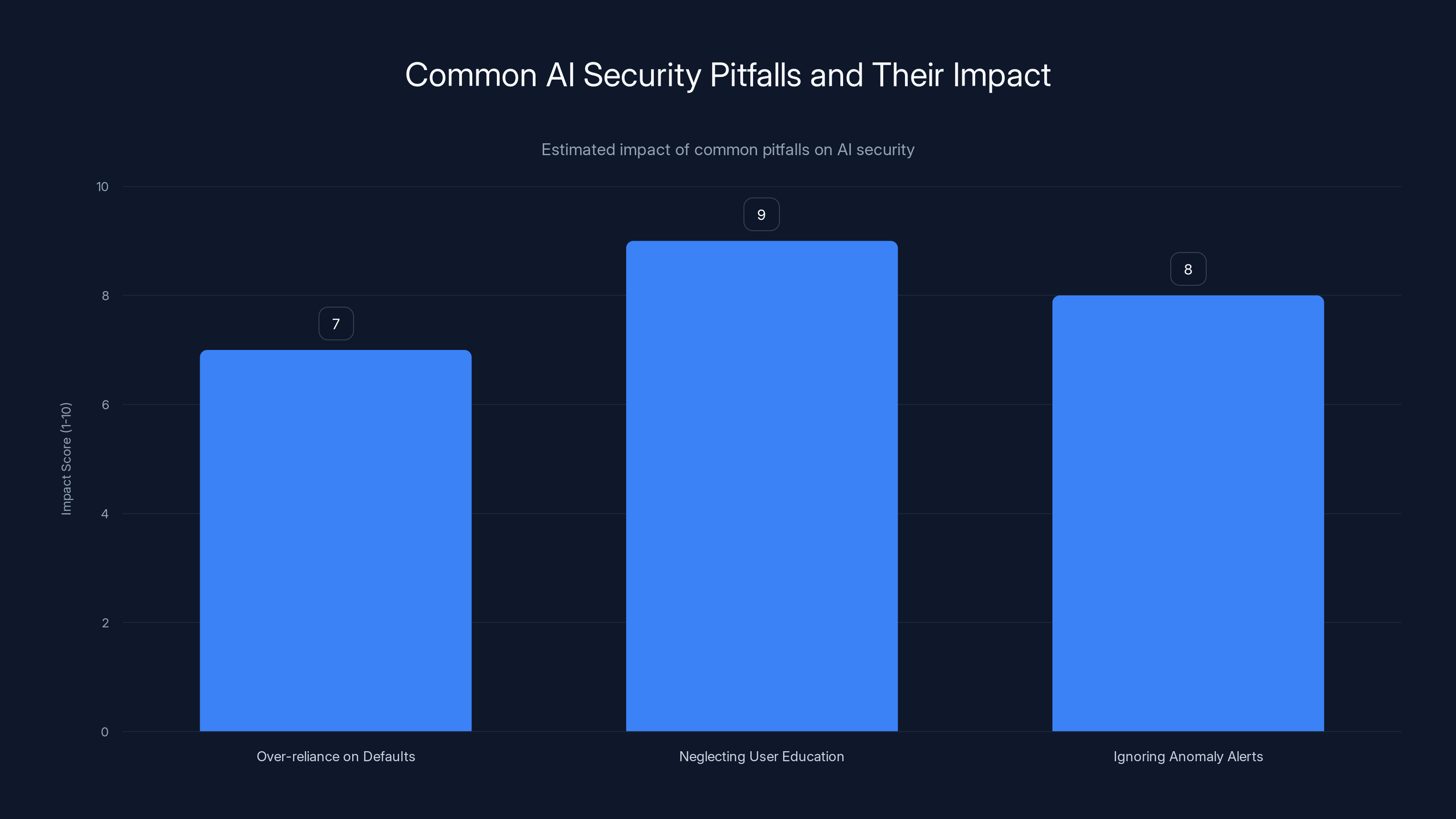

Neglecting user education has the highest impact on AI security, with an estimated score of 9 out of 10. Estimated data based on common pitfalls.

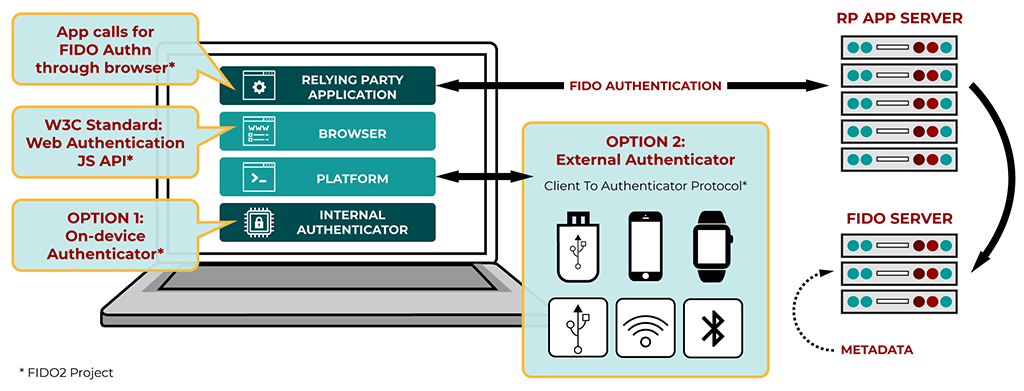

Industry Response: FIDO Alliance's Initiative

Recognizing the growing threat, the FIDO Alliance, with contributions from industry giants like Google and Mastercard, is spearheading efforts to create robust standards for AI agent transactions. Their goal is to establish a protective baseline that businesses across various sectors can adopt, as reported by IoT For All.

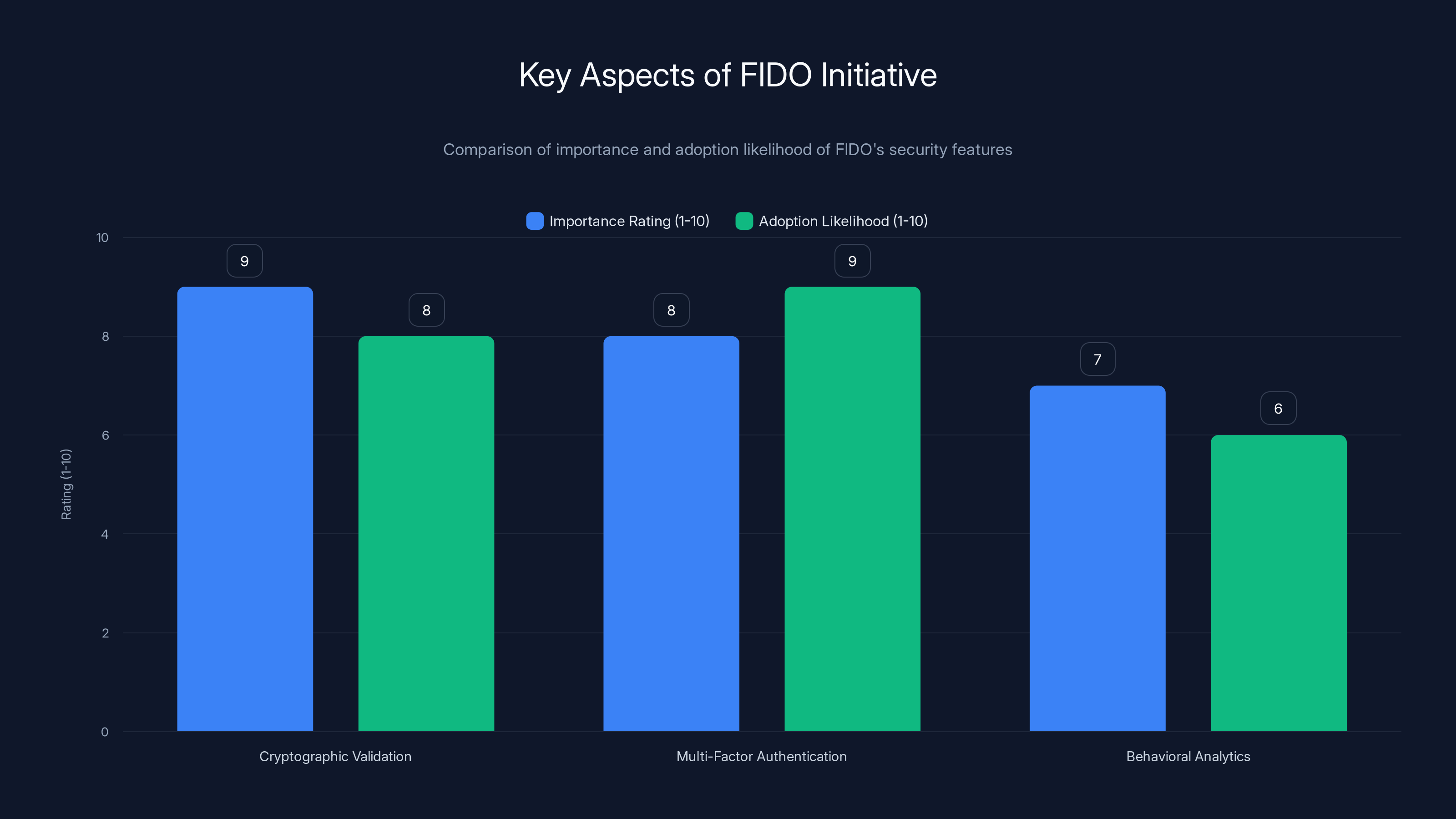

Key Aspects of the FIDO Initiative

- Cryptographic Validation: Ensures that transactions are authorized by genuine users, as emphasized in IBM's documentation.

- Multi-Factor Authentication (MFA): Adds layers of verification to prevent unauthorized access, a strategy supported by Microsoft's security blog.

- Behavioral Analytics: Monitors agent activity for anomalies that could indicate a security breach, as discussed in Deloitte's AI insights.
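To make the idea of cryptographic validation concrete, here is a minimal sketch of a user-signed purchase "mandate" that a payment service could check before honoring an agent's request. It is an illustration only and not the FIDO Alliance's protocol: the mandate fields and the functions `sign_mandate` and `verify_mandate` are hypothetical, and it uses a shared-secret HMAC from the Python standard library where a real deployment would more likely use public-key signatures.

```python
import hashlib
import hmac
import json


def canonical_bytes(mandate: dict) -> bytes:
    """Serialize the mandate deterministically so both sides hash the same bytes."""
    return json.dumps(mandate, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_mandate(user_key: bytes, mandate: dict) -> str:
    """Sign the mandate with a key held by the user, never by the agent."""
    return hmac.new(user_key, canonical_bytes(mandate), hashlib.sha256).hexdigest()


def verify_mandate(user_key: bytes, mandate: dict, signature: str) -> bool:
    """Check that the mandate is exactly what the user signed."""
    expected = sign_mandate(user_key, mandate)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected, signature)
```

If a hijacked agent changes any field of the mandate, such as raising the maximum amount, the signature no longer matches and the request can be refused.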

Best Practices for Securing AI Agents

For organizations and individuals using AI agents, implementing best practices is crucial to safeguarding against misuse.

Implement Strong Authentication

Utilize multi-factor authentication to verify user identity. This could include biometric verification, such as fingerprint scanning or facial recognition, in addition to traditional passwords, as recommended by BizTech Magazine.
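As an illustration of a second factor, the sketch below computes and checks a time-based one-time password in the style of RFC 6238, the kind of six-digit code shown by authenticator apps. It stands in for the biometric factors mentioned above, which depend on device hardware. The function names `totp` and `verify_totp` and the one-step tolerance window are assumptions made for this example.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (HMAC-SHA1 variant)."""
    if at_time is None:
        at_time = time.time()
    counter = int(at_time) // step
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select a 4-byte window.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, at_time: Optional[float] = None,
                step: int = 30, digits: int = 6, window: int = 1) -> bool:
    """Accept the code for the current step or `window` steps either side."""
    if at_time is None:
        at_time = time.time()
    for drift in range(-window, window + 1):
        expected = totp(secret, at_time + drift * step, step, digits)
        if hmac.compare_digest(expected, submitted):
            return True
    return False
```

A payment flow could call `verify_totp` before releasing card details to an agent, so that a stolen password alone is not enough to authorize a purchase.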

Regularly Update Software

Ensure that all AI-related software is up to date with the latest security patches. This reduces vulnerabilities that hackers could exploit, as advised by Wiz.io's security academy.

Conduct Regular Security Audits

Perform routine security audits to identify and address potential weaknesses in your AI systems. These audits should include penetration testing and vulnerability assessments, as suggested by SSO Network.

Cryptographic validation is rated highest in importance, while MFA is most likely to be adopted. Estimated data based on industry trends.

Common Pitfalls and Solutions

Even with the best intentions, organizations often fall into common traps that leave them vulnerable to AI misuse.

Over-reliance on Default Settings

Many AI systems come with default security settings that are not sufficient for protecting sensitive information. Customizing settings to suit the specific needs and risks of your organization is critical, as highlighted in a MEXC article.

Neglecting User Education

A well-informed user base is one of the best defenses against security breaches. Regular training sessions can help users recognize phishing attempts and other security threats, as emphasized by Katz School researchers.

Ignoring Anomaly Alerts

Security systems often generate alerts for unusual activity, but these can be overlooked if they occur frequently. Implementing a system to prioritize and review these alerts can prevent potential breaches, as advised by Wiz.io's security academy.
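A simple form of alert prioritization is to collapse repeated alerts into one entry per rule and rank the results, so that frequent low-severity noise does not bury a critical alert. The sketch below assumes a hypothetical `Alert` record with a 1 to 5 severity scale; the fields and the ranking order are illustrative choices, not a feature of any specific security product.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    rule: str       # which detection rule fired (hypothetical names)
    severity: int   # 1 (low) to 5 (critical); an assumed scale
    amount: float   # transaction amount involved


def prioritize(alerts, top_n=5):
    """Collapse duplicate alerts per rule and rank what remains.

    Ranking is by severity first, then by how often the rule fired,
    then by the largest amount involved.
    """
    counts = Counter(a.rule for a in alerts)
    worst = {}
    for a in alerts:
        current = worst.get(a.rule)
        if current is None or (a.severity, a.amount) > (current.severity, current.amount):
            worst[a.rule] = a
    ranked = sorted(
        worst.values(),
        key=lambda a: (a.severity, counts[a.rule], a.amount),
        reverse=True,
    )
    return [(a.rule, a.severity, counts[a.rule]) for a in ranked[:top_n]]
```

Each returned tuple holds the rule name, its highest severity, and the number of times it fired, which gives a reviewer a short list to work through instead of a raw stream.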

Future Trends in AI Security

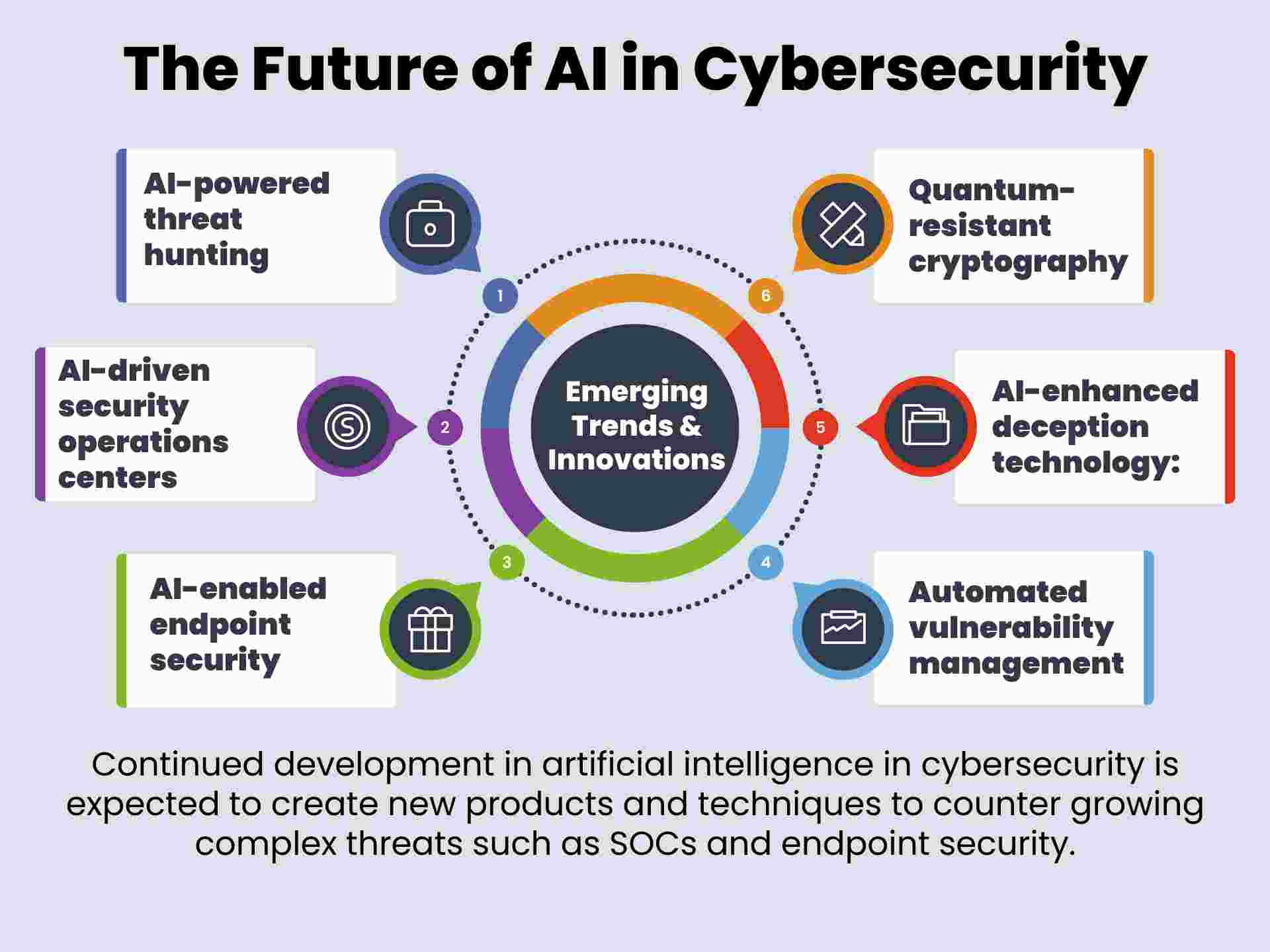

As AI technology advances, so too must our security measures. Here are some trends to watch in the coming years.

Adaptive Security Frameworks

These systems dynamically adjust security measures based on the current threat landscape. This approach allows for real-time responses to emerging threats, as discussed in Deloitte's AI insights.
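A minimal sketch of the idea, assuming a hypothetical three-level threat scale and made-up spending thresholds: as the assessed threat level rises, the amount an agent may spend without human approval shrinks. The names `ThreatLevel`, `AUTO_APPROVE_LIMIT`, and `decide` are illustrative and do not come from any framework cited here.

```python
from enum import IntEnum


class ThreatLevel(IntEnum):
    LOW = 1
    ELEVATED = 2
    HIGH = 3


# Hypothetical values: the largest amount the agent may spend
# without human approval at each threat level.
AUTO_APPROVE_LIMIT = {
    ThreatLevel.LOW: 200.0,
    ThreatLevel.ELEVATED: 50.0,
    ThreatLevel.HIGH: 0.0,
}


def decide(threat_level: ThreatLevel, amount: float) -> str:
    """Return the action to take for a purchase at the current threat level."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    if amount <= AUTO_APPROVE_LIMIT[threat_level]:
        return "auto-approve"
    return "require-human-approval"
```

Under these assumed thresholds, the same 100-unit purchase is approved automatically at `ThreatLevel.LOW` but routed to a human once the level is raised to `ELEVATED` or `HIGH`.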

Enhanced Behavioral Analytics

Future AI systems will likely incorporate more sophisticated behavioral analytics, allowing them to better detect and prevent unauthorized actions, as noted in a Nature article.
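At its simplest, behavioral analytics means comparing a new action against the agent's past behavior. The sketch below flags a purchase whose amount lies far from the historical average, measured in standard deviations (a z-score). The function name `is_anomalous` and the threshold of 3.0 are assumptions for illustration; production systems would typically look at many more signals than the amount alone.

```python
from statistics import mean, stdev


def is_anomalous(history, amount, threshold=3.0):
    """Flag an amount that deviates strongly from past purchase amounts.

    history: past purchase amounts made by the agent.
    threshold: how many standard deviations count as unusual (assumed value).
    """
    if len(history) < 2:
        # Too little data to judge, so escalate rather than trust.
        return True
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past amounts were identical; any different amount is unusual.
        return amount != mu
    return abs(amount - mu) / sigma > threshold
```

A flagged purchase does not have to be blocked outright. It can instead trigger a step-up check, such as asking the user to confirm the transaction.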

Conclusion

The integration of AI agents into our daily lives offers immense potential, but it also requires vigilance and proactive measures to prevent misuse. By adopting industry standards and best practices, we can harness the benefits of AI while safeguarding our financial and personal information.

FAQ

What are AI agents?

AI agents are software entities that perform tasks autonomously, often without human intervention. They can range from simple chatbots to complex systems that manage financial transactions.

How can AI agents misuse my credit card?

If an AI agent is compromised, it can be used to make unauthorized purchases using stored credit card information. This highlights the importance of robust security measures, as discussed in Wired.

What is the FIDO Alliance?

The FIDO Alliance is an industry association focused on developing secure authentication standards. They are currently working on standards to protect transactions made by AI agents, as noted on their official site.

How can I secure my AI agents?

Implement strong authentication methods, keep software updated, and conduct regular security audits to safeguard your AI systems, as advised by Wiz.io.

What are adaptive security frameworks?

Adaptive security frameworks dynamically adjust security measures based on real-time threat assessments, providing a more responsive defense against emerging threats, as discussed in Deloitte's insights.

Why is user education important in AI security?

Educating users on recognizing and responding to security threats can prevent many breaches, because human error is a common factor in successful cyberattacks, a point emphasized by Katz School researchers.

What future trends should I watch in AI security?

Keep an eye on developments in adaptive security frameworks and enhanced behavioral analytics, which promise to improve AI system defenses, as noted in a Nature article.

Key Takeaways

- AI agents can inadvertently make unauthorized transactions, necessitating improved security measures, as discussed in Wired.

- The FIDO Alliance is developing standards to protect AI-driven transactions using cryptographic validation and MFA, as noted on their official site.

- Regular software updates and user education are critical in preventing security breaches, as advised by Wiz.io.

- Future trends include adaptive security frameworks and enhanced behavioral analytics for better AI oversight, as explored in Deloitte's AI insights.

- User education significantly reduces the risk of successful cyberattacks, since over 90% of them start with phishing, according to Katz School researchers.
