The Intricacies of Generative AI in Writing Tools: Lessons from Grammarly's Recent Strategy Shift

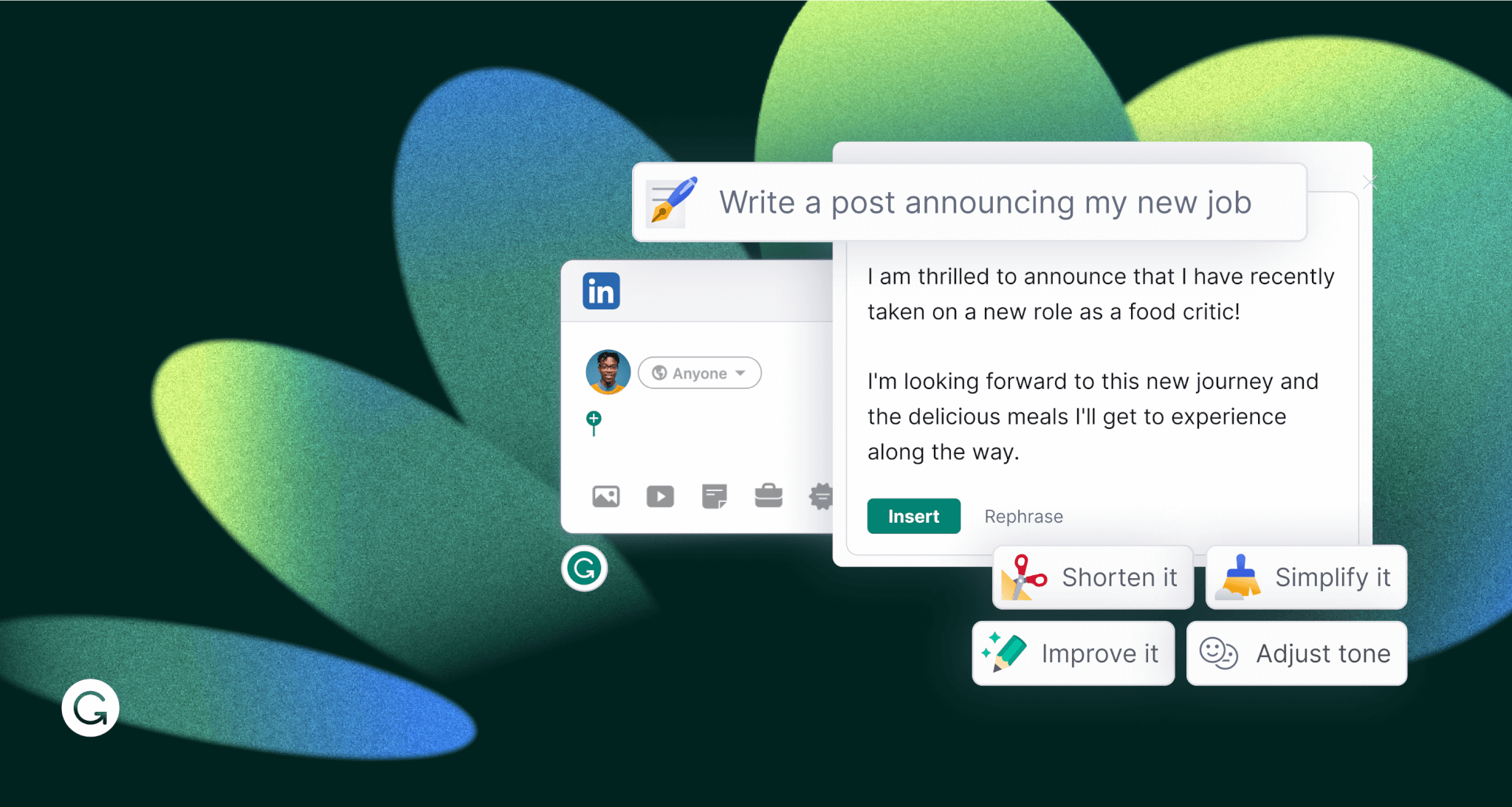

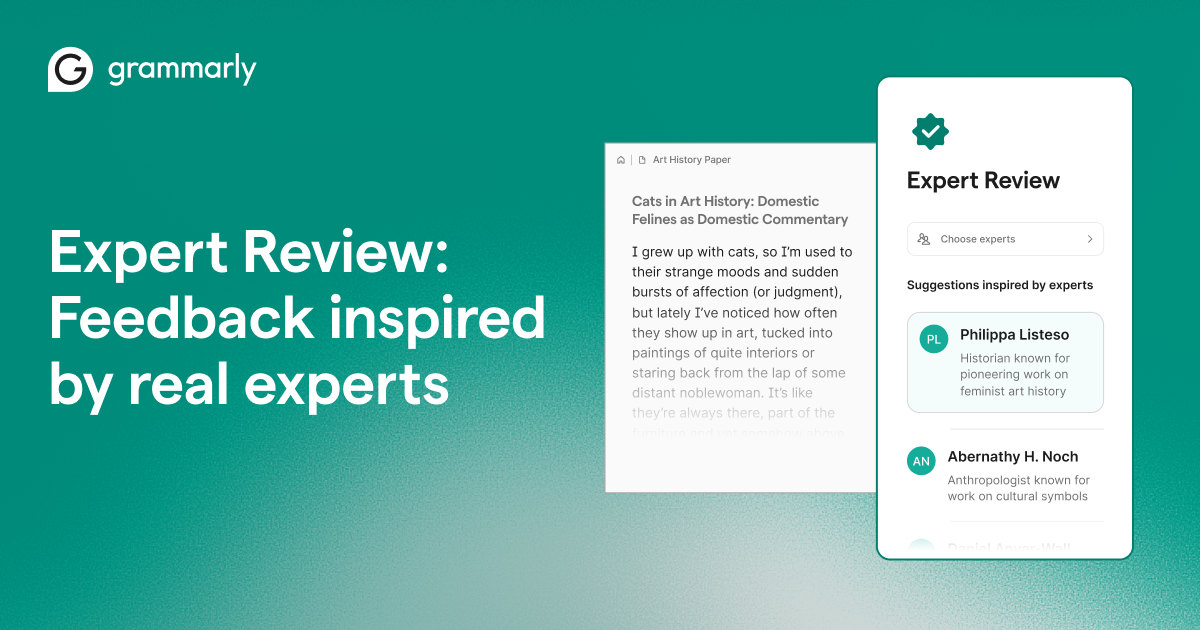

Last year, Grammarly, the well-known writing assistant, launched an ambitious feature called Expert Review. It used generative AI to produce feedback on users' writing and presented that feedback as if it came from well-known writers and academics. The initiative quickly drew criticism, and Grammarly disabled it. Let's look at the technical, ethical, and practical dimensions of generative AI in writing tools and at the lessons Grammarly's experience offers.

TL;DR

- Generative AI Feedback: Grammarly's feature offered AI-generated feedback under famous writers' names, raising ethical concerns, as reported by Decrypt.

- Legal and Ethical Challenges: Using real writers' names without permission raised significant legal and ethical issues, as highlighted by The Verge.

- User Trust and Transparency: Maintaining user trust is crucial in AI tool development, as noted by Cornerstone OnDemand.

- Future of AI in Writing: Balancing AI capabilities with ethical considerations is key, according to MIT Sloan.

- Practical Tips: Focus on transparent AI implementation to avoid pitfalls.

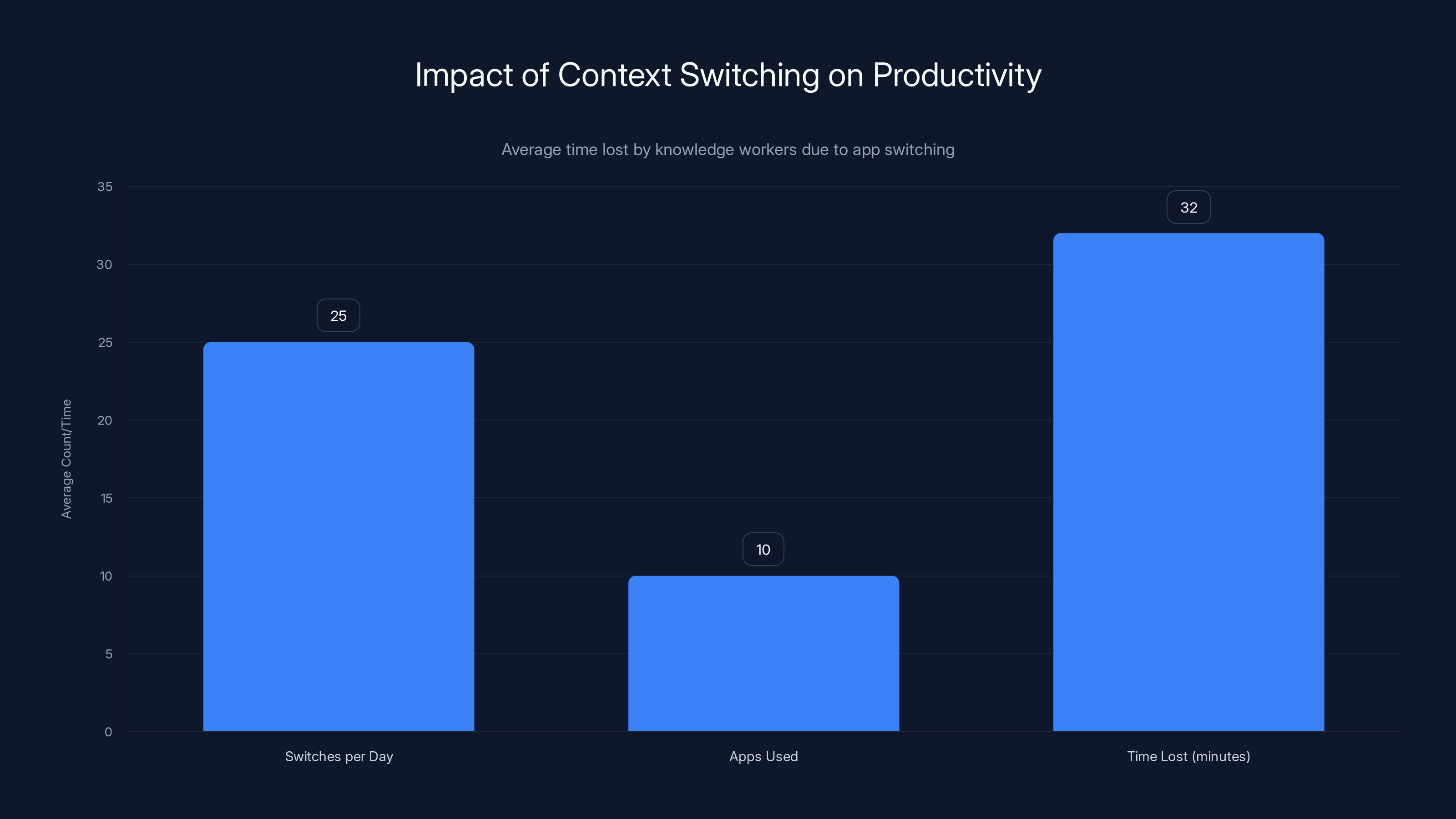

Knowledge workers lose an average of 32 minutes a day by switching 25 times a day among 10 apps. Estimated data.

Understanding Generative AI in Writing Tools

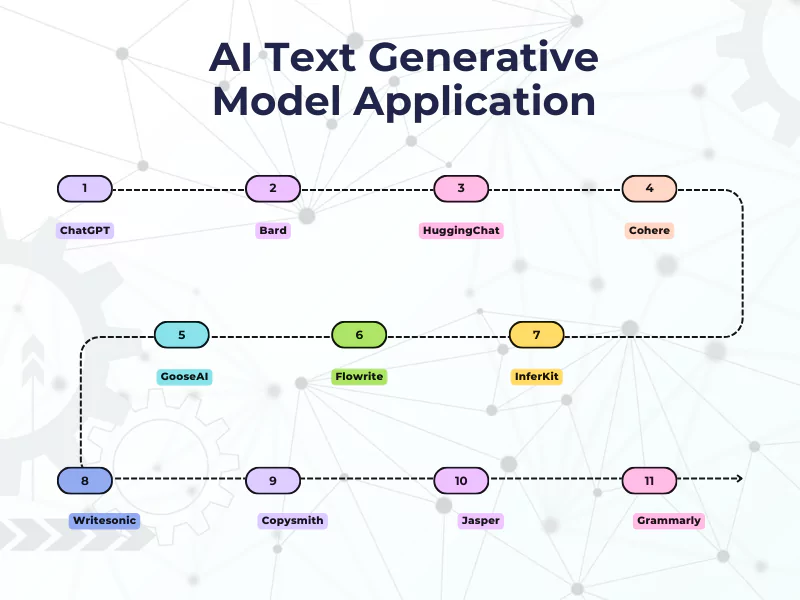

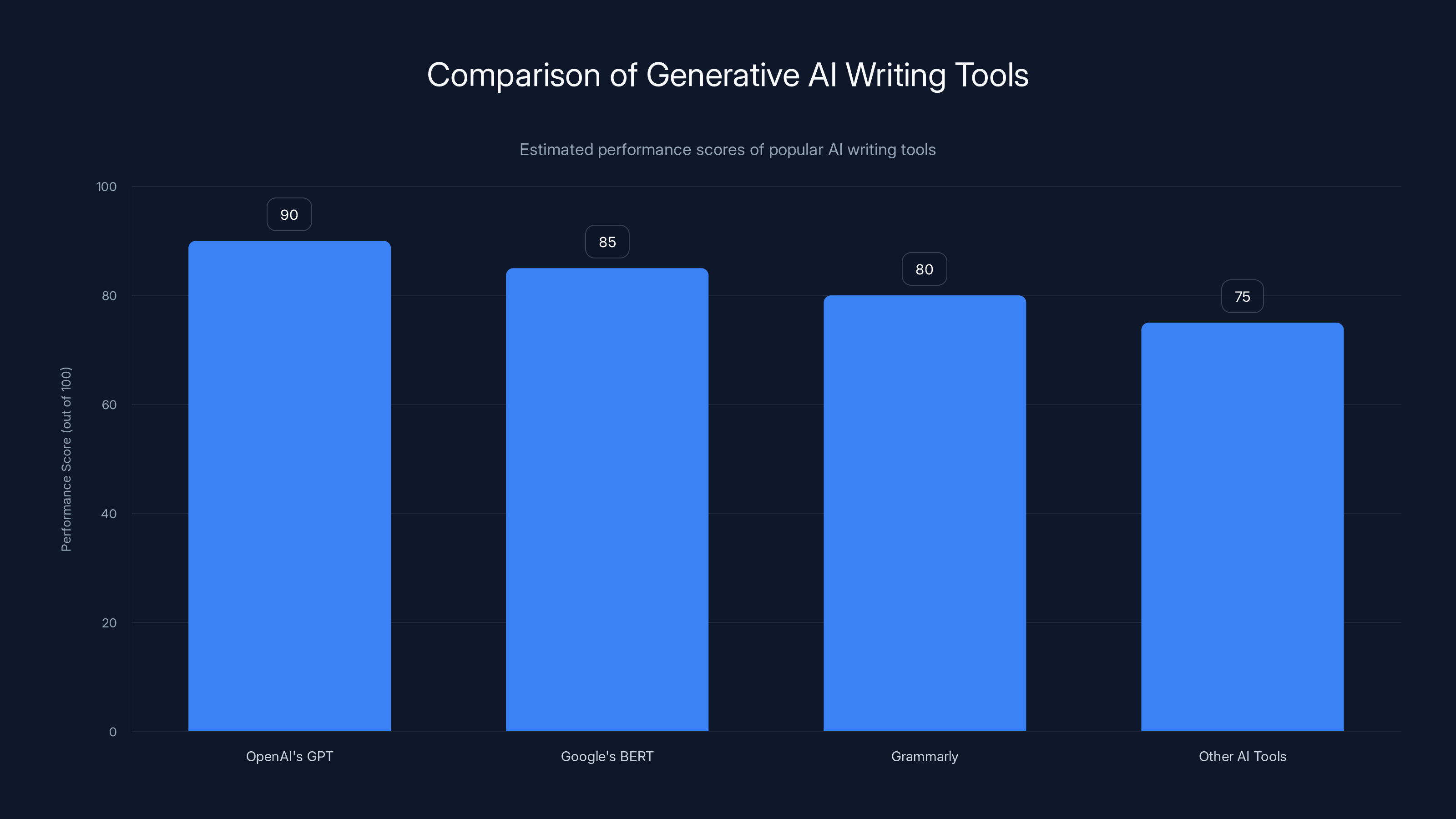

Generative AI refers to models that generate text mimicking human writing. These systems are usually built on large language models (LLMs), such as OpenAI's GPT series, which are trained on vast amounts of text and can produce coherent, contextually relevant content. (Google's BERT, often mentioned alongside GPT, is an encoder model designed mainly for understanding text rather than generating it.) In Grammarly's case, this technology was used to generate writing feedback ostensibly from renowned authors or experts, adding a layer of novelty and apparent authority to the suggestions.

How Does Generative AI Work?

Generative AI tools take input text (a prompt) and generate output one token, a word or word fragment, at a time, choosing each token based on patterns learned during training. Here's a simplified breakdown:

- Data Collection: Developers assemble large text datasets from which the model can learn style, tone, and structure.

- Training: The AI model is trained on this data to recognize patterns and generate text that mimics these patterns.

- Feedback Loop: User interactions, such as accepted or rejected suggestions, can be collected and used to fine-tune later versions of the model, so its output improves over time.
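To make the data and training steps concrete, here is a deliberately tiny sketch in Python. It is a toy built on stated assumptions, not how GPT-style models work internally: instead of a neural network over tokens, it counts which word follows which in a three-sentence corpus (the "training" step) and then generates text by repeatedly picking the most frequent next word. The function names and the corpus are illustrative only, and the feedback loop is not modeled.

```python
from collections import Counter, defaultdict
from typing import Dict, List

def train_bigrams(corpus: List[str]) -> Dict[str, Counter]:
    """'Training': count how often each word is followed by each other word."""
    model: Dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            model[current][nxt] += 1
    return model

def generate(model: Dict[str, Counter], start: str, max_words: int = 8) -> str:
    """'Generation': repeatedly append the most frequent next word (greedy decoding)."""
    words = [start]
    for _ in range(max_words - 1):
        followers = model.get(words[-1])
        if not followers:
            break  # no known continuation for this word
        # Ties go to the follower seen first in the corpus.
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

corpus = [
    "clear writing uses short sentences",
    "clear writing avoids jargon",
    "short sentences help readers",
]
model = train_bigrams(corpus)
print(generate(model, "clear"))  # -> clear writing uses short sentences help readers
```

Real LLMs replace these word counts with billions of learned parameters and usually sample from a probability distribution rather than always taking the top word, but the core idea of predicting the next piece of text from learned patterns is the same.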

Estimated data shows OpenAI's GPT leading in performance among the models and tools compared, followed by Google's BERT and Grammarly.

Grammarly’s AI Feedback Feature: A Closer Look

Grammarly's Expert Review was an innovative attempt to provide users with feedback from AI-generated personalities. Users could choose from a range of historical and contemporary figures, receiving feedback that purportedly reflected these figures' writing styles and philosophical approaches.

The Appeal

- Personalization: Users could select feedback from figures they admired, creating a more engaging experience.

- Authority: Feedback from a 'famous' writer added perceived credibility.

The Challenge

However, this approach quickly encountered hurdles. The use of real names without explicit permission drew criticism. Here's why:

- Intellectual Property: Writers' names and personas can carry legal protections, and using them without consent is problematic, as discussed by Platformer.

- User Trust: Transparency about AI-generated content is essential to maintain user trust. Misrepresenting AI outputs as coming from real people can erode this trust, as noted by TechBuzz.

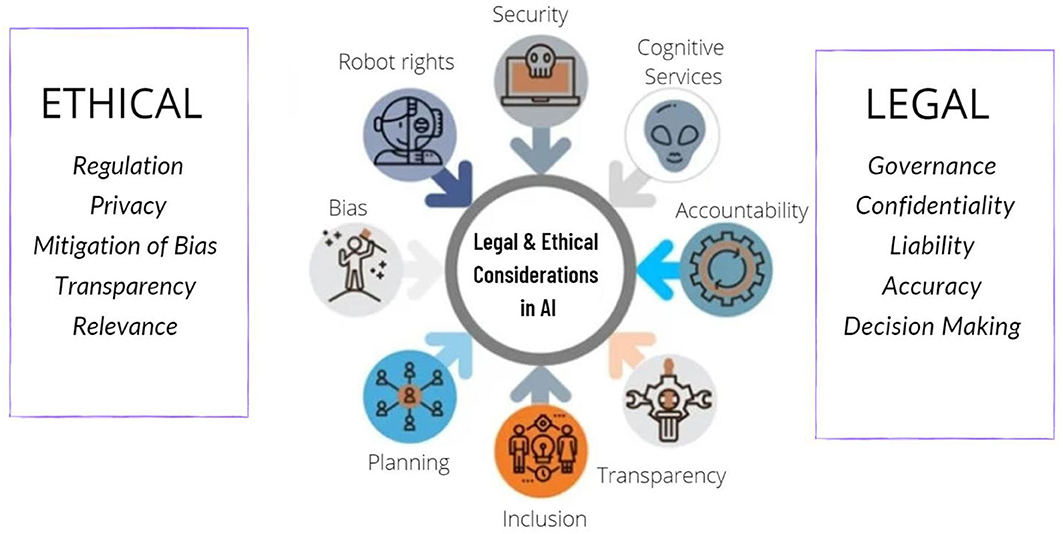

Legal and Ethical Implications

The decision to disable the feature was likely driven by several legal and ethical considerations, which are crucial in AI tool development.

Intellectual Property Concerns

Using a real writer's name or style without permission raises intellectual property rights issues. Just as companies generally can't use a celebrity's likeness without permission, attaching a writer's name and style to AI-generated content without consent is questionable, as highlighted by The Verge.

Ethical Use of AI

Beyond legal issues, there are ethical questions:

- Transparency: Users have a right to know whether content is human-generated or AI-generated, as emphasized by Ipsos.

- Consent: The individuals whose styles are emulated should consent to their use.
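One way to act on the consent principle is to gate any named persona behind an explicit consent record and fall back to an unnamed style when none exists. The sketch below assumes a hypothetical in-memory registry (`Persona`, `consented`) and fictitious names; a real product would rely on signed agreements, not a boolean flag. Both branches keep the "AI-generated" label, so disclosure holds even when a persona is permitted.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass(frozen=True)
class Persona:
    name: str
    consented: bool  # hypothetical flag: has this person agreed to the use of their name and style?

def feedback_header(requested: str, registry: Dict[str, Persona]) -> str:
    """Build the label shown above AI feedback, naming a person only with consent."""
    persona = registry.get(requested)
    if persona is not None and persona.consented:
        return f"AI-generated feedback inspired by {persona.name} (used with permission)"
    # No consent on record: do not attach the person's name at all.
    return "AI-generated feedback (generic editorial style)"

registry = {
    "Jane Doe": Persona("Jane Doe", consented=True),   # fictitious example
    "John Roe": Persona("John Roe", consented=False),  # fictitious example
}
print(feedback_header("Jane Doe", registry))
print(feedback_header("John Roe", registry))
```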

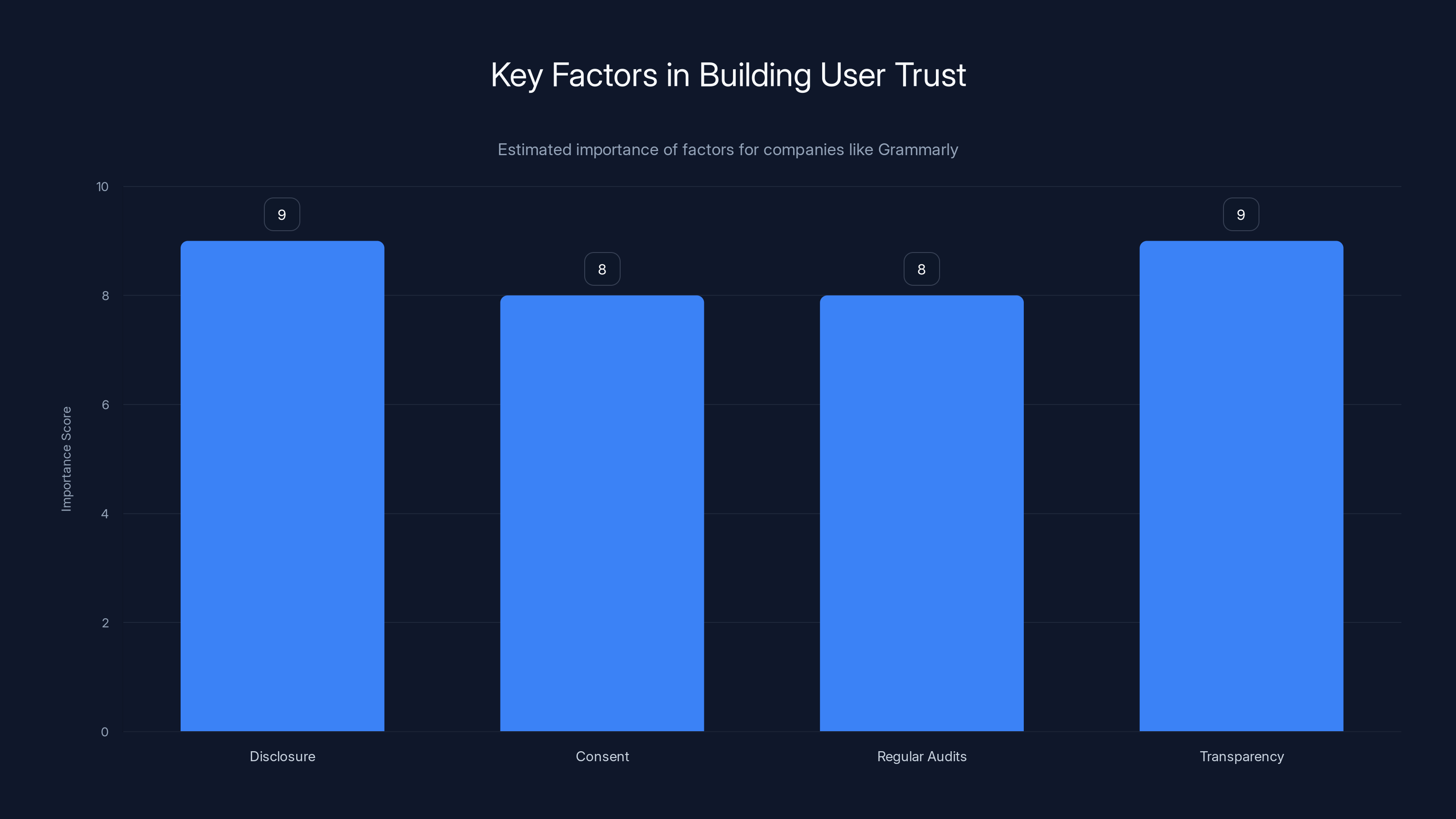

Disclosure and transparency are estimated to be the most crucial factors in building user trust, with high importance scores. Estimated data.

The Importance of User Trust

For companies like Grammarly, user trust is paramount. Users need to feel confident that the feedback they receive is accurate and ethically sourced.

Building Trust

- Disclosure: Clearly indicate when content is AI-generated, as recommended by eWeek.

- Consent: Ensure any use of personal styles or names is authorized.

- Regular Audits: Conduct regular reviews of AI outputs to ensure compliance with ethical standards.
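Disclosure and regular audits can be combined into a periodic check over logged suggestions. This sketch assumes a hypothetical log format (the `Suggestion` fields below) and a set of names for which consent is on record. It samples the log at random and flags AI output that was shown without a label or attributed to a person without consent.

```python
import random
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple

@dataclass
class Suggestion:
    text: str
    ai_generated: bool
    disclosed: bool               # was an "AI-generated" label shown to the user?
    attributed_to: Optional[str]  # real person's name shown with the suggestion, if any

def audit(log: List[Suggestion], consented_names: Set[str],
          sample_size: int = 100, seed: int = 0) -> List[Tuple[Suggestion, str]]:
    """Randomly sample logged suggestions and return those that break disclosure or consent rules."""
    rng = random.Random(seed)  # fixed seed makes an audit run reproducible
    sample = rng.sample(log, min(sample_size, len(log)))
    violations = []
    for s in sample:
        if s.ai_generated and not s.disclosed:
            violations.append((s, "AI output shown without disclosure"))
        if s.attributed_to is not None and s.attributed_to not in consented_names:
            violations.append((s, "name used without consent"))
    return violations

log = [
    Suggestion("Use active voice here.", True, True, None),
    Suggestion("Cut this clause.", True, False, None),
    Suggestion("Tighten the opening.", True, True, "John Roe"),  # fictitious name
]
for s, reason in audit(log, consented_names={"Jane Doe"}):
    print(reason, "->", s.text)
```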

Transparency in AI

Transparency involves not only letting users know about AI-generated content but also explaining how it works. This includes:

- Data Sources: Where does the training data come from?

- Model Limitations: What can the AI do, and where might it fall short?
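Both questions can be answered in a short notice generated from metadata the team already maintains, similar in spirit to a model card. The `ModelInfo` fields, the model name, and the wording below are hypothetical placeholders.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class ModelInfo:
    name: str
    data_sources: List[str]
    limitations: List[str] = field(default_factory=list)

def transparency_notice(info: ModelInfo) -> str:
    """Render a plain-language notice on where the model's data comes from and where it may fall short."""
    lines = [f"Suggestions are generated by {info.name}, an AI model, not by a human editor."]
    lines.append("Training data: " + ", ".join(info.data_sources) + ".")
    if info.limitations:
        lines.append("Known limitations:")
        lines.extend(f"- {item}" for item in info.limitations)
    return "\n".join(lines)

info = ModelInfo(
    name="ExampleWriter-1",  # hypothetical model name
    data_sources=["licensed books", "public-domain essays"],
    limitations=["may miss domain-specific jargon", "can suggest changes that alter meaning"],
)
print(transparency_notice(info))
```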

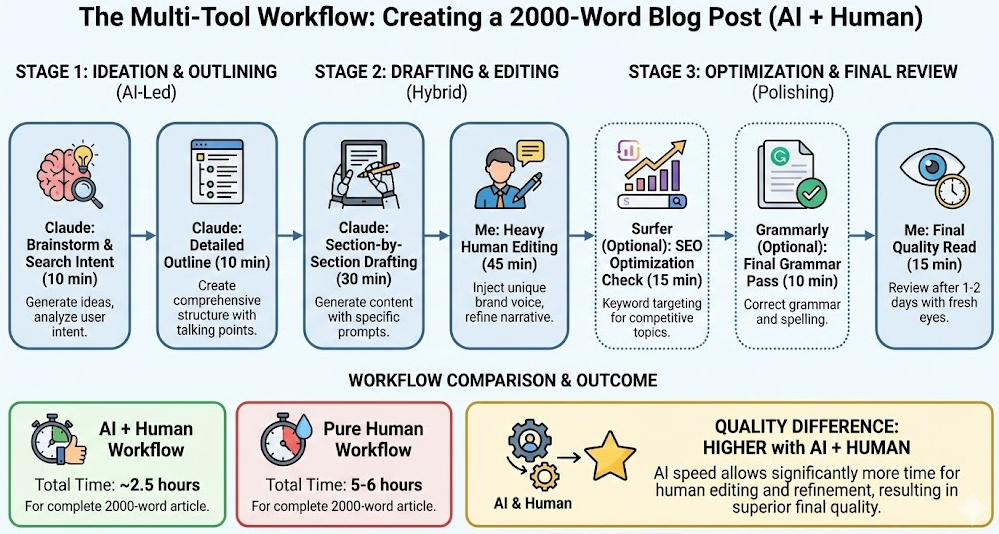

Implementing AI Writing Tools: Best Practices

For those looking to implement AI writing tools, here are some practical steps:

Step 1: Define Clear Objectives

Understand what you aim to achieve with AI. Is it enhanced grammar checks, style suggestions, or something else?

Step 2: Choose the Right Model

Select an AI model that aligns with your objectives. Consider general-purpose models like GPT-3 for broad tasks, or smaller specialized models for niche applications such as grammatical error correction.

Step 3: Focus on Data Quality

High-quality datasets are crucial for training reliable AI models. Ensure your data is diverse and representative of your target audience.
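Two basic checks, removing duplicates and measuring how well each kind of writing is represented, can be sketched in a few lines. The `(text, genre)` format and the minimum-share threshold are assumptions for illustration; real pipelines typically add near-duplicate detection and much richer labels.

```python
from collections import Counter
from typing import Dict, List, Tuple

def dataset_report(samples: List[Tuple[str, str]], min_share: float = 0.1) -> Dict[str, object]:
    """Drop exact duplicates (ignoring case and spacing) and flag genres below min_share of the data."""
    seen = set()
    unique = []
    for text, genre in samples:
        key = " ".join(text.lower().split())  # normalize case and whitespace
        if key not in seen:
            seen.add(key)
            unique.append((text, genre))
    counts = Counter(genre for _, genre in unique)
    total = len(unique)
    underrepresented = sorted(g for g, c in counts.items() if c / total < min_share)
    return {
        "kept": total,
        "dropped": len(samples) - total,
        "underrepresented": underrepresented,
    }

samples = [("Hello team", "email"), ("hello   team", "email"),
           ("Q3 results are in", "report"), ("Abstract: we study tone", "academic")]
samples += [(f"Quick note {i}", "email") for i in range(7)]
print(dataset_report(samples, min_share=0.15))
```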

Step 4: Monitor and Evaluate

Regularly assess AI outputs to ensure they meet quality standards and ethical guidelines.
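One simple monitoring signal is how often users accept each type of suggestion. The sketch below assumes logs of `(category, accepted)` pairs and a hypothetical 30% threshold. A low rate does not prove a category is wrong, only that a human should review it.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

def acceptance_rates(events: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of shown suggestions that users accepted, per suggestion category."""
    shown: Dict[str, int] = defaultdict(int)
    accepted: Dict[str, int] = defaultdict(int)
    for category, was_accepted in events:
        shown[category] += 1
        accepted[category] += int(was_accepted)
    return {c: accepted[c] / shown[c] for c in shown}

def flag_for_review(rates: Dict[str, float], threshold: float = 0.3) -> List[str]:
    """Categories whose acceptance rate falls below the (hypothetical) threshold."""
    return sorted(c for c, r in rates.items() if r < threshold)

events = [("grammar", True), ("grammar", True), ("grammar", False),
          ("style", True), ("style", False), ("style", False),
          ("tone", False)]
rates = acceptance_rates(events)
print(flag_for_review(rates))  # -> ['tone']
```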

Common Pitfalls and Solutions

Implementing AI writing tools isn't without its challenges. Here are some common pitfalls and how to avoid them:

Pitfall 1: Over-reliance on AI

Solution: Balance AI suggestions with human oversight. Encourage users to critically evaluate AI suggestions before acceptance.
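In code, human oversight can be as simple as never auto-applying a suggestion: edits are proposed, and only the ones the user explicitly accepts are applied. The `Edit` structure with character offsets is a hypothetical representation, and the sketch assumes suggestions do not overlap.

```python
from dataclasses import dataclass
from typing import List, Set

@dataclass
class Edit:
    start: int         # character offset where the suggested change begins
    end: int           # character offset where it ends (exclusive)
    replacement: str

def apply_accepted(text: str, edits: List[Edit], accepted: Set[int]) -> str:
    """Apply only the edits whose index the user accepted; assumes edits do not overlap."""
    chosen = [e for i, e in enumerate(edits) if i in accepted]
    # Work from the end of the text backwards so earlier offsets stay valid.
    for e in sorted(chosen, key=lambda e: e.start, reverse=True):
        text = text[:e.start] + e.replacement + text[e.end:]
    return text

draft = "Their going to the park tomorow."
edits = [Edit(0, 5, "They're"), Edit(24, 31, "tomorrow")]
print(apply_accepted(draft, edits, accepted={0, 1}))  # -> They're going to the park tomorrow.
print(apply_accepted(draft, edits, accepted={1}))     # user rejected the first suggestion
```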

Pitfall 2: Data Privacy Concerns

Solution: Implement robust data protection measures. Ensure user data is anonymized and stored securely.
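A common first step is to pseudonymize identifiers and redact obvious personal details before text reaches logs or training sets. This sketch uses Python's standard `hmac` and `hashlib` modules; the key handling and the email pattern are simplified assumptions. Keyed hashing is pseudonymization rather than full anonymization: anyone holding the key can still link records, and free text may contain other personal data that the pattern misses.

```python
import hashlib
import hmac
import re

# Simplified pattern; it will not catch every valid email address.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a user ID with a keyed SHA-256 hash so records can't be linked back without the key."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()

def redact(text: str) -> str:
    """Mask email addresses in free text."""
    return EMAIL_RE.sub("[EMAIL]", text)

def log_record(user_id: str, text: str, secret_key: bytes) -> dict:
    return {"user": pseudonymize(user_id, secret_key), "text": redact(text)}

# In production the key would come from a secrets manager, never from source code.
key = b"example-key-do-not-use"
print(log_record("user-42", "Email me at jane@example.com today.", key))
```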

Pitfall 3: Insufficient Training Data

Solution: Use diverse datasets and continually update them to reflect changing language trends.

Future Trends in AI Writing Tools

As AI technology continues to evolve, several trends are emerging:

Increased Personalization

AI tools will offer more personalized feedback, tailoring suggestions based on user preferences and past interactions, as discussed by MIT Sloan.
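A minimal version of tailoring suggestions to past interactions is to stop showing suggestion types a user almost always rejects. The history format and the thresholds (at least five observations, more than 80% rejected) are assumptions for illustration, not a description of how any particular product works.

```python
from collections import Counter
from typing import List, Tuple

def personalize(suggestions: List[Tuple[str, str]],
                history: List[Tuple[str, bool]],
                min_seen: int = 5,
                max_reject_rate: float = 0.8) -> List[Tuple[str, str]]:
    """Hide suggestion categories this user has consistently rejected in past sessions."""
    seen = Counter(category for category, _ in history)
    rejected = Counter(category for category, accepted in history if not accepted)

    def keep(category: str) -> bool:
        if seen[category] < min_seen:
            return True  # too little evidence about this user; keep showing it
        return rejected[category] / seen[category] <= max_reject_rate

    return [(c, text) for c, text in suggestions if keep(c)]

history = [("passive_voice", False)] * 9 + [("passive_voice", True)] + [("comma", True)] * 3
suggestions = [("passive_voice", "Rewrite in the active voice."),
               ("comma", "Add a serial comma."),
               ("tone", "Soften this sentence.")]
print([c for c, _ in personalize(suggestions, history)])  # -> ['comma', 'tone']
```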

Enhanced Collaboration

AI will facilitate better collaboration between writers, editors, and AI tools, streamlining the editing process.

Improved Contextual Understanding

Future AI models will better understand context, leading to more nuanced and relevant writing suggestions.

Recommendations for AI Tool Developers

For developers working on AI writing tools, consider these recommendations:

Prioritize Ethical AI

Ensure your AI tools comply with ethical standards, focusing on transparency and user consent.

Invest in Continuous Learning

AI models should be updated regularly to incorporate new language trends and user feedback.

Engage with the Community

Involve users in the development process, using their feedback to refine and improve AI tools.

Conclusion

Grammarly's experience highlights the complexities and challenges of implementing generative AI in writing tools. While the potential benefits are significant, developers must navigate a landscape filled with ethical, legal, and practical considerations. By prioritizing transparency, user trust, and ethical standards, companies can harness the power of AI to enhance the writing process without compromising integrity.

FAQ

What is generative AI in writing tools?

Generative AI in writing tools refers to algorithms that can produce human-like text based on input data, often used for tasks like grammar checking, style suggestions, and content generation.

How does Grammarly's AI feature work?

Grammarly's AI feature, Expert Review, was designed to provide AI-generated feedback presented as coming from famous writers. It used large language models to mimic the writing styles of these figures.

Why was Grammarly's AI feature disabled?

Grammarly's AI feature was disabled due to legal and ethical concerns about using real writers' names and styles without permission, which raised intellectual property rights issues.

What are the legal implications of using AI-generated content?

The legal implications include potential violations of intellectual property rights if real names or styles are used without consent. Transparency about AI-generated content is also crucial.

How can developers avoid pitfalls in AI tool development?

Developers can avoid pitfalls by ensuring transparency, seeking consent for using personal styles, and regularly auditing AI outputs for compliance with ethical guidelines.

What are the future trends in AI writing tools?

Future trends include increased personalization, improved contextual understanding, and enhanced collaboration between AI tools and human writers.

What recommendations exist for AI tool developers?

AI tool developers should prioritize ethical AI, invest in continuous learning, and engage with the community to refine and improve their tools.

How important is user trust in AI tools?

User trust is crucial in AI tools, as it ensures users feel confident in the accuracy and ethical sourcing of AI-generated content. Transparency and consent are key components in building and maintaining this trust.

What are the benefits of AI in writing tools?

AI in writing tools offers benefits such as improved grammar and style suggestions, personalized feedback, and streamlined editing processes. However, these benefits must be balanced with ethical considerations to maintain user trust.

Key Takeaways

- Generative AI in writing tools can enhance personalization and perceived authority.

- Legal and ethical considerations are critical in AI tool development.

- Transparency about AI-generated content is essential for user trust.

- Future AI writing tools will focus on personalization and contextual understanding.

- Developers should prioritize ethical AI practices and user engagement.
