Understanding Anthropic's New AI Code Review Tool: An In-Depth Analysis [2025]

Last month, Anthropic introduced a new entrant to the AI code review arena: a tool designed to improve the quality and security of AI-generated code. It's called Code Review for Claude Code, and while it promises to address common pitfalls in AI-generated code, it also comes with a price tag that might make you pause. Let's dive into how the tool works, its benefits, and what it could mean for developers and organizations alike.

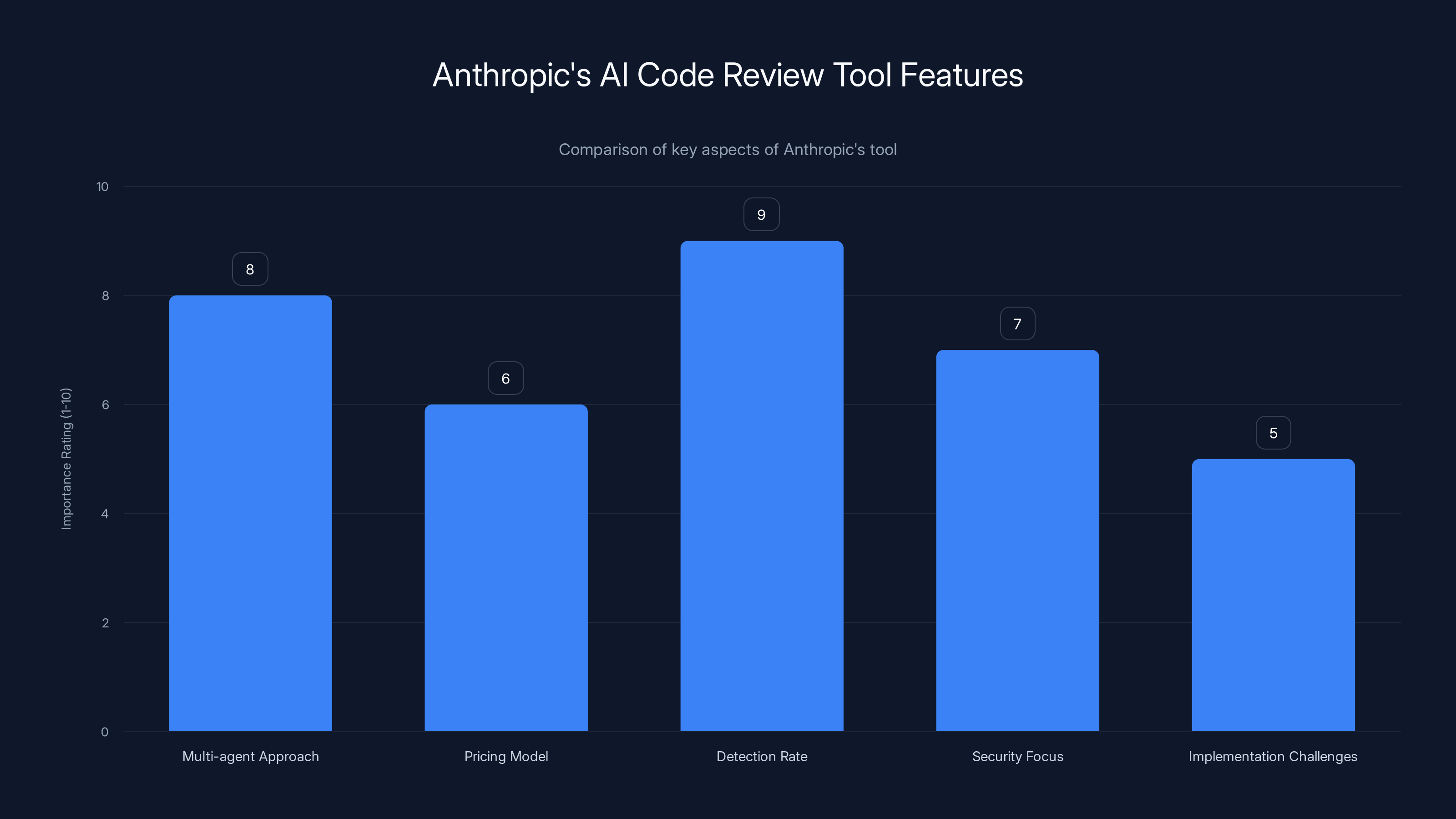

TL;DR

- Multi-agent Approach: Anthropic's tool leverages multiple AI agents, enhancing the accuracy of code reviews by identifying logic errors and vulnerabilities.

- Pricing Model: The token-based pricing could put costs at around $25 per review, potentially more depending on the size and complexity of the code.

- High Detection Rate: In trials, 84% of large pull requests had issues flagged, averaging 7.5 findings per review.

- Security Focus: The tool aims to address security concerns, a major factor given the scrutiny on AI-generated code.

- Implementation Challenges: Integration into existing workflows might require adjustments, particularly for teams unfamiliar with AI-driven tools.

Anthropic's tool excels in detection rate and multi-agent approach, with pricing and implementation posing moderate challenges. Estimated data.

The Rise of AI in Code Review

The integration of AI into code review processes is not a novel concept. However, the complexity and capability of these tools have significantly evolved. AI tools now not only help automate mundane tasks but also enhance the accuracy of detecting errors and potential security vulnerabilities. Anthropic's take on this, with the Code Review tool, is particularly noteworthy for its multi-agent approach.

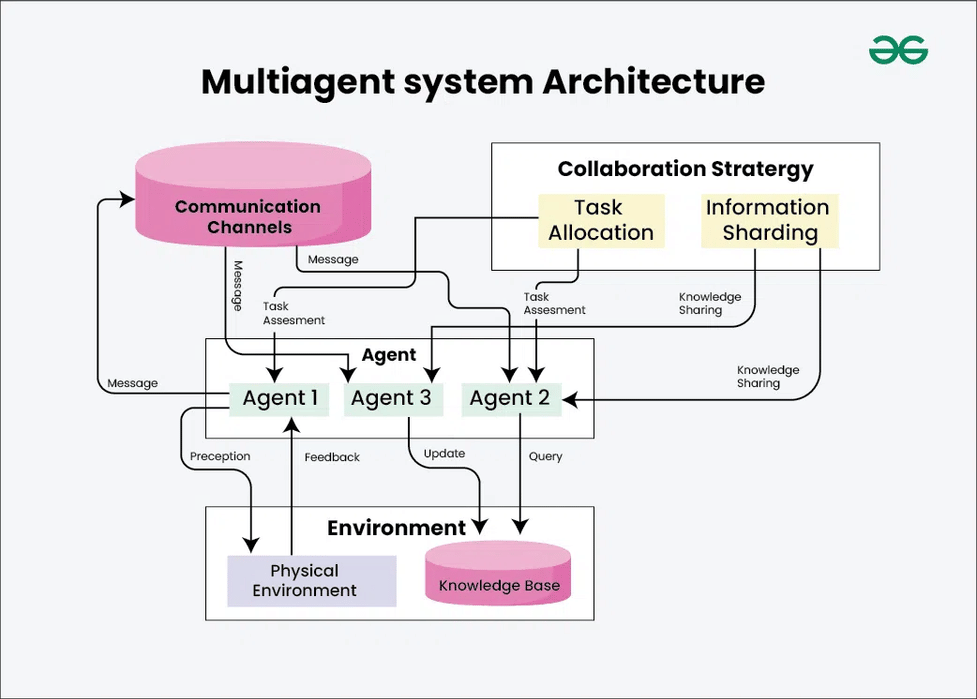

Why Multi-Agent Systems Matter

In traditional AI setups, a single agent processes the input and delivers an output. Anthropic's multi-agent system changes this dynamic by employing several agents, each specializing in a specific aspect of code review. This results in a more thorough analysis, as different agents can cross-validate findings, reducing the likelihood of false positives and negatives.

Key Features of Multi-Agent Systems:

- Specialization: Each agent focuses on a specific task, such as detecting security issues or logic errors.

- Cross-Verification: Agents can independently review each other's findings, enhancing reliability.

- Parallel Processing: Multiple agents can work simultaneously, speeding up the review process.
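
To make the three ideas above concrete, here is a minimal sketch of the pattern in Python. It is not Anthropic's implementation: the agents are plain functions standing in for model calls, and every name (`security_agent`, `logic_agent`, `verifier`, `review`) is hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    line: int
    kind: str       # e.g. "security" or "logic"
    message: str


# Hypothetical specialist "agents": simple string checks standing in for model calls.
def security_agent(diff: list[str]) -> list[Finding]:
    return [
        Finding(i, "security", "possible hardcoded secret")
        for i, text in enumerate(diff, start=1)
        if "password =" in text
    ]


def logic_agent(diff: list[str]) -> list[Finding]:
    return [
        Finding(i, "logic", "comparison to None with ==")
        for i, text in enumerate(diff, start=1)
        if "== None" in text
    ]


def verifier(diff: list[str], finding: Finding) -> bool:
    """Cross-verification: reject findings that point at a commented-out line."""
    return not diff[finding.line - 1].lstrip().startswith("#")


def review(diff: list[str]) -> list[Finding]:
    agents = [security_agent, logic_agent]
    # Parallel processing: each specialist scans the same diff concurrently.
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        results = list(pool.map(lambda agent: agent(diff), agents))
    candidates = [f for found in results for f in found]
    # Keep only findings the verifier confirms, ordered by line number.
    return sorted((f for f in candidates if verifier(diff, f)), key=lambda f: f.line)


diff = [
    'password = "hunter2"',
    "# if user == None: legacy check",
    "if user == None:",
]
for finding in review(diff):
    print(finding.line, finding.kind, finding.message)
```

In this toy run the logic agent flags lines 2 and 3, but the verifier drops line 2 because it is a comment, which is the kind of false positive a second pass is meant to filter out.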

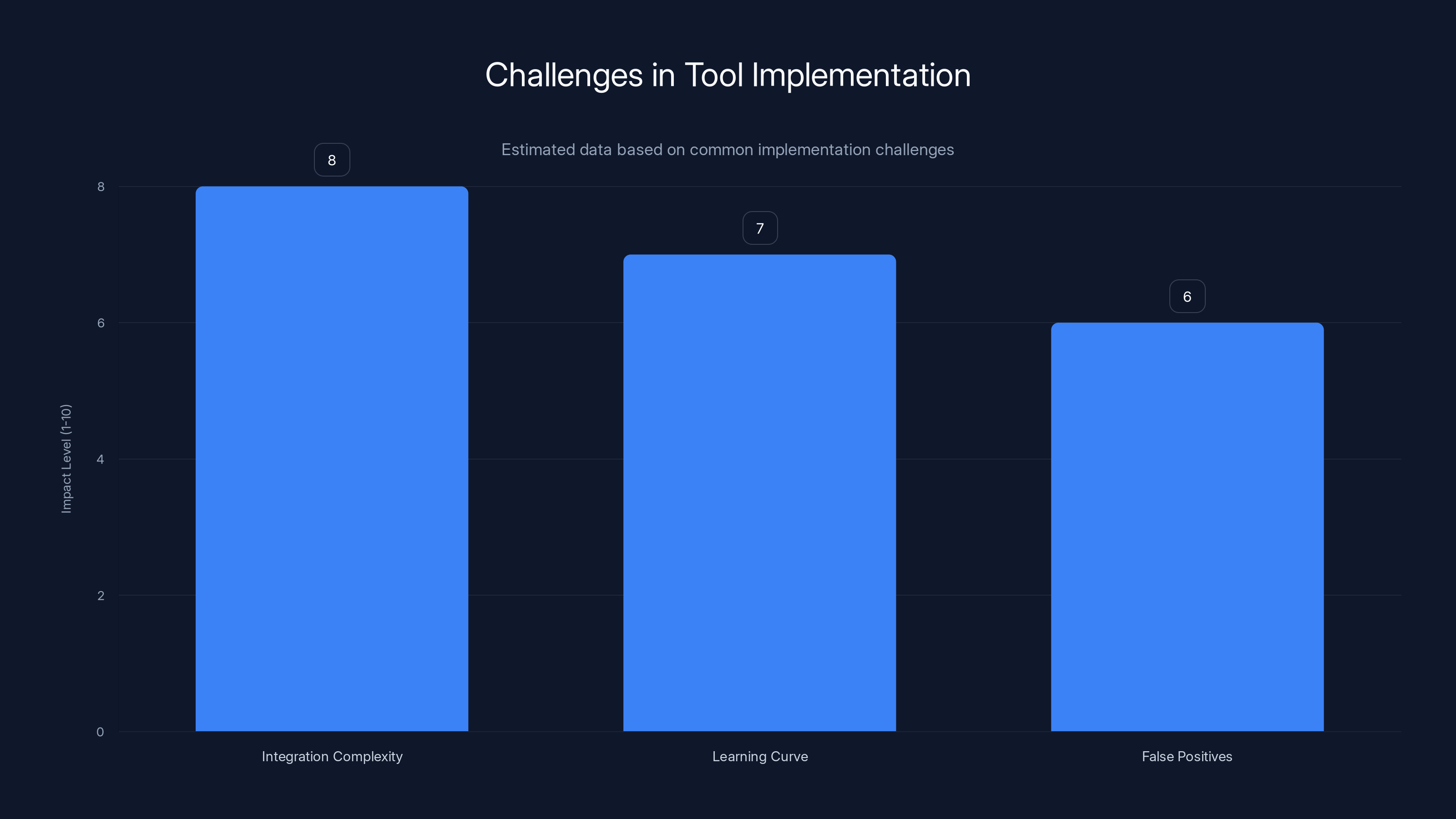

Integration complexity is the most significant challenge when adopting new tools, followed by the learning curve and false positives. Estimated data based on typical implementation issues.

How Code Review for Claude Code Works

At its core, the tool is designed to seamlessly integrate with GitHub, the preferred platform for many developers. Once integrated, it reviews pull requests, analyzing them for potential issues. This is particularly useful for teams using AI to generate large volumes of code, where manual review would be cumbersome and inefficient.

Process Overview:

- Integration: Connect the tool to your GitHub repository.

- Setup: Configure the agents based on the specific needs of your project.

- Review: Submit a pull request, and the tool automatically reviews the code.

- Feedback: Receive a detailed report highlighting issues, categorized by severity.
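
The feedback step can be pictured as grouping findings by severity before presenting them. The sketch below is illustrative only: the severity labels, the dictionary fields, and the `build_report` function are assumptions, not the tool's actual report format.

```python
from collections import defaultdict

# Hypothetical severity labels, ordered from most to least urgent.
SEVERITY_ORDER = ["critical", "major", "minor"]


def build_report(findings: list[dict]) -> str:
    """Group findings by severity and render a plain-text report."""
    grouped = defaultdict(list)
    for finding in findings:
        grouped[finding["severity"]].append(finding)

    lines = []
    for severity in SEVERITY_ORDER:
        items = grouped.get(severity, [])
        if not items:
            continue  # skip severities with no findings
        lines.append(f"{severity.upper()} ({len(items)})")
        for item in sorted(items, key=lambda f: (f["file"], f["line"])):
            lines.append(f"  {item['file']}:{item['line']} {item['message']}")
    return "\n".join(lines)


findings = [
    {"severity": "minor", "file": "app.py", "line": 40, "message": "unused variable"},
    {"severity": "critical", "file": "db.py", "line": 12, "message": "query built from user input"},
    {"severity": "minor", "file": "app.py", "line": 7, "message": "missing docstring"},
]
report = build_report(findings)
print(report)
```

Sorting by severity first means the most urgent items appear at the top of the report, regardless of the order in which they were found.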

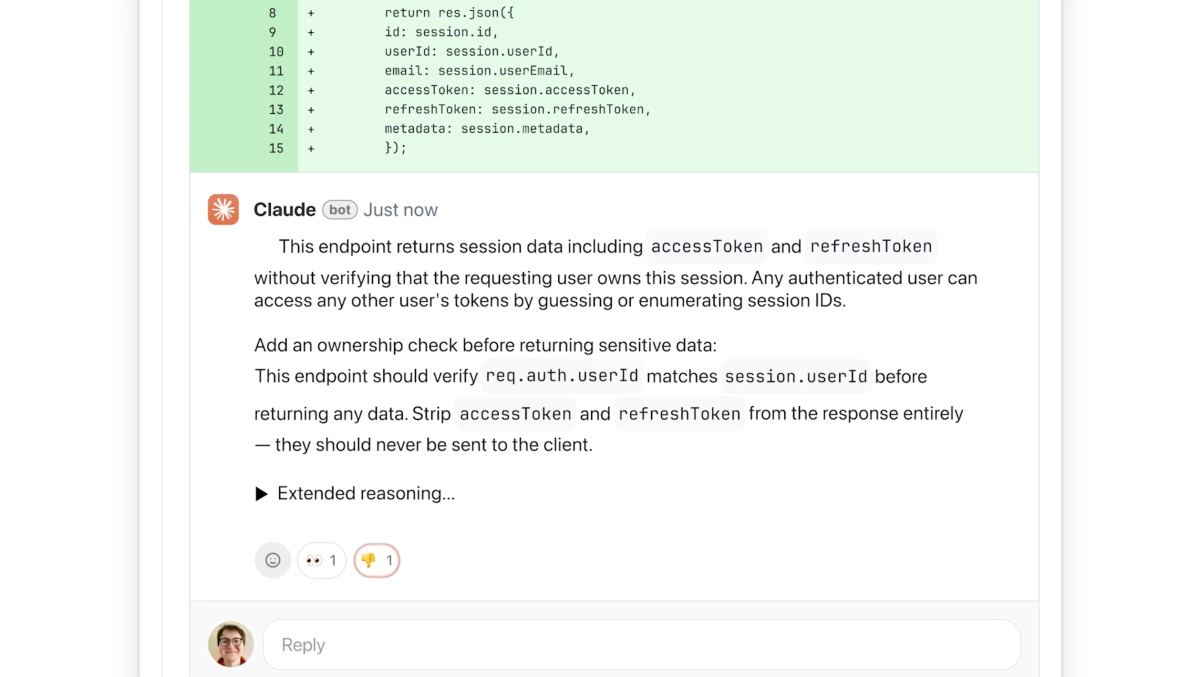

Real-World Use Case

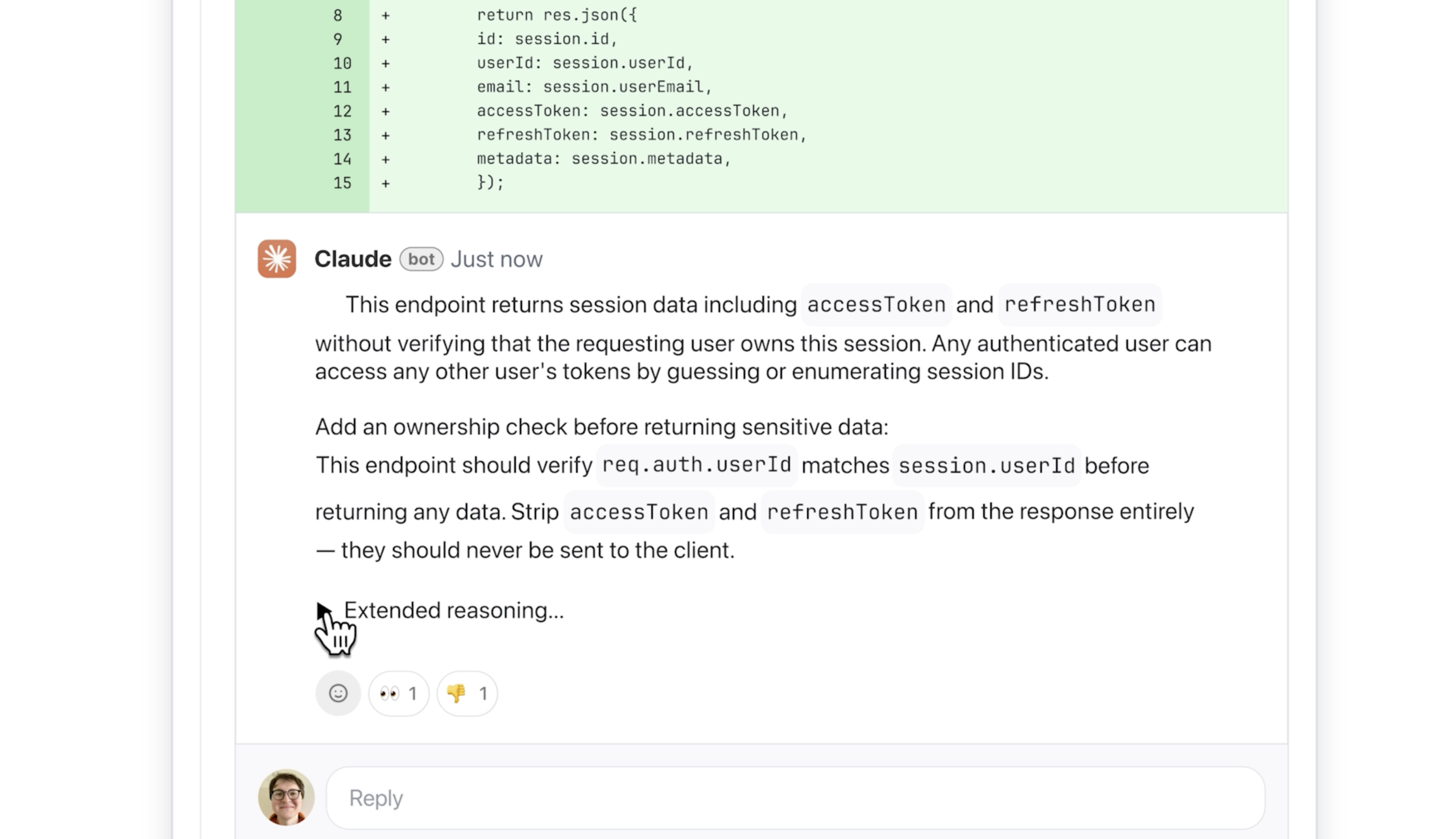

Consider a development team working on a fintech application. Security is paramount, and the codebase is extensive. Using Anthropic's tool, they can automate the review process, ensuring that every pull request is scrutinized for potential vulnerabilities. This not only saves time but also improves the overall security posture of the application.

Pricing: A Double-Edged Sword

Anthropic's decision to implement a token-based pricing model is both innovative and contentious. On one hand, it aligns costs with usage, potentially making it more affordable for smaller teams or projects with less frequent reviews. On the other hand, projects with large, complex codebases might see costs escalate quickly.

Pricing Details:

- Per Review Cost: Around $25, depending on the size and complexity of the pull request.

- Volume Discounts: Available for organizations with high review volumes.
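
For budgeting, a rough back-of-the-envelope estimate can help. The sketch below assumes a flat per-review figure of $25 and treats the volume discount as a simple percentage; the discount rate is purely hypothetical, since the actual discount terms are not stated here, and real token-based bills will vary with pull request size.

```python
def estimate_monthly_cost(
    reviews_per_month: int,
    cost_per_review: float = 25.0,   # assumed flat figure; real cost varies per PR
    volume_discount: float = 0.0,    # hypothetical fraction, e.g. 0.10 for 10%
) -> float:
    """Estimate monthly spend on automated reviews, in dollars."""
    if reviews_per_month < 0:
        raise ValueError("reviews_per_month must be non-negative")
    if not 0.0 <= volume_discount < 1.0:
        raise ValueError("volume_discount must be in [0, 1)")
    total = reviews_per_month * cost_per_review * (1.0 - volume_discount)
    return round(total, 2)


# A team merging 120 pull requests a month, with and without a 10% discount.
print(estimate_monthly_cost(120))
print(estimate_monthly_cost(120, volume_discount=0.10))
```

At 120 reviews a month the undiscounted estimate comes to $3,000, which shows how quickly per-review pricing adds up for an active repository.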

Cost Management Strategies

To manage these costs, teams can:

- Optimize Code: Reduce unnecessary complexity in code to minimize review time.

- Prioritize Reviews: Focus on critical sections of code that require more scrutiny.

- Batch Reviews: Combine multiple changes into a single pull request to save on review costs.


Enhancing Security and Privacy

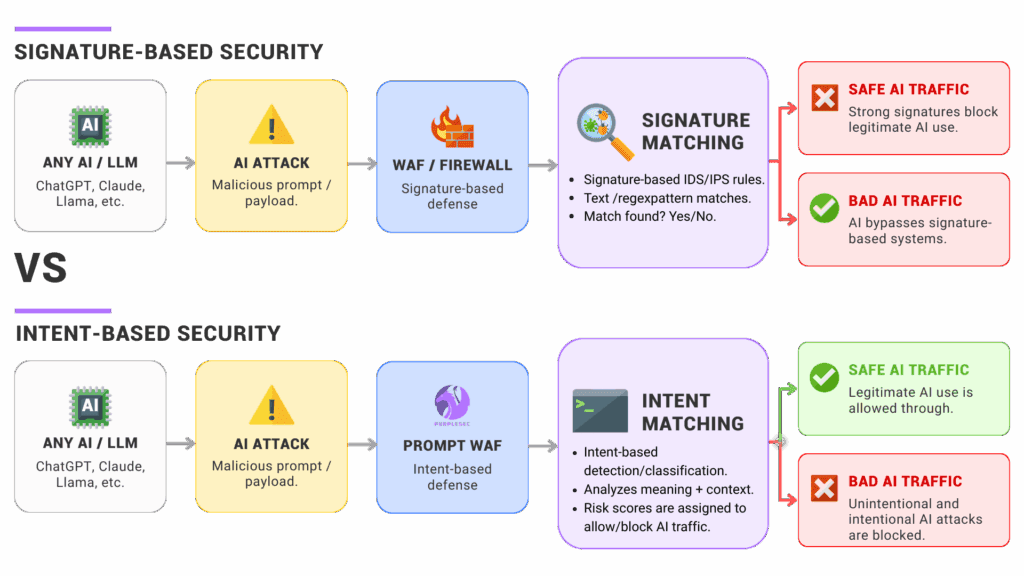

Security is a major selling point for Anthropic's tool. With increasing scrutiny on AI-generated content, ensuring that code is free from vulnerabilities is crucial.

Common Security Issues Detected

- SQL Injection: Flags queries built from unvalidated input that could allow unauthorized database access.

- Cross-Site Scripting (XSS): Identifies potential XSS vulnerabilities in web applications.

- Hardcoded Secrets: Flags instances where sensitive information is hardcoded into the source.
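
As an illustration of what flagging a hardcoded secret can look like at its simplest, here is a toy pattern-based check. It is a sketch only: the regular expression and the `find_hardcoded_secrets` function are hypothetical, and an AI reviewer or a production scanner would rely on far richer analysis than a single pattern.

```python
import re

# Illustrative pattern: a credential-like name assigned a quoted literal
# of at least four characters. Real scanners use much larger rule sets.
SECRET_PATTERN = re.compile(
    r"\b\w*(?:password|secret|api_key|token)\w*\s*=\s*(['\"])[^'\"]{4,}\1",
    re.IGNORECASE,
)


def find_hardcoded_secrets(source: str) -> list[int]:
    """Return the 1-based line numbers that look like hardcoded secrets."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if SECRET_PATTERN.search(line)
    ]


source = '''\
import os
API_KEY = "sk-test-1234"
db_password = os.environ["DB_PASSWORD"]
timeout = 30
'''
print(find_hardcoded_secrets(source))
```

Only line 2 is reported here: line 3 uses a credential-like name but reads its value from the environment rather than from a string literal.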

Best Practices for Secure Code

- Input Validation: Always validate user inputs to prevent injection attacks.

- Use Environment Variables: Store sensitive information in environment variables instead of hardcoding it.

- Regular Audits: Conduct regular security audits to identify and address vulnerabilities.
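
The first two practices can be shown in a few lines using Python's standard `sqlite3` and `os` modules. This is a minimal sketch: the `users` table, the `find_user` and `get_api_key` helpers, and the `EXAMPLE_API_KEY` variable name are all made up for illustration.

```python
import os
import sqlite3


def find_user(conn: sqlite3.Connection, username: str):
    # Parameterized query: the driver binds `username` as data, so input such
    # as "x' OR '1'='1" cannot change the structure of the SQL statement.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchone()


def get_api_key() -> str:
    # Read the secret from the environment instead of hardcoding it.
    # EXAMPLE_API_KEY is a hypothetical variable name.
    key = os.environ.get("EXAMPLE_API_KEY")
    if not key:
        raise RuntimeError("EXAMPLE_API_KEY is not set")
    return key


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO users (name) VALUES (?)", ("alice",))

print(find_user(conn, "alice"))          # (1, 'alice')
print(find_user(conn, "x' OR '1'='1"))   # None: the payload is treated as a plain name
```

Had the query been built with string concatenation, the second call would have matched every row; with a bound parameter it simply finds no user by that name.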

Implementation Challenges and Solutions

Adopting a new tool is never without its challenges. Teams must consider factors like integration, training, and workflow adjustments.

Common Pitfalls

- Integration Complexity: Difficulty in integrating the tool with existing systems.

- Learning Curve: Teams may face a steep learning curve when adapting to new technology.

- False Positives: Dealing with false positives can lead to wasted time and effort.

Overcoming Challenges

- Comprehensive Training: Invest in training sessions to help team members understand the tool's capabilities and limitations.

- Gradual Rollout: Implement the tool in stages to minimize disruptions.

- Customization: Configure the tool to align with your team’s specific needs and preferences.

Future Trends in AI Code Reviews

As AI technology continues to evolve, we can expect to see further improvements in code review processes. Here are some trends to watch:

Increased Accuracy

With advancements in AI algorithms, tools will become more accurate in detecting issues, reducing both false positives and negatives.

Enhanced Integration

Future tools will offer seamless integration with more version control systems, beyond just GitHub, to cater to a broader audience.

Real-Time Feedback

Imagine a tool that provides real-time feedback as developers write code, allowing them to address issues immediately rather than post-development.

AI-Powered Recommendations

Beyond just flagging issues, AI tools will offer actionable recommendations to resolve detected problems, further streamlining the development process.

Conclusion

Anthropic's Code Review tool is a significant step forward in the realm of AI-driven code quality assurance. Its multi-agent approach promises enhanced accuracy, particularly in identifying security vulnerabilities. However, the pricing model could be a barrier for some, especially those managing large codebases. As with any tool, the key to success lies in thoughtful implementation and alignment with team objectives.

FAQ

What is Anthropic's Code Review tool?

Anthropic's Code Review tool is an AI-driven system designed to evaluate code in GitHub pull requests, identifying issues like logic errors and security vulnerabilities using a multi-agent approach.

How does Anthropic's Code Review tool work?

The tool integrates with GitHub, automatically reviewing pull requests by employing multiple AI agents that specialize in different aspects of code analysis.

What are the benefits of using Anthropic's Code Review tool?

Benefits include enhanced accuracy in detecting code issues, improved security through vulnerability identification, and time savings by automating the review process.

How much does Anthropic's Code Review tool cost?

The tool uses a token-based pricing model, with costs of around $25 per review, depending on the size and complexity of the pull request.

What challenges might teams face when implementing this tool?

Common challenges include integration complexity, a learning curve for team members, and managing false positives.

How can teams overcome these challenges?

Solutions include providing comprehensive training, adopting a gradual rollout approach, and customizing the tool to fit specific team needs.

What future trends can we expect in AI code review tools?

Future trends include increased accuracy, enhanced integration with more platforms, real-time feedback capabilities, and AI-powered recommendations for issue resolution.

Key Takeaways

- Anthropic's tool uses a multi-agent approach for enhanced accuracy.

- Token-based pricing may increase costs for large codebases.

- The tool excels at detecting security vulnerabilities.

- Integration challenges can be managed with training and gradual rollout.

- Future trends include real-time feedback and AI recommendations.

