Understanding the Implications of Limiting Liability for AI-Enabled Disasters [2025]

Artificial Intelligence (AI) has evolved rapidly and is now integral to industries from healthcare to finance, where AI systems extend human capabilities and change how we live and work. As these technologies advance, so do the risks of deploying them. That has fueled a contentious debate over how much liability AI developers should bear, especially when failures are catastrophic.

TL;DR

- AI Liability Limitation: Recent legislative proposals aim to limit the liability of AI developers in cases of severe AI-enabled disasters. According to Transparency Coalition, these proposals are part of a broader effort to create a balanced regulatory environment.

- OpenAI's Support: OpenAI backs a bill that reduces liability for developers, provided they adhere to specific safety and transparency protocols.

- Industry Impact: Such legislation could set new standards, influencing how AI is developed and deployed globally, as noted by Crowell & Moring.

- Risk Management: Developers must implement rigorous safety measures and maintain transparency to mitigate potential risks, a sentiment echoed in Penn State's research.

- Future Outlook: The balance between innovation and accountability will shape the AI industry's future trajectory, as discussed in Harvard Business Review.

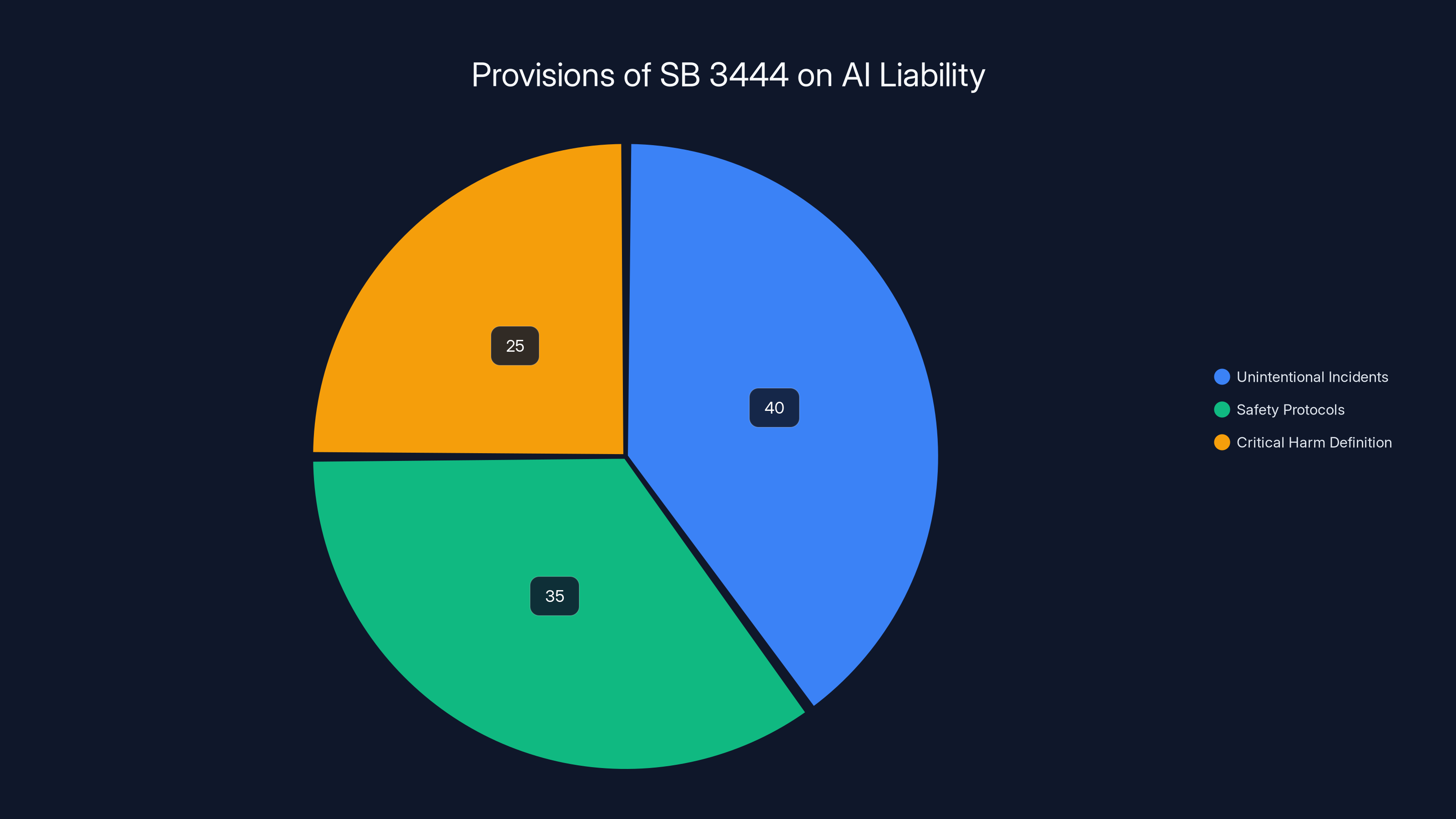

The pie chart shows how SB 3444's provisions divide their focus among unintentional incidents, safety protocols, and critical harm definition. Estimated data.

The Legislative Landscape

The legislative landscape surrounding AI liability is complex and still evolving. OpenAI recently supported SB 3444, an Illinois bill that proposes significant changes to how AI liability is handled. The bill aims to limit the liability of AI developers when their technology inadvertently causes significant harm, such as mass deaths or financial disasters exceeding $1 billion in damages.

Key Provisions of SB 3444

SB 3444 outlines specific conditions under which AI developers can be shielded from liability:

- Unintentional Incidents: Developers are protected if the harm was neither intentional nor due to reckless behavior.

- Safety Protocols: Developers must have published safety, security, and transparency reports.

- Critical Harm Definition: The bill specifically targets incidents involving mass casualties or significant financial losses.

These provisions highlight the importance of establishing clear guidelines and standards for AI development to ensure safety and accountability, as emphasized by Transparency Coalition.
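The three conditions above can be read as a simple eligibility check. The following is a minimal sketch of that reading, not the bill's legal text: the `Incident` record, its field names, and the `shield_may_apply` function are hypothetical, and the threshold is taken from the "$1 billion in damages" figure cited earlier in this article.

```python
from dataclasses import dataclass

# Assumed threshold, based on the article's "$1 billion in damages" figure.
CRITICAL_LOSS_USD = 1_000_000_000


@dataclass(frozen=True)
class Incident:
    """Hypothetical incident record; field names are illustrative only."""
    intentional: bool          # harm was caused on purpose
    reckless: bool             # harm resulted from reckless behavior
    reports_published: bool    # safety, security, and transparency reports exist
    mass_casualties: bool      # incident involved mass casualties
    financial_loss_usd: float  # estimated financial damage


def is_critical_harm(incident: Incident) -> bool:
    """Critical harm: mass casualties or losses above the assumed threshold."""
    return incident.mass_casualties or incident.financial_loss_usd > CRITICAL_LOSS_USD


def shield_may_apply(incident: Incident) -> bool:
    """All three summarized conditions must hold at once."""
    unintentional = not incident.intentional and not incident.reckless
    return is_critical_harm(incident) and unintentional and incident.reports_published
```

Under this sketch, an unintentional, non-reckless incident with $2 billion in losses and published reports would pass the check, while the same incident without published reports would not.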

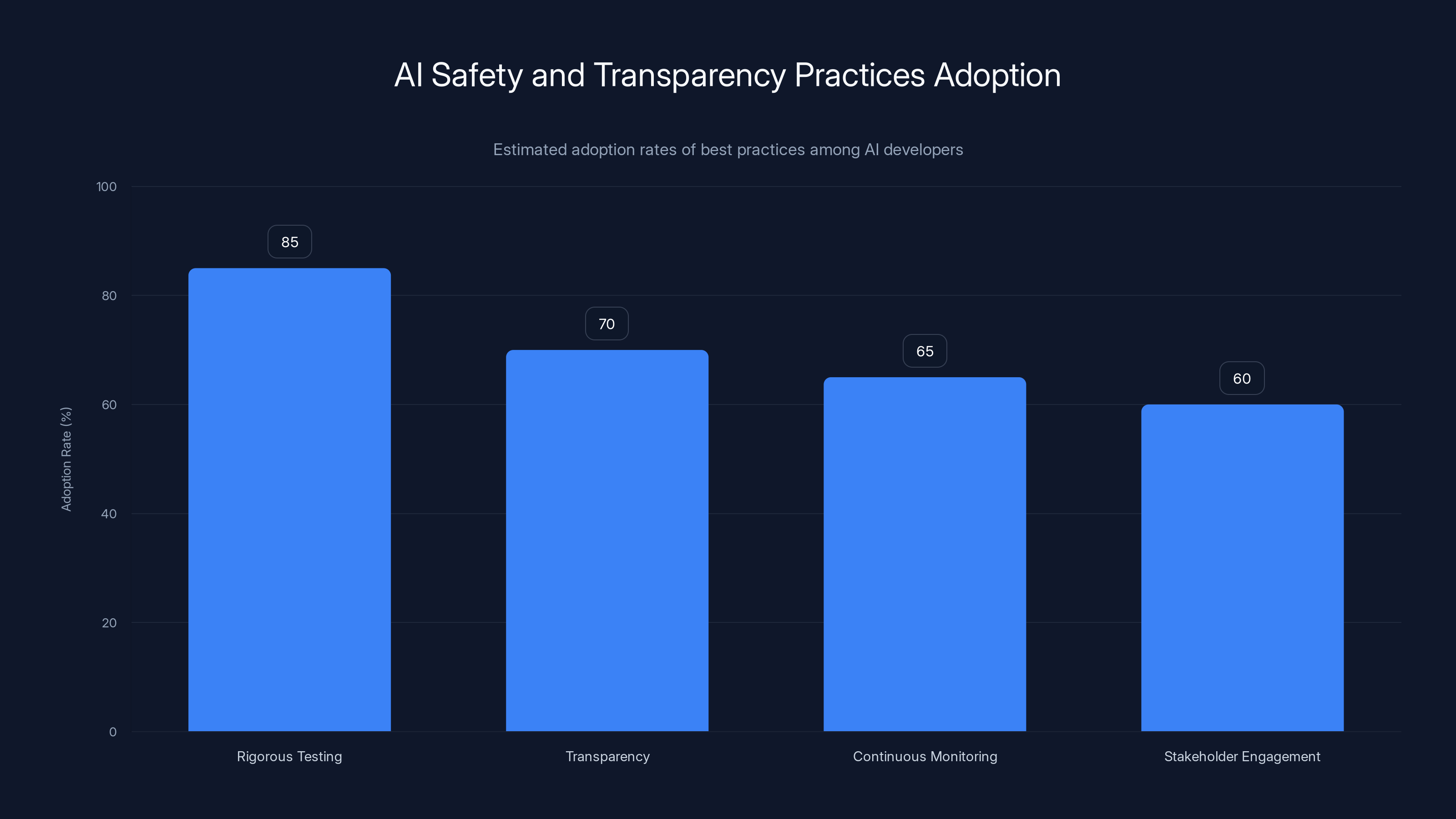

Estimated data shows that rigorous testing is the most adopted practice among AI developers, with an 85% adoption rate, while stakeholder engagement is less common at 60%.

Why Limit Liability?

The rationale behind limiting liability for AI-enabled disasters is twofold. It aims to encourage innovation by reducing the fear of excessive legal repercussions, and it acknowledges the inherent risks of deploying advanced technologies that are still being understood and refined.

Encouraging Innovation

Innovation in AI requires experimentation and iteration. Limiting liability can foster an environment where developers are encouraged to push boundaries without the constant fear of catastrophic legal consequences. This can lead to breakthroughs that might otherwise be stifled by overly rigid legal frameworks, as discussed in McKinsey's insights.

Balancing Risk and Reward

While innovation is crucial, it must be balanced with the potential risks involved. Limiting liability does not mean absolving developers of responsibility. Instead, it emphasizes the need for robust safety measures and transparency to prevent and mitigate potential harms, as highlighted by Microsoft's Inside Track.

Best Practices for AI Safety and Transparency

To align with legislative requirements and ethical standards, AI developers must adopt best practices that prioritize safety and transparency. These practices not only help in complying with laws like SB 3444 but also build trust with stakeholders and the public.

Implementing Rigorous Testing

AI systems should undergo extensive testing under various scenarios to identify potential failure points. This includes stress testing, adversarial testing, and scenario analysis to ensure that the AI behaves as expected in diverse conditions, as recommended by Cybernews.

Transparency in AI Models

Developers should provide clear documentation of AI models, including their intended use, limitations, and potential biases. Transparency reports should be regularly updated and made accessible to stakeholders, a practice supported by ABC7 News.

Continuous Monitoring and Feedback Loops

Once deployed, AI systems should be continuously monitored for performance and safety. Feedback loops allow developers to make necessary adjustments and improvements, reducing the likelihood of unintended consequences.
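One way to picture such a feedback loop is a rolling error-rate monitor that flags a deployed system for review once recent errors exceed a tolerance. This is a minimal sketch under stated assumptions: the class name `RollingErrorMonitor`, the window size, and the 5% threshold are all illustrative and do not come from any cited source.

```python
from collections import deque


class RollingErrorMonitor:
    """Tracks the error rate over the most recent `window` outcomes."""

    def __init__(self, window: int = 100, threshold: float = 0.05) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.threshold = threshold  # assumed tolerance, chosen for illustration
        # A bounded deque drops the oldest outcome automatically when full.
        self._outcomes = deque(maxlen=window)

    def record(self, is_error: bool) -> None:
        """Log whether the latest prediction was judged an error."""
        self._outcomes.append(is_error)

    def error_rate(self) -> float:
        """Share of errors in the current window (0.0 when empty)."""
        if not self._outcomes:
            return 0.0
        return sum(self._outcomes) / len(self._outcomes)

    def needs_review(self) -> bool:
        """True when the recent error rate exceeds the threshold."""
        return self.error_rate() > self.threshold
```

In practice, `record` would be called as labeled outcomes or user reports arrive, and a `needs_review()` result of `True` would trigger whatever human escalation process the developer has defined.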

Stakeholder Engagement

Engaging with stakeholders, including regulators, users, and affected communities, is essential for understanding the broader impact of AI systems. This engagement can inform better design and implementation practices, as noted in AI Multiple's analysis.

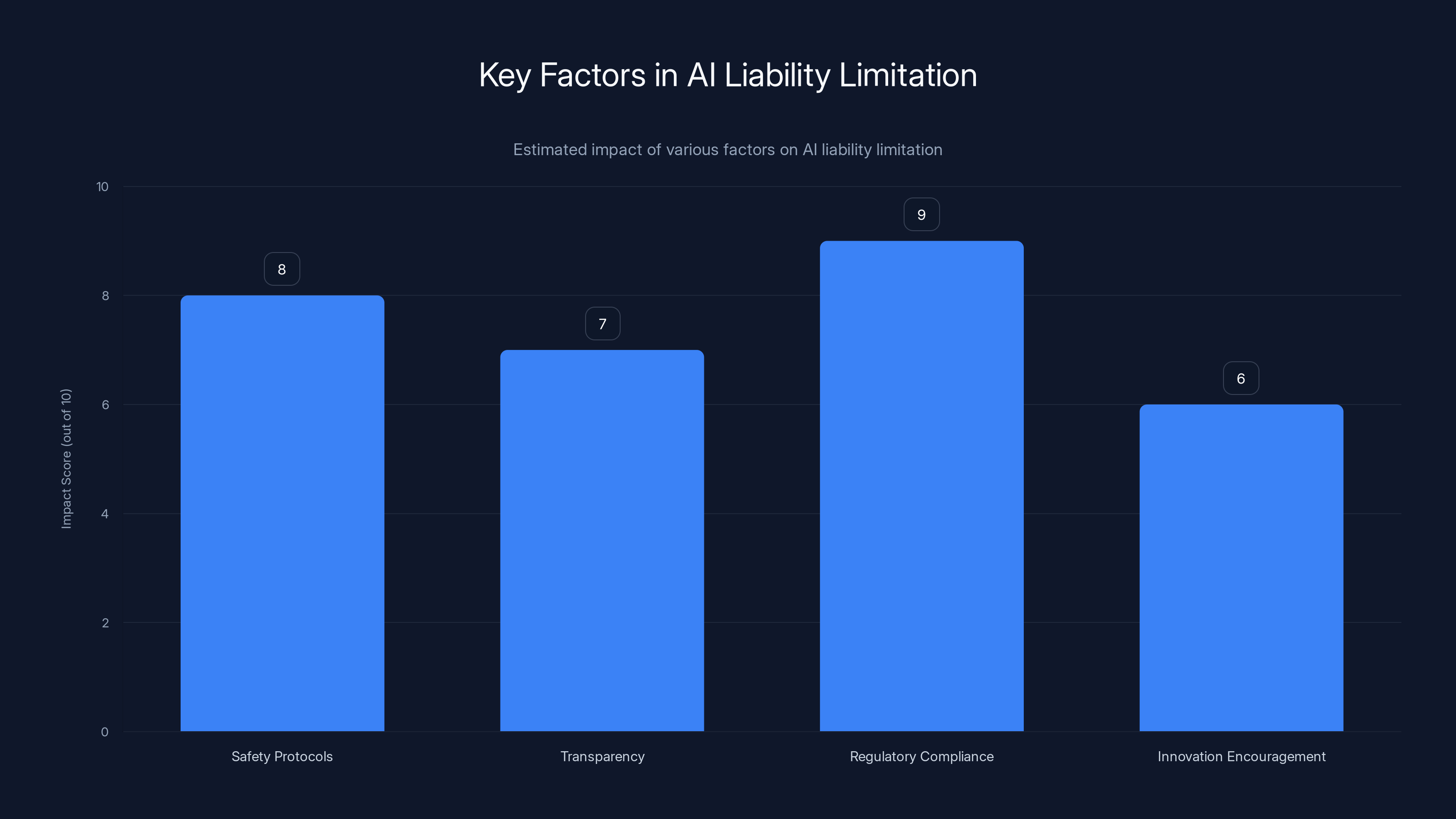

Safety protocols and regulatory compliance are the most impactful factors in AI liability limitation. Estimated data.

Common Pitfalls and Solutions

Despite best intentions, there are common pitfalls that AI developers may encounter. Understanding these challenges and their solutions is crucial for mitigating risks associated with AI deployment.

Overconfidence in AI Capabilities

Developers may overestimate what their AI systems can do, which leads to unwarranted trust in the systems' outputs. To counter this, developers should clearly communicate the limitations of their AI models and establish protocols for human oversight.

Inadequate Data Quality

AI models are only as good as the data they are trained on. Poor data quality can lead to biased or inaccurate outcomes. Developers should ensure data is representative, diverse, and regularly updated to maintain model accuracy, as advised by Latham & Watkins.
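A basic representativeness check compares each group's share of the training data with a reference share, such as its share of the target population. The sketch below assumes the reference shares are supplied by the developer; the function name `underrepresented_groups` and the 5-point tolerance are hypothetical choices for illustration.

```python
from collections import Counter


def underrepresented_groups(labels, reference_shares, tolerance=0.05):
    """Return groups whose observed share falls short of the reference share.

    labels: one group label per training record.
    reference_shares: mapping of group -> expected share (values near 0..1).
    tolerance: allowed shortfall before a group is flagged (assumed value).
    """
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = len(labels)
    flagged = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        if expected - observed > tolerance:
            flagged[group] = observed
    return flagged
```

A flagged group is only a prompt for further review; deciding what counts as representative for a given application still requires domain judgment.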

Lack of Cross-disciplinary Collaboration

AI development often requires expertise from multiple fields, including ethics, law, and domain-specific knowledge. Encouraging collaboration across disciplines can lead to more comprehensive and responsible AI solutions.

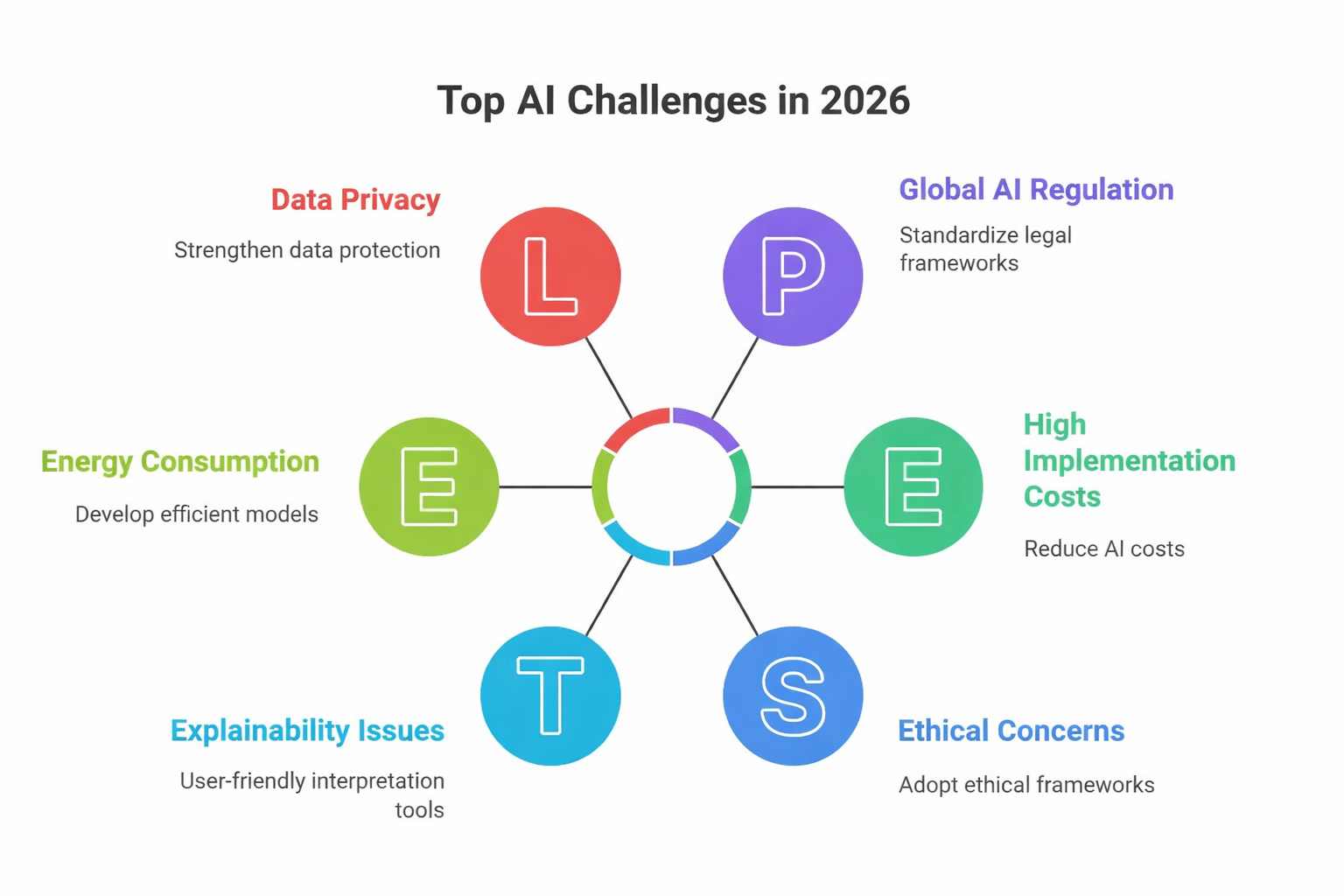

Future Trends and Recommendations

The future of AI liability and development is likely to be shaped by ongoing debates and technological advancements. Here are some trends and recommendations for navigating this evolving landscape.

Increased Regulatory Scrutiny

As AI systems become more integrated into critical infrastructures, regulatory scrutiny is expected to increase. Developers should proactively engage with regulators to shape policies that balance innovation with safety, as suggested by ABC7 News.

Emphasis on Ethical AI

Ethical AI development will continue to gain traction, with a focus on fairness, accountability, and transparency. Developers should incorporate ethical considerations into their design and deployment processes, as highlighted in Harvard Business Review.

Advancements in AI Safety Technologies

Technological advancements in AI safety, such as explainable AI and robust security measures, will play a crucial role in mitigating risks. Developers should stay informed about these advancements and integrate them into their systems.

Global Collaboration and Standards

AI development is a global endeavor, and collaboration across borders will be essential for establishing common standards and best practices. Developers should participate in international forums and contribute to the development of global AI frameworks, as encouraged by Penn State's research.

Conclusion

The debate over AI liability reflects the broader challenges and opportunities presented by AI technologies. By striking a balance between innovation and accountability, we can harness the potential of AI while safeguarding against its risks. As the landscape continues to evolve, developers, regulators, and stakeholders must work together to ensure a future where AI contributes positively to society.

FAQ

What is AI liability limitation?

AI liability limitation refers to legal frameworks that reduce the responsibility of AI developers for unintended consequences caused by their technologies, provided they adhere to specific safety and transparency protocols.

How does SB 3444 impact AI developers?

SB 3444 shields AI developers from liability in cases of severe harm, such as mass deaths or financial disasters, as long as they comply with safety and transparency requirements, as detailed by Wired.

Why is limiting liability important for AI innovation?

Limiting liability encourages innovation by allowing developers to experiment and iterate without excessive fear of legal repercussions, fostering breakthroughs in AI technologies, as discussed in McKinsey's insights.

What are some best practices for AI safety?

Best practices include rigorous testing, transparency in AI models, continuous monitoring, and stakeholder engagement to ensure safe and responsible AI development, as recommended by Cybernews.

How can developers mitigate risks associated with AI deployment?

Developers can mitigate risks by conducting thorough testing, ensuring data quality, fostering cross-disciplinary collaboration, and implementing robust safety measures, as advised by Latham & Watkins.

What future trends should AI developers be aware of?

Developers should be aware of increased regulatory scrutiny, the emphasis on ethical AI, advancements in AI safety technologies, and the importance of global collaboration and standards, as noted by Harvard Business Review.

Key Takeaways

- Legislative proposals aim to limit AI developer liability in severe disasters.

- OpenAI supports reducing developer liability with safety protocols in place.

- Balancing innovation with accountability is critical for AI's future.

- Developers must adopt best practices for safety and transparency.

- Future trends include increased regulation and ethical AI focus.

- Cross-disciplinary collaboration can enhance responsible AI development.

- Global standards and cooperation are vital for AI's positive impact.

