Unlocking Science: Building AI Researchers Can Trust [2025]

In the world of scientific research, trust is a currency. Researchers rely on data integrity, repeatability, and transparency to build upon past discoveries. But as Artificial Intelligence (AI) becomes increasingly integral to research methodologies, the question arises: How do we build AI systems that researchers can trust?

TL;DR

- Trustworthy AI requires transparency in algorithms and data sources.

- Ethics and accountability are critical in AI development.

- Open-source platforms can foster collaborative improvements and trust.

- Real-time data validation helps keep AI outputs reliable.

- Human oversight remains crucial to mitigate AI biases.

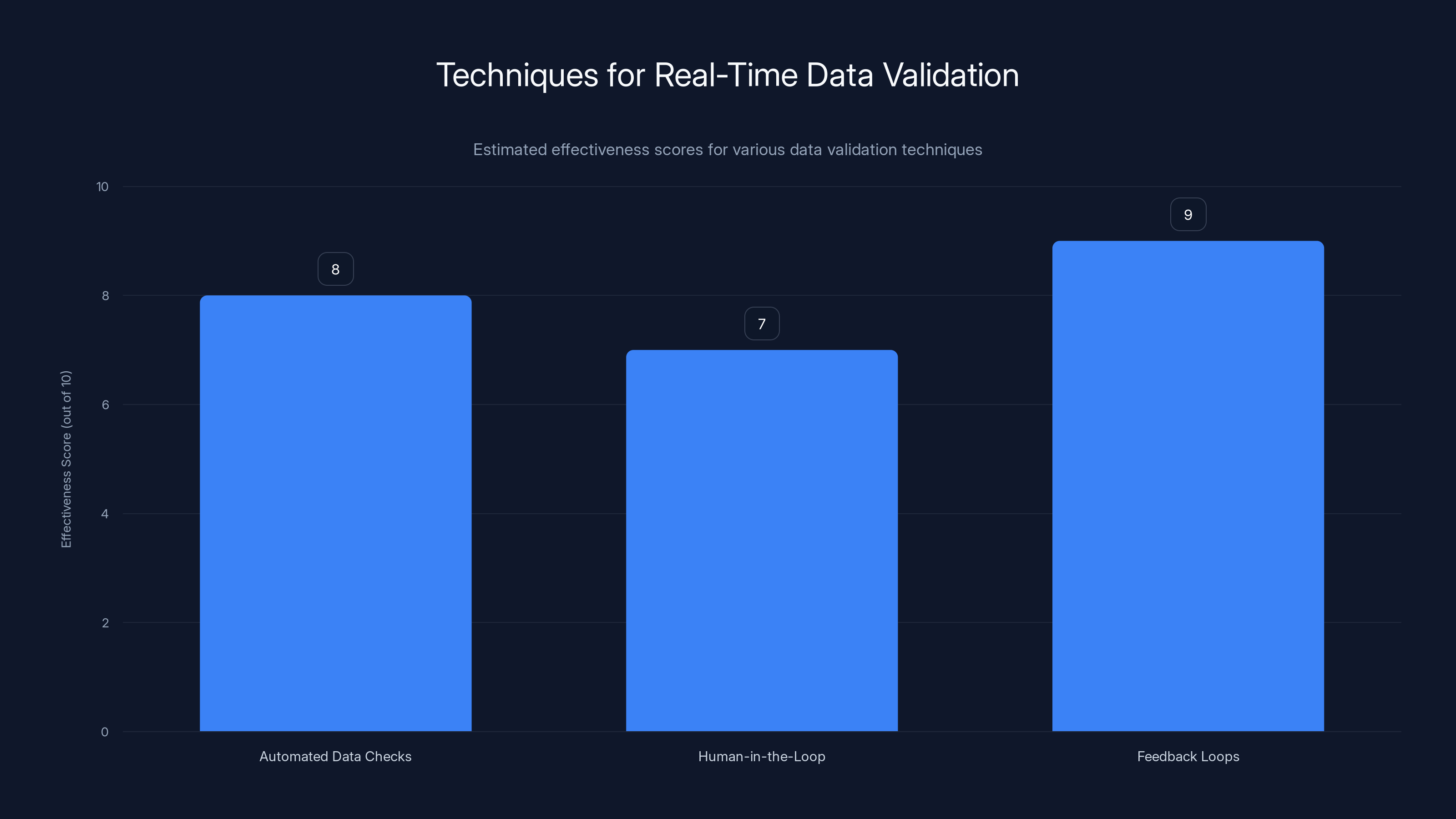

Feedback loops score highest among real-time data validation techniques, at 9 out of 10. (Estimated data)

The Need for Trustworthy AI in Research

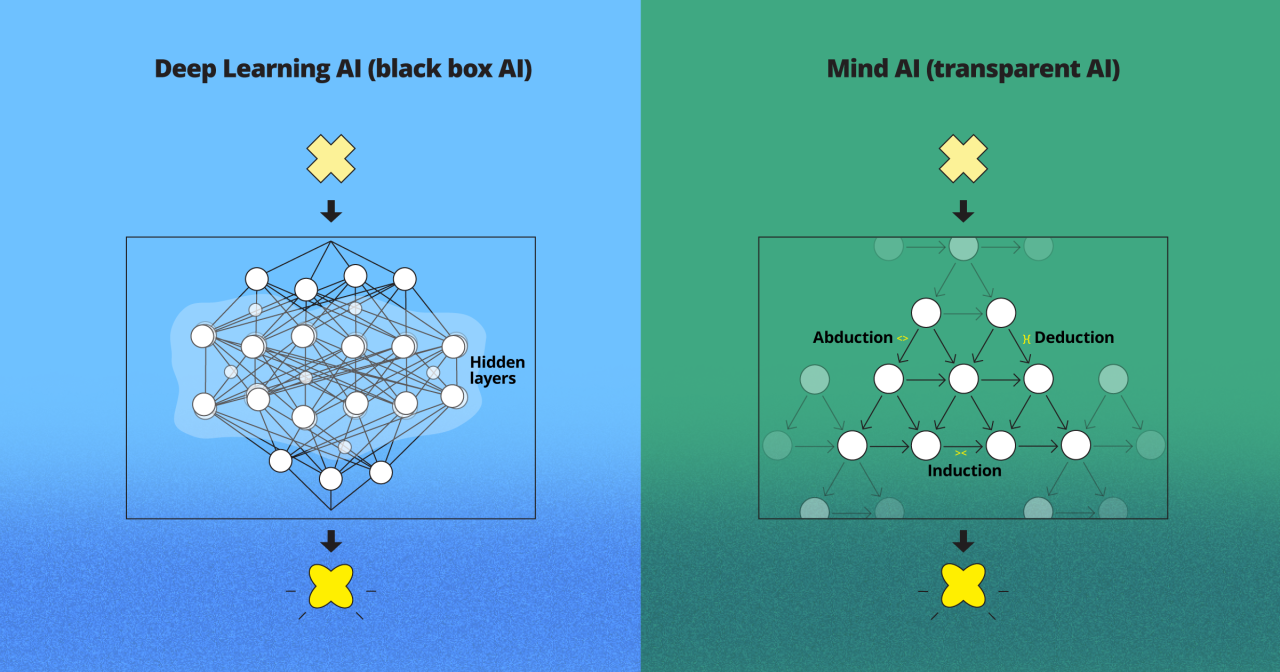

AI's potential in research is immense. It can analyze datasets faster than any human, recognize patterns invisible to the naked eye, and even predict outcomes with remarkable accuracy. However, the black-box nature of many AI systems poses a significant challenge to researchers who need to understand how conclusions are reached.

Transparency in AI Algorithms

For researchers to trust an AI, they need visibility into how it works. This means access to the algorithms, data sources, and decision-making processes. Transparency is not just about opening the hood; it’s about making it understandable to non-experts. According to a recent study published in Nature, transparency in AI is crucial for building trust among researchers.

Example: Consider an AI model predicting climate change effects. If researchers don't understand the model's assumptions and data inputs, they can't confidently use its predictions.

Ethical AI Development

Ethical considerations must be built into AI from the ground up. This involves ensuring that AI systems do not perpetuate biases, discriminate, or cause harm. It requires a commitment to moral principles in AI design and implementation. China's new rules on AI ethics highlight the global emphasis on integrating ethics into AI development.

Best Practice: Establish an ethics board that reviews AI projects from inception to deployment. This board should consist of multidisciplinary experts, including ethicists, sociologists, and technical experts.

Accountability in AI Systems

Accountability means designing AI systems so that the people and organizations behind them can be held responsible for what those systems do. This requires clear documentation and traceability of decisions made by AI systems. The insurance industry has been actively working on frameworks to ensure accountability in AI systems.

Quick Tip: Implement logging mechanisms that record decision pathways and data usage for post-analysis.
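As a rough illustration of the tip above, here is a minimal sketch of a decision log. The function name `log_decision`, the record fields, and the sample values are all hypothetical; a real system would likely write to durable, append-only storage rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone


def log_decision(log, model_version, inputs, output, data_sources):
    """Append one traceable decision record to `log` (a plain list here)."""
    # Hash a canonical JSON form of the inputs so the record can later be
    # matched against the exact data the model saw.
    payload = json.dumps(inputs, sort_keys=True)
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_hash": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "inputs": inputs,
        "output": output,
        "data_sources": list(data_sources),
    }
    log.append(record)
    return record


# Hypothetical usage: one prediction from an illustrative model.
audit_log = []
entry = log_decision(
    audit_log,
    model_version="demo-0.1",
    inputs={"temperature_c": 21.5},
    output="no_anomaly",
    data_sources=["station_a.csv"],
)
print(json.dumps(entry, indent=2))
```

Storing the input hash alongside the model version lets a reviewer confirm after the fact which data and which model produced a given output.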

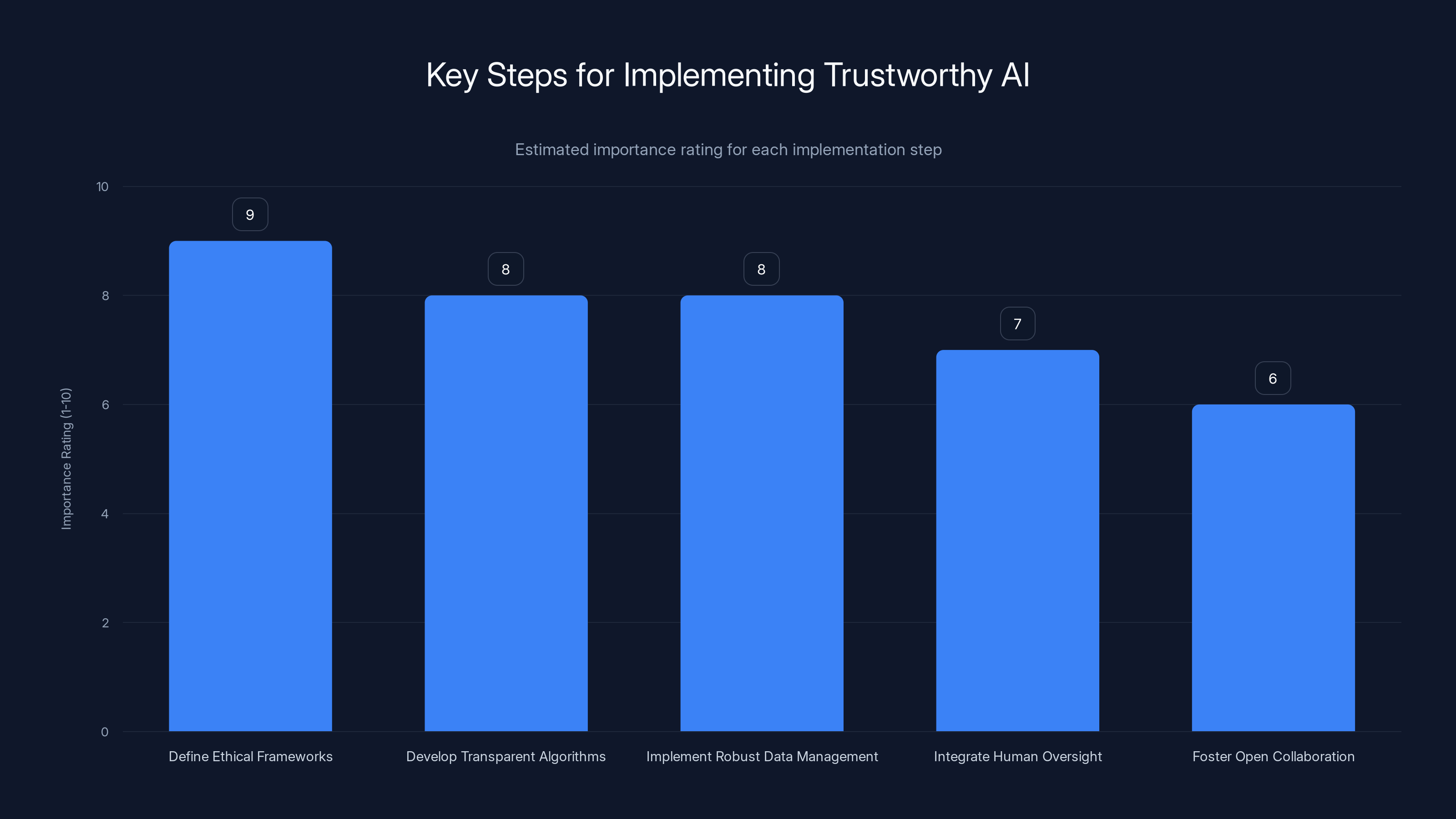

Defining ethical frameworks is rated as the most crucial step in implementing trustworthy AI, followed closely by developing transparent algorithms and robust data management. (Estimated data)

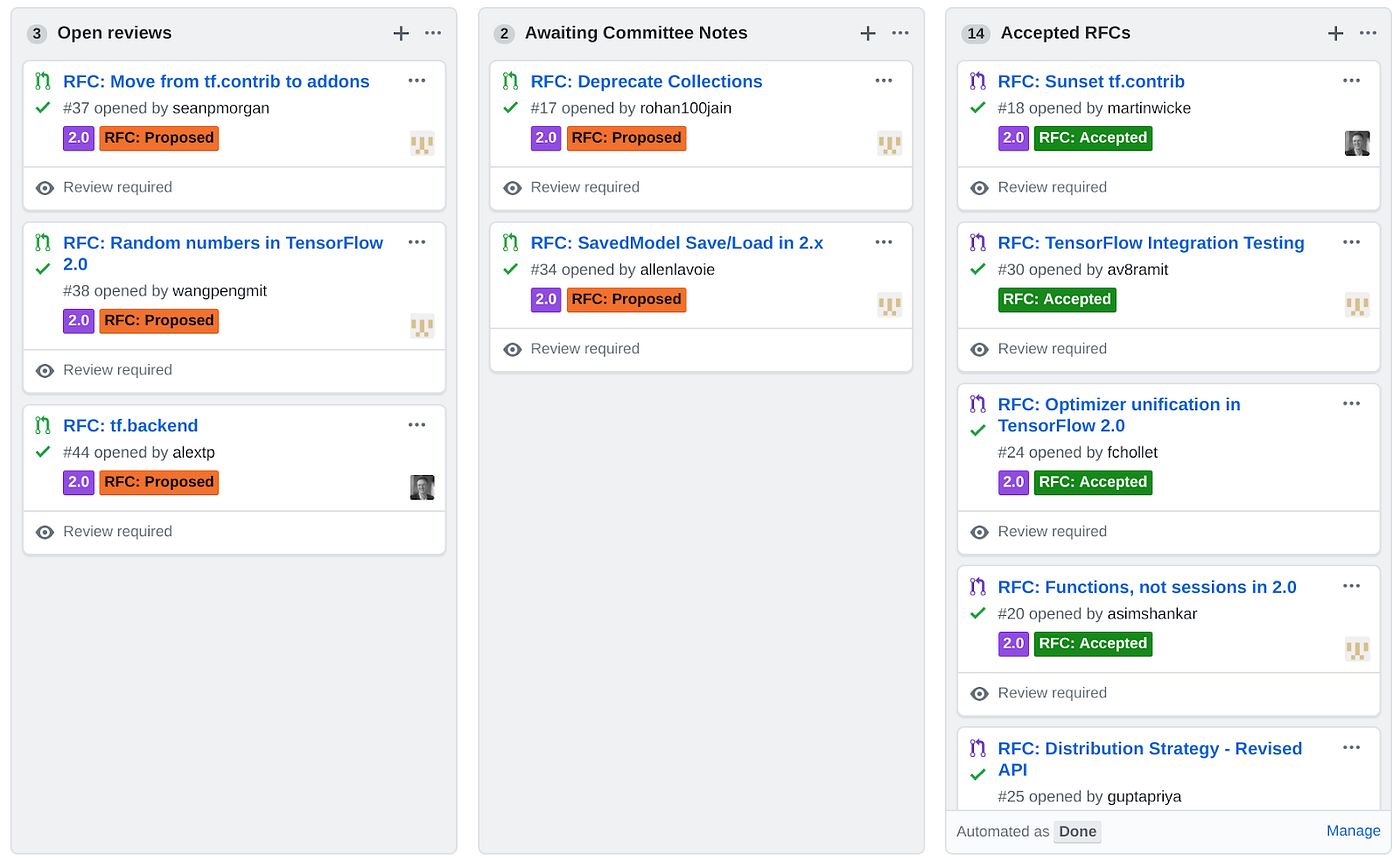

Building Trust Through Open Source

Open-source AI platforms allow researchers to inspect, modify, and enhance AI tools. This openness promotes collaboration, accelerates innovation, and builds trust within the scientific community. The addition of open-source hardware licenses to platforms like Thingiverse exemplifies the growing trend towards open-source collaboration.

Collaborative Development

Open-source projects can leverage the collective expertise of a global community. This collaborative approach not only improves AI tools but also democratizes access to advanced technologies. The assertion of American leadership in open-source AI highlights the strategic importance of collaborative development.

Case Study: The TensorFlow community, which has contributed to numerous enhancements and applications, showcases the power of open-source collaboration in AI development.

Benefits of Open Source in AI

- Transparency: Researchers can peer into the code that makes up AI systems.

- Cost-Effectiveness: Open-source tools often reduce the financial burden of research.

- Customizability: Researchers can tailor AI tools to specific research needs.

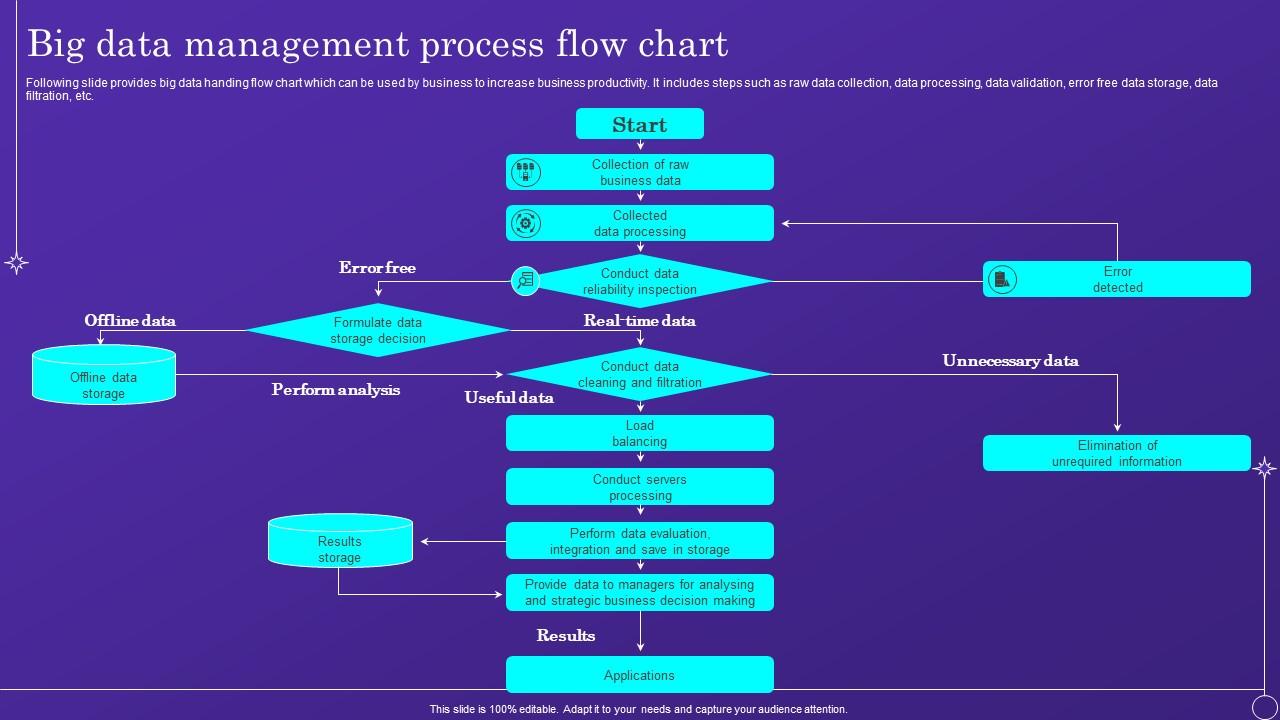

Implementing Real-Time Data Validation

Data validation is crucial for maintaining the integrity of AI outputs. Real-time validation helps ensure that AI systems operate on accurate and relevant data, which reliable predictions and insights depend on. The World Economic Forum emphasizes the importance of connected data quality for decision-ready AI.

Techniques for Data Validation

- Automated Data Checks: Implement scripts that continuously monitor data for anomalies or inconsistencies.

- Human-in-the-Loop Validation: Have human experts periodically validate data and AI outputs, as discussed in Clinical Leader.

- Feedback Loops: Use feedback from AI outputs to refine data inputs and improve accuracy.
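The automated checks described above could start as simply as the sketch below. The field names (`ph`, `temp_c`) and the allowed ranges are made up for illustration; real rules would come from the domain and the instruments involved.

```python
import math


def check_record(record, required, ranges):
    """Return a list of human-readable problems found in one data record."""
    problems = []
    # Flag required fields that are absent, None, or NaN.
    for field in required:
        value = record.get(field)
        if value is None or (isinstance(value, float) and math.isnan(value)):
            problems.append(f"{field}: missing")
    # Flag numeric fields that fall outside their allowed range.
    for field, (low, high) in ranges.items():
        value = record.get(field)
        if isinstance(value, (int, float)) and not math.isnan(value):
            if not (low <= value <= high):
                problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


# Hypothetical rules for a water-quality dataset.
REQUIRED = ["ph", "temp_c"]
RANGES = {"ph": (0, 14)}

good = check_record({"ph": 7.2, "temp_c": 20.0}, REQUIRED, RANGES)
bad = check_record({"ph": 15.3}, REQUIRED, RANGES)
print(good)
print(bad)
```

Records that return a non-empty list can be held back for a human expert, which ties the automated check to the human-in-the-loop step.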

Pitfalls in Data Validation

- Over-reliance on Automation: Automation should support, not replace, human judgment.

- Data Drift: AI systems must adapt to changes in data patterns over time to remain accurate.
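One simple way to watch for data drift is to compare a recent window of values against a reference window. The sketch below uses a mean-shift score measured in reference standard deviations. The threshold of 2.0 and the sample values are assumptions for illustration, and production systems often use more robust statistical tests.

```python
from statistics import mean, stdev


def mean_shift_score(reference, recent):
    """How many reference standard deviations the recent mean has moved."""
    ref_sd = stdev(reference)
    if ref_sd == 0:
        raise ValueError("reference window has no variation")
    return abs(mean(recent) - mean(reference)) / ref_sd


def has_drifted(reference, recent, threshold=2.0):
    """Flag drift when the shift exceeds the (assumed) threshold."""
    return mean_shift_score(reference, recent) > threshold


# Hypothetical sensor readings.
reference = [10.0, 10.5, 9.5, 10.2, 9.8]
stable = [10.1, 9.9, 10.0]
shifted = [12.0, 12.2, 11.8]

print(has_drifted(reference, stable))
print(has_drifted(reference, shifted))
```

A drift flag does not say what changed, only that the incoming data no longer looks like the data the model was validated on, which is usually the cue for a human to investigate.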

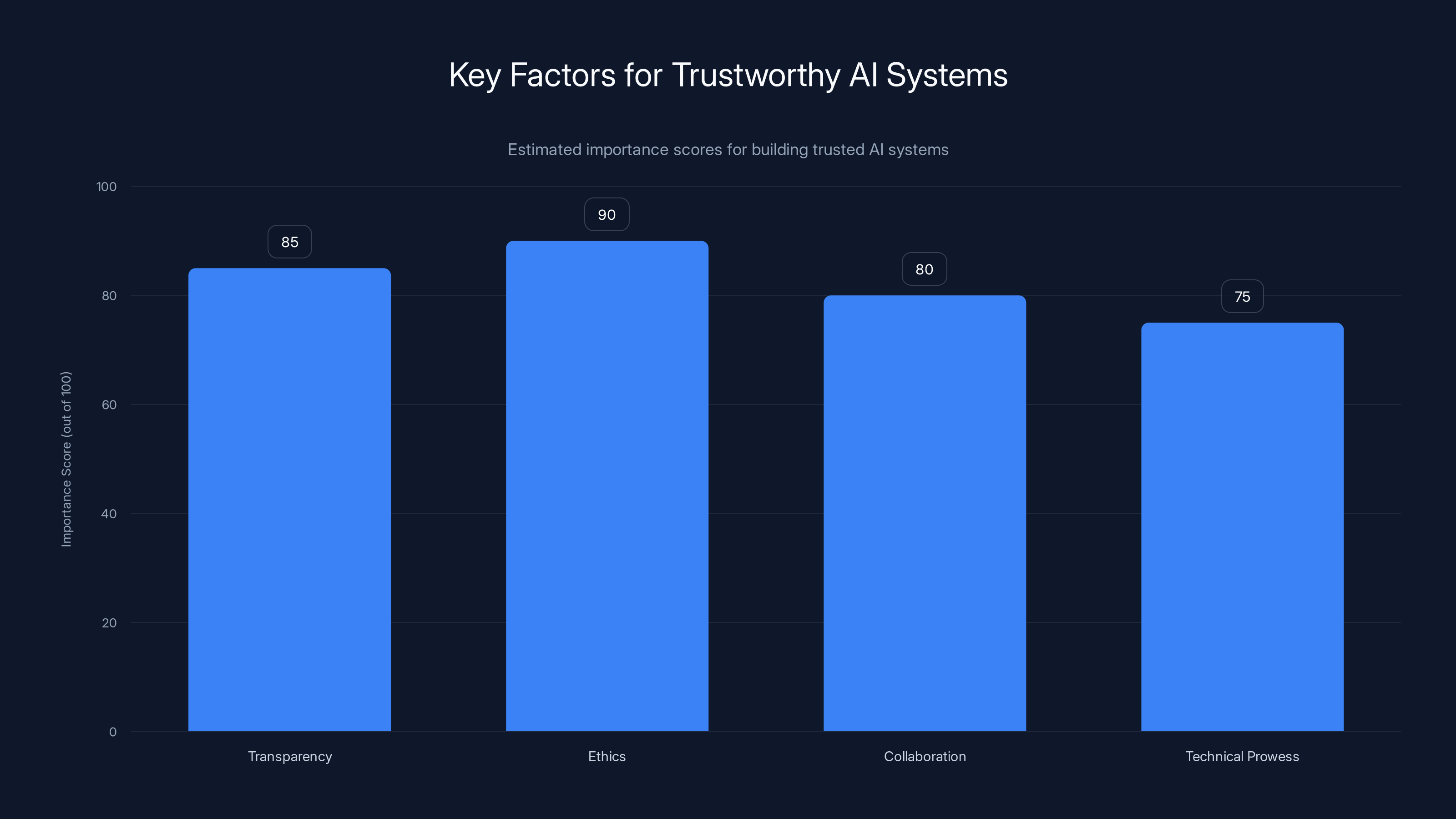

Ethics and transparency are rated as the most crucial factors in building AI systems that researchers can trust. (Estimated data)

Ensuring Human Oversight

While AI can automate many tasks, human oversight is essential to ensure that AI systems remain aligned with ethical standards and research goals. The partnership between USF and By Light underscores the importance of human oversight in AI for national security.

Roles of Human Oversight

- Bias Detection: Humans can identify biases that AI might overlook.

- Ethical Review: Regular audits of AI processes to ensure compliance with ethical standards.

- Decision Intervention: Humans can intervene in critical decisions that AI systems make.
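Decision intervention is often implemented as a confidence gate: predictions the model is unsure about go to a person instead of being accepted automatically. The sketch below assumes the model reports a confidence between 0 and 1; the 0.9 threshold and the status labels are hypothetical.

```python
def route_prediction(label, confidence, threshold=0.9):
    """Accept confident predictions; send the rest to a human reviewer."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        return {"label": label, "status": "auto_accepted"}
    return {"label": label, "status": "needs_human_review"}


# Hypothetical model outputs.
confident = route_prediction("benign", 0.97)
uncertain = route_prediction("malignant", 0.62)
print(confident)
print(uncertain)
```

The threshold is a policy choice rather than a technical one: lowering it reduces reviewer workload, while raising it puts more decisions in front of a human.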

Future Trend: As AI systems become more autonomous, human oversight is likely to shift from routine monitoring to strategic review.

Future Trends in Trustworthy AI

As AI continues to evolve, so too must the frameworks that govern its use in research. Emerging trends will shape the future of trustworthy AI.

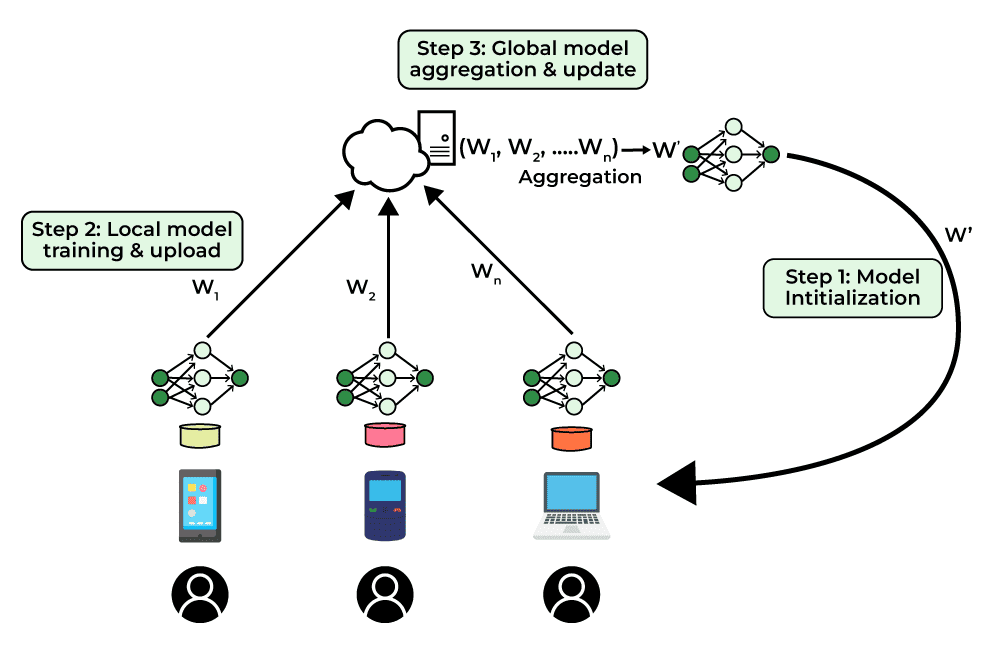

Federated Learning

Federated learning allows AI models to learn from decentralized data sources, enhancing privacy and security. This approach minimizes the risks associated with data sharing while maintaining AI model performance. The AI risk management framework by Databricks highlights the importance of privacy in AI development.
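To make the idea concrete, here is a minimal sketch of the aggregation step in one common approach, weighted parameter averaging (often called federated averaging). Each client trains locally and shares only its model weights and sample count, never the raw data. The function name and the toy numbers are illustrative assumptions, and real deployments add secure communication and many training rounds.

```python
def federated_average(client_updates):
    """Combine client weights, weighting each client by its sample count.

    client_updates: list of (weights, n_samples) pairs, where weights is a
    list of floats and every client uses the same number of parameters.
    """
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("no samples reported by any client")
    size = len(client_updates[0][0])
    averaged = [0.0] * size
    for weights, n in client_updates:
        if len(weights) != size:
            raise ValueError("clients disagree on parameter count")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Hypothetical updates from two institutions with different dataset sizes.
updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]
global_weights = federated_average(updates)
print(global_weights)
```

Only the weight lists and counts cross institutional boundaries in this sketch, which is the property that makes the approach attractive for sensitive research data.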

Explainable AI (XAI)

Explainable AI focuses on making AI decisions understandable to humans. By providing insights into how AI systems make decisions, XAI builds trust and facilitates adoption in sensitive research areas.
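One widely used explanation technique is permutation importance: shuffle a single input feature and measure how much the model's error grows. A feature the model relies on produces a large increase; a feature it ignores produces none. The sketch below is a simplified, standard-library version with a toy model, and every name and value in it is an illustrative assumption.

```python
import random


def permutation_importance(predict, rows, targets, feature_index, seed=0):
    """Increase in mean squared error after shuffling one feature column."""

    def mse(data):
        return sum((predict(r) - t) ** 2 for r, t in zip(data, targets)) / len(targets)

    baseline = mse(rows)
    # Shuffle only the chosen column, leaving every other feature in place.
    column = [r[feature_index] for r in rows]
    random.Random(seed).shuffle(column)
    shuffled = [
        r[:feature_index] + [v] + r[feature_index + 1:]
        for r, v in zip(rows, column)
    ]
    return mse(shuffled) - baseline


# Toy model that uses feature 0 and ignores feature 1.
def toy_model(row):
    return 2 * row[0]


rows = [[1.0, 5.0], [2.0, 3.0], [3.0, 9.0], [4.0, 1.0]]
targets = [2.0, 4.0, 6.0, 8.0]

used = permutation_importance(toy_model, rows, targets, feature_index=0)
ignored = permutation_importance(toy_model, rows, targets, feature_index=1)
print(used, ignored)
```

Because the toy model never reads feature 1, shuffling it cannot change the predictions, so its importance is exactly zero. In practice the shuffle is usually repeated several times and the results averaged.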

The Role of AI Ethics in Policy

Governments and institutions are increasingly recognizing the importance of AI ethics in policy-making. Establishing guidelines and regulations around AI use in research will be critical to maintaining public trust.

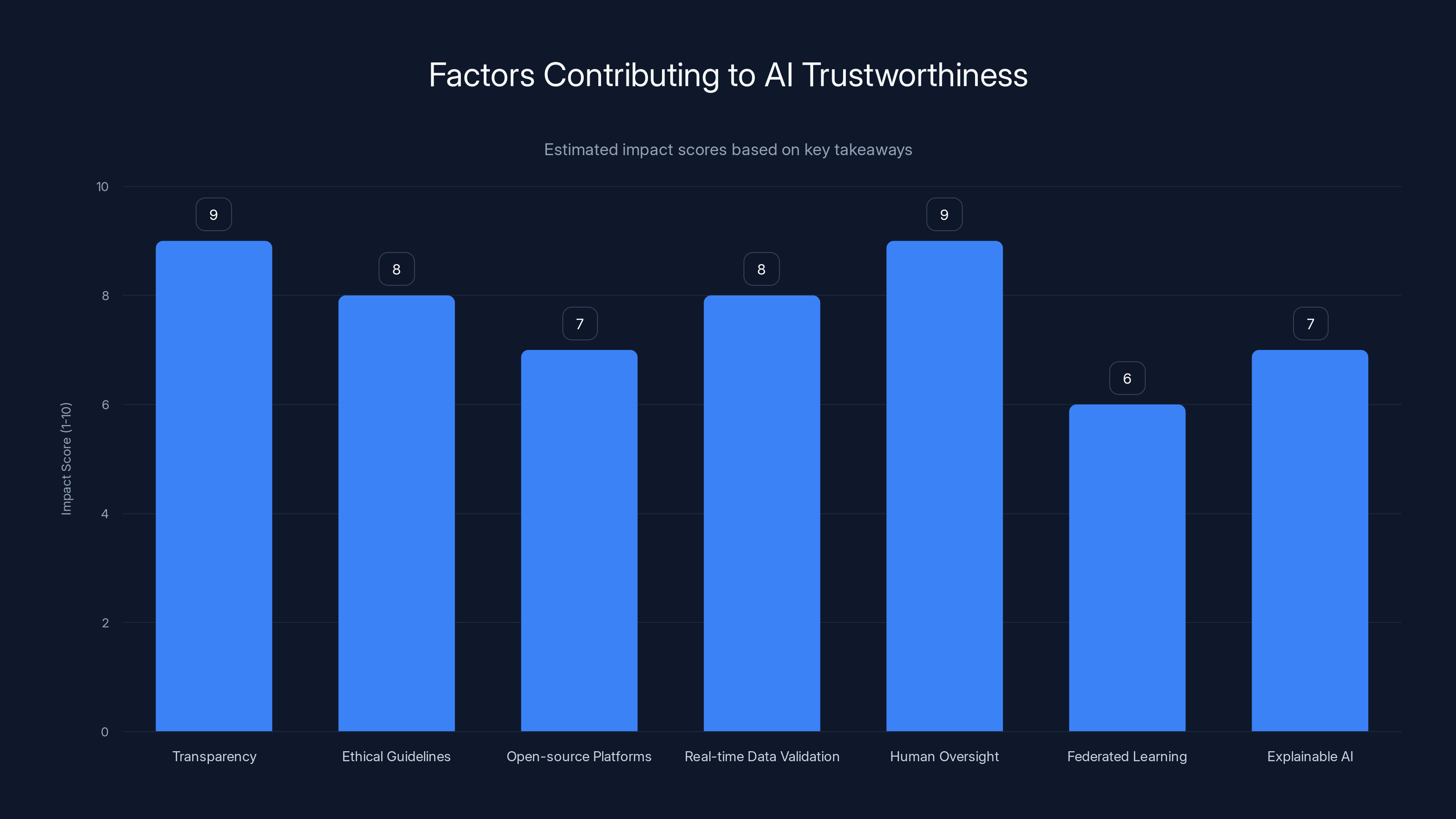

Transparency and human oversight are rated highest in contributing to AI trustworthiness. (Estimated data based on narrative insights)

Practical Implementation Guides

Building AI systems researchers can trust involves a series of practical steps. Here’s a blueprint for implementing trustworthy AI in research.

Step 1: Define Ethical Frameworks

Begin by establishing ethical guidelines that govern the development and deployment of AI systems. This should include:

- Ethical Principles: Define core principles such as fairness, transparency, and accountability.

- Stakeholder Involvement: Engage diverse stakeholders to ensure comprehensive ethical considerations.

Step 2: Develop Transparent Algorithms

Ensure that AI algorithms are transparent and interpretable:

- Document Algorithms: Provide detailed documentation of algorithm design and decision-making processes.

- Use Interpretable Models: Favor models that offer insights into decision-making over black-box models.

Step 3: Implement Robust Data Management

Data management is critical for AI reliability:

- Data Provenance: Track the origin and history of data used in AI systems.

- Data Quality Checks: Regularly validate data to ensure accuracy and relevance.
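Data provenance can be tracked with content hashes: each dataset version records its own fingerprint and the fingerprint of the version it was derived from. The sketch below shows one possible record shape; the field names and file names are hypothetical.

```python
import hashlib


def provenance_record(content, source, parent_sha256=None, note=""):
    """Describe one dataset version by its content hash and its origin."""
    return {
        "sha256": hashlib.sha256(content).hexdigest(),
        "source": source,
        "parent_sha256": parent_sha256,  # None for original, unprocessed data
        "note": note,
    }


# Hypothetical raw export and a cleaned version derived from it.
raw = b"sample,value\nA,1.0\nB,\n"
cleaned = b"sample,value\nA,1.0\n"

raw_record = provenance_record(raw, source="hypothetical_lab_export.csv")
cleaned_record = provenance_record(
    cleaned,
    source="cleaning step",
    parent_sha256=raw_record["sha256"],
    note="dropped rows with missing values",
)
print(cleaned_record)
```

Following the `parent_sha256` links backward reconstructs the history of any dataset, and recomputing a hash shows whether a file has changed since it was recorded.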

Step 4: Integrate Human Oversight

Incorporate human oversight at critical points in AI workflows:

- Review Processes: Establish processes for human review of AI decisions.

- Training Programs: Provide training for researchers to effectively oversee AI systems.

Step 5: Foster Open Collaboration

Promote open collaboration to accelerate AI development:

- Create Open Repositories: Share AI models and datasets to facilitate community contributions.

- Engage with the Community: Actively participate in AI communities to share insights and gather feedback.

Common Pitfalls and Solutions

Building trustworthy AI is not without challenges. Here are common pitfalls and strategies to overcome them:

Pitfall: Lack of Transparency

Solution: Implement explainability tools that provide insights into AI decision-making processes.

Pitfall: Bias in AI Models

Solution: Use diverse datasets and regularly audit AI models for biases.
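A basic bias audit can begin by comparing model accuracy across groups. The sketch below computes per-group accuracy and the gap between the best and worst served groups. The group labels and data are invented for illustration, and accuracy gap is only one of several fairness measures an audit might use.

```python
from collections import defaultdict


def accuracy_by_group(predictions, labels, groups):
    """Return {group: accuracy} for each group present in the data."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for p, y, g in zip(predictions, labels, groups):
        total[g] += 1
        correct[g] += int(p == y)
    return {g: correct[g] / total[g] for g in total}


def accuracy_gap(per_group):
    """Difference between the best and worst per-group accuracy."""
    return max(per_group.values()) - min(per_group.values())


# Hypothetical audit data: the model does much better on group "a".
predictions = [1, 0, 1, 1, 0, 0]
labels = [1, 0, 1, 0, 1, 0]
groups = ["a", "a", "a", "b", "b", "b"]

per_group = accuracy_by_group(predictions, labels, groups)
gap = accuracy_gap(per_group)
print(per_group, gap)
```

A large gap does not explain why the model underperforms for a group, but it tells auditors where to look first.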

Pitfall: Over-reliance on AI

Solution: Maintain a balanced approach by integrating human oversight and decision-making.

Conclusion

Building AI systems that researchers can trust involves more than just technical prowess. It requires a commitment to transparency, ethics, and collaboration. By following best practices and addressing common pitfalls, the scientific community can harness the power of AI while maintaining the trust that is fundamental to research.

FAQ

What is trustworthy AI?

Trustworthy AI refers to AI systems that are transparent, ethical, and accountable, ensuring they can be trusted by researchers and the public.

How can AI transparency be achieved?

Transparency can be achieved by providing access to AI algorithms, data sources, and decision-making processes, making them understandable to non-experts.

What role does ethics play in AI development?

Ethics ensures that AI systems do not perpetuate biases, discriminate, or cause harm, and that they adhere to moral principles throughout their lifecycle.

How can human oversight enhance AI systems?

Human oversight can identify biases, ensure ethical compliance, and intervene in critical decisions, enhancing the reliability and trustworthiness of AI systems.

What are the benefits of open-source AI platforms?

Open-source platforms promote transparency, reduce costs, facilitate collaboration, and allow researchers to tailor AI tools to specific needs.

What are common pitfalls in building trustworthy AI?

Common pitfalls include lack of transparency, bias in AI models, and over-reliance on AI, which can be addressed through explainability tools, diverse datasets, and human oversight.

Key Takeaways

- Transparency is crucial for AI trustworthiness.

- Ethical guidelines are essential for responsible AI use.

- Open-source platforms enhance collaboration and trust.

- Real-time data validation helps keep AI outputs accurate.

- Human oversight mitigates bias and ethical risks.

- Future trends include federated learning and explainable AI.

- Common pitfalls can be overcome with best practices.

- AI trustworthiness requires a balance of technical and ethical considerations.
