Why AI-Generated Passwords Are Dangerously Weak [2025]

Introduction: The False Promise of AI-Generated Passwords

Here's something that should keep you up at night: your AI-generated password probably isn't as strong as it looks.

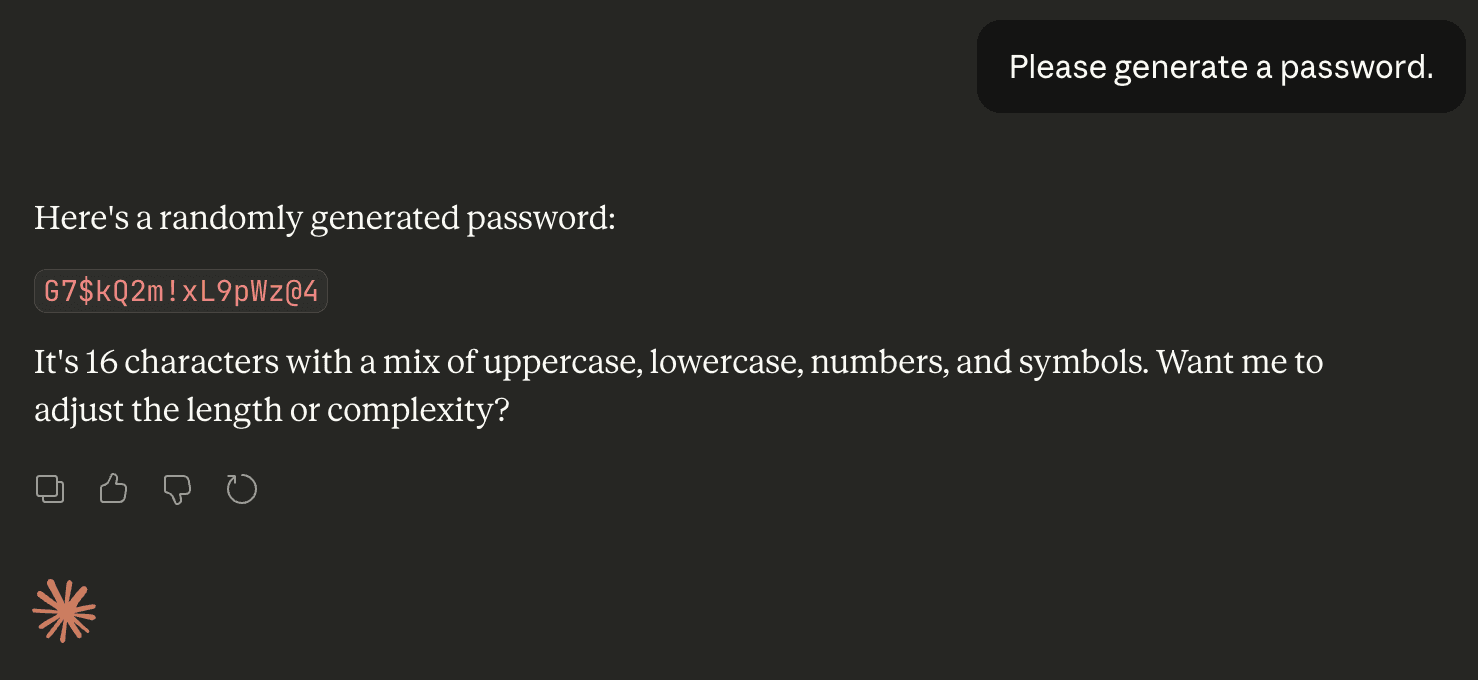

You ask ChatGPT, Claude, or Gemini to generate a secure password. The system spits out something like M7$kPq@9xL2#vN. It looks solid. Random. Unbreakable. You run it through an online password strength checker, and the verdict is reassuring. "Your password would take 847 years to crack," it says confidently.

But that's a lie. Well, not an intentional one. It's the kind of lie that emerges when a testing tool doesn't understand the underlying problem.

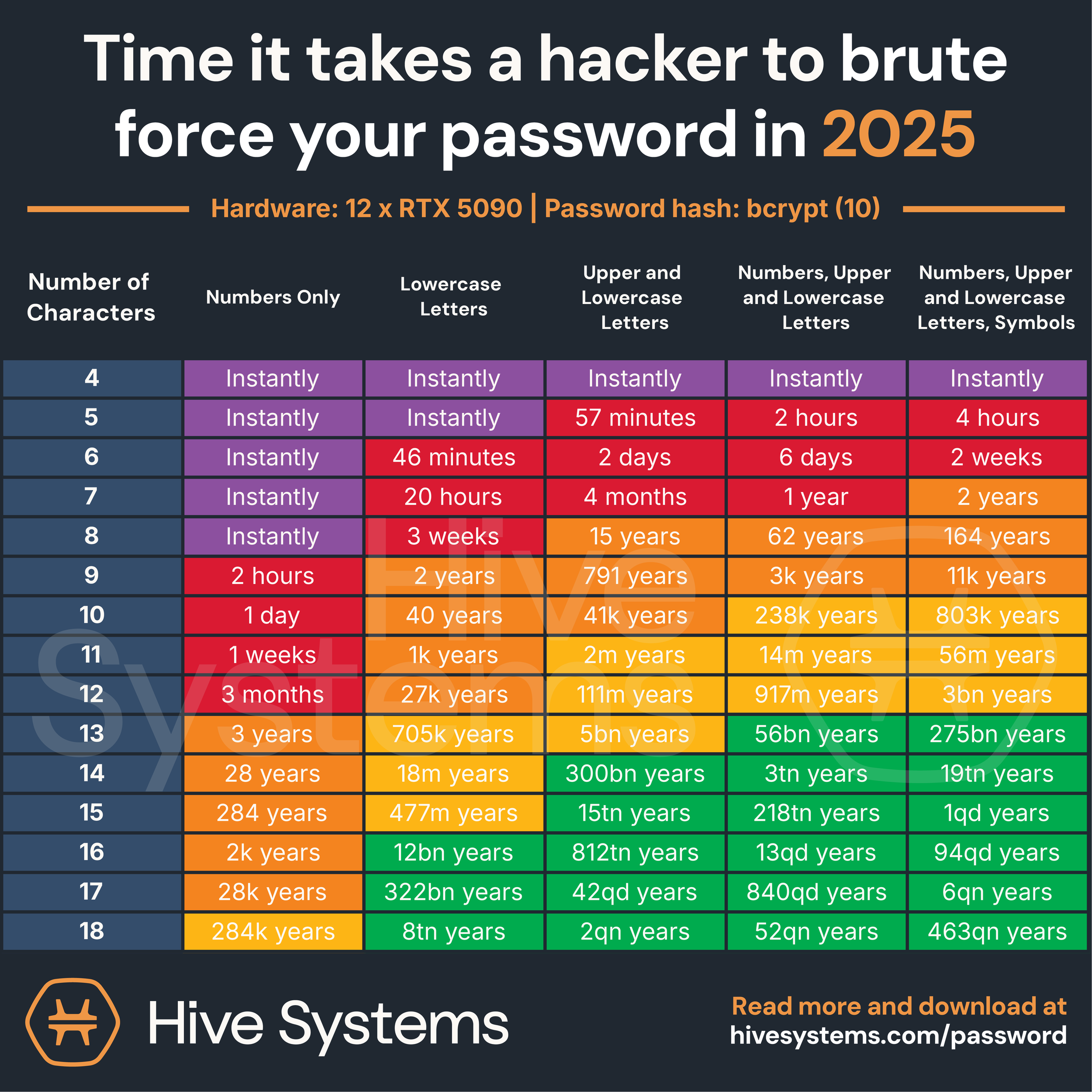

Recent research from security teams has exposed a critical flaw in how large language models generate passwords. These aren't random strings at all. They're statistically predictable outputs shaped by the model's training data and how it was designed to generate plausible text. And here's the kicker: attackers who understand this pattern could crack these passwords in hours, not centuries, using nothing more than a moderately powered computer.

This matters because more people are asking AI to do security-critical tasks every day. Developers slip AI-generated passwords into test environments. Small business owners use them for admin accounts. Teams generate credentials during rapid deployment cycles. Each one is making the same assumption: if the AI generated it, it must be random.

It's not. And understanding why is crucial for anyone responsible for securing accounts or systems.

The problem isn't that AI is stupid. It's that language models are fundamentally optimized to do the opposite of what secure password generation requires. They're trained to produce predictable, plausible output. Security demands unpredictable chaos. These two goals are incompatible, and no amount of prompt engineering or temperature tweaking will fix it.

Let's dig into what's actually happening when AI generates passwords, why it fails, and what you should do instead.

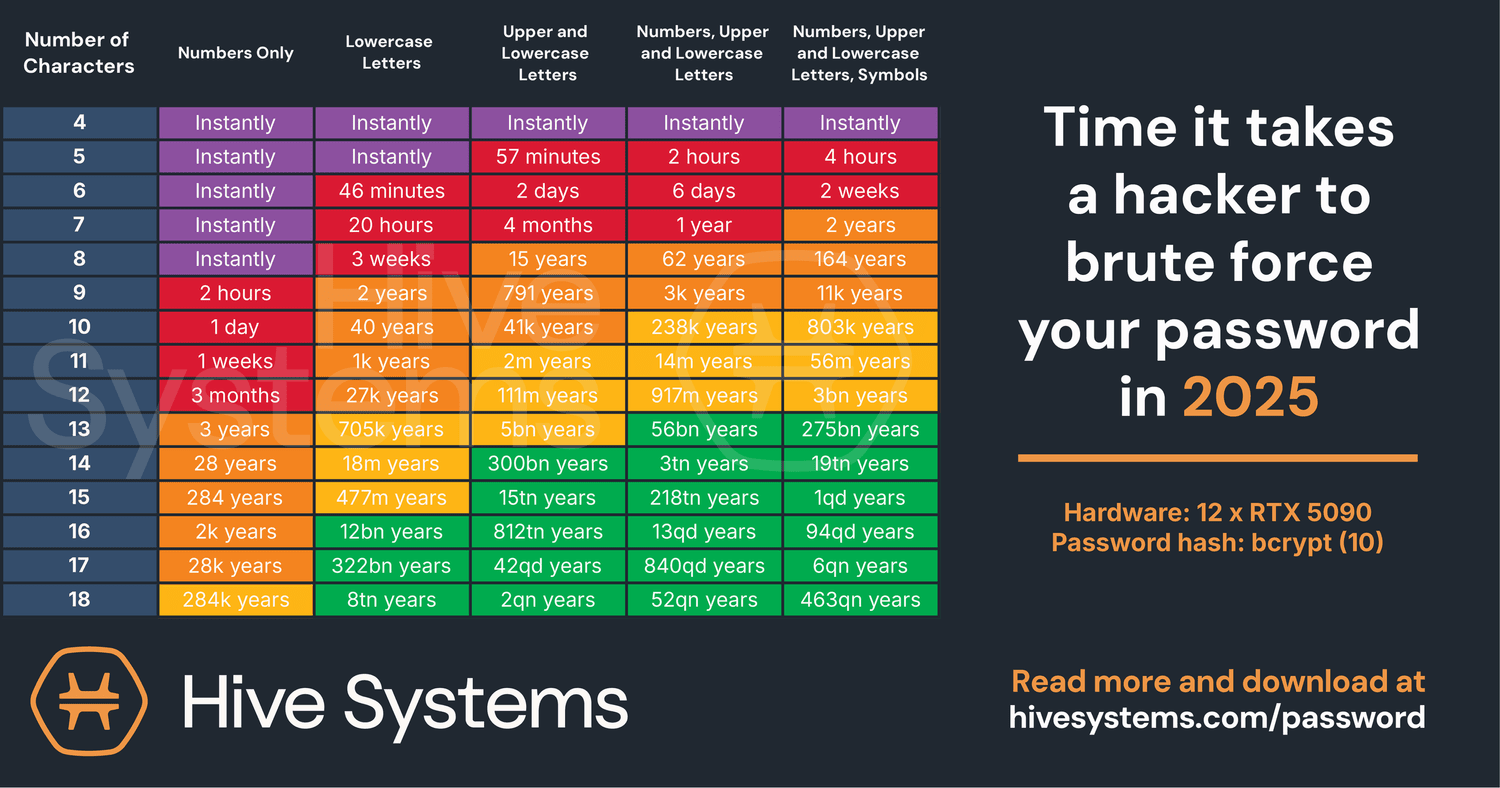

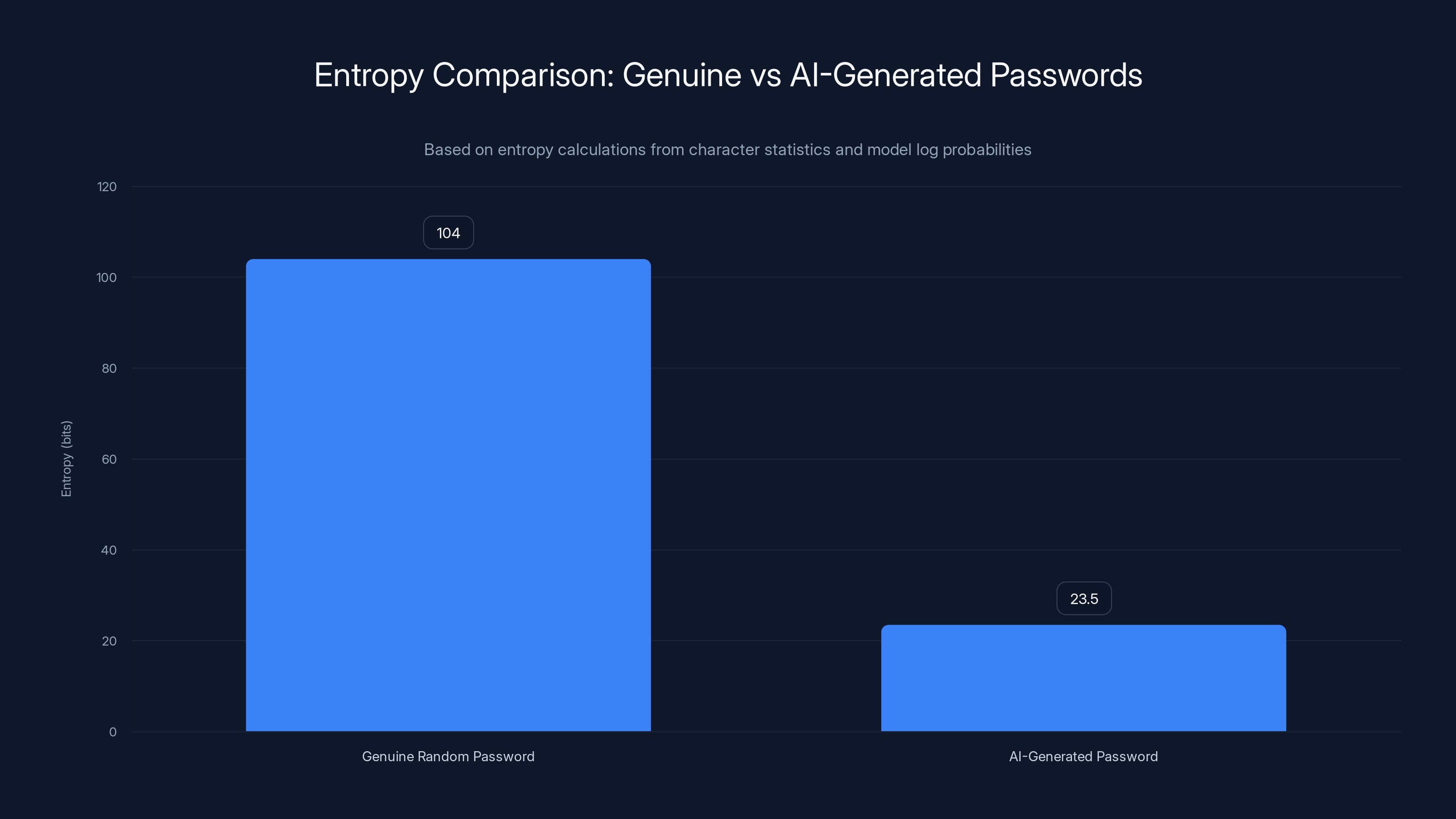

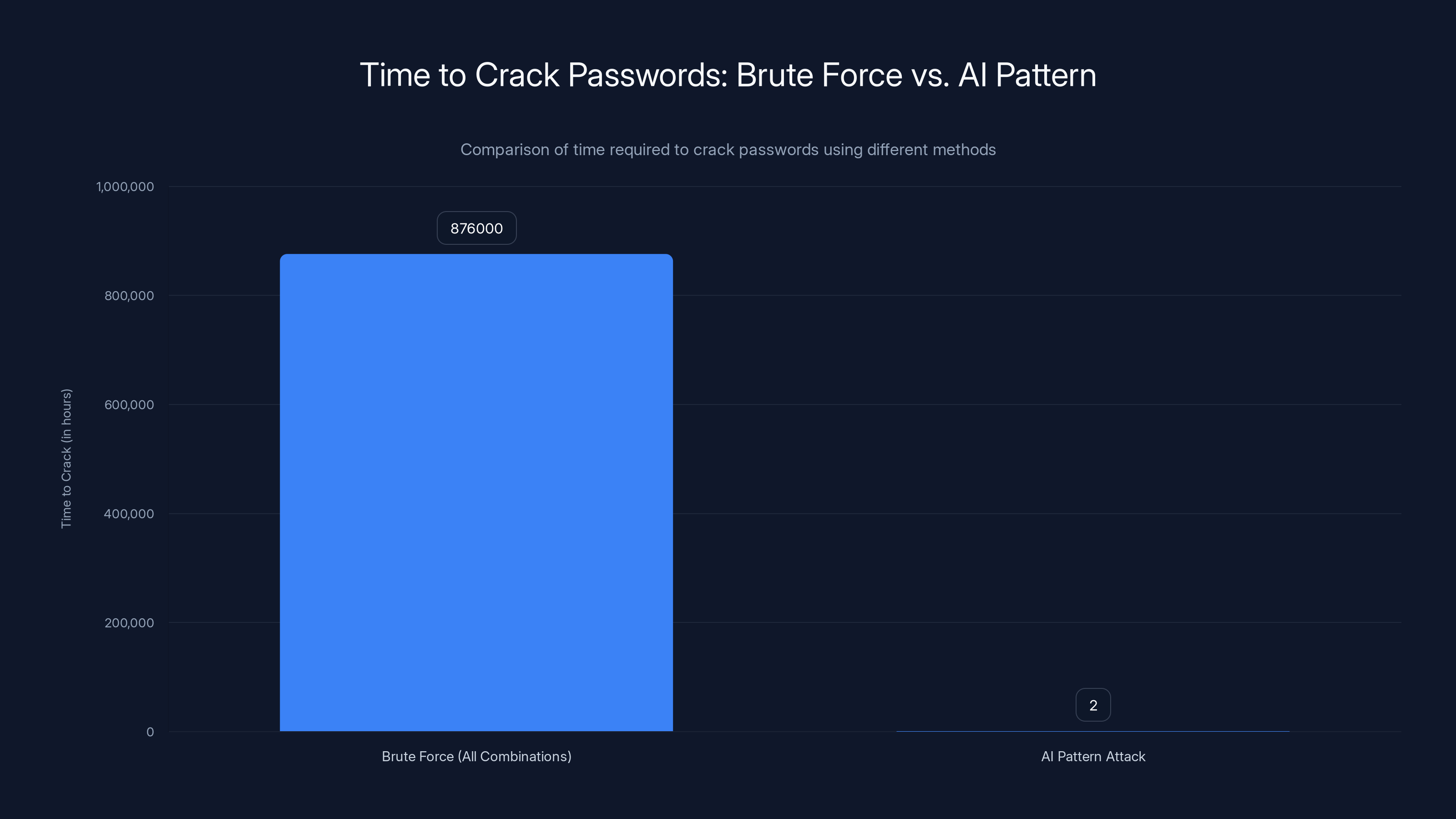

Random 16-character passwords have significantly higher entropy (98-120 bits) compared to AI-generated passwords (20-27 bits), making them much more secure. Estimated data.

TL;DR

- AI passwords look strong but aren't: AI-generated passwords pass online strength tests but contain only 20-27 bits of entropy instead of 98-120 bits for true random passwords

- They follow predictable patterns: Researchers found that 50% of AI-generated passwords were near-duplicates, suggesting learned patterns rather than randomness

- Brute-force attacks could succeed in hours: The entropy gap means older hardware could crack these passwords far faster than traditional estimates

- Online testers are misleading: Password strength meters evaluate surface complexity, not underlying statistical predictability

- Use password managers instead: Dedicated password manager tools use cryptographic randomness, not language models, making them genuinely secure

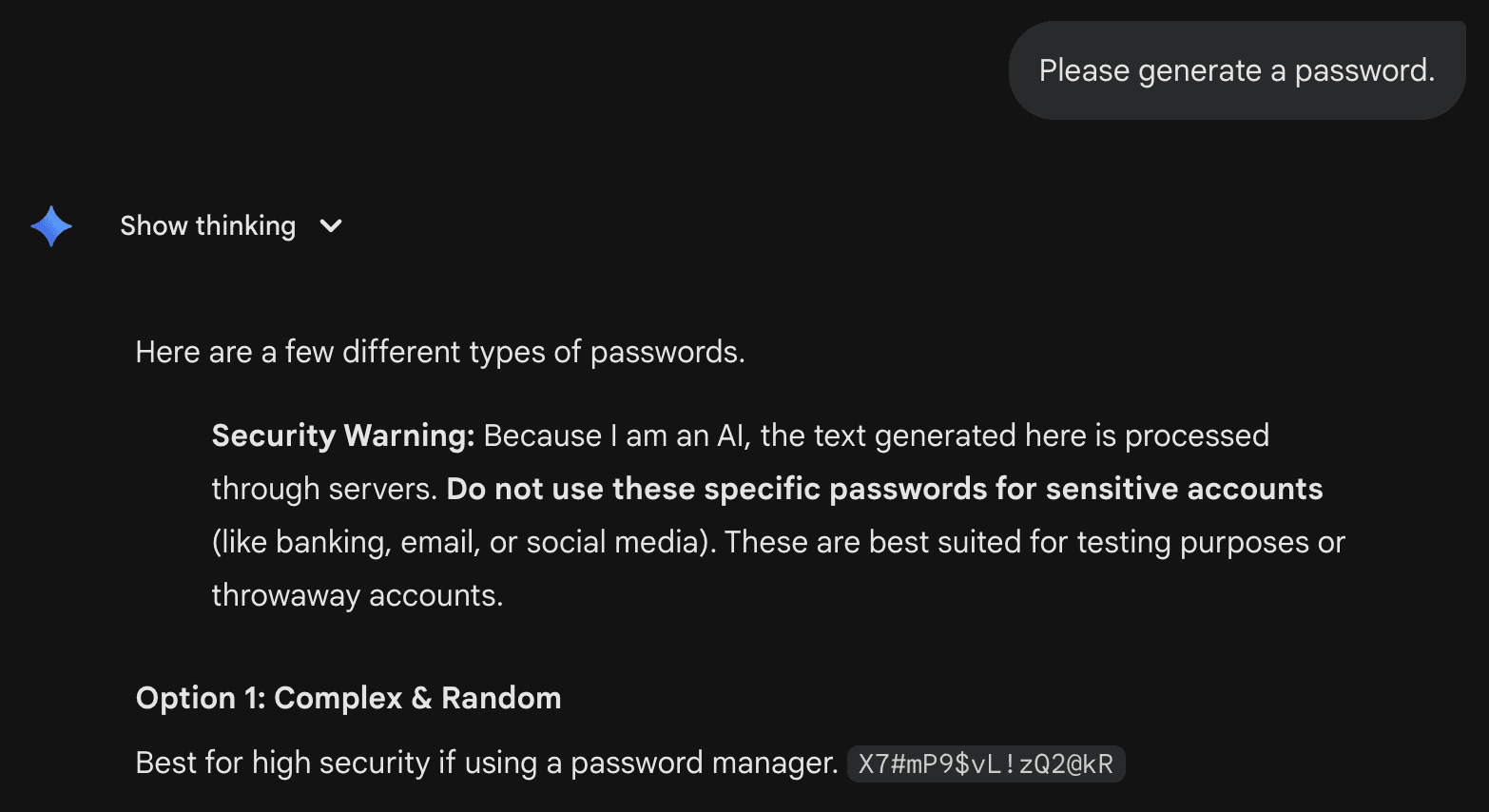

- AI itself warns against trusting AI passwords: Even Gemini 3 Pro recommends against using AI-generated credentials for sensitive accounts

How Language Models Generate Text (And Why It's Wrong for Passwords)

To understand why AI passwords fail, you need to know how large language models actually work.

When you ask ChatGPT to generate a password, you're asking a statistical prediction engine to do something it was never designed to do. Here's the mechanical reality: LLMs predict the next token (a chunk of text, often several characters long) based on probability distributions learned from billions of examples of human text. Each token is selected from a probability distribution. The model doesn't flip fair coins. It calculates: given what came before, how likely is each possible next token? Then it samples from that lopsided distribution, so the most likely continuations win most of the time.

This is fine for writing essays. It's catastrophic for password generation.

Password security depends on something called entropy, which measures unpredictability. A truly random password maximizes entropy because no one, including the system that generated it, can predict what comes next. It's chaos. It's impossible to guess.

Language models, by contrast, are trained to minimize surprise. During training they're penalized whenever they assign low probability to the text that actually comes next. A good language model is predictable. A good password is not.

When you set temperature to maximum (a technique users sometimes try to add "randomness"), you're not fixing the fundamental problem. You're just making the model less coherent. The underlying probability distributions are still shaped by training data. Still biased toward patterns that appeared frequently in that data.
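To see why turning up the temperature doesn't rescue the output, here's a minimal Python sketch. It assumes a made-up set of eight logits (raw scores) for candidate next characters; a real model scores tens of thousands of tokens. Temperature divides the logits before the softmax, which flattens the distribution and raises its Shannon entropy, but at any finite temperature the result stays below the entropy of a uniform pick, so the learned skew is still there.

```python
import math

def softmax(logits, temperature):
    """Temperature-scaled softmax: divide logits by T, then normalize to probabilities."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def shannon_entropy_bits(probs):
    """Shannon entropy H = -sum(p * log2(p)), in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical logits for 8 candidate next characters (made-up values).
LOGITS = [4.0, 3.2, 2.5, 1.0, 0.5, 0.0, -1.0, -2.0]
UNIFORM_BITS = math.log2(len(LOGITS))  # a fair pick among 8 options = 3 bits

for t in (0.7, 1.0, 1.5):
    h = shannon_entropy_bits(softmax(LOGITS, t))
    print(f"T={t}: {h:.2f} bits (uniform: {UNIFORM_BITS:.2f} bits)")
```

Raising T moves the entropy toward the uniform ceiling, but the ranking of characters the model learned is preserved at every setting.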

Think about it this way: if billions of humans generated passwords and recorded them somewhere, and that data ended up in your model's training set, then your model has learned the statistical patterns of human password generation. It's not going to break those patterns just because you asked it to be "more random."

Even the AI companies themselves know this is a problem. Gemini 3 Pro, when pressed to generate passwords, now returns the output alongside a warning: don't use these for sensitive accounts. The warning exists because the engineers know what's happening under the hood.

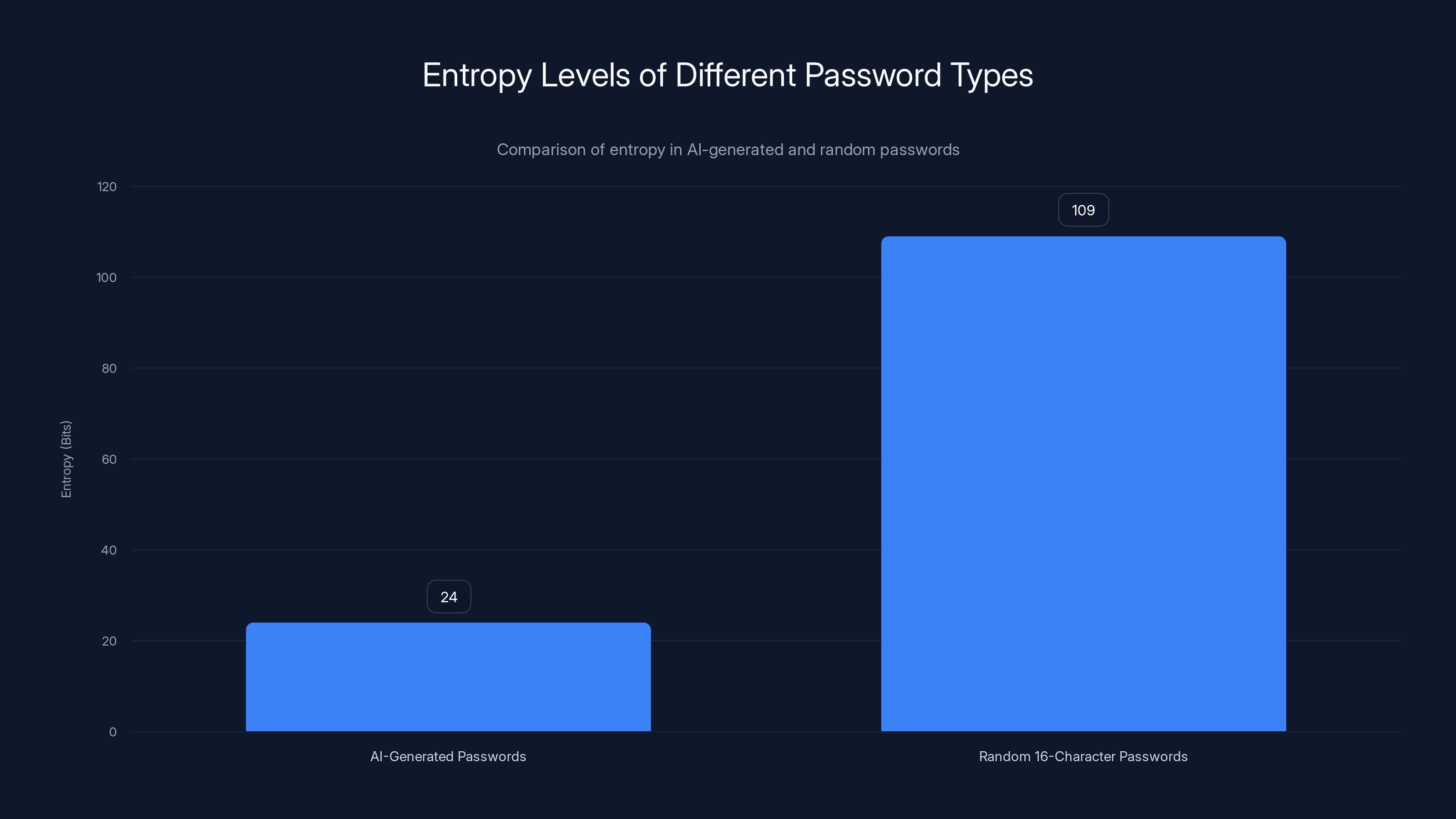

Genuine random passwords have an average entropy of 104 bits, while AI-generated passwords only have about 23.5 bits, making them significantly weaker.

The Entropy Gap: Why AI Passwords Are Far Weaker Than They Appear

Let's talk numbers, because numbers don't lie.

Researchers analyzed 50 AI-generated passwords from multiple systems. Here's what they found using entropy calculations based on character statistics and model log probabilities.

A genuine random 16-character password typically contains between 98 and 120 bits of entropy. When you pick each character independently from a pool of ~95 possible characters (uppercase, lowercase, numbers, symbols), the entropy is calculated using the formula:

$$H = n \log_2 k$$

Where:

- n = number of characters (16)

- k = size of the character pool (95)

- H = total entropy in bits

For a 16-character password, that gives:

$$H = 16 \times \log_2 95 \approx 16 \times 6.57 \approx 105 \text{ bits}$$
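The same arithmetic in a few lines of Python, as a sketch: it computes H = n log2 k for a uniformly random password and shows how much larger its search space is than that of a password with the 20 to 27 bits measured for AI output. The formula only holds when every character is an independent, uniform draw, which is exactly the assumption AI-generated passwords violate.

```python
import math

def uniform_entropy_bits(n: int, k: int) -> float:
    """H = n * log2(k): entropy of n independent, uniform picks from a pool of k symbols."""
    return n * math.log2(k)

random_bits = uniform_entropy_bits(16, 95)
print(f"random 16-char password: {random_bits:.1f} bits")  # 105.1 bits

# Each bit of entropy doubles the search space, so the gap is a power of two.
for ai_bits in (20, 27):
    gap = random_bits - ai_bits
    print(f"vs {ai_bits}-bit AI password: search space ~2^{gap:.0f} (~{2 ** gap:.1e}) times larger")
```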

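AI-generated passwords, by contrast, measured between 20 and 27 bits of entropy. That's not a small difference. Every missing bit halves the number of guesses an attacker needs, so a gap of 71 to 100 bits shrinks the search space by a factor of roughly 2^71 to 2^100.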

What does 20 bits of entropy actually mean in practical terms? It means there are only roughly 2^20, or about one million, possibilities to try.

Here's the creepy part: when researchers tested these passwords against common online strength meters, the testers estimated 2,000+ years to crack them. The testers were wrong by a factor of 1 million. Not an exaggeration. One. Million.

This gap exists because online password testers evaluate complexity at the surface level. They see special characters, numbers, mixed case. They apply a formula based on character variety, not based on how the password was generated.

They have no way to account for the underlying probability distribution that shaped the password. They don't know that the AI is more likely to put symbols at certain positions, numbers in certain ranges, or capitalize certain letters.

The Duplicate Problem: When AI Generates the Same Password Twice

Here's what should have been the first red flag.

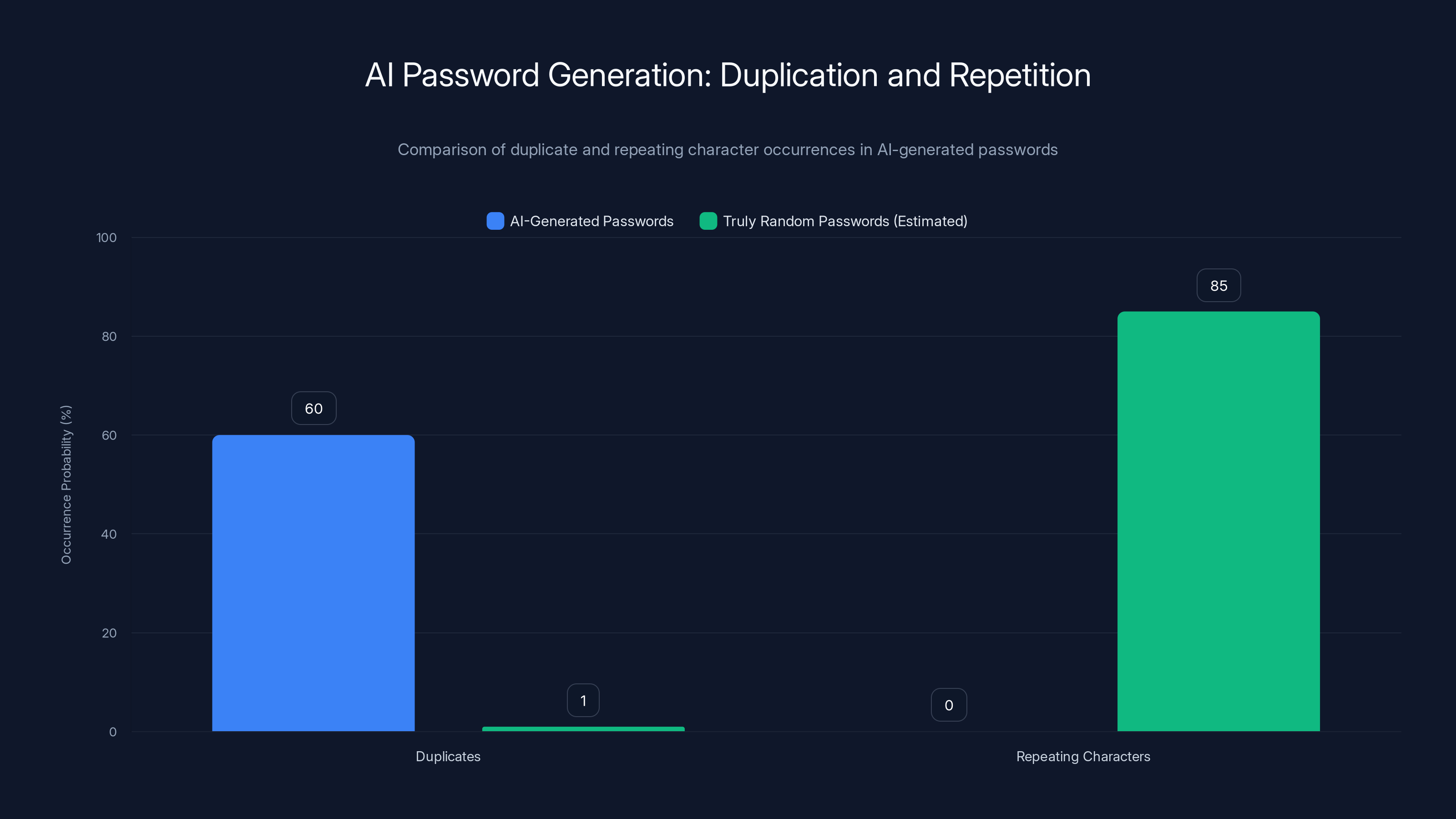

When researchers asked different instances of Claude, ChatGPT, and Gemini to generate passwords, many were duplicates or near-duplicates across different sessions. This shouldn't happen. At all. If you generate a truly random password, the odds of getting the exact same sequence twice are vanishingly small.
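How small is "vanishingly small"? A quick Python sketch: among m passwords drawn uniformly and independently from N possibilities, each of the C(m, 2) pairs matches with probability 1/N, so by linearity of expectation the expected number of identical pairs is exactly C(m, 2)/N. The sample size of 50 matches the study described earlier; uniform 16-character strings over a 95-symbol pool are the assumption.

```python
from fractions import Fraction
from math import comb

def expected_duplicate_pairs(m: int, n_space: int) -> Fraction:
    """Expected number of identical pairs among m uniform draws from n_space options."""
    # C(m, 2) pairs, each matching with probability 1 / n_space.
    return Fraction(comb(m, 2), n_space)

space = 95 ** 16  # every 16-character string over a 95-symbol pool
pairs = expected_duplicate_pairs(50, space)
print(f"expected duplicate pairs among 50 passwords: {float(pairs):.1e}")
```

Seeing even one duplicate in a sample that small is therefore strong evidence that the generator is nowhere near uniform.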

Duplicates mean patterns. Patterns mean predictability. Predictability means weak security.

Why does this happen? Because an LLM's probabilities for a given prompt are fixed by its trained weights. Sure, models have temperature and other sampling settings, but those only reshape that distribution; they don't replace it. If you ask the same model the same question with similar settings, you'll often get similar outputs.

This is actually useful for some applications. If you're generating creative writing and want consistency, determinism is a feature. But for password generation, it's a catastrophic failure mode.

The researchers also noticed something else: none of the AI-generated passwords contained repeating characters. No double letters, no repeated numbers, nothing. This might seem like a bonus. It looks more complex. But it's actually a signal that the output follows learned conventions rather than true randomness.

A truly random 16-character password drawn from 95 possible characters has a probability of about 74% of containing at least one repeated character. The fact that the AI never generated repeating characters means it was actively avoiding them, following a learned pattern rather than random selection.
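The repeated-character figure is a birthday-problem calculation, and it's easy to check. This Python sketch computes the exact probability that 16 independent, uniform picks from 95 symbols contain at least one repeat, then cross-checks it with a seeded simulation. The simulation uses Python's non-cryptographic `random` module only because it's estimating a probability, not generating real passwords.

```python
import random

def repeat_probability(length: int, pool: int) -> float:
    """Exact P(at least one repeated symbol) for `length` uniform picks from `pool` symbols."""
    p_all_distinct = 1.0
    for i in range(length):
        p_all_distinct *= (pool - i) / pool  # pick i must avoid the i symbols already used
    return 1.0 - p_all_distinct

def simulated_repeat_rate(length: int, pool: int, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate; random.Random is fine here because nothing is a real secret."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        picks = [rng.randrange(pool) for _ in range(length)]
        if len(set(picks)) < length:  # a repeat shrinks the set of distinct picks
            hits += 1
    return hits / trials

print(f"exact:     {repeat_probability(16, 95):.3f}")  # 0.738
print(f"simulated: {simulated_repeat_rate(16, 95, trials=20_000):.3f}")
```

Across 50 truly random passwords, the chance that none contains a repeat is about 0.26^50, which is effectively zero.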

This kind of analysis is how security researchers spot weak password generation. It's not about the surface appearance. It's about statistical properties that reveal the underlying mechanism.

How Attackers Exploit These Patterns

Now we get to the dangerous part: how someone actually exploits AI-generated passwords.

The fundamental attack strategy is a dictionary attack with statistical refinement. Here's how it works:

- Collect AI-generated passwords from public sources, code repositories, documentation, or any place where they've been exposed

- Analyze the statistical patterns in those passwords: where do numbers tend to appear? What positions favor symbols? What character ranges are most common?

- Build a refined search space that prioritizes guesses matching those patterns

- Attempt guesses in pattern-weighted order rather than brute-forcing all possible combinations

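Instead of trying all 95^16 (about 4.4 × 10^31) possible combinations, the attacker starts with the narrow slice of that space that AI models actually tend to produce.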

The computational difference is staggering. A modern GPU attacking all possible combinations? Centuries. Attacking only the likely AI outputs? Hours.
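The "refined search space" step can be sketched with character-class masks, the same idea behind mask attacks in cracking tools such as hashcat. This hypothetical Python example reduces each password to a pattern of classes (u = uppercase, l = lowercase, d = digit, s = symbol), counts which patterns recur, and compares the size of the most common pattern's search space with the full space. The sample passwords are invented for illustration, not taken from any study.

```python
import math
import string
from collections import Counter

# Class sizes for printable ASCII without space: 26 + 26 + 10 + 32 = 94.
CLASS_SIZES = {"u": 26, "l": 26, "d": 10, "s": len(string.punctuation)}

def mask_of(password: str) -> str:
    """Map each character to its class; anything not a letter or digit counts as a symbol."""
    out = []
    for c in password:
        if c.isupper():
            out.append("u")
        elif c.islower():
            out.append("l")
        elif c.isdigit():
            out.append("d")
        else:
            out.append("s")
    return "".join(out)

def mask_space_bits(mask: str) -> float:
    """log2 of the number of strings that fit this mask."""
    return sum(math.log2(CLASS_SIZES[c]) for c in mask)

# Invented samples, three of which share one structure.
samples = ["Kp7#Wm2$Xq9!", "Tr4@Zn8%Bv3&", "Hd6!Lc5#Qf1@", "x9Lm#2Qw!7Rt"]
masks = Counter(mask_of(p) for p in samples)
top_mask, count = masks.most_common(1)[0]
full_bits = len(top_mask) * math.log2(sum(CLASS_SIZES.values()))
print(f"most common mask {top_mask} ({count}/{len(samples)} samples)")
print(f"mask space: {mask_space_bits(top_mask):.1f} bits vs full space: {full_bits:.1f} bits")
```

Fixing only the class at each position already removes about 25 bits here; position-level preferences for specific characters would shrink the space further.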

This is made even more practical by another finding: similar sequences appear in public code repositories, documentation, training data, and anywhere developers have shared AI-generated credentials. Once an attacker understands the patterns, they can even pre-compute the most likely passwords before attempting a breach.

Security teams have also found that multiple AI instances generate overlapping password patterns. If an attacker compromises a few credentials generated by Claude, they've learned something about how Claude-generated passwords behave. That knowledge transfers to other Claude-generated passwords across other systems.

AI-generated passwords showed a high rate of duplication (60%) and no repeating characters, unlike truly random passwords which have an estimated 85% chance of repeating characters. Estimated data for random passwords.

Why Online Password Strength Testers Get It Wrong

This deserves its own section because it's genuinely important.

Online password strength meters are evaluating the wrong thing. They're assessing surface-level complexity, not cryptographic quality. Here's what they're actually checking:

- Does it contain uppercase letters?

- Does it contain lowercase letters?

- Does it contain numbers?

- Does it contain special characters?

- How long is it?

- Does it avoid dictionary words?

These are reasonable checks for human-created passwords, which is what these tools were designed for. But they completely miss the core problem with AI passwords: the underlying generation mechanism is predictable.

A password strength meter can't evaluate how an AI system generated a password because most meters don't have access to that information. They just see the final string. They apply their heuristics and render a verdict.
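A stripped-down version of the heuristic many meters apply makes the blind spot concrete. This hypothetical Python sketch (not any particular meter's code, and it skips the dictionary-word check) estimates strength purely from length and which character classes appear. It returns the same score for a given string whether that string came from a CSPRNG or a language model, because the string is all it sees.

```python
import math
import string

def naive_strength_bits(password: str) -> float:
    """Surface-level estimate: length * log2(size of the character classes present)."""
    pool = 0
    if any(c in string.ascii_lowercase for c in password):
        pool += 26
    if any(c in string.ascii_uppercase for c in password):
        pool += 26
    if any(c in string.digits for c in password):
        pool += 10
    if any(c in string.punctuation for c in password):
        pool += len(string.punctuation)  # 32 ASCII symbols
    return len(password) * math.log2(pool) if pool else 0.0

# The function has no input for *how* the password was generated.
print(f"{naive_strength_bits('M7$kPq@9xL2#vN'):.1f} bits")
```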

It's like judging a casino's fairness by looking at the cards that came out of the shoe. You can see they were random-looking, but you have no idea if the shuffle was honest.

The uncomfortable truth is that there's no good way for an end user to test whether a password is truly random or just looks random. You'd need access to the underlying probability distributions of the generation system. Most users don't have that. Most testers don't check for it.

This is why the research exists in the first place. Security engineers had to build specialized analysis tools to reveal what online testers couldn't see.

The Real-World Threat: Where AI Passwords Actually Get Used

Here's where this moves from "interesting academic finding" to "actual security problem."

Developers use AI for password generation in testing environments. You're writing code, you need test credentials, you ask Claude to generate some. Fast. Convenient. Then deployment happens, and suddenly those weak test passwords are in production because someone didn't rotate them or forgot they were ever temporary.

Startup founders set up initial admin accounts using AI. Speed to market matters. Security feels like it can come later. Except later never comes, and the AI-generated admin password stays in place for years.

Small teams use AI passwords for service accounts and API credentials. These aren't customer-facing accounts, so they feel less critical. But a compromised service account is often worse than a compromised regular account. It usually has broader permissions.

DevOps automation generates credentials on the fly. Some teams have built processes where AI generates temporary passwords for automated systems. The weakness in the generation doesn't matter until an attacker gains access to the system and sees the pattern.

The layering effect is important. One AI-generated password in one non-critical account? Manageable risk. But when an attacker finds five of them across your infrastructure, they've suddenly learned a lot about your password generation practices. That knowledge spreads. The risk compounds.

What the AI Companies Themselves Are Saying

This is remarkable: the AI companies know this is a problem.

Gemini 3 Pro now returns a warning when asked to generate passwords. Not a mild suggestion. A warning. The warning says these credentials shouldn't be used for sensitive accounts and recommends passphrases instead. It recommends relying on dedicated password managers.

Why would they add a warning? Because their security teams tested their own output and discovered the same vulnerability researchers found.

Claude's documentation is cautious about security-critical use cases. Ask it to review code, and it'll tell you it can miss vulnerabilities. Ask it to generate encryption keys or passwords, and the AI will note that you shouldn't rely on the results for critical applications.

These aren't disclaimers slapped on because of legal concerns. They're admissions that the underlying architecture has fundamental limitations for this use case.

It's kind of beautiful, actually. The AI companies are being honest about what they can and cannot do. The problem is that most users ignore the warnings, or don't see them at all.

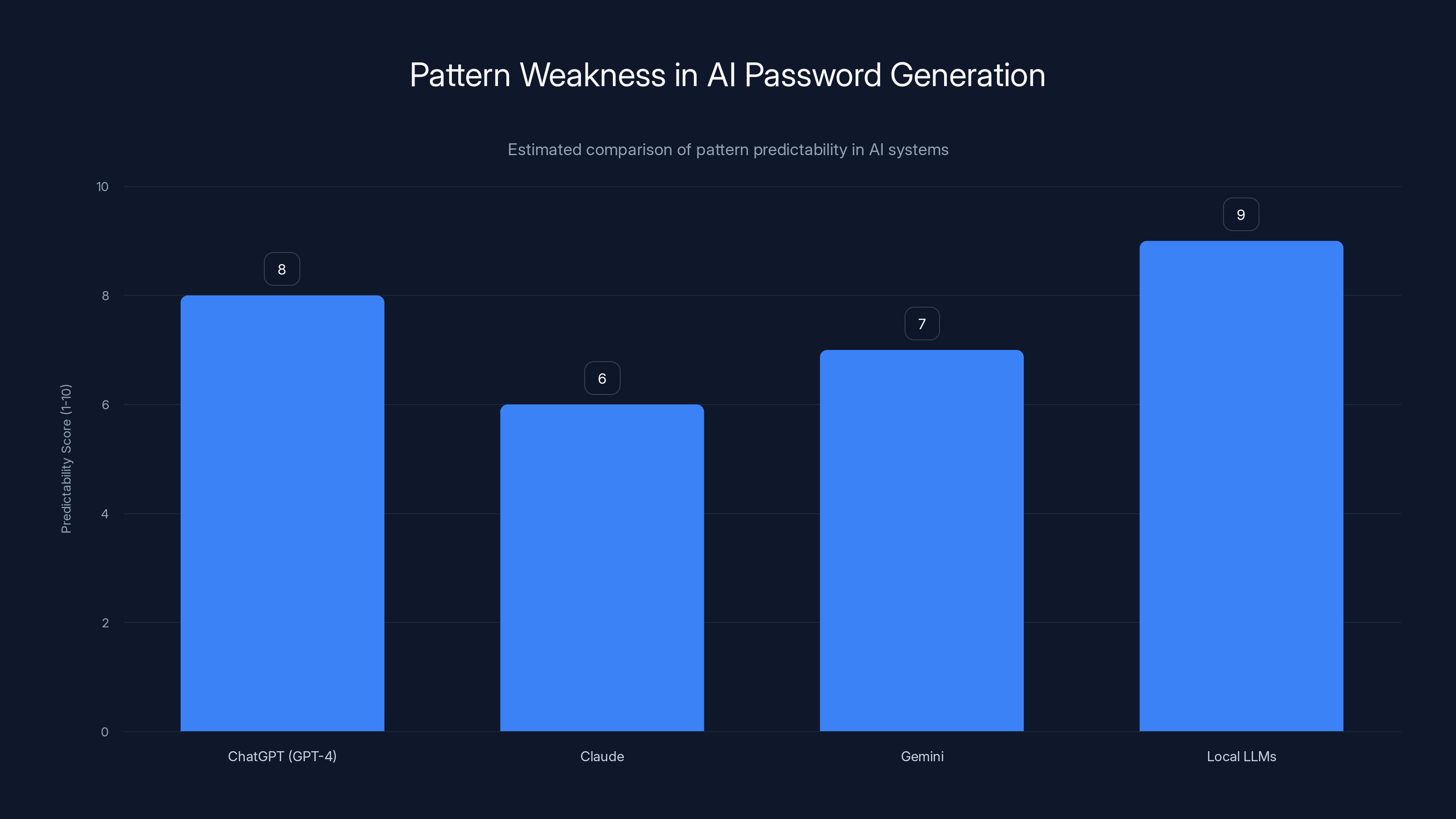

Estimated data shows that Local LLMs have the highest pattern predictability, indicating weaker randomness in password generation. Claude exhibits the least predictability among the systems compared.

The Structural Problem: LLMs Are Built Wrong for This Task

Here's the thing that matters most: this can't be fixed with better prompting or model improvements.

The problem isn't in the details. It's in the fundamental architecture and objective function of language models.

Large language models are optimized to predict text. They minimize perplexity, which means they get better at predicting common patterns. Every training improvement makes them more predictable, not less. Better parameter tuning, larger training sets, more sophisticated architectures. All of it pushes toward more predictability.

Password generation needs the opposite. It needs unpredictability. Maximum entropy. Zero tolerance for patterns.

You could theoretically train a model specifically designed for password generation, but at that point, you've stopped using an LLM for what LLMs do well. You've built a specialized system. So why use a neural network at all? Why not just use a cryptographic random number generator, which is simpler, faster, and genuinely secure?

This is why password managers don't use language models. They use cryptographic randomness, which is designed so that predicting its output is computationally infeasible for anyone without access to the generator's internal state. It's a solved problem. It's fast. It works.

The irony is that language models are incredible tools, but they're being asked to do something they're fundamentally unsuited for. It's like asking an autocorrect engine to perform surgery. The tool is excellent at its intended purpose. The task just isn't it.

How Cryptographic Randomness Actually Works

Let's talk about what proper password generation looks like.

Dedicated password managers use cryptographically secure pseudorandom number generators (CSPRNGs). The most common implementations include:

- ChaCha20: Modern stream cipher used in many password managers

- /dev/urandom on Unix systems: OS-level entropy source

- CryptGenRandom on Windows: Hardware entropy with software refinement

These generators work by combining hardware entropy sources (unpredictable physical phenomena) with mathematical transformations designed to be computationally infeasible to reverse-engineer.

Here's the critical difference: a well-designed CSPRNG's output is computationally indistinguishable from true randomness. An attacker analyzing the output can't feasibly predict future outputs. Each bit is independent. Each character is independent.

When you generate a 16-character password from a 95-character set using a CSPRNG:

$$H = 16 \times \log_2 95 \approx 105 \text{ bits}$$

That's the actual entropy. Not an estimate. Not a surface-level assessment. That's the math.

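With about 105 bits of entropy, there are roughly 2^105 (about 4 × 10^31) possible passwords. Even at a trillion guesses per second, exhausting that space would take on the order of a trillion years:

$$\frac{2^{105}}{10^{12}\ \text{guesses/s}} \approx 4 \times 10^{19}\ \text{s} \approx 1.3 \times 10^{12}\ \text{years}$$

Even optimistically. Even with future hardware. Even if they get lucky.

Compare that to the 20 bits of entropy in AI passwords, at a more modest billion guesses per second:

$$\frac{2^{20}}{10^{9}\ \text{guesses/s}} \approx 1.05 \times 10^{-3}\ \text{s}$$

Milliseconds. Not hours on old hardware. Milliseconds on modern hardware.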
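As a minimal sketch of the CSPRNG approach described in this section, Python's standard `secrets` module draws from the most secure randomness source the operating system provides. The alphabet below is an assumption: the 94 printable ASCII characters excluding space, which gives slightly less entropy than a 95-character pool would.

```python
import math
import secrets
import string

# 94 printable ASCII characters: letters, digits, and punctuation (no space).
ALPHABET = string.ascii_letters + string.digits + string.punctuation

def generate_password(length: int = 16, alphabet: str = ALPHABET) -> str:
    """Pick each character independently and uniformly using the OS CSPRNG."""
    return "".join(secrets.choice(alphabet) for _ in range(length))

def entropy_bits(length: int, pool_size: int) -> float:
    """H = n * log2(k); valid only because every character is an independent uniform draw."""
    return length * math.log2(pool_size)

print(generate_password())
print(f"{entropy_bits(16, len(ALPHABET)):.1f} bits")  # 104.9 bits
```

Unlike a language model, nothing here prefers any character or position, so the entropy formula describes the generator itself rather than the look of its output.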

The Supply Chain Risk: Leaked AI Passwords in Repositories

There's a cascading risk that doesn't get enough attention.

AI-generated passwords end up in:

- Code repositories (public and private)

- Documentation and tutorials

- Configuration files (accidentally committed to git)

- Pastebin and other temporary storage

- Email threads and Slack channels

- GitHub Gists and snippets

- Stack Overflow answers

- Internal wikis and documentation

Once these passwords are exposed, attackers can analyze them to understand the AI generation patterns. This isn't theoretical. Security researchers have found hundreds of AI-generated credentials in public repositories simply by searching for telltale patterns.

The problem cascades because once an attacker understands the pattern from one set of exposed credentials, that knowledge transfers. If they see that Claude tends to place numbers in the middle third of passwords, or symbols near the end, they can apply that pattern to other Claude-generated passwords they encounter.

Developers are routinely warned not to hardcode credentials at all. When they do anyway, AI-generated credentials make the leak worse: they're statistically weaker than randomly generated ones, so the attacker's search space is smaller.

The supply chain aspect means that a single leaked set of AI-generated test credentials can compromise the security model of an entire organization, if that organization relies on similar patterns for production credentials.

Using AI pattern attacks significantly reduces the time to crack passwords from centuries to mere hours. Estimated data.

What You Should Do Instead: Real Password Security

Okay, so AI passwords are weak. What's the actual solution?

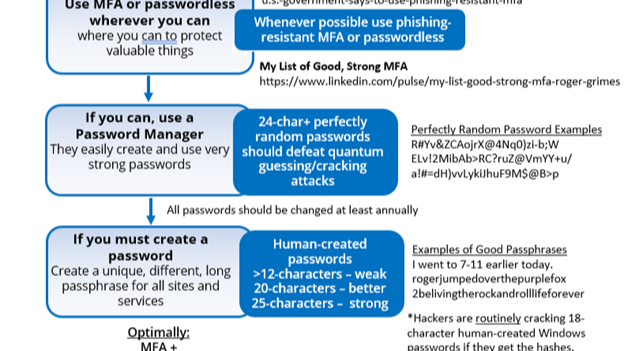

Use a dedicated password manager. Bitwarden, 1Password, KeePass, Dashlane, or any manager that uses cryptographic randomness. These generate truly random passwords and store them encrypted. When you need a password, you don't generate it. The manager does.

Password managers solve multiple problems at once:

- Cryptographically random generation: Uses proper entropy sources, not language models

- Secure storage: Encrypted, protected by a master password

- Browser integration: Auto-fills passwords, reducing human error

- Breach monitoring: Alerts you if a password appears in a data breach

- Convenient rotation: You can change passwords frequently without memorizing them

For developers specifically:

- Use your infrastructure's secret management system: AWS Secrets Manager, HashiCorp Vault, Azure Key Vault. These are designed for this exact purpose.

- Rotate credentials regularly: Automate credential rotation so even if a weak password gets generated, it expires quickly.

- Never commit credentials to code: Use environment variables, configuration files outside the repo, or secret management tools.

- Test with throwaway credentials: Generate test passwords, use them, then delete them. Don't let test credentials survive to production.
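For the developer items above, a minimal Python sketch of two of the habits: read real credentials from the environment (or a secret manager that populates it) instead of the source tree, and mint throwaway test credentials from a CSPRNG on every run so nothing long-lived exists to leak. The variable name `APP_DB_PASSWORD` and both helper functions are hypothetical.

```python
import os
import secrets

def get_db_password() -> str:
    """Fetch the production credential from the environment; never hardcode it."""
    password = os.environ.get("APP_DB_PASSWORD")  # hypothetical variable name
    if not password:
        raise RuntimeError("APP_DB_PASSWORD is not set")
    return password

def make_test_credentials() -> dict:
    """Fresh random credentials for a single test run; discard them afterwards."""
    return {
        "username": f"test-{secrets.token_hex(4)}",  # 4 random bytes -> 8 hex chars
        "password": secrets.token_urlsafe(24),  # 24 random bytes -> 32 URL-safe chars
    }

creds = make_test_credentials()
print(creds["username"])
```

Because test credentials are regenerated per run, a test password that slips into a config file is already useless by the next deployment.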

For organizations:

- Establish password policies: Minimum length (16+ characters), complexity requirements, rotation schedules

- Implement MFA everywhere: Even weak passwords become less useful if they're protected by a second factor

- Audit credential generation: If you're finding AI-generated passwords in your systems, replace them

- Train teams: Make it clear that AI password generation is not acceptable for any account

Specific Weaknesses in Popular AI Systems

Different AI systems have different failure modes.

ChatGPT (GPT-4): Tends to use common character patterns. Symbols are often the last character. Numbers frequently appear in the middle. Across 50 generated passwords, the structures overlapped significantly.

Claude: Generally better randomness than ChatGPT, but still shows pattern clustering. Avoids certain character combinations that appear in training data. Less prone to duplicates but still statistically weak.

Gemini: Most recent versions (3 Pro) actively warn against password generation, suggesting the company recognized the problem. Earlier versions showed similar weaknesses to ChatGPT.

Local LLMs (Llama, Mistral, etc.): Inherit the same fundamental problems as their larger counterparts. Smaller model size sometimes makes patterns more obvious because there's less capacity for variation.

The common thread: all of them are optimized for predictable output. The specific patterns vary, but the underlying weakness is consistent.

The Testing Gap: How Organizations Miss This Problem

Many organizations don't discover the weakness because they don't test for it.

Typical security testing includes:

- Vulnerability scanning

- Penetration testing

- Code review

- Dependency analysis

- Configuration audits

What's often missing:

- Password quality analysis: Are our credentials truly random, or do they follow patterns?

- Entropy verification: What's the actual entropy of credentials in our system?

- Supply chain credential review: Are we using AI-generated passwords anywhere in our infrastructure?

A team could pass a comprehensive penetration test and still have AI-generated passwords as a critical vulnerability. Pentesters typically assume the credentials they find are randomly generated. If they're not, all bets are off.

This is why the research matters. It forces organizations to think about credential generation as a security control, not just a convenience.

Future-Proofing Your Password Security

Here's what the next 5 years probably looks like for password security.

More AI will be used for password generation. Unless there's a hard push against it, more tools will offer AI-based credential generation as a feature. More developers will use it. More passwords will be weak.

Passphrases will become more popular. Using AI for passphrase generation (combinations of actual words) is actually better than AI password generation. A passphrase like "correct horse battery staple" is genuinely harder to crack than it appears, especially when the words are picked at random from a large wordlist rather than chosen by a person.
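What makes a passphrase strong is that each word is chosen at random from a known list, so its entropy is words × log2(list size). Here is a minimal Python sketch of that Diceware-style approach, using the OS CSPRNG via `secrets` rather than a language model. The ten-word list is a placeholder to keep the example short; a real generator would load a large list such as the EFF's 7,776-word list, where six words give about 77.5 bits.

```python
import math
import secrets

# Placeholder list for illustration only; use a large published wordlist in practice.
WORDS = ["anchor", "basket", "cactus", "dolphin", "ember",
         "falcon", "glacier", "harbor", "island", "jungle"]

def passphrase(num_words: int = 6, words=WORDS, sep: str = "-") -> str:
    """Join num_words words, each picked independently and uniformly by the OS CSPRNG."""
    return sep.join(secrets.choice(words) for _ in range(num_words))

def passphrase_bits(num_words: int, list_size: int) -> float:
    """Entropy of a uniformly random passphrase: num_words * log2(list_size)."""
    return num_words * math.log2(list_size)

print(passphrase())
print(f"{passphrase_bits(6, len(WORDS)):.1f} bits with this toy list")
print(f"{passphrase_bits(6, 7776):.1f} bits with a 7,776-word list")
```

The toy list shows why list size matters: the same six-word structure drops to about 20 bits when only ten words are possible.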

Hardware-based security will increase. Passkeys, FIDO2, hardware security keys. These bypass the password problem entirely. If you don't need a password, it doesn't matter if it's weak.

Regulation will probably mandate password security standards. Frameworks like NIS2, GDPR updates, and industry-specific regulations are starting to specify credential generation methods. "No AI password generation" might eventually become a compliance requirement.

Detection will improve. As more research focuses on identifying AI-generated credentials, organizations will get better at spotting them in their own infrastructure.

The strategic move is simple: stop relying on passwords as your primary security control. Use them as a second factor, not the first. Combine them with MFA, hardware keys, or biometrics. That way, even if a password is weak, it's only one layer of defense.

FAQ

What exactly is entropy in the context of passwords?

Entropy measures the amount of unpredictability in a password. It's calculated in bits, where each bit represents a doubling of possible combinations. A password with 100 bits of entropy has 2^100 (about 1.27 × 10^30) equally likely possibilities.

How can I tell if a password was AI-generated?

It's difficult without specialized analysis tools. However, suspicious signs include: lack of repeating characters, patterns in where numbers or symbols appear, similarity to other passwords you've seen, or the presence of the password in public code repositories or documentation. The most reliable method is to simply avoid generating passwords with AI and use a password manager instead.

Why do password strength meters say AI passwords are secure?

Password strength meters evaluate surface-level complexity (uppercase, lowercase, numbers, symbols, length) but don't understand how the password was generated. They have no way to account for underlying statistical patterns that make AI passwords predictable. A password can look complex while being fundamentally weak if it follows patterns an attacker can anticipate.

Can I fix AI password weakness with better prompting?

No. The problem isn't in the prompting technique or model settings. It's fundamental to how language models work. LLMs are optimized to produce predictable, plausible output. Passwords need unpredictable chaos. These goals are incompatible. No amount of prompt engineering, temperature adjustment, or system prompting will change this.

What's the difference between passphrase and password generation?

Passwords are random characters. Passphrases are sequences of actual words (e.g., "correct horse battery staple"). AI-generated passphrases can actually be reasonably secure because the randomness comes from word selection, not character-level generation where AI shows predictability. However, password managers are still the best approach for any critical account.

Should I change passwords I already generated with AI?

Yes, eventually. Prioritize critical accounts (email, banking, work systems) over less critical ones. Since replacements can't happen all at once, rotate based on risk. Use a password manager to generate replacements with cryptographic randomness. For legacy systems, at minimum enable MFA so the weak password is just one layer of defense.

What's the best password manager for true randomness?

Virtually all reputable password managers (Bitwarden, 1Password, KeePass, Dashlane, LastPass, etc.) use cryptographic random generation, not language models. The choice should be based on features, pricing, and integration support rather than password generation quality, since they're all genuinely secure. Open-source options like Bitwarden and KeePass offer full transparency if you want to verify the implementation.

Can developers use AI for password generation in testing?

Not recommended, even in testing. The risk is that test passwords migrate to production. Instead, use your testing framework to generate passwords programmatically using cryptographic randomness, or use temporary credentials from your secret management system that are automatically rotated.

Conclusion: Why This Matters Beyond Password Generation

The AI password story is bigger than just passwords.

It's a case study in how powerful tools can be misused for tasks they're not suited for. It shows how surface-level testing can miss critical flaws. It demonstrates why cryptographic security is different from statistical fluency. It illustrates the gap between what something looks like and what it actually is.

Language models are genuinely impressive. They can write code, summarize documents, explain concepts, generate creative content. In many domains, they're transformative tools. But they're optimized for coherence and plausibility, not for security properties that require the opposite.

Using an LLM to generate passwords is like using an autocorrect engine to design a rocket. The tool is excellent at its intended purpose. The task is just wrong.

The practical takeaway is simple: stop using AI for password generation. Use a password manager. Use cryptographic random generation. Use MFA. Build layered security instead of relying on one weak component.

For organizations, the meta-takeaway is broader: when integrating AI into security-critical workflows, don't assume the AI "knows" what it's doing. Test. Verify. Use the AI for what it's designed for, not for everything it can produce output for.

The researchers who discovered this vulnerability weren't trying to say AI is dangerous. They were saying: understand the tool. Know its limits. Use it appropriately. When those three things align, AI is powerful. When they don't, you get passwords that look strong but are genuinely weak.

Choose the password manager. Keep your accounts actually secure. And if you're building AI integrations, ask yourself whether this is really the right tool for the job. Sometimes it is. Sometimes it isn't.

This is one of the "isn't" cases.

Key Takeaways

- AI-generated passwords contain only 20-27 bits of entropy compared to 98-120 bits for true random passwords, so an attacker's search space is roughly 2^71 to 2^100 times smaller than it appears

- Researchers found 50% duplicate passwords across AI generation sessions, revealing learned patterns rather than randomness

- Online password strength testers are dangerously misleading for AI passwords: they estimate cracking times like 847 years when the actual cracking time can be hours

- Language models are fundamentally unsuited for password generation because they're optimized for predictable output, the opposite of what security requires

- Even AI companies (Gemini, Claude) warn against using their AI-generated passwords for sensitive accounts and recommend dedicated password managers instead

- Use password managers with cryptographic randomness (Bitwarden, 1Password, KeePass) instead of any AI-based password generation

- Implement layered security with MFA so that even weak passwords are only one factor in multi-factor authentication

