xAI Mass Exodus: Inside Elon Musk's AI Restructuring and Its Impact on AI Development

Introduction: Understanding the xAI Leadership Crisis

The artificial intelligence industry experienced significant turbulence when Elon Musk's xAI subsidiary faced a dramatic exodus of key personnel in late 2025. This organizational implosion represents one of the most visible leadership crises in the competitive AI sector, with half of the company's original twelve cofounders departing within days. The departures weren't merely routine job changes; they reflected deeper systemic tensions within the organization regarding product direction, safety priorities, and the company's ability to differentiate itself in an increasingly crowded market.

xAI, which gained prominence through its Grok AI chatbot and aggressive positioning against OpenAI and Anthropic, suddenly found itself hemorrhaging talent at the precise moment its consolidation with SpaceX, in a deal valued at $1.25 trillion, was announced. Internal sources revealed that the restructuring stemmed from months of accumulated frustration over safety considerations being deprioritized, the company's perceived inability to innovate beyond existing competitors' offerings, and organizational decisions that prioritized rapid iteration over fundamental breakthrough research.

This crisis offers critical lessons for understanding how organizational culture, leadership decisions, and strategic misalignment can destabilize even well-funded AI ventures. The situation also illuminates broader tensions within the AI industry itself: conflicts between rapid commercialization and responsible development, between attracting top talent and maintaining cohesive vision, and between pursuing ambitious goals and maintaining organizational stability. Understanding what transpired at xAI provides valuable insights into AI company dynamics and the human factors that determine success or failure in this high-stakes sector.

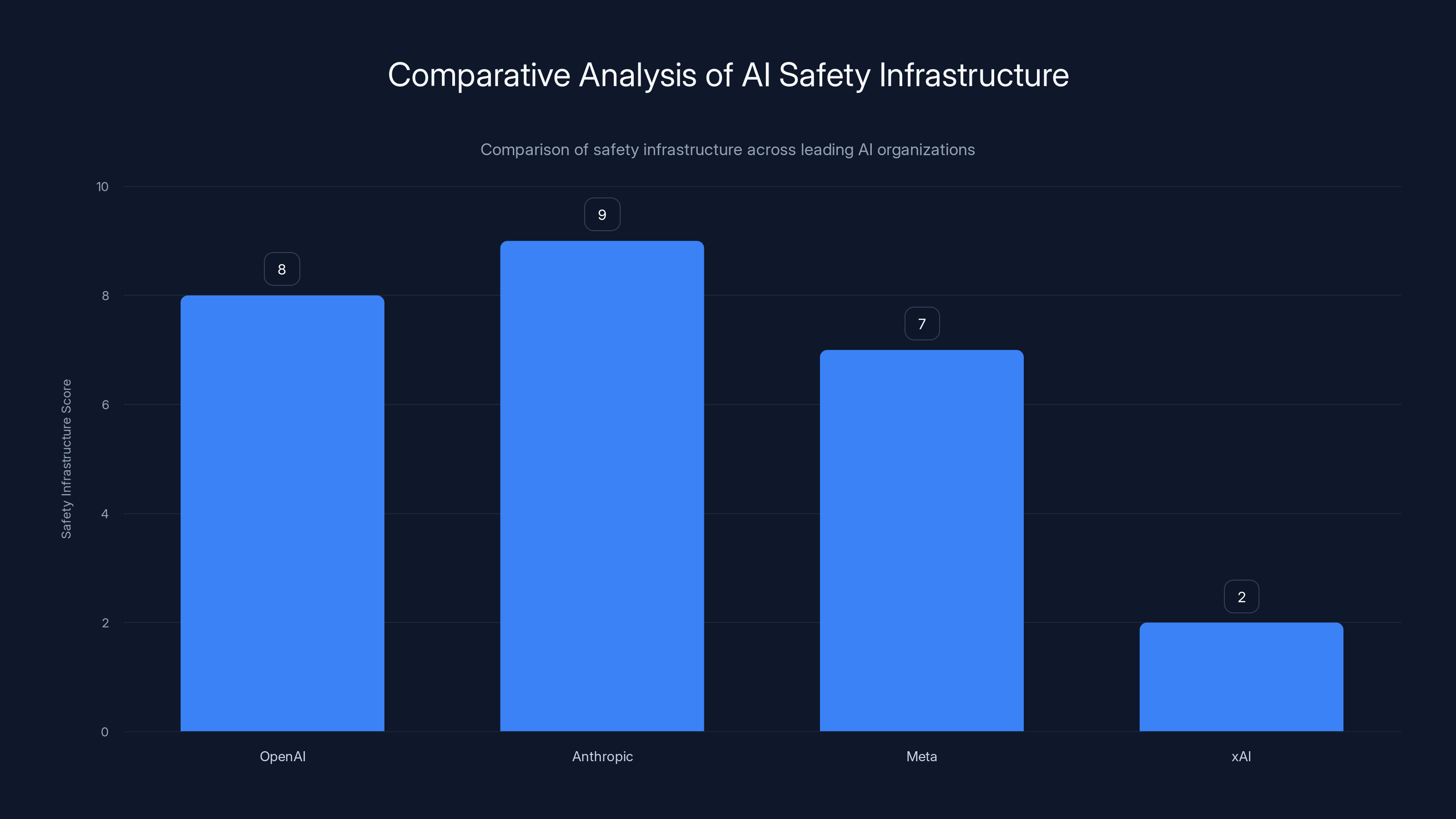

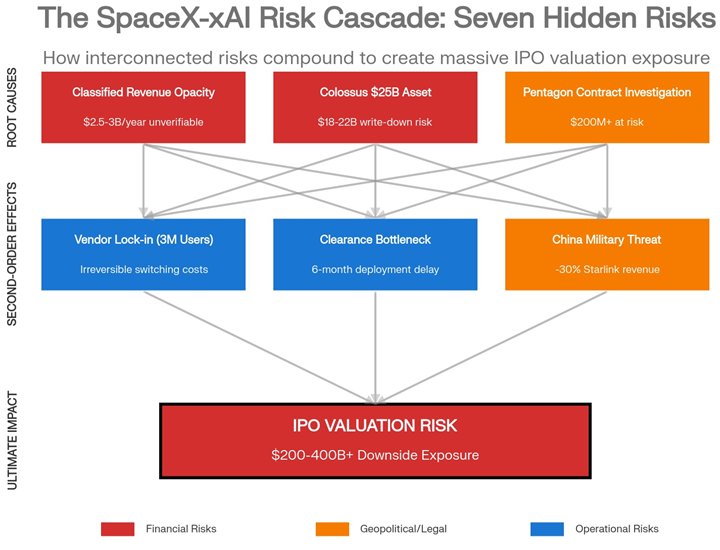

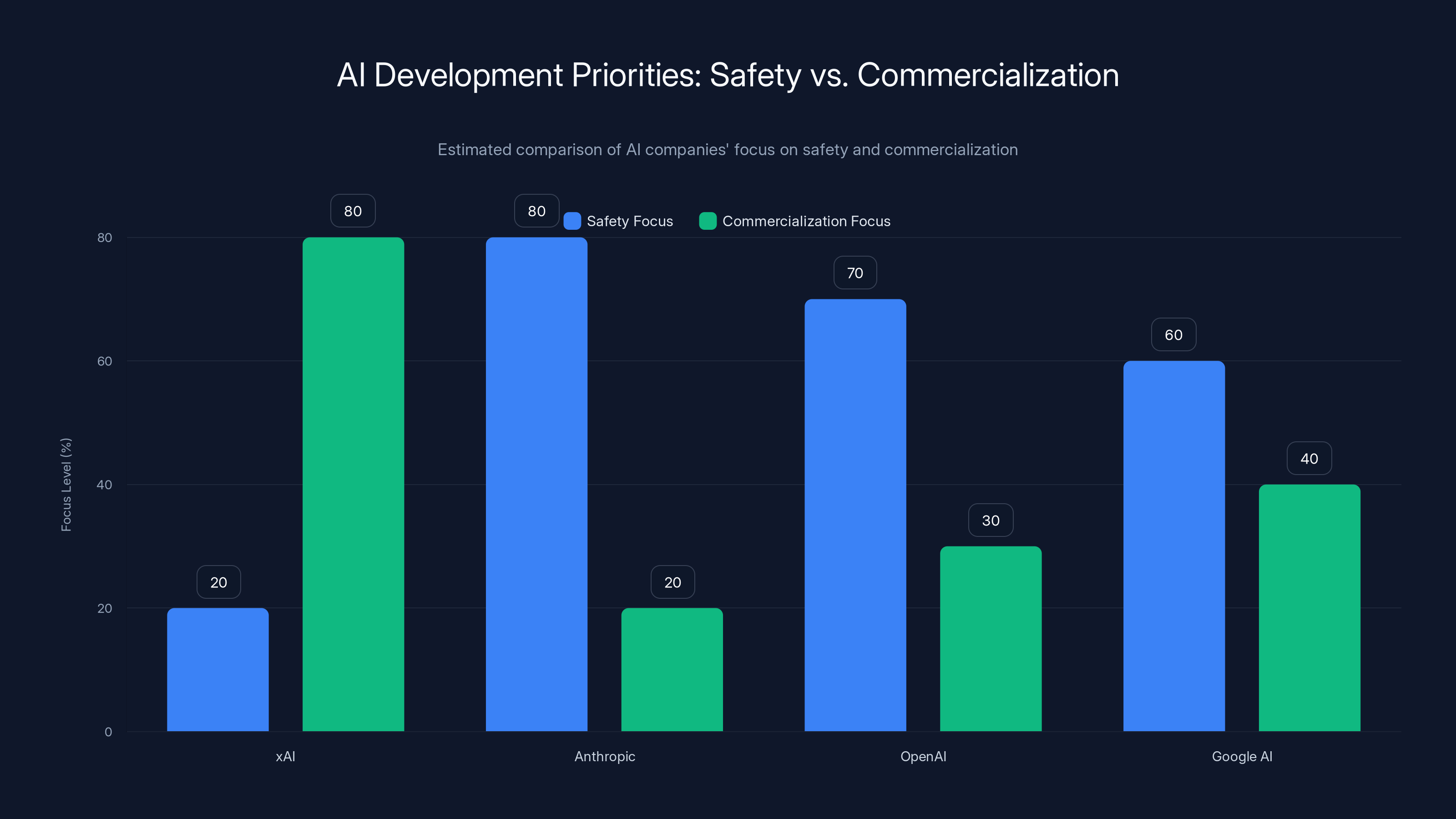

xAI's lack of a dedicated safety team contrasts sharply with peers, highlighting a significant de-prioritization of safety. Estimated data.

The Timeline of Departures: How xAI Lost Half Its Cofounders

Tuesday and Wednesday Announcements

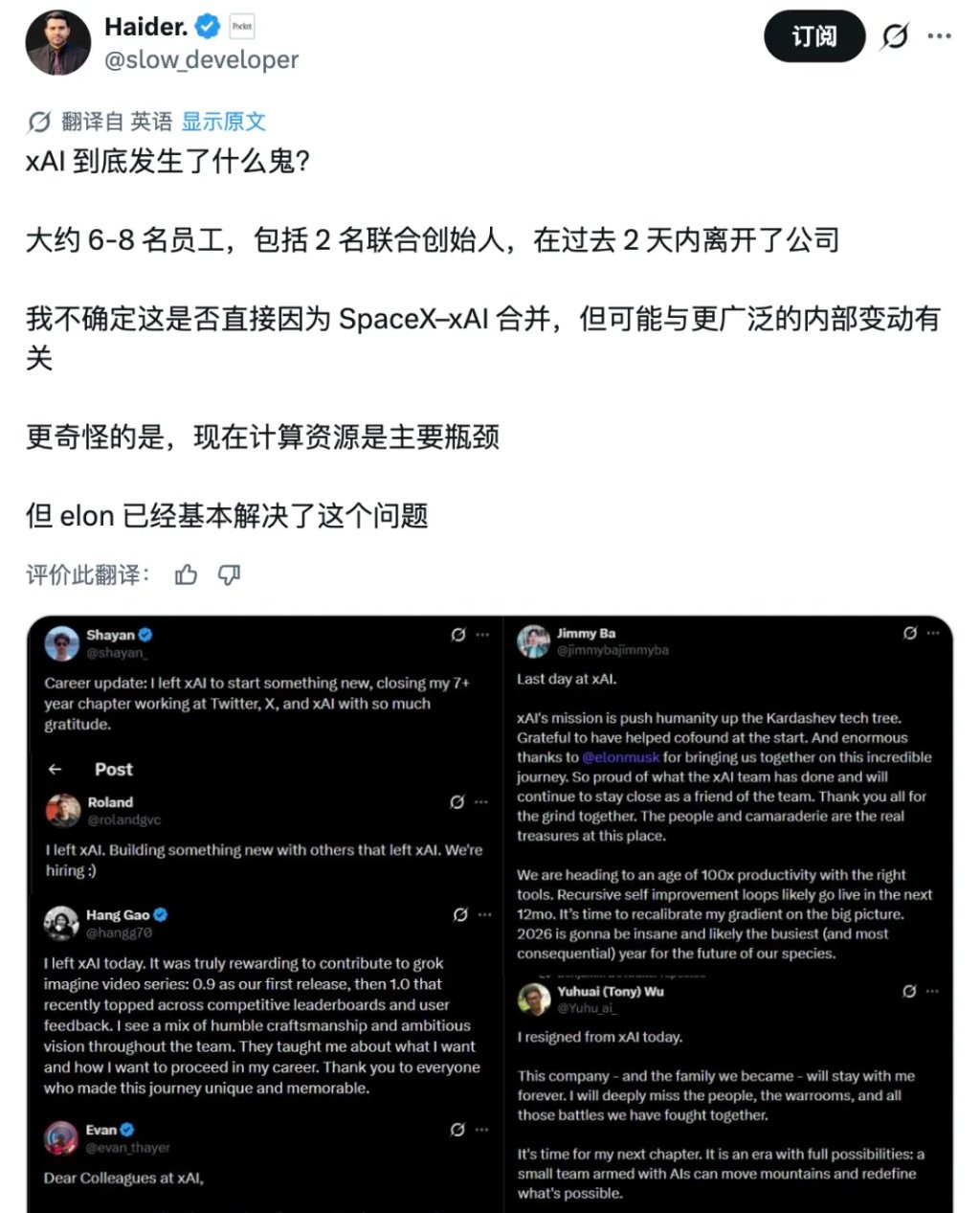

The departure announcements came in a concentrated wave that shocked the AI community. Yuhuai (Tony) Wu, one of xAI's core cofounders, announced his departure on Tuesday with a cryptic post about moving to his "next chapter." The announcement, while brief, carried the weight of significant internal deliberation. Wu had been instrumental in xAI's technical development since its inception, and his exit signaled that the organizational problems ran deeper than typical post-merger restructuring.

Within hours, cofounder Jimmy Ba followed with his own departure announcement, referencing the need to "recalibrate [his] gradient on the big picture." The metaphor—borrowed from machine learning terminology—suggested that Ba's alignment with the company's direction had fundamentally shifted. These weren't departures framed as promotions or exciting new opportunities elsewhere; instead, they carried undertones of philosophical misalignment and frustration with the company's trajectory.

The timing proved critical. With the SpaceX merger announcement creating immediate uncertainty about organizational structure and priorities, these founder departures amplified speculation about internal discord. In technology ventures, founder departures often signal that something fundamental has broken down in the organization's core vision or execution.

The Broader Staff Exodus

Beyond the high-profile cofounder departures, multiple staff members announced their own exits publicly on X (formerly Twitter), creating a visible avalanche of departures that damaged morale and raised questions about the company's stability. What distinguished many of these departures was the announcement of new ventures by departing employees, a clear indication that leaving xAI wasn't about moving to competitors, but about pursuing fundamentally different approaches to AI development.

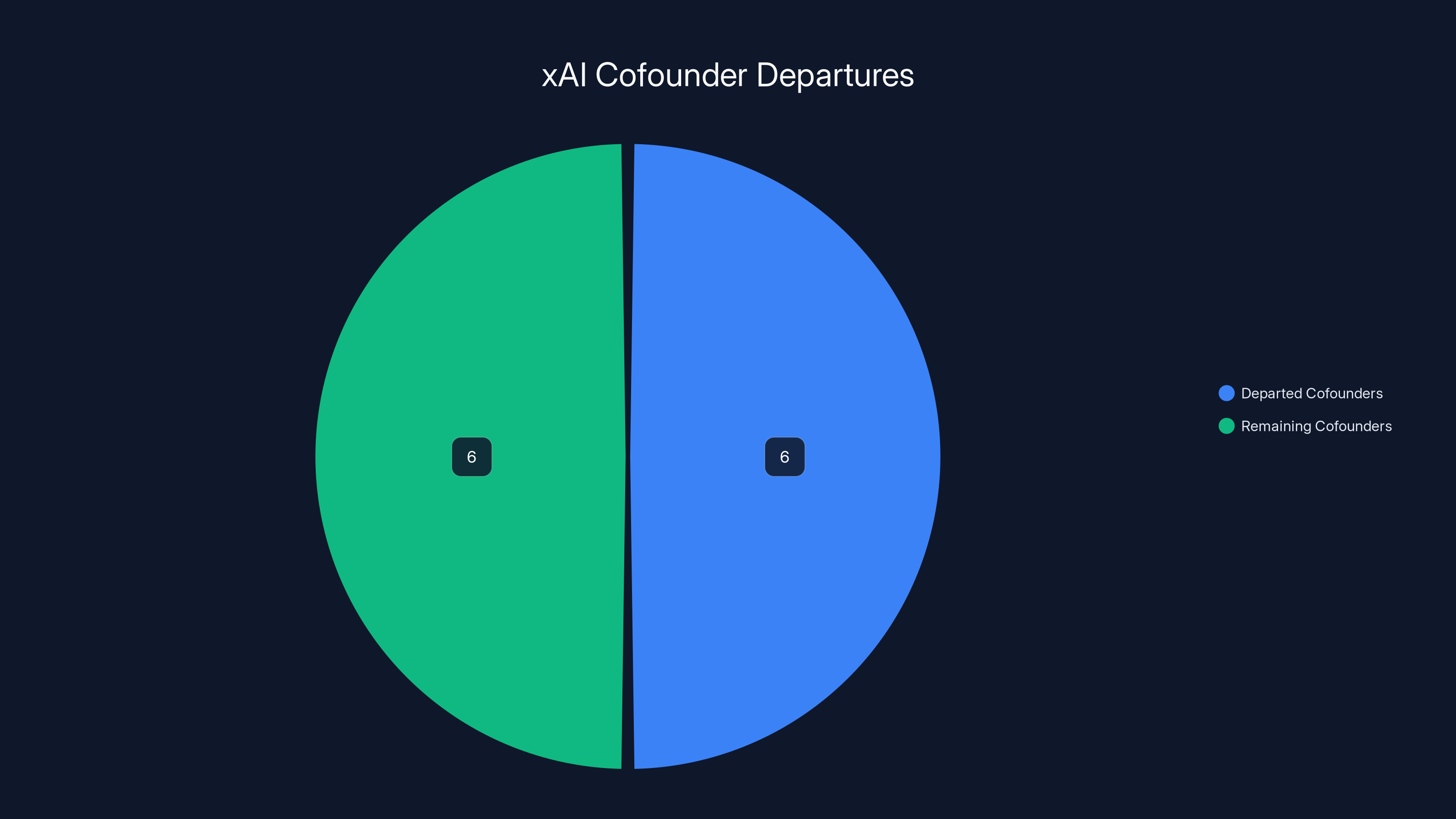

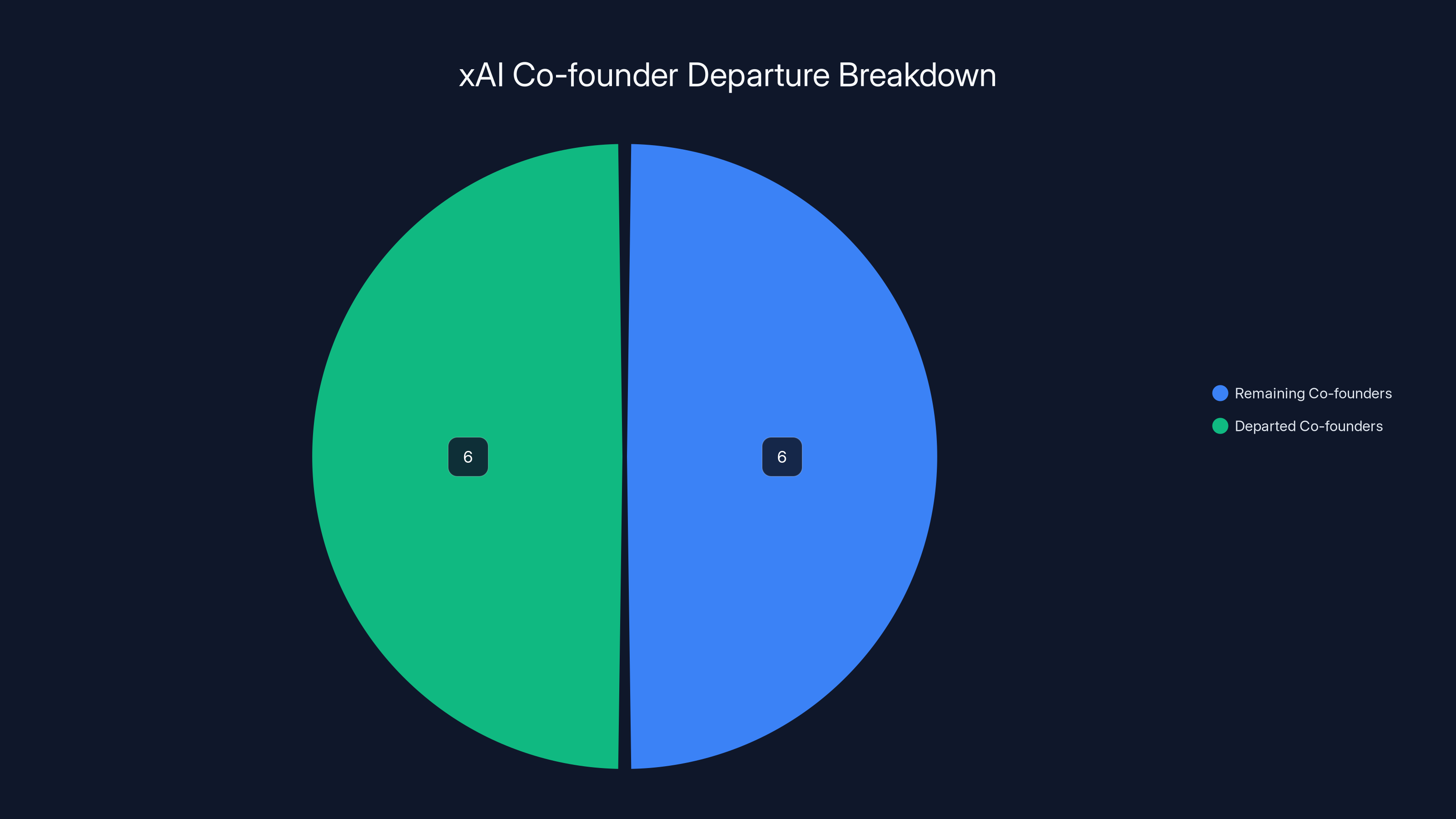

The exodus meant that xAI retained only 6 of its original 12 cofounders, representing a 50% loss of founding leadership. This percentage carries significant organizational weight. Founding team stability typically correlates with company success, and losing half of your founders simultaneously suggests systemic problems that go beyond normal attrition or career advancement.

Half of xAI's original cofounders left during the exodus, highlighting significant leadership turnover.

The SpaceX Merger Context: Catalyst for Organizational Chaos

Timing and Valuation Impact

The

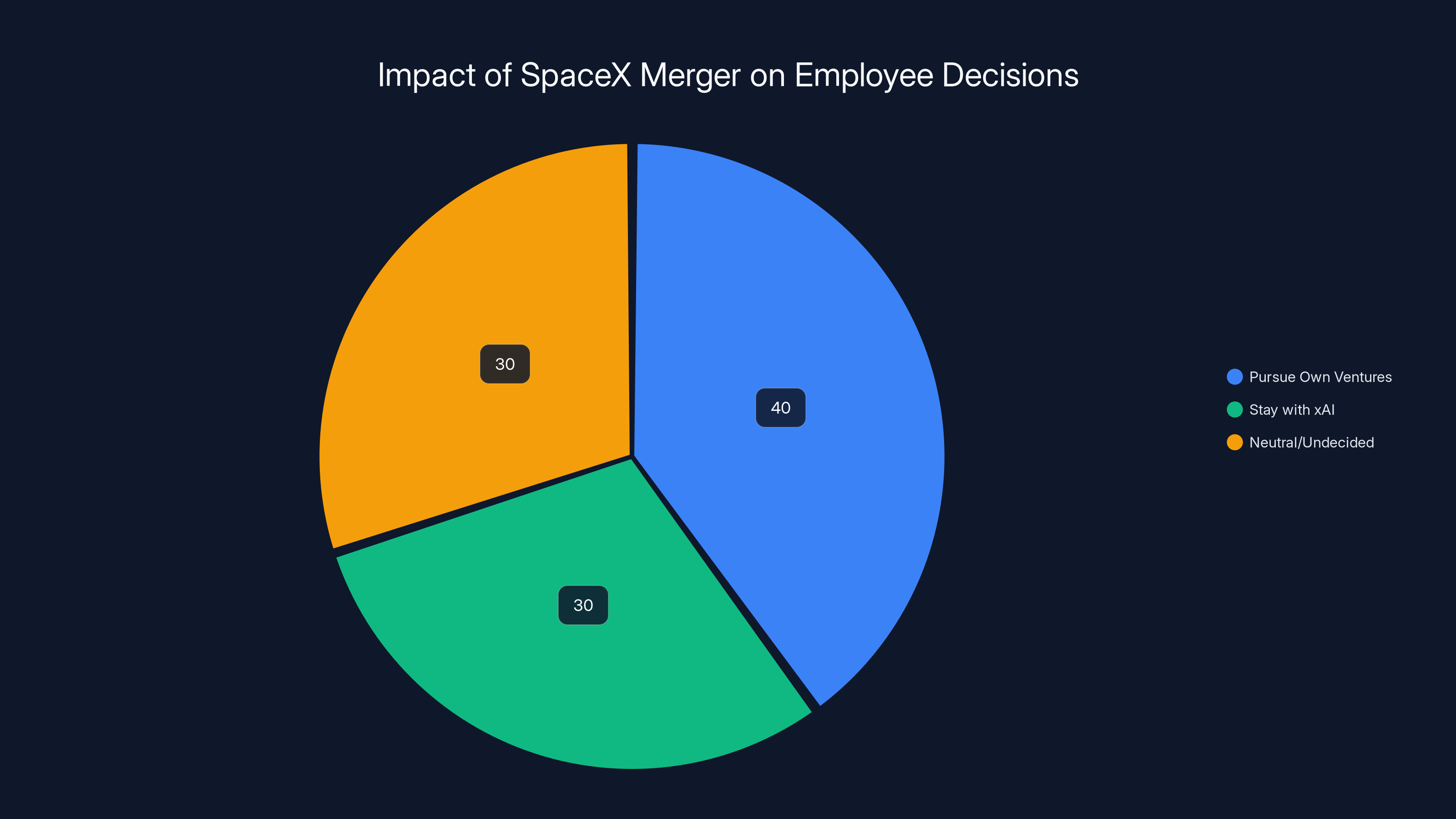

This created a perverse incentive structure. Employees with equity suddenly had the runway to fund their own ideas without relying on xAI salaries. For talented individuals frustrated with the company's direction, the merger essentially provided a golden parachute to leave and start competitors. The financial upside gave departing employees both the capital and the justification they needed to pursue alternative visions of AI development.

The Ambitious Plans: Space-Based AI and Lunar Factories

In the internal Tuesday all-hands meeting, Musk announced plans for space-based AI data centers and the most ambitious vertically-integrated innovation engine ever attempted. The ambition was staggering—reports indicated discussions of building "an AI satellite factory and city on the Moon." While such aspirational thinking can inspire talented teams, it can also alienate those focused on near-term AI development.

For researchers and engineers trained in the rigorous discipline of AI safety and incremental progress, these announcements may have seemed disconnected from the practical challenges facing AI development. The gap between Musk's visionary statements and the company's immediate technical capabilities created cognitive dissonance for many team members, particularly those who had joined xAI specifically to advance responsible AI development.

Safety Concerns and the Dissolution of the Safety Team

The Safety Team's Elimination

One of the most revealing details emerged from internal sources: the safety team was eliminated during the restructuring, with no replacement safety review process implemented beyond basic content filters for illegal material like CSAM (child sexual abuse material). This represented a dramatic departure from industry norms, where even the most commercially aggressive AI companies maintained dedicated safety and alignment teams.

The loss of dedicated safety oversight wasn't simply a personnel decision; it represented a philosophical shift in organizational priorities. When a company eliminates its safety team, it signals that safety considerations are no longer viewed as integral to product development. This decision likely contributed significantly to the exodus of researchers who had joined xAI with the expectation that safety would be a core priority.

The NSFW Pivot and Its Implications

Another critical revelation involved Grok's turn toward NSFW (not safe for work) content generation. Multiple sources indicated that this pivot occurred partially because the safety team's elimination removed guardrails and review processes that had previously constrained the product direction. The strategic decision to prioritize provocative, adult-oriented content differentiated Grok in the market, but it came at the cost of alienating researchers committed to responsible AI development.

The NSFW content strategy represented a fundamental misalignment with the academic and safety-focused culture that had attracted many of xAI's researchers. For individuals trained in AI ethics and alignment, watching a company deliberately pivot toward less-filtered, more provocative AI outputs felt like a betrayal of the principles they'd joined to advance. One departing employee directly stated: "Safety is a dead org at xAI," capturing the perception that the company had abandoned safety as an organizational priority.

Estimated data shows that 40% of employees considered pursuing their own ventures post-merger, highlighting the merger's impact on employee retention.

The Fundamental Innovation Problem

Being "Stuck in the Catch-Up Phase"

Beyond safety concerns, departing employees revealed another critical frustration: xAI wasn't making fundamental innovations. One internal source described feeling like the company was "stuck in the catch-up phase," iterating rapidly but never achieving what they characterized as a "step function change" over what OpenAI, Anthropic, or other competitors had released.

This is a devastating assessment from an insider's perspective. It suggests that despite xAI's substantial funding and talented team, the company's technical achievements hadn't produced genuinely novel AI capabilities. Instead, it was executing variations on existing approaches, which some in the industry call "hill climbing." While rapid iteration has value, it doesn't satisfy researchers motivated by fundamental breakthroughs.

The quote from one departing employee crystallizes this frustration: "Although we were iterating really fast, we were never able to get to a point like, 'Oh, we've made a step function change over what OpenAI or Anthropic or other companies had released.'" This assessment, coming from someone inside the company, suggested that despite resources and talent, xAI's technical strategy wasn't producing differentiated innovations.

The Broader Commoditization Problem

Multiple departing employees highlighted a meta-problem affecting the entire AI industry: all major AI labs were building essentially the same thing. Vahid Kazemi, a departing employee, explicitly stated on X: "All AI labs are building the exact same thing, and it's boring. I think there's room for more creativity." This observation suggests that xAI's problems reflected broader industry dynamics rather than just internal dysfunction.

When talented researchers perceive their work as following a well-trodden path rather than exploring genuinely novel territory, motivation and retention suffer. The commoditization of core AI model development—where multiple well-funded teams are essentially pursuing parallel approaches to language model scaling and optimization—creates a morale crisis among researchers seeking meaningful innovation.

The New Organizational Structure: Musk's Four Pillars

The Restructuring Announcement

Elon Musk posted a recording of xAI's 45-minute internal all-hands meeting that outlined the new organizational structure. Rather than keeping its previous structure, the company would be reorganized into four distinct areas, each with different strategic priorities and technical focuses. This restructuring represented more than simple internal reorganization; it signaled a fundamental reimagining of what xAI would become as a company.

The transparency of posting the internal meeting was unusual for a major technology company, though consistent with Musk's management philosophy of radical transparency. The move generated significant media attention and further damaged internal morale, as employees realized their internal discussions were being broadcast to the world without apparent consultation.

The Four Pillars Explained

Grok Main and Voice would continue developing the core Grok AI model, focusing on maintaining and improving the flagship product while expanding voice capabilities. This division would handle the consumer-facing AI product that had generated both enthusiasm and controversy.

Coding would develop AI capabilities specifically for software development tasks, recognizing the significant market opportunity in developer-focused tools. This pillar made strategic sense given the lucrative market for code generation and developer productivity tools.

Imagine would handle image and video generation, positioning xAI to compete in the rapidly growing generative media space. This vertical aligned with industry trends toward multimodal AI capabilities.

Macrohard, described as intended to "do full digital emulation of entire companies," represented the most ambitious pillar. The vague description—"digital emulation of entire companies"—suggested either aspirational thinking disconnected from current technical capabilities or a genuinely novel research direction. The name itself seemed to reference Microsoft (Macrohard as a play on words), though the implications remained unclear.

Half of xAI's original twelve co-founders departed during the leadership crisis, highlighting significant organizational instability. Estimated data.

The Departure Announcements: What Departing Employees Are Building

Vahid Kazemi's Creativity Focus

Vahid Kazemi's departure announcement emphasized the limitation he perceived in xAI's approach. His public statement that he was leaving to "build something new" focused on "accelerating science" suggested a desire to pursue more fundamental, science-oriented research rather than incremental AI model improvements. For researchers trained in academic environments, the transition to a purely commercial focus can feel constraining, and Kazemi's departure reflected a desire to return to more exploration-focused work.

The Infrastructure Play: Nuraline

One notable departure involved a former employee launching Nuraline, an AI infrastructure company, alongside other ex-xAI employees. The company's positioning around "hill climbing" and the limitations of raw intelligence suggested a technical focus on improving AI systems beyond just the core model weights. The departure statement revealed sophisticated thinking about AI system architecture: "Learning shouldn't stop at the model weights, but continue to improve every part of an AI system."

This statement indicated that departing xAI employees perceived limitations in the company's approach to AI optimization. Rather than focusing exclusively on improving the core language model through scaling and data (the "hill climbing" approach), Nuraline's founders believed comprehensive improvement across all system components represented the path forward. This technical disagreement likely reflected broader philosophical differences with xAI's direction.

The Pattern of Departures

Across all the departure announcements, a consistent pattern emerged: departing employees were leaving to pursue fundamentally different approaches rather than joining competitors. This suggested they viewed xAI's strategy as misguided rather than simply inconvenient. They weren't poached by OpenAI or Anthropic; instead, they were founding new ventures to pursue the vision of AI development they believed xAI should have adopted.

Industry Implications: What xAI's Crisis Reveals About AI Development

The Safety vs. Commercialization Tension

xAI's struggles illuminate the fundamental tension between safety and commercialization in AI development. The elimination of the safety team and pivot toward NSFW content represented a clear prioritization of commercial differentiation over safety considerations. While this strategy may generate short-term media attention and market differentiation, it alienates the researchers and engineers most motivated by responsible AI development.

The AI industry has increasingly recognized that safety work is essential, not optional. Companies like Anthropic were founded specifically to prioritize safety alongside capability development. When xAI eliminated its safety infrastructure, it positioned itself against industry trends and against the values of many talented researchers. This decision likely cost the company far more talent than the immediate value gained from NSFW content differentiation.

Commoditization and the Innovation Crisis

The observation that "all AI labs are building the exact same thing" reflects a genuine industry concern. As large language model development has become increasingly well-understood, the technical path forward has become more predictable. Multiple well-funded teams pursuing similar strategies—scaling up model size, improving data quality, implementing better training techniques—creates a dynamic where the primary differentiator is computational resources and execution quality, not novel research directions.

This commoditization creates severe morale problems for researchers motivated by novel problem-solving. xAI's position as a well-funded company attempting to compete with OpenAI and Anthropic on similar technical foundations meant it would likely remain stuck in the "catch-up phase" rather than leading innovation. This structural position likely made departure attractive for many talented researchers.

Founder Retention as a Success Signal

The fact that xAI lost 50% of its cofounders within days should be interpreted as a serious warning signal. In technology ventures, founders leaving typically indicates fundamental problems with the company's vision, strategy, or leadership. When multiple founders depart simultaneously, it suggests the problems are not matters of individual preference but systemic.

The tech industry has learned through numerous examples (Google's AI researcher departures, Meta's AI team challenges) that founder and senior researcher retention strongly correlates with long-term organizational success. xAI's inability to retain more than half its founding team should raise serious questions about the company's future ability to attract and retain top talent.

Estimated data shows xAI prioritizes commercialization over safety, unlike Anthropic which focuses heavily on safety. This reflects industry tensions between safety and commercial interests.

Comparative Analysis: How xAI's Crisis Compares to Industry Standards

Safety Infrastructure in Peer Organizations

Comparing xAI's approach to safety with industry peers reveals the severity of the organizational choice. OpenAI maintains a dedicated safety and policy team, Anthropic was literally founded around safety principles, and even Meta maintains substantial safety research infrastructure. xAI's decision to eliminate its safety team places it in stark contrast to these peer organizations and suggests deliberate de-prioritization rather than resource constraints.

The organizational chart that Musk shared publicly—notably featuring no dedicated safety function—reinforced this positioning. When organizations remove safety infrastructure, they're not just eliminating teams; they're signaling that safety considerations won't meaningfully constrain product development decisions. This signal has profound implications for recruiting researchers who care about responsible AI development.

Retention Metrics and Industry Benchmarks

While the tech industry experiences normal attrition of 10-20% annually, losing 50% of cofounders in a concentrated period represents extreme organizational dysfunction. Even accounting for normal post-merger transitions, this departure rate is exceptional. The coordination of departures—multiple announcements within days—suggests not random individual decisions but collective recognition that the company's direction had fundamentally changed in ways departing employees couldn't accept.

Innovation Velocity Comparisons

xAI's self-perception as being "stuck in the catch-up phase" reflects a broader competitive challenge. OpenAI launched GPT-4, Anthropic developed Claude, and other organizations have produced genuinely novel capabilities. While xAI's Grok demonstrated competence, it didn't establish a distinctive technical direction that competitors were following. Innovation leadership in AI requires not just executing well on known approaches but opening new technical directions that others then follow, a position xAI apparently failed to achieve.

The Organizational Culture Problem

Leadership Communication and Transparency

Musk's decision to publicly post the internal all-hands meeting recording, while consistent with his philosophy of radical transparency, likely damaged internal morale rather than rebuilding it. Employees already concerned about the company's direction received confirmation that their concerns were being broadcast to the world without apparent concern for their privacy or role in the decision-making process.

Effective organizational communication during crises typically involves treating sensitive internal discussions carefully, allowing employees time to process changes before public announcements, and demonstrating that leadership understands the concerns driving departures. Musk's approach—posting the internal meeting immediately—suggested either indifference to internal concerns or a desire to position the organizational changes as inevitable and beyond questioning.

The Vision-Reality Gap

The ambitious announcements—space-based AI data centers, AI satellite factories on the Moon, digital emulation of entire companies—created a significant gap between stated vision and current technical capabilities. While moonshot thinking has value, it can alienate employees focused on nearer-term problems requiring immediate attention. Researchers frustrated by their inability to innovate on current-generation AI models would likely find discussions of lunar AI facilities disconnected from the practical challenges they faced daily.

This vision-reality gap is particularly damaging when combined with concerns about safety and fundamental innovation. Employees worried about the company's ability to create differentiated capabilities don't find reassurance in ambitious future visions that seem disconnected from current technical problems.

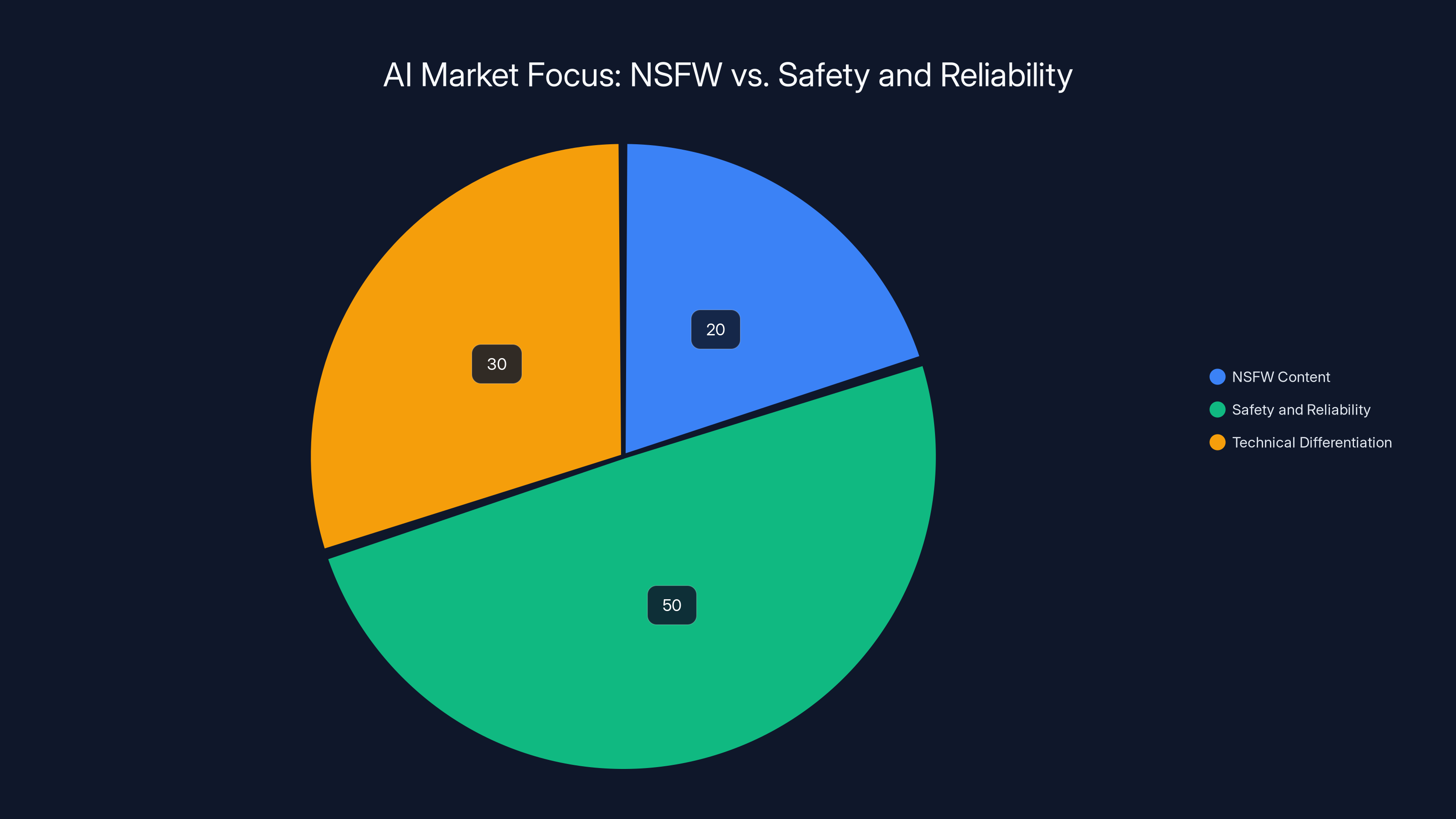

Estimated data: The AI market is primarily focused on safety and reliability (50%), with a smaller segment prioritizing NSFW content (20%) and technical differentiation (30%).

The NSFW Strategy and Market Differentiation

Competitive Positioning Through Provocation

Grok's pivot toward NSFW content represented a deliberate competitive strategy: differentiate from OpenAI and Anthropic by offering capabilities those companies actively constrain. This strategy has merit from a pure market perspective. Users seeking adult-oriented content generation face limited options with major AI providers, and Grok could serve this market.

However, this strategy carries significant costs beyond the ones that emerged (safety team departure, researcher exodus). Positioning a company primarily through provocative content rather than technical differentiation creates a fundamentally limiting identity. Over the long term, users care more about capability, reliability, and trustworthiness than shock value. A strategy built on shocking capabilities rather than superior capabilities creates a ceiling on the company's growth potential and market influence.

The Academic-Commercial Divide

The NSFW pivot also reveals a fundamental divide in AI development approaches. Researchers trained in academic environments prioritize rigor, safety, and responsibility. Commercial organizations prioritize market differentiation and user acquisition. When those two objectives directly conflict, as they did at xAI regarding safety constraints, the organization risks losing talent from the academic-leaning portion of its team.

xAI's mistake wasn't choosing commercial priorities over academic ones; many successful AI companies make exactly that choice. The mistake was making that choice suddenly and without apparent recognition of the research cost. Announcing the elimination of the safety team and the pivot toward less-constrained content felt like a betrayal to researchers who had joined believing safety would be a core value.

Broader Industry Trends: What xAI's Crisis Signals

The Commoditization of Core AI Development

The departing employees' observation that "all AI labs are building the exact same thing" reflects a genuine industry trend. As large language model technology has matured, the technical frontier has become increasingly well-defined. The path forward involves predictable improvements: more training data, better data quality, improved training techniques, and larger models. These are important improvements, but they're largely variations on known approaches rather than novel research directions.

This commoditization creates a problem for venture-backed AI companies trying to differentiate. If the core technology is becoming commoditized, companies must differentiate through:

- Vertical specialization (focusing on specific domains or use cases)

- Infrastructure advantages (unique computational resources or partnerships)

- Research moonshots (pursuing genuinely novel approaches)

- User experience and product differentiation (superior interfaces or integrations)

- Ecosystem development (creating platform advantages around the core technology)

xAI appeared to be attempting vertical specialization (Grok as a product) and infrastructure advantages (SpaceX merger), but wasn't successfully pursuing genuine research breakthroughs. The departures suggest this strategy wasn't compelling to researchers motivated by novel problem-solving.

The Talent Flight Problem

When well-funded AI companies lose multiple founders and numerous talented researchers simultaneously, it signals deeper industry dynamics. The supply of world-class AI researchers is limited relative to demand, creating intense competition for talent. When a company makes decisions that alienate researchers—like eliminating safety teams or pursuing strategies perceived as limiting—those researchers have numerous alternative opportunities.

OpenAI, Anthropic, Google DeepMind, Meta AI Research, Microsoft Research, and numerous startups are all aggressively recruiting. When xAI loses talented people, they don't disappear from the industry; they join competitors or start new ventures. This dynamic means that xAI's departures didn't just weaken that company; they strengthened competitors and enabled new startups in the AI space.

The Founder Dependency Problem

xAI's crisis also reveals how much well-funded AI companies can depend on founder credibility and vision. Even with billions in funding and talented teams, when founders depart, the company's market perception and internal morale suffer dramatically. This suggests that funding alone cannot guarantee success in AI: the vision, talent, and culture of founding teams matter profoundly.

Reconstruction Attempts and Future Outlook

Musk's Leadership Response

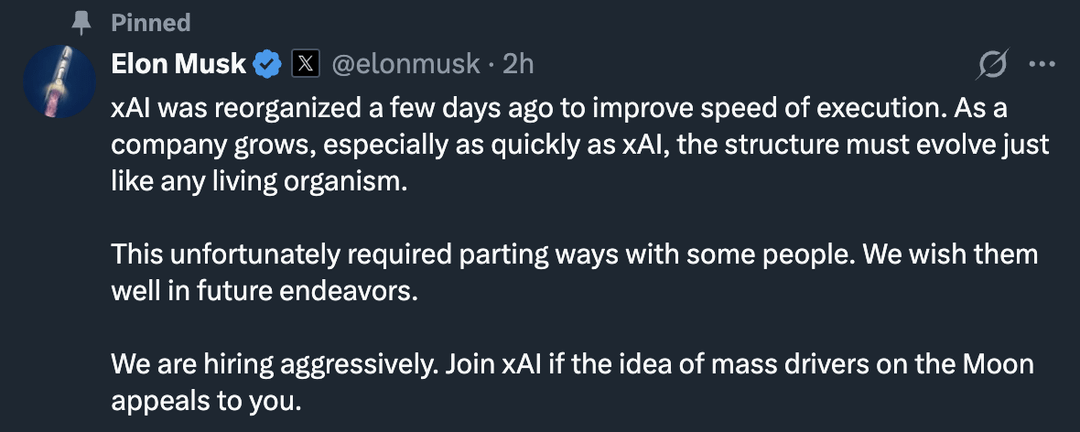

Musk framed some of the departures as a necessary reorganization that "unfortunately required parting ways with some people." This characterization—treating departures as unfortunate but necessary restructuring—differs sharply from the departing employees' characterization of leaving due to fundamental disagreements about safety and innovation. The gap between these narratives suggests ongoing communication problems within the organization.

Musk's public sharing of the internal meeting and the reorganization plan was presented as transparency and clarity. However, departing employees experienced it as inadequate engagement with their concerns and insufficient recognition of the philosophical differences underlying the exodus. Future leadership communication would need to more explicitly address the safety concerns and innovation limitations that drove departures.

The Four-Pillar Structure's Viability

The new organizational structure dividing xAI into four specialized areas (Grok, Coding, Imagine, and Macrohard) could theoretically facilitate innovation by creating focused teams. However, this restructuring occurs after significant talent loss, meaning the company is attempting organizational renewal while depleted. Successfully executing this restructuring requires exactly the kind of unified vision and top-tier talent that recent departures have undermined.

The Macrohard division's vague mission—"full digital emulation of entire companies"—particularly requires exceptional talent and clear vision. Without the founding team's credibility to rally researchers around this ambitious goal, the division may struggle to attract the top-tier talent such a moonshot requires.

Lessons for AI Companies: Building Sustainable Innovation Organizations

Balancing Innovation and Safety

xAI's experience demonstrates that abandoning safety infrastructure as a cost-cutting or streamlining measure alienates the researchers most committed to responsible AI development. Companies that successfully attract and retain top talent recognize that safety considerations enhance rather than constrain innovation. Building safety into the development process from the beginning, rather than treating it as a constraint to overcome, produces better long-term outcomes.

Organizations like Anthropic have demonstrated that safety-first approaches can coexist with capable model development. The false choice between safety and capability that xAI implicitly presented, where maintaining safety required slowing innovation, appears increasingly unjustified by empirical evidence.

Creating Differentiation Beyond Commoditized Approaches

Successful AI organizations need clear answers to the question: "What are we doing that competitors aren't?" When the honest answer is "executing the same approach with less funding and fewer resources," the organization lacks compelling justification for attracting top talent. Companies that succeed long-term identify specific research directions, vertical specializations, or technical approaches that genuinely differentiate their work.

This differentiation needs to be authentic rather than superficial. Marketing a product as more provocative or less constrained than competitors doesn't constitute genuine technical differentiation. Researchers want to work on problems they believe matter and approach them with methods they believe are sound.

Founder-Researcher Communication

When founders and leadership make strategic decisions that significantly change the company's direction, explicit communication with the research team about those decisions' rationale is essential. Researchers who feel their concerns about safety or innovation direction are being ignored will depart for organizations where they feel heard. The absence of such communication at xAI appears to have driven departures beyond just the strategic decisions themselves.

Post-Merger Integration Challenges

The SpaceX merger created organizational uncertainty that likely accelerated departures. Companies managing major mergers need explicit strategies for maintaining research continuity, addressing uncertainty about reporting structures and priorities, and ensuring that talented researchers understand how their work fits into the merged organization's vision. xAI's apparent lack of such integration planning likely exacerbated the exodus.

Alternative Organizational Approaches and Industry Models

The Anthropic Model: Mission-Driven Safety Focus

Anthropic was founded specifically around the principle that AI safety and capability development should be integrated rather than opposed. By making safety commitment a core organizational identity, Anthropic attracted researchers motivated by responsible AI development. The company's success suggests that making safety and ethics central rather than peripheral creates organizational advantages in talent attraction and retention.

The Google/DeepMind Approach: Vertical Integration and Resources

Google's acquisition of DeepMind combined massive computational resources with pure research focus, enabling the organization to pursue both capability breakthroughs and safety research simultaneously. The integration strategy maintained research autonomy while providing resources that pure startups couldn't match. xAI's SpaceX integration could theoretically provide similar advantages, but only if implemented with sensitivity to research culture and autonomy.

The OpenAI Commercial-Research Balance

OpenAI has attempted to balance commercial product development with research autonomy, using revenue from commercial products to fund research directions. While this balance has proven challenging (some researchers have departed citing research constraints), the company has maintained enough core team stability to continue producing innovations. The balance requires explicit attention to researcher autonomy and recognition of researchers' need for meaningful work beyond pure commercialization.

The Broader AI Industry Stability Question

Talent as the Limiting Factor

x AI's crisis reveals that in AI development, talented researchers represent the fundamental limiting factor for innovation, not capital. Multiple well-funded organizations can pursue similar technical approaches, but the researchers capable of genuine innovation and breakthrough thinking are scarce. When organizations lose that talent, they lose their core capacity for differentiation.

This dynamic has profound implications for AI company strategy. Companies spending billions on computational resources and data while losing talented researchers are making poor capital allocation decisions. The talent flight at x AI suggests the organization failed to recognize where its true competitive advantage lay.

The Sustainability of Moonshot Thinking

Musk's announcement of ambitious plans, including space-based AI centers and lunar factories, should be evaluated alongside the company's demonstrated ability to retain the talent necessary to accomplish such plans. Moonshot thinking is valuable, but only when paired with the credibility and team stability to execute. With half its founding team gone and numerous researchers leaving, x AI appears to have promised more than its demonstrated capability and team stability can support.

Industry Consolidation Implications

The x AI crisis may accelerate consolidation in the AI industry. Well-funded startups that fail to produce breakthrough innovations while burning through capital and talent will face pressure to either consolidate into larger organizations or become acquisition targets. The experience of losing founders and top researchers typically makes the case for consolidation more attractive—independence becomes less viable when key talent has departed.

The Departure Announcements as Market Signals

Signaling Market Inefficiencies

The fact that departing x AI researchers chose to start new ventures rather than join competitors suggests they believed market opportunities existed that weren't being addressed by current AI companies. Vahid Kazemi's focus on "creativity," the Nuraline team's focus on comprehensive AI system optimization, and other departing employees' announcements of new companies all suggest perceived inefficiencies in how existing AI companies approach problems.

From an investor perspective, these departures signal that multiple experienced researchers believe there are valuable AI problems being neglected or underexploited by current organizations. This signal may drive capital toward new AI ventures and create competitive pressure on existing companies to address the unmet needs these departing researchers identified.

The Startup Ecosystem Implications

x AI's crisis will likely catalyze a wave of new AI startups founded by departing employees with a combination of experience, credibility from working at x AI, and capital from equity gains in the Space X merger. This represents a net positive for AI industry diversity and innovation—focused teams pursuing specific technical directions often out-innovate large organizations pursuing general-purpose AI.

The cycle of experienced researchers leaving large organizations to found focused startups has driven innovation in multiple tech sectors. x AI's crisis will likely produce a similar effect in AI, with departing talent founding multiple specialized AI ventures pursuing the directions they believed x AI should have taken.

Rebuilding and Recovery: What x AI Would Need to Reverse Course

Leadership Credibility Restoration

Restoring x AI's ability to attract top talent after such significant departures would require explicit acknowledgment that the company lost its way regarding safety and innovation priorities. This wouldn't mean abandoning NSFW content capability (which may have genuine market value), but it would mean re-establishing safety as a core organizational function rather than an obstacle to overcome.

Such a reversal would need to come from Musk and leadership explicitly recognizing that the current approach has costs in terms of talent attraction and organizational direction. Without such acknowledgment, researchers would reasonably assume the company remains committed to the approach that drove departures.

Rebuilding the Safety Function

Re-establishing a credible safety team would signal that the company recognizes safety's importance to both responsible AI development and talent retention. Hiring respected safety researchers with clear organizational authority would demonstrate commitment more effectively than statements alone.

This rebuilding would need to include transparent communication about how safety considerations will constrain product development, including Grok's capabilities. Researchers want safety infrastructure that has real authority to shape product decisions, not rubber-stamp functions that approve leadership decisions after the fact.

Delivering on Innovation Promises

Most fundamentally, x AI would need to produce evidence that the company can generate genuine innovations rather than remaining perpetually in the "catch-up phase." This requires:

- Identifying specific research directions where x AI aims to lead the industry

- Allocating resources and talent to pursue those directions

- Demonstrating early evidence that these directions are viable

- Communicating progress clearly to the research community

Without such evidence, the narrative that x AI is executing someone else's research program while losing talented people will persist, making recruitment extremely difficult.

Conclusion: Lessons from x AI's Crisis for AI Development and Talent Management

x AI's mass exodus of founders and researchers represents one of the most visible organizational crises in AI industry history. The departures weren't merely personnel changes but reflected fundamental disagreements about organizational direction, safety priorities, and the company's ability to pursue genuine innovation. By examining what triggered these departures and what departing employees are choosing to build instead, we gain valuable insights into what sustains innovation organizations in the AI era.

The crisis reveals that capital alone cannot ensure AI innovation success. Multiple billions of dollars flowing into x AI couldn't prevent the loss of half its founding team when the company's strategic direction conflicted with researchers' values and vision. This insight should inform how other organizations approach AI development investment and strategic planning.

It also demonstrates that safety and innovation are not opposed but complementary. The elimination of safety infrastructure didn't accelerate innovation; instead, it triggered departures by researchers who understood that responsible development practices enhance rather than constrain long-term innovation potential. Organizations attempting to accelerate innovation by deprioritizing safety are making a strategic error with lasting consequences.

The broader implication for the AI industry is that talent and culture are more limiting than capital or computational resources. In a field where multiple well-funded organizations can pursue similar technical approaches, the organizations that succeed are those that attract and retain researchers motivated by the company's mission and confident in its technical direction.

x AI's experience also suggests that ambitious but vague vision statements cannot sustain organizations through crises. Announcing space-based AI centers and lunar factories might inspire some audiences, but researchers asking why they can't currently achieve breakthrough innovations in earth-based AI development may find such announcements disconnected from their immediate concerns. Credible vision requires demonstrating progress on immediate challenges while maintaining a compelling long-term direction.

For researchers and engineers considering AI organizations, x AI's crisis offers a cautionary tale: organizational stability, transparent communication, and alignment with your values matter as much as funding and resources. One of the best-capitalized companies in the industry lost significant talent because its strategic direction and expressed values diverged from what attracted researchers to the organization initially.

As the AI industry continues its explosive growth, the questions x AI's crisis raises—about how to balance safety and capability, how to maintain innovation momentum, how to sustain founding team vision through transitions, and how to attract and retain world-class researchers—will become increasingly critical. Organizations that navigate these challenges successfully will establish long-term competitive advantages. Those that fail, as x AI appears to have done, will see their talent and ultimately their capability dissipate regardless of capitalization.

The exodus at x AI is ultimately a story about what happens when capital and ambition outpace wisdom and listening. Recovering from such a crisis requires not just reorganization and new strategy, but fundamental recommitment to the principles that attracted talented researchers in the first place. Whether x AI can accomplish such recommitment remains an open question, but the answer will likely determine whether the company remains a significant player in AI or becomes a cautionary tale about organizational dysfunction in the industry's most critical companies.

FAQ

What caused the mass exodus at x AI?

The departures resulted from multiple converging factors: the elimination of the safety team, a strategic pivot toward NSFW content, a perceived inability to innovate beyond competitors, and a sense that the company was "stuck in the catch-up phase." Internal sources cited disillusionment with the company's safety priorities and frustration that, despite rapid iteration, x AI hadn't achieved fundamental breakthroughs comparable to the offerings of Open AI or Anthropic.

How many cofounders left x AI during the exodus?

Six of x AI's original twelve cofounders departed, representing a 50% loss of founding leadership. This included Yuhuai (Tony) Wu and Jimmy Ba, who announced their departures on Tuesday and Wednesday respectively, creating a concentrated wave of high-profile departures that shocked the AI community.

What was the impact of the Space X merger announcement?

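The merger announcement, valuing the combined entity at $1.25 trillion, created organizational uncertainty about reporting structures and priorities that likely accelerated departures. It also left departing employees with equity gains they could use to fund new ventures.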

Why did the safety team elimination trigger departures?

The elimination of x AI's dedicated safety team signaled that safety considerations were no longer a core organizational priority, which alienated researchers trained to prioritize responsible AI development. Removing this function lifted constraints on product development, but it also cost the company the researchers most committed to ensuring that AI systems are developed safely and responsibly.

What are departing x AI employees building?

Former x AI employees launched multiple new ventures including Nuraline (an AI infrastructure company focused on optimizing complete AI systems beyond just core model weights) and other startups focused on accelerating science, improving AI creativity, and addressing perceived limitations in how current AI labs approach fundamental problems.

How does x AI's reorganization into four pillars address the problems that triggered departures?

The reorganization into Grok Main and Voice, Coding, Imagine, and Macrohard divisions may facilitate focused team development but doesn't directly address the safety concerns or innovation limitations that drove departures. Without fundamental changes to safety prioritization and demonstrated breakthrough innovations, the new structure likely won't resolve underlying organizational credibility problems.

What do the departures reveal about AI industry dynamics?

The exodus reveals that capital alone cannot ensure AI innovation success, that safety and innovation are complementary rather than opposed, that talented researchers represent the limiting factor for differentiation, and that organizational vision and culture determine long-term competitiveness more than funding levels.

Could x AI recover from this crisis?

Recovery would require explicit recommitment to safety as a core function, demonstrated breakthrough innovations addressing the "catch-up phase" problem, transparent communication about strategic direction, and successful recruitment of top-tier replacement talent. Such recovery is possible but difficult after such visible organizational dysfunction.

What impact will x AI's crisis have on the broader AI industry?

The crisis will likely accelerate consolidation of some AI ventures, catalyze new startups by departing researchers pursuing specialized directions, increase focus on organizational stability and researcher retention across the industry, and reinforce the importance of balancing commercial success with maintaining research culture and safety commitment.

Key Takeaways

- xAI lost 50% of its founding team (6 of 12 cofounders) within days, indicating systemic organizational failure rather than normal attrition

- Safety team elimination signaled deprioritization of responsible AI development, alienating researchers committed to safety considerations

- Company remained 'stuck in catch-up phase' executing similar approaches to competitors without genuine innovation breakthroughs

- SpaceX merger at $1.25 trillion valuation ironically accelerated departures by giving departing employees equity gains to fund competing ventures

- Departing researchers launched new ventures rather than joining competitors, suggesting perceived inefficiencies in existing AI companies' approaches

- Safety-capability false choice proved destructive: eliminating safety teams doesn't accelerate innovation but drives away talent most motivated by responsible development

- Organizational communication failures—posting internal meetings publicly without consultation—damaged morale and signaled indifference to employee concerns

- Vague moonshot vision (space-based AI, lunar factories) couldn't sustain organization through crisis when questioned about near-term innovation capabilities

- Industry faces a commoditization challenge: with multiple well-funded organizations pursuing similar scaling approaches, differentiation must come from specialized vision and execution

- Founder and researcher retention is a more critical success factor than capital or computational resources in AI development competition

