YouTube's Expanding AI Deepfake Detection: Impact on Politicians, Government Officials, and Journalists

Last year, YouTube introduced technology to detect AI-generated deepfakes, initially rolling it out to a large number of creators in the YouTube Partner Program. Now the company is expanding the initiative to a pilot group of politicians, government officials, and journalists. The move is a significant step in combating misinformation and protecting the likenesses of public figures, as detailed in FindArticles.

TL;DR

- Enhanced Detection: YouTube's AI deepfake detection now targets politicians, government officials, and journalists, as reported by TechCrunch.

- Policy Enforcement: The tool allows affected individuals to request the removal of unauthorized AI-generated content, according to YouTube's official blog.

- Broader Implications: The expansion could reshape media credibility and governmental trust, as discussed in Axios.

- Technical Advances: The technology builds upon the existing Content ID system, as noted by The Verge.

- Future Trends: Expect more platforms to adopt similar AI-driven protections, as highlighted in Wiz.io.

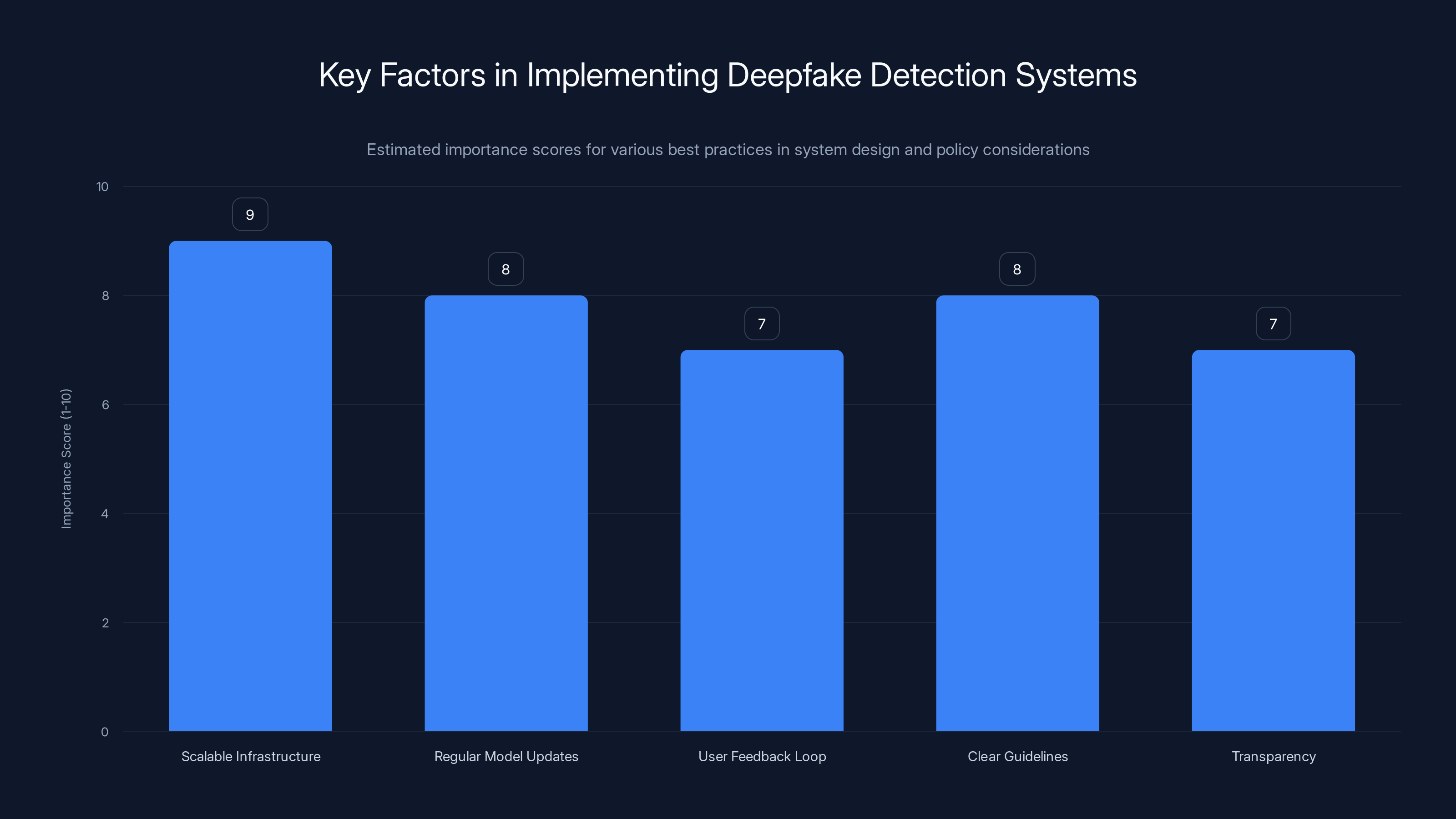

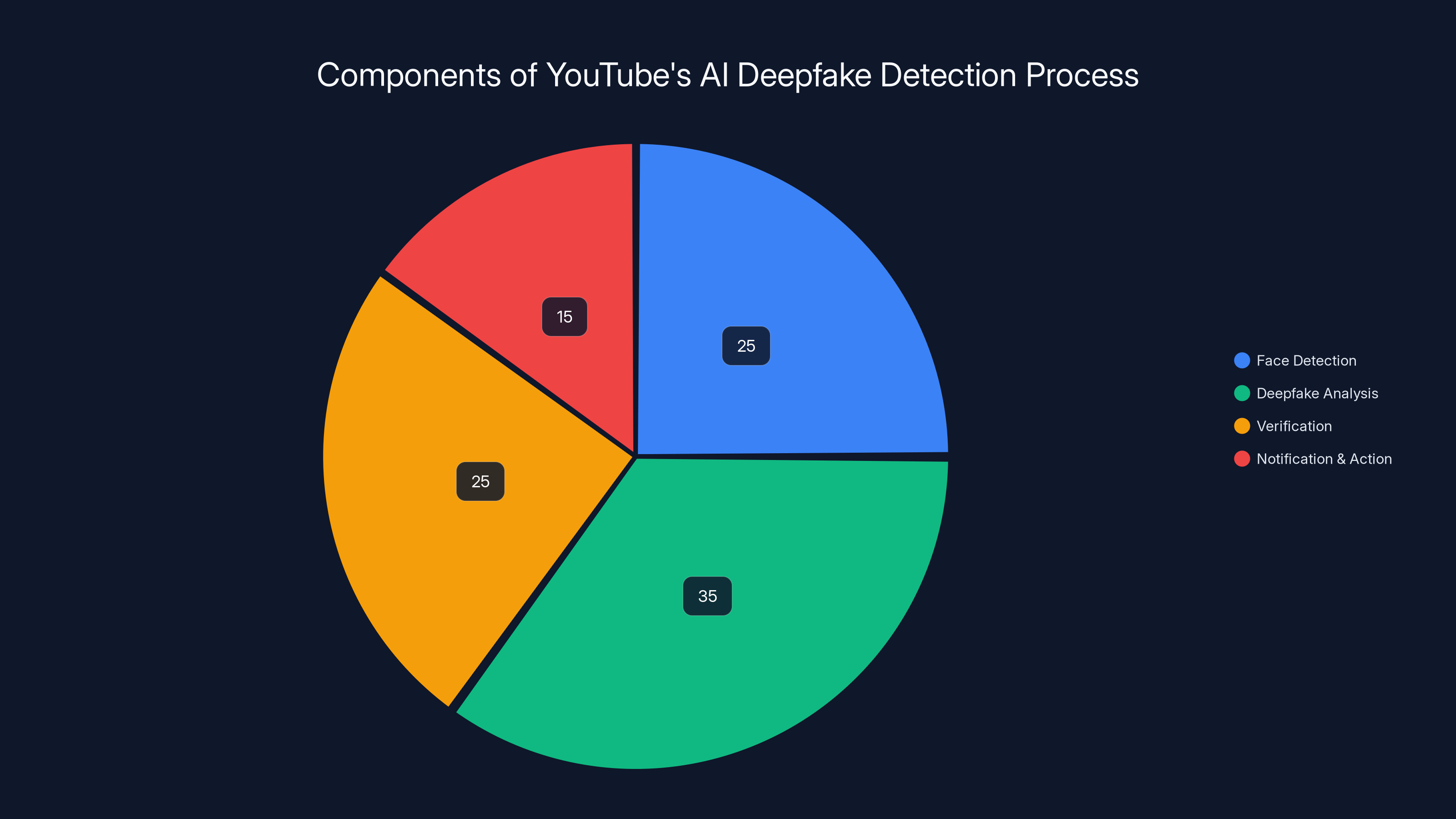

Scalable infrastructure and regular model updates are crucial for effective deepfake detection systems. Estimated data.

Understanding YouTube's AI Deepfake Detection

Deepfake technology has gained notoriety for its ability to create hyper-realistic videos that portray individuals saying or doing things they never did. The technology, while innovative, poses significant risks, especially when used to mislead or manipulate public opinion, as explored in TechCrunch.

How the Detection Process Works

YouTube's likeness detection tool operates similarly to its Content ID system, which scans uploaded videos for copyrighted material. The deepfake detection tool focuses on identifying synthetic faces generated through AI. Here's a high-level overview of the process:

- Face Detection: The system first identifies potential faces in a video using computer vision algorithms.

- Deepfake Analysis: Detected faces are then analyzed for signs of AI generation, such as unnatural eye movements or mismatched lighting.

- Verification: If a face is flagged as a potential deepfake, the system cross-references it with a database of known public figures.

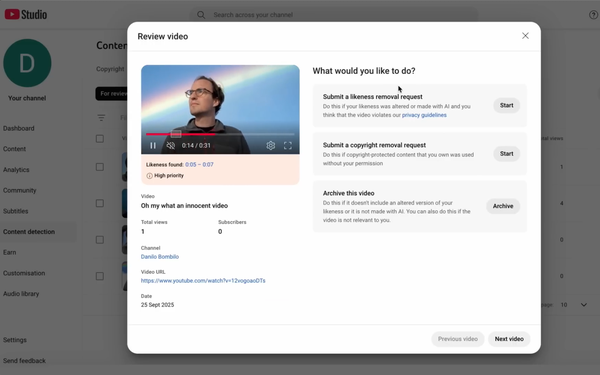

- Notification and Action: Affected individuals can be notified and given the option to request content removal if it violates YouTube's policies, as outlined in YouTube's official blog.
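The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not YouTube's implementation: the `Face` record, both thresholds, the toy three-dimensional embeddings, and the enrolled names are all hypothetical, and face detection (step 1) is assumed to have already produced the list of faces.

```python
from dataclasses import dataclass
from math import sqrt
from typing import Optional

# Every name, threshold, and embedding below is a hypothetical illustration.
SYNTHETIC_THRESHOLD = 0.8   # assumed cutoff for "likely AI-generated"
MATCH_THRESHOLD = 0.9       # assumed cutoff for "same person"

@dataclass
class Face:
    embedding: list[float]   # identity vector from some face encoder (assumed)
    synthetic_score: float   # 0.0 = looks real, 1.0 = looks generated (assumed)

def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def match_enrolled(face: Face, enrolled: dict[str, list[float]]) -> Optional[str]:
    """Verification: return the best-matching enrolled person, if any."""
    best_name, best_sim = None, MATCH_THRESHOLD
    for name, reference in enrolled.items():
        sim = cosine_similarity(face.embedding, reference)
        if sim >= best_sim:
            best_name, best_sim = name, sim
    return best_name

def review_video(faces: list[Face], enrolled: dict[str, list[float]]) -> list[str]:
    """Run analysis, verification, and notification on already-detected faces."""
    to_notify = []
    for face in faces:
        if face.synthetic_score < SYNTHETIC_THRESHOLD:
            continue                      # deepfake analysis: not flagged
        person = match_enrolled(face, enrolled)
        if person and person not in to_notify:
            to_notify.append(person)      # this person may request removal
    return to_notify

enrolled = {"official_a": [1.0, 0.0, 0.0], "journalist_b": [0.0, 1.0, 0.0]}
faces = [
    Face(embedding=[0.98, 0.05, 0.0], synthetic_score=0.93),  # flagged, matches official_a
    Face(embedding=[0.0, 0.99, 0.1], synthetic_score=0.10),   # looks real, skipped
    Face(embedding=[0.0, 0.0, 1.0], synthetic_score=0.95),    # flagged, nobody enrolled
]
print(review_video(faces, enrolled))  # ['official_a']
```

Keeping the synthetic-score check separate from the identity match means a flagged face that belongs to nobody in the pilot group produces no notification, which mirrors the opt-in nature of the process described above.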

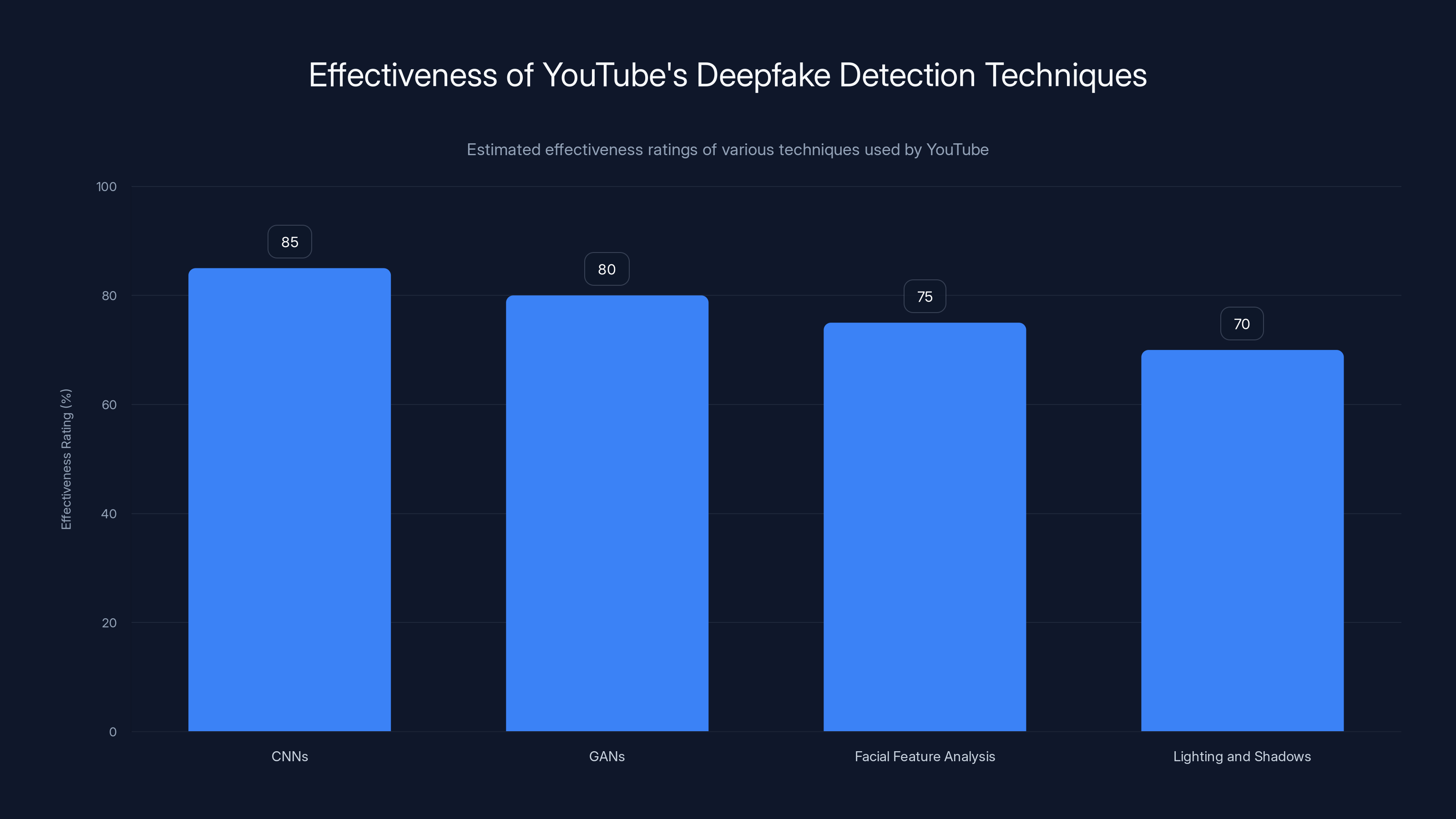

Estimated data shows CNNs are most effective in detecting deepfakes, followed by GANs and other computer vision techniques.

The Importance of Expanding Detection to Public Figures

The decision to extend this technology to politicians and journalists is strategic. These individuals are often at the center of public discourse and are frequent targets of misinformation campaigns, as discussed in GNET Research.

Why Politicians and Journalists?

- Influence on Public Opinion: Public figures have the power to shape narratives. Deepfakes targeting these individuals can have outsized impacts on political stability and societal trust.

- Targets of Misinformation: Politicians and journalists are frequent subjects of deepfakes because of their visibility and influence, as noted by Syracuse University.

- Potential for Harm: Misinformation about these figures can lead to real-world consequences, from influencing elections to inciting violence, as reported by BBC News.

Technical Details: How YouTube's System Identifies Deepfakes

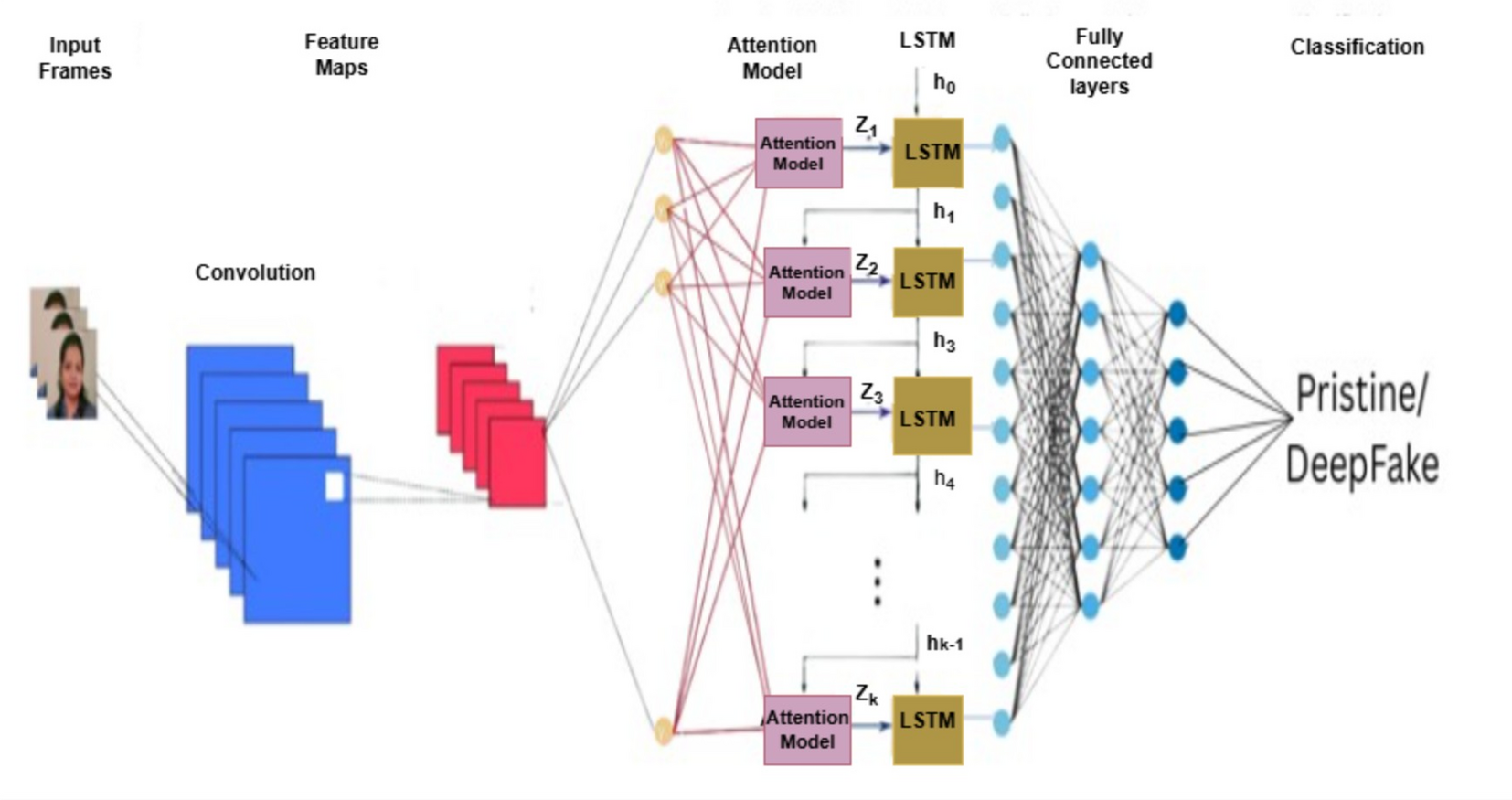

YouTube's system employs a combination of machine learning models and traditional computer vision techniques. Here's a deeper dive into the technical workings:

Machine Learning Models

- Convolutional Neural Networks (CNNs): Used for image classification and to identify patterns that are typical of AI-generated faces.

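- Generative Adversarial Networks (GANs): These models are a common tool for creating deepfakes, and they also inform detection. A GAN pairs a generator with a discriminator that is trained to tell real images from generated ones, so understanding the generation process helps in spotting its output.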
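To make the CNN idea concrete, here is a toy forward pass through a single convolutional layer in plain Python: convolution, ReLU, global average pooling, and a logistic output. It is only a sketch. The kernel is hand-picked rather than learned, `WEIGHT` and `BIAS` are hypothetical, and the resulting score has no real-world meaning; a production detector would learn many kernels across many layers from labelled data.

```python
from math import exp

def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def relu(feature_map):
    return [[max(0.0, v) for v in row] for row in feature_map]

def global_average(feature_map):
    values = [v for row in feature_map for v in row]
    return sum(values) / len(values)

def sigmoid(x):
    return 1.0 / (1.0 + exp(-x))

# Hand-picked Laplacian-style kernel: it responds to abrupt local changes.
# A trained CNN would learn its kernels instead of using a fixed one.
KERNEL = [[0, -1, 0], [-1, 4, -1], [0, -1, 0]]
WEIGHT, BIAS = 2.0, -1.0   # hypothetical classifier parameters

def synthetic_score(gray_patch):
    """Conv -> ReLU -> global average pool -> logistic output in (0, 1)."""
    pooled = global_average(relu(conv2d_valid(gray_patch, KERNEL)))
    return sigmoid(WEIGHT * pooled + BIAS)

smooth = [[0.5] * 5 for _ in range(5)]                              # flat patch
checker = [[float((r + c) % 2) for c in range(5)] for r in range(5)]  # noisy patch
print(synthetic_score(smooth), synthetic_score(checker))
```

The flat patch produces no kernel response and so gets the lower score, while the checkerboard patch triggers the kernel and scores higher. Which patterns actually indicate AI generation is something a real model has to learn from data.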

Computer Vision Techniques

- Facial Feature Analysis: The system examines facial features for anomalies that can indicate AI generation, such as irregular symmetry or unusual visual artifacts.

- Lighting and Shadows: AI-generated faces often have inconsistencies in lighting and shadow, which are analyzed for authenticity.
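A lighting check of the kind described above could, in its simplest form, compare which side the light appears to come from in the face crop versus the surrounding scene. The sketch below assumes grayscale pixel grids with values between 0 and 1 and at least two columns; the `MIN_BIAS` cutoff and the function names are hypothetical, and real systems would use far richer cues than a left/right brightness difference.

```python
def mean(values):
    return sum(values) / len(values)

def horizontal_light_bias(gray):
    """Mean brightness of the right half minus the left half of a 2D grid.

    Assumes at least two columns; for odd widths the middle column is ignored.
    """
    mid = len(gray[0]) // 2
    left = [v for row in gray for v in row[:mid]]
    right = [v for row in gray for v in row[-mid:]]
    return mean(right) - mean(left)

MIN_BIAS = 0.1  # hypothetical: ignore regions with nearly even lighting

def lighting_mismatch(face_gray, scene_gray):
    """True when face and scene are clearly lit from opposite sides."""
    face_bias = horizontal_light_bias(face_gray)
    scene_bias = horizontal_light_bias(scene_gray)
    if abs(face_bias) < MIN_BIAS or abs(scene_bias) < MIN_BIAS:
        return False                      # too evenly lit to judge
    return (face_bias > 0) != (scene_bias > 0)

lit_from_left = [[0.9, 0.7, 0.3, 0.1] for _ in range(2)]
lit_from_right = [[0.1, 0.3, 0.7, 0.9] for _ in range(2)]
print(lighting_mismatch(lit_from_left, lit_from_right))  # True
print(lighting_mismatch(lit_from_left, lit_from_left))   # False
```

A mismatch on its own proves little, since mixed lighting occurs in genuine footage too. It is better treated as one weak signal to combine with others.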

The pie chart illustrates the estimated distribution of focus areas in YouTube's AI deepfake detection process, with deepfake analysis taking the largest share. Estimated data.

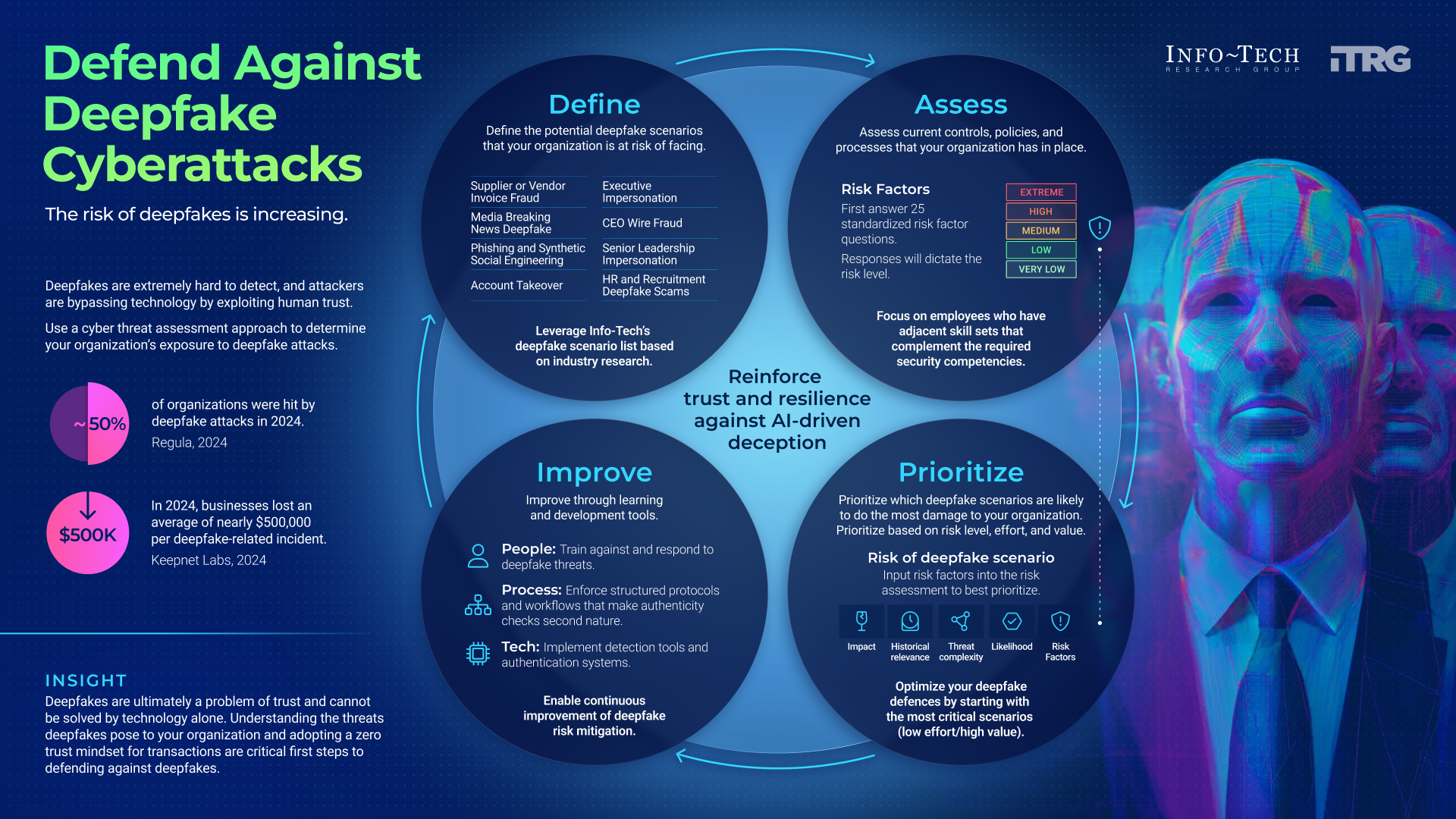

Common Pitfalls in Deepfake Detection

While YouTube's system represents a significant advancement, it is not without challenges:

- False Positives: Legitimate content can sometimes be flagged as deepfakes, leading to unnecessary content takedowns.

- Evolving Techniques: As deepfake technology evolves, so too must detection methods. AI models must continually be updated to recognize new techniques.

- Resource Intensity: Deepfake detection can be computationally expensive, requiring significant processing power and storage.
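The false-positive problem is easiest to reason about with numbers. The sketch below computes precision, recall, and false positive rate at two decision thresholds. The scores and labels are invented for illustration and do not come from any real detector.

```python
def confusion_counts(scores, labels, threshold):
    """Count (tp, fp, tn, fn) when every score >= threshold is flagged as fake."""
    tp = fp = tn = fn = 0
    for score, is_fake in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_fake:
            tp += 1
        elif flagged and not is_fake:
            fp += 1          # legitimate video wrongly flagged
        elif is_fake:
            fn += 1          # deepfake that slipped through
        else:
            tn += 1
    return tp, fp, tn, fn

def summarize(scores, labels, threshold):
    tp, fp, tn, fn = confusion_counts(scores, labels, threshold)
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }

# Made-up detector scores and ground-truth labels (True = actually a deepfake).
scores = [0.95, 0.60, 0.65, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

for threshold in (0.5, 0.7):
    print(threshold, summarize(scores, labels, threshold))
```

On this toy data, raising the threshold from 0.5 to 0.7 removes the one false positive but misses an additional real deepfake. That is the trade-off a platform has to tune.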

Practical Implementation and Best Practices

For those looking to implement similar detection systems, here are some best practices:

System Design

- Scalable Infrastructure: Ensure the system can handle large volumes of data, as video analysis is resource-intensive.

- Regular Model Updates: Continuously train and update models to keep up with advances in deepfake technology.

- User Feedback Loop: Incorporate a feedback mechanism for users to report false positives or missed detections.
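A user feedback loop can start out very simply: log the outcome of each reviewed detection and flag when the recent error share suggests the model needs attention. The class below is a hypothetical sketch; the outcome labels, window size, and 5% trigger are assumptions chosen for illustration.

```python
from collections import Counter, deque

VALID_OUTCOMES = {"confirmed", "false_positive", "missed_detection"}

class FeedbackLog:
    """Keeps the most recent review outcomes and reports the error share."""

    def __init__(self, window=100, max_error_share=0.05):
        self.outcomes = deque(maxlen=window)   # older reports fall off the front
        self.max_error_share = max_error_share

    def record(self, outcome):
        if outcome not in VALID_OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome}")
        self.outcomes.append(outcome)

    def error_share(self):
        if not self.outcomes:
            return 0.0
        counts = Counter(self.outcomes)
        errors = counts["false_positive"] + counts["missed_detection"]
        return errors / len(self.outcomes)

    def needs_retraining(self):
        return self.error_share() > self.max_error_share

log = FeedbackLog(window=20)
for _ in range(18):
    log.record("confirmed")
log.record("false_positive")
log.record("missed_detection")
print(log.error_share(), log.needs_retraining())
```

Using a bounded window keeps the signal focused on recent model behavior, which matters when generation techniques shift quickly.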

Policy Considerations

- Clear Guidelines: Establish clear guidelines for what constitutes a deepfake and what actions will be taken when one is detected.

- Transparency: Be transparent with users about the detection process and criteria.

Future Trends in Deepfake Detection

As the field of AI and machine learning advances, so too will the methods for detecting and creating deepfakes. Here are some trends to watch:

Increased Sophistication

- Real-time Detection: Future systems may be able to detect deepfakes in real-time, allowing for immediate action.

- Cross-Platform Integration: Expect to see detection tools integrated across multiple platforms, not just YouTube.
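One plausible building block for real-time detection is smoothing per-frame scores over a short sliding window, so a single noisy frame does not trigger an alert. The sketch below assumes some upstream model already emits a score per frame; the class name, window size, and threshold are hypothetical.

```python
from collections import deque

class StreamingDetector:
    """Raises an alert when the rolling mean of frame scores crosses a threshold."""

    def __init__(self, window=3, threshold=0.8):
        self.scores = deque(maxlen=window)
        self.threshold = threshold

    def update(self, frame_score):
        """Add one frame score; return True if the full window averages high."""
        self.scores.append(frame_score)
        if len(self.scores) < self.scores.maxlen:
            return False                  # not enough frames seen yet
        return sum(self.scores) / len(self.scores) >= self.threshold

# Invented per-frame scores: one early spike, then a sustained run.
stream = [0.2, 0.9, 0.3, 0.8, 0.9, 0.9, 0.9, 0.9]
detector = StreamingDetector(window=3, threshold=0.8)
alerts = [detector.update(score) for score in stream]
print(alerts)
```

With these numbers the isolated 0.9 at the second frame is ignored, and the first alert fires only once three consecutive frames average above the threshold.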

Regulatory Developments

- Legislation: More countries may introduce legislation regulating the creation and distribution of deepfakes, similar to the anti-deepfake laws passed in California.

- International Cooperation: Global cooperation may be necessary to address the cross-border nature of digital misinformation.

Recommendations for Stakeholders

For politicians, journalists, and other public figures, understanding and mitigating the risks of deepfakes is crucial. Here are some recommendations:

- Education and Training: Regularly educate teams about the risks of deepfakes and the tools available to combat them.

- Collaboration with Platforms: Work closely with platforms like YouTube to ensure your likeness is protected.

- Crisis Management Plans: Develop plans to quickly address and counteract misinformation if targeted by deepfakes.

Conclusion

The expansion of YouTube's AI deepfake detection to include politicians, government officials, and journalists is a pivotal development in the fight against digital misinformation. As technology evolves, the need for robust detection and mitigation strategies will only become more critical. By staying informed and proactive, stakeholders can better protect themselves and the public from the potential harms of deepfakes.

FAQ

What is a deepfake?

A deepfake is synthetic media in which a person's likeness is digitally manipulated, often using artificial intelligence, to make it appear that they said or did something they did not.

How does YouTube's deepfake detection work?

YouTube uses machine learning models and computer vision techniques to identify AI-generated faces in videos, allowing affected individuals to request content removal if it violates policies.

Why are politicians and journalists targeted by deepfakes?

These public figures are often targeted due to their influence on public opinion and the potential impact of misinformation on societal stability.

What are the challenges in detecting deepfakes?

Challenges include false positives, evolving deepfake creation techniques, and the computational resources required for detection.

How can I protect myself from deepfakes?

Stay informed about detection tools, verify video sources, and collaborate with platforms to protect your likeness.

What are the future trends in deepfake detection?

Expect advancements in real-time detection, cross-platform integration, and regulatory developments aimed at curbing deepfakes.

How can platforms improve deepfake detection?

Platforms can improve detection by regularly updating models, ensuring scalable infrastructure, and maintaining transparency with users.

Key Takeaways

- YouTube expands AI deepfake detection to politicians and journalists.

- Detection tool allows removal requests for unauthorized content.

- AI models analyze facial features for deepfake signs.

- Challenges include false positives and evolving techniques.

- Future trends: real-time detection and regulatory developments.
