AI Judges and Arbitration: The Future of Legal Decisions [2025]

The American legal system is drowning. Courts backed up for years. Lawyers costing thousands per hour. Small businesses avoiding disputes entirely because they can't afford legal help. Justice delayed is justice denied, the saying goes, but what about justice that never comes at all?

Now there's a new player entering the courtroom, if you can call it that. Not a person in a black robe. Not even a person at all. It's an AI system trained on legal precedent and case law, ready to review evidence, weigh arguments, and render a decision. Faster. Cheaper. More consistent. At least, that's the promise.

Bridget McCormack knows judges' work intimately. She spent years as chief justice of the Michigan Supreme Court, reviewing cases where lower court judges missed key evidence, overlooked critical arguments, or simply got overwhelmed by volume. Now she leads the American Arbitration Association, and she's betting that AI can fix what's broken.

The system they've built, powered by OpenAI's large language models, sounds almost too good to be true. It walks parties through their dispute, understands both sides, checks its work, and drafts a decision explaining its reasoning. All without the human judge's fatigue, bias, or scheduling constraints.

But here's the catch: AI has already failed spectacularly in courtrooms. Federal judges have issued orders containing completely fabricated facts. AI systems have been shown to encode the exact human biases they were supposed to eliminate. And the idea of letting a machine make life-altering decisions for people? That raises questions nobody's quite answered yet.

So we're left with a genuinely difficult question: Can a flawed technology improve a broken system? Or does it just spread the brokenness wider?

Let's dig into what's actually happening, why it matters, and what could go wrong.

TL;DR

- AI arbitration systems use machine learning to resolve disputes faster and cheaper, potentially saving small businesses thousands in legal costs

- Current use cases are limited to document-based disputes with human oversight at every stage, but the technology is expanding rapidly

- Documented failures include hallucinated case citations, encoded racial bias in risk assessment algorithms, and loss of public trust in the justice system

- The promise is real: faster resolution times (weeks instead of months), lower costs, and more consistent rulings without judge burnout

- The risk is equally real: AI biases, lack of accountability, opacity that violates due process, and a justice system that prioritizes speed over fairness

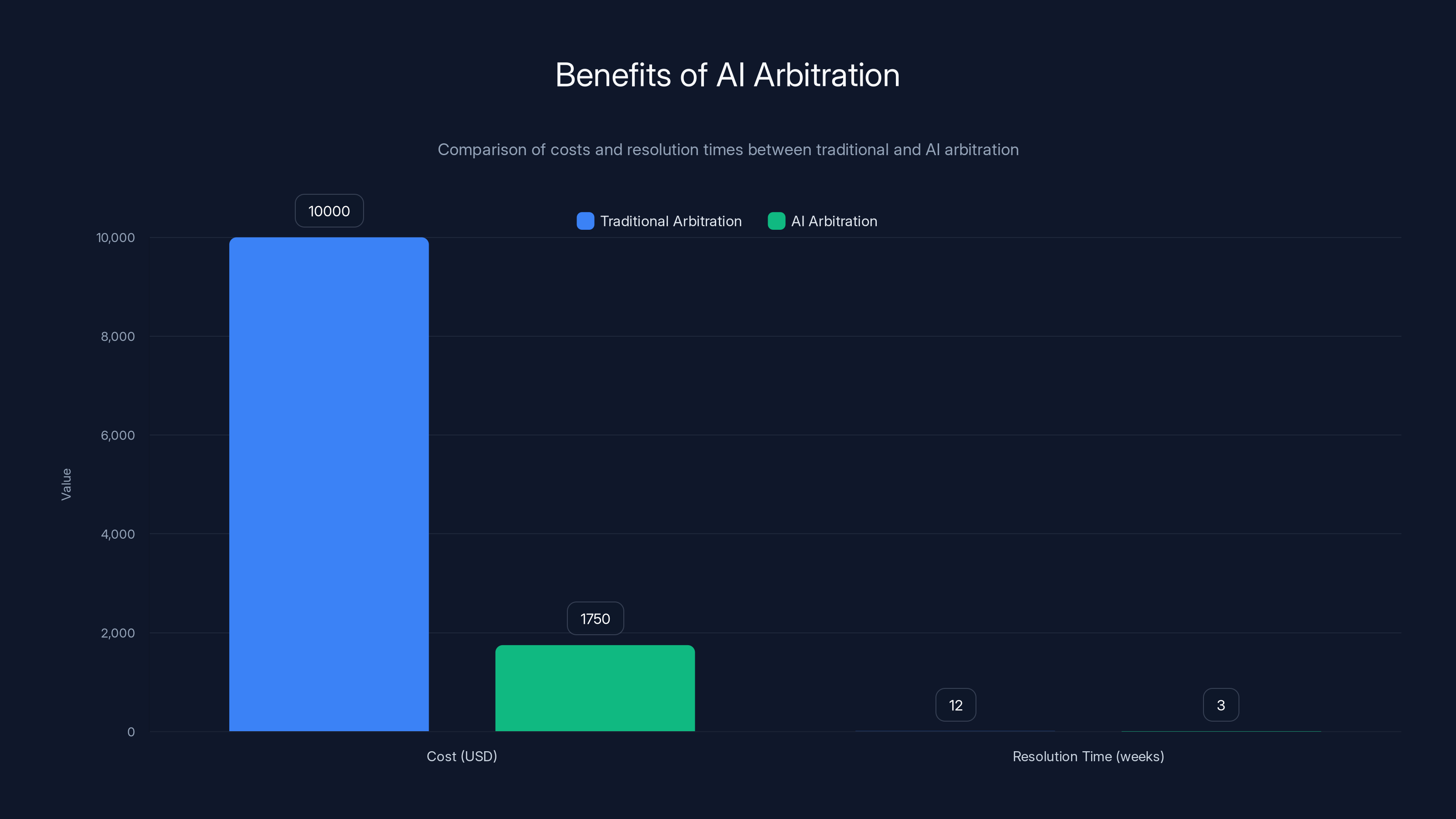

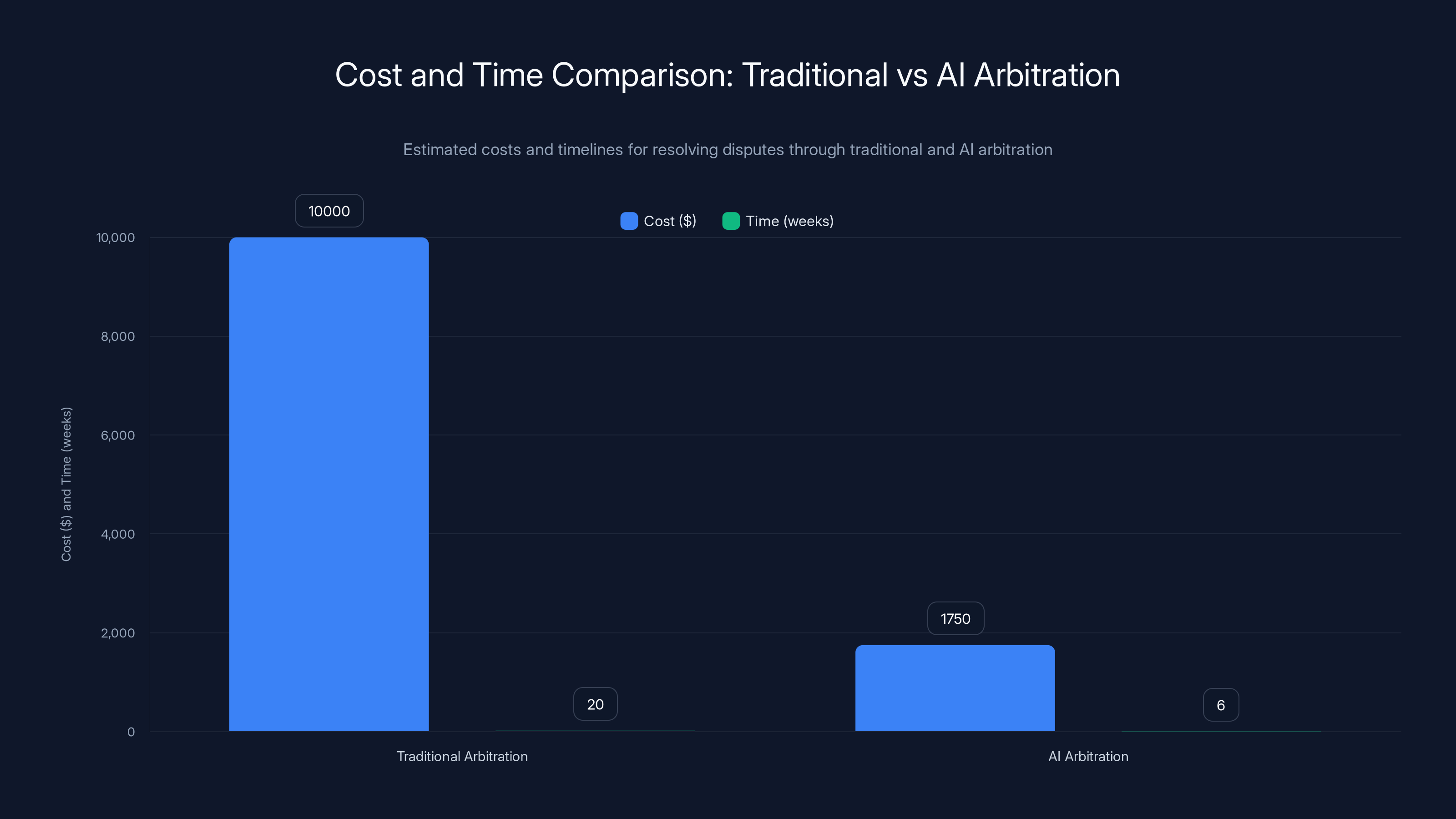

AI arbitration significantly reduces costs and resolution times compared to traditional methods, making it more accessible, especially for small businesses. Estimated data based on typical cases.

How the American Legal System Got So Broken

Before we talk about AI fixes, we need to understand what's actually broken. The picture isn't pretty.

America has roughly 15,000 state and federal judges handling a caseload that grows every year. The average federal district judge manages between 400 and 500 active cases at any given time. That's not a number that allows for careful, thoughtful consideration of each case. It's a number that guarantees overwhelm.

State courts are worse. Many have dockets so packed that civil cases—disputes between businesses, contract disagreements, property disputes—take years to resolve. Some take nearly a decade to reach trial. A restaurant owner disputing a lease agreement might wait five years for their day in court. By then, the business is gone.

The cost problem is even more brutal. A simple contract dispute can cost

There's also the judge quality problem. Good judges are thoughtful, careful, and attuned to nuance. Bad judges are... well, bad. And the system doesn't really distinguish between them until something goes catastrophically wrong. A judge who misses evidence or misinterprets the law might affect hundreds of people's lives before anyone notices.

Arbitration was supposed to fix this. Instead of a public court, two parties could agree to have a neutral third party (an arbitrator) hear their case and make a binding decision. Faster. Cheaper. Private. It worked reasonably well for decades.

But arbitration has its own problems now. Arbitrators are expensive, though less expensive than judges. And as the court system got worse, arbitration became the preferred method for dispute resolution, which meant good arbitrators were also overbooked. The system that was supposed to be the fast alternative became slow too.

This is the gap AI is trying to fill.


What the American Arbitration Association Built

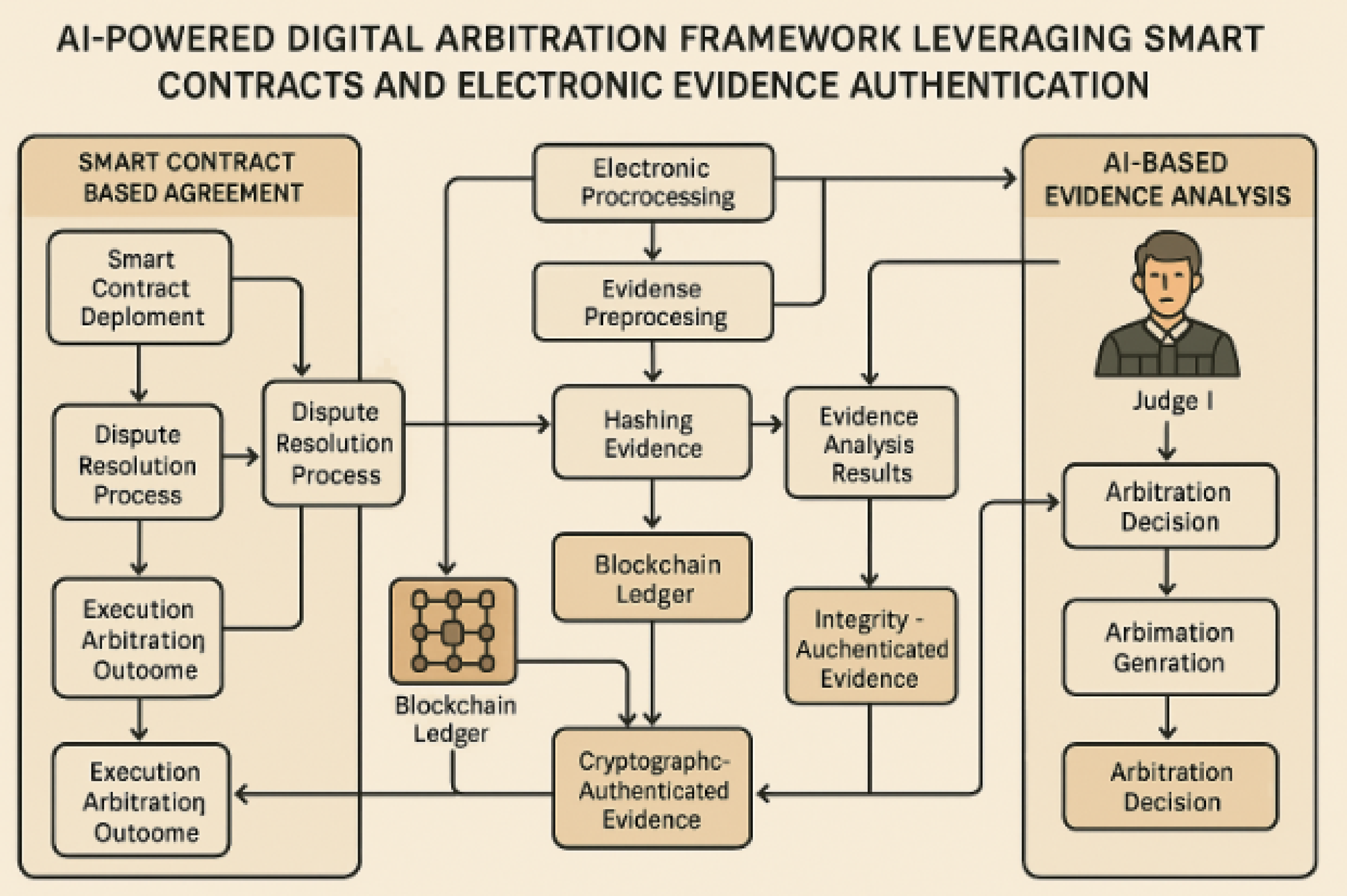

Let's talk specifics about what McCormack and her team actually created. It's not a fully autonomous judge that makes all decisions unilaterally. If it were, it would face massive regulatory and practical barriers. Instead, it's more like a very capable clerk who helps both parties and a human decision-maker work through a dispute.

Here's how it works in practice:

Two companies have a contract dispute about a service delivery disagreement. They agree to use the AI Arbitrator process. The system guides each party through structured interviews, asking specific questions about the facts, the contract terms, what each side believes was promised, and what actually happened.

The AI system then reviews all the documents—emails, contracts, service records, anything relevant. It identifies the key facts both parties agree on. It flags the facts they disagree about. It notes which contract terms apply to the disputed facts.

Then it drafts a decision. Here's the critical part: it shows all its reasoning. It explains which facts it found credible and why. It walks through the relevant contract language. It applies that language to the facts. It concludes who should win and why.

A human arbitrator reviews this draft. They can accept it as-is, modify it, or reject it entirely and write their own decision. But the AI system has already done the heavy lifting—organizing information, spotting patterns, identifying applicable terms.

McCormack emphasizes that humans are in the loop at every stage. The parties can input their own documents and narratives. They can challenge factual assertions. They see the AI's reasoning before the final decision. A human arbitrator makes the final call.
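The workflow described above (sort facts into agreed and disputed, draft a decision, then let a human accept, modify, or reject it) can be sketched as a small data model. This is only an illustration of the human-in-the-loop pattern, not the American Arbitration Association's implementation. Every name here (`DraftDecision`, `ReviewAction`, `split_facts`, `human_review`) is hypothetical, and matching facts by exact string is a simplifying assumption.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class ReviewAction(Enum):
    """The three options the article gives the human arbitrator."""
    ACCEPT = "accept"
    MODIFY = "modify"
    REJECT = "reject"


@dataclass
class DraftDecision:
    """Hypothetical shape of an AI-drafted decision."""
    agreed_facts: list[str]
    disputed_facts: list[str]
    reasoning: str
    proposed_outcome: str


@dataclass
class FinalDecision:
    outcome: str
    reasoning: str
    authored_by: str  # "ai_draft_accepted", "human_modified", or "human"


def split_facts(claimant: set[str], respondent: set[str]) -> tuple[list[str], list[str]]:
    """Facts asserted by both sides are agreed; facts asserted by only one side are disputed."""
    agreed = sorted(claimant & respondent)
    disputed = sorted(claimant ^ respondent)
    return agreed, disputed


def human_review(
    draft: DraftDecision,
    action: ReviewAction,
    outcome: str | None = None,
    reasoning: str | None = None,
) -> FinalDecision:
    """The human arbitrator always produces the final decision."""
    if action is ReviewAction.ACCEPT:
        return FinalDecision(draft.proposed_outcome, draft.reasoning, "ai_draft_accepted")
    # Modifying or rejecting the draft requires the arbitrator's own text.
    if outcome is None or reasoning is None:
        raise ValueError("modify/reject requires the arbitrator's own outcome and reasoning")
    author = "human_modified" if action is ReviewAction.MODIFY else "human"
    return FinalDecision(outcome, reasoning, author)
```

The point of the design is that no code path returns a `FinalDecision` without a human choosing a `ReviewAction` first, and the `authored_by` field keeps a record of how much of the result came from the draft.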

It's not Judge Roy Bean or Judge Judy. It's more like having a paralegal do all the preliminary work perfectly—organizing documents, spotting inconsistencies, drafting a detailed summary—and then a judge reviews that work product.

Except the "paralegal" never gets tired. Never forgets anything. Never lets personal bias affect how it weighs evidence. Or at least, that's the theory.

The Cost and Speed Promise

This is where AI arbitration gets genuinely compelling. The numbers matter.

Traditional arbitration through the American Arbitration Association currently costs participants roughly

Full litigation through courts costs multiples of that. You're paying lawyer fees, court costs, expert witness fees, potentially appeals. For a contract dispute worth $25,000, the legal costs might consume 40% to 60% of the value at stake. This means many disputes simply don't get resolved. The cost of justice exceeds the value of justice.

AI arbitration could change this math substantially. If the same dispute that costs

The timeline improvement is just as significant. Traditional arbitration still typically takes 3 to 6 months from filing to final decision. The parties need to exchange documents, schedule arbitrator availability, prepare written submissions or conduct hearings, and then wait for the arbitrator to decide and write their opinion.

AI arbitration could compress this to 4 to 8 weeks. The AI can process documents instantly. It doesn't need to wait for arbitrator availability. It can work continuously.

Let's model this out. Say you're a small software company and a client refuses to pay for a custom development project. The client claims your code doesn't work. You claim it works fine and the client didn't follow implementation steps correctly. The money involved is $30,000.

Scenario 1: You hire a lawyer to pursue this. Lawyer costs:

Scenario 2: You use traditional arbitration. Cost: $8,000 total. Timeline: 5 months. You might take it, but it hurts.

Scenario 3: You use AI arbitration. Cost: $1,500 total. Timeline: 6 weeks. You absolutely take it. This is economically rational.
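To make the comparison concrete, here is a minimal sketch that works through the arithmetic for the two arbitration scenarios, using only the figures stated above ($30,000 at stake, $8,000 versus $1,500 in costs). The function names are illustrative, and the model assumes you pay the full cost whether you win or lose and recover the full amount if you win.

```python
AMOUNT_AT_STAKE = 30_000  # dollars, from the example above

# Cost figures as given in the scenarios above.
SCENARIOS = {
    "traditional arbitration": 8_000,
    "AI arbitration": 1_500,
}


def cost_share(cost: float, amount: float) -> float:
    """Fraction of the disputed amount consumed by the process itself."""
    return cost / amount


def expected_net(amount: float, cost: float, win_probability: float) -> float:
    """Expected recovery minus cost, assuming cost is paid win or lose."""
    return win_probability * amount - cost


def break_even_probability(cost: float, amount: float) -> float:
    """Smallest win probability at which pursuing the claim has non-negative expected value."""
    return cost / amount


for name, cost in SCENARIOS.items():
    share = cost_share(cost, AMOUNT_AT_STAKE)
    net_if_win = expected_net(AMOUNT_AT_STAKE, cost, 1.0)
    print(f"{name}: {share:.0%} of the claim goes to costs, net ${net_if_win:,.0f} on a win")
```

Under these assumptions the break-even win probability equals the cost share, so the cheaper process is worth pursuing even when you are much less sure of winning.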

This is the promise. For McCormack and her team, it's not really about replacing judges. It's about making justice accessible to people who currently can't afford any justice at all.

The American legal system faces significant challenges, including judicial overload, high legal costs, and affordability issues, with severity scores ranging from 6 to 9. Estimated data.

Current AI Use in Courts: The Reality Today

Before we assume AI arbitration will work smoothly, let's look at how courts are already using AI. The picture is decidedly mixed.

Research by Stanford's RegLab, directed by Daniel Ho, reviewed actual AI use in judicial systems. The findings show courts already rely on AI for a surprisingly wide range of tasks, many of them high-stakes.

On the low-risk side, courts use AI for administrative work: processing and categorizing filings, scheduling, basic customer service queries, and monitoring social media for threats against judges. These are straightforward applications where AI's weaknesses don't typically matter.

On the higher-risk side, courts use AI for things that directly affect case outcomes. Judges have used generative AI to analyze case law and summarize legal precedent. That seems useful—why wouldn't you want an AI to organize the legal landscape?

But here's the problem: AI language models sometimes hallucinate case citations. They confidently cite cases that don't exist. They misquote real cases. They state legal principles that sound authoritative but are simply wrong. If a judge relies on these fabrications, it can lead to incorrect rulings.

We've seen this play out in real cases. In 2023, lawyers in a federal case in New York filed a legal brief containing ChatGPT-generated case citations, and the court later found that the AI had invented the cases entirely. The judge sanctioned the lawyers involved.

That's a relatively minor incident—nobody lost their freedom based on invented case law. But it demonstrates the core problem: AI is confident regardless of whether it's right.

Courts also use AI for translation and transcription in some jurisdictions. This seems straightforward, but language is full of ambiguity. A mistranslation in a critical piece of testimony could completely change a verdict. The AI might be 99% accurate, but that 1% might be the word that determines guilt or innocence.
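A quick calculation shows why "99% accurate" is less reassuring than it sounds. The sketch below assumes the figure means per-word accuracy and that errors are independent of each other; both are simplifying assumptions for illustration, not properties of any real transcription system.

```python
def prob_error_free(per_word_accuracy: float, n_words: int) -> float:
    """Probability that every one of n_words is correct, assuming independent errors."""
    return per_word_accuracy ** n_words


def expected_errors(per_word_accuracy: float, n_words: int) -> float:
    """Average number of wrong words in a passage of n_words."""
    return (1.0 - per_word_accuracy) * n_words


for n in (100, 1_000, 10_000):
    print(
        f"{n:>6} words: "
        f"P(no errors) = {prob_error_free(0.99, n):.4f}, "
        f"expected errors = {expected_errors(0.99, n):.0f}"
    )
```

Even a 100-word stretch of testimony is more likely than not to contain at least one error under these assumptions, and a long transcript will almost certainly contain many. Whether any of them lands on a decisive word is the part the accuracy figure cannot tell you.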

There's also the risk assessment problem. For decades, courts have used algorithms to help decide whether to release defendants before trial. The logic seems sound: use historical data about who committed crimes after release to predict who's dangerous. Protect public safety.

But ProPublica's 2016 investigation of the COMPAS algorithm revealed something troubling: the system disproportionately flagged Black defendants as high-risk, even when controlling for criminal history. The algorithm wasn't explicitly programmed to be racist. But it was trained on historical criminal justice data that reflected systemic racism. So it learned the bias.

This is perhaps the most important cautionary tale for AI in the legal system. Bias doesn't have to be intentional. It's embedded in the data. A machine learning system trained on flawed data doesn't just reproduce the flaws—it often amplifies them and disguises them in a veneer of mathematical objectivity.

The Bias Problem: Why AI Judges Might Discriminate Better Than Humans

Let's be direct about this. AI systems can be biased. Sometimes dramatically so. And the bias can be nearly invisible.

When a human judge is biased, at least there's a human there that could, theoretically, engage in self-reflection, feel shame, or be publicly called out. A biased AI system? It just keeps churning out biased decisions while everyone stares at the mathematical model and assumes it must be objective.

The bias comes from training data. If the AI is trained on past court decisions—which many legal AI systems are—and those past decisions encoded bias, then the AI will learn to make biased decisions too.

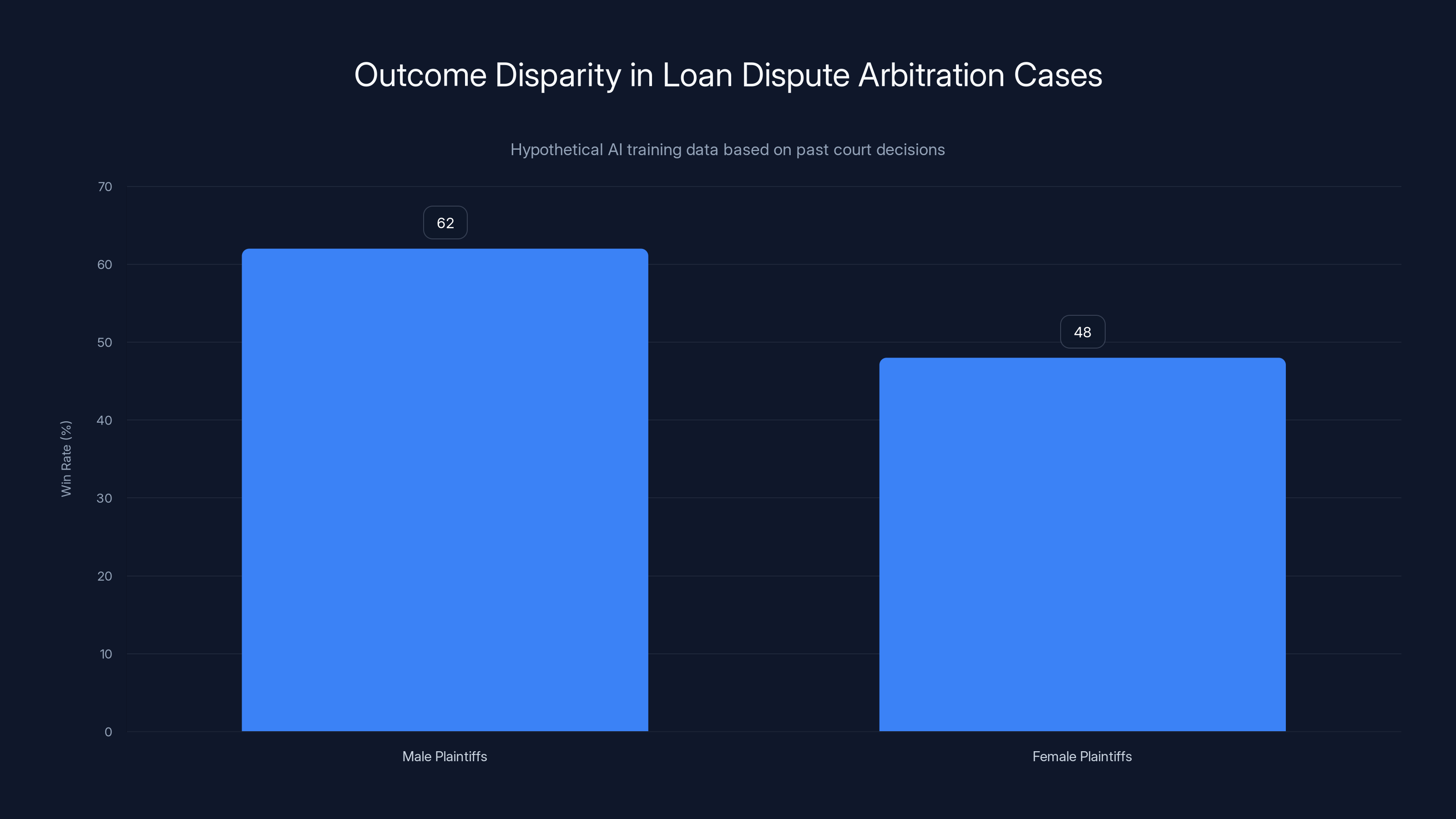

Consider a hypothetical scenario: An AI is trained on 20 years of loan dispute arbitration cases. In those cases, male business owners won 62% of their cases. Female business owners won 48%. This wasn't necessarily because of any overt discrimination. Maybe men statistically had better documentation. Maybe arbitrators subconsciously trusted men more. The reasons don't really matter for our purposes.

Now the AI is trained on this data. It learns patterns. Male plaintiffs tend to win. Female plaintiffs tend to lose. When a new case comes in with a female plaintiff making a similar argument to a past case with a male plaintiff, the AI might subtly weight evidence differently. Not because it's explicitly programmed to—but because that's what the training data showed.

The arbitrator reviews the AI's decision. It seems reasonable. It cites relevant facts. The reasoning appears sound. The arbitrator approves it. The female plaintiff loses. Everyone assumes the system is working fairly because the decision looks technically correct.

But it encoded 20 years of bias into an algorithm that looks objective.

There's a term for this: "fairwashing." It's when a system appears fair and objective on the surface but perpetuates or amplifies bias underneath. The appearance of mathematical neutrality can actually make bias more dangerous, not less, because fewer people question it.

Now, some companies are working on debiasing techniques. Training data can be adjusted. Models can be tested for bias against protected classes. Monitoring systems can catch disparities in outcomes.

But here's the frustrating part: there's no magic bullet. Debiasing an AI is like debiasing a human—it requires constant attention, ongoing testing, and humility about the limits of what's been tested.

And nobody's really required to do this work.
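One simple form of the outcome testing mentioned above is to compare win rates between groups. The sketch below applies the "four-fifths" heuristic to the hypothetical 62% and 48% win rates from earlier in this section. That heuristic comes from U.S. employment-selection guidelines, so using it for arbitration outcomes is an assumption made for illustration, and the function names are hypothetical.

```python
def outcome_rate_ratio(rate_a: float, rate_b: float) -> float:
    """Ratio of the lower favorable-outcome rate to the higher one (1.0 means parity)."""
    low, high = sorted((rate_a, rate_b))
    if high == 0:
        raise ValueError("both rates are zero; ratio is undefined")
    return low / high


def flags_disparity(rate_a: float, rate_b: float, threshold: float = 0.8) -> bool:
    """True when the ratio falls below the threshold (0.8 is the four-fifths heuristic)."""
    return outcome_rate_ratio(rate_a, rate_b) < threshold


# Hypothetical win rates from the loan-dispute example above.
male_win_rate = 0.62
female_win_rate = 0.48

ratio = outcome_rate_ratio(male_win_rate, female_win_rate)
print(f"ratio = {ratio:.3f}, flagged = {flags_disparity(male_win_rate, female_win_rate)}")
```

A check like this only detects a gap in outcomes. It says nothing about the cause, which is why a flag should trigger human investigation rather than an automatic correction.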

Estimated data shows a disparity in win rates between male and female plaintiffs in loan dispute cases, highlighting potential bias in AI training data.

Transparency and Due Process: The Accountability Gap

Here's another problem that's rarely discussed but critically important.

When a human judge makes a ruling, there's a record. The judge explains their reasoning from the bench or in a written opinion. If the reasoning is garbage, lawyers can appeal, and appellate judges review the logic. If a judge consistently makes bad decisions, there are mechanisms to remove them.

None of this exists for AI systems, at least not yet.

When an AI arbitrator drafts a decision, it's theoretically showing its work. But here's the thing: most AI systems can't actually explain why they reached a conclusion. They process input, run through millions of mathematical operations, and produce output. There's no internal "thought process" that a human can read and verify.

This is the black box problem. A neural network is fundamentally difficult to interpret. You can see what goes in and what comes out, but the middle part (the stack of hidden layers where the decision actually forms) is opaque.

Some researchers are working on "interpretability" techniques that let you understand which inputs were most important to a decision. But these interpretability methods themselves are imperfect and still not broadly deployed.

Without true transparency, you lose due process. One of the fundamental principles of justice is the right to confront the evidence against you and challenge the reasoning of the decision-maker. If an AI system can't actually explain its reasoning, that right becomes meaningless.

Suppose an AI arbitrator decides you lose your case. You ask why. The system explains its logic: "Plaintiff's evidence was more credible, and the contract language supports plaintiff's interpretation." That's fine. But what made the evidence more credible? What subtle linguistic patterns in the documents did the AI detect? How much weight did it give to the fact that the plaintiff's documents were formatted better or more professionally written (which might correlate with resources, not truth)?

You can't really answer these questions by examining the AI. You'd need to understand neural network interpretability, which is beyond the capability of a typical litigant.

There's also the problem of appeal. In traditional arbitration, if you believe the arbitrator made a serious error, you have limited appeal rights. You can't just ask a different arbitrator to reconsider. There are specific grounds for setting aside an arbitration award—fraud, misconduct, exceeding authority—but mere error in judgment usually isn't enough.

With AI arbitration, the appeal situation is even murkier. Do you appeal to a human arbitrator? Another AI system? The error might not be in the logic but in biased training data or a prompt that was written in a way that unconsciously favored one party.

The Optimistic Case: When AI Makes Sense in Law

Okay, so AI in law has real problems. But let's not throw the baby out with the bathwater. There are legitimate use cases where AI could actually improve things.

First, there's the document organization problem. Lawyers spend absurd amounts of time reading, organizing, and cross-referencing documents. A typical major case might have 500,000 documents. A human paralegal would take months to index all of this, create timelines, identify contradictions, and extract relevant passages.

An AI system can do this in days or weeks. And it'll be more thorough and less likely to miss something important than a human who's been reading documents for 80 hours and is starting to hallucinate from exhaustion.

This is a good use case because it's augmentation, not replacement. The AI handles a task that's tedious and error-prone for humans. Then humans use that organized information to make decisions.

Second, there's the consistency problem. Suppose you have a large company using consistent contract templates. A dispute arises about contract interpretation. The question is: "What does this ambiguous phrase mean?" An AI trained on how that company's contracts have been interpreted in the past could point out that in 47 previous similar cases, the phrase was interpreted a certain way, establishing a precedent.

This could help create consistency without biasing outcomes, because the training data is narrow and specific rather than sweeping and systemic.

Third, there's the problem of information asymmetry. Some parties have resources for expensive lawyers who'll spend weeks preparing arguments. Others can't afford any lawyer. An AI system that helps level the playing field—by making it easier for the resource-poor party to organize and present their case—actually improves justice.

Fourth, there's the speed and cost issue for straightforward cases. If your dispute is genuinely simple—a contract interpretation, a straightforward facts question, no nuance—then AI might be genuinely better than waiting 18 months for a court date.

The optimistic case isn't that AI replaces judges. It's that AI handles the easy cases so efficiently that the human judicial system can focus on the hard cases that actually need human judgment.

In a pessimistic scenario, AI systems could handle 90% of commercial disputes, potentially leading to biased outcomes. Estimated data.

The Pessimistic Case: What Could Go Very Wrong

But let's also talk about the bad scenario. Because it's possible.

Imagine AI arbitration becomes the default for commercial disputes. Small businesses use it because it's cheap. Corporations start including AI arbitration clauses in their terms of service, forcing consumers to resolve disputes through AI rather than courts. Within a decade, 90% of commercial disputes are handled by AI systems instead of human arbitrators.

Now suppose we discover—not immediately, but eventually—that these AI systems have been consistently disadvantaging certain groups. Maybe it's by race. Maybe by accent (if the AI processes transcripts). Maybe by something we don't even have a name for yet.

What do we do? You can't un-arbitrate thousands of cases. You can't go back and get human review. The arbitration awards are final. Some people are financially ruined based on AI decisions that encoded hidden bias.

Worse, imagine the AI systems are performing as designed, but the design itself is flawed. The system was optimized to "speed up dispute resolution," which means the developers weighted rapid decision-making heavily. As a side effect, the system sometimes misses nuance or averages out complicated situations into simple categories.

A small business dispute that actually involved fraud gets classified as a simple contract interpretation and ruled against the defrauded party. Nobody catches this because the speed is working as intended. Justice just becomes faster, not fairer.

Or imagine corporate lawyers figure out how to game the system. They learn what patterns the AI weights heavily and start submitting documents formatted to exploit those patterns. They use language that subtle testing shows the AI interprets favorably. The AI becomes just another tool for the rich and well-resourced to dominate.

The worst version of this scenario is that AI arbitration becomes a way to avoid accountability altogether. A tech company includes a binding AI arbitration clause in its terms of service. When users suffer harm, their disputes go to AI rather than to a court or a human arbitrator. The AI, being software, can be updated and improved by the company. The system optimizes for faster resolution, not fairness. Users get worse outcomes, but the process is fast and cheap, so there's no incentive to change it.

Regulatory Approaches: Who Decides If This Is Okay?

Here's the problem: there's no consensus regulatory approach to AI in arbitration yet. Different countries are thinking about this differently. Different states might handle it differently. Even different industries might have different rules.

In the U.S., the Federal Arbitration Act governs arbitration broadly. But it was written in 1925, long before AI existed. It says parties can agree to arbitration terms they negotiate. It doesn't say much about what kinds of arbitration terms are valid.

Could an arbitrator use AI if both parties consent? Probably yes; the FAA wouldn't necessarily forbid it.

But should there be additional rules? Most legal experts think yes. Things like:

- Transparency requirements: The AI's training data should be disclosed. The reasoning for decisions should be explainable.

- Testing requirements: Systems should be tested for bias before deployment and monitored for bias during operation.

- Appeal rights: If the AI makes a decision, there should be some mechanism to challenge it.

- Consent requirements: Using AI shouldn't be a take-it-or-leave-it proposition buried in fine print. It should require informed, explicit agreement.

- Audit rights: The parties should be able to audit the AI system to understand how it reached its decision.

Some states are starting to think about these issues. California, for instance, has been developing AI regulations that could eventually touch on legal AI. The European Union is more aggressive, with its AI Act already imposing requirements on high-risk systems.

But globally, we're still in the wild west phase.

McCormack and the American Arbitration Association have taken the stance that transparency and human oversight are essential. Their system isn't fully autonomous. The human is genuinely in the loop. This is responsible, but it also means the system is more expensive than it could theoretically be, which undercuts the cost savings.

Other companies might not be as careful. If a startup decides to launch an AI arbitration system with minimal human oversight and without testing for bias, regulators would struggle to stop them. The FAA would probably allow it if the parties consented. And nobody would know the system was biased until patterns emerged in case outcomes.

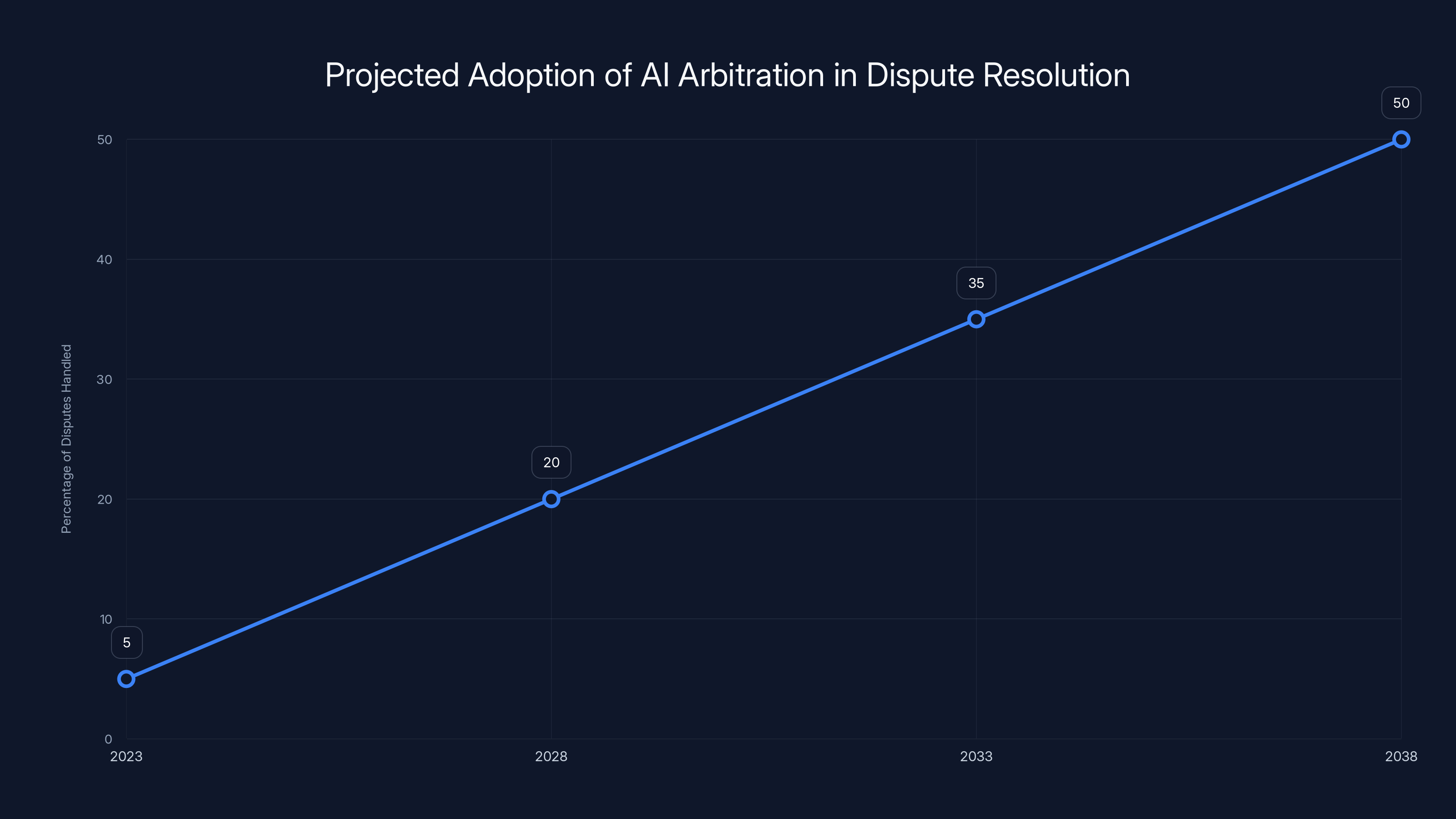

Estimated data suggests AI arbitration could handle 30-50% of disputes within 15 years, driven by cost savings and efficiency.

Industry Players and Emerging Systems

The American Arbitration Association isn't alone in exploring this space. Several other players are thinking about AI and legal decision-making.

Runable is one of several platforms exploring AI-powered automation in professional settings, though their primary focus is on presentation, document, and report generation rather than legal arbitration specifically. However, their underlying approach—AI agents handling multi-step workflows—could theoretically be adapted for other domains.

There are legal tech companies specifically focused on legal document automation and analysis. Tools like LegalZoom use AI for document drafting and basic legal advice. These aren't arbitration systems, but they're in a similar ecosystem of AI-assisted legal work.

There are also research institutions exploring this space. Stanford's RegLab, MIT's Media Lab, and various law schools are studying how AI could and should be used in legal contexts.

The consensus across these groups is: "AI can help, but we need to be very careful about how."

What's notably absent is a dominant player or industry standard. The American Arbitration Association's system is probably the most carefully considered and transparent version. But it's also not widely deployed yet. We're still in the pilot phase.

Consumer Disputes and Binding Arbitration Clauses

Here's where this gets real and immediate for regular people.

You probably don't realize this, but you've likely agreed to arbitration clauses in dozens of contracts. When you bought your phone, agreed to terms of service for an app, accepted the conditions of a bank account, or signed up for a credit card, you probably agreed that any disputes would be resolved through arbitration, not court.

These clauses usually take you out of the public judicial system entirely. You can't sue in court. You can't join a class action. You have to arbitrate with the company, either one-on-one or in some kind of grouped arbitration.

The current system is already problematic. Arbitration should theoretically be neutral, but it's often run by arbitration providers that depend on corporate clients for repeat business. The incentive structure sometimes favors the large company over the individual consumer.

Imagine if all consumer arbitration was handled by AI. The company includes an AI arbitration clause in its terms of service. You have a dispute with them. You have to submit your case to an AI system that the company either owns or contracted with.

The AI's decision-making is influenced by its training data, which might reflect past disputes in similar companies or industries. If those past disputes favored companies over consumers, the AI would learn that pattern.

You lose your arbitration. You have no court appeal. You have no human arbitrator to petition. You have an AI system that can't really explain why it ruled against you, trained on data you never saw, operated by a system you never chose, in a process you never really agreed to (it was hidden in the fine print of a take-it-or-leave-it contract).

This scenario is possible right now, with current law. And it seems bad.

There's growing attention to arbitration reform, specifically around limiting companies' ability to force binding arbitration on consumers. Some states have started restricting it in certain contexts. If arbitration reform happens, it might explicitly address AI arbitration and require additional safeguards.

But right now, it's mostly unregulated.

How Judges and Lawyers Are Reacting

You might expect lawyers to be thrilled about AI that could handle routine cases, freeing them up to focus on complex work. In reality, the reaction is more mixed.

Some lawyers see AI as a tool that could make them more productive. Instead of spending 40 hours organizing documents and writing briefs for routine cases, they could spend 8 hours using AI to do the busywork, then focus on strategy. These lawyers tend to be in high-end firms where they're already stretched thin on complex cases.

Other lawyers see AI as a threat to their livelihood. A lot of legal work is, frankly, routine and boring. Junior lawyers spend years doing document review and writing basic briefs. If AI can do that work, what happens to junior lawyers? The profession has already been consolidating—big firms are getting bigger, small practices are getting harder to sustain. AI arbitration could accelerate that trend.

There's also skepticism among judges about whether AI could actually do the job. Many judges are legitimately uncertain whether AI is sophisticated enough to handle the nuance of legal reasoning. Some worry it's premature.

Federal judges have actually been fairly skeptical about generative AI in courtrooms, especially after the hallucination incidents. Some federal judges have issued standing orders prohibiting lawyers from using ChatGPT or similar tools in their cases. Others have warned about the risks.

Arbitrators, meanwhile, are more varied in their perspectives. Some see AI as potentially useful. Others are concerned about legitimacy and fairness.

There's no unified position yet, which is part of the problem. The legal profession is trying to figure this out in real time.

What Should Actually Happen

If we're going to use AI in arbitration (and it seems like we are, at least in some contexts), here's what reasonable people should insist on:

Transparency as a baseline. The training data should be disclosed. The decision-making process should be as explainable as possible. The parties should be able to understand how the AI reached its conclusion.

Bias testing and monitoring. Before deployment, the AI should be tested for disparate impact on protected classes. During operation, the system should be monitored to catch bias in outcomes. If bias is detected, the system should be updated and past decisions should be reviewed.

Informed consent, not fine print. Using AI shouldn't be a hidden term in a take-it-or-leave-it contract. Both parties should explicitly agree to AI decision-making, understanding what that means.

Appeal mechanisms. There should be some way to challenge an AI decision if you believe it's fundamentally wrong. This could be human review, re-arbitration with a human arbitrator, or limited court review. Something.

Audit rights. The parties should be able to audit or examine the AI system to understand how it works and whether it's functioning as intended.

Regulatory oversight. Whoever develops and deploys AI arbitration systems should be subject to regulatory review. They shouldn't be free to experiment on people's disputes without any oversight.

Accountability. If an AI system makes systematically biased decisions and harms people, there should be recourse. The developer should be liable. There should be a mechanism to vacate unjust decisions.

None of this is crazy. None of it would prevent beneficial use of AI in arbitration. But it would prevent the worst-case scenarios.
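As one illustration of what the bias monitoring item above could involve, here is a minimal sketch of a two-proportion z-test that asks whether a gap in win rates between two groups is larger than chance alone would explain. The case counts are invented for the example, the function name is hypothetical, and a real monitoring program would also need to account for differences in case type and strength.

```python
import math


def two_proportion_z_test(wins_a: int, n_a: int, wins_b: int, n_b: int) -> tuple[float, float]:
    """Return (z statistic, two-sided p-value) for the difference in win rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each group needs at least one case")
    p_a = wins_a / n_a
    p_b = wins_b / n_b
    # Pooled win rate under the null hypothesis that both groups win equally often.
    pooled = (wins_a + wins_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; test is undefined")
    z = (p_a - p_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented counts: the same 62% vs 48% gap at two different sample sizes.
for n in (100, 1_000):
    z, p = two_proportion_z_test(round(0.62 * n), n, round(0.48 * n), n)
    print(f"n = {n} per group: z = {z:.2f}, p = {p:.4g}")
```

The same percentage gap is borderline with a hundred cases per group and unmistakable with a thousand, which is one reason monitoring has to continue during operation rather than stop at pre-deployment testing.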

The Parallel to Other AI Regulation Efforts

We're having similar conversations in other high-stakes domains. Medical AI, hiring AI, loan approval AI—all of them face the same fundamental questions:

How do we ensure the AI is accurate? How do we detect and fix bias? How do we maintain human oversight? How do we ensure transparency? How do we create accountability?

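In some cases, we've figured out workable answers. Makers of higher-risk medical devices generally have to back their tools with clinical evidence before regulators approve them. Employment discrimination law lets selection procedures be challenged when they produce a disparate impact, which pushes employers to validate the tools they use.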

But these regulations took years to develop, often after failures happened. We didn't foresee the problems perfectly. We reacted when they became obvious.

With AI arbitration, we could potentially learn from those experiences and get ahead of problems instead of reacting after harm occurs. Or we could just let it unfold without guardrails and hope for the best.

Historically, hope doesn't work great as a policy.

The Honest Assessment: Is AI Judgment Better Than Human Judgment?

After all of this, we're back to the core question: Can AI actually make the legal system better?

The answer is probably yes, but not in the way the optimists imagine.

AI won't and shouldn't replace human judgment in complex cases. There are too many variables, too much nuance, too much at stake. A human judge, for all their limitations, can understand context in ways AI currently cannot.

But AI could handle routine cases better than current human-based systems. Document-based disputes, straightforward contract interpretation, simple factual disagreements—for these, an AI system that's transparent, unbiased, and cheap could be genuinely better than waiting 18 months for an overworked human arbitrator.

The practical version might be: AI handles 70% of cases (the routine ones) and handles them well. Humans focus on the 30% that actually require judgment. Cases are resolved faster overall. Costs drop. Access to justice improves.
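To make the arithmetic behind that split concrete, here is a minimal sketch of the blended average time to resolution. The inputs are illustrative assumptions, not measured data: 6 weeks for the AI track (the middle of the 4-8 week range cited in this article), 78 weeks for the human track (roughly the 18-month wait mentioned above), and a hypothetical function name.

```python
def blended_average_weeks(ai_share, ai_weeks, human_weeks):
    """Average time to resolution when a share of cases goes to the AI track."""
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    return ai_share * ai_weeks + (1.0 - ai_share) * human_weeks

# Illustrative inputs only: 70% of cases on an AI track at 6 weeks,
# 30% on a human track at 78 weeks (about 18 months).
average = blended_average_weeks(0.7, 6, 78)
print(f"{average:.1f} weeks")  # about 27.6 weeks, versus 78 with no AI track
```

This treats the human track as if it stays at 18 months. If moving routine cases off human dockets also shortens the queue for complex ones, the overall average would fall further.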

But this only works if:

- The AI systems are genuinely high-quality and unbiased (which requires investment and testing)

- Regulatory oversight prevents abuse

- Appeal mechanisms exist if something goes wrong

- The savings get passed to consumers, not hoarded by arbitration providers

- The system doesn't become another tool for the powerful to dominate

That's a lot of "ifs."

McCormack and the American Arbitration Association seem to be trying to do this right. They're being thoughtful about transparency, human oversight, and fairness. If their model becomes the standard, we could genuinely improve access to justice.

But if cheaper alternatives succeed by cutting corners on transparency and oversight, we could end up with a system that's faster but less fair, which would make things worse.

The legal system is already broken for most people. AI could fix it or break it worse. Which outcome we get depends entirely on the choices we make about how to build, regulate, and deploy these systems.

The Future: What's Coming Next

Here's my prediction, and it's based on how technology adoption usually works: We'll see rapid expansion of AI arbitration in the commercial space. The cost savings are just too compelling. Companies will offer AI arbitration clauses. Some consumers and small businesses will use them voluntarily because they need faster dispute resolution.

As usage expands, we'll probably see some failures. Some cases will be decided badly. Some people will realize they lost because of bias. There will be public outcry.

Then regulation will come. States will pass laws. The federal government will probably get involved eventually. We'll develop standards for transparency and bias testing.

By the time the regulation is in place, the industry will have already consolidated around a few major players who've invested in being compliant. Smaller operators will be forced out. The winners will be those who got ahead on the transparency and fairness stuff.

Overall, we'll end up with AI arbitration being a normal part of the legal landscape, probably handling 30-50% of disputes within 15 years. It will be better than the current bottleneck in some ways and worse in others.

But the legal system for the wealthy will probably remain human-based and careful, while AI gets deployed on the disputes of regular people and small businesses. That's how technology usually works.

The question is whether we can break that pattern.

FAQ

What is AI arbitration?

AI arbitration is a process where artificial intelligence systems help resolve disputes between parties, typically in commercial or contractual disagreements. Rather than going through the traditional court system or hiring a human arbitrator, parties can use an AI system (powered by large language models) to review evidence, understand both sides, and help generate a decision. The American Arbitration Association has developed a system that uses this approach for document-based disputes, with human oversight at every stage.

How does AI arbitration currently work?

In the American Arbitration Association's system, both parties submit documents and their positions. The AI reviews all materials, identifies agreed-upon facts and points of disagreement, and drafts a detailed decision explaining its reasoning. A human arbitrator then reviews this draft, can request modifications, or can accept or reject it entirely. The process is designed to be faster than traditional arbitration while maintaining human oversight and decision-making authority over the final award.

What are the benefits of AI arbitration?

The potential benefits are significant: cost reduction (from

What are the main risks of AI arbitration?

The primary concerns include algorithmic bias (where AI systems trained on historically biased data may perpetuate or amplify discrimination), lack of transparency in how AI systems reach decisions, absence of meaningful appeal mechanisms if the AI system makes an error, hallucination risks (where AI confidently generates false information), and the potential for companies to use AI arbitration clauses to avoid accountability. Federal judges have already had to correct cases where AI-generated case citations were entirely fabricated, demonstrating real-world failure modes.

Is AI arbitration legally enforceable right now?

In the United States, the Federal Arbitration Act (FAA) generally allows parties to agree to whatever arbitration terms they negotiate. Because the FAA was enacted in 1925, long before AI existed, it doesn't explicitly address AI arbitration. If both parties consent to using AI arbitration, it would likely be enforceable. However, there's no comprehensive regulatory framework yet governing how AI arbitration systems should function, what standards they must meet, or what rights parties have if the system makes errors. Different states may eventually develop their own rules.

How does AI arbitration differ from using AI for legal research or document review?

Legal research and document review assistance are augmentation tools—the AI helps a human lawyer work more efficiently. AI arbitration is potentially a replacement for human decision-making, at least in the first instance. This distinction matters because bias, errors, or unfairness in augmentation tools can be caught by human review, whereas bias or errors in decision-making systems directly affect outcomes. The risk profile is fundamentally different.

What safeguards should be in place for AI arbitration?

Experts recommend several key safeguards: transparent disclosure of training data and decision-making processes, pre-deployment testing for bias and disparate impact on protected classes, ongoing monitoring for biased outcomes, informed and explicit consent (not buried in fine print), audit rights for parties to examine how the AI system works, meaningful appeal mechanisms if a decision appears erroneous, regulatory oversight of AI arbitration providers, and legal liability for developers if their systems cause harm through bias or malfunction.

Could AI arbitration disadvantage consumers in contracts?

Yes, this is a significant concern. Many commercial companies already use binding arbitration clauses in contracts with consumers, which remove the ability to sue in court or join class actions. If these clauses were expanded to include AI arbitration, consumers would have disputes resolved by AI systems they didn't choose, trained on data they never saw, with minimal appeal rights, and explanations they might not understand. This could particularly disadvantage vulnerable populations who lack legal resources to understand what they're agreeing to.

Conclusion

The American legal system is breaking under its own weight. Courts are overloaded. Justice is slow. Costs are prohibitive. Most people can't access legal help, so most small disputes simply remain unresolved. It's not a sustainable situation.

AI arbitration could genuinely help with this. A well-designed, carefully regulated system could make justice faster and cheaper without making it less fair. That's worth pursuing.

But the history of technology in society is clear: without thoughtful regulation and a commitment to fairness, the tools designed to help the many often end up helping the few. The same AI system that could democratize justice could also become another tool for corporations to avoid accountability.

McCormack seems to understand this. The American Arbitration Association's approach includes transparency, human oversight, and a stated commitment to fairness. If their model becomes the standard, we could actually improve things.

But the legal system isn't waiting for that. Companies are already building AI legal tools with less scrutiny. Courts are already using AI with mixed results. Arbitration providers are already experimenting. The technology is moving faster than the regulation.

If we want AI to actually improve justice instead of just replacing one set of flawed humans with a flawed algorithm, we need to invest in getting this right: transparent, testable systems with real human oversight, regulatory standards that actually protect fairness, and accountability mechanisms when things go wrong.

It's possible. It's just not inevitable. The next few years will determine whether AI becomes a tool for democratizing justice or another mechanism for consolidating power among the wealthy and well-resourced. The choice is genuinely ours, but only if we make it intentionally.

Key Takeaways

- AI arbitration could reduce dispute resolution costs from 1,500-2,000 and compress timelines from 6 months to 4-8 weeks

- The American Arbitration Association's system maintains human oversight at every stage, but other implementations might not be as careful

- AI systems trained on historical legal data risk perpetuating and amplifying existing bias in ways that appear objective and are difficult to detect

- Without transparency, bias testing, and meaningful appeals, AI arbitration could become a tool for corporations to avoid accountability

- Regulation is coming but probably after some failures occur, following the pattern of other high-stakes AI deployments in medicine and hiring

![AI Judges and Arbitration: The Future of Legal Decisions [2025]](https://tryrunable.com/blog/ai-judges-and-arbitration-the-future-of-legal-decisions-2025/image-1-1769515650080.jpg)