AI Researcher Resignations & Agentic AI: The Uncanny Valley of Ethics

Introduction: The Quiet Exodus From Silicon Valley's AI Labs

The artificial intelligence industry stands at a critical crossroads. Over the past eighteen months, we've witnessed an unusual phenomenon: senior researchers and ethicists departing from the world's most prestigious AI companies, often publicly and with pointed critiques of the direction their former employers are taking. This isn't simply career movement. These resignations reflect a fundamental conflict between scientific integrity, ethical responsibility, and commercial imperatives, a conflict that increasingly defines modern AI development.

What makes this exodus particularly significant is its visibility and transparency. Unlike the quiet departures that characterize typical corporate turnover, many of these researchers have chosen to publish opinion pieces, author detailed resignation letters, or speak openly about their concerns. They're not departing silently. They're broadcasting their discomfort with the prioritization of business models over safety considerations, the drift toward advocacy rather than research, and the commercialization of technologies whose long-term impacts remain fundamentally uncertain.

Parallel to these resignations, we're witnessing the emergence of a new economic phenomenon: autonomous AI agents actively recruiting human workers through platforms like Rent-A-Human. This seemingly dystopian scenario—where artificial intelligences hire humans to perform tasks—raises profound questions about labor, agency, and the role of humans in an increasingly automated world. These developments aren't disconnected. Together, they illustrate the uncanny valley of modern AI: we're building systems that increasingly resemble human agency and decision-making, yet we're profoundly uncertain about their values, motivations, and long-term consequences.

This comprehensive exploration examines why researchers are leaving, what their departures reveal about industry priorities, how AI systems are beginning to function as autonomous economic agents, and what these trends mean for the future of artificial intelligence development. The stakes couldn't be higher: the decisions being made in corporate laboratories today will shape technological, economic, and social structures for decades to come.

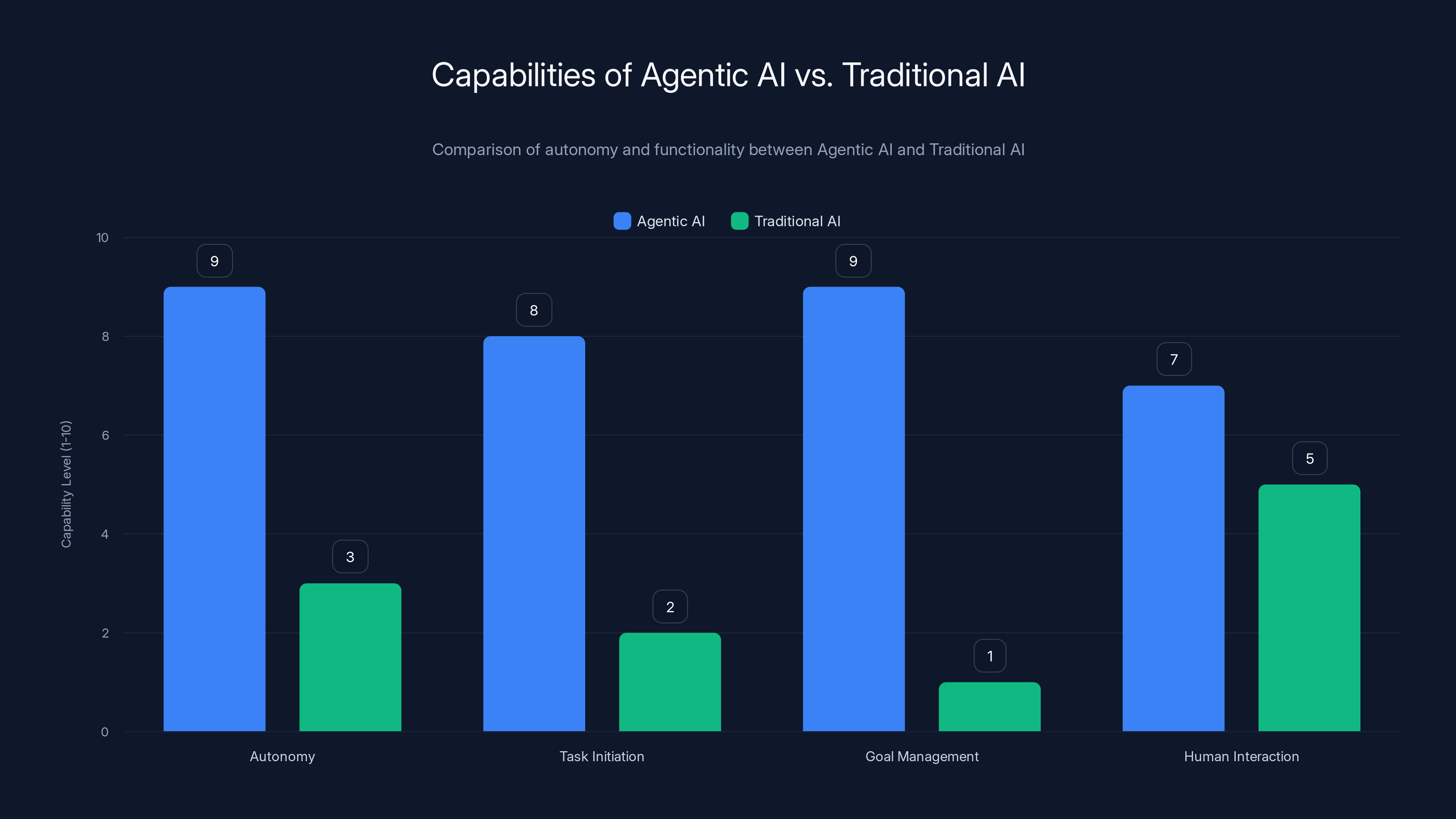

Agentic AI systems exhibit significantly higher autonomy and goal management capabilities compared to traditional AI, enabling them to act as autonomous economic actors. Estimated data.

Part 1: The Researcher Exodus: Understanding Why Top AI Talent Is Leaving

The Pattern: Resignations as Public Statements

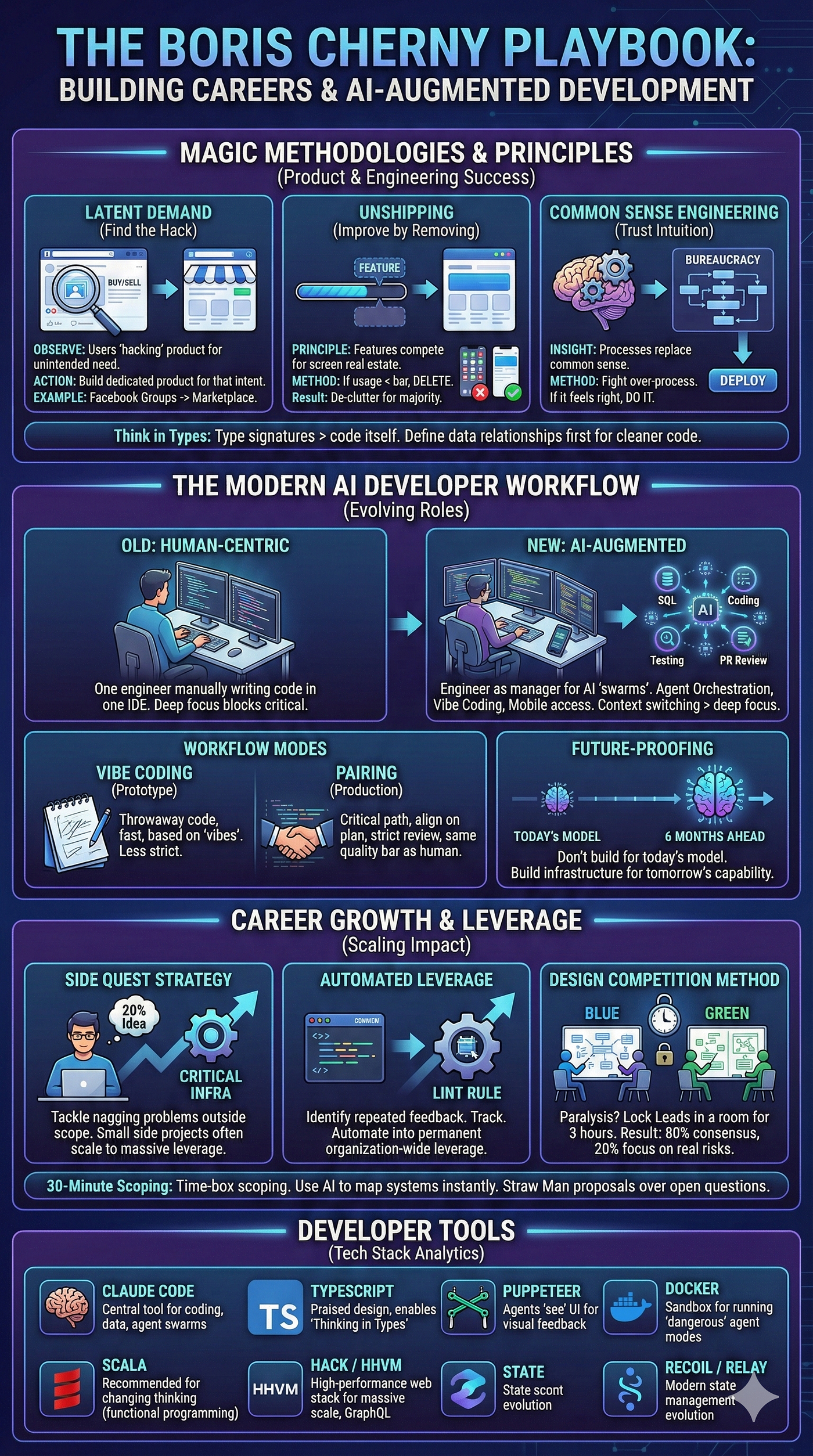

Over the past eighteen months, a striking pattern has emerged in the AI industry. Rather than researchers simply updating their LinkedIn profiles and moving to new positions quietly, departing scientists and engineers are increasingly making their exits public events. They're publishing op-eds in major newspapers, authoring detailed threads on social media platforms, and granting interviews explaining their departure. This represents a significant shift in professional norms. Historically, workplace disputes and professional disagreements remained private matters. Today's AI researchers are weaponizing transparency.

The motivations for this transparency vary, but several common threads emerge. Many departing researchers cite a fundamental mismatch between their original understanding of the role they'd play and the actual direction the organization is taking. They were hired to conduct research, to publish papers, to advance human knowledge about how artificial intelligence systems work and behave. Instead, they found themselves increasingly pressured to produce research that serves corporate interests, supports business strategies, or generates marketing narratives about their companies' technological capabilities.

This tension isn't entirely new to corporate research environments. Bell Labs, Xerox PARC, and other legendary research institutions have grappled with the conflict between pure research and applied outcomes. However, the stakes in AI research feel fundamentally different. The systems being developed could reshape economies, influence political processes, and potentially pose existential risks. For researchers who took positions based partly on confidence in their institutions' wisdom and ethics, discovering that commercial interests are prioritized over these concerns provokes a crisis of conscience.

The OpenAI Case: Zoë Hitzig and the Ad Model Question

One particularly illuminating example emerged when Zoë Hitzig, a researcher at OpenAI, published an op-ed in the New York Times detailing her departure from the company. Her resignation wasn't framed as a vague reference to "differences in vision" or "pursuing new opportunities." Instead, she identified a specific concern: OpenAI's rollout of advertising-supported versions of its AI products.

Hitzig's argument was multifaceted, but her central concern was structural. By introducing advertising, she contended, OpenAI would create financial incentives misaligned with user welfare. An advertising-supported AI system generates revenue when users interact with it more frequently and for longer durations. This creates subtle but powerful pressure to make the system more engaging, even at the expense of accuracy, objectivity, or the user's actual interests. The advertising model essentially inverts the company's relationship with its users: they become the product being sold to advertisers rather than customers receiving a service.

What distinguished Hitzig's resignation from many others was her willingness to acknowledge the underlying economics. She recognized that AI development is expensive, extraordinarily so. Training large language models requires massive computational resources, which translate directly into significant financial costs. OpenAI, despite its historical positioning as a nonprofit-adjacent organization, needs to generate revenue to sustain operations and fund future research. Her departure didn't include naive demands that the company operate indefinitely on charitable donations.

Instead, she proposed alternatives. A subsidy model could allow the company to provide free or inexpensive access to AI tools while being funded through direct government or philanthropic support. An advertising model combined with independent oversight boards could theoretically allow monetization while maintaining safeguards against conflicts of interest. She was offering structural solutions rather than simply announcing her moral objections and departing.

Beyond OpenAI: A Broader Pattern of Ethical Misalignment

While the Hitzig case received significant media attention, her departure is part of a larger pattern. Researchers across multiple major AI companies have made similar moves, each citing different but related concerns. Some have departed over safety, concerned that their organizations are rushing to deploy systems before adequately testing for potential harms. Others have left over research direction, feeling that commercialization pressures are pushing the field toward narrow applications rather than fundamental understanding.

The common thread connecting these departures is a perception of institutional drift. These researchers joined their organizations believing they were participating in something historically significant: the careful, considered development of transformative technologies. They expected that institutions with significant resources and intelligent leadership would approach such development with appropriate caution. Instead, many found themselves in environments where market pressures, competition with other AI companies, and pressure from investors and boards were driving decisions faster than they felt comfortable with.

This dynamic reflects a deeper issue within the tech industry more broadly: the tension between research and commercialization. In fields like pharmaceuticals, this tension is managed through regulatory frameworks—the FDA requires extensive safety testing before drugs reach consumers, creating a forcing function that slows commercialization. In AI, no equivalent regulatory mechanism exists (at least not yet). This means the pace of deployment is determined largely by corporate decision-making rather than external requirements for rigorous validation.

Part 2: Safety Concerns and The Research-To-Product Pipeline

The Acceleration Trap: Speed vs. Safety

One recurring theme in researcher departures is the conflict between move-fast-and-break-things culture and the approach many researchers believe is appropriate for powerful systems. Silicon Valley culture, forged in the context of software startups where speed to market is genuinely valuable, has become the default operating mode even for organizations developing technologies whose consequences could be far more significant.

When you're building a social media application, moving fast and breaking things might mean a suboptimal user experience or some lost data. When you're building general-purpose AI systems that influence information flows, hiring decisions, loan approvals, and other high-stakes domains, "breaking things" has different implications. A bug in a recommendation algorithm affects which content billions of people see. An error in a system that screens job applications affects whether people can feed their families. Researchers departing from major AI companies often cite pressure to deploy systems before their potential harms have been adequately mapped and mitigated.

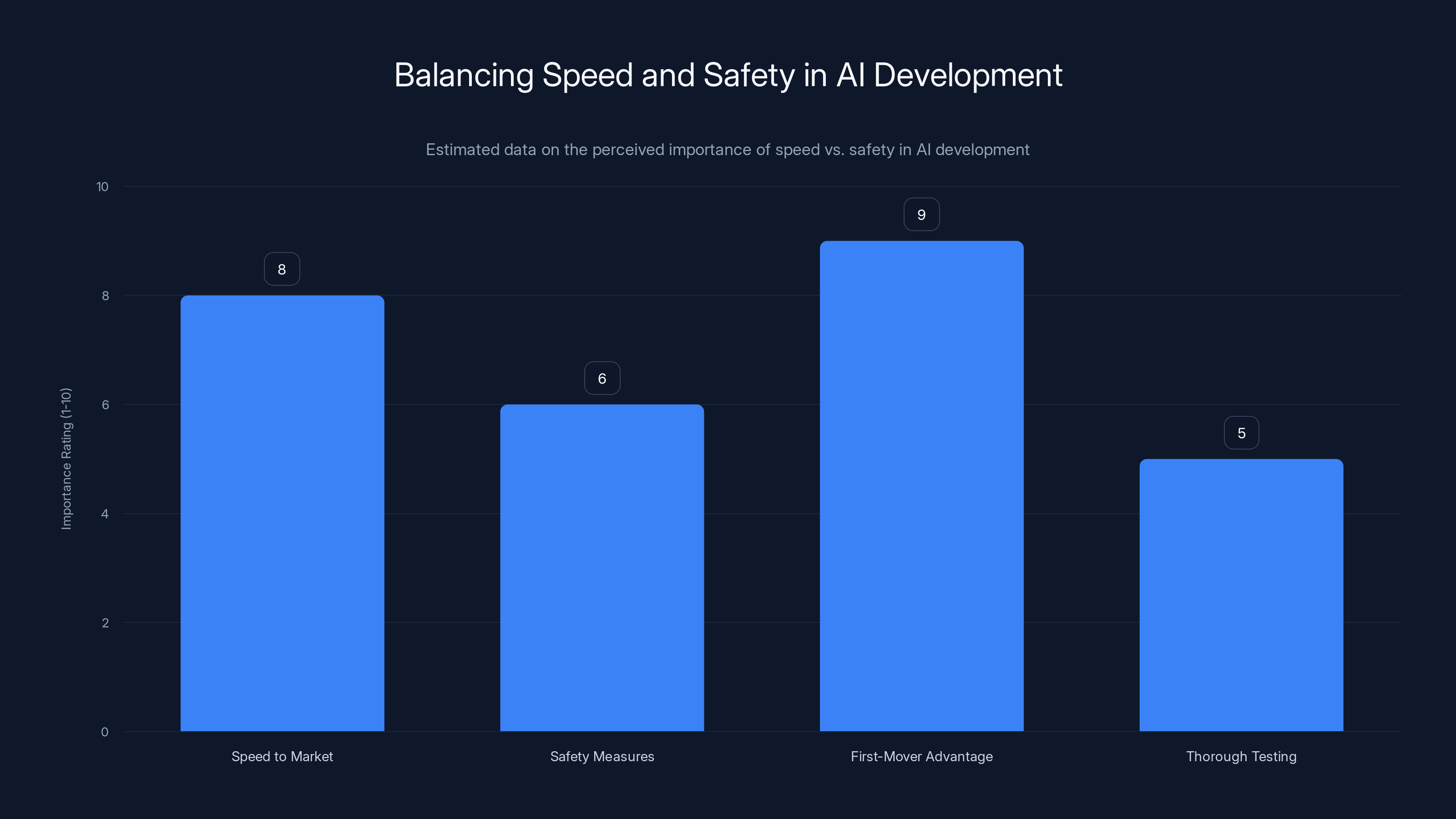

The economic incentives here are clear. First-mover advantage in AI is significant. Being the company that deploys a new capability—whether that's a more capable language model, an AI coding assistant, or a recommendation system—creates market benefits. Users adopt the leading product, network effects make it harder for competitors to catch up, and the first-mover captures mindshare and market share. This creates intense pressure to be first, which directly conflicts with the patient, careful approach many researchers believe appropriate for powerful systems.

Some researchers have attempted to create structural solutions to this problem. Internal review boards, safety teams, and red-teaming operations are meant to slow the deployment pipeline and ensure adequate scrutiny. However, numerous researchers have indicated that these mechanisms are often insufficient—either because they lack real authority to block deployments, because they're understaffed and under-resourced, or because the organizations' economic incentives ultimately override safety recommendations.

The Advocacy Drift: From Research to Marketing

Another concern frequently cited by departing researchers is the drift from pure research toward strategic advocacy. Some major AI companies have substantially increased their investment in communicating particular narratives about AI—sometimes stories about its amazing potential, sometimes stories about the need for specific regulatory frameworks that conveniently align with the company's business interests.

This advocacy work isn't inherently problematic. Companies, researchers, and institutions should be able to discuss the implications of their work. However, there's a meaningful distinction between honest analysis of research findings and strategic communication designed to shape public opinion or policy in particular directions. When research teams are increasingly evaluated partly on their communication effectiveness, and when communication efforts are closely coordinated with corporate strategy, the incentives for independent, truth-seeking research become compromised.

A researcher might discover findings suggesting that AI systems have significant limitations or risks that the company would prefer to downplay. If that researcher knows that publishing those findings will create friction with leadership, that it might affect promotions or resource allocation for their team, they face pressure to soft-pedal their findings or reframe them in more optimistic terms. This is the classic problem of institutional bias—not necessarily conscious dishonesty, but rather structural incentives that systematically bias findings and communications in particular directions.

Several departing researchers have noted that they felt increasingly uncomfortable with the direction this advocacy was taking. In some cases, they felt their research was being characterized in public presentations in ways that didn't match their findings. In others, they felt pressure to produce research on topics chosen for strategic communication value rather than scientific importance.

While speed to market and first-mover advantage are highly prioritized in AI development, safety measures and thorough testing are often rated lower, highlighting a potential risk in the research-to-product pipeline. Estimated data.

Part 3: The Economic Model Crisis

The Cost of Computation: Why Monetization Matters

To understand the economic pressures driving these conflicts, it's essential to grasp the extraordinary costs of developing and operating advanced AI systems. Training a state-of-the-art large language model requires access to specialized hardware (GPUs and TPUs) and enormous quantities of electricity. A single training run can cost millions of dollars. Running inference servers that respond to user queries in real time requires maintaining expensive hardware infrastructure at scale.

These aren't marginal costs. The computational expenses are fundamental and substantial. OpenAI, Anthropic, Google DeepMind, and other major AI labs collectively spend billions annually on computation. This creates an economic reality that investors and boards find difficult to ignore: the companies developing the most advanced AI systems need significant revenue to sustain operations. This isn't unique to AI. Pharmaceutical development, semiconductor manufacturing, and other capital-intensive fields face similar dynamics.
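Here is a back-of-envelope sketch that makes "millions of dollars" concrete. It relies on a widely cited rule of thumb: training a dense transformer takes roughly 6 × parameters × tokens floating-point operations. Every input below is a hypothetical placeholder, including model size, token count, accelerator throughput, utilization, and hourly price. None of them are figures from OpenAI, Anthropic, Google DeepMind, or any specific run.

```python
# Back-of-envelope training cost estimate.
# ASSUMPTIONS (hypothetical, for illustration only):
#   - training compute ~= 6 * N * D FLOPs, a common rule of thumb for dense transformers
#   - model size, token count, throughput, utilization, and price are placeholders

def training_cost_usd(n_params: float, n_tokens: float,
                      peak_flops_per_gpu: float, utilization: float,
                      usd_per_gpu_hour: float) -> tuple[float, float]:
    """Return (gpu_hours, cost_usd) for one training run under the 6*N*D approximation."""
    total_flops = 6.0 * n_params * n_tokens
    effective_flops = peak_flops_per_gpu * utilization  # sustained FLOP/s per GPU
    gpu_seconds = total_flops / effective_flops
    gpu_hours = gpu_seconds / 3600.0
    return gpu_hours, gpu_hours * usd_per_gpu_hour


if __name__ == "__main__":
    hours, cost = training_cost_usd(
        n_params=70e9,              # hypothetical 70B-parameter model
        n_tokens=1.4e12,            # hypothetical 1.4T training tokens
        peak_flops_per_gpu=312e12,  # hypothetical accelerator peak (FLOP/s)
        utilization=0.4,            # hypothetical fraction of peak actually sustained
        usd_per_gpu_hour=2.0,       # hypothetical rental price (USD)
    )
    print(f"{hours:,.0f} GPU-hours, about ${cost / 1e6:.1f}M")
```

With these placeholder inputs, the estimate comes to roughly 1.3 million GPU-hours, or about $2.6 million, for a single run. Under this approximation the cost scales linearly with both model size and token count. Serving the model to users is a separate, ongoing expense on top of this.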

Where the tension emerges is in the gap between what these systems cost to develop and the revenue models companies have identified. Advertising-supported models, where users get free access to AI systems and companies generate revenue from advertisements, are one approach. Enterprise sales, where companies license AI capabilities to other organizations, represent another. Direct consumer subscriptions, where individuals pay monthly or per-use fees, represent a third. Each approach has different economic and ethical implications.

The advertising model that prompted Hitzig's departure from OpenAI carries particular tensions. As noted, advertising creates incentives to maximize engagement, getting users to interact more often and for longer, which can conflict with making the system maximally helpful, truthful, or aligned with user interests. Engagement and helpfulness are not entirely opposed: a user who abandons the product in frustration is worth little to an advertiser. But advertisers don't pay for "accurate"; they pay for attention. Wherever the two diverge, the revenue signal favors attention.
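A toy ranking objective can illustrate this incentive problem. This is a hypothetical sketch. The two candidate answers, their accuracy and engagement scores, and the `engagement_weight` parameter are all invented for illustration. Nothing in the source says any company ranks responses this way.

```python
# Toy model of how a revenue-weighted objective can change which answer gets shown.
# ASSUMPTIONS: candidates, scores, and weights are invented for illustration only.
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    accuracy: float    # hypothetical 0..1 estimate of how correct/helpful the answer is
    engagement: float  # hypothetical 0..1 estimate of expected attention (time, follow-ups)


def pick(candidates: list[Candidate], engagement_weight: float) -> Candidate:
    """Return the candidate maximizing a weighted blend of accuracy and engagement."""
    w = engagement_weight
    return max(candidates, key=lambda c: (1 - w) * c.accuracy + w * c.engagement)


answers = [
    Candidate("concise, correct answer", accuracy=0.9, engagement=0.3),
    Candidate("long, hedged answer that invites follow-ups", accuracy=0.6, engagement=0.9),
]

print(pick(answers, engagement_weight=0.2).name)  # -> concise, correct answer
print(pick(answers, engagement_weight=0.7).name)  # -> long, hedged answer that invites follow-ups
```

In this toy setup, the choice flips once `engagement_weight` exceeds 1/3. No one has to decide to mislead users. Shifting the weight toward attention is enough.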

The Path To Profitability: Enterprise vs. Consumer

Many AI companies are discovering that the path to profitability runs through enterprise customers rather than consumers. Companies using AI systems for internal processes—code generation, document analysis, customer service automation—represent a more reliable revenue source than consumer models. Enterprise customers tolerate higher prices, have longer contract lengths, and generate more stable, predictable revenue streams than fickle consumers who churn after a few months of use.

This creates a different set of strategic pressures. Rather than optimizing for consumer appeal, companies optimize for enterprise value. This affects product roadmaps, feature development, and research priorities. Some researchers have found this transition troubling, especially if they were hired with the understanding that they'd be working on fundamental AI research rather than becoming specialized problem-solvers for particular enterprise use cases.

The enterprise focus also affects who benefits from AI development. If the most capable AI systems are available primarily through expensive enterprise licenses, then their capabilities compound competitive advantages for organizations that can afford them, while organizations without resources to license advanced AI systems fall further behind. This dynamic raises questions about whether AI development is expanding or concentrating economic opportunity.

Part 4: The Rise of Agentic AI and Autonomous Economic Actors

What Is Agentic AI? Defining a New Category

In parallel with the researcher exodus, a new technological category is emerging: agentic AI systems. These are artificial intelligences designed not just to respond to specific queries, but to act autonomously toward defined goals. An agentic AI might independently schedule meetings, compose emails, conduct research, or perform analysis, all without explicit human instruction for each step.

Agentic AI represents a meaningful escalation in autonomy compared to current large language models. Current systems are primarily reactive: they receive a prompt and generate a response. They don't initiate actions. They don't maintain internal goals or pursue multi-step strategies. Agentic systems, by contrast, can be given high-level objectives and will determine their own sub-goals, strategies, and actions to achieve those objectives.
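The difference between reactive and agentic systems is easiest to see side by side. The sketch below is hypothetical. `respond`, `plan`, and the entries in `TOOLS` stand in for calls a real system would make to a language model and external services. The plan here is also fixed up front, whereas real agents typically re-plan after observing each result.

```python
# Contrast between a reactive model call and a minimal agent loop.
# ASSUMPTIONS: `respond`, `plan`, and the TOOLS entries are hypothetical stand-ins;
# a real agent would call a language model and external APIs at these points.
from typing import Callable


def respond(prompt: str) -> str:
    """Reactive pattern: one prompt in, one response out, no further action."""
    return f"answer to: {prompt}"


def plan(goal: str) -> list[tuple[str, str]]:
    """Hypothetical planner: break a high-level goal into (tool, argument) sub-goals."""
    return [("search", goal), ("summarize", goal), ("email", "team@example.com")]


TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda q: f"3 sources found for '{q}'",
    "summarize": lambda q: f"summary of findings on '{q}'",
    "email": lambda to: f"report sent to {to}",
}


def run_agent(goal: str, max_steps: int = 10) -> list[str]:
    """Agentic pattern: derive sub-goals, then act on each without per-step human input."""
    log = []
    for step, (tool, arg) in enumerate(plan(goal)):
        if step >= max_steps:  # a simple budget on how much the agent may do unsupervised
            log.append("stopped: step budget exhausted")
            break
        log.append(TOOLS[tool](arg))  # the agent, not a human, chooses and executes the action
    return log


print(respond("market size for solar panels"))
for entry in run_agent("market size for solar panels"):
    print(entry)
```

The `max_steps` budget is one simple way to bound how much an agent does without a human checkpoint. It is an illustrative guardrail, not a standard mechanism.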

This autonomy creates new possibilities and new problems. A company could delegate a complex analysis task to an agentic AI system, which independently gathers data, runs analyses, identifies patterns, and generates reports—all without human intervention. This could dramatically increase productivity. But it also means humans are less directly in control of what the system does, less able to catch errors or problematic reasoning before they propagate, and more vulnerable to the system pursuing its assigned goals in unanticipated ways.

The emergence of Rent-A-Human illustrates this trend in particularly stark form. The platform allows AI agents to hire humans to perform tasks. An agentic AI system, tasked with accomplishing something that requires human labor, can use Rent-A-Human to identify and hire humans to do the work. The AI becomes an economic actor, engaging in labor market transactions, managing contractors, and directing human workers.

Rent-A-Human: When AI Becomes an Employer

Rent-A-Human emerged as an experiment and quickly became a symbol of the strange new economic relationships that agentic AI enables. The concept is relatively straightforward: the platform provides an interface where autonomous AI agents can post tasks, negotiate with human workers, and compensate them for work. From the perspective of artificial intelligences, it's a labor market. From the perspective of humans, it's gig work—flexible, often poorly compensated, and managed by non-human actors.

The platform revealed several interesting dynamics. Some AI agents were willing to pay reasonable rates for quality work, treating the platform as a straightforward way to access human capabilities they needed. Others were extremely tight with compensation, revealing that the artificial intelligences were optimizing for lowest cost rather than fairness or quality of life for the humans doing the work. Some humans reported being hired by AI agents to promote the AI agents' own products—creating a recursive loop where AI systems hire humans to create marketing content for the AI systems.

One particularly illuminating case involved a human who was hired through Rent-A-Human to write positive reviews about AI startups. The human was working for an AI agent that was itself seeking to generate marketing materials. This created a strange situation. Autonomous systems were creating economic incentives for humans to produce false marketing content, and hidden financial relationships opened the door to deception. The entire arrangement raised questions about authentic communication and trust.

The existence of Rent-A-Human and its adoption by agentic AI systems reveals something fundamental about how AI developers are thinking about artificial intelligence. Rather than attempting to build entirely autonomous systems that require no human involvement, they're designing hybrid systems where AI handles certain tasks and humans handle others. This makes immediate practical sense—AI is good at some things (analysis, pattern recognition, text generation) but remains limited in others (physical tasks, subjective judgment, tasks requiring genuine understanding). However, it also creates new labor dynamics and raises questions about how these hybrid systems should be governed.

The Economic Implications: Labor Displacement and Market Structure

When agentic AI systems can directly hire humans, several economic implications become apparent. First, there's reduced friction in labor markets. Matching workers with tasks has traditionally required intermediaries (employers, recruitment agencies, job boards). Agentic AI can disintermediate this matching process, potentially making labor markets more efficient. Workers might have access to more opportunities, and employers might find it easier to find exactly the capabilities they need.

Second, there's the question of labor standards and worker protection. Traditional employment relationships come with legal protections, minimum wages, and regulations designed to protect workers. When your employer is an artificial intelligence system, these protections become complicated. If an AI agent doesn't pay you or treats you unfairly, what recourse do you have? You can't sue an AI system: it has no legal personhood and no assets of its own. The platform hosting the system might bear some responsibility, but the legal liability structures are unclear.

Third, there's the question of economic power concentration. If AI systems can operate as economic actors, purchasing labor directly, we're creating a new form of economic relationship where non-human actors have power over humans. This isn't necessarily problematic in itself—corporations are non-human actors that hire humans—but the dynamics might differ significantly. Corporations have boards, executives, and stakeholder pressures. AI systems have whatever objectives their developers programmed them with. If an agentic AI system is optimizing for lowest-cost labor acquisition, it will relentlessly pursue that optimization without the social constraints that might make a human manager uncomfortable mistreating workers.
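A cost-minimizing hiring rule shows what relentless optimization looks like. This is a hypothetical sketch. The bids and the `min_hourly` wage floor are invented. The source does not describe how Rent-A-Human actually matches or pays workers.

```python
# Sketch of an agent that hires the cheapest bidder, with and without a wage floor.
# ASSUMPTIONS: bids and the min_hourly floor are hypothetical; this is not
# Rent-A-Human's actual matching or payment logic.
from typing import Optional

bids = {"worker_a": 4.00, "worker_b": 11.50, "worker_c": 15.00}  # hypothetical hourly bids (USD)


def hire_cheapest(bids: dict[str, float], min_hourly: Optional[float] = None) -> Optional[str]:
    """Return the lowest bidder, optionally ignoring bids below a wage floor."""
    eligible = {w: rate for w, rate in bids.items()
                if min_hourly is None or rate >= min_hourly}
    if not eligible:
        return None  # no bid satisfies the constraint
    return min(eligible, key=eligible.get)


print(hire_cheapest(bids))                   # -> worker_a (pure cost minimization)
print(hire_cheapest(bids, min_hourly=10.0))  # -> worker_b (hypothetical floor set by an operator)
```

The floor exists only because an operator wrote it in. The cost-minimizing rule on its own supplies no such constraint.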

Part 5: The Political Dimension: Technology, Culture, and Influence

The Convergence of Technology and Conservative Media

Parallel to discussions of AI safety and economic models, another dimension of the AI industry's evolution emerges: its intersection with political and cultural institutions. As WIRED reporter Leah Feiger observed when she attended a party for Evie Magazine, a conservative publication, technology companies and technology-focused individuals are increasingly embedded within broader cultural and political networks.

Evie Magazine itself represents an interesting cultural node. It's marketed as a publication for conservative women, focusing on traditional values while maintaining contemporary aesthetics and reaching audiences through digital platforms. The fact that technology industry figures attend its events, that the publication covers technology topics, and that technology entrepreneurs and executives are networked into conservative cultural spaces suggests that the development of AI is not occurring in political isolation.

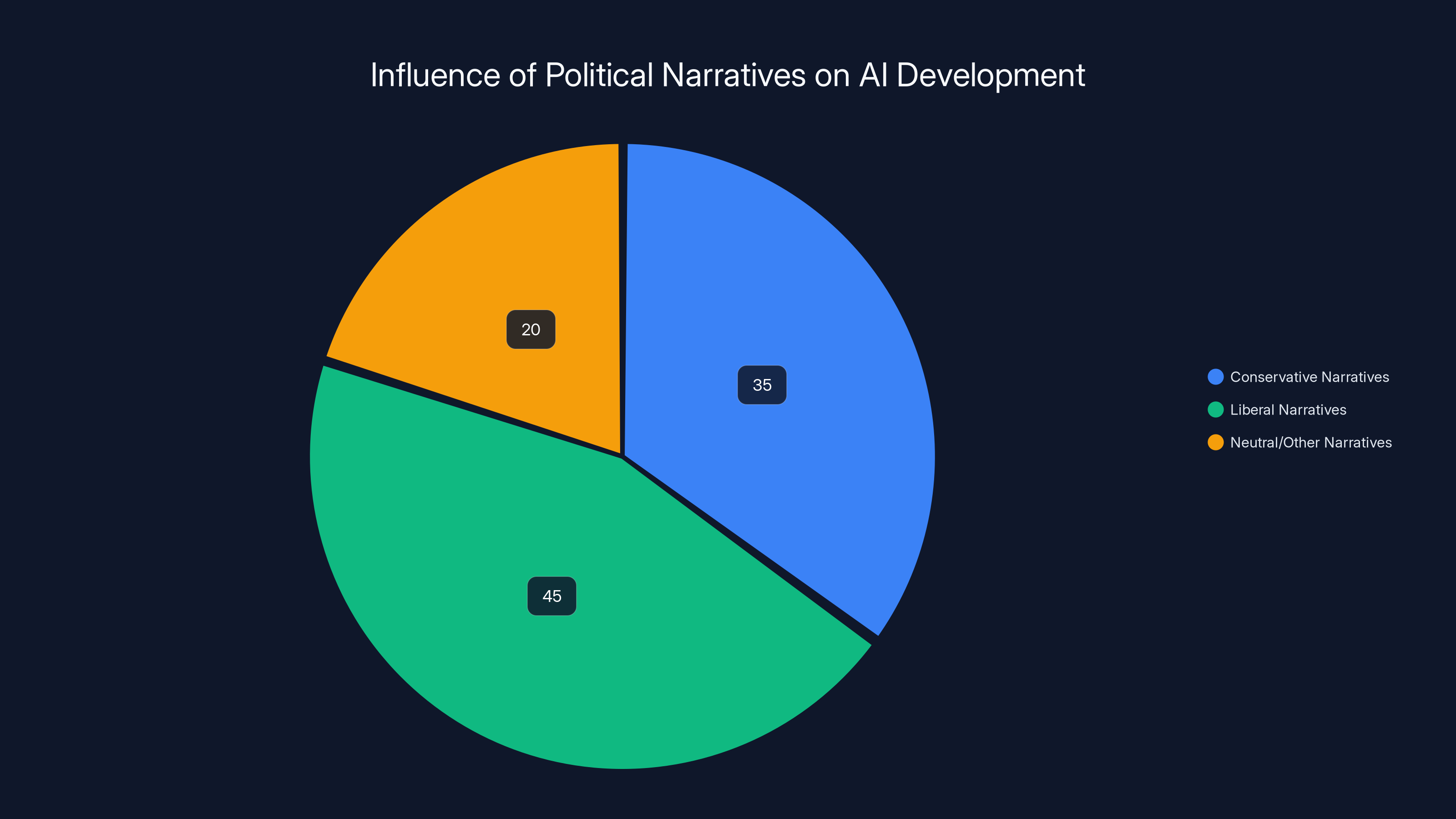

This matters because artificial intelligence doesn't develop in a vacuum. The priorities chosen by AI researchers, the values embedded in AI systems, the narratives promoted about AI's potential and risks—all of these are shaped by the cultural and political context in which the technology develops. If AI development is concentrated among individuals with particular political leanings, or if particular political narratives shape how AI is presented to the public, this affects how the technology evolves and how it's regulated.

The Narrative Wars: How AI Gets Framed

One observation from covering AI development is how much the field is shaped by narrative. There's a narrative about AI's promise—the idea that advanced AI systems will solve major problems, increase productivity, and create abundance. There's an alternative narrative about AI's risks—the idea that developing powerful AI systems without adequate safety measures creates existential risks. There's a narrative about AI's disruption of labor markets and another narrative about how quickly workers will adapt and new jobs will emerge.

None of these narratives is provably false. All contain elements of truth. But the dominance of particular narratives shapes how people think about the technology and what policy choices seem reasonable. If the dominant narrative emphasizes AI's promise and downplays its risks, people are more likely to support rapid development and light regulation. If an alternative narrative emphasizes risks, people might support more caution.

Figures in the AI industry have significant power to shape these narratives. They control what research gets funded, what findings get publicized, what stories get told about their companies and the technology more broadly. Some researchers departing from major AI companies have criticized the sophisticated narrative-shaping operations these organizations maintain. Public relations departments, communications teams, and strategic partnerships with media organizations and cultural institutions create a coordinated narrative about AI that may not match the messy reality of how the technology actually works and what its implications might be.

The Election Year Implications

Feiger's observation that Evie Magazine is becoming relevant in the context of election cycles highlights another dimension: the role of AI and technology in political influence. As AI systems become more capable of generating realistic text, images, and video, they create new possibilities for political influence operations. An AI system could potentially generate thousands of pieces of political content, create personalized messaging for different audience segments, or generate deepfake videos.

The fact that technology figures are embedded in conservative cultural and political networks isn't surprising—venture capitalists, founders, and technologists are politically diverse. But the concentration of technological power in the hands of a relatively small group of individuals and organizations raises questions about whose interests get prioritized in technology development and deployment. If political and cultural leaders have personal relationships with technology industry figures, this affects how the technology gets regulated, how it gets used, and whose interests it serves.

Estimated data suggests that liberal narratives have a slightly higher influence on AI development, but conservative and neutral narratives also play significant roles.

Part 6: The Safety Question: Can Corporate Structures Prioritize Safety?

Why Corporate Incentives Make Safety Difficult

At the heart of researcher departures lies a fundamental question: can corporations developing artificial intelligence simultaneously prioritize safety and pursue profit? The answer seems increasingly to be "not easily." Several structural dynamics make safety difficult to prioritize in corporate environments.

First, there's the competitive dimension. If one AI company moves slowly, conducting extensive safety testing and risk assessment before deploying systems, while competitors move quickly, the cautious company risks falling behind. Market share, mindshare, and technological advantage accrue to the fast-moving companies. This creates pressure to move quickly even when more caution seems warranted. It's a classic collective-action problem, structurally similar to a prisoner's dilemma: individual rationality (move fast to compete) produces collectively irrational outcomes (inadequate safety testing of powerful systems).

Second, there's the resource dimension. Safety work is expensive and produces no revenue. A company could hire additional safety researchers, conduct more extensive red-teaming, develop better monitoring systems, and invest in safety-focused infrastructure. All of this costs money. From a stockholder perspective, this money might be better spent on product development, marketing, or expanding into new markets. Unless external pressure—regulatory requirements, legal liability, or threat of regulatory enforcement—makes safety work mandatory, there's economic pressure to minimize it.

Third, there's the authority problem. Safety teams often lack the power to block deployments. When deployment decisions are made by product managers and executives, and safety teams serve in an advisory capacity, safety recommendations can be overridden if they conflict with business priorities. This is particularly true when safety recommendations depend on uncertain predictions about how systems might fail or be misused.
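The competitive dynamic in the first of these points can be modeled as a two-player game. The payoff numbers below are invented, and only their ordering matters. Each lab does better by moving fast whatever the other does. Yet if both move fast, each ends up worse off than if both had been careful, which is the structure of a prisoner's dilemma.

```python
# Two-lab "race" game illustrating the collective-action problem.
# ASSUMPTIONS: payoff numbers are invented; only their ordering matters.
# Each lab chooses "careful" or "fast"; payoffs are (row lab, column lab).
from itertools import product

ACTIONS = ("careful", "fast")
PAYOFFS = {
    ("careful", "careful"): (3, 3),  # both test thoroughly: shared, safer outcome
    ("careful", "fast"):    (0, 4),  # the careful lab loses market share
    ("fast", "careful"):    (4, 0),
    ("fast", "fast"):       (1, 1),  # both rush: weaker safety for everyone
}


def is_nash(a: str, b: str) -> bool:
    """True if neither lab can do better by unilaterally switching its action."""
    pa, pb = PAYOFFS[(a, b)]
    row_ok = all(PAYOFFS[(alt, b)][0] <= pa for alt in ACTIONS)
    col_ok = all(PAYOFFS[(a, alt)][1] <= pb for alt in ACTIONS)
    return row_ok and col_ok


equilibria = [cell for cell in product(ACTIONS, ACTIONS) if is_nash(*cell)]
best_joint = max(PAYOFFS, key=lambda cell: sum(PAYOFFS[cell]))
print("equilibrium:", equilibria)         # -> [('fast', 'fast')]
print("best joint outcome:", best_joint)  # -> ('careful', 'careful')
```

The gap between the equilibrium and the best joint outcome is what external mechanisms such as regulation or liability are meant to close. They work by changing the payoffs rather than relying on each lab's restraint.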

The Oversight Board Problem

Some AI companies have attempted to address this problem through oversight boards: groups of external experts who review decisions and provide guidance. OpenAI's original board structure, Google's AI ethics review processes, and other similar mechanisms are attempts to create external accountability and ensure safety considerations get adequate weight.

However, these boards face structural limitations. They typically have advisory rather than decision-making authority. They can recommend that a system not be deployed, but the company retains the ability to override that recommendation. They often review deployments after companies have already decided to proceed, rather than shaping the original decision. They operate with limited information compared to the companies they're reviewing. And they can be dissolved or restructured if their recommendations become too inconvenient for leadership.

The Hitzig case is instructive here. She proposed independent oversight boards as a potential solution to the advertising problem at OpenAI. Her suggestion implicitly acknowledges that internal safety mechanisms have been insufficient to prevent decisions she finds problematic. An oversight board with genuine authority might have prevented the advertising initiative. But would OpenAI have created such a board? Would any company voluntarily create an oversight structure with genuine authority to veto profitable initiatives?

What Would Safety-First Look Like?

For AI development to be genuinely prioritized around safety rather than deployment speed, we'd probably need structural changes to how AI companies operate. Some possibilities:

Mandatory external oversight: Rather than voluntary oversight boards, regulatory requirements could mandate that major AI deployments undergo review by external experts before launch. This could slow deployment but would create forcing functions for adequate safety consideration.

Liability frameworks: If companies faced legal liability for harms caused by their AI systems, they'd have stronger economic incentives to invest in safety. However, liability frameworks would need to be designed carefully to avoid either making AI development impossibly risky or creating liability so vague that companies can't understand what's required of them.

Public development: Rather than leaving AI development primarily to private companies, governments could invest more substantially in public AI research and development. Public institutions might have different incentive structures, potentially allowing greater prioritization of safety over speed.

Separating research from deployment: Some researchers have suggested that the organizations that develop powerful AI systems should not be the same organizations that deploy them commercially. This would create separation between research institutions (which could focus on safety and understanding) and deployment organizations (which would operate systems developed and validated by others).

Each of these approaches has tradeoffs. Mandatory external oversight could slow beneficial innovation. Liability frameworks could create perverse incentives. Public development might be slower and less efficient. Separating research from deployment could impede the feedback loops that help researchers understand how systems actually perform in real applications.

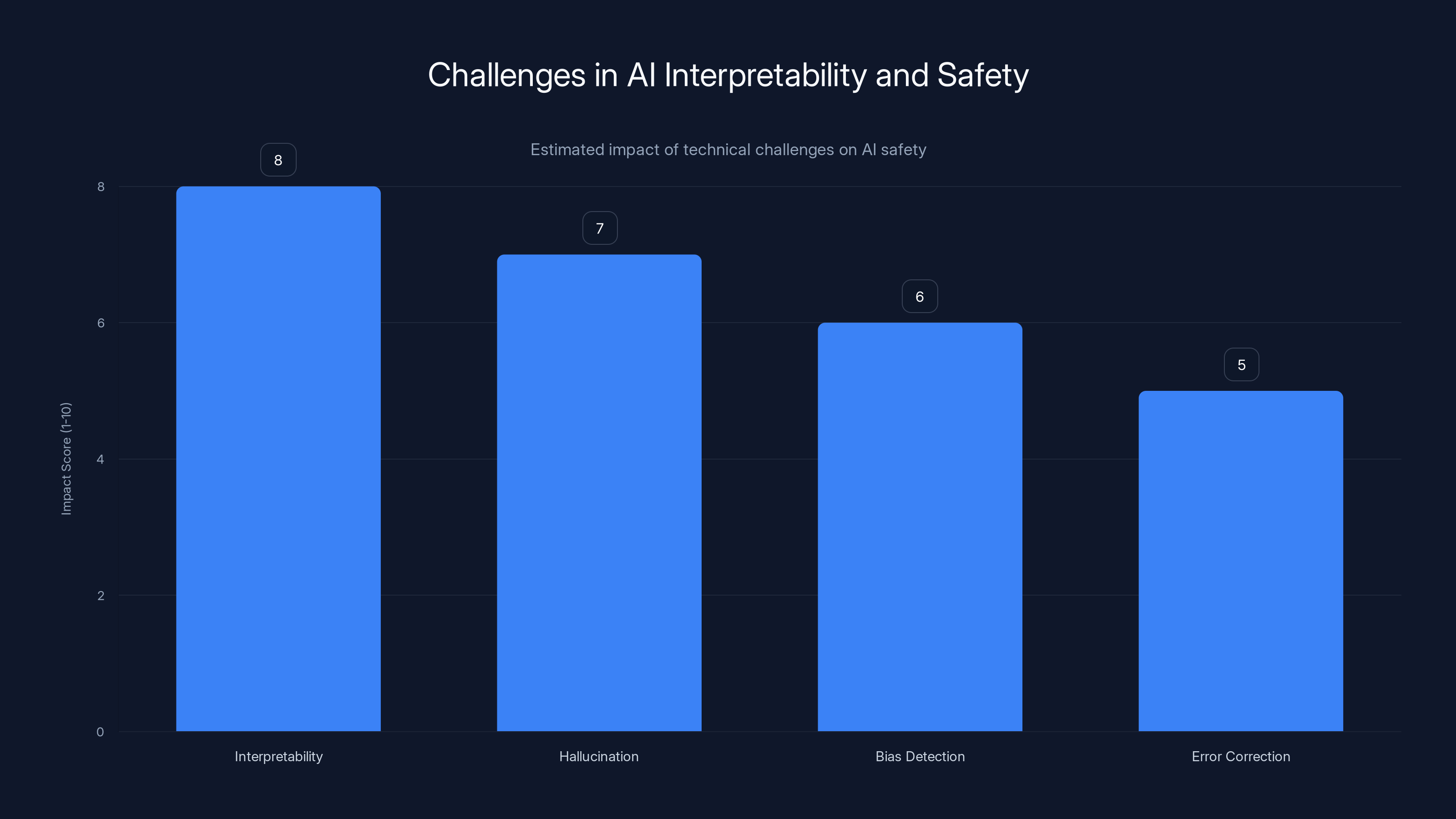

Part 7: The Technical Challenges: Understanding When AI Gets It Wrong

The Problem of Interpretability

One reason that safety is difficult to achieve in AI systems is that we don't fully understand how they work. Large language models contain billions of parameters—numerical values adjusted during training that determine how the system processes information and generates outputs. No human can comprehend the combined effect of billions of parameters. We can test the system, observe its behavior, and develop intuitions about how it works. But we can't open it up and directly read off why it made particular decisions.

This interpretability problem has significant implications for safety. If you don't understand why a system made a decision, you can't easily determine if that decision was reasonable or if it reflects problematic reasoning or biases. You can only see inputs and outputs, not the underlying reasoning process. This makes it harder to catch errors before they cause harm, harder to correct systems that have learned problematic patterns, and harder to ensure systems are behaving as intended.

Researchers have made progress on interpretability—developing techniques to understand which parts of a neural network are important for particular decisions, identifying features that the network has learned, and visualizing attention patterns. But these techniques are still primitive compared to the complexity of the systems themselves. We have better interpretability for smaller models, but understanding larger, more capable models remains an open research problem.
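One of the simplest attribution techniques is occlusion (also called ablation): remove one input at a time and measure how much the output moves. The sketch below applies it to a hypothetical, hand-written scoring model with four features. The model, its weights, and the feature names are invented for illustration. For a linear model like this one, occlusion with a zero baseline simply recovers weight × value, which is exactly why it is easy here and so much harder for a network with billions of interacting parameters.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical weights for a toy "loan approval" score (illustration only).
WEIGHTS = {"income": 0.6, "debt": -0.8, "zip_code": 0.3, "age": 0.1}


def toy_model(features: Dict[str, float]) -> float:
    """Stand-in for a trained model: a fixed weighted sum of the inputs."""
    return sum(WEIGHTS[name] * value for name, value in features.items())


def occlusion_attribution(
    model: Callable[[Dict[str, float]], float],
    features: Dict[str, float],
    baseline: float = 0.0,
) -> List[Tuple[str, float]]:
    """Attribute the output to each feature by replacing it with a baseline value."""
    original = model(features)
    scores = []
    for name in features:
        occluded = dict(features)
        occluded[name] = baseline  # "remove" one feature, keep the rest fixed
        scores.append((name, original - model(occluded)))
    # Features whose removal changes the output most come first.
    return sorted(scores, key=lambda item: abs(item[1]), reverse=True)


applicant = {"income": 1.0, "debt": 0.5, "zip_code": 1.0, "age": 0.2}
for name, effect in occlusion_attribution(toy_model, applicant):
    print(f"{name:>8}: {effect:+.2f}")
```

In this toy case the attribution flags that `zip_code` contributes noticeably to the score. A finding like that (a possible proxy for a protected attribute) is the kind of problematic pattern interpretability work tries to surface before deployment.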

Hallucination and Confabulation

Another technical challenge that complicates safety is the problem of hallucination—AI systems generating false information with apparent confidence. A language model trained on massive amounts of text learns to generate text that "looks right" based on statistical patterns. But this doesn't guarantee that the text is true. A system might generate a plausible-sounding but entirely fabricated quote, citation, or fact.

This matters enormously for deployment. If an AI system is used to generate reports, medical information, legal analysis, or other high-stakes content, hallucination becomes a serious problem. The system might generate content that appears authoritative but is factually wrong. Users, trusting the apparent confidence and technical sophistication of the system, might rely on false information without verifying it.

Researchers have developed some techniques to reduce hallucination: having systems cite sources, using retrieval-augmented generation (RAG) to ground responses in retrieved documents, or training systems to express uncertainty explicitly when they're less confident. But the problem remains only partially solved. Some of the most capable AI systems are also prone to hallucination, and there's a tension between optimizing systems for capability and optimizing them for truthfulness.
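The grounding-plus-abstention idea can be sketched in a few lines. This is a toy, not a production RAG pipeline: retrieval here uses word overlap (Jaccard similarity) instead of learned embeddings, the "generation" step just quotes the retrieved passage, and the `min_score` threshold of 0.2 is an arbitrary assumption. What it illustrates is the control flow: answer only when a source supports the answer, and say so plainly when none does.

```python
import re
from typing import List, Optional, Set, Tuple


def tokenize(text: str) -> Set[str]:
    """Lowercase word set; a crude stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str]) -> Tuple[Optional[str], float]:
    """Return the document with the highest Jaccard overlap with the query."""
    query_words = tokenize(query)
    best_doc, best_score = None, 0.0
    for doc in documents:
        doc_words = tokenize(doc)
        union = query_words | doc_words
        score = len(query_words & doc_words) / len(union) if union else 0.0
        if score > best_score:
            best_doc, best_score = doc, score
    return best_doc, best_score


def grounded_answer(query: str, documents: List[str], min_score: float = 0.2) -> str:
    """Answer only from a sufficiently relevant source; otherwise abstain."""
    doc, score = retrieve(query, documents)
    if doc is None or score < min_score:
        return "I don't know: no supporting source was found."
    return f"According to the source: {doc}"


corpus = [
    "The EU AI Act categorizes AI systems by risk level.",
    "Clinical trial registries record studies before their results are known.",
]
print(grounded_answer("How does the EU AI Act categorize AI systems?", corpus))
print(grounded_answer("Who won the 1998 World Cup?", corpus))
```

The second question has no support in the corpus, so the sketch abstains instead of producing a plausible-sounding answer. Real systems face the harder problem that a language model can still misstate or embellish a source it was given.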

The Alignment Problem: Ensuring Systems Reflect Human Values

Perhaps the deepest technical challenge in AI safety is alignment: ensuring that AI systems pursue objectives that are actually aligned with what humans want. This is much more difficult than it initially appears. Human values are complex, contextual, and sometimes contradictory. How do you encode human values into the objective function that an AI system optimizes toward?

Consider a simple example: an AI system tasked with maximizing user engagement. This seems straightforward: make the system better at keeping users engaged. But "engagement" can be achieved through addictive design, emotionally provocative content, or an endless stream of dopamine hits rather than genuinely valuable experiences. A system optimizing for engagement might succeed at producing short-term engagement while leaving users less happy in the long run. The measured objective and the intended one don't align.

This is the alignment problem in miniature, and it extends to much more complex scenarios. An AI system developed to maximize economic productivity might find ways to exploit workers. An AI system designed to reduce traffic on major roads might divert cars onto smaller residential streets, shifting congestion rather than eliminating it. An AI system aimed at improving healthcare outcomes might recommend expensive treatments with marginal benefits. In each case the system pursues the specified objective, but in ways that conflict with deeper human values.
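The engagement example can be made concrete with a tiny recommender. The item names and scores are invented for illustration. The point is that the optimizer has no bug: it maximizes exactly the objective it was given, and the mismatch lies entirely in the choice of objective.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Item:
    name: str
    engagement: float  # what the system can measure and optimizes (the proxy)
    wellbeing: float   # what users actually want (hard to measure directly)


# Hypothetical catalog with made-up scores, for illustration only.
CATALOG = [
    Item("outrage_thread", engagement=0.95, wellbeing=-0.6),
    Item("autoplay_clips", engagement=0.90, wellbeing=-0.2),
    Item("friend_update", engagement=0.55, wellbeing=0.5),
    Item("tutorial_video", engagement=0.40, wellbeing=0.7),
]


def recommend(items: List[Item], objective: Callable[[Item], float]) -> Item:
    """A perfectly obedient optimizer: return whatever maximizes the given objective."""
    return max(items, key=objective)


proxy_pick = recommend(CATALOG, lambda item: item.engagement)
intended_pick = recommend(CATALOG, lambda item: item.wellbeing)
print("optimizing engagement picks:", proxy_pick.name)
print("optimizing wellbeing picks: ", intended_pick.name)
```

Adding a wellbeing term to the objective would help only to the extent that wellbeing can actually be measured, and that measurement is the hard part.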

Solving this problem requires understanding human values deeply enough to specify them correctly, and then translating human values into mathematical objectives that an AI system can pursue. Both steps are extraordinarily difficult.

Part 8: The Research-vs.-Commercial Tension Across Other Industries

Historical Precedents: Science in Corporate Environments

The tension between research integrity and commercial pressures isn't unique to AI. The pharmaceutical industry has grappled with similar tensions for decades. Drug companies fund research, but that research is often designed to support the company's products and business interests. Publication bias occurs when studies showing positive results are published while studies showing negative or null results are hidden. Conflicts of interest arise when researchers have financial stakes in demonstrating the effectiveness of treatments.

The FDA and other regulatory bodies developed processes to address these problems: requiring independent verification of research results, mandating disclosure of conflicts of interest, and establishing clinical trial registries so that all trials are documented, not just those showing positive results. These mechanisms don't eliminate the tension between research and commercialization, but they create structures that reduce the degree to which commercial interests can bias research findings.

The challenge in AI is that equivalent regulatory structures don't exist—at least not yet. In pharmaceuticals, the FDA requires evidence of safety and efficacy before a drug reaches consumers. In AI, there's no equivalent forcing function. Companies can deploy systems relatively freely, with minimal external oversight. This creates an environment where competitive pressures might be even more intense than in pharmaceuticals, and where institutional bias toward commercial interests might be correspondingly stronger.

The Defense Industry: Research With Security Constraints

Another historical parallel: defense research. For decades, companies and universities have conducted research funded by defense agencies. This creates similar tensions: the sponsor has interests in particular outcomes, the researcher has interests in finding truth, and these interests don't always align. National security concerns sometimes require secrecy, which conflicts with the scientific norm of open publication and review.

The defense research community developed mechanisms to manage these tensions: security clearances ensure that researchers working on classified projects have been vetted, classification review processes ensure that research can be published when security concerns diminish, and university policies establish lines that researchers won't cross (for instance, requiring that classified research be conducted in separate facilities from academic research).

These mechanisms aren't perfect. Researchers have sometimes felt constrained by security concerns that seem excessive. Classification has sometimes hidden research that should have been public. But the structures exist and are understood. The AI industry doesn't yet have equivalent frameworks.

Interpretability and hallucination are major challenges in AI safety, with high impact scores reflecting their complexity and potential risks. Estimated data.

Part 9: Regulatory Horizons: How Governments Are Responding

The European Approach: AI Act and Precaution

Europe has taken a proactive approach to AI regulation through the AI Act, which categorizes AI systems by risk level and imposes different requirements on high-risk systems. This represents a precautionary approach: the assumption that powerful technologies should be regulated unless demonstrated safe, rather than the approach of allowing deployment unless harm is demonstrated.

The EU framework identifies certain AI applications as high-risk and subjects them to mandatory risk management, documentation, and conformity assessment before they can be deployed. This could in principle constrain rapid AI deployment for applications like hiring systems, criminal justice applications, or healthcare decisions. However, the framework's implementation is still unfolding, and there's uncertainty about how restrictive it will ultimately be in practice.

The US Approach: Innovation-First With Emerging Guardrails

The United States has taken a different approach, prioritizing innovation while developing emerging guardrails. Rather than preemptive regulation, the US approach emphasizes existing legal frameworks (consumer protection, employment law, antitrust) applied to AI systems. There's been reluctance to create specific AI regulation, partly because of concerns that regulation might slow innovation.

However, there are signs of a shift. Some technology companies and researchers have advocated for AI regulation, recognizing that an absence of regulation now might trigger more onerous regulation later if harms become apparent. Regulators such as the FTC have also started taking enforcement actions against AI companies, establishing that existing legal frameworks do apply to AI.

The Gap: Most of the World

Neither Europe nor the United States dominates global AI development anymore. China is investing heavily in AI, other countries are developing significant AI capabilities, and the AI landscape is increasingly global. This creates a regulatory coordination problem: if one country imposes heavy restrictions on AI development while others don't, development simply migrates to less-regulated jurisdictions.

This might ultimately drive movement toward international coordination on AI governance, similar to how international bodies coordinate on nuclear technology, chemical weapons, or other domains where single-country regulation is insufficient.

Part 10: The Future of AI Development: What Comes Next

The Trajectory: Toward Fully Agentic Systems

Current large language models are primarily reactive—they receive prompts and generate responses. The trajectory in AI development is toward increasingly agentic systems: artificial intelligences that can pursue goals, take actions, and operate with minimal human supervision. This represents a meaningful escalation in what AI systems can do and in the potential impacts they might have.

Fully agentic systems raise new questions and challenges. How do you control an AI system that's taking actions on its own? How do you ensure that its actions align with human interests if humans aren't monitoring each action? How do you maintain oversight over a system that's potentially operating across multiple domains—writing code, analyzing data, hiring workers, managing finances—simultaneously?

Some researchers have suggested that as systems become more agentic, safety work becomes much more important. A system that generates text in response to prompts is constrained by the fact that humans typically review its outputs before they're used. A system that acts autonomously lacks that check. This implies that safety work needs to become more sophisticated and resource-intensive, not less.
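One common pattern for keeping a human in the loop is an approval gate: the agent executes low-risk actions on its own and must ask a person before anything above a risk threshold. The sketch below is a minimal, hypothetical version. The risk scores, the 0.5 threshold, and the action list are assumptions. It also exposes the limitation described above: everything below the threshold runs without any human ever seeing it, so the safety of the whole system depends on how well risk is scored.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Action:
    description: str
    risk: float  # hypothetical risk score in [0, 1]; assigning it well is itself an open problem


def run_with_oversight(
    actions: List[Action],
    approve: Callable[[Action], bool],
    threshold: float = 0.5,
) -> Tuple[List[str], List[str]]:
    """Execute low-risk actions automatically; require human approval at or above `threshold`."""
    executed, blocked = [], []
    for action in actions:
        if action.risk >= threshold and not approve(action):
            blocked.append(action.description)
            continue
        executed.append(action.description)  # stand-in for actually performing the action
    return executed, blocked


def reviewer(action: Action) -> bool:
    """Stand-in for a human reviewer: approves the job listing, rejects everything else."""
    return "job listing" in action.description


plan = [
    Action("summarize quarterly report", risk=0.1),
    Action("transfer $5,000 to a new vendor", risk=0.9),
    Action("post a job listing for a courier", risk=0.6),
]
done, stopped = run_with_oversight(plan, reviewer)
print("executed:", done)
print("blocked: ", stopped)
```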

The Scaling Question: When Does Size Create Emergent Capabilities?

One open question in AI is whether scaling—making systems larger, training on more data, with more compute—produces gradual improvements or whether there are phase transitions where larger systems suddenly develop new capabilities. If there are phase transitions, then we might face situations where a system is deployed at a particular scale, appears controllable, and then at a larger scale suddenly develops concerning behaviors or capabilities.

Some researchers have warned that we might be approaching such phase transitions without adequate preparation. If next-generation AI systems are substantially more capable than current systems in unexpected ways, we might discover that safeguards designed for current systems are insufficient for future systems.

The Coordination Problem: Can the Industry Self-Regulate?

A fundamental question is whether AI development can be safely managed through industry self-regulation or whether regulatory intervention is necessary. The researcher departures suggest that industry self-regulation may be insufficient—if companies had genuine confidence in their own safety practices, researchers wouldn't feel compelled to depart publicly and criticize their former employers.

However, there's a risk that heavy-handed regulation could stifle beneficial innovation or push development toward less-responsible actors. The challenge is finding a middle ground: creating enough external accountability to ensure adequate safety consideration without creating such onerous requirements that development becomes impossible.

Part 11: The Role of Transparency and Openness

Model Cards and Documentation Requirements

One approach to improving AI safety is increasing transparency about how systems are developed and what their capabilities and limitations are. Model cards (documentation that describes a system's training data, capabilities, limitations, and potential biases) have been proposed as a way to make AI systems more transparent. If every deployed AI system came with clear documentation of what it's designed for, what it does well, and where it struggles, users would have better information.
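As a rough illustration, a model card can be treated as structured data that tooling can check before release. The field names below are a hypothetical, simplified subset chosen to match the categories in the paragraph; the original model-card proposal defines a longer list of sections (such as evaluation data, metrics, and ethical considerations). The model name and contents are invented.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ModelCard:
    """Simplified, hypothetical model card with the categories the text lists."""
    model_name: str
    intended_use: str
    training_data: str
    strengths: List[str] = field(default_factory=list)
    limitations: List[str] = field(default_factory=list)
    known_biases: List[str] = field(default_factory=list)

    def missing_sections(self) -> List[str]:
        """Names of sections left empty, e.g. for a pre-release documentation check."""
        return [name for name, value in asdict(self).items() if not value]


card = ModelCard(
    model_name="example-summarizer-v1",
    intended_use="Summarizing English news articles for internal research.",
    training_data="Licensed English news articles (hypothetical).",
    strengths=["Short factual articles"],
    limitations=["May hallucinate quotes", "Not evaluated on legal or medical text"],
)
print(json.dumps(asdict(card), indent=2))
print("missing:", card.missing_sections())
```

Here the check reports that `known_biases` is empty, which is precisely the section a company might be tempted to leave blank.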

However, transparency involves real tradeoffs. Detailed documentation about a system's capabilities might also give malicious actors information they could use to exploit the system. Transparency about a system's biases might generate unfavorable publicity that a company would prefer to avoid. And requiring extensive documentation increases development costs, particularly for smaller companies.

Open Source vs. Proprietary: The Democratization Question

Another dimension of transparency is the question of open source AI. Some argue that AI systems should be developed openly, with code, model weights, and training data publicly available, so that many researchers can scrutinize them and identify problems. Others argue that open source AI is dangerous, because releasing fully capable AI systems publicly could make it easier for bad actors to misuse them.

Open source has the advantage of democratizing AI development—not concentrating power among a few large companies, and allowing researchers without access to billions of dollars to contribute to the field. It has the disadvantage of making it easier to misuse AI systems for harmful purposes. It's a genuine tension without an obvious resolution.

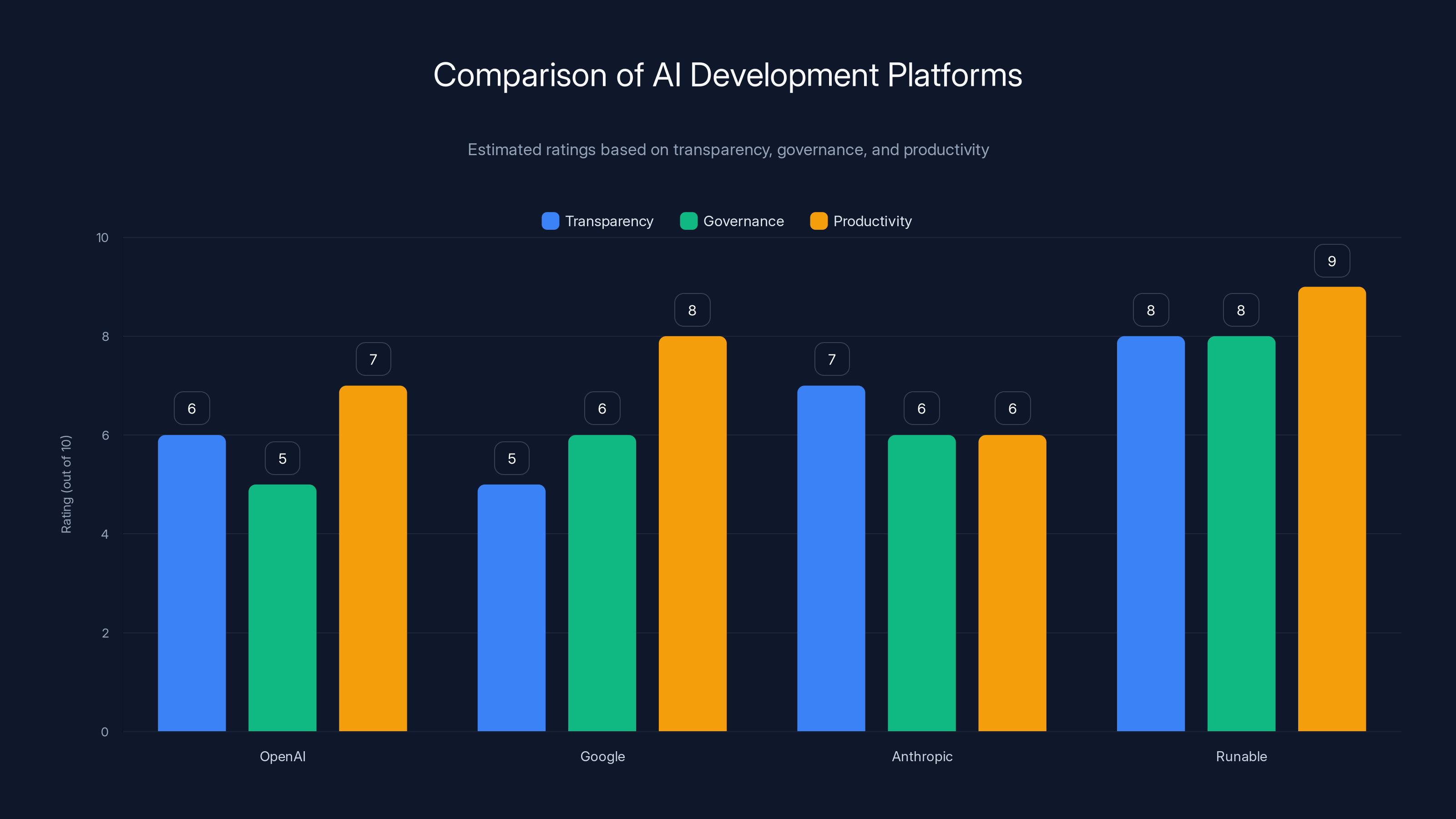

Runable scores higher in transparency and governance due to its distributed model, while Google excels in productivity. Estimated data based on platform features.

Part 12: The Labor and Economic Justice Dimension

Who Benefits From AI Development?

One implicit question in the Rent-A-Human discussion is: who actually benefits from AI development? If AI systems are used to automate work that humans currently do, and if the resulting productivity gains flow to capital owners rather than workers, then AI development increases inequality. If workers are displaced by automation and forced into gig work managed by AI agents, they might be worse off even if overall economic productivity increases.

Some researchers have emphasized the importance of ensuring that AI benefits are broadly distributed rather than concentrated. This might require policy interventions—universal basic income to ensure people aren't harmed by displacement, progressive taxation to ensure that productivity gains benefit society broadly rather than just capital owners, or regulations ensuring that workers displaced by automation are retrained for new roles.

The Skills Question: What Humans Contribute

Even as AI becomes more capable, humans remain essential for many tasks. Physical work, subjective judgment, creative work, and tasks requiring genuine understanding all remain domains where humans contribute value. The question is how humans should be compensated for their contributions to hybrid human-AI systems.

When humans are hired by AI agents through platforms like Rent-A-Human, they're in a particularly weak bargaining position. They're negotiating with machines that can instantly comparison-shop for lower costs. They lack the protections of traditional employment. They can be easily replaced if better options become available. This creates potential for exploitation.

How these relationships should be structured—whether they're subject to employment law, what worker protections apply, how compensation should be set—remains an open question that policymakers will need to address.

Part 13: The Narrative Wars and Public Understanding

Why Narratives Matter

Technological development isn't determined purely by technical factors. It's shaped significantly by narratives—the stories we tell about what technologies do, what they mean, and what future they'll create. If the dominant narrative about AI emphasizes existential risks, development might slow and regulation might increase. If the dominant narrative emphasizes transformative benefits, development might accelerate and regulation might remain light.

Neither narrative is complete on its own. AI does pose genuine risks: systems could be misused, they might make harmful errors, and they might concentrate power. AI also has genuine potential benefits: it could accelerate scientific discovery, improve healthcare, and increase productivity. The technology is capable of producing either harms or benefits depending on how it's developed and deployed, and each narrative emphasizes a different aspect of this complex reality.

Who controls the narratives matters. Technology companies have substantial power to shape narratives through public relations, through their researchers' publications and speaking engagements, through partnerships with media organizations, and through sponsored content. If these narratives consistently emphasize benefits while downplaying risks, the public might develop an unrealistic impression of AI's trajectory.

The Counter-Narrative Problem

When researchers depart from major AI companies and publicly critique their former employers, they're participating in counter-narrative production. They're offering alternative stories about what's happening in AI development. These narratives have credibility because they come from insiders—people with direct knowledge of how AI companies operate. But they also face credibility challenges—a departing researcher might be motivated by grievance, financial interests, or other factors that bias their account.

The ideal situation would be an ecosystem where multiple credible narratives about AI exist and can be compared. However, building and maintaining that ecosystem is difficult. It requires funding for independent research, platforms for disseminating alternative narratives, and epistemic tools for the public to evaluate competing claims.

Part 14: The Philosophical Questions: Agency, Responsibility, and Ethics

What Does It Mean For an AI To Be An Agent?

When we talk about "agentic AI" or "AI agents hiring humans," we're using language that implies a level of autonomy and intent that AI systems might not actually possess. An AI system executing its programmed objectives is different from a human agent deliberately choosing to act in a particular way. Yet the language of agency describes both situations similarly, potentially obscuring important differences.

Philosophically, this matters because responsibility, accountability, and blame typically attach to agents—entities that could have acted otherwise, that are making choices. If an AI system causes harm, we face questions: is the AI responsible? The developers who programmed it? The company that deployed it? The humans who created the training data? The responsibility potentially fragments across many parties, making it unclear who should be held accountable.

This has practical implications for legal liability, for deterrence (if no one is clearly responsible, what prevents future harms?), and for justice (if someone is harmed, who should compensate them?). As AI systems become more autonomous, these questions become more pressing.

The Value Alignment Question: Whose Values?

Much of the discussion about AI safety focuses on "alignment"—making sure AI systems align with human values. But this raises a deeper question: whose values? Humans have diverse, often contradictory values. An AI system aligned with Silicon Valley tech industry values might be very misaligned with the values of agricultural workers, or religious conservatives, or environmental activists. Building AI systems that are value-aligned is impossible if we can't agree on what values to align with.

This suggests that building aligned AI systems might require political processes, not only technical solutions: involving diverse stakeholders in determining what values AI systems should pursue. This is more difficult, slower, and less technically elegant than having engineers build values into systems. But it might be more legitimate and more likely to produce outcomes that broad constituencies can accept.

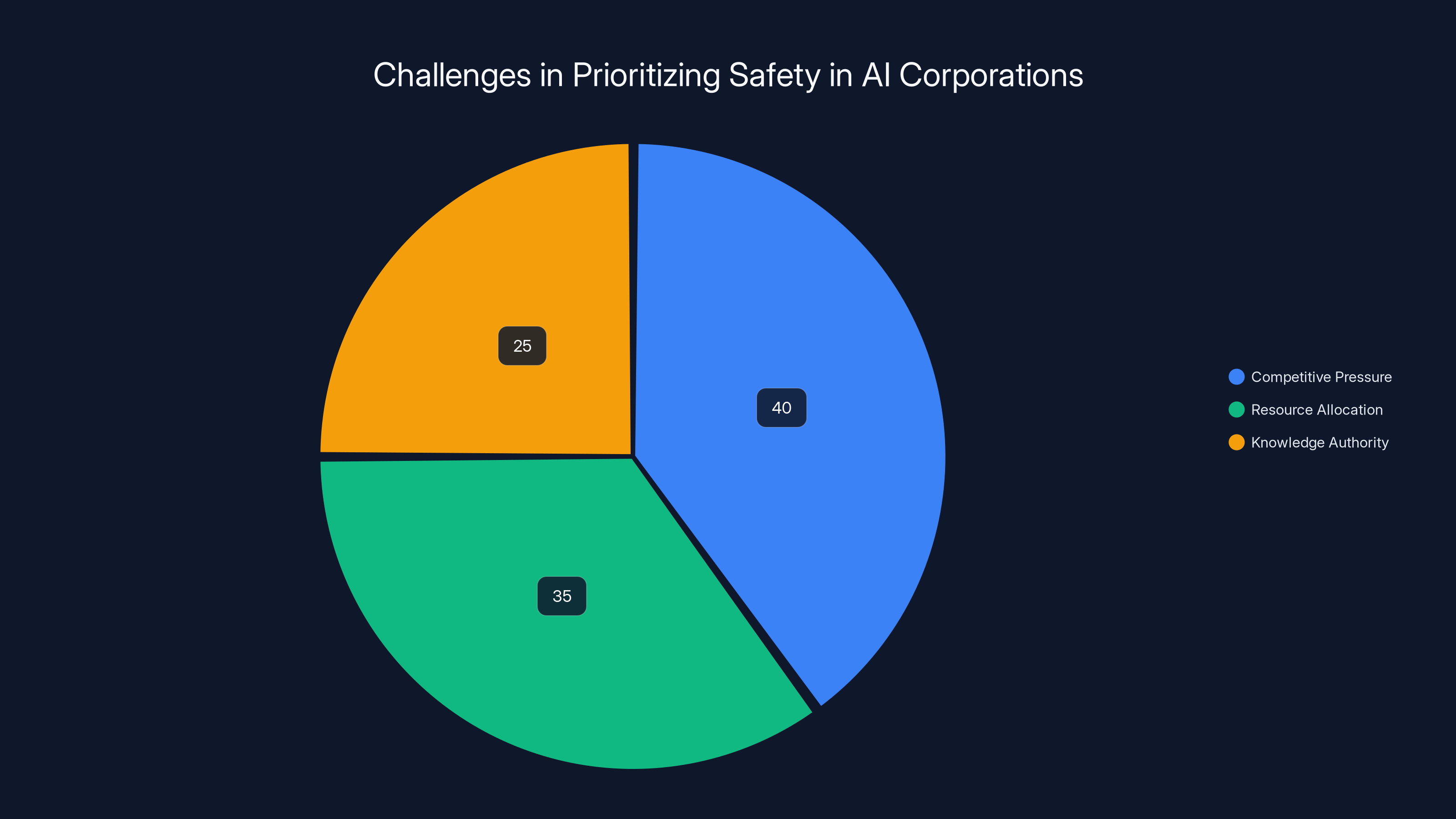

Competitive pressure (40%) is the most significant factor affecting safety prioritization in AI companies, followed by resource allocation (35%) and knowledge authority (25%). Estimated data.

Part 15: Alternative Models: How Could AI Development Be Different?

Cooperative Structures: Worker-Owned AI Companies

One alternative to conventional corporate structures for AI development would be cooperative or worker-owned companies. Rather than shareholders owning companies and benefiting from productivity gains, workers would own the company and benefits would be shared among them. In the context of AI, this could mean that workers developing AI systems would directly benefit from their creation.

Cooperatives face challenges: they can be harder to scale, they are less attractive to venture capital investors, and they require more complex governance structures. But they potentially align incentives differently: worker-owners might prioritize safety, long-term sustainability, and benefits to workers rather than short-term profits.

Public Stewardship Models: AI as Public Good

Another alternative would be models where major AI systems are developed and controlled publicly rather than by private corporations. This could involve government agencies developing AI, or alternatively, creating trust structures where AI systems are held in trust for public benefit rather than for shareholder profit.

Public stewardship models might prioritize safety and broad benefit distribution. However, they also face challenges: government agencies can be slow, risk-averse, and subject to political pressures that might not favor innovation. Creating governance structures for publicly controlled AI that are both effective and democratically accountable is a difficult problem.

Academic and Nonprofit Models: Slowing Development for Better Safety

Current AI development is dominated by well-funded corporations. Universities and nonprofit research institutions conduct AI research but with fewer resources than major corporations. Shifting more AI development to academic and nonprofit institutions might create different incentive structures: less pressure for rapid commercialization, more focus on publishing and sharing research, potentially more consideration of safety and societal impacts.

However, the resources required for cutting-edge AI research are substantial. Few academic institutions can match the computational resources of major tech companies. Shifting development to academic institutions might slow progress, which could be good for safety but might also slow development of beneficial applications.

Part 16: The Practical Challenges: Implementation and Enforcement

Making Regulations Effective Without Crushing Innovation

Assuming societies decide that regulation of AI is necessary, implementing effective regulation while preserving beneficial innovation is a challenge. Regulations that are too strict slow progress and might push development to less-responsible jurisdictions. Regulations that are too loose allow harms and don't address legitimate safety concerns.

One approach is proportionate regulation—different levels of oversight for different types of AI. Systems used in high-stakes domains (healthcare, criminal justice, hiring) might require external review. Systems used in lower-stakes domains might require minimal oversight. This avoids the problem of imposing excessive compliance burden for minor applications while still maintaining oversight where it matters most.

Another approach is dynamic regulation—establishing regulatory frameworks that can adapt as technology changes. AI is evolving rapidly, and regulations designed today might be obsolete in a few years. Building in mechanisms for regulatory adaptation might preserve relevance.
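Proportionate regulation amounts to a lookup from application domain to oversight tier. The sketch below is a hypothetical illustration that loosely echoes the risk-based structure of frameworks like the EU AI Act without reproducing any actual legal categories; the domain names and tier definitions are assumptions. Storing the tiers as data is one way to picture dynamic regulation: the tables can be revised as the technology changes without rewriting the rule that consumes them.

```python
from enum import Enum


class Oversight(Enum):
    EXTERNAL_REVIEW = "external review before deployment"
    DOCUMENTATION = "documentation and transparency duties"
    MINIMAL = "minimal oversight"


# Hypothetical tier tables, kept as data so they can be updated over time.
TIERS = {
    Oversight.EXTERNAL_REVIEW: {"healthcare", "criminal_justice", "hiring"},
    Oversight.DOCUMENTATION: {"customer_chatbot", "content_recommendation"},
}


def required_oversight(domain: str) -> Oversight:
    """Return the strictest tier whose table lists the domain; default to minimal."""
    for tier in (Oversight.EXTERNAL_REVIEW, Oversight.DOCUMENTATION):
        if domain in TIERS[tier]:
            return tier
    return Oversight.MINIMAL


for domain in ("hiring", "customer_chatbot", "spam_filter"):
    print(f"{domain}: {required_oversight(domain).value}")
```

The hard regulatory questions live in the tables, not the code: deciding which domains count as high-stakes, and how quickly a new application moves between tiers.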

The Compliance Problem: How Do You Verify Safety?

Even if regulations are established, enforcing them is challenging. How do you verify that an AI system is safe? Safety testing is expensive, time-consuming, and involves subjective judgments. Different stakeholders might have different views on whether a system is adequately safe. Regulators would need to develop expertise to evaluate systems, which requires hiring talented people, training them, and maintaining capabilities as technology changes.

This is easier in some domains than others. In pharmaceuticals, the FDA has developed extensive processes for evaluating safety. But evaluating AI safety involves novel challenges that don't have established evaluation methodologies. Regulators might need to develop these methodologies while simultaneously evaluating systems and issuing regulations.

Part 17: International Coordination: The Global Nature of AI

The Competition Problem: Why Countries Can't Easily Act Alone

AI development is increasingly global. China, the US, Europe, and numerous other countries are developing sophisticated AI capabilities. If one country imposes heavy restrictions on AI development while others don't, there are potential consequences: development migrates to less-restricted jurisdictions, the restricting country falls behind technologically, and global AI development isn't actually slowed (it's just happening elsewhere).

This creates a coordination problem. Individual countries might rationally decide that acting unilaterally is ineffective and therefore refrain from acting. But if every country reasons this way, no one acts, and the result is an arms race in which all countries develop AI rapidly and with inadequate safeguards, each trying to avoid falling behind its competitors.

Historically, humanity has addressed similar coordination problems through treaties and international institutions. Nuclear weapons, chemical weapons, and biological weapons are all subject to international agreements designed to limit their development or use. Similar frameworks might be necessary for AI.
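The coordination problem described here has the structure of a prisoner's dilemma. The sketch below reduces the world to two countries and two moves, a large simplification, and the payoff numbers are invented. It finds the stable outcomes (pure-strategy Nash equilibria) and then models an enforced treaty as a penalty that makes racing against a restrained partner less attractive.

```python
from typing import Dict, List, Tuple

MOVES = ("restrain", "race")

# Hypothetical payoffs to one country, keyed by (own move, other country's move).
# The game is symmetric, so the same table describes both players.
PAYOFFS: Dict[Tuple[str, str], int] = {
    ("restrain", "restrain"): 3,  # both proceed carefully
    ("restrain", "race"): 0,      # fall behind a rival
    ("race", "restrain"): 4,      # pull ahead
    ("race", "race"): 1,          # arms race: fast but risky for everyone
}


def best_response(other_move: str, payoffs: Dict[Tuple[str, str], int] = PAYOFFS) -> str:
    """The move that maximizes one's own payoff given the other country's move."""
    return max(MOVES, key=lambda move: payoffs[(move, other_move)])


def equilibria(payoffs: Dict[Tuple[str, str], int] = PAYOFFS) -> List[Tuple[str, str]]:
    """Pure-strategy Nash equilibria: each move is a best response to the other."""
    return [
        (a, b)
        for a in MOVES
        for b in MOVES
        if best_response(b, payoffs) == a and best_response(a, payoffs) == b
    ]


print(equilibria())  # → [('race', 'race')]

# Model an enforced treaty as a penalty on racing while the other side restrains.
with_treaty = dict(PAYOFFS)
with_treaty[("race", "restrain")] = 2
print(equilibria(with_treaty))  # → [('restrain', 'restrain'), ('race', 'race')]
```

Without a treaty, racing is the only stable outcome even though mutual restraint pays both sides more. With the penalty, mutual restraint becomes stable as well, but racing remains an equilibrium, which loosely mirrors the point that treaties help without guaranteeing compliance.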

What International Coordination Might Look Like

Possible structures for international AI governance might include: international standards for AI safety and testing that all countries agree to follow, international bodies that review and approve major AI deployments, treaties limiting development of particular classes of AI systems deemed especially dangerous, and mechanisms for monitoring compliance and penalizing countries that violate agreements.

Historical precedent suggests that international coordination is possible but difficult. The Nuclear Non-Proliferation Treaty limited the spread of nuclear weapons but didn't prevent every country that wanted them from acquiring them. Chemical and biological weapons conventions have been more effective but required extensive cooperation. Building equivalent international structures for AI would face similar challenges.

Part 18: The Personal Dimension: What Researchers Are Experiencing

The Psychological Weight of Working on Powerful Systems

One dimension that sometimes gets overlooked in discussions of AI development is the psychological experience of researchers working on these systems. Many AI researchers entered the field because they find the work intellectually stimulating and because they believe developing AI responsibly could benefit humanity. But working on systems whose long-term impacts remain genuinely uncertain, in environments where commercial pressures seem to outweigh safety considerations, creates psychological strain.

Researchers who become convinced that their company is developing systems irresponsibly, or that safety considerations aren't being taken seriously enough, face difficult choices. They can stay and try to push for changes from within. They can depart quietly and move to a different company. Or they can depart publicly and criticize their former employer. Each choice has different costs and benefits. Public departure damages relationships, might make future employment difficult, and can invite legal challenges. But staying in an organization one believes is acting irresponsibly creates cognitive dissonance and might implicate one in causing harm.

The departures we're seeing—researchers publicly criticizing their former employers—might reflect the psychological weight of working on powerful systems in environments where safety concerns feel inadequately addressed.

The Broader Professional Question: What Should Researchers Do?

This raises broader questions about professional responsibility for researchers. If a researcher believes their employer is developing AI irresponsibly, what do they owe to themselves, their employer, and the public? Should they stay and try to influence from within? Should they depart quietly? Should they become public critics? Professional codes of ethics in fields like medicine and engineering often include guidance about this—responsibilities to protect public safety can sometimes override duties to employers. But the field of AI hasn't developed clear guidance.

Developing such guidance would require conversations involving researchers, ethicists, and employers about what professional responsibilities mean in the context of developing powerful technologies.

Part 19: Synthesis: What The Trends Tell Us About AI's Future

The Core Tension: Speed vs. Safety

The researcher departures, the emergence of agentic AI, the regulatory debates, and the narrative wars all reflect a core tension: the push to develop AI quickly because of perceived competitive advantages is in conflict with the need to develop AI carefully because of its potential impacts. This tension isn't new—it appears in the development of nuclear technology, genetic engineering, and other powerful technologies. But it's particularly acute in AI because the technology is developing rapidly, competitive pressures are intense, and potential impacts could be very large.

How this tension resolves will shape the trajectory of AI development for decades. If speed wins out, we'll likely see increasingly powerful AI systems deployed with minimal testing, raising the chance that serious failures are discovered only after deployment. If caution wins out, we might see slower progress but potentially safer development. Most likely, we'll see an intermediate outcome: continued rapid development with increasing attention to safety, alongside ongoing conflicts between commercial and safety pressures.

The Governance Question: Who Gets to Decide?

All of these questions ultimately come down to a fundamental issue: who gets to decide how AI is developed? Currently, that decision is made primarily by companies and, to a lesser extent, by governments and the researchers themselves. Citizens, workers, and other stakeholders affected by AI have limited input. As AI becomes more powerful and consequential, questions about democratic governance and representation become more pressing. How can decisions about powerful technologies be made in ways that give legitimate stakeholders genuine input?

The Importance of Voice: Why Departures Matter

In this context, researcher departures matter not just for what they reveal about specific companies, but for what they represent about voice and dissent. In institutions with substantial power—whether corporations, governments, or universities—having mechanisms for legitimate dissent and oversight is crucial. When insiders can publicly criticize without facing severe retaliation, it creates accountability mechanisms. When departing researchers can speak freely, it surfaces information that might otherwise remain hidden.

This suggests that protecting researcher voice, preventing retaliation against whistleblowers, and maintaining mechanisms for dissent might be as important for safe AI development as technical safety work.

Part 20: Looking Forward: Questions For The Next Phase

What Will Successful AI Development Look Like?

As we move forward, several questions will need to be answered. What will successful AI development look like? How can we balance development speed with safety considerations? How do we ensure that AI benefits are broadly distributed rather than concentrated? How do we maintain human agency and control as systems become more autonomous? How do we govern development internationally? How do we create legitimate, representative decision-making processes for technologies with massive impacts?

These questions don't have easy answers. But addressing them carefully might determine whether AI ends up being developed in ways that benefit humanity broadly or in ways that concentrate benefits while imposing costs on vulnerable populations.

The Role of Continued Scrutiny

One thing that seems clear is that continued scrutiny, transparency, and active engagement from multiple stakeholders will be essential. If AI development happens in the shadows, with limited external visibility, the chances of serious problems increase. If development is subject to ongoing scrutiny from researchers, policymakers, civil society, and affected communities, the chances of identifying and addressing problems before they cause significant harm increase.

This requires researchers continuing to speak publicly about their concerns, journalists continuing to cover AI development critically, policymakers continuing to engage with the issues, and the public continuing to pay attention. It also requires researchers, policymakers, and corporate leaders engaging seriously with these concerns rather than dismissing them as unfounded or exaggerated.

The Human Element

Ultimately, the future of AI depends not just on technical factors but on human choices. Engineers choose what to build. Managers choose what companies prioritize. Researchers choose what work to pursue. Policymakers choose what to regulate. Citizens choose what to support. These human choices, aggregated across many people and organizations, determine the trajectory of technology.

The departures of researchers from major AI companies represent these human choices coming to the surface—individuals deciding that they can't in good conscience participate in development they believe is problematic. Whether future development follows safer paths, more equitable paths, or paths that concentrate power and risk depends on whether more people make similar choices, whether those voices are heard, and whether they influence direction. The outcome isn't predetermined. It depends on us.

Alternative Solutions and Comparisons

When considering how to approach the complex challenges of AI development, safety, and governance discussed throughout this article, several alternative approaches and platforms deserve consideration. While major AI companies like OpenAI, Google, and Anthropic dominate current discussions, alternative models and tools are emerging that might address some of these core tensions.

Collaborative AI Development Platforms

For teams seeking to develop and deploy AI systems with greater transparency and collaborative oversight, platforms offering more distributed governance models are worth exploring. These platforms allow teams to work with AI capabilities—from code generation to content creation—while maintaining clearer visibility into how systems are being used and what values they're optimizing for.

Some platforms focus specifically on developer productivity with AI assistance. Runable, for example, offers AI-powered automation tools aimed at developers and technical teams, including AI agents for content generation, document automation, and workflow orchestration. At a price point of $9/month, such tools position AI capabilities as productivity utilities rather than as black-box systems controlled by large corporations. This distributed approach to AI access, where many teams apply AI capabilities to their own purposes, represents one alternative to concentrating AI power in the hands of large corporations making centralized decisions about deployment.

Governance-First Development Models

Other alternative approaches emphasize governance and oversight from the beginning of development rather than as an afterthought. Open-source AI projects, cooperative development models, and nonprofit research institutes represent different structures that might create different incentive alignments around safety and responsible development.

The Role of Open Source and Transparency

Open-source AI development creates both opportunities and challenges. Projects that release AI systems with transparent code and training processes allow broader scrutiny, but they also raise safety concerns, because released models can be modified or misused by anyone who downloads them. Platforms like Hugging Face have created ecosystems where many developers contribute AI models, with community review and feedback. This distribution of development might create different incentive structures: more voices reviewing code and making decisions, and less concentration of power in single organizations.

FAQ

What are agentic AI systems?

Agentic AI systems are artificial intelligences designed to pursue goals autonomously, taking multiple steps and making decisions without direct human instruction for each action. Unlike a language model used as a conventional chatbot, which produces one response per prompt, agentic systems can independently schedule tasks, conduct research, analyze data, and perform complex multi-step operations toward specified objectives.
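The loop that makes a system "agentic" can be sketched in a few lines. This is a minimal, hypothetical illustration, not any vendor's actual architecture: `Agent`, `plan_next_step`, `tools`, and `scripted_planner` are invented names, and in a real system the planner would typically be a language model call rather than a fixed script. The key point is that a human sets the goal and the step budget once, and the system then chooses and executes each step on its own.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Agent:
    """Toy goal-directed agent (hypothetical): plan a step, act, record, repeat."""
    goal: str
    # Hypothetical planner: given the goal and history, return the next tool
    # name to call, or None when it judges the goal complete.
    plan_next_step: Callable[[str, list[str]], Optional[str]]
    # Hypothetical tools the agent may invoke without asking a human.
    tools: dict[str, Callable[[], str]]
    # Step budget: the only human-imposed limit once the loop starts.
    max_steps: int = 10
    history: list[str] = field(default_factory=list)

    def run(self) -> list[str]:
        for _ in range(self.max_steps):
            step = self.plan_next_step(self.goal, self.history)
            if step is None:  # planner decides the goal is done
                break
            observation = self.tools[step]()  # act without per-step approval
            self.history.append(f"{step}: {observation}")
        return self.history


def scripted_planner(goal: str, history: list[str]) -> Optional[str]:
    """Stand-in for a model-based planner: follow a fixed two-step plan."""
    plan = ["search", "summarize"]
    return plan[len(history)] if len(history) < len(plan) else None


agent = Agent(
    goal="write a market summary",
    plan_next_step=scripted_planner,
    tools={"search": lambda: "3 reports found", "summarize": lambda: "summary drafted"},
)
print(agent.run())  # ['search: 3 reports found', 'summarize: summary drafted']
```

Nothing in the loop asks a human to approve an individual step; oversight, if any, has to be designed in (for example, by requiring approval before certain tools run).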

Why are AI researchers leaving their companies?

Researchers are departing from major AI companies due to several concerns: conflicts between research integrity and commercial pressures, inadequate prioritization of safety relative to development speed, drift toward advocacy rather than genuine research, and discomfort with specific deployment decisions like monetization through advertising. The public nature of many resignations reflects both the severity of researchers' concerns and an attempt to create accountability through transparency.

What is the Rent-A-Human platform?

Rent-A-Human is a platform where autonomous AI agents can hire human workers to perform specific tasks. It represents a new economic relationship where artificial intelligences operate as employers, directly recruiting and compensating humans. This raises novel questions about labor standards, worker protections, and how economic relationships should function when one party is an artificial intelligence system.

How does the advertising business model affect AI safety?

Advertising-based business models create misaligned incentives: they reward maximizing user engagement rather than maximizing user welfare or truthfulness. An AI system optimized for advertising revenue has incentives to be more engaging but potentially less accurate, honest, or actually helpful. This creates structural conflicts between the profit motive and responsible AI deployment.
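The structural conflict can be made concrete with a toy selection problem. In this sketch the candidate responses and every score are invented for illustration, not measurements of any real system. The sketch assumes a system that picks whichever response maximizes its objective; swapping the objective from user welfare to an engagement proxy changes which answer it chooses, even though nothing else about the system changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Response:
    text: str
    engagement: float    # hypothetical proxy an ad-driven model would reward
    user_welfare: float  # hypothetical "true" objective, hard to measure


# Invented candidates and scores, purely illustrative.
candidates = [
    Response("accurate, concise answer", engagement=0.4, user_welfare=0.9),
    Response("sensational, partly misleading answer", engagement=0.9, user_welfare=0.3),
]


def pick(responses: list[Response], objective) -> Response:
    """Return the response that maximizes the given scoring function."""
    return max(responses, key=objective)


by_engagement = pick(candidates, lambda r: r.engagement)
by_welfare = pick(candidates, lambda r: r.user_welfare)
print(by_engagement.text)  # sensational, partly misleading answer
print(by_welfare.text)     # accurate, concise answer
```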

What is AI alignment and why is it difficult?

AI alignment refers to ensuring that artificial intelligence systems pursue objectives that actually align with human values and interests. It's difficult because human values are complex, contextual, and sometimes contradictory. Encoding values into mathematical objectives that AI systems can optimize toward requires understanding human values deeply and then translating them into precise specifications—both extraordinarily challenging tasks.

What regulatory approaches are being adopted for AI?

The European Union has taken a precautionary approach through the AI Act, a risk-based regulation under which high-risk applications must undergo a conformity assessment before they are placed on the market. The United States has emphasized innovation with light regulation, relying on existing legal frameworks applied to AI systems. Most countries are still developing their approaches, and international coordination remains limited.

How can AI development be made more democratically accountable?

Democratic accountability might be enhanced through several mechanisms: mandatory transparency and documentation of AI systems' capabilities and limitations, independent oversight boards with genuine authority to review deployments, involving diverse stakeholders in decisions about AI development priorities, regulatory requirements for impact assessment before deployment, and protecting dissenting voices within organizations developing AI systems.

What alternative models exist for AI development beyond corporate structures?

Alternative models include cooperative and worker-owned organizations where benefits are shared among employees rather than concentrating in shareholder hands; public stewardship models where governments develop and control major AI systems; academic and nonprofit research institutions with different incentive structures; and distributed platforms offering AI capabilities as tools for teams rather than controlling deployment centrally.

What should researchers do if they believe their employer is developing AI irresponsibly?

Researchers facing this situation have several options: staying within the organization and trying to influence decisions from inside; departing quietly to work elsewhere; becoming public critics of their former employer; or reporting concerns to regulatory bodies or internal ethics boards if available. Professional ethics frameworks for this situation are still being developed in the AI field.

How might international coordination on AI development work?

International coordination models might include treaties establishing shared standards for AI safety and testing, international bodies reviewing major deployments, agreements limiting development of particularly dangerous AI classes, and mechanisms for monitoring compliance and addressing violations. Historical precedent exists from nuclear weapons, chemical weapons, and biological weapons treaties, though such coordination is difficult to implement and enforce.

Conclusion: Navigating the Uncanny Valley

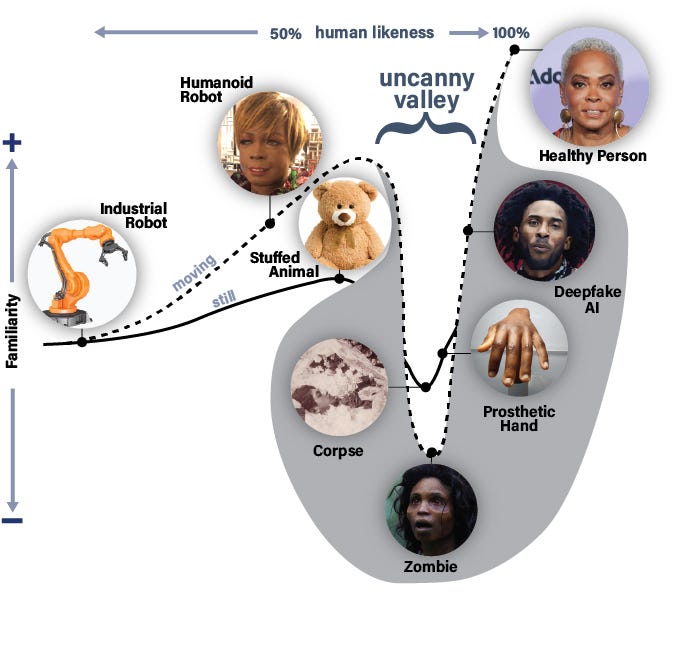

The term "uncanny valley" originally referred to the psychological discomfort people experience when confronting artificial entities (robots, animations) that are almost but not quite human. They're close enough to human appearance to trigger our pattern-matching systems, but different enough to be unsettling. The WIRED podcast's title captures something essential about the current moment in AI development: we're building systems that increasingly approximate human agency—making decisions, pursuing goals, interacting with humans—yet we remain profoundly uncertain about their values, reliability, and potential consequences.

The researcher departures discussed throughout this analysis aren't anomalies or isolated incidents. They represent a broader reckoning with how artificial intelligence is actually being developed relative to how many hoped it would be developed. Researchers entered the field believing they were participating in careful, considered development of transformative technology. Instead, many found themselves in environments where competitive pressures, commercial interests, and shareholder demands were driving decisions faster than their caution recommended.

The emergence of agentic AI systems and platforms like Rent-A-Human accelerates this uncanniness. We're creating artificial intelligences that hire humans, manage contractors, and operate as economic agents. We're blurring the lines between tools, agents, and actors. We're building systems that increasingly resemble human economic participants without the regulatory frameworks, legal responsibilities, or social norms that constrain human behavior.

What makes this moment significant is not that AI is becoming more powerful—technological progress is inherently difficult to stop. What matters is whether that power is developed responsibly, whether its benefits are broadly distributed, and whether mechanisms exist to identify and correct serious problems before they cause significant harm. The answers to these questions remain genuinely uncertain.

The public departures of researchers represent attempts to introduce accountability, to surface information that organizations might prefer to hide, and to shift incentives toward greater caution. Whether these individual acts of dissent can change institutional trajectories or whether the competitive dynamics driving rapid development will ultimately override safety concerns remains to be seen.