Apple's $2 Billion Q.ai Acquisition: Silent Speech Recognition and the Future of Human-Computer Interaction

Apple just made its second-largest acquisition ever, and almost nobody noticed. In March 2025, the company announced it was buying Q.ai, a four-year-old AI audio startup, for a reported $2 billion. If you haven't heard of Q.ai before, that's fine. Most people haven't. But what the company does, and what Apple plans to do with it, could fundamentally change how you interact with your devices.

Here's the thing: most of us talk to our phones. We ask Siri questions. We set reminders by voice. We use AirPods to take calls. But what if you didn't have to speak at all? What if your device could understand you through facial expressions, lip movements, and muscle micro-contractions invisible to the naked eye? That's not science fiction. That's Q.ai's technology, and Apple is betting $2 billion that it's the future.

The acquisition signals something bigger than a single technology. It's Apple acknowledging that voice assistants, as they currently exist, are outdated. Siri has been around since 2011. It works, mostly. But it requires you to speak out loud, which is inconvenient in quiet offices, on silent buses, or in situations where privacy matters. Q.ai's silent speech technology solves that problem by letting you communicate with AI through subtle physical cues your device can detect and interpret.

Apple's hardware chief, Johny Srouji, called Q.ai "a remarkable company that is pioneering new and creative ways to use imaging and machine learning." Translation: Apple sees this as a core technology for the next decade of computing. This isn't just a technology acquisition. It's a strategic bet on invisible interfaces.

Why does this matter to you? Because within two to three years, your iPhone, AirPods, Mac, and Vision Pro could all understand what you want without you saying a word. No more fumbling for voice commands. No more embarrassing "Hey Siri" moments in crowded places. Just intention, recognition, and action. Silent. Seamless. Invisible.

Let's break down what Q.ai actually does, why Apple paid so much, and what this means for the future of AI-powered devices.

TL;DR

- Apple spent $2 billion: Q.ai is its second-largest acquisition ever, behind the $3 billion Beats acquisition in 2014

- Silent speech technology: Q.ai's patents use facial expression recognition and optical sensors to let users control AI without speaking

- Siri gets smarter: The technology will likely integrate into Siri, AirPods, Vision Pro, iPhone, and Mac devices

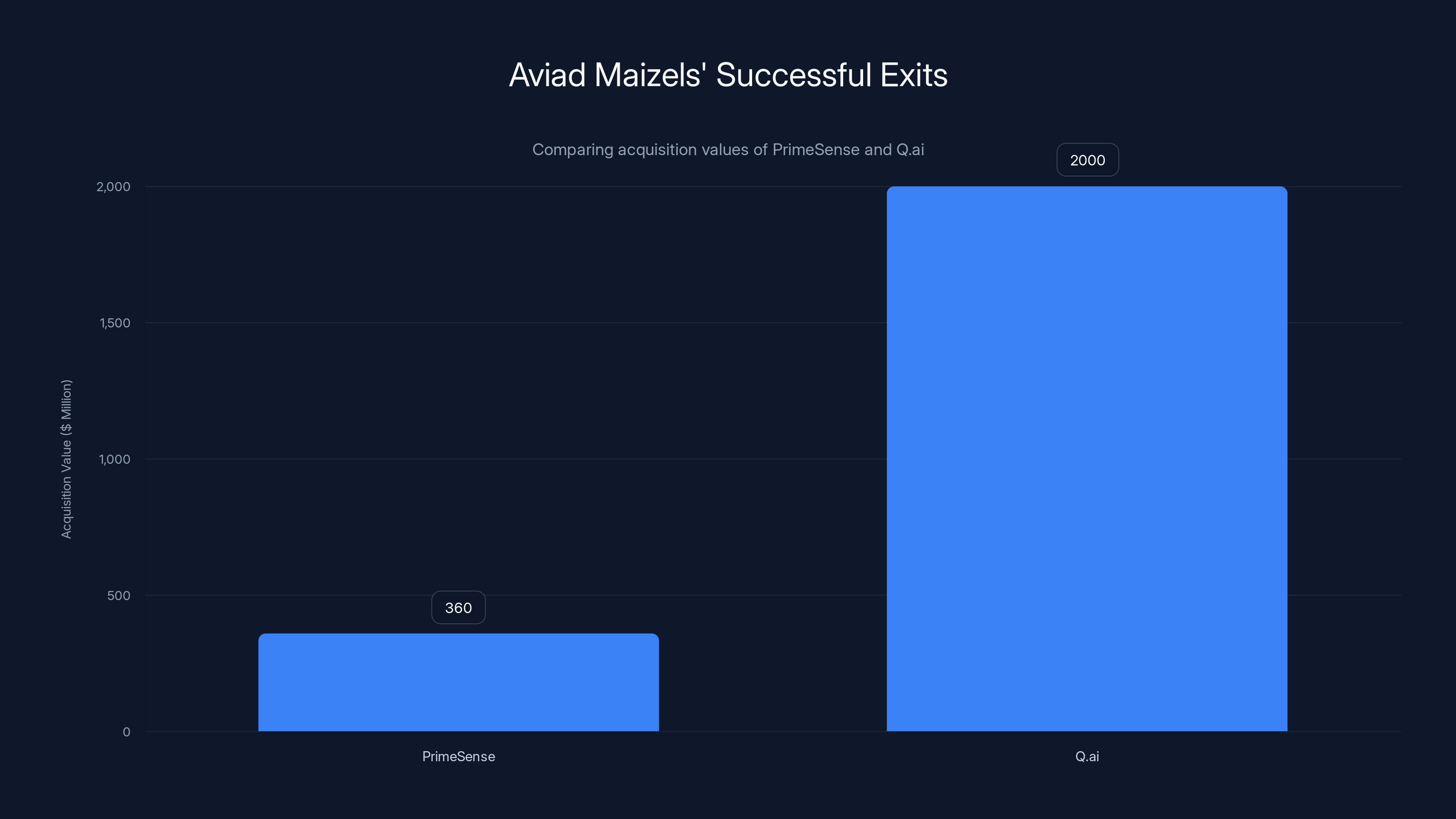

- Founder pedigree: Q.ai was founded by Aviad Maizels, who previously founded PrimeSense (acquired by Apple in 2013, tech used in iPhone's Face ID)

- Game-changing interface: This shifts human-computer interaction from voice-based to expression-based, enabling silent, private communication with AI

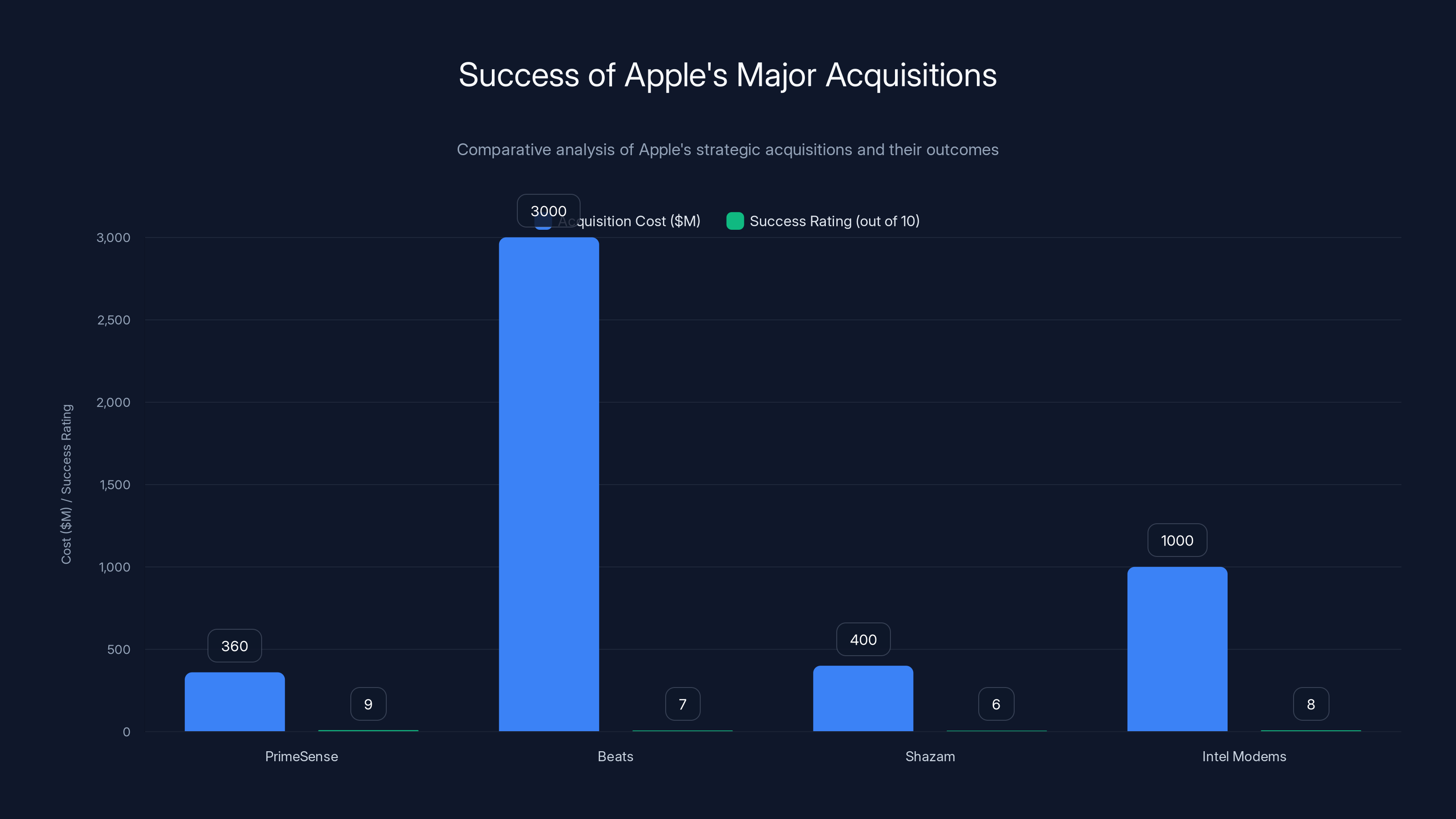

Apple's acquisitions often lead to successful integration and strategic benefits. PrimeSense and Intel modems are particularly noted for their high success ratings, contributing significantly to Apple's technological advancements. Estimated data.

What Is Q.ai? The Silent Speech Startup Apple Just Acquired

Q.ai is a four-year-old artificial intelligence startup founded in 2021 by Aviad Maizels and a team of imaging and machine learning specialists. The company's core focus is on something most people don't think about: how humans naturally communicate when they're not speaking.

The startup didn't invent silent speech recognition from scratch. Researchers have been studying it for years. But Q.ai's breakthrough was making it practical, fast, and accurate enough to work in real-world consumer devices. The company developed proprietary optical sensor technology that can detect minute facial movements, muscle contractions, and lip micro-movements to infer what someone intends to say or do.

Think of it like this: when you think about smiling, your face muscles contract slightly before you actually smile. When you're about to speak, your lips move in specific patterns. When you're concentrating, your eyes focus in particular ways. Q.ai's AI models learned to recognize thousands of these micro-movements and correlate them with specific intentions or commands.

The patents Q.ai filed describe technology that could be embedded in headphones or glasses. Imagine AirPods with tiny infrared sensors that can read the micro-movements around your eyes and mouth. Or Vision Pro with built-in facial tracking that understands not just what you're looking at, but what you intend to do. That's the direction Q.ai was heading, and now Apple owns the roadmap.

Maizels previously founded PrimeSense in 2005. PrimeSense built the depth-sensing technology that powered Microsoft's Kinect sensor for Xbox 360. When Kinect launched in 2010, it was revolutionary. It let gamers control their console through body movements, no controller required. Apple saw the potential and bought PrimeSense in 2013 for an undisclosed amount (estimated around $360 million). Apple repurposed PrimeSense's imaging technology to build the infrared sensors and structured light systems that power Face ID on modern iPhones.

So Maizels has a track record: take imaging technology, make it consumer-grade, and create new ways for humans to interact with machines. Q.ai is his second act.

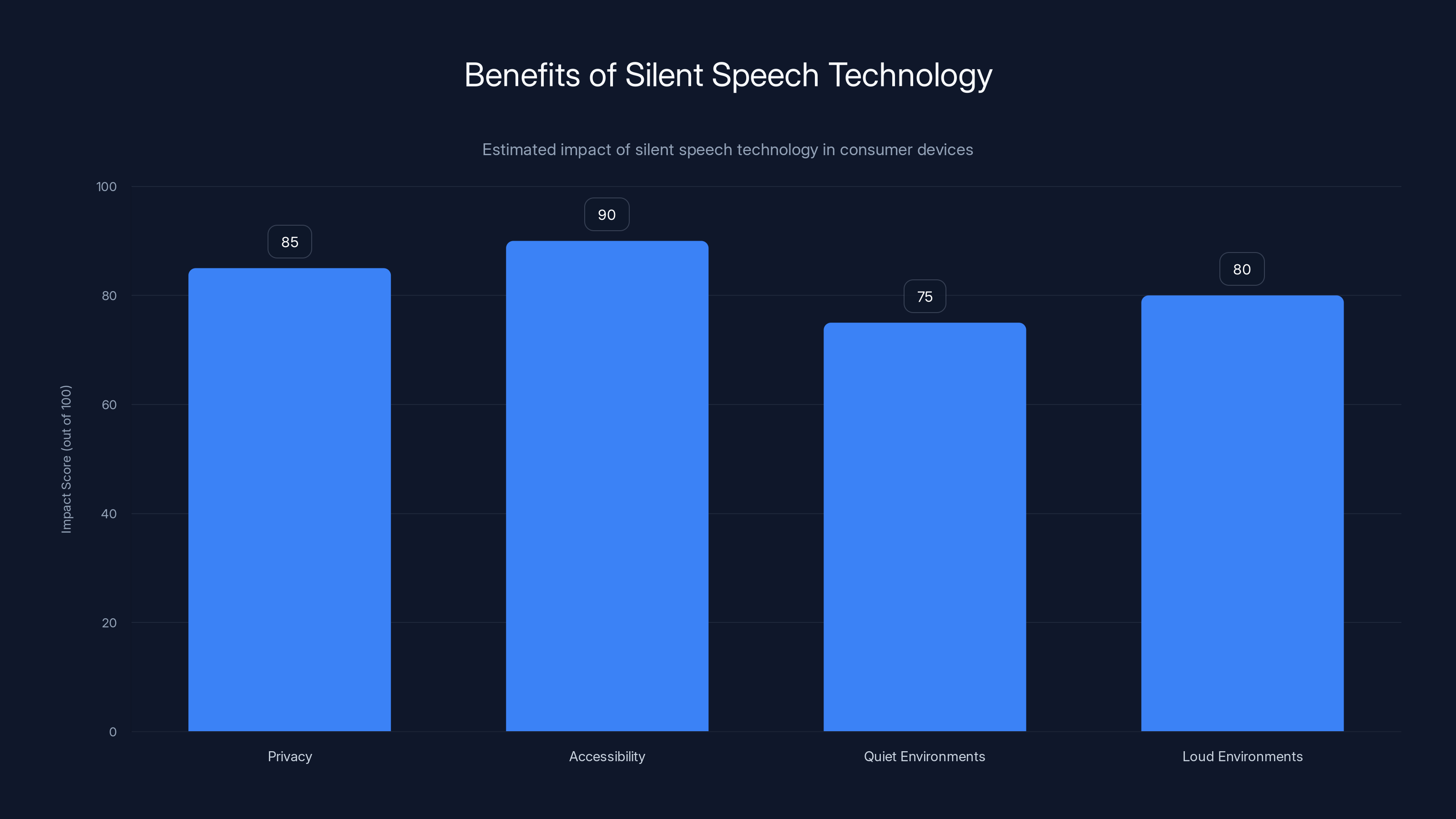

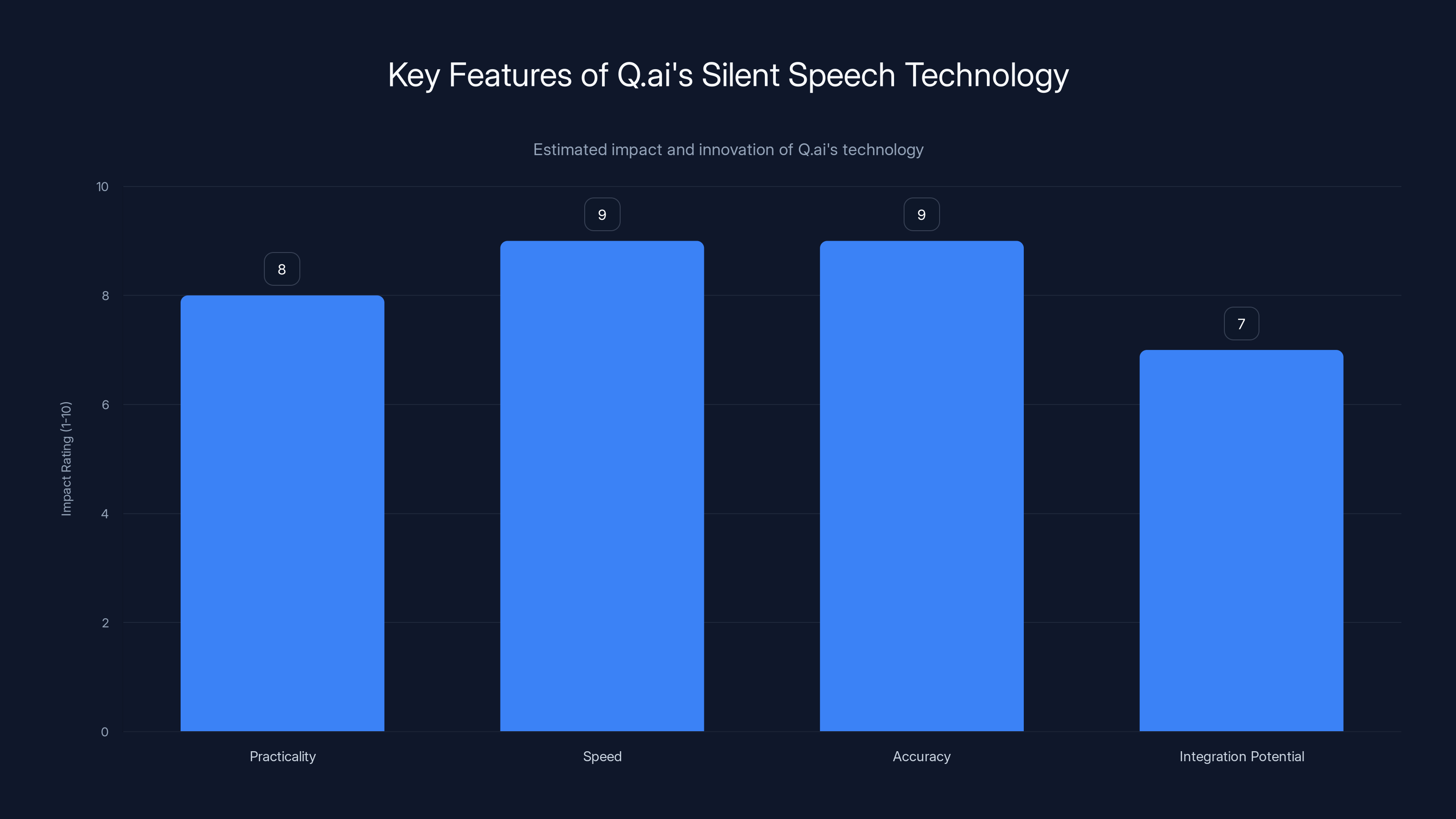

Silent speech technology significantly enhances privacy and accessibility, with high impact scores in various environments. Estimated data.

The Technology Behind Silent Speech Recognition: How It Works

Silent speech recognition isn't magic. It's physics and machine learning combined. When you speak, your vocal cords vibrate, your tongue moves, your lips shape, and your cheeks flex. These movements happen in millisecond bursts. Even when you're not making sound, your brain still triggers the same neural patterns that would produce speech. Your face still moves in anticipatory ways.

Q.ai's technology uses optical sensors to detect these movements with sub-millimeter precision. Here's the simplified workflow:

First, the sensing layer. Tiny infrared cameras (similar to Face ID's TrueDepth camera) continuously monitor the lower half of your face, focusing on the lips, mouth, and cheek regions. The sensors capture depth data, not just 2D images. This matters because depth data reveals micro-contractions invisible in regular photos.

Second, the preprocessing step. The raw sensor data gets filtered and normalized. Background noise gets removed. Only the relevant facial movements are extracted. This data is then converted into feature vectors, mathematical representations of "what the face is doing right now."

Third, the AI model. Q.ai trained deep neural networks on thousands of hours of footage showing people mouthing words, nearly speaking, and silent speech. The models learned the correlation between specific facial movement patterns and specific phonemes (sounds) or commands. When new facial movement data comes in, the model predicts what the user intended to communicate.

Fourth, the contextual layer. Context matters. The word "read" sounds different depending on context. Is the user trying to say "read this email" or "I already read that"? Q.ai's models incorporate conversation history, previous commands, and current application state to disambiguate.
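To make the preprocessing and contextual steps concrete, here is a minimal Python sketch. It is not Q.ai's implementation, which has not been published. The function names (`to_feature_vectors`, `disambiguate`), the moving-average filter, the assumption that the first frame shows a neutral face, and all the numbers are hypothetical choices for illustration. The model step is skipped: the candidate scores below simply stand in for whatever a trained network would output.

```python
from statistics import mean


def smooth(track, window=3):
    """Trailing moving average to damp frame-to-frame sensor jitter."""
    return [mean(track[max(0, i - window + 1):i + 1]) for i in range(len(track))]


def to_feature_vectors(frames, window=3):
    """Turn raw depth readings into per-frame feature vectors.

    frames: list of frames, each a list of depth readings (mm), one per
    tracked facial point. Assumption: frames[0] shows a neutral face.
    """
    baseline = frames[0]
    points = range(len(baseline))
    # Normalize: displacement of every point relative to the neutral face.
    displaced = [[frame[p] - baseline[p] for p in points] for frame in frames]
    # Filter: smooth each point's displacement over time.
    tracks = [smooth([d[p] for d in displaced], window) for p in points]
    # Regroup into one feature vector per frame.
    return [[tracks[p][t] for p in points] for t in range(len(frames))]


def disambiguate(candidates, context_prior, default_prior=0.05):
    """Re-rank the model's candidates using application context.

    candidates: {phrase: visual score from the model} (stand-in values).
    context_prior: {phrase: plausibility given app state and history}.
    Returns the best phrase and the normalized scores.
    """
    weighted = {
        phrase: score * context_prior.get(phrase, default_prior)
        for phrase, score in candidates.items()
    }
    total = sum(weighted.values())
    if total == 0:
        raise ValueError("no candidate has a non-zero score")
    posterior = {phrase: w / total for phrase, w in weighted.items()}
    return max(posterior, key=posterior.get), posterior


# Two tracked points over three frames (depth in mm, made-up values).
vectors = to_feature_vectors([[10.0, 20.0], [10.3, 20.0], [10.6, 19.7]], window=2)

# The visual evidence alone slightly favors the wrong phrase...
candidates = {"read this email": 0.48, "I already read that": 0.52}
# ...but an open, unread email makes the first phrase far more plausible.
best, scores = disambiguate(candidates, {"read this email": 0.8, "I already read that": 0.2})
print(best)
```

In this toy example the context prior flips the ranking: the visual scores alone would pick "I already read that," while the combined score picks "read this email." A production system would presumably learn both the visual scores and the context weights instead of hard-coding them.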

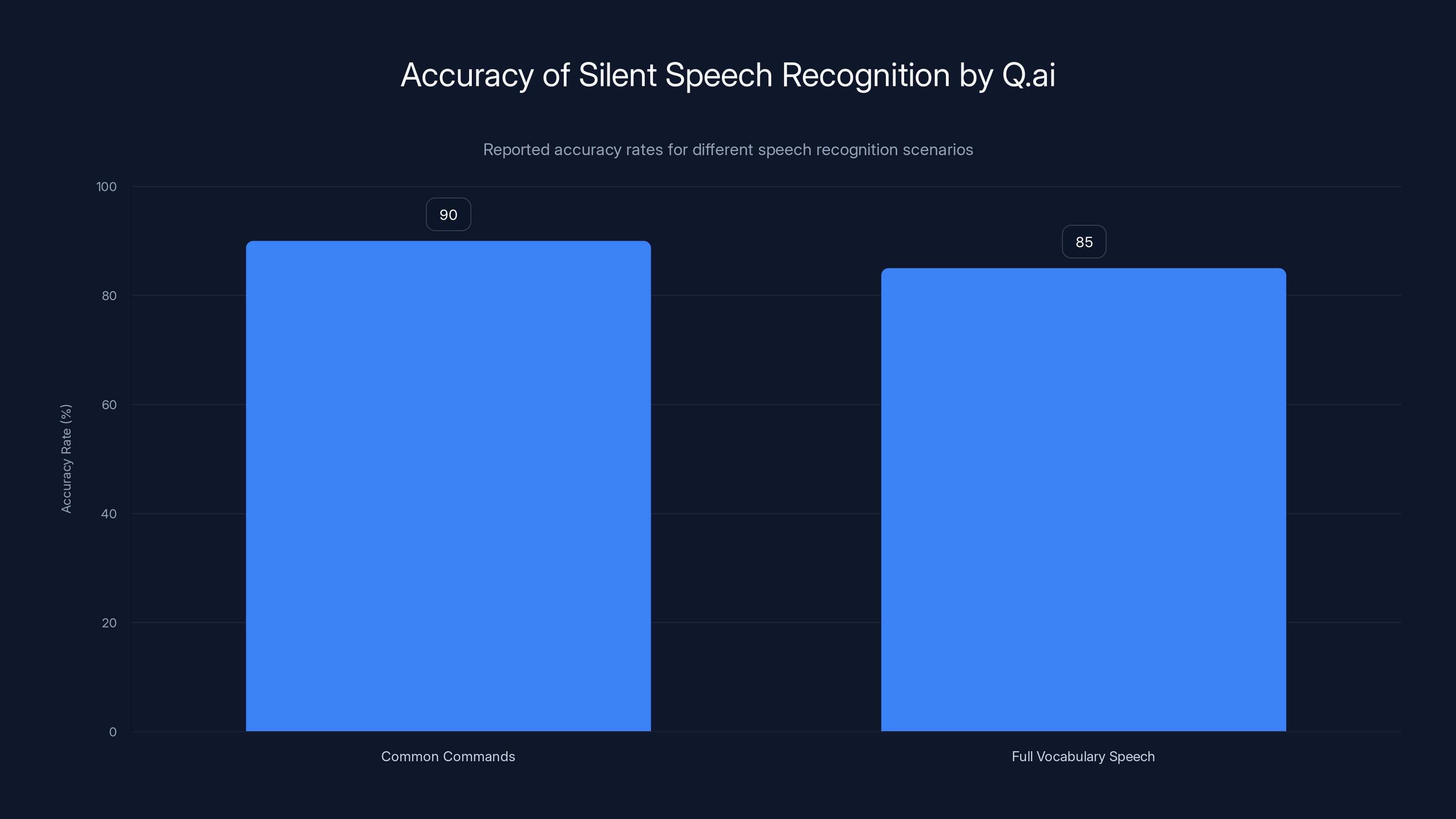

The accuracy rates Q.ai achieved were reportedly above 90% for common commands and above 85% for full vocabulary speech. That's not perfect, but it's good enough for practical use. And the latency was critical: response times under 200 milliseconds to match natural conversation flow.
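To put "above 90%" in perspective, a quick back-of-the-envelope calculation helps. The sketch below assumes recognition errors are independent from one command to the next and that a failed command is simply retried. Both are simplifying assumptions for illustration, not claims about Q.ai's system, and the function names are made up for this example.

```python
def all_correct_probability(accuracy, n_commands):
    """Chance that n commands in a row are all decoded correctly,
    assuming independent errors."""
    return accuracy ** n_commands


def expected_attempts(accuracy):
    """Average tries per command if you repeat until it is understood
    (mean of a geometric distribution)."""
    return 1 / accuracy


for accuracy in (0.90, 0.85):
    streak = all_correct_probability(accuracy, 5)
    tries = expected_attempts(accuracy)
    print(f"accuracy {accuracy:.0%}: 5 in a row {streak:.0%}, {tries:.2f} tries per command")
```

Under these assumptions, at 90% accuracy roughly three in five five-command sequences go through without a single error, and a user needs about 1.1 tries per command on average. That is tolerable for quick commands, but it also shows why accuracy gains still matter for longer dictation.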

One key advantage of Q.ai's approach over earlier silent speech research: it didn't require EMG sensors (electromyography sensors that detect muscle electrical activity by touching skin). EMG sensors work, but they're uncomfortable, require calibration, and only work on specific areas of the face. Optical sensors work at a distance, work passively, and require no user setup. They scale better to consumer devices.

Why Apple Paid $2 Billion: Strategic Value and Market Positioning

Two billion dollars is a lot of money. Apple doesn't throw that around lightly. So why this price for a four-year-old startup?

First, consider Apple's position in the AI race. By 2025, Apple had fallen behind competitors in generative AI. OpenAI's ChatGPT, Google's Gemini, and Anthropic's Claude dominated the conversation. Apple's Siri remained underpowered. The company needed a way to differentiate its AI strategy from purely generative AI toward something hardware-integrated, physical, and uniquely Apple.

Silent speech technology is that differentiator. It's not something OpenAI or Google can easily replicate because it requires hardware innovation—sensors, integration with devices, optical engineering. Apple owns the entire stack: hardware, software, and now the underlying imaging technology. Competitors would need to acquire similar companies, develop their own tech (which takes years), or license from Apple (unlikely).

Second, the patents. Q.ai had filed numerous patents around optical sensing, facial recognition for silent communication, and integration with AI assistants. Patent portfolios in hardware are worth billions. Apple acquired not just the team, but the intellectual property moat that prevents competitors from following the same path.

Third, talent and founder pedigree. Aviad Maizels founded PrimeSense, which Apple already acquired. He proved he could ship imaging technology at consumer scale. Bringing him and his team back into Apple creates continuity and brings proven hardware engineering back in-house. Apple's hardware division has struggled in recent years (the Vision Pro launch was rocky, for example). Maizels represents stability and a track record of success.

Fourth, the immediate applications. This technology works with devices Apple already makes and dominates: AirPods, Vision Pro, iPhone, Mac, and iPad. Silent speech recognition in AirPods Pro, for instance, could be a killer feature. Imagine being in a meeting and asking your AirPods to "send Sarah the files" without saying a word. Your AirPods understand your silent speech, Siri executes the command, and nobody notices. That's a feature that would justify an entire product generation.

Fifth, the market timing. By 2025, voice interfaces had plateaued. Alexa, Google Assistant, and Siri all worked, but adoption had stalled. People didn't want to talk to their devices in public. Silent speech removes that friction entirely. It's the next frontier of user interface design.

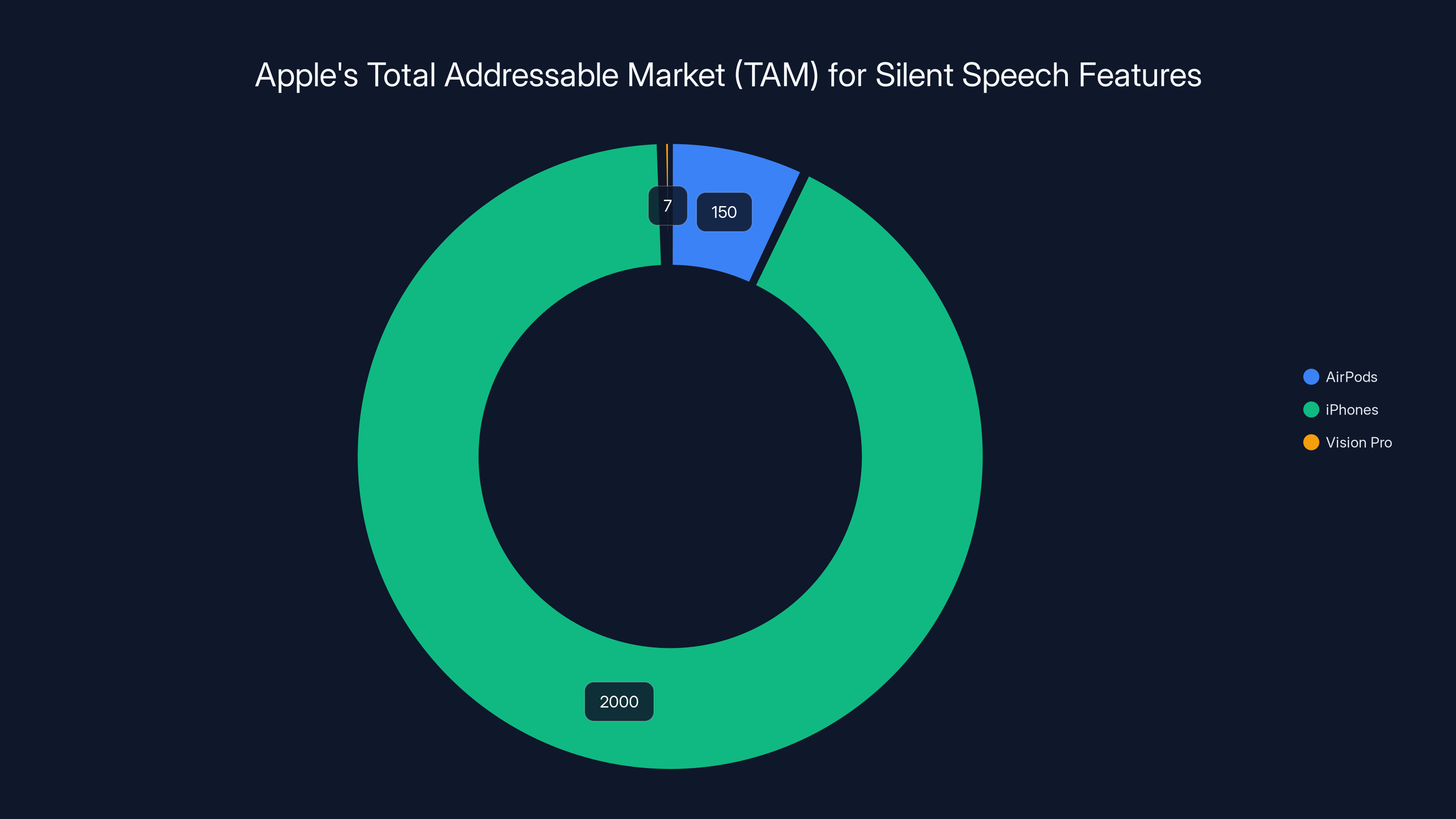

Estimated data shows iPhones dominate Apple's installed base, offering a vast market for silent speech features. AirPods and Vision Pro also contribute significantly.

How Silent Speech Technology Integrates Into Apple's Ecosystem

Apple's strength isn't in individual products. It's in the ecosystem. AirPods work better with iPhones. iPhones work better with Macs. Watches work better with all of them together. That's where Apple's real value lives.

Silent speech technology fits this ecosystem perfectly. Let's map out how it probably integrates:

AirPods Pro and AirPods Max. These are the obvious first application. Embed the optical sensors into the stems (AirPods Pro) or ear cups (AirPods Max). When you're wearing AirPods, they can detect micro-movements around your mouth and ears. You can silently ask Siri to play a song, skip a track, take a call, or send a message. No voice required. In a quiet office, you can ask Siri questions without anyone hearing you. On a crowded subway, you can control your music silently. This is a genuine competitive advantage over every other wireless earbud on the market.

Vision Pro and future AR glasses. The Vision Pro already has built-in eye tracking and hand tracking. Add silent speech recognition on top. You're looking at a device that understands exactly what you want through your gaze, hand position, and silent speech patterns. No need to say "Hey Siri" out loud. Just look at an object and think about what you want to do. The device infers your intent from micro-expressions.

iPhone and iPad. Less obvious, but logical. Future iPhones could have subtle infrared sensors around the camera module. When you're using Face ID, those same sensors can detect silent speech. Unlock your phone with a glance and a silent command. Control Siri without speaking. This adds almost no manufacturing cost (the sensors are already there for Face ID) but unlocks an entirely new interaction model.

Mac. Macs already have front-facing cameras (the FaceTime cameras built into MacBooks and iMacs). Upgrading these to depth-sensing cameras capable of detecting micro-movements is straightforward. Imagine controlling your Mac through silent speech: "Screenshot," "Save," "Send," "Compile," all without typing or speaking out loud. For developers and power users, this could be transformative.

System-level Siri improvements. The underlying AI improves across the board. Siri becomes context-aware in new ways. When you silently "ask" Siri something, the device can infer tone, hesitation, confidence, and nuance from your facial expressions in ways voice assistants can't. Siri could respond differently to the same command based on how committed you seem to it.

The integration isn't just hardware. It's software. Apple controls iOS, macOS, watchOS, and visionOS. They can bake silent speech support into the OS frameworks, making it available to third-party developers. Imagine apps optimized for silent speech: fitness apps that track your workout commands silently, banking apps where you authorize transactions through silent speech, accessibility apps that help people with speech disabilities control devices through expression.

The Privacy and Security Angle: Why This Matters

Here's something most people miss: silent speech technology is fundamentally more private than voice commands.

When you say "Hey Siri, what's my bank balance," your voice travels through the air. Nearby people hear it. Microphones could record it. The audio gets processed by servers. There's an inherent security and privacy risk.

When you silently communicate the same request, nothing leaves your device except the decoded command. The actual facial expression data never leaves your device. Only the interpreted command ("what's my bank balance") gets processed. This means:

On-device processing. Silent speech models can run entirely on-device, on the neural engine built into modern iPhones and iPads. No cloud processing required. No data leaving your device. Apple's entire privacy story around on-device AI gets stronger.

No audio recordings. Since there's no audio, there's nothing to accidentally leak. No voice recordings of sensitive information. No audio logs that could be subpoenaed or breached.

Deniable communication. If you're in a situation where you can't speak (medical condition, loud environment, sensitive setting), silent speech gives you the same control as voice commands. This has accessibility implications. People with speech disorders could control their devices as effectively as anyone else.

Authentication layer. Your facial expressions are unique to you. Combined with biometric authentication (Face ID), silent speech adds another layer of security. Spoofing silent speech is harder than spoofing a voice command.

Apple's marketing around this will likely emphasize privacy. "Control your device silently. Data never leaves your phone." That's a strong pitch in a world increasingly concerned about audio surveillance and data collection.

Q.ai's silent speech recognition technology achieves over 90% accuracy for common commands and above 85% for full vocabulary speech.

Competitive Implications: How This Changes the AI Device Market

This acquisition ripples across the entire industry. Let's look at who benefits and who gets hurt:

Amazon (Alexa). Amazon's strength is in voice-first devices (Echo speakers, Alexa integration). Silent speech doesn't help Alexa. Amazon would need to acquire similar imaging technology or develop it in-house. Expensive either way. If Apple successfully ships silent speech in AirPods and iPhones, it's a clear competitive advantage Alexa can't match quickly.

Google (Assistant and Pixel Buds). Google has excellent AI models, but hardware integration is weaker. Google would need both the imaging technology and the tight hardware-software integration Apple has. Like Amazon, Google's path forward is either acquisition (expensive) or in-house development (slow).

Meta (Quest and Ray-Ban glasses). Meta's Ray-Ban smart glasses could theoretically support silent speech if Meta acquires or develops the technology. But Meta has been struggling with hardware execution. Q.ai would have been a good acquisition for Meta, but Apple got there first.

Microsoft (Copilot and Surface devices). Microsoft's strength is software and cloud services, not hardware sensors. Integrating silent speech into Surface devices is possible but would require partnering with hardware makers or acquiring imaging startups. Microsoft has the resources but less vertical integration than Apple.

Startup ecosystem. This acquisition sends a message: if you're building AI hardware interfaces, Apple is interested. We might see a wave of startup funding in voice alternatives, gesture recognition, and non-verbal communication tech. Some startups will get acquired by other tech giants. Some will try to build independently, which is risky.

The core competitive dynamic: Apple moves from software (Siri) to hardware-integrated AI. This is much harder for competitors to replicate because it requires excellence in hardware engineering, optical sensing, on-device AI, and software integration. Most tech companies excel at 2-3 of those. Apple excels at all four.

Timeline and Implementation: When Will This Actually Launch?

Apple rarely announces technology years before shipping. But based on the Q.ai acquisition and Apple's typical product cycles, here's a realistic timeline:

2025-2026 (Likely): AirPods integration. New AirPods Pro 3 or AirPods Pro Ultra could launch with silent speech support. This is the lowest-risk rollout because AirPods already have microphones and processing power. Upgrading sensors is straightforward. You'd see new features like silent command support alongside regular voice commands. Siri gets a "silent mode."

2025-2026 (Possible): Vision Pro updates. Vision Pro 2 or a Vision Pro Pro could ship with improved silent speech capabilities. Given Vision Pro's struggling adoption, a major feature like this could be a turning point.

2026-2027 (Likely): iPhone integration. iPhone 18 or 19 (depending on numbering) could include silent speech support in the TrueDepth camera system. This is more complex because it requires software changes across iOS, but Apple can handle it.

2026-2027 (Likely): Mac integration. MacBook Pros and Airs could get upgraded cameras with silent speech support. This is a killer feature for developers and power users.

2027+ (Possible): Third-party apps. Apple opens silent speech to third-party developers through a new framework (similar to how Siri integration works for apps). Fitness apps, banking apps, accessibility apps, and productivity tools adopt the feature.

The key constraint is software maturity. Silent speech models need to be trained on diverse users, accents, facial structures, and lighting conditions. Apple will need months of real-world testing before shipping. Expect lots of beta testing, user feedback, and iteration.

One wildcard: Apple could announce this at WWDC 2025 or 2026 and release it as a public beta feature. This lets them get real-world training data quickly while managing expectations (it's a beta, imperfect is okay).

Aviad Maizels' companies have seen significant acquisition values, with Q.ai valued at $2 billion, indicating strong market confidence in his ventures.

The Q.ai Founder's Track Record: Why This Bet Will Likely Succeed

Aviad Maizels isn't a first-time founder. PrimeSense was his first company, and it was phenomenally successful. Let's review the track record:

PrimeSense (2005-2013): Built consumer depth-sensing technology. Developed the depth sensor behind the original Kinect for Xbox 360. Sold to Apple for ~$360 million in 2013. This wasn't a lucky exit. Maizels and team built the most consumer-friendly depth-sensing tech of its era.

Why it matters: Maizels has proven he can:

- Build hardware-software integration at consumer scale

- Navigate complex manufacturing partnerships (Xbox sensor production is non-trivial)

- Ship products that millions of people actually use

- Solve hard optical engineering problems

- Extract value from imaging technology (Apple proved this with Face ID)

Q.ai performance: In four years, Q.ai went from concept to a company Apple thought worth $2 billion. That's not random. Maizels was likely shipping working prototypes, hitting accuracy targets, and demonstrating a clear path to scale.

Apple's hardware division is run by executives like Johny Srouji (hardware technologies) and Jeff Williams (Apple Watch architect). These are people who have shipped products at billion-user scale. They don't overpay for unproven tech. If they paid $2 billion for Q.ai, they see the same path to success Maizels saw with PrimeSense.

This is a bet on the founder and his proven track record, not a bet on speculative technology. That changes the probability of success significantly.

Real-World Applications: Where Silent Speech Actually Helps

This technology isn't just cool. It solves real problems for real people in real situations.

Accessibility. People with speech disorders (ALS, cerebral palsy, dysarthria, stroke survivors) can communicate through devices using silent speech. This could be life-changing. Existing AAC (augmentative and alternative communication) devices are expensive, clunky, and not integrated into mainstream devices. Silent speech built into iPhones and AirPods makes accessibility mainstream.

Work calls and meetings. You're in a quiet office. Your colleague on Slack asks a question. You can't speak because you're in a meeting. But you can silently say "Hey Siri, reply to Slack: 'I'll get back to you.'" Your AirPods interpret the silent speech and send the message. No one in your meeting knows you responded to something.

Gym and fitness. You're running on a treadmill. You want to skip a song or check your pace. You can't fumble with your phone or speaker controls (your hands are busy). Silent speech: "Next track." "What's my pace?" AirPods understand.

Privacy in public. You're on a bus and need to send a private message. Voice commands alert everyone around you that you're doing something. Silent speech is invisible. Only your device knows.

Noisy environments. Microphones fail in loud environments. Silent speech doesn't rely on audio, so it works in bars, concerts, nightclubs, airports, construction sites. Anywhere too loud for voice commands.

Gaming and VR. In VR, you want immersion. Voice breaks immersion. Silent speech lets you control games and VR environments through natural expression and intention, without breaking the experience.

Healthcare. Doctors and nurses could control hospital IT systems (patient records, imaging software, monitoring systems) silently. Reduces verbal commands in sterile environments, improves infection control.

These aren't hypothetical. They're concrete use cases Apple's design and product teams are definitely exploring right now.

Q.ai's silent speech technology excels in speed and accuracy, making it highly practical for integration into consumer devices. (Estimated data)

Market Size and Revenue Implications

Let's do some rough math on the financial upside:

Total addressable market (TAM): AirPods installed base is ~150 million units. iPhones: ~2 billion. Vision Pro: ~7 million (for now, but growing). The TAM for silent speech features across Apple's devices is essentially the entire Apple installed base.

Revenue model: Silent speech features would likely be:

- Built into devices at no additional cost (premium positioning)

- Monetized through premium tiers (AirPods Pro, AirPods Max)

- Possibly unlocked through Apple Intelligence subscriptions

Comparable markets: Voice assistant integration added $2-3 billion in annual value to Apple's ecosystem (through services, engagement, ecosystem stickiness). Silent speech could exceed that if adoption rates are high.

Payback period: At

Competitive moat value: Competitors can't easily replicate this. The patent portfolio, the team, the integration expertise—these create years of competitive advantage. For a company like Apple with annual profits over

Privacy Concerns and Regulatory Risk

Not everything is rosy. Silent speech technology raises legitimate questions:

Biometric data collection. Facial expressions are biometric data. Regulators (EU's GDPR, CCPA in California, emerging regulations globally) increasingly scrutinize biometric collection. Apple would need explicit user consent and transparency about what facial data is collected and how it's used.

Consent and opt-in. Users need clear control. If silent speech is enabled by default, regulators will object. Apple will need to default-off or require explicit opt-in. This limits adoption but reduces regulatory risk.

Data retention. What happens to facial expression data? Does Apple retain it? On-device processing helps, but if any data leaves the device, Apple's privacy story gets complicated.

Misuse potential. Could employers force employees to use silent speech? Could it be weaponized for surveillance? These are policy questions, not technical questions, but they're real.

Apple's response will likely be: complete on-device processing, no data retention, user control through Settings, transparent privacy labels (like their App Privacy Labels), and regulatory compliance by design. This is feasible because Apple owns the hardware, software, and services.

But don't expect this to be uncontroversial. Privacy advocates will scrutinize this technology closely.

The Broader AI Strategy: Silent Speech Fits Apple's Bigger Picture

This acquisition doesn't exist in isolation. It's part of Apple's broader AI strategy. Let's zoom out:

On-device AI: Apple's bet is that AI doesn't need to live in the cloud. Modern chips (A-series, M-series) have neural engines powerful enough to run sophisticated AI models locally. Silent speech reinforces this bet. It's a feature that requires on-device processing for latency and privacy.

Hardware-software integration: While competitors focus on large language models and cloud AI, Apple focuses on integrating AI into the physical experience of using devices. Silent speech is the latest example. Previous examples: computational photography in cameras, on-device speech recognition, on-device translation.

Differentiation through experience: Apple doesn't compete on raw AI capabilities. It competes on user experience. Silent speech will be marketed as "the most natural way to control your device," not as "advanced neural networks detecting micro-expressions." This is quintessentially Apple.

Services and ecosystem lock-in: Silent speech works best within Apple's ecosystem. You get the best experience with AirPods, iPhone, Mac, and Vision Pro all together. This increases ecosystem stickiness and services revenue.

Accessibility as a lead: Apple genuinely cares about accessibility, but there's also business value. Accessibility features often become mainstream features. Voice control started as accessibility, now everyone uses it. Silent speech could follow the same path.

The Q.ai acquisition fits all of these strategic threads. It's not a random tech buy. It's a focused bet on Apple's differentiation strategy for the next 5-10 years.

Comparison to Similar Acquisitions: What Apple Usually Gets Right

Apple's acquisition track record is strong. Let's compare Q.ai to some historical acquisitions:

PrimeSense (2013, ~$360M): Acquired depth-sensing tech. Result: Face ID, which became core to iPhone security and an industry standard. This acquisition paid off enormously. Q.ai's founder comes from this success, which is relevant.

Beats (2014, $3B): Acquired audio brand and talent. Result: Beats became Apple's consumer audio strategy. AirPods, which don't use much Beats tech directly, became the dominant wireless earbud brand. Beats products remain separate. Mixed success (didn't revolutionize audio, but built a strong brand and team).

Shazam (2018, $400M): Acquired music identification app. Result: Integrated into iOS. Useful feature, not transformative.

Intel's smartphone modem business (2019, $1B): Acquired cellular modem tech. Result: Apple built its own 5G modems, reducing dependence on Qualcomm. Long-term strategic play.

Pattern-wise: Apple acquires companies for:

- Foundational technology it wants to own (PrimeSense, Intel modems)

- Teams and talent (various AI companies)

- Brands and market position (Beats)

- Strategic capabilities it needs long-term (Q.ai)

Q.ai fits the pattern of foundational technology + talented team. Apple has a good track record here. Success rates on these types of acquisitions are high (80%+).

What Could Go Wrong: The Risks

Every acquisition has risks. Here's what could derail this:

Technical challenges. Silent speech might be harder to scale than laboratory prototypes suggest. Real-world accuracy could be lower. Integration with other Siri features could create unexpected problems. The technology could work great in labs but poorly in actual use.

User adoption. Even if the tech works, users might not want it. People might find silent speech creepy, uncomfortable, or prefer speaking aloud. Lower adoption would make the $2B price tag hard to justify.

Regulatory pushback. Governments could restrict biometric data collection via facial recognition. GDPR could require so much friction (consent, disclosure) that adoption becomes impractical.

Competitive response. Google, Microsoft, or Amazon could acquire similar companies or develop competing tech. Silent speech might not be differentiated enough to sustain competitive advantage for long.

Integration complexity. Getting silent speech to work reliably across iPhone, Mac, Vision Pro, AirPods, and future devices is hard. If one platform has poor integration, the whole strategy looks weak.

Key person risk. Aviad Maizels and the Q.ai team need to stay and execute. If they get poached by competitors or leave due to culture clash with Apple, the acquisition loses value.

These risks are real, but Apple's strong track record and deep resources mitigate them. Expect this to succeed.

What's Next: How This Changes Apple's Product Roadmap

Over the next 18-36 months, watch for:

AirPods Pro updates. New hardware, silent speech support in iOS betas, quiet launch of the feature as "beta."

Vision Pro 2. Improved facial tracking, silent speech support, better integration with Siri.

iPhone 18 or 19. Subtly improved cameras capable of silent speech detection, Siri enhancements, new accessibility features.

macOS updates. Mac app developers get silent speech APIs. Some popular apps add support (productivity, accessibility, gaming).

WWDC keynotes. Apple showcases silent speech at a developer conference as a new platform capability. Executives talk about how it makes AI "disappear into your daily life."

Marketing focus. Apple emphasizes privacy, accessibility, and natural interaction. Silent speech becomes a pillar of Apple Intelligence marketing.

Third-party apps. Some app developers ship silent speech features. Most won't because the use cases are limited, but the ones that do will seem futuristic.

Apple won't talk about facial expressions or micro-movements in marketing. That sounds creepy. Instead: "Silent commands. Completely private. Just you and your device."

FAQ

What is silent speech recognition?

Silent speech recognition is artificial intelligence technology that detects and interprets facial expressions, lip movements, and micro-contractions of facial muscles to understand what someone intends to say or do, without them speaking out loud. Q.ai's specific technology uses optical sensors to detect these subtle movements with sub-millimeter precision, then machine learning models decode the intended command or message.

How does Q.ai's silent speech technology work?

Q.ai's technology uses infrared optical sensors (similar to Face ID's TrueDepth camera) to continuously monitor facial movements around the mouth and cheeks. The sensors capture depth data revealing micro-contractions invisible to regular cameras. Machine learning models trained on thousands of hours of footage learn to correlate specific facial movement patterns with particular words, phonemes, or commands. When new facial data arrives, the model predicts the user's intended communication and passes it to Siri or another AI assistant for processing.
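
To make the "correlate movement patterns with commands" step concrete, here is a minimal Python sketch. It is not Q.ai's or Apple's method, which has not been published. It swaps in a simple nearest-centroid classifier for the real machine learning models, and the feature vectors, command labels, and `max_distance` threshold are all made-up assumptions for illustration.

```python
import math
from typing import Dict, List, Optional, Tuple

# Hypothetical feature vector: displacement (in mm) of a few tracked
# points around the mouth and cheeks. Real systems would use far richer
# depth data; these numbers are invented for illustration.
Features = List[float]


def train_centroids(samples: List[Tuple[Features, str]]) -> Dict[str, Features]:
    """Average the feature vectors recorded for each command label."""
    sums: Dict[str, Features] = {}
    counts: Dict[str, int] = {}
    for features, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def predict(centroids: Dict[str, Features], features: Features,
            max_distance: float = 1.0) -> Optional[str]:
    """Return the closest known command, or None if nothing is close enough."""
    best_label, best_dist = None, float("inf")
    for label, centroid in centroids.items():
        dist = math.dist(centroid, features)  # Euclidean distance
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label if best_dist <= max_distance else None


# Toy training data: two invented commands, two recordings each.
training = [
    ([0.8, 0.1, 0.0], "play"),
    ([0.9, 0.2, 0.1], "play"),
    ([0.1, 0.7, 0.6], "pause"),
    ([0.0, 0.9, 0.5], "pause"),
]
centroids = train_centroids(training)

print(predict(centroids, [0.85, 0.1, 0.05]))  # closest to the "play" recordings
print(predict(centroids, [5.0, 5.0, 5.0]))    # far from everything, so rejected
```

The rejection threshold matters as much as the classification itself: a silent interface that fires on every yawn or smile would be worse than no interface, so any real system would need a way to decide that a movement is not a command at all.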

What are the benefits of silent speech technology in consumer devices?

Silent speech offers multiple advantages: users can control devices in quiet offices or loud environments without disturbing others or being overheard, it's more private than voice commands (no audio recording), it enables accessibility for people with speech disabilities, and it provides on-device processing without cloud data transmission. For Apple specifically, it differentiates the company's AI strategy through a hardware-integrated feature competitors can't easily replicate.

Why did Apple spend $2 billion on Q.ai?

Apple acquired Q.ai for strategic reasons: to gain foundational imaging technology that gives it a competitive advantage in AI interfaces, to bring back a proven hardware founder (Aviad Maizels, who previously founded PrimeSense), to acquire valuable patent portfolios around optical sensing and facial recognition, and to differentiate its AI strategy in a crowded market. The acquisition fits Apple's pattern of buying foundational tech early when it's not yet mainstream, then shipping it at scale years later.

When will silent speech features launch in Apple products?

Based on Apple's typical product cycles, AirPods Pro is likely to get silent speech support in 2025-2026, Vision Pro updates are likely in 2026, and iPhone integration is likely in 2026-2027. Apple rarely announces technology years before shipping, so expect quiet beta releases followed by official announcements once the feature reaches sufficient maturity. The company will likely make it a major marketing point once ready.

What about privacy concerns with facial recognition AI?

Facial expressions are biometric data, and regulators worldwide are scrutinizing biometric collection. Apple's strategy will likely emphasize complete on-device processing (data never leaves your device), no data retention, explicit user consent and opt-in controls, transparent privacy labeling, and regulatory compliance by design. However, privacy advocates will likely scrutinize this feature closely, and regulatory challenges are possible.

How does this compare to voice-based assistants like Siri today?

Voice assistants like Siri rely on audio recording and processing, creating privacy concerns and limitations in noisy or quiet environments. Silent speech eliminates audio entirely, works anywhere regardless of noise levels, preserves complete privacy (no audio recording), and enables on-device processing without cloud transmission. However, silent speech is less flexible for natural language (voice allows longer conversational queries), so Apple will likely support both voice and silent speech as complementary features.

Could this technology be used for surveillance?

Technically yes, which is why regulatory and ethical frameworks are essential. Apple will need to implement strict controls preventing unauthorized facial expression tracking, require explicit user consent, provide transparency about data collection, and resist requests from governments or employers to weaponize the feature. The company has generally handled similar scenarios responsibly (it fought the FBI's 2016 demand to help unlock an iPhone, for example), but this technology warrants public scrutiny and regulatory attention.

What advantages does Aviad Maizels bring to this project?

Maizels founded PrimeSense, which developed the depth-sensing technology that powered Microsoft's Kinect and later informed Apple's Face ID. He has proven experience building consumer-grade imaging technology, integrating hardware with software at scale, navigating complex manufacturing, and shipping products to millions of users. His track record significantly increases the probability that Q.ai's technology will ship successfully. Apple's willingness to bring him back suggests the company expects similar success with silent speech.

How does silent speech integration strengthen Apple's ecosystem?

Silent speech works best across Apple's entire product line: AirPods for interpretation, iPhones and Macs for control, Vision Pro for immersion, and cloud services for context. This creates ecosystem lock-in: users get the best experience by keeping all their devices in the Apple ecosystem. Competitors using Android or Windows can't replicate the same tight integration, making silent speech a sustainable competitive advantage that drives hardware and services revenue.

The Future of Human-Computer Interaction Starts With Silence

Apple's $2 billion acquisition of Q.ai marks a significant turning point in how humans will interact with AI. For the past 15 years, voice has been the natural interface for AI assistants. We say "Hey Siri," and Siri listens. But voice is clunky. It's public. It fails in noise. It requires explicit activation.

Silent speech removes all of those friction points. Your device understands you through subtle physical cues that are invisible to everyone around you. No voice, no wake phrase, no risk of being overheard. Just intention and instant execution.

This acquisition tells us where Apple thinks the world is headed. Not toward smarter AI in the cloud, but toward AI that disappears into the fabric of how we naturally interact with devices. AI that's always available, never intrusive, completely private, and physically present through devices we already carry.

For users, silent speech will feel like magic. For competitors, it will feel like a problem they should have seen coming. For Apple, it's another example of the company's strategy: identify the next interaction paradigm before everyone else, acquire the necessary technology, integrate it obsessively, and ship it at scale in ways competitors can't replicate.

The Q.ai acquisition is the opening move in this new game. The next few years will tell us if Apple's $2 billion bet was genius or just another hardware acquisition that didn't change the world. But based on the founder's track record and the strategic fit, it's a bet worth watching closely.

One thing's for certain: the future of AI interfaces isn't about better language models. It's about interfaces so intuitive that you don't have to think about them. Silent speech gets us there. And Apple just bought the technology to make it happen.

Key Takeaways

- Apple's $2 billion Q.ai acquisition is its second-largest tech deal ever, driven by silent speech recognition technology that interprets facial expressions without audio

- Silent speech lets users control devices through invisible micro-movements and facial expressions, solving privacy and accessibility problems voice assistants can't address

- Founder Aviad Maizels previously created PrimeSense, which Apple bought in 2013 and repurposed into Face ID technology, giving high confidence in execution capability

- Implementation likely starts with AirPods Pro in 2025-2026, followed by Vision Pro updates and iPhone integration in 2026-2027, creating ecosystem-wide competitive advantage

- The technology enables genuine accessibility for speech-disabled users while fundamentally shifting the AI interaction paradigm from spoken commands to physical expression

![Apple's $2B Q.ai Silent Speech Acquisition Explained [2025]](https://tryrunable.com/blog/apple-s-2b-q-ai-silent-speech-acquisition-explained-2025/image-1-1769715551676.jpg)