Exploring ChatGPT's Linguistic Evolution: The 'Strawberry' Test and Beyond [2025]

Last month, OpenAI announced a significant update to ChatGPT, highlighting its new ability to pass the deceptively simple 'how many “r”s in strawberry' test. While this may seem trivial at first glance, it signals a broader evolution in how AI models like ChatGPT handle language and context. It wasn't long, however, before users discovered they could still trip up the AI by switching to words like 'cranberry'. This article examines the technical underpinnings of this development, the challenges that remain, and what the future holds for AI language models.

TL;DR

- ChatGPT now counts letters correctly in specific words such as 'strawberry', marking a step forward in linguistic comprehension.

- Despite improvements, users continue to find new ways to challenge AI with similar tests.

- Understanding context and ambiguity remains a hurdle for AI models.

- Practical applications of these improvements span various industries.

- Ongoing research aims to bolster AI's adaptability and accuracy in real-world scenarios.

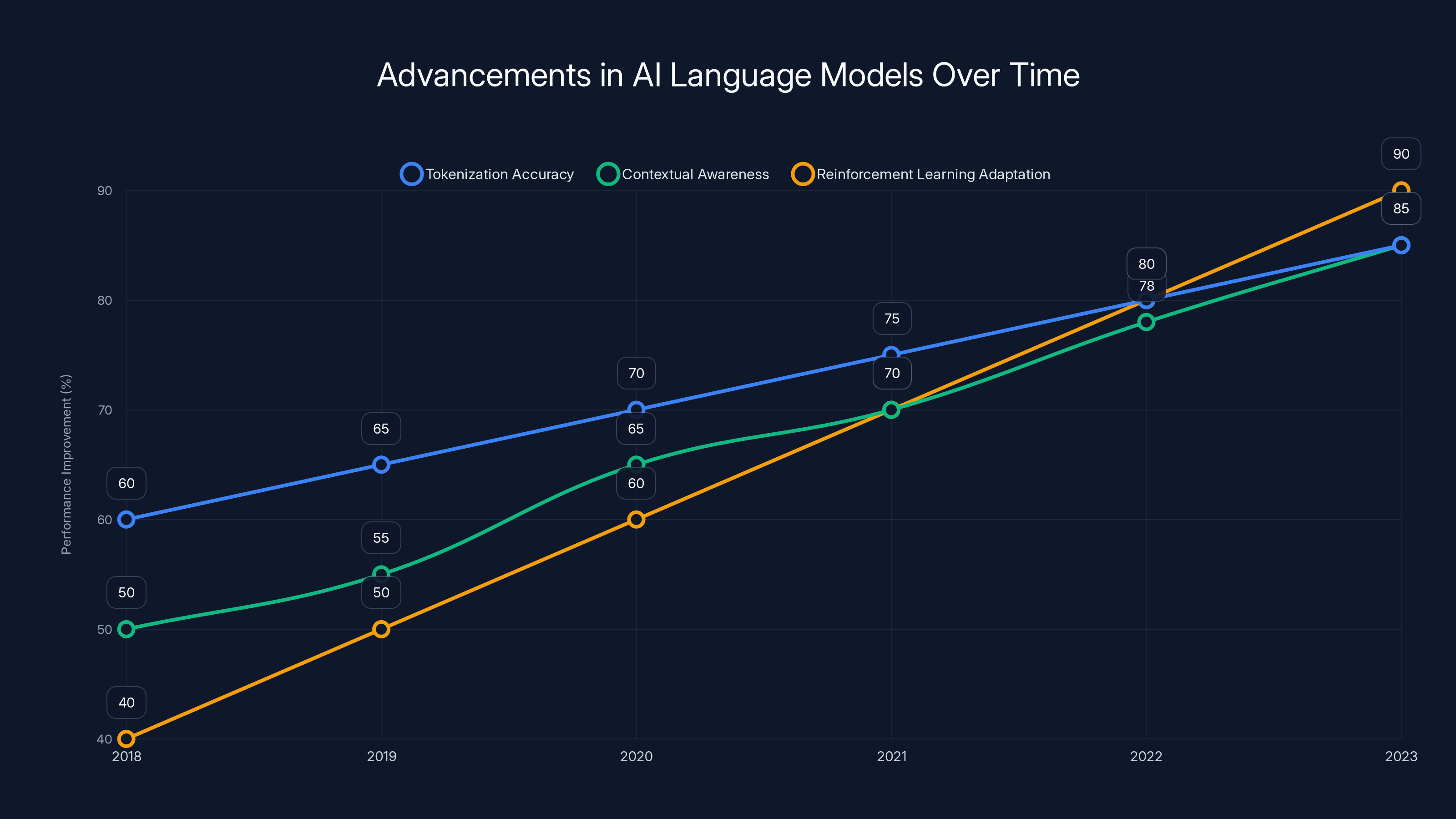

Estimated data shows significant improvements in tokenization, contextual awareness, and reinforcement learning adaptation in AI language models from 2018 to 2023.

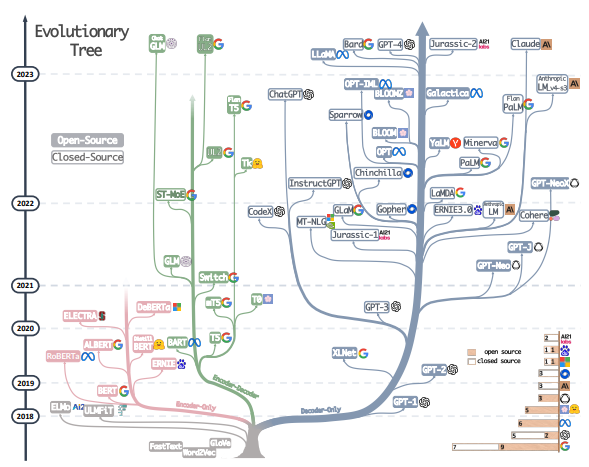

The Evolution of AI Language Models

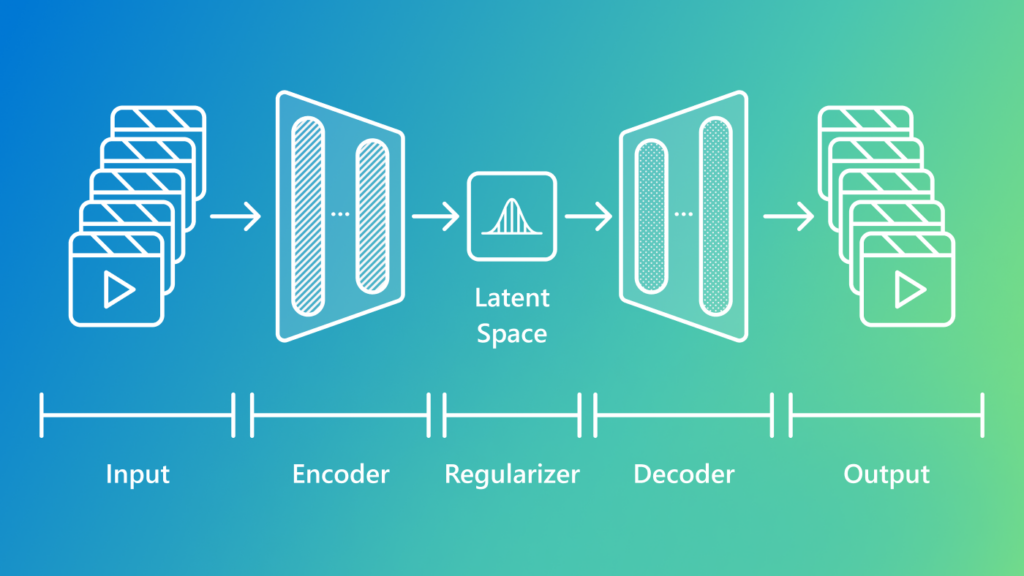

The journey of AI language models like ChatGPT has been marked by rapid advancements and occasional stumbling blocks. Initially, these models excelled at structured tasks but faltered with nuanced language understanding. The 'strawberry' test, while seemingly trivial, highlighted a critical gap in AI's ability to handle basic linguistic tasks. Addressing this gap required significant advancements in natural language processing (NLP) techniques.

Key Developments in NLP

- Tokenization Improvements: AI's understanding of language starts with tokenization, which breaks text into manageable pieces. Because those pieces are usually multi-letter chunks rather than single characters, counting letters is harder for a model than it looks. Recent updates to ChatGPT have improved its ability to recognize and accurately count individual letters.

- Contextual Awareness: Enhancements in contextual comprehension allow ChatGPT to better understand the meaning and intent behind words, reducing errors in tasks like letter counting.

- Reinforcement Learning: By incorporating reinforcement learning, ChatGPT can learn from interactions, adapting its responses based on user feedback and past mistakes.
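The tokenization point above can be illustrated with a minimal sketch. The vocabulary here is hypothetical and chosen only for illustration; it is not ChatGPT's actual tokenizer, and the greedy longest-match split is a simplification of how real subword tokenizers work. The sketch shows that once a word is split into multi-letter pieces, individual letters are no longer separate units, while counting at the character level is trivial.

```python
# Hypothetical toy vocabulary (an assumption for illustration only);
# real tokenizers use large learned vocabularies.
TOY_VOCAB = {"straw", "berry", "cran"}


def toy_tokenize(word, vocab=TOY_VOCAB):
    """Greedy longest-match split; falls back to single characters."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest candidate piece first, shrinking toward one character.
        for j in range(len(word), i, -1):
            piece = word[i:j]
            if piece in vocab or j == i + 1:
                tokens.append(piece)
                i = j
                break
    return tokens


def count_letter(word, letter):
    """Case-insensitive count of one letter, working on raw characters."""
    return word.lower().count(letter.lower())


tokens = toy_tokenize("strawberry")        # two multi-letter pieces
r_count = count_letter("strawberry", "r")  # counted directly on characters
print(tokens, r_count)
```

In this toy setup the model-side view is two opaque pieces, so the number of "r"s has to be recalled or inferred rather than read off directly, which is one plausible reason the test was hard.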

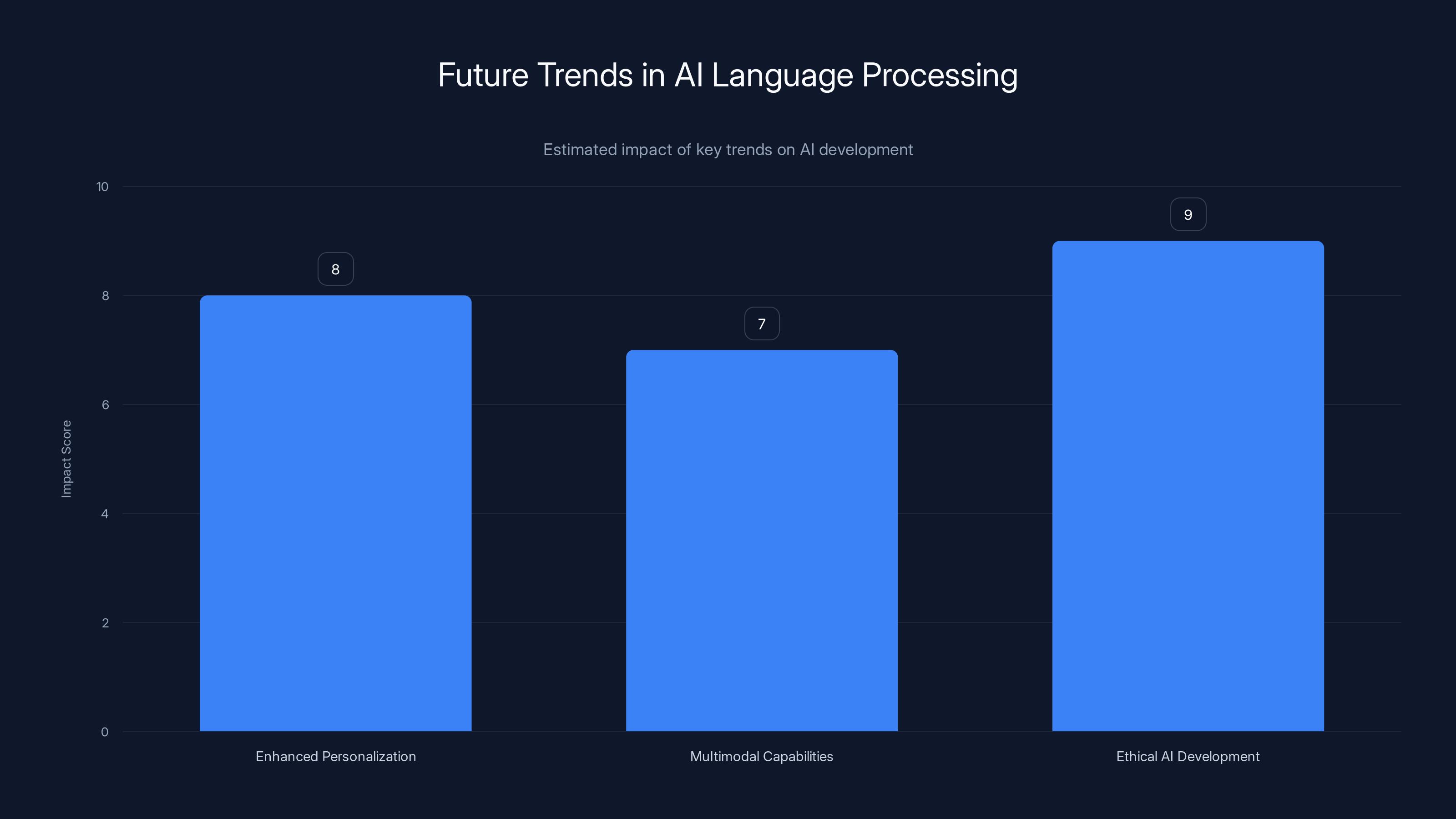

Ethical AI development is projected to have the highest impact on the evolution of AI language models. (Estimated data)

The 'Strawberry' Test: A Deceptively Simple Challenge

The 'how many “r”s in strawberry' test is a classic example of a linguistic challenge that requires both straightforward counting and an understanding of context. For years, AI struggled with such tasks due to limitations in parsing and interpreting text at a granular level.

Why This Test Matters

- Basic Comprehension: It tests AI's ability to understand basic language constructs.

- Error Reduction: Successfully passing the test reduces common errors in text-based tasks.

- Foundation for Complex Tasks: Mastering simple tasks forms the basis for tackling more complex linguistic challenges.

Overcoming the Challenge

To address this, developers refined the underlying algorithms, focusing on improving the AI's pattern recognition and memory capabilities. The result is an AI that counts correctly on the original test, although, as the next section shows, the fix does not yet generalize to every word.

Beyond Strawberries: New Challenges for AI

While ChatGPT can now handle the 'strawberry' test with ease, users quickly found that switching to words like 'cranberry' could still cause issues. This highlights an ongoing challenge for AI: handling variations and unexpected inputs.
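One way to probe this kind of variation is to build a small set of test words with ground-truth counts and score a model's answers against it. The sketch below is a rough illustration, not an established benchmark: the word list is arbitrary, and `sample_answers` is invented to mimic a model that passes 'strawberry' but slips on 'cranberry'.

```python
def count_letter(word, letter):
    """Case-insensitive count of a single letter in a word."""
    return word.lower().count(letter.lower())


def build_cases(words, letter):
    """Pair each word with the letter and its ground-truth count."""
    return [(w, letter, count_letter(w, letter)) for w in words]


def accuracy(cases, model_answers):
    """Fraction of cases where the reported count matches ground truth.

    model_answers maps word -> integer count reported by the model.
    """
    if not cases:
        return 0.0
    correct = sum(
        1 for word, _, expected in cases if model_answers.get(word) == expected
    )
    return correct / len(cases)


WORDS = ["strawberry", "cranberry", "raspberry", "blueberry"]
cases = build_cases(WORDS, "r")

# Hypothetical answers, made up for this example (not real model output).
sample_answers = {"strawberry": 3, "cranberry": 2, "raspberry": 3, "blueberry": 2}
score = accuracy(cases, sample_answers)
print(cases, score)
```

Because the ground truth is computed rather than typed by hand, extending the word list to new variants costs nothing, which makes it easy to check whether a fix generalizes beyond the one famous example.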

Common Pitfalls and Solutions

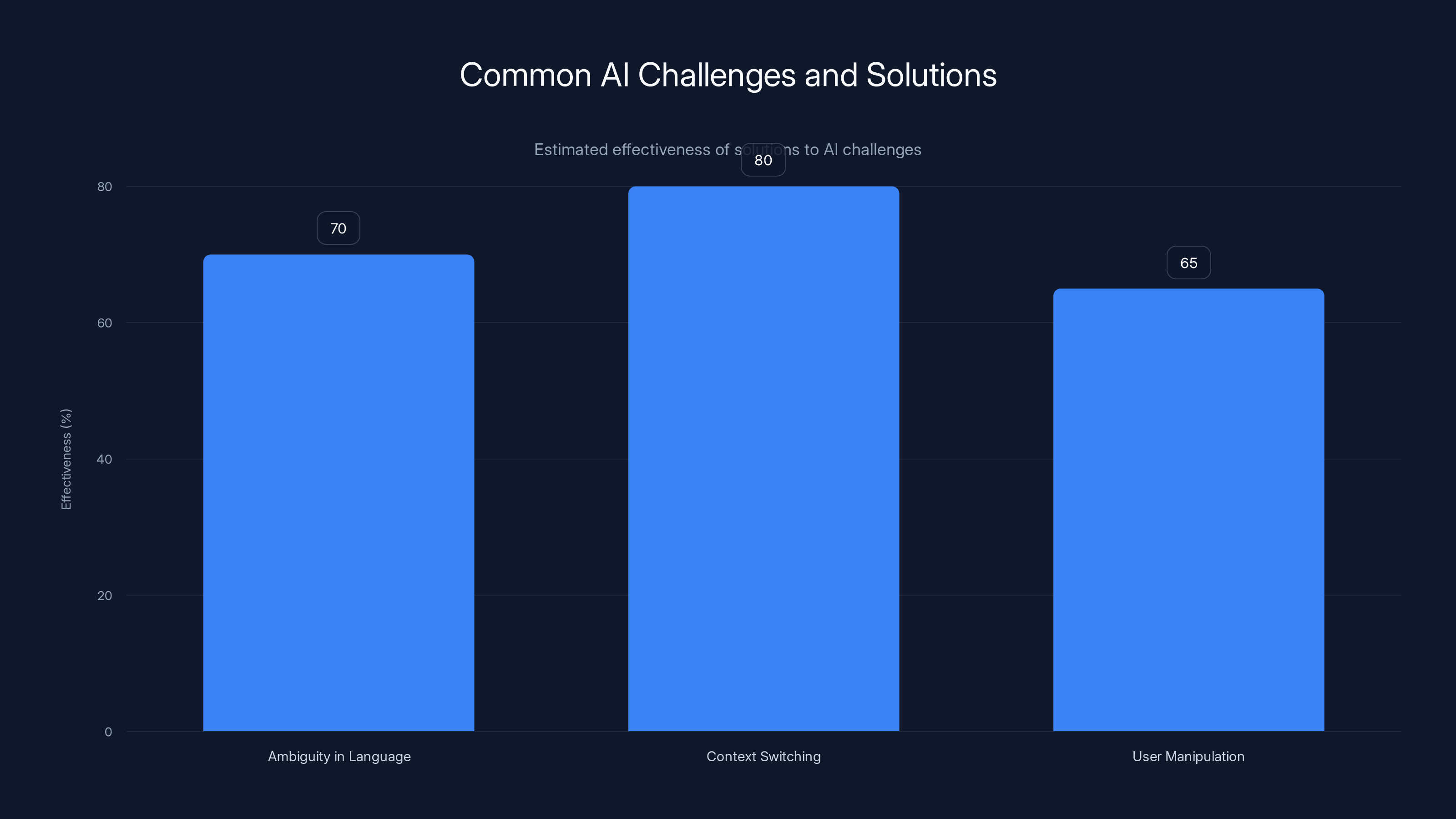

- Ambiguity in Language
  - Pitfall: AI models often struggle with words that have multiple meanings or similar structures.
  - Solution: Enhanced training datasets focusing on diverse linguistic contexts.

- Context Switching
  - Pitfall: Rapid changes in context can confuse AI models, leading to incorrect responses.
  - Solution: Continuous learning algorithms that enable dynamic adaptation to new contexts.

- User Manipulation
  - Pitfall: Users intentionally exploit weaknesses in AI to test its limits.
  - Solution: Implementing robust error-checking mechanisms and feedback loops to correct misinterpretations.

Estimated effectiveness of solutions to common AI challenges shows that context switching solutions are the most effective, while user manipulation solutions are less so. (Estimated data)
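As a rough illustration of the error-checking idea listed above, a letter-count answer can be verified deterministically before it reaches the user. This is a minimal sketch under stated assumptions: the function name `check_reply` is hypothetical, the reply string is invented, and the sketch assumes the first integer in the reply is the claimed count, which will not hold for every phrasing.

```python
import re


def count_letter(word, letter):
    """Case-insensitive count of a single letter in a word."""
    return word.lower().count(letter.lower())


def check_reply(word, letter, reply):
    """Compare the first integer found in a reply with the true count.

    Assumption: the first run of digits in the reply is the claimed count.
    Replies that spell the number out ("three") yield claimed=None.
    """
    actual = count_letter(word, letter)
    match = re.search(r"\d+", reply)
    claimed = int(match.group()) if match else None
    return {"claimed": claimed, "actual": actual, "ok": claimed == actual}


# Invented reply text, used only to show a mismatch being caught.
result = check_reply("cranberry", "r", "There are 2 r's in cranberry.")
print(result)
```

A failed check could feed a correction step or a feedback log; how that is wired up depends on the system and is outside the scope of this sketch.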

Practical Applications of Improved AI Language Models

The ability of AI to handle linguistic tasks more effectively opens doors to numerous practical applications across different sectors.

Healthcare

AI models can assist in drafting medical reports, ensuring accurate and contextually relevant information is included. Improved language understanding enhances the quality of these drafts, reducing the need for manual corrections.

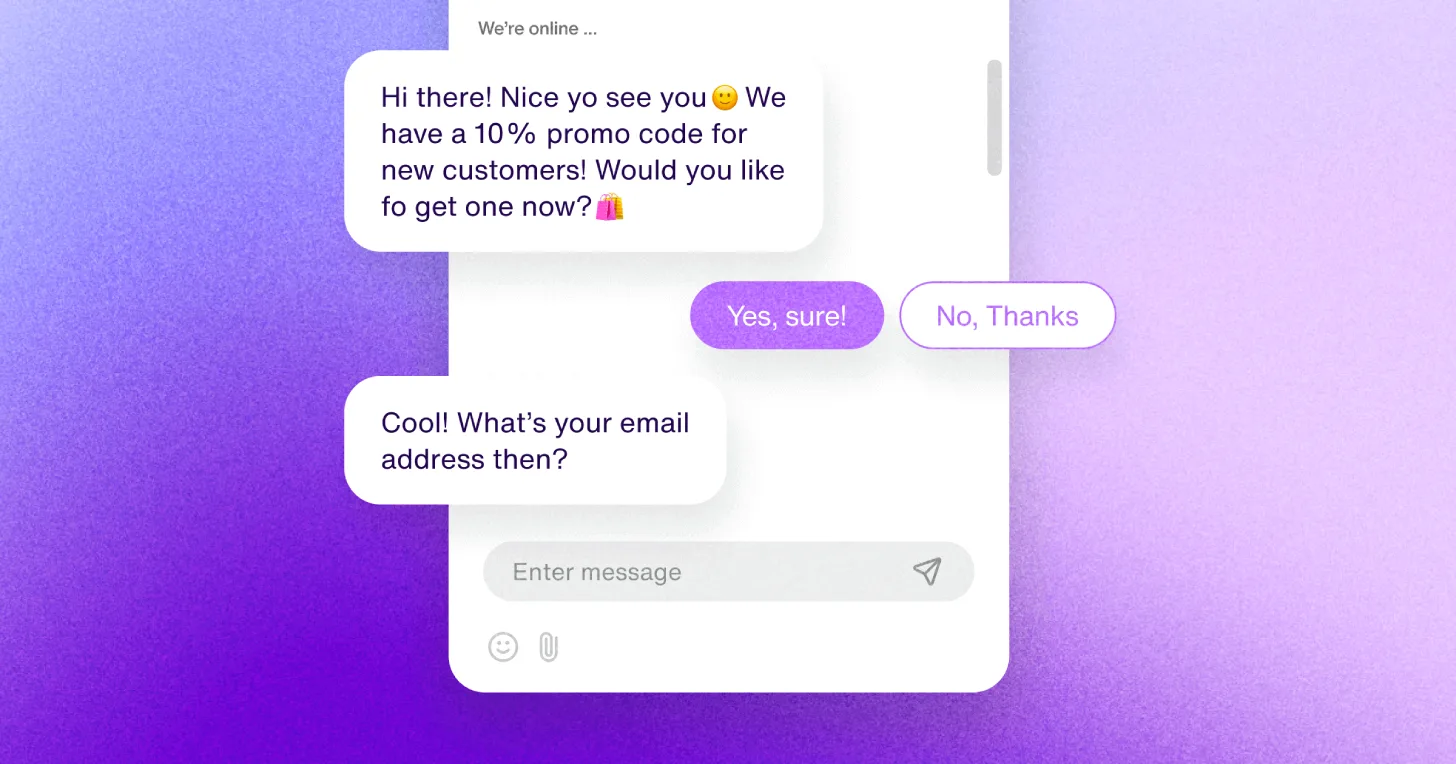

Customer Service

Chatbots and virtual assistants can offer more precise and helpful responses, improving customer satisfaction and reducing the workload on human agents.

Content Creation

Tools like Runable use AI to automate content generation for presentations, documents, and reports. This allows for more efficient workflow management and higher productivity.

Future Trends in AI Language Processing

As AI continues to evolve, several trends are likely to shape the future of language processing.

Enhanced Personalization

AI models will become more adept at personalizing interactions based on user preferences and past interactions, leading to more tailored and relevant responses.

Increased Multimodal Capabilities

The integration of text, voice, and visual data will enable AI to provide more comprehensive and contextually aware responses.

Ethical AI Development

As AI becomes more ubiquitous, ensuring ethical development and deployment will be crucial. This includes addressing biases in training data and ensuring transparency in AI decision-making processes.

Recommendations for Implementing AI Language Models

Organizations looking to implement AI language models should consider the following best practices:

- Invest in Quality Data: Ensure that the training data is diverse and representative of real-world language use.

- Prioritize User Feedback: Implement mechanisms for users to provide feedback on AI interactions, allowing the model to learn and improve.

- Focus on Security: Protect user data and ensure compliance with data protection regulations.

- Stay Agile: Continuously update and refine AI models to keep pace with evolving language trends and user needs.

Conclusion

ChatGPT's ability to pass the 'strawberry' test marks a significant milestone in AI language processing. However, the journey is far from over. As users continue to challenge AI with new linguistic puzzles, developers must remain committed to refining and improving these models. By embracing practical applications and future trends, AI language models can transform industries and enhance our everyday interactions with technology.

FAQ

What is the 'strawberry' test?

The 'strawberry' test is a simple linguistic challenge that assesses an AI's ability to count the number of specific letters in a word, testing its basic comprehension and contextual understanding.

How does ChatGPT handle context switching?

ChatGPT uses advanced algorithms that incorporate contextual awareness and continuous learning to adapt to changes in context, improving its response accuracy.

What are the benefits of improved AI language models?

Improved language models enhance various sectors, including healthcare, customer service, and content creation, by providing accurate, context-aware, and efficient interactions.

How can organizations implement AI language models effectively?

Organizations should prioritize quality data, user feedback, security, and agility in development to ensure successful AI implementation.

What future trends will shape AI language processing?

Future trends include enhanced personalization, increased multimodal capabilities, and ethical AI development, which will drive the evolution of AI language models.

Key Takeaways

- ChatGPT has improved its ability to handle basic linguistic tasks, such as counting letters in words.

- Despite advancements, AI models still face challenges with context switching and ambiguity.

- Improved AI language models have practical applications in healthcare, customer service, and content creation.

- Future trends in AI language processing include enhanced personalization and increased multimodal capabilities.

- Organizations should focus on quality data, user feedback, security, and agility when implementing AI language models.


![Exploring ChatGPT's Linguistic Evolution: The 'Strawberry' Test and Beyond [2025]](https://tryrunable.com/blog/exploring-chatgpt-s-linguistic-evolution-the-strawberry-test/image-1-1777477198127.jpg)