Confidentiality is Not Security: Bridging the Authorization Gap in AI [2025]

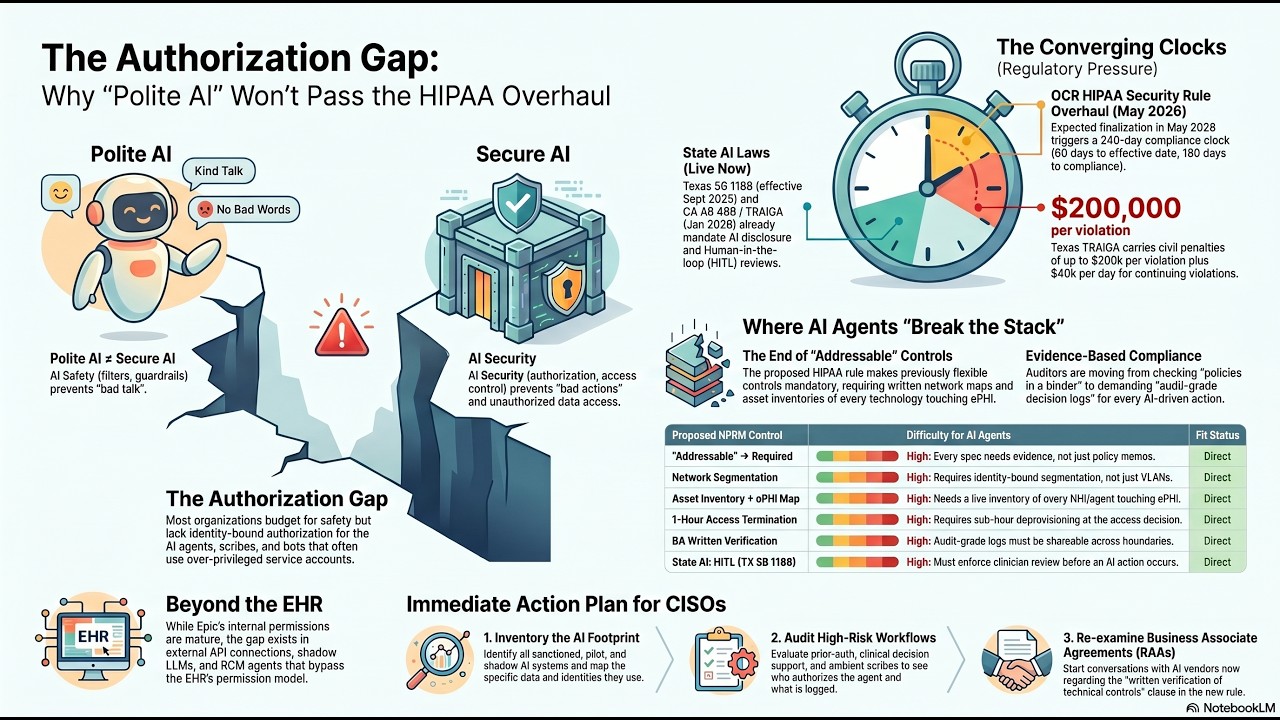

Artificial Intelligence (AI) has become central to modern enterprise operations, driving efficiencies and enabling innovative solutions across industries. However, as organizations increasingly rely on AI, the security landscape becomes more complex. A common misconception is that confidentiality alone ensures AI security. In reality, a more pressing issue is the authorization gap: the lack of robust mechanisms to control who can access and manipulate AI systems at runtime.

TL;DR

- Confidentiality alone is inadequate: It fails to address who can access AI systems at runtime.

- The authorization gap: A critical vulnerability where unauthorized access can lead to misuse of AI systems.

- Comprehensive security strategies: Must include robust authorization protocols alongside encryption.

- Common pitfalls: Over-reliance on encryption without considering access control measures.

- Future recommendations: Implementing AI-specific authorization frameworks to mitigate risks.

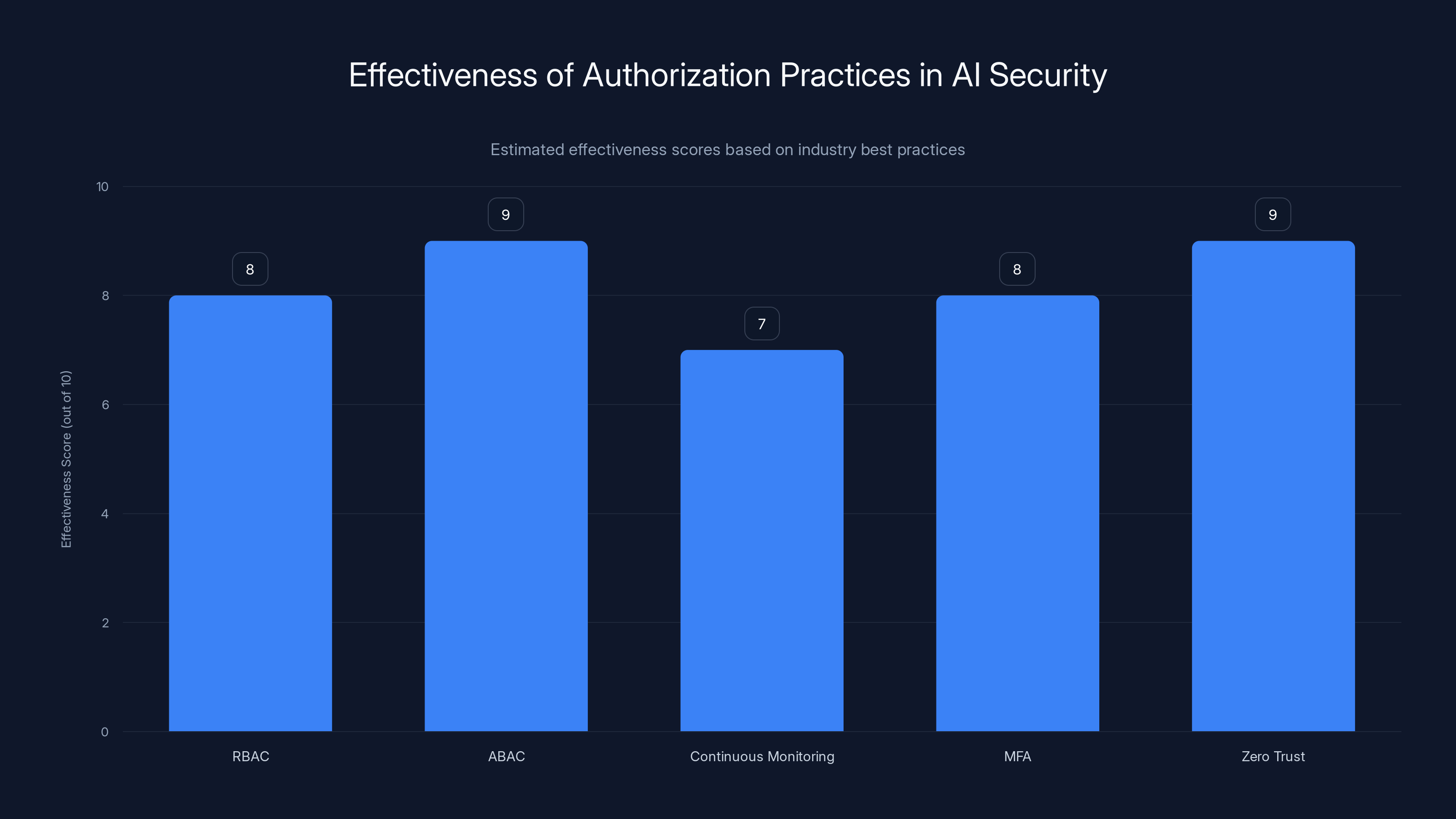

Zero Trust and ABAC are estimated to be the most effective practices for securing AI systems, each scoring 9 out of 10 (estimated data).

Understanding the AI Security Landscape

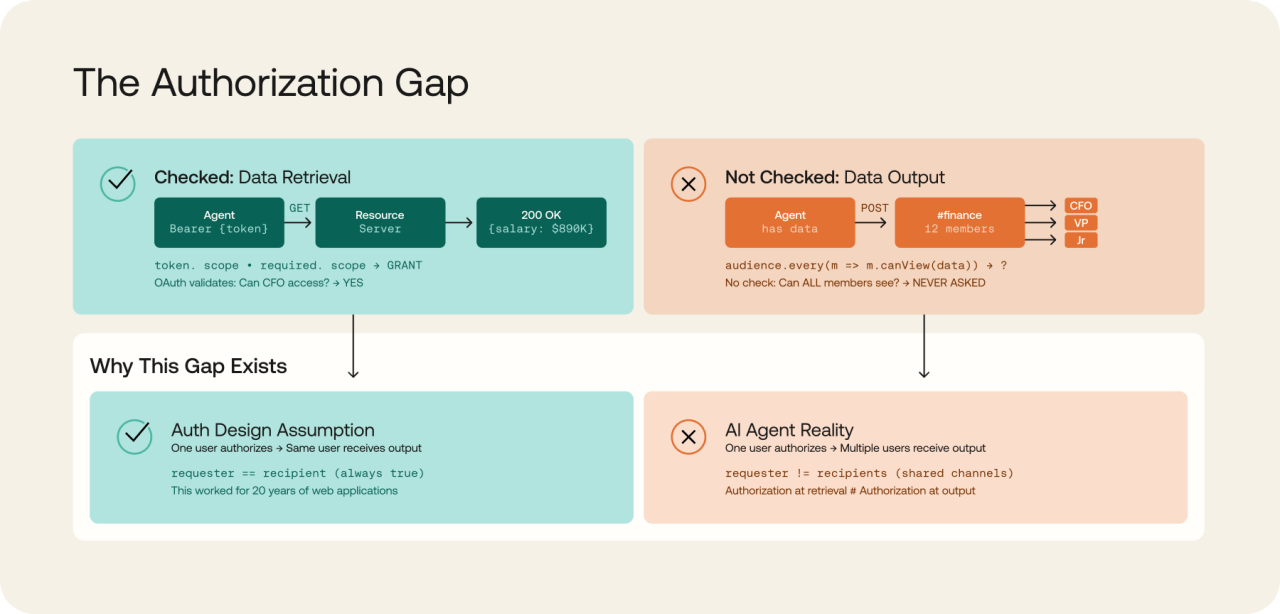

AI systems process vast amounts of data in real time, often making decisions with minimal human intervention. Traditional security measures focus heavily on confidentiality, that is, ensuring data is encrypted and protected from unauthorized viewing. However, confidentiality does not equate to comprehensive security. The assumption that encrypting data is sufficient overlooks the critical aspect of controlling access to AI systems, especially while they are operating.

The Misconception of Encrypted Safety

Encryption is a cornerstone of data security, providing a layer of protection against unauthorized access. However, in AI, it primarily addresses data at rest and in transit. During runtime, when AI models actively process data, encryption offers little protection against unauthorized manipulations.

Consider an AI system managing financial transactions. While the data exchanged between servers might be encrypted, the system's operational integrity depends on who has access to modify transaction rules or override decisions. Hence, the focus must shift to authorization—determining who has the rights to make changes or access certain functionalities within the AI environment.

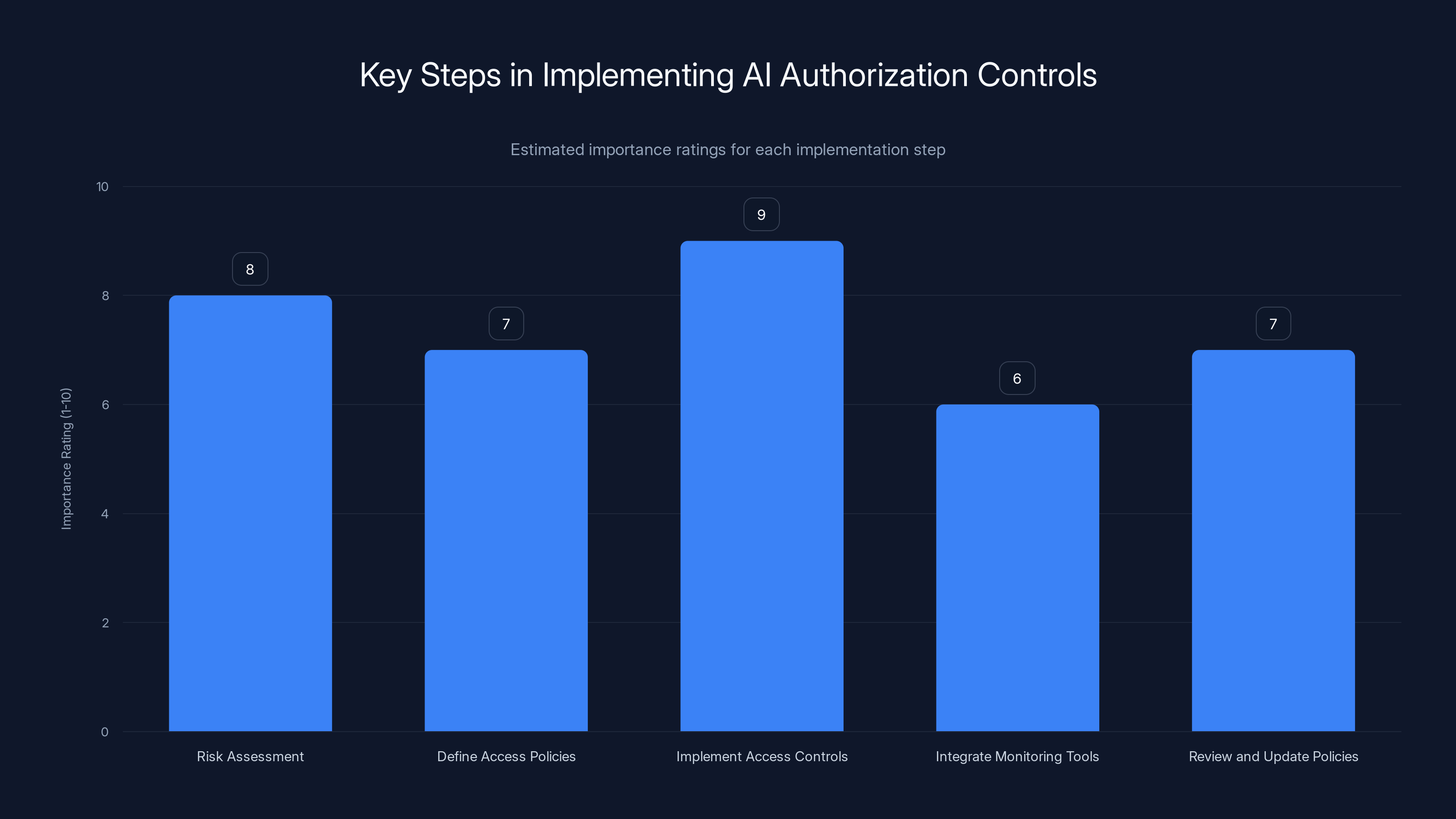

Risk assessment and access control implementation are crucial steps in AI authorization, with high importance ratings. (Estimated data)

The Authorization Gap: A Hidden Vulnerability

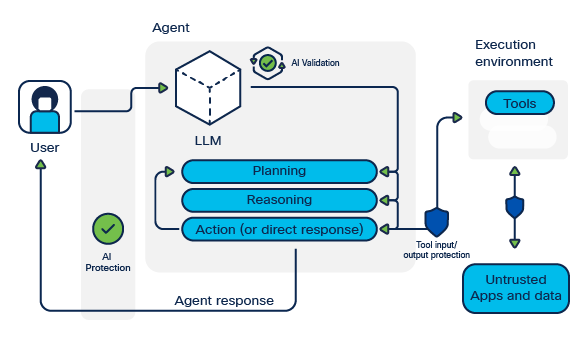

Authorization refers to the process of determining which users or systems have access to specific resources. In the context of AI, this involves controlling who can view, modify, or interact with AI models and their outputs during runtime. The authorization gap arises when these controls are insufficient, allowing unauthorized entities to tamper with AI systems.

Illustrative Example: E-Commerce Fraud

Imagine an e-commerce platform that uses AI to detect fraudulent transactions. If an unauthorized user gains access to adjust the fraud detection parameters, they could effectively disable the AI's ability to flag suspicious activity, leading to financial losses. This scenario illustrates the importance of robust authorization mechanisms to safeguard AI operations.
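To make the scenario concrete, here is a minimal Python sketch of a fraud detector whose threshold can only be changed by an authorized caller. The `FraudDetector` class, the `tuners` set, and the `risk-team-lead` principal are all hypothetical names chosen for illustration, not part of any real product.

```python
from dataclasses import dataclass, field


class AuthorizationError(Exception):
    """Raised when a caller lacks permission for the requested change."""


@dataclass
class FraudDetector:
    # Hypothetical model wrapper: scores at or above the threshold are flagged.
    threshold: float = 0.8
    # Hypothetical set of principals allowed to tune the detector.
    tuners: frozenset = frozenset({"risk-team-lead"})
    change_log: list = field(default_factory=list)

    def set_threshold(self, caller: str, new_value: float) -> None:
        # Authorization is checked at runtime, before any state changes.
        if caller not in self.tuners:
            self.change_log.append((caller, new_value, "denied"))
            raise AuthorizationError(f"{caller} may not change the threshold")
        if not 0.0 <= new_value <= 1.0:
            raise ValueError("threshold must be between 0 and 1")
        self.change_log.append((caller, new_value, "applied"))
        self.threshold = new_value

    def is_suspicious(self, score: float) -> bool:
        return score >= self.threshold
```

Note that encrypting the traffic to this service would not change the outcome: what stops the attacker from raising the threshold to 1.0 is the runtime check on `caller`, and the denied attempt is still recorded for later review.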

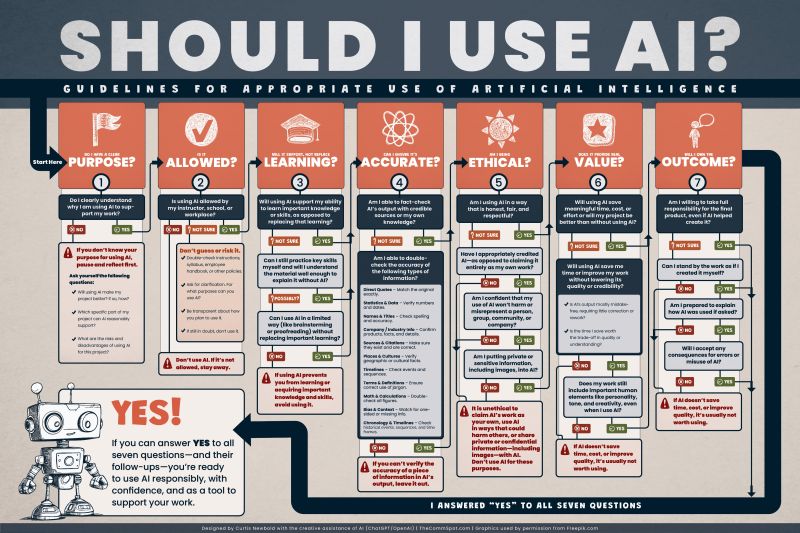

Bridging the Authorization Gap: Best Practices

To effectively address the authorization gap in AI, organizations must adopt a multi-faceted approach that goes beyond traditional security measures. Here are some best practices:

- Role-Based Access Control (RBAC): Implement RBAC to ensure that only authorized personnel have access to specific AI functionalities. This involves defining roles and permissions that align with organizational policies.

- Attribute-Based Access Control (ABAC): Use ABAC to enforce access policies based on user attributes, environmental conditions, and resource sensitivities. This dynamic approach offers more granular control over who can access AI systems and under what conditions.

- Continuous Monitoring: Implement real-time monitoring to detect and respond to unauthorized access attempts. AI systems should be equipped with logging and alerting features to track access patterns and anomalies.

- Multi-Factor Authentication (MFA): Require MFA for accessing critical AI systems. This adds an extra layer of security by ensuring that access requires more than just a password.

- Zero Trust Architecture: Adopt a zero trust model where every access request is considered untrusted until verified. This approach minimizes the risk of unauthorized access by continuously validating access requests.
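As a rough illustration of the first practice, RBAC can be reduced to two lookups: users map to roles, and roles map to permissions. The role names, permission strings, and users below are assumptions made up for this sketch.

```python
# Hypothetical role-to-permission mapping for an AI platform.
ROLE_PERMISSIONS = {
    "ml_engineer": {"model:read", "model:deploy"},
    "analyst": {"model:read", "output:read"},
    "auditor": {"log:read"},
}

# Hypothetical user-to-role assignments.
USER_ROLES = {
    "alice": {"ml_engineer"},
    "bob": {"analyst"},
}


def is_allowed(user: str, permission: str) -> bool:
    """Deny by default: unknown users and unknown roles get no permissions."""
    roles = USER_ROLES.get(user, set())
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)
```

The deny-by-default behavior is the important design choice here: a user who is missing from the table, or a role that was never defined, yields no access rather than an error path that might be handled permissively.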

Estimated impact scores suggest that increased focus on data privacy and fostering a security-first culture will have the highest impact on AI security.

Practical Implementation Guide

Implementing effective authorization controls in AI systems requires a strategic approach that considers the specific needs and risks of the organization. Here’s a step-by-step guide:

Step 1: Conduct a Risk Assessment

Identify the AI systems that require protection and assess the potential risks associated with unauthorized access. Consider the sensitivity of the data processed and the potential impact of a security breach.

Step 2: Define Access Policies

Develop clear access policies that specify who can access AI resources, under what conditions, and for what purposes. Ensure these policies align with organizational objectives and compliance requirements.
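One way to keep such policies reviewable is to write them down as data rather than prose. The sketch below assumes a hypothetical `AccessPolicy` record with one field for each question in this step (who, what, which conditions, what purpose), plus a helper that lists resources no policy covers. All names and values are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessPolicy:
    role: str        # who may act
    resource: str    # which AI resource
    actions: tuple   # which operations are permitted
    condition: str   # under what conditions (free text in this sketch)
    purpose: str     # why the access exists


# Hypothetical policies; all names below are illustrative.
POLICIES = [
    AccessPolicy("ml_engineer", "fraud-model", ("read", "update"),
                 "managed device, business hours", "model maintenance"),
    AccessPolicy("analyst", "fraud-model-outputs", ("read",),
                 "any managed device", "case investigation"),
]


def uncovered_resources(policies, resources):
    """Return, in sorted order, the resources that no policy mentions."""
    covered = {p.resource for p in policies}
    return sorted(set(resources) - covered)
```

Running `uncovered_resources` against the inventory from the risk assessment gives a quick list of AI resources that still have no written policy.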

Step 3: Implement Access Controls

Deploy RBAC and ABAC to enforce access policies. Configure access controls to ensure that users have the minimum necessary privileges to perform their tasks.
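The following sketch shows how a role check and attribute checks might be combined in a single decision function. The `Request` fields, the role table, and the business-hours window are assumptions chosen for illustration; a real deployment would load them from its own policy store.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Request:
    role: str
    action: str
    resource_sensitivity: str  # "low" or "high" in this sketch
    device_managed: bool
    local_time: time


# Least privilege: each role lists only the actions it needs (hypothetical).
ALLOWED_ACTIONS = {"ml_engineer": {"read", "update"}, "analyst": {"read"}}


def decide(req: Request) -> bool:
    # RBAC step: the role must explicitly include the requested action.
    if req.action not in ALLOWED_ACTIONS.get(req.role, set()):
        return False
    # ABAC step: high-sensitivity resources add device and time conditions.
    if req.resource_sensitivity == "high":
        in_hours = time(8, 0) <= req.local_time <= time(18, 0)
        return req.device_managed and in_hours
    return True
```

For example, an `ml_engineer` updating a high-sensitivity model from a managed device at 10:00 is allowed, while the same request at 22:00 or from an unmanaged device is denied.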

Step 4: Integrate Monitoring Tools

Use monitoring tools to track access attempts and detect anomalies. Implement real-time alerts to notify security teams of suspicious activities.
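A very simple form of anomaly detection is counting denied requests per user inside a sliding time window. The sketch below assumes a hypothetical `DenialMonitor` class with made-up defaults (three denials within 60 seconds); timestamps are passed in as plain seconds so the logic is easy to test.

```python
from collections import defaultdict, deque


class DenialMonitor:
    """Signals an alert when one user is denied too often in a short window."""

    def __init__(self, max_denials: int = 3, window_seconds: float = 60.0):
        self.max_denials = max_denials
        self.window_seconds = window_seconds
        self._denials = defaultdict(deque)  # user -> timestamps of denials

    def record(self, user: str, allowed: bool, timestamp: float) -> bool:
        """Record one access decision; return True if an alert should fire."""
        if allowed:
            return False
        events = self._denials[user]
        events.append(timestamp)
        # Drop denials that have fallen out of the sliding window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) >= self.max_denials
```

In practice the return value would feed whatever alerting channel the security team already uses; the thresholds here are placeholders and should be tuned to observed access patterns.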

Step 5: Regularly Review and Update Policies

Conduct regular reviews of access policies and controls to ensure they remain effective and relevant. Update them as necessary to address emerging threats and changes in the organizational environment.
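Part of this review can be automated. As a sketch, assuming each role assignment is stored with a hypothetical `last_reviewed` date, the function below lists assignments that have not been reviewed within a chosen period (90 days here, an arbitrary default).

```python
from datetime import date, timedelta


def stale_assignments(assignments, today, max_age_days=90):
    """Return (user, role) pairs whose last review is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        (a["user"], a["role"])
        for a in assignments
        if a["last_reviewed"] < cutoff
    ]
```

The output is a worklist for a human reviewer, not an automatic revocation; whether stale access should be removed automatically is a policy decision for the organization.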

Common Pitfalls and Solutions

While implementing authorization controls, organizations often run into the same few pitfalls. Here are the most common ones and how to address them:

- Overlooking User Roles: Ensure that user roles are clearly defined and regularly updated to reflect changes in responsibilities. This prevents unauthorized access due to outdated roles.

- Ignoring Contextual Factors: Access controls should consider contextual factors such as time, location, and device used. Implement ABAC to account for these variables.

- Inadequate Training: Provide training for employees on the importance of maintaining secure access controls and recognizing potential threats.

- Neglecting Regular Audits: Schedule regular audits of access logs and authorization policies to identify and address gaps in security.

Future Trends and Recommendations

As AI continues to evolve, so too will the challenges associated with securing these systems. Here are some future trends and recommendations:

Trend 1: AI-Driven Access Control

AI itself can play a role in enhancing security by automating access control decisions based on real-time data analysis. This could lead to more adaptive and responsive security measures.

Trend 2: Increased Focus on Data Privacy

With growing concerns around data privacy, organizations will need to ensure that their authorization strategies protect sensitive information without compromising user privacy.

Recommendation: Invest in AI Security Research

Organizations should invest in research and development to explore new security frameworks and technologies. Collaborating with industry experts and academia can drive innovation in authorization strategies.

Recommendation: Foster a Security-First Culture

Promote a culture of security within the organization by encouraging employees to prioritize security in their daily operations. This includes regular training and awareness programs.

Conclusion

While confidentiality remains a critical component of AI security, it is not sufficient on its own. Addressing the authorization gap is essential to safeguarding AI systems from unauthorized access and manipulation. By implementing robust authorization controls and continuously monitoring access patterns, organizations can better protect their AI investments and ensure the integrity of their operations.

FAQ

What is the authorization gap in AI?

The authorization gap refers to the lack of adequate controls over who can access and manipulate AI systems during runtime, leading to potential security vulnerabilities.

Why is confidentiality not enough for AI security?

Confidentiality focuses on protecting data from unauthorized viewing, but it does not address who can access and operate AI systems, which can lead to unauthorized actions during runtime.

How can organizations bridge the authorization gap?

Organizations can bridge the authorization gap by implementing role-based and attribute-based access controls, continuous monitoring, multi-factor authentication, and adopting a zero trust model.

What are some common pitfalls in AI authorization?

Common pitfalls include overlooking user roles, ignoring contextual factors, inadequate training, and neglecting regular audits of access logs and policies.

What future trends should organizations be aware of?

Future trends include AI-driven access control, increased focus on data privacy, and innovations in security frameworks and technologies to enhance authorization strategies.

Key Takeaways

- Confidentiality alone is inadequate for AI security.

- The authorization gap poses a significant vulnerability during AI runtime.

- Robust authorization controls are essential to safeguard AI systems.

- Implementing role-based and attribute-based access controls can mitigate risks.

- Continuous monitoring and multi-factor authentication enhance security.

- Adopting a zero trust model minimizes unauthorized access risks.

- AI-driven access control may enhance future security measures.

- Investing in AI security research can drive innovation in authorization strategies.
