Understanding Data Security in the Age of AI: Lessons from a US Bank Security Lapse [2025]

As AI applications become more integrated into business processes, the risk of exposing sensitive data grows. This article examines a recent security lapse at a US bank and draws out lessons on data security best practices, common pitfalls, and future trends.

TL;DR

- Sensitive Data Exposure: A US bank incident highlights the risks of sharing customer data with AI applications.

- Best Practices: Implement robust data encryption and access controls.

- Common Pitfalls: Over-reliance on AI without proper oversight can lead to vulnerabilities.

- Future Trends: Increased regulation and AI-driven security solutions are on the horizon.

- Bottom Line: Vigilance and proactive security measures are essential in AI environments.

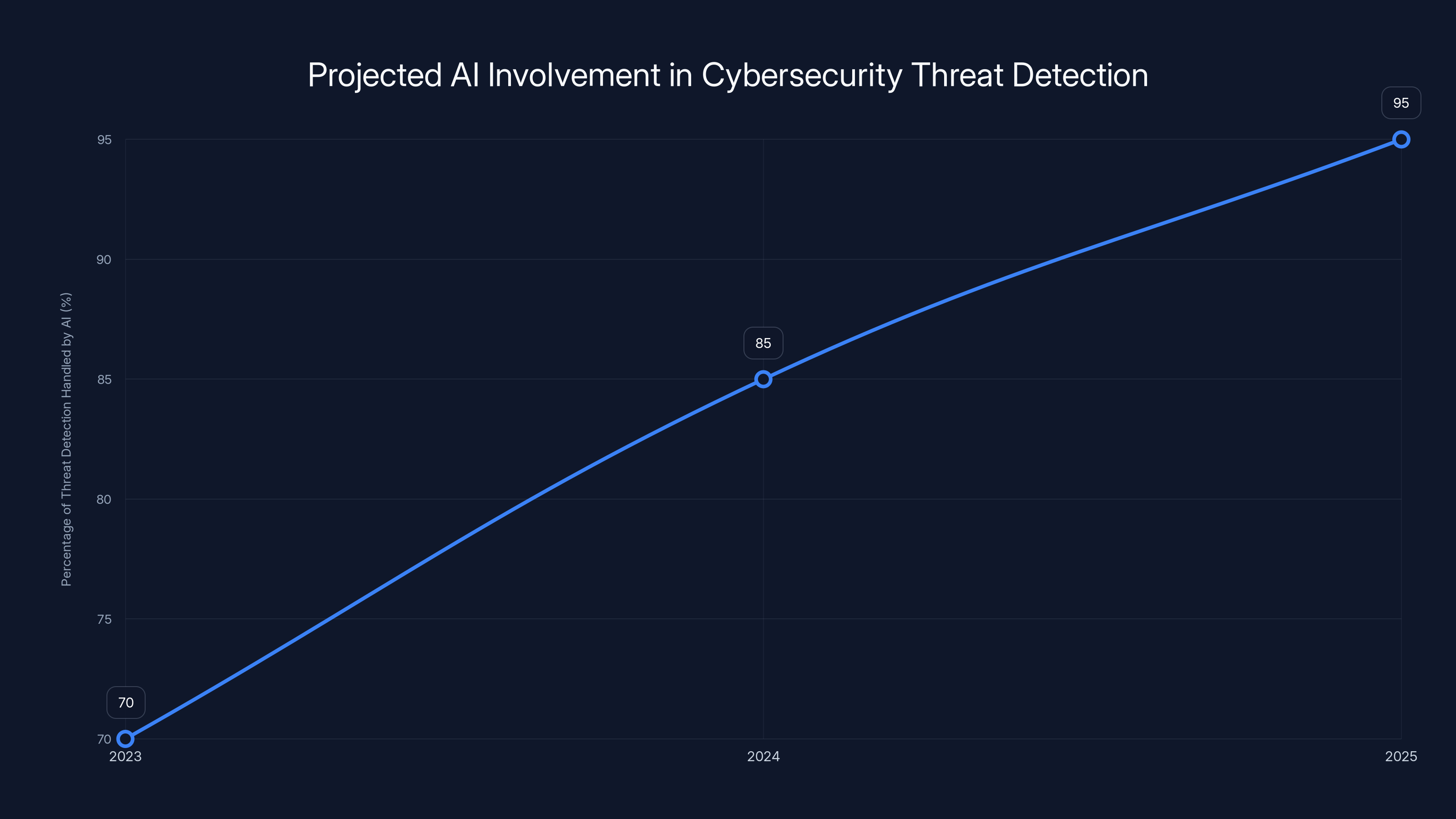

AI is projected to handle 95% of cybersecurity threat detection by 2025, showcasing its growing role in data security. Estimated data based on industry trends.

The Incident: What Happened?

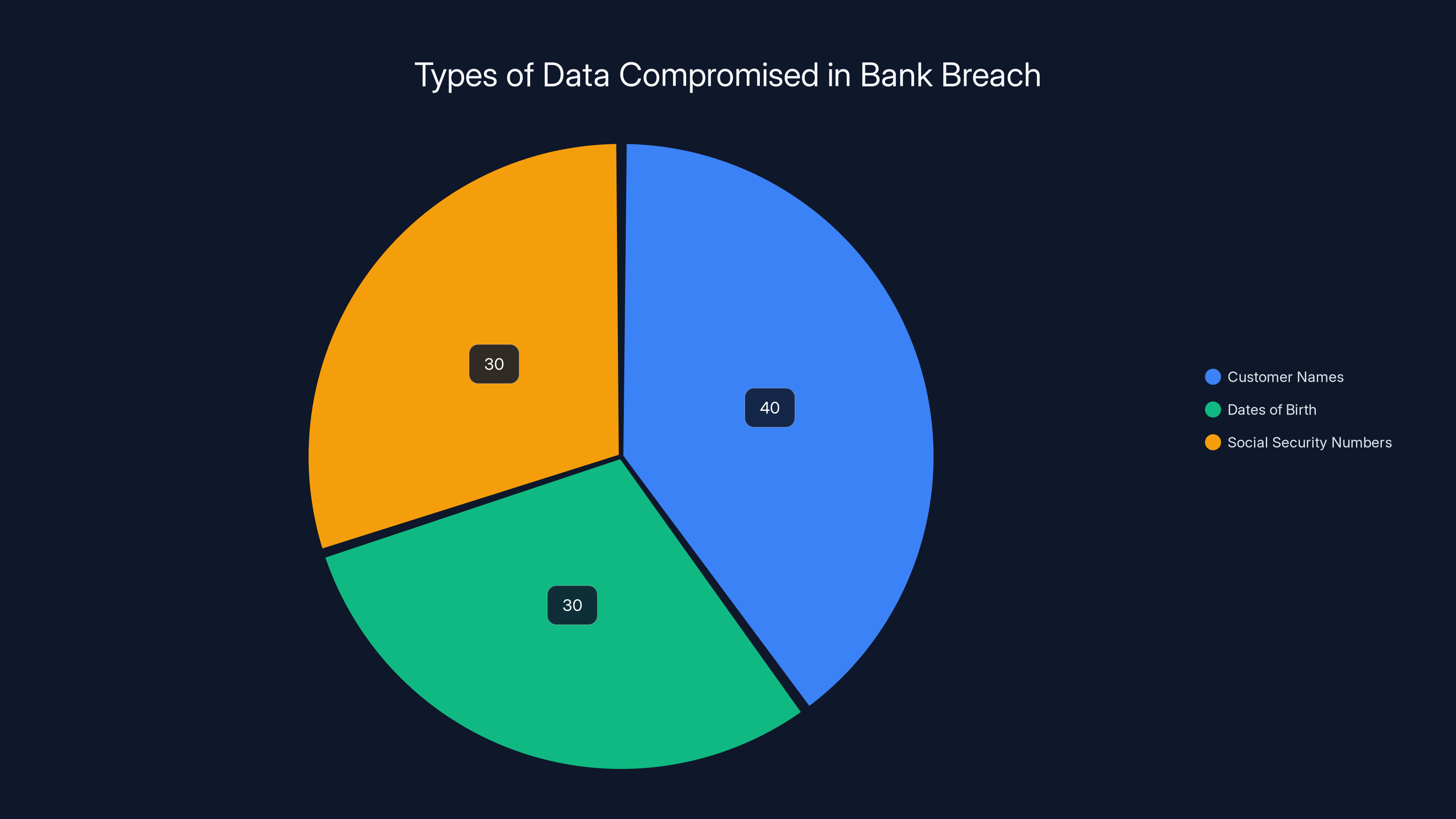

In May 2025, a US bank disclosed a security lapse involving the exposure of customer data through an AI application. The incident, reported in an 8-K filing, revealed that customer names, dates of birth, and Social Security numbers were compromised. The breach was attributed to unauthorized use of an AI-based software application, as detailed in Express News.

Understanding the Breach

The breach appears to have occurred when customer data was uploaded to an online AI chatbot without proper authorization. This highlights the risks associated with integrating AI into traditional banking systems without adequate security protocols, as noted by Cisco's FY25 Purpose Report.

Key Takeaway: AI applications can inadvertently expose sensitive data if not properly managed.

The breach primarily exposed customer names, dates of birth, and Social Security numbers. Estimated data based on typical breach reports.

Why AI and Data Security Clash

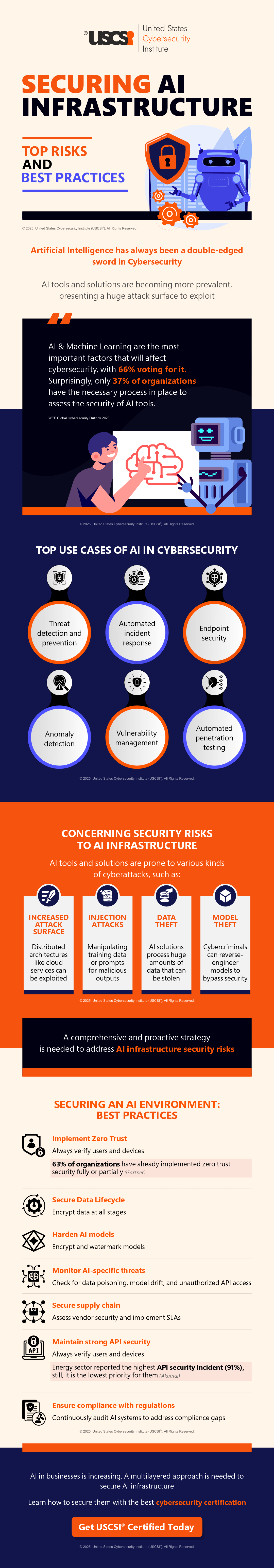

AI relies heavily on data to function effectively. This dependency creates a double-edged sword: while AI can enhance data processing capabilities, it also increases the risk of data exposure.

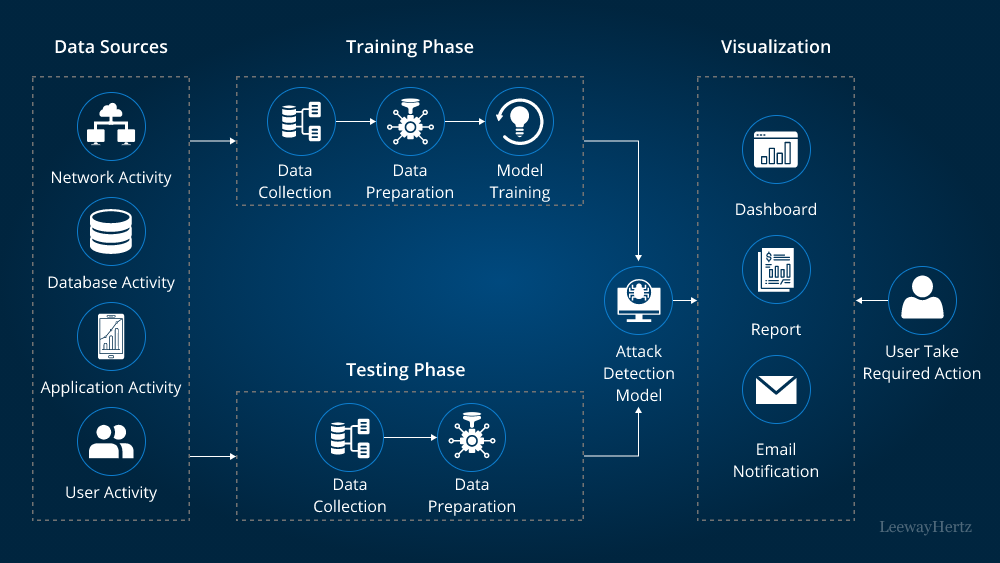

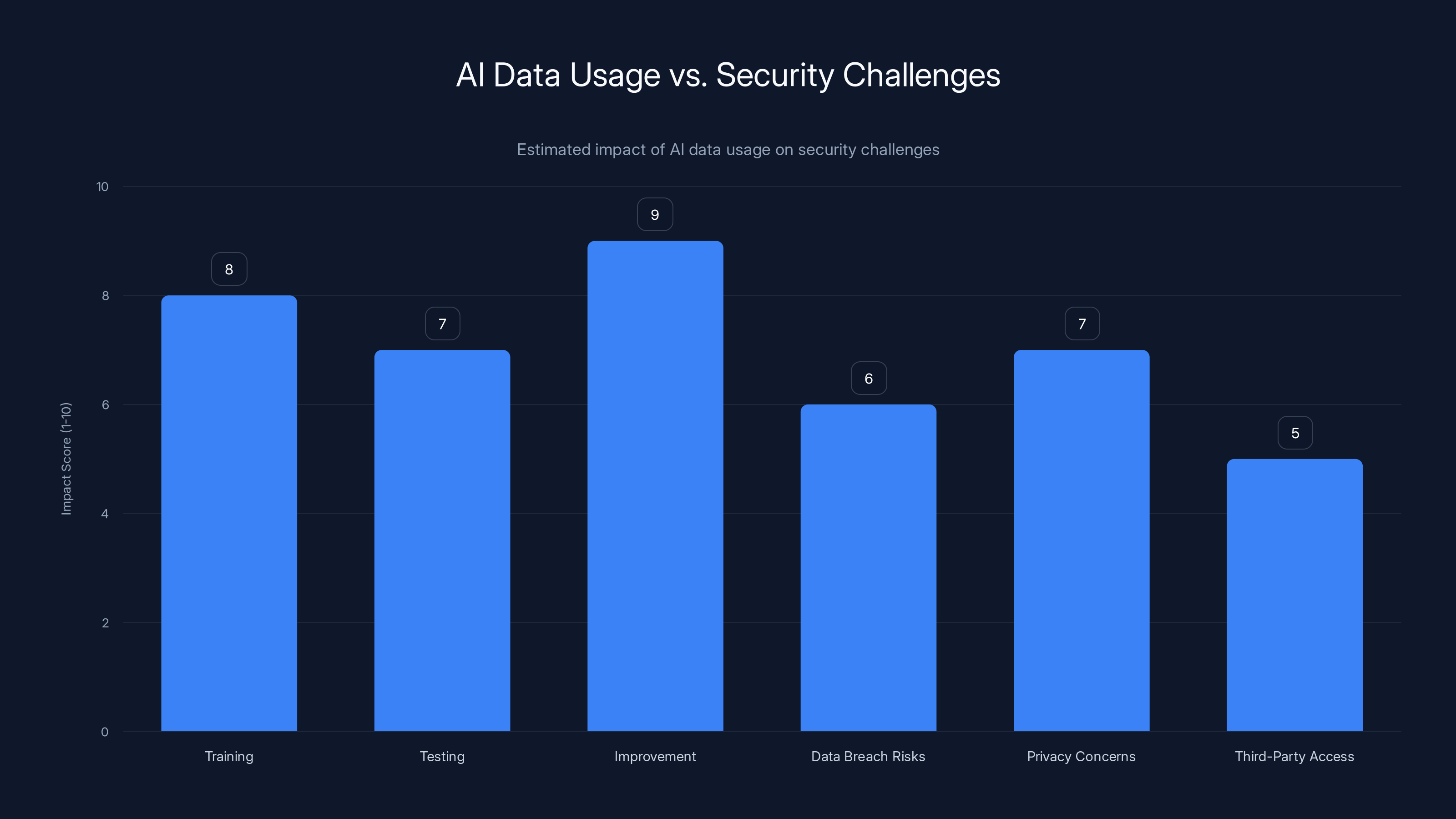

How AI Uses Data

AI applications use data for training, testing, and improving algorithms. This process often involves accessing large datasets, which can include sensitive information.

- Training: AI models require vast amounts of data to learn patterns and make predictions.

- Testing: Data is used to test AI models for accuracy and reliability.

- Improvement: Continuous data input helps refine AI algorithms over time.

Security Challenges

- Data Breach Risks: AI systems are vulnerable to breaches if data handling protocols are not stringent, as highlighted by Wiz's data security insights.

- Privacy Concerns: AI can inadvertently violate user privacy if sensitive data is not properly anonymized.

- Third-Party Access: Collaboration with third-party AI vendors can lead to data exposure if not carefully managed, as discussed in HSCC's guide on third-party AI risk.

Best Practices for Data Security in AI

To mitigate the risks associated with AI and data security, organizations must adopt a comprehensive security strategy.

1. Data Encryption

Encrypt data both at rest and in transit to protect it from unauthorized access. Use strong encryption standards like AES-256.

```plaintext
# Example of AES encryption
openssl enc -aes-256-cbc -salt -in data.txt -out encrypted_data.txt
```

Quick Tip: Rotate encryption keys on a regular schedule to limit the damage if a key is ever compromised.

2. Access Controls

Implement strict access controls to limit who can view or manipulate sensitive data. Use multi-factor authentication for added security.

- Role-Based Access Control (RBAC): Assign permissions based on user roles.

- Least Privilege Principle: Provide users with the minimum level of access necessary.
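The two ideas above can be combined in a small deny-by-default check. The following is a minimal sketch, not a production authorization system: the role names, the `Permission` values, and the `export_records` helper are all hypothetical and exist only for illustration.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_MASKED = auto()      # view records with sensitive fields obscured
    READ_SENSITIVE = auto()   # view full records, including SSNs
    EXPORT = auto()           # send records to an external system


# Hypothetical role-to-permission map; each role gets only what it needs.
ROLE_PERMISSIONS = {
    "support_agent": {Permission.READ_MASKED},
    "fraud_analyst": {Permission.READ_MASKED, Permission.READ_SENSITIVE},
    "data_steward": {
        Permission.READ_MASKED,
        Permission.READ_SENSITIVE,
        Permission.EXPORT,
    },
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Return True only if the role explicitly grants the permission."""
    # Unknown roles map to an empty set, so access is denied by default.
    return permission in ROLE_PERMISSIONS.get(role, set())


def export_records(role: str, records: list[dict]) -> list[dict]:
    """Release records for export, or raise if the role lacks EXPORT."""
    if not is_allowed(role, Permission.EXPORT):
        raise PermissionError(f"role {role!r} may not export customer records")
    return records
```

Under this sketch, a call such as `export_records("support_agent", rows)` raises `PermissionError`, because the support role was never granted `EXPORT`. Sending data to an external AI tool would count as an export and pass through the same gate.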

3. Data Anonymization

Before sharing data with AI applications, anonymize sensitive information to protect user privacy.

- Tokenization: Replace sensitive data with unique identifiers.

- Data Masking: Alter data to obscure sensitive information while retaining utility.
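Both techniques can be sketched with the Python standard library. This example makes two assumptions that are not from the incident report: a keyed hash (HMAC-SHA256) stands in for a vault-backed tokenization service, and Social Security numbers appear in the `123-45-6789` format. The function names and the `tok_` prefix are illustrative.

```python
import hashlib
import hmac
import re

# Matches SSN-like strings written as 3-2-4 digits with hyphens (assumed format).
SSN_PATTERN = re.compile(r"\b(\d{3})-(\d{2})-(\d{4})\b")


def tokenize(value: str, secret_key: bytes) -> str:
    """Replace a sensitive value with a deterministic keyed token.

    The same value and key always yield the same token, so records can
    still be joined on the token without exposing the original value.
    """
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"tok_{digest[:16]}"


def mask_ssns(text: str) -> str:
    """Obscure all but the last four digits of each SSN-like string."""
    return SSN_PATTERN.sub(lambda m: f"***-**-{m.group(3)}", text)
```

For example, `mask_ssns("SSN 123-45-6789 on file")` returns `"SSN ***-**-6789 on file"`. Because SSNs come from a small value space, the key passed to `tokenize` must stay secret; without it, an attacker could hash every possible SSN and reverse the tokens.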

4. Regular Audits and Monitoring

Conduct regular security audits and real-time monitoring to detect and respond to potential threats. As noted in Industrial Cyber, AI-driven supply chains are outpacing traditional cybersecurity defenses.

Quick Tip: Automate monitoring processes using AI-driven security tools for faster threat detection.
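One simple form of automated monitoring is to inspect text before it leaves for an external AI service. The sketch below is a hypothetical illustration rather than a full data loss prevention tool: the logger name, the function name, and the loose SSN-like pattern are all assumptions.

```python
import logging
import re

logger = logging.getLogger("ai_egress_audit")

# Loose pattern for SSN-like strings; real detection would need more rules.
SSN_LIKE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def audit_outbound_text(user: str, destination: str, text: str) -> bool:
    """Return True if the text may be sent, False if it should be blocked."""
    hits = len(SSN_LIKE.findall(text))
    if hits:
        # Log the match count only, never the matched values themselves.
        logger.warning(
            "blocked: user=%s destination=%s ssn_like_matches=%d",
            user, destination, hits,
        )
        return False
    logger.info("allowed: user=%s destination=%s", user, destination)
    return True
```

A gateway or browser extension could call `audit_outbound_text` on each prompt and refuse to forward anything that returns `False`. The resulting log entries also give auditors a record of attempted uploads.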

AI's reliance on data for training, testing, and improvement has high impact scores, indicating significant data usage. However, this also correlates with notable security challenges, such as data breaches and privacy concerns. Estimated data.

Common Pitfalls and How to Avoid Them

Despite best efforts, organizations often fall into common pitfalls when integrating AI with data security.

Over-reliance on AI

AI is a powerful tool, but it should not be relied upon exclusively for security. Human oversight is crucial to catch potential issues AI might miss, as emphasized by Government Technology.

Inadequate Vendor Management

When working with third-party AI vendors, ensure they adhere to your data security standards. Conduct thorough assessments and require compliance certifications.

Lack of Employee Training

Employees must be trained on data security best practices and the specific risks associated with AI applications. Regular training sessions can help mitigate human error, as discussed in Insurance Journal.

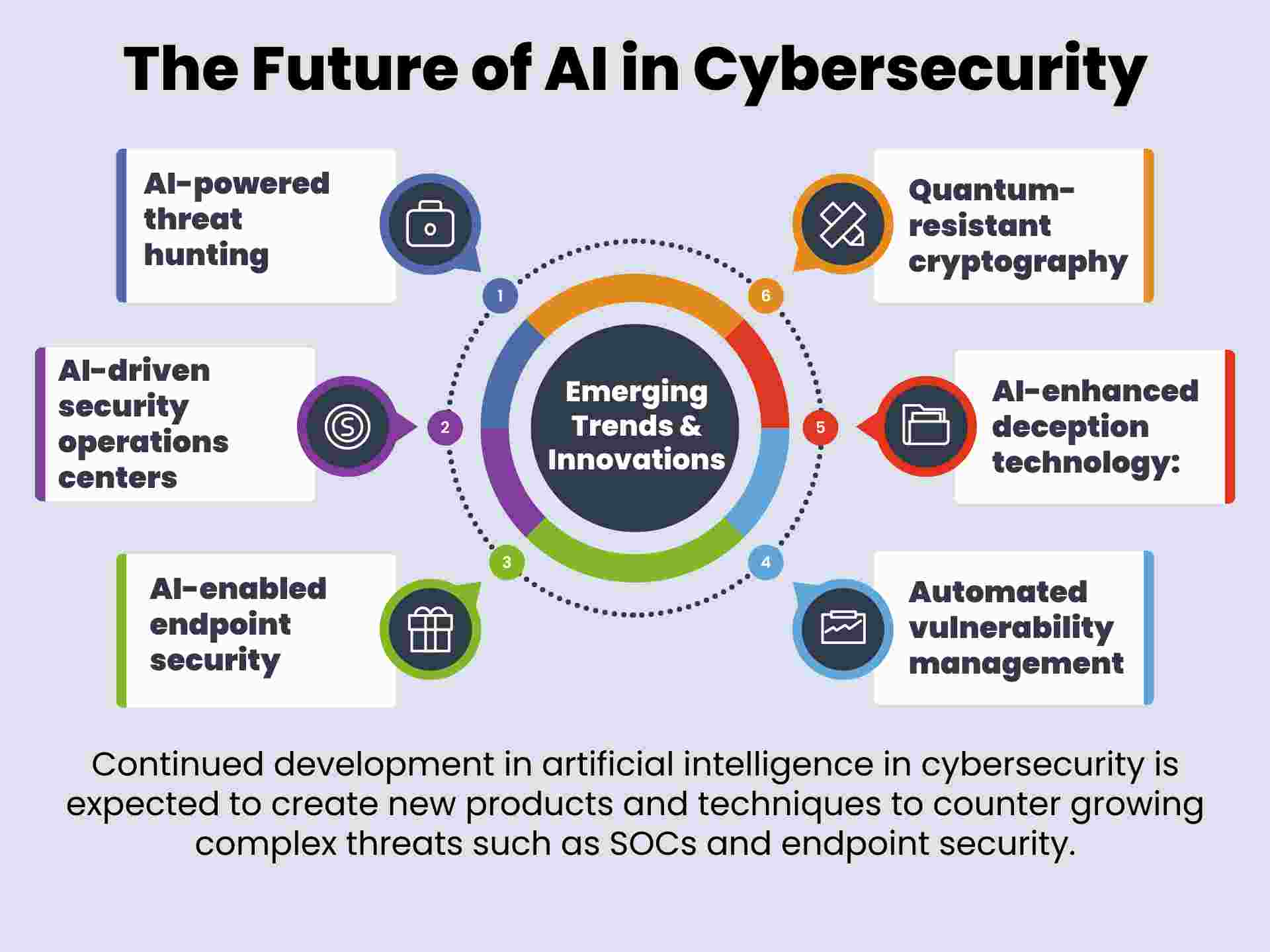

Future Trends in AI and Data Security

As AI technology evolves, so too will data security strategies. Here are some trends to watch for:

1. AI-Driven Security Solutions

AI itself is becoming a tool for enhancing data security. AI-driven security solutions can detect anomalies and potential threats faster than traditional methods, as noted by Futurity.
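To make "detecting anomalies" concrete, here is a deliberately simple statistical stand-in, not a machine learning model: a z-score check that flags users whose record-access count today sits far above their own history. The function name, the input shapes, and the default threshold of 3.0 are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(
    daily_counts: dict[str, list[int]],
    today: dict[str, int],
    threshold: float = 3.0,
) -> list[str]:
    """Return users whose count today is far above their own history."""
    flagged = []
    for user, history in daily_counts.items():
        if len(history) < 2:
            continue  # not enough history to estimate spread
        mu = mean(history)
        sigma = stdev(history)
        count = today.get(user, 0)
        if sigma == 0:
            # Flat baseline: flag any increase over the constant value.
            if count > mu:
                flagged.append(user)
            continue
        # Flag counts more than `threshold` standard deviations above the mean.
        if (count - mu) / sigma > threshold:
            flagged.append(user)
    return flagged
```

Production tools typically use richer features and learned models, but the principle is the same: establish a baseline of normal behavior and surface sharp deviations for human review.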

2. Increased Regulation

Governments worldwide are implementing stricter regulations on data security, particularly concerning AI. Organizations must stay informed and compliant to avoid penalties, as highlighted by HDBuzz.

3. Advanced Threat Detection

AI will continue to improve in identifying complex threats that are challenging for traditional security measures to detect.

Did You Know: By 2025, it's estimated that AI will handle 95% of all cybersecurity threat detection activities. (Gartner)

Conclusion: Proactive Measures for a Secure Future

The recent security lapse at a US bank serves as a stark reminder of the importance of data security in the age of AI. By implementing robust security measures, conducting regular audits, and staying informed about emerging trends, organizations can protect sensitive data and maintain customer trust.

Bottom Line: Proactive data security measures are essential to harness the benefits of AI while minimizing risks.

FAQ

What is data encryption?

Data encryption is the process of converting data into a coded format to prevent unauthorized access. It ensures that only authorized parties can decrypt and access the original data.

How does AI impact data security?

AI impacts data security by increasing the complexity and volume of data processing, which can introduce vulnerabilities if not properly managed. However, AI can also enhance security through advanced threat detection.

What are the benefits of data anonymization?

Data anonymization protects user privacy by obscuring sensitive information while maintaining data utility. It reduces the risk of data breaches and compliance violations.

Why is employee training important for data security?

Employee training is crucial because human error is a common cause of data breaches. Training ensures that employees understand best practices and are aware of the specific risks associated with AI applications.

What are AI-driven security solutions?

AI-driven security solutions use machine learning algorithms to detect and respond to threats faster and more accurately than traditional security measures. They can identify patterns and anomalies that indicate potential security breaches.

How can organizations avoid over-reliance on AI for security?

Organizations can avoid over-reliance by maintaining human oversight, conducting regular audits, and combining AI with traditional security measures to create a balanced approach.
