Understanding the Mistral AI Breach: Implications for AI Security in 2025

The recent breach involving Mistral AI has sent shockwaves through the tech community. Hackers, identified as Team PCP, have stolen a significant amount of proprietary data, raising concerns about the security measures surrounding AI technology. This article delves into the specifics of the breach, its implications for AI security, and strategies to safeguard against future attacks.

TL;DR

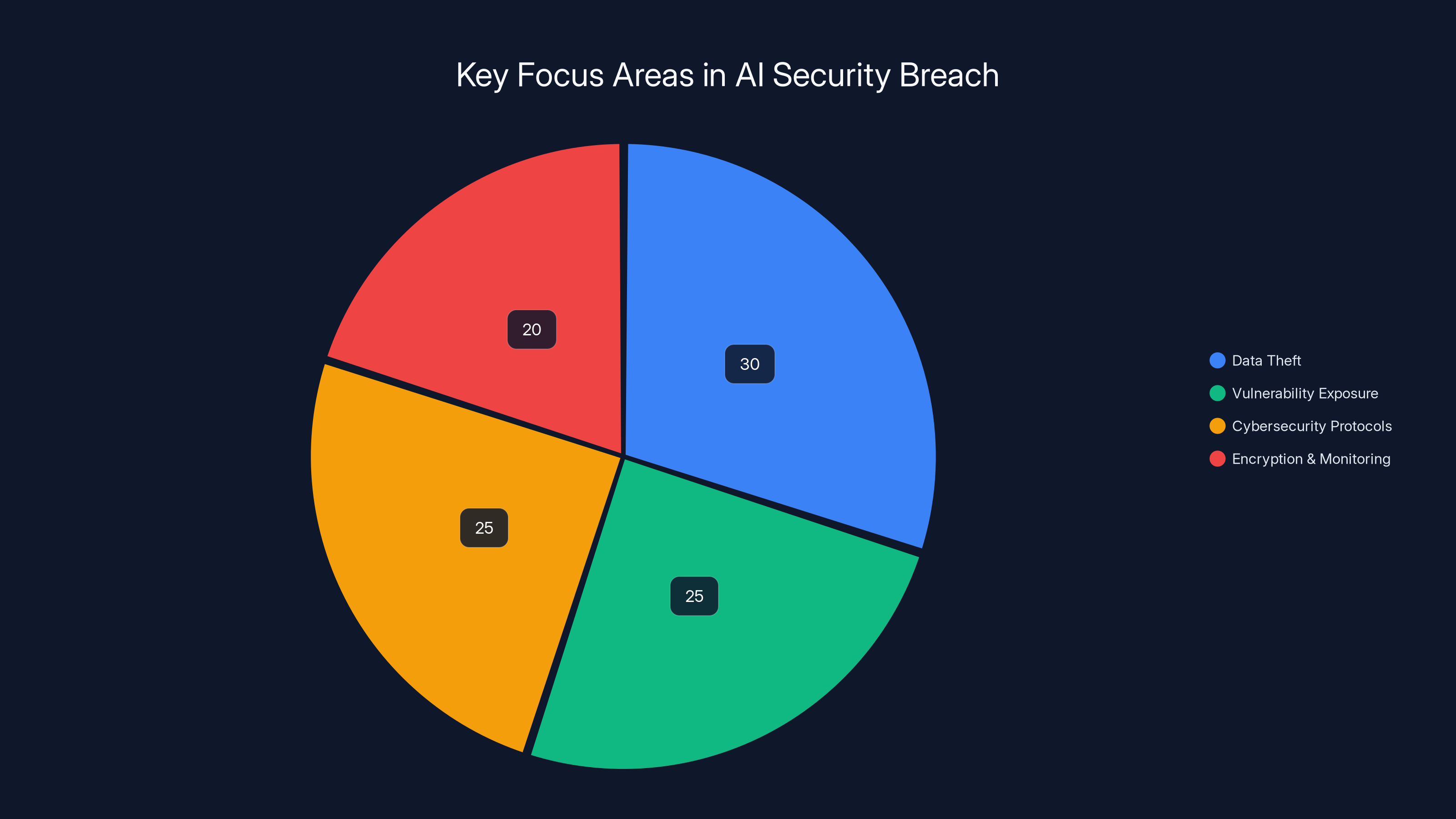

- Team PCP breached Mistral AI and stole approximately 450 repositories.

- The breach exposes weaknesses in how AI source code and data are secured.

- Companies need to strengthen their access controls and cybersecurity protocols.

- AI systems require robust encryption and real-time monitoring.

- Bottom line: Proactive security measures are critical to protect AI assets.

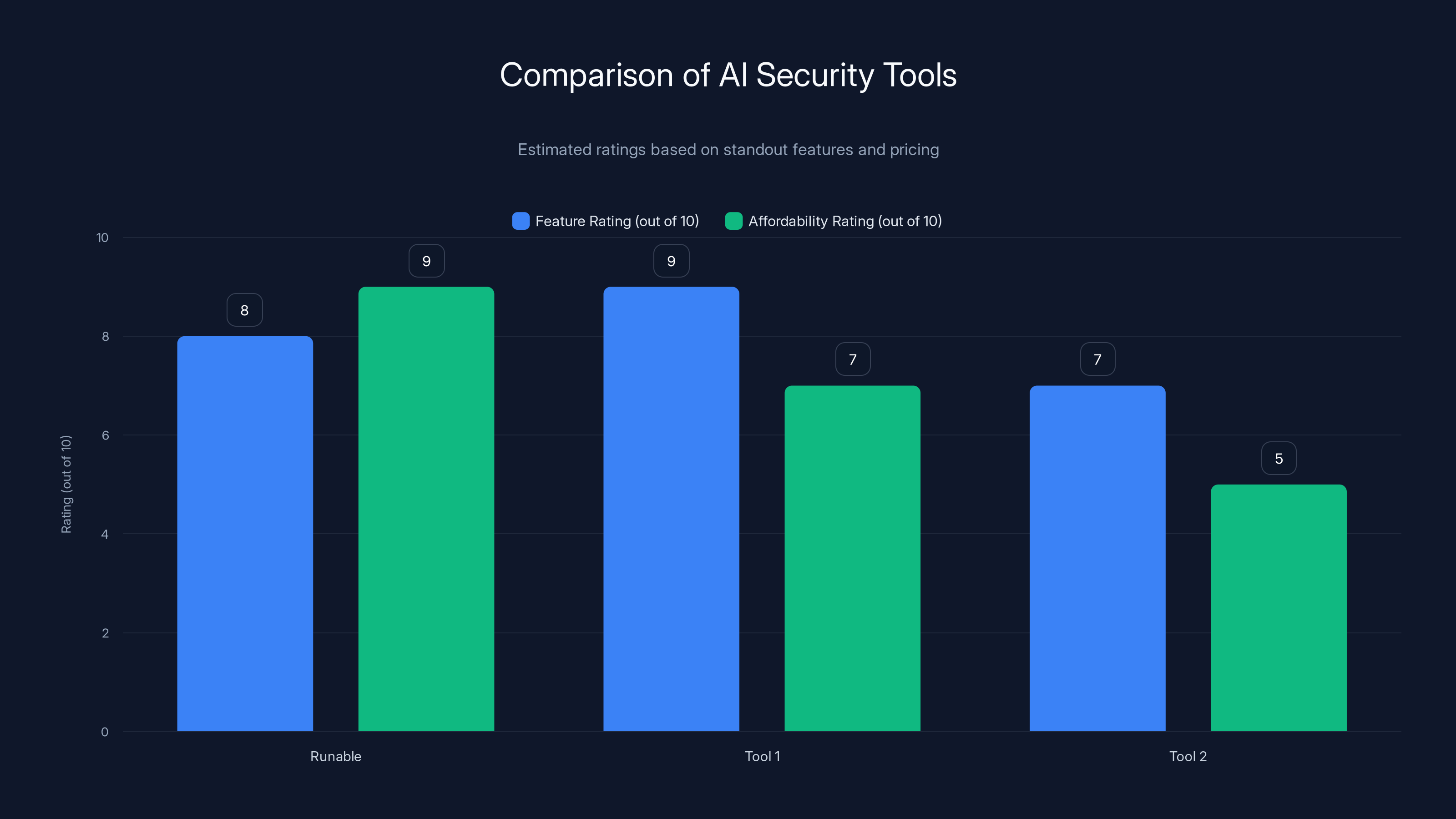

Figure: Estimated comparison of the tools listed under "The Best AI Security Tools at a Glance", based on standout features and pricing.

What Happened in the Mistral AI Breach?

Mistral AI, a prominent company in the artificial intelligence industry, confirmed that it had been the victim of a cyberattack. The breach, carried out by the hacker group Team PCP, resulted in the theft of approximately 450 repositories. These repositories contain around 5GB of internal source code used for training, fine-tuning, and benchmarking AI models.

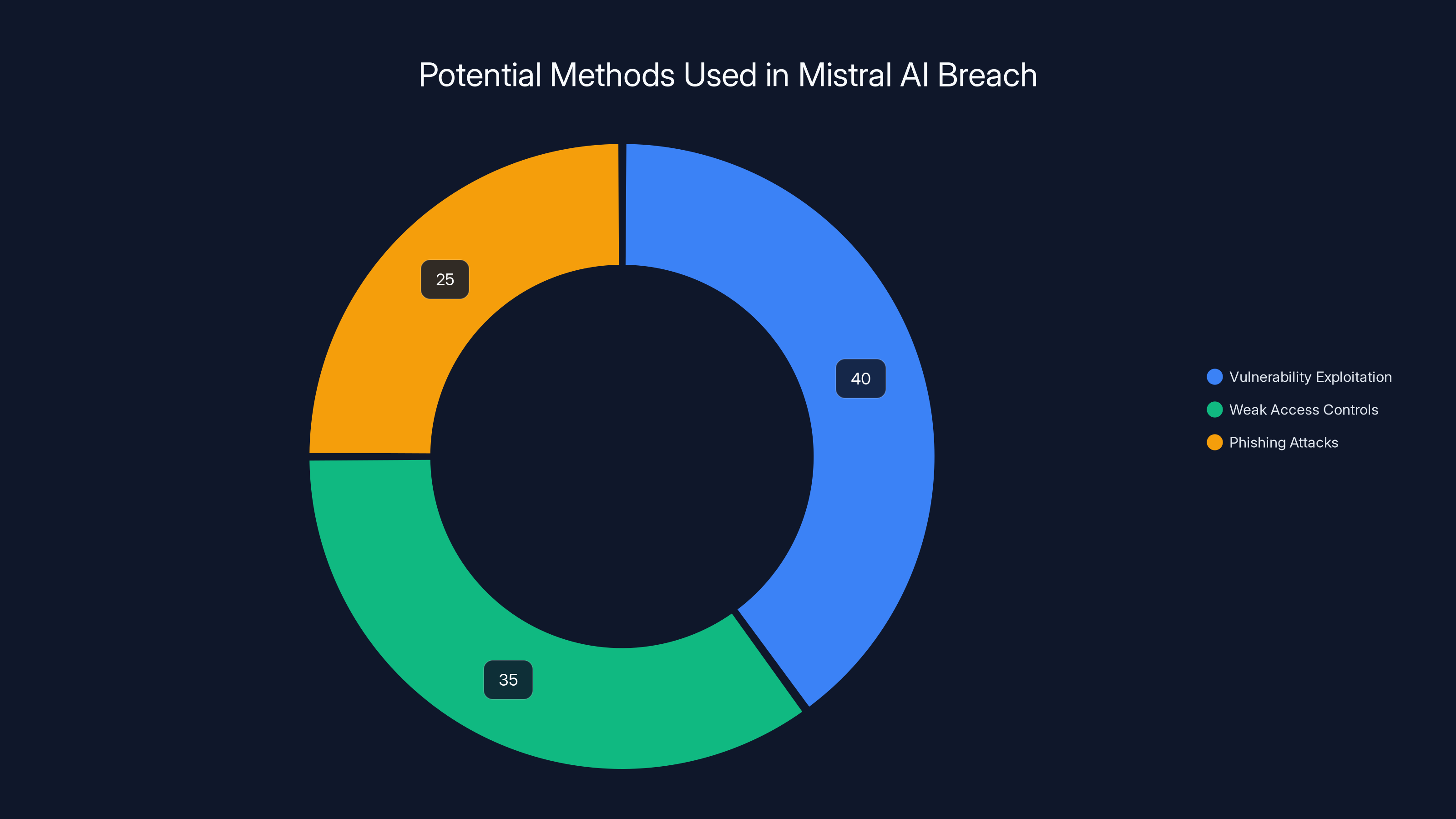

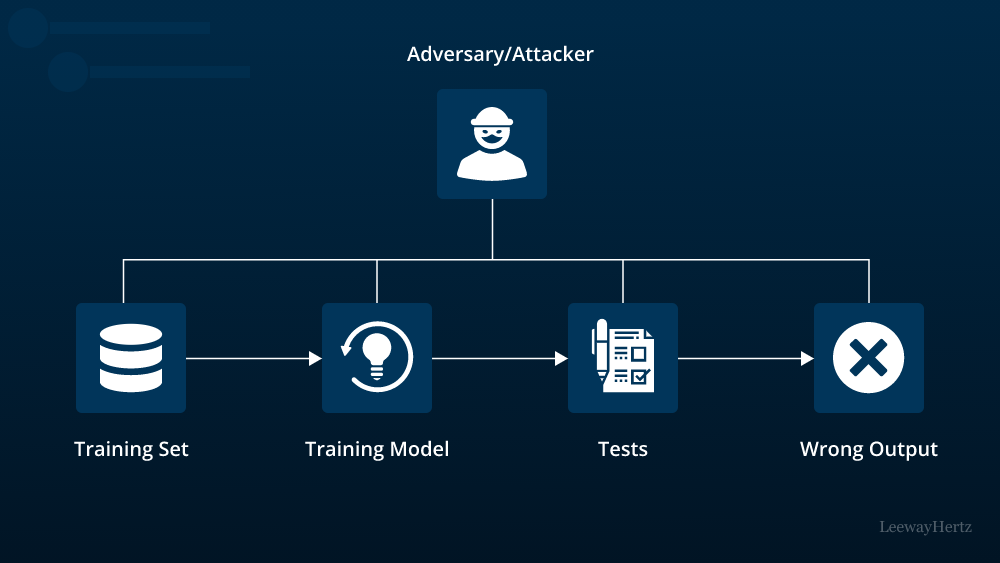

How Did the Breach Occur?

While the exact methods used by the hackers have not been publicly disclosed, breaches of this nature often involve exploited software vulnerabilities, weak access controls, or phishing. Once inside, attackers can move through internal networks and reach sensitive data stored in repositories.

- Vulnerability Exploitation: Hackers typically exploit unpatched software vulnerabilities to gain unauthorized access.

- Weak Access Controls: Inadequate access restrictions can allow unauthorized parties to access sensitive repositories.

- Phishing Attacks: These attacks trick employees into revealing login credentials through deceptive emails or websites.

Figure: Illustrative estimate of likely attack vectors: vulnerability exploitation (40%), weak access controls (35%), and phishing attacks (25%). These percentages are estimates, not confirmed findings about the Mistral AI breach.

The Significance of Stolen Data

The data stolen from Mistral AI is central to its AI models. It includes source code for training those models, which underpins the company's competitive advantage in AI development. Exposure of such code can have severe financial and reputational repercussions.

Implications for AI Development

- Intellectual Property Theft: The stolen source code represents years of research and development, which could now be used by competitors to replicate Mistral's AI models.

- Competitive Disadvantage: With proprietary algorithms and methodologies exposed, competitors can potentially bypass years of development work.

Protecting AI Systems: Best Practices

In light of the Mistral AI breach, it is crucial for organizations to re-evaluate their cybersecurity measures. Here are some best practices to enhance the security of AI systems:

1. Implement Robust Access Controls

- Role-Based Access Control (RBAC): Restrict access based on user roles within the organization.

- Multi-Factor Authentication (MFA): Add an extra layer of security by requiring two or more verification steps.

- Regular Audits: Conduct frequent security audits to identify and address potential vulnerabilities.
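To make the RBAC idea concrete, here is a minimal sketch of a deny-by-default permission check. The role names, repository groups, and the `is_allowed` helper are hypothetical and chosen only for illustration. A real deployment would normally rely on the access controls built into its source-hosting platform rather than a hand-rolled table.

```python
from enum import Enum


class Permission(Enum):
    READ = "read"
    WRITE = "write"


# Hypothetical roles and repository groups, for illustration only.
ROLE_GRANTS: dict[str, dict[str, set[Permission]]] = {
    "ml-engineer": {"training-code": {Permission.READ, Permission.WRITE}},
    "evaluator": {"benchmarks": {Permission.READ}},
    "contractor": {"docs": {Permission.READ}},
}


def is_allowed(role: str, repo_group: str, permission: Permission) -> bool:
    """Deny by default: allow only if the role explicitly holds the permission."""
    return permission in ROLE_GRANTS.get(role, {}).get(repo_group, set())


if __name__ == "__main__":
    print(is_allowed("ml-engineer", "training-code", Permission.WRITE))
    print(is_allowed("contractor", "training-code", Permission.READ))
```

The important design choice is the default: an unknown role or an unlisted repository group yields no access, so a forgotten entry fails closed instead of open.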

2. Encrypt Sensitive Data

- Data Encryption: Encrypt data at rest and in transit to prevent unauthorized access.

- Key Management: Use secure key management practices to safeguard encryption keys.
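One common key management practice is to derive a separate data key for each repository group from a single master key, so that a leaked data key exposes only one group. The sketch below implements HKDF (RFC 5869) with SHA-256 using only the Python standard library. The `derive_repo_key` helper and the `repo-key:` label are assumptions made for this example, and in practice the master key would typically live in a key management service or hardware security module rather than in process memory.

```python
import hashlib
import hmac
import secrets

HASH_LEN = hashlib.sha256().digest_size  # 32 bytes


def hkdf_sha256(key_material: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF extract-then-expand (RFC 5869) using HMAC-SHA256."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested key is too long for HKDF-SHA256")
    # Extract: condense the input key material into a pseudorandom key.
    prk = hmac.new(salt or b"\x00" * HASH_LEN, key_material, hashlib.sha256).digest()
    # Expand: generate output blocks until enough bytes are available.
    output, block, counter = b"", b"", 1
    while len(output) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        output += block
        counter += 1
    return output[:length]


def derive_repo_key(master_key: bytes, repo_group: str) -> bytes:
    """Derive a 32-byte data key bound to one (hypothetical) repository group."""
    return hkdf_sha256(master_key, salt=b"", info=b"repo-key:" + repo_group.encode())


if __name__ == "__main__":
    master_key = secrets.token_bytes(32)  # stand-in for a key held in a KMS or HSM
    print(derive_repo_key(master_key, "training-code").hex())
```

The derived keys would then be passed to an authenticated cipher such as AES-GCM from a vetted cryptography library; this sketch covers only the key derivation step, not the encryption itself.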

3. Real-Time Monitoring and Incident Response

- Intrusion Detection Systems (IDS): Deploy IDS to monitor network traffic for suspicious activity.

- Incident Response Plan: Develop and regularly update an incident response plan to quickly address breaches.
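Monitoring does not have to start with a full IDS deployment. As a rough sketch, the class below flags any account that clones an unusually large number of distinct repositories within a sliding time window, which is the kind of pattern a bulk repository theft would produce. The `CloneRateMonitor` name, the thresholds, and the event shape are all assumptions for illustration, and the sketch assumes events arrive in chronological order.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class CloneRateMonitor:
    """Flags users who clone too many distinct repositories in a time window."""

    def __init__(self, max_repos: int = 20, window: timedelta = timedelta(hours=1)):
        self.max_repos = max_repos
        self.window = window
        # user -> deque of (timestamp, repository) events, oldest first
        self._events: dict[str, deque] = defaultdict(deque)

    def record(self, user: str, repo: str, when: datetime) -> bool:
        """Record a clone event; return True if the user now exceeds the limit."""
        events = self._events[user]
        events.append((when, repo))
        # Drop events that have fallen out of the sliding window.
        cutoff = when - self.window
        while events and events[0][0] < cutoff:
            events.popleft()
        distinct_repos = {name for _, name in events}
        return len(distinct_repos) > self.max_repos


if __name__ == "__main__":
    monitor = CloneRateMonitor(max_repos=3, window=timedelta(minutes=10))
    start = datetime(2025, 1, 1, 12, 0)
    for i, repo in enumerate(["repo-a", "repo-b", "repo-c", "repo-d"]):
        alert = monitor.record("alice", repo, start + timedelta(minutes=i))
        print(repo, "ALERT" if alert else "ok")
```

An alert from a check like this would feed the incident response plan, for example by suspending the account's tokens pending review.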

Figure: Estimated emphasis on data theft and vulnerability exposure (illustrative data), highlighting the need for improved cybersecurity measures.

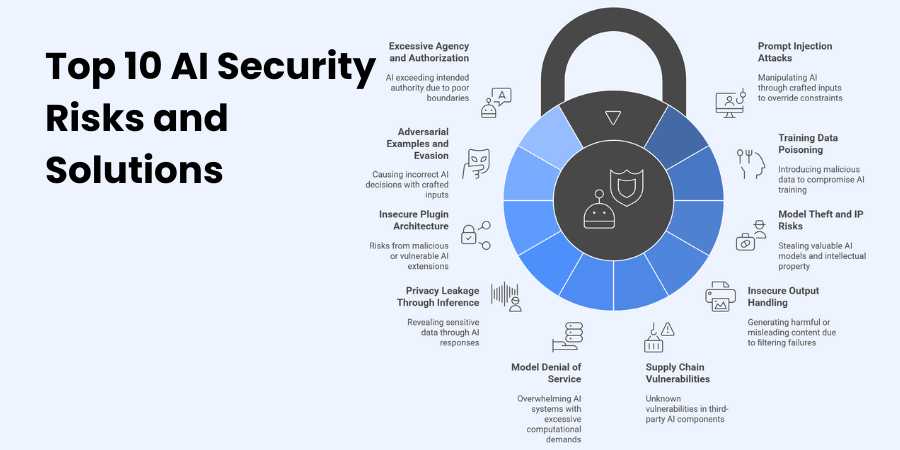

Common Pitfalls in AI Security and Solutions

Despite the best intentions, many organizations fall into common security pitfalls. Here are some pitfalls and how to avoid them:

Pitfall 1: Overlooking Human Error

Solution: Regular security training and awareness programs can mitigate the risk of human error leading to breaches.

Pitfall 2: Insufficient Security Budgets

Solution: Allocate sufficient resources to cybersecurity, recognizing it as an essential investment, not a cost.

Pitfall 3: Ignoring Third-Party Risks

Solution: Vet third-party vendors thoroughly and ensure they adhere to your security standards.

Future Trends in AI Security

The field of AI security is continually evolving. Here are some future trends that organizations should be aware of:

1. AI-Powered Security Solutions

AI itself is becoming a tool for enhancing cybersecurity measures. AI-powered security solutions can analyze vast amounts of data to detect anomalies and predict potential threats before they occur.
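Commercial AI-powered tools use far richer models, but the core idea of anomaly detection can be sketched with a simple statistical baseline. The example below is not machine learning: it compares a new observation against the mean and standard deviation of past activity and flags large deviations. The `is_anomalous` function, the sample counts, and the threshold of 3 standard deviations are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def is_anomalous(baseline: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Return True if `observed` deviates from the baseline by more than
    `threshold` sample standard deviations (a z-score test).

    `baseline` needs at least two values; otherwise `stdev` raises
    statistics.StatisticsError.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is unusual.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


if __name__ == "__main__":
    # Hypothetical daily counts of repositories accessed by one account.
    daily_repo_access = [10, 12, 11, 9, 13, 10, 11]
    print(is_anomalous(daily_repo_access, 12))
    print(is_anomalous(daily_repo_access, 450))
```

A baseline like this is easy to reason about and cheap to run, which makes it a useful first layer before more sophisticated models are introduced.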

2. Quantum Encryption

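As quantum computing matures, it is expected to threaten widely used public-key algorithms such as RSA and elliptic-curve cryptography. Quantum encryption, used here to cover both post-quantum algorithms that run on classical hardware and quantum key distribution, is likely to play a growing role in keeping data secure against both classical and quantum attackers.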

3. Decentralized Security Models

Decentralization can minimize the risk of a single point of failure. By distributing data across multiple nodes, organizations can enhance their resilience against attacks.

Conclusion

The Mistral AI breach serves as a stark reminder of the vulnerabilities that exist within our digital landscapes. As AI continues to integrate into more aspects of business and society, the need for robust security measures becomes increasingly critical. Organizations must adopt a proactive approach to cybersecurity, implementing advanced technologies and practices to protect their valuable digital assets.

FAQ

What is the Mistral AI breach?

The Mistral AI breach refers to the unauthorized access and theft of sensitive data from Mistral AI by the hacker group Team PCP.

How did hackers access Mistral AI's data?

While the exact methods are undisclosed, common techniques include exploiting software vulnerabilities, weak access controls, and phishing attacks.

What data was stolen in the Mistral AI breach?

Hackers stole approximately 450 repositories containing around 5GB of internal source code used for training, fine-tuning, and benchmarking AI models.

How can companies protect against AI breaches?

Implement robust access controls, encrypt data, conduct regular security audits, and develop a comprehensive incident response plan.

Why is data encryption important?

Encryption protects sensitive data from unauthorized access by converting it into ciphertext that can only be read with the correct key. Even if encrypted data is stolen, it remains unreadable as long as the key stays secure.

What are the future trends in AI security?

AI-powered security solutions, quantum encryption, and decentralized security models are emerging trends to watch.

The Best AI Security Tools at a Glance

| Tool | Best For | Standout Feature | Pricing |
|---|---|---|---|
| Runable | AI automation | AI agents for presentations, docs, reports, images, videos | $9/month |
| Tool 1 | Threat detection | Integrates with 8,000+ apps | Free plan available; paid from $19.99/month |
| Tool 2 | Data security | Automated data profiling | By request |


The Mistral AI breach is a sobering lesson in the importance of cybersecurity in today's digital age. By understanding the intricacies of such breaches and implementing cutting-edge security measures, organizations can safeguard their invaluable AI assets and ensure a more secure future.

Key Takeaways

- Data breaches in AI can lead to significant competitive disadvantages.

- Implementing robust security measures is critical for protecting AI assets.

- AI-powered security solutions offer advanced threat detection capabilities.

- Quantum encryption is emerging as a potential long-term security measure, though it is not yet widely deployed.

- Decentralized security models reduce the risk of single points of failure.
