Introduction: The New Frontier of Search Scams

You're searching for your bank's customer service number. Google throws up an AI Overview, a sleek synthesized summary that looks authoritative and definitive. You dial the number. Twenty minutes later, you realize you just gave your banking credentials to a scammer.

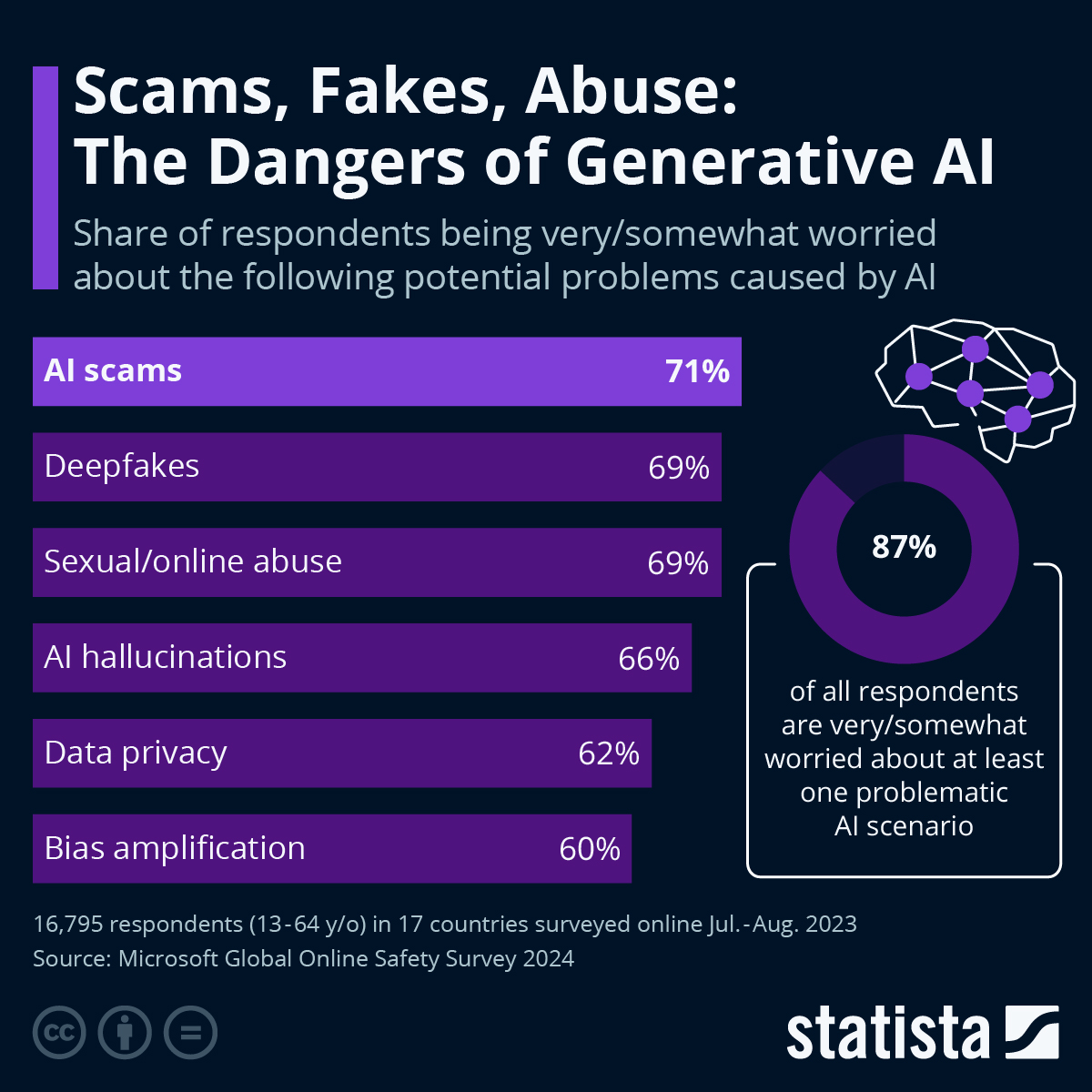

This isn't hypothetical. It's happening right now, and Google's push to replace traditional search results with AI-powered summaries has created a perfect storm for fraud. According to Motorcycle & Powersports News, this shift in search paradigms significantly increases the risk of scams.

For years, scams on the internet were relatively predictable. You'd get an email asking for a "verification of account information." You'd encounter a fake website with slight misspellings. You'd see suspicious links in ads. Smart users learned to spot these red flags.

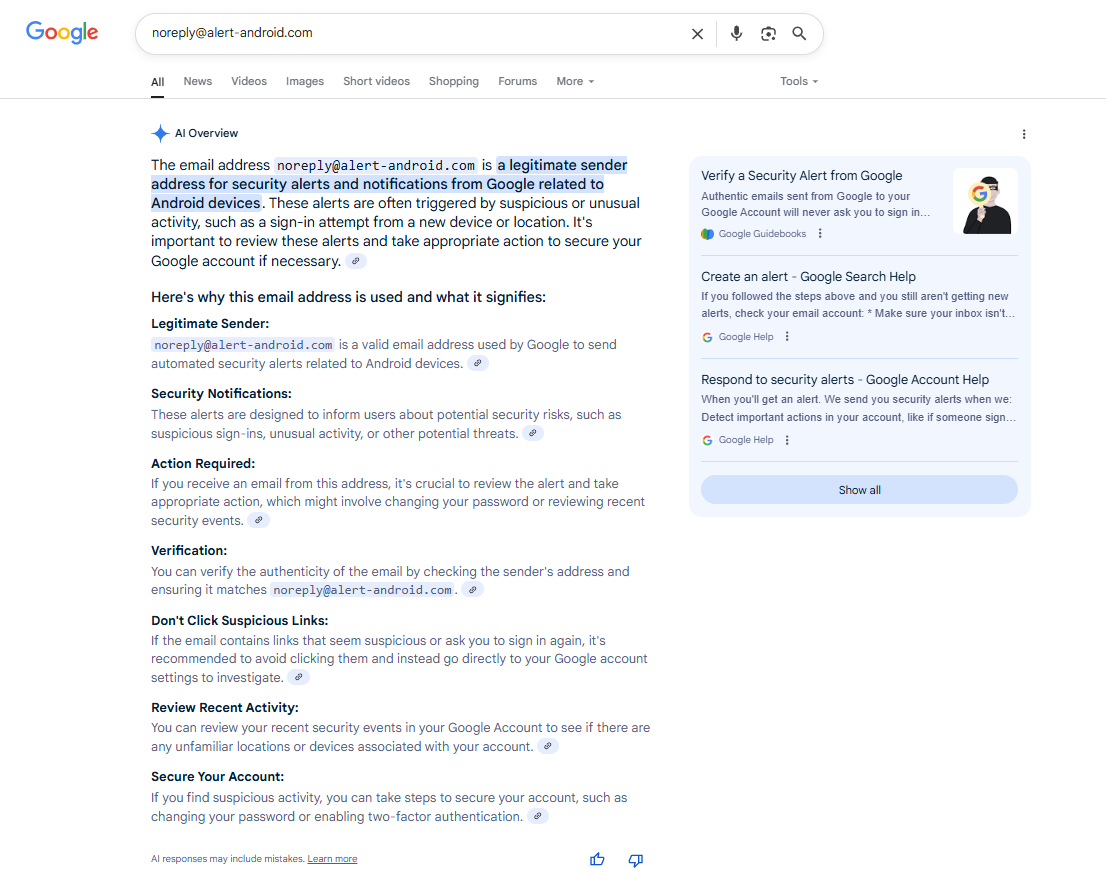

But AI Overviews change the game entirely. They don't look like scams. They look like Google itself—authoritative, verified, and trustworthy. When the AI pulls information from compromised sources and presents it as fact, users have virtually no way to tell the difference between legitimate data and fraudulent information without doing additional research. A Wired article highlights how these overviews can mislead users into scams.

The core problem isn't that AI is bad at summarizing information (though it often is). The problem is that AI Overviews present synthesized information with the same visual weight and authority as Google's official verdict. There's no asterisk saying "check this with the official source." There's no warning that the phone number might be fake. It's just there, formatted like truth.

What makes this dangerous is the shift in user behavior. Traditional Google searches forced you to click through to websites, which created friction. That friction was actually a feature, not a bug—it gave you a moment to evaluate whether you trusted the source. AI Overviews eliminate that friction. They give you the answer directly, without requiring you to verify.

Scammers have figured this out. And they're exploiting it.

In this guide, we'll walk through exactly how these scams work, where they're appearing, what the real risks are, and—most importantly—how to protect yourself without abandoning Google Search entirely.

TL;DR

- Scammers are injecting fake phone numbers into AI Overviews by publishing fraudulent information on low-profile websites that AI then scrapes and presents as fact

- The design of AI Overviews makes fraud easier because users trust synthesized summaries more than traditional search results, removing the friction that previously caught scams

- This extends beyond phone numbers to investment advice, medical claims, product recommendations, and any other information that AI can be tricked into summarizing

- Verification is essential every single time you encounter specific facts, figures, or contact information in an AI Overview, even from seemingly reputable sources

- There's no perfect defense, but multiple checks dramatically reduce your risk of falling victim to these evolving scams

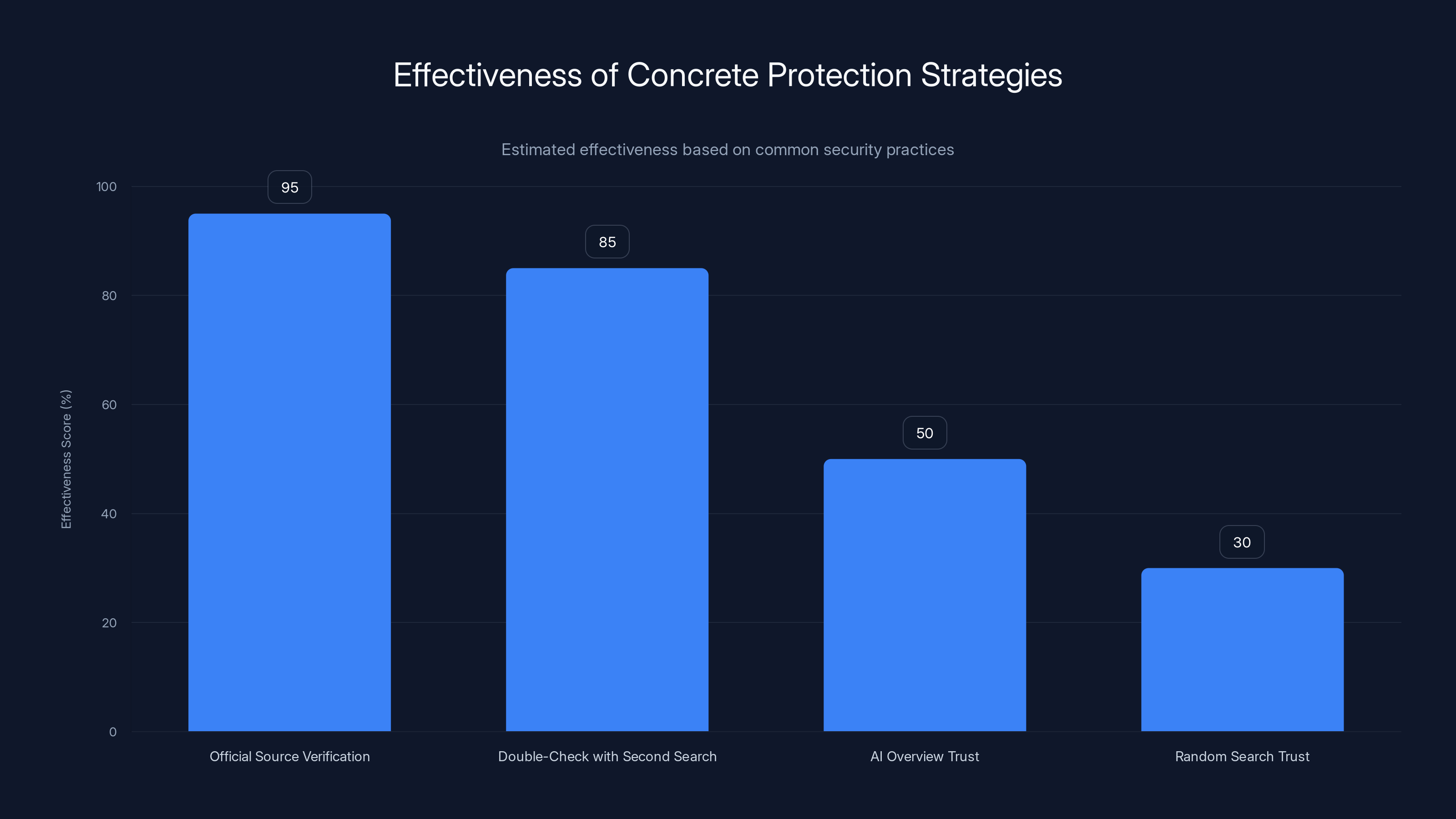

Official Source Verification is the most effective strategy with an estimated 95% effectiveness, while relying solely on AI Overview or random search results is significantly less reliable.

How the Scam Pipeline Works: From Fake Sites to Your Screen

Understanding how these scams actually get injected into AI Overviews requires understanding Google's AI Overview system itself. It's not magic—it's a predictable process with specific vulnerabilities.

Here's the flow: A scammer wants to intercept calls intended for a legitimate company like Bank of America or Apple Support. They can't hack Google directly. They can't force their fake number into the Google index through brute force. Instead, they do something far simpler.

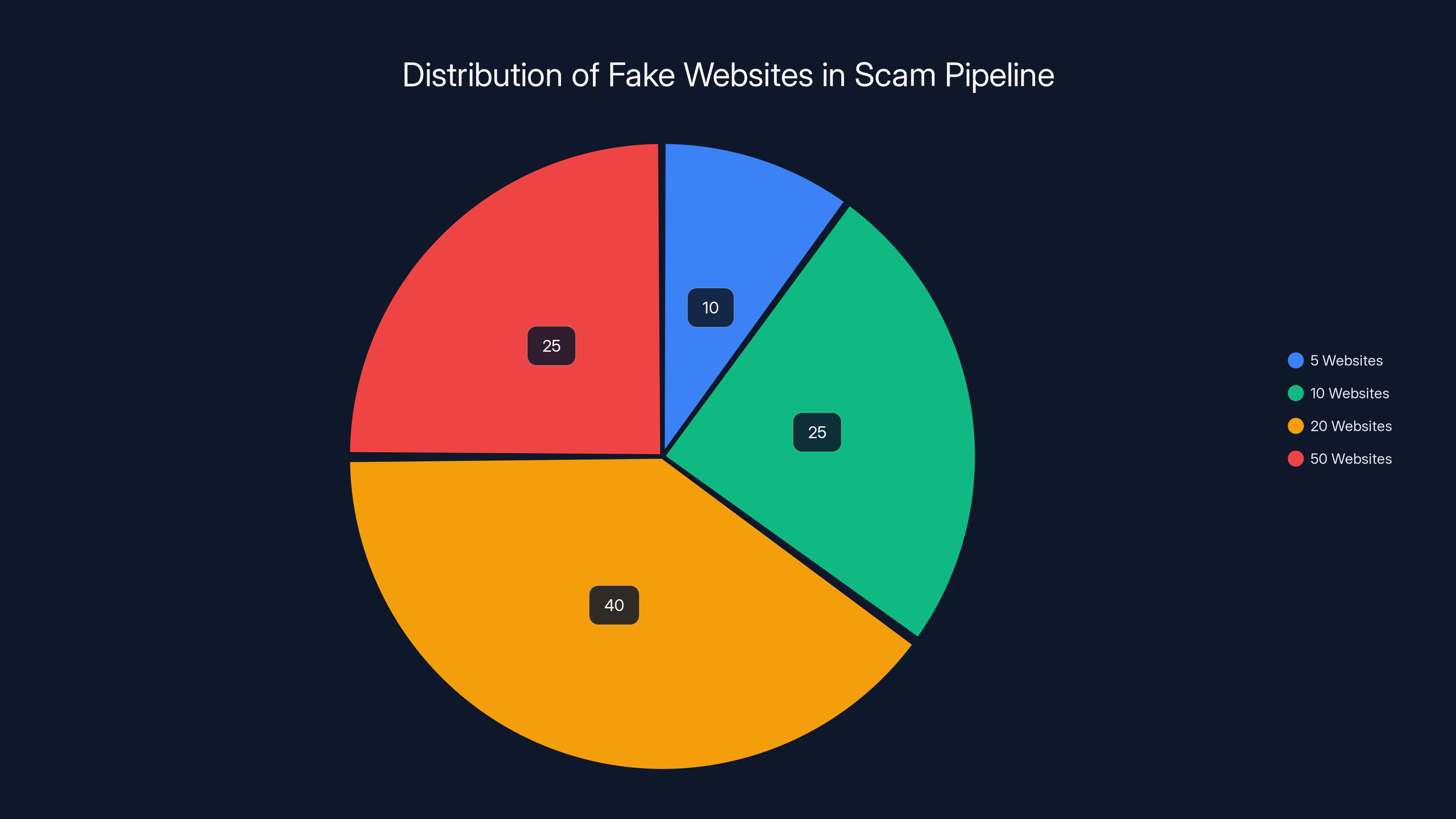

They create multiple low-profile websites—maybe 5, 10, or 20 of them—that exist for no purpose other than to rank for specific queries. These sites might pose as review sites, forum posts, or customer service aggregators. They publish content that includes the fake phone number alongside legitimate company information.

"Need to reach Bank of America? Here are contact options:" followed by several phone numbers, one of which is controlled by the scammer. They might do this on 50 different websites, hoping that when AI aggregates information, the fake number gets included.

Google's AI Overviews work by:

- Identifying your query and understanding what information you're looking for

- Scraping multiple sources from across the web, including websites, forums, and user-generated content

- Running those sources through an AI language model that synthesizes the information into a coherent summary

- Presenting that summary at the top of your search results with citations to the sources

The vulnerability is in steps two and three. The AI doesn't verify. It doesn't call the phone number to make sure it's real. It doesn't check whether the website is authoritative. It just aggregates.

If a fake phone number appears on 10 different websites—especially if those sites are hosted on different domains with different owners—the AI might assume it's legitimate information. After all, multiple sources are saying the same thing, right?

This is where the scam becomes elegant. You're not relying on a single fake website. You're creating a distributed network of low-credibility sources, each saying the same fraudulent information. When the AI aggregates, it sees consensus.
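To make the consensus problem concrete, here's a minimal sketch of a naive aggregator that gives every domain one vote and picks the most-listed number. This is an illustration only, not how Google's system is actually built. The function name, the `.example` domains, and the 555-01xx phone numbers are all made up for the example.

```python
from collections import Counter

def naive_consensus(pages):
    """Return the phone number listed by the most distinct domains.

    `pages` is a list of (domain, phone_number) pairs. No source is
    verified or weighted; every domain simply counts as one vote.
    """
    votes = Counter()
    seen = set()
    for domain, number in pages:
        if (domain, number) not in seen:  # one vote per domain per number
            seen.add((domain, number))
            votes[number] += 1
    number, _count = votes.most_common(1)[0]
    return number

# Hypothetical data: two legitimate sources versus five throwaway sites.
REAL = "800-555-0100"
FAKE = "800-555-0199"
pages = [("bank.example", REAL), ("news.example", REAL)]
pages += [(f"helpdesk-{i}.example", FAKE) for i in range(5)]

print(naive_consensus(pages))  # the fake number wins, 5 votes to 2
```

Nothing in this toy aggregator asks who owns the domains or whether the official site agrees. Counting sources is cheap, and that's exactly why it's cheap to game.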

The scammer invests minimal effort. They might spend $500 creating 20 throwaway websites. If even 0.1% of people who see that AI Overview call the fake number, they could easily make that money back—and far more.
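The arithmetic behind that claim can be sketched in a few lines. Only the $500 setup cost and the 0.1% call rate come from the text above. The number of views, the share of callers who get defrauded, and the dollars taken per victim are placeholder assumptions chosen purely to show the shape of the calculation.

```python
import math

def break_even_victims(setup_cost, avg_take):
    """Smallest whole number of successful scams that covers the setup cost."""
    return math.ceil(setup_cost / avg_take)

def expected_profit(views, call_rate, success_rate, avg_take, setup_cost):
    """Expected profit for one campaign under the stated rates."""
    callers = views * call_rate        # people who dial the fake number
    victims = callers * success_rate   # callers who hand over information
    return victims * avg_take - setup_cost

SETUP_COST = 500      # from the text: 20 throwaway websites
CALL_RATE = 0.001     # from the text: 0.1% of viewers call
VIEWS = 100_000       # assumption: people who see the AI Overview
SUCCESS_RATE = 0.10   # assumption: share of callers who are defrauded
AVG_TAKE = 1_000      # assumption: dollars taken per victim

print(break_even_victims(SETUP_COST, AVG_TAKE))
print(round(expected_profit(VIEWS, CALL_RATE, SUCCESS_RATE, AVG_TAKE, SETUP_COST)))
```

With these placeholder inputs, a single successful scam covers the setup cost, and the campaign nets roughly $9,500. Change the assumptions and the totals move, but the asymmetry between cost and payoff stays.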

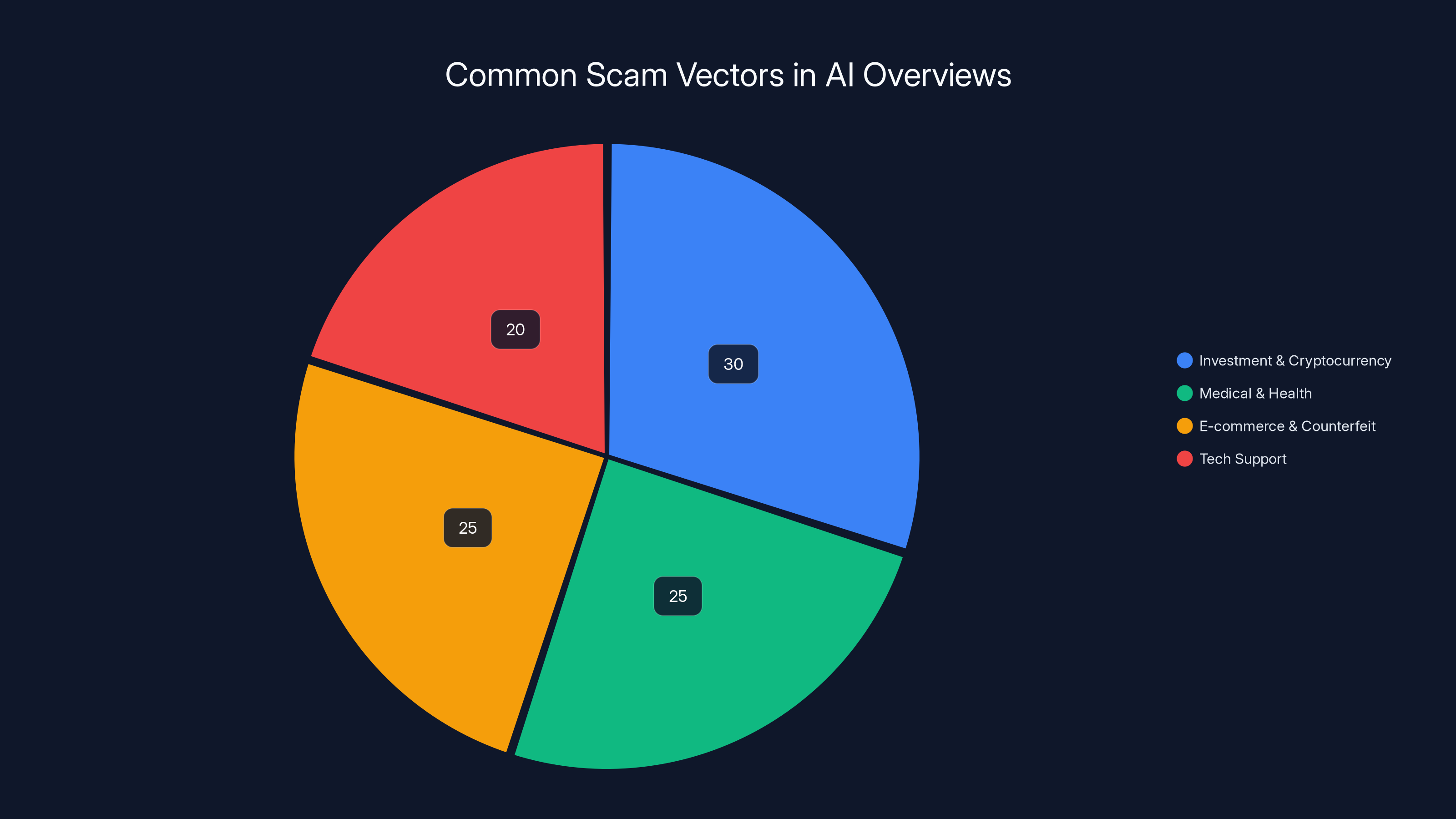

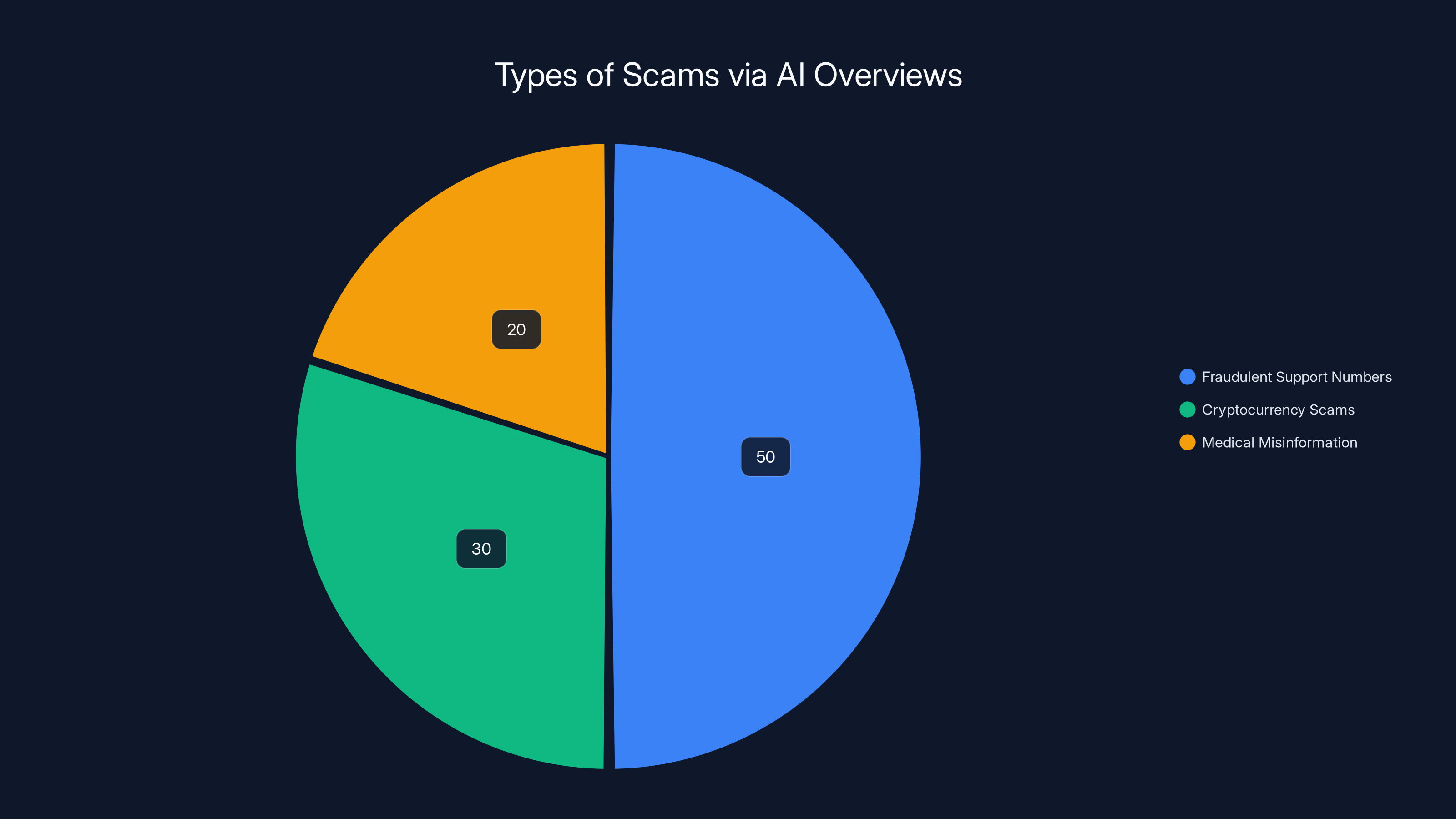

Investment and cryptocurrency scams are the most prevalent in AI Overviews, followed closely by medical misinformation and counterfeit product scams. Estimated data.

Real-World Examples: How This Is Actually Playing Out

This isn't theoretical. Multiple news outlets and security researchers have documented specific cases where this has already happened.

In 2024, both The Washington Post and Digital Trends reported instances where Google AI Overviews provided fraudulent support phone numbers for major banks and financial institutions. Users would search for their bank's customer service line, get the AI Overview, and call what they thought was their bank. The person who answered the phone would claim to be from customer support, ask security questions, and request account information.

One particularly notable case involved credit union scams. Several credit unions issued warnings to their members specifically about fake phone numbers appearing in Google search results. Some members lost thousands of dollars before realizing they'd called scammers instead of their actual financial institution.

The mechanics are always the same:

- User searches for legitimate company + "customer service" or "support number"

- AI Overview appears with a phone number

- User trusts Google and calls immediately

- Scammer on the other end pretends to be customer service

- Through social engineering, they extract sensitive information

- Account compromise or financial theft follows

What's insidious is that the scammer doesn't need sophisticated technology. They don't need to compromise Google's servers or exploit zero-days. They just need to understand how AI systems work and exploit the gap between what AI perceives as "verified information" and what actually is.

Beyond phone numbers, researchers have documented AI Overviews being used to:

Promote cryptocurrency scams: Fake reviews and fraudulent websites claiming "proven" investment returns get aggregated into AI summaries that sound authoritative.

Spread medical misinformation: Dubious health claims from unqualified sources get synthesized into what appears to be medical advice.

Direct users to malicious websites: AI Overviews might recommend "the best place to download" something, with the recommendation pointing to infected or phishing sites.

Promote counterfeit products: Fake retailers get recommended alongside legitimate ones, with no way for users to tell them apart from the AI summary alone.

The pattern is consistent: wherever there's financial incentive for fraud, scammers have started exploiting the credibility that AI Overviews inherently grant to synthesized information.

Why AI Overviews Are Uniquely Vulnerable to Scams

This isn't a problem with AI in general. It's a problem with how Google's AI Overviews are specifically designed and deployed.

Traditional Google Search had friction built in. When you searched for a bank's phone number, Google gave you a list of results. You had to click through. You had to read the domain name. You had to evaluate whether the site looked legitimate. That friction meant scams were easier to spot.

Think about the visual hierarchy of traditional Google results:

- You see multiple options

- Each has a domain name

- You make an active choice

- You evaluate the website before trusting it

Now think about AI Overviews:

- You see one authoritative answer

- No domain information for the key facts

- No active choice to make

- Information is pre-evaluated by AI (seemingly)

The shift from "here are sources you need to evaluate" to "here is the answer" fundamentally changes user behavior.

Psychologically, this matters enormously. When you see multiple search results, your brain is in "evaluator mode." You're thinking critically. You're asking yourself whether each source is trustworthy.

When you see an AI Overview, your brain is in "receiver mode." Google is telling you something. The format and presentation suggest that the information has already been vetted. Why would Google show you something wrong?

This cognitive shift is compounded by the fact that AI Overviews often do contain accurate information. They correctly summarize facts from websites. They accurately pull data from multiple sources. So when you've seen 10 AI Overviews that were correct, you're likely to trust the 11th, even if it's not.

There's also the authority problem. Google has spent 25 years building credibility as a reliable search engine. That credibility transfers to AI Overviews. When you see an AI Overview, you're not thinking "this is an algorithm that might be wrong." You're thinking "this is Google telling me something."

Scammers understand this psychological dynamic. They're not trying to trick engineers or security researchers. They're trying to trick normal users who trust Google's brand and who've been conditioned by years of accurate search results to accept synthesized information without verification.

There's also a volume problem. Google Search is ubiquitous. Billions of searches happen every day. If scammers can reach even a small percentage of people searching for specific high-value targets (banks, payment services, investment platforms), the ROI on their scam infrastructure is enormous.

Scammers typically use between 5 and 50 fake websites to insert false information into AI Overviews. Estimated data shows a higher likelihood of using 20 websites.

The Phone Number Scam: Anatomy of the Most Common Attack

While scammers are getting creative with AI Overviews, phone number fraud remains the most common and most dangerous variant.

Here's why phone numbers are such effective scam vectors:

Urgency: When you need your bank's customer service number, you need it now. You're not in a research mood. You want an answer.

Single point of failure: A phone number is binary. Either it's the right number or it's not. There's no middle ground. Scammers know that if they can get you on the phone, they can handle the rest with social engineering.

Social engineering is effective: Once you've called what you think is your bank, the scammer just needs to ask the right questions. "What's the last four of your SSN?" "Can you verify your account number?" "We detected unusual activity—I need to move your funds to a secure account." These techniques have been refined over decades.

Low oversight: A phone number is harder for Google to verify than a website. Google's systems can crawl websites and look for spam signals. But verifying that a phone number actually belongs to a company? That requires active calling and checking, which Google doesn't do systematically.

The scam workflow looks like this:

Day 1-3 (Creation Phase): Scammers register 10-20 cheap domain names. They create simple WordPress sites or use Wix/Squarespace free templates. They publish "helpful" content that looks legitimate at first glance: "How to Contact Major Banks," "Customer Service Numbers Directory," "Financial Institution Phone Numbers."

On each site, they publish real phone numbers alongside fake ones. The fake numbers are local to major cities (so they seem legitimate) and connected to VoIP lines the scammer controls.

Day 4-7 (Index Phase): They submit these sites to Google Search Console and use basic SEO techniques to try to get indexed. They might create backlinks from other throwaway sites. They add the sites to directories. They're not trying to rank #1; they just need to be in the index so Google's AI can scrape them.

Day 8 onward (Exploitation Phase): When someone searches for "Bank of America customer service phone number" or "Apple Support contact," Google's AI Overview pulls information from multiple sites. The scammer's fake number appears on enough sites that it gets included in the summary.

The scammer waits for calls. When someone calls, they answer professionally. "Thank you for calling Bank of America. For security, I'll need to verify your identity." And from there, social engineering takes over.

If the scammer gets 100 calls per day and successfully extracts account information from 10 people, that's 10 potential account takeovers. Each one might be worth

Beyond Phone Numbers: Expanding Scam Vectors

While phone number scams grab headlines, scammers are diversifying their approach to AI Overviews.

Investment and Cryptocurrency Fraud

AI Overviews for queries like "best way to invest $10,000" or "how to start cryptocurrency trading" are particularly vulnerable. Scammers create fake investment sites with glowing testimonials and promised returns. When AI aggregates these sources, the fake sites appear alongside legitimate financial advice.

A user sees what looks like a recommendation for a cryptocurrency platform in an AI Overview, opens an account, deposits $10,000, and discovers the "platform" is designed to steal their money. They've never even seen a traditional scam website—they came through Google's AI.

Medical and Health Misinformation

Queries about medical treatments, medications, and health conditions are ripe for exploitation. A scammer creates a site promoting an unproven treatment, fills it with fake testimonials and scientific-sounding language, and waits for AI to aggregate it.

Someone with a health condition sees what appears to be a treatment option in an AI Overview, buys expensive supplements that don't work, or—worse—delays legitimate medical treatment in favor of the scam "solution."

E-commerce and Counterfeit Products

Queries like "where to buy iPhone 15" or "best place to get name-brand sneakers cheap" get exploited by sites selling counterfeit products. The AI Overview might recommend a site that looks legitimate but is actually selling fake goods.

Users think they're getting a deal on brand-name products. They receive counterfeit items that don't work or break immediately. By the time they figure it out, the fake website is gone.

Tech Support Fraud

Beyond phone numbers, scammers impersonate legitimate tech companies in AI Overviews. "Is your computer running slow? Click here for free optimization" appears in an AI Overview for a query about computer performance. The link goes to malware.

Job Scams

AI Overviews for job searches are being exploited with fake job postings that look legitimate. Scammers claim you need to pay for "training" or "certification" or "background checks." The jobs don't exist, but your money does.

Each of these scam types exploits the same underlying vulnerability: users trust AI Overviews more than they should, and scammers can inject fraudulent information through distributed networks of low-credibility sites.

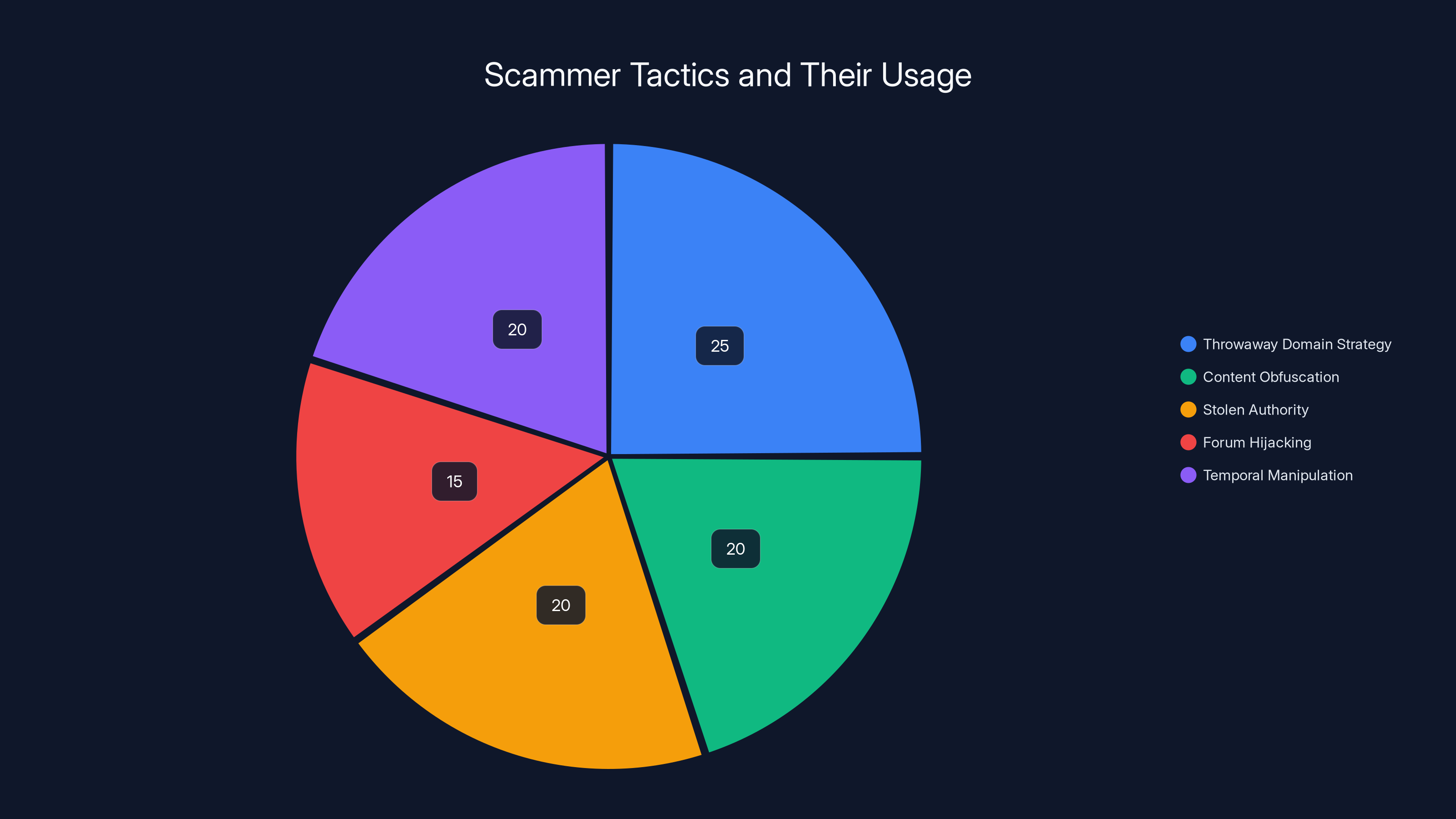

Estimated data shows that the 'Throwaway Domain Strategy' is the most commonly used tactic by scammers, accounting for 25% of their strategies, followed by 'Content Obfuscation' and 'Stolen Authority' at 20% each.

How Google Claims It's Fighting Back

Google has publicly acknowledged the problem and claims to be taking action.

In official statements, Google says its "anti-spam protections are highly effective at keeping scams out of AI Overviews." The company claims to be implementing multiple layers of fraud detection:

Pattern Recognition Systems: Google's spam detection algorithms look for patterns consistent with scam activity—multiple sites publishing identical or near-identical phone numbers, freshly registered domains, sites with minimal legitimate content.

Source Verification: For certain high-risk queries (like financial services and customer support), Google claims to prioritize official sources. When you search for a bank's phone number, Google says its systems try to pull from the bank's official website rather than aggregated third-party sources.

Removal at Scale: When scams are identified, Google claims to rapidly remove them from the index and prevent them from appearing in AI Overviews.

User Feedback Integration: Google has added features allowing users to flag inaccurate or fraudulent information in AI Overviews, and claims these flagging reports inform its spam detection systems.

These claims are not without merit. Google's spam detection systems are sophisticated, and they do catch many scams. The problem is one of scale and incentive alignment.

Google's core business model—advertising—doesn't directly incentivize protecting users from phone number scams. If a user gets defrauded through a phone number found in an AI Overview, Google doesn't lose money. The user might lose trust in Google Search, but there are no direct financial consequences for Google.

Moreover, detecting scams at scale is genuinely difficult. There are legitimate reasons for multiple websites to publish the same phone number (contact aggregators, review sites, forums). Distinguishing between legitimate aggregation and coordinated fraud requires sophisticated analysis.
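Here's a rough sketch of why duplication alone isn't a usable signal, and what a slightly better heuristic might combine. This is not Google's method. The `Listing` record, the 90-day and 3-site thresholds, and the sample data are all hypothetical, and a real system would need far more signals than this.

```python
from dataclasses import dataclass

@dataclass
class Listing:
    domain: str
    number: str
    domain_age_days: int

def suspicious_numbers(listings, official_numbers, max_age_days=90, min_sites=3):
    """Flag numbers that are absent from official sources and appear on at
    least `min_sites` distinct domains, all of them recently registered."""
    by_number = {}
    for item in listings:
        by_number.setdefault(item.number, {})[item.domain] = item.domain_age_days
    flagged = []
    for number, domains in by_number.items():
        if number in official_numbers:
            continue  # repeated by aggregators, but confirmed by the company
        all_young = all(age <= max_age_days for age in domains.values())
        if len(domains) >= min_sites and all_young:
            flagged.append(number)
    return sorted(flagged)

# Hypothetical data: the official number is widely repeated too.
official = {"8005550100"}
listings = [
    Listing("reviews.example", "8005550100", 3000),
    Listing("directory.example", "8005550100", 2500),
    Listing("forum.example", "8005550142", 4000),  # odd number, one old site
]
listings += [Listing(f"bank-help-{i}.example", "8005550199", 12) for i in range(4)]

print(suspicious_numbers(listings, official))
```

The widely repeated official number passes, and so does the one-off number on an old forum. Only the number that shows up exclusively on a cluster of fresh domains gets flagged. A patient scammer who ages domains for a few months would slip past this check, which is part of why the real problem is hard.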

Google has also been slow to adapt its systems to AI Overview-specific vulnerabilities. The spam detection systems were built for traditional search results, where users evaluated sources themselves. The bar for fraud detection in AI Overviews needs to be substantially higher.

There's also a timing problem. By the time Google identifies a scam and removes it, thousands of people might have already been defrauded. The economic incentive for scammers is to move fast—create the scam infrastructure, harvest victims for a few weeks or months, then disappear before detection.

The Structural Problem: Why This Can't Be Fully Solved

Here's the uncomfortable truth: There's no way for Google to completely eliminate this problem without fundamentally changing how AI Overviews work.

This isn't a technical limitation that can be overcome with better algorithms. It's a structural problem built into the core design of AI Overviews.

The Aggregation Problem

AI Overviews work by aggregating information from multiple sources. The aggregation is what gives them credibility—multiple sources saying the same thing feels more trustworthy than a single source.

But aggregation is exactly what scammers exploit. If they can plant their fraudulent information on 20 different websites, the AI will see consensus. To prevent this, Google would need to verify every single fact in every single source before aggregating.

That's not realistically possible at scale. There are billions of websites. Google can't call every phone number to verify it's real. Google can't visit every e-commerce site to verify the products are genuine. Google can't read every investment website to verify the claims are true.

The Authority Problem

AI Overviews inherit Google's authority. Users trust them because Google presents them, and Google has been trustworthy for 25 years.

But this creates a liability problem for Google. As AI Overviews become more prominent—and Google is clearly pushing them to be—Google is increasingly responsible for the accuracy of information in them. They're not just showing you links anymore; they're synthesizing information and presenting it as fact.

This increases Google's legal and reputational risk. But it doesn't directly incentivize improvement. For Google, it's more important to promote AI Overviews (because they make the search experience feel more advanced and keep users on Google's pages longer) than it is to solve the fraud problem.

The Verification Paradox

To truly prevent scams, Google would need to verify information in real-time. For phone numbers, that means actually calling them. For investment advice, that means evaluating claims. For e-commerce, that means checking if products are genuine.

But the more verification Google does, the more editorial responsibility it takes on. At a certain point, Google becomes not a search engine but a content provider, with all the legal and regulatory implications that entails.

Google has shown no appetite for this level of responsibility. It would rather present AI Overviews with the disclaimer that "AI can make mistakes" than take on the burden of verifying every claim.

The Economic Incentive Problem

From a purely economic standpoint, Google's incentive to fix phone number scams is limited. Each scam doesn't directly hurt Google—it hurts the user. And from Google's perspective, even if some users get defrauded, the overall trust in Google Search doesn't collapse (the trust might erode slowly, but not quickly enough to force action).

Scammers, meanwhile, have enormous incentives. A phone number scam is low-cost to set up and high-reward if it works. Even a small percentage of users calling the fake numbers generates significant revenue for scammers.

This creates an asymmetric game where Google is trying to prevent something that's not in its direct financial interest to prevent, while scammers are highly motivated to exploit the vulnerability.

Estimated data suggests fraudulent support numbers are the most common scam type in AI Overviews, followed by cryptocurrency scams and medical misinformation.

How Scammers Adapt and Stay Ahead

It's a game of cat and mouse, and scammers have shown impressive creativity in staying ahead of Google's detection systems.

The Throwaway Domain Strategy

Scammers register domains for $10-15 per year using privacy registrars that hide ownership information. They create a basic website, publish the fraudulent content, and delete it after 2-3 months when Google likely identifies it as spam.

By then, they've harvested victims and moved on. They repeat the process with new domains. Google's spam detection might be 80% effective, but scammers only need to succeed 20% of the time for the economics to work.

Content Obfuscation

When scammers realize Google is looking for patterns, they modify their approach. Instead of putting the fake phone number in an obvious place, they hide it in user comments, forum posts, or reviews that appear legitimate.

They use slight variations: (555) 123-4567, 555-123-4567, 5551234567. These variations look different to pattern-matching systems but are identical to humans and phone systems.
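The usual counter to this kind of formatting trick is to normalize every number before comparing. A minimal sketch, assuming US-style 10-digit numbers (the function name is made up for the example, and international formats would need a proper phone-number library):

```python
import re

def normalize_us_number(raw):
    """Reduce a US-style phone number to its 10 digits, or None if malformed."""
    digits = re.sub(r"\D", "", raw)  # strip everything that isn't a digit
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the leading country code
    return digits if len(digits) == 10 else None

variants = ["(555) 123-4567", "555-123-4567", "5551234567"]
canonical = {normalize_us_number(v) for v in variants}
print(canonical)  # all three variants collapse to one entry
```

Once the three variants collapse into a single canonical string, a system that counts or blocks numbers sees one number instead of three.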

Stolen Authority

Scammers copy content from legitimate websites (banks, tech companies, investment firms) and republish it on their fake sites with the fraudulent phone number inserted. This makes the fake site look identical to the legitimate one, which helps it rank and tricks both AI systems and users.

Forum Hijacking

Scammers post on legitimate forums, review sites, and community platforms where they can't be easily removed. A post on Reddit saying "Just called this number for Apple Support: [FAKE NUMBER]—worked great!" looks like genuine user advice. When AI aggregates information, it pulls from these legitimate sites.

Temporal Manipulation

Scammers coordinate their site creation and content publication in waves. They might create 50 sites all at once, each publishing similar content, so the fake number appears to come from many independent sources. Or they spread them out over time to avoid detection patterns.

AI-Generated Content

With the rise of AI writing tools, scammers can generate thousands of pages of seemingly unique, natural-sounding content very cheaply. Each page looks different, but they all contain the same fraudulent information. This makes it harder for Google's systems to recognize them as part of a coordinated fraud campaign.

Legitimate Content Padding

Scammers create sites with tons of legitimate, helpful content—product reviews, how-to guides, news articles—and quietly slip their fraudulent phone number into a few pages. The site looks legitimate overall, so it gets indexed and recommended.

Each adaptation requires Google to update its detection systems. By the time Google catches up, scammers have already moved to the next technique.

Concrete Protection Strategies: What Actually Works

Given that Google can't fully solve this problem, you need to protect yourself.

The good news is that effective protection strategies exist. The bad news is they require you to change how you use search.

Strategy 1: Official Source Verification

This is the gold standard. Never trust an AI Overview for critical information like phone numbers, account details, or financial information. Always verify with the official source.

How to do it:

- See the AI Overview with a phone number

- Don't call it immediately

- In a new tab, go directly to the company's official website by typing its web address into your browser's address bar (bypassing search)

- Find the phone number on their official site

- Call that number instead

This takes 30 seconds and eliminates essentially all risk. It's the most reliable approach, but it requires discipline.

Why it works: The official company website is far harder for scammers to compromise than third-party sites. They'd need to hack into the company's domain or compromise their infrastructure. That's much harder than creating fake sites.
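This only helps if the page you land on really is the company's domain. Lookalike addresses often put the real name at the front of a longer hostname. As a rough sketch of the check you're doing by eye, here's a hostname comparison using Python's standard `urllib.parse`. The function name and the `.example` domains are hypothetical.

```python
from urllib.parse import urlsplit

def is_official_host(url, official_domain):
    """True if the URL's hostname is the official domain or a subdomain of it."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    official = official_domain.lower()
    return host == official or host.endswith("." + official)

print(is_official_host("https://www.bank.example/contact", "bank.example"))
print(is_official_host("https://bank.example.support-desk.example/contact", "bank.example"))
print(is_official_host("https://notbank.example/contact", "bank.example"))
```

The first URL passes. The second and third fail, because what matters is how the hostname ends, not whether the company's name appears somewhere in it.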

Strategy 2: Double-Check with a Second Search

Google itself recommends this approach: Use search to verify critical information twice.

How to do it:

- You see a phone number in an AI Overview

- Search specifically for "[Company Name] official phone number" or "[Company Name] customer service"

- Look at traditional search results (not the AI Overview) to see if the number appears on multiple legitimate-looking sites

- Look for consistency across different sources

If the number appears on:

- The company's official website

- Their LinkedIn page

- News articles about the company

- Multiple review sites

Then it's probably legitimate. If it only appears on obscure sites with weird domain names, it's probably a scam.

Why it works: Scammers can fake one or two sources, but they struggle to get their fraudulent information on the company's actual official website or into mainstream news articles.

Strategy 3: Phone Number Verification

Before calling any number, verify it separately.

How to do it:

- Take the phone number from the AI Overview

- Search for "[phone number] scam" or "[phone number] reviews"

- Look for community discussions about whether the number is real or fake

- Check scam reporting sites like ReportFraud.ftc.gov

If thousands of people have reported the number as fake, you've just saved yourself from a scam.

Why it works: Real scams have victims. Those victims often report them online. By searching for the number itself, you're tapping into crowdsourced fraud detection.

Strategy 4: Context Awareness

Think critically about why you're searching and what would make sense as an answer.

Examples:

- If you're searching for "how to make money fast," any AI Overview that shows you an easy way to make money is probably a scam

- If you're searching for "rare cryptocurrency that's about to moon," that's literally what scammers advertise

- If you're searching for "free $500 gift card," you're not going to find one

Part of fraud prevention is developing scam intuition. If something sounds too good to be true in an AI Overview, it probably is.

Why it works: Scammers target psychological vulnerabilities—greed, fear, laziness. By thinking critically about what's realistic, you can spot scams before you even verify information.

Strategy 5: Email and Contact Form First

When possible, contact companies through their website rather than by phone.

How to do it:

- Instead of calling the number in the AI Overview, go to the company's website

- Find their contact form or support email

- Send a message through their official website

- Wait for a response

This is slower than calling, but it's essentially scam-proof. A scammer can't intercept your email through an AI Overview.

Why it works: Contact forms and support emails are typically set up with security measures that phone-based scams don't face.

Strategy 6: Selective Trust in AI Overviews

AI Overviews aren't useless. They work fine for some types of information. It's all about understanding what they're bad at.

Trust AI Overviews for:

- Factual information (capitals of countries, historical dates)

- Definitions and explanations

- General how-to guides

- Product feature comparisons (that aren't sponsored)

- Scientific explanations

Don't trust AI Overviews for:

- Phone numbers

- Specific product recommendations (buy here, use this service)

- Medical advice

- Investment advice

- Legal advice

- Any information where you're making a significant decision or financial commitment

Why it works: AI is good at synthesizing factual information where being slightly wrong doesn't cost you money. It's bad at situations where being wrong has high consequences.

Estimated data suggests a significant increase in AI Overview scams, with incidents projected to quadruple by 2028 due to increased sophistication and scaling to new platforms.

What Banks and Companies Are Doing About This

It's not just individuals protecting themselves. Banks, tech companies, and other frequently targeted institutions are taking action.

Official Warnings

Major financial institutions have issued public warnings about fake phone numbers appearing in search results. Credit unions have sent emails to members warning them about this specific threat. Tech companies have posted alerts on their official websites.

These warnings are increasing user awareness, though they're not reaching everyone who needs to see them.

Verification Systems

Some companies are implementing two-factor verification for phone-based support. Instead of immediately trusting you, the representative says, "I'm going to send you a verification code via your email on file. Please read it back to me." This prevents scammers from impersonating the company effectively.
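A read-back code like this is simple to sketch. The example below is a minimal illustration using Python's standard `secrets` and `hmac` modules, not any particular company's system. The function names and the 6-digit length are assumptions, and actually delivering the code by email is left out.

```python
import hmac
import secrets

def issue_code(digits=6):
    """Generate a random numeric one-time code as a zero-padded string."""
    return f"{secrets.randbelow(10 ** digits):0{digits}d}"

def verify_code(expected, spoken):
    """Compare codes in constant time, ignoring spaces the caller may add."""
    cleaned = spoken.replace(" ", "").strip()
    return hmac.compare_digest(expected.encode(), cleaned.encode())

code = issue_code()                        # sent to the email address on file
print(verify_code(code, code))             # caller reads it back correctly
print(verify_code(code, " ".join(code)))   # read back digit by digit
```

A real deployment would also expire the code after a few minutes and limit the number of attempts.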

Caller ID Authentication

Industries are slowly moving toward authenticated caller ID, where your phone shows verified information when you call the legitimate company's number. This helps users know they're calling the right number. The problem is that this technology is still new and not universally adopted.

Website Security

Companies are investing in making their websites obviously official. They're using prominent branding, security indicators (HTTPS, trust badges), and clear contact information to make their sites more obviously legitimate than scammers' counterfeits.

Search Engine Relationships

Some major companies are working directly with Google to ensure their official contact numbers get prioritized in AI Overviews. While this helps legitimate companies, it's a Band-Aid on a structural problem.

Fraud Monitoring

Financial institutions are deploying advanced fraud detection to identify when accounts are being accessed from unusual locations or with unusual behavior patterns. This catches some scams after the fact, but prevention is always better.
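As a rough idea of what "unusual location or unusual behavior" can mean in practice, here is a toy rule-based check in Python. Real fraud systems are far more elaborate; the `Session` record, the `is_unusual` function, and the threshold of 3 standard deviations are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Session:
    country: str   # where the account was accessed from
    amount: float  # value of the transaction attempted in that session


def is_unusual(history: list[Session], attempt: Session, z_limit: float = 3.0) -> bool:
    """Flag an attempt from a never-seen country or with an outlier amount."""
    if attempt.country not in {h.country for h in history}:
        return True
    amounts = [h.amount for h in history]
    spread = pstdev(amounts)
    if spread == 0:
        # Every past amount was identical, so any different amount stands out
        return attempt.amount != amounts[0]
    return abs(attempt.amount - mean(amounts)) / spread > z_limit


history = [Session("US", 40), Session("US", 60), Session("US", 50)]
print(is_unusual(history, Session("US", 55)))    # ordinary amount, known country
print(is_unusual(history, Session("US", 5000)))  # far outside past behavior
```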

These measures help, but they're reactive rather than proactive. They address the symptoms rather than the underlying problem.

The Bigger Picture: AI Overviews and Information Reliability

Phone number scams are just the most obvious manifestation of a larger problem: AI Overviews have fundamentally changed how users interact with information.

For decades, search results were transparent. You could see the sources. You could evaluate them. There was friction, and that friction was actually protective.

AI Overviews remove that friction in the name of user convenience. The tradeoff is loss of transparency.

When you see an AI Overview, you don't know:

- Where the information came from

- How authoritative each source is

- Whether the AI accurately represented what the sources said

- If there's conflicting information the AI left out

- Whether any of the sources are fraudulent

This information loss is the cost of convenience. Most users aren't aware they're paying it.

There are also concerns about the broader impact on information reliability:

The Feedback Loop Problem: If AI Overviews pull from websites, and websites optimize for appearing in AI Overviews, websites might start creating content designed for AI aggregation rather than designed to be accurate or useful.

This is similar to what happened with SEO. Websites started writing for search engines rather than readers, leading to worse overall content quality.

The Authority Amplification Problem: When AI synthesizes information from 10 sources, it amplifies the apparent authority of that information. If 9 sources are legitimate and 1 is fraudulent, the AI might still present the fraudulent claim as fact because "multiple sources say it." It took only 10% fraudulent sources to contaminate the result.

The Correction Problem: When an AI Overview is wrong, how do you correct it? You can flag it to Google, but there's no transparency about whether your flag was actually used or whether the AI Overview was actually changed. Users are left in the dark.

The Dependency Problem: As users increasingly rely on AI Overviews, they lose the critical evaluation skills they previously used with traditional search. The longer AI Overviews exist, the less equipped users become to evaluate information on their own.

These are structural problems with how AI Overviews work, not limitations that can be overcome with better algorithms.

The solution likely requires rethinking the product design entirely. But Google is clearly committed to AI Overviews as the future of search, which means these problems will persist and likely worsen as adoption increases.

Search Alternatives: Is There a Better Way?

For users deeply concerned about AI Overview scams, switching search engines is always an option.

DuckDuckGo

DuckDuckGo is built around traditional search results with source links. It does offer optional AI-assisted answers, but you can turn them off in settings. This means you get transparency about where information comes from, but you lose the convenience of synthesized answers.

For critical information (phone numbers, financial details, medical information), this transparency is actually better. You want to see sources.

The downside is that DuckDuckGo's search quality isn't as good as Google's for general queries. You might find what you're looking for, but it could take more effort.

Perplexity

Perplexity is an AI-powered search engine, so it has similar vulnerabilities to Google's AI Overviews. But it has better source transparency—it shows you exactly which sources the AI pulled information from, color-coded by citation.

This is helpful because you can immediately see if the sources are legitimate or suspicious. If a phone number appears and it's sourced from a random personal blog or new domain, that's a red flag.

Kagi

Kagi is a paid search engine that combines traditional search results with some AI features, but with stronger editorial curation. Because Kagi charges users directly, it has less incentive to show low-quality results that increase engagement.

The downside is it costs money ($10/month), and it's less developed than Google.

Traditional Browser Navigation

The most scam-proof approach is going directly to official websites without searching. If you know the company (your bank, Apple, Microsoft), you can type their domain directly into your browser and bypass search entirely.

This works for well-known companies, but it's not practical for exploring the web or finding new information.
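If you keep a personal list of official domains, checking a link against it is easy to automate. The Python sketch below is one way to do it; the `OFFICIAL_DOMAINS` set and the `is_official` function are hypothetical names, and the list should contain the domains you have verified yourself.

```python
from urllib.parse import urlsplit

# Hypothetical personal allowlist; replace with domains you have verified.
OFFICIAL_DOMAINS = {"bankofamerica.com", "apple.com", "microsoft.com"}


def is_official(url: str, allowlist: set[str] = OFFICIAL_DOMAINS) -> bool:
    """True if the URL uses HTTPS and its host is an allowlisted domain or a subdomain of one."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme != "https" or not host:
        return False
    # Match the exact domain or a true subdomain, never a mere substring
    return any(host == d or host.endswith("." + d) for d in allowlist)


print(is_official("https://www.apple.com/support"))                   # real subdomain
print(is_official("https://apple.com.support-help.example/contact"))  # lookalike host
```

Matching on the end of the hostname matters: a lookalike address can contain the real brand name at the start while actually belonging to a different domain.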

None of these alternatives are perfect solutions, but they offer different tradeoffs depending on your needs.

- For critical information: Use alternative search engines or go directly to official sources

- For general knowledge: Google's AI Overviews work fine

- For research: Traditional search results with visible sources are better

What Google Could Do (But Probably Won't)

There are technical and design changes that would meaningfully reduce AI Overview scams. But they'd require Google to accept tradeoffs it seems unwilling to make.

Change 1: Source Transparency

Google could display which specific source each claim in an AI Overview came from. Instead of a generic citation, you could see: "This phone number came from sourcesite.com, published 2 days ago, domain age 3 months."

With that information, you could immediately spot suspicious sources.

Why Google doesn't do this: It would make AI Overviews longer and more cluttered. It would also expose how much of the information comes from low-credibility sources, which might reduce user confidence in AI Overviews.
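To make the idea of per-claim source labels concrete, here is a small Python sketch of the data such a label would need. Nothing here reflects how Google stores citations; the `ClaimSource` record and both functions are hypothetical, and the dates are invented to reproduce the example label above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ClaimSource:
    claim: str        # what the overview asserts, e.g. "phone number"
    domain: str       # site the claim was taken from
    published: date   # publication date of the page
    registered: date  # registration date of the domain


def domain_age_months(src: ClaimSource, today: date) -> int:
    """Difference in calendar months between registration and today (day of month ignored)."""
    return (today.year - src.registered.year) * 12 + today.month - src.registered.month


def describe(src: ClaimSource, today: date) -> str:
    """Render a one-line provenance label for a single claim."""
    days = (today - src.published).days
    return (f"This {src.claim} came from {src.domain}, "
            f"published {days} days ago, domain age {domain_age_months(src, today)} months.")


src = ClaimSource("phone number", "sourcesite.com", date(2025, 6, 13), date(2025, 3, 10))
print(describe(src, today=date(2025, 6, 15)))
```

With only three extra fields per claim, a reader could see at a glance that a number comes from a page published days ago on a domain only a few months old.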

Change 2: Friction for High-Risk Queries

For queries involving phone numbers, financial information, or medical advice, Google could add a verification step. "Before calling this number, verify it on the company's official website. Click here to visit their site."

This adds friction, but friction is actually good for fraud prevention.

Why Google doesn't do this: It contradicts their stated goal of removing friction from search. Adding friction to AI Overviews would make them less appealing than traditional search results.

Change 3: Source Verification Requirements

Google could require human review of AI Overviews before they're shown for high-risk queries. A human editor verifies the phone number is real, the medical claim is sound, etc.

This would eliminate most fraud, but it would be expensive to scale.

Why Google doesn't do this: Google would essentially have to hire thousands of editors, which would cost hundreds of millions per year. That doesn't align with Google's business model.

Change 4: Removing AI Overviews for Dangerous Queries

Google could simply disable AI Overviews for certain query types: "customer service number," "how to cure [disease]," "investment opportunity," etc.

Users would get traditional search results instead. For queries where fraud risk is high, show sources instead of synthesis.

Why Google doesn't do this: It undermines the entire premise of AI Overviews as the future of search. Google has invested significantly in AI Overviews and wants them to be the default for all queries.
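Routing risky queries to traditional results does not have to be complicated. The Python sketch below shows the general shape, assuming a handful of illustrative patterns; the pattern list and the `choose_result_mode` function are hypothetical, and a production system would need much broader coverage.

```python
import re

# Illustrative patterns only, based on the query types named above.
HIGH_RISK_PATTERNS = [
    r"\bcustomer (service|support)\b",
    r"\b(phone|contact|helpline) number\b",
    r"\bhow to cure\b",
    r"\binvestment opportunit(y|ies)\b",
]
_HIGH_RISK = re.compile("|".join(HIGH_RISK_PATTERNS), re.IGNORECASE)


def choose_result_mode(query: str) -> str:
    """Return 'sources' for high-risk queries and 'overview' otherwise."""
    return "sources" if _HIGH_RISK.search(query) else "overview"


for q in ["Chase customer service number", "capital of France"]:
    print(q, "->", choose_result_mode(q))
```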

None of these changes are impossible. But they'd require Google to prioritize user safety over product features and convenience. The company has shown limited appetite for that tradeoff.

Red Flags: How to Spot Fraudulent AI Overviews

Though it's hard to identify scams from AI Overviews, there are patterns that should raise suspicion.

Red Flag 1: Unusual Phone Number Format

Legitimate phone numbers follow recognizable patterns. Area codes are real (212 for New York, 415 for San Francisco, etc.). Numbers are typically formatted consistently.

Scam numbers often use unusual patterns:

- Area codes that aren't in major cities

- Extensions that seem random

- Formatting that doesn't match the company's other published numbers

- International numbers for domestic companies

Red Flag 2: Contradictory Information

If you see multiple numbers for the same company in the AI Overview, that's a bad sign. It might mean one is fraudulent.

Compare the number in the AI Overview to other numbers you can find for the same company. Do they match? If they're different, treat the AI Overview number with suspicion.
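Comparing numbers by eye is error-prone because the same number can be formatted many ways. A short Python sketch of the comparison follows, assuming US numbers only; the function names are hypothetical, the 555 numbers are fictional, and the list of official numbers is something you would copy from the company's own website.

```python
import re
from typing import Optional


def normalize_us_number(raw: str) -> Optional[str]:
    """Reduce a US phone number to its 10 digits, or return None if it is not one."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the leading country code
    return digits if len(digits) == 10 else None


def matches_official(candidate: str, official_numbers: list[str]) -> bool:
    """True only if the candidate equals a number copied from the company's own site."""
    wanted = normalize_us_number(candidate)
    if wanted is None:
        return False  # wrong length, which includes most international numbers
    return wanted in {normalize_us_number(n) for n in official_numbers}


official = ["1-800-555-0100"]
print(matches_official("(800) 555-0100", official))  # same number, different format
print(matches_official("800-555-0199", official))    # different number
```

Stripping everything except digits before comparing means formatting differences are ignored while a single changed digit is still caught.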

Red Flag 3: Vague Source Attribution

Look at how the AI Overview cites its sources. If it says "According to sources" or "Multiple reports indicate," that's vague. If it says "According to Bank of America's official website," that's specific.

Vague attributions often indicate the AI is pulling from low-credibility sources and synthesizing them in a way that sounds authoritative.

Red Flag 4: Unusual Language

Scammers often write in a way that's slightly off—slightly awkward phrasing, odd word choices, grammatical quirks. While AI-generated content sometimes has these same issues, they're a yellow flag to investigate further.

If the surrounding text sounds odd, the phone number is probably suspicious too.

Red Flag 5: Overly Perfect Information

Paradoxically, overly perfect information can be suspicious. If the AI Overview provides exactly what you're looking for with perfect formatting and no ambiguity—and if you can't easily find that same information elsewhere—it might be fabricated.

Scammers sometimes use AI to generate custom content designed specifically to appear in AI Overviews.

Red Flag 6: New or Unfamiliar Sources

If the AI Overview cites sources you've never heard of, investigate those sources. New websites with no history and limited content are often fraudulent.

Legitimate information usually appears on well-established, recognizable sources. If you only see it on obscure sites, it's probably not legitimate.

Red Flag 7: Too Good to Be True Offers

If an AI Overview recommends an investment opportunity with guaranteed returns, a medical treatment with cure claims, or a service with no downsides, that's almost certainly a scam.

Life involves tradeoffs. If an AI Overview presents something with no downsides, it's either too good to be true, or the AI has been compromised.

The Future: How This Problem Gets Worse

Unfortunately, the AI Overview scam problem is likely to intensify rather than improve.

Increasing Sophistication: Scammers learn from each iteration. As Google's fraud detection improves, scammers develop new techniques. This is an arms race, and history shows that scammers often win these races.

Scaling to New Products: Google isn't the only company building AI Overviews. As AI search capabilities spread to Bing, other search engines, and even specific industry applications, scammers will have more targets and more opportunities.

Deepfakes and Synthetic Content: As AI-generated audio and video improve, scammers won't just inject text into AI Overviews—they'll inject convincing synthetic videos and audio. This will make scams even harder to identify.

Targeting of Vulnerable Populations: Scammers will increasingly target elderly users, non-English speakers, and others less equipped to verify information. As AI Overviews expand to more languages and regions, this problem becomes global.

Regulatory Complexity: As governments become aware of this problem, they'll likely demand regulation. But regulation will be slow and ineffective—by the time a law is passed addressing AI Overview scams, the scam landscape will have evolved significantly.

Business Model Conflicts: Google's business model depends on keeping users in Google's ecosystem. Showing fraud in AI Overviews would make users leave. This creates a fundamental conflict of interest that won't be resolved by product updates.

The hopeful version of the future is one where:

- AI Overviews become the default way people interact with search

- Scams in AI Overviews become commonplace

- Users learn to distrust AI Overviews (or don't learn and get defrauded)

- A second, competing search paradigm emerges that offers more transparency

- We eventually settle on a hybrid approach: AI Overviews for low-risk information, traditional search for high-risk

But that's optimistic. The more likely scenario is that AI Overviews become ubiquitous and scam losses increase significantly, with users bearing the cost.

Developing Your Information Diet

Ultimately, protecting yourself from AI Overview scams is part of a larger practice: developing a healthy information diet.

Just as we've learned that not all food is equally nutritious, we need to learn that not all information sources are equally reliable.

A healthy information diet includes:

Authoritative Sources: Direct information from official sources—company websites, government agencies, peer-reviewed journals—should form the foundation.

Secondary Analysis: Trustworthy journalists, analysts, and researchers who synthesize information responsibly.

Diverse Perspectives: Understanding multiple viewpoints helps you think critically rather than blindly accepting one narrative.

Healthy Skepticism: Not paranoia, but a reasonable question: "Is this realistic? Who benefits if I believe this? What would I need to verify this?"

Media Literacy: Understanding how different sources make money, what biases they have, and how to evaluate credibility.

AI Overviews are fine as a convenience tool, but they shouldn't be your only source of information, especially for anything important.

Think of AI Overviews like fast food: Convenient for quick information, okay in moderation, but not the foundation of a healthy information diet.

For critical decisions, you need the nutritional equivalent of home-cooked meals: information from direct sources you've personally evaluated.

FAQ

What exactly is an AI Overview scam?

An AI Overview scam is when fraudsters publish false information (usually fake phone numbers, fraudulent websites, or misleading advice) on multiple low-credibility websites. Google's AI systems aggregate this information across multiple sources and present it as fact in an AI Overview. Users trust the AI Overview and contact the fraudulent number or follow the fraudulent advice, thinking they're contacting a legitimate company. The scammer then steals their money, personal information, or financial credentials through social engineering.

How do scammers get fake information into Google's AI Overviews?

Scammers create multiple fake websites and publish fraudulent information (like fake phone numbers) alongside legitimate information. They rely on the fact that Google's AI aggregates information from many sources, and if that fraudulent information appears on 10-20 different websites, the AI assumes it must be legitimate because multiple sources are "saying" the same thing. They don't hack Google directly; they exploit the weakness in how AI systems work: trusting information that appears consistently across multiple sources, even if those sources are all created by the same scammers.
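The weakness can be shown with a toy model in Python. This is not Google's algorithm, which is not public; it only illustrates why counting pages rewards whoever publishes the most copies. All site names, operators, and numbers are made up, and knowing who operates a page (the `operator` field) is assumed here even though it is the hard part in reality.

```python
from collections import Counter

# Each tuple: (page listing a number, who operates the page, number listed).
pages = (
    [("official-bank.example", "bank", "800-555-0100")]
    + [(f"directory{i}.example", f"directory{i}", "800-555-0100") for i in range(2)]
    + [(f"help-site{i}.example", "scam-ring", "800-555-0199") for i in range(12)]
)


def most_repeated(pages):
    """Naive consensus: the number listed on the most pages wins."""
    return Counter(number for _, _, number in pages).most_common(1)[0][0]


def most_independent(pages):
    """Count each operator once, so twelve copies from one operator count as one."""
    unique = {(operator, number) for _, operator, number in pages}
    return Counter(number for _, number in unique).most_common(1)[0][0]


print(most_repeated(pages))     # 800-555-0199, the scammers' number
print(most_independent(pages))  # 800-555-0100, the real number
```

Twelve cheap pages outvote three legitimate ones under naive counting, while counting independent publishers restores the correct answer in this toy case.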

Why does Google struggle to prevent AI Overview scams?

Google faces structural challenges: (1) Verifying every piece of information would require checking millions of facts daily, which isn't scalable. (2) Google's core incentive is to show AI Overviews (it makes search feel more advanced), not to prevent fraud in AI Overviews. (3) The AI aggregation model that makes AI Overviews work is the same thing that makes them vulnerable to scams. (4) By the time Google detects a scam, scammers have often already moved on to new domains and techniques. There's no perfect solution that doesn't fundamentally change how AI Overviews work.

What's the most effective way to protect myself from AI Overview scams?

The most effective approach is simple: don't trust AI Overviews for critical information like phone numbers, financial details, or medical advice. Always verify through official sources. Go directly to the company's official website by typing it in your browser (don't search). Look for contact information there. Call that number instead of trusting the one in the AI Overview. This adds 30 seconds to your interaction, but it eliminates essentially all fraud risk from this vector.

Can I turn off Google's AI Overviews?

As of 2025, Google hasn't provided a user-facing option to completely disable AI Overviews. When Google decides to show them, you see them. You can scroll past them or try a different search engine, but you can't turn them off directly in Google Search settings. Other search engines (like DuckDuckGo or Perplexity) either don't use AI Overviews or present AI answers with more source transparency.

How can I tell if a phone number in an AI Overview is fake?

Look for these red flags: (1) The number doesn't match the company's official website. (2) Searching the phone number itself reveals reports of scams or fraud. (3) The area code doesn't match the company's known locations. (4) The formatting seems unusual or inconsistent with other numbers from that company. (5) When you call, the person who answers sounds unprofessional or asks unusual questions. The most reliable method is to search the phone number itself along with "scam" or "reviews" to see if others have reported it as fraudulent.

Are other search engines and AI tools safer from this type of fraud?

Some alternatives are slightly better because they offer more source transparency. Perplexity shows color-coded citations so you can see which claims come from which sources, which makes suspicious sources more obvious. DuckDuckGo doesn't use AI Overviews, so you get traditional search results with visible sources. However, all AI-powered search tools face similar vulnerabilities. The safest approach for critical information is going directly to official sources rather than searching at all.

What should I do if I think I've encountered a scam in an AI Overview?

First, don't engage with it. Don't call the number, don't visit the website, don't send money. Second, report it to Google if possible. Third, if you've already been scammed, report it to the FTC at reportfraud.ftc.gov and to the actual company being impersonated. Fourth, monitor your financial accounts for unauthorized activity. Fifth, consider using a credit monitoring service if sensitive information was compromised. Finally, tell others (friends, family, social media) about the scam so they don't fall for it too.

Is this problem going to get worse?

Likely yes. As AI Overviews become more ubiquitous (Google is clearly pushing them as the future of search), scammers will dedicate more resources to exploiting them. Scammer infrastructure is becoming more sophisticated and easier to set up through scam-as-a-service platforms. As AI tools improve, scammers will use deepfakes and synthetic content to make their fraud more convincing. Unless Google fundamentally changes how AI Overviews work (which seems unlikely), fraud in AI Overviews will become more common, not less.

Can I rely on Google's spam detection to keep me safe?

Not entirely. Google's spam detection is reasonably effective, but it's not perfect, and scammers are constantly adapting. Google catches many scams, but there's a lag between when a scam appears and when it's detected and removed. During that lag, thousands of people might be defrauded. Additionally, Google has limited incentive to make spam detection perfect—fraudulent AI Overviews don't directly cost Google money, they cost users. Your own due diligence (verifying information, checking sources) is more reliable than trusting Google's systems.

Conclusion: Taking Control of Your Information

Google's AI Overviews represent a genuine advancement in how we interact with information. They're faster, more conversational, and often more useful than traditional search results for general questions.

But they also represent a significant new risk vector for fraud. By removing the friction that previously protected users, AI Overviews have created an environment where scammers can inject fraudulent information that looks authoritative and is trusted by default.

The uncomfortable truth is that Google isn't fully incentivized to solve this problem. A few scams in AI Overviews won't significantly damage Google's business. Users might experience fraud, but they'll likely continue using Google because it remains the most convenient option.

This means protection falls on you. It requires developing better habits:

- Establish default skepticism for AI Overviews: Treat them as convenient starting points, not final answers, especially for critical information.

- Create a verification habit: For phone numbers, financial information, medical details, and any data that affects your decisions, verify through official sources. Make this automatic, not something you have to think about.

- Navigate directly when possible: For services and companies you use regularly, bookmark their official sites. Use those bookmarks instead of searching.

- Evaluate sources critically: When you see an AI Overview, pause and ask whether the sources seem credible. New domains, suspicious site names, and obscure websites are red flags.

- Stay informed as the landscape evolves: Scammers are creative and adaptive. What works today might not work tomorrow. Stay aware of new scam types and share information with others.

- Support better alternatives: If you care about information reliability, consider supporting search engines that prioritize transparency and security over convenience. Your usage patterns and payments influence what companies build.

- Report fraud when you see it: Whether you encounter it or fall victim to it, reporting to Google, the FTC, and the impersonated company helps everyone.

The tech industry has a saying: "Move fast and break things." Google has moved fast with AI Overviews. The "things" being broken are information reliability and user safety. Fixing this requires Google to slow down, prioritize security, and accept some of the friction they've removed.

Until that happens, you're your own best defense.

The good news is that this defense is achievable. Following the verification strategies outlined in this guide essentially eliminates your risk from AI Overview scams. It requires slightly more effort than blindly trusting Google, but it's effort well spent.

AI Overviews are here to stay. Scams are here to stay. But fraud victims are optional. Choose to be skeptical, choose to verify, choose to stay safe.

Key Takeaways

- Scammers create multiple fake websites with fraudulent information that Google's AI aggregates, presenting false data as legitimate facts

- AI Overviews inherit Google's credibility but lack the transparency that made traditional search results protective against fraud

- Phone number scams are the most common attack vector because they require minimal scammer effort but generate significant financial returns

- Official source verification is the most effective defense: skip AI Overviews and go directly to company websites for critical information

- Google has limited financial incentive to fully solve this problem, making user due diligence essential rather than optional
