HDD Reliability Guide: Which Drives Survive Data Centers [2025]

Your hard drive is probably going to fail. That's not pessimism—that's statistics.

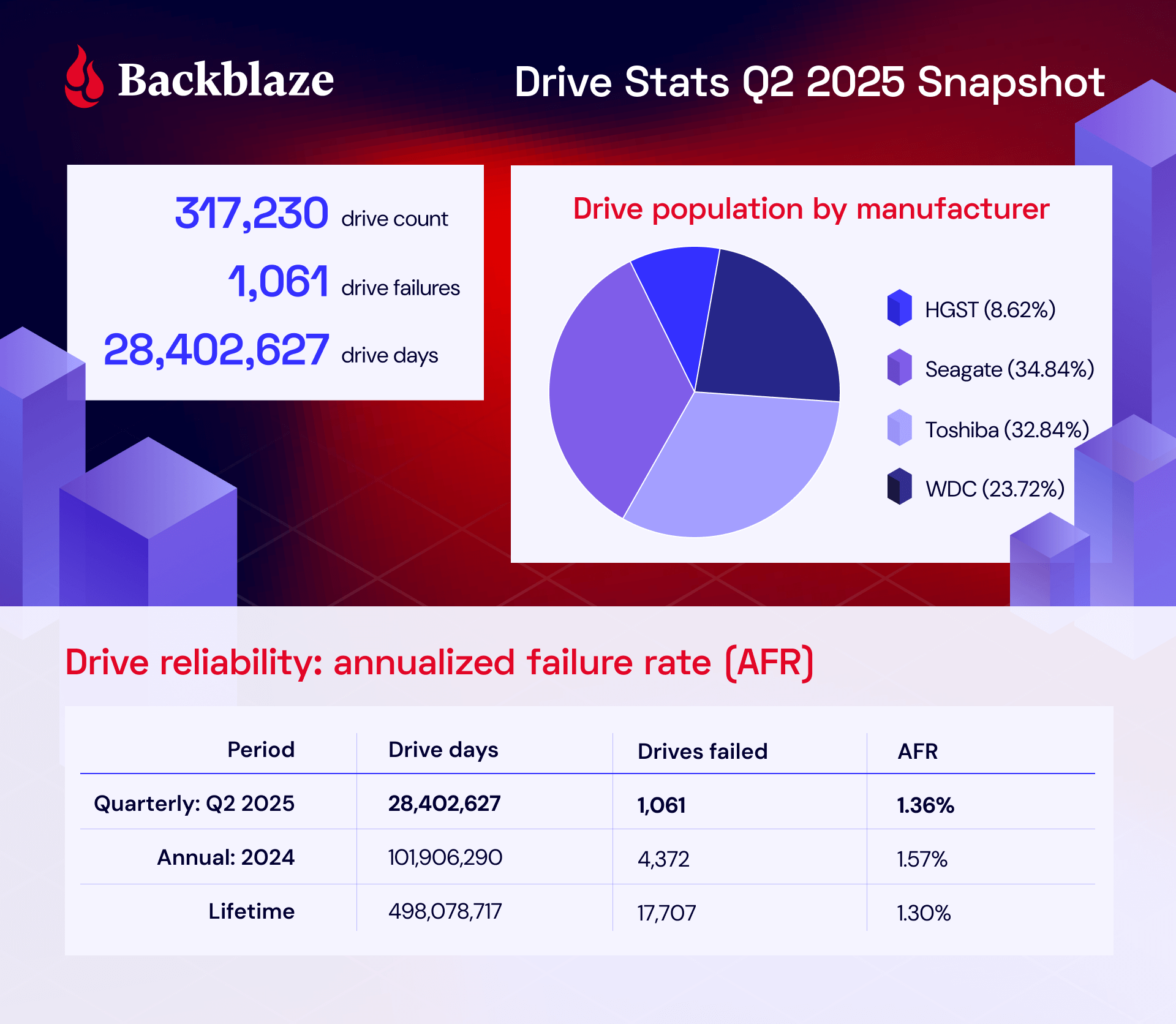

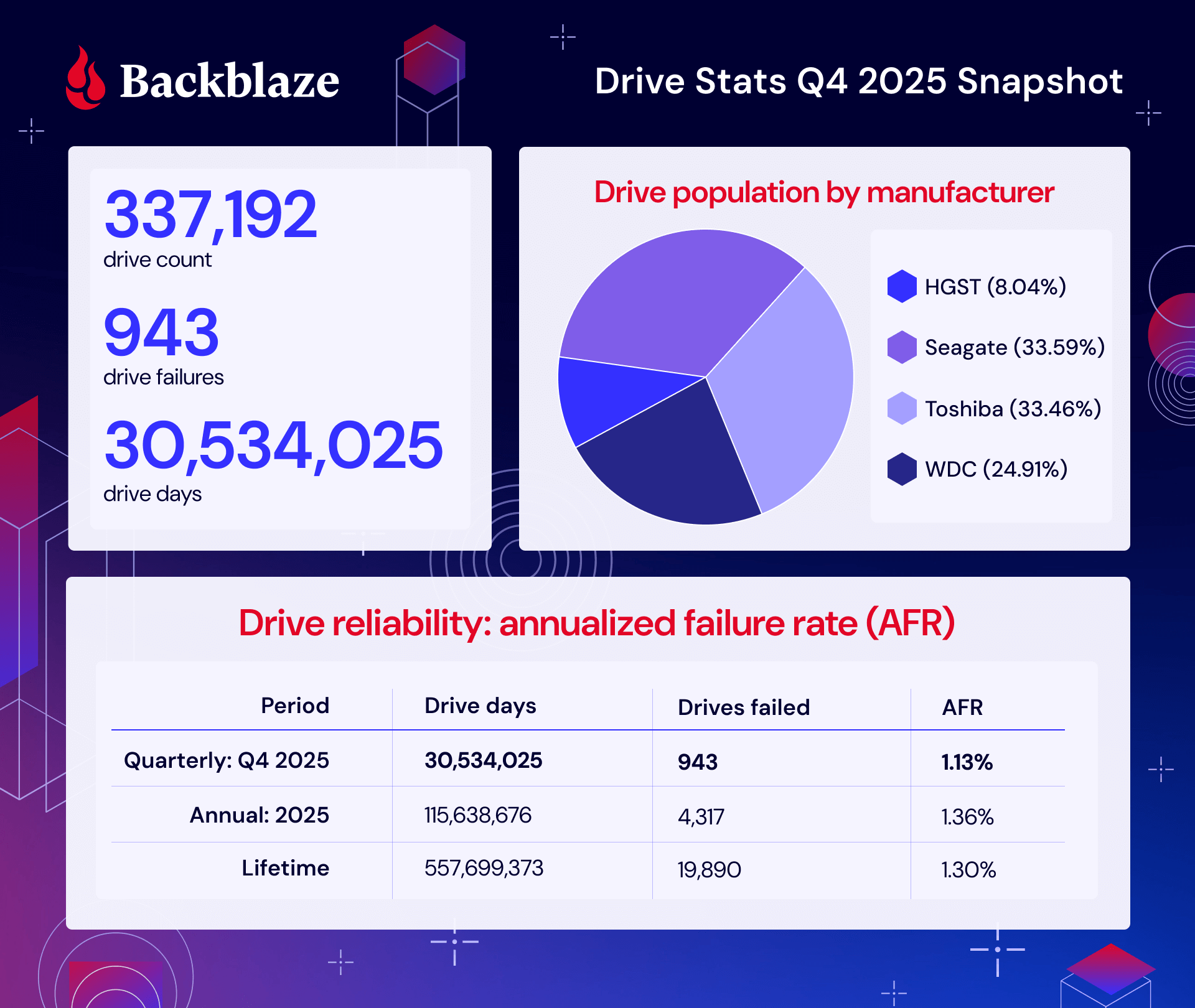

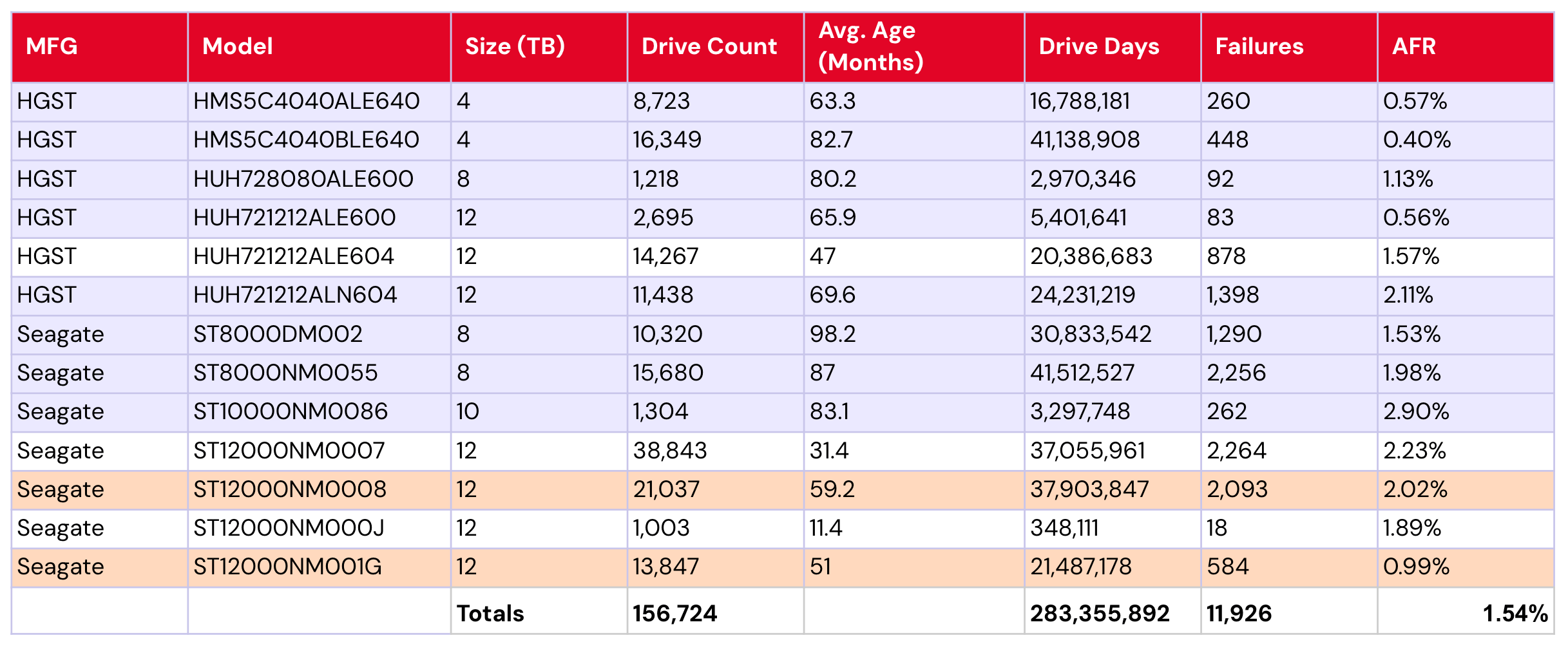

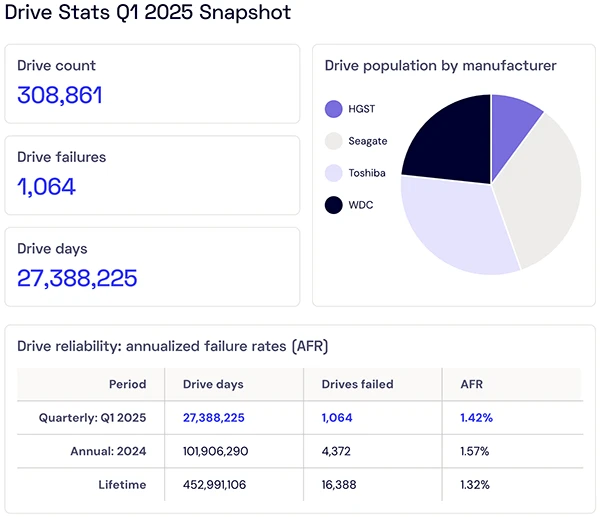

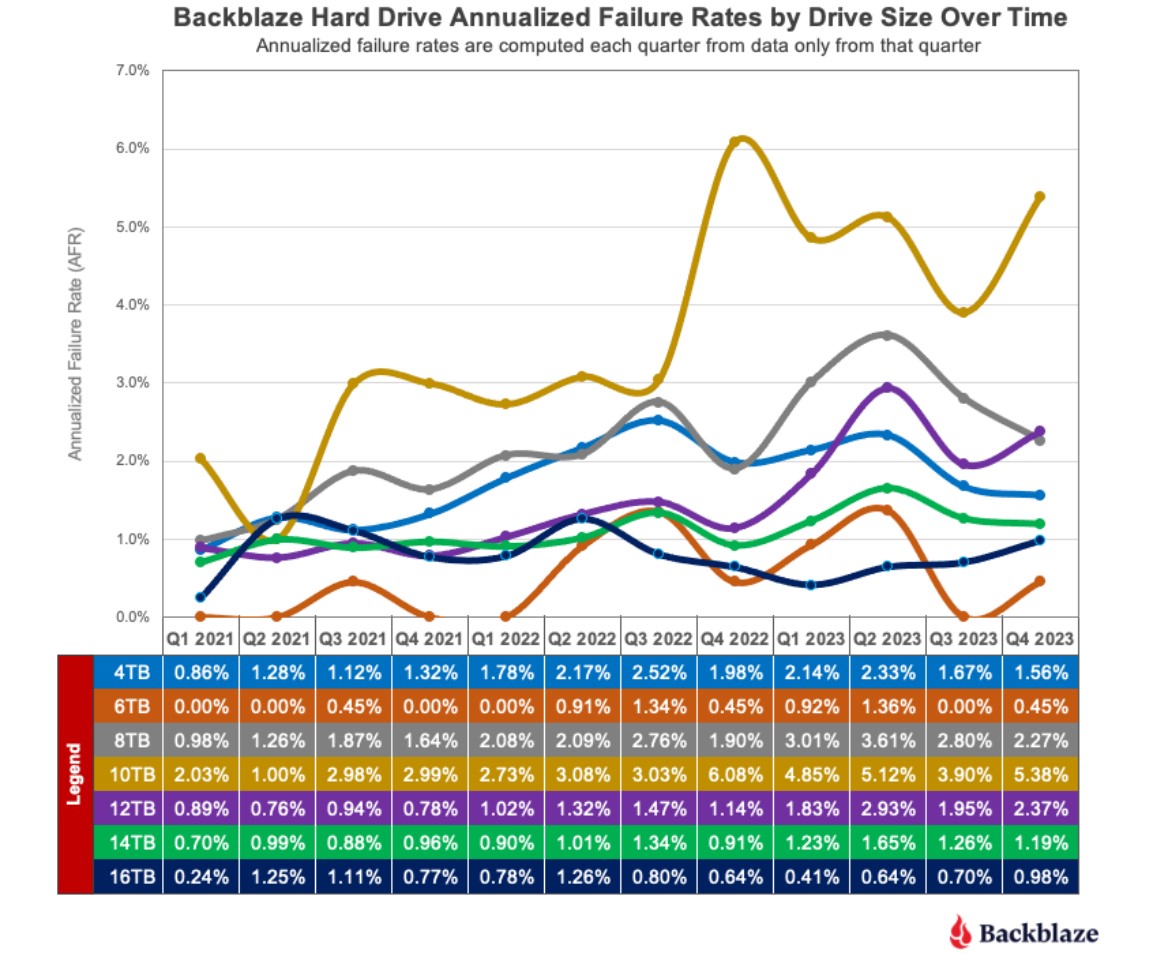

But here's the thing: some drives fail after three months. Others keep spinning for eight years straight. The difference between those outcomes? That's what Backblaze's latest 2025 hard drive reliability report finally clarifies with real data from 344,196 drives running in actual data centers.

This isn't marketing material. This isn't theoretical. Backblaze operates massive amounts of storage hardware and publishes its findings annually. In 2025, that gave it one of the clearest windows into HDD performance anywhere: 115.6 million drive days of real-world operational data. That's not a test lab with perfect conditions. That's drives handling actual customer data under genuine stress.

The results matter because you're probably making a storage decision right now. Maybe you're building a home lab. Maybe you're setting up a NAS. Maybe you're replacing failed drives in an existing system. The choice between a

Let's break down what Backblaze found, what it means for you, and which drives are actually worth your money.

TL;DR

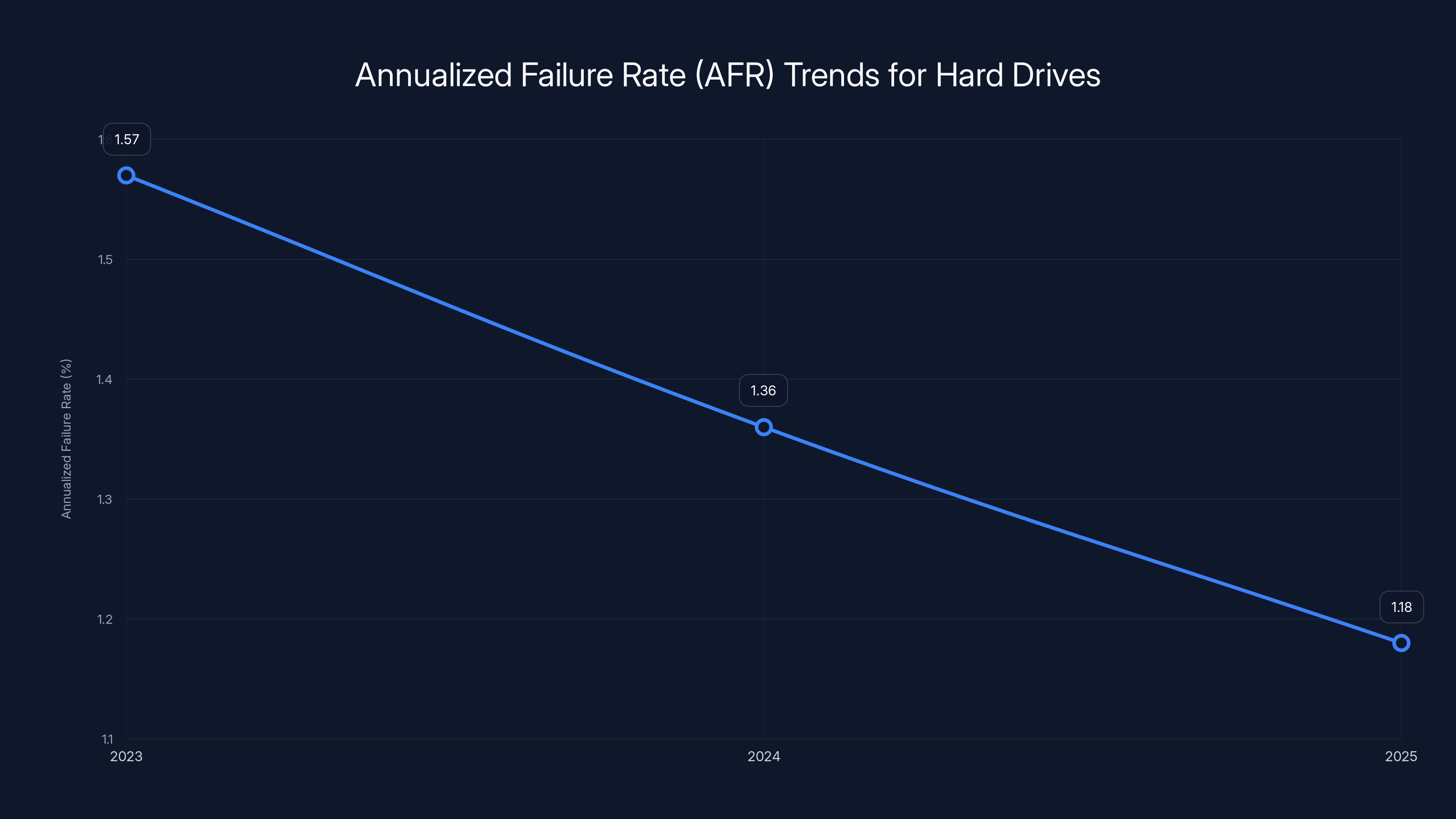

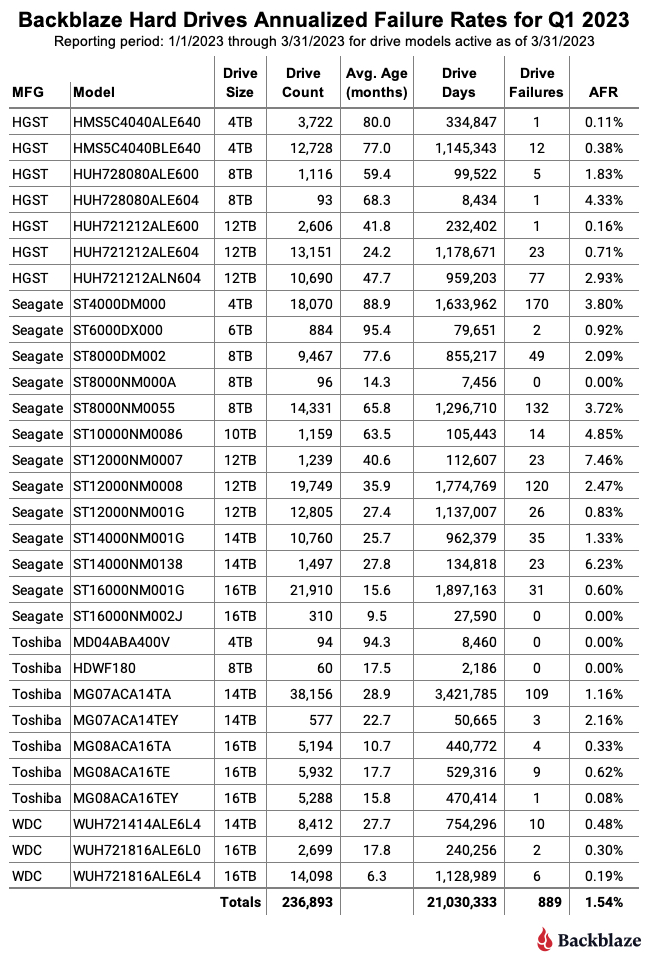

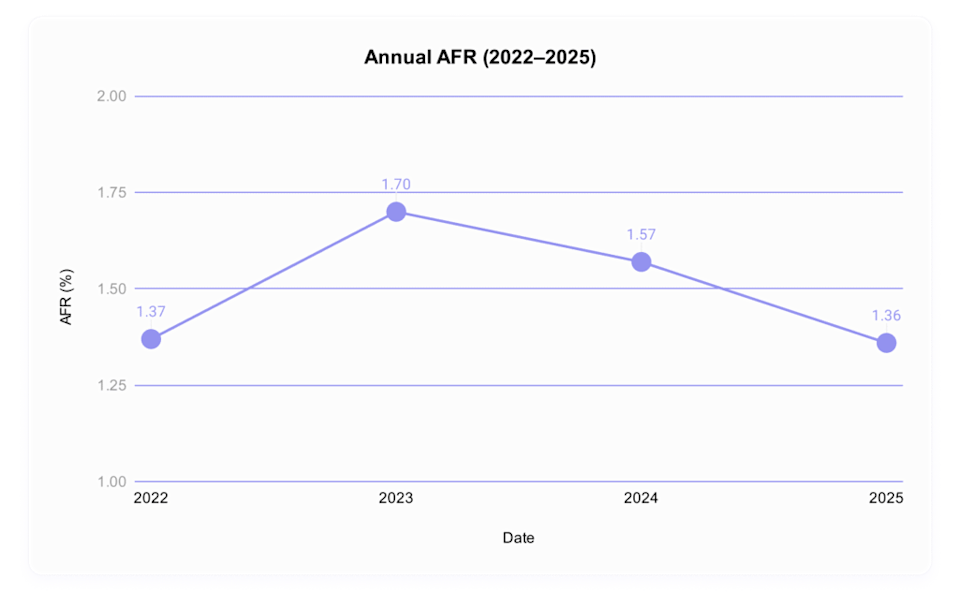

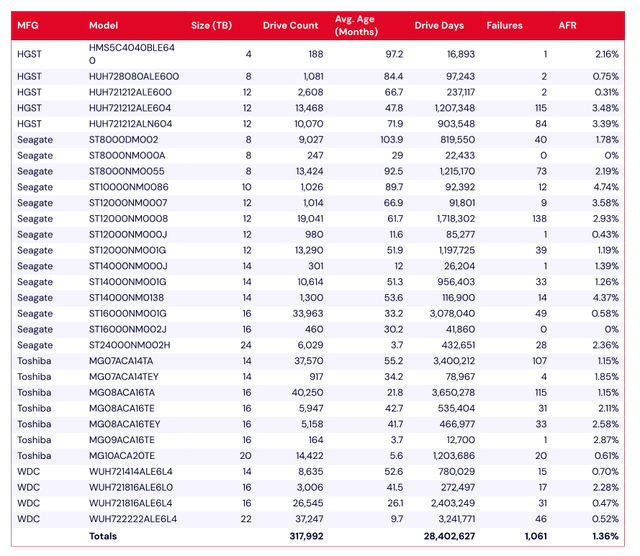

- Annual failure rate hit 1.36% across 344,196 drives, down from 1.57% the previous year, showing steady improvement

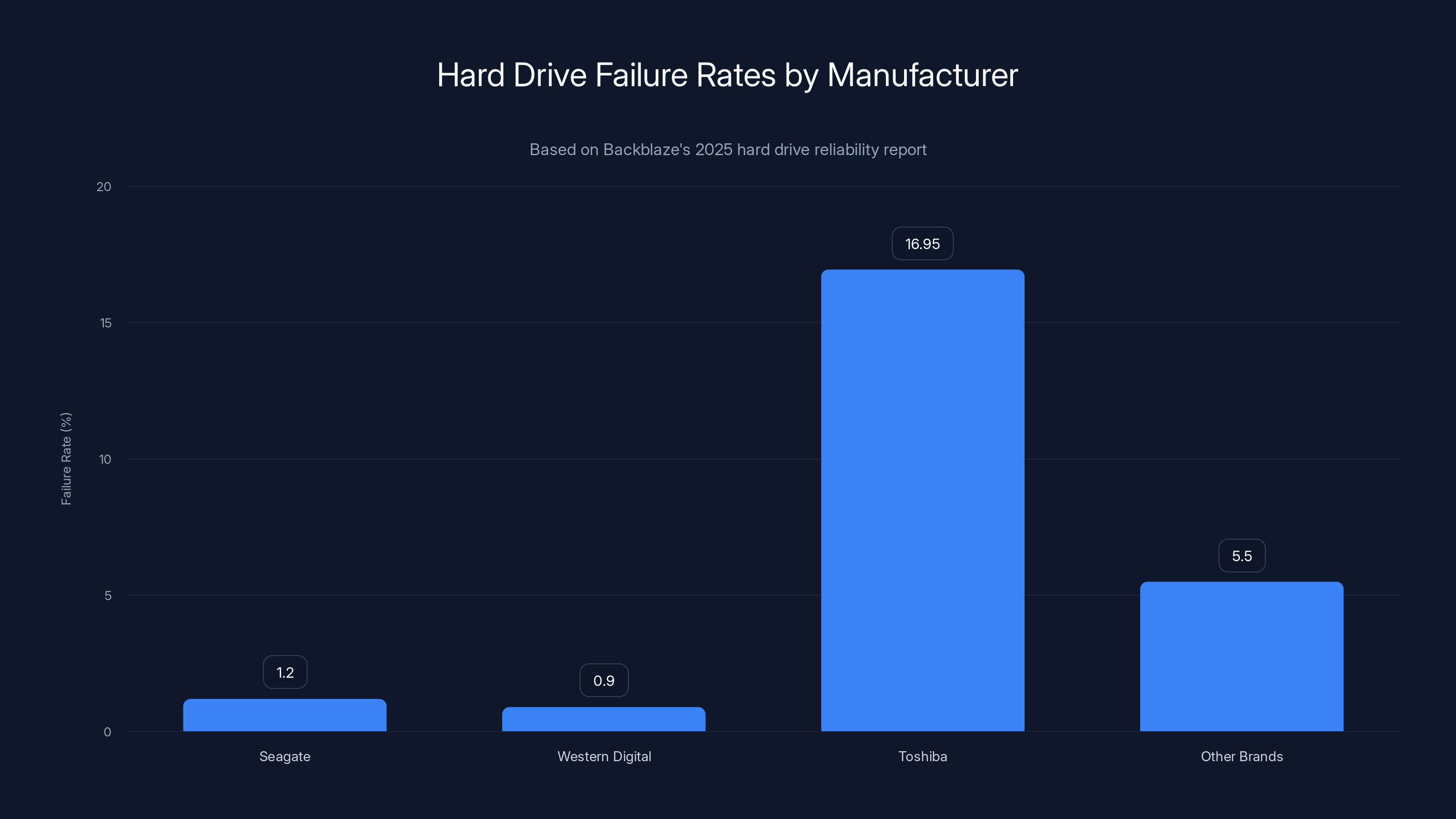

- Seagate and Western Digital dominate reliability rankings with models recording single-digit failure counts across the entire year

- The ST16000NM002J 16TB and WUH722626ALE6L4 26TB recorded just one failure each, setting benchmarks for durability

- Vibration emerges as culprit behind double-digit failure rates in aging drives, not manufacturing defects

- Toshiba firmware updates reversed a catastrophic 16.95% failure rate down to 4.14% in Q4, proving software fixes matter

- Storage economics are shifting: capacity grows, but prices are rising due to supply constraints, not just component costs

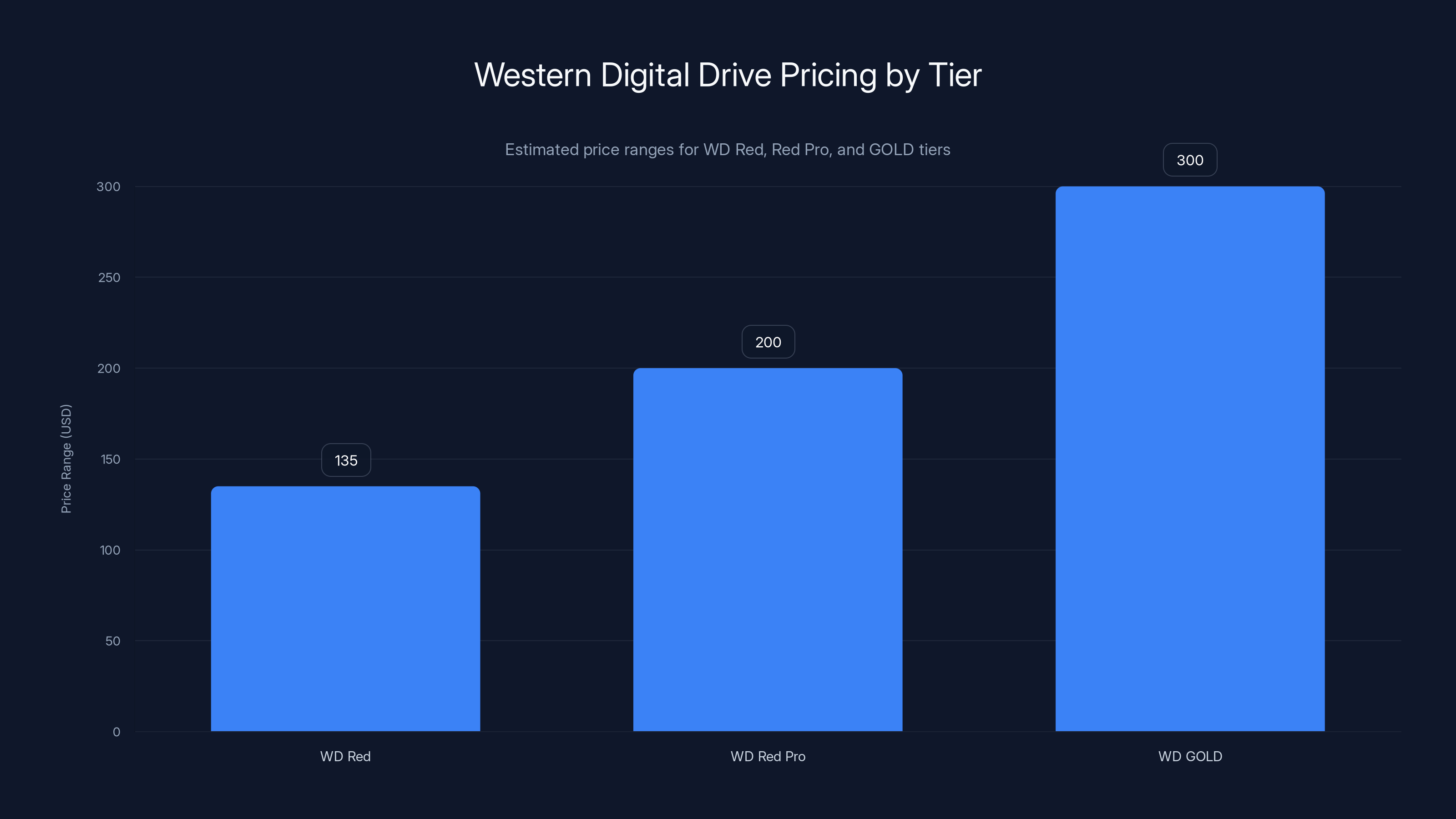

WD Red drives are priced between

Understanding the Backblaze Data: What 344,000 Drives Tell Us

Backblaze runs cloud backup and storage services for millions of customers. That means they operate warehouses full of hard drives. Lots of them. These drives aren't sitting on someone's shelf—they're spinning continuously, handling read/write requests, managing thermal stress, and experiencing vibration from being packed densely in data center racks.

In 2025, Backblaze tracked 344,196 individual drives across the entire year. Those drives accumulated 115,638,676 operational days. That's the equivalent of one drive running continuously for roughly 316,800 years. When you have that much data, statistical noise disappears. You see actual patterns.

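Out of all those drives, 4,317 failed. That sounds like a lot until you do the math. The Annualized Failure Rate (AFR) works like this: divide the number of failures by the number of drive years (drive days divided by 365), then multiply by 100. Here that's 4,317 / (115,638,676 / 365) × 100 ≈ 1.36%.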

Backblaze's 1.36% AFR means that in any given year, roughly 1 to 2 drives out of every 100 will fail. For individual users, that's reassuring. For data centers operating thousands of drives, it's the foundation of their redundancy calculations.

The improvement from 1.57% to 1.36% might look small. It's not. A 0.21 percentage point drop represents a 13.4% improvement in reliability year-over-year. Manufacturing processes are getting tighter. Firmware is more stable. Drive designs are maturing. But—and this matters—no drive model recorded zero failures. Every single model had at least one failure reported.
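As a quick check on the arithmetic above, here is a minimal sketch that recomputes the fleet-wide AFR and the year-over-year improvement from the figures quoted in this article. It assumes the drive-days formulation (failures per drive year, expressed as a percentage); the function names are illustrative, not part of any Backblaze tooling.

```python
def annualized_failure_rate(failures: int, drive_days: int) -> float:
    """Return AFR as a percentage: failures per drive year of operation."""
    drive_years = drive_days / 365
    return failures / drive_years * 100


def relative_improvement(old_rate: float, new_rate: float) -> float:
    """Return the relative drop from old_rate to new_rate, as a percentage."""
    return (old_rate - new_rate) / old_rate * 100


# Figures quoted in the article for the 2025 fleet.
afr_2025 = annualized_failure_rate(failures=4_317, drive_days=115_638_676)

print(f"AFR: {afr_2025:.2f}%")                                    # AFR: 1.36%
print(f"Improvement: {relative_improvement(1.57, 1.36):.1f}%")    # Improvement: 13.4%
```

The same function works for a single model or a single quarter: feed it that subset's failures and drive days, and the result is still expressed as an annual rate.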

That single fact undercuts any marketing claim that an "enterprise-grade" or "super-reliable" label makes a drive failure-proof. The best drives are reliable enough to trust for most applications. Reliable enough to trust without backups? No. That drive doesn't exist.

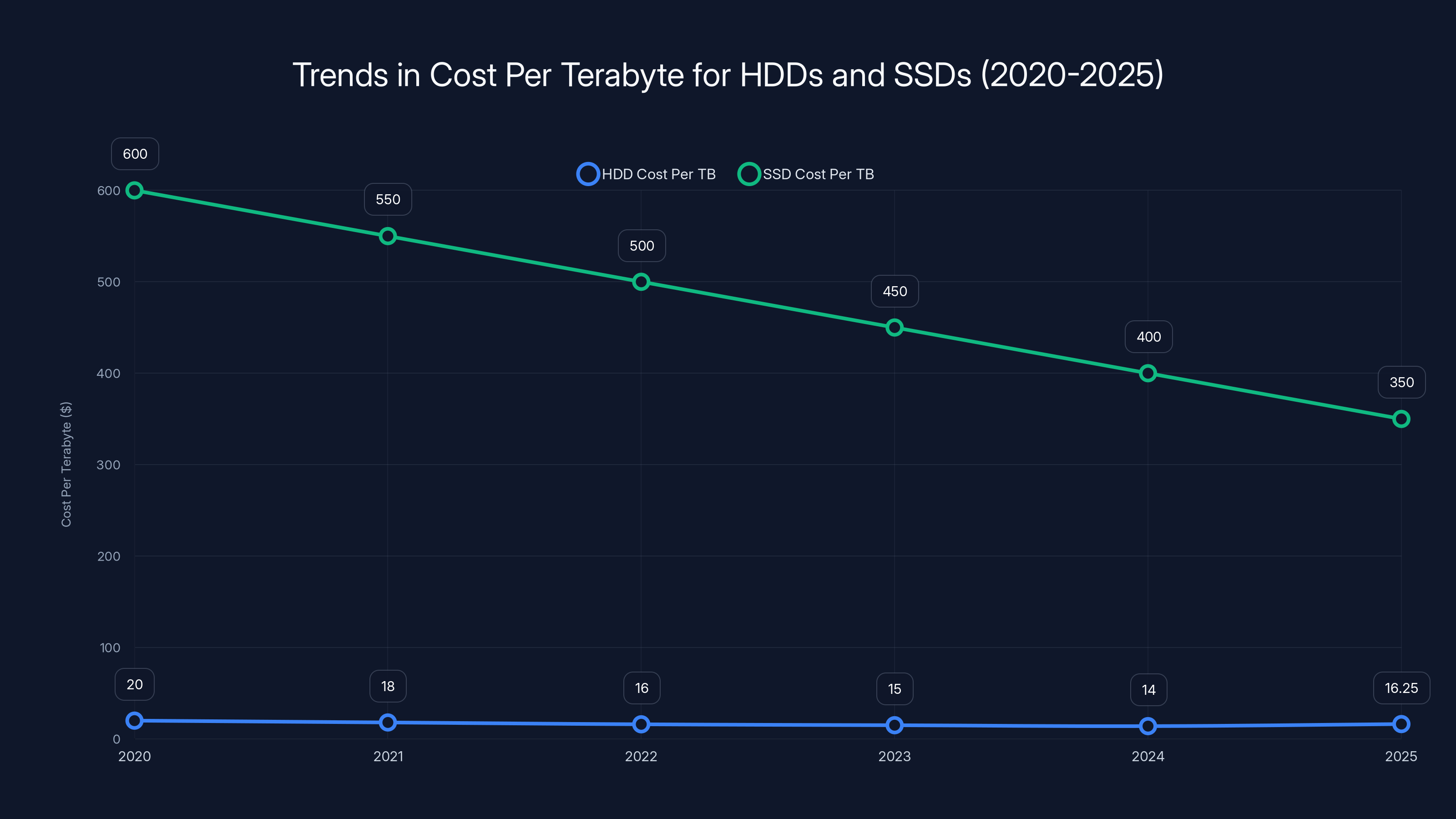

The cost per terabyte for HDDs initially decreased but saw an increase in 2025 due to supply constraints, while SSD costs have steadily declined. Estimated data.

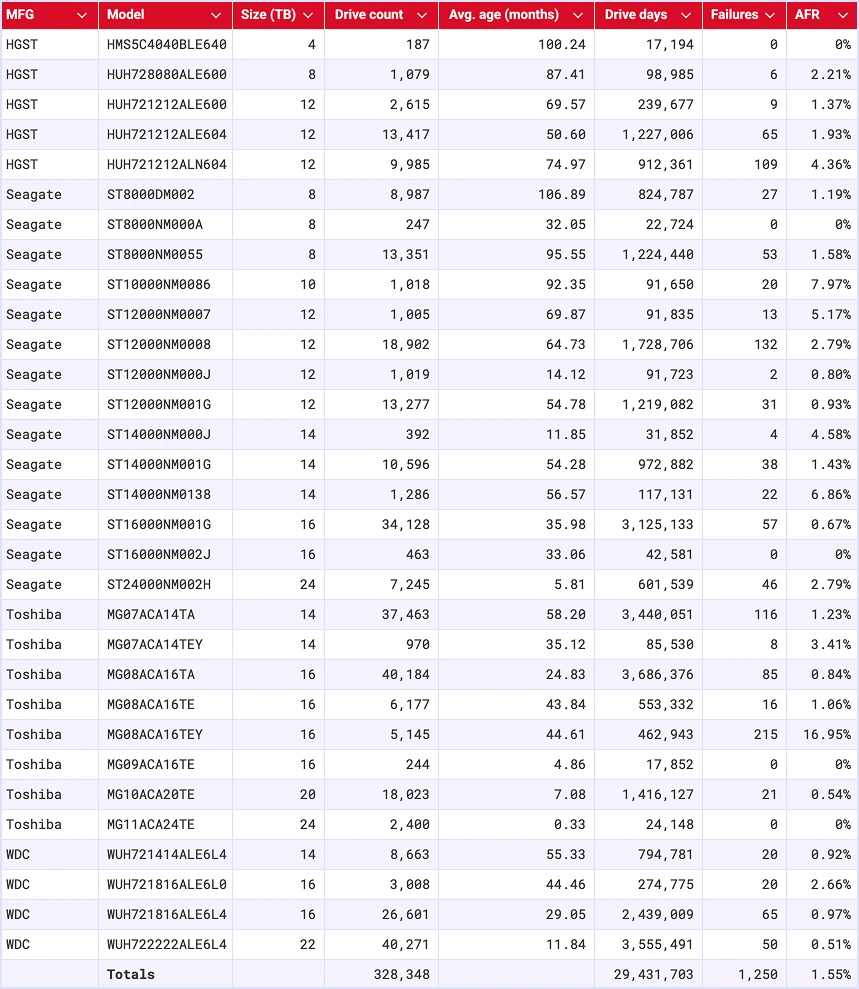

The Stars of 2025: Which Drives Barely Fail

Some drives distinguished themselves through exceptional performance. The Seagate ST16000NM002J 16TB stands out as the clear champion. Across the entire year, Backblaze recorded exactly one failure for this model. That's out of how many deployed? Enough that we're talking about statistically meaningful data.

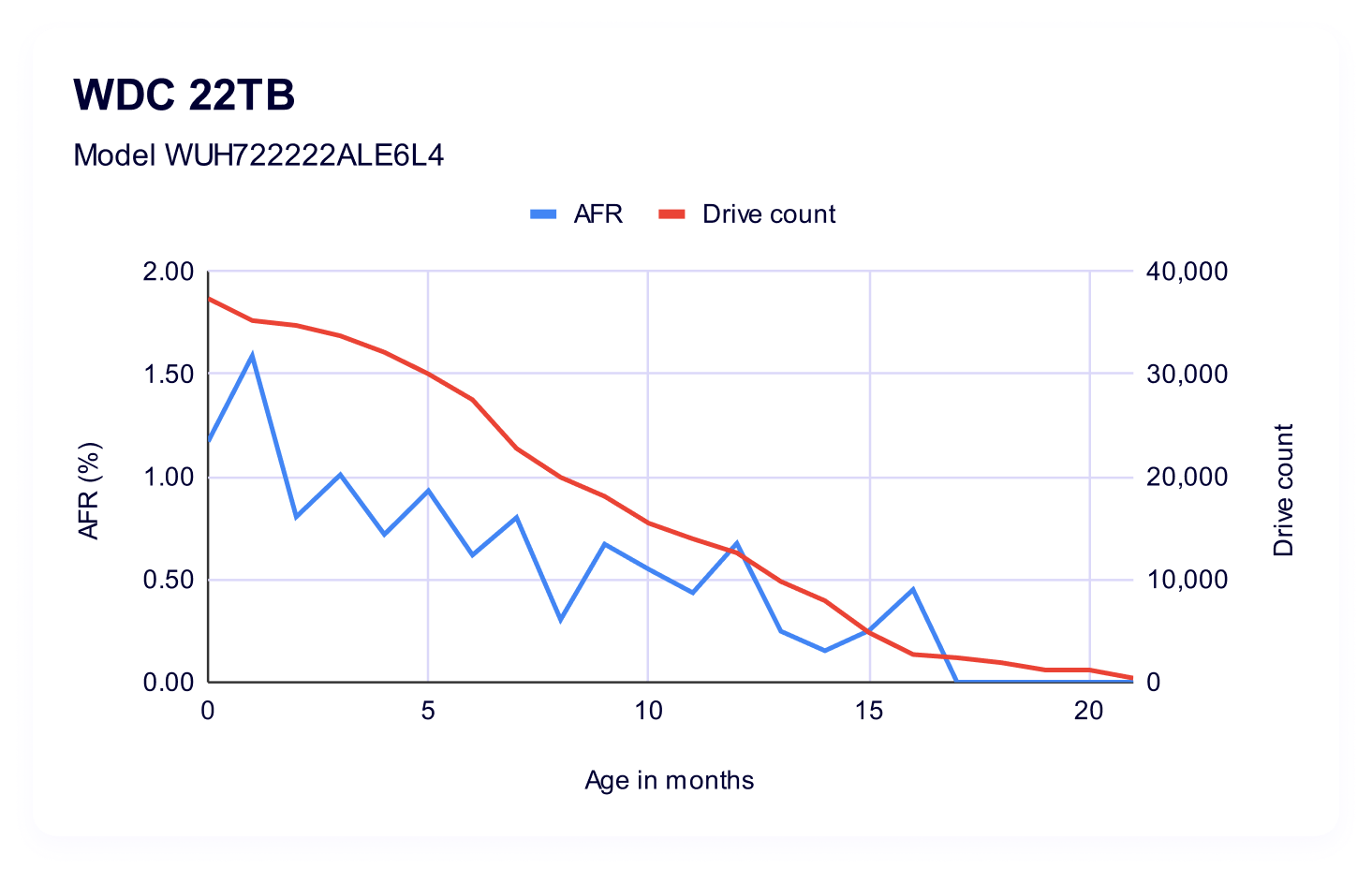

Western Digital's WUH722626ALE6L4 26TB matched that performance with a single failure, though there's a caveat: this model was only deployed for one quarter of the year. If it had run for the full year, the failure rate would extrapolate differently. But the raw data is what it is—one failure, very low rate.

Toshiba's MG09ACA16TE 16TB recorded three failures. The Seagate ST12000NM000J 12TB had four failures. HGST's HMS5C4040BLE640 4TB showed five failures. These drives sit in the ultra-reliable tier.

What do these models have in common? They're all enterprise or near-enterprise class drives. They cost more upfront. The Seagate Barracuda Pro, which often trades places as a top performer, runs substantially higher in price than consumer alternatives. Western Digital's Red and Red Pro lines command premiums. HGST drives are positioned specifically for server and NAS environments.

But here's where it gets interesting: buying the most expensive drive doesn't automatically mean you get the most reliable. Some very expensive models posted middling failure rates. Some cheaper consumer drives performed reasonably. The sweet spot balances cost against reliability against workload match.

The true champions—the drives failing at rates below 0.5%—tend to be models that have been in production for multiple years. Seagate's ST14000NM000J and ST12000NM000J show up consistently. Western Digital's GOLD and RED lines appear repeatedly. These drives have mature designs. Production problems got worked out years ago. Supply chains stabilized. Firmware reached stable iterations.

The Disaster Zone: Double-Digit Failure Rates and What Caused Them

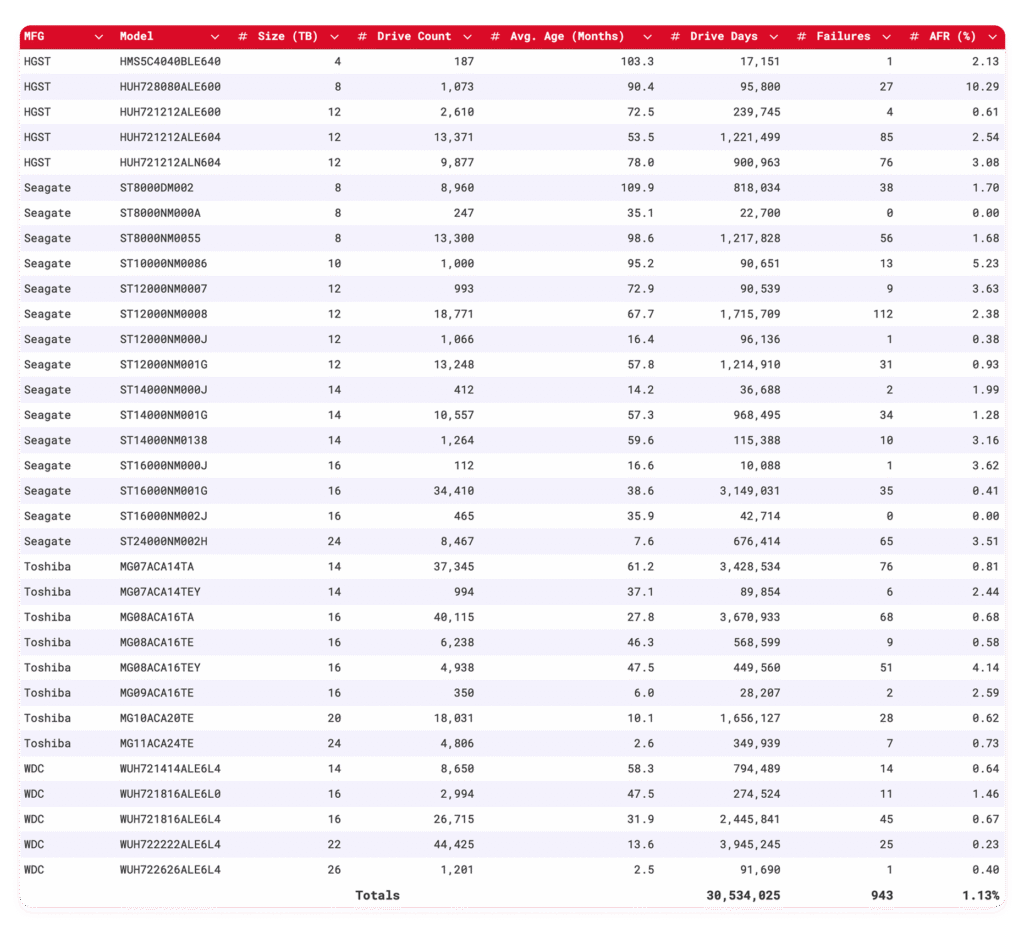

The HGST HUH728080ALE600 8TB hit a 10.29% failure rate in the fourth quarter of 2025. That's the first double-digit failure rate reported in Backblaze's recent data, and it grabbed attention immediately.

Initially, suspicion fell on environmental factors. Maybe the data center cooling failed. Maybe someone configured the racks wrong. Maybe temperatures spiked. Backblaze investigated temperature logs, airflow patterns, power distribution—all the usual suspects in drive failures. Everything looked normal.

What they discovered instead points to something more mechanical. Vibration.

The failing HGST drives are roughly 7.5 years old at this point, and they've been spinning essentially nonstop that whole time. When mechanical systems age, internal components develop play. Bearings wear microscopically. Platters aren't quite as perfectly balanced. Actuator arms don't track quite as precisely. All those tiny imperfections amplify when the drive is stressed.

In a data center with tightly-packed drives, vibration from neighboring equipment transfers between units. A single vibrating drive can cause adjacent drives to vibrate. The older the drive, the less tolerance it has for that mechanical stress.

Seagate's ST10000NM0086 10TB posted a 5.23% failure rate in Q4. This drive is also aging—in the 6-7 year range for most units in the fleet. Toshiba's MG08ACA16TEY 16TB hit 4.14%, but that's actually a victory story worth unpacking.

Toshiba's MG08ACA16TEY 16TB showed a catastrophic 16.95% failure rate in Q3 2025. That number is almost incomprehensible. Because the figure is annualized, it means that at that pace roughly one out of every six drives would fail within a year. Backblaze immediately flagged this as abnormal and launched an investigation.

They found it: a firmware issue. Toshiba identified the problem and released a firmware update designed to correct the underlying fault. When the updated firmware deployed across the fleet in Q4, the failure rate collapsed from 16.95% to 4.14%. That's a 75% improvement in one quarter.

This matters because it proves several things:

- Firmware problems are real and impactful. Sometimes a drive failure isn't a hardware defect. It's a software bug that causes the drive to behave incorrectly under specific conditions.

- Manufacturers can fix deployed drives. Once a problem is identified and a firmware update is released, existing drives in the field can be corrected in place as soon as the update is applied.

- Catastrophic failure modes are detectable. A 16.95% failure rate isn't random noise. It's a signature of a systematic problem that investigation can uncover.

- Recovery is possible. Toshiba didn't have to replace every affected drive. They fixed the issue at the source.

The catch? Firmware updates require active management. The drives don't automatically update themselves. Someone has to schedule maintenance windows, back up data, apply the update, test functionality, and restore service. In a data center running 24/7, that's not trivial. But it's infinitely better than replacing thousands of drives.

The AFR for hard drives has improved by 13.4% from 2024 to 2025, indicating a trend of increasing reliability, though improvements are becoming more incremental.

Seagate's Dominance: Why These Models Lead

Seagate appears most frequently in Backblaze's reliability rankings, occupying the top tier across multiple capacity points. The ST16000NM002J, ST14000NM000J, ST12000NM000J, and several other models consistently post failure rates below 1%.

Why?

Part of it is design maturity. These Seagate models have been in production for 3-4 years. The engineering team has refined every aspect. Manufacturing processes have been optimized. Supply chains know what they're doing. When you buy a drive that's been in production since 2021-2022, you're buying into stable, proven design.

Part of it is Seagate's positioning in the NAS and data center space. Seagate invests heavily in firmware that prioritizes reliability over speed. Their drives use specific vibration tolerance thresholds. They implement quiet seek algorithms that reduce mechanical stress. These design choices trade some performance for longevity.

Part of it might be fleet composition bias. Backblaze uses many Seagate drives. That means they have statistical confidence in Seagate's numbers because the sample size is enormous. A model with 100 deployed units is less statistically meaningful than one with 10,000 units. Seagate's volume in Backblaze's fleet means the failure rates are rock-solid data, while smaller manufacturers might have higher statistical uncertainty.
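To see why sample size matters so much, the sketch below puts an approximate 95% interval around an observed failure rate. It uses a Wilson score interval and treats each drive year as one trial, which is a simplification chosen for illustration and not Backblaze's published method. The two fleets in the example are hypothetical: both show a 1% observed rate, but with very different amounts of data behind it.

```python
import math


def afr_interval(failures: int, drive_years: float, z: float = 1.96) -> tuple[float, float]:
    """Approximate interval (in percent) for AFR via the Wilson score method.

    Assumption: each drive year is treated as an independent trial, so
    failures must not exceed drive_years.
    """
    p = failures / drive_years
    denom = 1 + z**2 / drive_years
    center = (p + z**2 / (2 * drive_years)) / denom
    half = z * math.sqrt(p * (1 - p) / drive_years + z**2 / (4 * drive_years**2)) / denom
    return max(0.0, center - half) * 100, (center + half) * 100


# Hypothetical fleets with the same observed 1% rate.
small_fleet = afr_interval(failures=1, drive_years=100)
large_fleet = afr_interval(failures=100, drive_years=10_000)

print(f"small fleet: {small_fleet[0]:.2f}% to {small_fleet[1]:.2f}%")
print(f"large fleet: {large_fleet[0]:.2f}% to {large_fleet[1]:.2f}%")
```

The small fleet's interval spans several percentage points while the large fleet's stays close to 1%, which is the reason a single-failure model with limited deployment time deserves more caution than its raw number suggests.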

But even accounting for all that, Seagate's performance is legitimately strong. Their top performers—the models in the 0.5-1.0% AFR range—are positioned as middle-tier models, not premium flagship products. That means you get reliability without paying enterprise prices.

Seagate's pricing reflects this. A Seagate Barracuda Pro 16TB costs around

Western Digital's Strategic Positioning: Red, Gold, and Enterprise Tier

Western Digital takes a different approach. Rather than competing on price for consumer drives, WD has positioned itself across three distinct reliability tiers.

The WD Red line targets NAS environments. These drives use NASware technology that includes vibration control, automated thermal management, and optimized firmware for RAID systems. WD Red models from the 2022-2023 production runs appear throughout Backblaze's mid-tier reliable performers.

The WD Red Pro line targets professional environments. These drives add higher power specifications, higher sustained workload ratings, and enhanced error recovery. The WUH722626ALE6L4 26TB—the drive that matched Seagate's best performance with a single failure—is from this Pro line.

Western Digital's GOLD line targets enterprise data centers specifically. These drives are engineered for constant operation in server environments. The HUH728080ALE600 8TB, which hit that troublesome 10.29% failure rate, is also from this tier, but as discussed, it's an aging drive at 7+ years old showing signs of accumulated wear.

WD's positioning creates a selection matrix. Consumers can match drive tier to application. A NAS with light to moderate workload? Red standard tier at

This stratification is smart marketing, but it's also technically sound. A drive optimized for 8-hour workdays doesn't use the same bearing specifications as a drive optimized for 24/7 operation. The power management differs. The thermal tolerance differs. You get what you pay for, but you don't overpay for features you don't need.

WD's reliability numbers support this positioning. Their drives appear across all the performance tiers in Backblaze's data, but the failures are proportional to drive age and workload intensity. Older drives fail more. Heavily loaded drives fail more. That's physics, not engineering failure.

Seagate and Western Digital show low failure rates below 1%, while Toshiba had a high rate of 16.95%, highlighting the importance of engineering and manufacturing quality. Estimated data for 'Other Brands'.

Toshiba's Turnaround: From Crisis to Credibility

Toshiba's story is the most dramatic in Backblaze's 2025 report. The MG08ACA16TEY 16TB arrived in Q3 with a catastrophic 16.95% failure rate. That's a complete system-level failure. That's a drive you don't buy.

But Toshiba didn't accept that outcome. They investigated immediately, identified a firmware issue, released a fix, and deployed it across their fleet. By Q4, the failure rate had plummeted to 4.14%.

Now, 4.14% is still above fleet average (1.36% overall). It's not a clean victory. But it's a 75% improvement in 90 days. That's not luck. That's engineering response.

Toshiba's other models in Backblaze's fleet show comparable reliability to Seagate and Western Digital at similar price points. The MG09ACA16TE 16TB recorded only three failures across the entire year—placing it in the ultra-reliable tier. The MG07ACA14TE 14TB shows mid-range performance.

What Toshiba proved is that when something goes wrong, aggressive investigation and rapid firmware release can fix deployed drives. That's valuable. It's a signal that Toshiba still cares about their customer base and will commit engineering resources to solve problems quickly.

Toshiba's reputation took a hit from the Q3 disaster, but because of how quickly they responded, the damage isn't as severe as it could have been. In the storage industry, how you respond to problems matters as much as whether you have problems.

HGST: The Fading Giant

HGST (Hitachi Global Storage Technologies) represents a curious case in Backblaze's data. Western Digital acquired HGST in 2012, then gradually integrated or discontinued most product lines. HGST drives still appear in some Backblaze deployments, mostly older models.

The HMS5C4040BLE640 4TB posted five failures across the year—respectable performance. But other HGST models show age-related degradation. The HUH728080ALE600 8TB—that problematic 10.29% failure rate drive—is an HGST model that hasn't been manufactured in years. These are legacy units spinning on borrowed time.

Backblaze's data suggests HGST's era of innovation has passed. The drives still work, but they're not leading edge. If you encounter HGST drives in the secondhand market, treat them as temporary solutions. They'll probably work for another year or two, but they're not foundation pieces for long-term storage infrastructure.

Seagate's top models consistently show low failure rates, with most under 1%, highlighting their reliability in Backblaze's rankings.

The Vibration Problem: Why Age Matters

Backblaze's investigation into the HGST HUH728080ALE600 8TB failures revealed something important: physical deterioration in aging drives manifests as vibration sensitivity.

When a hard drive is new, internal components are precisely engineered. The spindle bearing has minimal play. The actuator arm tracks exactly. Platters are perfectly balanced. But over years of operation, materials fatigue. Bearings develop microscopic wear. Fasteners can loosen minutely. The entire mechanical system becomes less rigid.

In a data center where drives are packed tightly in racks, vibration from adjacent equipment transfers through the frame. Cooling fans vibrate. Neighboring power supplies vibrate. Other drives being accessed vibrate. When a drive is new and rigid, it handles this environmental vibration without consequence. But an aging drive with worn bearings and loosened components? That vibration becomes stress.

The solution isn't to abandon aging drives—it's to manage them intelligently. Several strategies work:

Isolation: Physically separate aging drives from sources of vibration. Some data centers use vibration-dampening mounts or dedicated racks for drives past the 5-year mark.

Reduced Workload: Move aging drives into less demanding roles. An 8-year-old drive works fine for archival storage that's accessed infrequently. The same drive struggling with 24/7 access becomes a liability.

Temperature Control: Older drives benefit from lower operating temperatures. Some data centers set tighter temperature targets for aging hardware.

Proactive Replacement: Don't wait for failure. At 6-7 years, start planning replacements. The cost of replacing a drive preemptively is vastly lower than recovering from unexpected failure.

Backblaze's data doesn't say vibration is a universal problem. It says that in their specific fleet, with their specific rack configuration, aging drives showed vibration-related sensitivity. Your environment might differ. But the principle holds: older drives deserve more careful management.
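The age-based strategies above can be turned into a simple triage rule. This is a minimal sketch, assuming the thresholds mentioned in this article (extra care past 5 years, replacement planning from 6 years); the `Drive` class, the serial numbers, and the action labels are hypothetical and should be adapted to your own inventory data.

```python
from dataclasses import dataclass

ISOLATE_AFTER_YEARS = 5.0   # article: dampened mounts or dedicated racks past the 5-year mark
REPLACE_AFTER_YEARS = 6.0   # article: start planning replacements at 6-7 years


@dataclass
class Drive:
    serial: str        # hypothetical identifier
    age_years: float   # time in service


def recommended_action(drive: Drive) -> str:
    """Map a drive's age to one of three coarse management actions."""
    if drive.age_years >= REPLACE_AFTER_YEARS:
        return "plan replacement"
    if drive.age_years >= ISOLATE_AFTER_YEARS:
        return "isolate or reduce workload"
    return "normal service"


fleet = [Drive("EXAMPLE-001", 2.5), Drive("EXAMPLE-002", 5.4), Drive("EXAMPLE-003", 7.5)]
for drive in fleet:
    print(drive.serial, "->", recommended_action(drive))
```

Keeping the thresholds as named constants makes it easy to tighten or relax them if your own racks turn out to be more or less vibration-prone than Backblaze's.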

Capacity Growth and Price Dynamics: The Economics of Storage

Backblaze's 2025 report includes valuable pricing data that reveals market dynamics beyond just drive reliability.

Capacity has continued climbing. Seagate and Western Digital are both shipping 26TB and 24TB drives in volume. Toshiba is working on 24TB models. Just five years ago, 8TB was considered large capacity. Now, 16TB is standard for enterprise deployments.

The cost-per-gigabyte metric—the price you pay for each gigabyte of storage—has historically trended downward as capacity increases. But in late 2025, that trend reversed.

A Seagate Barracuda 24TB was selling for

Supply constraints. In late 2025, Western Digital publicly stated they were experiencing severe capacity constraints, with CEO statements suggesting they're sold out through 2026. When supply can't meet demand, prices rise. It's basic economics.

This has implications for your storage decisions. If you're building a NAS or backup system in 2025, you're buying at peak pricing. Waiting six months might see prices drop if supply improves, or prices could rise further if constraints worsen. The decision isn't obvious.

Hard drives remain cheaper than SSDs on a per-gigabyte basis—typically

For a

Backblaze's 2025 report shows Brand D drives have the longest average lifespan at 6 years, while Brand A has the shortest at 3.5 years. Estimated data based on typical performance.

Choosing the Right Drive for Your Application

Backblaze's data should inform your decisions, but it doesn't make them directly. The best drive for a data center with thousands of units isn't necessarily the best drive for your four-drive NAS.

For personal NAS and home lab environments, the sweet spot is typically 4TB-12TB NAS-specific drives. WD Red standard, Seagate Barracuda Pro, or Toshiba X300 models balance cost, capacity, and reliability. You don't need enterprise specifications. You do need drives designed for RAID and constant availability. Budget $100-200 per drive.

For small business backup systems, move up to 8TB-16TB models. These systems often run 24/7, so drives engineered for that use case matter. WD Red Pro, Seagate IronWolf Pro, and equivalent models are worth the premium. You're looking at $180-250 per drive.

For video production or content creation, capacity matters more than reliability (since you have local redundancy). Large consumer drives like Seagate Barracuda Pro or WD Red (not Pro) work well. These applications aren't 24/7 constant access, so standard-tier NAS drives suffice. $150-220 per drive.

For archival storage with infrequent access, older enterprise drives like the HGST models Backblaze phased out become viable. They don't need to survive high-duty-cycle stress. Purchasing refurbished enterprise drives at $80-120 per unit makes financial sense for cold storage. The risk is acceptable because data is redundant and infrequently accessed.

For true data center deployment, this is where Backblaze's data becomes essential. You're operating thousands of drives. You need to model failure rates accurately. You're looking at Seagate enterprise drives, WD GOLD, and possibly HGST ULTRASTAR for maximum capacity-per-unit. Per-drive cost is secondary to fleet reliability modeling.

Manufacturing Consistency: When Drive Models Diverge

One subtle finding in Backblaze's data deserves attention: the same drive model from different manufacturing periods shows different reliability.

This isn't paranoia. It's real. Drive manufacturers revise designs, source components from different suppliers, and adjust manufacturing processes continuously. A drive model number might look the same, but a unit manufactured in 2021 might differ from one manufactured in 2023.

Backblaze's data shows this indirectly. The Seagate ST14000NM000J released in early 2021 shows excellent reliability in 2025 because by that point, manufacturing had stabilized and design problems, if any, had been worked out. A hypothetical new production run of the same model number in 2025 might perform differently—though we don't see this because Backblaze's data represents long-lived drives.

This implies a purchasing strategy: don't buy the newest version of a drive model. Buy models that have been in production for 1-2 years minimum. By then, the problems in early production runs have been worked out. The design has proven itself. Manufacturing lines are optimized.

When shopping for drives, check the manufacturing date if possible (visible on the label as a date code). Drives from mid-2023 or earlier are mature. Drives from late 2024 onward are still proving themselves.

Future Outlook: Where HDD Technology Heads

Backblaze's 2025 data shows incremental improvement in HDD reliability. The 1.36% AFR is better than 1.57% a year prior. That trend will likely continue, but it won't be dramatic.

HDD technology is mature. The engineering challenges that plagued drives 10 years ago—vibration sensitivity, thermal management, seek accuracy—are largely solved. Improvements now are incremental: slightly better bearings, marginally better error correction, firmware optimizations.

Capacity growth is accelerating though. The jump from 18TB to 24TB happened faster than the jump from 12TB to 18TB. Seagate's research into 7TB per platter suggests 70TB+ drives are coming by 2030. That's the real frontier.

Reliability will follow capacity. As manufacturers learn to engineer denser platters, capacity-per-drive improves, so the same amount of data fits on fewer drives, which reduces failure surface area. A data center storing a fixed amount of data on 24TB drives needs fewer drives, and therefore fewer drive replacements, than one storing the same data on 16TB drives.
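To make the capacity argument concrete, here is a rough sketch comparing how many drives the same stored data requires at two capacity points, and how many failures per year you'd expect from each fleet. It assumes both drive sizes share the fleet-wide 1.36% AFR and ignores redundancy overhead; both are simplifications for illustration.

```python
import math


def drives_needed(total_tb: float, drive_tb: float) -> int:
    """Number of drives required to hold total_tb of raw data."""
    return math.ceil(total_tb / drive_tb)


def expected_annual_failures(drive_count: int, afr_percent: float) -> float:
    """Expected number of drive failures per year at a given AFR."""
    return drive_count * afr_percent / 100


TOTAL_TB = 160_000  # the raw capacity of 10,000 drives at 16TB each
FLEET_AFR = 1.36    # fleet-wide AFR quoted in the article

for size_tb in (16, 24):
    count = drives_needed(TOTAL_TB, size_tb)
    failures = expected_annual_failures(count, FLEET_AFR)
    print(f"{size_tb}TB: {count} drives, about {failures:.1f} failures per year")
# 16TB: 10000 drives, about 136.0 failures per year
# 24TB: 6667 drives, about 90.7 failures per year
```

The reduction comes entirely from the smaller drive count. If larger drives turn out to fail at a different rate, plug that model's AFR in instead of the fleet average.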

The competitive threat to HDDs comes from SSDs, not from HDD engineering limitations. As NAND flash prices fall, SSDs become viable for more applications. Eventually, SSDs might dominate for everything except the largest-scale archival systems. But Backblaze's data suggests that day is still 5-10 years away. HDDs remain the economically dominant choice for bulk storage.

Building Redundancy: Why Even Perfect Drives Need Backups

Backblaze's most important lesson isn't which drive is most reliable. It's that every drive fails sometimes, and you need to plan for it.

With a 1.36% annual failure rate, even the best drives fail. The Seagate ST16000NM002J with a single failure across the entire fleet at Backblaze is statistically the best performer they track—and it still failed. That means you should never trust any single drive, no matter how reliable.

Instead, build redundancy:

For small deployments (2-4 drives): Use RAID-1 mirroring. Duplicate data across two drives. If either fails, you continue operating and rebuild from the surviving drive. Cost: double the storage.

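For medium deployments (4-8 drives): Use RAID-5 or RAID-6. Spread data across multiple drives with parity that allows recovery from one failed drive (RAID-5) or two simultaneous failures (RAID-6). Cost: one drive's worth of capacity for RAID-5 or two for RAID-6, which works out to 12.5-25% or 25-50% of raw capacity at these array sizes.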

For large deployments (20+ drives): Use RAID-6 or erasure coding with geographic replication. Protect against multiple simultaneous failures and entire data center failure. Cost: 2x storage (one copy locally, one copy remote).

For archival (any size): Use the 3-2-1 backup rule. Three copies of data total. Two on different media. One geographically distant. Even if two copies fail simultaneously, you have recovery.
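The capacity cost of each RAID level above follows directly from how many drives' worth of space goes to redundancy. The sketch below works that out for standard RAID-1, RAID-5, and RAID-6 layouts; it treats RAID-1 as an n-way mirror and ignores filesystem overhead and hot spares, so the numbers are upper bounds on usable space.

```python
MIN_DRIVES = {"raid1": 2, "raid5": 3, "raid6": 4}


def usable_drives(level: str, drives: int) -> int:
    """Return how many drives' worth of capacity is left for data."""
    if level not in MIN_DRIVES:
        raise ValueError(f"unsupported RAID level: {level}")
    if drives < MIN_DRIVES[level]:
        raise ValueError(f"{level} needs at least {MIN_DRIVES[level]} drives")
    if level == "raid1":
        return 1  # every drive holds a full copy of the data
    parity_drives = 1 if level == "raid5" else 2
    return drives - parity_drives


def overhead_percent(level: str, drives: int) -> float:
    """Share of raw capacity consumed by redundancy, as a percentage."""
    return (drives - usable_drives(level, drives)) / drives * 100


for level, count in [("raid1", 2), ("raid5", 4), ("raid5", 8), ("raid6", 4), ("raid6", 8)]:
    print(f"{level} with {count} drives: {overhead_percent(level, count):.1f}% overhead")
# raid1 with 2 drives: 50.0% overhead
# raid5 with 4 drives: 25.0% overhead
# raid5 with 8 drives: 12.5% overhead
# raid6 with 4 drives: 50.0% overhead
# raid6 with 8 drives: 25.0% overhead
```

Parity overhead shrinks as arrays grow, which is why RAID-6 becomes more attractive in larger arrays: the second parity drive costs proportionally less while covering a second failure during a rebuild.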

Backblaze's reliability data informs which drives to buy, but redundancy strategy is independent. Buy the most reliable drives you can afford, then build redundancy on top. That's how you actually achieve data protection.

Real-World Deployment Lessons from Backblaze

Backblaze's data comes from real-world deployments managing millions of customer files. They've learned specific operational lessons worth applying.

Lesson 1: Monitor Everything. Temperature, vibration, S.M.A.R.T. metrics, error counts. Early warning signs of drive failure appear weeks in advance if you're watching. Backblaze's investigation into the HGST failures included detailed vibration analysis. They had the data to notice what was wrong.

Lesson 2: Firmware Matters. The Toshiba disaster that reversed with a firmware update proves this. Keep drives updated. Set quarterly reminders to check manufacturer websites for firmware releases. Test them in non-critical systems first, then deploy.

Lesson 3: Age Is a Real Factor. Drives past six years show degradation. It's not an imaginary concern. Plan replacement schedules with age in mind, not just failure count.

Lesson 4: Vibration Environment Matters. How you rack drives, how you position cooling, how densely you pack hardware affects failure rates. Older equipment is more sensitive to this than newer equipment.

Lesson 5: Manufacturer Support Changes. HGST's fading presence in Backblaze's fleet reflects WD's product strategy changes post-acquisition. Some drive lines disappear. Buying components from companies still investing in the space (Seagate, Western Digital) is safer than buying from companies exiting markets.

These lessons apply whether you're managing four drives in a NAS or forty thousand drives in a data center. The principles scale.
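For the monitoring lesson, one way to start small is to flag drives whose S.M.A.R.T. counters for bad sectors or uncorrectable errors are no longer zero. The sketch below is illustrative only. The choice of attribute IDs is an assumption (this article doesn't name specific ones), and the JSON layout is assumed to mirror what `smartctl -A --json` emits, so verify both against your installed smartmontools version. The sample report is made up.

```python
import json

# Attribute IDs to watch (an assumption for this sketch); names follow common smartctl labels.
WATCHED_ATTRIBUTES = {
    5: "Reallocated_Sector_Ct",
    187: "Reported_Uncorrect",
    188: "Command_Timeout",
    197: "Current_Pending_Sector",
    198: "Offline_Uncorrectable",
}


def warning_attributes(report: dict) -> dict:
    """Return {attribute_id: raw_value} for watched attributes with a nonzero raw value."""
    table = report.get("ata_smart_attributes", {}).get("table", [])
    warnings = {}
    for row in table:
        raw_value = row.get("raw", {}).get("value", 0)
        if row.get("id") in WATCHED_ATTRIBUTES and raw_value > 0:
            warnings[row["id"]] = raw_value
    return warnings


# Hypothetical report, trimmed to the fields this sketch reads.
SAMPLE_REPORT = json.loads("""
{
  "ata_smart_attributes": {
    "table": [
      {"id": 5, "name": "Reallocated_Sector_Ct", "raw": {"value": 0}},
      {"id": 9, "name": "Power_On_Hours", "raw": {"value": 52560}},
      {"id": 197, "name": "Current_Pending_Sector", "raw": {"value": 8}}
    ]
  }
}
""")

print(warning_attributes(SAMPLE_REPORT))  # {197: 8}
```

In practice you would feed this the parsed output for each drive on a schedule and alert when the returned dictionary is non-empty or when any value grows between runs.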

Making Your Purchase Decision: A Practical Framework

Given all the data Backblaze provides, here's a practical decision tree:

- Determine your workload. NAS? Server? Archive? Consumer? This dictates whether you need specialized firmware.

- Determine your capacity needs. How much total storage do you need? This drives which capacity models to consider. Backblaze's data shows 16TB and larger models generally perform well.

- Determine your redundancy strategy. RAID? Replication? This tells you how many drives you need to buy total.

- Filter by manufacturer reliability. Consult Backblaze's report for your capacity point. Focus on models with sub-2% AFR.

- Verify availability and price. At current pricing (2025), supply is constrained. You might not get your first choice. Check current prices and delivery dates.

- Buy from reputable retailers. OEM vs. retail versions sometimes differ. Buying from major retailers (CDW, Newegg, Amazon) ensures you get current-production units with good return policies.

- Check manufacturing date. If visible, prefer units manufactured 1-2 years ago over brand-new production.

- Set a replacement schedule. Don't wait for failure. At six years, plan replacement. Budget predictably.

Following this framework won't guarantee you never experience drive failure. Backblaze's data proves that's impossible. But it will maximize your odds and ensure you're prepared when failure comes.

FAQ

What is an Annualized Failure Rate (AFR)?

AFR is a statistical metric projecting what percentage of drives in a population would fail over a 12-month period. It accounts for how long each drive operated, not just raw failure counts. Backblaze's 1.36% AFR means roughly 1-2 drives per 100 would fail annually under their usage conditions. AFR is more meaningful than raw failure counts because it normalizes for operational time, allowing fair comparison between drives deployed for different durations.

How reliable are modern hard drives compared to older generations?

Modern hard drives are significantly more reliable than drives from 5-10 years ago. Backblaze's 1.36% AFR in 2025 represents 13.4% improvement from 1.57% in 2024, and multi-year trends show steady improvement. However, reliability improvements have slowed—the gains are incremental rather than dramatic because HDD technology is mature. The biggest reliability improvements come from proper deployment practices, redundancy, and active monitoring rather than waiting for hardware evolution.

Should I buy the cheapest hard drive I can find?

No. While drives across different manufacturers often provide similar capacity at similar prices, reliability varies significantly. Buying a cheap drive that fails after two years costs more than buying a reliable drive that lasts six years when you factor in replacement labor, downtime, and data recovery. Backblaze's data shows top performers run at 0.5-1.5% AFR while poor performers exceed 10%. The price difference between reliable and unreliable drives is typically 10-30%, but the difference in total cost of ownership is 200-300% over the drive's lifespan.
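A rough way to sanity-check that comparison is to look at purchase cost per year of service. The sketch below uses the lifespans from the answer above (two years versus six) and hypothetical prices that differ by 30%; it ignores replacement labor, downtime, and data recovery, all of which would widen the gap further.

```python
def cost_per_service_year(price: float, service_years: float) -> float:
    """Purchase price spread over the years the drive actually lasted."""
    return price / service_years


# Hypothetical prices: the reliable drive costs 30% more than the cheap one.
cheap = cost_per_service_year(price=100.0, service_years=2)
reliable = cost_per_service_year(price=130.0, service_years=6)

print(f"cheap drive:    ${cheap:.2f} per year")      # cheap drive:    $50.00 per year
print(f"reliable drive: ${reliable:.2f} per year")   # reliable drive: $21.67 per year
print(f"cheap drive costs {cheap / reliable:.1f}x as much per year")  # 2.3x
```

Even before indirect costs are counted, the drive that looked cheaper at checkout ends up costing more than twice as much per year of use under these assumptions.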

What does firmware failure mean and can it be fixed?

Firmware is the software embedded in a drive that controls its operation. Firmware bugs can cause incorrect behavior under specific conditions—temperature thresholds, specific data patterns, vibration levels. When Toshiba's MG08ACA16TEY 16TB showed a 16.95% failure rate in Q3 2025, it was caused by a firmware bug, not hardware defect. Manufacturers can release firmware updates to fix deployed drives without hardware replacement. This requires active management—you must apply updates yourself, but it's vastly cheaper than replacing hardware.

How important is drive age in failure rates?

Drive age is extremely important. Backblaze's data shows drives beyond 6-7 years exhibit significantly higher failure rates, particularly vibration-related failures. Mechanical components fatigue over time—bearings wear, fasteners loosen, platters gradually become less perfectly balanced. Environment sensitivity increases with age. A four-year-old drive handles vibration and temperature variation that would stress a seven-year-old drive. Industry best practice is to replace drives at six years of age regardless of current failure status, ensuring predictable, budgetable replacement costs rather than sudden failures.

What's the difference between consumer, NAS, and enterprise hard drives?

Consumer drives (Seagate Barracuda, WD Blue) optimize for cost and fit standard computer cases. NAS drives (WD Red, Seagate IronWolf) use specialized firmware that handles RAID environments and constant operation better, with vibration tolerance optimizations. Enterprise drives (WD Gold, Seagate Exos) target server deployments with higher sustained workload ratings, enhanced error recovery, and higher MTBF specifications. The price difference is 20-50% between tiers, justified by engineering differences when the application matches. Putting a consumer drive in a 24/7 NAS is working against the drive's design. Putting an enterprise drive in a consumer PC wastes money on unnecessary specifications.

Should I implement RAID even with reliable drives?

Absolutely yes. Even at a 1.36% annual failure rate, drives fail. RAID provides redundancy so that a single drive failure doesn't cause data loss. A RAID-1 array of two 16TB drives loses data only if both drives fail before the first is replaced, which is far less likely than a single drive failing on its own. RAID also lets you keep operating on a degraded array while you order and install a replacement, maintaining uptime during the repair window. This is less a statement about drive reliability than a practical deployment requirement.
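To put rough numbers on the redundancy argument, here is a back-of-the-envelope sketch. It assumes the two drives fail independently and that each has the fleet-wide 1.36% annual failure rate; the seven-day repair window and the function names are illustrative assumptions, not Backblaze figures. Real arrays often see correlated failures (same batch, shared vibration, shared firmware), so treat the mirror figure as optimistic.

```python
def annual_loss_single(afr: float) -> float:
    """Chance of losing data on one unprotected drive in a year."""
    return afr


def annual_loss_mirror(afr: float, repair_days: float) -> float:
    """Rough chance of losing a two-drive mirror in a year.

    Data is lost only if one drive fails and the surviving drive
    also fails before the replacement is installed and rebuilt.
    Assumes independent failures (an assumption, see lead-in).
    """
    p_any_drive_fails = 1 - (1 - afr) ** 2
    p_survivor_fails_in_window = afr * repair_days / 365
    return p_any_drive_fails * p_survivor_fails_in_window


AFR = 0.0136        # fleet-wide rate quoted in this article
REPAIR_DAYS = 7     # assumed time to order, install, and rebuild

single = annual_loss_single(AFR)
mirror = annual_loss_mirror(AFR, REPAIR_DAYS)
print(f"single drive: {single:.4%}")
print(f"RAID-1 mirror: {mirror:.6%}")
print(f"mirror is roughly {single / mirror:,.0f}x less likely to lose data")
```

The exact ratio matters less than the shape of the result: shortening the repair window shrinks the exposure directly, which is why keeping a spare drive on hand pays off.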

How has HDD manufacturing evolved recently?

HDD manufacturing has become more stable and consistent over the past 3-4 years, and Backblaze's reliability improvements reflect this. Component sourcing has matured, manufacturing processes have been refined, and firmware stability has improved. However, capacity growth is pushing engineering limits: moving from 18TB to 24TB drives required significant technical work. Early production runs of new capacity models often show reliability issues that improve as manufacturing matures. This suggests waiting 6-12 months after a new capacity class launches before deploying it in critical systems.

What's causing the recent hard drive price increases?

Supply constraints, not increased component costs. In late 2025, manufacturing capacity cannot meet demand, and Western Digital has publicly stated it is sold out through 2026. When supply falls short of demand, prices rise: Seagate Barracuda 24TB drives increased 56% in a matter of months. The spike itself should ease when manufacturing capacity expands or demand cools, but persistent demand suggests HDD prices may stay elevated relative to the gradual decline seen historically. Buying storage in a constrained market means accepting either higher prices or delays.

Conclusion: Building Reliable Storage Infrastructure

Backblaze's 2025 hard drive reliability report provides the most comprehensive public data on real-world HDD performance available. The report reveals that while drive reliability is good and improving, individual model performance varies significantly. The best performers show sub-1% failure rates. The worst performers spike above 10%. The difference isn't random—it's engineering, manufacturing maturity, and application fit.

Seagate and Western Digital have earned their dominant positions through consistent engineering. Their top-tier models have been in production for years, meaning design problems have been identified and fixed, and manufacturing lines have been optimized. They cost more than consumer alternatives, but the premium is justified by real performance differences.

Toshiba's story shows that a firmware problem can be catastrophic but recoverable with engineering commitment. A 16.95% failure rate is unacceptable, but a firmware fix that cut the rate by roughly 75%, to 4.14%, within a single quarter shows that such problems can be solved.
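The percentage improvements quoted in this article are relative reductions, which are easy to confuse with percentage-point differences. As a quick check, this small sketch recomputes them from the failure rates cited here; the function name is just an illustrative choice.

```python
def relative_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`, relative to `before`."""
    return (before - after) / before * 100


# Failure rates (in percent) as quoted in this article.
toshiba_drop = relative_reduction(16.95, 4.14)   # Toshiba model, before vs after the fix
fleet_drop = relative_reduction(1.57, 1.36)      # fleet-wide, previous year vs this year

print(f"Toshiba: {toshiba_drop:.1f}% relative reduction")
print(f"Fleet:   {fleet_drop:.1f}% relative reduction")
```

In percentage points, the Toshiba rate fell by 12.81 and the fleet rate by 0.21, so it is worth stating which measure you mean when comparing drives.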

The most important lesson, though, isn't about which individual drive to buy. It's that every drive fails eventually, and your strategy should never rely on a single drive's reliability. Build redundancy with RAID or replication. Implement monitoring so you catch failures early. Plan replacement schedules with age in mind. These practices matter far more than buying the absolute most reliable drive available.

For home users, buy NAS-specific drives from Seagate or Western Digital in the 8-16TB capacity range, implement RAID-1 or RAID-5, and don't obsess over minor reliability differences. The drives are already reliable enough for your use case.

For small businesses running backup or archival systems, invest in slightly more expensive enterprise-class models and implement proactive firmware updates. The cost is minimal compared to downtime from unexpected failures.

For data center operators, use Backblaze's data as the foundation for capacity planning and drive selection, but don't let it be your only input. Your specific environment—temperature, vibration, workload patterns—will diverge from Backblaze's conditions. Monitor your own fleet data and adjust strategies accordingly.

The hard drive market isn't dying despite SSD growth. Demand for storage capacity is growing faster than SSD prices are falling, so HDDs keep their cost-per-terabyte advantage. Data center storage will likely remain HDD-dependent for at least another decade. But that storage will be more reliable, higher capacity, and more carefully managed than ever before, which is exactly the outcome Backblaze's data should encourage.

Key Takeaways

- Backblaze's 1.36% annualized failure rate across 344,196 drives is a 13.4% relative improvement over the previous year's 1.57%

- Seagate ST16000NM002J and Western Digital WUH722626ALE6L4 recorded one failure each, setting reliability benchmarks while showing that even the best drives occasionally fail

- Toshiba's firmware bug caused a 16.95% failure rate in Q3, which fell roughly 75% to 4.14% after a firmware fix, demonstrating that software issues are detectable and recoverable

- Drive age emerges as a critical failure factor: drives beyond 6-7 years show vibration sensitivity and mechanical degradation, requiring proactive replacement planning

- Supply constraints in late 2025 pushed HDD prices up as much as 56% in months, suggesting prices may stay elevated relative to their historical decline
