Inside the Anthropic Leak: Unpacking the Claude Code Source Code Exposure [2025]

The tech world was recently shaken by the news that 512,000 lines of Claude Code's source code were inadvertently leaked by an Anthropic employee. This mishap exposed one of the company's most closely guarded secrets and raised significant questions about cybersecurity in the AI industry.

TL;DR

- Massive Leak: Over 512,000 lines of Claude Code's source code were leaked.

- Security Concerns: Highlights critical gaps in AI cybersecurity protocols.

- Industry Impact: Could alter competitive dynamics in AI research.

- Mitigation Strategies: Emphasize robust access controls and monitoring.

- Future Trends: Increased focus on secure coding practices.

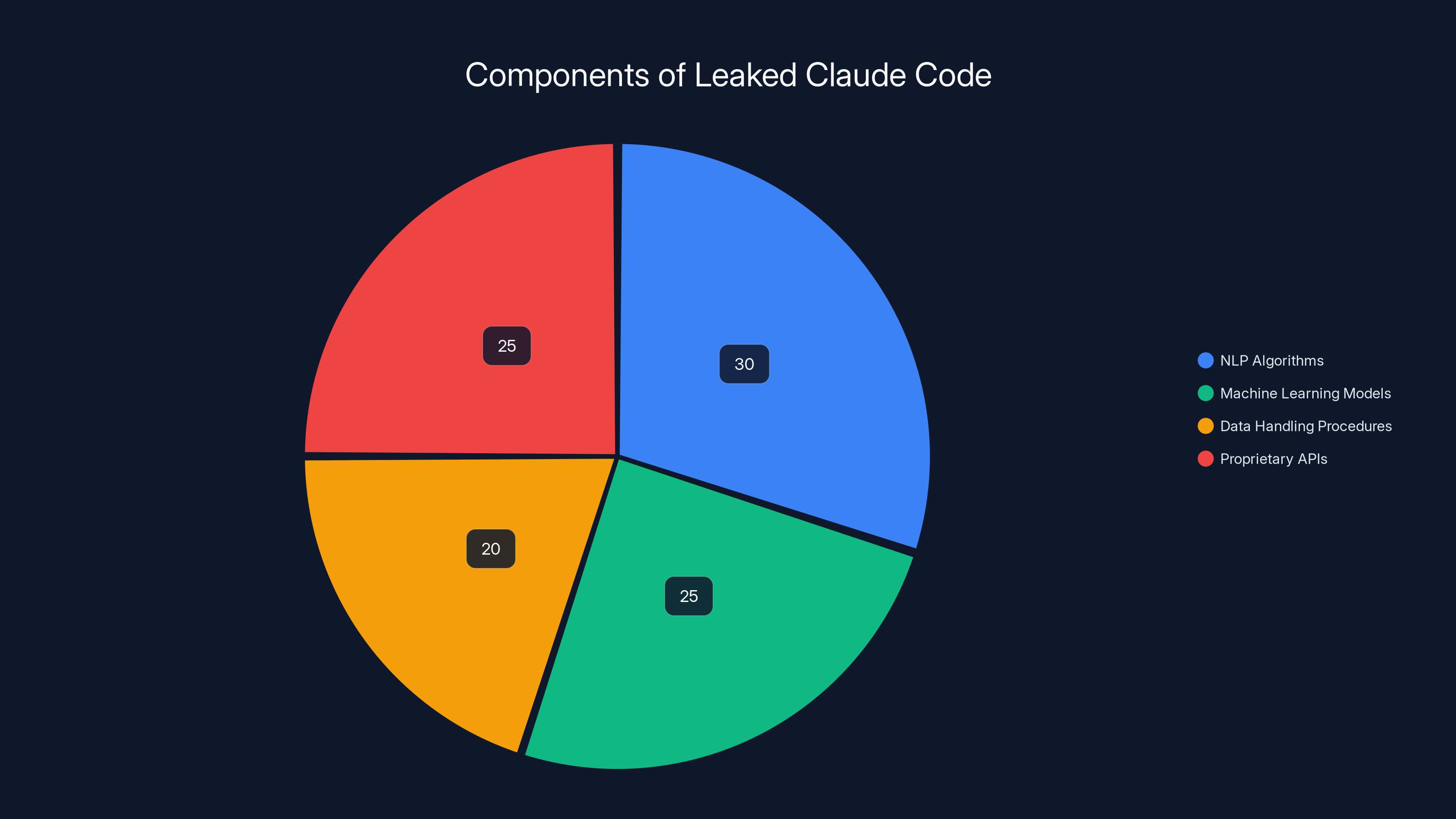

The chart estimates the makeup of the leaked code, with NLP and machine learning components shown as its largest portions. (Estimated data)

Understanding the Scope of the Leak

An Anthropic employee inadvertently exposed 512,000 lines of Claude Code's source code. The incident, which involved over 1,900 TypeScript files, quickly became a major talking point in tech circles. But what does it mean for Anthropic and, more broadly, for the AI sector?

What Happened?

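In a classic case of human error, the leak occurred through a source map (.map) file published with Claude Code's npm package. Source maps link bundled JavaScript back to the original TypeScript so developers can debug it, and they can embed or point to the original source files. Shipping one in a public package can therefore hand out the original source. Within hours, the code was mirrored and scrutinized by the public, revealing some of Anthropic's innovative approaches to AI.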
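A source map is a JSON file, and its optional `sourcesContent` array holds the full text of each original file. A minimal pre-publish check, assuming you have unpacked your package (for example the output of `npm pack`) into a directory, can look for maps that embed source text. The function name `find_embedded_sources` is hypothetical, and this sketch only flags embedded text: a map that merely references source paths is not reported, even though those references may still be worth reviewing.

```python
import json
import tempfile
from pathlib import Path


def find_embedded_sources(package_dir: str) -> dict[str, int]:
    """Map each .map file under package_dir to the number of original
    source files it embeds through the "sourcesContent" field."""
    root = Path(package_dir)
    exposed: dict[str, int] = {}
    for map_path in sorted(root.rglob("*.map")):
        try:
            data = json.loads(map_path.read_text(encoding="utf-8"))
        except (OSError, UnicodeDecodeError, json.JSONDecodeError):
            continue  # unreadable or not JSON: not a source map we can inspect
        if not isinstance(data, dict):
            continue
        # Entries may be null when a given source's text was not embedded.
        embedded = sum(1 for text in data.get("sourcesContent") or [] if text)
        if embedded:
            exposed[map_path.relative_to(root).as_posix()] = embedded
    return exposed


# Demo on a throwaway directory standing in for an unpacked package.
with tempfile.TemporaryDirectory() as tmp:
    dist = Path(tmp, "dist")
    dist.mkdir()
    # Hypothetical map that embeds two original TypeScript files.
    (dist / "cli.js.map").write_text(json.dumps({
        "version": 3,
        "sources": ["../src/main.ts", "../src/tools.ts"],
        "sourcesContent": ["export const main = 1;", "export const tools = 2;"],
        "mappings": "",
    }))
    # Hypothetical map that lists sources without embedding their text.
    (dist / "lib.js.map").write_text(json.dumps({
        "version": 3,
        "sources": ["../src/lib.ts"],
        "mappings": "",
    }))
    report = find_embedded_sources(tmp)

print(report)  # {'dist/cli.js.map': 2}
```

Any non-empty result is a signal to stop the release and decide whether that map belongs in a public package at all.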

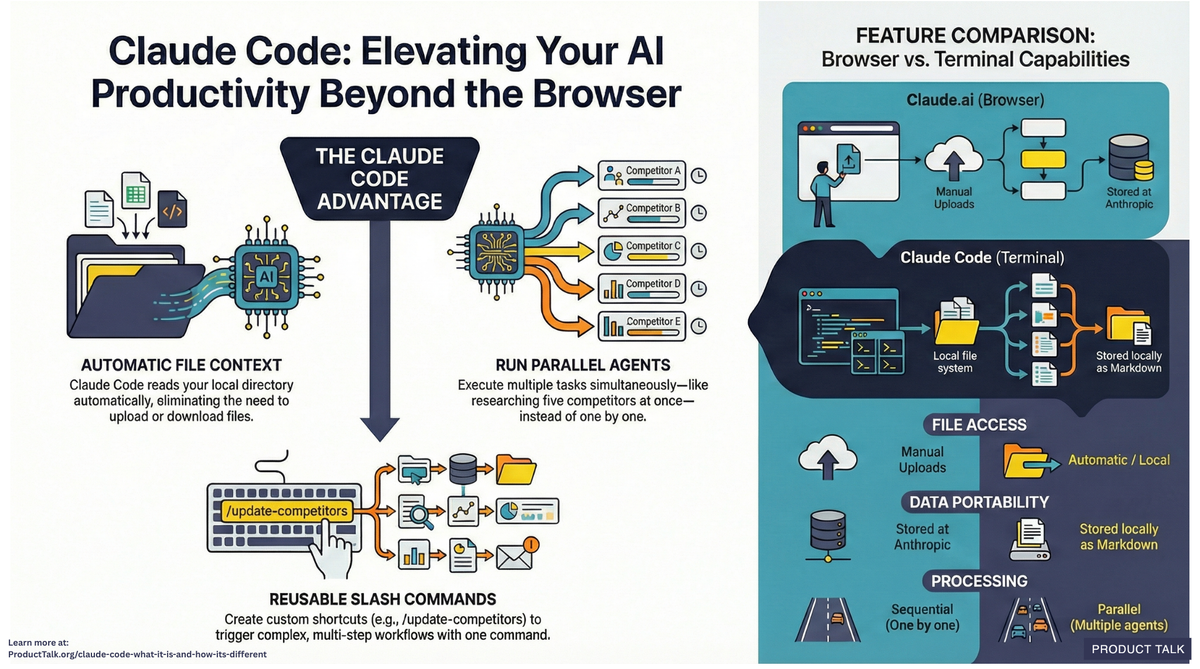

Why Is This Important?

The leak is not just about lost proprietary information. It is a reminder of the vulnerabilities inherent in modern software development and distribution. Because Claude Code is a significant part of Anthropic's AI offerings, the exposure of its source code could have far-reaching implications for the company's competitive position and intellectual property security.

Access controls and encryption are rated highly effective in securing source code, emphasizing their critical role in cybersecurity. (Estimated data)

The Technical Breakdown: What Was Leaked?

To understand the gravity of the leak, let's look at what the leaked code contained and how it could affect AI development. Claude Code is Anthropic's command-line coding assistant: a client application that sends requests to Claude models through Anthropic's API. The leak therefore exposed the tool's own source code, not the weights of the underlying models.

Key Components of Claude Code

- Natural Language Processing Logic: Code that shapes how Claude Code turns a user's requests into prompts and handles the text the model returns.

- Machine Learning Model Integration: Code that connects the tool to Claude models, which are served remotely rather than shipped inside the package.

- Data Handling Procedures: Logic governing how the tool reads, stores, and transmits data such as project files and session context.

- Proprietary APIs: Internal interfaces that allow Claude Code to interact with other software systems.

The Impact on AI Development

The leak gives competitors insight into Anthropic's methods. While this might spur advances in AI by letting researchers learn from Anthropic's work, it also creates a risk that the code is copied or reused without permission.

Cybersecurity Lessons Learned

This incident serves as a stark reminder of the importance of cybersecurity, especially in AI and software development.

Best Practices for Securing Source Code

- Access Controls: Implement strict access controls to ensure only authorized personnel can access sensitive code.

- Code Audits: Regular audits can help identify and mitigate potential vulnerabilities before they can be exploited.

- Encryption: Encrypt code repositories and backups, both at rest and in transit, to protect their confidentiality if storage or traffic is intercepted.

- Monitoring and Alerts: Set up real-time monitoring and alerts to detect unauthorized access promptly.
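Access controls and monitoring work best together: the allowlist defines who may touch sensitive code, and monitoring flags anything outside it. The sketch below shows that combination on a tiny in-memory log. The `AccessEvent` record, the `AUTHORIZED` table, and the repository names are all hypothetical; a real setup would pull events from your source-hosting platform's audit log and route alerts to an on-call channel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessEvent:
    user: str
    repo: str
    action: str  # e.g. "clone", "read", "push"


# Hypothetical allowlist: which users may access which sensitive repositories.
AUTHORIZED: dict[str, set[str]] = {
    "agent-core": {"alice", "bob"},
    "release-tools": {"carol"},
}


def unauthorized_events(events: list[AccessEvent]) -> list[AccessEvent]:
    """Return events whose user is not on the repository's allowlist.
    Repositories missing from AUTHORIZED are denied by default."""
    return [e for e in events if e.user not in AUTHORIZED.get(e.repo, set())]


log = [
    AccessEvent("alice", "agent-core", "clone"),
    AccessEvent("dave", "agent-core", "clone"),
    AccessEvent("carol", "release-tools", "push"),
    AccessEvent("bob", "billing", "read"),
]

for event in unauthorized_events(log):
    # A real deployment would page someone instead of printing.
    print(f"ALERT: {event.user} did {event.action} on {event.repo}")
```

Denying unknown repositories by default means a newly created repository stays locked down until someone deliberately grants access.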

Common Pitfalls and Solutions

- Human Error: Automation can help reduce the risk of human error in code management processes.

- Lack of Training: Regular training sessions on cybersecurity protocols for employees can prevent accidental leaks.
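As one example of automation reducing human error, a release pipeline can inspect the exact tarball it is about to publish and refuse to continue if it contains files that should never ship. This is a minimal sketch, assuming an npm-style tarball (npm places package files under a top-level `package/` directory). The blocked patterns and the function names `blocked_entries` and `build_demo_tarball` are hypothetical and would need tuning for each project.

```python
import fnmatch
import io
import tarfile

# Hypothetical patterns a public release should never contain.
BLOCKED_PATTERNS: tuple[str, ...] = ("*.map", "*.env", "*.pem", "src/*")


def blocked_entries(tarball: bytes, patterns: tuple[str, ...] = BLOCKED_PATTERNS) -> list[str]:
    """List files in a gzipped tarball whose path (minus the leading
    "package/" directory) matches any blocked glob pattern."""
    hits = []
    with tarfile.open(fileobj=io.BytesIO(tarball), mode="r:gz") as tar:
        for member in tar.getmembers():
            if not member.isfile():
                continue
            path = member.name.removeprefix("package/")
            if any(fnmatch.fnmatch(path, p) for p in patterns):
                hits.append(path)
    return sorted(hits)


def build_demo_tarball(files: dict[str, str]) -> bytes:
    """Build an in-memory .tgz laid out like an npm package (demo only)."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w:gz") as tar:
        for name, text in files.items():
            data = text.encode("utf-8")
            info = tarfile.TarInfo(name=f"package/{name}")
            info.size = len(data)
            tar.addfile(info, io.BytesIO(data))
    return buf.getvalue()


demo = build_demo_tarball({
    "package.json": "{}",
    "dist/cli.js": "console.log('hi');",
    "dist/cli.js.map": "{}",
})
problems = blocked_entries(demo)
if problems:
    print("Refusing to publish:", problems)  # Refusing to publish: ['dist/cli.js.map']
```

In a real pipeline you would run `npm pack`, read the resulting `.tgz` file's bytes, and fail the job whenever `blocked_entries` returns anything. `npm pack --dry-run` prints the same file list for a quick manual check, and the `files` field in `package.json` lets you allowlist what gets published in the first place.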

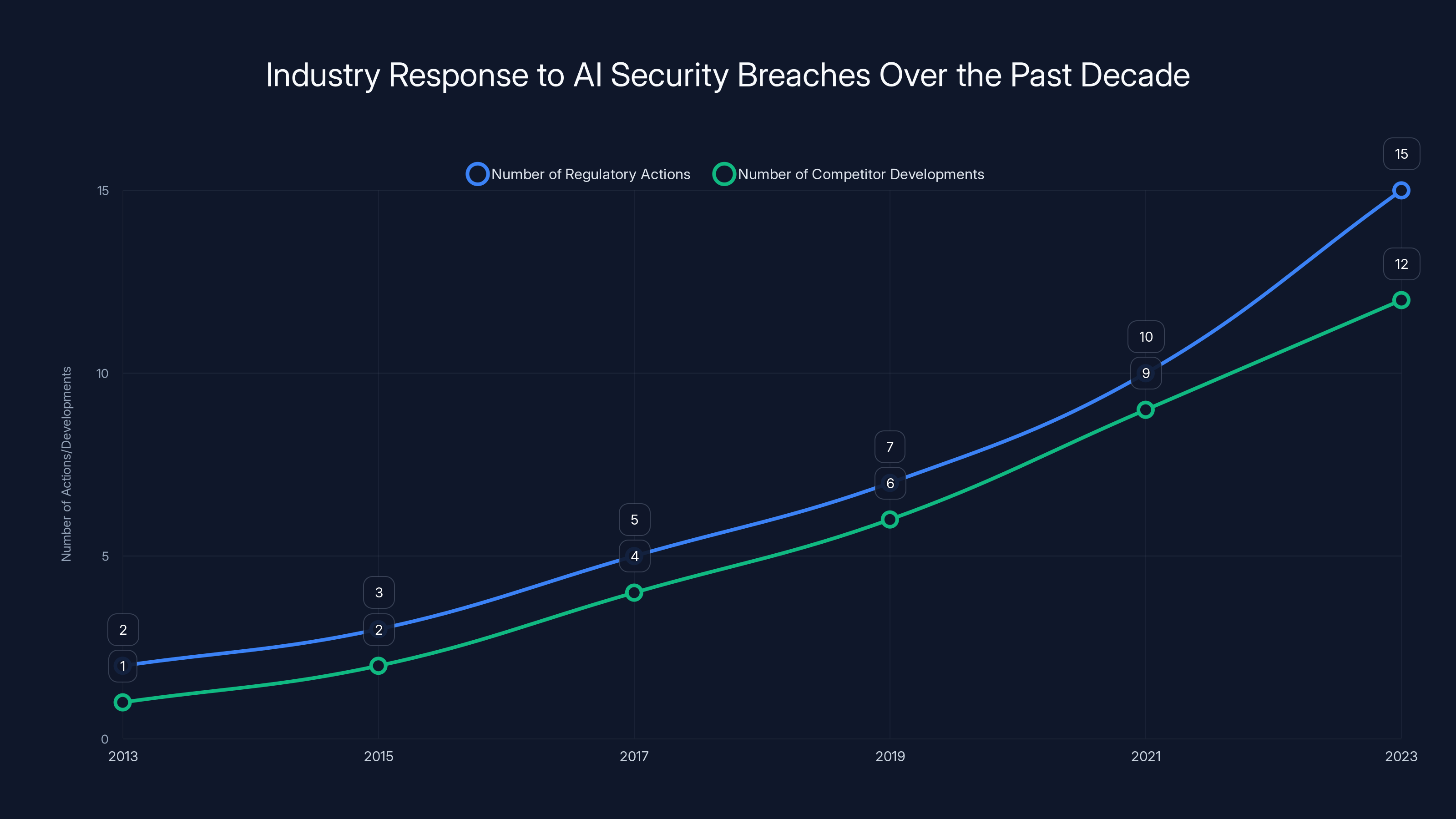

The chart illustrates an estimated increase in regulatory actions and competitor developments in response to AI security breaches over the past decade, highlighting growing industry vigilance. Estimated data.

The Industry Reaction: What's Next?

Competitor Response

The leak has put competitors in a complicated position. With access to Claude Code's source code, they could potentially accelerate their own AI development. However, ethical considerations and potential legal repercussions could deter outright misuse.

Regulatory Implications

This incident highlights the need for stricter regulations and guidelines around AI development and data security. Governments and industry bodies might push for more comprehensive cybersecurity standards to prevent similar breaches in the future.

Practical Implementation Guides

For developers and companies looking to safeguard their AI projects, here are some practical steps to implement.

Developing a Secure AI Codebase

- Use Secure Coding Practices: Follow industry standards and guidelines to write secure code.

- Implement Version Control Systems: Use tools like Git to track changes and manage code versions effectively.

- Conduct Penetration Testing: Regularly test your systems for vulnerabilities using penetration testing.
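Secure coding and version control meet at the commit: a pre-commit check can stop credentials from entering Git history in the first place. This is a minimal sketch, assuming the input is a unified diff such as the output of `git diff --cached`. The regular expressions are hypothetical, illustrative rules (the AWS-style key in the demo is the placeholder value from AWS documentation), and dedicated secret scanners use much larger, tuned rule sets.

```python
import re

# Hypothetical detection rules, for illustration only.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"AKIA[0-9A-Z]{16}"),
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_api_key": re.compile(r"(?i)api[_-]?key\s*[:=]\s*['\"][^'\"]{16,}['\"]"),
}


def scan_diff(diff_text: str) -> list[tuple[int, str]]:
    """Return (line_number_in_diff, rule_name) for added lines that look like secrets."""
    findings = []
    for lineno, line in enumerate(diff_text.splitlines(), start=1):
        # Inspect only lines the change adds; skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


diff = """\
--- a/config.ts
+++ b/config.ts
@@ -1,2 +1,3 @@
 export const region = "us-east-1";
-export const apiKey = process.env.API_KEY;
+export const apiKey = "sk_live_0123456789abcdef0123";
+export const awsKey = "AKIAIOSFODNN7EXAMPLE";
"""
print(scan_diff(diff))  # [(6, 'generic_api_key'), (7, 'aws_access_key_id')]
```

Run from a pre-commit hook, a non-empty result would abort the commit before the secret is ever recorded in history.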

Incident Response Plan

Having a clear incident response plan can mitigate the impact of any security breaches. This includes:

- Identifying Key Stakeholders: Designate a team responsible for managing security incidents.

- Communication Protocols: Establish clear communication channels for reporting and addressing breaches.

- Post-Incident Review: Analyze the breach to prevent future incidents.

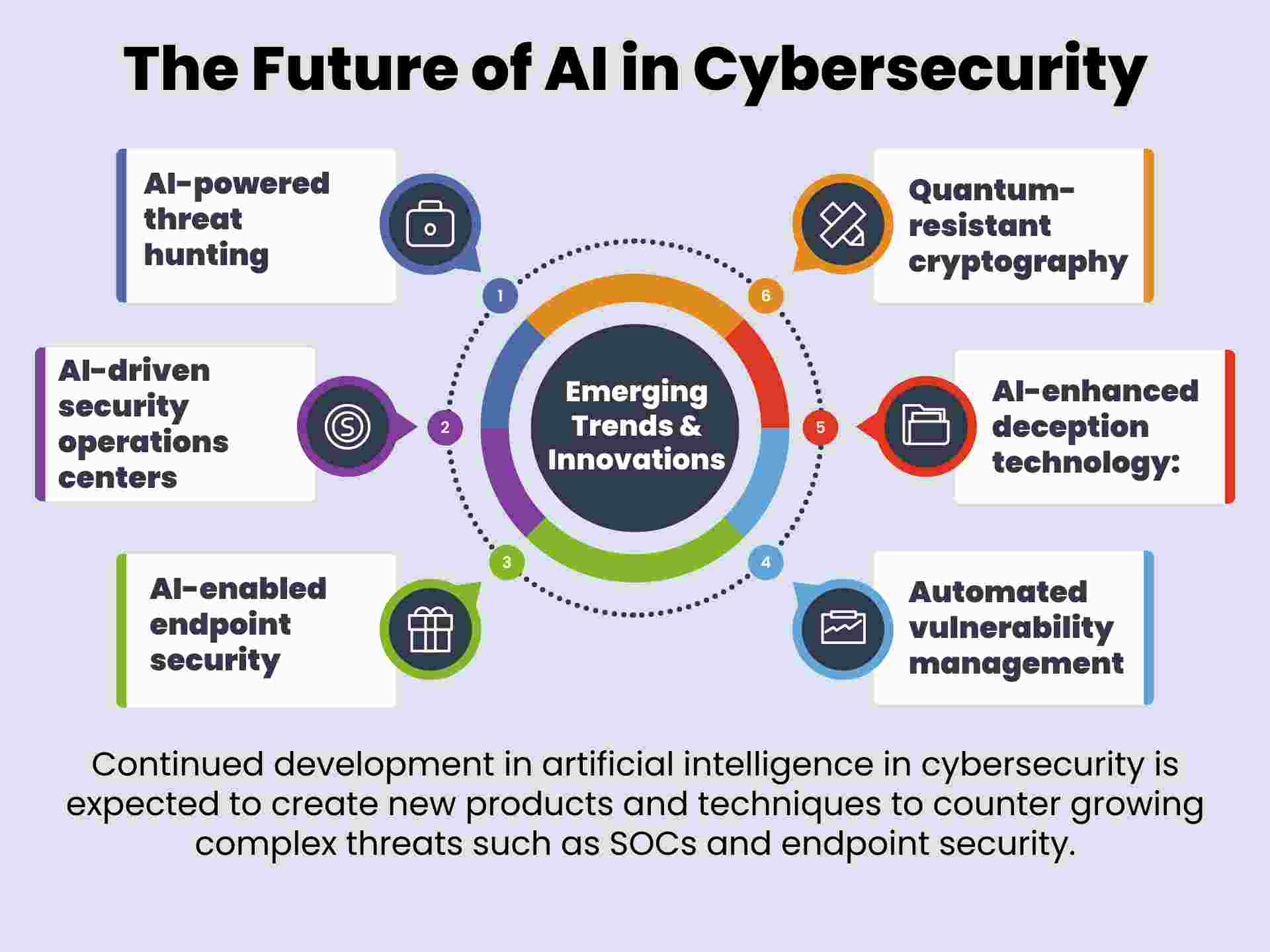

Future Trends in AI Security

As AI continues to evolve, so too will the security measures required to protect it.

Predictive Security Measures

AI can be leveraged to predict potential security threats before they occur. By analyzing patterns and anomalies, AI systems can proactively alert developers to potential risks.
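AI-driven tools use far richer models, but the core idea of flagging behavior that departs from a learned baseline can be illustrated with a simple statistical stand-in. The sketch below is a z-score check over a trailing window, not a machine learning model. The daily repository-clone counts, the 7-day window, and the threshold of 3 are all hypothetical choices.

```python
from statistics import mean, pstdev


def flag_spikes(counts: list[int], window: int = 7, threshold: float = 3.0) -> list[int]:
    """Return indexes of days whose count sits more than `threshold`
    standard deviations above the mean of the preceding `window` days."""
    flagged = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mu, sigma = mean(history), pstdev(history)
        if sigma == 0:
            # A perfectly flat history: any increase counts as anomalous.
            z = float("inf") if counts[i] > mu else 0.0
        else:
            z = (counts[i] - mu) / sigma
        if z > threshold:
            flagged.append(i)
    return flagged


daily_clones = [12, 9, 11, 10, 13, 8, 12, 11, 10, 95, 12]
print(flag_spikes(daily_clones))  # [9]
```

Note that day 10 is not flagged because the spike on day 9 inflates the trailing baseline; production systems typically exclude already-flagged points from the baseline to avoid that blind spot.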

Enhanced Data Privacy Protocols

With increasing amounts of data being used in AI training, there will be a stronger focus on ensuring data privacy through advanced encryption and anonymization techniques.
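One common building block here is pseudonymization: replacing identifiers with keyed hashes so records can still be grouped and joined without exposing who they belong to. The sketch below uses HMAC-SHA256 from Python's standard library. The record fields and the inline key are hypothetical (a real key belongs in a secrets manager), and pseudonymized data is not fully anonymous, since whoever holds the key can re-identify values by hashing candidates.

```python
import hashlib
import hmac


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with its HMAC-SHA256 hex digest.
    The same value and key always yield the same token, so records stay
    linkable, but without the key the token cannot be mapped back by guessing."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


key = b"example-secret-key"  # hypothetical; never hard-code a real key
records = [
    {"user": "alice@example.com", "prompt_tokens": 812},
    {"user": "bob@example.com", "prompt_tokens": 455},
    {"user": "alice@example.com", "prompt_tokens": 97},
]

# Keep every field except the identifier, which is swapped for its token.
anonymized = [{**r, "user": pseudonymize(r["user"], key)} for r in records]
print(anonymized[0]["user"] == anonymized[2]["user"])  # True
```

A keyed hash is used instead of a plain SHA-256 because unkeyed hashes of email addresses can be reversed simply by hashing a list of known addresses.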

Conclusion: The Path Forward

The Anthropic code leak serves as a wake-up call for the AI industry. As we move forward, it's crucial to prioritize security in every aspect of AI development. By implementing robust cybersecurity measures and fostering a culture of vigilance, companies can protect their innovations and maintain their competitive edge.

FAQ

What is the Anthropic code leak?

The Anthropic code leak refers to the accidental exposure of over 512,000 lines of Claude Code's source code through a source map (.map) file published in its npm package.

How can companies prevent similar leaks?

Companies can prevent similar leaks by implementing strict access controls, auditing code regularly, automatically checking release packages for files such as source maps before publishing, and training employees on cybersecurity best practices.

What are the potential impacts of the leak?

The leak could alter competitive dynamics in the AI industry by exposing Anthropic's proprietary methodologies. It also raises significant cybersecurity concerns.

How should companies respond to a code leak?

Companies should have an incident response plan, which includes identifying key stakeholders, establishing communication protocols, and conducting post-incident reviews.

What future trends can be expected in AI security?

Future trends in AI security include predictive security measures using AI, enhanced data privacy protocols, and stricter regulatory guidelines.

What role does AI play in cybersecurity?

AI can support cybersecurity by analyzing patterns and anomalies to predict potential threats and alert developers to risks before they escalate.

Key Takeaways

- The leak exposed over 512,000 lines of Claude Code's source code.

- Critical gaps in AI cybersecurity were highlighted.

- Competitors could gain insights into Anthropic's methodologies.

- Robust access controls and monitoring are essential.

- Future trends will focus on predictive security measures.

