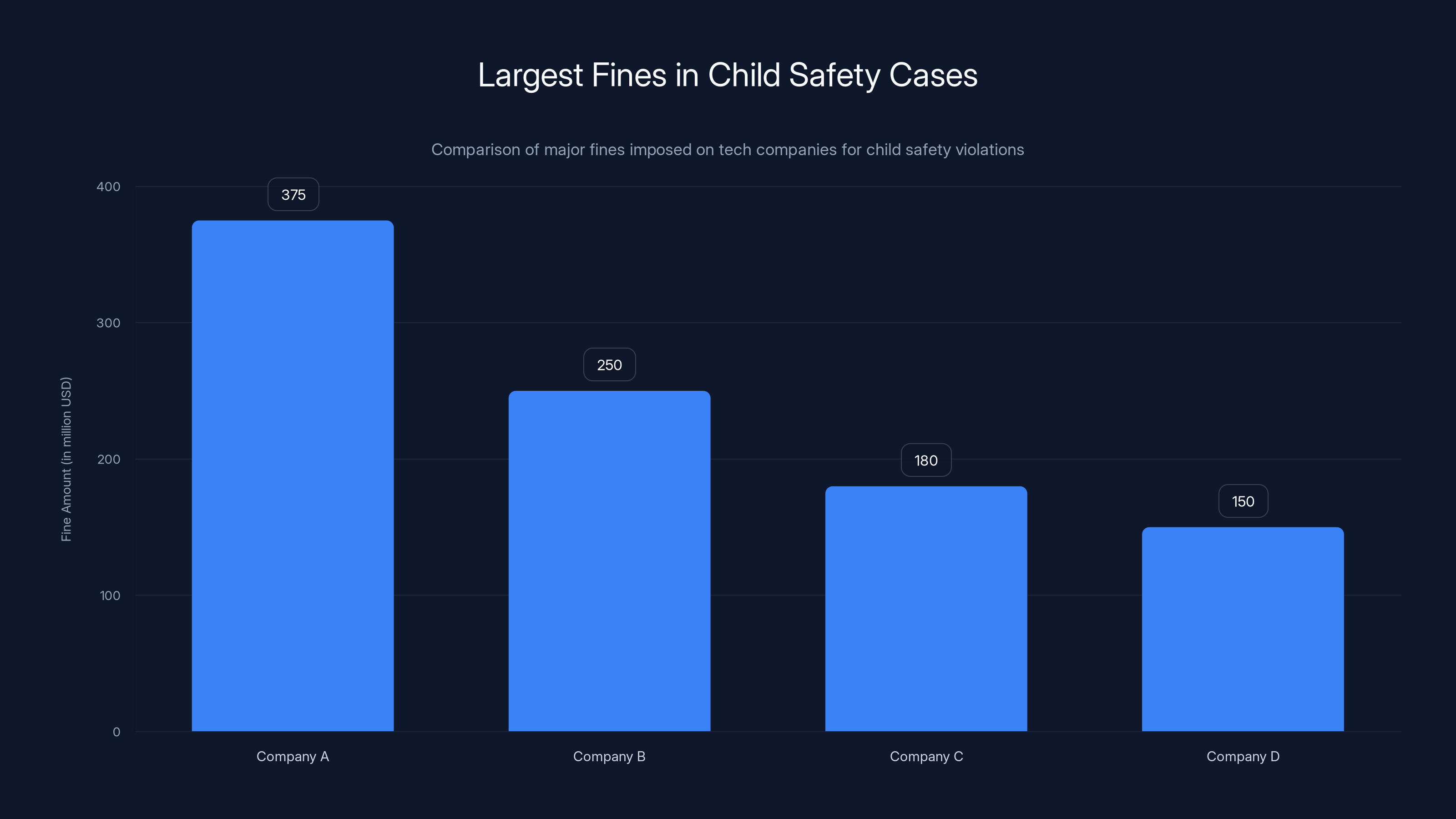

Jury Rules Against Meta, Orders $375 Million Fine in Major Child Safety Trial [2025]

A New Mexico jury has ruled against Meta, ordering the company to pay a $375 million fine for failing to protect children on its platforms. The verdict marks a turning point in how tech companies are held accountable for user safety, particularly the safety of minors. According to Engadget, the case has highlighted the need for improved safety measures.

TL;DR

- $375 Million Fine: A New Mexico jury ordered Meta to pay $375 million for violating the state's consumer protection laws, as reported by AP News.

- Child Safety Concerns: The case highlighted Meta's failure to protect minors from exploitation and mental health risks, detailed in Source NM.

- Misleading Practices: Meta was found to have misled users about the effectiveness of its safety measures, according to The New York Times.

- Industry Implications: This verdict could lead to stricter regulations and increased scrutiny on tech companies, as noted by Bloomberg.

- Future Recommendations: Companies need to prioritize transparency and robust safety measures for minors.

The $375 million fine is one of the largest ever imposed in a child safety case, highlighting the growing legal pressure on tech companies to comply with consumer protection laws. Estimated data.

The Case Against Meta

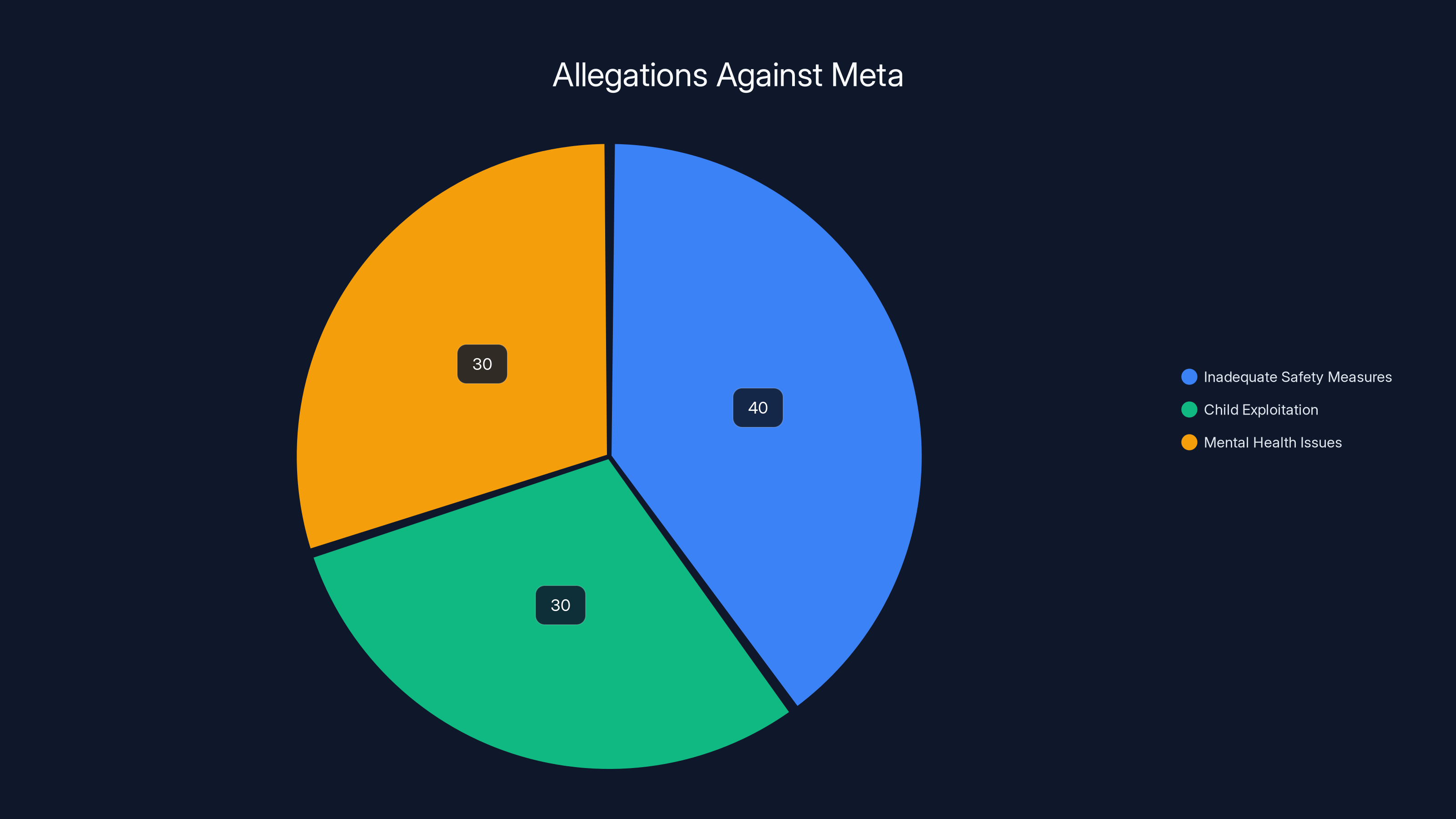

The case, brought by New Mexico's attorney general in 2023, accused Meta of knowingly putting children at risk. The allegations centered on the company's inadequate safety measures, which purportedly allowed child exploitation and mental health harms to proliferate on its platforms, as detailed in TechBuzz.

Allegations and Evidence

The state's lawyers presented evidence that Meta knew of the risks but failed to take adequate steps to mitigate them. Internal documents showed that the company prioritized growth and engagement over safety despite knowing the potential harm to young users, according to KTAR News.

Jury's Decision

After weeks of deliberation, the jury found Meta liable on all counts. It concluded that Meta had violated New Mexico's consumer protection laws by misleading users about the effectiveness of its safety protocols, as reported by Source NM.

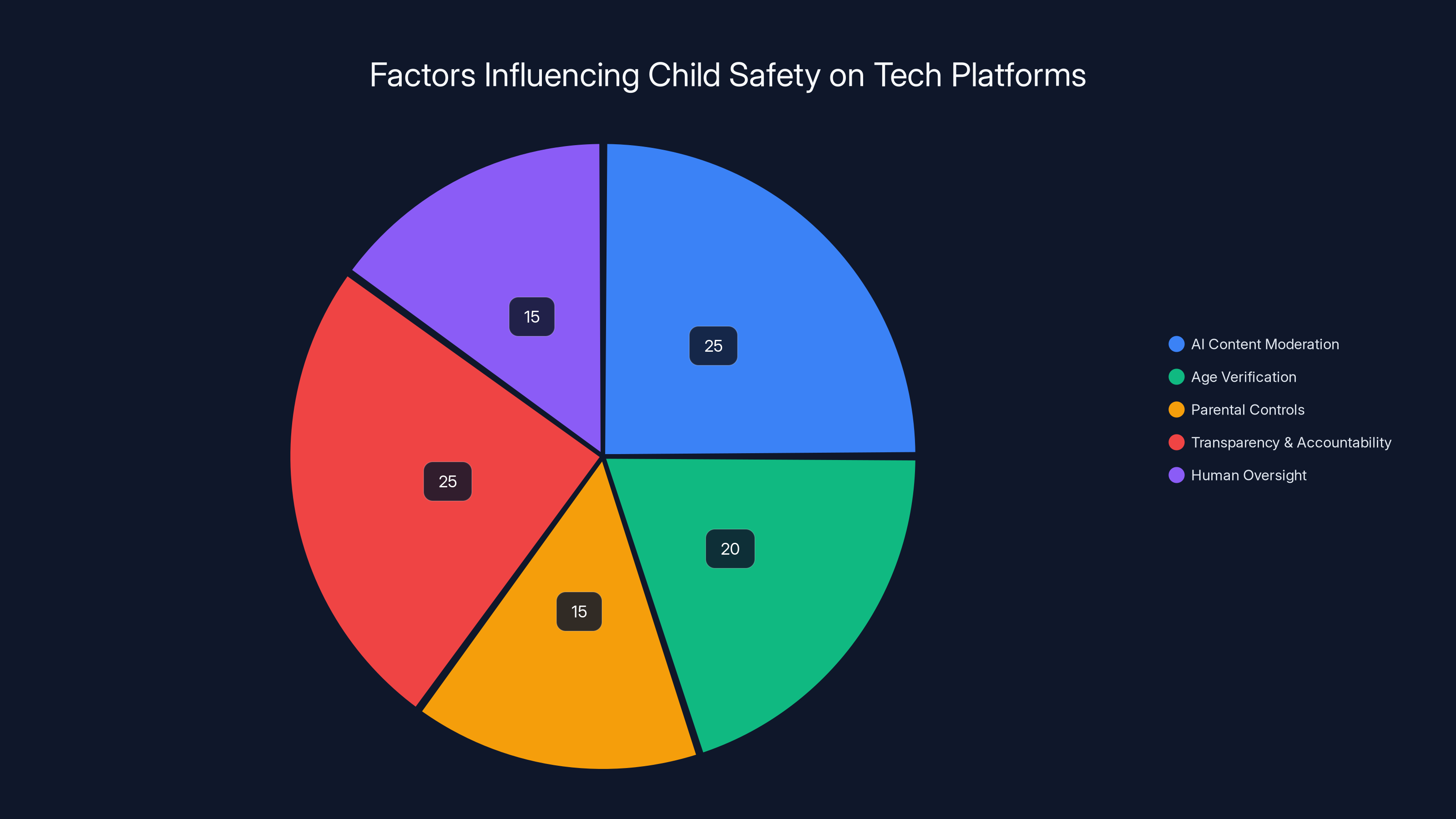

AI content moderation and transparency are key factors, each contributing 25% to child safety improvements. Estimated data.

Understanding the Implications

Legal Ramifications

The $375 million fine is one of the largest ever imposed in a child safety case, setting a precedent for future legal actions against tech companies. This ruling underscores the importance of adhering to consumer protection laws and the potential consequences of failing to do so, as highlighted by STL News.

Impact on the Tech Industry

This case is likely to spur other states and countries to re-evaluate their regulatory frameworks concerning child safety online. Tech companies may face increased scrutiny, with governments pushing for more stringent regulations to protect minors, according to BBC News.

Best Practices for Child Safety in Tech

Implementing Robust Safety Measures

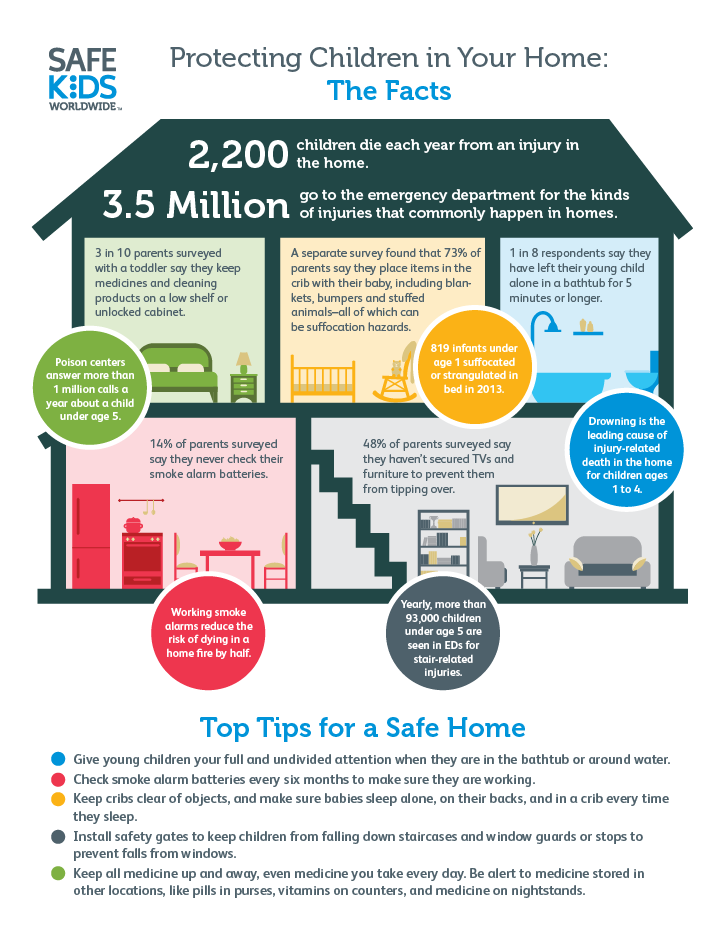

To avoid similar legal challenges, tech companies must prioritize the safety of their younger users. This includes implementing robust safety measures such as:

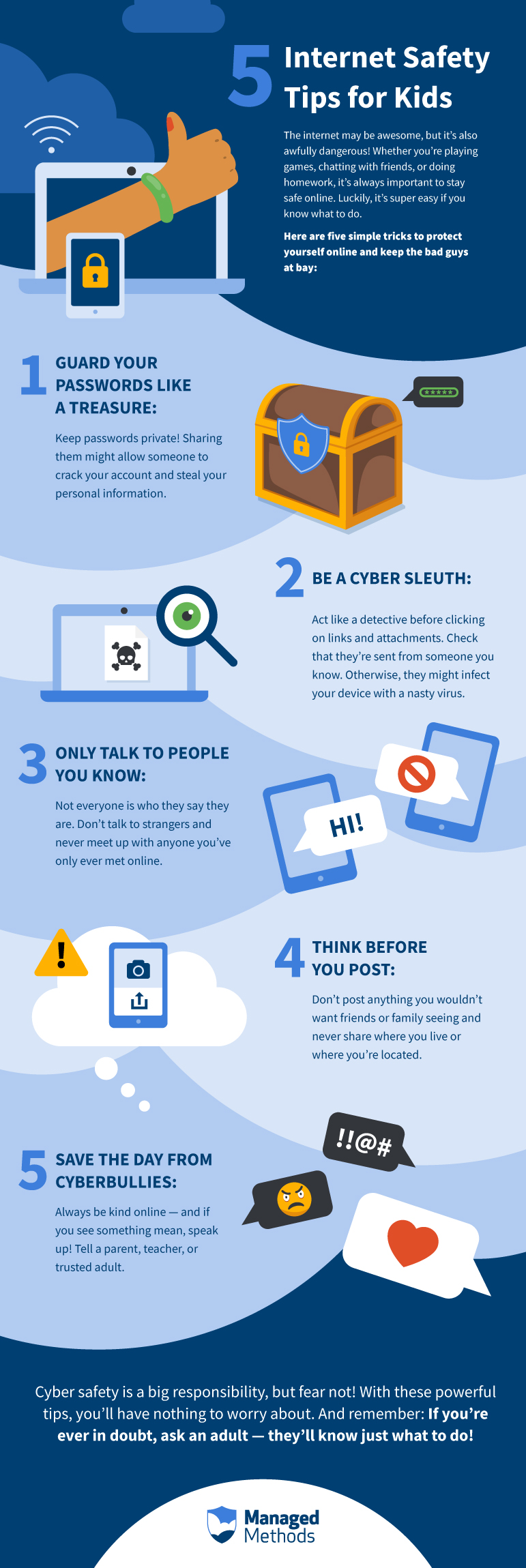

- Age Verification Systems: Ensuring that users are accurately identified and age restrictions are enforced.

- Content Moderation: Using AI and human moderators to monitor and remove harmful content proactively.

- Parental Controls: Offering tools that allow parents to monitor and control their children's online activities.
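As a rough illustration of how an age restriction might be enforced in code, the sketch below maps a user's birth date to an access tier. It is only a minimal example under stated assumptions: the `MIN_AGE` threshold of 13, the tier names, and the function names are hypothetical, and a self-declared birth date is a weak signal that real age verification would need to back with stronger checks.

```python
from datetime import date

# Assumed thresholds for illustration only; actual limits depend on the
# platform's policy and the laws that apply to it.
MIN_AGE = 13
ADULT_AGE = 18


def age_on(birth_date: date, today: date) -> int:
    """Return the age in whole years on the given day."""
    years = today.year - birth_date.year
    # Subtract one year if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def access_tier(birth_date: date, today: date) -> str:
    """Map a birth date to a hypothetical access tier."""
    age = age_on(birth_date, today)
    if age < MIN_AGE:
        return "blocked"      # below the assumed minimum age
    if age < ADULT_AGE:
        return "restricted"   # minor: stricter defaults would apply
    return "standard"


if __name__ == "__main__":
    print(access_tier(date(2010, 6, 15), date(2025, 6, 14)))
```

Passing `today` explicitly, rather than reading the system clock inside the function, keeps the check easy to test and audit.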

Transparency and Accountability

Companies should maintain transparency about their safety measures and be accountable for their effectiveness. Regular audits and public reports can help build trust with users and regulatory bodies, as suggested by Rest of World.

Estimated data showing the distribution of key allegations against Meta, highlighting inadequate safety measures as the primary concern.

Common Pitfalls and Solutions

Over-Reliance on Automation

While AI can be a powerful tool for content moderation, over-reliance on automation can lead to gaps in safety protocols. Companies should balance AI with human oversight to ensure nuanced decision-making, as discussed by Tech Policy Press.
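One common way to balance automation with human oversight is to act automatically only on high-confidence cases and send uncertain ones to a reviewer. The sketch below shows that routing idea. It is not a description of any company's system: the `Flag` type, the `harm_score` field, and both thresholds are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

# Assumed thresholds for illustration; real values would be tuned against
# measured error rates and reviewed regularly.
REMOVE_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.50


@dataclass
class Flag:
    content_id: str
    harm_score: float  # hypothetical classifier output in [0.0, 1.0]


def route(flag: Flag) -> str:
    """Decide what happens to flagged content based on model confidence."""
    if not 0.0 <= flag.harm_score <= 1.0:
        raise ValueError("harm_score must be between 0.0 and 1.0")
    if flag.harm_score >= REMOVE_THRESHOLD:
        return "auto_remove"    # model is confident: act immediately
    if flag.harm_score >= REVIEW_THRESHOLD:
        return "human_review"   # uncertain: a person makes the call
    return "allow"              # low score: no action taken


if __name__ == "__main__":
    for item in (Flag("a", 0.99), Flag("b", 0.70), Flag("c", 0.10)):
        print(item.content_id, route(item))
```

The middle band is where nuanced judgment matters most, so widening it sends more cases to people at the cost of a larger review workload.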

Inadequate User Education

Educating users, especially minors and their guardians, about online safety is crucial. Tech companies should provide resources and guidance to help users navigate the digital landscape safely.

Future Trends in Child Safety

Stricter Regulations

In light of this case, governments are likely to impose stricter regulations on tech companies regarding child safety. This could include mandatory reporting of safety measures and regular compliance checks, as noted by Transparency Coalition.

Enhanced AI Capabilities

The development of more advanced AI systems could help improve content moderation and user safety. However, ethical considerations and potential biases in AI must be addressed to ensure fair and effective implementation.

Collaborative Efforts

Tech companies, governments, and NGOs may collaborate more closely to develop comprehensive strategies for protecting minors online. This could involve sharing best practices, resources, and data to create safer digital environments, as suggested by MSN News.

Conclusion

The New Mexico jury's decision against Meta is a wake-up call for the tech industry. It highlights the critical importance of prioritizing child safety and adhering to consumer protection laws. As the digital landscape continues to evolve, companies must remain vigilant and proactive in safeguarding their users, particularly vulnerable populations like children.

FAQ

What led to Meta's fine in the child safety trial?

Meta was fined $375 million after a New Mexico jury found that it had misled users about the effectiveness of its child safety measures, in violation of state consumer protection laws, as reported by TechBuzz.

How can tech companies improve child safety on their platforms?

By implementing robust safety measures such as age verification, content moderation, and parental controls, and maintaining transparency and accountability, as emphasized by Rest of World.

What are the potential industry impacts of this verdict?

The ruling could lead to stricter regulations and increased scrutiny on tech companies regarding child safety, prompting them to prioritize user protection, as noted by BBC News.

What role does AI play in content moderation?

AI can automate content moderation processes, but companies should balance it with human oversight to ensure nuanced decision-making and address potential biases, as discussed by Tech Policy Press.

How might regulations change following this case?

Governments may impose stricter regulations on tech companies, including mandatory reporting of safety measures and regular compliance checks to ensure child protection, as highlighted by Transparency Coalition.

What future trends are expected in child safety online?

Enhanced AI capabilities, stricter regulations, and collaborative efforts between tech companies, governments, and NGOs are expected to drive improvements in child safety online, as suggested by MSN News.

Key Takeaways

- Meta fined $375 million for violating consumer protection laws.

- Trial highlights the need for robust child safety measures.

- Verdict could lead to stricter regulations in the tech industry.

- Transparency and accountability are crucial for user trust.

- Future trends include enhanced AI and collaborative safety efforts.
