Why Linux 7.0 Is Finally Saying Goodbye to the Intel 440BX EDAC Driver

There's something bittersweet about watching legacy technology get officially deprecated. The Linux 7.0 kernel release marks a significant milestone: the formal removal of the EDAC driver for the Intel 440BX chipset, a piece of hardware that once represented the cutting edge of stability and compatibility in personal computing. But here's the thing—this driver hasn't actually worked since 2007. That's eighteen years of broken code sitting in the Linux kernel, gradually becoming more of a fossil than a functional component.

The 440BX chipset earned its legendary status during the late 1990s and early 2000s, a time when hardware standardization was still a radical concept. Before the 440BX arrived, buying a motherboard felt like a gamble. Manufacturers would implement things differently, drivers would conflict, and system stability was more wishful thinking than guarantee. Then Intel released the 440BX, and it changed everything.

The decision to remove this driver isn't arbitrary. It reflects a broader philosophical shift in Linux development toward maintaining only code that actually works and serves a genuine purpose. For over a decade and a half, the 440BX EDAC driver has been a zombie—technically present but completely non-functional due to fundamental incompatibilities with the Intel AGP driver. Keeping it around was like keeping a broken toolbox in your workshop. Sure, it takes up space, but eventually you need to throw it out.

What makes this removal noteworthy isn't just the technical cleanup. It's a statement about where the Linux community is placing its energy. Modern systems have moved far beyond the architectural assumptions of the late 1990s. We're talking about systems with dozens of cores, complex memory hierarchies, and radically different power management requirements. The resources spent maintaining legacy drivers for hardware from two decades ago represent opportunity costs that the community can no longer justify.

The Rise and Fall of the Intel 440BX Chipset

Understanding why the 440BX mattered requires understanding what computing was like before it arrived. In the mid-1990s, the PC market was fragmented. Different manufacturers implemented chipsets differently. Some were unstable at certain clock speeds. Others had bizarre memory compatibility issues. The industry had something called Plug and Play, which users affectionately renamed "plug and pray" because it rarely worked as advertised.

Intel released the 440BX in 1998, and it became an instant classic. The chipset delivered something radical: consistent behavior. It would run hardware slightly out of specification, handle memory configurations other chipsets rejected, and just work reliably day after day. For a market accustomed to driver conflicts and system crashes, this was revolutionary.

The 440BX enabled an entire era of PC building. Enthusiasts discovered they could buy inexpensive Celeron 300A processors and overclock them from 300MHz to 450MHz by raising the front-side bus from 66MHz to 100MHz. That's a 50% clock speed increase, often without buying new cooling solutions. Try that on competing chipsets and your system would crash randomly. Do it on the 440BX and it would run stably for years. This reliability created a halo effect that made the chipset legendary among overclockers and system builders.

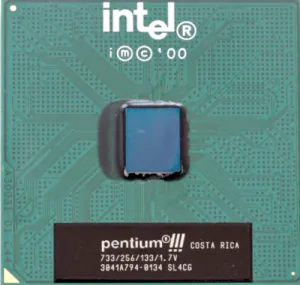

The platform supported both Intel Pentium II and Pentium III processors, meaning systems built on 440BX motherboards could be incrementally upgraded without a complete rebuild. You could start with a Pentium II, add more RAM, then swap in a Pentium III later. This upgradeability, combined with the chipset's tolerance for pushing hardware beyond specifications, created a following that persisted for years after newer chipsets arrived.

Server deployments also embraced the 440BX. The chipset's stability made it attractive for businesses running continuous operations. Even today, virtualization software like VMware defaults to emulating the 440BX architecture for compatibility. It's the hardware equivalent of Latin—nobody speaks it natively anymore, but it still works as a universal bridge between systems.

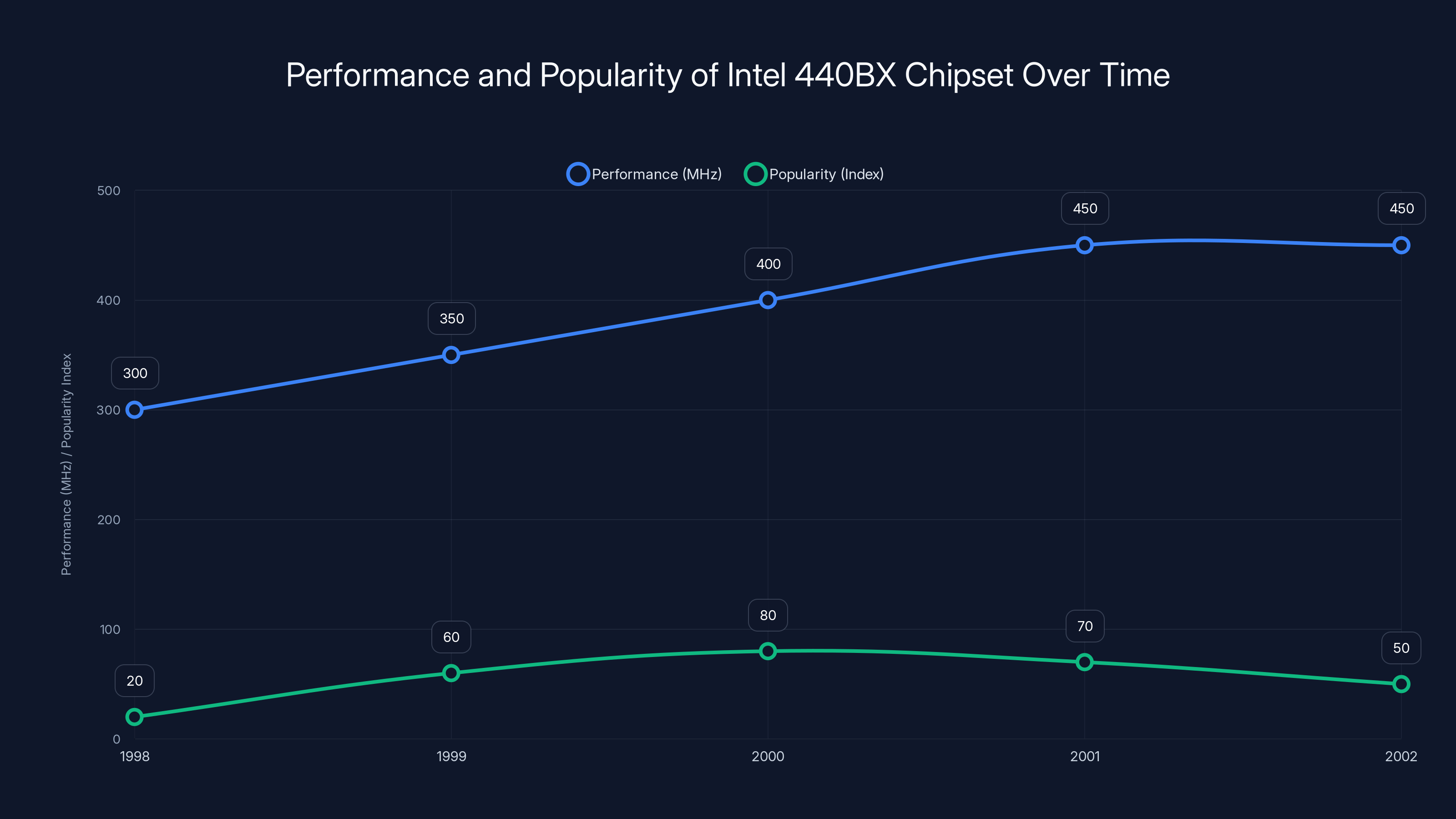

The Intel 440BX chipset saw a significant rise in performance and popularity from 1998 to 2000, driven by its stability and overclocking capabilities. Popularity declined slightly as newer technologies emerged. Estimated data based on historical context.

ECC RAM and Memory Error Correction at the Hardware Level

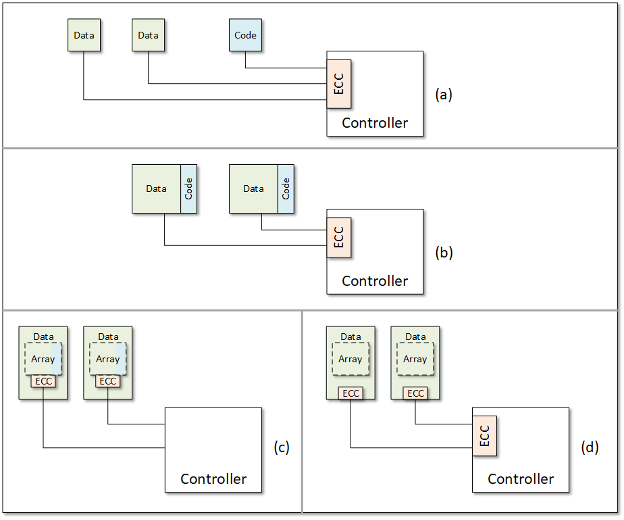

Before diving into why the EDAC driver stopped working, it's important to understand what the driver actually did. EDAC stands for Error Detection and Correction. At the hardware level, systems using ECC (Error-Correcting Code) RAM can detect and automatically correct single-bit memory errors. This happens transparently without any operating system involvement.

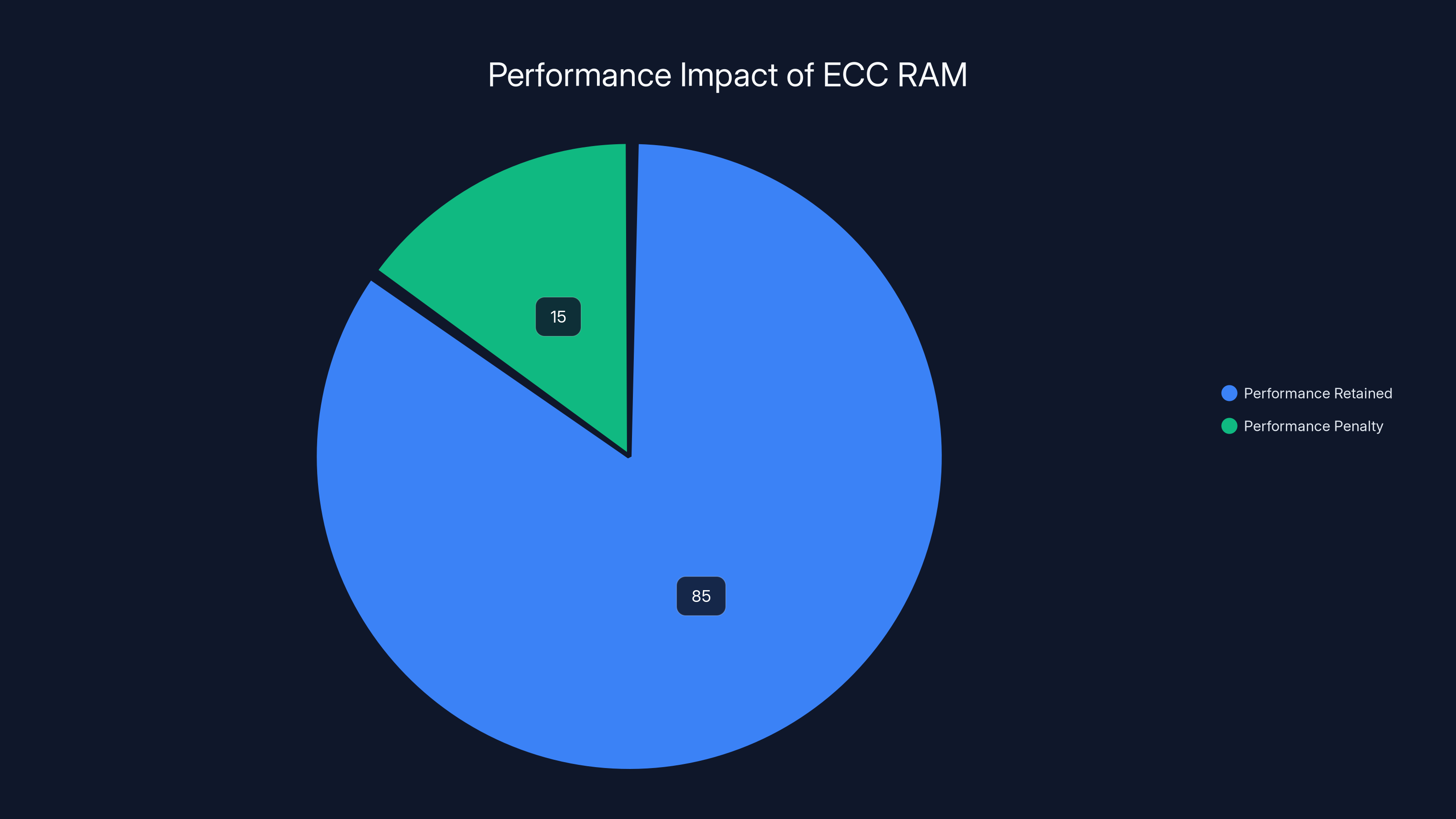

ECC RAM works by storing extra check bits alongside each word of data, typically 8 check bits for every 64 data bits. If a single bit flips due to cosmic rays, electrical interference, or manufacturing defects, the memory controller calculates what that bit should be and corrects it silently. The error happens, gets fixed, and nobody ever notices. This is why servers and workstations have traditionally relied on ECC RAM: the reliability is worth the extra memory chips (about 12.5% more storage) and the modest performance cost.
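To make the "calculates what that bit should be" step concrete, here is a toy sketch using the classic Hamming(7,4) code. This is an illustration of the principle only: real ECC memory uses wider codes over 64-bit words, and the function names below are made up for this example.

```python
"""Toy single-error-correcting code: Hamming(7,4). Illustrative only."""


def encode(data_bits):
    """Encode 4 data bits into a 7-bit codeword (bit positions 1..7)."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def correct(codeword):
    """Return (corrected codeword, 1-based position of the flipped bit, or 0)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s4  # equals the position of a single flipped bit
    if syndrome:
        c[syndrome - 1] ^= 1  # flip it back
    return c, syndrome


def decode(codeword):
    """Extract the 4 data bits from a (corrected) codeword."""
    return [codeword[2], codeword[4], codeword[5], codeword[6]]


if __name__ == "__main__":
    word = encode([1, 0, 1, 1])
    word[4] ^= 1  # simulate a single bit flip in storage
    fixed, position = correct(word)
    print("flipped position:", position, "recovered data:", decode(fixed))
```

The syndrome is the interesting part: the three parity checks together spell out the position of the bad bit, which is why the hardware can repair it without asking anyone.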

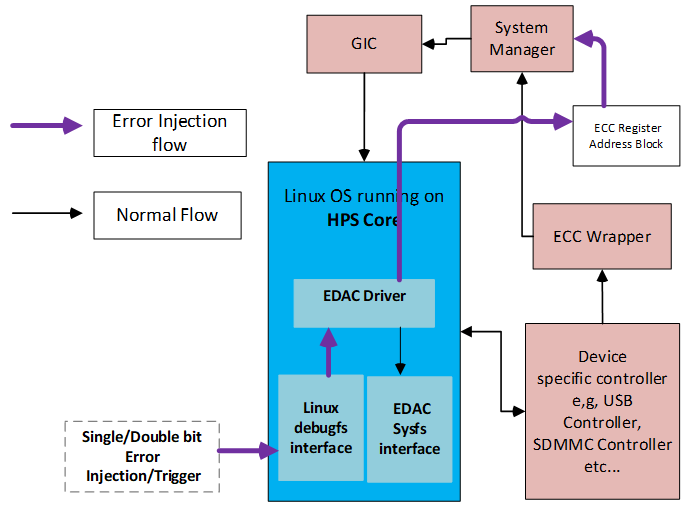

However, even though ECC RAM corrects errors automatically at the hardware level, the Linux kernel wants to know when corrections happen. This is where EDAC comes in. The EDAC subsystem collects information about bit flips and other memory errors, logging them so administrators can track patterns. If a particular memory module starts showing errors, the system can alert the operator. If errors appear in multiple locations, it might indicate a deeper hardware problem.

The 440BX EDAC driver was responsible for reading error status registers on the chipset and reporting what it found to the kernel. This is where the incompatibility emerged. The driver relied on accessing the Intel AGP driver to function. When AGP became less relevant and the AGP driver changed, the EDAC driver's assumptions broke. By 2007, the 440BX EDAC driver was fundamentally broken and nobody was maintaining it.

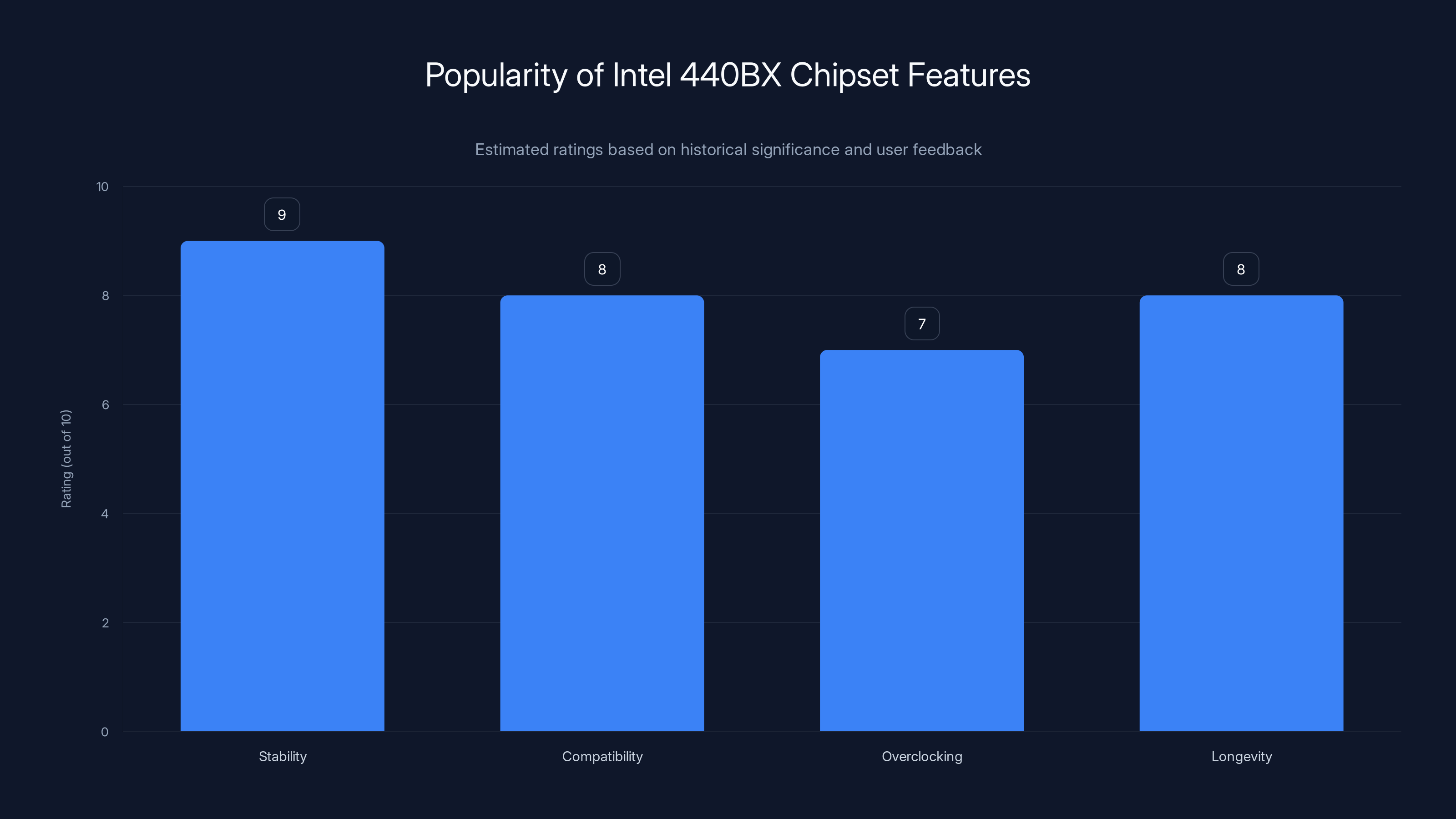

The Intel 440BX chipset was highly regarded for its stability and compatibility, making it a popular choice for PC builders in the late 1990s and early 2000s. (Estimated data)

The AGP Incompatibility That Broke Everything

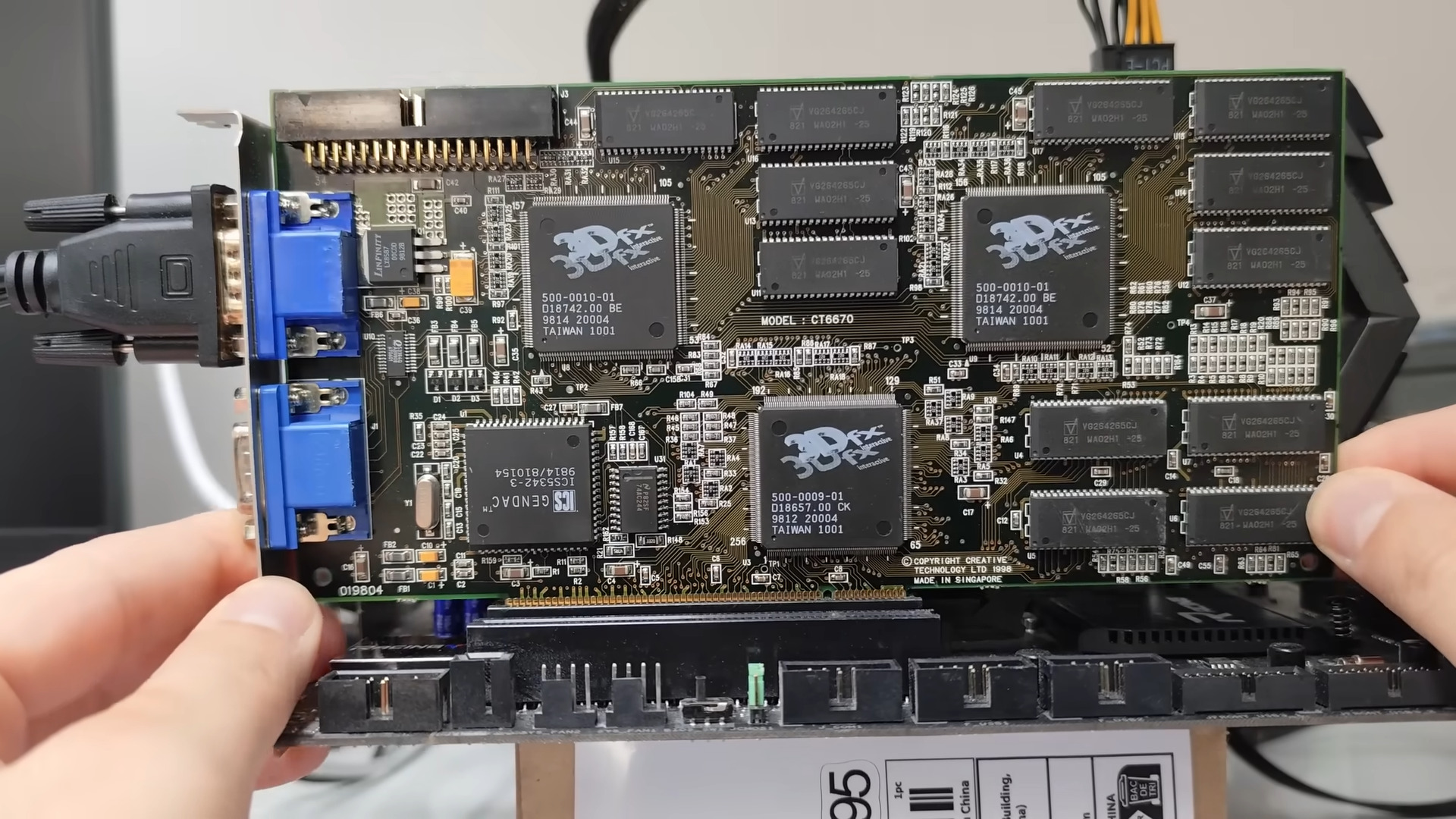

The Accelerated Graphics Port was a revolutionary technology in the late 1990s. Before AGP, graphics cards communicated with the CPU through the standard PCI bus, which had limited bandwidth. AGP provided a dedicated high-speed connection directly to the graphics card, delivering significantly better performance for 3D applications.

The 440BX chipset included AGP support, which made it even more attractive for gaming and graphics-intensive work. The AGP driver in Linux interfaces directly with hardware on the system bus, accessing memory-mapped registers and configuration spaces. The 440BX EDAC driver depended on certain behaviors of the AGP driver to access the chipset's memory controller registers.

When the Linux AGP driver evolved to handle newer architectures and newer graphics cards, it changed how it accessed certain hardware resources. These changes weren't malicious or intentionally breaking—they were necessary updates to support modern hardware. But the 440BX EDAC driver, written for the older AGP driver behavior, couldn't adapt. It would either fail to initialize or access the wrong registers, returning garbage data instead of actual error information.

By 2007, people running 440BX systems had already mostly moved to newer hardware. The audience for this driver was shrinking toward zero. But the broken code remained in the kernel, carried forward through countless releases, a legacy of technical debt that accumulated silently. It wasn't causing active problems because most modern systems don't use 440BX chipsets. The driver just sat there, deprecated but not removed, a reminder of infrastructure that hadn't been updated in years.

What Actually Happens to Error Correction Without the EDAC Driver

This is the important part that many people get wrong. Removing the 440BX EDAC driver does not mean error correction stops working. On systems with ECC RAM using 440BX chipsets, error correction continues happening at the hardware level, exactly as before. Single-bit errors still get detected and corrected automatically. The system continues operating reliably.

What disappears is the software notification. The kernel can no longer monitor error rates and alert administrators when patterns emerge. If a memory module is slowly degrading, instead of getting system logs that show increasing error counts, an operator would simply see the machine running normally until something catastrophic happens. For most consumer systems, this doesn't matter—they're not running mission-critical applications that depend on knowing about memory error patterns.
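The kind of pattern tracking described here can be sketched in a few lines. This is a minimal illustration, assuming you already have periodic snapshots of cumulative corrected-error counts from some source; the labels, the threshold, and the function name are all hypothetical.

```python
def rising_error_sources(samples, min_increase=10):
    """Flag sources whose corrected-error count grew noticeably.

    samples: list of dicts mapping a source label (for example a DIMM
    name) to its cumulative corrected-error count, oldest first.
    Returns the labels whose count grew by at least min_increase
    between the first and the last sample.
    """
    if len(samples) < 2:
        return []
    first, last = samples[0], samples[-1]
    flagged = []
    for label, latest in last.items():
        growth = latest - first.get(label, 0)
        if growth >= min_increase:
            flagged.append(label)
    return sorted(flagged)


if __name__ == "__main__":
    history = [
        {"dimm0": 2, "dimm1": 0},
        {"dimm0": 3, "dimm1": 40},
    ]
    print(rising_error_sources(history))
```

A steady trickle of corrections is usually tolerable; it is the module whose count keeps climbing that deserves attention, which is what the growth threshold tries to capture.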

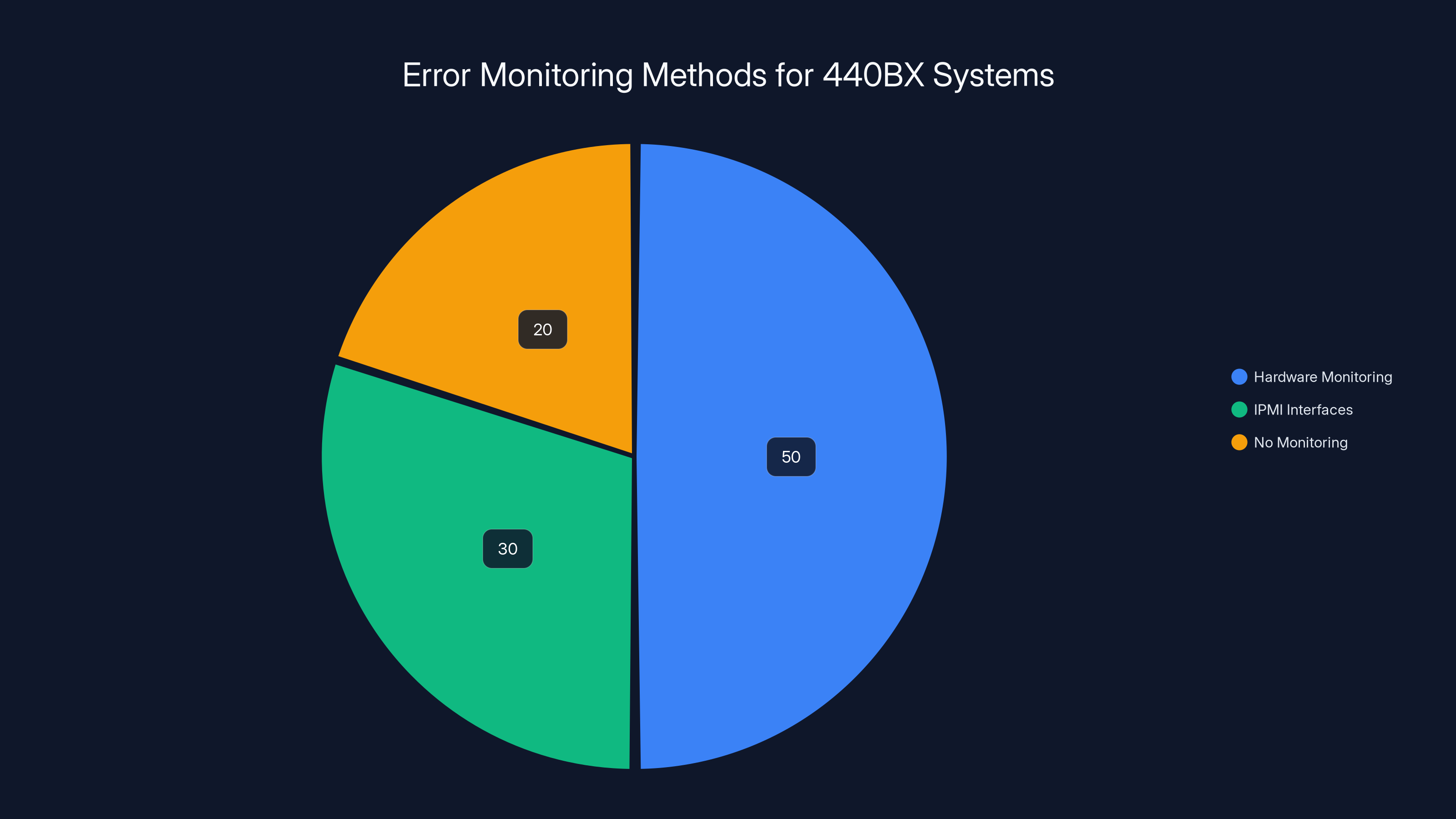

For systems that are still running 440BX hardware and need error monitoring, the options are limited but functional. Many 440BX motherboards included hardware monitoring on the motherboard itself, with on-board sensor systems. Some monitoring can be done through IPMI interfaces if the system supports them. The loss of kernel-level EDAC reporting is primarily an operational inconvenience rather than a functional failure.

In practice, any system still running 440BX hardware in 2025 probably isn't running modern Linux kernels anyway. These systems would typically stay on older kernel versions, where the EDAC driver continues to exist. If someone is actively maintaining a 440BX-based system, they're probably not chasing the absolute latest kernel releases. They're running whatever stable version works for their application.

The Broader Trend: Linux Distros Cleaning House on Legacy Support

The removal of the 440BX EDAC driver is part of a larger pattern in Linux development. The community has gradually realized that maintaining support for 25-year-old hardware comes with real costs. Testing becomes more complicated. Code paths that nobody uses accumulate bugs that never get caught. Developers have to understand ancient architectures to make even simple changes to the kernel.

Over the years, Linux has dropped support for a surprising number of old architectures and drivers. Support for the original 80386 processor was removed in Linux 3.8, although 32-bit x86 as a whole is still supported. Support for older ARM variants was gradually phased out. Ancient SCSI drivers for equipment that hasn't been manufactured in two decades have been removed. Each removal was controversial with someone who still depended on that hardware, but the community ultimately recognized that maintaining code for hardware nobody buys anymore doesn't serve the project's long-term health.

This cleaning process serves several purposes. First, it reduces kernel binary size. Every line of code that gets removed is code that doesn't need to be compiled, loaded into memory, or maintained. Second, it simplifies the build system and test matrix. Developers can focus on testing paths that actually matter for modern systems. Third, it reduces the cognitive load on maintainers. They can spend time on features and optimizations that benefit the systems actually shipping today.

Linux distros have different philosophies about legacy support. Some distributions like Debian maintain support for older architectures longer, serving users in developing countries or running specialized embedded systems. Others, like Fedora, move faster, dropping support more aggressively to stay lightweight and contemporary. The removal of 440BX EDAC driver support reflects a decision that the complexity of maintaining this code exceeds its utility.

Kernel Development Philosophy: Maintainability Over Nostalgia

One of the core principles of Linux development is that code quality matters more than feature quantity. If code isn't being maintained, if bugs aren't being fixed, and if nobody is actually using it, then it's not serving the project. This philosophy has become increasingly important as the Linux kernel has grown to millions of lines of code.

Maintaining the 440BX EDAC driver required someone to understand how the Intel 440BX chipset works internally, how it interacts with the AGP subsystem, and how to fix it if bugs emerge. After 2007, nobody was bothering to maintain it. When new compiler flags were introduced that would have broken the driver, nobody updated it. When the AGP driver changed, the EDAC driver wasn't updated in response. It simply decayed in place.

From a software engineering perspective, this is actually worse than removing the code entirely. Broken, unmaintained code creates technical debt. Developers have to think about it when making changes to adjacent systems. It pollutes the codebase with examples of how not to do things. It increases the attack surface of the kernel by maintaining paths that don't work correctly. Removing it entirely is cleaner than leaving it to bit-rot.

The Linux community learned this lesson from years of experience maintaining large codebases. Companies that tried to keep supporting everything forever found themselves trapped under the weight of legacy code. Google discovered this when Android became massive—supporting every old device indefinitely became impossible. Microsoft learned it with Windows, ending support for older versions to focus development on current platforms. Even Apple, with its tightly controlled ecosystem, has to decide when to stop supporting old devices and old APIs.

How Modern Linux Handles Memory Management and Error Detection

While 440BX EDAC support is going away, modern Linux systems have evolved far more sophisticated memory error detection and reporting mechanisms. Systems using UEFI firmware have standardized memory error reporting through UEFI Event Logging. Modern CPUs report errors through the MCE (Machine Check Exception) subsystem, which captures processor-detected errors at a much deeper level than chipset-based EDAC ever could.

The MCE architecture in Linux is far more comprehensive than chipset EDAC. When a CPU detects an error, it signals an exception that the kernel's MCE handler catches. This handler reads detailed error information from the CPU's MSRs (model-specific registers), logs exactly what happened, and can optionally take corrective action like taking a core offline if it's reporting too many errors. This works on modern Intel and AMD processors, and ARM server platforms expose comparable information through their own RAS mechanisms, so virtually all systems that organizations are actively deploying are covered.
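To give a feel for what "reads detailed error information from the MSRs" means, here is a simplified sketch that decodes the architectural flag bits of an x86 machine-check status register (IA32_MCi_STATUS). It is not the kernel's decoder: vendor-specific fields are ignored, and the function name and dictionary keys are made up for this example.

```python
def decode_mci_status(status):
    """Decode the architectural flag bits of a 64-bit MCi_STATUS value."""

    def bit(n):
        return bool((status >> n) & 1)

    return {
        "valid": bit(63),            # register holds a logged error
        "overflow": bit(62),         # an earlier error was overwritten
        "uncorrected": bit(61),      # hardware could not correct the error
        "enabled": bit(60),          # reporting was enabled for this error
        "misc_valid": bit(59),       # companion MISC register has data
        "addr_valid": bit(58),       # companion ADDR register has an address
        "context_corrupt": bit(57),  # processor state may be unreliable
        "mca_error_code": status & 0xFFFF,
        "model_specific_code": (status >> 16) & 0xFFFF,
    }


if __name__ == "__main__":
    # Hypothetical value: a valid, corrected error with a logged address.
    sample = (1 << 63) | (1 << 58) | 0x009F
    for key, value in decode_mci_status(sample).items():
        print(f"{key}: {value}")
```

The distinction between the "uncorrected" flag being set or clear is the same corrected-versus-fatal split that chipset EDAC drivers used to report, just delivered by the CPU itself.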

Moreover, server-grade systems deployed today typically use IPMI (Intelligent Platform Management Interface) for hardware monitoring. The BMC (Baseboard Management Controller) continuously monitors system health, memory errors included, and reports problems through standardized mechanisms. This is far more reliable than depending on the operating system kernel to catch errors, because if the OS kernel crashes, the BMC continues monitoring and can power-cycle the system if necessary.
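As a rough sketch of how BMC-reported memory events might be consumed, the snippet below filters pipe-separated event-log text of the kind `ipmitool sel list` prints. The sample lines are hypothetical, and the exact columns vary between BMCs and tool versions, so treat the field positions as assumptions to verify against your own output.

```python
def memory_events(sel_text):
    """Pick memory-related entries out of pipe-separated event-log text.

    Assumes columns in the order: id | date | time | sensor | event | ...
    """
    events = []
    for line in sel_text.splitlines():
        fields = [field.strip() for field in line.split("|")]
        if len(fields) < 5:
            continue  # not an event row
        sensor, description = fields[3], fields[4]
        if sensor.lower().startswith("memory"):
            events.append(
                {
                    "id": fields[0],
                    "date": fields[1],
                    "time": fields[2],
                    "sensor": sensor,
                    "event": description,
                }
            )
    return events


if __name__ == "__main__":
    # Hypothetical log excerpt, for illustration only.
    sample = """
   1 | 03/14/2025 | 09:15:02 | Fan #0x30 | Lower Critical going low | Asserted
   2 | 03/14/2025 | 11:42:57 | Memory #0x02 | Correctable ECC | Asserted
"""
    for event in memory_events(sample):
        print(event)
```

Because the log lives in the BMC rather than in the operating system, the same parsing works whether the host is healthy, hung, or powered off.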

Cloud providers and large-scale deployments have essentially moved beyond the EDAC model entirely. They monitor memory errors through firmware-level mechanisms, application-level health checks, and automated system replacement. If a server shows increasing error rates, it gets removed from the load balancer and replaced. The old model of trying to make a slightly-broken system limp along no longer makes sense in environments where replacement hardware is readily available.

The Obsolescence Timeline: How Long Is Too Long for Legacy Support

The 440BX chipset arrived in 1998 and remained relevant for roughly a decade. By 2008, it was completely obsolete from a commercial perspective. Yet the EDAC driver remained in the Linux kernel for another 17 years, finally being removed in 7.0. This raises an interesting question about how long Linux should maintain support for retired hardware.

There's no universal answer, but the industry has converged on some rough guidelines. Server hardware typically gets security updates and bug fixes for 5-7 years after manufacture. Consumer hardware gets 2-3 years of active support. Specialized embedded systems might continue receiving updates for longer if they're shipping in products with 10+ year lifespans. But for hardware that's been completely discontinued for decades, the question isn't "should we support it" but rather "why haven't we removed it yet."

The 440BX removal also reflects changing economics in the software world. In the 1990s and 2000s, removing features from Linux was controversial because Linux users were often hobbyists who deliberately avoided upgrading to the latest version. If you had a 440BX system running smoothly on Linux 2.4, why upgrade to 2.6 just to get new features? You'd stick with what works. This mindset made removing features extremely controversial.

Modern Linux development doesn't work that way. Distributions like Fedora release new versions every six months, with users expected to upgrade regularly. Enterprise distributions like RHEL provide extended support periods, but even those have definite end dates. The likelihood that someone is still running a 440BX system with a brand-new 7.0 kernel is effectively zero. Anyone actually using 440BX hardware is either running an ancient kernel or not running Linux at all.

Hardware Virtualization and 440BX Emulation

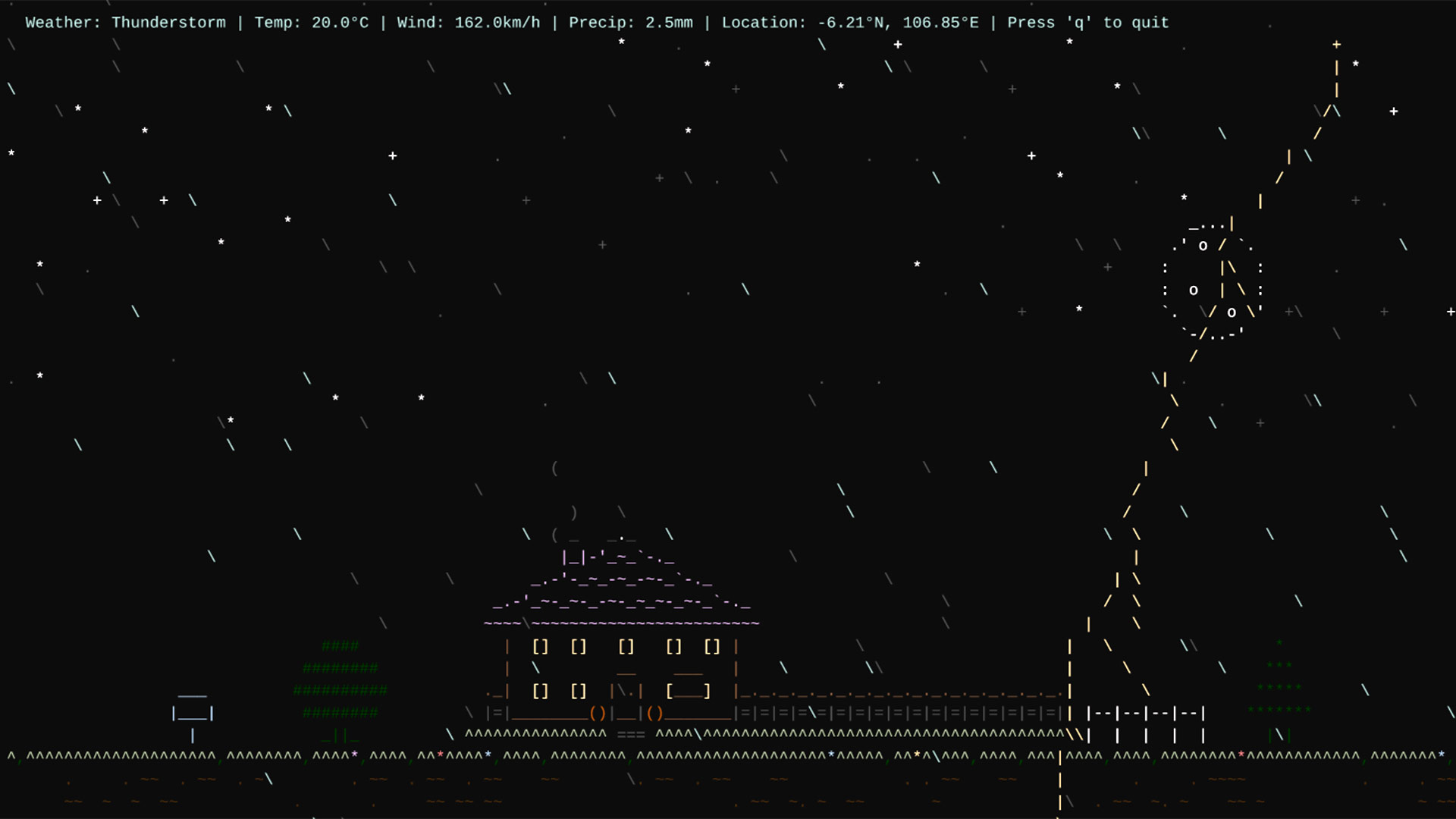

Here's where the 440BX gets a second life: virtualization. VMware defaults to emulating the Intel 440BX chipset for x86 virtual machines, and QEMU's default "pc" machine type emulates its older sibling, the i440FX. This is remarkable when you think about it: chipsets from the 1990s have become the standard foundation for virtualized x86 systems in 2025. Why? Because they're well-understood, well-documented, and provide enough compatibility that operating systems from multiple eras can run on them without modification.

When you create a new virtual machine in VMware and install Windows XP, the guest operating system sees a 440BX chipset. When you launch an old Linux distribution in QEMU with the default machine type, it sees an i440FX motherboard from the same era. This emulation layer ensures backward compatibility. Old software that was written for the 440BX era can run unchanged in virtual machines on modern hardware. It's the digital equivalent of archaeology: the 440BX becomes a standardized interface that bridges contemporary hardware with legacy software.

The removal of 440BX EDAC driver support in the Linux kernel doesn't affect this virtualization use case. Hypervisors emulate the chipset without needing the Linux kernel's EDAC driver. The guest operating system sees memory error registers and may even report error corrections, but those are synthetic signals generated by the hypervisor, not actual hardware errors. The virtualization layer is completely independent of whatever driver support exists in the host or guest kernels.

This disconnect points to something interesting: the 440BX has become more of a software standard than a hardware specification. It's a well-defined interface that can be emulated, containerized, and transmitted across networks. In this form, it will probably persist indefinitely. New virtual machines will continue emulating the 440BX chipset decades from now, ensuring software compatibility even as the actual hardware becomes completely extinct.

Practical Implications for Users Still Running 440BX Hardware

If you're actually reading this and thinking "wait, I still have a 440BX system," here's what the removal of EDAC driver support means for you in practical terms. If you're running an older kernel that still ships the EDAC driver, nothing changes. The driver remains present, in the same non-functional state it has been in since 2007. The removal only affects users who upgrade to Linux 7.0 or later.

For those upgrading, memory error correction continues working at the hardware level. Your ECC RAM, if you have it, still detects and corrects single-bit errors automatically. The system continues to operate reliably. What you lose is any prospect of monitoring error rates on this chipset through the kernel's EDAC interface. If you have monitoring scripts that parse /sys/devices/system/edac/mc/ to track memory errors, they will find no memory controller for this chipset.
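For reference, a monitoring script of the kind mentioned here might look like the following minimal sketch. The EDAC core normally exposes one directory per memory controller under /sys/devices/system/edac/mc/, each with `ce_count` (corrected) and `ue_count` (uncorrected) files; the function name and the returned structure are just choices made for this example.

```python
from pathlib import Path


def read_edac_counts(root="/sys/devices/system/edac/mc"):
    """Return {controller: {"ce_count": int, "ue_count": int}}.

    Returns an empty dict when no EDAC driver is loaded (the directory
    is missing); a counter that cannot be read is reported as None.
    """
    counts = {}
    base = Path(root)
    if not base.is_dir():
        return counts
    for controller in sorted(base.glob("mc[0-9]*")):
        entry = {}
        for name in ("ce_count", "ue_count"):
            try:
                entry[name] = int((controller / name).read_text().strip())
            except (OSError, ValueError):
                entry[name] = None
        counts[controller.name] = entry
    return counts


if __name__ == "__main__":
    result = read_edac_counts()
    if not result:
        print("No EDAC memory controllers found")
    for name, entry in result.items():
        print(name, entry)
```

On a kernel without a working driver for your chipset, a script like this simply reports no controllers, so it is worth making that case explicit rather than letting it fail silently.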

If you need to continue monitoring memory errors on 440BX hardware, alternatives exist. First, many 440BX motherboards included on-board monitoring electronics separate from the chipset itself. These can sometimes be accessed through other Linux kernel subsystems like hwmon. Second, if your system supports IPMI, you can configure the BMC to monitor memory errors and report them through standard IPMI mechanisms. Third, for systems that absolutely require kernel-level error tracking, you would need to remain on an older kernel version that still includes the EDAC driver.

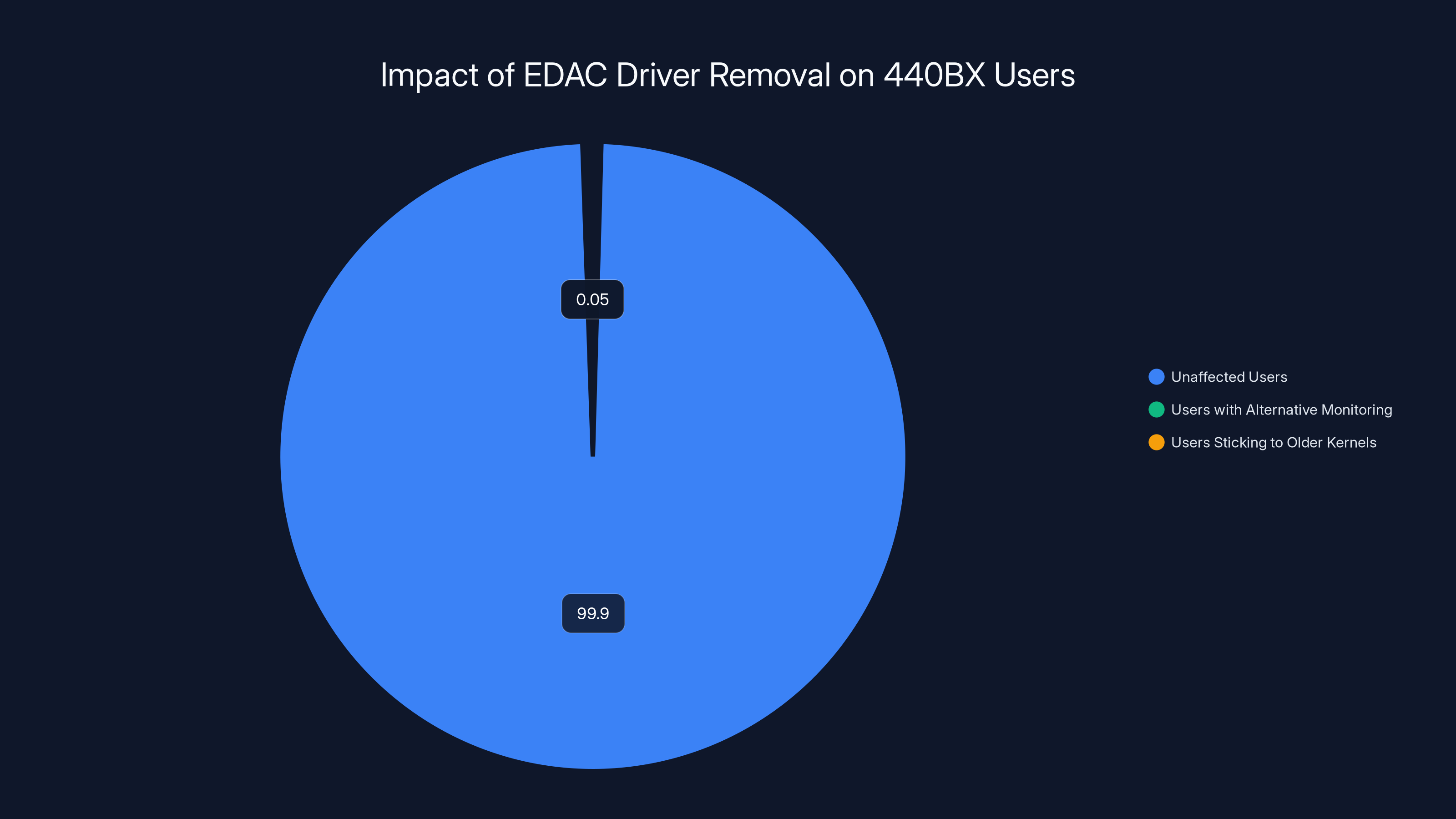

For 99.9% of users, this change is irrelevant. You're either running a modern CPU architecture that the 440BX removal doesn't affect, or you're running such old hardware that you wouldn't be updating to the latest kernel anyway. For the tiny fraction of users actively maintaining 440BX systems, this is a known and expected deprecation. The Linux community has been signaling for years that this code wouldn't be maintained forever.

The vast majority (99.9%) of users are unaffected by the removal of EDAC driver support for 440BX hardware. A small fraction may use alternative monitoring methods or stick to older kernels. (Estimated data)

Future of Linux Legacy Hardware Support

The removal of 440BX EDAC driver support likely won't be the last of these deprecations. Looking at the Linux kernel development roadmap, other aging driver and architecture support will probably follow similar paths. The i386 (32-bit x86) architecture is increasingly getting limited support. Various specialized SCSI controllers and network interfaces from the 2000s continue to be candidates for removal.

The philosophy guiding these decisions is straightforward: as hardware approaches true obsolescence (meaning no one is manufacturing it and active deployments are measured in single digits), Linux development should move toward supporting it through compatibility layers rather than native kernel code. Virtualization, emulation, and container technologies can provide the compatibility that legacy software needs without requiring the kernel to maintain ever-expanding support matrices.

This shift reflects changing practical realities in computing. Nobody is designing new systems around 25-year-old chipsets. Nobody is shipping 440BX motherboards in new servers or workstations. The systems using this hardware are running in specialized applications: vintage computing museums, retrocomputing enthusiasts, and specialized industrial deployments with extreme upgrade cycles. For all of these users, kernel-level support has become optional—they can run older kernels indefinitely, or use virtualization to bridge newer systems with legacy software.

The Linux community will likely continue making these deprecation decisions based on maintenance cost and practical usage. Each removed driver frees kernel developer time to focus on features and optimizations that benefit current users. Each removed architecture simplifies the build system and test matrix. These are real resource constraints in a volunteer-driven project, and the community has learned that accumulating code that nobody maintains creates technical problems over time.

The Historical Significance of Letting Legacy Die

There's something philosophically important about the removal of the 440BX EDAC driver beyond the technical details. The 440BX represents an era when hardware was the primary limiting factor in computing. Choosing the right motherboard could make or break your system's performance and stability. The 440BX earned legendary status because it solved real problems that its competitors couldn't. It represented genuine innovation.

But technology moved on. Processing power increased by orders of magnitude. Memory became faster and more reliable. CPUs incorporated error correction directly. The architectural assumptions that made the 440BX revolutionary became irrelevant. The removal of its EDAC driver is Linux finally accepting that this chapter of computing history is closed. The hardware has moved from "current but aging" to "vintage historical artifact," and the Linux kernel is moving on.

This is healthy for the ecosystem. Open-source projects that try to support everything forever eventually collapse under the weight of their own complexity. The only sustainable approach is to consciously decide what matters for the future and make peace with the fact that supporting the distant past is a constraint on progress. The 440BX served its purpose brilliantly. Now it's time to let go.

For enthusiasts still running 440BX systems, the removal is a chance to reflect on why they're maintaining this hardware. If it's purely for historical interest or retrocomputing hobbies, staying on an older kernel is perfectly fine. If it's because the system is stable and handles their workload, that stability doesn't depend on kernel version—it depends on the hardware and the software applications running on top. The Linux 7.0 change is the community saying "we're not going to hold your hand for this anymore," which is honest and fair.

What the 440BX Removal Tells Us About Open Source Evolution

The quiet deprecation and removal of legacy driver support is one of those moments that reveals something important about open-source software development. Unlike commercial software companies that have to maintain backward compatibility for business reasons, open-source projects can make decisions based purely on technical merit and sustainability.

Linux kernel maintainers aren't trying to sell you the latest version. They're trying to build a system that works well for the next decade. This means occasionally making hard choices about what to stop supporting. The 440BX EDAC driver removal is essentially the Linux community saying: "We've decided that maintaining code for hardware that stopped shipping in 2005 is not a good use of our time."

This decision-making process helps explain why Linux has remained competitive and relevant for three decades. The kernel has grown to over 30 million lines of code, yet it isn't weighed down by a mass of dead code from projects past. Maintainers actively prune, remove, and deprecate features that have outlived their usefulness. This discipline keeps the codebase manageable.

Compare this to operating systems that try to maintain absolute backward compatibility forever. Windows still includes code to handle hardware from the 1980s. This commitment to never breaking anything creates a massive maintenance burden. Every security vulnerability discovered in old code has to be fixed. Every architectural change has to consider how it will affect code written decades ago. Eventually, the accumulated legacy becomes heavier than the innovative new features built on top.

Linux chose a different path. The project acknowledges that some things will be deprecated. Users get a warning period—often years—to adjust. Then the code is removed, and everyone moves forward. This approach keeps the project healthy and maintainable over the long term, even though it occasionally frustrates users still running ancient hardware.

Migration Strategies for Users of Legacy Hardware

For the tiny but real group of users actually running 440BX systems in 2025, the kernel upgrade situation requires some planning. If you're currently running Linux on a 440BX motherboard with a kernel version that includes EDAC support and you want to continue receiving security updates, you have several options.

The first and most straightforward option is to stay on a kernel version that includes the driver. Many Linux distributions maintain long-term support kernels for years. Ubuntu provides five years of support for each LTS release. Red Hat Enterprise Linux offers support for ten years or longer. Kernel branches that already ship the 440BX EDAC driver will keep it for as long as they are supported. You can stay on a stable version while the mainline kernel moves forward. This works if your use case doesn't require cutting-edge features.
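Before settling on a kernel, it can help to check whether its build configuration enables the driver at all. The sketch below parses `.config`-style text for a Kconfig symbol. The symbol name used in the demo, `EDAC_I82443BXGX`, is my understanding of the driver's option name and should be treated as an assumption to verify against your kernel source.

```python
def config_symbol_state(config_text, symbol):
    """Return 'y', 'm', or 'n' for a Kconfig symbol in .config-style text."""
    enabled_prefix = f"CONFIG_{symbol}="
    disabled_line = f"# CONFIG_{symbol} is not set"
    for line in config_text.splitlines():
        line = line.strip()
        if line.startswith(enabled_prefix):
            return line[len(enabled_prefix):]
        if line == disabled_line:
            return "n"
    return "n"  # symbol absent from the config entirely


if __name__ == "__main__":
    # Hypothetical config excerpt, for illustration only.
    sample = "CONFIG_EDAC=y\n# CONFIG_EDAC_I82443BXGX is not set\n"
    print(config_symbol_state(sample, "EDAC_I82443BXGX"))
```

Distributions commonly install the configuration as /boot/config-<version>, and some kernels also expose it as /proc/config.gz, so the text to feed in is usually easy to find.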

The second option is to carry the driver yourself. The kernel is built and upgraded as a whole, so you cannot hold back one subsystem, but some sophisticated users maintain the 440BX EDAC driver source as a local patch or out-of-tree module and rebuild it against newer kernels. This is more complex and requires kernel compilation knowledge, and since the driver has been broken since 2007 it would also need real repair work, but it lets you take security patches for everything else while keeping the legacy code around.

The third option is virtualization migration. If your 440BX system is running a workload that doesn't depend on bare-metal performance, you could migrate the system to run as a virtual machine on modern hardware. VMware emulates the 440BX chipset, and QEMU's default machine type emulates the closely related i440FX, so a guest installed for that era of hardware generally keeps working. The guest operating system continues seeing a familiar hardware architecture, but it's running on contemporary systems that receive full kernel support. This is actually how many companies maintain legacy software: not by keeping the old hardware running, but by virtualizing it on new infrastructure.

The fourth option is containerization, with an important caveat: containers share the host's kernel, so a container cannot bring its own drivers. What a container can do is run the userspace of an older Linux distribution on modern kernel infrastructure. This works for applications that depend on old libraries or tools rather than on direct hardware access.

Each approach has tradeoffs. Staying on old kernels means missing security updates in other subsystems. Custom kernel compilation requires technical expertise and ongoing maintenance. Virtualization adds overhead and removes bare-metal performance. Containerization limits which workloads you can migrate. There's no one-size-fits-all solution, which is why the Linux community's approach of supporting legacy hardware for 15+ years before removal actually makes sense from a practical standpoint.

The Economics of Legacy Hardware Support

Why did Linux maintain support for the 440BX EDAC driver for nearly two decades after it stopped working? The answer involves some economics of open-source software that don't get discussed often enough. In a commercial software company, someone has to decide whether continuing to support old hardware is worth the cost. They compare the number of users still on that hardware against the developer time required to maintain the code. When the math no longer works, support gets discontinued.

Open-source projects work differently. There's no explicit budget decision. Instead, maintenance happens because volunteers care enough to do it. A single developer might continue maintaining code for aging hardware because they use that hardware personally, or because they feel nostalgic about it, or simply because nobody has suggested removing it yet. This is why legacy code can persist in open-source projects long after it would be removed in commercial software.

Over time, though, this creates problems. The developer who maintained the 440BX EDAC driver code probably retired from active kernel development years ago. New maintainers inherit code they don't understand for hardware they've never seen. When bugs appear, they can't fix them effectively. When the code becomes incompatible with newer kernel infrastructure, it doesn't get updated. The code becomes "orphaned," technically still present but not actually maintained.

At some point, someone has to decide: is it worth keeping this orphaned code in the kernel? The answer increasingly is no. Keeping unmaintained code creates technical debt. Every time the kernel refactors a core subsystem, someone has to update the orphaned code or risk breaking it completely. Every new compiler flag or security feature might interact unexpectedly with old code. Eventually, the cost of maintaining the compatibility exceeds the value of having the code present.

This economic reality has gradually changed the culture of Linux development. There's an increasing recognition that removing dead code is better than letting it accumulate. The Rust programming language adoption in the Linux kernel, for example, partly stems from the realization that you can prevent certain classes of bugs in new code more effectively than you can maintain old C code to the same safety standards. Rather than endlessly debugging legacy code, invest in better tools for new code.

Technical Deep Dive: Why EDAC Broke and Why Fixing It Wasn't Worth It

To really understand why the 440BX EDAC driver was allowed to bitrot for so long, it helps to understand what happened technically. The driver worked by reading error status registers from the 440BX's memory controller. Those registers live in the PCI configuration space of the chipset's host bridge, which is the same PCI device the Intel AGP driver claims in order to manage the AGP aperture.

That overlap is the root of the problem. The kernel's PCI driver model binds each device to a single driver at a time, so once the AGP driver had attached to the host bridge, the EDAC driver could not attach to it through the normal probe path. Meanwhile, newer hardware stopped using AGP at all in favor of PCI Express, so the AGP driver itself became increasingly niche, serving only older systems that still had AGP slots.

Nobody reworked either driver so the two could share the device. On a typical 440BX system with AGP graphics, the EDAC driver simply never got the chance to initialize. In 2007 it was marked as broken in the kernel configuration, and from then on it was effectively non-functional.
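Since the EDAC and AGP drivers both revolve around the same chipset device, it can help to see which driver currently owns a given PCI device. The following is a minimal sketch that reads the `driver` symlink sysfs exposes for each PCI device. The function name `bound_driver` is made up for illustration, and the address `0000:00:00.0` is an assumption based on where x86 host bridges conventionally sit; check `lspci` on the actual machine.

```python
import os
from typing import Optional

# Assumption: the host bridge sits at PCI address 0000:00:00.0, the
# conventional location on x86 systems. Verify with lspci before relying on it.
HOST_BRIDGE = "0000:00:00.0"


def bound_driver(pci_address: str = HOST_BRIDGE,
                 sysfs_root: str = "/sys/bus/pci/devices") -> Optional[str]:
    """Return the name of the driver bound to a PCI device, or None if unbound."""
    link = os.path.join(sysfs_root, pci_address, "driver")
    if not os.path.islink(link):
        # No symlink means no driver has claimed the device
        # (or the device does not exist on this system).
        return None
    # The symlink points at the driver's directory; its last path
    # component is the driver name.
    return os.path.basename(os.readlink(link))
```

Calling `bound_driver()` on a running Linux system returns the name of whichever driver holds the host bridge, or `None` if nothing has claimed it. The `sysfs_root` parameter exists only so the function can be pointed at a test directory.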

At that point, someone had to decide: should we fix this driver? But fixing it required several things. First, someone would need to understand the 440BX chipset's internal architecture in detail—not something well-documented for the general public. Second, they would need to redesign the driver to work without depending on the AGP subsystem. Third, they would need to test it on actual 440BX hardware, which was already becoming difficult to acquire. Finally, they would need to maintain it going forward whenever kernel infrastructure changed again.

Compare that against the alternative: remove the code entirely. Removing it requires no ongoing maintenance. It saves space. It simplifies the test matrix. It removes a code path that doesn't work. The tradeoff is that users with 440BX systems lose kernel-level EDAC monitoring.
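For context on what "kernel-level EDAC monitoring" provides when a driver does work: loaded EDAC drivers publish per-memory-controller error counters under `/sys/devices/system/edac/mc/`. The sketch below collects the `ce_count` (corrected errors) and `ue_count` (uncorrected errors) files. The function name `read_edac_counts` is hypothetical, and the code assumes a system where some EDAC driver is loaded; without one it returns an empty result.

```python
from pathlib import Path
from typing import Dict


def read_edac_counts(
    edac_root: str = "/sys/devices/system/edac/mc",
) -> Dict[str, Dict[str, int]]:
    """Collect corrected (ce) and uncorrected (ue) error counts per controller."""
    counts: Dict[str, Dict[str, int]] = {}
    root = Path(edac_root)
    if not root.is_dir():
        # No EDAC driver is loaded, or this is not a Linux system.
        return counts
    for mc in sorted(root.glob("mc[0-9]*")):
        entry: Dict[str, int] = {}
        for name in ("ce_count", "ue_count"):
            counter = mc / name
            if counter.is_file():
                entry[name] = int(counter.read_text().strip())
        counts[mc.name] = entry
    return counts
```

An empty dictionary here is exactly the situation 440BX owners have been in: the hardware may be correcting errors, but the kernel has no counters to report.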

By 2007, almost nobody was using 440BX systems anymore. They had moved to modern hardware. The people still running 440BX systems were either enthusiasts doing retrocomputing, or industrial deployments that wouldn't be updating kernels frequently anyway. For neither group was kernel-level EDAC monitoring critical. At the hardware level, error correction continued working perfectly fine.

So the driver remained broken but not removed, a zombie in the kernel until Linux 7.0 finally put it out of its misery. This is actually a common pattern in long-lived open-source projects—code gets broken, nobody fixes it because the affected user base is tiny, but nobody removes it either because it's not causing obvious problems. Eventually, when someone decides to clean up, the orphaned code gets removed entirely.

What This Means for Future Linux Compatibility Decisions

The 440BX EDAC driver removal establishes a precedent for how Linux handles legacy hardware going forward. It signals that the community is willing to make hard decisions about backward compatibility when supporting old hardware becomes unsustainable. This doesn't mean Linux will suddenly drop support for everything older than five years, but it does mean the community is more willing to deprecate and remove than in the past.

For developers considering what hardware to support in new projects, this is important. The Linux community will provide a grace period—often measured in years—before removing support. During that period, you should have time to migrate to newer hardware if your system depends on that support. But the community won't support everything forever. At some point, hardware becomes historical artifact rather than practical technology, and the kernel moves on.

For users of older hardware, this suggests a strategy: when major kernel changes are coming, plan for potential removals. If you're running a 440BX system, you should have recognized by 2007 that the hardware was aging and started planning for eventual kernel incompatibility. The removal in Linux 7.0 isn't a surprise—it's the conclusion of a process that started years earlier.

For Linux distros, this creates decisions about how long to keep shipping removed drivers and features. Distributions built on long-term-support kernels keep whatever those kernels contained at release, so a removed driver can linger in their stable branches for years after it disappears from mainline. Other distributions track mainline closely and drop support immediately. These decisions shape how long users can actually continue running older hardware with actively maintained kernels.

The Broader Ecosystem Impact of Hardware Deprecation

The removal of 440BX EDAC support has ripple effects beyond just Linux kernel development. Distributions have to decide how to handle it. System administrators have to plan for it. Tools that depend on EDAC monitoring have to be updated or replaced. Documentation about legacy systems becomes obsolete. The ecosystem gradually adapts as the hardware moves from "currently supported" to "legacy but tolerated" to "completely obsolete."

Virtualization becomes increasingly important as real hardware moves toward obsolescence. New systems wanting to run legacy workloads don't buy old hardware anymore—they run it in virtual machines on new hardware. This shift fundamentally changes the support calculus. The kernel doesn't need to natively support hardware that's being emulated rather than used bare-metal. Hypervisors can emulate the 440BX chipset indefinitely, providing compatibility even as native kernel support disappears.

But this depends on someone continuing to develop and maintain hypervisors that emulate the 440BX. As the hardware becomes more obscure, maintaining accurate emulation becomes harder. Eventually, emulation might become less accurate because developers no longer remember how the hardware actually behaved. This is why companies like VMware focus on emulating the most common platforms—the 440BX is useful as a default because it's well-understood, widely documented, and tolerated by a huge range of legacy operating systems.

The removal also affects retrocomputing communities who preserve old systems for historical reasons. Museums and enthusiasts who maintain actual 440BX hardware for historical purposes now have an additional constraint: they need to decide whether to stick with older kernels for Linux systems, or use virtualization to run newer systems that emulate the hardware. This changes how historical computing is preserved—moving from maintaining physical systems to maintaining virtual replicas that run on contemporary infrastructure.

FAQ

What exactly was the 440BX chipset and why was it so legendary?

The Intel 440BX was a system chipset released in 1998 that became legendary for its stability, compatibility, and tolerance for overclocking. It reduced fragmentation in the PC market by providing a standardized platform that stayed dependable even with slightly out-of-spec hardware. The 440BX could run cheap Celeron 300A processors overclocked to 450MHz, let enthusiasts build compatible systems from diverse components, and remained rock-solid in server deployments for years. Its reliability and versatility made it the standard platform for PC builders and small deployments throughout the late 1990s and early 2000s.

Why did the EDAC driver stop working in 2007?

The 440BX EDAC driver reads its error registers from the chipset's host bridge, the same PCI device the Intel AGP driver binds to. Since a PCI device can be bound to only one driver at a time, the two conflicted, and the EDAC driver was marked as broken in 2007. Nobody reworked it afterward because the affected hardware was already becoming obsolete, and with newer systems using PCI Express instead of AGP there was little incentive to touch either driver.

Does the loss of EDAC support mean memory errors won't be corrected anymore on 440BX systems?

No. Error correction continues working at the hardware level. With ECC memory installed and ECC enabled in the firmware, the chipset's memory controller detects and corrects single-bit errors using check bits stored alongside the data, and none of that depends on a kernel driver. What's lost is the kernel's ability to monitor error rates and log corrections. The system continues running reliably; you just can't track whether errors are occurring, which matters mostly for predictive maintenance rather than actual system stability.
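To make "detects and corrects single-bit errors" concrete, here is a toy Hamming(7,4) code. It is an illustration of the principle only: real ECC memory typically protects 64 data bits with 8 check bits and can also detect double-bit errors, and nothing below is taken from the 440BX's actual implementation. The function names are chosen for this sketch.

```python
from typing import List, Tuple


def encode(data: List[int]) -> List[int]:
    """Encode 4 data bits into a 7-bit codeword laid out as p1 p2 d1 p3 d2 d3 d4."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def correct(codeword: List[int]) -> Tuple[List[int], int]:
    """Fix at most one flipped bit; return (codeword, 1-based error position or 0)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The three syndrome bits spell out the position of the flipped bit.
    position = s1 + 2 * s2 + 4 * s3
    if position:
        c[position - 1] ^= 1
    return c, position


def decode(codeword: List[int]) -> List[int]:
    """Extract the 4 data bits from a (corrected) codeword."""
    return [codeword[2], codeword[4], codeword[5], codeword[6]]
```

Flipping any single bit of `encode([1, 0, 1, 1])` and passing the result through `correct` restores the original codeword and reports which position was repaired. That reported position is the kind of information an EDAC driver would log; the correction itself happens regardless.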

What are the alternatives for monitoring memory errors on older hardware after the EDAC driver is removed?

Several alternatives exist, though none is a drop-in replacement. The sensor chips on many 440BX motherboards are accessible through the Linux hwmon subsystem, but they report temperatures, voltages, and fan speeds rather than memory errors, so they help only with general health monitoring. Server boards that include a BMC (baseboard management controller) can report hardware events over IPMI independently of the kernel, where the board supports it. Virtualization is another option: move the workload into a virtual machine on modern hardware whose own memory controller is fully supported by the kernel. For pure compatibility needs, containerization can isolate legacy environments within modern kernel infrastructure.

Will Linux continue supporting other old chipsets, or is 440BX special?

The 440BX removal is part of a broader trend. Linux has removed support for aging processors (such as the original 80386), removed drivers for obsolete storage controllers, and phased out support for interfaces nobody uses anymore. The pattern is consistent: hardware that stopped being manufactured decades ago, has virtually no active deployments, and requires ongoing maintenance to remain compatible eventually gets removed. Long-term-support distributions may keep such drivers in their stable branches for a while, but the mainline kernel increasingly only actively maintains what's currently shipping in production systems.

Why didn't the Linux community remove this driver earlier if it was broken since 2007?

Open-source projects work through volunteer effort, and removing features is controversial. Even though the driver was broken and non-functional, someone would have had to step up and advocate for removal, deal with objections from users still on that hardware, and manage the deprecation process. It was easier to just leave it in place. Additionally, there wasn't an urgent business need to remove it—keeping broken code in the kernel doesn't actively harm other systems, it just accumulates technical debt. Linux 7.0 finally decided the debt wasn't worth carrying anymore.

What should I do if I'm actually still running a 440BX system?

You have several options. If you want to continue updating to the latest kernels, migrate to virtualization by running the system as a guest on contemporary hardware with a hypervisor that emulates the 440BX. If staying on the same physical hardware is important, you can remain on an older kernel version. Most distributions maintain long-term support kernels for many years, and you'll continue receiving security fixes for as long as that branch is supported, though not once it reaches end of life. For workloads without hardware dependencies, containerization allows running legacy software on modern kernel infrastructure. The key is choosing your strategy before you find yourself forced to choose.
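Before choosing a strategy, it is worth checking whether the kernel you run was even built with the driver. The sketch below parses kernel configuration text for a symbol. It assumes the driver's Kconfig symbol is `CONFIG_EDAC_I82443BXGX`, and the helper name `config_state` is made up for this example.

```python
import re
from typing import Optional

# Assumption: this is the Kconfig symbol for the 440BX EDAC driver.
SYMBOL = "CONFIG_EDAC_I82443BXGX"


def config_state(config_text: str, symbol: str = SYMBOL) -> Optional[str]:
    """Return 'y', 'm', 'n', or None if the symbol does not appear at all."""
    for raw_line in config_text.splitlines():
        line = raw_line.strip()
        if line == f"# {symbol} is not set":
            return "n"
        match = re.fullmatch(rf"{re.escape(symbol)}=(.*)", line)
        if match:
            return match.group(1)
    # Symbols whose dependencies cannot be satisfied are usually
    # omitted from the config file entirely.
    return None
```

Many distributions install the configuration as `/boot/config-` followed by the kernel release (which `platform.release()` returns in Python), but that location is a convention rather than a guarantee. A result of `None` or `'n'` means the running kernel never offered the driver in the first place, so upgrading changes nothing for that system.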

Does VMware's default emulation of the 440BX chipset mean the 440BX will never go away?

In a limited sense, yes. VMware and other hypervisors default to emulating the 440BX because it's a well-understood, widely-documented architecture that provides compatibility across many legacy operating systems. This emulated version will probably persist indefinitely as a software standard, even as actual 440BX hardware becomes impossible to find. But this is emulation rather than native support—it happens in the hypervisor, independent of what the host kernel does. Actual 440BX hardware and its native Linux support can become completely obsolete while the emulated version persists as a compatibility bridge.

What does this removal tell us about the long-term future of Linux compatibility?

It signals that Linux will make deliberate choices to stop supporting hardware that has become historical artifact rather than contemporary technology. This isn't a sudden shift—it's a gradual one that accelerates as the kernel becomes more complex and maintenance costs grow. For users and organizations, it means planning for eventual kernel incompatibility with aging hardware rather than expecting indefinite support. For developers, it suggests designing systems that can migrate to virtualization or containerization rather than expecting native kernel support forever. The kernel will continue supporting current and recent hardware comprehensively, but ancient systems eventually transition from "supported but aging" to "unsupported and must migrate."

Conclusion: The Legacy of the 440BX and What Comes Next

The removal of the 440BX EDAC driver from Linux 7.0 is the formal closing of a chapter in computing history. The chipset that revolutionized PC building in the late 1990s, that earned legendary status for stability and overclocking capability, that sustained countless enthusiast systems and commercial deployments, has now lost its EDAC driver in the Linux kernel. The driver itself has been broken and non-functional for eighteen years, carried forward through release after release more out of historical inertia than practical utility.

But this isn't really a sad farewell. The 440BX served its purpose brilliantly. It solved real problems that the computer industry faced. It enabled a generation of builders and enthusiasts to construct reliable systems from commodity components. It proved that thoughtful hardware design could remain stable and useful for decades. The legacy of the 440BX isn't tarnished by its removal from the Linux kernel. It's completed. The project finished, the hardware moved into history, and the software moved on to serve systems that didn't exist yet when the 440BX was designed.

What's remarkable is how long the Linux community carried the 440BX support forward. Nearly two decades of maintaining broken code, all because someone might still need it. The community could have removed it years earlier but didn't. This speaks to the ethos of open-source software—to support the strange edge cases, to keep old systems running, to avoid breaking things unnecessarily. Eventually, though, even that generosity has limits. At some point, carrying the past becomes a constraint on the future.

For the tiny fraction of users still running 440BX systems in 2025, nothing changes about their actual hardware capabilities. Memory error correction continues working. The system continues running. Moving to Linux 7.0 simply means losing kernel-level EDAC monitoring—a feature that hasn't worked properly in nearly two decades anyway. These users have several viable paths forward, from staying on supported older kernel versions to migrating to virtualization.

For the broader Linux ecosystem, the removal establishes a pattern: hardware that has become completely obsolete, that hasn't been manufactured for decades, that has virtually no active deployments, will eventually be deprecated and removed. Developers building systems today should recognize that the kernel won't support their hardware forever. At some point, updates will become incompatible. That's not a flaw in Linux—it's a feature. It allows the kernel to remain lean, maintainable, and focused on serving systems that people are actually using now.

The removal of the 440BX's EDAC driver from the Linux kernel is ultimately a statement that software should evolve along with hardware. You can't freeze infrastructure in time, even when it worked well. You have to let go of the past to make room for the future. The 440BX achieved a kind of immortality through virtualization: it exists now as a software standard that will probably persist indefinitely in hypervisors. But native EDAC support in the kernel is over. And that's okay.

If you're curious about what's next for Linux kernel development, watch for more deprecations of aging driver support. The community is increasingly willing to clean house. But there's still a generous grace period, usually years, before actual removal. As a rough rule of thumb for systems you're deploying now, assume the Linux kernel will support them actively for about 5-7 years, with decreasing support for another 5-7 years after that, and essentially no support after 15 years. Plan accordingly. Build systems that can migrate to virtualization or newer hardware when the time comes. And appreciate the hardware you're using now, because future Linux developers will probably remove support for it only long after it has ceased to be practical.

The 440BX's story in the Linux kernel is complete. It did what it was meant to do. Now it's time to move on.

Key Takeaways

- The Intel 440BX EDAC driver has been non-functional since 2007 due to AGP driver incompatibilities, yet remained in the Linux kernel for 18 additional years before removal in Linux 7.0

- Hardware-level error correction is performed by the chipset's memory controller using ECC memory and continues working without the kernel driver, so system reliability isn't affected by the removal

- The 440BX represents one of computing's most influential chipsets, proving that thoughtful hardware design can remain stable and useful for over 25 years

- Linux's deprecation of legacy driver support reflects a sustainable approach to open-source maintenance: removing unmaintained code that serves virtually no active deployments

- Users still running 440BX systems can migrate through virtualization, stay on older kernel versions, use container-based approaches, or implement hardware monitoring alternatives
