Ray Kurzweil's 1991 AI Predictions: Why They're Eerily Accurate Today

Back in 1991, when most people thought artificial intelligence meant robots that looked like the Terminator, one man was already thinking decades ahead. Ray Kurzweil sat down with Computerworld and made a series of observations about where AI was headed that feel almost supernatural in their accuracy. Except there's nothing supernatural about it. He simply understood the technology better than almost anyone else.

The wild part? Most of what he predicted hasn't just happened. It's happened faster, in weirder ways, and with way more impact than anyone anticipated. The AI boom of 2023-2025 isn't surprising if you understand Kurzweil's framework. But it feels shocking if you've been paying attention to hype instead of fundamentals.

This article digs into what Kurzweil actually said in 1991, what he got right, what he underestimated, and what his predictions reveal about the nature of progress itself. Because here's the thing: understanding Kurzweil's logic helps you understand not just where AI came from, but where it's actually headed.

TL;DR

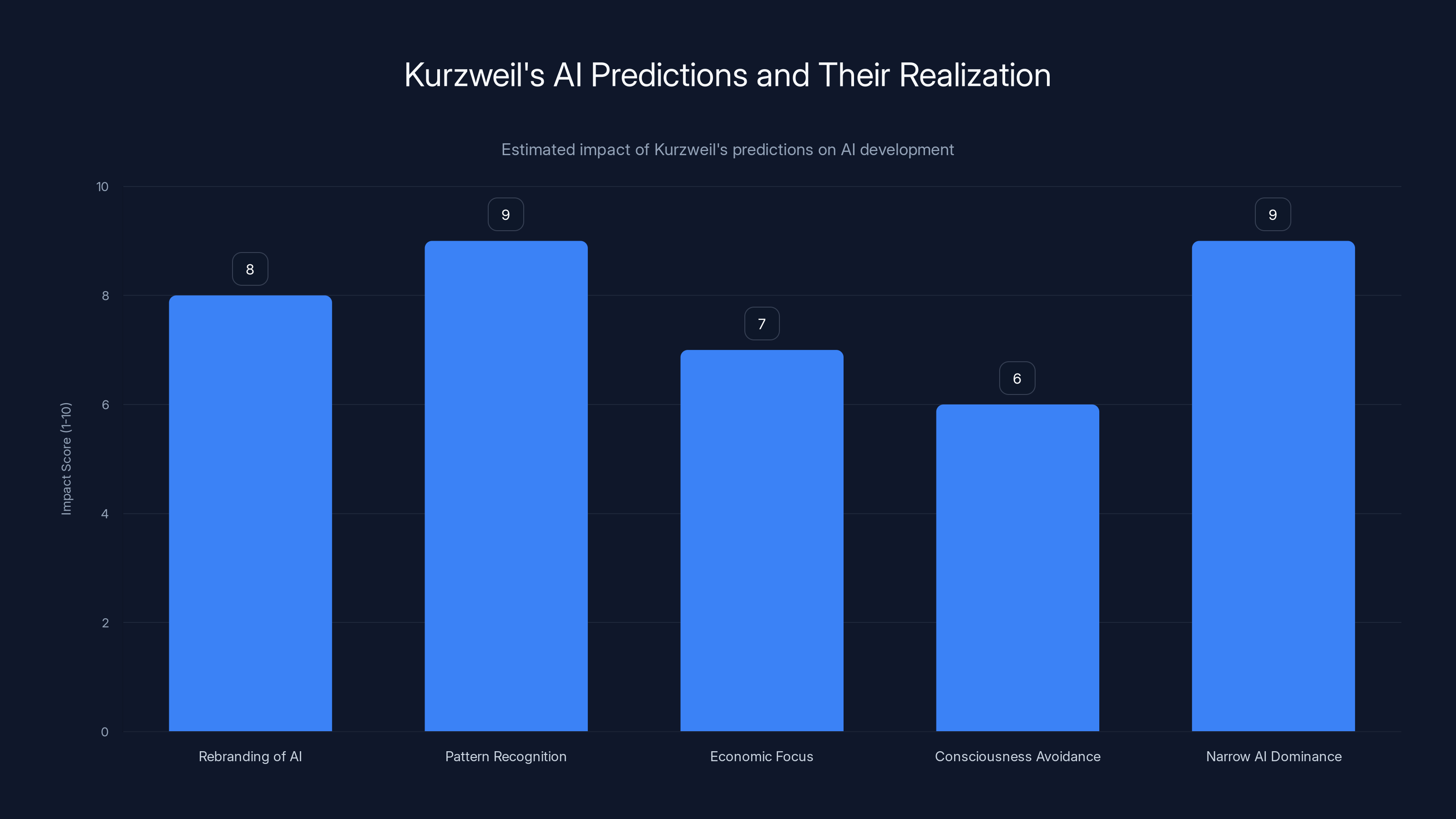

- Kurzweil's Core Insight: AI doesn't fail—it just gets rebranded as "normal" once it works (machine vision, speech recognition, recommendation systems)

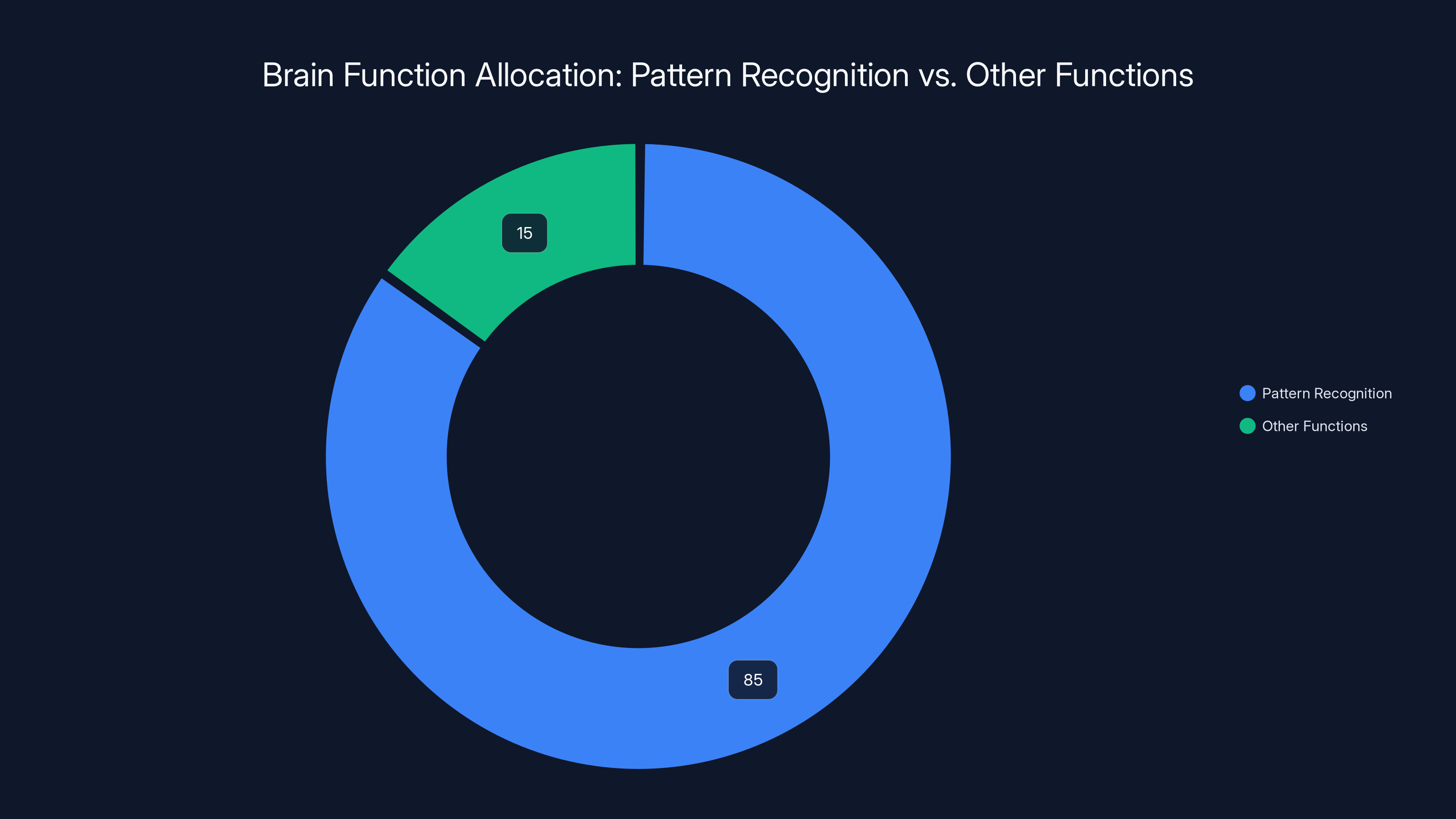

- The Pattern Recognition Principle: He correctly identified that 80-90% of human intelligence is pattern recognition, which is exactly what modern machine learning does best

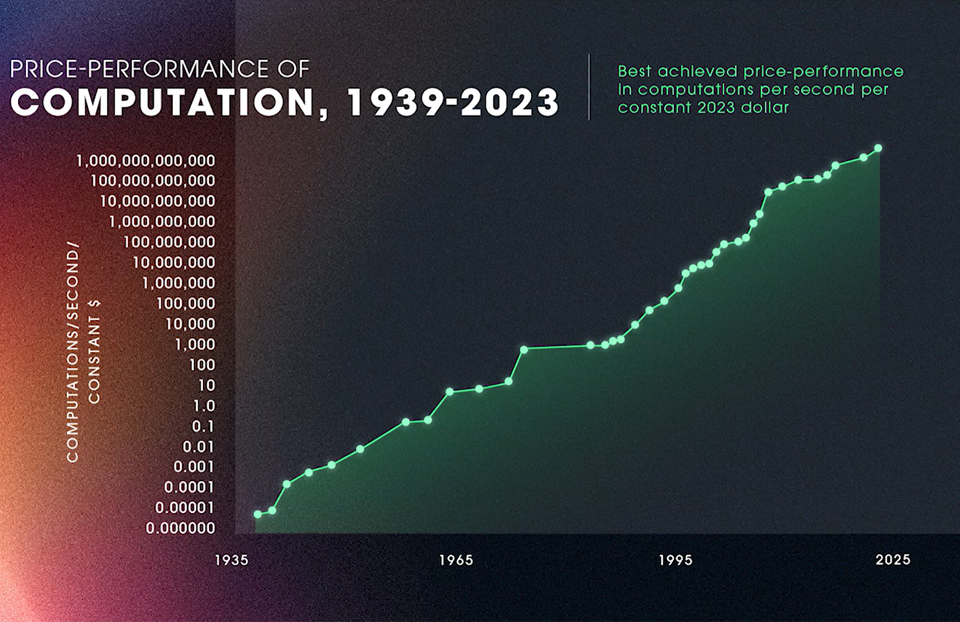

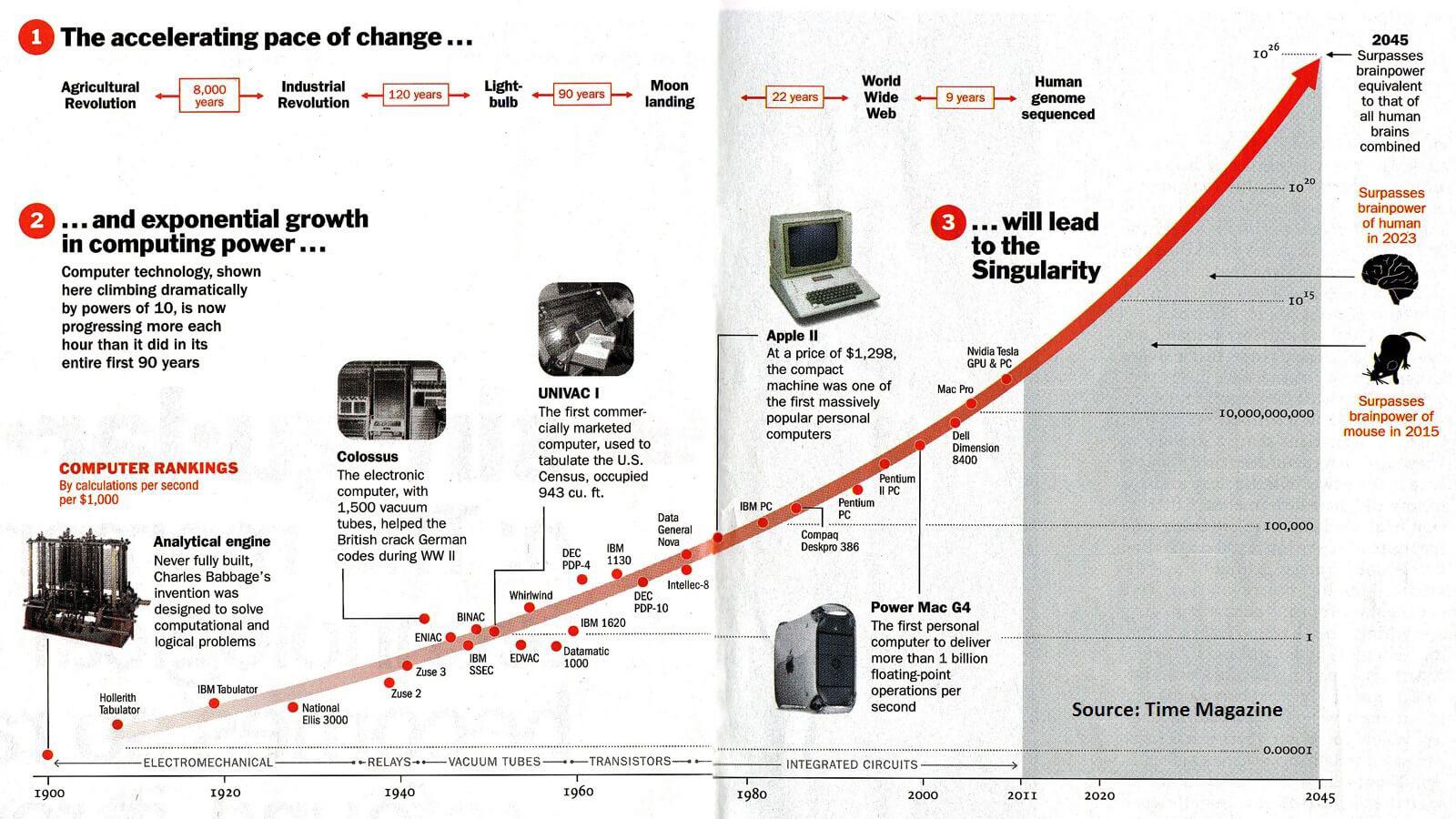

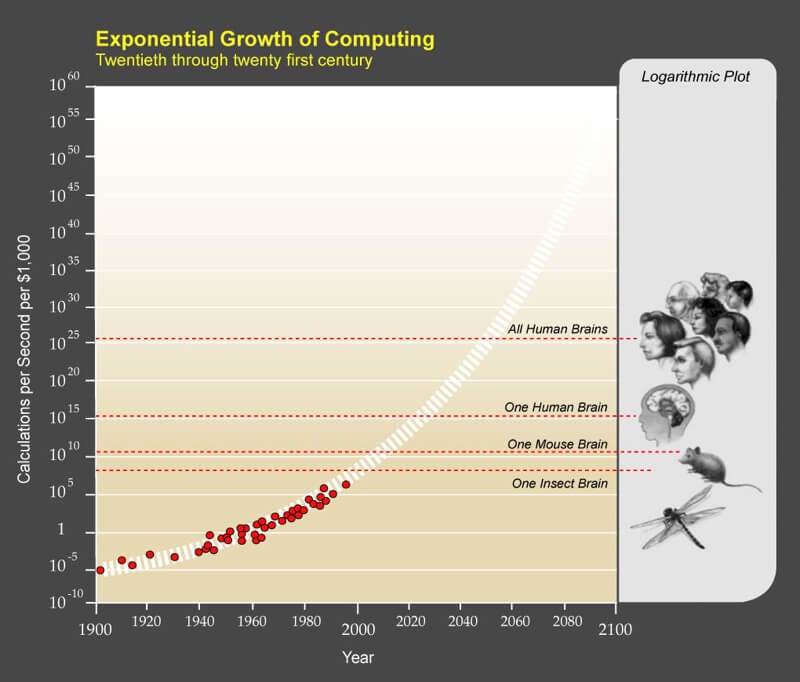

- Economics Over Breakthroughs: Kurzweil emphasized cost curves and computation power, not sudden scientific leaps, anticipating the trajectory of today's AI boom

- The Consciousness Question: He avoided easy answers about machine consciousness, focusing instead on functional capabilities and domain expertise

- Domain Expertise Problem: He predicted narrow AI systems would dominate, which is still accurate more than three decades later despite the hype around "general" AI

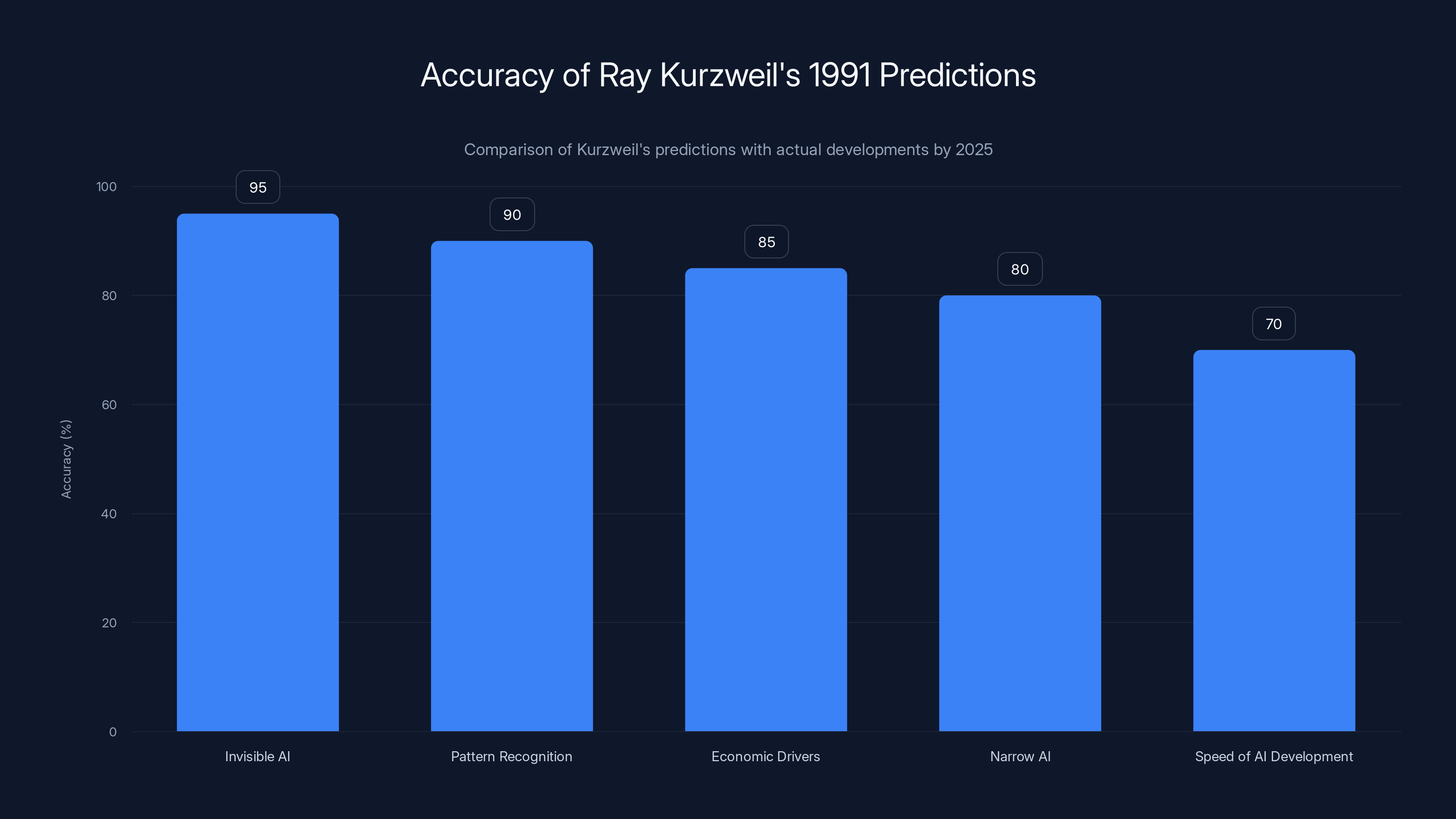

Kurzweil's predictions about AI becoming invisible, the centrality of pattern recognition, and economic drivers were highly accurate (over 80% by this estimate). He underestimated the speed of AI development, particularly in large language models.

The 1991 Interview That Predicted Everything

In October 1991, Computerworld ran an interview with Kurzweil that reads like a cheat sheet for understanding the 2024 AI landscape. The interviewer asked straightforward questions. Kurzweil gave answers that were both humble and audacious.

He started by pushing back on a common claim: that AI had failed to deliver on its promises. Most people in the tech world at that time believed the AI boom of the 1980s had gone bust and that AI was, deep down, a dead-end technology. Kurzweil disagreed sharply.

"That's unfair, because every time we master a particular area of AI, it ceases to be considered AI. It's just like a magic trick—when you know how it's done, it's no longer magic."

Read that sentence again. This is the insight that explains everything that's happened since. AI doesn't fail. It succeeds so completely that we stop calling it AI. We call it "software" or "a feature" or "machine vision" or "recommendation algorithm."

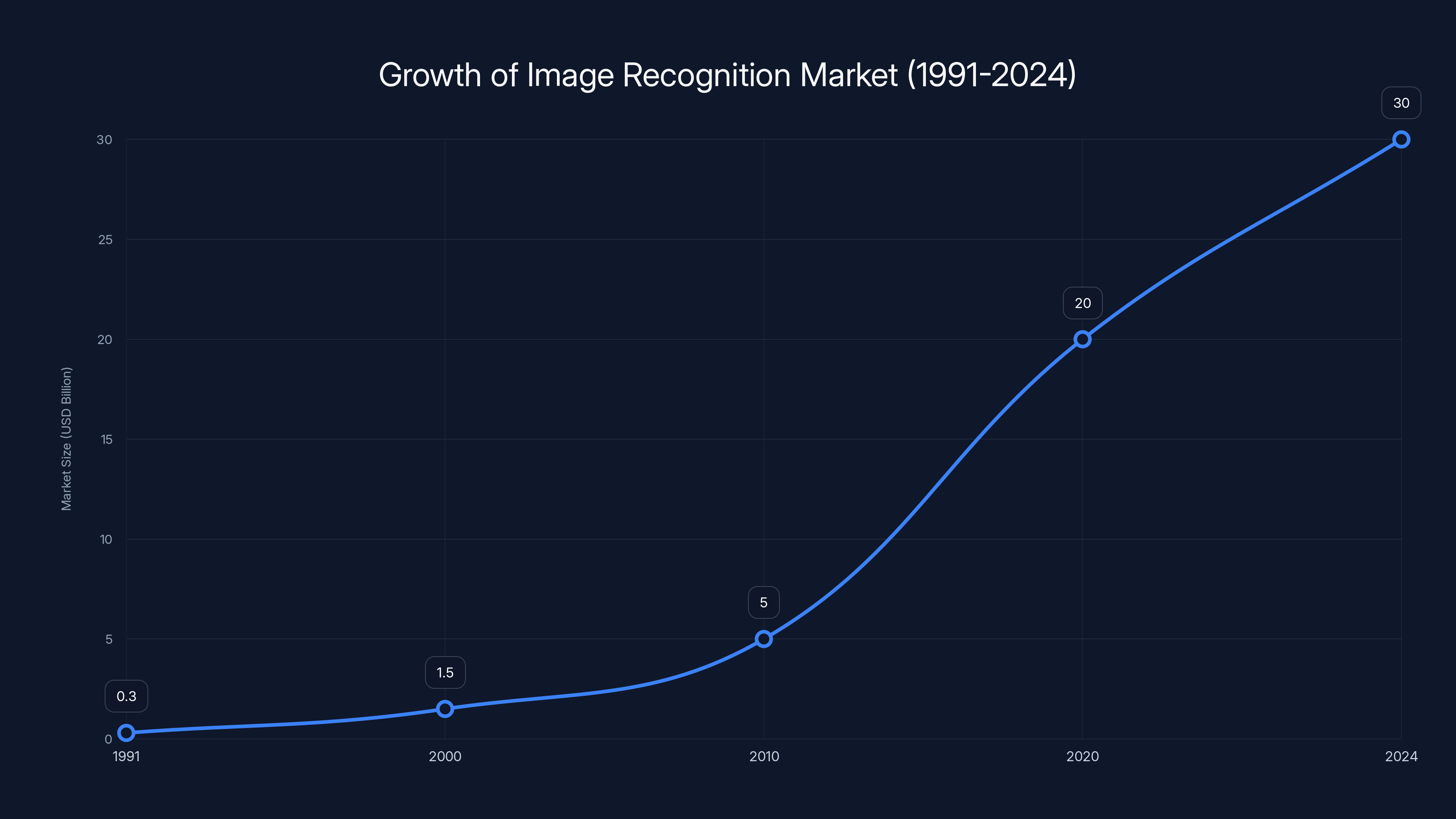

He gave a specific example: machine vision in 1991 was already a $300 million business. But nobody called it AI anymore. They just called it image recognition. The technology worked. It was useful. So we moved on and forgot it was ever considered AI at all.

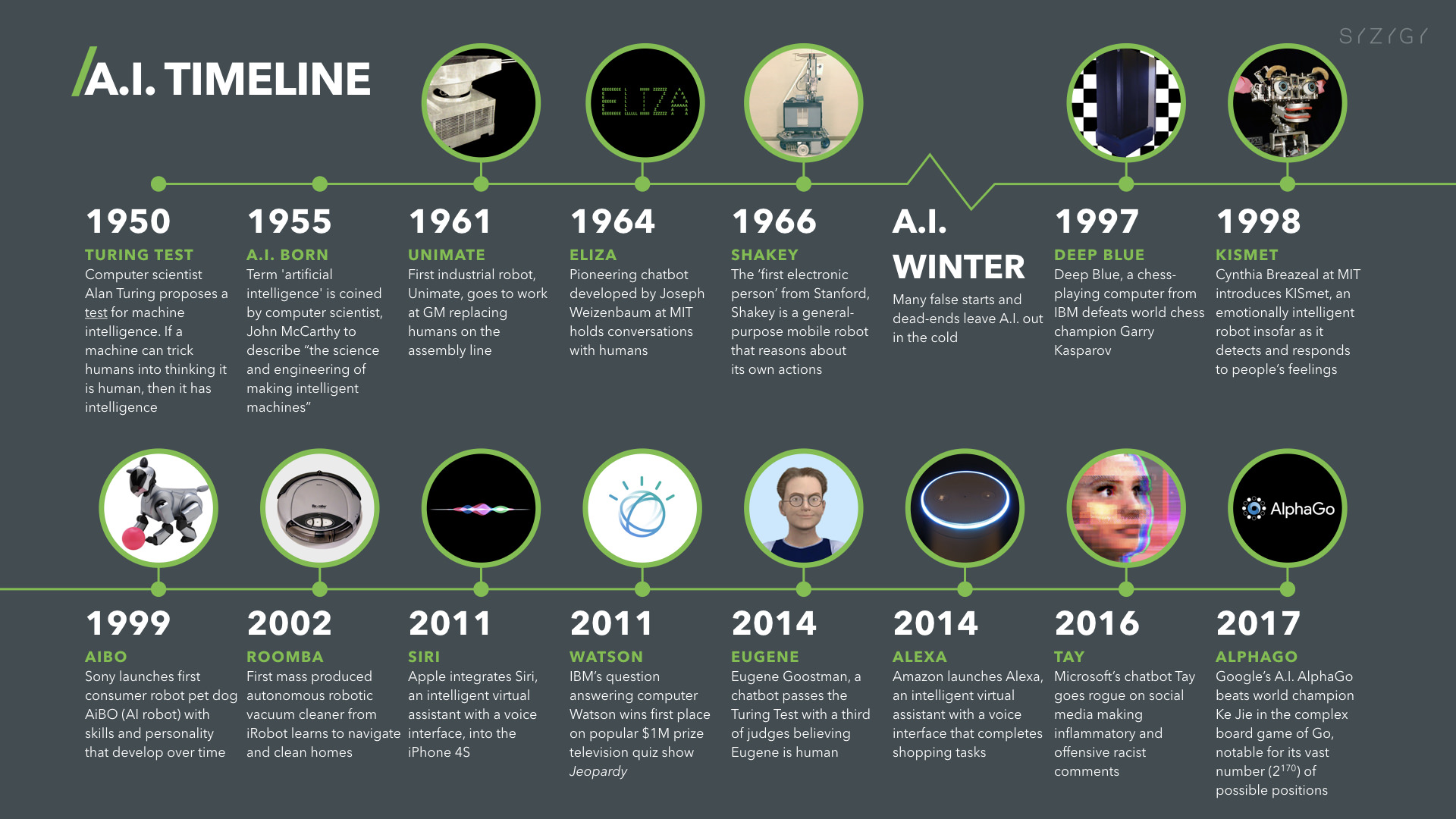

The interview happened at a specific moment in tech history. Personal computers were becoming common. The internet existed but wasn't yet consumer-facing. GPU computing didn't exist. Cloud storage was a science fiction concept. And yet Kurzweil could see where it was all heading because he understood the underlying economic forces.

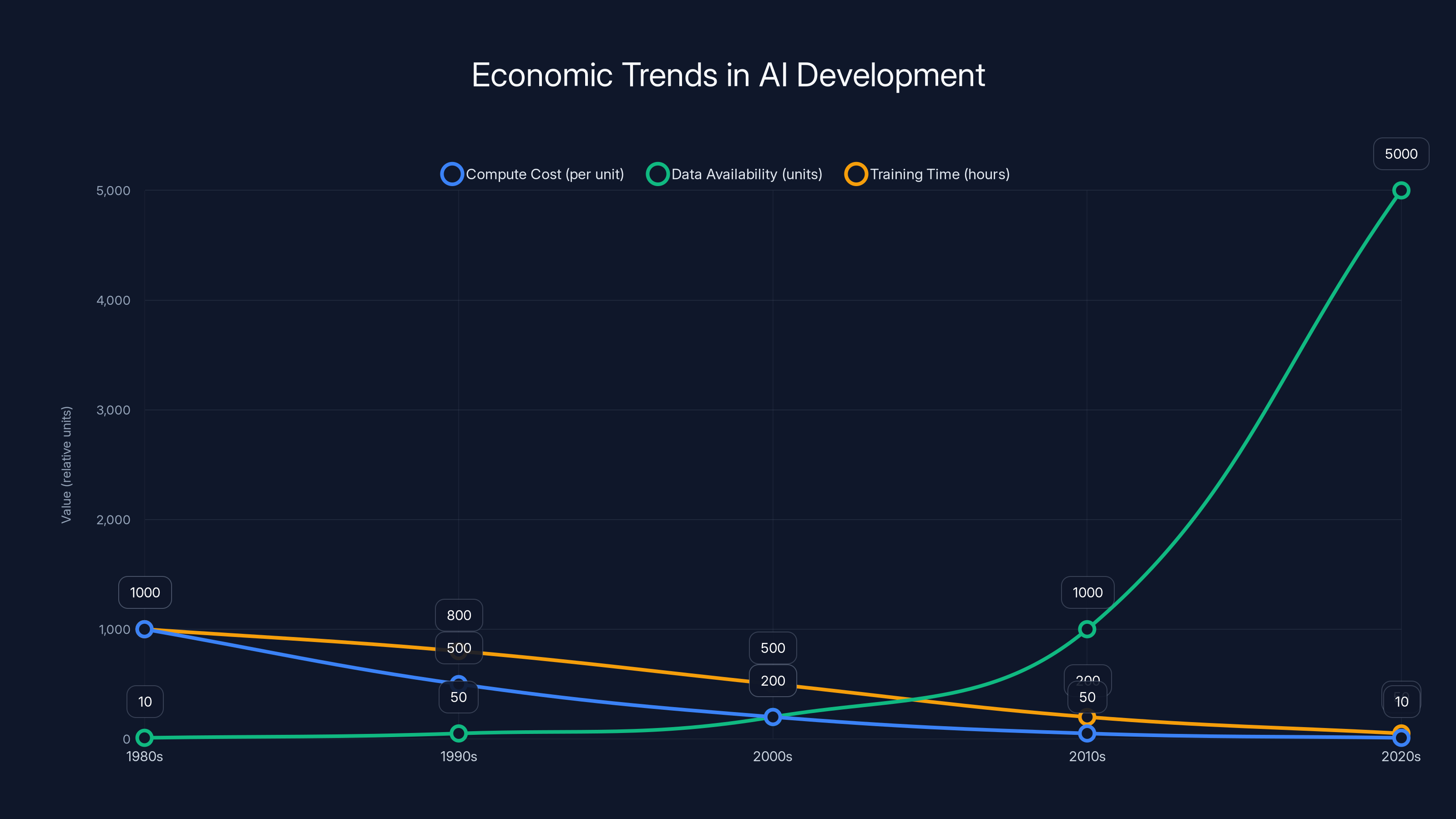

Estimated data shows how decreasing compute costs and increasing data availability have made AI techniques more practical over time, aligning with Kurzweil's economic perspective.

The "Magic Trick" Framework: Why We Keep Missing AI

Kurzweil's magic trick analogy is worth exploring because it explains a lot about why AI feels like it appeared overnight, when really it's been around for decades.

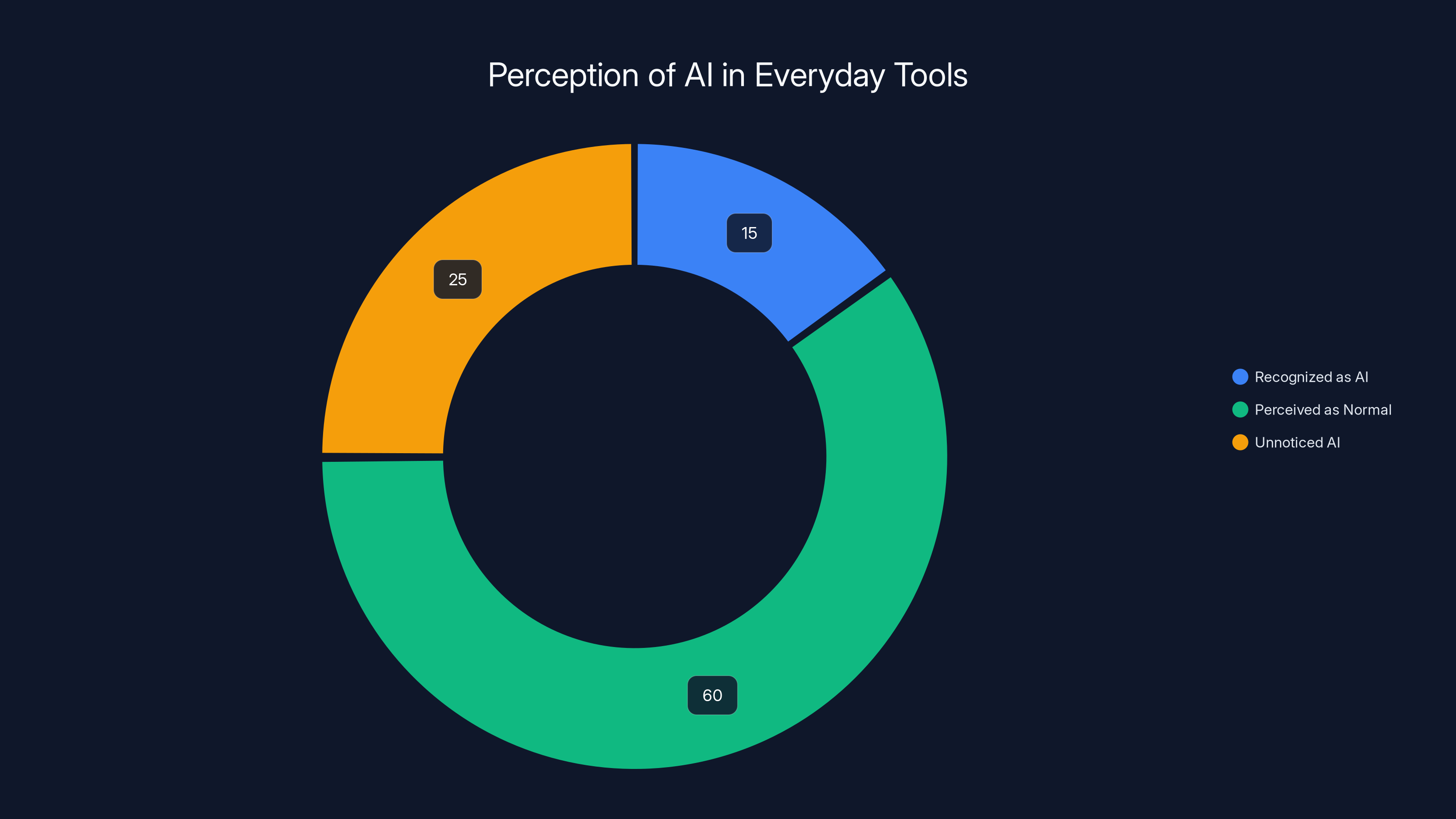

Think about everything your smartphone does. Face recognition? That's AI. The keyboard that predicts your next word? AI. The photos app that automatically organizes images by person? AI. The navigation app that predicts traffic patterns? AI. The recommendation algorithm that suggests what to watch? AI.

But when's the last time you thought of your phone as using artificial intelligence? Probably never. These features just work. They're normal. They're background. They're boring.

This is Kurzweil's insight in action. Every successful AI technology gets absorbed into the background until we forget it's AI at all. Then we move on to complaining about the next wave of AI that we don't understand yet.

Kurzweil also pointed out that people conflated "expert systems" with the entire field of AI. Expert systems were impressive (they could diagnose certain diseases or optimize certain financial decisions), but they were just one small corner of what AI could do. Most software would eventually become intelligent in subtle ways, he predicted. It just wouldn't be called AI anymore.

When you look at 2024-2025, this prediction holds up remarkably well. Every major software company is adding AI features to their products, often not as a separate "AI tool" but as built-in capabilities. Excel has AI. Gmail has AI. Google Maps has AI. Figma has AI. Much of it ships as "productivity improvements" or "smart suggestions" rather than as something users think of as AI.

The fact that we don't think of these as AI is actually proof that Kurzweil was right.

The Pattern Recognition Insight That Changed Everything

When asked to define artificial intelligence, Kurzweil gave a definition that feels obvious in hindsight but was actually quite radical in 1991:

"AI is the art of creating machines that perform functions we associate with human intelligence. Intelligence is the ability to use limited resources in an effective way using abstract reasoning, the ability to recognize patterns and the ability to solve problems in a limited time period."

But here's the key insight he added: "Probably 80% to 90% of our brains are devoted to pattern recognition and skill acquisition."

Let that sink in. Most of what we call "thinking" isn't reasoning in some grand philosophical sense. It's pattern matching. Recognizing that certain visual patterns mean a face. Recognizing that certain sound patterns mean a word. Recognizing that certain sequences of events have usually led to certain outcomes.

This observation is the foundation of modern machine learning. Every neural network, every transformer, every large language model is built on exactly this principle. They don't reason. They recognize patterns across enormous datasets. And that works because Kurzweil was right: pattern recognition is what intelligence mostly is.
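As a deliberately tiny illustration of "recognizing patterns across data," the Python sketch below counts which word tends to follow which (a bigram model) and predicts the most frequent follower, roughly the idea behind a basic next-word keyboard suggestion. It is a toy under stated assumptions: modern neural networks and transformers learn far richer patterns than raw pair counts, and the function names `train_bigrams` and `predict_next` are hypothetical, not from any library.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigrams(text: str) -> Dict[str, Counter]:
    """Count how often each word is immediately followed by each other word."""
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())  # .get avoids creating empty entries
    if not counts:
        return None
    return counts.most_common(1)[0][0]


if __name__ == "__main__":
    model = train_bigrams("the cat sat on the mat the cat ate the fish")
    print(predict_next(model, "the"))
    print(predict_next(model, "dog"))
```

On that tiny corpus, `predict_next(model, "the")` returns `"cat"` because "cat" follows "the" twice while "mat" and "fish" follow it once each; an unseen word returns `None`. The "intelligence" here is nothing but counted regularities, which is the point of the illustration.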

The implication of this insight is profound. If human intelligence is mostly pattern recognition, then building machines that recognize patterns well is building machines that are intelligent. Not in some narrow sense. In the way that matters.

This is why OpenAI's approach to scaling (throwing more data and more compute at pattern recognition) actually works. It's not brute force. It's hitting the actual mechanism that intelligence uses.

Kurzweil understood this in 1991, which is why he never doubted that machines could become intelligent. He just wanted to talk about the timeline and the path, not whether it was possible.

Kurzweil's predictions have significantly shaped AI development, particularly in pattern recognition and narrow AI systems. (Estimated data)

The Economics Argument: Why Timing Mattered More Than Breakthroughs

Here's what separates Kurzweil's thinking from most AI futurists: he was obsessed with economics, not breakthroughs.

When he looked at the 1980s and 1990s, he didn't see AI failure. He saw tools that worked but cost too much to deploy. Machine vision existed but was expensive. Speech recognition existed but had high error rates. Machine translation existed but was terrible.

But Kurzweil understood something crucial: computing follows predictable economic curves. Moore's Law and its cousins (storage, bandwidth, GPU performance) meant that expensive technology becomes cheap technology within a fairly predictable timeframe.

He articulated it clearly in the interview: "It was also clear to me that a gradual price/performance revolution of digital electronics would ultimately allow all of these types of information to become practical and cost-effective."

This is almost boring compared to the sci-fi framing of AI that dominates the culture. No neural net breakthroughs. No artificial consciousness. No quantum computing salvation. Just... economics. The cost of computation goes down. The availability of data goes up. The techniques that were impractical become practical. Everything else follows.
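To make the compounding concrete, here is a small Python sketch of an idealized cost curve. It assumes (an illustrative assumption, not a figure from the interview) that price/performance doubles at a fixed interval; real hardware prices do not follow a clean exponential. The function names are hypothetical.

```python
def improvement_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """How many times cheaper a fixed workload becomes after `years`,
    assuming price/performance doubles every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


def cost_after(initial_cost: float, years: float, doubling_period_years: float = 2.0) -> float:
    """Cost of the same workload after `years` on this idealized curve."""
    return initial_cost / improvement_factor(years, doubling_period_years)


if __name__ == "__main__":
    years = 2025 - 1991  # 34 years since the interview
    for period in (1.5, 2.0):
        factor = improvement_factor(years, period)
        print(f"doubling every {period} years: about {factor:,.0f}x cheaper")
    # A hypothetical $1,000,000 compute job in 1991, on the 2-year curve:
    print(f"${cost_after(1_000_000, years):,.2f}")
```

With a two-year doubling period, 34 years gives 2^17 = 131,072, so the hypothetical $1,000,000 job falls to roughly $7.63. Shortening the period to 18 months pushes the factor into the millions, which is why the assumed doubling period matters as much as the trend itself.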

Look at what actually happened with large language models. The core transformer architecture (the technical foundation of ChatGPT, Claude, and Gemini) was published in 2017. It wasn't a scientific revolution. It was a clever engineering approach to an existing problem.

But then compute got cheaper. Cloud infrastructure scaled. Companies could afford to train models on huge portions of the public internet. The techniques that existed in 2017 became practical at scale in 2022-2023. Suddenly everyone was shocked by how intelligent the models were.

Kurzweil would have called this obvious. The only surprise was that it took until 2023, not 2015. Everything else was just economics playing out.

What Kurzweil Got Right About Narrow AI Systems

In the 1991 interview, Kurzweil was asked where AI stood in its evolution. His answer was remarkably clear-eyed about the limitations:

"We are creating systems that can emulate human intelligence within a narrow domain. They diagnose a limited domain of illnesses, play a game like chess, make a type of financial decision, guide a missile toward a building."

He then identified the critical limitation: "These systems become idiots again when they go outside their area of expertise."

This is still true in 2024, with caveats. Early versions of ChatGPT were brilliant at language but had no access to real-time information, couldn't run code, and couldn't see images; those abilities arrived later as added components such as web browsing, code execution, and vision. Specialist medical AI systems are incredible within their domain but fail on edge cases. Recommendation algorithms work great for movies but terribly for choosing a life partner.

The depth of expertise tends to trade off against the breadth of application. As you make a system competent at more things, it often gets weaker at any one thing.

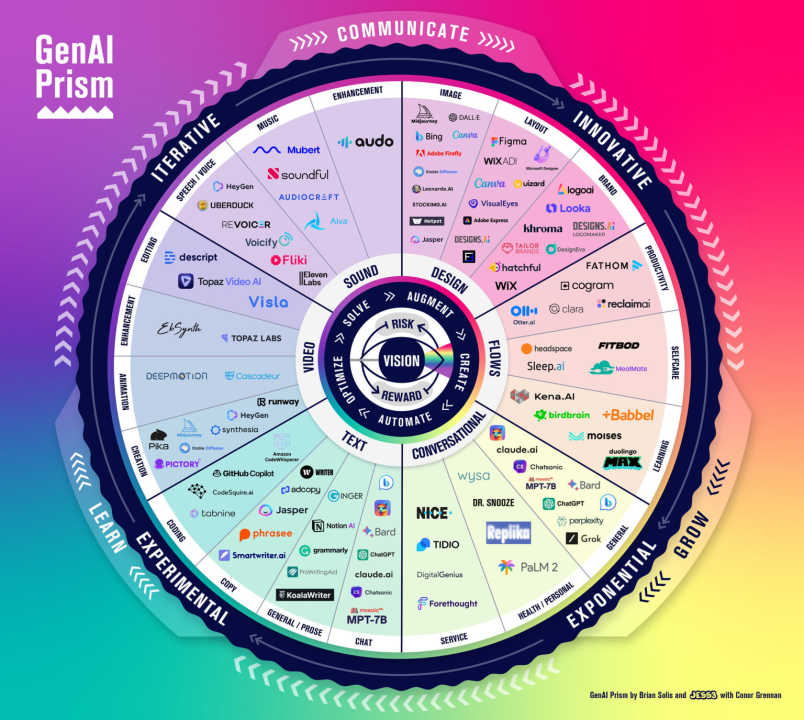

Kurzweil predicted that the path forward wasn't general intelligence. It was combining different narrow AI systems. "As AI matures, we're trying to broaden the machine's areas of expertise by combining different AI systems such as speech recognition, natural language understanding and the ability to make decisions within a certain expert domain."

This is broadly what's happening with modern AI products. ChatGPT combines language understanding, code generation, image analysis, and reasoning, partly within one model and partly through attached tools such as code execution and web browsing. Google Gemini, Claude, and other assistants follow a similar pattern of a core model plus specialized components.

But here's where Kurzweil's prediction gets interesting: he said combining narrow systems would broaden expertise. The implication is that you don't get general intelligence by building a universal system. You get it by stitching together many specialized systems. That's actually closer to how human brains work (different regions specialize in different functions) and it's increasingly how advanced AI works too.
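Kurzweil's idea of "combining different AI systems" can be sketched as a router that sends each request to a narrow expert. The Python below is a toy under loud assumptions: the experts, the keyword-based `classify` function, and the `EXPERTS` table are all hypothetical, and this is not how ChatGPT or any specific product is built.

```python
from typing import Callable, Dict, Optional

Handler = Callable[[str], str]


def math_expert(task: str) -> str:
    """Narrow system: only knows how to add two numbers written as 'a + b'."""
    left, _, right = task.partition("+")
    return str(float(left) + float(right))


def text_expert(task: str) -> str:
    """Narrow system: only knows how to count the words after a 'count:' prefix."""
    return str(len(task[len("count:"):].split()))


def classify(task: str) -> str:
    """Toy dispatcher: decide which domain a request belongs to from surface features."""
    if task.startswith("count:"):
        return "text"
    if "+" in task:
        return "math"
    return "unknown"


EXPERTS: Dict[str, Handler] = {"math": math_expert, "text": text_expert}


def route(task: str) -> Optional[str]:
    """Send a task to the matching narrow expert; None means no expert applies."""
    expert = EXPERTS.get(classify(task))
    return expert(task) if expert else None


if __name__ == "__main__":
    print(route("2 + 3"))
    print(route("count: the quick brown fox"))
    print(route("write a poem"))
```

Each expert is useful only inside its own domain, and the combined system is broader than any single part. The sketch also shows Kurzweil's "idiots outside their area of expertise" problem: `route("cat + dog")` is misclassified as math and `math_expert` raises a `ValueError`, because nothing in the system understands the request beyond its surface pattern.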


The Memory and Speed Advantage: Kurzweil's Overlooked Insight

One of the most significant parts of the interview gets overlooked because it sounds so obvious now:

"Once a computer can emulate essential human functionality, it can then combine that with the enormous superiority it already displays in its ability to remember billions or trillions of facts with extreme precision, to access that information at extremely high speed and to perform functions over and over again very quickly."

This is the real AI advantage. Not magical reasoning. Not superhuman intuition. Boring, mechanical superiority in memory and speed.

Human working memory holds only a handful of items (the classic estimate is about 7; some later research puts it closer to 4). A computer can hold billions. Humans retrieve memories in seconds. Computers retrieve data in microseconds. Humans get tired. Computers don't.

When you combine human-level pattern recognition with machine-level memory and speed, you get something that looks superhuman very quickly. Not because the machine is smarter. Because the machine has advantages in the dimensions that matter for specific tasks.

This explains why AI is good at some things and terrible at others. It explains why ChatGPT can recite long passages of Shakespeare but struggles with common sense reasoning. It explains why AI excels at data analysis but can fail at understanding metaphor (a task that requires common sense, not data).

Kurzweil understood that the AI advantage wasn't intelligence in the abstract. It was intelligence plus memory plus speed in specific domains. That's still the case more than three decades later.

The Knowledge Accumulation Problem: Mastering Everything

Kurzweil then pointed out something that feels prophetic in the age of ChatGPT:

"If it can read a book, there's nothing to stop it from reading every book that's ever been published and all magazines and technical journals and from mastering all human knowledge."

This is largely what happened. Large language models trained on the internet have effectively read a vast share of what's out there: books, academic papers, articles, Reddit threads, technical documentation, and much else that humans have written and made available digitally.

Because machines don't face the same time constraints humans do, they can digest all that information in days or weeks. More than a human lifetime of reading fits into a few weeks of machine training.

The implication is staggering: once a machine reaches human-level capability in any domain, it immediately surpasses it by absorbing all available knowledge in that domain. A doctor AI doesn't just learn how to diagnose diseases the way a human doctor does. It learns from every medical journal ever published. It learns from every case study. Every drug interaction. Every rare condition. Every combination that humans have only seen a handful of times.

This is why specialized AI systems can become superhuman so quickly. They don't have the learning constraints that humans have. Once they cross the threshold of human capability, they keep accelerating.

Estimated data shows that 60% of AI features in everyday tools are perceived as 'normal', highlighting the seamless integration of AI into daily life.

The Consciousness Question: Kurzweil's Honest Answer

The interview included a philosophically loaded question: "Could a machine ever be conscious?"

Kurzweil didn't give an easy answer. He didn't say yes or no. He said: "The key is the issue of consciousness and what it means to be a living, conscious entity and..."

And then the article cuts off. We never get his full answer. But what's telling is that he didn't dismiss the question or claim machines would never be conscious. He treated it as a genuine open question that hinged on defining what consciousness actually means.

This matters because more than three decades later, we still don't have a satisfying answer. People argue about whether ChatGPT is "really" intelligent or just "pattern matching." But here's the thing: humans are also pattern matching. We're just doing it at a different scale and speed.

Kurzweil understood that consciousness might not be some special sauce that only biological systems can have. It might be a property that emerges when a system gets complex enough, regardless of substrate. A pattern-matching machine that gets complex enough might develop properties that look indistinguishable from consciousness.

Or it might not. We genuinely don't know. But Kurzweil was right to treat it as an open question rather than a solved problem.

What Kurzweil Underestimated

Kurzweil's predictions were remarkably accurate, but there are a few things he didn't fully anticipate or underestimated.

The Speed of Language AI: Kurzweil expected narrow domain AI to gradually expand through combinations of different systems. He probably didn't expect that a single general-purpose language model would become so versatile so quickly. ChatGPT reportedly went from launch to 100 million users in about 2 months. Nobody really anticipated that trajectory.

The Cultural Impact: Kurzweil understood the technical trajectory but may have underestimated how disorienting and challenging the cultural impact would be. When machine vision became cheap, it didn't spark a global debate about the nature of intelligence. When ChatGPT became cheap, it did. The technology feels less alien, and the cultural response is more intense.

The Scaling Limitations: Kurzweil was confident that continued Moore's Law improvements would keep enabling new capabilities. But there are signs that we're hitting architectural limits. Scaling language models in the traditional way is becoming less efficient. We might need new breakthroughs, not just more of the same.

The Alignment Problem: Kurzweil briefly mentioned that machines exceeding human intelligence could be dangerous or have "difficult ramifications." He underestimated how hard the alignment problem would turn out to be. Making sure powerful AI systems do what we actually want is turning out to be much harder than making them capable.

Kurzweil estimated that 80% to 90% of our brain is devoted to pattern recognition, highlighting its critical role in human intelligence. (Estimated data)

The Broader Pattern: From "AI" to Normal

Zooming back out, what's remarkable about Kurzweil's 1991 prediction is that he got the overall pattern right: AI succeeds, then we stop calling it AI.

This happened with:

- Machine vision (1991 to 2010s): Called AI, then called "computer vision," then just "a feature"

- Speech recognition (1991 to 2010s): Called AI, then called "speech-to-text," then just "how phones work"

- Recommendation systems (1990s to 2010s): Called AI, then called "algorithms," then just expected behavior

- Spam filtering (1990s to 2010s): Called AI, then called "machine learning," then just "how email works"

- Search ranking (1990s to 2010s): Called AI, then called "ranking algorithms," then just "search"

- Chatbots (2010s to 2020s): Called AI, then... well, still called AI because it's new

But in five years, ChatGPT-like capabilities will probably be so normal that we'll stop thinking of them as AI. They'll be like voice assistants or search. Just how software works.

Kurzweil's insight was that this cycle repeats forever. Each successful AI technology gets absorbed into the background. Then we move on to complaining about the next wave of AI that we don't understand yet.

We're living through that right now. In 2023, AI was shocking and novel. In 2024, it's becoming normal. In 2025, it'll be expected. By 2030, it'll be invisible.

The Economics of AI Adoption

Kurzweil's emphasis on economics was prescient because it predicted the actual adoption curve we've seen with modern AI.

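Compute cost has followed a predictable path: GPUs built for gaming were repurposed for general computation, then deployed at enormous scale in cloud data centers, and the price of each unit of computation kept falling. The cost of a given amount of training followed a similar arc, even as total budgets for frontier models grew. Data became abundant. Infrastructure (cloud providers) matured.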

The result is that AI capabilities that were expensive luxuries in 2015 became commodity services by 2023. API access to state-of-the-art language models costs pennies per thousand tokens. This makes deployment at scale economically viable for the first time.
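A quick back-of-the-envelope calculation shows why per-token pricing makes deployment viable. The Python sketch below assumes a flat, hypothetical price of $0.002 per 1,000 tokens and 1,500 tokens per request; neither number is any provider's actual price list, and the function names are illustrative.

```python
def workload_cost(requests: int, tokens_per_request: int, price_per_1k_tokens: float) -> float:
    """Total cost in dollars for a batch of requests at a flat per-token price."""
    total_tokens = requests * tokens_per_request
    return total_tokens / 1_000 * price_per_1k_tokens


def cost_per_request(tokens_per_request: int, price_per_1k_tokens: float) -> float:
    """Cost in dollars of a single request at the same flat price."""
    return workload_cost(1, tokens_per_request, price_per_1k_tokens)


if __name__ == "__main__":
    HYPOTHETICAL_PRICE = 0.002  # dollars per 1,000 tokens (illustrative only)
    print(f"per request: ${cost_per_request(1_500, HYPOTHETICAL_PRICE):.4f}")
    print(f"one million requests: ${workload_cost(1_000_000, 1_500, HYPOTHETICAL_PRICE):,.2f}")
```

At that assumed price, a 1,500-token request costs about a third of a cent ($0.003), and a million such requests cost about $3,000. Real pricing usually differs for input and output tokens, so treat this only as a rough check on whether a use case is economically viable.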

This is why we suddenly see AI everywhere. Not because of a breakthrough. Because the cost curve finally aligned with the capability curve. It's not magic. It's just economics.

The same principle applies to the next wave of AI capabilities. The breakthroughs in multimodal learning (combining vision, language, audio) already exist. They're just expensive to train and deploy. As costs drop, adoption will accelerate. This will seem sudden. It's not. It's just economics playing out.

Where AI Goes Next: The Kurzweil Framework

If Kurzweil's framework holds (and it has so far), where does AI go next?

Based on his logic:

More narrow systems will become better: We'll see AI that's superhuman in specific domains: medical diagnosis, financial analysis, code generation, design, scientific research. Each will be incredibly narrow but incredibly capable.

These will be combined into broader systems: We'll see platforms that combine multiple specialized AI systems to handle more complex tasks. Your personal AI assistant might combine language understanding, visual processing, reasoning, memory, and domain expertise to be genuinely helpful.

Economics will determine speed: How fast this happens depends on how quickly compute costs drop and infrastructure matures. If progress continues at current rates, we're looking at another 5-10 year acceleration before the next wave of capabilities.

The consciousness question will remain open: We still won't know whether artificial systems become conscious as they get more capable. This will remain a philosophical puzzle, not a technical one.

Everything will seem normal once it works: In 2030, the capabilities we find shocking in 2025 will seem ordinary. AI will be in the background. We'll be shocked about something new.

This is Kurzweil's real prediction: not that AI will be good at specific things (that's obvious), but that progress will follow a predictable economic trajectory and that our perception of what's "really" AI will keep shifting as technology matures.

The Missing Piece: Human Purpose

The original question that started the 1991 interview was provocative: "What will people do in the year 2050, given the enormous intellectual power computers are likely to have?"

Kurzweil didn't give a simple answer in the available excerpt. But it's the most important question. Because technical capability and human purpose are different things.

Having intelligent machines doesn't automatically tell you what humans should do with their time. It doesn't answer questions about meaning, purpose, or what constitutes a good life.

This is actually where Kurzweil's economic framing breaks down. You can predict when a technology becomes cheap. You can't predict what humans will choose to do with it. You can't predict what they should do.

Some humans will use AI to become more productive. Others will use it to become lazier. Some will build businesses. Others will struggle with unemployment in affected sectors. Some will find new meaning. Others will lose it.

The technology is deterministic. Human response is not.

This is the gap between technical prediction (what Kurzweil was great at) and human prediction (which remains hard). We can predict the capability. We can't predict how humans will respond to it.

Why Kurzweil's Framework Still Applies

There's a meta-observation here. Kurzweil's 1991 framework for thinking about AI has remained accurate for more than three decades through multiple cycles of hype and disappointment. Why?

Because he wasn't predicting specific breakthroughs. He was predicting economic forces. Moore's Law. The decreasing cost of computation. The expanding role of digital technology in all domains.

These forces are remarkably robust. They've driven tech progress for 50+ years. They're likely to drive it for 50+ more (with eventual physical limits, but that's a while away).

Kurzweil understood that AI isn't special. It's just the latest application of these economic forces. Digitize something, apply computation, iterate rapidly, and you get exponential improvement in that domain.

As long as computation is getting cheaper and faster, this process continues. As long as data is abundant, this process accelerates. As long as investment flows toward it, this process persists.

There's nothing mystical here. There's no secret sauce. It's just that every time you apply computation to a new domain, you get predictable improvements at predictable timescales.

Kurzweil understood the pattern. That's why predictions he made more than three decades ago still hold.

The Lesson for 2025 and Beyond

What should we take from Kurzweil's 1991 insights as we navigate 2025?

Focus on economics, not hype: When evaluating AI investments or strategies, ask about cost curves, not technical miracles. What's the economic trajectory? Will this get cheaper or more expensive? That determines whether it matters.

Successful AI gets invisible: The AI that matters isn't the one you're talking about. It's the one that becomes so useful that you stop thinking about it as AI. Watch for the quiet wins, not the flashy ones.

Narrow dominates: General-purpose AI is less likely than extremely good narrow AI. Better to build the world's best invoice recognition system than a mediocre everything system.

Pattern recognition is the game: AI is good at finding patterns. Use it for tasks where pattern recognition helps. Don't use it for tasks that require common sense or novel reasoning (it's terrible at those).

The consciousness question is still open: Don't get caught in debates about whether AI is "really" intelligent. Focus on whether it's useful. The question of machine consciousness is interesting but doesn't affect whether AI works.

Human purpose is the missing piece: Technical capability doesn't determine human meaning. That remains your choice. Make sure you're thinking about what you want, not just what's possible.

Kurzweil's 1991 framework was powerful because it separated the technical trajectory (predictable) from the human response (unpredictable). We can predict when AI gets good at something. We can't predict what humans will want to do about it.

FAQ

What exactly did Ray Kurzweil predict in 1991?

Kurzweil made several key predictions in his 1991 Computerworld interview. He predicted that AI doesn't fail—rather, successful AI technologies become invisible and stop being called AI (like machine vision and speech recognition). He stated that pattern recognition, not reasoning, constitutes 80-90% of human intelligence, making it the key to building intelligent machines. He predicted that economic forces (cheaper computation, more available data) would drive AI progress more than scientific breakthroughs. He also emphasized that AI systems would remain narrow and specialized for the foreseeable future, becoming genuinely capable only when combined with other specialized systems. These predictions have proven remarkably accurate through 2025.

How accurate were Kurzweil's predictions compared to what actually happened?

Kurzweil's accuracy is striking across multiple dimensions. His observation about AI succeeding then becoming invisible has played out exactly: image recognition, speech-to-text, recommendation systems, and spam filtering all followed this pattern. His emphasis on pattern recognition as the core of intelligence correctly predicted how modern machine learning actually works. His focus on economics over technology proved right: the AI boom of 2023-2025 happened not because of new breakthroughs, but because compute costs finally aligned with capability. His prediction about narrow AI systems dominating remains true: specialized AI (medical diagnosis, code generation, image synthesis) is far more capable than attempts at general AI. The main area he underestimated was the speed and cultural impact of large language models like ChatGPT, which scaled faster than historical precedent would suggest.

Why did Kurzweil focus on economics instead of technology?

Kurzweil recognized that computing follows predictable economic curves governed by trends like Moore's Law (the number of transistors on a chip doubling roughly every two years, often paraphrased as computing power doubling every 18-24 months). He understood that AI breakthroughs require not just new algorithms or architectures, but the ability to deploy them at scale. An AI algorithm that requires a million dollars in compute to run once isn't useful commercially, even if it works. But that same algorithm becomes revolutionary when costs drop and it can run for cents. By focusing on cost curves rather than technical breakthroughs, Kurzweil identified the true driver of progress. This proved prescient: the transformer architecture (the foundation of ChatGPT) was published in 2017 but didn't become revolutionary until 2022, when sufficient compute became available at reasonable cost. Economics determined timing more than innovation.

What did Kurzweil get wrong about AI?

Kurzweil's framework was remarkably accurate, but a few predictions missed or were underestimated. He probably didn't anticipate how quickly general-purpose language models would scale and become useful: ChatGPT's growth trajectory (reportedly 100 million users in 2 months) exceeded typical adoption patterns he might have predicted. He briefly mentioned potential risks from superintelligent AI but appears to have underestimated how hard the alignment problem (ensuring AI does what we want) would prove to be in practice. He also seems to have slightly underestimated the cultural disruption of AI: when machine vision became ubiquitous, it didn't spark major philosophical debates, but ChatGPT did. Finally, his confidence in continuing Moore's Law progress may face physical limits sooner than expected, with some machine learning researchers noting efficiency gains are slowing in traditional scaling approaches.

Is Kurzweil's pattern recognition insight still valid with modern AI?

Largely, yes. Modern AI, especially large language models, supports Kurzweil's insight at scale. ChatGPT and similar models are fundamentally pattern-matching systems trained on human text. They don't reason in a deliberative sense; they recognize statistical patterns in how words relate to each other and generate continuations based on those patterns. The insight that human intelligence is mostly pattern recognition (with memory and speed for narrow domains) explains both why modern AI works so well and where it struggles. It works brilliantly for tasks that are fundamentally pattern recognition (language, images, music). It struggles with tasks requiring common sense, novel reasoning, or physical intuition, domains where pattern matching alone isn't sufficient. This fits Kurzweil's framework closely.

What does Kurzweil's work suggest about future AI progress?

Based on Kurzweil's framework, future progress will follow predictable patterns: narrow AI systems will become increasingly superhuman in specific domains (medical diagnosis, scientific research, design), while general-purpose AI remains elusive. Economic factors will determine speed—as compute costs drop and training becomes cheaper, capabilities that seem cutting-edge in 2025 will seem ordinary by 2030. We'll likely see more hybrid systems combining multiple specialized AI tools rather than single unified "general" AI. The consciousness question will remain philosophically open but technically less relevant. Most importantly, successful AI will increasingly become invisible—people will stop calling it AI and just call it a feature or normal software. Disruption will come not from sudden breakthroughs but from familiar technologies gradually becoming cheap and ubiquitous enough that they transform industries.

Why do people still debate AI consciousness when Kurzweil avoided the question?

Kurzweil avoided offering definitive answers about machine consciousness because the question depends on how you define consciousness. If consciousness requires biological neurons, AI consciousness is impossible. If it emerges from sufficient complexity and self-awareness, AI could perhaps develop it. If it is simply subjective experience, we have no way to verify it in any system (technically, we can't even prove other humans are conscious). Kurzweil treated it as a genuine open question rather than a settled issue, which still seems wise. AI's technical capabilities will keep improving whether or not these systems are "really" conscious, so focusing on whether they are useful or dangerous is more practical than debating their inner experience.

How should businesses apply Kurzweil's framework today?

Businesses can apply Kurzweil's economic framework in several ways. First, focus on domains where narrow AI dramatically outperforms humans; these are the investments most likely to mature into lasting competitive advantages. Second, adopt before competitors do, knowing that successful AI will eventually become commonplace. Third, evaluate AI investments by cost trajectory and scalability, not just current capability: technologies that are expensive today may be commodities in three to five years. Fourth, prepare for the invisibility problem: the AI that transforms your business probably won't be called "AI" in five years; it will just be how you operate. Finally, remember that human purpose still matters. Having an intelligent system isn't the same as knowing what to do with it, and the companies that win will combine AI capability with a clear strategic vision.

Looking Back at 1991 from 2025: What Time Has Taught Us

In some ways, Kurzweil's 1991 interview feels like a time capsule that explains exactly where we are now. The specific technologies he mentioned (machine vision, speech recognition) are now ubiquitous. The economic framework he used (cheaper computation drives adoption) describes how we got ChatGPT. His emphasis on pattern recognition as central to intelligence closely anticipates how modern deep learning actually works.

But in other ways, his 1991 perspective feels quaint. He was imagining a world where intelligent machines would gradually automate specific domains one at a time. Instead, we got general-purpose language models that touch dozens of domains simultaneously. He was thinking in terms of expert systems and specialized applications. Instead, we got systems trained on vast swaths of the internet that can do moderately well at almost anything.

This isn't a failure of his framework. It's the framework working as predicted, just faster and in stranger ways than expected. The economics of cloud computing and GPU scaling improved faster than the Kurzweil of 1991 might have imagined. The availability of digital training data grew exponentially. And architectural innovations such as the transformer, together with the empirical scaling laws that guided how big to make models, turned out to be more powerful than expected.

So what's the real takeaway? Kurzweil was right about the underlying pattern: progress would follow economic forces, not technical miracles. AI would work by recognizing patterns. Successful AI would become invisible. The limiting factor would be when capabilities became cheap and practical, not whether they were possible.

But his record also shows that even the best predictive frameworks can't account for exactly how fast, or in what unexpected ways, progress will accelerate. The future rarely arrives on schedule, and when it does arrive, it's usually weirder than we predicted.

For anyone trying to navigate AI in 2025, Kurzweil's lesson is powerful: focus on fundamentals (cost curves, capability distribution, pattern recognition as the core mechanism) rather than hype cycles. Build on the assumption that success becomes boring faster than you expect. Invest in narrow excellence that can combine with other systems rather than in general mediocrity. And remember that technical capability and human purpose remain separate questions.

Thirty-five years after his Computerworld interview, those insights still shape how many of the sharpest people in technology think about AI. That isn't luck. Kurzweil understood something deeper than which technology would win: he understood the pattern of how technology transforms society. That pattern is as relevant in 2025 as it was in 1991, and it will probably still be relevant in 2060.

Key Takeaways

- Kurzweil correctly predicted that successful AI gets rebranded as normal software once it works, which explains why image recognition and speech-to-text are rarely called AI anymore

- His insight that 80-90% of human intelligence is pattern recognition closely matches how modern machine learning actually functions at scale

- Economics (falling compute costs) drives AI progress more than breakthroughs, explaining why transformers from 2017 became revolutionary in 2022-2023

- Narrow AI systems remain more capable than general AI, validating 35 years of predictions about specialization outperforming generalization

- The consciousness question remains philosophically open but matters little in practice: what counts is whether AI is useful (or dangerous), not whether it is truly conscious

![Ray Kurzweil's 1991 AI Predictions: Why They're Eerily Accurate Today [2025]](https://tryrunable.com/blog/ray-kurzweil-s-1991-ai-predictions-why-they-re-eerily-accura/image-1-1770237533895.jpg)