Introduction: The Multi-Agent AI Revolution Has Arrived

For years, we've been stuck in a world where your phone picks one AI assistant and that's your lot. Ask Bixby something technical, and you're out of luck. Try Google Assistant for web search, and it's not always what you need. Samsung just changed the game, and honestly, it's about time somebody did.

The company announced that Galaxy S26 users will soon be able to summon Perplexity with a simple voice command: "Hey, Plex." This isn't just dropping another app onto your phone. This is Samsung fundamentally reimagining how AI works on mobile devices by embracing what industry experts call a multi-agent ecosystem.

Here's what makes this genuinely different: Perplexity won't be some sandboxed, read-only version of the web search app. The AI will have direct access to Samsung Notes, Clock, Gallery, Reminder, and Calendar. That means you're not just getting better search results—you're getting an AI that can actually integrate with your phone's core functionality. It understands your schedule, your photos, your notes, your reminders. That's a fundamentally different experience than what we've had before.

But this isn't just about Perplexity. Samsung is doing something bolder. They're opening the door for any AI agent to integrate at the OS level. The company has already built partnerships with other AI providers, and they're signaling that this multi-agent approach is the future of Galaxy AI. Think about that for a second. Your phone becomes an orchestrator of several assistants instead of being locked into one.

The implications are massive. Users develop genuine preferences for specific AI tools. Some people live in Claude. Others swear by ChatGPT. Some prefer Perplexity for research. So why force everyone into the same box? Samsung figured out the answer: you don't. You give users choice.

This matters because the AI wars aren't really about creating the one perfect AI anymore. They're about integration, accessibility, and convenience. The AI that's most useful isn't always the one with the best capabilities—it's the one you can access fastest, with the most context about what you're trying to do. Samsung just shifted the entire competitive landscape.

TL;DR

- Multi-Agent Ecosystem: Samsung Galaxy AI is adding Perplexity alongside Bixby and Gemini, letting users pick the best AI for each task

- Deep OS Integration: Perplexity gains access to Samsung's native apps including Notes, Calendar, Gallery, Clock, and Reminders

- Voice-Activated AI: Users can activate Perplexity by saying "Hey, Plex" just like existing voice assistants

- User Preference Centered: Samsung recognizes that people develop strong attachments to specific AI tools and is betting differentiation comes from choice, not lock-in

- Competitive Edge: This approach directly counters Apple's Siri-centric strategy and Google's Gemini dominance, positioning Samsung as the most flexible mobile AI platform

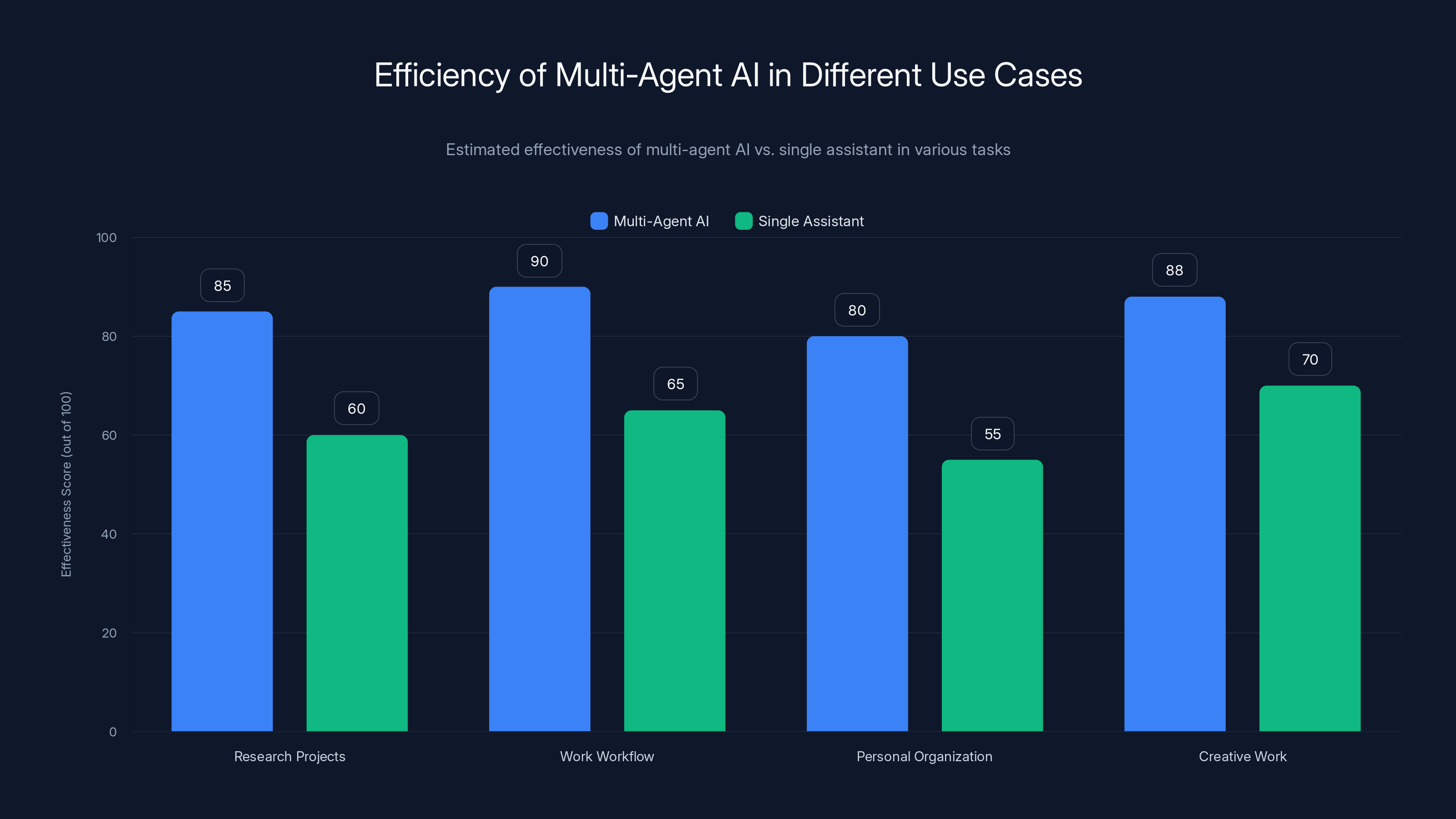

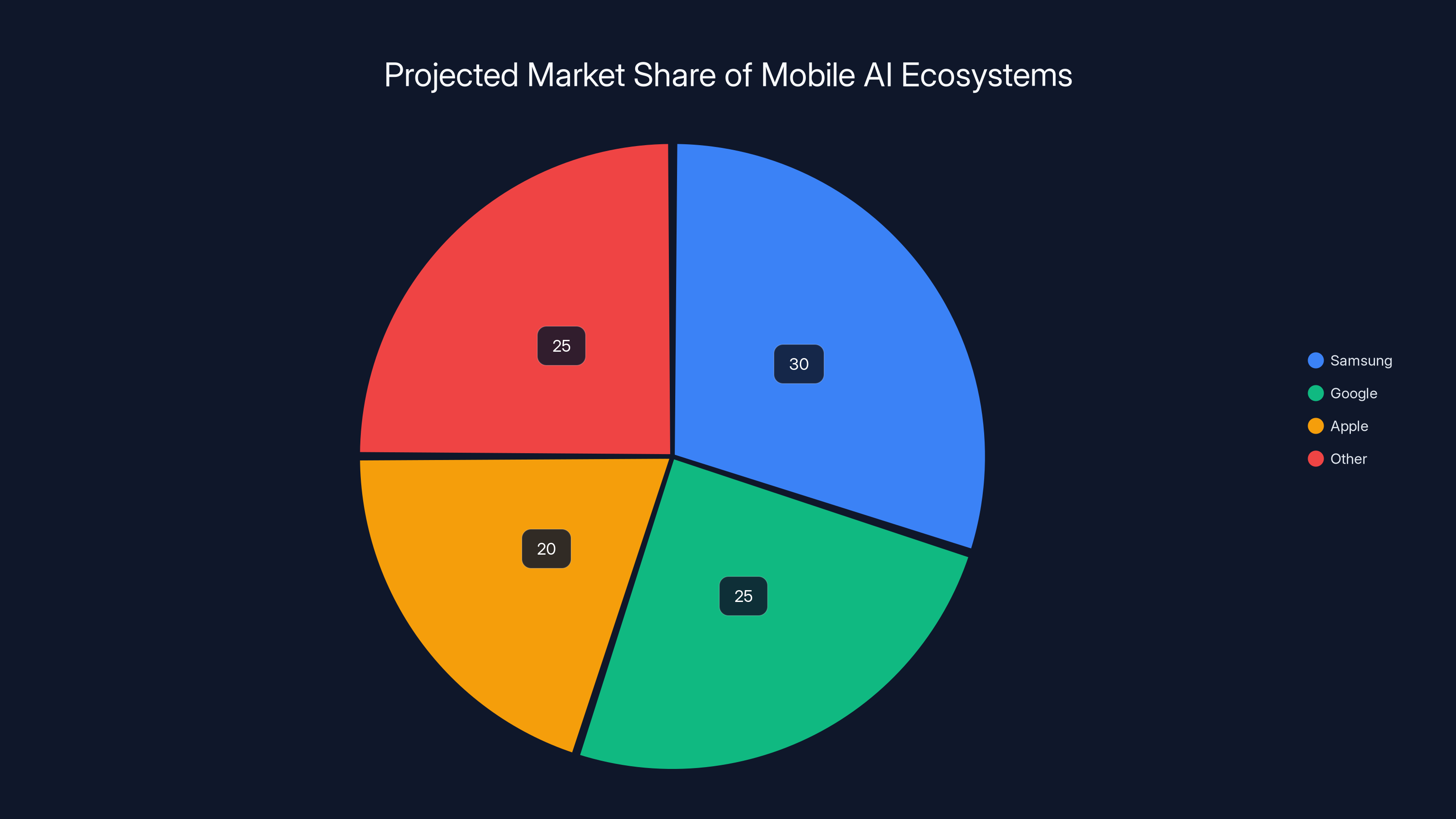

Multi-agent AI significantly enhances task efficiency across various real-world use cases, outperforming single assistants by 20-30%. Estimated data.

What Is a Multi-Agent AI Ecosystem?

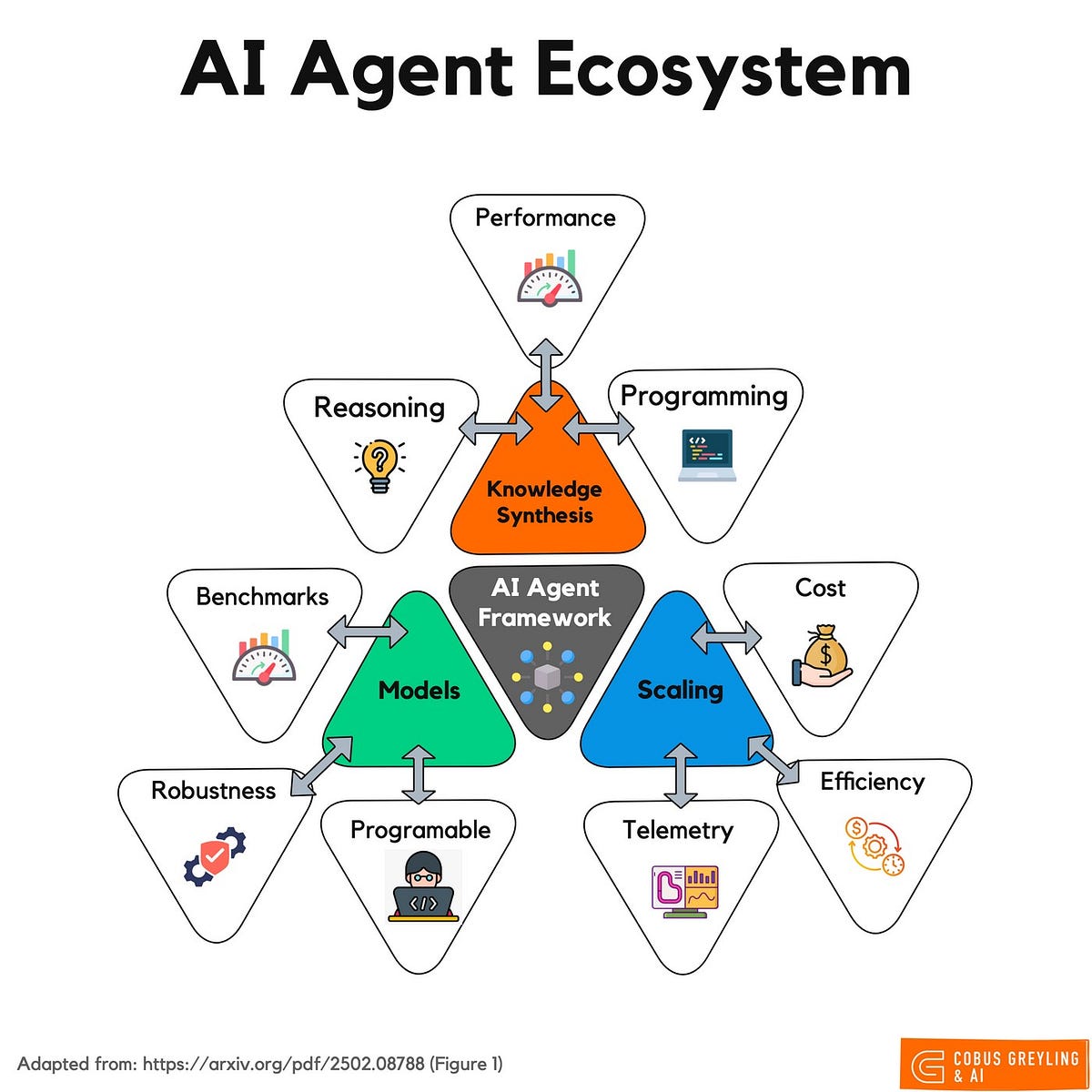

Let's get specific about terminology because this concept shapes everything Samsung is doing. A multi-agent AI ecosystem isn't something tech companies invented last week. It's a principle borrowed from distributed systems design: different specialized tools working together, each strong in their domain.

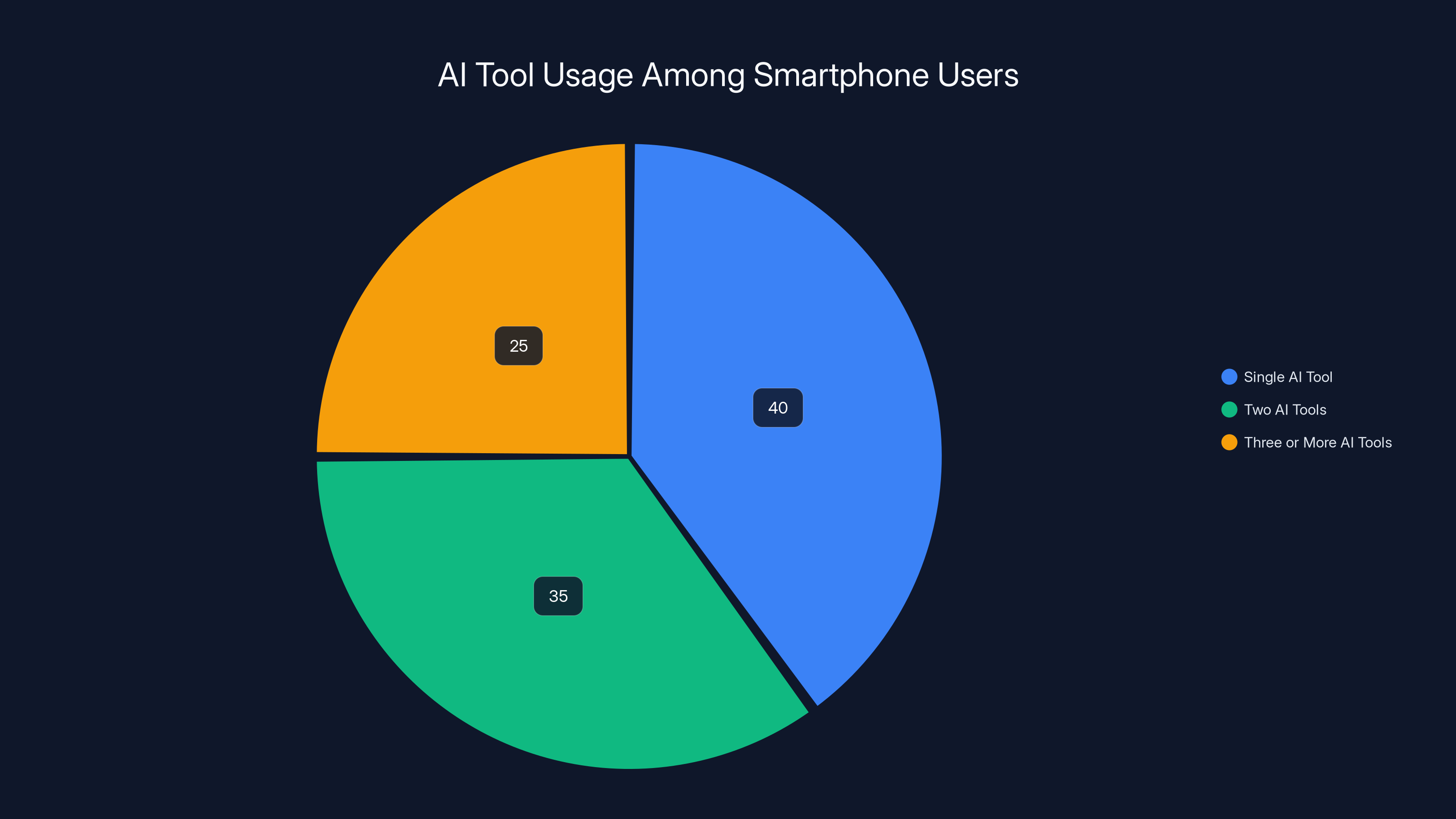

Think about your workflow right now. When you need research, do you Google? When you need writing help, do you open ChatGPT? When you need to brainstorm, do you switch to Claude? Most people do exactly this: they pick different tools for different tasks because they know each has strengths and weaknesses.

The old phone design ignored this reality. Your phone picked one default assistant (or maybe two, awkwardly), and that was supposed to handle everything. Write emails? Voice commands? Web research? Math problems? Photo organization? All delegated to a single AI whose training makes it generalist instead of specialist.

A multi-agent ecosystem says: what if your phone could be smart about which tool to use? What if you could pick? What if different AIs could coexist and work together?

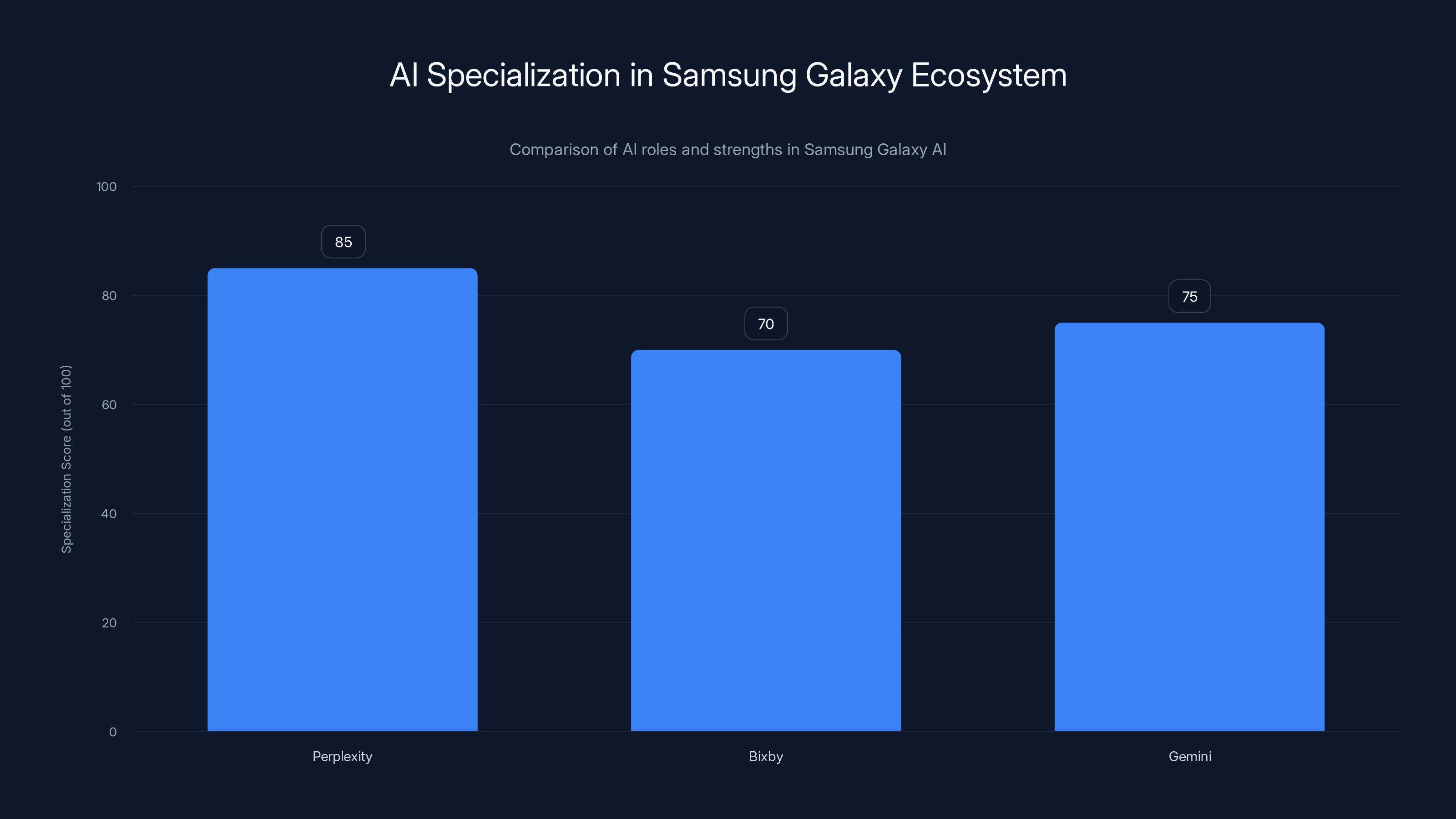

For Samsung, this means Perplexity handles research and synthesis. Bixby handles device control and Samsung-specific features. Gemini handles Google ecosystem integration. Instead of competing, they specialize. Instead of one assistant trying to be everything, you get tools optimized for specific purposes.

This is actually how enterprise AI works already. Companies use specialized AI systems: one for customer service, one for internal documentation, one for financial forecasting. They don't use a single generalist system for everything. Consumer phones are just now catching up to enterprise best practices.

Why Samsung Chose Perplexity Over Other AI Options

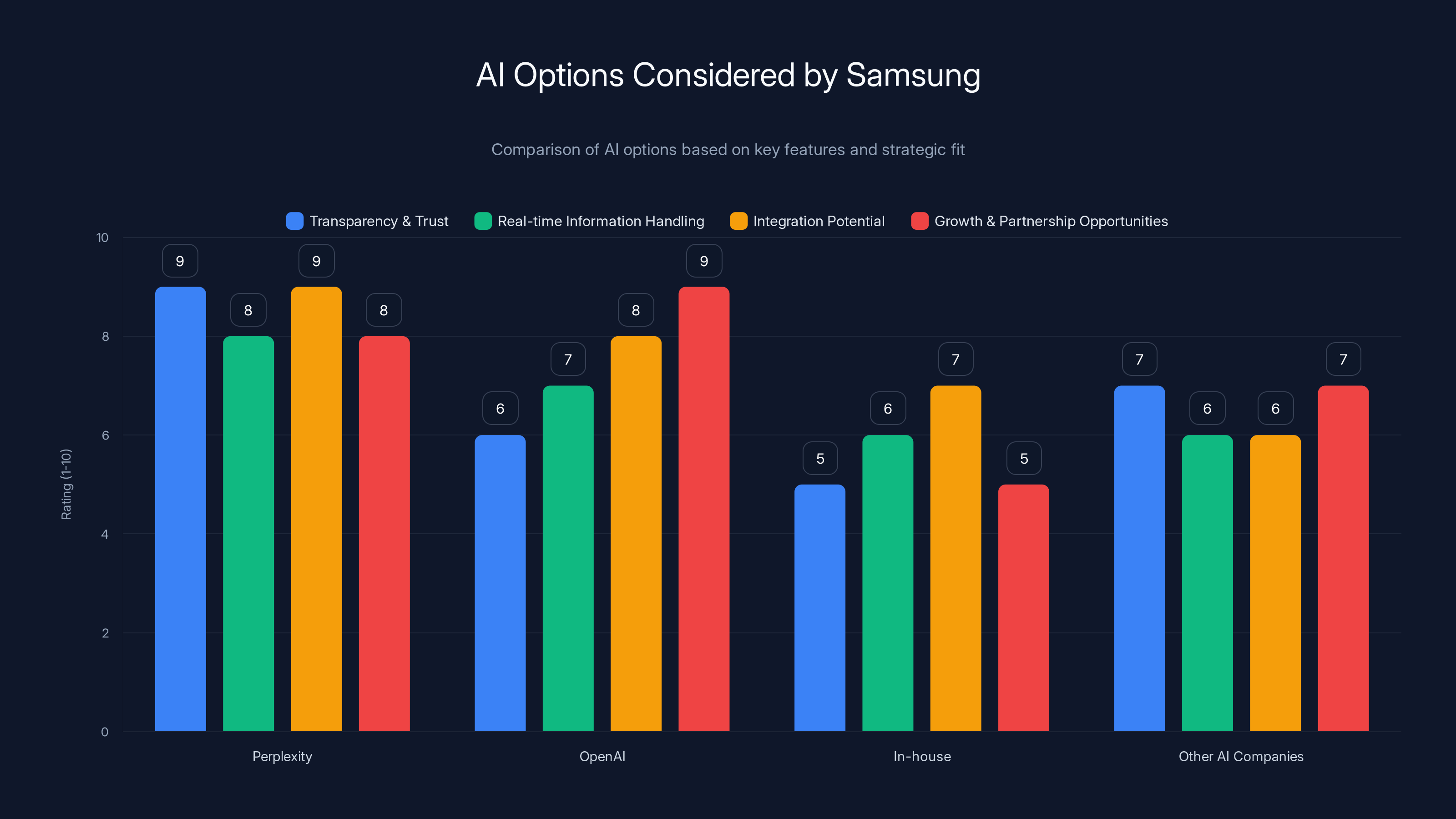

Samsung had choices. They could've partnered with OpenAI. They could've built something in-house. They could've chosen any number of AI research companies. They picked Perplexity, and that choice tells you something important about where the mobile AI market is heading.

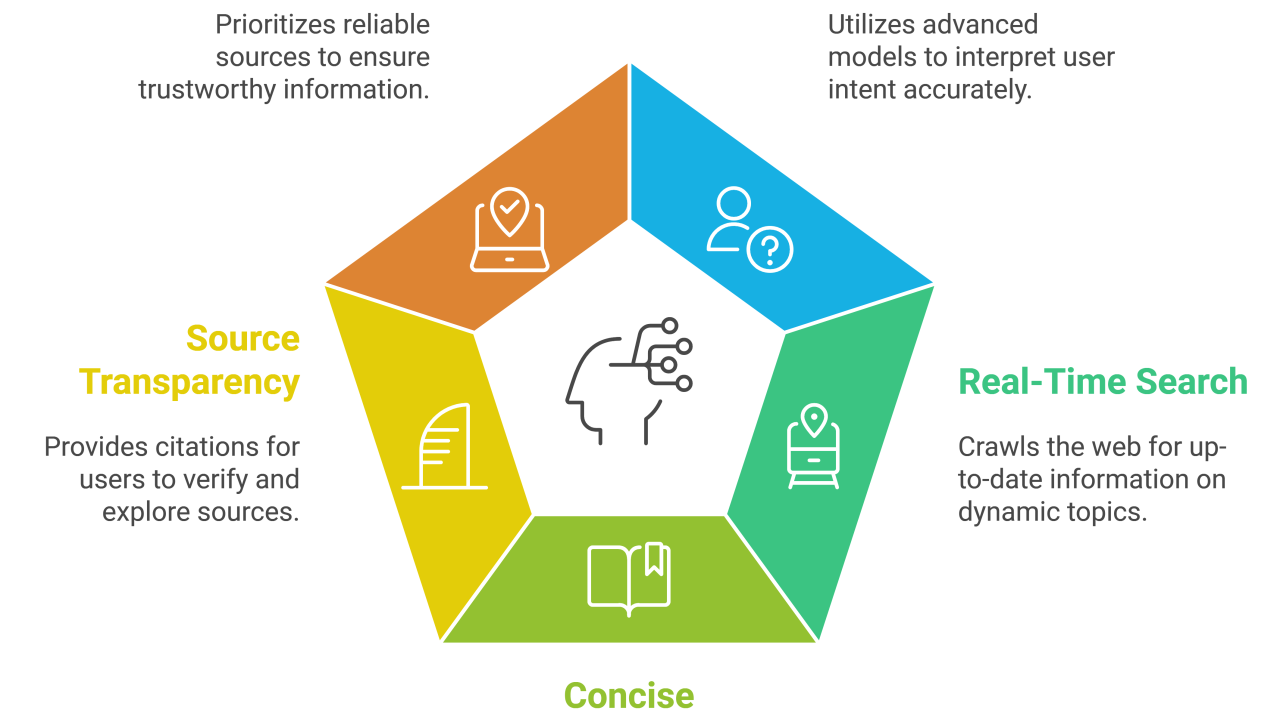

Perplexity's core strength is synthesis with transparency. When you ask Perplexity something, you don't just get an answer; you get sources. You see exactly which websites informed the response. This is genuinely different from ChatGPT, where the provenance of information is opaque, or Google Search, which shows results but leaves the synthesis to you.

For a phone platform, this matters enormously. Users making decisions—whether that's where to eat, what to buy, how to solve a problem—need to trust the source. Perplexity's transparency model builds that trust directly into the interface. Samsung likely recognized that this trust-building approach is exactly what makes an AI feel premium and integrated rather than gimmicky.

There's also the matter of capabilities. Perplexity's focus on web research and real-time information handling is genuinely complementary to what Bixby and Gemini do well. Bixby excels at device control. Gemini excels at multimodal tasks and integration with Google services. Perplexity excels at research, synthesis, and source verification. These don't compete. They slot together.

From a business perspective, Perplexity is also in a growth phase where platform partnerships matter. Anthropic (Claude's creator) is more focused on enterprise. OpenAI is trying to own the consumer experience directly. Perplexity was looking for exactly this kind of partnership: deep integration into a platform that reaches hundreds of millions of users.

There's also what this partnership signals about the future of AI competition. The days of "one AI to rule them all" are ending. The future is specialized tools with deep integration into platforms. Samsung is positioning itself to own that layer, the orchestration layer, rather than competing in the AI model layer, where it would always be at a disadvantage compared to OpenAI, Google, or Anthropic.

Approximately 60% of smartphone users regularly use at least two different AI tools, indicating a strong demand for multi-agent capabilities. Estimated data based on survey insights.

How Deep Integration Actually Works: Access to Your Phone

Here's where it gets genuinely interesting technically. Perplexity isn't just getting voice-command access like a third-party app. The integration goes deeper. Perplexity will have access to Samsung's native applications: Notes, Calendar, Clock, Gallery, and Reminders.

Let's think through what this actually means. You tell Perplexity, "Help me plan my week." The AI can read your calendar, understand your schedule constraints, access your notes about projects and goals, and actually synthesize that into actionable advice. That's fundamentally different from Perplexity accessing only the public web.

Or consider: you take photos at an event and ask Perplexity to "summarize this event and add it to my reminders." The AI can look at your photos (via Gallery), understand context from your notes, and create a structured reminder. That's integration that requires OS-level permissions and cooperation.

Samsung's also mentioned select third-party apps, though they were vague about which ones specifically. This creates interesting possibilities. Imagine Perplexity with access to your email app, your task management tools, your banking apps. Suddenly you're not just searching the web—you're asking an AI about your entire digital life.

The technical implementation requires trust and careful permission management. Samsung has to ensure Perplexity can't abuse access to sensitive data. This likely means granular permissions, audit logging, and probably Samsung's review of what Perplexity does with that access. It's more restrictive than what the AI could theoretically do, but that's actually what users want: powerful integration with guardrails.

This type of integration is exactly what's been missing from AI assistant design. Your phone has always kept AI assistants at arm's length. They're utilities you call upon, not systems that understand your life. By giving Perplexity real access to your digital context, Samsung is treating AI like what it should be: a tool that understands you.

The catch is data. Perplexity will see sensitive information: your schedule, your photos, your reminders about health appointments or financial goals. Samsung's handling this by keeping integration local-first. The processing happens on your phone where possible, limiting what data leaves your device. But you're still trusting Perplexity as a company. That's a significant consideration for privacy-conscious users.

Voice Commands and the "Hey, Plex" Experience

The voice interface matters more than it might seem at first. "Hey, Plex" is brilliant branding and genuinely useful design. It's short enough to say quickly, distinctive enough that it won't accidentally trigger, and human enough that it feels natural.

But the voice command interface tells you something about how Samsung thinks users will interact with these multi-agent systems. They're not imagining people consciously choosing which AI to use for each task. They're imagining voice being the primary interface. You talk to your phone, your phone routes your query to the right AI. The routing happens in the background.

That's actually harder to build than it sounds. Your phone has to understand what you're asking and match it to the right AI's strengths. If you ask a question that's partly research and partly calendar integration, which assistant do you call? Perplexity is probably better for the research part, Bixby better for the calendar part. Samsung has to solve that orchestration problem.
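The orchestration problem is easier to see in code. Below is a minimal Python sketch of one naive approach, which Samsung has not described: split a compound request into clauses, then route each clause with a hypothetical keyword table. The agent names come from the article; the keyword lists, the `gemini` fallback, and the function names are illustrative assumptions. A production router would use an intent classifier rather than string matching.

```python
import re

# Hypothetical keyword table mapping sub-task vocabulary to an agent.
# Illustrative only; a real router would use a trained intent classifier.
AGENT_KEYWORDS = {
    "bixby": ("calendar", "schedule", "remind", "alarm", "timer"),
    "perplexity": ("research", "find", "best", "compare", "review"),
}
DEFAULT_AGENT = "gemini"  # assumption: fallback when no keyword matches


def split_clauses(query: str) -> list[str]:
    """Naively split a compound request on commas, 'and then', 'then', and 'and'."""
    parts = re.split(r",|\band then\b|\bthen\b|\band\b", query, flags=re.IGNORECASE)
    return [p.strip() for p in parts if p.strip()]


def route_clause(clause: str) -> str:
    """Return the first agent whose keywords appear in the clause."""
    lowered = clause.lower()
    for agent, keywords in AGENT_KEYWORDS.items():
        if any(k in lowered for k in keywords):
            return agent
    return DEFAULT_AGENT


def plan(query: str) -> list[tuple[str, str]]:
    """Break a request into (agent, clause) pairs, one per sub-task."""
    return [(route_clause(c), c) for c in split_clauses(query)]


for agent, clause in plan("Find the best Thai restaurant near me and add dinner to my calendar"):
    print(f"{agent}: {clause}")
```

Here the research clause goes to Perplexity and the calendar clause to Bixby. Splitting on "and" is deliberately crude; it would wrongly break a phrase like "salt and pepper", which is exactly why real orchestration is hard.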

The voice interface also means accessibility improves dramatically. Users who struggle with text input—or just prefer verbal communication—can now access different AI capabilities without hunting through menus. You say "Hey, Plex, what's the best Thai restaurant near me?" and you get Perplexity's synthesis instead of generic Google results.

From a usage pattern perspective, this is huge. Users tend to take the path of least resistance. If accessing Perplexity requires one additional tap after saying "Hey, Google," most people won't bother. A direct voice command removes that step entirely, and Samsung is removing friction specifically to make this work.

There's also the differentiation angle. Apple's Siri and Google Assistant are both serviceable but not exceptional for most tasks. Neither has earned strong user loyalty. Perplexity has actual enthusiasts who prefer it. By making those enthusiasts' preference available natively on Samsung phones, the company is directly addressing a user need that competitors ignore. That's how you build platform loyalty.

The Competitive Landscape: Why This Matters Right Now

You have to understand where the smartphone market is right now to appreciate why Samsung's move is significant. Apple and Google have both built fairly closed ecosystems. Apple's answer is Siri; Google's is Gemini. Either way, you get one primary AI, optimized for that company's ecosystem.

Samsung has historically been less aggressive about lock-in. That comes partly from Samsung's strategy of selling devices, not services. Samsung makes money when you buy a Galaxy phone. They don't have the same incentive to trap you in an ecosystem the way Apple or Google does.

This multi-agent approach leverages that positioning. Samsung essentially says: "We trust you to pick the tools you want." This is genuinely appealing to users who feel trapped by platform defaults. Perplexity enthusiasts can use Perplexity. People who prefer ChatGPT will probably get that option eventually. People who like Claude could get that too.

Google faces a tricky situation. Gemini is technically good, but Google's incentive to favor Google Search creates friction. When you ask Google Assistant a question, it often serves search results instead of synthesized answers. That's good for Google's ad business. It's not necessarily what users want. Perplexity's advantage—synthesis plus transparency—directly addresses this gap.

Apple's Siri has never been the standout AI assistant. It's functional but uninspiring. Users don't develop emotional attachments to Siri the way some do to ChatGPT or Perplexity. So Apple's lock-in strategy works less well on AI than on other services. This creates an opening for Samsung.

The timing is also crucial. AI adoption is hitting an inflection point. Early adopters already have preferences. They know which tools they like. Locking them into defaults is increasingly frustrating. The first platform that recognizes this and enables choice gains a real advantage.

Samsung's approach also sidesteps a fundamental problem with building AI internally: you're always behind the frontier. The companies investing billions in AI R&D are OpenAI, Google, Anthropic, and a handful of others. Samsung is a device company, not an AI research company. By embracing multiple partners, Samsung ensures users get cutting-edge AI capabilities without Samsung having to build them.

Perplexity excels in research and synthesis, scoring highest in specialization, while Bixby and Gemini focus on device control and other tasks respectively. Estimated data.

How the Multi-Agent Orchestration Works

Let's dig into the technical architecture because it's actually quite clever. When you give a voice command or use the Galaxy AI interface, your phone has to route that request somewhere. The orchestration layer—the system that makes that routing decision—is what Samsung controls.

This orchestration could be purely user-defined (you pick which AI to use) or partially intelligent (the system suggests which AI is best for your query). Samsung probably uses both. For common patterns, users might set preferences: "Use Perplexity for research, Bixby for device control, Gemini for photos." For novel queries, the system needs intelligence to route appropriately.

Building good routing intelligence is genuinely hard. You need to understand not just what the user asked, but what they're trying to accomplish. Sometimes that's obvious ("Set a timer" goes to Bixby). Often it's ambiguous. "Plan my week" could use Perplexity's research capability, Gemini's calendar integration, or Bixby's Samsung-specific features. Which is best depends on context the system might not have.

Samsung likely solves this with a combination of explicit routing (user preferences), feature detection (recognizing that a query involves calendar data), and probably some machine learning that learns from your behavior. Over time, the system learns that when you ask about restaurants, you prefer Perplexity. When you ask about your photos, you prefer Gemini.
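The three signals in that paragraph (explicit preferences, feature detection, learned behavior) can be layered in priority order. This Python sketch is a hypothetical router, not Samsung's implementation: the topic keywords, the `Router` class, and the frequency-count "learning" are all assumptions chosen to keep the idea visible. Real systems would replace `detect_topic` with a classifier and use far richer behavioral signals.

```python
from collections import Counter, defaultdict


class Router:
    """Hypothetical router: explicit preference, then learned habit, then a default."""

    def __init__(self, explicit_prefs: dict[str, str], default_agent: str = "gemini"):
        # explicit_prefs maps a topic to the agent the user picked, e.g. {"device": "bixby"}
        self.explicit_prefs = explicit_prefs
        self.default_agent = default_agent
        # history[topic] counts which agent the user actually used for that topic
        self.history = defaultdict(Counter)

    @staticmethod
    def detect_topic(query: str) -> str:
        """Naive feature detection by keyword; a stand-in for a real classifier."""
        q = query.lower()
        if any(w in q for w in ("timer", "alarm", "wi-fi", "bluetooth")):
            return "device"
        if any(w in q for w in ("calendar", "meeting", "schedule")):
            return "calendar"
        if any(w in q for w in ("photo", "picture", "gallery")):
            return "photos"
        if any(w in q for w in ("restaurant", "best", "compare", "review")):
            return "research"
        return "general"

    def record(self, query: str, agent_used: str) -> None:
        """Learn from behavior: remember which agent handled this kind of query."""
        self.history[self.detect_topic(query)][agent_used] += 1

    def route(self, query: str) -> str:
        topic = self.detect_topic(query)
        if topic in self.explicit_prefs:  # 1. an explicit user preference wins
            return self.explicit_prefs[topic]
        if self.history[topic]:  # 2. otherwise the most-used agent for this topic
            return self.history[topic].most_common(1)[0][0]
        return self.default_agent  # 3. otherwise fall back to a default


router = Router(explicit_prefs={"device": "bixby"})
router.record("Compare hotel reviews in Barcelona", "perplexity")
print(router.route("Set a timer for ten minutes"))   # bixby (explicit preference)
print(router.route("Best Thai restaurant near me"))  # perplexity (learned)
print(router.route("What's the capital of Peru?"))   # gemini (default)
```

Putting explicit preferences first is a design choice: learned behavior should refine routing, not silently override something the user deliberately configured.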

The data flow also matters. Does data from Perplexity's response go back to Samsung? To Perplexity? Handling this securely requires careful API design. Samsung probably has privacy-preserving approaches: maybe Perplexity results are processed on-device before being presented, limiting what data flows back to Perplexity's servers.
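One privacy-preserving pattern that fits this description is data minimization: strip a record down to the fields a remote agent strictly needs before anything leaves the phone. The sketch below assumes a hypothetical `CalendarEvent` shape and a hypothetical policy under which a remote research agent receives only busy time ranges. Samsung has not published how it actually filters data.

```python
from dataclasses import dataclass


@dataclass
class CalendarEvent:
    """Hypothetical on-device calendar record."""
    title: str
    start: str  # ISO 8601 timestamp, kept as a string for simplicity
    end: str
    location: str
    attendees: list[str]
    notes: str


# Hypothetical policy: a remote agent planning around your week only needs busy/free ranges.
REMOTE_ALLOWED_FIELDS = ("start", "end")


def minimize_for_remote(events: list[CalendarEvent]) -> list[dict[str, str]]:
    """Project each event onto the allowed fields; titles, people, and notes stay on-device."""
    return [{name: getattr(event, name) for name in REMOTE_ALLOWED_FIELDS} for event in events]


events = [
    CalendarEvent(
        title="Cardiology follow-up",
        start="2025-03-04T09:00",
        end="2025-03-04T10:00",
        location="City Clinic",
        attendees=["Dr. Lee"],
        notes="Bring test results",
    )
]
print(minimize_for_remote(events))
```

A remote agent can still say "you're free Tuesday afternoon" from this output, but it never learns that the busy slot was a medical appointment.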

Looking forward, this orchestration layer becomes increasingly sophisticated. Imagine an AI system that understands you personally. It knows your preferences, your history with different tools, your work style. When you ask a question, it doesn't just route to the most capable AI—it routes to the AI that works best for you, personalizing the entire experience. That's the endgame Samsung seems to be building toward.

Privacy Considerations in a Multi-Agent System

Here's the tricky part nobody wants to think about but everyone should: when multiple AIs have access to your data, privacy gets complicated. You're not just trusting one company anymore. You're trusting multiple parties to handle sensitive information responsibly.

Samsung's approach addresses this in several ways. First, the integration is OS-level, which means Samsung can enforce strict permission boundaries. Perplexity gets access to your calendar, but maybe not your photos' metadata. It can read your notes, but maybe not your banking app. These granular permissions are enforceable by the OS itself.
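Granular, OS-enforced permissions boil down to a check that runs before any data is handed over. This Python sketch models the paragraph's examples with a hypothetical `Scope` enum and grant table: Perplexity may read the calendar and notes but not photo metadata. The scope names and the `require` and `is_allowed` helpers are assumptions for illustration, not Samsung's API; Android's real permission system is considerably richer.

```python
from enum import Enum, auto


class Scope(Enum):
    """Hypothetical data scopes an agent can be granted."""
    CALENDAR_READ = auto()
    NOTES_READ = auto()
    GALLERY_READ = auto()
    GALLERY_METADATA = auto()  # e.g. location and time embedded in photos
    REMINDERS_WRITE = auto()


class PermissionDenied(Exception):
    pass


# Hypothetical grants; in practice these would come from the user's settings screen.
GRANTS: dict[str, set[Scope]] = {
    "perplexity": {Scope.CALENDAR_READ, Scope.NOTES_READ, Scope.GALLERY_READ, Scope.REMINDERS_WRITE},
    "gemini": {Scope.GALLERY_READ, Scope.GALLERY_METADATA},
}


def require(agent: str, scope: Scope) -> None:
    """Raise unless the agent holds the scope; unknown agents hold nothing."""
    if scope not in GRANTS.get(agent, set()):
        raise PermissionDenied(f"{agent} lacks {scope.name}")


def is_allowed(agent: str, scope: Scope) -> bool:
    """Convenience wrapper that turns the check into a boolean."""
    try:
        require(agent, scope)
        return True
    except PermissionDenied:
        return False


print(is_allowed("perplexity", Scope.CALENDAR_READ))     # True
print(is_allowed("perplexity", Scope.GALLERY_METADATA))  # False
print(is_allowed("unknown-agent", Scope.NOTES_READ))     # False
```

Defaulting unknown agents to an empty grant set means a newly added partner starts with no access until the user grants something.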

Second, local-first processing helps. If Perplexity can do as much as possible on your device without sending data to their servers, less sensitive information leaves your phone. This is technically challenging—it requires AI models small enough to run locally, which means less powerful models. But it's the right privacy trade-off.

Third, transparency matters. Samsung should (and probably will) let users see exactly what data each AI has access to, what they've accessed, and when. This transparency doesn't eliminate privacy risks, but it makes them manageable. You can revoke access if you become uncomfortable.
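The transparency described here needs two pieces: a record of every access attempt and a way to revoke a grant. Here is a minimal Python sketch under those assumptions; the `AccessLog` class, its resource names, and its methods are hypothetical and not part of any announced Samsung interface.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AccessLog:
    """Hypothetical ledger recording every data request an agent makes."""
    grants: dict[str, set[str]]
    entries: list[tuple[datetime, str, str, bool]] = field(default_factory=list)

    def access(self, agent: str, resource: str) -> bool:
        """Check the grant and record the attempt, whether allowed or not."""
        allowed = resource in self.grants.get(agent, set())
        self.entries.append((datetime.now(timezone.utc), agent, resource, allowed))
        return allowed

    def history(self, agent: str) -> list[tuple[datetime, str, bool]]:
        """What the user would see: when this agent asked for what, and the outcome."""
        return [(ts, res, ok) for ts, who, res, ok in self.entries if who == agent]

    def revoke(self, agent: str, resource: str) -> None:
        """Withdraw a grant; future requests for the resource are refused."""
        self.grants.get(agent, set()).discard(resource)


log = AccessLog(grants={"perplexity": {"calendar", "notes"}})
log.access("perplexity", "calendar")
log.revoke("perplexity", "calendar")
log.access("perplexity", "calendar")
for ts, resource, ok in log.history("perplexity"):
    print(ts.isoformat(timespec="seconds"), resource, "allowed" if ok else "refused")
```

Logging refused attempts as well as successful ones is deliberate: a user deciding whether to trust an agent learns as much from what it tried to read as from what it was allowed to read.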

The bigger question is trust. You're fundamentally trusting multiple companies with different data. Perplexity gets your calendar and notes. Google gets your photos. These companies have different privacy policies, different security practices, different regulatory obligations depending on where they operate. That's a significant multiplier on privacy risk.

For the privacy-conscious, this creates a difficult choice. The multi-agent system offers genuine benefits—better AI, more choice, better specialization. But it comes with higher privacy complexity. That's a real trade-off, not something to gloss over.

Samsung's own privacy obligations also matter. Samsung is a South Korean company that sells phones worldwide, so its handling of data flowing through the orchestration layer falls under several regulatory frameworks at once, including the GDPR in Europe and the CCPA in California. This is actually somewhat protective: Samsung faces real legal liability if it mishandles user data, which aligns its incentives with users'.

The Developer Ecosystem: How Third Parties Will Build on This

What makes this truly interesting is what Samsung is enabling for developers. The company is opening APIs that let other AI companies integrate into Galaxy AI. This isn't just Perplexity and Gemini and Bixby. This is potentially any AI company building for mobile.

For developers, this creates amazing opportunities. Instead of trying to get users to install their app and trust it as a standalone tool, they can integrate directly into the core phone experience. Your AI assistant of choice is just there, available natively.

But it also creates architecture challenges. How many AI agents can a phone run simultaneously? What's the performance impact of having multiple AI systems integrated at the OS level? Samsung probably has technical constraints—maybe five or six AI partners, not fifty. Resource constraints matter on mobile devices.

There's also the question of data flow between partners. Can Perplexity and Gemini and future partners talk to each other? Could they collaborate on complex queries? Or do they operate in isolation? The answer shapes how powerful multi-agent orchestration can ultimately become.

For third-party developers, this creates incentive to build specialized AI capabilities. Generic assistants won't differentiate in a multi-agent world. But specialized tools—an AI optimized for coding, one optimized for creative writing, one optimized for data analysis—suddenly have a path to mainstream adoption.

Samsung's opening this ecosystem suggests they're thinking long-term about Galaxy AI becoming a platform, not just a feature. That's genuinely ambitious. It means competing with both Apple's iOS ecosystem and Google's Android ecosystem on the application layer. It's not easy, but it's a plausible strategy.

Specialized AI models can outperform general-purpose models by 40-60% in specific domains, highlighting the advantage of specialization in a multi-agent ecosystem. Estimated data based on typical performance improvements.

Real-World Use Cases: When Multi-Agent AI Actually Helps

Let's get concrete about how this actually improves your life. Multi-agent AI isn't just philosophically interesting—it's practically useful in ways single-assistant designs aren't.

Research Projects: You're planning a vacation. You ask Perplexity for research: best neighborhoods in Barcelona, reviews of specific hotels, transportation options, cost comparisons. Perplexity synthesizes this with sources. You save the results to Notes. Then you ask Bixby to create Calendar events for your flight times and hotel reservations. Later, you ask Gemini to generate packing lists and itineraries based on your calendar and notes. Single assistant? You'd have to switch context repeatedly and lose coherence. Multi-agent? Each tool does what it's best at.
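The hand-off in this scenario is what keeps it coherent: each agent reads what earlier agents produced. Here is a minimal Python sketch of that idea, assuming a shared context that plays the role of Notes and Calendar. The three agent functions are hypothetical stubs that return canned strings; real agents would be remote or on-device services, and the flight and hotel values are placeholders.

```python
from typing import Callable

# An "agent" here is any function that reads the shared context and returns new entries.
Agent = Callable[[dict], dict]


def research(ctx: dict) -> dict:
    """Stand-in for Perplexity: saves sourced findings to Notes."""
    return {"notes": [f"Neighborhood shortlist for {ctx['destination']} (with sources)"]}


def schedule(ctx: dict) -> dict:
    """Stand-in for Bixby: turns bookings into Calendar events."""
    return {"calendar": [f"Flight {ctx['flight']}", f"Check-in at {ctx['hotel']}"]}


def itinerary(ctx: dict) -> dict:
    """Stand-in for Gemini: builds an itinerary from Calendar and Notes."""
    return {"itinerary": ctx["calendar"] + ctx["notes"]}


def run_workflow(ctx: dict, steps: list[tuple[str, Agent]]) -> dict:
    """Run agents in order, merging each one's output into the shared context."""
    for name, agent in steps:
        for key, items in agent(ctx).items():
            ctx.setdefault(key, []).extend(items)
        ctx.setdefault("log", []).append(name)
    return ctx


trip = run_workflow(
    {"destination": "Barcelona", "flight": "placeholder-flight", "hotel": "placeholder-hotel"},
    [("perplexity", research), ("bixby", schedule), ("gemini", itinerary)],
)
print(trip["log"])
print(trip["itinerary"])
```

The shared context is what a single assistant would otherwise have to hold in its own memory; with it, any agent can pick up where the previous one stopped.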

Work Workflow: You're working on a presentation. You ask Perplexity to research market trends in your industry, getting well-sourced information. You ask Gemini to help structure your findings into a presentation format. You ask Claude to draft specific sections. You ask another AI to generate visualizations from your data, or to turn your research into slides automatically. A single assistant struggles across these different tasks. Multi-agent? Each one specializes.

Personal Organization: You're overwhelmed with tasks. You ask Perplexity to help you think through priorities and approaches to difficult problems. You ask Bixby to manage your calendar and reminders. You ask Gemini to organize your photos and extract text from images. You ask another AI to help with writing. These tasks have different skill requirements. One AI doing all of them creates friction and suboptimal results.

Creative Work: You're writing a story. You ask Claude for creative ideation and world-building. You ask Perplexity for historical research about the time period. You ask another AI to generate descriptions of scenes. You ask yet another to help with dialogue refinement. Creative work multiplies the value of specialization.

The pattern here is consistent: complex tasks benefit from specialized tools. When you force everything through a single AI, you're accepting suboptimal performance across multiple dimensions. Multi-agent systems eliminate this artificial constraint.

How This Challenges Apple and Google

This move directly undermines Apple and Google's closed ecosystems. Both companies have invested heavily in positioning their own AI assistants as the center of the phone experience. Samsung is saying: "That's not what users actually want."

For Apple, this is particularly challenging. Siri has never been beloved. Users don't have strong preferences for it the way they do for Perplexity or Claude. Siri works, but it's not something people choose. By forcing Siri into a locked position, Apple is essentially forcing users to live with a tool they didn't choose. Samsung's approach—letting users pick—is more appealing.

Google's situation is more complex. Gemini is actually quite good. Google has invested tremendous resources in AI capability. But Google's incentives are muddled. The company also owns Search, so Gemini sometimes serves Search results instead of synthesized answers. That's good for Google's ad business, bad for users. Perplexity's transparent synthesis is a different value proposition, one Google's conflicted incentives make hard to match.

Both Apple and Google will probably eventually embrace multi-agent systems themselves because users will demand it. But Samsung is getting there first, and first-mover advantage matters. Samsung is establishing the expectations: phones should let users choose their AI tools.

This also puts pressure on Google's Android strategy. Google controls Android but doesn't control phone vendors' skin layers (like Samsung's One UI). So if Samsung popularizes multi-agent AI on Android, Google can't easily prevent it. Apple can force one experience across all iPhones, while Google has to live with fragmentation. Here, though, what is usually Android's weakness becomes an advantage for vendors, who can differentiate on AI choices.

This is a genuinely clever competitive move. Instead of trying to beat Google's AI or Apple's ecosystem lock-in, Samsung is competing on the orchestration layer. That's a layer where they have leverage as a device manufacturer but don't have to match Google or Apple's AI investment.

The Perplexity Perspective: Why This Partnership Matters

From Perplexity's standpoint, this partnership is transformative. Being built into phones from Samsung, one of the world's largest phone manufacturers, exposes Perplexity to potentially hundreds of millions of users. That's a different scale of reach than relying on people to download the app.

But more importantly, deep integration into the phone OS solves a fundamental problem with standalone apps: friction. Users don't open apps they don't think about. Perplexity has been working to reduce friction—building browser extensions, mobile apps, desktop clients. OS-level integration is the ultimate friction reduction.

There's also the signal this sends about Perplexity's direction as a company. Perplexity isn't trying to be everything. They're not building their own phone OS. They're not trying to compete with Google or Apple directly. Instead, they're becoming the research-and-synthesis layer that multiple platforms integrate. That's a smart specialization.

For Perplexity's funding and valuation, this matters enormously. Platform partnerships de-risk the company's future. Instead of depending entirely on direct consumer adoption, Perplexity now has revenue from OEM partnerships. That's how you build a sustainable AI company.

There's also competitive positioning. OpenAI is trying to own the consumer interface directly. They want people using ChatGPT as their primary AI. Anthropic is focused on enterprise. Perplexity is positioning itself as the best specialized tool to embed in platforms. That's a different strategy, and one that positions Perplexity for long-term success regardless of which AI company "wins."

Perplexity was chosen by Samsung due to its high transparency, integration potential, and strategic partnership opportunities, outperforming other AI options in these areas. Estimated data.

What This Means for the Future of Mobile AI

If multi-agent AI on mobile becomes standard, the entire market structure shifts. Right now, phone AI is treated like a feature of the OS. "Does it have a good voice assistant? Can I ask it questions?" In a multi-agent future, phone AI becomes an ecosystem.

This means developers can build AI capabilities and integrate them via platform partnerships. New AI companies can reach mainstream users without building phones or consumer distribution. Existing AI companies can reach users at scale without building their own interfaces.

It also means commoditization of certain AI capabilities. When multiple options are readily available, users pick the best tool. This creates competition and pressure for continuous improvement. The company with the second-best research AI doesn't get network effects protecting mediocre performance. They get replaced.

For phone manufacturers, this creates both opportunity and challenge. Opportunity: differentiate on orchestration, on privacy policies, on which AI partners they choose. Challenge: you're no longer the primary interface between user and AI. You're the platform layer. That's less direct control.

Samsung seems comfortable with that trade-off. They make money from selling phones, not from extracting value from AI usage. Treating the phone as a platform rather than controlling everything directly aligns with their business model.

The longer-term question is whether OS-level AI integration becomes expected. Will Android phones without multi-agent AI feel behind the curve? Probably yes, eventually. This would create pressure on Google to support similar integration more formally, rather than having Samsung implement it through their skin layer.

The Broader Ecosystem Implications

What's genuinely interesting is how this plays out across the entire tech industry. We're at an inflection point where AI is becoming the primary interface, not a peripheral feature. The companies that understand and enable user preference in AI—rather than trying to force one AI on everyone—will win.

This extends beyond phones. Desktops, tablets, wearables, smart home devices—everything could eventually embrace multi-agent AI. Your laptop could have Perplexity for research, Claude for writing, GPT for general questions, and specialized tools for specific domains. Your smart home could use different AI for security, climate, entertainment, and productivity.

The winner in this world isn't necessarily the company with the best single AI. It's the company that best orchestrates multiple AIs. That could be an OS or platform provider (Samsung, Apple, Microsoft), a new platform company, or a distributed model built on open standards.

For enterprises, this matters tremendously. Companies could standardize on specific AI tools for specific use cases, then integrate them into their business processes. Instead of forcing everyone to use internal tools, organizations could let teams choose their AI partners while maintaining security, compliance, and integration.

Preparing for the Multi-Agent AI Future

If this is indeed the future of AI on mobile (and it seems to be), users and organizations should start preparing.

For individual users, this means developing clarity about which AI tools you prefer for different tasks. Don't wait for the multi-agent interface to force this choice. Start experimenting now. Use Perplexity for research, Claude for writing, ChatGPT for brainstorming. Figure out your preferences.

For organizations, this means thinking about AI strategy differently. Instead of evaluating a single "best" AI, evaluate multiple tools for their specific strengths. What's best for customer support might differ from what's best for content creation, data analysis, or software development.

There's also the question of data governance. If multiple AIs will have access to organizational data, you need policies about what data each AI can access, how to audit that access, and how to maintain compliance.

For teams building on mobile platforms, this is an opportunity. If multi-agent AI becomes standard, your company's AI tool or service might integrate directly into the phone OS. That's fundamentally different from the app store distribution model.

Samsung may lead the multi-agent AI ecosystem market thanks to its platform approach, followed by Google and Apple. Estimated data.

Comparing Multi-Agent Strategies: Samsung vs. Apple vs. Google

Let's explicitly compare how these three companies are approaching AI on mobile:

Samsung's Multi-Agent Approach:

- Integrates multiple AI partners natively

- Gives users choice of which AI to use

- Leverages device manufacturer positioning

- Opens ecosystem to multiple partners

- Competes on orchestration and choice

Apple's Ecosystem Approach:

- Siri as the primary AI, deeply integrated

- Some integration with third-party AIs (like ChatGPT), but Siri is the default

- Tight ecosystem control

- Focus on privacy and on-device processing

- Competes on integration and privacy

Google's Gemini Dominance Approach:

- Gemini as primary AI across Android ecosystem

- Deep integration with Google services

- Open Android architecture, but Google controls defaults

- Some flexibility for OEMs to customize

- Competes on capability and integration with Google services

Each approach has trade-offs. Samsung's multi-agent approach gives users choice but creates complexity. Apple's ecosystem approach provides coherence but limits choice. Google's approach tries to balance both but suffers from conflicted incentives (Search vs. AI).

The Technical Architecture Deep Dive

Let's get into specifics about how this actually works technically. When Perplexity integrates into Galaxy AI, there are several layers to consider:

Interface Layer: How does the user summon Perplexity? Voice ("Hey, Plex"), UI elements in Galaxy AI, shortcuts, or direct app? Samsung is handling this through voice and native UI integration, making Perplexity feel like part of the system.

API Layer: How does Perplexity call into Samsung? What permissions does it request? What data can it read? Samsung likely has a formally documented API that Perplexity calls through, and such an API would enforce permissions and rate limits.
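A common way for an API layer to enforce per-caller rate limits is a token bucket: each caller gets a burst allowance that refills at a steady rate. The sketch below assumes one bucket per AI agent. `TokenBucket`, `AgentGateway`, and any capacity or refill numbers are hypothetical, since Samsung has not documented its actual limits. The clock is injectable so the behavior can be tested without real waiting.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TokenBucket:
    """Allows bursts of up to `capacity` calls, refilled at `rate` tokens per second."""
    capacity: float
    rate: float
    clock: Callable[[], float] = time.monotonic
    tokens: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.last = self.clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class AgentGateway:
    """Hypothetical OS-side gateway that keeps a separate bucket per agent."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self._buckets: dict[str, TokenBucket] = {}

    def admit(self, agent: str) -> bool:
        # Create the agent's bucket lazily on its first call.
        if agent not in self._buckets:
            self._buckets[agent] = TokenBucket(self.capacity, self.rate, self.clock)
        return self._buckets[agent].allow()
```

Keeping one bucket per agent means a single chatty partner exhausts only its own allowance and cannot starve the others.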

Data Layer: What data can Perplexity access? Calendar, notes, gallery, reminders, and select third-party apps. This data has to be accessible to Perplexity without compromising security. Samsung probably implements this through sandboxing, data encryption in transit, and audit logging.
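Sandboxing can also work at the field level, so an agent receives only the parts of a record it needs. This hypothetical sketch filters a calendar event through a per-agent allowlist before anything crosses the OS boundary. The `CalendarEvent` fields and the `FIELD_ALLOWLIST` contents are assumptions for illustration, not Samsung's data model.

```python
from dataclasses import asdict, dataclass
from datetime import datetime


@dataclass(frozen=True)
class CalendarEvent:
    title: str
    start: datetime
    end: datetime
    location: str
    attendees: tuple[str, ...]
    private_notes: str


# Hypothetical per-agent field allowlist: each agent sees only what it needs.
FIELD_ALLOWLIST: dict[str, frozenset[str]] = {
    "perplexity": frozenset({"title", "start", "end", "location"}),
}


def scoped_view(agent: str, event: CalendarEvent) -> dict:
    """Return a copy of the event containing only the fields this agent may see."""
    allowed = FIELD_ALLOWLIST.get(agent, frozenset())
    # Unknown agents get an empty view rather than the full record.
    return {name: value for name, value in asdict(event).items() if name in allowed}
```

Here the attendee list and private notes never reach the research agent, even though the event itself is shared.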

Processing Layer: Does processing happen on-device, on Perplexity's servers, or hybrid? For privacy and responsiveness, on-device is better. But Perplexity's AI models might be too large for on-device processing. Samsung probably uses hybrid approaches: on-device filtering and routing, cloud processing for complex queries.
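The hybrid approach described above amounts to a routing decision made on the device. The following is a minimal sketch under stated assumptions: the word-count threshold, the `Query` flags, and the rule of redacting personal data before a cloud call are all hypothetical, chosen only to show the shape of on-device filtering and routing.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Query:
    text: str
    needs_web: bool = False
    touches_personal_data: bool = False


@dataclass(frozen=True)
class Decision:
    target: Target
    redact_personal_data: bool


# Illustrative threshold; a real system would tune this empirically.
MAX_ON_DEVICE_WORDS = 30


def route(q: Query) -> Decision:
    word_count = len(q.text.split())
    # Short queries that need no live web data can stay on the device.
    if not q.needs_web and word_count <= MAX_ON_DEVICE_WORDS:
        return Decision(Target.ON_DEVICE, redact_personal_data=False)
    # Going to the cloud: flag personal fields for removal before sending.
    return Decision(Target.CLOUD, redact_personal_data=q.touches_personal_data)
```

The privacy trade-off shows up in the second branch: the cloud gets the hard queries, but the device decides what personal data travels with them.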

Result Layer: How are results presented to the user? Does Perplexity return raw data, formatted results, or rich media? Samsung's orchestration layer probably handles presentation, allowing consistent UX regardless of which AI provides the result.
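One way an orchestration layer could keep the UX consistent is to normalize every agent's output into a single envelope before rendering it. In this sketch the payload shapes (`answer`/`citations` and `message`) are invented for illustration; they are not Perplexity's or Bixby's real response formats.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class AgentResult:
    """Common envelope the UI renders, regardless of which agent answered."""
    agent: str
    summary: str
    sources: tuple[str, ...] = ()


def from_research_agent(payload: dict[str, Any]) -> AgentResult:
    # Assumed payload shape: {"answer": str, "citations": [url, ...]}
    return AgentResult("perplexity", payload["answer"], tuple(payload.get("citations", [])))


def from_device_assistant(payload: dict[str, Any]) -> AgentResult:
    # Assumed payload shape: {"message": str}
    return AgentResult("bixby", payload["message"])


def render(result: AgentResult) -> str:
    """Render the summary followed by numbered sources, if any."""
    lines = [result.summary]
    lines += [f"[{i}] {url}" for i, url in enumerate(result.sources, start=1)]
    return "\n".join(lines)
```

Adding a new partner then means writing one adapter function, while the rendering code stays untouched.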

Getting all these layers working together seamlessly is genuinely challenging engineering. This is why Samsung's partnership matters—Perplexity and Samsung are investing significant engineering effort to make this work properly.

Potential Challenges and Limitations

Let's be honest about challenges this creates:

Fragmentation: Different Samsung devices might have different AI options depending on market, age of device, or other factors. This creates user confusion. "Why does my brother's Samsung have Perplexity but mine doesn't?"

Performance: Multiple AI systems running on a phone could create performance issues: battery drain, memory pressure, and thermal throttling. Samsung has to carefully manage resource allocation.

User Confusion: Too many options create a paradox of choice. Users might not know which AI to use. Samsung's orchestration layer has to be smart enough to route queries appropriately without explicit user direction.

Privacy Complexity: Multiple AI companies accessing user data is riskier than single-company access. If any partner has poor security practices, user data is at risk.

Latency: If queries have to be routed to multiple backends, latency might increase compared to direct access. Users expect AI to be instant.
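A standard mitigation for slow backends is a deadline with a fallback: wait a bounded time for the preferred backend, then answer from a faster one. This sketch uses the standard library's `asyncio.wait_for`. The backends are stand-in coroutines built by a hypothetical `make_backend` helper, and the idea that the fallback would be a quicker, perhaps on-device, model is an assumption.

```python
import asyncio
from typing import Awaitable, Callable

Backend = Callable[[str], Awaitable[str]]


def make_backend(name: str, delay_s: float) -> Backend:
    """Hypothetical stand-in for an AI backend that answers after `delay_s` seconds."""
    async def backend(query: str) -> str:
        await asyncio.sleep(delay_s)
        return f"{name}: {query}"
    return backend


async def ask_with_deadline(query: str, primary: Backend,
                            fallback: Backend, timeout_s: float) -> str:
    try:
        # wait_for cancels the primary call if it misses the deadline.
        return await asyncio.wait_for(primary(query), timeout=timeout_s)
    except asyncio.TimeoutError:
        return await fallback(query)
```

The deadline caps worst-case latency; the cost is that a slow but better answer is sometimes discarded.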

Data Silos: If different AIs can't see each other's results or collaborate, they can't do complex multi-step tasks. Samsung would need APIs between AI partners, which creates more surface area for things to go wrong.
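The data-silo problem can be illustrated with a shared context that each step in a multi-step task reads from and writes to. This is a hypothetical sketch, not a description of any inter-agent API Samsung has announced: each "agent" is just a function, and outputs are namespaced by agent name so later steps can read earlier results without collisions.

```python
from typing import Callable

# A step receives the shared context and returns its own outputs.
Step = Callable[[dict[str, str]], dict[str, str]]


def run_pipeline(steps: list[tuple[str, Step]], context: dict[str, str]) -> dict[str, str]:
    """Run agent steps in order, exposing each step's outputs to later steps."""
    ctx = dict(context)  # copy so the caller's dict is not mutated
    for name, step in steps:
        output = step(ctx)
        # Namespace outputs as "agent.key" to avoid overwriting other agents' data.
        ctx.update({f"{name}.{key}": value for key, value in output.items()})
    return ctx
```

For example, a research step could write `research.summary`, and a device-assistant step could turn that summary into a reminder. Every key in the shared context is also more surface area to secure, which is the trade-off described above.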

How Runable Fits Into the Broader Multi-Agent Ecosystem

For teams building or managing complex AI workflows, Runable offers an interesting parallel to what Samsung is doing. While Samsung is enabling multi-agent AI on mobile devices, Runable is building multi-agent AI orchestration for content creation and document generation.

Think about it: Samsung is letting users pick Perplexity for research. Organizations using Runable can likewise pick specialized AI agents for different content types: presentations with one agent, documents with another, reports with a third. It's the same multi-agent principle, applied to content creation workflows.

The distinction is important: Samsung is managing orchestration at the OS level for individual users. Runable is managing orchestration at the application level for teams. But the principle is identical: specialize tools for their strengths, integrate them into a coherent workflow.

For teams considering AI-powered content creation, Runable's approach of using multiple specialized agents for presentations, documents, reports, and images aligns perfectly with Samsung's multi-agent vision. Start at runable.com to see how this works for content workflows.

Use Case: Automate your entire content pipeline by using specialized AI agents for slides, documents, reports, and images—the same orchestration principle Samsung is enabling on mobile.


What Comes Next: The Galaxy Unpacked Event

Samsung announced this integration ahead of its Galaxy Unpacked event, which was only days away at the time of writing. That means more details are coming: which other AI partners might integrate, what the user interface looks like, and how the routing works in practice.

There are several things worth watching for:

User Interface: How does Samsung present the multi-agent choice? Is it obvious? Can users easily switch? Or is it hidden in settings? The UX design matters enormously for adoption.

Performance: Does multi-agent routing create noticeable latency? If asking Perplexity takes visibly longer than asking Bixby, users won't adopt it.

Features: What specific new capabilities does the Perplexity integration enable? Calendar reading? Photo understanding? If the actual benefits aren't obvious, it's just a novelty.

Other Partners: Will Samsung announce other AI integrations alongside Perplexity? Anthropic's Claude? OpenAI's ChatGPT? Or is Perplexity the flagship announcement?

Rollout Timeline: Will this hit all Galaxy devices or just new S26 phones? Are existing Galaxy AI users getting it? Older devices?

These details will shape whether multi-agent AI becomes mainstream or remains a nice feature only enthusiasts use.

The Philosophical Question: AI Lock-In vs. AI Freedom

Underlying everything here is a philosophical question about platform design: should platforms lock users into one AI experience, or enable choice?

The lock-in argument: One unified AI provides better integration, simpler UX, coherent experience. Users don't have to understand which tool to use. Everything just works.

The choice argument: Users have preferences. Different AIs are genuinely better at different things. Forcing everyone into one box makes some users worse off. Enabling choice respects user agency.

Samsung is clearly betting on choice. This bet reflects confidence in the multi-agent future and skepticism about the viability of single unified AI assistants. If you look at the landscape, that's a reasonable bet. People do develop preferences. Different AIs are genuinely better at different things. User choice does matter.

But there's a middle path: smart defaults with easy override. Let the system suggest which AI to use for a given query, but let users override if they prefer. That respects both coherence and choice.
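The "smart defaults with easy override" idea can be sketched in a few lines. Everything here is a hypothetical illustration: the intent labels, the keyword classifier (a real system would use a trained intent model), and the default agent per intent are assumptions, not Samsung's routing rules.

```python
from dataclasses import dataclass, field

# Hypothetical platform defaults: which agent handles each intent.
DEFAULT_AGENT_BY_INTENT: dict[str, str] = {
    "device_control": "bixby",
    "research": "perplexity",
    "general": "gemini",
}

# Naive keyword classifier, for illustration only.
INTENT_KEYWORDS: dict[str, tuple[str, ...]] = {
    "device_control": ("timer", "alarm", "brightness", "wifi"),
    "research": ("why", "compare", "sources", "latest"),
}


def classify(query: str) -> str:
    words = query.lower().split()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in words for keyword in keywords):
            return intent
    return "general"


@dataclass
class Router:
    overrides: dict[str, str] = field(default_factory=dict)

    def pick(self, query: str) -> str:
        intent = classify(query)
        # An explicit user preference always beats the platform default.
        return self.overrides.get(intent, DEFAULT_AGENT_BY_INTENT[intent])
```

The default covers users who never open a settings screen, while a single override entry respects users who already know which AI they want.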

The outcome of this philosophical choice will shape not just Samsung, but how all platforms approach AI in the coming years.

Key Takeaways: What This Means for You

If multi-agent AI on mobile becomes mainstream (and the signs point that way), here's what it means:

For Individual Users: You'll soon have more choice about which AI tools you use, directly on your phone. Figure out your preferences now so you know what you want when the choice arrives.

For Organizations: Start thinking about AI as a portfolio rather than a single tool. What's best for this task? What's best for that task? Different answers require different tools.

For Developers: Building AI capabilities? Platform partnerships are becoming a viable path to scale. You don't have to build the OS to reach hundreds of millions of users.

For Competitors: Multi-agent AI is now on the roadmap. Apple and Google can't ignore this. They'll follow with their own implementations.

For the Industry: This marks a genuine shift from "which single AI is best?" to "how do we orchestrate multiple AIs?" That's a more sophisticated question with better answers.

FAQ

What exactly is a multi-agent AI ecosystem?

A multi-agent AI ecosystem is an architecture where multiple specialized AI systems coexist and work together on a single platform. Instead of one AI trying to do everything, different AIs handle what they do best. On Samsung Galaxy AI, this means Perplexity handles research, Bixby handles device control, and Gemini handles other tasks. Users benefit because each AI can be optimized for its specialty, which can produce better results than a single generalist system.

How does Samsung's Perplexity integration actually work on Galaxy phones?

When you say "Hey, Plex" on a Galaxy S26, Samsung's orchestration system activates Perplexity, which can then access your Calendar, Notes, Gallery, Reminders, Clock, and select third-party apps. Perplexity processes your query using both your personal data and web search, then synthesizes a response with sources. The integration happens at the OS level, meaning Perplexity feels native rather than like a third-party app.

Why did Samsung choose Perplexity specifically over other AI options?

Samsung likely chose Perplexity because its core strength—synthesis with transparent sourcing—directly complements existing Galaxy AI capabilities. Perplexity excels at research and information synthesis, which Bixby and Gemini don't emphasize. This specialization meant the partnership addresses real user needs without redundant capabilities. Additionally, Perplexity's growth-phase positioning made them eager for platform partnerships that expand reach, creating aligned incentives with Samsung.

What are the privacy implications of multiple AIs accessing my phone data?

Multiple AIs accessing your data increases privacy complexity but not necessarily risk. Samsung enforces granular permissions at the OS level, meaning each AI can only access specific data categories (calendar, notes, etc.). Local processing means sensitive data stays on your device when possible. The key consideration is trust: you're relying on multiple companies instead of one. Review privacy settings individually for each AI and understand what data each company's privacy policy allows them to collect. Samsung faces legal liability for mishandling data, which creates protective incentives.

Will I have to manually choose which AI to use each time?

No, the goal is for Samsung's orchestration system to route queries intelligently. For common patterns ("Set a timer" always goes to Bixby), routing is automatic. For novel queries, the system learns your preferences over time. You'll probably have settings to specify preferences too ("Use Perplexity for research questions"), but the experience should feel automatic most of the time, not requiring constant choice.
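"Learning your preferences over time" could be as simple as counting which agent you pick for each kind of query. This is a hypothetical sketch, not Samsung's method: `PreferenceLearner` and the `min_observations` threshold are assumptions. It falls back to the platform default until it has seen enough choices to trust the pattern.

```python
from collections import Counter, defaultdict


class PreferenceLearner:
    """Suggest the agent a user has picked most often for an intent (hypothetical)."""

    def __init__(self, min_observations: int = 3) -> None:
        self.min_observations = min_observations
        self._choices: defaultdict[str, Counter[str]] = defaultdict(Counter)

    def record(self, intent: str, agent: str) -> None:
        """Remember that the user chose `agent` for a query of this intent."""
        self._choices[intent][agent] += 1

    def suggest(self, intent: str, default: str) -> str:
        counts = self._choices.get(intent)
        # Keep the platform default until there is enough evidence.
        if not counts or sum(counts.values()) < self.min_observations:
            return default
        agent, _ = counts.most_common(1)[0]
        return agent
```

The threshold trades responsiveness for stability: a lower value adapts faster but can flip on a single accidental choice.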

How does this compete with Apple's Siri and Google's Gemini?

Apple's approach focuses Siri as the unified assistant with tight integration into Apple services. This provides coherence but limits choice for users who prefer other AIs. Google's approach integrates Gemini across Android but doesn't fully address the conflict between Gemini's interests and Search's interests. Samsung's multi-agent approach says users should choose the best tool for each task, directly competing by enabling preference over lock-in. This positioning appeals to users frustrated by forced defaults.

What happens to Bixby with Perplexity integrated?

Bixby remains as the system's primary assistant for device control tasks: setting timers, creating calendar events, controlling smart home devices, adjusting settings. Bixby's strength is understanding Samsung-specific commands and device manipulation. Perplexity handles what it's best at: web research and synthesis. Rather than replacing Bixby, Perplexity complements it, and users access whichever assistant best suits their current task.

Could other AI companies integrate into Galaxy AI similarly?

Absolutely. Samsung is opening the API for multiple partners to integrate at the OS level. OpenAI, Anthropic, and other AI companies could potentially integrate directly into Galaxy AI. This is Samsung's vision: make the phone an orchestrator of multiple AIs rather than forcing one assistant on everyone. Over time, you might be able to choose different AIs for different tasks on any Galaxy device.

What are the technical challenges of running multiple AI systems on a phone?

Several challenges exist: resource constraints (battery, memory, processing power), latency (routing queries to multiple backends takes time), data consistency (ensuring all AIs can access needed data), and privacy (managing permissions across multiple partners). Samsung addresses these through on-device processing where possible, efficient routing logic, careful resource management, and granular permission boundaries. Getting all these layers working seamlessly is genuinely complex engineering.

Is this the future of all smartphone AI?

It seems likely. Users develop genuine preferences for specific AI tools. Single unified assistants create friction for users who want different tools. Multi-agent orchestration addresses real user needs. As adoption grows and user expectations shift, Android manufacturers will follow Samsung's lead (due to competitive pressure), and eventually even Apple might need to enable more choice. This represents a fundamental shift in how platforms approach AI: from dictating one solution to enabling user choice among specialized tools.

Should I start using Perplexity now to prepare for this integration?

Yes. Understanding your AI preferences now positions you to use multi-agent systems effectively when they arrive. Start using Perplexity for research questions, ChatGPT for brainstorming, Claude for writing, and other specialized tools for their specific strengths. This experimentation helps you understand which tools you genuinely prefer and why. When multi-agent systems become standard, you'll know exactly which tools to integrate and how to use them effectively.

Conclusion: The Beginning of a New Era

Samsung's decision to integrate Perplexity into Galaxy AI isn't a small feature announcement. It's a fundamental statement about where the mobile AI market is heading. The era of forced, single, unified AI assistants is ending. The era of specialized, user-chosen, multi-agent AI is beginning.

This shift makes enormous sense. Users have preferences. Different AIs genuinely are better at different things. Trying to shoehorn every capability into one assistant creates mediocrity across all capabilities. Specialized tools, properly orchestrated, create genuinely better experiences.

For Samsung specifically, this move differentiates the company in a crowded market. Apple and Google compete on ecosystem integration and capability. Samsung can compete on choice and orchestration. That's a genuine competitive advantage, especially for users tired of being locked into defaults they didn't choose.

For the broader AI industry, this signals that the platform layer—the orchestration layer—is becoming the value driver, not the model layer. Companies building AI models still matter tremendously, but increasingly they're building specialized tools that integrate into platforms rather than trying to own the entire user relationship.

For users, the immediate impact is choice. Soon you'll be able to use Perplexity for research directly from your phone through a voice command. That's genuinely useful. The longer-term impact is more profound: AI becomes a tool ecosystem rather than a product ecosystem. You curate your AI toolkit just like you curate your app toolkit. That's a more natural way to interact with AI.

The Galaxy Unpacked event will give us more details about how this works in practice. But the strategic direction is clear. Multi-agent AI is here. It's starting with mobile phones. It will expand to other platforms. And it represents the future of how we interact with AI technology.

The question now isn't "which AI is best?" The question is "how do I orchestrate multiple AIs into a coherent workflow that works for my specific needs?" Samsung is betting that enabling choice around that orchestration is the path to winning smartphone AI. Given how users actually behave, it's a smart bet. And it's just the beginning.
