The Bold History of Self-Driving Cars: From 1904 to Modern Waymo

When most people think about autonomous vehicles, they picture sleek Tesla Cybertrucks or Google's Waymo fleet gliding silently through city streets, cameras and LiDAR spinning atop their roofs. But here's the thing: the race to build a self-driving car didn't start in Silicon Valley during the 2010s. It didn't even start in Detroit during the 1950s. It started in Spain, in 1904, when a Spanish engineer named Leonardo Torres Quevedo built a small three-wheeled vehicle that responded to wireless radio signals. No cameras. No neural networks. No algorithms learning from millions of miles of driving data. Just ingenuity, electricity, and an idea so far ahead of its time that it would take another 100 years for the world to catch up.

The journey from Quevedo's telekino to today's fully autonomous vehicles is a story of false starts, broken promises, technical breakthroughs, and the gradual realization that teaching a machine to drive is exponentially harder than anyone initially believed. It's a story about how ambitious visions repeatedly collided with messy reality. It's about how engineers in the 1920s controlled cars by radio, how the 1950s imagined highways of the future that never materialized, how the 1980s and 1990s thought autonomous driving was just around the corner, and how the 2010s finally made real progress using deep learning and computer vision.

Understanding where self-driving cars came from helps explain where they're going. No major innovation in autonomous vehicles emerged from nowhere. Each one built on decades of failed experiments, theoretical work, and the persistent belief that one day, humans could surrender the wheel entirely.

TL;DR

- Leonardo Torres Quevedo's wireless telekino (1904) was among the earliest radio-controlled land vehicles, demonstrating that machines could be guided without an operator on board

- 1920s-1930s experiments in Dayton, Ohio and New York City proved radio-controlled cars worked in real traffic, though they remained impractical novelties

- 1939 GM Futurama exhibit imagined a fully automated highway system powered by underground electromagnetic cables and guided by wireless signals

- 1950s-1990s experimental vehicles like the Firebird II and Carnegie Mellon's ALVINN proved autonomous driving was technically possible but remained decades away from practical deployment

- 2004 DARPA Grand Challenge catalyzed the modern autonomous vehicle revolution, launching Stanford's autonomous car program and attracting serious research funding

- Deep learning and computer vision (2012-present) have been the actual game-changers, finally giving self-driving cars the perception and decision-making abilities needed for real-world driving

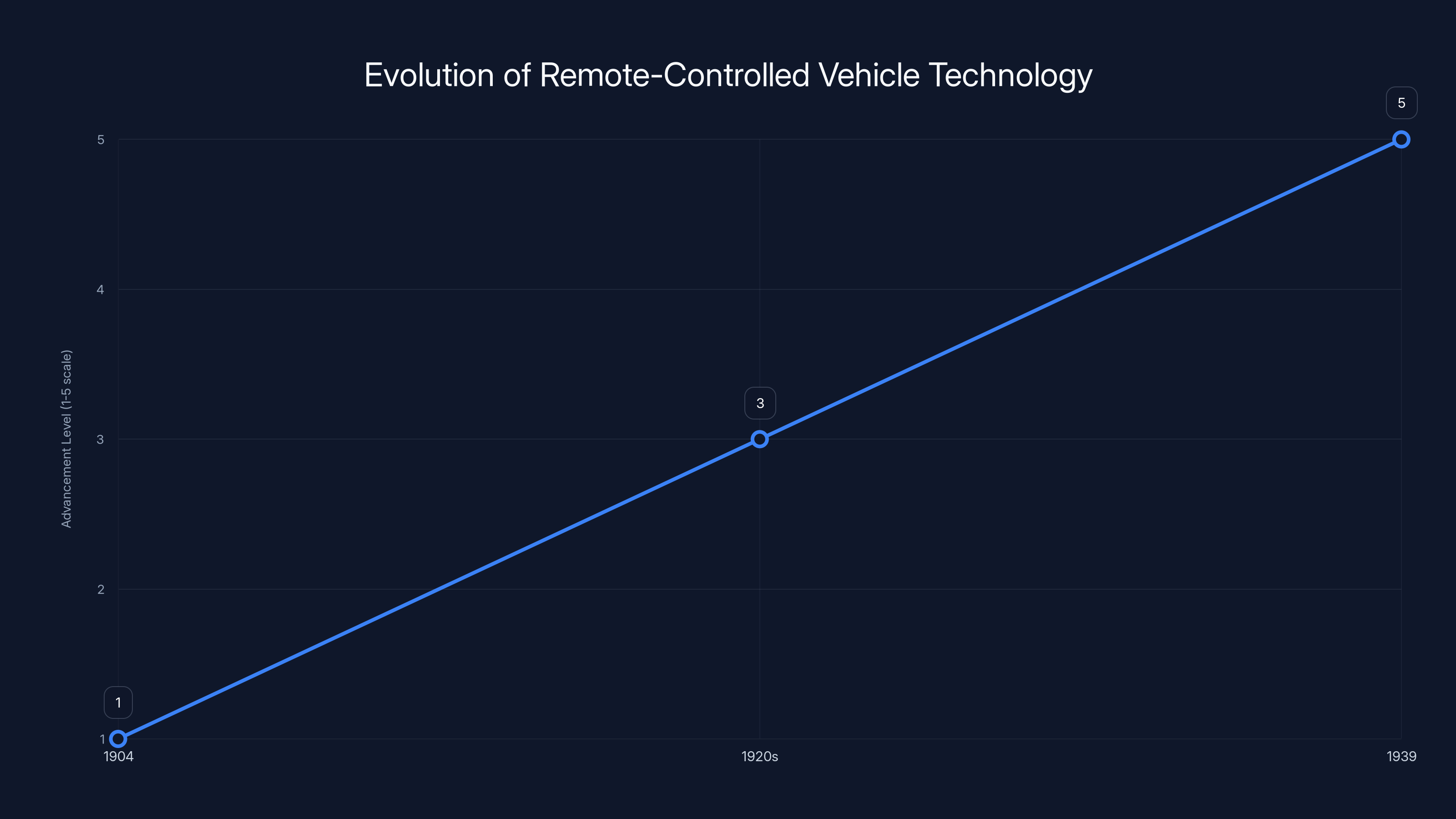

The timeline shows the progression from Leonardo Torres Quevedo's telekino in 1904 to GM's Futurama vision in 1939, illustrating increasing complexity and ambition in remote vehicle control technology. Estimated data.

Leonardo Torres Quevedo: The Forgotten Pioneer

Leonardo Torres Quevedo arrived in the world at an optimal moment for someone with engineering ambitions. Born in 1852 in Santa Cruz de Iguña, in northern Spain, he came of age during Europe's industrial revolution. But he wasn't simply a factory man or a railroad engineer. He was an inventor with vision, someone who understood that the future would belong to those who could automate the mundane, control the distant, and think several steps ahead of everyone else.

Quevedo is better known for his chess automaton, El Ajedrecista, which he first demonstrated publicly in 1914. This electromechanical device played a king-and-rook endgame against a human opponent's lone king and could reliably force checkmate. It is often cited as one of the earliest machines built to play a game on its own. But before he conquered chess, Quevedo had already conceived something equally revolutionary: remote control.

In 1902, Quevedo began developing what he called the "Telekino," a name derived from Greek roots meaning "distant movement." The original concept was for aerial ships. Quevedo understood that airships were dangerous, prone to accidents, and difficult to control in uncertain conditions. What if, instead of a pilot being physically present, the airship could be controlled from the ground by wireless signals? This wasn't science fiction. It was engineering.

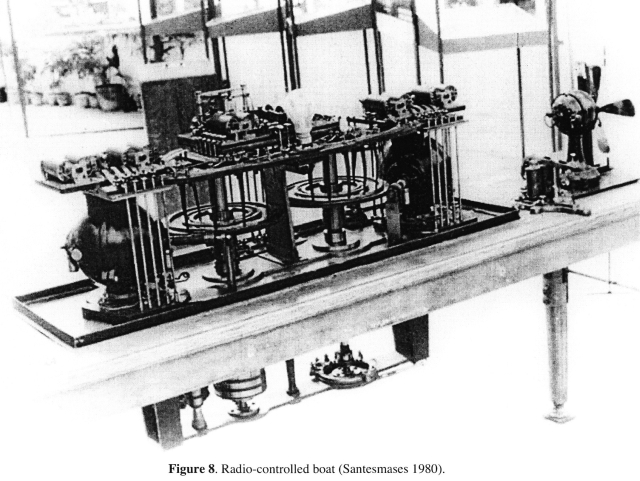

The telekino worked through an ingenious chain of wireless transmission and mechanical response. When Quevedo sent a wireless signal from a transmitter, it reached a receiver called a coherer mounted on the vehicle. The coherer detected the electromagnetic waves and converted them into electrical current. This current was then amplified and directed to electromagnets, which rotated switches that commanded small servomotors. These motors, in turn, controlled the vehicle's steering, throttle, and brakes.

What made this system revolutionary wasn't just that it worked, but that Quevedo could transmit up to 19 distinct commands to a remote vehicle. Nineteen different actions. That's remarkable precision for a system that relied on mechanical switching and electromagnetic pulses. In 1904, Quevedo successfully demonstrated the telekino controlling a small three-wheeled automobile from approximately 100 feet away, making it one of the earliest recorded instances of a land vehicle being controlled by radio waves.
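The command-selection idea can be sketched in a few lines. The source does not say how the 19 commands were encoded, so this sketch assumes a simple scheme: the number of pulses in a burst steps a selector switch to one of 19 positions, and a pause ends the burst. The `COMMANDS` table, the `gap` framing rule, and the function names are all hypothetical illustrations, not a reconstruction of the actual telekino.

```python
from typing import List, Optional

# Hypothetical command table with 19 slots, matching the count in the text.
# The real telekino assignments are not given in the source.
COMMANDS: List[str] = [f"command_{i}" for i in range(1, 20)]


def lookup(pulse_count: int) -> str:
    """Map a burst's pulse count to a command, ignoring out-of-range counts."""
    if 1 <= pulse_count <= len(COMMANDS):
        return COMMANDS[pulse_count - 1]
    return "ignored"


def decode_pulses(pulse_times: List[float], gap: float = 1.0) -> List[str]:
    """Group pulse timestamps (seconds) into bursts and decode each burst.

    Assumed framing rule: a silence longer than `gap` ends the current burst.
    """
    decoded: List[str] = []
    count = 0
    last: Optional[float] = None
    for t in pulse_times:
        if last is not None and t - last > gap:
            decoded.append(lookup(count))
            count = 0
        count += 1
        last = t
    if count:
        decoded.append(lookup(count))
    return decoded
```

For example, three quick pulses, a pause, then two more pulses would decode as the third command followed by the second.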

Quevedo received patents for the telekino in Spain, France, and the United States. The Spanish government, however, proved reluctant to fund the technology at scale. The Crown was cautious about military applications. Without state backing and facing skepticism from industry, Quevedo's work languished. He moved on to other inventions, and the telekino never achieved commercial success. But he had proven something fundamental: a vehicle could be guided by signals transmitted wirelessly. Control didn't require a human in the seat. It could be exerted from a distance.

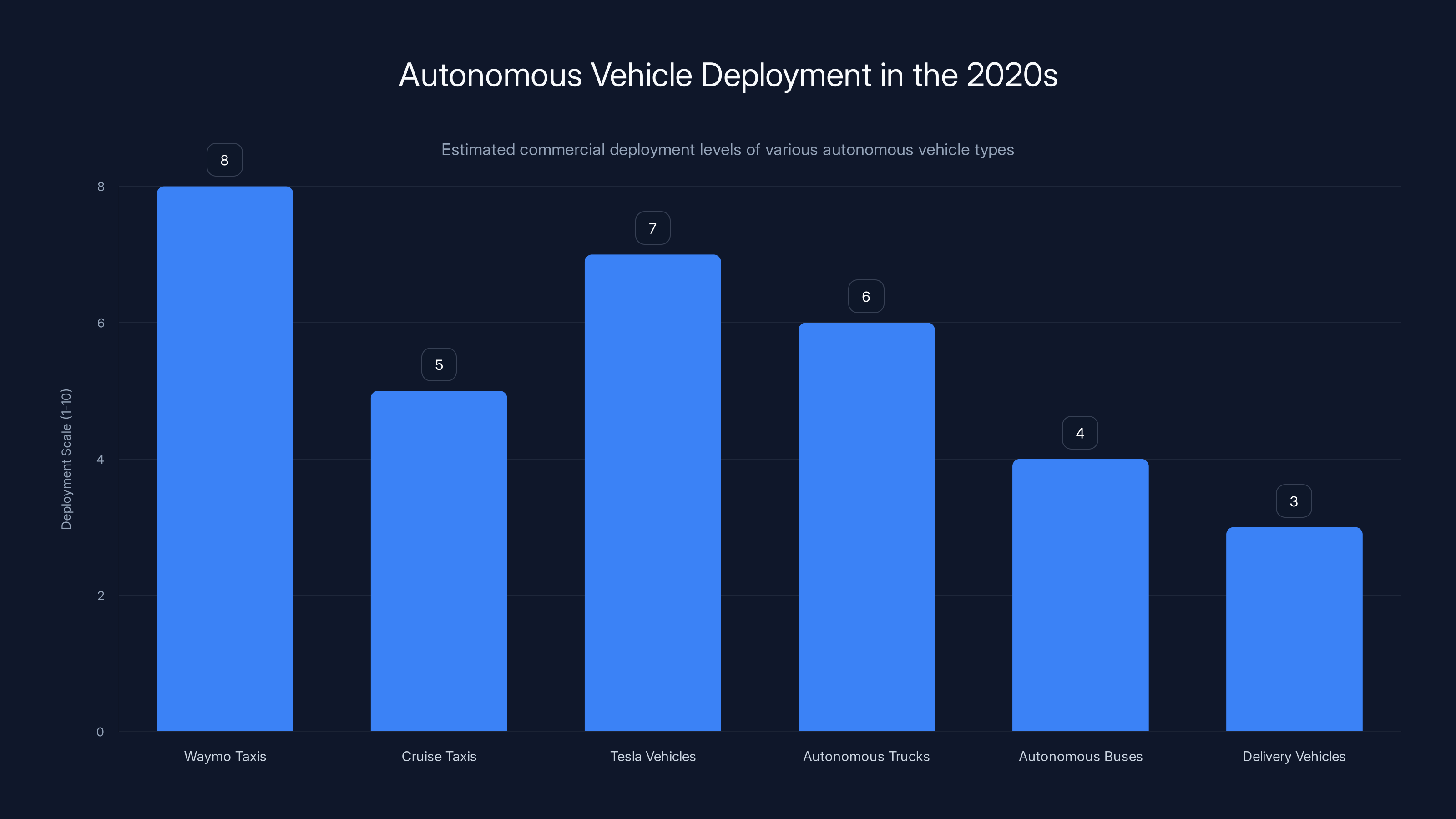

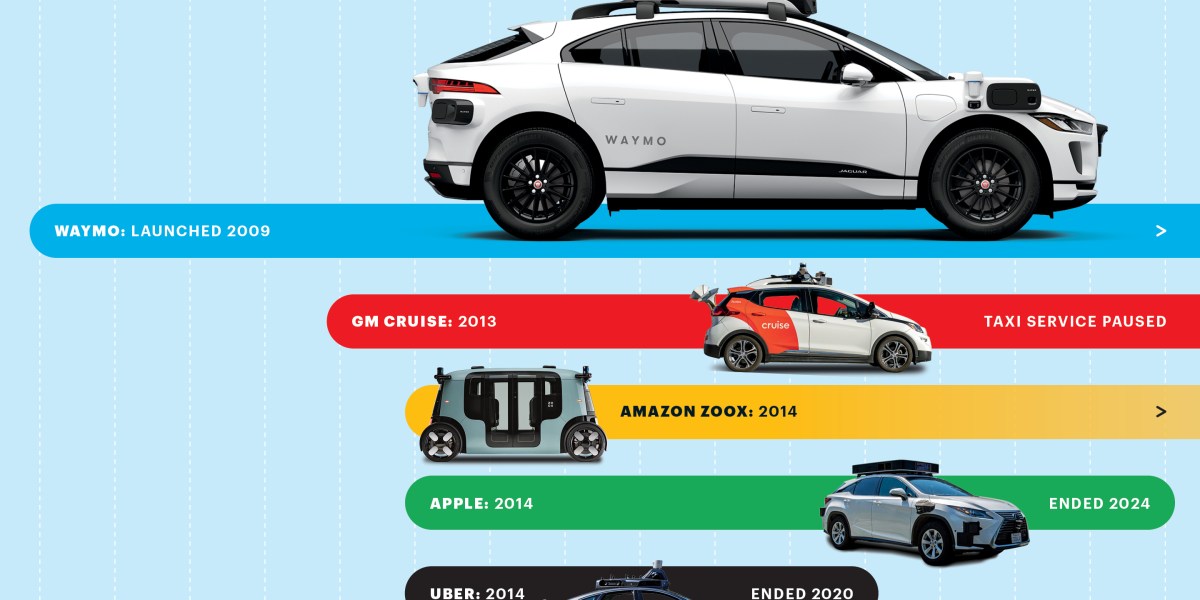

Waymo leads in autonomous taxi deployment, while Tesla's semi-autonomous systems are widely used. Estimated data reflects commercial deployment levels.

The 1920s Radio-Controlled Automobile Craze

After Quevedo's initial breakthrough faded into relative obscurity, the idea of remote-controlled vehicles didn't disappear. Instead, it migrated across the Atlantic and resurfaced in America during the 1920s, a period of unprecedented economic optimism and technological enthusiasm.

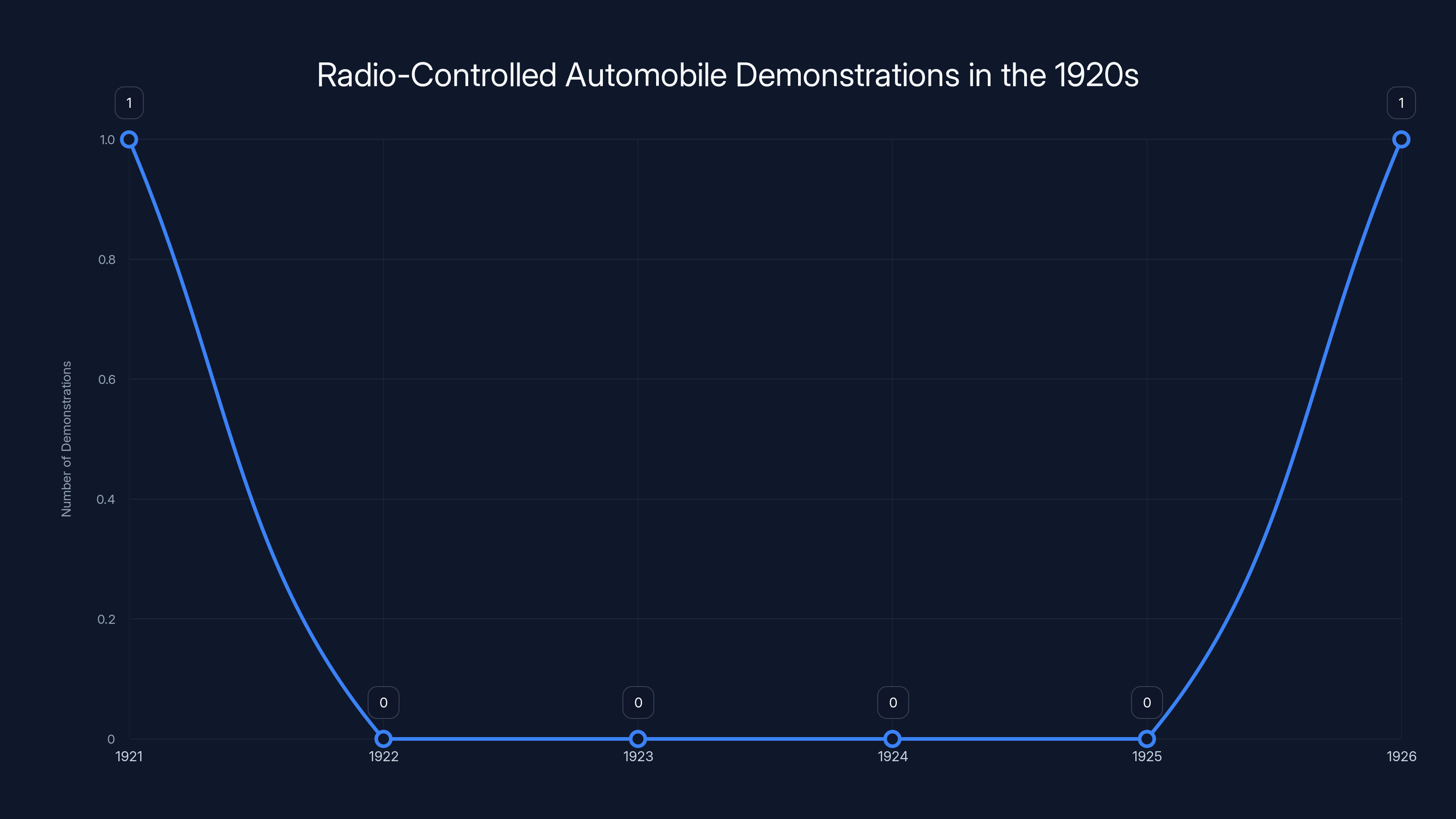

On August 5, 1921, in Dayton, Ohio, something remarkable happened. Captain R. E. Vaughn of the nearby McCook Field Army Air Service sat inside a chase vehicle, hands gripping a radio transmitter. Before him, about fifty feet away, a small three-wheeled automobile rolled through Dayton's business district without a driver. No human sat behind its steering wheel. The car responded to Vaughn's radio signals, accelerating, braking, turning. This was the United States Army's public demonstration of remote-controlled automotive technology.

Dayton was the perfect place for this demonstration. The city was a nerve center of American automotive and aeronautical innovation. General Motors had established its Frigidaire Division there, promising an electrified future of automatic refrigerators and modern home appliances. Nearby McCook Field was an Army Air Service facility where aviation experiments happened regularly. The intellectual environment was primed for innovation. When the Army brought a radio-controlled car to the streets of Dayton, it wasn't treated as an oddity. It was treated as evidence of progress.

Five years later, the spectacle moved east. In 1926, on the streets of New York City, another radio-controlled vehicle made headlines. This time it was a 1926 Chandler automobile, a well-regarded touring car of the period. The car sat quietly at the curb while curious onlookers gathered. Then, without any human at the wheel, the engine turned over. The gears engaged. The vehicle pulled smoothly into traffic, merged onto Fifth Avenue, and navigated the busy streets of Manhattan, responding to wireless commands from a chase car following behind.

The operator was Francis P. Houdina, an inventor who dubbed his system the "Houdina Radio Control." The name echoed that of the famous magician Harry Houdini, though the two were not related. Houdina's system was more sophisticated than the military's earlier experiment. He installed antennas on the roof of the Chandler, and the wireless signals operated circuit breakers and small electric motors that controlled steering, throttle, brakes, and horn. For spectators watching a car drive itself down Fifth Avenue in 1926, it must have seemed like genuine magic, a piece of automotive wizardry.

The demonstrations continued into the 1930s. In Cincinnati, an inventor named Maurice J. Francill, who styled himself "America's Radio Wizard," demonstrated his own version of radio-controlled vehicles. Francill went beyond cars. He showed how radio control could operate farm equipment, milk cows, bake bread in ovens, and manage laundry operations. By 1936, newspapers from Ohio to California were still reporting his feats. The Orange County News declared that "Francill claims that he can accomplish anything the human hand can do by radio."

Francill's systems required substantial radio apparatus. He specified that eight pounds of radio circuitry was needed to control lights, ignition, horn, and motor starting. An additional five pounds of apparatus handled steering. This was heavy, power-hungry equipment. But it worked. In a world before digital electronics, before microprocessors, before anything resembling modern circuitry, engineers had successfully built systems that could guide vehicles remotely.

These demonstrations proved something important: remote control wasn't theoretical. It worked in real conditions, on real streets, with real traffic. The novelty of the demonstrations—the sheer spectacle—shouldn't obscure what they actually demonstrated. Engineers had solved the basic problem of transmitting control signals wirelessly and translating those signals into vehicle movements. What they hadn't solved, and wouldn't solve for decades, was how to make the vehicle itself intelligent enough to decide where to go, how to navigate obstacles, and how to respond to unpredictable situations without constant human guidance.

The Golden Age of Automotive Imagination: 1939-1956

The 1930s saw Americans emerge from the Great Depression with a curious mixture of caution and optimism. By 1939, when the New York World's Fair opened in Queens, the mood had shifted decisively toward optimism about the future. Technology, Americans believed, would solve problems. Engineering would make life better, safer, more convenient. It was in this context that General Motors unveiled Futurama, one of the most popular exhibits at the entire fair.

Futurama was a massive display, a showcase of automotive and urban futures. Fairgoers sat in moving chairs that carried them above a miniature city landscape, a diorama of the future built to scale. What they saw was remarkable: tiny electric vehicles moving smoothly along highways without drivers. These weren't random vehicles. They were traveling in orderly streams, coordinated and synchronized, never colliding, never deviating from their paths. It was a vision of perfect automotive harmony.

How would this work? GM explained that the vehicles would be guided by radio signals and electric currents running through cables and circuits embedded beneath the pavement. The highways of the future would contain an electromagnetic field that would both power the vehicles and direct their course. No steering wheels necessary. No human drivers needed. The road itself would guide the cars.

This wasn't merely entertainment. It represented serious engineering thinking at General Motors. During the 1930s and 1940s, GM had invested substantial resources into thinking about the future of transportation. The Futurama exhibit reflected real research into highway automation, wireless power transmission, and vehicle guidance systems. It suggested that autonomous driving wasn't a distant fantasy. It was a plausible future, perhaps one or two decades away.

After World War II, engineers didn't abandon the dream. In fact, they returned to it with renewed energy. At General Motors' Motorama, a traveling showcase of experimental vehicles and advanced concepts, the company continued to display visions of automated driving. In 1956, GM introduced the Firebird II, an experimental vehicle that embodied the future as the company understood it.

The Firebird II was stunning in appearance, a sleek, jet-inspired automobile with a bubble canopy and advanced aerodynamics. But beyond its styling, the Firebird II was designed with autonomous driving in mind. The vehicle had experimental systems for navigation and control. Crucially, the Firebird II was envisioned as part of a larger automated highway system. Cars like the Firebird II would travel on highways equipped with electronic guidance systems. The vehicle would follow these electronic roads, guided by signals transmitted from the roadway infrastructure itself.

The concept was called the Automated Highway System, or AHS. In theory, it was elegant. Cars would be guided by electronic systems in the road. Drivers would simply steer onto the automated highway, relinquish control to the road's guidance system, and ride along while the infrastructure controlled acceleration, braking, and steering. As their exit approached, drivers would regain control and navigate local streets manually.

This vision dominated automotive and traffic engineering thinking for decades. It combined the romance of automation with the practicality of removing humans from the boring parts of driving (especially highway driving on long interstate journeys). But it had a critical flaw: it required rebuilding every major highway in the country with embedded electronic guidance systems. The cost would have been astronomical. The engineering challenges were substantial. And as highways continued to grow and evolve, retrofitting them became logistically impossible.

By the 1960s, it became clear that the Automated Highway System wasn't going to happen. At least not anytime soon. The dream persisted, but in a different form.

The 1920s saw key demonstrations of radio-controlled cars in 1921 and 1926, showcasing technological progress. Estimated data based on historical accounts.

The Quiet Decades: 1960s-1970s

After the optimistic promises of the 1950s, the 1960s and 1970s witnessed a curious phenomenon: the autonomous vehicle dream faded from public consciousness, though research quietly continued.

The space race dominated American technological imagination during the 1960s. The Apollo program absorbed massive resources, national attention, and engineering talent. Autonomous vehicles seemed quaint by comparison. Why solve urban driving when humans could reach the moon? Meanwhile, the Vietnam War, social upheaval, and economic turbulence made the bright future promised by Futurama seem naive.

Automakers continued to research automated systems, but quietly. There were no spectacular public demonstrations. No World's Fair exhibits promising cars that drove themselves. Instead, engineers worked in labs and test facilities, advancing incrementally on problems of vehicle control, navigation, and electronic systems. The research was serious, but invisible to the public.

During this period, engineers grappled with fundamental questions that remain central to autonomous driving today. How does a vehicle perceive its environment? How does it identify obstacles? How does it make decisions about acceleration and steering based on what it perceives? In the 1960s and 1970s, these were unsolved problems. Computers existed, but they were massive, power-hungry, and limited in their processing capabilities. Sensors existed, but they were crude. The idea of building a computer small and powerful enough to drive a car in real time seemed far-fetched.

Thus the decades of the 1960s and 1970s represent a kind of technological winter for autonomous driving. The grand visions of the 1950s had proven impractical. The technology didn't yet exist to make autonomous vehicles work without expensive infrastructure. And public interest had shifted elsewhere.

But this quiet period wasn't wasted. Researchers were advancing the underlying technologies that would eventually make autonomous driving possible: computers, sensors, control systems, and algorithms. The groundwork was being laid, even if few people realized it.

The VAMP Project and the 1980s Resurgence

By the 1980s, computers had become smaller, more powerful, and more affordable. Microprocessors that would have filled a room in the 1950s now fit on a single chip. The personal computer revolution was beginning. Against this backdrop, serious research into autonomous vehicles resumed.

One of the most significant projects of the 1980s was the VAMP (Vision-based Autonomous Mobile Platform), developed at Carnegie Mellon University. VAMP was a converted van equipped with cameras, image processing hardware, and onboard computers. The system used computer vision—processing images from cameras to identify lanes, road markers, and obstacles.

The vision-based approach was philosophically different from earlier attempts at automation. Rather than relying on infrastructure (embedded electronic guidance systems in roads), VAMP mounted the intelligence on the vehicle itself. The vehicle would look at the world through cameras and decide how to respond based on what it saw.

This was closer to how humans drive. We perceive our environment visually, then make decisions based on that perception. VAMP attempted to replicate that process computationally. Using edge detection algorithms and image processing techniques, VAMP could identify lane markings and road structure. Based on this visual information, the vehicle's onboard computer could command steering adjustments, keeping the vehicle within its lane.
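The lane-keeping idea described above can be illustrated with a toy example. This is a minimal sketch, not the project's actual pipeline: it assumes a single grayscale scanline with bright markings on a dark road, finds edges with a central-difference gradient, and turns the lane-center offset into a proportional steering value. The function names, the threshold, and the gain are hypothetical.

```python
from typing import List, Optional


def make_row(width: int, marking_starts: List[int], marking_width: int = 3) -> List[int]:
    """Build a synthetic scanline: dark road (0) with bright markings (255)."""
    row = [0] * width
    for start in marking_starts:
        for col in range(start, min(start + marking_width, width)):
            row[col] = 255
    return row


def lane_offset(row: List[int], threshold: int = 100) -> Optional[float]:
    """Return the lane center's offset from the image center, in pixels.

    Edges are columns where the central-difference brightness gradient is
    large. Edges left and right of the image center are averaged to locate
    the two markings. Returns None if either marking is missing.
    """
    center = (len(row) - 1) / 2
    edges = [i for i in range(1, len(row) - 1)
             if abs(row[i + 1] - row[i - 1]) > threshold]
    left = [i for i in edges if i < center]
    right = [i for i in edges if i > center]
    if not left or not right:
        return None
    lane_center = (sum(left) / len(left) + sum(right) / len(right)) / 2
    return lane_center - center


def steering_command(offset: float, gain: float = 0.02) -> float:
    """Proportional steering: positive means steer right (assumed convention)."""
    return gain * offset
```

A positive offset means the lane center appears to the right of the image center, so the sketch steers right to re-center. Real systems work on full images, handle curves and missing markings, and filter the result over time.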

VAMP was not fully autonomous in the modern sense. A human could still intervene. But it demonstrated something important: a vehicle could navigate using only visual perception and onboard computation, without special infrastructure, without radio signals from remote operators, and without embedded highway systems.

The VAMP project and similar research throughout the 1980s reignited interest in autonomous driving among engineers and researchers. Universities began establishing research programs. The question was no longer "Can we build a self-driving car?" but rather "How do we make them work reliably?"

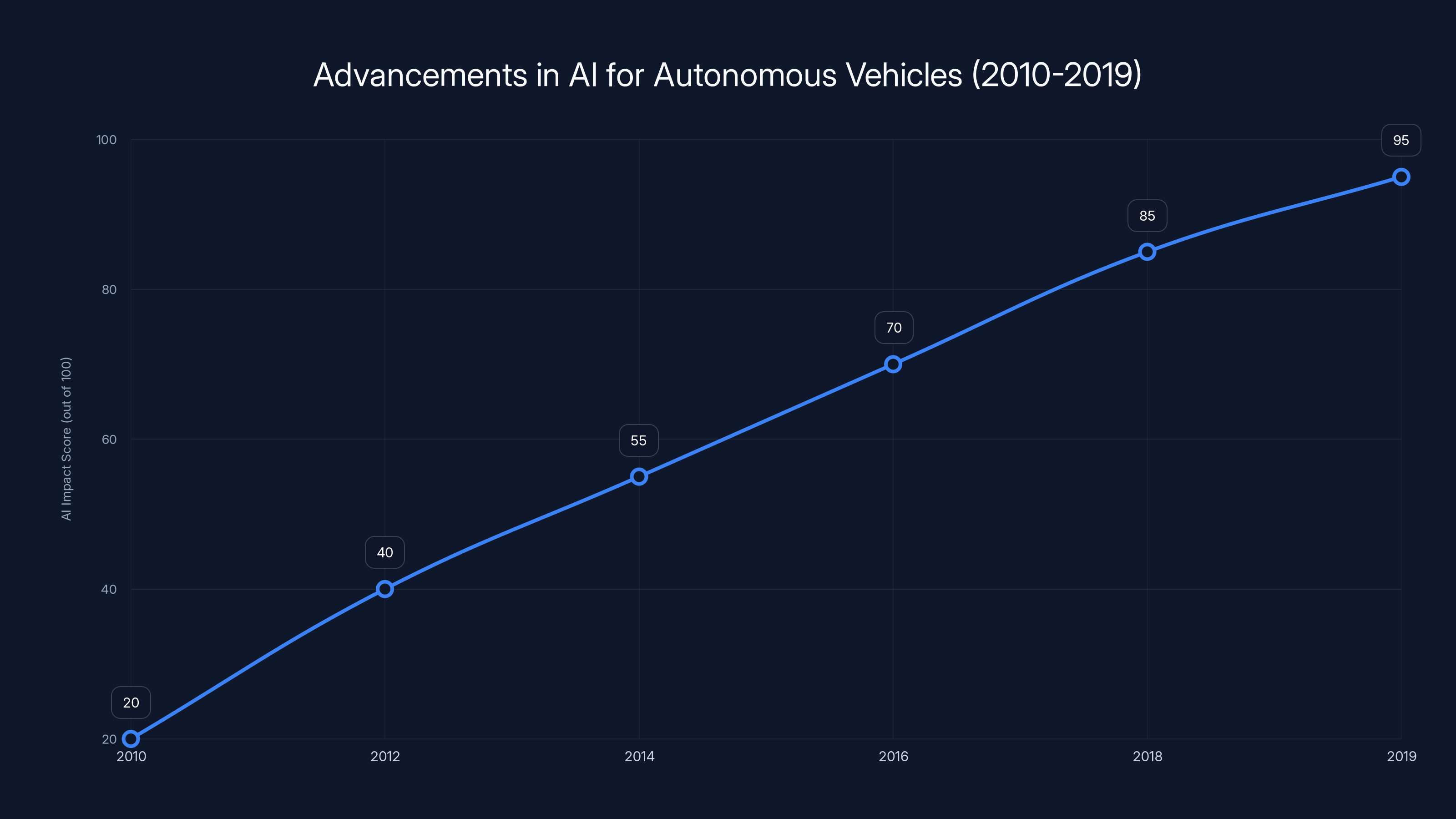

The 2010s saw significant advancements in AI for autonomous vehicles, with deep learning driving major improvements. Estimated data reflects the growing impact of AI technologies over the decade.

The 1990s: Neural Networks and Autonomous Lane Following

As the 1980s transitioned into the 1990s, a shift occurred in how researchers approached autonomous driving. Rather than relying on hand-crafted algorithms for image processing, researchers began experimenting with neural networks.

Neural networks are computational systems loosely inspired by how brains work. They consist of interconnected nodes (artificial neurons) that process information and pass results through layers. A neural network can be trained on examples. Show it thousands of images of roads, and tell it "this is a left lane marking" and "this is a right lane marking," and the network learns patterns. Later, when shown a new road image, it can identify lane markings without explicit programming.

At Carnegie Mellon, researchers led by Dean Pomerleau developed ALVINN (Autonomous Land Vehicle In a Neural Network). ALVINN was more sophisticated than VAMP. The system used a camera pointing at the road ahead and a neural network trained to predict steering commands. The network was trained by showing it road images captured during human driving, along with the corresponding steering inputs. The network learned the relationship between visual input and steering output.
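The training idea, learning steering from recorded pairs of images and human steering inputs, can be sketched at toy scale. The example below is far simpler than ALVINN and is not its architecture: it assumes an 8-pixel one-row "image" with a single bright pixel marking the road center, and fits a single linear layer by stochastic gradient descent. The image encoding, learning rate, and function names are assumptions made for illustration.

```python
from typing import List, Tuple

WIDTH = 8  # pixels in the toy one-row "camera image" (assumed)


def make_example(marker: int) -> Tuple[List[float], float]:
    """Return (image, steering) where steering is in [-1, 1].

    The bright pixel marks where the road center appears. The "human"
    steering label is proportional to its distance from the image center.
    """
    image = [1.0 if i == marker else 0.0 for i in range(WIDTH)]
    half = (WIDTH - 1) / 2
    return image, (marker - half) / half


def predict(weights: List[float], bias: float, image: List[float]) -> float:
    """Linear model: weighted sum of pixels plus a bias."""
    return sum(w * x for w, x in zip(weights, image)) + bias


def train(examples: List[Tuple[List[float], float]],
          epochs: int = 200, lr: float = 0.2) -> Tuple[List[float], float]:
    """Fit weights by stochastic gradient descent on squared error."""
    weights = [0.0] * WIDTH
    bias = 0.0
    for _ in range(epochs):
        for image, target in examples:
            error = predict(weights, bias, image) - target
            for i, x in enumerate(image):
                weights[i] -= lr * error * x
            bias -= lr * error
    return weights, bias


examples = [make_example(m) for m in range(WIDTH)]
weights, bias = train(examples)
```

After training, `predict(weights, bias, image)` closely reproduces the recorded steering for each training image. Nothing in the code states the rule "steer toward the road center"; the mapping is recovered from examples, which is the point the paragraph above makes.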

The results were impressive. ALVINN could autonomously drive a vehicle on real roads, adjusting steering to stay within lanes and avoid obstacles. By the mid-1990s, ALVINN had driven thousands of miles autonomously, both on test tracks and public roads. It wasn't perfect—it would occasionally make errors—but it demonstrated that neural networks could learn to drive, even with 1990s-era computing hardware.

The significance of ALVINN and similar neural network-based approaches was philosophical and practical. Philosophically, it showed that driving could potentially be learned rather than explicitly programmed. Practically, it showed that the computational approach might work. But there was still a gap between ALVINN's lane-following ability on relatively simple roads and the kind of all-weather, urban, obstacle-avoiding autonomy that a truly self-driving car would require.

During the 1990s, researchers continued to refine these approaches. But practical autonomous vehicles remained largely confined to research institutions and laboratories. No automakers had committed to bringing autonomous vehicles to market. The technology worked in controlled conditions, on good roads, in fair weather. But it wasn't ready for the messy reality of everyday traffic.

The 2000s: DARPA, Challenges, and Renewed Momentum

The 2000s began with a turning point. In 2004, the Defense Advanced Research Projects Agency (DARPA) held its first Grand Challenge, a competition to design and build an autonomous vehicle capable of driving itself over a roughly 150-mile course through the Mojave Desert without human intervention.

DARPA's motivation was military. The Pentagon wanted autonomous vehicles for logistics and combat operations. But the Grand Challenge was open to anyone: universities, private companies, startups, hobbyists. Whoever could build a vehicle that completed the course would win a $1 million prize.

The competition generated enormous excitement and attracted serious talent. Major universities mobilized research teams. Carnegie Mellon assembled a substantial effort. So did Stanford University. The competition caught the attention of entrepreneurs and engineers who had previously dismissed autonomous driving as too far in the future to warrant serious investment.

The first Grand Challenge, in 2004, was humbling. No entrant completed the course, and the vehicle that got farthest traveled only 7.32 miles before becoming disabled. But the fact that some vehicles completed even a portion of the course using autonomous navigation was significant. DARPA had catalyzed a shift in how the engineering community approached the problem.

DARPA held additional challenges. In 2005, the Grand Challenge was repeated, and this time multiple vehicles completed the entire 131-mile course. In 2007, the Urban Challenge required autonomous vehicles to navigate a mock city environment, obeying traffic rules, responding to obstacles, and competing against other autonomous vehicles.

These competitions drew interest from established automakers. But more importantly, they attracted researchers and entrepreneurs who became convinced that autonomous driving could actually work. The competitions demonstrated progress. With each event, vehicles completed longer distances, navigated more complex environments, and responded to more sophisticated scenarios.

Stanford's entry, an autonomous vehicle named Stanley, won the 2005 Grand Challenge. The success brought visibility to Stanford's autonomous driving research and established the university as a leader in the field. Sebastian Thrun, who led Stanford's team, would later become director of Google's autonomous vehicle program (now Waymo).

The DARPA challenges marked a transition. Autonomous vehicles moved from being an interesting research novelty to being a genuine technical challenge that serious organizations were competing to solve. Money started flowing toward autonomous driving research. The venture capital community began paying attention. It became plausible that autonomous vehicles could actually reach the road within a decade or two.

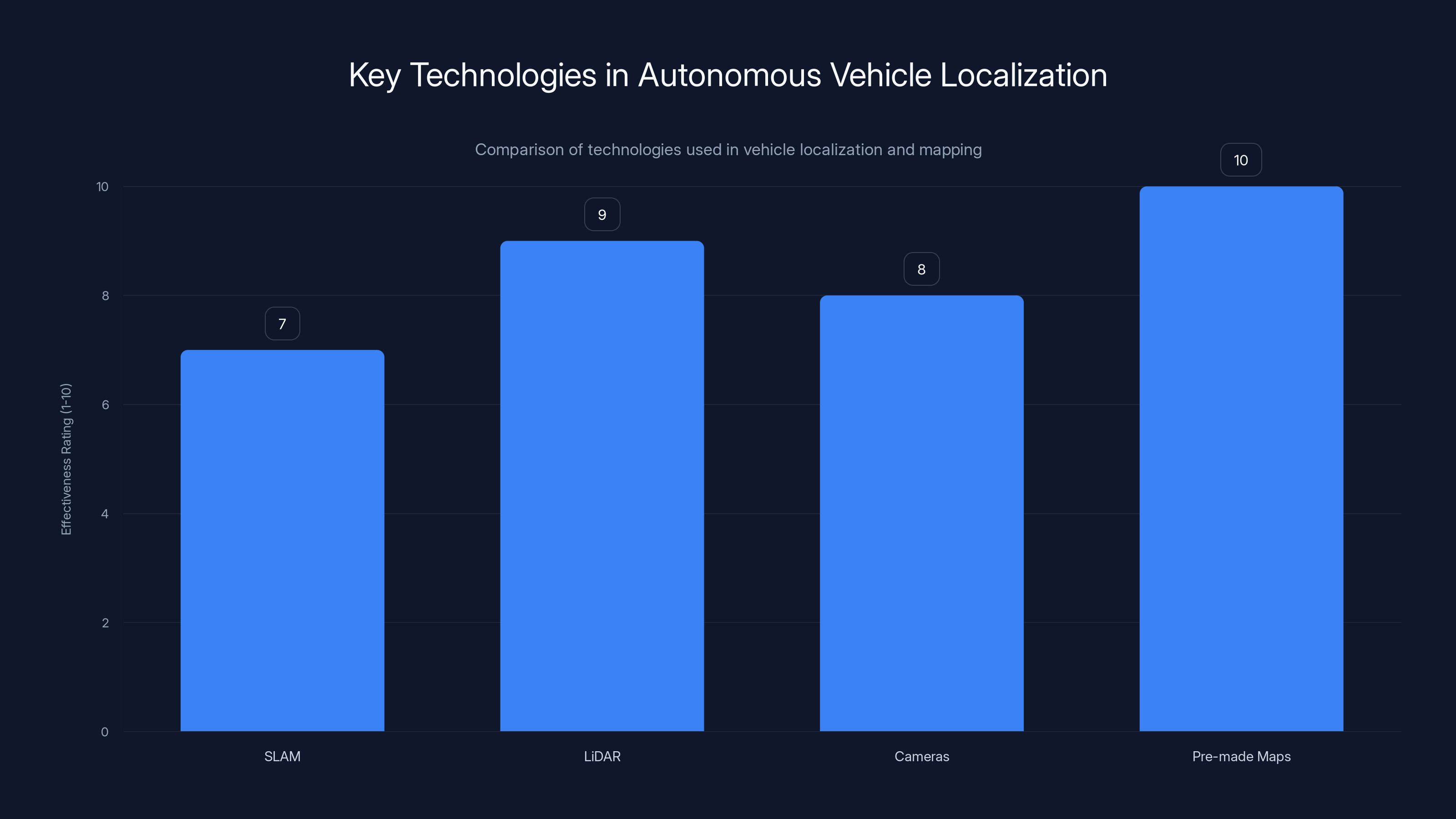

Pre-made maps are rated highest in effectiveness for localization due to their precision and reliability, followed by LiDAR and cameras. Estimated data.

The 2010s: Deep Learning and the AI Breakthrough

The real game-changer for autonomous vehicles came not from traditional roboticists, but from artificial intelligence researchers working on deep learning. In 2012, a University of Toronto team of Alex Krizhevsky, Ilya Sutskever, and their advisor Geoffrey Hinton won the ImageNet image-recognition competition using a deep convolutional neural network trained on GPUs (graphics processing units).

Their network, called AlexNet, wasn't designed for autonomous driving. It was designed for image classification—looking at pictures and identifying what objects they contained. But the implications for autonomous driving were immediately clear to researchers in the field. If neural networks could recognize objects in images, they could potentially recognize pedestrians, other vehicles, road signs, and obstacles from a self-driving car's camera feeds.

What made deep learning revolutionary was its performance and its generality. Previous approaches to autonomous driving relied on hand-crafted features and algorithms designed by engineers with specific knowledge about roads, traffic, and driving. Deep learning could learn features directly from data. Show the network millions of labeled images, and it learns to recognize patterns without explicit programming.

Google had placed its bet even before that breakthrough. In 2009, Google began a secret autonomous vehicle project, recruiting researchers including Sebastian Thrun from Stanford. Google had access to massive computing resources, nearly unlimited funding, and enormous amounts of data from Google Street View and other sources. The company began training neural networks on massive datasets of traffic images, driving videos, and sensor data.

By the early 2010s, it became clear that deep learning was the right approach for autonomous driving. Traditional computer vision techniques reached a performance ceiling. Deep learning, by contrast, improved with more data and more compute. As companies accumulated more driving data and GPUs became more powerful, autonomous driving systems became better.

Tesla, the electric vehicle manufacturer led by Elon Musk, launched Autopilot in 2014. This semi-autonomous driving system could control steering, acceleration, and braking on highways. Autopilot couldn't fully drive the car autonomously, but it represented a real product available to consumers that used many of the techniques (neural networks, camera-based perception, real-time control) that research teams had been developing.

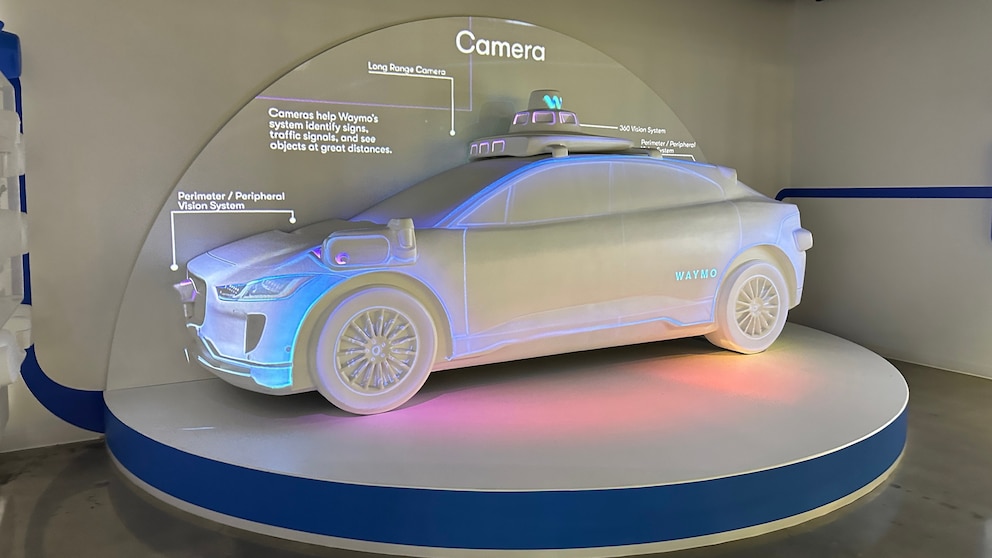

Waymo (which spun out from Google's autonomous vehicle project) continued to develop fully autonomous vehicles. By 2018, Waymo had deployed autonomous vehicles in limited commercial service in Phoenix, Arizona, picking up and dropping off passengers with minimal human intervention. It was the first true driverless taxi service, even if it was geographically limited.

The key technical breakthrough underlying this progress was the combination of three elements:

- Deep neural networks for perception (recognizing pedestrians, vehicles, traffic signs, road markings)

- Massive datasets of driving videos and sensor data for training

- Powerful computing hardware (GPUs and specialized AI accelerators) for running inference in real time

These three elements were largely absent in the 1990s and 2000s. The combination became possible in the 2010s, enabling the dramatic progress in autonomous driving.

Technical Evolution: Sensors and Perception

Astonishing progress in deep learning was necessary but insufficient for autonomous vehicles. The technology stack of a self-driving car requires more than just neural networks for image understanding. It requires a sophisticated sensor suite and hardware architecture.

Early autonomous vehicles in the 1980s and 1990s relied primarily on cameras. Vision-based approaches had appeal: cameras were cheap, human drivers use vision, and image processing seemed tractable. But cameras alone have limitations. They struggle in poor lighting, they require massive amounts of processing to extract 3D spatial information from 2D images, and they can fail in ways that are unpredictable and difficult to debug.

Modern autonomous vehicles employ a sensor fusion approach: multiple sensor types feeding information to a central processing system. The sensor suite typically includes:

LiDAR (Light Detection and Ranging): A sensor that emits laser pulses and measures the time for reflections to return, creating a precise 3D map of the environment. LiDAR provides direct depth information, making it excellent for obstacle detection and localization. It works at night and in low light, because it supplies its own illumination. The main drawback is cost. Early LiDAR systems cost tens of thousands of dollars per unit. Modern solid-state LiDAR is becoming cheaper, but remains expensive compared to cameras.

Radar: Radio detection and ranging systems that emit radio waves and detect reflections. Radar excels at measuring velocity (how fast things are moving toward or away from the sensor) and works well in rain, snow, and fog. Where LiDAR struggles in adverse weather, radar thrives. Most autonomous vehicles use multiple radar units, often one pointing forward and others pointing to the sides and rear.

Cameras: Multiple cameras positioned around the vehicle provide the primary visual perception. Modern systems use neural networks to recognize pedestrians, vehicles, traffic signs, lane markings, and other relevant objects. Cameras provide rich texture and color information that other sensors don't capture.

Inertial Measurement Units (IMUs): These sensors measure acceleration and rotation, providing feedback about the vehicle's motion and helping with localization.

GPS and GNSS: Global positioning systems provide location, though in urban environments with tall buildings (urban canyons), GPS alone is insufficient.
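The ranging principles behind the LiDAR and radar entries above reduce to two short formulas. The sketch below assumes an idealized single return: LiDAR range is half the round-trip time multiplied by the speed of light, and radar radial velocity follows from the standard Doppler relation for a reflected wave. The function names are illustrative.

```python
SPEED_OF_LIGHT = 299_792_458.0  # meters per second


def lidar_range(round_trip_seconds: float) -> float:
    """Distance to a reflector from a laser pulse's round-trip time.

    The pulse travels out and back, so the one-way distance is c * t / 2.
    """
    return SPEED_OF_LIGHT * round_trip_seconds / 2.0


def radar_radial_velocity(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Radial velocity from the Doppler shift of a reflected radar wave.

    Uses v = c * shift / (2 * carrier). With this sign convention, a
    positive shift means the target is approaching.
    """
    return SPEED_OF_LIGHT * doppler_shift_hz / (2.0 * carrier_hz)
```

As a usage example, a reflection arriving 200 nanoseconds after the pulse left corresponds to a target about 30 meters away. The sub-microsecond timescale is one reason LiDAR needs very precise timing electronics.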

The genius of sensor fusion is redundancy and complementarity. If LiDAR detects an obstacle, cameras provide additional confirmation. If radar measures velocity of an approaching vehicle, that information enhances the vehicle's decision-making. If GPS signals are lost momentarily, IMU and wheel encoders provide dead reckoning.
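Dead reckoning, the fallback mentioned above for brief GPS outages, can be sketched as a pose update from two inputs: distance traveled (from wheel encoders) and yaw rate (from the IMU). This is a minimal planar sketch that assumes noise-free inputs and a flat road; the function name and argument layout are hypothetical.

```python
import math
from typing import Tuple


def dead_reckon(x: float, y: float, heading: float,
                distance: float, yaw_rate: float, dt: float) -> Tuple[float, float, float]:
    """Advance a 2D pose by one time step.

    x, y      position in meters
    heading   radians, counterclockwise from the x-axis
    distance  meters traveled during the step (wheel encoders)
    yaw_rate  radians per second (IMU gyroscope)
    dt        step duration in seconds

    The displacement is applied along the heading at the middle of the
    step, a common approximation for small steps.
    """
    mid_heading = heading + 0.5 * yaw_rate * dt
    new_x = x + distance * math.cos(mid_heading)
    new_y = y + distance * math.sin(mid_heading)
    new_heading = heading + yaw_rate * dt
    return new_x, new_y, new_heading
```

Because each step adds a little error, the estimate drifts over time. That is why dead reckoning bridges short gaps rather than replacing an absolute position source.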

But collecting sensor data is only the first step. The data must be processed, fused, and interpreted. Modern autonomous vehicles perform real-time processing that would have been unimaginable in earlier decades. A vehicle might process terabytes of sensor data every day. Advanced AI systems must fuse information from multiple sensors, predict future positions of other road users, make decisions about acceleration and steering, and ensure safety at all times.

The engineering challenges are substantial. Sensors must be calibrated and synchronized. Sensor data must be processed with latencies measured in milliseconds—delays exceeding a few hundred milliseconds can compromise safety. The processing must be reliable and deterministic. Unlike smartphone apps that can occasionally crash, an autonomous vehicle's control systems must function reliably in all conditions.
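A quick calculation shows why latencies of a few hundred milliseconds matter: the vehicle keeps moving while the system is still processing. The helper below is a simple illustration with a hypothetical name; it assumes constant speed over the delay.

```python
def blind_distance_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance in meters covered at constant speed during a processing delay."""
    speed_m_per_s = speed_kmh / 3.6
    return speed_m_per_s * (latency_ms / 1000.0)
```

At 108 km/h (30 m/s), a 300 ms delay means the car travels about 9 meters, roughly two car lengths, before it can begin to react.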

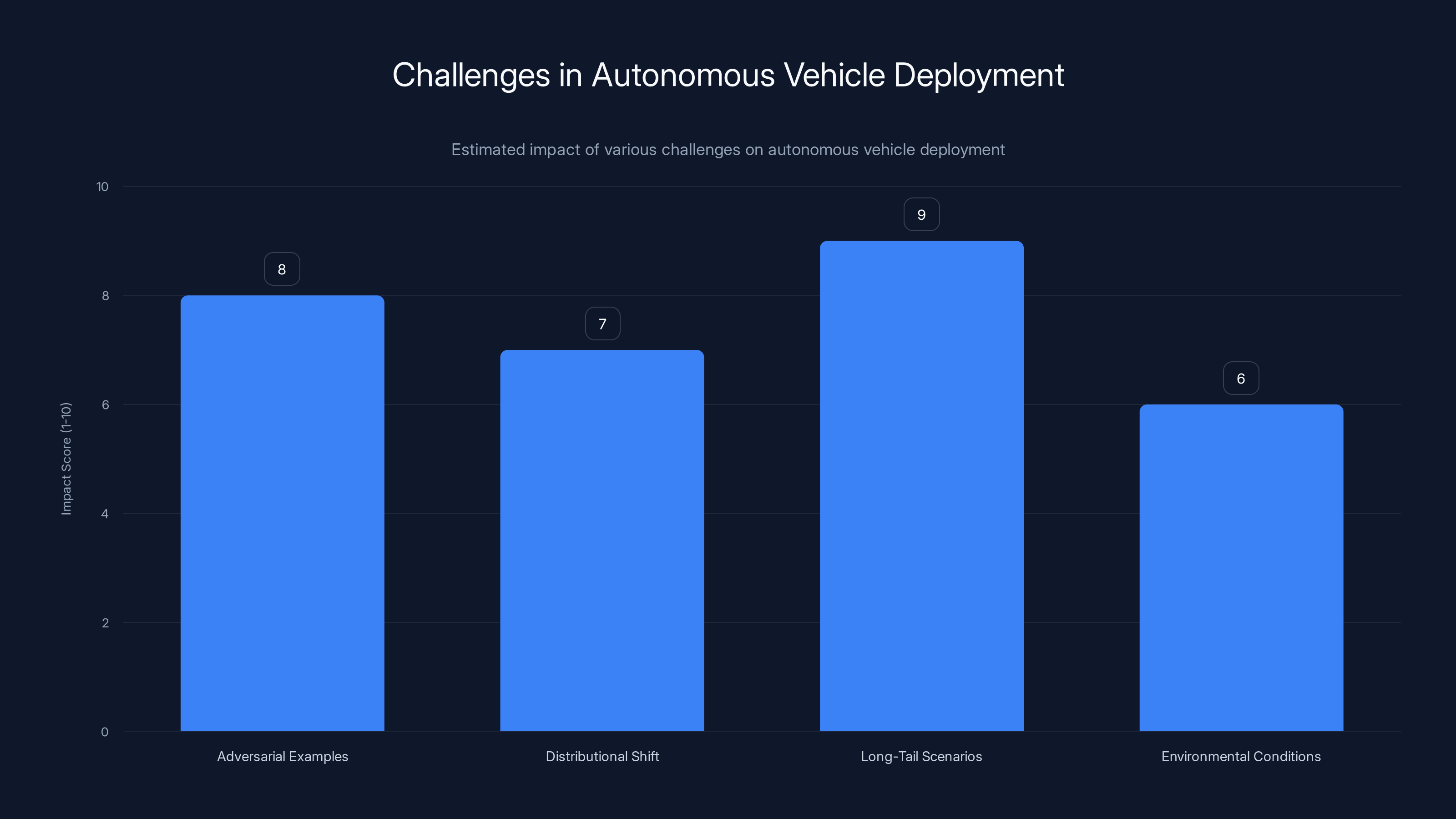

Long-tail scenarios pose the greatest challenge to autonomous vehicle deployment, with an estimated impact score of 9 out of 10. Estimated data.

Localization and Mapping: Knowing Where You Are

One challenge that receives less public attention than perception, but is equally critical, is localization—determining where the vehicle is with high precision. Human drivers know their location through experience, landmarks, and signs. They can read a street sign saying "Fifth Avenue" and immediately know where they are. Autonomous vehicles need to be more precise.

Modern autonomous vehicles often employ Simultaneous Localization and Mapping (SLAM) techniques. The vehicle builds a map of its environment while simultaneously determining its position within that map. LiDAR and cameras contribute to this process. By identifying distinctive features in the environment (building corners, poles, distinctive pavement patterns) and tracking how those features appear in successive sensor readings, the vehicle can estimate its position.

For greater precision, many autonomous vehicle systems use detailed pre-made maps. Before autonomous vehicles operate in an area, companies like Waymo invest in high-resolution mapping. Their vehicles drive through areas recording detailed environmental data, building maps that are far more precise than consumer GPS. Later, when autonomous vehicles operate in these areas, they compare their current sensor data to the pre-made maps, achieving localization precision within centimeters.

This map-based localization has several advantages. It's faster and more reliable than building maps in real time. It provides confidence checking—if current sensor data doesn't match the pre-loaded map, the system knows something is wrong (perhaps a road closure, new construction, or sensor malfunction). But it also has limitations. Autonomous vehicles operating in unmapped areas can't rely on pre-made maps. And maintaining accurate maps as roads change, new buildings are constructed, and temporary barriers appear, requires constant updates.
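The comparison between live sensor data and a pre-made map, including the confidence check, can be illustrated with a toy example. Everything here is hypothetical: the `MAP_LANDMARKS` table, the `localize` function, the 0.5 m threshold, and the assumption that landmark offsets have already been rotated into the map frame. Real systems match dense point clouds rather than three named landmarks.

```python
from statistics import mean

# Hypothetical pre-made map: landmark name -> (x, y) position in metres.
MAP_LANDMARKS = {
    "pole_a": (10.0, 5.0),
    "corner_b": (20.0, -3.0),
    "sign_c": (15.0, 8.0),
}


def localize(observations, max_residual_m=0.5):
    """Estimate the vehicle position and check agreement with the map.

    observations -- landmark name -> (dx, dy) offset from vehicle to landmark,
                    assumed to be expressed in the map frame already.
    Returns (x, y, consistent).
    """
    # Each observation implies a vehicle position: landmark minus offset.
    implied = [
        (MAP_LANDMARKS[name][0] - dx, MAP_LANDMARKS[name][1] - dy)
        for name, (dx, dy) in observations.items()
    ]
    x = mean(p[0] for p in implied)
    y = mean(p[1] for p in implied)
    # If the implied positions disagree, the map and the world no longer match.
    worst = max(((px - x) ** 2 + (py - y) ** 2) ** 0.5 for px, py in implied)
    return x, y, worst <= max_residual_m


# All three landmarks agree that the vehicle is at (2, 1).
matching = {"pole_a": (8.0, 4.0), "corner_b": (18.0, -4.0), "sign_c": (13.0, 7.0)}
# The sign appears three metres from where the map says it should be.
moved_sign = {"pole_a": (8.0, 4.0), "corner_b": (18.0, -4.0), "sign_c": (13.0, 10.0)}

print(localize(matching))
print(localize(moved_sign))
```

When the residual check fails, the sketch only reports the mismatch; a real system would then have to decide whether to trust the map, trust the sensors, or slow down.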

The future may involve mixed approaches: pre-made maps for well-traveled urban areas where precise localization is crucial, and real-time SLAM for rural areas, temporary routes, and navigation in novel environments.

Decision-Making and Path Planning

Perception and localization tell the autonomous vehicle what's in its environment and where it is. But these alone don't enable driving. The vehicle must also decide what to do.

Autonomous vehicles need to answer constantly: Should I accelerate or brake? Should I steer left or right? Should I change lanes? Should I yield to an approaching pedestrian or proceed? These decisions must be made in real time, often with incomplete information, and safety must never be compromised.

Modern autonomous vehicles typically employ a decision-making architecture with multiple layers:

Behavioral Planning: This layer decides high-level behavior. Should the vehicle follow the current lane? Should it prepare to change lanes? Should it turn? These decisions often rely on pre-loaded maps and route information. The behavioral planner might consult a map, identify the vehicle's route, and decide whether the vehicle should continue straight, prepare for a turn, or switch lanes.

Motion Planning: This layer translates behavioral decisions into actual paths and speeds. If behavioral planning decides the vehicle should change lanes, motion planning computes a specific path that smoothly transitions the vehicle from its current lane to the target lane while respecting safety constraints and comfort constraints (acceleration shouldn't be too aggressive).

Control: This layer commands the vehicle's actuators (steering wheel, throttle, brakes) to execute the planned path and speed. Modern vehicles use PID controllers (Proportional-Integral-Derivative controllers) and other control algorithms to track the planned trajectory while accounting for the vehicle's dynamics and road conditions.
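To make the control layer concrete, here is a minimal PID controller driving a toy speed-tracking loop. The gains, the `PID` class, and the vehicle model (commanded acceleration changes speed directly, with no actuator limits, drag, or delay) are illustrative assumptions rather than values from a production vehicle.

```python
class PID:
    """Textbook proportional-integral-derivative controller."""

    def __init__(self, kp: float, ki: float, kd: float):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error: float, dt: float) -> float:
        self.integral += error * dt
        # No derivative on the first call, since there is no previous error yet.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Toy loop: bring the vehicle from 10 m/s up to a planned speed of 15 m/s.
pid = PID(kp=1.0, ki=0.25, kd=0.1)  # hypothetical gains
speed, target, dt = 10.0, 15.0, 0.1
for _ in range(300):  # 30 simulated seconds
    accel = pid.update(target - speed, dt)
    speed += accel * dt  # simplified vehicle model

print(f"final speed: {speed:.2f} m/s")
```

The same structure applies to steering, where the error would be the lateral offset from the planned path instead of a speed difference.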

These layers don't operate in isolation. They constantly communicate and respond to new information. A behavioral planner might decide to change lanes, but if the motion planner detects that another vehicle has just moved into the target lane, the motion planner will refuse the request and inform the behavioral planner that the maneuver isn't currently possible.
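The lane-change exchange described above can be sketched as two small functions. The names `Car`, `behavioral_planner`, and `motion_planner`, and the 10-metre gap rule, are hypothetical simplifications; a real motion planner reasons about speeds and predicted trajectories, not just current positions.

```python
from dataclasses import dataclass


@dataclass
class Car:
    lane: int
    position_m: float  # distance along the road in metres


def behavioral_planner(ego: Car, route_lane: int) -> str:
    """High-level decision: stay in lane or request a lane change."""
    return "keep_lane" if ego.lane == route_lane else "change_lane"


def motion_planner(ego, request, target_lane, traffic, min_gap_m=10.0):
    """Accept or refuse the request; returns (accepted, reason)."""
    if request == "keep_lane":
        return True, "tracking current lane"
    for other in traffic:
        too_close = abs(other.position_m - ego.position_m) < min_gap_m
        if other.lane == target_lane and too_close:
            return False, "target lane occupied, maneuver not currently possible"
    return True, "lane change accepted"


ego = Car(lane=0, position_m=50.0)
request = behavioral_planner(ego, route_lane=1)

# Another vehicle has just moved alongside in the target lane.
blocked = motion_planner(ego, request, 1, [Car(lane=1, position_m=54.0)])
# Same request once the target lane is clear nearby.
clear = motion_planner(ego, request, 1, [Car(lane=1, position_m=90.0)])

print(request, blocked, clear)
```

Returning a reason alongside the refusal lets the behavioral layer retry later or pick a different plan rather than simply failing.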

Incorporating ethics and safety into these decision-making systems remains an active research area. The classic thought experiment is the "trolley problem": if an autonomous vehicle must choose between hitting one pedestrian or five pedestrians, which should it do? In practice, well-designed autonomous vehicles with good perception and planning should rarely face such stark choices, but designing decision systems that are both safe and ethically sound remains challenging.

The 2020s: Scaling and Commercialization

The 2020s have witnessed the first real-world deployment of autonomous vehicles at significant scale. Waymo, a subsidiary of Alphabet, operates autonomous taxi services in multiple U.S. cities including Phoenix, San Francisco, and Los Angeles. These services are not unlimited (they operate in specific geographic areas with pre-mapped roads and favorable conditions), but they represent genuine commercial deployment with paying customers.

Cruise, another autonomous vehicle company, operated robotaxi services in San Francisco before operational problems and regulatory scrutiny led to a suspension of service in 2023. The company later resumed limited testing, but in December 2024 General Motors announced it would stop funding Cruise's robotaxi program.

Tesla has continued to expand its Autopilot and Full Self-Driving features, though these remain semi-autonomous systems requiring human supervision rather than fully autonomous. Tesla's approach differs from Waymo's: Tesla emphasizes vision-based perception without LiDAR, and relies on incremental deployment of capabilities, gradually expanding from highway driving assistance toward more autonomous behaviors.

The 2020s have also seen emergence of autonomous driving in less obvious domains. Autonomous trucks are becoming reality, with companies like Aurora and others operating autonomous long-haul freight services. Autonomous buses are being tested in various cities. Even autonomous delivery vehicles (robotic delivery carts) have begun appearing on some sidewalks.

But challenges have emerged that complicate the narrative of inevitable progress. Regulatory uncertainty remains significant. Different cities and countries have different rules. Some jurisdictions welcome autonomous vehicle testing and deployment; others are cautious or skeptical. Insurance liability is unclear—if an autonomous vehicle causes an accident, who is responsible? The manufacturer? The operator? The owner? This ambiguity creates legal hesitation.

Safety remains paramount and complicated. When Uber's autonomous vehicle struck and killed a pedestrian in Tempe, Arizona in 2018, it shook confidence in the technology. Investigations revealed problems with the vehicle's perception system. The incident underscored that autonomous vehicles, despite their promise, must achieve safety levels that exceed human drivers to be widely accepted.

Then there are the stubborn edge cases. Autonomous vehicles work well on clear, sunny days on well-mapped roads with clear lane markings. Heavy snow, floods, unusual road conditions, and dynamic environments (like construction zones with temporary barriers and redirected traffic) remain challenges. Fully autonomous operation in all weather, in all conditions, everywhere remains years or decades away.

Comparative Analysis: Then and Now

Looking back from 2025 to 1904, the evolution of autonomous vehicle technology is staggering. Yet patterns emerge.

Quevedo's telekino and the 1920s radio-controlled cars solved the problem of remote control—transmitting commands wirelessly and having a vehicle respond. But they required a human operator actively controlling the vehicle. The vehicle itself had no intelligence.

The 1950s Futurama exhibit and the Firebird II imagined automation at the infrastructure level. Roads would be embedded with electronic systems that would guide vehicles. This approach avoided the need for onboard intelligence but required rebuilding all transportation infrastructure.

The VAMP and ALVINN projects of the 1980s and 1990s shifted to onboard intelligence: cameras and computers on the vehicle would perceive the environment and make driving decisions. This approach was more feasible than infrastructure-based automation because it didn't require changing existing roads.

The deep learning revolution of the 2010s provided the perception capabilities needed for this onboard-intelligence approach to work at scale. Cameras plus neural networks could, for the first time, reliably recognize pedestrians, vehicles, and road conditions well enough for autonomous driving.

But each phase faced obstacles. Quevedo's telekino had no source of funding. The radio-controlled cars of the 1920s couldn't navigate without operator guidance. The highway automation systems of the 1950s were too expensive and complex to implement. The vision-based systems of the 1980s and 1990s worked but were slow and unreliable. The deep learning systems of the 2010s worked well in ideal conditions but struggled with edge cases.

Currently, in 2025, we have the hardware (cameras, LiDAR, radar, processors), the software (deep learning models), and the data (billions of miles of driving data) needed for autonomous vehicles to work in favorable conditions. What remains unsolved is robustness across all conditions, regulatory frameworks, and public acceptance.

The path forward requires solving not just technical problems, but organizational, regulatory, and social ones. Progress will likely be gradual and geographically uneven, with some regions adopting autonomous vehicles earlier than others. The vision of fully autonomous, driverless vehicles operating everywhere remains real, but achieving it will take longer than early 21st-century optimists believed.

Modern Challenges: The Last Mile Problem

Autonomous vehicle researchers refer to the "last mile problem," though they mean something different from the logistics concept. The last mile in autonomous driving refers to the gap between what current systems can do (drive in good conditions on familiar roads with clear markings) and what they need to do to achieve widespread adoption (drive safely in all conditions, on unfamiliar roads, in adverse weather, in unstructured environments).

This last mile has proven stubbornly difficult to close. The early phases of autonomous vehicle development were characterized by rapid progress. By the early 2020s, neural networks had achieved human-level or superhuman-level performance on many perception tasks. But deploying those systems in production vehicles that operate safely in the real world introduced new challenges.

Adversarial Examples: Researchers have discovered that neural networks, despite their power, can be fooled in unintuitive ways. A pedestrian wearing certain camouflage patterns, or a road with unusual markings, or even adversarially designed stickers on road signs can cause perception systems to fail. These failures often don't occur to human drivers, but they occur to neural networks in unpredictable ways.

Distributional Shift: Neural networks trained on driving data from sunny California may perform poorly in snowy Boston. The distribution of visual features in training data (mostly clear days, USA roads, specific vehicle types) doesn't match the distribution in all deployment scenarios. Adapting systems to new environments remains challenging.
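One simple way to notice distributional shift is to compare the statistics of incoming data against the training set. The sketch below uses per-image mean brightness as a stand-in feature and total variation distance as the measure; both choices, along with the sample values, are assumptions made for illustration and not how any particular company monitors its fleet.

```python
def histogram(values, bins=5, lo=0.0, hi=1.0):
    """Normalized histogram of values that fall in the range [lo, hi]."""
    counts = [0] * bins
    for v in values:
        idx = min(int((v - lo) / (hi - lo) * bins), bins - 1)
        counts[idx] += 1
    return [c / len(values) for c in counts]


def total_variation(p, q):
    """0.0 means identical distributions, 1.0 means no overlap at all."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


# Hypothetical mean brightness per camera frame, scaled to [0, 1].
train_sunny = [0.62, 0.70, 0.66, 0.74, 0.58, 0.69, 0.71, 0.65]
deploy_sunny = [0.64, 0.68, 0.72, 0.61, 0.67, 0.73, 0.66, 0.70]
deploy_snowy = [0.91, 0.88, 0.95, 0.86, 0.93, 0.90, 0.84, 0.97]

baseline = histogram(train_sunny)
print("sunny deployment:", total_variation(baseline, histogram(deploy_sunny)))
print("snowy deployment:", total_variation(baseline, histogram(deploy_snowy)))
```

A large distance does not say what went wrong, only that the system is operating on data unlike what it was trained on, which is a cue to fall back to more conservative behavior.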

Long-Tail Scenarios: Autonomous systems handle the common cases well: following a lane on a highway, stopping at a red light, avoiding another vehicle on the road. But the long tail of rare, unusual situations (a child running into the road, a bridge closure with unexpected detours, a traffic officer manually directing traffic) includes scenarios that systems may not have been trained on or designed for.

Responsibility and Liability: If an autonomous vehicle causes an accident, assigning responsibility is legally and ethically complex. The manufacturer? The operator? The owner? This ambiguity creates reluctance to deploy fully autonomous vehicles at scale until legal frameworks are established.

These challenges aren't insurmountable, but they're stubbornly difficult. They explain why autonomous vehicle deployments in 2025 remain limited in scope (specific geographic areas, favorable conditions, and often human safety operators or remote assistance on standby) rather than the ubiquitous, condition-agnostic autonomy that was promised in the 1950s and remained the aspiration through the 2000s.

The Road Ahead: What 2025-2035 Might Bring

Predicting the future is notoriously difficult, but analyzing trends can suggest possibilities. Several trajectories seem plausible for autonomous vehicles over the next decade.

Scenario 1: Gradual Expansion from Current Footholds

Waymo, Tesla, and other operators continue expanding geographic areas of operation, gradually moving from a few cities to a dozen cities. Autonomous vehicles increasingly operate in urban environments but remain supervised or semi-autonomous rather than fully autonomous. By 2035, major American cities have autonomous taxi options, but they're not yet cheaper or more convenient than human drivers for most trips. Autonomous long-haul trucking becomes mainstream, with significant economic benefits and job displacement in trucking.

Scenario 2: Technology Breakthrough

A fundamental breakthrough in AI perception or decision-making—perhaps through approaches different from current deep learning—enables autonomous vehicles to handle edge cases and novel environments much better than current systems. Companies like Waymo dramatically accelerate deployment. By 2035, fully autonomous vehicles operate in many American cities without human operators, though still in geographically limited service areas. Insurance and liability frameworks have been established. Autonomous vehicle adoption grows from tens of thousands to millions of vehicles.

Scenario 3: Regulation-Driven Slow Growth

Regulatory uncertainty and concerns about safety slow deployment more than technical limitations do. By 2035, autonomous vehicles remain rare outside of specific cities and routes where regulators have explicitly permitted them. Public skepticism about safety and concerns about job displacement limit growth. Technological capability advances faster than deployment expands.

Scenario 4: Localized Adoption

Different regions adopt autonomous vehicles at different rates. Western Europe embraces autonomous vehicles sooner than the United States due to more unified regulatory frameworks and higher public acceptance. China rapidly deploys autonomous vehicles due to government incentives and less regulatory friction. The United States remains divided by state and local regulations, creating patchwork adoption.

Which scenario unfolds depends on factors both technical and non-technical. It depends on whether fundamental breakthroughs are achieved in robustness and safety. It depends on how regulators in different jurisdictions respond. It depends on public acceptance and comfort with driverless vehicles. It depends on economic factors—if autonomous vehicles become much cheaper than human-driven services, adoption will accelerate.

What seems clear is that fully autonomous vehicles operating everywhere, in all conditions, without human intervention, is not around the corner. If such vehicles exist by 2035, they'll likely be limited to favorable conditions and specific geographic areas. Extending to all roads, all conditions, all weather may take decades beyond that.

This is not a failure of the industry or of the technology. It's simply the reality of an incredibly hard problem. Humans are remarkably adaptable drivers, capable of navigating novel situations, understanding implicit rules, and responding to unstructured environments. Replicating that capability completely and reliably in a machine remains profoundly difficult.

Historical Perspective: Why Predictions Fail

Reflecting on 120 years of autonomous vehicle history, a pattern emerges: predictions consistently overestimated how quickly autonomous vehicles would reach maturity. In 1939, Futurama suggested highways of the future would be fully automated. In 1956, the Firebird II seemed to portend full autonomy within a decade or two. In the 1980s, researchers believed fully autonomous vehicles were a decade away. In the 2000s and 2010s, industry figures repeatedly promised autonomous vehicles would be common within 10 years.

These predictions weren't crazy or delusional. They were made by intelligent people with genuine expertise. What they underestimated were the challenges of moving from demonstrations to deployment, from controlled environments to real-world chaos, from laboratory systems to production systems that must operate reliably in unpredictable conditions.

There's a useful distinction between capability and deployment. The capability to drive autonomously in ideal conditions, on a known route, in good weather has existed for decades. We had it in the 1980s with ALVINN. But deploying this capability as a service available to the public, operating safely in all conditions, at prices people will pay, with regulatory approval and insurance frameworks—this is dramatically harder and slower than demonstrating the capability.

The history of autonomous vehicles teaches humility about predictions. It suggests that even with dramatic advances in AI, the remaining challenges to fully autonomous vehicles are significant. It suggests that moving from capability to deployment is a multi-year process involving not just engineering but also regulatory, economic, and social challenges.

It also suggests optimism tempered with realism. Autonomous vehicles will almost certainly become more common. The technology is real and functional. Economic incentives exist (trucking, taxi services, delivery). But ubiquitous, fully autonomous vehicles operating without human supervision in all conditions everywhere—that aspiration may take longer than current predictions suggest, just as it did from 1904 to 2025.

FAQ

What was Leonardo Torres Quevedo's telekino?

The telekino was a wireless remote control system invented by Spanish engineer Leonardo Torres Quevedo in the early 1900s. In 1904, Quevedo successfully demonstrated the telekino controlling a small three-wheeled automobile from nearly 100 feet away using radio signals. This made it the earliest recorded instance of a vehicle being controlled by radio waves. While the telekino never achieved commercial success due to lack of funding from the Spanish government, it proved the fundamental concept that vehicles could be guided without a human operator onboard.

How did the 1920s radio-controlled cars work?

The 1920s radio-controlled cars used wireless radio signals to transmit commands from a remote operator to a receiver mounted on the vehicle. The receiver detected the radio signals and converted them into electrical current, which operated electromagnets and mechanical switches. These switches commanded small electric motors that controlled steering, throttle, brakes, and horn. Operators in a chase car could transmit up to 19 distinct commands, allowing them to guide the vehicle through traffic. The most famous example was Francis Houdina's mid-1920s demonstration of a 1926 Chandler driving itself on Fifth Avenue in New York City.

What was GM's Futurama vision for autonomous vehicles?

General Motors' Futurama exhibit at the 1939 New York World's Fair presented a vision of fully automated highways powered by embedded electronic systems. According to GM's concept, tiny electric vehicles would travel along highways guided by radio signals and electric currents running through cables beneath the pavement. These embedded systems would provide both power and guidance, eliminating the need for drivers and ensuring perfectly coordinated traffic with no collisions. While innovative and imaginative, this vision required completely rebuilding all highway infrastructure with embedded electronic systems, which proved economically infeasible.

Why did the Automated Highway System concept fail?

The Automated Highway System (AHS), imagined in the 1950s and pursued through the 1980s, required embedding electronic guidance systems in road infrastructure rather than building autonomous intelligence into vehicles. The concept was technically sound but economically impossible to implement. Retrofitting existing highways with the necessary infrastructure would have cost trillions of dollars. As highway networks continued to expand and evolve, maintaining compatibility with embedded systems became logistically impractical. Additionally, alternative approaches—mounting intelligence directly on vehicles—proved more feasible, leading researchers to abandon the infrastructure-based approach in favor of onboard autonomous systems.

What role did deep learning play in advancing autonomous vehicles?

Deep learning revolutionized autonomous driving by enabling vehicles to perceive their environment using neural networks trained on large datasets. Starting in 2012, when AlexNet won the ImageNet image-recognition competition using convolutional neural networks trained on GPUs, researchers recognized that deep learning could solve autonomous driving's perception challenges. Neural networks could recognize pedestrians, vehicles, traffic signs, and obstacles from camera images without requiring engineers to hand-craft detection algorithms. This breakthrough, combined with massive computing power and petabytes of driving data, enabled the dramatic progress in autonomous vehicles from 2012 onward. Modern autonomous vehicles rely fundamentally on deep learning for perception.

What is the "last mile problem" in autonomous driving?

The last mile problem refers to the gap between what current autonomous vehicle systems can do and what they need to do for widespread adoption. Current systems drive well in ideal conditions: clear weather, on familiar roads with clear lane markings, in mapped areas. But they struggle with adversarial examples, distributional shifts (performing poorly in conditions unlike their training data), rare edge cases, and unusual situations. Closing this gap—achieving reliable autonomous driving in all weather, all road conditions, all scenarios—has proven much more difficult than the earlier phases of development. This is why deployed autonomous vehicles in 2025 operate only in limited geographic areas with favorable conditions.

Why did autonomous vehicle timelines keep slipping?

Predictions about autonomous vehicles consistently overestimated how quickly they would become widely available because they underestimated the difference between demonstrating capability and achieving reliable deployment at scale. Building a prototype that drives itself in a controlled environment is much easier than building a production system that must operate reliably in unpredictable, unstructured real-world conditions. Additional challenges include regulatory uncertainty, establishing legal liability frameworks, achieving safety levels exceeding human drivers, and solving edge cases and unusual scenarios. Each of these required substantially more work than many predictions anticipated.

What autonomous vehicle services exist today?

As of 2025, Waymo operates autonomous taxi services (Waymo One) in Phoenix, San Francisco, Los Angeles, and other cities, providing ride-hailing to paying customers with minimal or no human intervention. Cruise operated robotaxi services in San Francisco before suspending them in 2023 amid operational and regulatory challenges; General Motors announced in December 2024 that it would stop funding Cruise's robotaxi program. Tesla offers semi-autonomous driving features (Autopilot and Full Self-Driving), but these still require driver supervision and are not fully autonomous. Autonomous trucking companies like Aurora are operating long-haul freight services. Autonomous delivery vehicles are being tested in some cities. However, these services remain limited in scope: specific geographic areas, often during favorable conditions, with various levels of human supervision.

What challenges remain for fully autonomous vehicles?

Key challenges include: achieving reliable perception and decision-making in adverse weather (snow, rain, fog) where current systems struggle; handling edge cases and unusual scenarios not well-represented in training data; resolving regulatory uncertainty and establishing legal frameworks for liability and insurance; addressing public skepticism and concerns about safety; and solving the technical problem of robust performance across diverse environments and conditions. Additionally, economic challenges exist—making autonomous vehicles cheaper than human drivers while ensuring sufficient safety margins. These challenges are primarily blocking deployment and scaling, not fundamental capability demonstration.

Conclusion: A Century of Bold Attempts

From Leonardo Torres Quevedo's wireless telekino in 1904 to Waymo's autonomous taxis in 2025, the pursuit of self-driving cars represents one of humanity's most persistent technological ambitions. It's a story of visionaries, engineers, and entrepreneurs repeatedly convinced that autonomy was within reach, repeatedly discovering that the remaining challenges were more formidable than anticipated.

What's remarkable isn't that autonomous vehicles don't yet dominate our roads. It's that they exist at all. More than a century ago, Quevedo proved that vehicles could respond to wireless commands. Some eighty-five years later, engineers were navigating highways using neural networks. Today, autonomous vehicles are genuinely operating in cities, picking up passengers and delivering goods. These aren't science fiction. They're real, functional, deployable technology.

The gap between capability and widespread deployment, however, remains substantial. We have vehicles that can drive themselves in favorable conditions. Scaling that to all conditions, all weathers, all road types, all of the strange and unpredictable scenarios that real traffic presents—that remains before us.

The lesson of 120 years of autonomous vehicle history is that the technology advances faster than deployment, that demonstrations don't translate automatically to services, and that the last 10 percent of capability requires 90 percent of the effort. This isn't pessimism. It's realism informed by history.

In another 20 years, the autonomous vehicle landscape will likely look different from today. But based on the pattern of the past 120 years, it probably won't look as different as today's optimists predict. There will be more autonomous vehicles, more cities with services, more long-haul autonomous trucks. But fully autonomous vehicles operating without restrictions everywhere, as in the vision Futurama promised in 1939, likely remain further in the future than current predictions suggest.

Yet the pursuit continues. The same engineering ambition that drove Quevedo, that motivated the 1920s experimenters, that filled the Futurama exhibit with miniature autonomous vehicles, that drove the DARPA competitions, that attracts billions in venture capital today—that ambition remains. In the end, the story of autonomous vehicles is not about when they'll arrive, but about the relentless human drive to automate even the most complex, safety-critical tasks. Eventually, we will get there. The only question is: how long?

Key Takeaways

- Leonardo Torres Quevedo's telekino (1904) was the first wireless remote-controlled vehicle, proving machines could be guided without a human operator onboard

- 1920s-1930s radio-controlled car demonstrations showed remotely guided driving worked in real traffic, though the cars remained impractical novelties

- The 1950s vision of embedded highway systems for autonomous guidance was technically sound but economically impossible to implement at scale

- The deep learning breakthrough of 2012-2015 finally gave autonomous vehicles the perception capability needed for real-world driving

- Modern deployed autonomous vehicles (such as Waymo's robotaxis) operate successfully but only in limited geographic areas under favorable conditions, not everywhere

- The gap between demonstrating autonomous capability and deploying reliable systems at scale has consistently been far larger than predicted
