The Post-Transformer Era: Solving AI's Energy Crisis [2025]

Artificial intelligence (AI) has driven remarkable advances across domains ranging from automated translation to predictive analytics. These gains come with a pressing problem: the energy consumed by AI models, particularly those built on transformers. As AI capabilities expand, energy demand grows with them, prompting the search for more sustainable alternatives.

TL;DR

- Energy Concerns: AI models, especially transformers, consume vast energy resources.

- Alternative Approaches: New AI architectures aim to reduce energy footprints.

- Efficiency Measures: Techniques like model pruning and quantization are gaining traction.

- Sustainability Goals: The industry is prioritizing green AI practices.

- Future Outlook: Continued innovation is essential to meet AI's energy challenges.

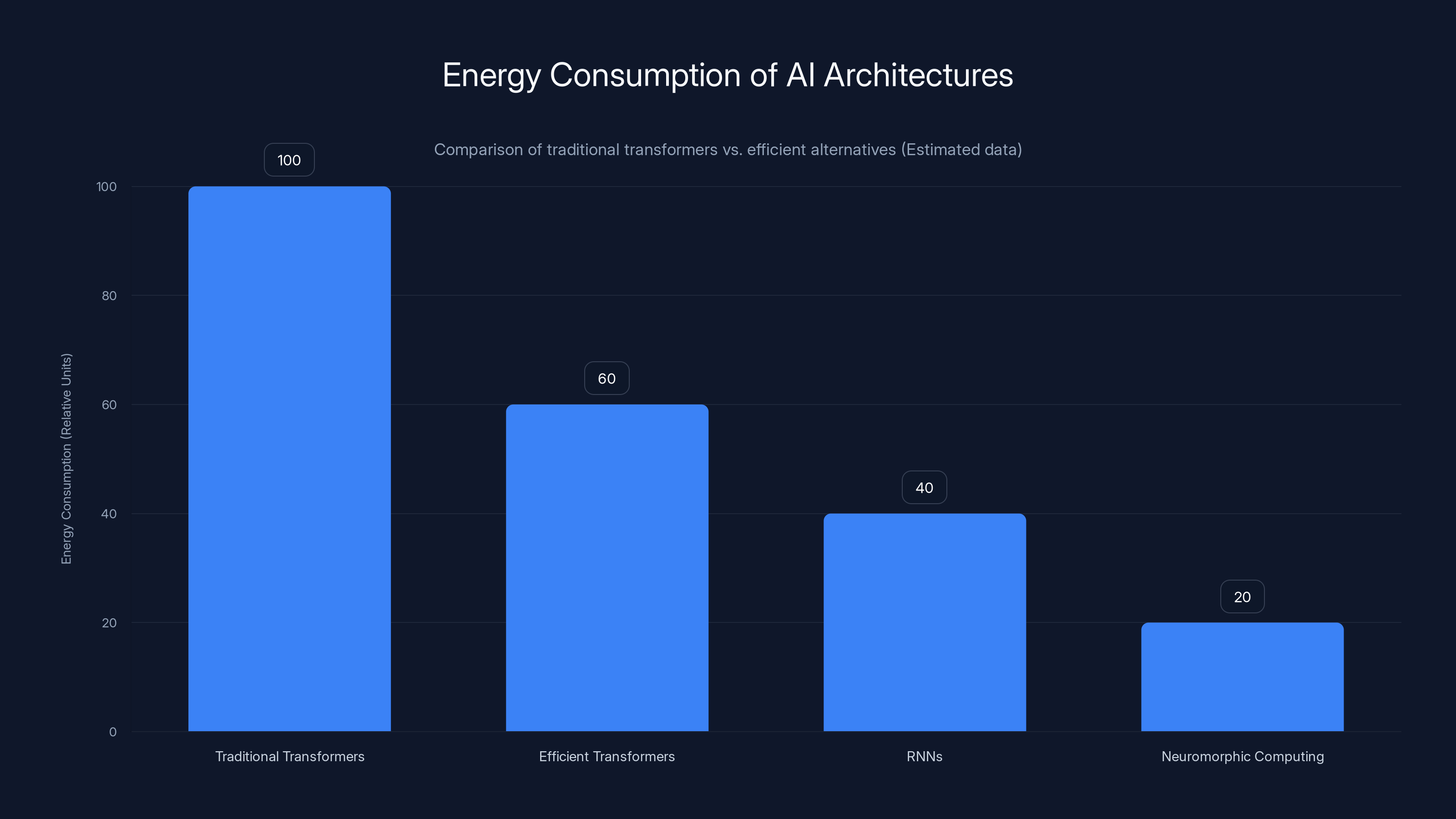

Efficient transformers, RNNs, and neuromorphic computing significantly reduce energy consumption compared to traditional transformers. Estimated data.

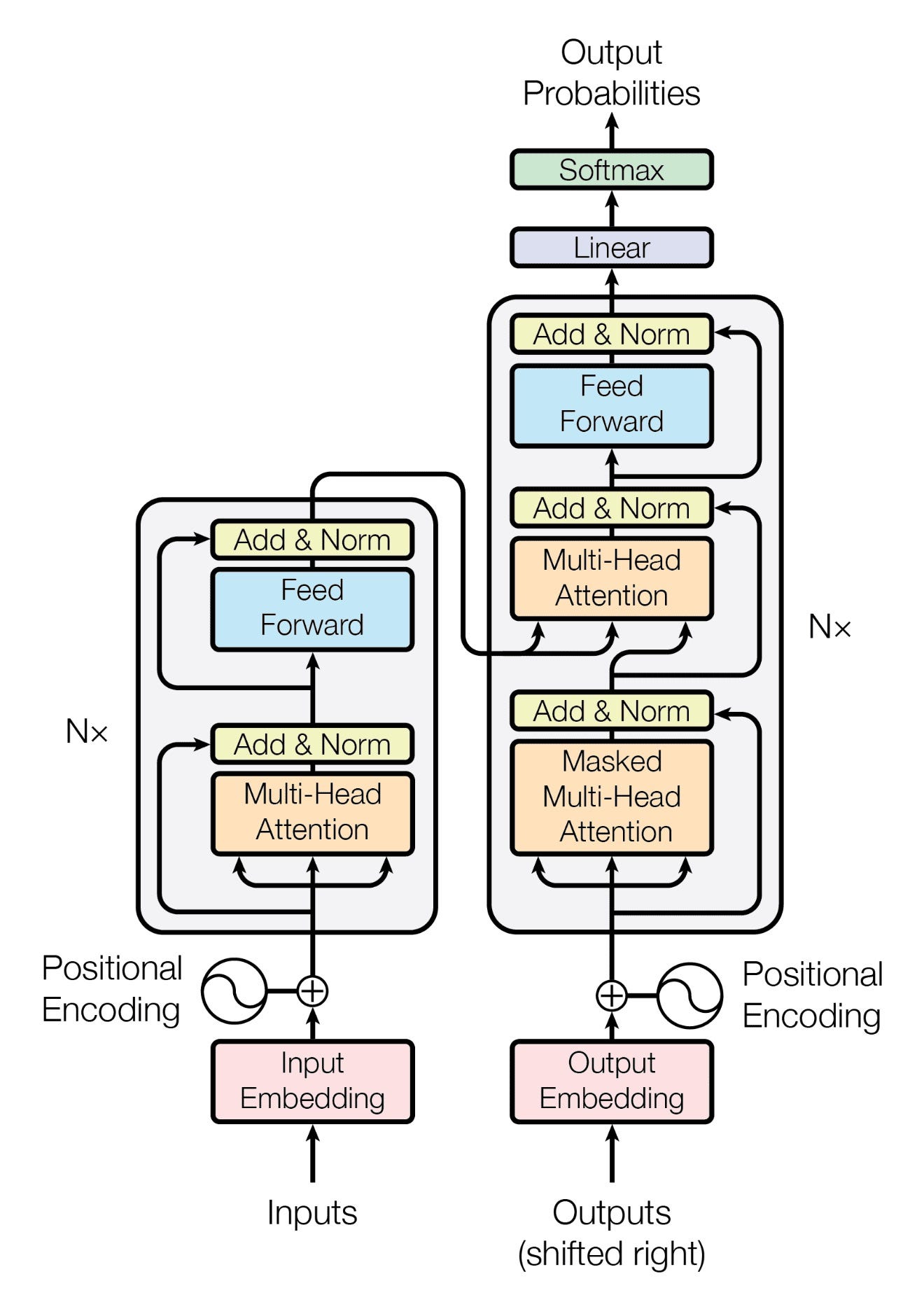

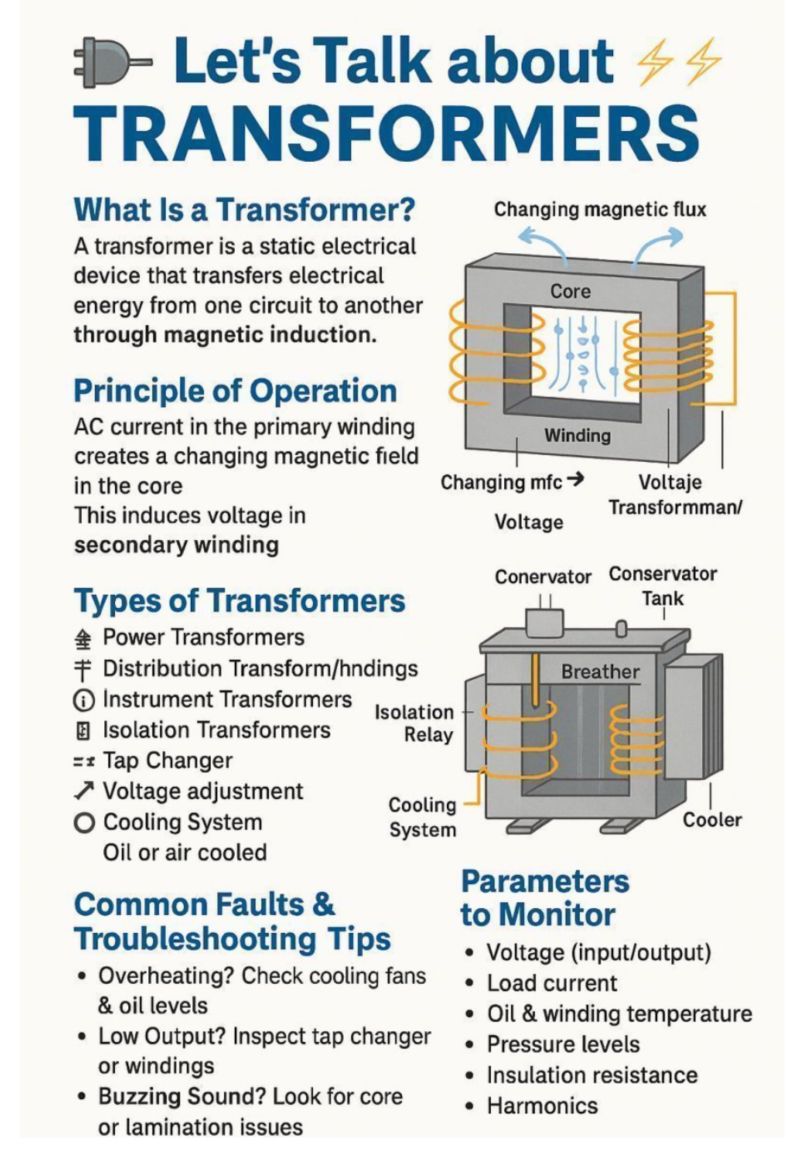

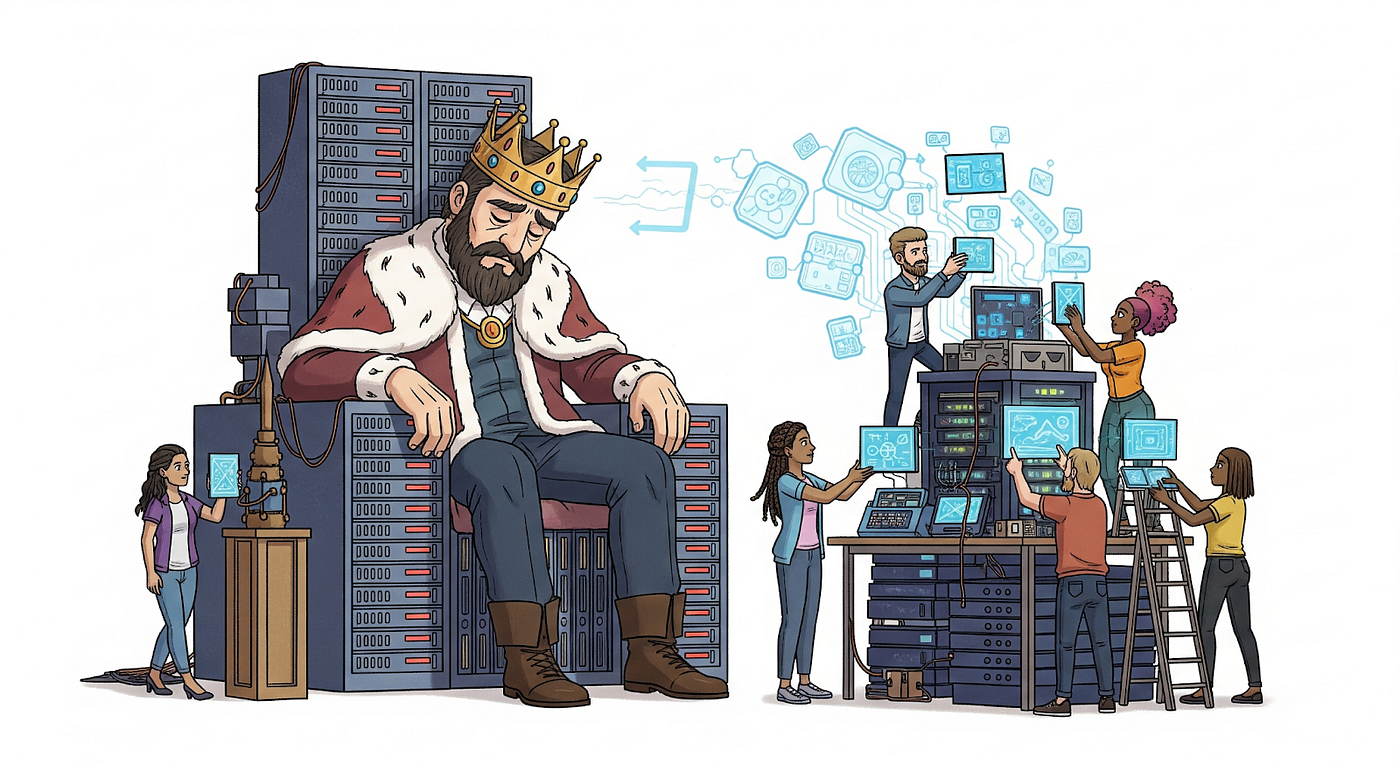

The Energy Challenge of Transformers

Transformers, the backbone of many state-of-the-art AI models, have revolutionized natural language processing and other fields. However, their energy consumption is substantial. By published estimates, a single training run for a model on the scale of GPT-3 consumes on the order of 1,000 MWh of electricity, roughly what a hundred U.S. homes use in a year.

Understanding Transformer Energy Use

Large transformers stack many layers and hold billions of parameters (GPT-3 has 175 billion), and in a dense model every training step updates all of them. In addition, standard self-attention compares every token with every other token, so its cost grows quadratically with sequence length. Training models like GPT-3 therefore requires massive computational resources, typically spread across many accelerators in large data centers.
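A back-of-the-envelope estimate shows how these factors combine into an energy figure. The sketch below is only illustrative: the function name, the accelerator count, the power draw, the run length, and the power usage effectiveness (PUE) value are all assumptions, not measurements of any real training run.

```python
def training_energy_kwh(num_accelerators, avg_power_watts, hours, pue=1.1):
    """Estimate facility-level energy for a training run, in kWh.

    pue (power usage effectiveness) scales IT energy up to include cooling
    and other data center overhead. All inputs are illustrative assumptions.
    """
    it_energy_kwh = num_accelerators * avg_power_watts * hours / 1000.0
    return it_energy_kwh * pue


# Hypothetical run: 1,000 accelerators drawing 300 W each for 30 days.
estimate = training_energy_kwh(1000, 300.0, 30 * 24, pue=1.1)
print(f"Estimated energy: {estimate:,.0f} kWh")
```

The estimate scales linearly with every input, which is why reducing run time, accelerator count, or data center overhead each translates directly into energy saved.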

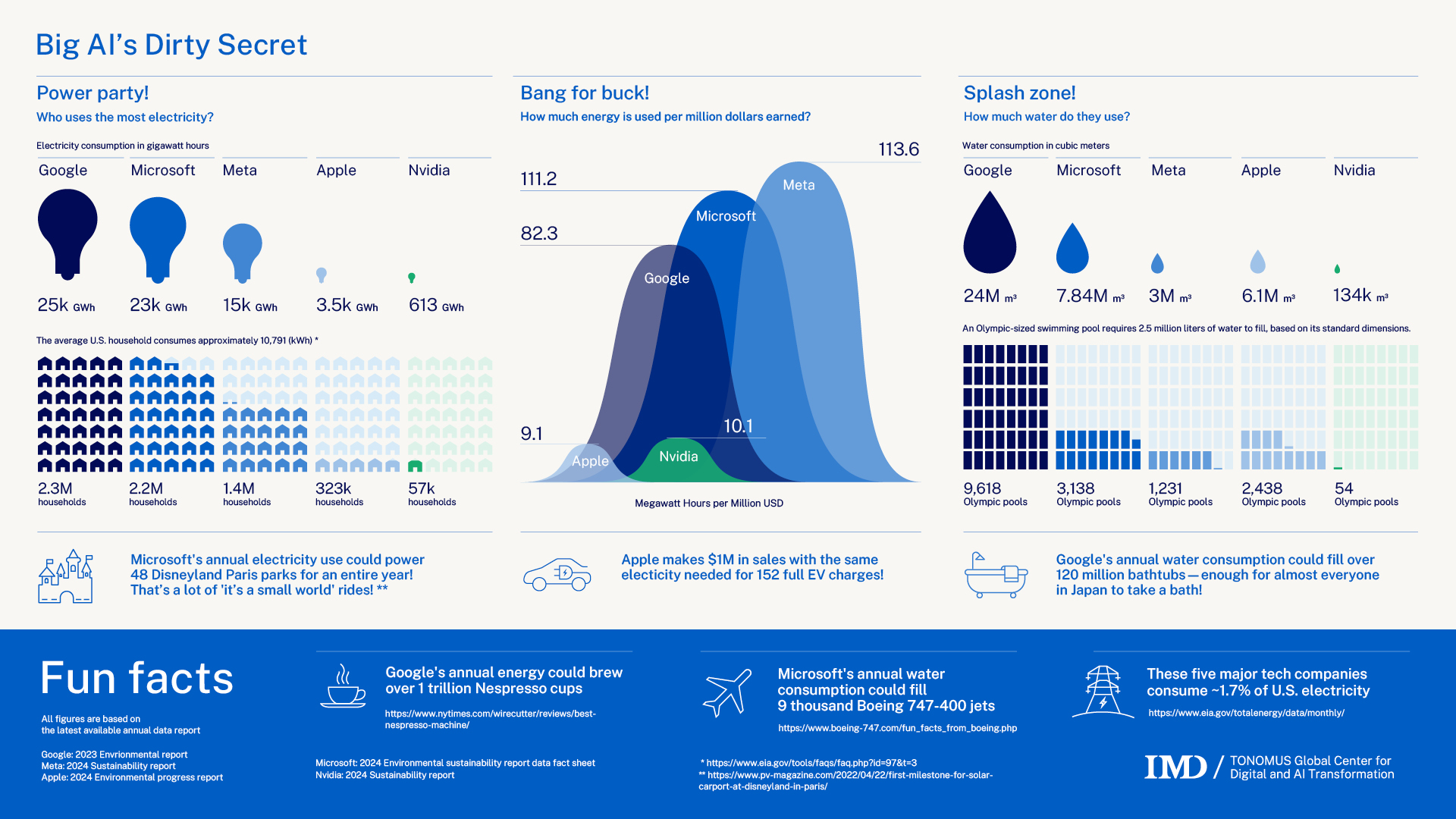

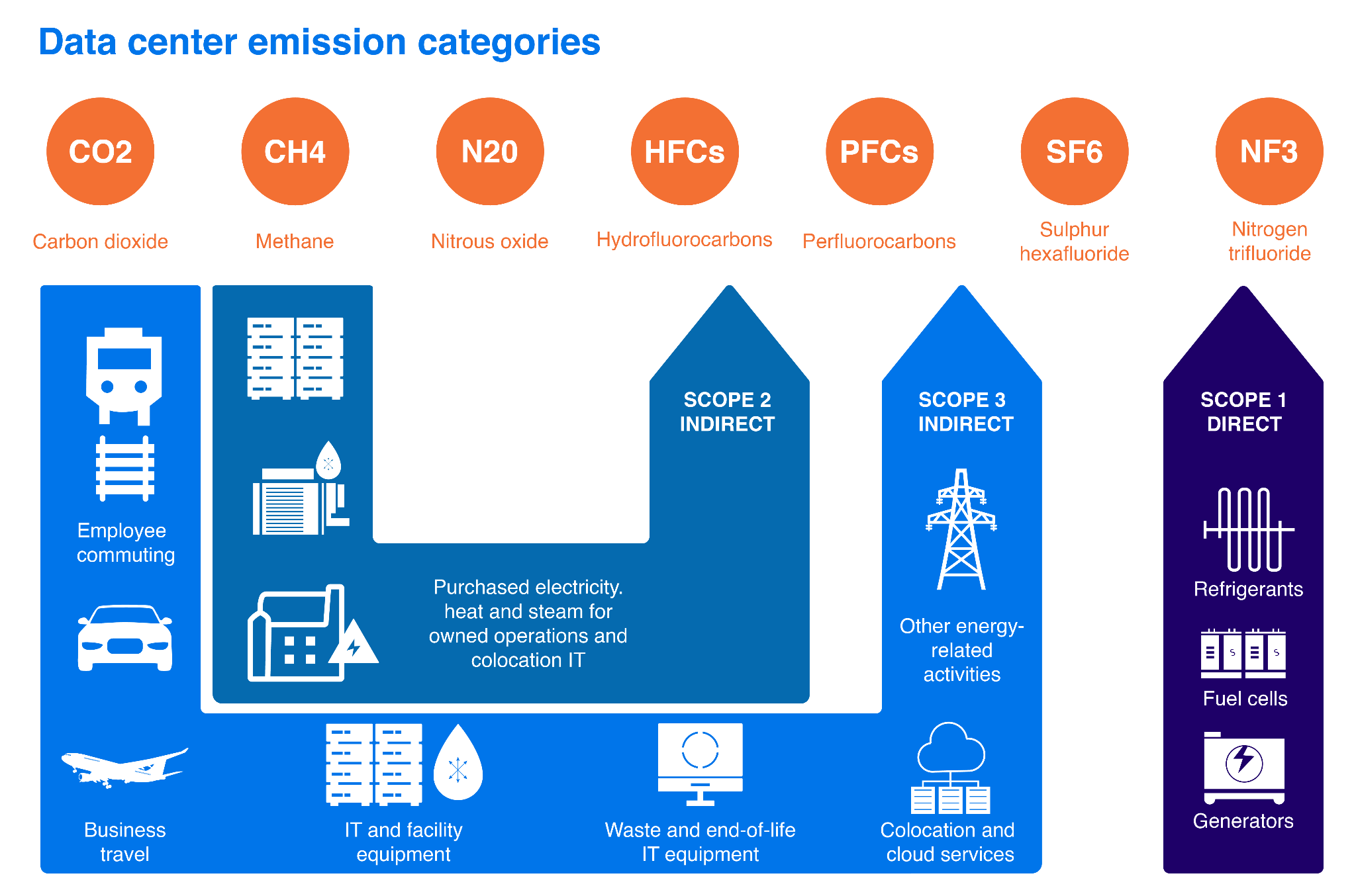

The Environmental Impact

The environmental footprint of transformers is significant. Data centers supporting these models contribute to carbon emissions and require enormous cooling capacities to prevent overheating. As AI models become more sophisticated, these environmental impacts are expected to grow unless addressed effectively.

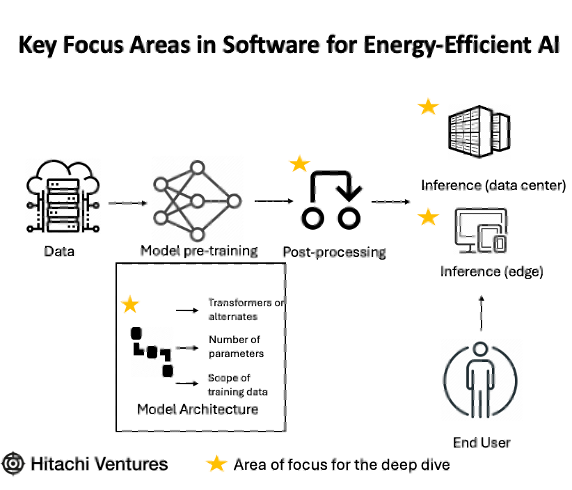

Exploring Alternatives: Post-Transformer Architectures

To mitigate the energy challenges posed by transformers, researchers are exploring several alternative architectures. These new models aim to maintain performance while drastically reducing energy consumption.

1. Efficient Transformers

Some advancements focus on optimizing transformers themselves. Efficient transformers mainly target self-attention, whose cost grows quadratically with sequence length, and aim to preserve accuracy while doing less computation.

- Sparse Transformers: Each token attends to only a subset of the other tokens (for example, a local window or a strided pattern) rather than to all of them, which removes most pairwise attention computations.

- Linear Transformers: These models approximate softmax attention with kernel feature maps so that cost scales linearly with sequence length, which can cost some accuracy on certain tasks.
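To make the saving from sparse attention concrete, the sketch below counts how many token pairs are scored under full attention versus a sliding-window pattern, one common form of sparsity. The function names, the window size, and the sequence length are illustrative assumptions and are not taken from any particular model.

```python
def full_attention_pairs(seq_len):
    """Every token attends to every token: seq_len squared score computations."""
    return seq_len * seq_len


def windowed_attention_pairs(seq_len, window):
    """Each token attends only to tokens within `window` positions on either side."""
    total = 0
    for i in range(seq_len):
        lo = max(0, i - window)            # clip the window at the start
        hi = min(seq_len - 1, i + window)  # clip the window at the end
        total += hi - lo + 1
    return total


# Assumed example: a 4,096-token sequence with a window of 128 tokens per side.
full = full_attention_pairs(4096)
sparse = windowed_attention_pairs(4096, 128)
print(f"full: {full:,} pairs, windowed: {sparse:,} pairs ({sparse / full:.1%})")
```

With these assumed settings the windowed pattern scores roughly 6% of the pairs that full attention does, and the gap widens as sequences get longer.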

2. Recurrent Neural Networks (RNNs)

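RNNs are being revisited due to their inherent efficiency in handling sequential data. Unlike transformers, which attend over the entire context at once, RNNs process one token at a time and carry a fixed-size hidden state, so compute and memory per step stay constant as the sequence grows. This can be more energy-efficient for certain tasks, especially at inference, although the step-by-step dependency makes classic RNNs harder to parallelize during training.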

Key Features of RNNs:

- Sequential Processing: Efficient for time-series data and tasks requiring temporal understanding.

- Lower Compute per Token: A fixed-size state keeps per-step cost constant, so RNNs often need less computation than transformers on long sequences.
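The sequential, fixed-state behavior described above can be sketched with a minimal Elman-style recurrent cell. This is a toy illustration in plain Python: the helper names `rnn_step` and `run_rnn` and the tiny hand-picked weights are assumptions made for the example, not a production implementation.

```python
import math


def rnn_step(h, x, w_hh, w_xh, b):
    """One recurrent update: h_new = tanh(W_hh @ h + W_xh @ x + b)."""
    new_h = []
    for i in range(len(h)):
        total = b[i]
        total += sum(w_hh[i][j] * h[j] for j in range(len(h)))
        total += sum(w_xh[i][k] * x[k] for k in range(len(x)))
        new_h.append(math.tanh(total))
    return new_h


def run_rnn(inputs, hidden_size, w_hh, w_xh, b):
    """Process inputs one step at a time, carrying only a fixed-size state."""
    h = [0.0] * hidden_size
    for x in inputs:
        h = rnn_step(h, x, w_hh, w_xh, b)
    return h


# Hypothetical weights for a 2-unit cell with 1-dimensional inputs.
w_hh = [[0.5, 0.0], [0.0, 0.5]]
w_xh = [[1.0], [-1.0]]
b = [0.0, 0.0]

short_state = run_rnn([[0.1]] * 10, 2, w_hh, w_xh, b)
long_state = run_rnn([[0.1]] * 1000, 2, w_hh, w_xh, b)
print(len(short_state), len(long_state))  # the state size does not grow with input length
```

Each step does the same amount of work regardless of how many tokens came before, which is the property that makes recurrent models attractive for long sequences.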

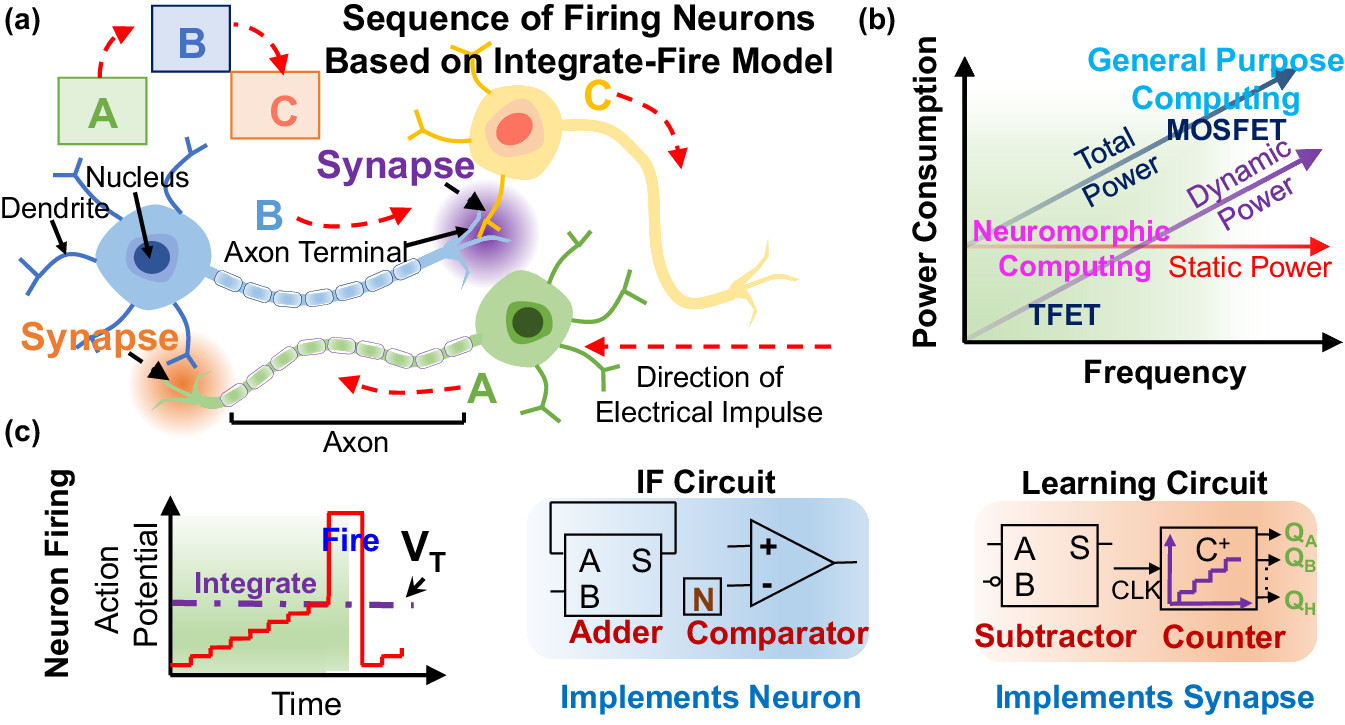

3. Neuromorphic Computing

Neuromorphic computing takes inspiration from the brain's neural architecture, focusing on low power consumption and high efficiency. This approach uses spiking neural networks (SNNs), in which a neuron emits a spike only when its accumulated input crosses a threshold, so inactive neurons perform little or no computation.

Advantages:

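- Energy Efficiency: Because computation is event-driven, SNNs running on neuromorphic hardware can consume substantially less energy than dense networks on conventional chips.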

- Real-Time Processing: Ideal for tasks requiring immediate responses, such as robotics.
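The event-driven behavior of a spiking neuron can be illustrated with a leaky integrate-and-fire model, one of the simplest neuron models used in SNN work. The sketch below is a simplified discrete-time version; the function name and the threshold, leak, and reset values are assumptions chosen for readability.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate a leaky integrate-and-fire neuron over discrete time steps.

    The membrane potential decays by `leak` each step, accumulates the input,
    and emits a spike (1) only when it reaches `threshold`.
    """
    v = 0.0
    spikes = []
    for current in input_current:
        v = leak * v + current
        if v >= threshold:
            spikes.append(1)
            v = reset  # potential returns to rest after firing
        else:
            spikes.append(0)
    return spikes


# Assumed input: two moderate pulses followed by silence.
print(simulate_lif([0.6, 0.6, 0.0, 0.0]))
```

The neuron stays silent for most steps and produces output only when enough input has accumulated, which is the source of the energy savings on event-driven hardware.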

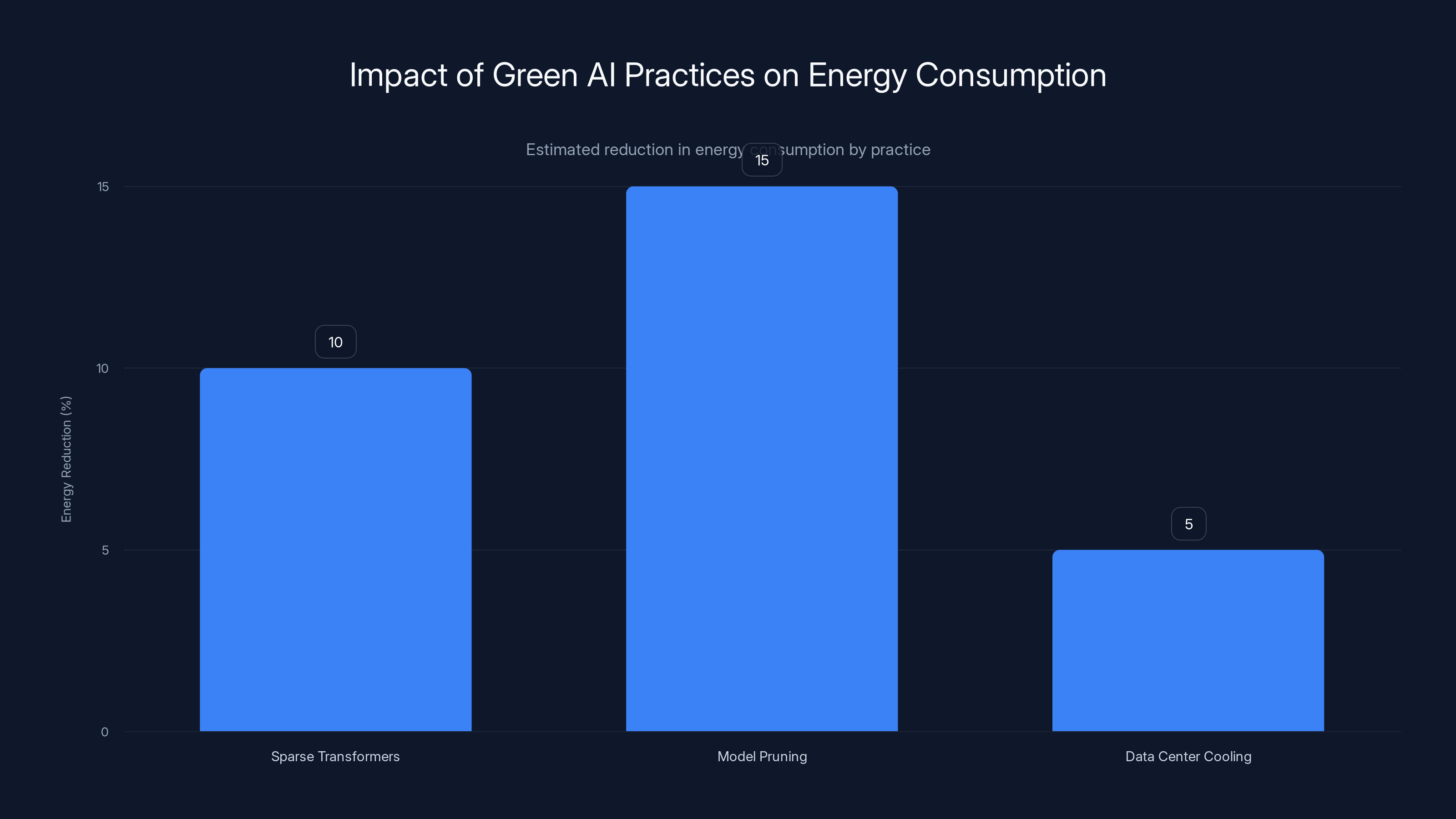

The adoption of green AI practices led to a 30% reduction in energy consumption, with model pruning contributing the most. Estimated data.

Techniques for Reducing AI Energy Consumption

Beyond architectural changes, several techniques can help reduce the energy footprint of AI models.

Model Pruning

Model pruning involves removing redundant weights and neurons from a network, decreasing the computations required. This technique can significantly reduce energy usage while largely maintaining model accuracy, provided the hardware and software stack can exploit the resulting sparsity.

- Static Pruning: Performed during or after training to produce a fixed, leaner model before deployment.

- Dynamic Pruning: Adjusts which parts of the model are active at run time based on each input.
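As a rough illustration of static pruning, the sketch below applies magnitude pruning, a common heuristic that zeroes the weights with the smallest absolute values. The function name and the example weights are hypothetical, and real frameworks operate on tensors rather than Python lists.

```python
def magnitude_prune(weights, sparsity):
    """Return a copy of `weights` with the smallest-magnitude fraction set to zero.

    `sparsity` is the fraction of weights to remove, between 0.0 and 1.0.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0.0 and 1.0")
    num_to_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:num_to_prune]:
        pruned[i] = 0.0
    return pruned


# Hypothetical layer weights, pruned to 50% sparsity.
print(magnitude_prune([0.5, -0.01, 0.2, -0.9], 0.5))
```

In practice a pruned model is usually fine-tuned afterwards to recover any lost accuracy.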

Quantization

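Quantization reduces the numerical precision of a model's weights and activations, for example from 32-bit floating point to 8-bit integers, which lowers memory traffic, computational load, and energy consumption. It can be applied during training (quantization-aware training) or after training, at inference time (post-training quantization).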

- Advantages: Reduces memory requirements and speeds up computations.

- Trade-offs: May lead to a slight decrease in model accuracy.
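The precision trade-off can be seen in a minimal sketch of symmetric 8-bit quantization, where one scale factor maps floating-point values onto the integer range -127 to 127. The function names and sample values are assumptions for illustration; production toolchains add calibration, per-channel scales, and other refinements.

```python
def quantize_int8(values):
    """Map a non-empty list of floats to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


# Hypothetical weights: each is stored in 8 bits instead of 32.
weights = [1.27, -0.5, 0.0, 0.31]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]
print(codes, max(errors))
```

The rounding error per value is bounded by half the scale, which is the "slight decrease in accuracy" that quantization trades for a fourfold reduction in storage relative to 32-bit floats.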

Case Study: Implementing Green AI Practices

A prominent AI company recently adopted green AI practices to minimize its environmental impact. By integrating efficient transformer models and employing model pruning, the company reduced its data center energy consumption by 30%.

Steps Taken:

- Adopted Sparse Transformers: Reduced unnecessary computations.

- Implemented Model Pruning: Streamlined model architecture.

- Enhanced Data Center Cooling: Improved energy efficiency of infrastructure.

Future Trends in AI Energy Efficiency

As AI continues to evolve, the focus on energy efficiency will intensify. Here are some trends expected to shape the future of AI energy consumption.

1. AI Hardware Innovations

Energy-efficient AI hardware will play a crucial role. Accelerators such as graphics processing units (GPUs) and tensor processing units (TPUs) execute the dense matrix operations behind AI workloads far more efficiently than general-purpose CPUs, and newer generations continue to improve performance per watt.

2. Federated Learning

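Federated learning allows models to be trained across multiple devices without centralizing data: each device trains locally and shares only model updates. This can reduce the energy costs associated with transmitting raw data and with centralized processing, although the repeated exchange of model updates adds communication overhead of its own.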
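One common way to combine locally trained models is federated averaging, where a server takes a weighted mean of client parameters in proportion to each client's data size. The sketch below assumes that aggregation rule, and its function name and toy values are illustrative only.

```python
def federated_average(client_weights, client_sizes):
    """Combine client parameter vectors into one, weighted by local dataset size."""
    total = sum(client_sizes)
    num_params = len(client_weights[0])
    averaged = [0.0] * num_params
    for weights, size in zip(client_weights, client_sizes):
        share = size / total  # clients with more data contribute more
        for i, w in enumerate(weights):
            averaged[i] += w * share
    return averaged


# Two hypothetical clients: one with 1 sample, one with 3 samples.
print(federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
```

Only the parameter vectors cross the network in this scheme, not the underlying training data.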

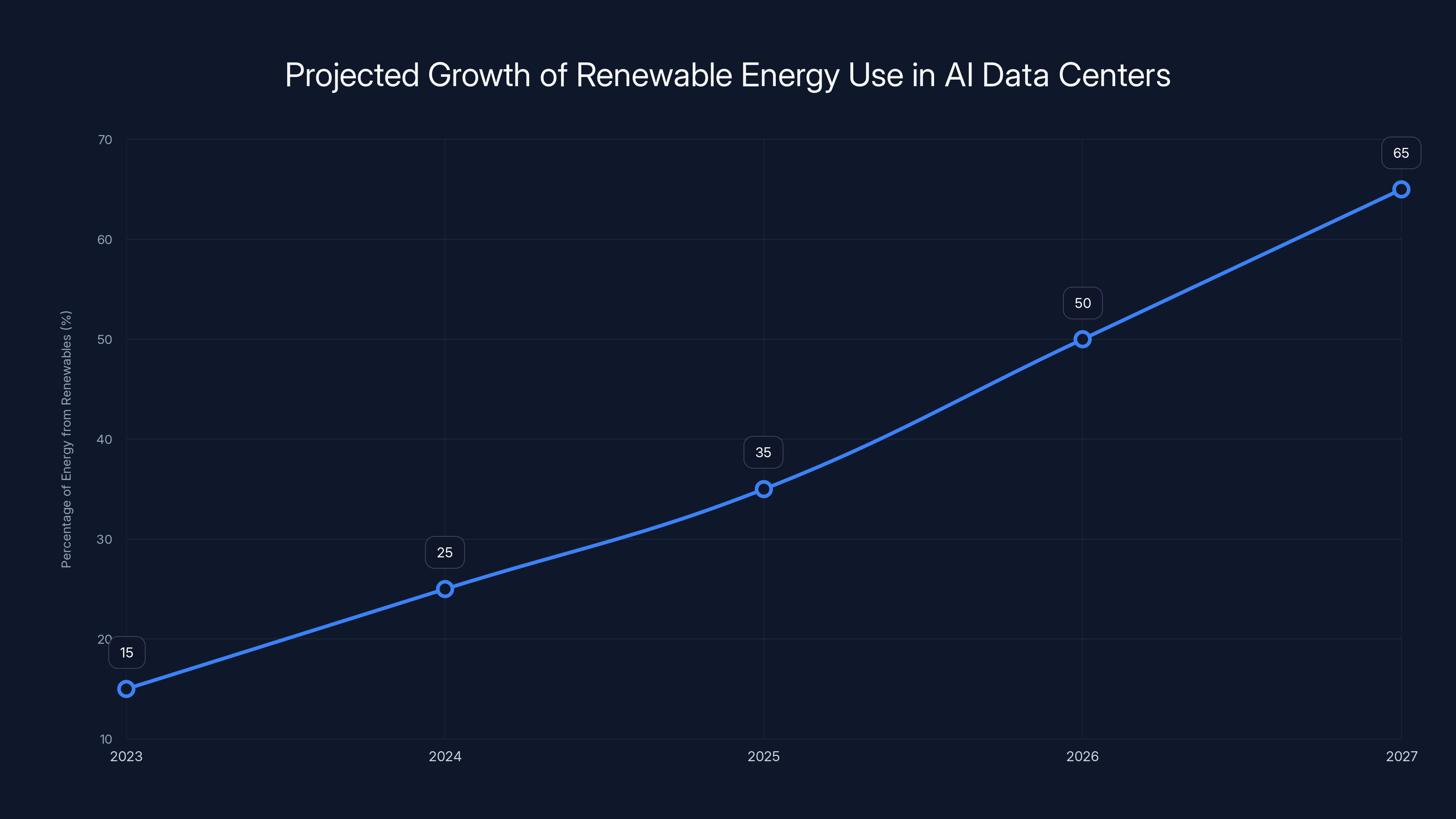

3. Increased Use of Renewable Energy

AI companies are increasingly investing in renewable energy sources for their data centers. Solar, wind, and hydroelectric power can mitigate the carbon footprint of AI operations.
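The effect of a cleaner energy mix on emissions can be approximated with simple arithmetic: energy consumed, multiplied by the grid's carbon intensity, scaled by the share that is not renewable. The sketch below treats renewable supply as zero-carbon, which is a simplification, and the function name and all input values are assumptions for illustration.

```python
def operational_emissions_kg(energy_kwh, grid_kg_co2_per_kwh, renewable_fraction=0.0):
    """Estimate operational CO2 emissions in kg, treating renewables as zero-carbon."""
    if not 0.0 <= renewable_fraction <= 1.0:
        raise ValueError("renewable_fraction must be between 0.0 and 1.0")
    grid_energy_kwh = energy_kwh * (1.0 - renewable_fraction)
    return grid_energy_kwh * grid_kg_co2_per_kwh


# Hypothetical workload: 1,000 kWh on a grid emitting 0.4 kg CO2 per kWh.
for share in (0.0, 0.5, 1.0):
    kg = operational_emissions_kg(1000.0, 0.4, share)
    print(f"renewable share {share:.0%}: {kg:.0f} kg CO2")
```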

4. Policy and Regulation

Governments may introduce regulations to ensure AI development aligns with sustainability goals. These policies could mandate energy efficiency standards or provide incentives for green AI initiatives.

The use of renewable energy in AI data centers is projected to grow significantly, with an estimated increase from 15% in 2023 to 65% by 2027. Estimated data.

Practical Guide to Implementing Energy-Efficient AI

For organizations and developers interested in adopting energy-efficient AI practices, here are some practical steps:

- Evaluate Current Models: Assess the energy consumption of existing AI models and identify areas for improvement.

- Adopt Efficient Architectures: Consider transitioning to architectures like efficient transformers or RNNs.

- Implement Pruning and Quantization: Use these techniques to streamline models and reduce energy usage.

- Optimize Infrastructure: Upgrade data centers with energy-efficient cooling and renewable energy sources.

- Monitor and Adjust: Continuously track energy usage and make adjustments as needed.

Common Pitfalls and How to Avoid Them

Over-Optimization

Focusing too heavily on reducing energy consumption without considering performance can lead to suboptimal results. It's crucial to strike a balance between efficiency and efficacy.

Solution: Regularly test and validate models to ensure they meet both energy and performance benchmarks.
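A validation gate of this kind might look like the sketch below, which accepts an optimized model only if it stays within an accuracy tolerance and delivers a minimum energy saving relative to a baseline. The function name, dictionary keys, thresholds, and measurements are all hypothetical.

```python
def accept_candidate(baseline, candidate, max_accuracy_drop=0.01, min_energy_saving=0.10):
    """Accept the candidate only if it meets both the accuracy and energy criteria."""
    accuracy_drop = baseline["accuracy"] - candidate["accuracy"]
    energy_saving = 1.0 - candidate["joules_per_inference"] / baseline["joules_per_inference"]
    return accuracy_drop <= max_accuracy_drop and energy_saving >= min_energy_saving


# Hypothetical measurements for a baseline and a pruned, quantized candidate.
baseline = {"accuracy": 0.92, "joules_per_inference": 2.0}
candidate = {"accuracy": 0.915, "joules_per_inference": 1.4}
print(accept_candidate(baseline, candidate))
```

Running such a check on every optimization pass keeps efficiency work from silently eroding model quality.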

Ignoring Infrastructure

While model optimization is important, neglecting the energy efficiency of the infrastructure can undermine efforts.

Solution: Invest in modern, energy-efficient data centers and technologies.

Conclusion: A Sustainable AI Future

Solving AI's energy crisis requires a holistic approach that combines architectural innovation, practical techniques, and sustainable practices. The transition beyond transformers to more energy-efficient models is a step in the right direction, but ongoing efforts are needed to ensure AI continues to evolve sustainably.

By prioritizing energy efficiency and sustainability, the AI industry can continue to innovate without compromising our planet's resources.

FAQ

What is the energy crisis in AI?

The energy crisis in AI refers to the increasing energy demands of AI models, particularly those using transformers, which contribute to significant carbon emissions and environmental impact.

How do transformers contribute to AI's energy consumption?

Transformers require substantial computational resources due to their complex architecture and vast number of parameters, leading to high energy consumption.

What are some alternatives to transformers for AI models?

Alternatives include efficient transformers, recurrent neural networks (RNNs), and neuromorphic computing, all of which aim to reduce energy usage.

How can AI models be made more energy-efficient?

Techniques like model pruning, quantization, and adopting efficient architectures can help reduce the energy footprint of AI models.

Why is energy efficiency important in AI development?

Energy efficiency is crucial to minimize the environmental impact of AI, reduce operational costs, and ensure sustainable technological advancement.

What role do renewable energy sources play in AI?

Renewable energy sources can significantly reduce the carbon footprint of AI data centers, supporting green AI initiatives and sustainable practices.

How can organizations implement energy-efficient AI practices?

Organizations can evaluate their current models, adopt efficient architectures, implement pruning and quantization, optimize infrastructure, and monitor energy usage.

What are the benefits of using neuromorphic computing in AI?

Neuromorphic computing offers energy efficiency and real-time processing capabilities, making it ideal for tasks requiring immediate responses.

Key Takeaways

- AI's energy demands are a growing concern, necessitating more sustainable practices.

- Efficient transformers and alternative architectures offer promising solutions.

- Techniques like model pruning and quantization can reduce energy usage.

- Investments in AI hardware and renewable energy are essential for sustainability.

- Organizations must balance energy efficiency with model performance.
