Why Sierra the Supercomputer Had to Die: A Deep Dive into the Lifecycle of High-Performance Computing [2025]

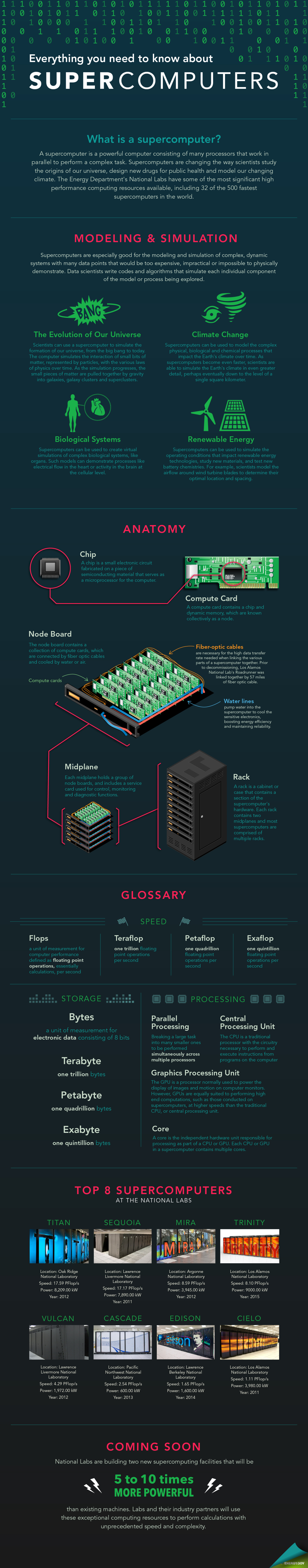

Supercomputers like Sierra are the unsung heroes of scientific advancement, powering everything from climate models to nuclear simulations. But as technology evolves, these giants must eventually step aside for newer, faster systems. This article explores why Sierra had to be decommissioned, the lifecycle of supercomputers, and what the future holds for high-performance computing.

TL;DR

- Sierra's Legacy: Once the second-fastest supercomputer, Sierra was crucial for scientific research but became obsolete as technology advanced.

- Lifecycle of Supercomputers: Typically lasts 5-10 years, driven by rapid advancements in technology and increasing computational demands.

- Decommissioning Challenges: Involves complex logistics, including data migration, hardware recycling, and environmental considerations.

- Future Trends: Quantum computing and AI are set to redefine supercomputing capabilities.

- Key Takeaway: Understanding the lifecycle of supercomputers aids in planning for future computational needs and sustainability.


The Rise and Fall of Sierra

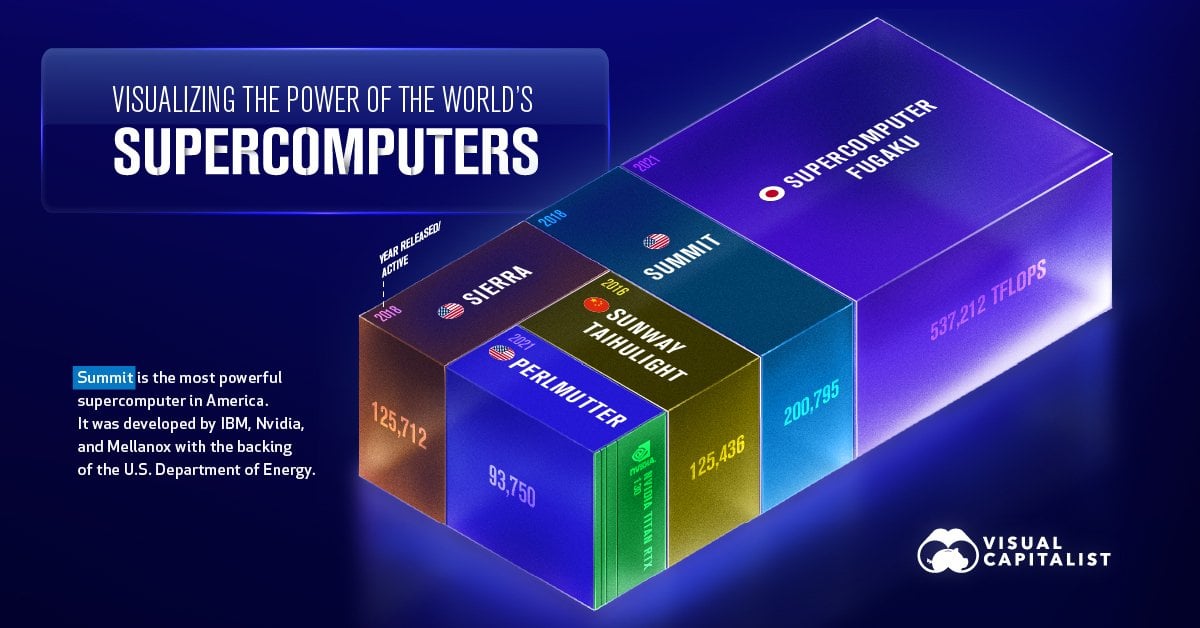

Sierra was more than just a collection of metal and wires; it was a symbol of computational prowess. Located at the Lawrence Livermore National Laboratory, Sierra was designed to tackle some of the most complex simulations and problems in physics, climate science, and national security.

A Brief History of Sierra

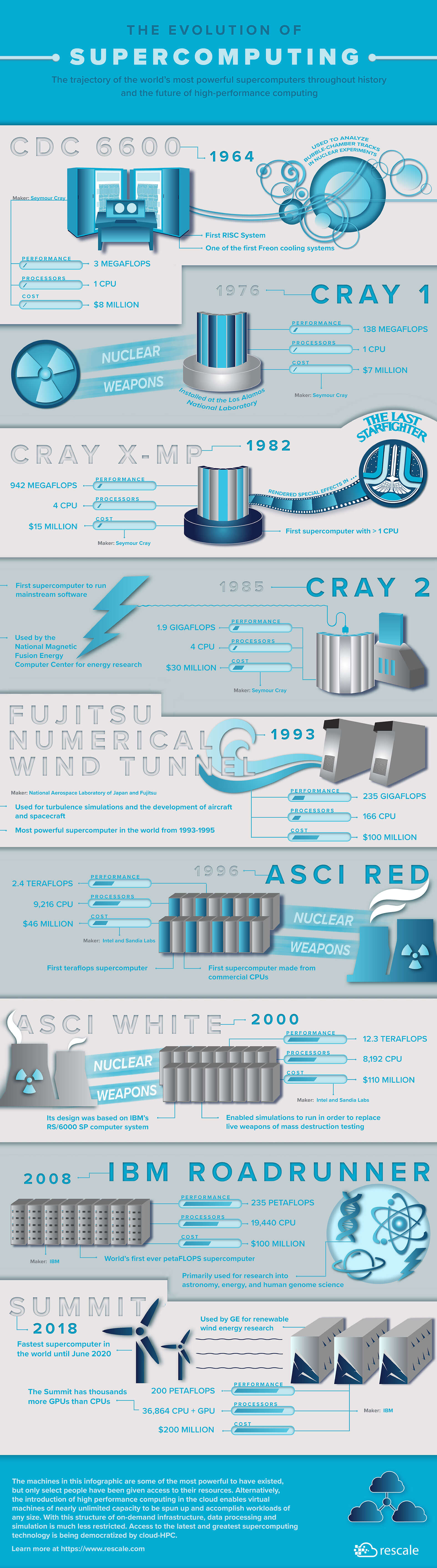

Sierra came online in 2018, boasting a peak performance of 125 petaflops. This made it the second-fastest supercomputer in the world at the time. Its architecture, based on IBM POWER9 CPUs and NVIDIA Tesla V100 GPUs, represented the cutting edge of hybrid computing models. According to NVIDIA's official documentation, the Tesla V100 GPUs were integral to Sierra's performance.

Key Features:

- Hybrid architecture: Combined CPU and GPU processing for enhanced computational power.

- InfiniBand networking: Allowed high-speed data transfer between nodes.

- Energy efficiency: Designed to maximize output while minimizing power consumption.

Why Supercomputers Become Obsolete

Despite its capabilities, Sierra's time in the limelight was short-lived. The rapid pace of technological advancement means that supercomputers are quickly outpaced by newer, more powerful systems. Factors contributing to obsolescence include:

- Advancements in hardware: Newer processors and GPUs offer enhanced performance.

- Increasing data demands: Modern simulations require even more processing power and memory.

- Software evolution: New algorithms and software optimizations often require updated hardware to run well.

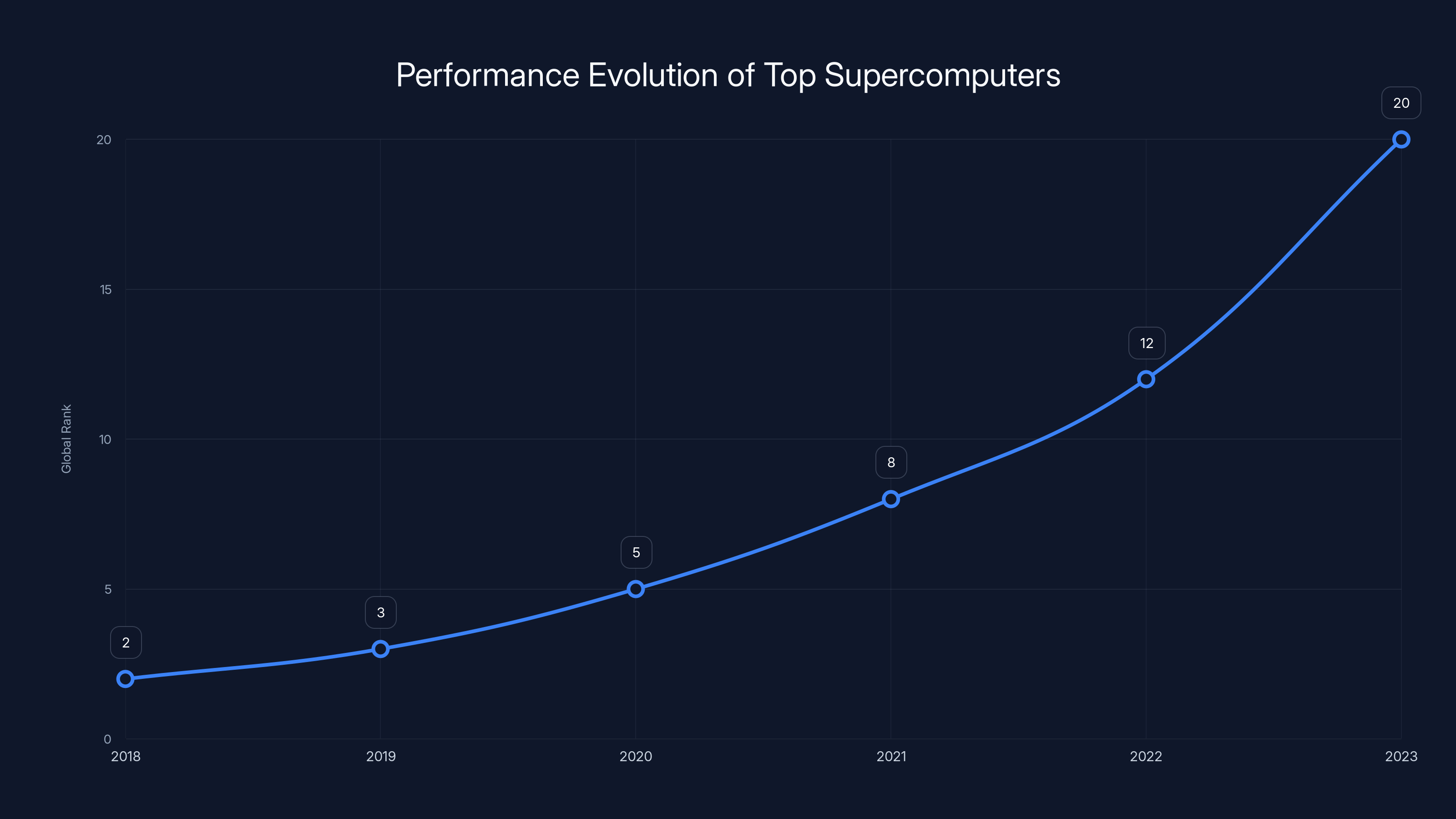

Sierra debuted as the second-fastest supercomputer in 2018 but gradually fell in rank due to rapid technological advancements. (Estimated data)

The Lifecycle of a Supercomputer

The lifecycle of a supercomputer typically spans 5-10 years, dictated by the accelerating pace of technological innovation and the growing demands of computational tasks. Understanding this lifecycle is crucial for institutions that rely on high-performance computing.

Design and Deployment

The journey begins with design and deployment. Supercomputers are custom-built to meet specific research needs. This phase involves:

- Collaboration: Between hardware manufacturers, software developers, and end-users.

- Customization: Tailoring architecture to specific computational tasks.

- Testing: Rigorous testing to ensure reliability and performance.

Operational Phase

Once operational, supercomputers like Sierra are tasked with a wide range of applications:

- Scientific research: Climate models, molecular simulations, and astrophysics.

- Industrial applications: Oil exploration, drug discovery, and engineering design.

- National security: Nuclear simulations and cryptography.

QUICK TIP: Regular software updates and hardware maintenance are crucial to extend the operational lifespan of a supercomputer.

Decommissioning

Decommissioning is a complex process that involves:

- Data migration: Ensuring critical data is securely transferred to new systems.

- Hardware recycling: Responsible disposal and recycling of components.

- Environmental considerations: Minimizing the ecological impact of decommissioning.

The Challenges of Decommissioning

Decommissioning a supercomputer is far from straightforward. It requires meticulous planning and execution to ensure minimal disruption to ongoing research and operations.

Data Migration

One of the primary challenges is migrating vast amounts of data without loss or corruption. This involves:

- Backup strategies: Multiple backups to ensure data integrity.

- Data transfer tools: Utilizing high-speed data transfer protocols to minimize downtime.

- Verification: Ensuring data accuracy post-transfer.
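The verification step can be illustrated with checksums. What follows is a minimal Python sketch, not a description of how Sierra's data was actually migrated. It assumes the old and new storage are both reachable as ordinary directories, and the function names (`sha256_of`, `build_manifest`, `verify_migration`) are hypothetical helpers introduced only for this example.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in fixed-size chunks so large files never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_migration(source: Path, destination: Path) -> dict[str, list[str]]:
    """Report files that are missing from, or differ at, the destination."""
    src = build_manifest(source)
    dst = build_manifest(destination)
    return {
        # Present at the source but absent at the destination.
        "missing": sorted(set(src) - set(dst)),
        # Present in both places but with different contents.
        "mismatched": sorted(k for k in src.keys() & dst.keys() if src[k] != dst[k]),
    }
```

If both lists in the report are empty, every source file has a byte-identical copy at the destination. Hashing both trees after the copy is a deliberately simple design; a real migration would likely also lean on the integrity checks built into whatever transfer tool is used.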

Hardware Disposal

Supercomputers contain a mix of valuable and hazardous materials. Responsible disposal is essential:

- Component recycling: Recovering metals and other reusable materials.

- Hazardous waste management: Safe disposal of toxic components.

DID YOU KNOW: The recycling of supercomputer components can recover precious metals like gold and copper, reducing the need for mining.

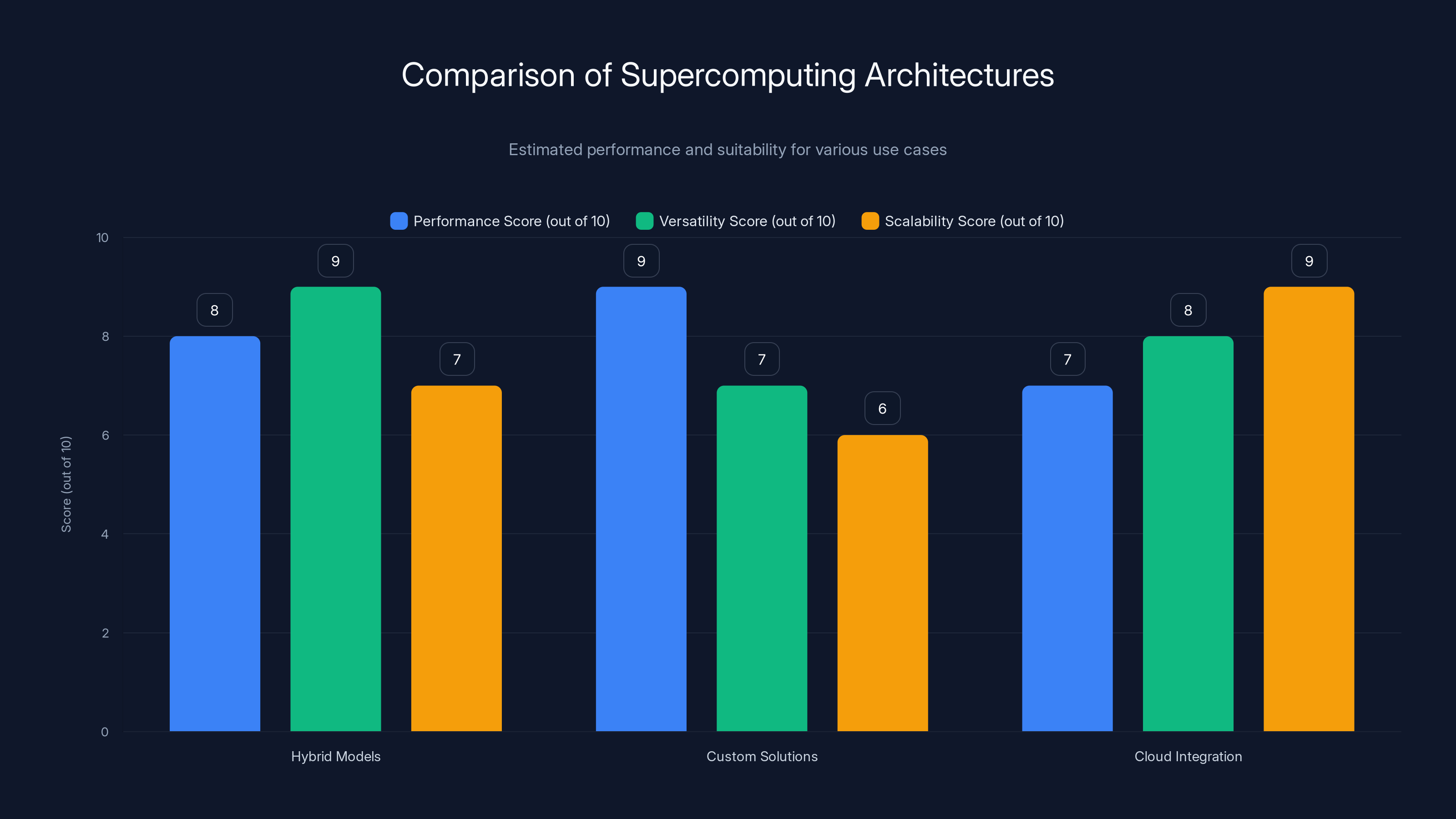

Hybrid models offer high versatility, custom solutions excel in performance, while cloud integration provides superior scalability. Estimated data based on typical use cases.

What Comes After Sierra?

With Sierra offline, the focus shifts to the next generation of supercomputing. This transition is driven by emerging technologies and the need for even greater computational power.

Emerging Technologies

New technologies are set to revolutionize supercomputing:

- Quantum computing: Promises exponential speedups on specific classes of problems rather than a general increase in processing power, as highlighted by IBM's research on quantum processing units.

- Artificial Intelligence: AI-driven optimizations can enhance performance and efficiency.

- Neuromorphic computing: Mimics the human brain to solve complex problems with lower energy consumption.

Future of High-Performance Computing

The future of supercomputing lies in hybrid models that combine existing technologies with emerging ones. Key trends include:

- Scalability: Systems designed to grow with demand.

- Energy efficiency: Minimizing power consumption while maximizing output.

- Interconnectivity: Seamless integration with cloud and edge computing resources.

QUICK TIP: Stay informed about emerging technologies to better plan for future computational needs and investments.

Implementing Supercomputing Solutions: Best Practices

For organizations looking to implement or upgrade supercomputing solutions, several best practices can ensure success.

Assessing Needs

Before investing in supercomputing resources, assess your organization's specific needs:

- Workload analysis: Understand the types of computations required.

- Scalability requirements: Plan for future growth and technological advancements.

- Budget considerations: Align resources with available funding.
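Scalability planning often comes down to compound-growth arithmetic. The Python sketch below estimates how many years a system's capacity will keep up with demand. The growth rate and units are illustrative assumptions, not figures from Sierra or any real facility, and `years_until_capacity_exceeded` is a hypothetical helper.

```python
def years_until_capacity_exceeded(
    current_demand: float, capacity: float, annual_growth: float
) -> int:
    """Count whole years until demand first exceeds capacity.

    Demand is assumed to grow by a fixed fraction each year
    (annual_growth=0.25 means 25% per year). Units are arbitrary
    as long as demand and capacity use the same one.
    """
    if current_demand <= 0 or capacity <= 0:
        raise ValueError("demand and capacity must be positive")
    if annual_growth <= 0:
        raise ValueError("annual_growth must be positive")

    years = 0
    demand = current_demand
    # Apply one year of growth at a time until capacity is exceeded.
    while demand <= capacity:
        demand *= 1 + annual_growth
        years += 1
    return years
```

For example, `years_until_capacity_exceeded(40, 100, 0.25)` returns `5`: demand grows 40, 50, 62.5, 78.1, 97.7 and only passes 100 in the fifth year. A fixed growth rate is a simplification; real demand tends to arrive in steps as new projects come online.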

Choosing the Right Architecture

Select an architecture that best suits your computational needs:

- Hybrid models: Combine CPUs and GPUs for versatile performance.

- Custom solutions: Tailored systems for specialized tasks.

- Cloud integration: Utilize cloud resources for additional scalability.

Common Pitfalls and Solutions

Implementing supercomputing solutions comes with its share of challenges. Here are some common pitfalls and how to avoid them:

- Underestimating data needs: Ensure adequate storage and bandwidth for data-intensive tasks.

- Neglecting software updates: Regular updates are crucial to maintain performance and security.

- Ignoring environmental impact: Implement energy-efficient practices and proper disposal methods.
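The first pitfall, underestimating data needs, can be checked with simple arithmetic before any hardware is ordered. The Python sketch below estimates how long a dataset takes to move over a network link. The `efficiency` factor (the fraction of nominal bandwidth actually achieved) is an assumed placeholder, and `transfer_time_hours` is a hypothetical helper, not part of any library.

```python
def transfer_time_hours(
    data_terabytes: float, link_gbps: float, efficiency: float = 0.7
) -> float:
    """Estimate hours needed to move data over a link.

    data_terabytes: dataset size in decimal terabytes (1 TB = 1e12 bytes).
    link_gbps: nominal link speed in gigabits per second.
    efficiency: assumed fraction of nominal speed achieved in practice.
    """
    if data_terabytes < 0 or link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid size, link speed, or efficiency")

    total_bits = data_terabytes * 1e12 * 8
    effective_bits_per_second = link_gbps * 1e9 * efficiency
    return total_bits / effective_bits_per_second / 3600
```

With these assumptions, moving 1,000 TB over a 100 Gbps link at full efficiency takes about 22 hours, and closer to 32 hours at the default 70% efficiency. Numbers like these help decide whether storage and bandwidth are adequate for a data-intensive workload.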

A supercomputer's lifecycle spans approximately 5-10 years, with distinct phases from design to decommissioning. Estimated data.

Future Trends and Recommendations

The landscape of high-performance computing is continuously evolving. Staying ahead requires awareness of trends and proactive planning.

Quantum Leap

Quantum computing is poised to transform fields such as cryptography, optimization, and materials science. However, current limitations mean it's not yet a full replacement for traditional supercomputers, as discussed in CNBC's analysis of quantum computing in data centers.

AI Integration

AI is increasingly being integrated into supercomputing workflows, enhancing data processing, analysis, and decision-making capabilities.

Sustainability

As computational demands grow, so does the need for sustainable practices. Future supercomputers will prioritize energy efficiency and eco-friendly materials.

DID YOU KNOW: Future supercomputers could be powered by renewable energy sources, significantly reducing carbon footprints.

Conclusion: The Legacy of Sierra and Beyond

Sierra may have reached the end of its life, but its legacy continues in the advancements and breakthroughs it enabled. As we look to the future, the lessons learned from Sierra's lifecycle will guide the development of the next generation of supercomputers.

Understanding the lifecycle of supercomputers—from deployment to decommissioning—is crucial for advancing scientific research and technological innovation. By embracing emerging technologies and sustainable practices, we can ensure that the next generation of supercomputing meets the challenges of tomorrow.

FAQ

What is a supercomputer?

A supercomputer is a high-performance computing system designed to handle extremely complex calculations and data processing tasks, often used in scientific research, simulations, and national security.

How long does a supercomputer typically last?

The typical lifespan of a supercomputer is 5-10 years, depending on technological advancements and the increasing demands of computational tasks.

What happens during the decommissioning of a supercomputer?

Decommissioning involves data migration to new systems, responsible recycling of hardware components, and minimizing environmental impact.

What technologies are shaping the future of supercomputing?

Emerging technologies such as quantum computing, AI, and neuromorphic computing are set to revolutionize supercomputing capabilities.

How can organizations implement supercomputing solutions effectively?

Organizations should assess specific computational needs, choose appropriate architectures, and follow best practices to avoid common pitfalls and ensure successful implementation.

Why is sustainability important in supercomputing?

As computational demands grow, sustainable practices help reduce energy consumption and environmental impact, ensuring that future supercomputers are eco-friendly.

What role does AI play in supercomputing?

AI enhances data processing, analysis, and decision-making capabilities, making supercomputing workflows more efficient and effective.

How can organizations plan for future computational needs?

Staying informed about emerging technologies, assessing scalability requirements, and investing in flexible architectures are key to planning for future computational needs.


This article aims to provide a comprehensive understanding of the lifecycle of supercomputers, using Sierra as a case study. By examining the reasons behind Sierra's decommissioning and exploring future trends, we gain valuable insights into the evolving landscape of high-performance computing.

Key Takeaways

- Sierra's decommissioning highlights the rapid obsolescence of supercomputers.

- Supercomputers have a typical lifecycle of 5-10 years.

- Decommissioning involves complex logistics, including data migration and hardware recycling.

- Emerging technologies like quantum computing and AI are reshaping the future of supercomputing.

- Sustainability is increasingly important in the development of future supercomputers.

