Gene Amdahl's Wafer-Scale Vision: The Silicon Revolution That Was Decades Ahead of Its Time

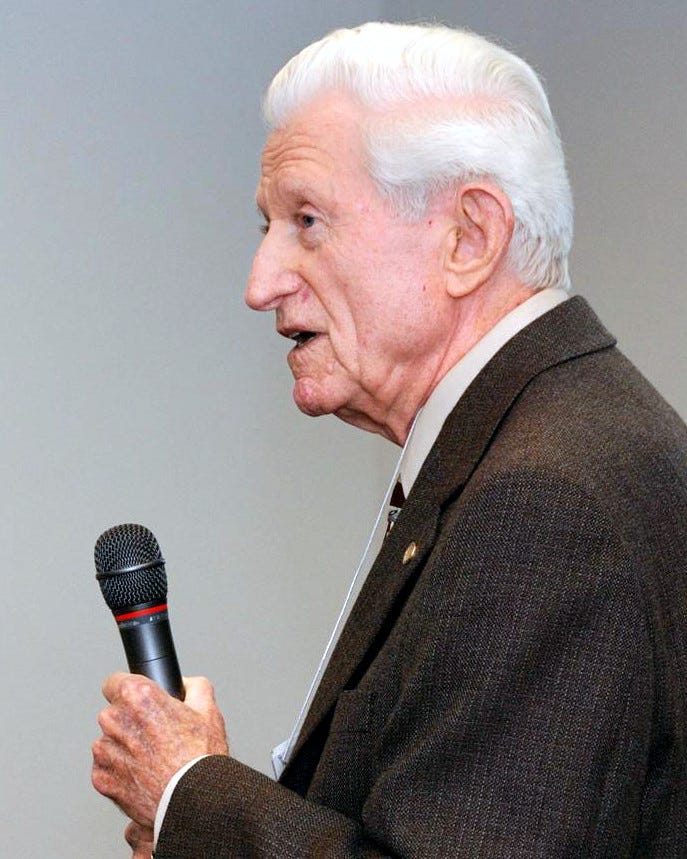

Gene Amdahl wasn't your typical engineer. While others were content optimizing existing systems, he was reimagining the fundamental architecture of computing itself. Long before Cerebras made headlines with its wafer-scale processors for artificial intelligence, Amdahl was pursuing an even more audacious idea: treating an entire silicon wafer as a single processor.

The year was 1983. Personal computers were still novelties. Mainframes dominated enterprise computing. Yet Amdahl, the legendary architect behind IBM's System/360 family, had already left Big Blue to found multiple ventures. Now, with his son Carl, he was betting his reputation on a technology that the semiconductor industry considered impossible.

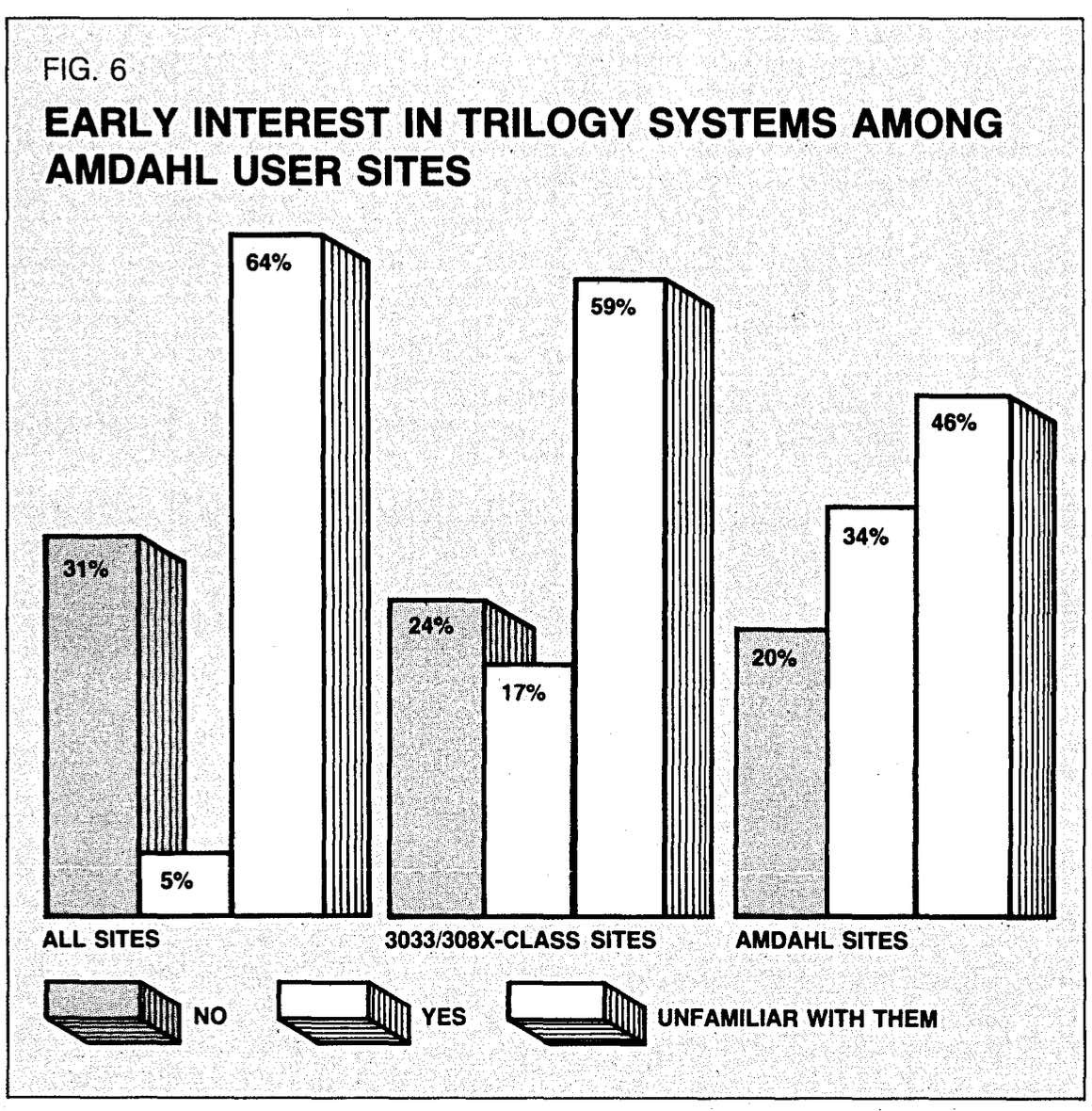

This wasn't just another startup pitch. Amdahl's name alone commanded attention. He'd already proven he could challenge IBM head-on with Amdahl Corporation, building compatible mainframes that captured significant market share. When he announced Trilogy Systems and its wafer-scale integration (WSI) plans, the industry listened. Some were skeptical. Others saw genius. All understood they were witnessing something historically significant.

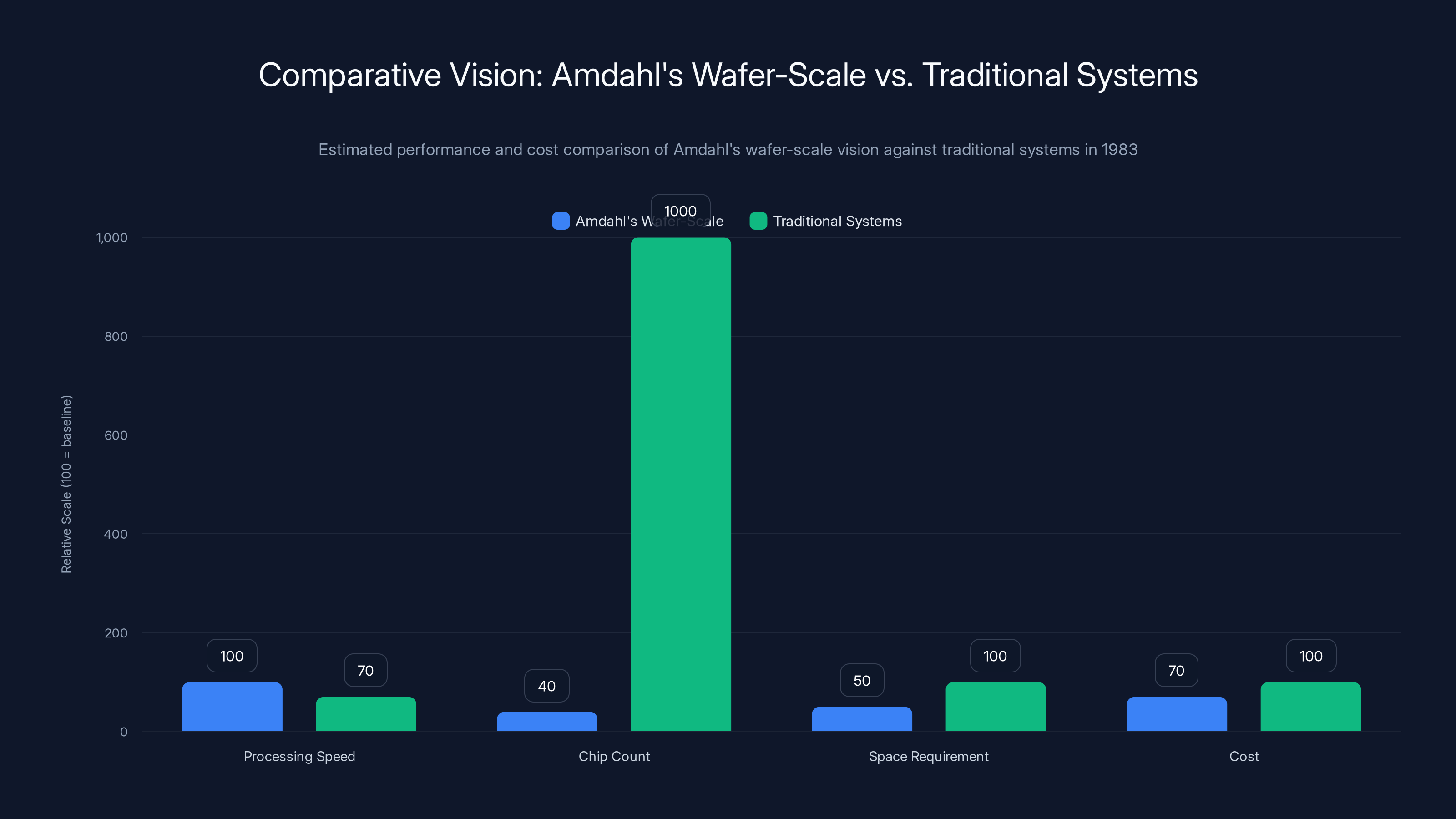

The vision was staggering: compress supercomputer performance into systems using just 40 chips instead of thousands. Achieve processing speeds rivaling the legendary Cray-1 supercomputer. Do it in less space. Undercut IBM pricing by 30%. And eventually, bring this technology to personal computers.

That last part was perhaps most remarkable. In 1983, suggesting that desktop machines could deliver mainframe-class performance seemed delusional. Yet Amdahl saw beyond the immediate horizon. He understood something fundamental about computing's future that others missed: efficiency gains from radical integration would eventually democratize power.

The story of Trilogy Systems and wafer-scale integration is a masterclass in innovation, ambition, and the brutal reality of turning visionary ideas into products. It's also a cautionary tale about timing, manufacturing complexity, and the gap between elegant theory and messy reality. Forty years later, as modern AI companies embrace many of Amdahl's core concepts, his legacy becomes clearer. He wasn't just ahead of his time. He was thinking about problems the industry hadn't yet recognized it had.

TL;DR

- Wafer-Scale Pioneer: Gene Amdahl attempted to use entire silicon wafers as single processors in 1983, decades before modern AI accelerators made this concept viable.

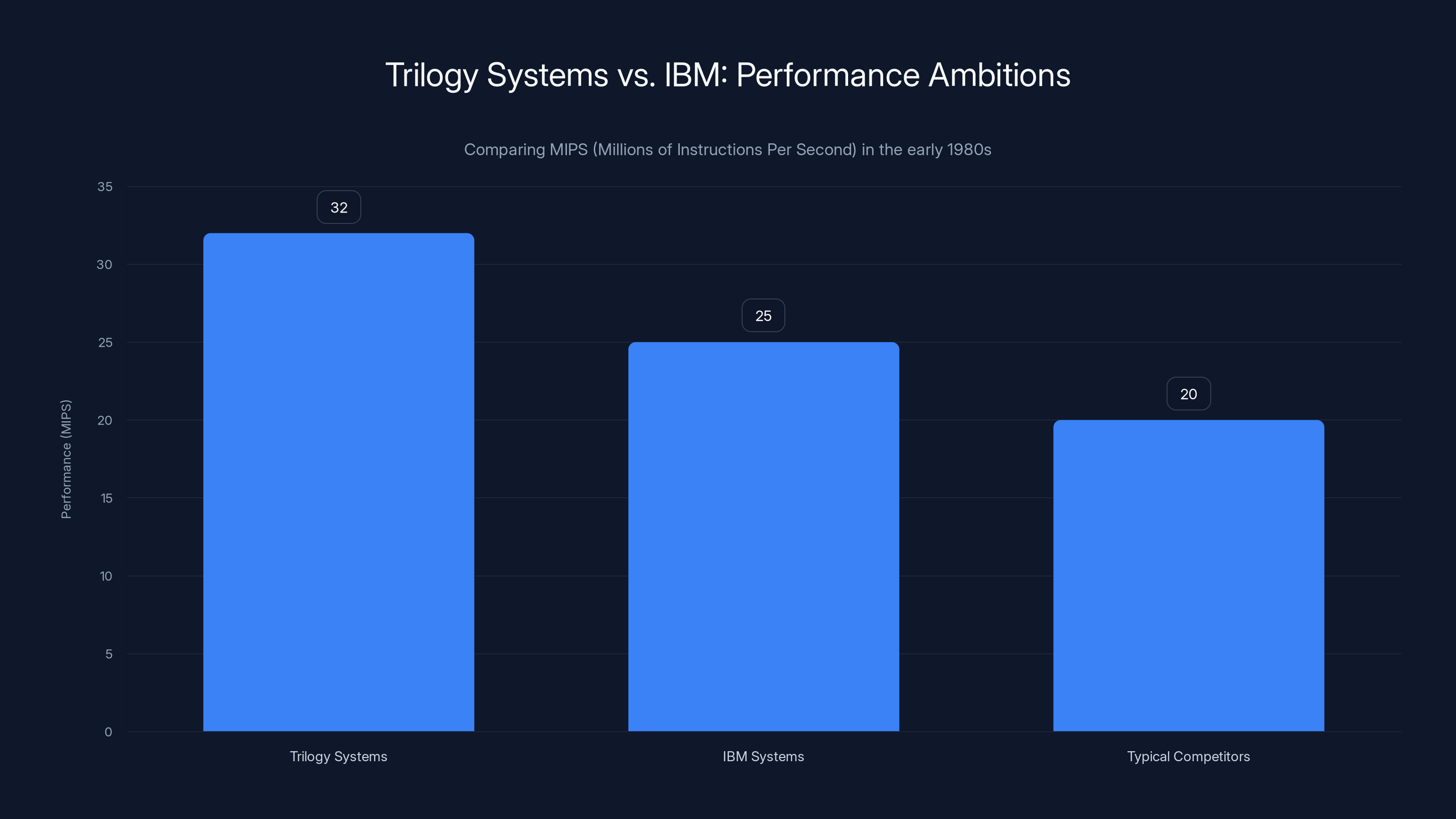

- Trilogy's Bold Vision: The company planned to build supercomputer-class systems using just 40 macrochips, achieving 32 million instructions per second while undercutting IBM by 30%.

- Design Innovation: Rather than accepting manufacturing defects as waste, Amdahl designed redundancy directly into silicon to work around damaged regions.

- Ambitious Scope: The technology wasn't limited to mainframes; Amdahl envisioned bringing wafer-scale integration to personal computers, a visionary concept for 1983.

- Commercial Failure, Conceptual Victory: Trilogy failed to commercialize the technology by 1985, but its core ideas eventually influenced modern chip design and AI processor development.

Trilogy Systems aimed to deliver 32 MIPS, surpassing IBM's 25 MIPS and typical competitors' 20 MIPS in the early 1980s. Estimated data reflects ambitious targets.

The Man Behind the Mainframe Revolution

To understand Amdahl's wafer-scale ambitions, you need to know who he was. Gene Myron Amdahl wasn't just an engineer. He was a systems architect with an almost prophetic ability to see computing's future trajectory.

Born in 1922, Amdahl came of age during computing's infancy. He earned a bachelor's degree in engineering physics from South Dakota State University and a doctorate in theoretical physics from the University of Wisconsin-Madison, then joined IBM, where he became one of the most influential figures in computer architecture. His work on the System/360 family in the early 1960s fundamentally changed how the industry thought about computing platforms.

The System/360 wasn't the first computer, but it was revolutionary in scope. Amdahl designed a unified family of machines that could scale from small business systems to massive enterprise installations, all running the same software. This architectural vision defined enterprise computing for decades. More importantly, it proved Amdahl could think systemically about large-scale technical problems.

But Amdahl was restless. In 1970, he left IBM and founded Amdahl Corporation, which built IBM-compatible mainframes. The company succeeded spectacularly. By the late 1970s, it was the second-largest mainframe manufacturer in the world. Amdahl had proven something crucial: you could challenge IBM and win.

Yet even that wasn't enough. As he approached his sixties, Amdahl was thinking about the next generation of computing. The semiconductor industry was advancing rapidly. Manufacturing processes were improving. The question that obsessed him: what if you could radically rethink how chips were designed and manufactured? What if you could apply systems thinking to silicon itself?

This intellectual foundation mattered enormously. Amdahl understood the mathematics of parallel computing. He grasped the physical limitations of semiconductor design. He recognized that the industry's approach to chip manufacturing was based on assumptions that could be challenged. In the early 1980s, with his son Carl and a team of accomplished engineers, he decided to challenge those assumptions in the most dramatic way possible.

Amdahl's wafer-scale vision promised supercomputer performance with significantly fewer chips, reduced space, and lower costs compared to traditional systems of the time. (Estimated data)

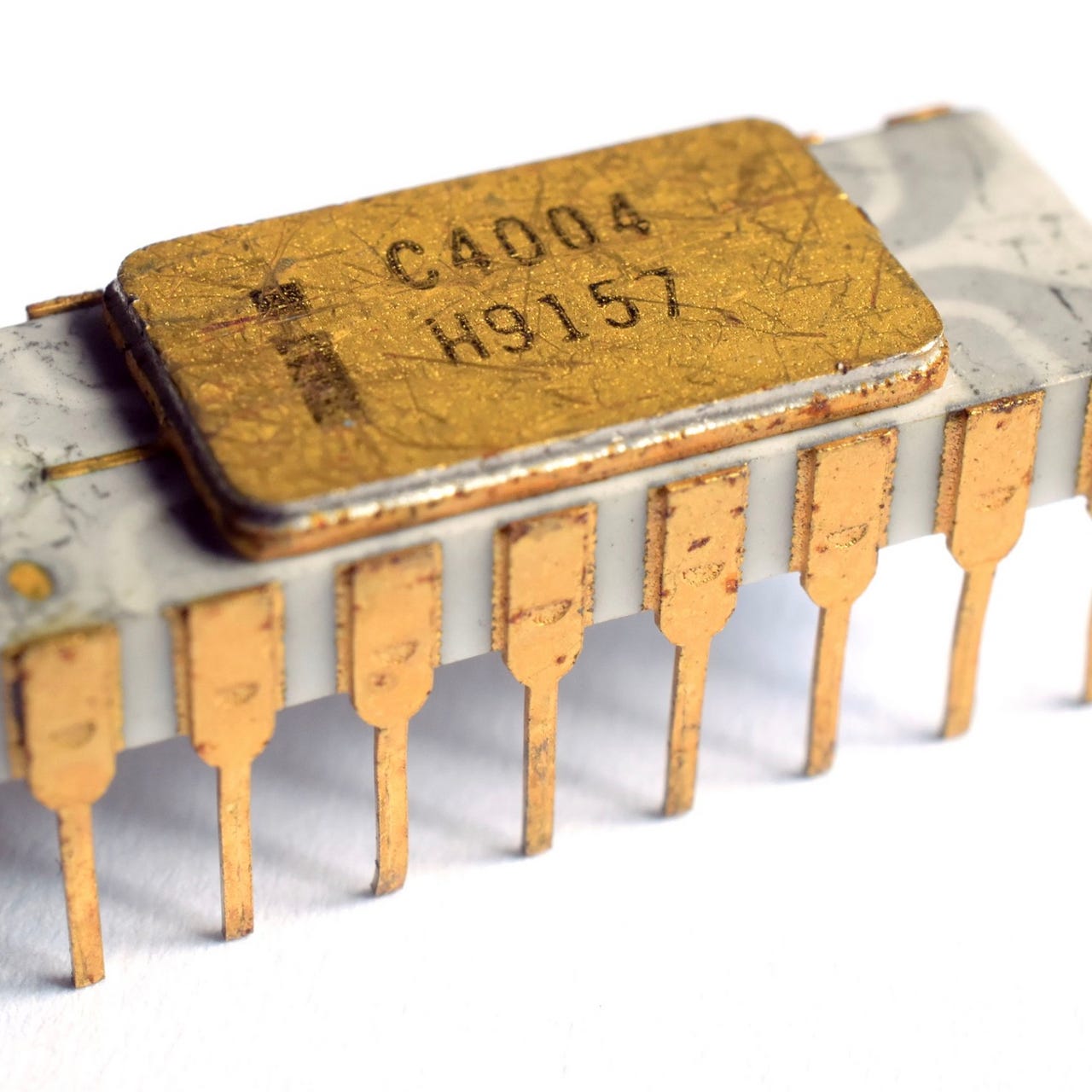

The Semiconductor Industry's Manufacturing Paradox

To appreciate Amdahl's audacity, you need to understand how semiconductor manufacturing worked in the early 1980s.

Chip fabrication was (and is) a process of extraordinary precision. Engineers designed circuits, then used photolithography to transfer those patterns onto silicon at microscopic scales. A single wafer, typically made of silicon and measuring several inches in diameter, would have hundreds of identical dies patterned onto it. After fabrication, the wafer would be diced into individual chips.

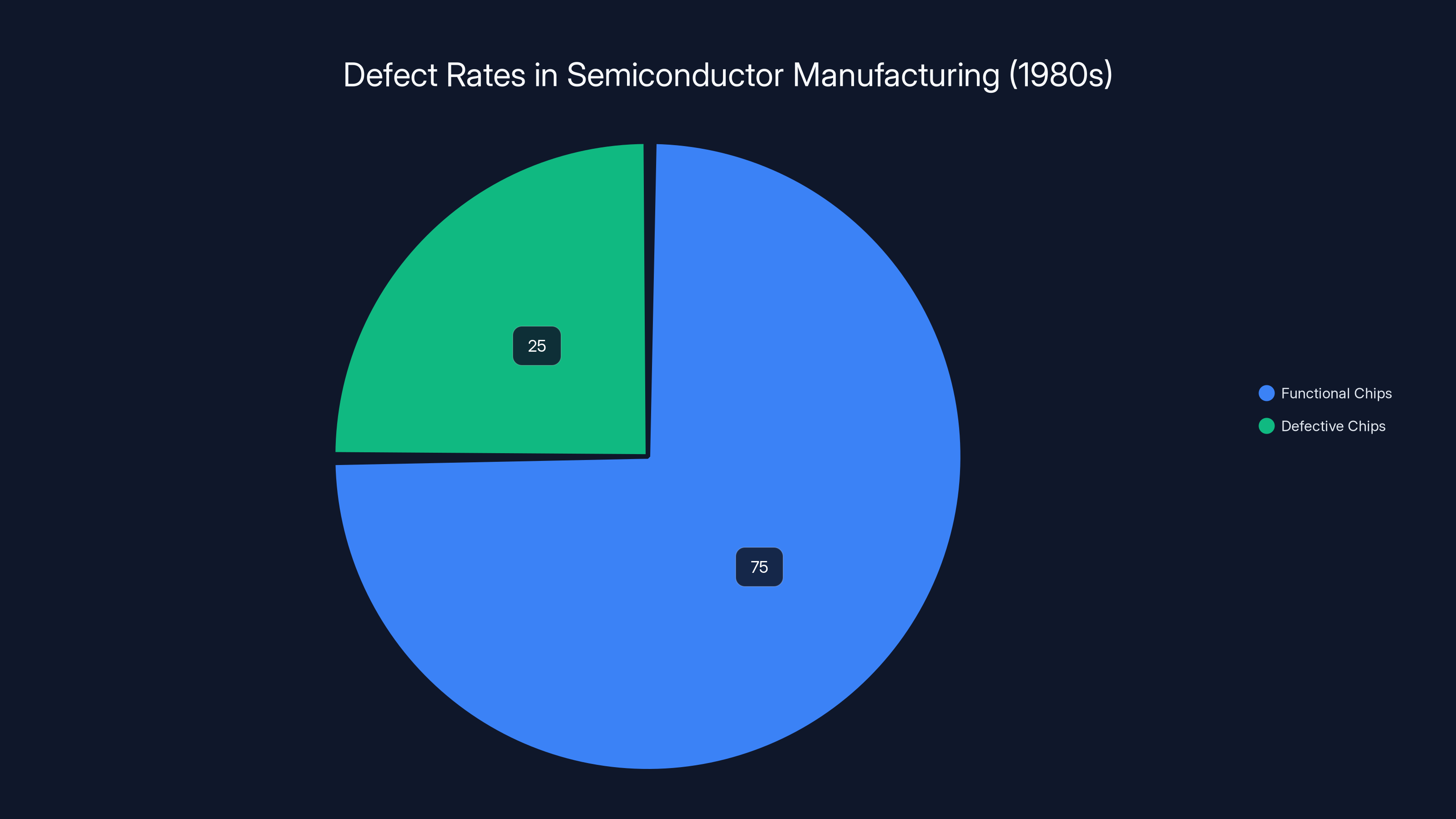

This redundancy-through-volume approach existed for a practical reason: manufacturing was imperfect. Dust particles, chemical contamination, temperature variations, and other factors could cause defects. Not every die on a wafer would work. Manufacturers accepted that 10-30% of chips might be unusable. The cost of these defects was built into pricing. As long as enough chips worked, the economics made sense.

This approach had defined the semiconductor industry since its inception. Every manufacturer used it. Every investor understood it. Every business model depended on it. It seemed immutable.

Amdahl saw something different. He didn't see defects as inevitable waste to be managed. He saw them as a design problem to be solved.

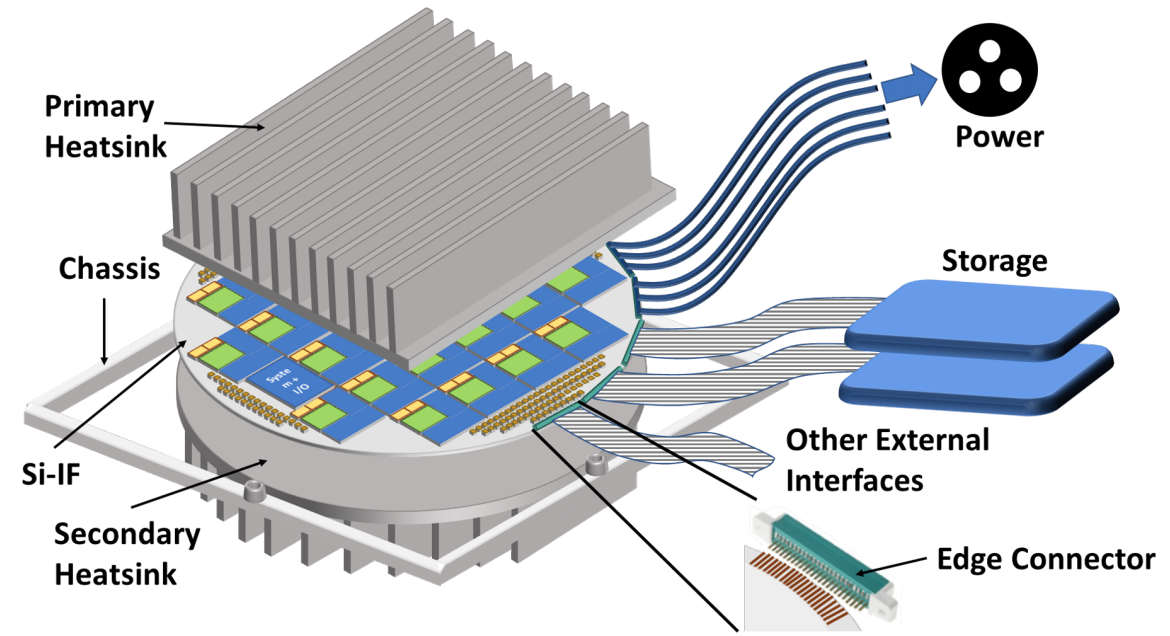

His insight was elegant: what if you designed a single large chip with built-in redundancy? Instead of cutting a wafer into hundreds of separate dies, use the entire wafer as one unified computational unit. Then design the chip's architecture to automatically route around defects, reconfiguring itself as needed.

In theory, this approach had enormous advantages. You eliminated the physical boundaries between separate chips. You reduced the number of connections between components. You gained the ability to optimize the entire wafer as a single system rather than managing hundreds of independent units. Manufacturing yield became less critical because the chip could adapt to imperfections.
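A rough sketch shows why the redundancy was essential rather than optional. The numbers here are illustrative assumptions, not Trilogy data: take the 10-30% defect range mentioned above, treat a wafer as roughly 100 die-sized regions that fail independently, and ask how often the whole wafer comes out flawless. The function name `flawless_wafer_probability` is hypothetical.

```python
def flawless_wafer_probability(die_yield: float, regions: int) -> float:
    """Probability that every region works, assuming independent failures.

    This is a simplification: the independence assumption ignores the
    defect clustering seen on real wafers.
    """
    return die_yield ** regions


# Illustrative per-region yields matching a 10-30% defect rate.
for die_yield in (0.9, 0.8, 0.7):
    p = flawless_wafer_probability(die_yield, regions=100)
    print(f"region yield {die_yield:.0%}: flawless wafer probability {p:.2e}")
```

Even at a 90% per-region yield, fewer than one wafer in 10,000 would be defect-free under these assumptions. A wafer-scale part with no way to route around bad regions would almost never work, which is why the design had to tolerate defects instead of avoiding them.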

Performance could be transformative. Fewer connections meant lower latency. Lower latency meant faster execution. A wafer-scale processor could potentially outperform dozens of individual chips connected together. This was the core promise of wafer-scale integration: fundamentally different architecture enabling fundamentally different performance.

But here's where theory meets practice. The defects on real wafers aren't uniformly distributed. They cluster. Some areas of a wafer might have zero defects while others have dozens. Designing a single unified circuit that could gracefully handle arbitrary defect patterns was far harder than designing hundreds of redundant identical chips.

You'd need to understand exactly where defects were. You'd need to dynamically reconfigure circuits around them. You'd need to ensure that the reconfiguration didn't create bottlenecks or reduce performance below acceptable levels. This demanded innovations in design tools, testing procedures, and manufacturing monitoring.

Amdahl and his team at Trilogy recognized these challenges. They weren't naive. But they believed the potential payoff justified the investment. If they could solve wafer-scale integration, they'd own a technology with profound implications for computing's future.

Trilogy Systems: The Audacious Startup

Trilogy Systems was founded in the early 1980s with a mission unlike typical semiconductor companies. Most startups in the space tried to compete by being faster, cheaper, or more specialized than incumbents. Trilogy's ambition transcended incremental improvement.

The company gathered some of the best engineering talent available. These weren't junior engineers. Amdahl attracted senior architects and researchers who'd spent decades in the industry. They came because they believed in his vision and their own ability to make it real.

Funding was unprecedented for a startup at that time. Trilogy raised hundreds of millions of dollars—massive sums for the early 1980s. This funding wasn't for incremental product development. It was for building an entirely new manufacturing capability from scratch. Trilogy planned to construct dedicated fabrication facilities. They recruited hundreds of engineers. They invested in completely novel design tools and testing equipment.

The ambition extended across multiple dimensions. Trilogy didn't just want to build wafer-scale chips. They wanted to build a complete computing platform using these chips. They planned mainframe-class systems that would directly challenge IBM. They established aggressive timelines. They made bold public commitments about performance and pricing.

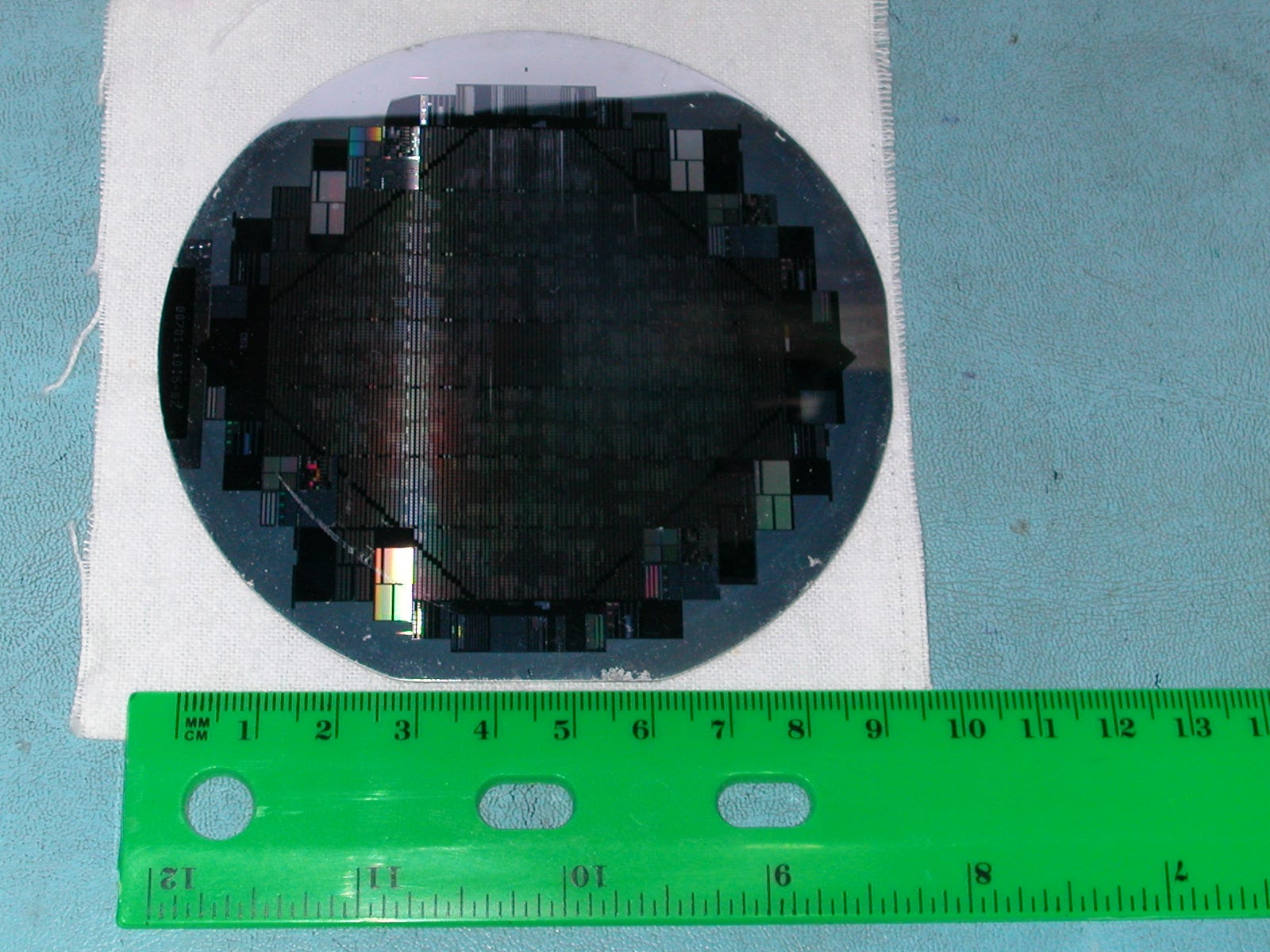

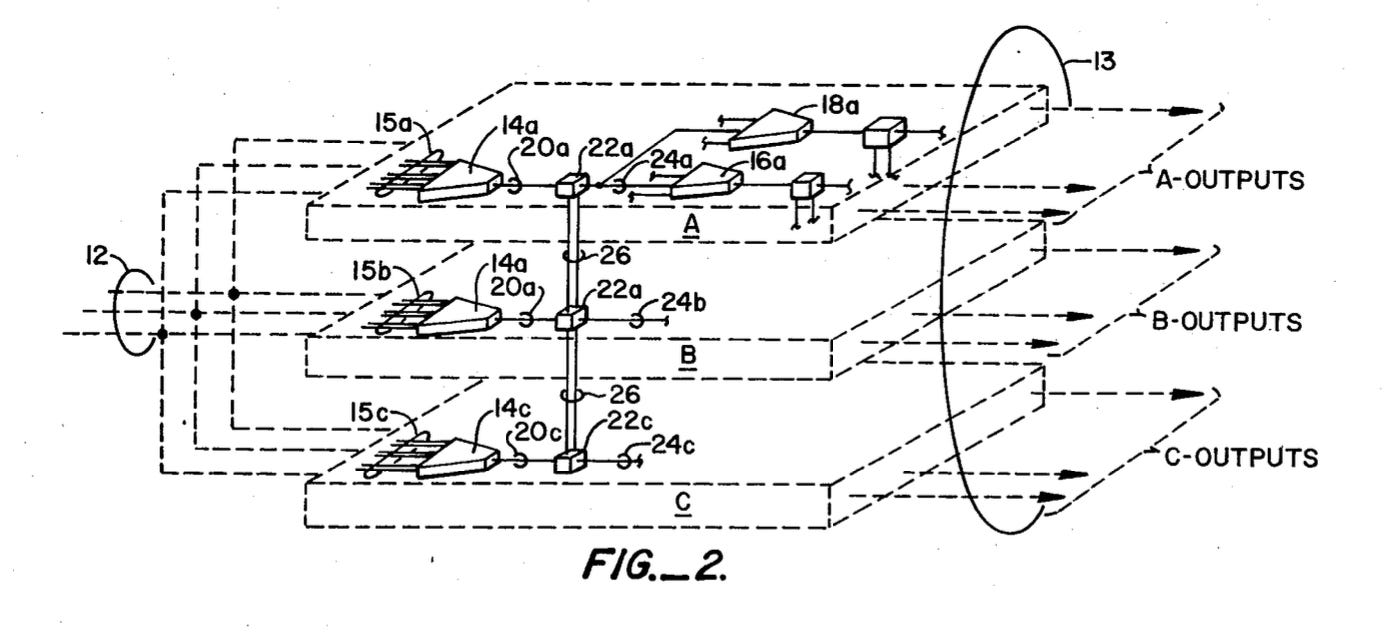

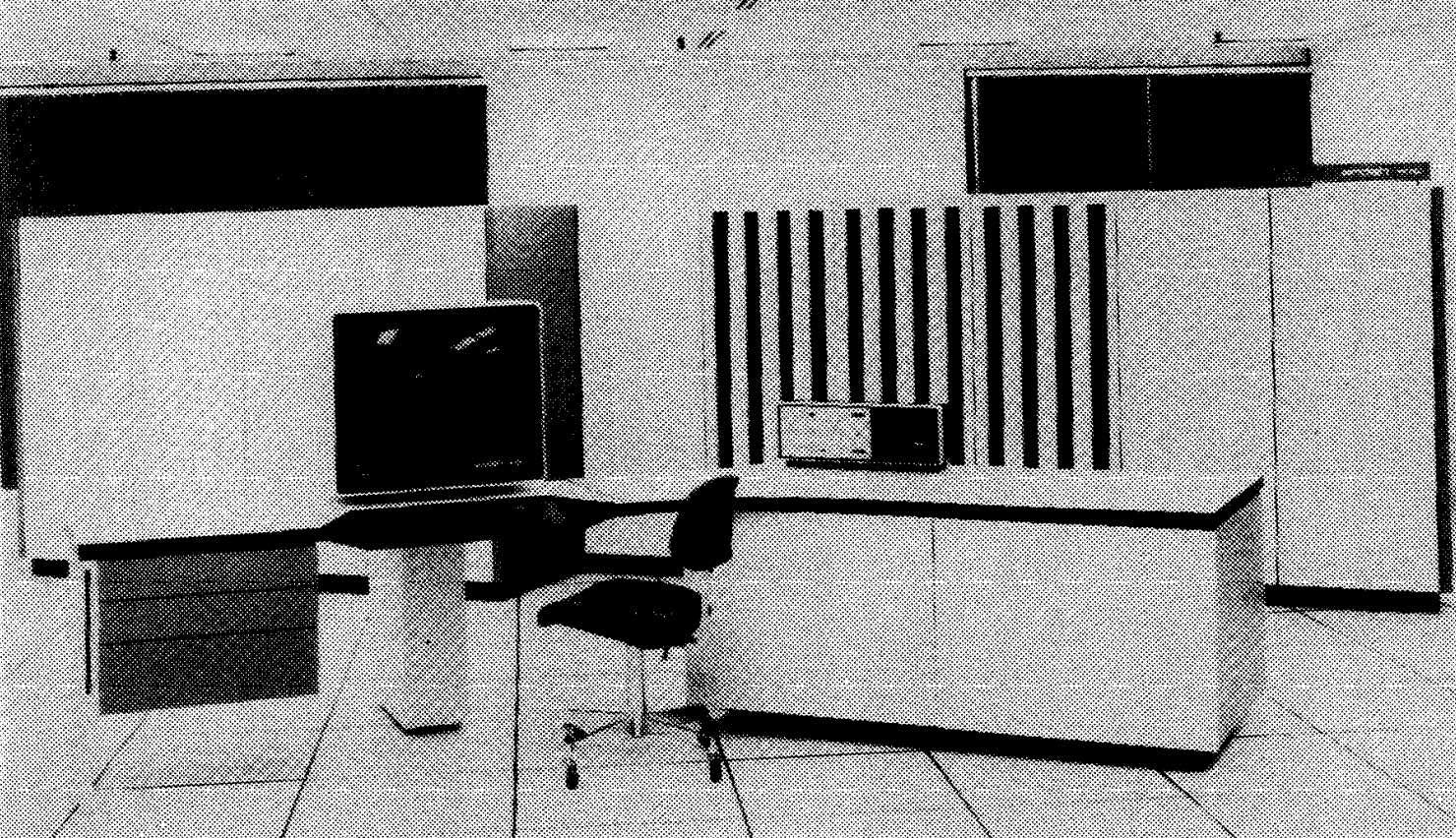

By mid-1983, news coverage reflected the scale of Trilogy's ambitions. InfoWorld, then a leading trade publication for computing, reported on Amdahl's public unveiling of wafer-scale prototypes. The technical details were remarkable: 2.5-inch-square wafers that would function as single integrated processors. Each macrochip would contain circuitry equivalent to roughly 100 conventional chips.

The performance targets were staggering. Trilogy planned to deliver 32 million instructions per second (MIPS)—more than existing IBM systems and competitive with specialized supercomputers. They'd achieve this using just 40 macrochips in a system. Conventional systems of that era required thousands of individual components.

Space requirements would drop dramatically. Power consumption would decrease significantly. Manufacturing costs, once the technology matured, would plummet. Trilogy suggested they could undercut IBM pricing by as much as 30% while delivering superior performance.

Amdahl also made remarkable claims about future applications. The technology, he suggested, could eventually reach personal computers. The idea that desktop machines might achieve supercomputer-class performance seemed absurd in 1983. Yet Amdahl stated it plainly: "It would be a really personal computer!"

This wasn't hyperbole. Amdahl was extrapolating forward using the same systems thinking that had made the System/360 successful. He understood that computing's future would be characterized by exponential performance growth and price compression. Wafer-scale integration could dramatically accelerate both trends.

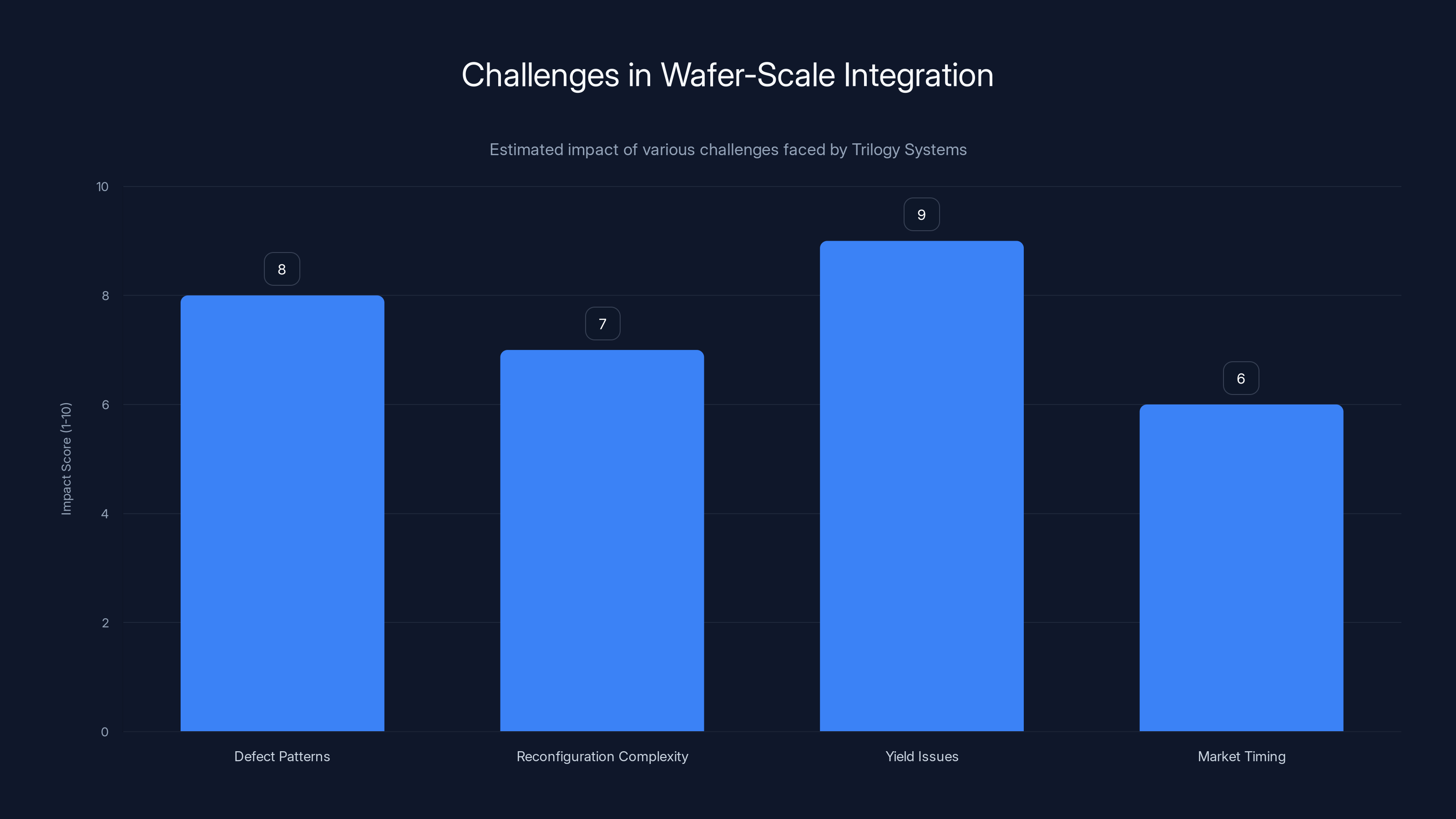

Trilogy Systems faced significant challenges in wafer-scale integration, with manufacturing yield issues being the most impactful. Estimated data.

The Technical Innovation: Designing Defects Away

Wafer-scale integration's central challenge was elegant but brutally difficult: design a system that could work despite knowing it would have defects, without knowing exactly where those defects would be.

Conventional approaches to defect tolerance existed. Aerospace and military systems had used redundancy for decades. But those systems duplicated entire components: if one unit failed, another took over. This approach worked but consumed massive area and power. For semiconductors, where every square millimeter mattered, such crude redundancy was prohibitive.

Amdahl's approach was more sophisticated. The idea was to build in extra circuits throughout the design—spare components distributed across the wafer. These spares wouldn't normally be used. But when manufacturing identified defects during testing, the chip's interconnect layers could be severed or modified to isolate defective regions and activate spare components instead.

This required understanding the chip's topology intimately. Different parts of the wafer might fail in different ways. A defective memory region could be isolated by remapping memory access. A defective processing unit could be bypassed by routing computations elsewhere. A defective interconnect section could be worked around using redundant pathways.

The mathematics of this approach were complex. You needed enough redundancy to handle realistic defect rates. But you couldn't add too much spare circuitry without defeating the density advantages that made wafer-scale integration worthwhile. The optimization problem was intricate: maximize yield while minimizing area overhead.
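The tradeoff can be made concrete with a simple binomial model. This is a sketch under stated assumptions, not Trilogy's actual method: each block works independently with probability `p_good`, and any spare can stand in for any failed block, which is an idealization. The function names and the example numbers (100 required blocks, 90% block yield, 95% target) are hypothetical.

```python
from math import comb


def yield_with_spares(required: int, spares: int, p_good: float) -> float:
    """P(at least `required` of `required + spares` blocks work).

    Assumes independent block failures and fully interchangeable spares.
    """
    total = required + spares
    return sum(
        comb(total, k) * p_good**k * (1 - p_good) ** (total - k)
        for k in range(required, total + 1)
    )


def min_spares_for_target(required: int, p_good: float, target: float,
                          max_spares: int = 1000) -> int:
    """Smallest spare count whose modeled yield reaches `target`."""
    for spares in range(max_spares + 1):
        if yield_with_spares(required, spares, p_good) >= target:
            return spares
    raise ValueError("target yield not reachable within max_spares")


required, p_good, target = 100, 0.9, 0.95
spares = min_spares_for_target(required, p_good, target)
print(f"spares needed: {spares} (area overhead {spares / required:.0%})")
print(f"modeled yield: {yield_with_spares(required, spares, p_good):.3f}")
```

The model makes the tension visible: each extra spare raises yield but also consumes wafer area that could have held working logic. Real designs face a harder version of the problem, because a spare can only replace blocks it is physically wired to reach.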

Trilogy invested heavily in design tools to solve this problem. They developed software to model defect patterns, simulate workarounds, and optimize redundancy placement. They created testing procedures to quickly identify defects and determine which spares to activate. They built manufacturing monitoring systems to track defect locations in real-time.

All of this was novel. No semiconductor company had attempted anything similar at scale. The design tools didn't exist. The testing methodologies were experimental. The manufacturing process would require innovations in photolithography, etching, and measurement.

This is why Trilogy's funding was so massive. They weren't just designing chips. They were developing an entirely new approach to semiconductor manufacturing and design. Every aspect of the pipeline needed innovation.

Amdahl's team made genuine technical progress. They produced prototype wafers. They demonstrated that wafer-scale integration could theoretically work. The early results were promising enough that investors and industry observers took them seriously.

But there was a gap between prototype and production. Demonstrating that something works in controlled conditions is fundamentally different from building a manufacturing process that reliably produces commercial products. That gap proved impossibly wide.

The Reality Collision: Manufacturing Meets Theory

Wafer-scale integration's ultimate failure wasn't due to flawed vision or inadequate funding. It failed because manufacturing reality proved more complex than theory predicted.

First, defect patterns were unpredictable in ways that made redundancy planning difficult. Manufacturing defects don't distribute randomly. They cluster due to contamination events, thermal gradients, and photolithography imperfections. A single dust particle during fabrication could create a defect zone affecting multiple circuits. One mask misalignment could ruin an entire quadrant of the wafer. This meant you couldn't pre-design a fixed redundancy pattern that would handle the full range of possible defect scenarios.

Second, testing became enormously complex. Identifying exactly which circuits on a wafer had failed, without damaging intact circuits, required sophisticated probe technologies. Producing a single defect map could take hours per wafer. With hundreds of wafers in production and the need to reconfigure each one individually, testing quickly became the bottleneck.

Third, yield remained disappointingly low. Even with redundancy and workarounds, many wafers couldn't meet performance specifications. Defects in critical pathways sometimes couldn't be effectively routed around. The spares designed into the system weren't always in positions where they could compensate for actual defect locations. Manufacturing improvements that seemed promising in pilot runs failed to scale.
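The first and third problems interact, and a toy example shows how. The sketch below assumes a hypothetical wiring constraint: the wafer is divided into square regions, and a spare can only replace a failed block inside its own region. The coordinates, region size, and spare count are made up for illustration.

```python
from collections import Counter


def is_repairable(defects, region_size: int, spares_per_region: int) -> bool:
    """Return True if no region has more defective blocks than local spares.

    `defects` is an iterable of (row, col) block coordinates. Regions are
    square tiles of `region_size` x `region_size` blocks, and spares are
    assumed usable only within their own region.
    """
    per_region = Counter(
        (row // region_size, col // region_size) for row, col in defects
    )
    return all(count <= spares_per_region for count in per_region.values())


scattered = [(0, 0), (0, 5), (5, 0), (5, 5)]  # one defect in each of four regions
clustered = [(0, 0), (0, 1), (1, 0), (1, 1)]  # same count, all in one region

print(is_repairable(scattered, region_size=4, spares_per_region=2))  # True
print(is_repairable(clustered, region_size=4, spares_per_region=2))  # False
```

Both wafers have four defects and the same total number of spares, but only the scattered case can be repaired. A redundancy budget sized for the average defect density fails when the defects land together.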

Fourth, time-to-market became a killer. Developing the manufacturing process, design tools, and testing procedures took much longer than anticipated. Semiconductor technology advanced faster than Trilogy could innovate. By the mid-1980s, conventional chip manufacturing had improved so much that the performance advantage of wafer-scale integration narrowed significantly.

Finally, market conditions shifted. The personal computer market was booming, but not for mainframes. IBM faced competition from multiple directions. The mainframe market, Trilogy's target, was contracting as computing moved toward distributed systems. The massive price cuts Trilogy hoped to achieve never materialized because manufacturing costs remained stubbornly high.

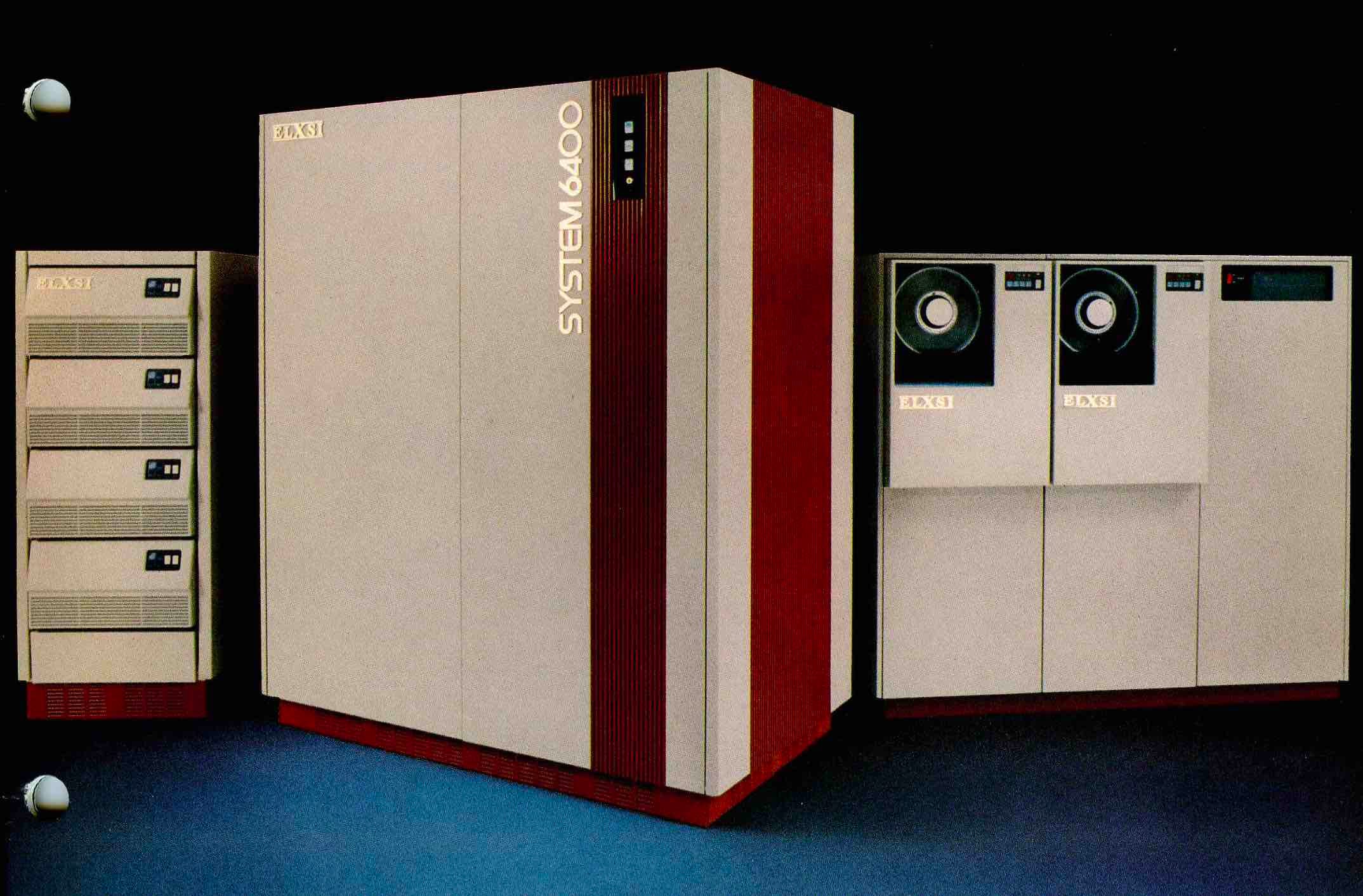

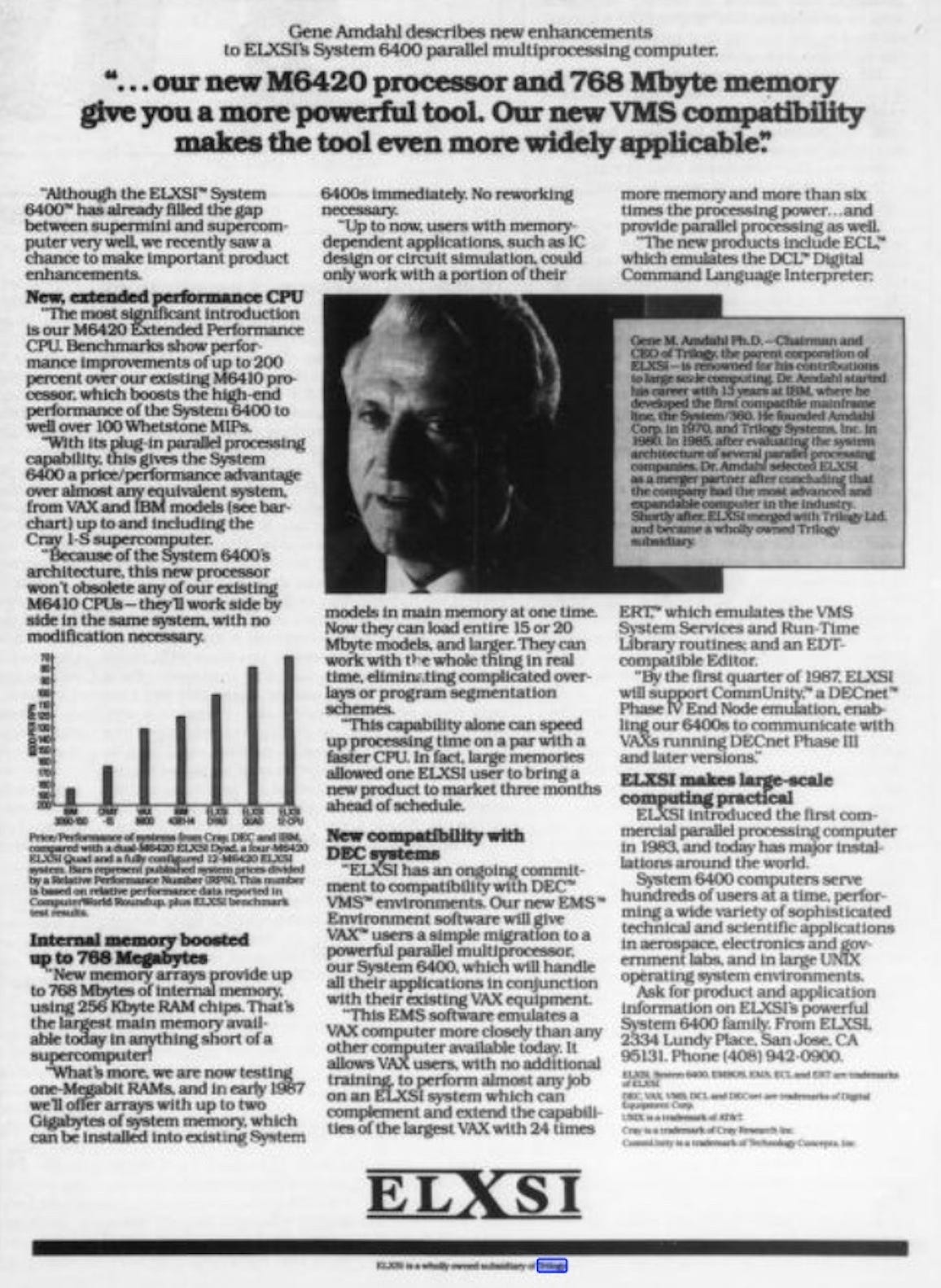

By 1985, Trilogy's situation had become untenable. The company had burned through enormous capital without delivering commercial products. Amdahl's reputation couldn't overcome engineering reality. The decision was made to seek merger or acquisition. Trilogy combined with Elxsi, a smaller computer manufacturer, in a restructuring that effectively ended Trilogy as an independent company.

The wafer-scale integration vision faded from the mainstream for decades. It seemed like a noble failure, a bridge too far, a bet that hadn't paid off.

In the 1980s, semiconductor manufacturing saw an estimated 10-30% of chips as defective. Amdahl's approach aimed to mitigate these defects by treating the entire wafer as a single unit. Estimated data.

Why It Failed (And What That Teaches Us)

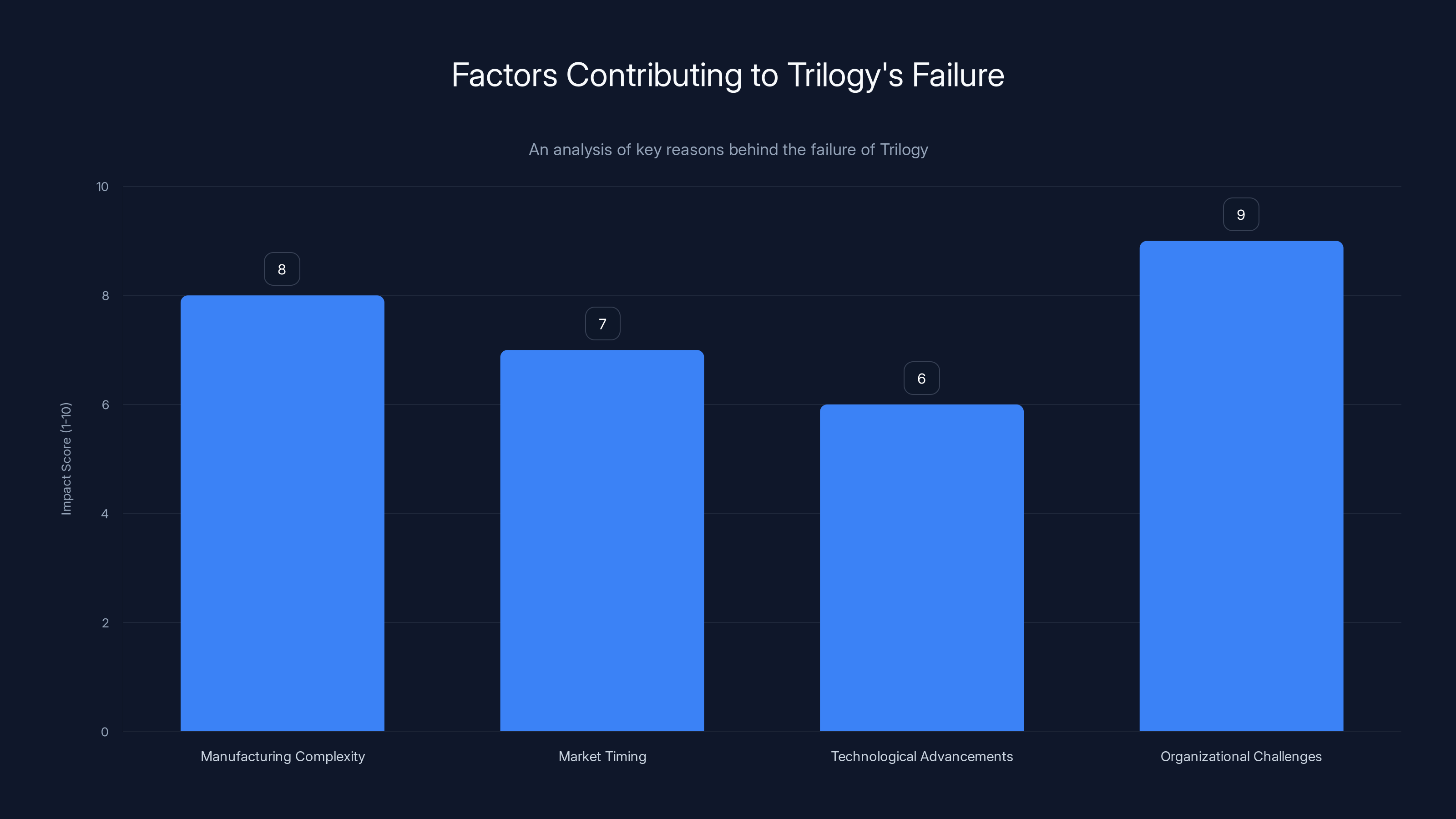

Engineering history is littered with brilliant ideas that failed for mundane reasons. Trilogy's story is no exception.

The core problem was that Amdahl treated wafer-scale integration as primarily a design problem, when it was actually a manufacturing problem. Design elegance couldn't overcome manufacturing complexity. Intellectual brilliance couldn't speed up process development. A legendary reputation couldn't change the fundamental physics of silicon fabrication.

Wafer-scale integration required not just one innovation but dozens of coordinated innovations across design tools, manufacturing processes, testing equipment, and system architecture. Achieving breakthrough improvements in all these areas simultaneously, at production scale, proved essentially impossible given 1980s technology.

The timing was also brutal. Amdahl launched Trilogy during a period of rapid semiconductor advancement. The advantages he envisioned became less dramatic as conventional chip manufacturing improved. Better design tools and lithography processes made conventional approaches more powerful. By the time Trilogy could have potentially achieved manufacturing success, the relative advantage of wafer-scale integration had diminished.

Market timing was equally problematic. Trilogy targeted the mainframe market, but personal computers and workstations were eating that market's lunch. The massive performance advantages Trilogy promised mattered less in a world moving toward distributed computing. A technology that delivered supercomputer performance at mainframe prices seemed brilliant in 1983. By 1985, the market wanted different things entirely.

Organizationally, Trilogy faced challenges managing the scale and complexity of simultaneous innovation across manufacturing, design, and systems. Startups are optimized for focused innovation in narrow domains. Trilogy tried to innovate across the entire semiconductor industry. That's extraordinarily ambitious and incredibly difficult.

The Conceptual Victory in Commercial Defeat

Yet here's the remarkable part: Trilogy failed commercially, but its core ideas proved prophetic.

Wafer-scale integration didn't disappear. It survived in specialized domains. Military and aerospace systems continued using WSI concepts for radiation-hardened processors. These applications valued reliability over cost and could justify the manufacturing complexity. Gradually, the technology improved. By the 1990s and 2000s, WSI chips appeared in various specialized applications.

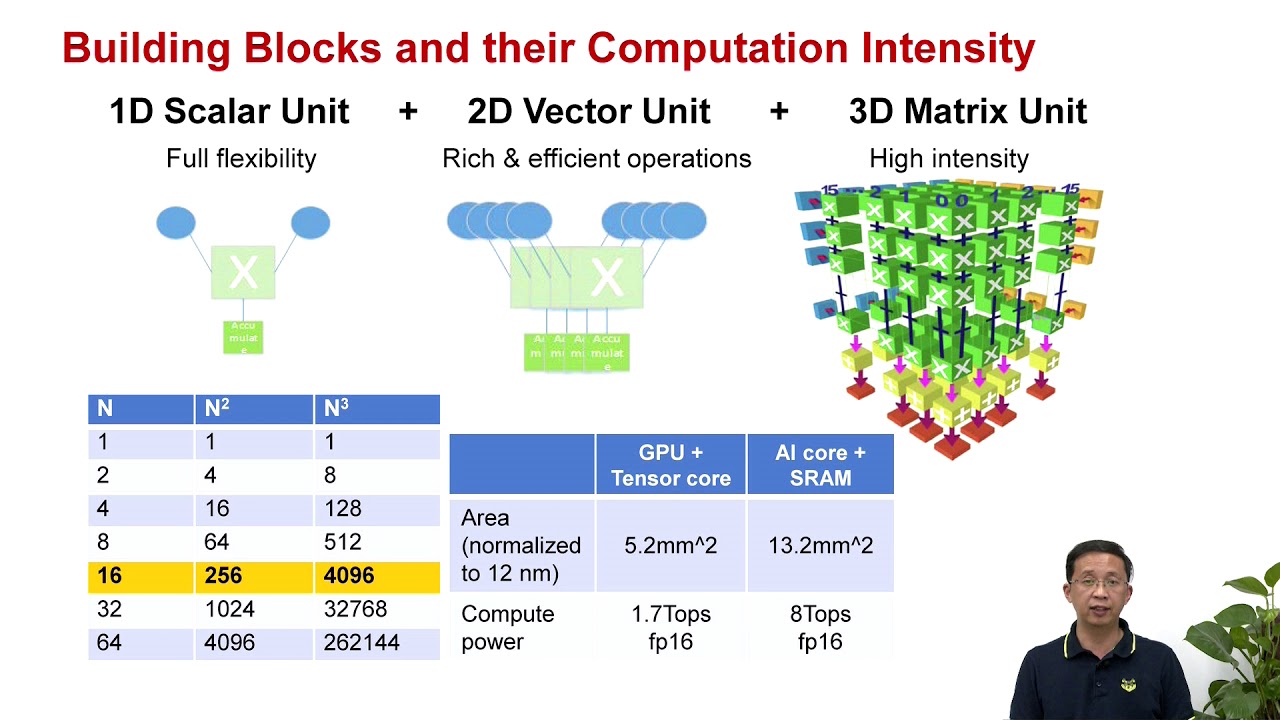

But the real vindication came with the rise of application-specific processors and modern AI accelerators. Companies designing chips for machine learning, cryptography, and other specialized tasks embraced Amdahl's core insight: using the wafer as a unified computational unit rather than as a container for hundreds of separate chips.

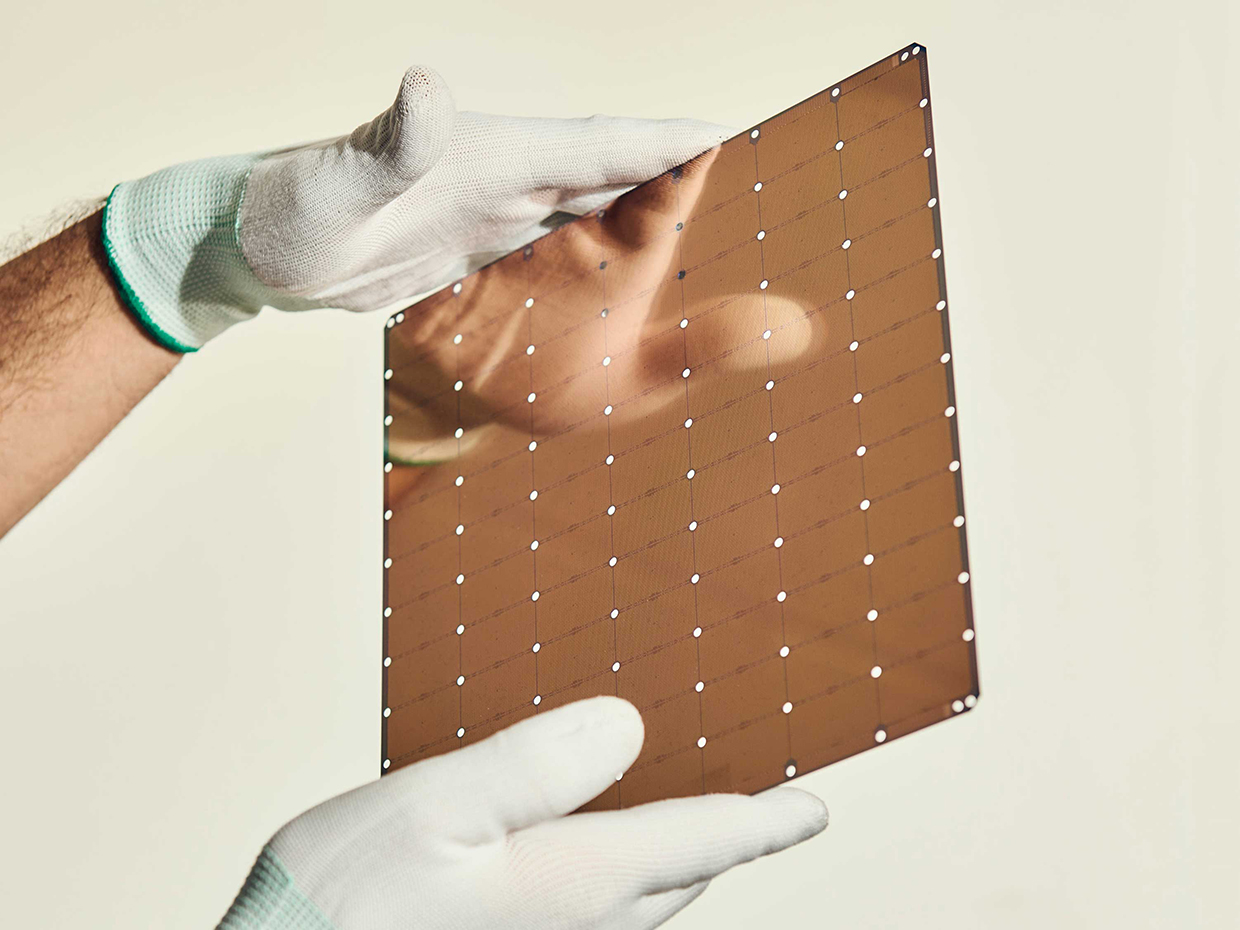

Cerebras explicitly adopted wafer-scale architecture for its AI processors. The company built chips using entire wafers as single computational units, exactly as Amdahl envisioned. The technology that Trilogy couldn't commercialize in 1983 became economically viable in the 2020s because:

- Manufacturing processes improved dramatically, making defect rates lower and more predictable

- Design tools advanced sufficiently to handle wafer-scale complexity

- AI workloads are tolerant of certain types of defects and benefit enormously from reduced latency

- Market conditions finally favored the performance advantages Amdahl promised

Amdahl's technical insight wasn't wrong. His understanding of semiconductor architecture wasn't flawed. He was simply too early. The ecosystem—manufacturing capability, design tool sophistication, market demand—wasn't ready.

This is perhaps the most important lesson from Trilogy's story: being right about the future and being able to commercialize that future are entirely different challenges. The most important innovations often fail when first attempted because the world isn't ready to support them.

Trilogy's failure was primarily due to organizational challenges and manufacturing complexity, with market timing and technological advancements also playing significant roles. Estimated data.

The Evolution of Chip Design Philosophy

While wafer-scale integration as a specific manufacturing approach faded, the philosophical principles Amdahl championed became central to modern chip design.

The idea of treating a die as a unified computational unit rather than as a collection of separate components is now standard. Modern processors integrate components that would have been separate in earlier eras. Cache hierarchies, memory controllers, I/O subsystems, and multiple processing cores are all part of the same unified die.

Redundancy by design is now ubiquitous. High-reliability systems include spare components. AI accelerators incorporate redundancy to handle manufacturing variations. The fundamental principle Amdahl advocated—designing for imperfection rather than demanding perfection—is now industry standard.

Defect tolerance has become increasingly important as chip sizes grow. Modern semiconductor manufacturing creates dies measured in square centimeters with billions of transistors. Defects are inevitable. Rather than treating them as failures, modern designers use Amdahl's approach: build in redundancy and design systems that can work despite imperfections.

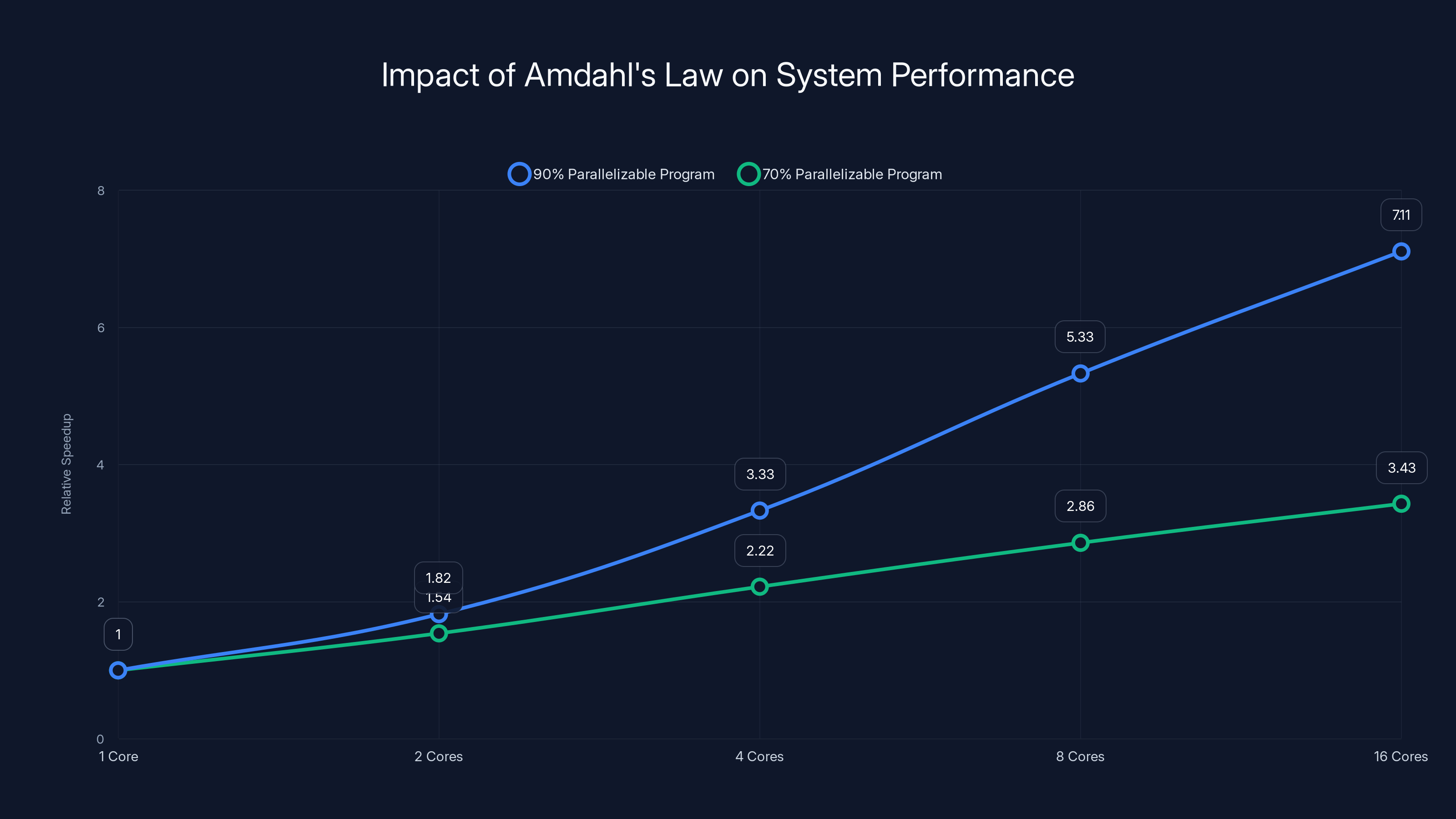

The mathematics of system-level optimization that Amdahl pioneered (Amdahl's Law is still a staple of computer architecture courses) directly influenced how modern processors are designed. Engineers constantly balance tradeoffs between different components, using mathematical models to optimize overall system performance.

Personal Computing Prediction: Decades Ahead

Perhaps the most prescient part of Amdahl's 1983 vision was his suggestion that wafer-scale integration could eventually reach personal computers, delivering supercomputer performance at consumer prices.

In 1983, this seemed absurd. Personal computers had roughly 1 MIPS of processing power. Supercomputers delivered 30-100 MIPS. The gap seemed unbridgeable. Why would personal computer users need supercomputer performance?

Amdahl understood something crucial that others missed: computing power follows exponential growth curves. Performance doubles every 18-24 months. Price drops follow similar curves. If you extrapolate these trends over 20-30 years, seemingly impossible gaps close. What's exotic becomes ordinary. What's expensive becomes cheap.
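The arithmetic behind that extrapolation is easy to check. The sketch below simply compounds the doubling periods quoted above over 20 and 30 years. It is an idealized calculation, not a claim about any specific product line, and `growth_factor` is a hypothetical helper name.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Performance multiple after `years` if performance doubles every
    `doubling_months` months (idealized steady exponential growth)."""
    return 2 ** (years * 12 / doubling_months)


for years in (20, 30):
    for doubling_months in (18, 24):
        factor = growth_factor(years, doubling_months)
        print(f"{years} years, doubling every {doubling_months} months: "
              f"{factor:,.0f}x")
```

Even the most conservative case here, a 24-month doubling period over 20 years, gives roughly a 1,000x gain. That is far more than the 30x to 100x gap between a 1 MIPS personal computer and a 1983 supercomputer.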

He was correct. By the 2010s, a smartphone delivered more computational power than a 1980s supercomputer. By the 2020s, a laptop could handle workloads that would have required a room-sized mainframe in 1983. This wasn't just due to manufacturing improvements or more transistors. It was partly due to innovations in architecture—exactly the kind of thinking Amdahl championed.

Wafer-scale integration's specific implementation didn't become the path for personal computers. Instead, Moore's Law (more transistors fitting on the same area) achieved many of the same results. But the underlying point Amdahl was making, that radical approaches to chip organization could enable supercomputer performance in consumer devices, proved prescient.

Amdahl's Law shows diminishing returns in speedup as more cores are added, especially when the serial portion of a program is significant. Estimated data illustrates this effect.

The Legacy: How Trilogy Influenced Modern Computing

Trilogy Systems may have failed as a company, but its influence on computing is substantial and often unacknowledged.

First, it demonstrated that established semiconductor approaches could be fundamentally rethought. Before Trilogy, the industry accepted certain constraints as immutable. Trilogy showed that creative engineers could challenge those constraints. This mindset enabled future innovations.

Second, it proved that designing for defect tolerance was technically feasible, even if not economically viable in 1983. Subsequent researchers improved on Trilogy's approach. By the 2000s, defect tolerance and built-in redundancy were standard in specialized applications.

Third, it established that market and manufacturing readiness matter as much as technical capability. Brilliant design means nothing if you can't manufacture reliably or if the market doesn't need what you're building. This lesson has guided subsequent startups toward more realistic timelines and market validation.

Fourth, it attracted top talent to think about semiconductor design differently. Engineers who worked at Trilogy went on to found or lead efforts at Intel, NVIDIA, and various specialized chip companies. They carried forward Amdahl's insights in more targeted ways.

Finally, it created a roadmap that later companies could learn from. When Cerebras decided to build wafer-scale AI processors, they understood Trilogy's lessons. They focused on a specific market (AI) where the benefits justified the complexity. They waited until manufacturing and design tools had advanced sufficiently. They built partnerships rather than trying to innovate across all dimensions simultaneously.

Modern Wafer-Scale Integration: AI's New Frontier

The resurgence of wafer-scale integration in the 2020s has been dramatic, and it vindicates Amdahl's fundamental insight.

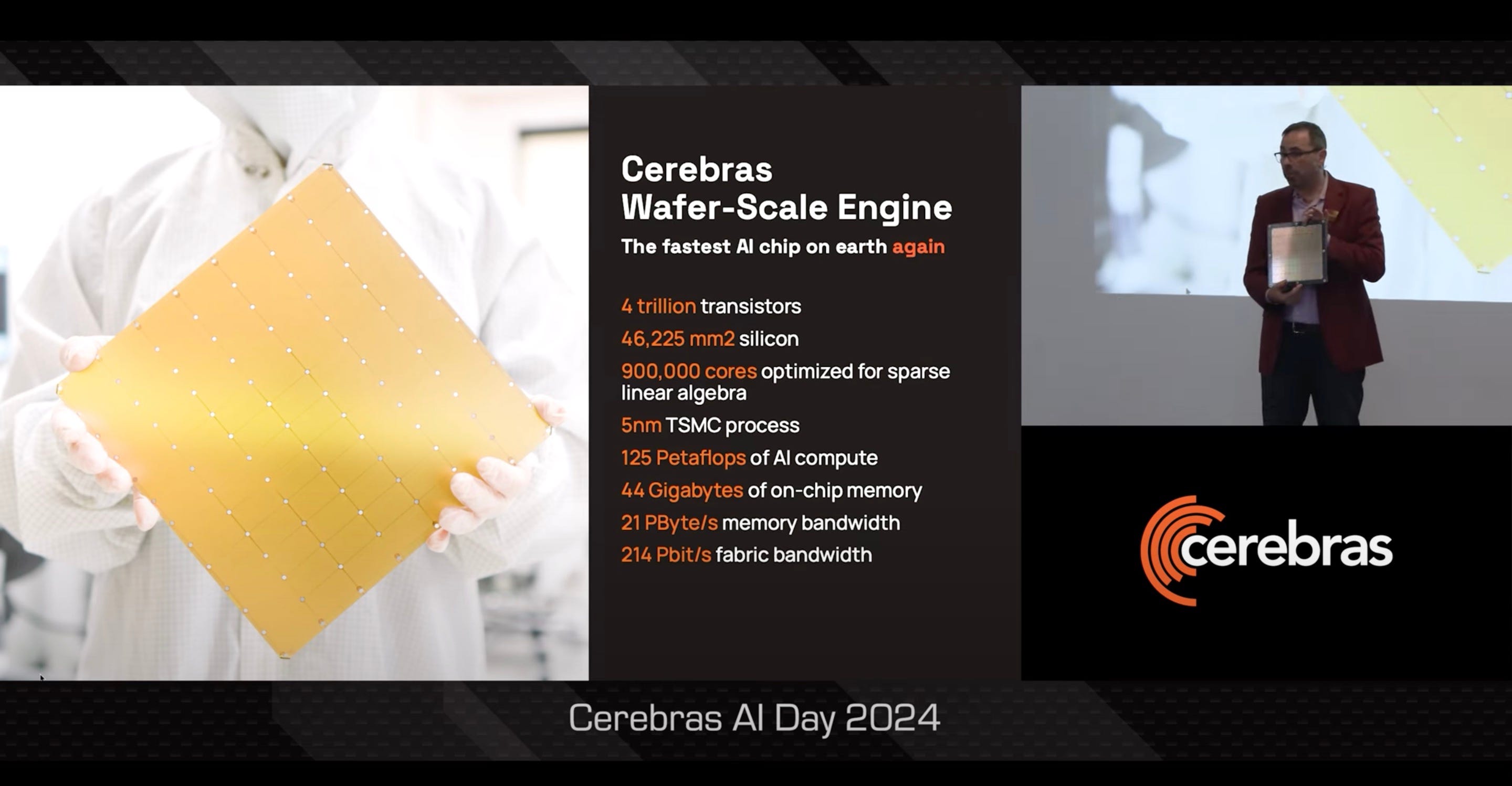

Cerebras launched the Wafer Scale Engine, a processor using entire silicon wafers as single computational units. This is almost exactly what Amdahl proposed in 1983, except it's built with 2020s technology, optimized for AI workloads, and operating in an ecosystem ready for it.

The performance advantages are substantial. The Wafer Scale Engine reportedly delivers 18 petaFLOPS of peak performance in a single chip. Its second generation incorporates 2.6 trillion transistors and approximately 850,000 processing cores, up from 1.2 trillion transistors and roughly 400,000 cores in the first. By treating the wafer as a unified computational unit, Cerebras achieved latencies and throughput that conventional chip designs struggle to match.

Other companies pursued similar approaches. Graphcore built processors with unusually large unified dies. SambaNova designed chips emphasizing interconnectivity and unified performance. Even traditional chip makers like Intel and NVIDIA moved toward larger dies and more unified designs for AI applications.

These companies succeeded where Trilogy failed because:

- They focused on specific markets (AI) with clearly defined needs

- Manufacturing had advanced to the point where wafer-scale viability improved

- Design tools and simulation software were sufficiently sophisticated

- The performance advantages for their specific applications justified the manufacturing complexity

- They had patient capital understanding that the technology required time to mature

Amdahl didn't live to see this vindication. He passed away in 2015 at age 92. But his vision of wafer-scale processors became reality, eventually, with modern technology catching up to his ambition.

Lessons for Innovation and Long-Term Vision

The Trilogy story offers profound lessons about innovation that remain relevant in 2025.

First, being right about the future isn't enough. Amdahl understood computing's trajectory better than almost anyone. But understanding the future and being able to commercialize innovations in the present are different skills. Successful innovation requires both vision and execution, both understanding what's coming and having the resources and market conditions to make it real.

Second, execution complexity scales nonlinearly. Trilogy tried to innovate across multiple domains simultaneously: chip design, manufacturing processes, design tools, system architecture, and market strategy. The complexity of managing all these coordinated innovations proved overwhelming. Future innovators learned to focus more narrowly, dominating specific domains before expanding.

Third, market timing is often more important than technical merit. Wafer-scale integration's technical merits were genuine. But the market didn't need what Trilogy was building when they tried to build it. By the time the market did need wafer-scale processors (for AI), four decades had passed and technology had evolved enough to make the approach viable.

Fourth, building on failed efforts compounds success. Cerebras and other modern companies didn't start from scratch. They studied Trilogy's approach, learned from its failures, and built on its concepts using modern technology. Innovation is usually incremental, building on previous work, not revolutionary.

Fifth, some failures are necessary for progress. Trilogy's expensive failure proved that wafer-scale integration was technically feasible, even if not yet economically viable. This knowledge guided subsequent research. The company failed commercially but succeeded conceptually, providing a roadmap for later success.

The Broader Silicon Industry Transformation

Trilogy's rise and fall coincided with a broader transformation in the semiconductor industry that ultimately vindicated Amdahl's approach to thinking about chip design.

In the 1970s and early 1980s, the semiconductor industry was dominated by a few massive players: Intel, IBM, Motorola, and Texas Instruments. These companies built general-purpose processors that powered everything. Specialization seemed like a relic of expensive aerospace applications.

Trilogy's failure paradoxically contributed to a shift in this paradigm. By the 1990s, the industry began splintering into specialized segments. Graphics processors emerged. Digital signal processors became standard. Networking processors appeared. Application-specific integrated circuits proliferated.

This specialization vindicated Amdahl's core insight: different workloads benefit from different architectural approaches. General-purpose processors are compromises optimizing for many tasks at the expense of peak performance at any single task. Specialized processors, designed specifically for their target workload, can dramatically outperform general-purpose alternatives.

Wafer-scale integration embodies this principle perfectly. It's not a general-purpose manufacturing approach. It's specialized for workloads where reduced latency and maximum throughput matter more than flexibility. This is exactly why it failed in the general-purpose mainframe market but succeeds in AI accelerators and other specialized domains.

Amdahl's Law and Modern System Design

While Trilogy Systems failed, Amdahl's most enduring contribution to computing—Amdahl's Law—became increasingly relevant.

Published in 1967, Amdahl's Law describes the relationship between sequential and parallel portions of programs. If a program is 90% parallelizable and 10% sequential, you can never achieve more than a 10x speedup no matter how many processors you add. The sequential portion becomes the bottleneck.
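The arithmetic behind that 10x ceiling can be sketched in a few lines of Python. This is a minimal illustration of the standard formula, speedup = 1 / ((1 - p) + p / n), where p is the parallelizable fraction and n is the processor count. The function names and the sample processor counts are assumptions chosen for this example, not anything from Amdahl's paper.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Speedup predicted by Amdahl's Law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


def speedup_ceiling(parallel_fraction: float) -> float:
    """Limit of the speedup as the processor count grows without bound."""
    serial_fraction = 1.0 - parallel_fraction
    if serial_fraction == 0.0:
        return float("inf")  # a fully parallel program has no ceiling
    return 1.0 / serial_fraction


if __name__ == "__main__":
    p = 0.90  # the 90% parallel / 10% sequential program from the text
    for n in (1, 2, 8, 64, 1024):  # illustrative processor counts
        print(f"{n:>5} processors -> {amdahl_speedup(p, n):.2f}x")
    print(f"ceiling -> {speedup_ceiling(p):.2f}x")
```

Even at 1,024 processors the 90% parallel program reaches only a little over 9.9x, and each further doubling of hardware buys less than the one before.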

This insight seems simple, but it's profound. It means that parallel processing improvements have fundamental limits. No amount of hardware can overcome serial bottlenecks. Any system optimization must address the slowest component, not just add more parallelism.

Amdahl's Law guided decades of computer architecture research. It explains why adding more cores provides diminishing performance returns. It explains why clock speed improvements matter for serial workloads. It guides tradeoffs between cache size, memory bandwidth, and processor count.

In the modern era of multi-core processors and distributed computing, Amdahl's Law remains essential. Cloud architecture, microservices design, and parallel algorithm development all rely on understanding Amdahl's insights. Modern companies optimizing for scale constantly confront Amdahl's Law: scaling is easy until you hit the serial bottleneck.

AI systems illustrate this well. Training benefits enormously from parallelization, but inference, where a model answers one request at a time and often generates its output token by token, has strong serial components. This is why AI companies obsess over latency reduction: once the parallel work is spread across enough hardware, the serial steps set the floor on response time.

Wafer-scale integration directly addresses Amdahl's concerns. By reducing latency through unified chip architecture, wafer-scale designs can reduce serial bottlenecks. This enables better parallelization efficiency. The connection between Amdahl's 1967 theoretical insight and 2020s practical processor design is direct and profound.

The Competitive Landscape of Modern AI Chips

Wafer-scale integration's modern resurgence exists within an intensely competitive AI chip market.

NVIDIA dominates this market with its H100 and H200 GPUs. These are powerful processors built the conventional way: each GPU is a reticle-sized die cut from a wafer, and large systems link many GPUs through high-speed interconnects such as NVLink rather than keeping everything on one piece of silicon. NVIDIA's approach favors flexibility and manufacturing yield over latency optimization.

Cerebras pursues the opposite strategy: maximum unified integration, prioritizing latency and throughput. For workloads where latency matters (inference, real-time processing), Cerebras's approach offers advantages. For workloads where flexibility matters (research, experimentation), NVIDIA's modularity wins.

Graphcore took a middle path: large dies packed with on-chip memory, but not full wafer-scale. SambaNova similarly emphasized architecture optimization for specific workloads.

Traditional players adapted. Intel's Gaudi processors moved toward larger dies. IBM explored specialized approaches. Even AMD, building on Lisa Su's chiplet strategy, eventually incorporated larger unified dies for specific applications.

The landscape shows Amdahl's influence: no single approach dominates. Instead, different architectures optimize for different workloads. Specialized design beats general-purpose design for specific tasks. System-level thinking matters more than individual component optimization.

This pluralism would have pleased Amdahl. He was never an absolutist. His argument was always that good architecture requires understanding your specific problem and optimizing for it. Wafer-scale integration is optimal for certain problems, not all problems. The diversity of modern AI chip approaches reflects this nuance.

FAQ

What is wafer-scale integration?

Wafer-scale integration is a semiconductor manufacturing approach that treats an entire silicon wafer as a single processor rather than cutting it into individual chips. The design incorporates built-in redundancy that allows the system to work around manufacturing defects by automatically routing around damaged regions. This approach reduces connections between components, lowers latency, and enables better performance optimization compared to conventional designs using multiple separate chips.
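A rough sense of why redundancy matters comes from a simple yield calculation. The sketch below uses the textbook Poisson yield model for a single tile and a binomial model for the whole wafer. It assumes defects land on tiles independently, which real wafers do not guarantee because defects tend to cluster. The defect density, tile area, and tile counts are hypothetical values chosen only for illustration. They are not Trilogy or Cerebras figures.

```python
import math


def tile_yield(defect_density_per_cm2: float, tile_area_cm2: float) -> float:
    """Poisson yield model: probability that one tile has zero defects."""
    return math.exp(-defect_density_per_cm2 * tile_area_cm2)


def wafer_yield(tiles: int, required: int, good_tile_prob: float) -> float:
    """Probability that at least `required` of `tiles` tiles are defect-free.

    Assumes tiles fail independently (binomial model), ignoring clustering.
    """
    if not 0 <= required <= tiles:
        raise ValueError("required must be between 0 and tiles")
    bad_tile_prob = 1.0 - good_tile_prob
    return sum(
        math.comb(tiles, k) * good_tile_prob**k * bad_tile_prob ** (tiles - k)
        for k in range(required, tiles + 1)
    )


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    y = tile_yield(defect_density_per_cm2=0.5, tile_area_cm2=0.2)
    print(f"single tile yield:       {y:.3f}")
    print(f"no spares (100 of 100):  {wafer_yield(100, 100, y):.6f}")
    print(f"10 spares (100 of 110):  {wafer_yield(110, 100, y):.6f}")
```

Under these assumptions a wafer that needs all 100 tiles to be perfect almost never works, while adding ten spare tiles raises the odds by several orders of magnitude. That gap is the case for designing in redundancy rather than demanding a flawless wafer.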

Why did Gene Amdahl believe wafer-scale integration was important?

Amdahl understood that computing's future would be characterized by exponential performance growth, and architectural efficiency would be crucial. By treating wafers as unified computational units rather than collections of separate chips, wafer-scale integration could dramatically reduce latency, improve throughput, and compress more capability into smaller spaces. Amdahl believed this approach would eventually enable supercomputer-class performance in personal computers, a prediction that required 40+ years to become practical but ultimately proved correct.

How did Trilogy Systems approach the manufacturing challenges of wafer-scale integration?

Trilogy developed sophisticated design tools to optimize redundancy placement across wafers. They created testing procedures to identify defects in real-time and determine which spare circuits to activate. They invested in manufacturing monitoring systems to track defect patterns and adjust processes accordingly. However, they underestimated how difficult it would be to achieve production-scale yields, as defect patterns were more unpredictable and clustered than theory suggested, and the complexity of individual wafer reconfiguration became a significant bottleneck.

Why did Trilogy Systems fail commercially if the technology was sound?

Trilogy faced multiple challenges simultaneously. Manufacturing yield remained lower than required for economic viability. Testing and reconfiguration of individual wafers was time-consuming and couldn't be automated effectively. The mainframe market, Trilogy's target, was contracting as computing shifted toward distributed systems. Most importantly, timing was poor: conventional chip manufacturing improved faster than Trilogy could innovate, narrowing the performance advantage of wafer-scale integration. The company ran out of capital before achieving commercial success, forcing a merger with Elxsi in 1985.

How do modern companies like Cerebras use Amdahl's wafer-scale concepts?

Cerebras built the Wafer Scale Engine, a processor using entire silicon wafers as single computational units, exactly as Amdahl proposed in 1983. Modern companies succeed where Trilogy failed because technology has advanced (better manufacturing processes, sophisticated design tools), markets are ready (AI demands low latency and high throughput), and companies focus narrowly (optimizing for specific workloads rather than trying to compete across all computing domains). Manufacturing defect rates are lower and more predictable, making wafer-scale integration economically viable for specialized applications.

What is Amdahl's Law and why does it matter today?

Amdahl's Law, published in 1967, describes how serial portions of programs limit overall speedup from parallelization. If 10% of a program runs sequentially, you can never achieve more than a 10x speedup regardless of processor count, because the sequential portion becomes the bottleneck. This insight remains central to modern computing: cloud architecture, microservices design, and parallel algorithm development all rely on understanding Amdahl's Law. Wafer-scale integration directly addresses Amdahl's concerns by reducing latency, enabling better parallelization efficiency.

How does wafer-scale integration compare to conventional multi-chip processor designs?

Wafer-scale designs prioritize latency reduction and unified throughput optimization, treating the entire wafer as one computational unit. Conventional multi-chip designs (like NVIDIA's GPU approach) use separate dies connected through high-speed interconnects, offering flexibility and better manufacturing yield. Neither approach is universally superior. Wafer-scale excels for latency-sensitive workloads like inference and real-time processing. Multi-chip designs excel where flexibility and iterative improvement matter. Modern AI chip markets show both approaches thriving in different niches, validating Amdahl's original insight that good architecture requires understanding your specific problem.

What lessons from Trilogy's failure continue to guide innovation?

Several key insights emerged. First, being right about the future isn't enough: execution and market timing matter equally. Second, managing simultaneous innovation across multiple domains is far harder than focusing narrowly. Third, understanding what markets actually need today, not what they theoretically might need tomorrow, is crucial. Fourth, patient capital and realistic timelines are essential for fundamental innovations. Finally, failure can be productive: Trilogy failed commercially but proved wafer-scale integration was technically feasible, providing a roadmap for later success when technology and markets had advanced sufficiently. The most successful modern companies learned from Trilogy's mistakes rather than repeating them.

Conclusion: Vision Meeting Reality

Gene Amdahl stands as one of computing's most visionary figures. He shaped enterprise computing through the System/360, defined the theoretical limits of parallel speedup through Amdahl's Law, and pursued architectural innovations decades ahead of their time with Trilogy Systems.

Trilogy failed, but not because Amdahl's vision was flawed. It failed because the ecosystem wasn't ready. Manufacturing capabilities were insufficient. Design tools were inadequate. Market conditions didn't align. These obstacles proved insurmountable in the early 1980s.

Four decades later, the vision became practical. Cerebras and other companies realized exactly what Amdahl proposed: wafer-scale processors delivering supercomputer performance with minimal latency. The path wasn't direct. Other technologies (multi-core processors, GPU acceleration, distributed computing) provided alternative routes. But the ultimate success of wafer-scale integration vindicated Amdahl's fundamental insight.

This story carries lessons beyond computing. It shows that innovation timelines are unpredictable. Brilliant ideas sometimes require decades to become practical. Commercial success and conceptual correctness are different measures. Most importantly, building on failed efforts compounds eventual success more effectively than starting fresh.

Amdahl's legacy isn't primarily about Trilogy Systems, though that venture showed remarkable ambition. His legacy is about thinking systematically about architectural problems, understanding that optimization requires considering entire systems rather than individual components, and being willing to challenge industry assumptions when the mathematics suggests they're wrong.

Those principles guide computing today as profoundly as they did in the 1960s and 1980s. Whether designing AI accelerators, optimizing cloud infrastructure, or architecting distributed systems, engineers grapple with questions Amdahl posed: How do you optimize for your specific workload? Where are the bottlenecks limiting performance? How can you redesign fundamentally to overcome those bottlenecks?

Wafer-scale integration, which seemed like a brilliant failure in 1985, became the future. Amdahl was right—just too early. That's actually a remarkable position to be in. It means your thinking was so far ahead that the world had to catch up to make it viable. In the end, that's a victory that transcends any single commercial venture.

Key Takeaways

- Gene Amdahl attempted wafer-scale integration in 1983, treating entire silicon wafers as single processors with built-in redundancy for defect tolerance

- Trilogy Systems failed commercially by 1985 due to manufacturing complexity, yield challenges, and poor market timing for mainframes

- Amdahl's core vision proved conceptually correct: wafer-scale integration became practical in the 2020s for AI accelerators like Cerebras's Wafer Scale Engine

- Amdahl's Law, published in 1967, remains central to modern computing by showing that serial bottlenecks limit parallel processing improvements

- Modern chip design validates Amdahl's principle: optimize entire systems for specific workloads rather than general-purpose compromise
