AI News Apps Transform Podcasts Into Instant News Clips [2025]

There's a problem nobody talks about: podcasts are where news actually happens now.

Think about it. When a tech CEO wants to make an announcement, they don't call a press conference or leak to Reuters. They go on their favorite podcast. When a politician wants to float an idea, they sit down with a friendly host. When investors want to gauge sentiment, they listen to what the big names are saying on Spotify and Apple Podcasts.

But here's the catch—nobody has time to listen to four-hour podcasts just to catch the 45 seconds that actually matter.

That's exactly the problem Particle, a news app built by former Twitter engineers, is solving with a new feature called Podcast Clips. The app now automatically finds the most relevant moments from thousands of podcasts, pulls them into short, playable clips, and surfaces them directly alongside related news stories in your feed. No more hunting through episode transcripts. No more guessing where the important part is.

You read a story about, say, Sam Altman's latest comments on AI regulation. Below it, you see clips from three different podcasts where he discussed the exact same topic. Play the clip. Read the transcript with highlighted words. Keep scrolling. Done.

This is a fundamental shift in how we consume news, and it reveals something much bigger happening in media: the center of gravity is moving from traditional newsrooms to podcast mics. If you're not paying attention to what's being said on podcasts, you're missing half the story.

Let's dig into how this works, why it matters, and what it means for the future of news consumption.

TL;DR

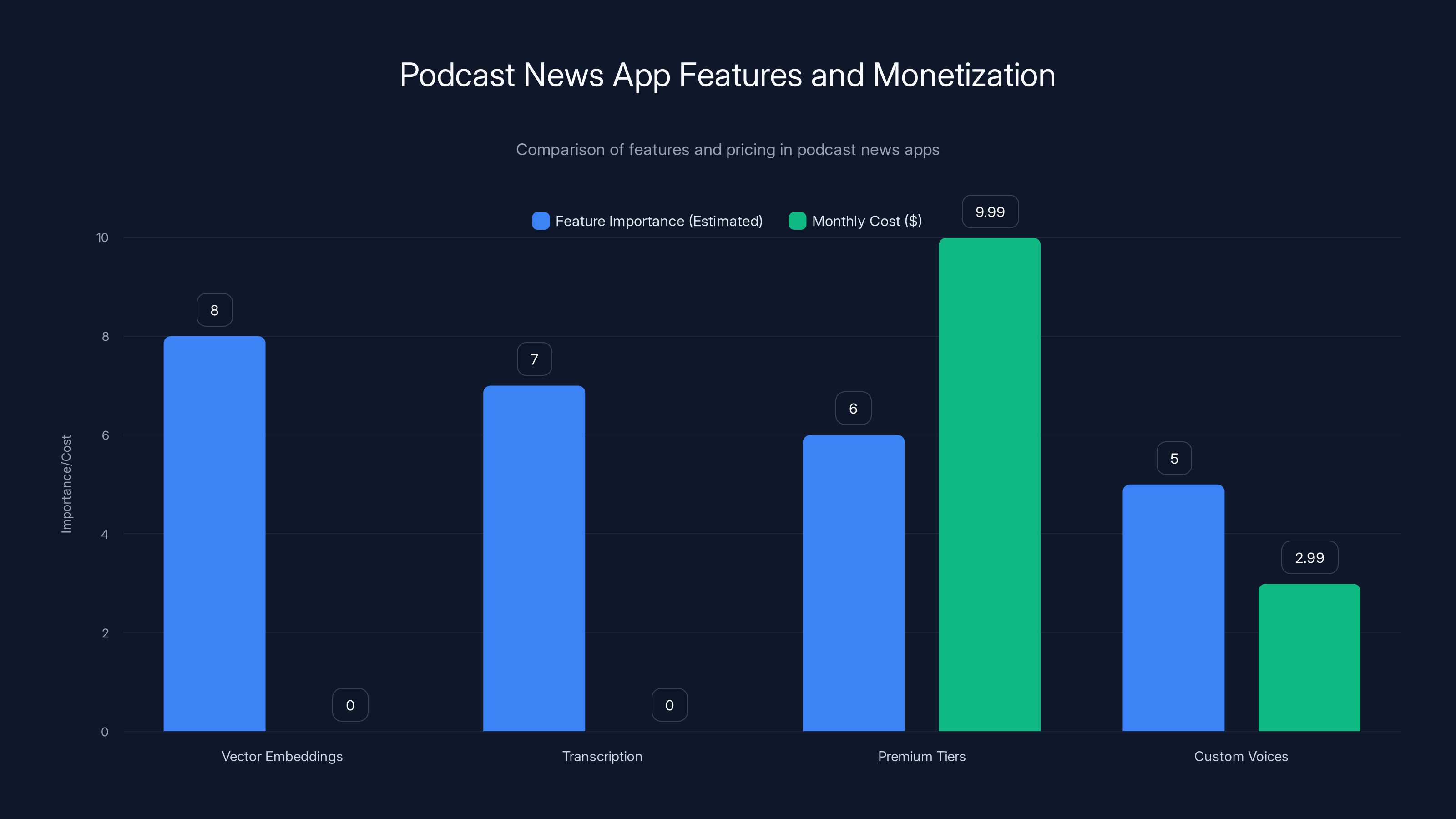

- AI is now mining podcasts for news: Apps like Particle use vector embeddings to match podcast moments with related news stories, creating a seamless feed of short clips

- Podcasts are becoming the primary news source: Tech leaders, politicians, and influential figures now break news on podcasts first, making traditional media secondary

- Vector embeddings, not generative AI: The technology uses embedding models to understand semantic relationships, not ChatGPT-style generation

- Transcription at scale: Services like ElevenLabs handle the heavy lifting of converting audio to text, which is then analyzed and clipped

- The monetization angle: News apps are adding premium tiers (Particle+ costs $2.99/month) with audio summaries, custom voices, and advanced features

- Bottom line: Podcast clips are becoming essential context for understanding modern news stories

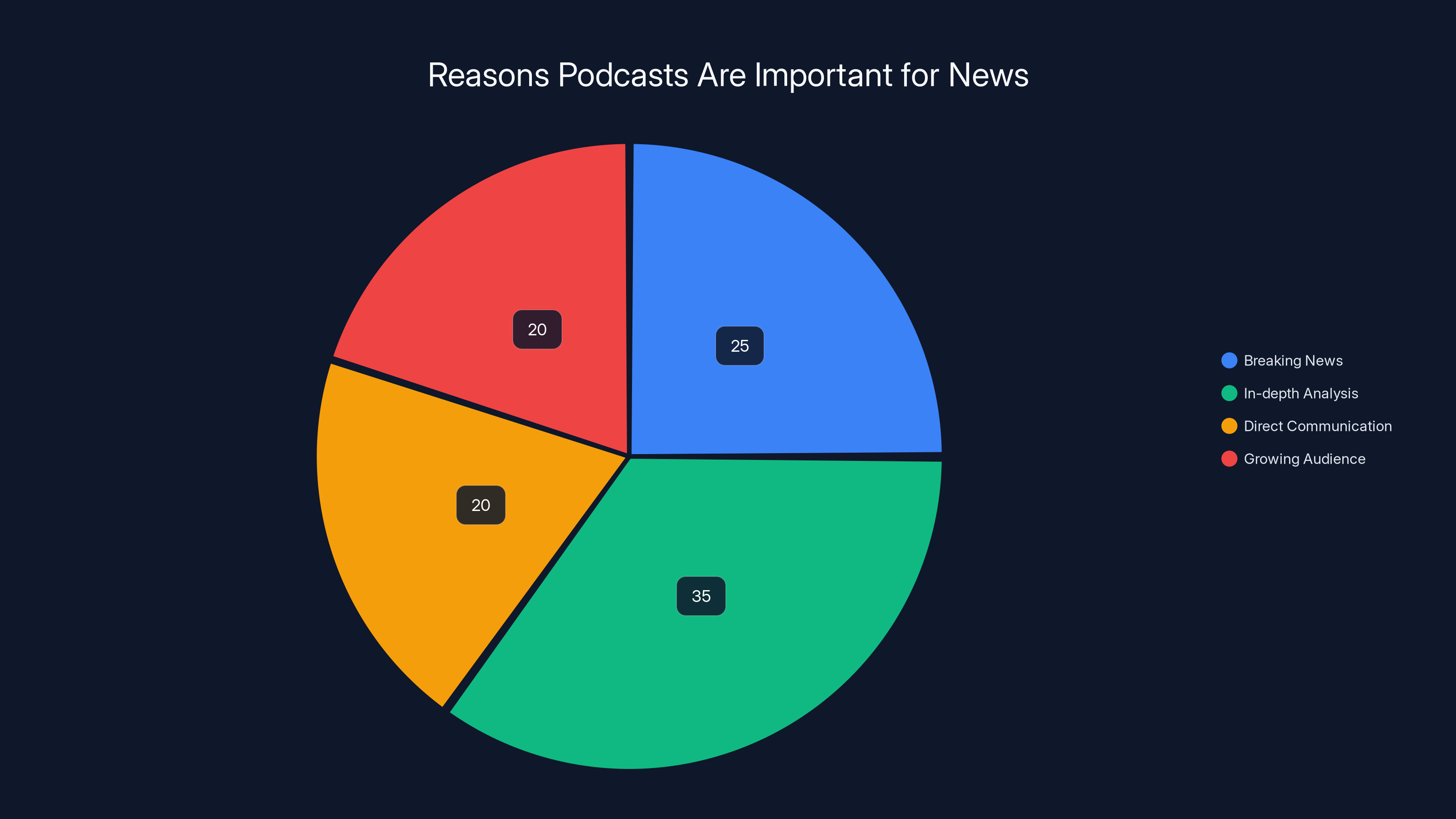

Podcasts are crucial for news due to their ability to break news (25%), provide in-depth analysis (35%), offer direct communication (20%), and cater to a growing audience (20%). Estimated data.

The Shift From Print to Podcasts: Why News Is Moving Off the Web

For decades, news worked like this: journalists published a story. Readers clicked. Ad impressions counted. The web was the distribution channel that mattered.

That model is collapsing.

Podcast consumption has grown rapidly. Spotify reports over 500 million monthly active users, with podcasts representing one of the fastest-growing content categories. Apple Podcasts has over 100 million subscribers. But the real story isn't just audience size; it's where trust is concentrated.

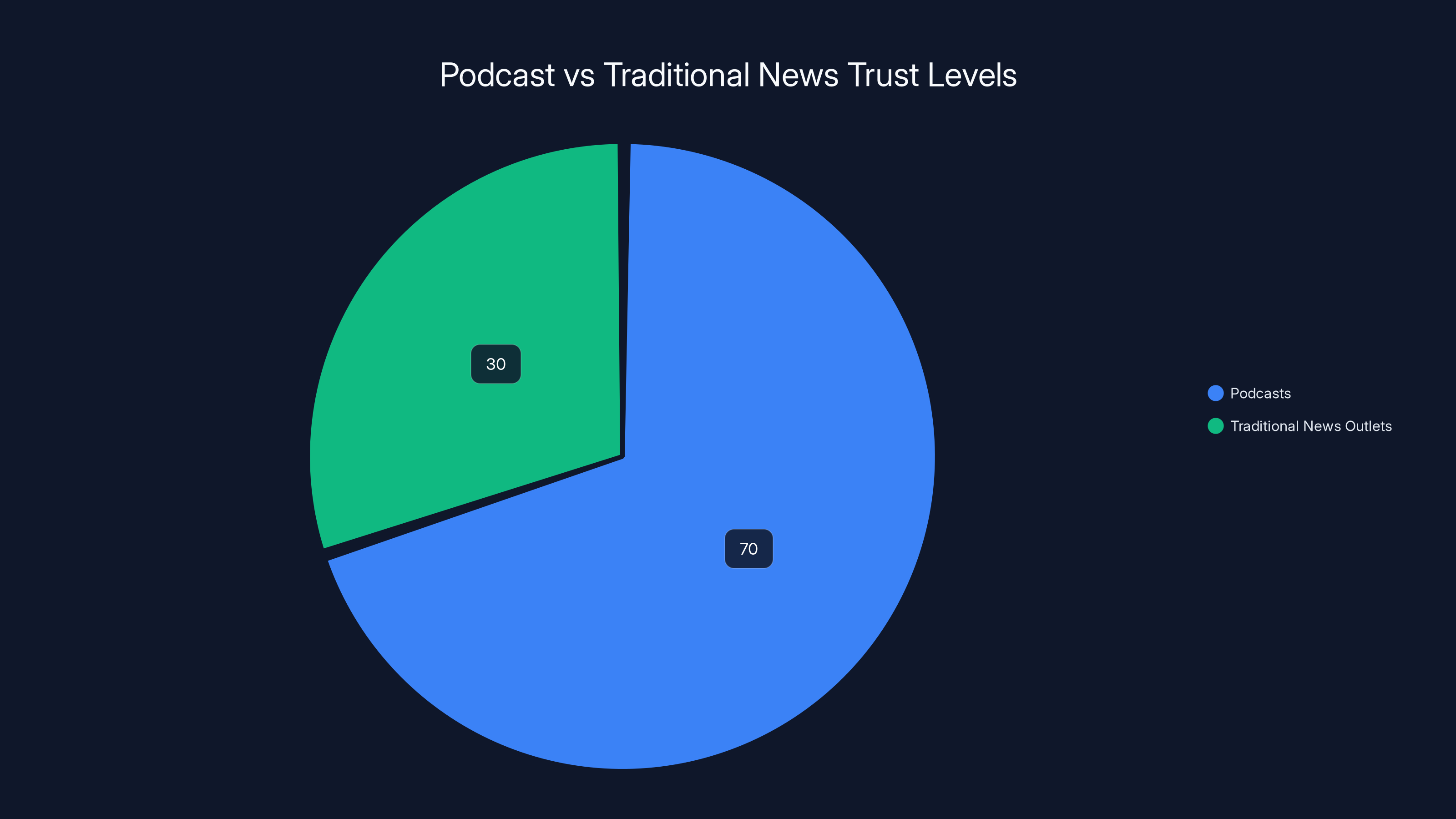

People trust podcasts more than traditional news outlets. A study from Edison Research found that podcast listeners rate their favorite shows as highly trustworthy sources of information, often more so than news websites. Why? Podcasts feel personal. You're listening to someone you've chosen, on their schedule, in their voice. There's no algorithm deciding what's news. There's no publisher deciding what to highlight.

That intimacy matters. It shapes behavior. Tech CEOs know this. When Elon Musk wants to explain his latest acquisition, he doesn't schedule a call with the New York Times. He goes on the Joe Rogan Experience or another podcast with millions of listeners. When ChatGPT's developers want to discuss safety concerns, they pick a podcast host they trust.

Bloomberg reported in 2024 that this trend has become deliberate strategy for tech executives. Rather than submit to traditional media scrutiny, they're seeking out podcast hosts who will give them air time without the editorial oversight. It's strategic, calculated, and increasingly common.

Newsrooms are paying attention. The New York Times, as reported by Nieman Lab, has built a custom AI tool that transcribes and summarizes new episodes from dozens of right-wing and conservative podcasts. They're doing this to understand what influential figures are saying about the news, to understand commentary, to track talking points.

If the Times is monitoring podcasts systematically, it's a signal: podcasts have become data that matters. They're no longer just entertainment. They're primary sources.

How Vector Embeddings Extract Meaning From Audio

Here's where it gets technical—but in a practical way.

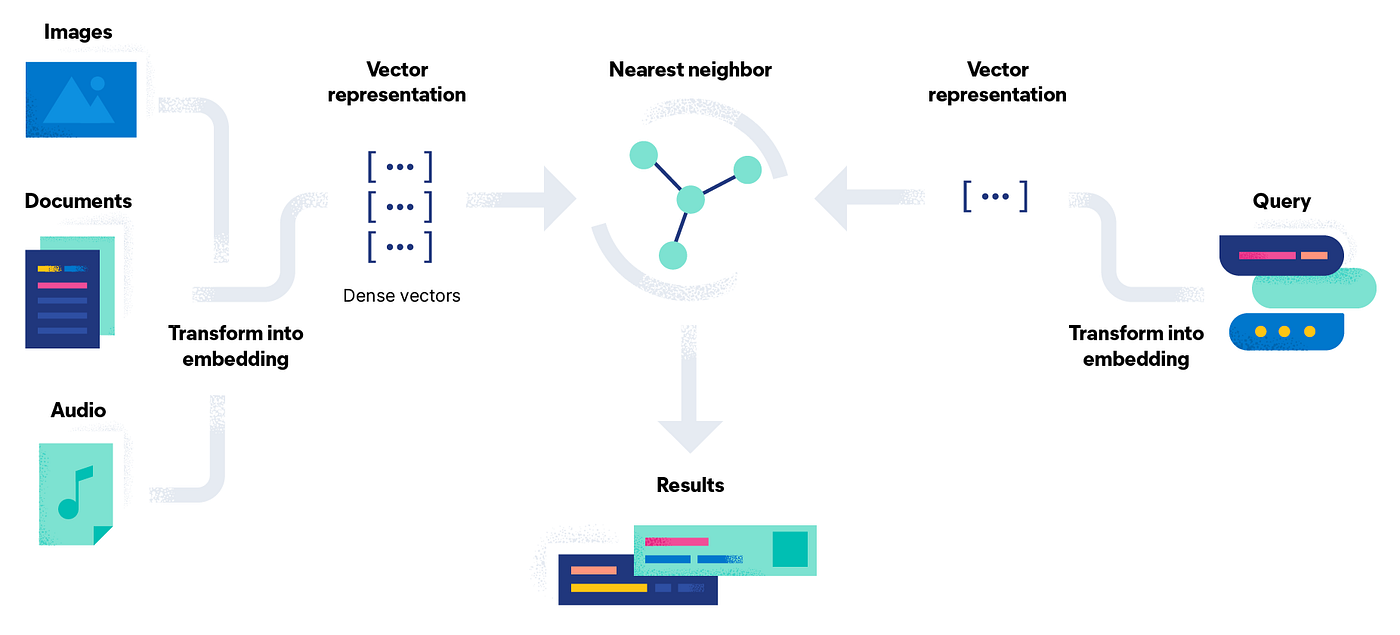

Particle's Podcast Clips feature works through a process called semantic matching using vector embeddings. Don't let the jargon scare you. The concept is straightforward.

A vector embedding is essentially a mathematical representation of meaning. When you take a piece of text—like a news headline or a podcast transcript—you can convert it into a series of numbers (a vector) that captures what that text is about. The magic is that texts with similar meanings end up with similar vectors.

Think of it like a map. Every word, phrase, or idea gets a coordinate in a multidimensional space. Stories about AI regulation cluster together. Stories about cryptocurrency cluster in a different area. Stories about healthcare reforms cluster elsewhere. When a new podcast episode gets transcribed, the system compares its vector to thousands of news story vectors. When they're close enough, the system knows there's a match.
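To make the map analogy concrete, here is a toy sketch. It uses hand-written three-dimensional vectors as stand-ins for real embeddings (which typically have hundreds or thousands of dimensions) and compares them with cosine similarity, a common measure of closeness. The vectors, the variable names, and the choice of cosine similarity are illustrative assumptions, not details Particle has published.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings; a real model would produce these from text.
story_ai_regulation = [0.9, 0.1, 0.0]
clip_ai_regulation = [0.8, 0.2, 0.1]
clip_crypto = [0.1, 0.9, 0.2]

same_topic = cosine_similarity(story_ai_regulation, clip_ai_regulation)
different_topic = cosine_similarity(story_ai_regulation, clip_crypto)
print(f"same topic: {same_topic:.2f}, different topic: {different_topic:.2f}")
```

The clip about the same topic scores much closer to 1 than the unrelated one, which is all "close enough" means in practice: a similarity score above some cutoff.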

Here's the key: this isn't generative AI. It's not ChatGPT inventing connections. It's a mathematical model understanding similarity. The difference matters because it's faster, more reliable, and less prone to hallucination.

Particle uses embedding models from companies that also provide large language models, according to founder Sara Beykpour, but the embeddings are pre-computed and static. They don't generate new text. They understand relationships.

Once matches are found, the second phase begins: intelligent clipping. A single podcast episode can cover 10, 20, or even 50 different topics. A two-hour show with multiple guests will jump between stories constantly. The system needs to understand exactly where one relevant clip starts and another ends.

Particle uses AI to handle this, determining when to begin recording and when to stop based on what's being discussed. This is where proprietary technology comes in. The algorithm has to understand not just that someone is talking about AI, but that they've transitioned from one angle on AI regulation to another angle on AI safety—and know to make the cut before the transition.
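Particle's cut-point logic is proprietary, so what follows is only a generic sketch of one way the idea could work. It assumes each transcript segment has already been scored for similarity against the news story, then grows a window outward from the best-scoring segment until the neighbors drop below a threshold. The function name, the threshold, and the sample scores are all hypothetical.

```python
def find_clip_bounds(segments, scores, threshold=0.5):
    """Pick the contiguous run of on-topic segments around the best match.

    segments: list of (start_sec, end_sec) tuples, in episode order.
    scores:   similarity of each segment to the news story.
    Returns (start_sec, end_sec), or None if nothing clears the threshold.
    """
    if not scores:
        return None
    best = max(range(len(scores)), key=scores.__getitem__)
    if scores[best] < threshold:
        return None
    first = best
    while first > 0 and scores[first - 1] >= threshold:
        first -= 1  # extend backward while still on topic
    last = best
    while last < len(scores) - 1 and scores[last + 1] >= threshold:
        last += 1  # extend forward until the topic changes
    return segments[first][0], segments[last][1]

# Hypothetical episode: the speaker is on topic from 12s to 58s.
segments = [(0.0, 12.0), (12.0, 30.0), (30.0, 41.0), (41.0, 58.0), (58.0, 75.0)]
scores = [0.1, 0.6, 0.8, 0.7, 0.2]
print(find_clip_bounds(segments, scores))
```

A production system would need something smarter at the edges (finishing a sentence, handling interruptions), but the shape of the problem is the same: find where relevance starts and where it ends.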

Transcription is handled by ElevenLabs, an AI audio company whose speech-to-text service converts audio to text at scale. ElevenLabs' technology has gotten sophisticated enough to handle different accents, overlapping speech, and technical jargon. But raw transcription isn't enough. The text has to be timestamped. The system has to know exactly when each word occurs in the audio so it can pull the exact 45-second segment that matters.
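Word-level timestamps are what turn "these words in the transcript" into "these seconds in the audio file." A minimal sketch, assuming a transcript where every word carries a start and end time: the `Word` class, the padding value, and the sample data are hypothetical and do not reflect any provider's actual response format.

```python
from dataclasses import dataclass

@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the episode
    end: float

def clip_offsets(words, first_idx, last_idx, padding=0.25):
    """Return (start_sec, end_sec) covering words[first_idx..last_idx].

    A little padding on each side avoids clipping the first or last syllable.
    """
    start = max(0.0, words[first_idx].start - padding)
    end = words[last_idx].end + padding
    return start, end

# Hypothetical word-level transcript for a few seconds of audio.
words = [
    Word("Regulation", 1.5, 2.0),
    Word("should", 2.0, 2.25),
    Word("target", 2.25, 2.5),
    Word("deployment", 2.5, 3.0),
]
print(clip_offsets(words, 0, 3))
```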

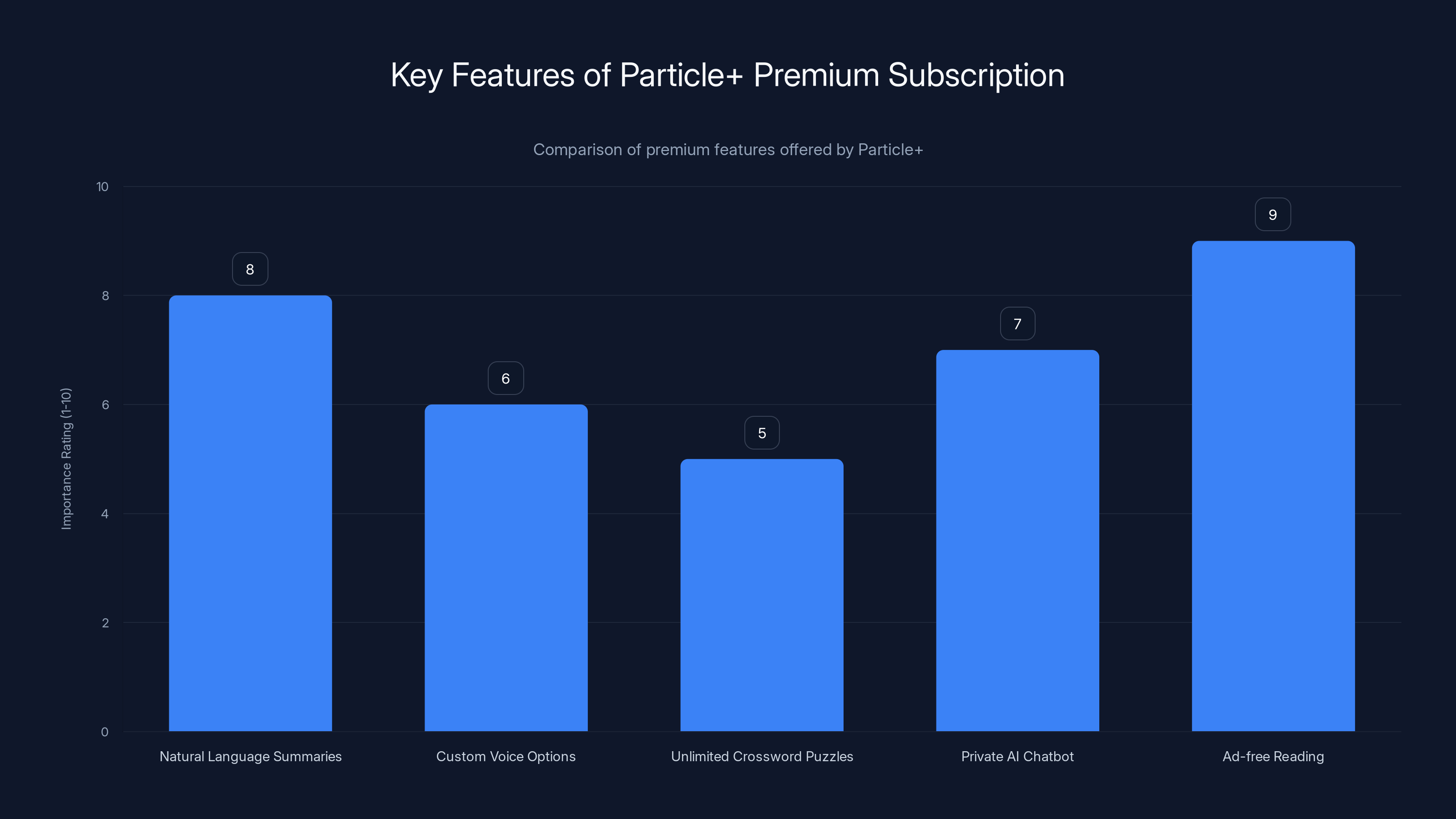

Ad-free reading and natural language summaries are highly valued features of Particle+, with estimated importance ratings of 9 and 8 respectively. Estimated data.

Building Entity Pages: From Stories to People

Particle's architecture extends beyond simple story matching. The app understands entities—people, organizations, locations, concepts.

Sam Altman is an entity. So is OpenAI. So is "AI regulation" or "cryptocurrency policy." When you navigate to a person's entity page, instead of seeing random clips, you see a chronological feed of every podcast appearance that person has made.
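Conceptually, an entity feed is just a group-and-sort over tagged appearances. Here is a rough sketch of that idea. The record fields, the placeholder names, and the oldest-first ordering are assumptions for illustration; how Particle actually stores or orders this data isn't public.

```python
from collections import defaultdict
from datetime import date

def build_entity_feeds(appearances):
    """Group podcast appearances by entity and sort each group by date."""
    feeds = defaultdict(list)
    for item in appearances:
        feeds[item["entity"]].append(item)
    for items in feeds.values():
        items.sort(key=lambda item: item["date"])  # oldest first
    return dict(feeds)

# Hypothetical appearance records produced by an upstream tagging step.
appearances = [
    {"entity": "Person A", "podcast": "Show Y", "date": date(2025, 6, 10)},
    {"entity": "Person B", "podcast": "Show X", "date": date(2025, 4, 2)},
    {"entity": "Person A", "podcast": "Show X", "date": date(2025, 3, 1)},
]

feeds = build_entity_feeds(appearances)
for item in feeds["Person A"]:
    print(item["date"].isoformat(), item["podcast"])
```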

This is powerful for a specific use case: understanding what influential figures are saying over time. You can watch how someone's position has evolved. You can see when they've been talking about a topic and when they've gone quiet. You can spot patterns in their messaging.

For researchers, journalists, and people trying to understand where power is actually being wielded, entity pages solve a real problem. Right now, if you want to track what Elon Musk has said about a topic over the past year, you have to manually search podcasts, find episodes, listen for relevant segments, and take notes. That's inefficient to the point of being impractical for most people.

With entity pages, you get a feed. No search required. No guessing which podcasts might have covered them. The AI has already done the work.

The system also shows "related entities." If you're looking at a page about AI safety, the app surfaces related people (researchers who study it), related organizations (labs working on it), and related topics (governance, alignment, policy). This creates a web of connections that mirrors how topics are actually discussed.
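One simple way to surface related entities is to count which entities get mentioned alongside the one you're viewing. This is a sketch of that co-occurrence idea, not a description of Particle's method. The clip data and the `related_entities` helper are hypothetical.

```python
from collections import Counter

def related_entities(clips, target, top_n=3):
    """Rank entities by how often they appear in the same clip as target."""
    counts = Counter()
    for entities in clips:
        if target in entities:
            counts.update(e for e in entities if e != target)
    return [name for name, _ in counts.most_common(top_n)]

# Each tuple lists the entities detected in one hypothetical clip.
clips = [
    ("AI safety", "Lab A", "Researcher B"),
    ("AI safety", "Lab A"),
    ("Crypto policy", "Exchange C"),
    ("AI safety", "Researcher B", "Lab A"),
]
print(related_entities(clips, "AI safety", top_n=2))
```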

A journalist working on a story can use this to find sources. A student researching AI policy can use this to understand the landscape. Someone just trying to stay informed can use this to go deeper without leaving the app.

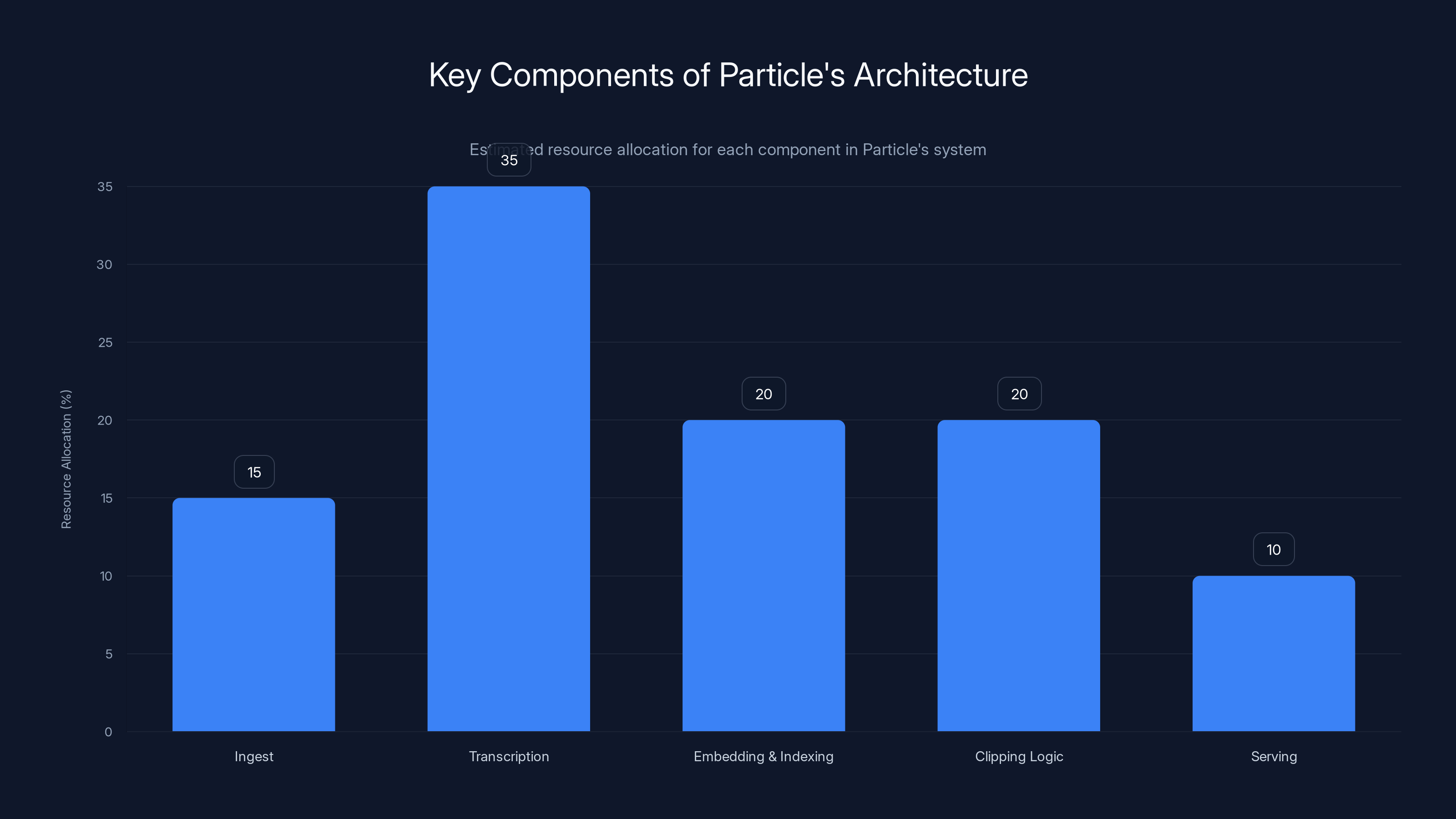

The Architecture: Transcription, Matching, and Clipping at Scale

Building this requires infrastructure most startups don't have.

First, there's ingest. Particle needs to continuously pull new episodes from thousands of podcasts. This requires partnerships with podcast directories (Spotify, Apple, etc.), monitoring for new episodes, and automatically downloading audio files.

Second, transcription. Every episode becomes text. For a single popular podcast with 100 episodes per year, at roughly an hour per episode, that's 100 hours of audio to transcribe. Multiply that by thousands of podcasts. At current scale, we're probably talking about thousands of hours of audio per week being converted to text. This is computationally expensive and requires a specialized provider.

ElevenLabs and OpenAI (through its Whisper transcription model) are among the providers that can do this reliably. Particle chose ElevenLabs, which likely means they're paying per-hour for transcription services.
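The "thousands of hours per week" estimate is easy to sanity-check. The back-of-envelope sketch below assumes 2,000 tracked podcasts and one hour per episode; both figures are illustrative guesses, since Particle hasn't published its index size.

```python
WEEKS_PER_YEAR = 52

def weekly_transcription_hours(podcasts, episodes_per_year, hours_per_episode):
    """Average hours of audio to transcribe each week."""
    return podcasts * episodes_per_year * hours_per_episode / WEEKS_PER_YEAR

# Hypothetical scale: 2,000 podcasts, 100 episodes a year, about 1 hour each.
hours = weekly_transcription_hours(2000, 100, 1.0)
print(f"about {hours:,.0f} hours of audio per week")
```

Under those assumptions the total lands in the low thousands of hours per week, which is why per-hour pricing from a transcription provider becomes a real line item.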

Third, embedding and indexing. Every transcribed episode gets converted to vector embeddings. These embeddings are stored in a vector database—a specialized database that's optimized for similarity searches. When a new news story comes in, the system embeds it and searches for nearby vectors in the database.
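The embed-and-search step can be sketched with a tiny in-memory index. Real vector databases use approximate nearest-neighbor indexes to stay fast across millions of vectors; this brute-force version only illustrates the interface. The class name, the IDs, and the vectors are all hypothetical.

```python
import heapq
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms

class TinyVectorIndex:
    """Brute-force stand-in for a vector database (fine for a demo, not for scale)."""

    def __init__(self):
        self._items = []  # list of (item_id, vector)

    def add(self, item_id, vector):
        self._items.append((item_id, vector))

    def search(self, query, k=3, min_score=0.0):
        """Return up to k (score, item_id) pairs, best first, with score >= min_score."""
        scored = ((cosine(query, vec), item_id) for item_id, vec in self._items)
        return heapq.nlargest(k, (hit for hit in scored if hit[0] >= min_score))

index = TinyVectorIndex()
index.add("clip-ai-regulation", [0.8, 0.2, 0.1])
index.add("clip-crypto", [0.1, 0.9, 0.2])
index.add("clip-ai-safety", [0.7, 0.1, 0.4])

story_vector = [0.9, 0.1, 0.0]  # hypothetical embedding of a new story
hits = index.search(story_vector, k=2, min_score=0.5)
print([item_id for _, item_id in hits])
```

The `min_score` cutoff matters as much as `k`: without it, the index always returns something, even when nothing in it is actually about the story.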

Fourth, clipping logic. Once a match is found, the system determines where the relevant segment starts and ends. This involves natural language understanding. The algorithm needs to know when someone has finished their point and moved on.

Fifth, serving. When you open the Particle app and scroll to a story, the backend queries the database for related podcast clips, retrieves metadata, and displays them with timestamps so the app knows where to start and stop playback.
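What the backend hands to the app at this step is probably little more than a list of pointers into audio files. The sketch below is one guess at the shape of that payload: the field names, the `example.com` URLs, and the JSON layout are invented for illustration and are not Particle's API.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class ClipRef:
    podcast: str
    audio_url: str
    start_sec: float  # where playback should begin
    end_sec: float    # where playback should stop
    score: float      # similarity to the story

def clips_payload(story_id, clips, limit=3):
    """Serialize the best-matching clips for one story, highest score first."""
    top = sorted(clips, key=lambda clip: clip.score, reverse=True)[:limit]
    return json.dumps({"story_id": story_id, "clips": [asdict(c) for c in top]})

# Hypothetical matches for a single story.
matches = [
    ClipRef("Show X", "https://example.com/x.mp3", 754.0, 799.0, 0.81),
    ClipRef("Show Y", "https://example.com/y.mp3", 120.5, 165.5, 0.93),
]
print(clips_payload("story-123", matches))
```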

At every step, there are latency considerations, cost considerations, and accuracy considerations. If embedding is slow, the app will be slow. If transcription fails, clips won't be generated. If clipping logic is wrong, you get irrelevant 45-second segments.

Building this well requires deep expertise in machine learning, audio processing, distributed systems, and database optimization. This is why most news startups can't do it. It's not just software engineering. It's research-grade infrastructure.

Podcast News: Breaking News That Traditional Media Misses

Here's where this gets interesting from a journalistic perspective.

Traditional news cycles move slowly. A CEO makes an announcement on a podcast. That announcement gets written up by tech blogs within hours. Major newspapers pick it up within days. By the time it hits the Times or the Post, the story is already cold.

But during those intermediate hours, thousands of people have already heard about it from the podcast directly. They've formed opinions. They've shared it with their networks. By the time traditional media publishes, they're often covering old news.

Some of the most important tech news in the past few years broke on podcasts first. When executives discuss strategic shifts, when investors share market observations, when researchers unveil findings, podcasts are often the initial medium.

This creates an asymmetry. People who listen to relevant podcasts are informed. People who don't are uninformed. The gap widens as podcasts become more essential.

Particle solves this by creating a bridge. Even if you don't listen to podcasts, you get the benefit of knowledge from people who do. The clipping feature surfaces the most important moments from the podcast ecosystem directly into your news feed.

But there's a subtler value here. Podcasts contain context that written news strips away. A CEO's tone matters. How they pause and think matters. How they respond to follow-up questions matters. You lose that in a written article. By including podcast clips, you're preserving some of that original context.

Podcasts are perceived as more trustworthy than traditional news outlets, with an estimated 70% trust level compared to 30% for traditional media. Estimated data.

The Monetization Play: Premium Features and Sustainable News

Particle introduced Particle+, an optional premium subscription.

Traditional news sites rely on advertising revenue. More pageviews equal more ad impressions equal more money. But that creates misaligned incentives. Publishers profit when you stay on the site longer, click more links, and engage with distracting content. Quality journalism actually competes with engagement optimization.

Subscription models flip this. Particle makes money when users stay subscribed, not when users click more links. This aligns incentives. If the product is good, users keep paying. If it's not, they cancel.

Particle+ includes several premium features:

Natural language summaries in custom styles: Instead of reading a story, you can ask the app to summarize it in a specific style—straightforward news summary, explainer, opinion piece. This is valuable for busy people who want context without reading the full story.

Custom voice options for audio feed: Particle has an audio feature called "Listen to the News," which reads news summaries aloud. Premium subscribers can choose different voices instead of a default voice. This is a small feature, but it improves the experience for people who consume news while commuting or exercising.

Unlimited crossword puzzles: News apps are adding games to increase engagement and retention. Unlimited access to puzzles is a benefit that doesn't cost much but increases perceived value.

Private questions with AI chatbot: Instead of having conversations that might be logged or analyzed, premium users can have private conversations with an AI assistant. This appeals to users who want to ask follow-up questions about news stories without that data being stored.

Ad-free reading: Most importantly, premium subscribers get an ad-free experience. No pop-ups, no in-article ads, no distracting sponsored content.

At $2.99 per month, the cost is low enough that it doesn't feel like a major commitment. Many people spend more on coffee. But aggregated across millions of users, it becomes meaningful revenue.

The challenge for any news app is convincing people to pay. Most people are accustomed to free news. The paywall has to feel worthwhile. Particle's strategy of bundling multiple small benefits (summaries, voices, puzzles, ad-free) is better than charging for a single premium feature.

Android Release: Expanding Beyond iOS

Particle initially launched on iOS. The Android release is significant because it signals the app is moving from early-adopter experiment to mainstream product.

Android represents a larger addressable market—roughly 70% of smartphones globally run Android. But Android also presents different challenges. There are hundreds of Android device manufacturers, different screen sizes, different processing power. Optimization is harder.

The Android release brought several UI improvements. The "Browse" tab now surfaces timely stories—the 2026 Winter Olympics, major sporting events, trending topics. This is smarter than just showing static categories like "Politics" or "Entertainment."

Entity pages got a redesign too. When you tap on a person, place, or topic, you now see a dedicated page with the entity's definition, top stories, related articles, related entities, and related topics. This creates a navigational web rather than a linear feed.

These changes aren't revolutionary, but they show thinking. The app isn't just pulling in clips and calling it done. It's building a browsable knowledge graph.

The Competitive Landscape: Who Else Is Building This

Particle isn't the only news app experimenting with AI-driven content aggregation.

Perplexity, which started as a search engine, now pulls in multiple sources, verifies them, and presents a synthesized answer. It doesn't do podcast clips specifically, but the principle is similar: instead of making you visit multiple sites, it assembles the relevant information in one place.

Google News has been experimenting with AI summaries. Microsoft's Copilot can summarize news. Apple News has added some AI features. But none of these are specifically focused on podcast integration.

That's where Particle's differentiation lies. While other news apps treat podcasts as an afterthought, Particle has made them central to the experience.

The barrier to entry is high. You need relationships with podcast platforms. You need transcription infrastructure. You need vector database expertise. You need the domain knowledge to understand when a 45-second segment is actually interesting.

Most major news organizations could theoretically build this, but they're slow to move. Most startups don't have the resources. That gives Particle a window.

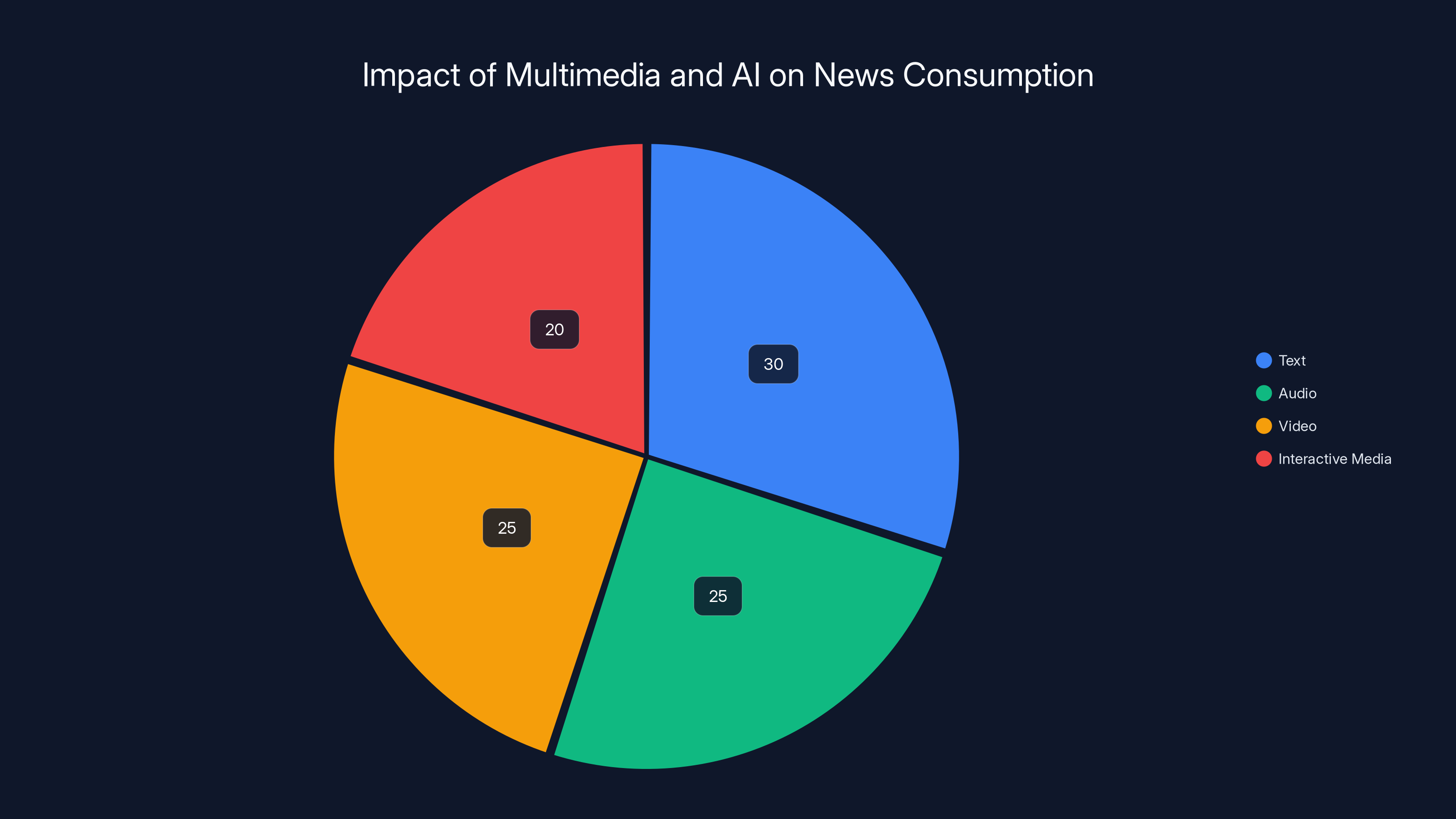

In the AI-driven multimedia era, news consumption is expected to diversify with text, audio, video, and interactive media each holding significant shares. Estimated data.

How Users Actually Interact With Podcast Clips

The user experience is crucial. A feature is only useful if people actually use it.

When you're reading a story on Particle, podcast clips appear as a section below the story. You see a thumbnail, the podcast name, the guest name, and a duration. If you're interested, you tap to play. The app shows a waveform with your current position. You can drag to seek. As the audio plays, the transcript below shows which word is being spoken, highlighted in real-time.
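The real-time highlight comes down to a lookup: given the current playback position, which word started most recently? A minimal sketch using binary search over word start times follows. The function name and the sample timings are hypothetical; this is one plausible approach, not Particle's confirmed implementation.

```python
from bisect import bisect_right

def current_word_index(word_starts, position_sec):
    """Index of the word being spoken at position_sec.

    word_starts must be sorted ascending. Returns None before the first word.
    """
    i = bisect_right(word_starts, position_sec) - 1
    return i if i >= 0 else None

# Hypothetical start times (in seconds) for four words in a clip.
transcript = ["Regulation", "should", "target", "deployment"]
word_starts = [0.0, 0.4, 0.9, 1.5]

idx = current_word_index(word_starts, 1.0)
print(transcript[idx])
```

Because the lookup is a binary search, it stays cheap enough to run on every playback tick, even for long transcripts.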

This creates a weird hybrid experience. You're reading while listening. Your eyes follow the transcript while your ears hear the audio. It's not ideal for pure listening. It's not ideal for pure reading. But as a scanning mechanism—a way to quickly verify whether a clip is worth your full attention—it works.

Some users will play the audio. Others will just read the transcript. Some will drag to the middle and check if it's interesting before committing to the full clip.

This flexibility is valuable. Not everyone learns the same way. Not everyone has the same attention span. Not everyone is in a context where they can listen to audio.

The Limits: What Podcast Clips Can't Do

Before getting too excited about this approach, let's acknowledge the limitations.

Latency: The system needs to transcribe, embed, and clip podcasts. This takes time. Unless you're monitoring podcasts in real-time, there will be a delay between a podcast episode dropping and related clips appearing in the Particle feed. If breaking news is happening on a podcast right now, you might not see it clipped for hours.

Accuracy: Transcription errors are real. If a speaker has an accent, mumbles, or uses technical jargon, the transcription might be wrong. If the transcription is wrong, the embeddings are wrong, and the matching is wrong. Cascade failures are possible.

Coverage: Particle can only surface clips from podcasts in its index. If important commentary happens on a niche podcast that isn't tracked, it's invisible.

Context loss: A 45-second clip is inherently decontextualized. You lose the full nuance of a longer discussion. You lose the banter and asides that make podcasts interesting.

Relevance mismatches: The semantic matching algorithm sometimes connects things that aren't actually related. A discussion of "AI policy" might get matched to a story about "AI chips" because they're both about AI. The system has to be smart enough to understand that these are different topics, but sometimes it won't.

Privacy concerns: Transcribing and indexing podcasts raises questions. Do podcast creators consent to being transcribed? Are they compensated? If a transcription contains sensitive information, is it properly handled?

The Broader Shift: Media Consumption and Information Asymmetry

Particle's feature reveals something deeper about how media is changing.

For most of modern history, information distribution was controlled by gatekeepers. You wanted to know the news, you read the newspaper. You wanted to understand current events, you watched the evening broadcast. Gatekeepers decided what was news and how it was covered.

The internet democratized this. Anyone could publish. Anyone could broadcast. But that created information overload. Too much content meant people couldn't process it all.

Solution: algorithms. Google News, Apple News, Facebook's algorithm, Twitter's algorithm. These systems try to surface the most relevant content from the overwhelming abundance.

But algorithms are imperfect. They optimize for engagement, which isn't always the same as importance. They create filter bubbles, where you see more of what you already like. They struggle with emerging stories that don't yet have lots of coverage.

Particle's approach is different. It's saying: instead of trying to optimize for engagement or create personalized bubbles, let's create a complete picture. Read the written news. Listen to what people are saying about it on podcasts. Understand both the story and the commentary.

This is closer to how news actually works in practice. When important news breaks, people don't just read articles. They listen to podcasts where experts discuss it. They read Twitter/X where the community reacts. They watch YouTube videos where people analyze it.

A truly comprehensive news experience would surface all of these simultaneously. Particle is moving toward that with podcast clips. Eventually, you'd see articles, podcast clips, social media reactions, video breakdowns, all in one place.

Transcription is the most resource-intensive component, estimated to require 35% of system resources, highlighting its computational demands. (Estimated data)

Privacy and Ethics: The Untold Story

Here's something worth thinking about that most people don't discuss: what are the privacy and ethical implications of automatically transcribing, indexing, and clipping millions of hours of podcasts?

Podcast creators likely don't expect their content to be indexed by multiple AI systems. When someone records a podcast, they're probably thinking about the 10,000 people who listen via Spotify or Apple Podcasts, not about the fact that their words will be transcribed, embedded into vectors, and matched against news stories.

Does this require consent? Legally, probably not in most jurisdictions. Fair use doctrine suggests that transcription for the purpose of creating an index falls under research or reference. But just because something is legal doesn't mean it's ethical.

There's also the question of accuracy. If a transcription is wrong, and that wrong transcription gets matched to a news story, has Particle made a false association? Who's responsible if someone sees a clipped quote that's inaccurate?

There's also the labor question. ElevenLabs and other transcription services may use humans in the loop, at least for quality assurance. Where they do, human workers listen to and review transcriptions. That work is compensated, but it's worth noting that the economic model depends on this labor.

Particle hasn't publicly addressed these questions, probably because they're not yet contentious. But as the system scales and becomes more visible, these conversations will likely emerge.

Comparing Podcast Clip Strategies: What Works and What Doesn't

Particle's approach isn't the only way to integrate podcasts into news consumption.

The New York Times approach: Build an internal tool that monitors podcasts for intelligence. This is labor-intensive but gives you raw material for editorial decisions.

The Substack approach: Let podcast creators publish alongside writers. No automated clipping, but closer integration of audio and written content.

The traditional media approach: Occasionally reference "as discussed on popular podcasts" but don't systematically track it. Slow to respond.

The Particle approach: Automatic transcription, embedding, matching, and clipping. Accessible to users, fast, but requires significant infrastructure.

Each approach has trade-offs. The Times' internal tool gives them depth but not breadth. Substack's approach gives creators choice but requires them to do the work. Particle's approach is automated but less controllable.

The best approach probably depends on your use case. If you're a news organization that needs intelligence about what's being said in podcasts, the Times' approach makes sense. If you're building a consumer news app, Particle's approach makes sense.

The Future: Where This Technology Goes

Assume Particle's feature works well and becomes a competitive advantage. Where does this lead?

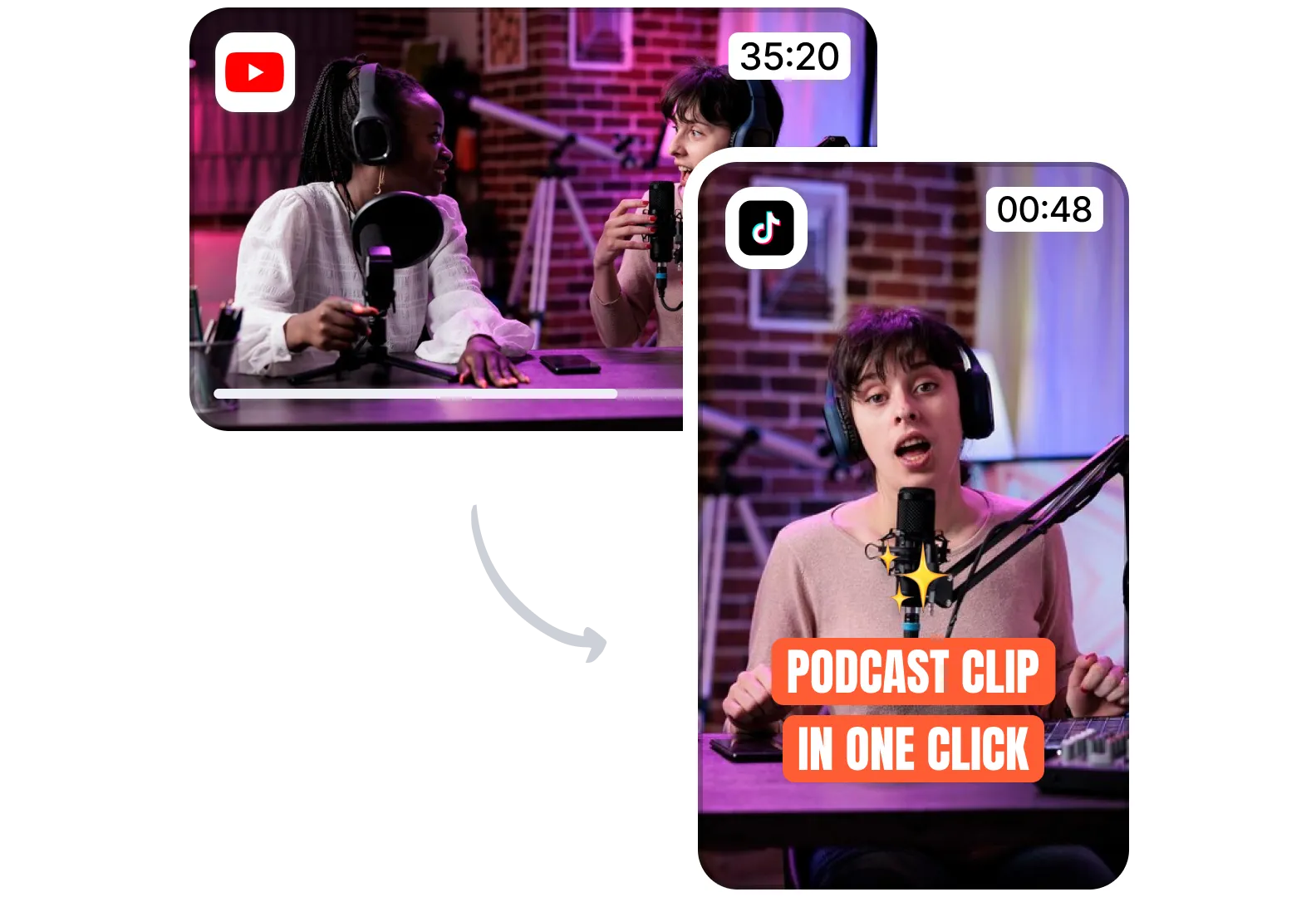

Multi-format integration: Video clips from YouTube, short clips from TikTok, Reddit discussions, Twitter/X threads. The principle is the same—find relevant context from across the internet and surface it alongside written stories.

Real-time alerts: Instead of waiting to see clips in your feed, get notified when someone discusses a topic you're following. "Elon Musk just talked about Mars on a podcast you follow" notifications.

Podcast communities: Community features built around podcast clips. "What did you think of this moment?" Comments, discussions, reactions.

Premium podcast integration: Exclusive clips or early access to clips from premium podcast networks, monetizing relationships with creators.

Transcription improvement: As more podcasts get transcribed, the training data for transcription models improves, leading to better accuracy, which leads to better clipping.

Personalization: The system learns which podcasts you find useful and starts prioritizing clips from those sources. Learns which topics interest you and surfaces clips about those topics more prominently.

The logical endpoint is a unified information feed that doesn't distinguish between formats. You read a story. Below it, relevant context from any source. Podcast clips, video analysis, expert tweets, social discussion. All in one place. All in the format that works best for you.

Podcast news apps leverage vector embeddings and transcription services as key features, with premium tiers offering additional benefits.

The Business Model Question: Can News Apps Sustain Themselves

There's a fundamental question behind all of this: can a news app actually make money?

The history of news app businesses is not encouraging. Google News launched as free aggregation and never successfully monetized. Most news app startups have struggled to reach breakeven, let alone profitability.

The challenge is that news is commoditized. If Story A is behind a paywall and Story B is free elsewhere, people go to Story B. Publishers can't charge for the raw news because the news itself isn't differentiated.

What you can charge for:

- Curation: Filtering the overwhelming amount of news to show only the important stuff.

- Analysis: Explaining what the news means and why it matters.

- Context: Showing you the full picture—articles, podcast clips, video analysis, social reactions.

- Convenience: Aggregating from multiple sources so you don't have to.

Particle is betting on a combination of these. The curation is handled by AI. The context is provided by podcast clips. The convenience is the whole app.

At $2.99 per month, the premium tier is positioned as an easy upgrade, not a major commitment. The free tier is generous enough to be useful, but premium adds enough value that some percentage of users will pay.

The question is whether that percentage is high enough to sustain a team, pay for infrastructure, and grow the product. Most paid news apps see single-digit premium conversion rates. That's tough math.

But if Podcast Clips becomes a unique feature that's not available elsewhere, that's defensible differentiation. Defensible differentiation can command premium pricing.
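The sustainability question comes down to simple arithmetic. The sketch below uses the $2.99 price and single-digit conversion rates discussed in this section; the one-million user count is a purely hypothetical round number, and the result is gross revenue before app store fees, refunds, and infrastructure costs.

```python
PRICE_PER_MONTH = 2.99  # Particle+ price quoted in this article

def monthly_subscription_revenue(active_users, conversion_rate, price=PRICE_PER_MONTH):
    """Gross monthly revenue before platform fees, refunds, and costs."""
    return active_users * conversion_rate * price

for rate in (0.01, 0.03, 0.05):
    revenue = monthly_subscription_revenue(1_000_000, rate)  # hypothetical user count
    print(f"{rate:.0%} conversion: ${revenue:,.0f} per month")
```

Even at the optimistic end of single digits, a million users produces revenue in the low hundreds of thousands of dollars per month, which has to cover transcription, embedding, storage, and a team.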

Comparing to Other AI News Approaches

Particle isn't the only startup rethinking how news is delivered with AI.

Perplexity takes a search-first approach, combining multiple sources into synthesized answers with citations. It's better for research than staying updated.

The Information is a paid newsletter with expert analysis, but it's human-curated, not AI-driven.

Morning Brew is a human-written daily digest that reached millions of subscribers. But it's not personalized—everyone gets the same content.

LinkedIn News personalizes based on your network and interests, but it's mostly coverage of business news.

Flipboard aggregates from multiple sources and lets you customize your magazines, but it's been around for over a decade and never achieved massive scale.

Particle's unique angle is podcast integration. It's also built by former Twitter engineers, which means the team understands social platforms, algorithmic feeds, and scaling to millions of users.

That's not a guarantee of success—lots of talented teams have failed at news apps—but it's a meaningful advantage.

Lessons for Other News Apps and Platforms

Even if Particle doesn't ultimately succeed as a business, the Podcast Clips feature teaches lessons for other news platforms.

Lesson 1: Context is more valuable than articles: Users don't just want news stories. They want to understand what people are saying about those stories. Podcast clips provide that context.

Lesson 2: Multiple formats beat a single format: A story about "AI regulation" is better understood with articles, expert interviews, podcast commentary, and video analysis. No single format is sufficient.

Lesson 3: Infrastructure enables differentiation: The companies that can transcribe, embed, and match at scale will have advantages. This creates moats.

Lesson 4: Podcasts are data: Instead of ignoring podcasts, smart news platforms will systematically extract signals from them.

Lesson 5: Convenience matters more than you think: If you can show someone all the relevant information without them having to search multiple platforms, they'll use it. Repeatedly. And they'll potentially pay for it.

The Convergence: Where News Meets Search Meets Podcasts

Step back and look at the bigger picture. Multiple trends are converging:

- Podcasts are exploding as both content and as a source of original news and analysis.

- AI makes it possible to transcribe, understand, and index audio at scale.

- Vector embeddings make it possible to find semantic connections between different types of content.

- Users want convenience over having to manage multiple apps.

- Paywalls are becoming necessary for sustainable journalism.

These forces together create the conditions for new kinds of news apps. Not just aggregators (that's 2010). Not just summarizers with AI (that's 2023). But platforms that bring together written news, podcast commentary, video analysis, and expert perspectives into a unified experience.

Particle's Podcast Clips feature is a step toward that. It's not the end state, but it's a move in a direction that matters.

The winners in news won't be the ones who cover more stories or publish faster. They'll be the ones who provide the most complete picture with the least friction.

FAQ

What are podcast clips in news apps?

Podcast clips are short audio segments extracted from full podcast episodes and matched to related news stories. Instead of listening to an entire podcast to find relevant commentary, users can play a 30-60 second clip directly within a news app while reading the related story. The clips are automatically identified using AI technology that understands semantic relationships between podcast content and news articles.

How does AI identify relevant podcast moments?

The process works through vector embeddings, which are mathematical representations of meaning. Podcast transcripts and news stories are both converted into vectors. The system compares these vectors to find semantic similarity: when a podcast moment and a news story discuss the same topic, their vectors are mathematically close. Once a match is found, the system determines where the relevant segment starts and ends in the audio file, creating a clip that captures just the important moment without the surrounding conversation.
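
To make the matching step concrete, here is a minimal sketch of the idea, not Particle's actual implementation. It assumes each transcript segment already has an embedding and timestamps. The three-dimensional vectors, the `find_clip` helper, and the 0.8 threshold are all hypothetical values chosen for illustration; real embeddings have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_clip(story_vec, segments, threshold=0.8):
    """Return (start_sec, end_sec) around the best-matching segment, or None.

    Hypothetical helper: picks the segment closest to the story, then grows
    the clip outward while neighboring segments also clear the threshold.
    """
    if not segments:
        return None
    scores = [cosine_similarity(story_vec, seg["vector"]) for seg in segments]
    best = max(range(len(scores)), key=scores.__getitem__)
    if scores[best] < threshold:
        return None  # nothing in this episode is close enough to the story
    lo = hi = best
    while lo > 0 and scores[lo - 1] >= threshold:
        lo -= 1
    while hi < len(segments) - 1 and scores[hi + 1] >= threshold:
        hi += 1
    return segments[lo]["start"], segments[hi]["end"]


# Toy data: made-up embeddings for a story and four transcript segments.
story_vec = [0.9, 0.1, 0.0]
segments = [
    {"start": 0, "end": 20, "vector": [0.0, 0.2, 0.9]},   # off-topic intro
    {"start": 20, "end": 45, "vector": [0.8, 0.2, 0.1]},  # on topic
    {"start": 45, "end": 70, "vector": [0.9, 0.0, 0.1]},  # on topic
    {"start": 70, "end": 95, "vector": [0.1, 0.9, 0.1]},  # different topic
]

clip = find_clip(story_vec, segments)
print(clip)
```

With this toy data the two on-topic segments are merged into one clip running from second 20 to second 70. A production system would likely use a vector database rather than a linear scan, but the comparison underneath is the same.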

Why are podcasts becoming important for news?

Tech executives, politicians, and influential figures now often break news and share analysis on podcasts before traditional media coverage appears. This means people who only read articles are missing conversations that shape public understanding. Podcasts allow for longer-form discussion, nuance, and direct communication without editorial intermediaries. As podcast audiences have grown—millions subscribe to major shows—the medium has become essential to understanding modern news and commentary.

What's the difference between podcast clip technology and generative AI like ChatGPT?

Podcast clip technology uses vector embeddings to understand relationships, not to generate new content. The AI isn't creating summaries or writing new text. It's understanding which podcast moments relate to which news stories and determining where to clip. This is fundamentally different from generative AI, which creates new text. Embeddings are faster, more reliable, and less prone to hallucination because they're not inventing anything—they're finding actual content that already exists.

How much does transcription of podcasts cost at scale?

Transcription costs vary by provider and quality requirements. Services like ElevenLabs and OpenAI's Whisper charge per minute or hour of audio. For a platform processing thousands of hours of podcasts weekly, transcription represents a significant infrastructure cost. This cost is a barrier to entry that protects companies like Particle that have already invested in the capability. As transcription improves and becomes cheaper, more news platforms will likely add podcast integration.
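
For a rough sense of how per-minute billing adds up, here is a back-of-envelope sketch. The rate and the weekly volume below are placeholders, not quotes from any provider and not Particle's real numbers; swap in your own figures.

```python
def weekly_transcription_cost(hours_per_week, rate_per_minute):
    """Estimated weekly spend when transcription is billed per audio minute."""
    if hours_per_week < 0 or rate_per_minute < 0:
        raise ValueError("inputs must be non-negative")
    return hours_per_week * 60 * rate_per_minute


# Hypothetical inputs for illustration only.
HYPOTHETICAL_RATE_PER_MINUTE = 0.01  # placeholder price, not a real quote
HYPOTHETICAL_HOURS_PER_WEEK = 1000   # placeholder volume

weekly = weekly_transcription_cost(
    HYPOTHETICAL_HOURS_PER_WEEK, HYPOTHETICAL_RATE_PER_MINUTE
)
yearly = weekly * 52
print(f"weekly: {weekly:,.2f}  yearly: {yearly:,.2f}")
```

The point is the shape of the cost, not the specific figures: spend scales linearly with hours of audio, so every additional show indexed carries a recurring bill.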

Can podcasters opt out of being transcribed and clipped?

Legally, the answer varies by jurisdiction. In the United States, transcription for indexing purposes is often argued to fall under fair use, though that depends on the specifics of each case, and other countries apply different rules. The ethical question is separate from the legal one. Podcasters have not explicitly consented to automated transcription by companies like Particle, and this raises privacy and rights questions. As the practice becomes more widespread, we'll likely see discussions about whether creators should be compensated or given control over how their content is used.

How accurate is the clipping process?

Accuracy depends on both transcription quality and semantic understanding. Transcription errors cascade through the system: if a word is transcribed incorrectly, the embedding may be off, and the match may fail. The system can also match unrelated content when two passages merely share vocabulary. Improvements in transcription accuracy and embedding models directly improve clipping accuracy. As these technologies mature, accuracy will approach but never reach 100%.

What's the business model for news apps using podcast clips?

Most news apps combine free access with premium subscriptions. Free users see ads and get basic features. Premium subscribers pay (

Are there privacy concerns with indexing podcasts?

Yes. Automatically transcribing and indexing millions of hours of podcast content involves processing sensitive information. If a podcast contains someone's name, address, or personal story, that information gets transcribed and indexed. This raises questions about data storage, handling of sensitive information, and who has access to these transcriptions. As podcast indexing becomes more common, regulations around it will likely develop.

How will podcast clip technology evolve?

The logical progression includes integrating clips from video platforms like YouTube, real-time alerts when people discuss topics you follow, personalization based on which podcasts you find useful, and integration with social features like community discussions around clips. Eventually, the distinction between articles, videos, podcasts, and social media might blur into a unified information feed where the format is determined by what's most useful rather than by how the content was originally published.

The Bottom Line: News Is Becoming Multimedia and AI Is Making It Efficient

Particle's Podcast Clips feature is a small feature in a small news app, but it points to something bigger: the way we consume news is fundamentally changing, and AI makes it possible to provide better experiences than were previously feasible.

For decades, news has been primarily written. Radio and television added audio and video, but they were separate distribution channels with separate audiences. The internet promised to converge all of these, but execution lagged. Most news sites are still primarily text with maybe embedded video.

Now, for the first time, the infrastructure exists to automatically integrate all formats. Transcription is good enough. Embeddings are sophisticated enough. Databases are fast enough. Mobile devices are powerful enough. All the pieces exist to show you a story with related podcast commentary, video analysis, expert tweets, and social discussion, all together, immediately.

Companies like Particle are moving toward this. They aren't there yet. But the direction is clear.

For readers, this means better context and more complete understanding. Instead of reading an article about AI regulation and wondering what experts actually think, you hear from them. Instead of missing breaking news because it happened on a podcast you don't follow, you see it in your news feed.

For podcasters, this means their content becomes more discoverable. If you recorded something insightful about a topic, it might get surfaced to millions of people through a news app, driving listeners to your show.

For news organizations, this is both threat and opportunity. It's a threat because these new platforms could disintermediate traditional media. It's an opportunity because they create new distribution channels for quality analysis and reporting.

The winners will be the ones who move fast, build good product, and understand that the future of news is multimedia, AI-assisted, and oriented around providing complete context rather than individual articles.

Particle might be that winner. Or they might be a stepping stone toward something bigger. Either way, they've identified a real problem—podcasts contain important commentary that's hard to access—and built a scalable solution.

In an information ecosystem where the pace of change is accelerating and the amount of content is overwhelming, solutions that help people understand what actually matters will be valuable.

Podcast clips are one small piece of that puzzle. But they're pointing in the right direction.

Key Takeaways

- Podcasts have become a primary news source where tech executives and influential figures break news before traditional media, creating an information asymmetry for people who don't listen to them

- Vector embeddings enable semantic matching between podcast moments and news stories without generative AI, making the system faster and more reliable than large language models

- Podcast transcription and indexing requires significant infrastructure investment, creating competitive moats for platforms like Particle that can execute at scale

- News apps are consolidating around premium subscription models ($9.99/month) because advertising-based models misalign incentives with quality journalism

- The future of news will integrate multiple formats—articles, podcasts, videos, social reactions—into unified feeds with AI determining the most relevant format for each story
