Anthropic Accuses DeepSeek, Moonshot & MiniMax of Claude Distillation Attacks [2025]

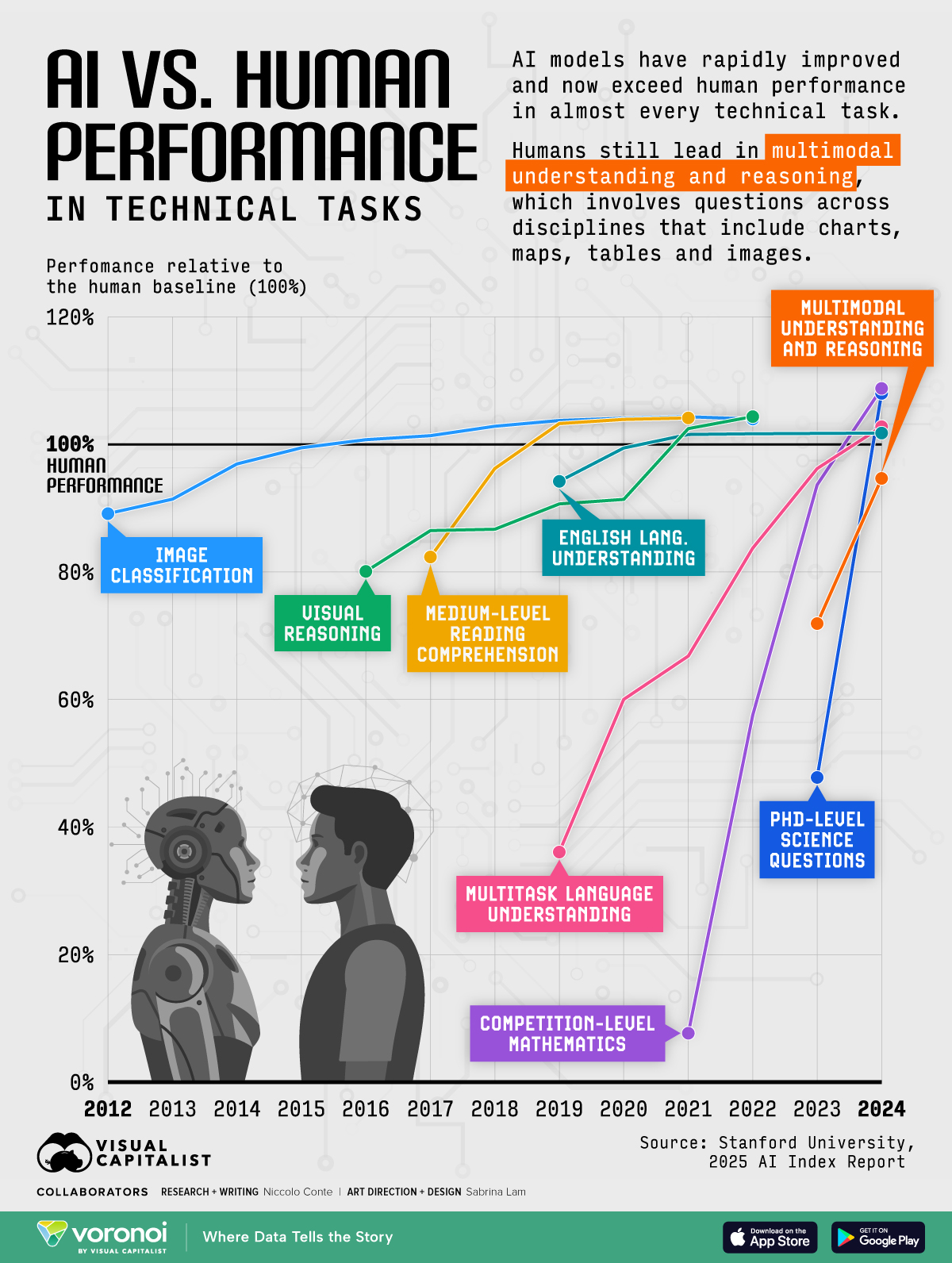

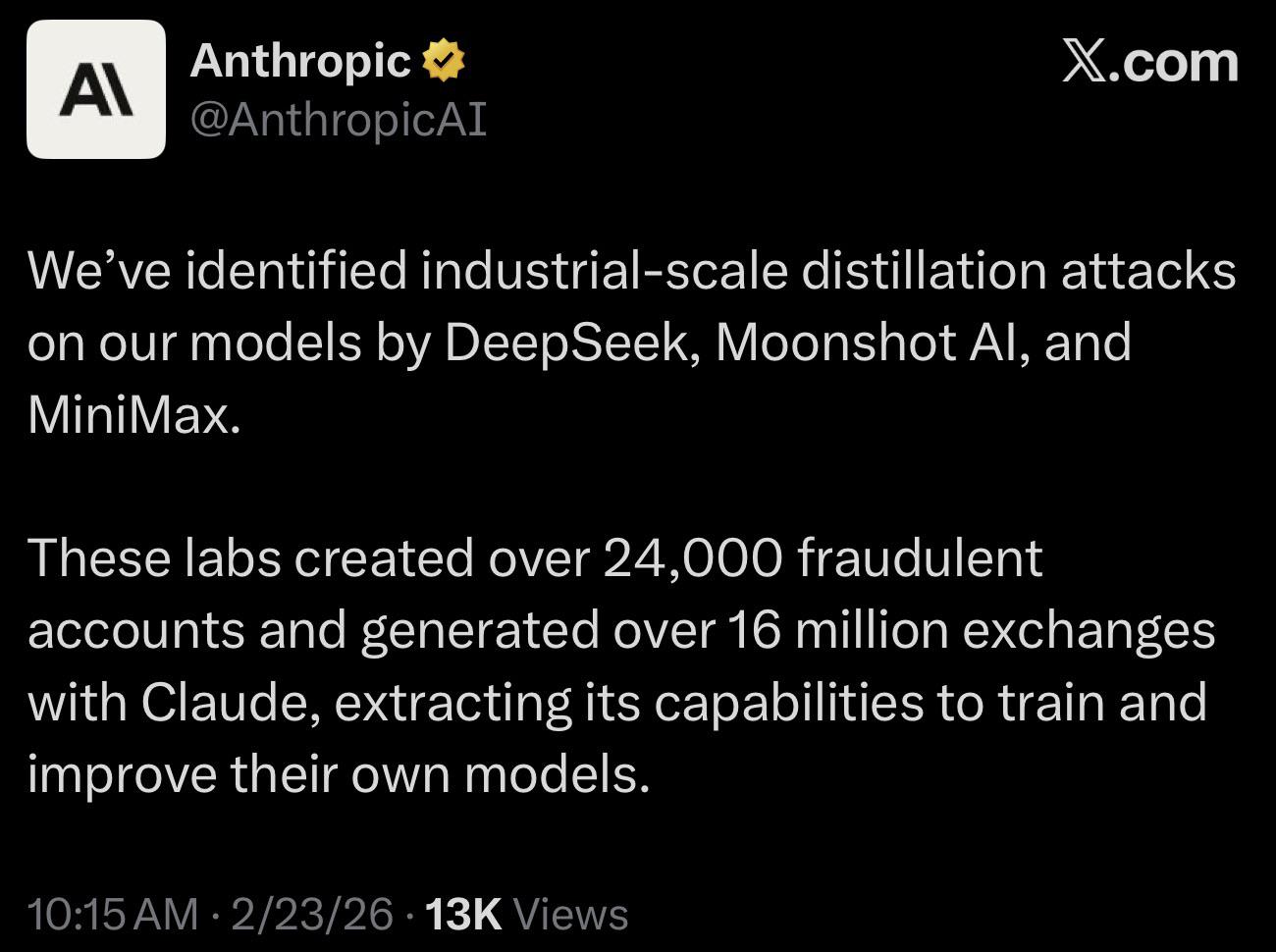

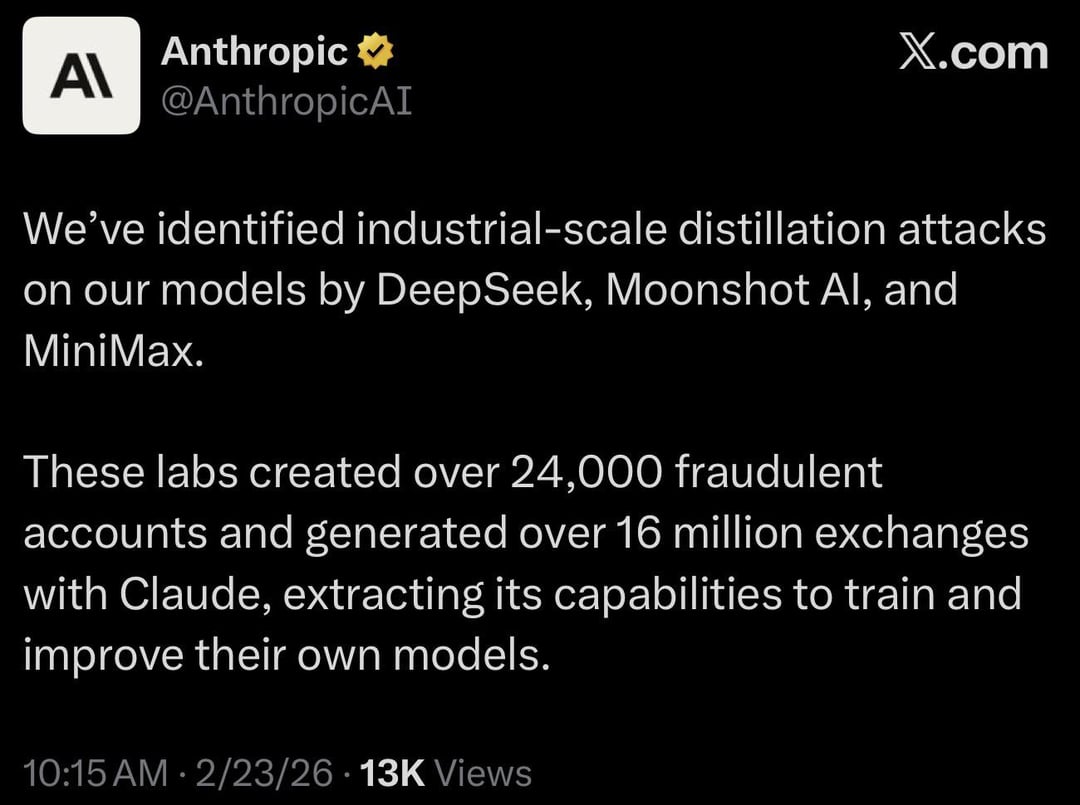

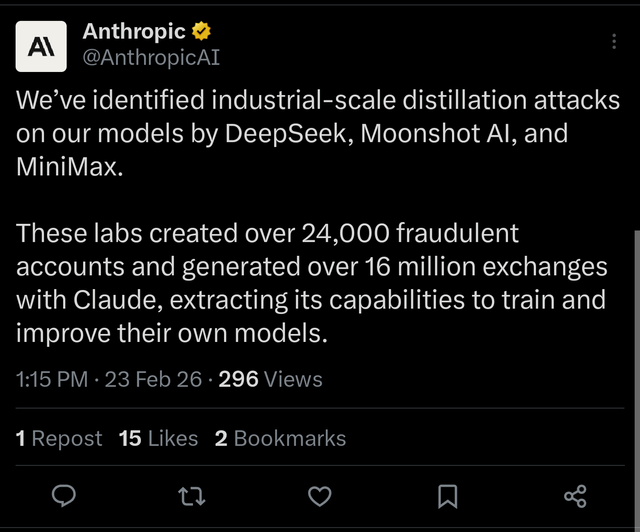

In early 2025, the artificial intelligence industry experienced a significant wake-up call. Anthropic, the company behind the popular Claude chatbot, publicly accused three Chinese AI laboratories of conducting what it called "industrial-scale distillation campaigns" to extract and repurpose Claude's capabilities.

The three companies named in the accusation were DeepSeek, Moonshot (maker of the Kimi chatbot), and MiniMax. According to Anthropic's findings, these firms orchestrated coordinated attacks that involved more than 16 million interactions with Claude across approximately 24,000 fraudulent accounts.

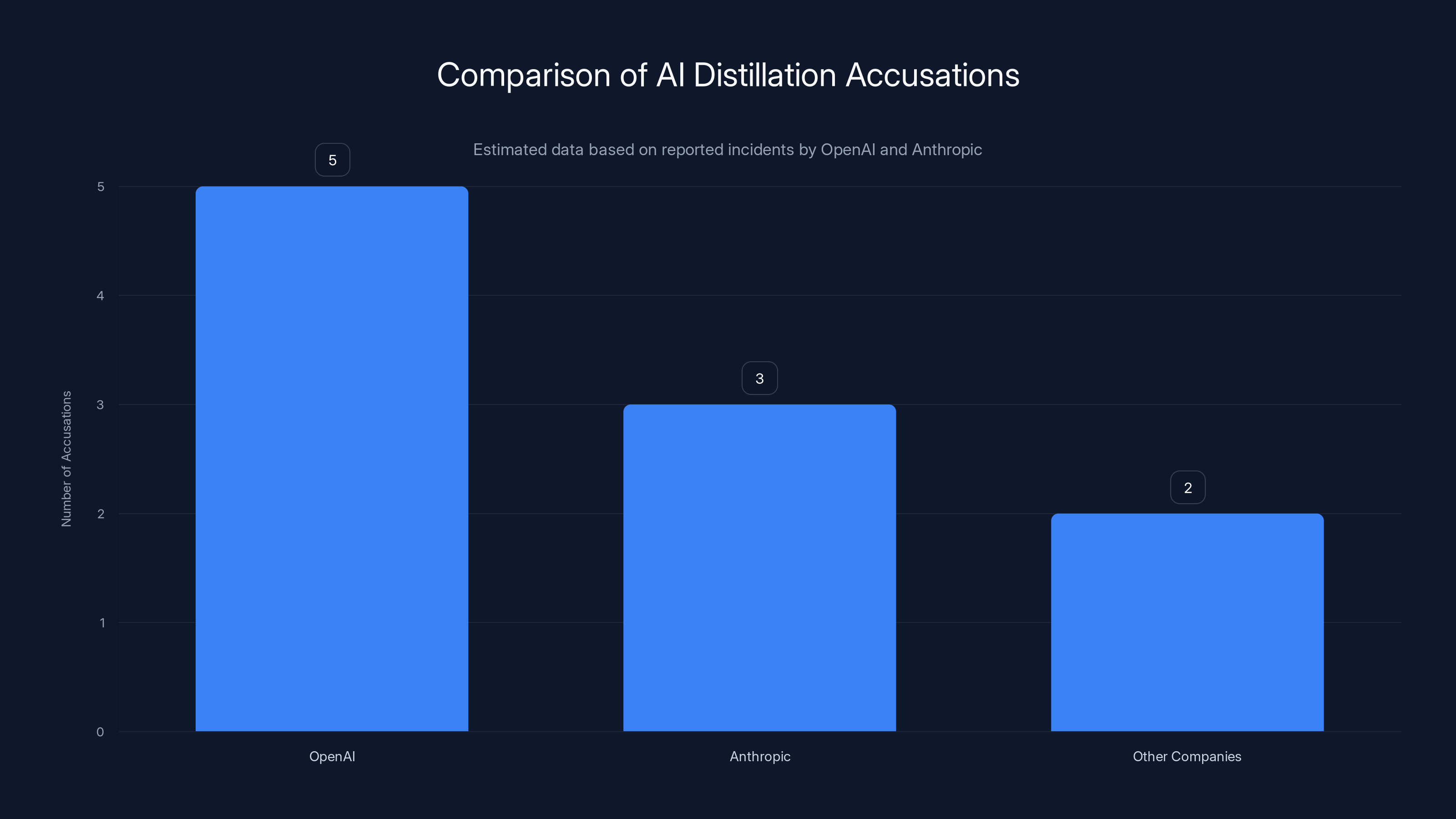

This incident marks a critical inflection point in AI development and raises urgent questions about intellectual property, competitive practices, and the sustainability of large language model safety. It also mirrors similar accusations that OpenAI made against competing firms just months earlier, suggesting this is becoming a systemic problem in the industry.

But what exactly happened? How does model distillation work as an attack vector? And what does this mean for the broader AI landscape going forward? Let's dig into the details.

What Is Model Distillation and Why Does It Matter?

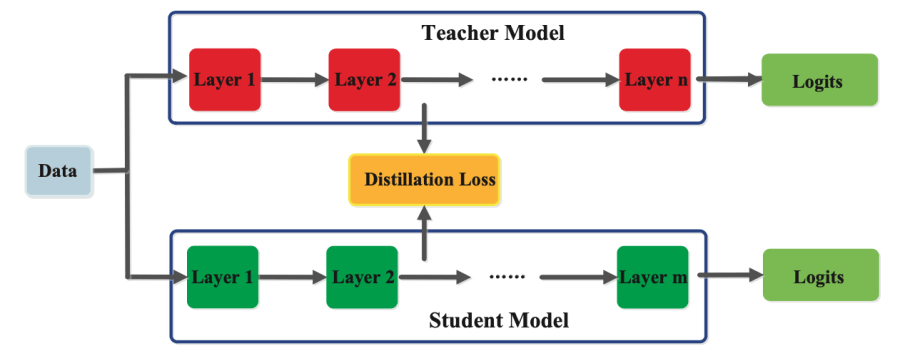

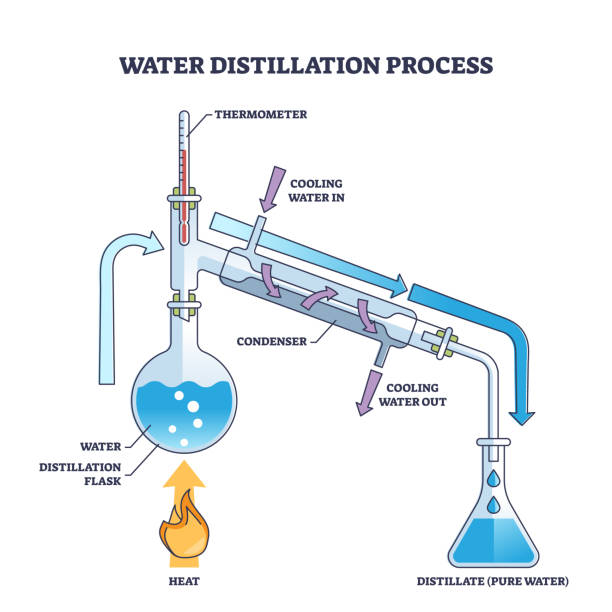

Model distillation is a legitimate machine learning technique where a smaller, less capable model learns from the outputs of a larger, more powerful model. Think of it like an apprentice learning from a master craftsperson. The apprentice observes the master's work, studies the patterns, and gradually develops their own skills by replicating what they've learned.

In the AI context, distillation typically works like this: you take a large model (the teacher) and a smaller model (the student). You feed the same prompts to both. The larger model produces complex, nuanced responses. The smaller model uses those responses as training data to improve its own outputs. Over time, the student model starts producing results that approximate the teacher model's quality, even though the student has fewer parameters and less computational capacity.
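The teacher-student loop described above can be illustrated with a deliberately tiny numeric toy. This is a sketch under stated assumptions, not how any lab actually distills a language model: the `teacher` function, its coefficients, and the linear `fit_student` helper are all hypothetical stand-ins, and real distillation trains a neural network on text outputs rather than fitting a line.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical stand-in for a large model; the student never sees these coefficients."""
    return 3.0 * x + 2.0


def fit_student(prompts: list[float], responses: list[float]) -> tuple[float, float]:
    """Fit a one-feature linear 'student' to the teacher's outputs by least squares."""
    n = len(prompts)
    mean_x = sum(prompts) / n
    mean_y = sum(responses) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(prompts, responses))
    var = sum((x - mean_x) ** 2 for x in prompts)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Step 1: send the same "prompts" to the teacher and record its responses.
rng = random.Random(0)
prompts = [rng.uniform(-5.0, 5.0) for _ in range(200)]
responses = [teacher(x) for x in prompts]

# Step 2: train the student only on (prompt, response) pairs.
slope, intercept = fit_student(prompts, responses)
print(f"student learned: y = {slope:.2f} * x + {intercept:.2f}")
```

The point of the toy is that the student recovers the teacher's behavior purely from input-output pairs, without any access to the teacher's internals.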

Historically, distillation has been a perfectly legitimate and widely used technique in machine learning. Major AI labs use it to create more efficient versions of their models. If you've ever used a lighter, faster version of an AI model that still performs well, you've benefited from distillation. It's how companies make AI accessible on mobile devices and in resource-constrained environments.

The distinction Anthropic is making here is crucial: distillation itself isn't the problem. What Anthropic is calling out is the scale, coordination, and intent behind these specific distillation activities.

When used appropriately, distillation helps democratize AI. When weaponized, it becomes a shortcut for competitors to bypass years of research, safety testing, and computational investment. It's the difference between learning someone's techniques and stealing their trade secrets.

The Technical Mechanics of Distillation Attacks

A distillation attack differs from legitimate distillation in several critical ways. Instead of a controlled, transparent process, an attack involves secretly extracting a model's capabilities at industrial scale without permission or compensation.

Here's how the attack typically unfolds. First, attackers create thousands of accounts to evade rate limiting and detection. Second, they systematically query the target model (Claude, in this case) with carefully designed prompts. These aren't random questions. They're strategically selected to extract specific capabilities: reasoning, coding, creative writing, mathematical problem-solving.

Third, they harvest the responses. Every output from Claude becomes training data for their own models. They run these outputs through their own training pipelines, slowly building models that replicate Claude's performance without having to do the underlying research.

Fourth, they iterate and refine. By analyzing Claude's responses across millions of queries, they create comprehensive datasets that capture Claude's behavior, decision-making patterns, and even its quirks and biases.

The economic incentive is massive. Building Claude required years of research, billions in computational resources, extensive safety testing, and a team of hundreds of researchers. Distillation attacks attempt to replicate that output in months for a fraction of the cost.

Why This Isn't Just About Technology

For Anthropic, this goes beyond technical capability. It's about the competitive dynamics shaping AI development. If competitors can extract capabilities through distillation attacks, it undermines the investment case for building better, safer models from scratch.

Consider the incentive structure: Company A invests billions in research and safety. Company B waits a few months, then extracts Company A's capabilities through distillation. Company B launches their own model at lower cost and with fewer safety investments. Who wins?

This dynamic could create a race to the bottom in safety practices. Why invest in alignment research if you can just distill a safer model? Why spend resources on red-teaming if you can steal someone else's safety findings?

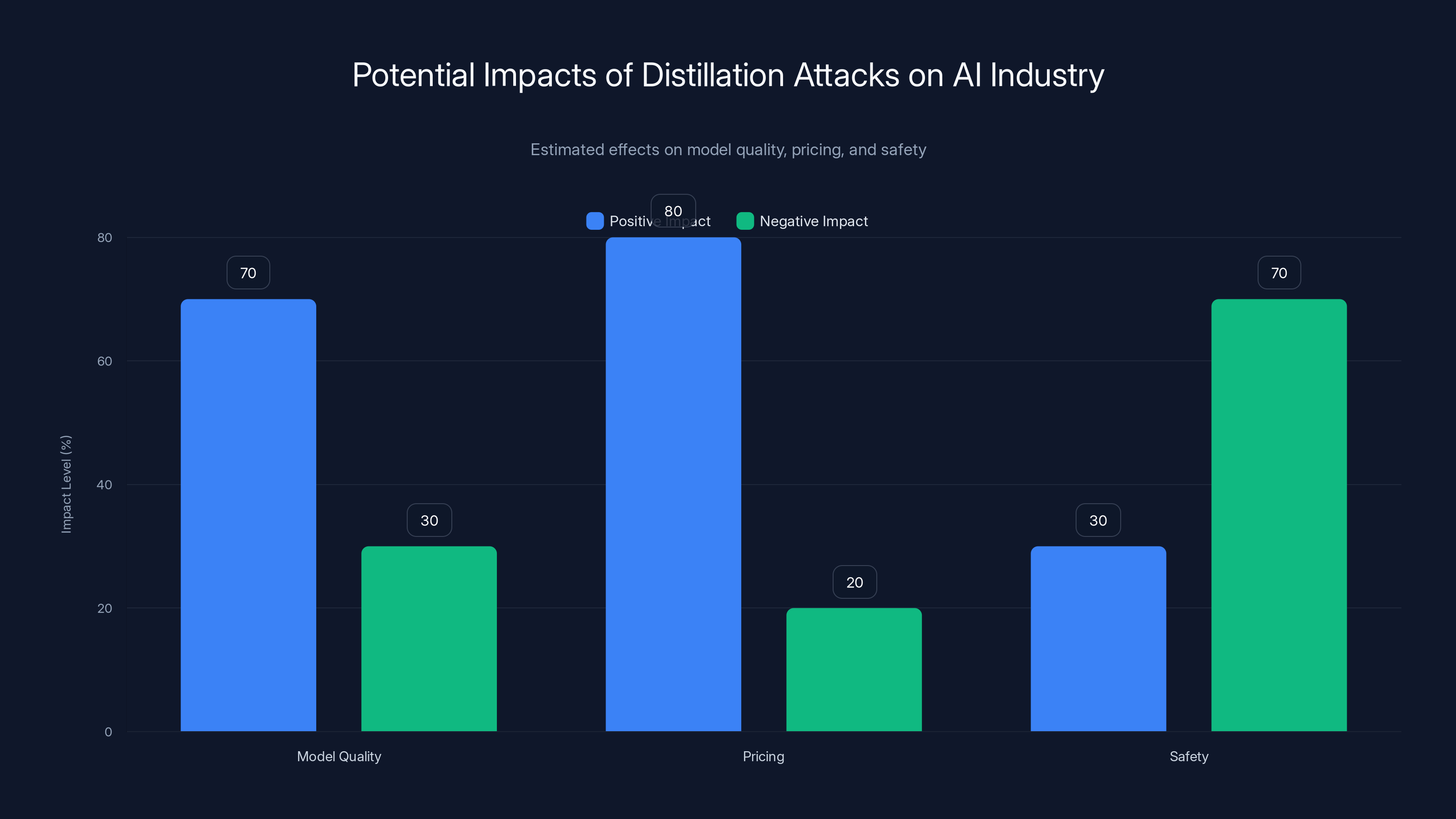

Distillation attacks could enhance model quality and reduce pricing but may compromise safety. Estimated data reflects potential industry impacts.

The Specific Accusations Against DeepSeek, Moonshot, and MiniMax

Anthropic's accusation centers on three companies: DeepSeek, Moonshot AI, and MiniMax. Each has emerged as a significant player in the Chinese AI landscape, competing directly with both Chinese and international models.

DeepSeek has garnered significant attention for its reasoning capabilities and efficiency claims. The company has positioned itself as a challenger to larger models while maintaining competitive performance. Moonshot, known for its Kimi chatbot, has focused on conversational AI and reasoning tasks. MiniMax develops multimodal models and has emphasized cost-effectiveness.

What these three have in common, according to Anthropic, is a systematic pattern of accessing Claude through thousands of fraudulent accounts to extract its capabilities.

The Evidence Anthropic Presented

Anthropic claimed it could link the distillation campaigns to these specific companies with "high confidence." The detection methodology relied on several technical indicators.

First, IP address correlation. When you access Claude from thousands of accounts within a specific infrastructure, patterns emerge. Anthropic identified IP ranges associated with these companies' operations and correlated them with account creation and usage patterns.
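One simple form of IP correlation is grouping account sign-ups by network block and flagging blocks that host unusually many accounts. The sketch below is an assumption about what such a check could look like, not Anthropic's actual method; the function names, the /24 grouping, the threshold, and the sample data (which uses reserved documentation address ranges) are all hypothetical.

```python
from collections import defaultdict
import ipaddress


def accounts_per_subnet(signups, prefix_len=24):
    """Group account IDs by the subnet their sign-up IP falls in."""
    groups = defaultdict(set)
    for account_id, ip in signups:
        # strict=False lets us pass a host address and get its enclosing network.
        network = ipaddress.ip_network(f"{ip}/{prefix_len}", strict=False)
        groups[str(network)].add(account_id)
    return groups


def flag_dense_subnets(signups, min_accounts=3, prefix_len=24):
    """Return subnets hosting at least `min_accounts` distinct accounts."""
    groups = accounts_per_subnet(signups, prefix_len)
    return {
        net: sorted(accounts)
        for net, accounts in groups.items()
        if len(accounts) >= min_accounts
    }


# Hypothetical sign-up log: (account_id, source IP).
signups = [
    ("acct-1", "203.0.113.10"),
    ("acct-2", "203.0.113.57"),
    ("acct-3", "203.0.113.200"),
    ("acct-4", "198.51.100.7"),
    ("acct-5", "192.0.2.44"),
]

flagged = flag_dense_subnets(signups)
print(flagged)
```

A dense subnet is only a lead, since universities, offices, and carrier-grade NAT also put many legitimate users behind one block, which is why the article describes IP correlation as one indicator among several.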

Second, metadata analysis. Requests carry metadata including user agents, API call patterns, and temporal patterns. Legitimate users have natural variation. Coordinated attacks show characteristic patterns: unusual query sequences, systematic repetition of specific prompt types, and clustering of requests.
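The "natural variation" point can be made concrete with request timing. As a minimal sketch, assuming request timestamps in seconds are available per account, one could compare how irregular the gaps between requests are. The helper names and the 0.1 threshold are illustrative assumptions, not a documented detection rule.

```python
from statistics import mean, pstdev


def interval_cv(timestamps):
    """Coefficient of variation (std / mean) of gaps between consecutive requests.

    Returns None when there are too few requests to judge.
    """
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)


def looks_scripted(timestamps, cv_threshold=0.1):
    """Flag accounts whose request spacing is suspiciously regular."""
    cv = interval_cv(timestamps)
    return cv is not None and cv < cv_threshold


# Hypothetical request times in seconds since the first request.
human = [0, 40, 55, 300, 310, 900, 2000]  # bursts and long pauses
bot = [0, 10, 20, 30, 41, 50, 60]         # near-constant spacing

print("human scripted?", looks_scripted(human))
print("bot scripted?", looks_scripted(bot))
```

A real attacker can add random delays to defeat this single signal, so it would be combined with other metadata such as user agents and prompt structure.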

Third, infrastructure indicators. These companies likely used cloud providers or proxies to mask their origin, but sophisticated analysis can still correlate back to known infrastructure. Anthropic likely cross-referenced these patterns with known data about these companies' cloud usage.

Fourth, corroboration. Anthropic mentioned working with "others in the AI industry" who noticed similar behaviors. This suggests OpenAI, Google, and other major labs have seen comparable patterns and shared information.

Together, this evidence paints a picture: coordinated, large-scale extraction of Claude's capabilities by specific Chinese AI companies.

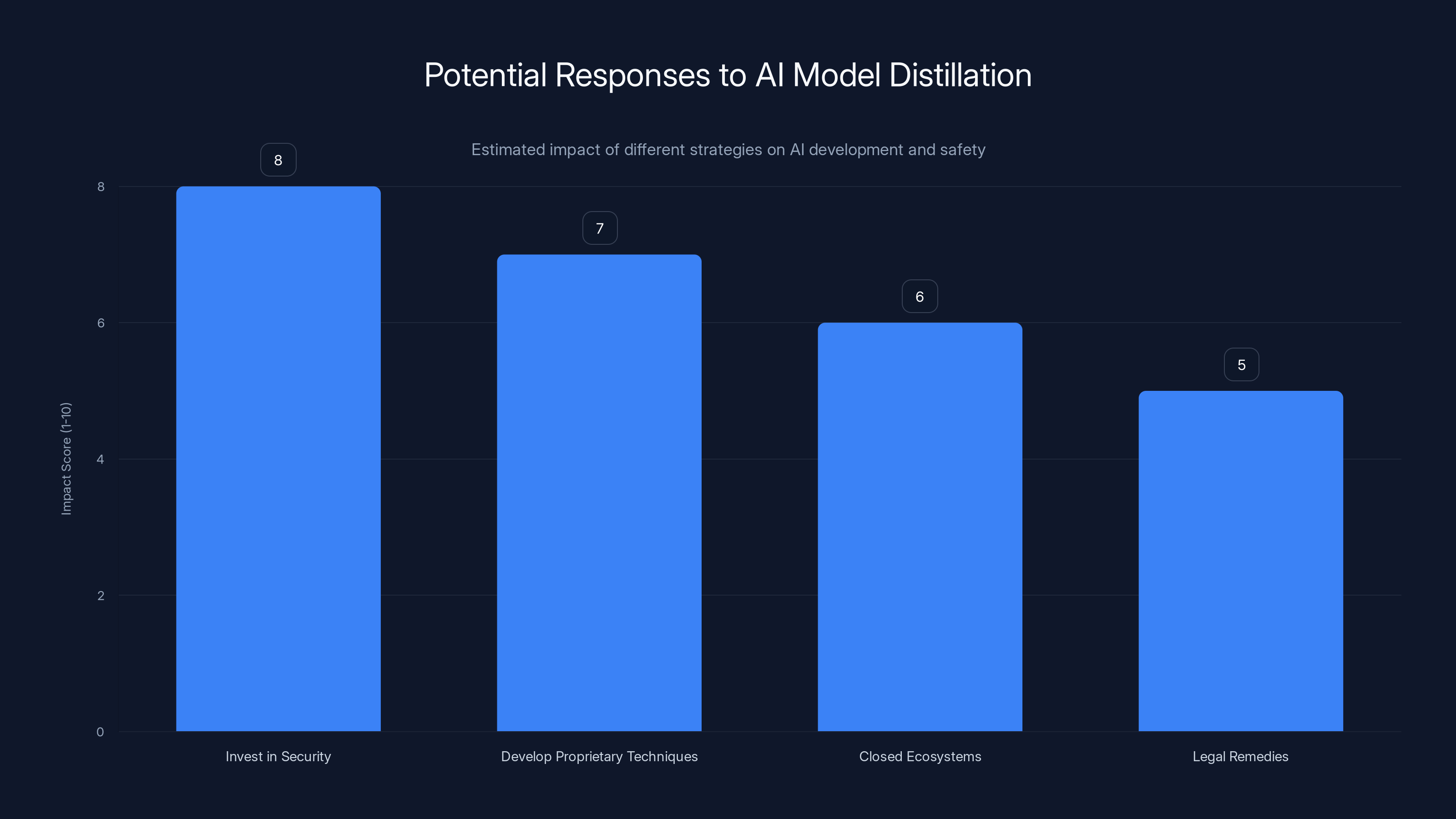

Investing in security and developing proprietary techniques are estimated to have the highest impact on mitigating risks from AI model distillation. Estimated data.

How This Compares to OpenAI's Earlier Accusations

Anthropic's accusations didn't emerge in a vacuum. Just months earlier, OpenAI made similar claims against competing AI companies for distilling its GPT models. This suggests distillation attacks are becoming a standard competitive tactic in AI development.

OpenAI's situation involved similar mechanics: thousands of accounts, millions of queries, systematic extraction of model capabilities. OpenAI responded by banning suspected accounts and upgrading its detection systems.

The parallel accusations from two of the largest AI companies suggest these aren't isolated incidents but a pattern. If multiple companies are experiencing similar attacks, it indicates the practice is normalized enough to happen repeatedly.

The Pattern Across the Industry

Both Anthropic and OpenAI identified distillation attempts from companies seeking to improve their models without doing original research. The tactics are consistent: create accounts, query at scale, extract outputs, incorporate into training data.

What's interesting is that both companies are based in the US and both accused Chinese companies. This has geopolitical implications. Some interpretations view it as US companies protecting market advantage. Others see it as legitimate defense against coordinated IP extraction. The reality is probably more nuanced.

The important pattern isn't the geography but the behavior: when building state-of-the-art AI models becomes expensive and difficult, competitors are incentivized to take shortcuts. Distillation attacks are that shortcut.

The Technical Response: How Anthropic Is Defending Claude

Anthropic said it would "upgrade its system to make distillation attacks harder to do and easier to identify." What does that mean technically?

Enhanced detection systems likely involve machine learning models trained to recognize attack patterns. These would analyze request patterns, account behavior, and query sequences to identify coordinated distillation campaigns before they scale.

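Rate limiting improvements can slow or stop large-scale extraction. Spread across 24,000 accounts, 16 million queries average roughly 667 queries per account. If you limit each account to, say, 50 queries per hour, that workload takes more than 13 hours of continuous querying per account. Finishing faster requires more accounts: completing the same volume within a single hour at that cap would take 320,000 accounts rather than 24,000, increasing detection risk.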
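How many accounts a cap like this forces depends on how quickly the attacker wants to finish, which the reported figures leave open. A rough sketch of the arithmetic follows, using the 16 million queries and 24,000 accounts from Anthropic's claims and treating the 50-per-hour cap and the time windows as illustrative assumptions.

```python
import math

TOTAL_QUERIES = 16_000_000   # reported interaction count
ACCOUNTS = 24_000            # reported fraudulent account count
RATE_LIMIT_PER_HOUR = 50     # illustrative cap, not a real Claude limit


def hours_needed(total_queries, accounts, per_hour):
    """Hours of continuous querying if every account runs at the cap."""
    return total_queries / (accounts * per_hour)


def accounts_needed(total_queries, per_hour, window_hours):
    """Accounts required to finish within `window_hours` at the cap."""
    return math.ceil(total_queries / (per_hour * window_hours))


print(f"average queries per account: {TOTAL_QUERIES / ACCOUNTS:.0f}")
print(f"hours needed with {ACCOUNTS:,} accounts: "
      f"{hours_needed(TOTAL_QUERIES, ACCOUNTS, RATE_LIMIT_PER_HOUR):.1f}")

for window in (1, 24, 24 * 7):
    needed = accounts_needed(TOTAL_QUERIES, RATE_LIMIT_PER_HOUR, window)
    print(f"accounts needed to finish in {window} h: {needed:,}")
```

The takeaway is that an hourly cap mainly buys time: a patient attacker can stay under it, so rate limits work best alongside per-account lifetime caps and behavioral detection.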

Behavioral analysis can flag accounts that show characteristic attack patterns: unusual query sequences, rapid-fire requests, systematic coverage of specific capability areas, lack of natural conversation flow.
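Two of these signals, systematic coverage of capability areas and lack of conversational flow, can be turned into a toy score. This is a hypothetical heuristic for illustration only: the category list, the equal weights, and the function names are assumptions, and a production system would learn such weights from labeled data.

```python
import math
from collections import Counter

# Hypothetical capability areas a query classifier might assign.
CATEGORIES = ("reasoning", "coding", "creative_writing", "math")


def coverage_evenness(categories):
    """Shannon entropy of query categories, scaled to [0, 1].

    1.0 means queries are spread evenly over every known category;
    0.0 means the account only ever asks about one area.
    """
    counts = Counter(categories)
    total = len(categories)
    entropy = -sum((c / total) * math.log2(c / total) for c in counts.values())
    return entropy / math.log2(len(CATEGORIES))


def suspicion_score(categories, turns_per_conversation):
    """Average of coverage evenness and the share of single-turn conversations."""
    single_turn = sum(1 for t in turns_per_conversation if t == 1)
    single_turn_share = single_turn / len(turns_per_conversation)
    return 0.5 * coverage_evenness(categories) + 0.5 * single_turn_share


# A developer who mostly asks coding questions in multi-turn chats.
normal = suspicion_score(["coding"] * 9 + ["math"], [4, 7, 1, 3, 5])

# An account sweeping all areas evenly, one prompt per conversation.
sweeper = suspicion_score(list(CATEGORIES) * 25, [1] * 100)

print(f"normal: {normal:.2f}, sweeper: {sweeper:.2f}")
```

Scores like this are best used to rank accounts for review rather than to ban automatically, since some legitimate evaluation workloads also look systematic.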

Output manipulation is a controversial but effective technique. You could add slight variations or noise to responses, making them less useful for training without affecting human users. This degrades the value of stolen data.

Access restrictions can require additional authentication for API access, making it harder to create fraudulent accounts at scale.

Anthropic's multi-layered approach suggests they're thinking about this systematically, not just blocking known accounts.

Model distillation significantly improves efficiency and accessibility of AI models while retaining performance. Estimated data.

Broader Implications for AI Development and Safety

This incident raises questions that extend far beyond the three companies named. What does systematic IP theft mean for the AI development process? How does it affect competition and innovation?

The Innovation Paradox

Distillation attacks could create perverse incentives. If companies can't protect their models, they might reduce access, making AI less available to researchers and developers. Alternatively, companies might move critical infrastructure offline, slowing collaboration and knowledge sharing.

In academic research, openness drives progress. Researchers share papers, code, and datasets. But in commercial AI, companies guard their models jealously. Distillation attacks could accelerate the move toward closed systems, harming the broader research ecosystem.

Safety and Alignment Concerns

Anthropic and other major labs invest heavily in AI safety and alignment research. Their models undergo extensive red-teaming, safety testing, and alignment work. When competitors distill these models, they inherit the capabilities but potentially not the safety measures.

A model extracted through distillation might be faster or more efficient but potentially less aligned with human values. It could bypass safety considerations that the original model incorporated.

This creates a troubling dynamic: the safest, most aligned models become targets for extraction, while distilling competitors avoid doing their own safety work.

Economic Competition

Why invest billions in research if competitors can steal the results? This question will shape AI development strategy going forward. Companies might need to:

- Invest more in security and distillation detection

- Develop proprietary techniques that are harder to distill

- Move toward closed ecosystems rather than open APIs

- Pursue legal remedies and industry agreements

Each of these responses has tradeoffs. More security costs money. Closed ecosystems slow collaboration. Legal remedies require international cooperation.

The Geopolitical Dimension

Anthropic's accusations focus on Chinese companies, and this deserves careful analysis. It's not just a technical issue but a geopolitical one.

US-China AI Competition Context

The US and China are engaged in what many observers call an "AI race." Both countries want to develop advanced AI capabilities first, both for commercial advantage and strategic significance. From the US perspective, protecting American AI companies' intellectual property is a national interest.

From China's perspective, development paths matter. Chinese companies have excelled at rapid iteration and cost optimization. If Chinese researchers can accelerate model improvement through distillation, it shortens the time to competitive parity.

Neither perspective is illegitimate, but they're in tension. The US wants to protect its innovation lead. China wants to catch up faster.

The Broader Pattern

Accusations of IP theft and unfair competition are common in US-China relations across multiple industries. What makes this noteworthy is that the accusations come from AI companies themselves with technical evidence, not from government agencies.

If these accusations are accurate, they suggest a particular competitive strategy: Chinese companies are using distillation to accelerate their own development. If they're inaccurate, they represent a concerning use of the narrative of foreign IP theft to restrict competition.

The truth likely involves both elements. Distillation attacks are probably happening. The framing and emphasis on nationality may also serve to protect market position.

Both OpenAI and Anthropic have reported multiple distillation attacks, indicating a growing trend in the AI industry. Estimated data suggests this is becoming a common issue.

How Distillation Attacks Affect Users and the Industry

If you use Claude, what do these accusations mean for you? How might they shape the AI landscape you interact with?

Potential Impacts on Model Quality

If distillation attacks succeed at scale, competing models might improve faster than they would through organic development. For users, this could mean better products available sooner. However, if those products bypass safety research, the tradeoff might not be worth it.

Distilled models might perform well on standard benchmarks but fail in edge cases or safety scenarios. You might not notice the difference initially, but over time, potential issues could emerge.

Availability and Pricing

Competition drives prices down. If distillation attacks allow competitors to build capable models more cheaply, AI services might become more affordable faster. That's good for consumers.

But if companies respond by restricting access or moving to closed systems, competition could decrease and prices could rise. The net effect depends on how successful distillation attacks become and how thoroughly companies can defend against them.

The Race to the Bottom Problem

If distillation becomes standard practice, it could create a race to the bottom in safety and alignment. Companies that cut corners on safety work might outcompete those that invest heavily. Over time, this could lead to a market where less safe models dominate simply because they're cheaper to develop.

This is a coordination problem. No individual company wants to reduce safety investment, but if competitors do it and gain advantage, you're forced to follow or lose market share.

What Anthropic's Response Tells Us About AI Security

Anthropic's public announcement of the distillation campaigns and their plans to address them reveals what the company believes about security strategy.

Transparency as a Tool

By going public with accusations, Anthropic is using transparency as a deterrent. They're signaling that they detect distillation attacks, can identify the perpetrators, and will publicize findings. This makes the practice riskier for potential attackers.

It also puts pressure on the accused companies. DeepSeek, Moonshot, and MiniMax now face reputational consequences and potential legal liability. Anthropic is raising the cost of the behavior.

Industry Coordination

Anthropic's mention of corroborating its findings with "others in the AI industry" suggests informal coordination among major labs. Without formal agreements or regulations, companies are sharing threat intelligence and defensive strategies.

This coordination might lead to industry standards for detection and prevention. Over time, you'd expect to see common practices emerge across OpenAI, Anthropic, Google, and others.

The Limits of Technical Defense

No purely technical defense can completely prevent distillation attacks. If a model has an API, someone can query it. The best you can do is make attacks expensive, risky, and detectable.

This suggests the long-term solution involves regulation, international agreements, or legal frameworks that make distillation attacks legally and commercially risky enough to deter them.

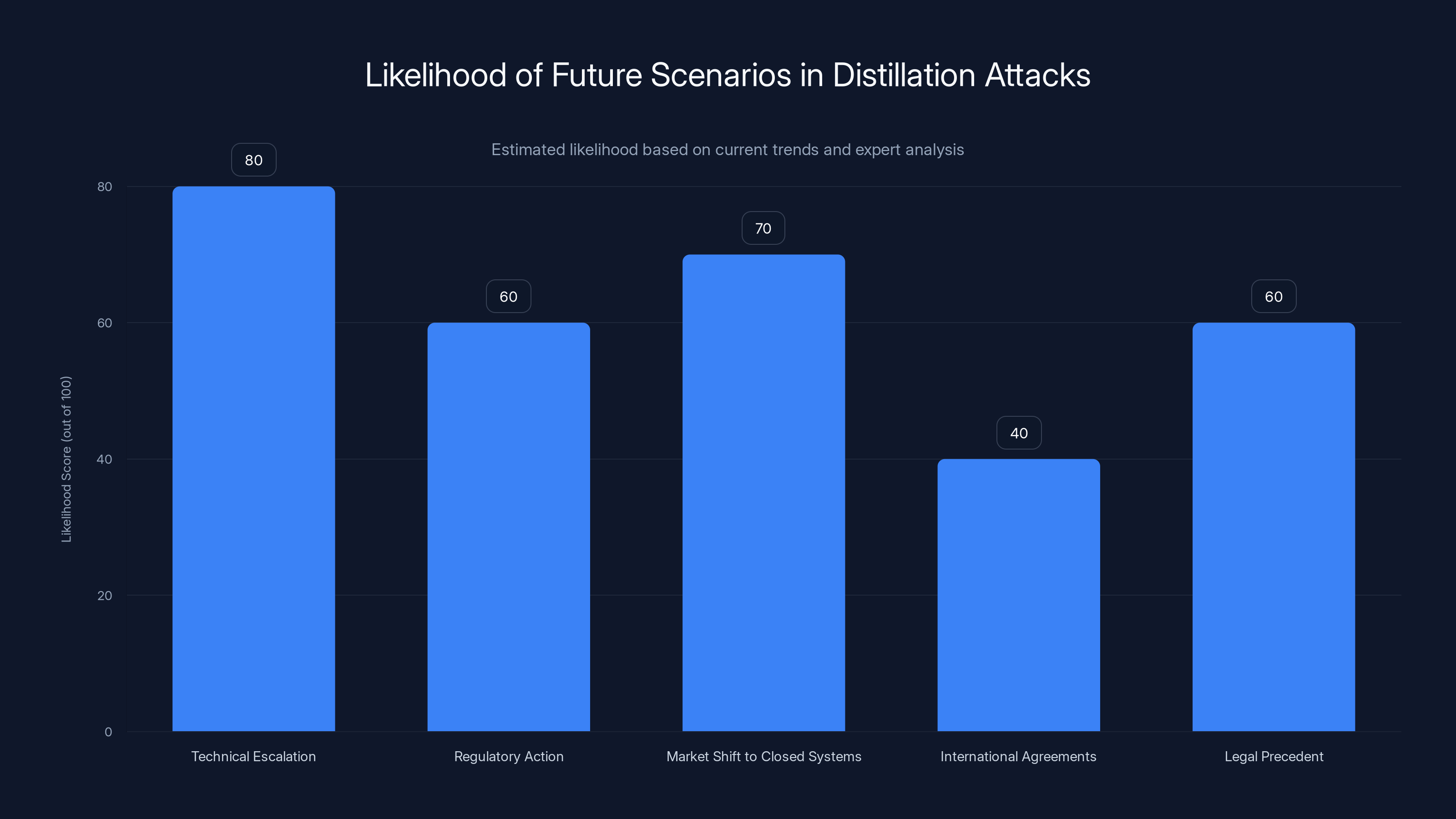

The most likely scenario is a technical escalation, with companies and attackers engaging in an ongoing arms race. Regulatory action and market shifts to closed systems are also probable developments. (Estimated data)

The Music Publisher Lawsuit and Broader IP Questions

Intriguingly, Anthropic itself is facing IP-related accusations. Music publishers sued Anthropic for allegedly using copyrighted songs to train Claude without permission. This creates an ironic parallel: Anthropic complains about having its IP stolen while allegedly stealing music publishers' IP.

This highlights a broader tension in AI development. Training large language models requires massive datasets. Those datasets often include copyrighted material. Getting explicit permission for everything would be practically impossible. But using material without permission raises legal and ethical questions.

Anthropic's position as a defendant in the proceedings brought by music publishers suggests the company may struggle to make a convincing case against distillation attacks if it used copyrighted material itself. Its moral authority to protect IP is weakened by accusations of IP infringement.

That said, there's a distinction between training data sourcing and runtime distillation attacks. One happens during development, the other is ongoing extraction. But both involve using data the model creators didn't initially authorize.

What the Accused Companies Say

At the time of Anthropic's accusations, DeepSeek, Moonshot, and MiniMax had not publicly released detailed responses. However, understanding their likely perspective is important for balanced analysis.

These companies might argue several points. First, they might claim they're using Claude for legitimate research and benchmarking purposes, which wouldn't violate terms of service. Researchers regularly use competitors' models to understand capabilities and benchmark performance.

Second, they might argue that if distillation is occurring, it's incidental to legitimate use rather than orchestrated. The account creation might reflect demand from users in their ecosystems rather than coordinated extraction.

Third, they might argue that distillation, even at scale, represents legitimate competitive development. The fact that they're querying Claude's API doesn't necessarily mean they're doing anything wrong, especially if they're paying for API access like any other user.

Without their detailed responses, we're working with one side of the story. However, Anthropic's specific technical evidence (IP addresses, metadata, infrastructure indicators) makes the accusations substantive enough to take seriously.

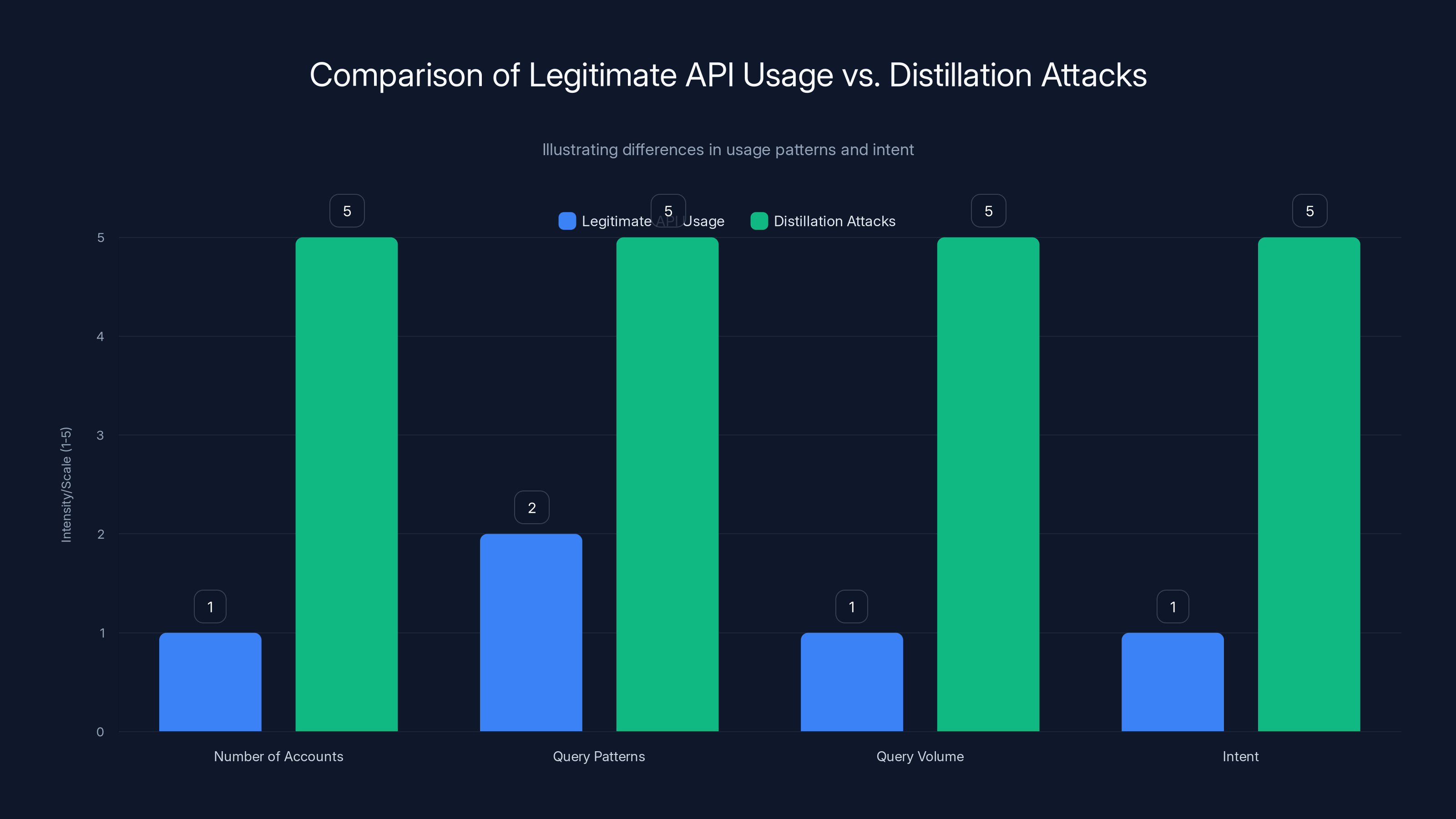

Distillation attacks involve a higher number of accounts, systematic query patterns, large query volumes, and clear intent to replicate model capabilities, unlike legitimate API usage. Estimated data.

How This Might Evolve: Future Scenarios

Several potential paths forward exist for how distillation attacks and industry response might evolve.

Scenario 1: Technical Escalation

Companies invest heavily in distillation detection and prevention. Providers add noise to outputs, make responses less consistent to thwart training, and deploy sophisticated anomaly detection. Attackers counter with more sophisticated techniques. This becomes an ongoing technical arms race.

Likelihood: High. This is already happening.

Scenario 2: Regulatory Action

Governments, particularly the US, impose regulations around model IP protection, API access, and data usage. Regulators might require authentication for API access, restrict bulk querying, or impose penalties for detected distillation campaigns.

Likelihood: Medium. Regulatory frameworks for AI are still forming, but this could be an area where industry consensus demands government action.

Scenario 3: Market Shift to Closed Systems

Companies move away from public APIs toward closed, authenticated access. Models become available only to approved users, making distillation harder but also making AI less accessible for research and development.

Likelihood: Medium to High. The incentives push in this direction, though it would slow innovation.

Scenario 4: International Agreements

US and Chinese governments reach agreements restricting IP theft in AI, similar to semiconductor supply chain agreements. This would require diplomatic coordination and verification mechanisms.

Likelihood: Low to Medium. Possible but would require significant diplomatic effort and trust.

Scenario 5: Legal Precedent

Court cases establish IP protections for trained models, making distillation legally risky. This creates liability for companies engaging in or benefiting from distillation attacks.

Likelihood: Medium. Several lawsuits are ongoing that could establish precedent.

Practical Lessons for AI Companies Going Forward

What should companies take away from Anthropic's accusations and the broader distillation attack phenomenon?

Invest in Detection and Defense

Distillation detection should be a core competency for companies offering AI services. This means sophisticated anomaly detection, behavior analysis, and threat intelligence.

Monitor API Usage Patterns

Behavioral analysis can flag suspicious activity: thousands of accounts with similar patterns, systematic query sequences, lack of natural conversation flow. Early detection prevents large-scale extraction.
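One way to surface "accounts with similar patterns" is to compare the sets of prompt templates each account uses. The sketch below assumes prompts have already been reduced to template IDs by some upstream step; that step, the function names, and the 0.8 threshold are hypothetical.

```python
from itertools import combinations


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared items divided by all items seen in either set."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_pairs(templates_by_account, threshold=0.8):
    """Return (account_a, account_b, score) for pairs at or above the threshold."""
    pairs = []
    for id_a, id_b in combinations(sorted(templates_by_account), 2):
        score = jaccard(templates_by_account[id_a], templates_by_account[id_b])
        if score >= threshold:
            pairs.append((id_a, id_b, score))
    return pairs


# Hypothetical mapping of account -> prompt template IDs it has used.
templates_by_account = {
    "acct-a": {"t1", "t2", "t3", "t4", "t5"},
    "acct-b": {"t1", "t2", "t3", "t4"},
    "acct-c": {"t1", "x1"},
}

pairs = similar_pairs(templates_by_account)
print(pairs)
```

Comparing every pair scales quadratically with the number of accounts, so at the scale described in the article a real system would need an approximate method such as hashing accounts into buckets first.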

Implement Rate Limiting Strategically

Rate limiting slows attacks but also inconveniences legitimate users. The challenge is finding the right balance. Dynamic rate limiting based on account behavior and history could help.
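A minimal sketch of dynamic rate limiting follows, assuming each account carries a trust score between 0 and 1 derived from its behavior and history. The class name, the 20%-to-100% scaling, and the sliding-window design are illustrative choices, not a description of any provider's system.

```python
from collections import defaultdict, deque


class DynamicRateLimiter:
    """Sliding-window limiter whose per-account cap scales with a trust score."""

    def __init__(self, base_limit=50, window_seconds=3600.0):
        self.base_limit = base_limit
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # account_id -> accepted request times

    def limit_for(self, trust: float) -> int:
        """Map trust in [0, 1] to between 20% and 100% of the base limit."""
        trust = min(max(trust, 0.0), 1.0)
        return max(1, round(self.base_limit * (0.2 + 0.8 * trust)))

    def allow(self, account_id: str, now: float, trust: float) -> bool:
        """Record and allow the request if the account is under its current cap."""
        events = self._events[account_id]
        # Drop requests that have aged out of the window.
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.limit_for(trust):
            return False
        events.append(now)
        return True


limiter = DynamicRateLimiter(base_limit=10, window_seconds=60.0)
trusted = [limiter.allow("trusted", now=0.0, trust=1.0) for _ in range(11)]
flagged = [limiter.allow("flagged", now=0.0, trust=0.0) for _ in range(3)]
print("trusted allowed:", trusted.count(True), "flagged allowed:", flagged.count(True))
```

Established accounts keep the full allowance while new or suspicious ones are throttled, which limits the inconvenience to legitimate users that a single global cap would cause.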

Share Threat Intelligence

The industry benefits when companies share information about distillation techniques, detection methods, and patterns they've observed. This accelerates collective defense.

Build in Safety and Alignment Carefully

If your model will likely be distilled, make sure safety properties are baked deep into the training process, not just bolted on. Distilled models inherit capabilities but might not inherit surface-level safety measures.

The Bigger Picture: Competition and Innovation in AI

Beyond the specific accusations, this incident illuminates larger questions about how AI development will unfold.

The traditional technology industry has largely converged on a model where companies protect IP through patents, trade secrets, and copyright. But AI development has some different characteristics that make traditional IP frameworks awkward.

First, AI models are trained on massive datasets. Getting perfect authorization for everything is impractical. Second, AI models produce outputs, not necessarily physical goods or even code. Copyright and patent law don't map cleanly onto this. Third, AI development has been somewhat collaborative, with major labs publishing research and sharing techniques.

Distillation attacks represent the collision between this collaborative research tradition and the emerging commercial competition for AI dominance. Companies want to protect their investment while maintaining the collaborative benefits.

There's no perfect solution. Too much IP protection slows innovation. Too little creates incentives for extraction. The industry is still figuring out where the right balance sits.

What seems likely is that the coming years will see significant investment in security, potential regulatory frameworks, and possibly shifts toward more closed ecosystems. The question is whether these changes enhance or hinder AI development overall.

What This Means for Anthropic and Claude

For Anthropic specifically, the accusations and response matter for several reasons.

First, they're establishing Anthropic as serious about security and IP protection. Companies considering using Claude through APIs need confidence that their usage won't be stolen. Anthropic's public detection and response efforts build that confidence.

Second, they're building relationships with other major labs around shared security challenges. These relationships could lead to industry standards and coordinated defense that benefits Anthropic.

Third, they're signaling to investors and stakeholders that the company understands and can handle the threats to its business model.

However, the music publisher lawsuit creates complications. If Anthropic loses that case or settles for significant damages, it undermines the company's moral authority on IP protection. The image becomes murkier: a company protecting its own IP while allegedly infringing others' IP.

Anthropic will need to navigate this carefully, balancing strong defense of its model IP with acknowledging and addressing concerns about training data sourcing.

Looking Ahead: The Evolution of AI Model Protection

Distillation attacks are likely just the beginning of competition-driven threats to AI model IP. As AI becomes more valuable and competition intensifies, expect to see other attack vectors emerge.

Possible future concerns include adversarial attacks designed to extract information about model training or architecture, attacks that attempt to identify training data by reverse-engineering model behavior, and attacks that exploit model vulnerability to generate harmful outputs more reliably.

Anthropic and other companies will need to build resilience against all of these, not just distillation. This means fundamental research into model security, ongoing investment in defensive capabilities, and potentially new architectural approaches to AI development that are inherently more resistant to extraction and attack.

The companies that excel at this will have significant competitive advantages. They'll be able to offer models with better security properties, more confidence in their IP protection, and potentially more robust safety and alignment properties.

FAQ

What exactly is model distillation?

Model distillation is a technique where a smaller, less capable AI model learns from the outputs of a larger, more powerful model. The smaller model is trained on the larger model's responses, allowing it to replicate the larger model's behavior with fewer computational resources. While distillation itself is legitimate and widely used for efficiency, distillation attacks involve secretly extracting model capabilities at industrial scale without authorization.

How did Anthropic detect the distillation attacks?

Anthropic used multiple detection methods including IP address correlation to identify infrastructure associated with the accused companies, metadata analysis to spot unusual request patterns, infrastructure indicators to trace cloud provider usage, and corroboration with other AI companies who observed similar behaviors. Together, these technical indicators allowed Anthropic to link the attacks to specific companies with what they described as "high confidence."

What makes distillation attacks different from legitimate API usage?

Legitimate API usage involves individual users or companies querying models for their intended purposes, with natural variation in request patterns and reasonable usage rates. Distillation attacks involve thousands of fraudulent accounts, systematic query patterns designed to extract specific capabilities, millions of queries conducted in short timeframes, and clear intent to harvest outputs for training competing models. The scale, coordination, and intent distinguish attacks from normal usage.

Why would distillation attacks be economically attractive?

Building state-of-the-art AI models costs billions of dollars and requires years of research, extensive compute resources, and large specialized teams. Through distillation attacks, competitors can potentially replicate a model's capabilities in months for a fraction of the cost by extracting and replicating its outputs rather than doing original research. This economic incentive explains why companies might take the risk despite potential legal and reputational consequences.

Could distillation attacks harm model safety and alignment?

Yes, this is a significant concern. Original models like Claude undergo extensive safety testing, red-teaming, and alignment work to ensure they behave safely and according to human values. When competitors distill these models, they extract the capabilities but might not inherit the safety measures and alignment work. This could result in models that are capable but potentially less safe or aligned, creating incentives for companies to skip safety work and just distill competitors' models instead.

What are the geopolitical implications of these accusations?

Anthropic's accusations specifically name Chinese companies, which situates this within the broader US-China AI competition context. The US wants to protect its innovation lead, while China wants to accelerate its AI development. These accusations raise questions about fair competition, IP protection, and the pace of technology transfer between countries. The situation reflects broader tensions in US-China relations around intellectual property and technology development strategies.

How might companies defend against distillation attacks?

Defenses include enhanced detection systems to identify attack patterns, improved rate limiting to make large-scale extraction harder, behavioral analysis to flag suspicious accounts, output manipulation to add noise that degrades training value, and access restrictions requiring additional authentication. However, no purely technical solution can completely prevent attacks if a model has a public API. Long-term solutions likely involve regulation, legal frameworks, and international agreements.

What does this mean for the broader AI industry?

These accusations signal that IP protection will be a major competitive battleground in AI development. Companies will need to invest significantly in security and detection. The incident could accelerate moves toward more closed systems, potentially slowing innovation and collaboration. It also raises questions about whether offering models through publicly accessible APIs is sustainable in a highly competitive environment, and it might lead to regulatory action around model IP protection and API access.

Are distillation attacks happening at other companies too?

Yes. OpenAI made similar accusations against competing firms just months before Anthropic's announcement. The parallel accusations from two major AI companies suggest distillation attacks are becoming a standard competitive tactic. Both companies reported similar mechanics: thousands of fraudulent accounts, millions of queries, and systematic extraction of capabilities. This pattern suggests the problem is systemic rather than isolated.

What about the music publisher lawsuit against Anthropic?

Music publishers sued Anthropic, alleging that the company used copyrighted songs to train Claude without authorization. This creates an ironic parallel to Anthropic's accusations about distillation attacks: the company is complaining about having its IP stolen while facing allegations of IP infringement itself. This undermines Anthropic's moral authority on IP protection and raises questions about how companies should source training data ethically and legally.

Conclusion

Anthropic's accusations against DeepSeek, Moonshot, and MiniMax represent more than a single incident of alleged IP theft. They mark a critical moment where the emerging commercial AI industry is grappling with questions about competition, IP protection, and the rules that will govern AI development going forward.

Model distillation attacks exploit the tension between the collaborative research tradition of AI development and the emerging commercial competition for dominance. They represent a rational response to the massive investments required to build state-of-the-art models, offering a shortcut that some companies are apparently willing to take despite legal and reputational risks.

What happens next will shape the AI landscape for years to come. If companies successfully defend against distillation attacks, the competitive advantages of original research remain protected, incentivizing continued investment. If attacks succeed at scale, we might see a shift toward more closed systems and reduced safety investment, with potential negative consequences for the broader AI ecosystem.

The parallel accusations from Anthropic and OpenAI suggest this isn't an isolated problem but a systemic challenge that the entire industry needs to address. Companies are beginning to coordinate on detection and defense, but more comprehensive solutions likely require regulatory frameworks, legal precedent, and possibly international agreements.

For users of AI systems like Claude, these developments matter because they affect model quality, safety, availability, and pricing. For companies building AI systems, they represent an urgent need to invest in security and think strategically about IP protection. For society more broadly, they raise important questions about how technological development should be governed and what rules should apply in an industry that's reshaping how work, creativity, and thinking itself happen.

The distillation attack phenomenon also illuminates a fundamental challenge in AI development: how do you protect innovation while maintaining the collaborative spirit that drives progress? There's no perfect answer, but the coming years will reveal what balance the industry and its regulators ultimately choose.

Key Takeaways

- Anthropic accused DeepSeek, Moonshot, and MiniMax of conducting industrial-scale distillation attacks involving more than 16 million interactions across approximately 24,000 fraudulent accounts to extract Claude's capabilities

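- Model distillation attacks represent a shortcut to developing advanced AI models, because building a state-of-the-art model through original research costs billions of dollars and takes years, while distillation can potentially replicate its capabilities in months for a fraction of that cost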

- Companies can defend against distillation through enhanced detection systems, rate limiting, behavioral analysis, and infrastructure monitoring, though no purely technical solution prevents attacks if APIs are public

- The incident parallels OpenAI's similar accusations just months earlier, suggesting distillation attacks are becoming a standard competitive tactic rather than isolated incidents

- IP protection challenges in AI could shift the industry toward more closed systems, potentially reducing innovation speed and research collaboration, while raising important questions about how technology development should be governed
