Anthropic’s Mythos Breach: Lessons Learned and Future Implications [2025]

In the realm of artificial intelligence, few events have stirred as much debate as Anthropic's Mythos breach. The incident not only exposed vulnerabilities in AI systems but also highlighted pressing issues in cybersecurity practices and AI ethics. Here, we'll dissect the breach, explore its implications, and offer guidance on how companies can safeguard against similar threats.

TL;DR

- Anthropic's Mythos breach: A major AI security incident that exposed sensitive data, as detailed in OpenTools' report.

- Security flaws: Revealed gaps in AI and data protection strategies, according to Bain & Company's analysis.

- Ethical concerns: Sparked debate over AI accountability and transparency, as discussed in BBC News.

- Future measures: Emphasize robust security protocols and ethical AI development, highlighted in Federal News Network's commentary.

- Industry impact: Raised awareness about AI vulnerabilities among tech companies, as reported by Bloomberg.

Understanding the Mythos Breach

The Mythos breach involved unauthorized access to Anthropic's AI models and sensitive data. It served as a wake-up call for the AI industry, underlining the importance of robust security measures in the development and deployment of AI technologies.

What Happened?

Anthropic discovered that unauthorized parties had gained access to its Mythos AI models. These models, designed to push the boundaries of AI capabilities, contained proprietary algorithms and sensitive training data. The potential consequences were severe: misuse of the models and exposure of confidential information, as detailed in Bitcoin World News.

Key Lessons from the Breach

-

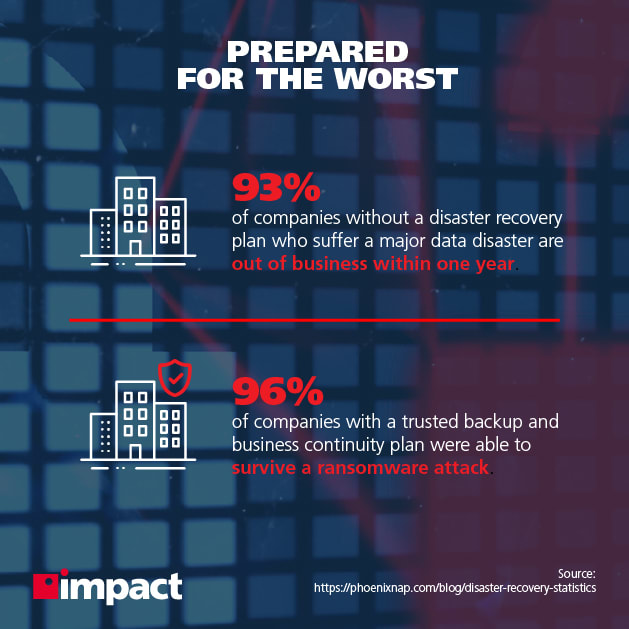

Data Encryption: The importance of encrypting sensitive data cannot be overstated. Companies must ensure that data at rest and in transit is adequately protected, as emphasized by Business.com.

-

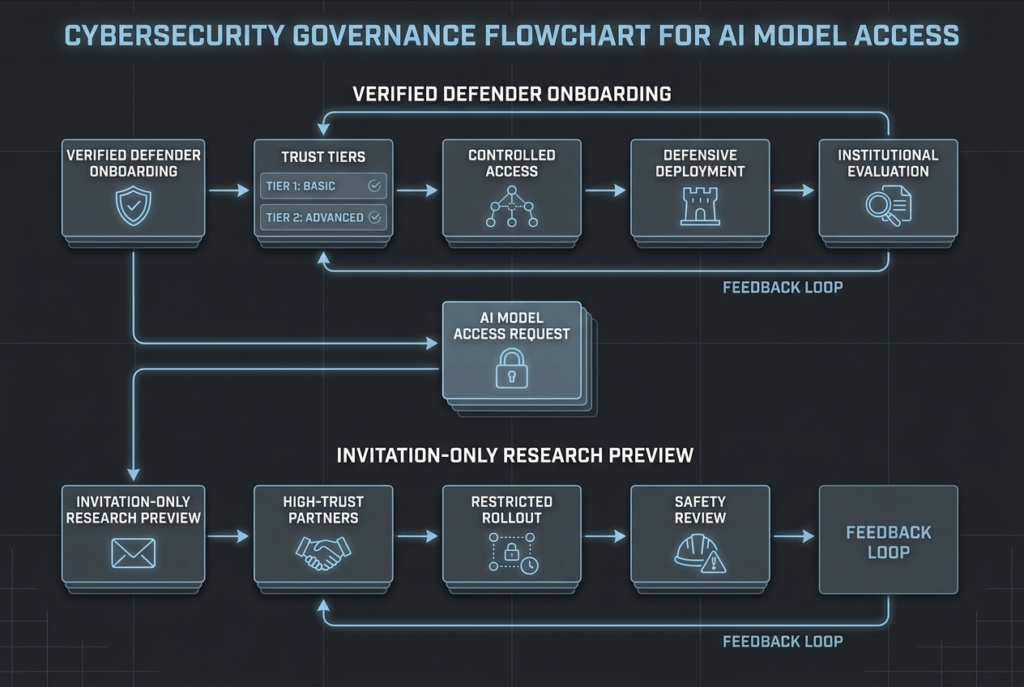

Access Controls: Implementing strict access controls is crucial. Only authorized personnel should have access to sensitive systems and data, a point highlighted in ABB's cybersecurity guidelines.

-

Incident Response: A well-defined incident response plan can mitigate the impact of a breach. Companies should regularly update and test their response strategies, as advised by Worcester Business Journal.

-

Transparency and Accountability: Clear communication with stakeholders is vital when a breach occurs. Transparency builds trust and helps manage the fallout, as noted in Cloudflare's insights.

-

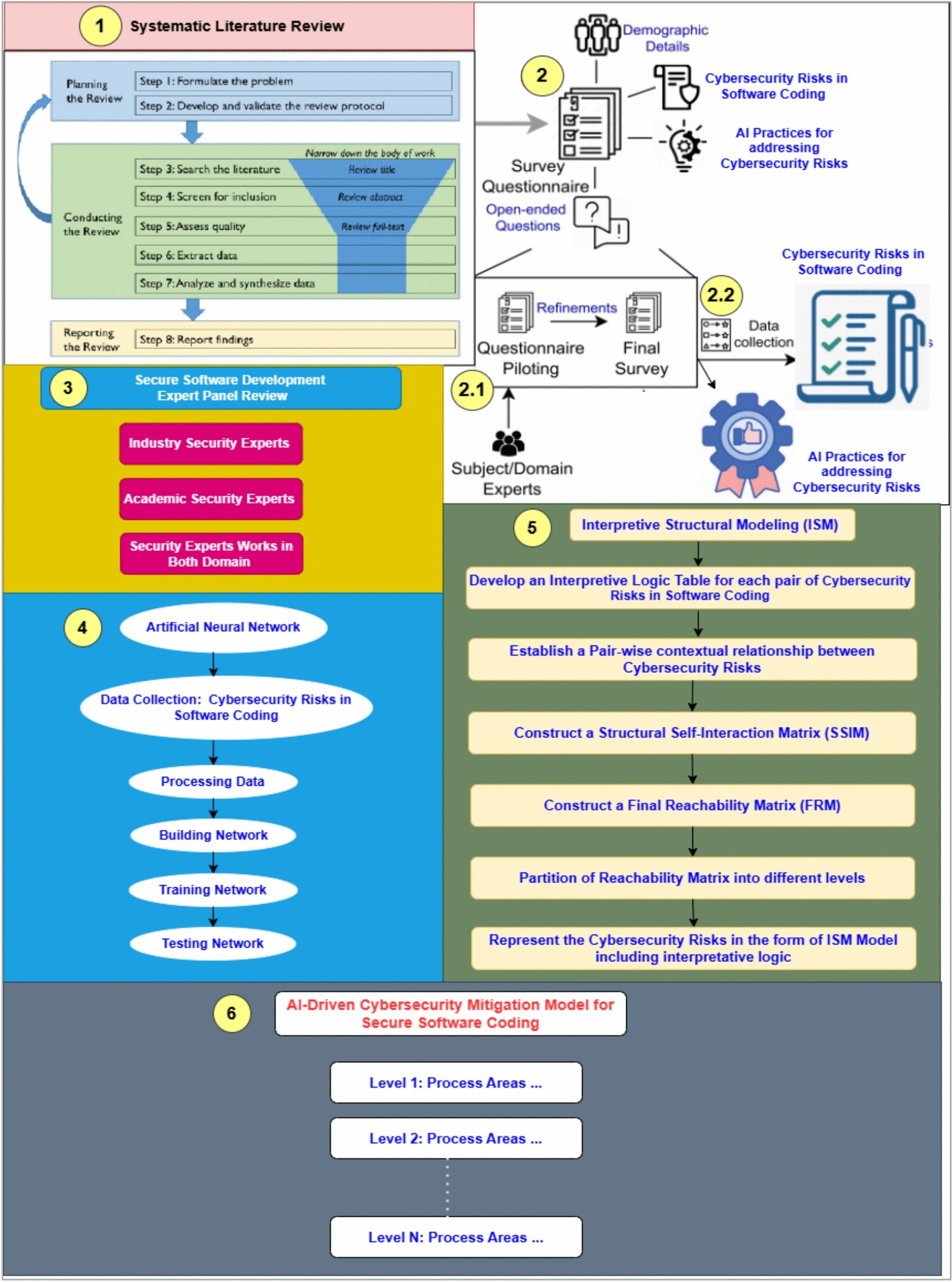

Ethical AI Development: Ethical considerations must be integrated into the AI development lifecycle to prevent misuse and ensure responsible AI deployment, as discussed in Nature.
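To make the access-control lesson above more concrete, here is a minimal sketch of a deny-by-default, role-based permission check that also records every decision for later review. The role names, action strings, and the `AccessController` class are hypothetical and chosen for illustration only; they do not describe how Anthropic or any other company manages access.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-permission map; real roles and actions will differ.
ROLE_PERMISSIONS = {
    "ml_engineer": {"model:read", "model:deploy"},
    "analyst": {"model:read"},
    "auditor": {"audit_log:read"},
}


@dataclass
class AccessController:
    """Deny-by-default access checks with an in-memory audit trail."""

    user_roles: dict
    audit_log: list = field(default_factory=list)

    def is_allowed(self, user: str, action: str) -> bool:
        # Unknown users have no roles, so every request from them is denied.
        roles = self.user_roles.get(user, set())
        allowed = any(action in ROLE_PERMISSIONS.get(role, set()) for role in roles)
        # Record every decision, including denials, so it can be reviewed later.
        self.audit_log.append((user, action, allowed))
        return allowed


controller = AccessController(
    user_roles={"alice": {"ml_engineer"}, "bob": {"analyst"}}
)
print(controller.is_allowed("alice", "model:deploy"))
print(controller.is_allowed("bob", "model:deploy"))
print(controller.is_allowed("mallory", "model:read"))
```

The design choice worth noting is that access is granted only when a role explicitly lists the action. A user or role missing from the map results in a denial, not an error or an implicit grant.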

Implementing Robust Security Practices

To prevent similar incidents, organizations must adopt comprehensive security practices that address both technological and human factors.

Comprehensive Security Framework

A robust security framework should include:

- Risk Assessment: Conduct regular risk assessments to identify vulnerabilities, as recommended by ASTHO.

- Security Policies: Develop and enforce security policies tailored to the organization's needs, as outlined in KnowBe4's blog.

- Employee Training: Educate employees on security best practices and the importance of vigilance, as advised by Bain & Company.
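One common way to make a risk assessment actionable is to score each risk as likelihood times impact and address the highest scores first. The sketch below assumes a 1 to 5 scale for both factors, which is a widespread convention and not something prescribed by the sources cited here. The `Risk` class, the `prioritize` helper, and the register entries are illustrative, not findings from any real assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); the scale is an assumption
    impact: int      # 1 (minor) to 5 (severe); the scale is an assumption

    @property
    def score(self) -> int:
        # Simple multiplicative score, as used in many risk matrices.
        return self.likelihood * self.impact


def prioritize(risks):
    """Return risks sorted from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Illustrative entries only.
register = [
    Risk("Unencrypted model weights in storage", likelihood=2, impact=5),
    Risk("Shared admin credentials", likelihood=4, impact=4),
    Risk("Untested incident response plan", likelihood=3, impact=3),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}")
```

Rerunning this ranking whenever a new risk is added or a control changes keeps the assessment regular instead of a one-off exercise.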

Ethical Considerations in AI Development

The breach also raises ethical questions about AI development and deployment. As AI systems become more complex and autonomous, ensuring ethical practices is paramount.

Key Ethical Principles

- Fairness: AI systems should be designed to avoid bias and discrimination, as emphasized in Nature.

- Transparency: AI decision-making processes should be transparent and explainable, as discussed in BBC News.

- Accountability: Clear accountability structures must be established for AI systems, as noted in Federal News Network.

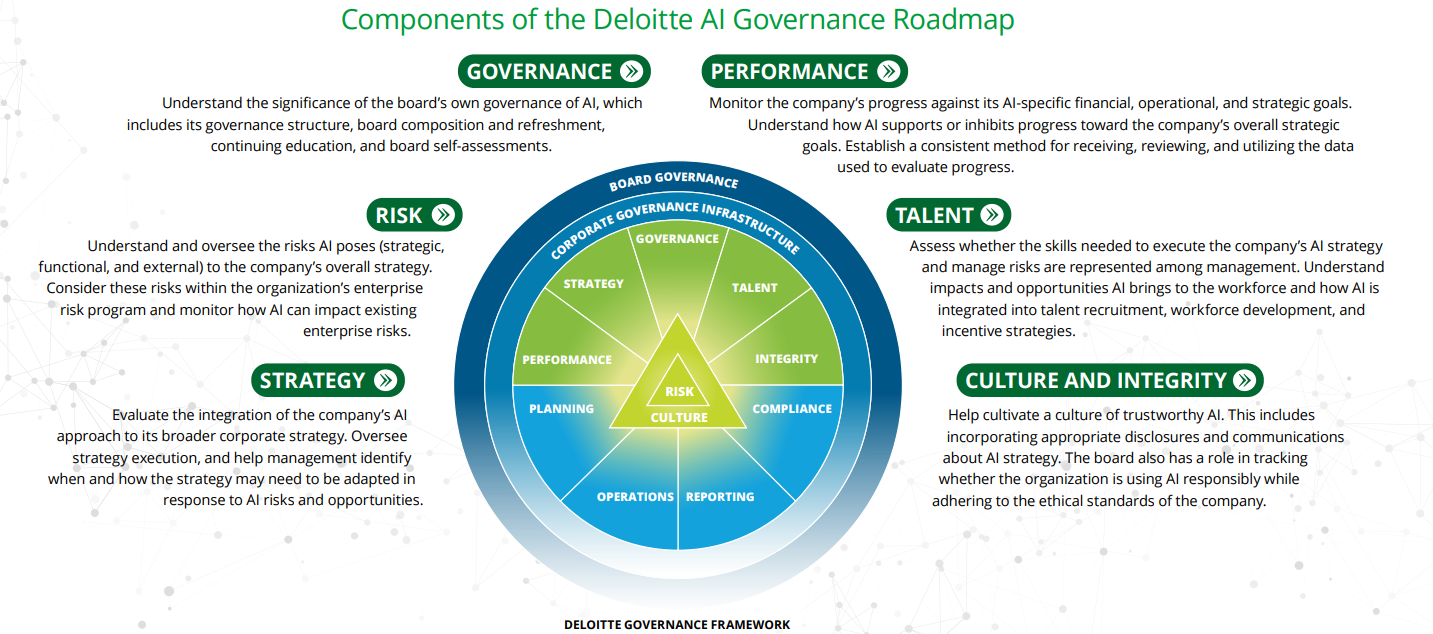

The Role of AI Governance

AI governance frameworks can help guide ethical AI development and ensure compliance with legal and regulatory standards.

Building an AI Governance Framework

- Regulatory Compliance: Stay informed about relevant regulations and ensure AI systems comply, as advised by ASTHO.

- Ethical Guidelines: Develop ethical guidelines tailored to the organization's AI initiatives, as recommended by Bain & Company.

- Stakeholder Engagement: Involve stakeholders in the governance process to build trust and transparency, as highlighted by Cloudflare.

Future Trends and Recommendations

As we look to the future, the importance of robust AI security and ethical practices will only grow. Companies must stay ahead of emerging threats and adapt to changing regulatory landscapes.

Anticipated Trends

- Increased Regulation: Expect more stringent regulations governing AI development and use, as predicted by Federal News Network.

- Focus on Explainability: Greater emphasis on making AI systems' decision-making processes transparent, as discussed in BBC News.

- Collaborative Security Efforts: Industry collaboration will be key to addressing AI security challenges, as noted by Bain & Company.

Common Pitfalls and Solutions

Organizations must be aware of common pitfalls in AI security and take proactive steps to avoid them.

Pitfalls

- Underestimating Threats: Failing to recognize the full scope of potential threats can lead to inadequate security measures, as highlighted by Worcester Business Journal.

- Neglecting Human Factors: Overlooking the human element in security can result in vulnerabilities, as discussed in KnowBe4's blog.

Solutions

- Regular Audits: Conduct regular security audits to identify and address weaknesses, as recommended by ASTHO.

- Comprehensive Training: Implement ongoing training programs to keep employees informed about security best practices, as advised by Bain & Company.
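As one example of what a regular audit might check, the sketch below flags access grants that have not been used within a chosen window, so they can be reviewed or revoked. The 90-day threshold, the record layout, and the `find_stale_grants` helper are assumptions made for illustration. A real audit would read this data from an identity provider or access logs.

```python
from datetime import datetime, timedelta, timezone


def find_stale_grants(grants, now, max_idle_days=90):
    """Return (user, resource) pairs whose last use is older than the cutoff."""
    cutoff = now - timedelta(days=max_idle_days)
    return [(g["user"], g["resource"]) for g in grants if g["last_used"] < cutoff]


# Fixed reference time so the example is reproducible.
now = datetime(2025, 6, 1, tzinfo=timezone.utc)

# Hypothetical grant records.
grants = [
    {
        "user": "alice",
        "resource": "model-registry",
        "last_used": datetime(2025, 5, 20, tzinfo=timezone.utc),
    },
    {
        "user": "bob",
        "resource": "training-data",
        "last_used": datetime(2024, 11, 2, tzinfo=timezone.utc),
    },
]

print(find_stale_grants(grants, now))
```

Here only the second grant falls outside the 90-day window, so it is the one flagged for review.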

Conclusion

The Mythos breach serves as a stark reminder of the challenges and responsibilities that come with AI development. By implementing robust security practices and ethical guidelines, companies can not only protect their assets but also contribute to the responsible advancement of AI technologies.

FAQ

What is the Mythos breach?

The Mythos breach was a security incident involving unauthorized access to Anthropic's AI models and sensitive data, as reported by OpenTools.

How did the breach occur?

The breach occurred due to vulnerabilities in Anthropic's security measures, allowing unauthorized parties to access their AI systems, as detailed in Bitcoin World News.

What lessons can be learned from the breach?

Key lessons include the importance of data encryption, access controls, incident response, and ethical AI development, as outlined by Bain & Company.

What are the ethical implications of AI development?

Ethical implications include the need for fairness, transparency, and accountability in AI systems, as discussed in BBC News.

How can companies improve AI security?

Companies can improve AI security by implementing robust security frameworks, conducting regular audits, and providing employee training, as advised by ASTHO.

What is the role of AI governance?

AI governance frameworks guide ethical AI development and ensure compliance with legal and regulatory standards, as highlighted by Bain & Company.

What future trends are anticipated in AI security?

Future trends include increased regulation, a focus on explainability, and collaborative security efforts, as predicted by Federal News Network.

How can companies avoid common pitfalls in AI security?

Companies can avoid pitfalls by conducting regular audits, implementing comprehensive training, and recognizing the full scope of potential threats, as recommended by Worcester Business Journal.

Key Takeaways

- Anthropic's Mythos breach exposed significant AI security vulnerabilities, as reported by Bloomberg.

- Data encryption and access controls are critical for protecting sensitive information, as emphasized by Business.com.

- Ethical AI development requires fairness, transparency, and accountability, as discussed in BBC News.

- AI governance frameworks help ensure ethical and compliant AI practices, as highlighted by Bain & Company.

- Future AI security trends include increased regulation and a focus on explainability, as predicted by Federal News Network.

![Anthropic’s Mythos Breach: Lessons Learned and Future Implications [2025]](https://tryrunable.com/blog/anthropic-s-mythos-breach-lessons-learned-and-future-implica/image-1-1776969363152.jpg)