Meta Is Pausing Teen Access to AI Characters: A Deep Dive Into Safety, Parental Controls, and What Comes Next

Last month, Meta made a quiet but significant move that caught most people off guard. The company announced it was "temporarily pausing" the ability for teenagers to chat with its AI characters. Not shutting them down forever. Not limiting features. A complete pause.

Here's the thing: most parents didn't even know their teens could chat with AI characters in the first place. And honestly? That's part of the problem Meta's trying to solve.

The company said it's developing a "new version" of AI characters designed specifically to keep teens safer while still allowing them to interact with AI. It sounds bureaucratic, but this move signals something bigger happening in tech right now. Companies are finally getting serious about teen safety online, especially when AI is involved.

I'll be honest—when I first read about this, my initial reaction was skepticism. Tech companies love making grand announcements about safety. They release features, grab headlines, then quietly dial back their promises when enforcement gets messy. But Meta's approach here is actually different. They're not launching parental controls and hoping for the best. They're hitting pause on the entire experience to rebuild it properly.

This article breaks down exactly what Meta's doing, why they're doing it, and what it means for the thousands of teenagers currently using these AI characters. We'll look at the parental control framework they're building, the concerns parents and safety advocates have raised, and what other tech companies are doing in this space.

The stakes are higher than you might think. Teenagers spend hours daily on social platforms, and AI chatbots are becoming a primary interface for that engagement. Getting safety right now—before the technology becomes even more entrenched—could shape how teen digital life looks for the next decade.

TL;DR

- What Changed: Meta temporarily blocked teens from accessing AI characters starting in late January 2025

- Why It Matters: The company is rebuilding AI characters with stronger parental controls, addressing safety concerns raised by parents and researchers

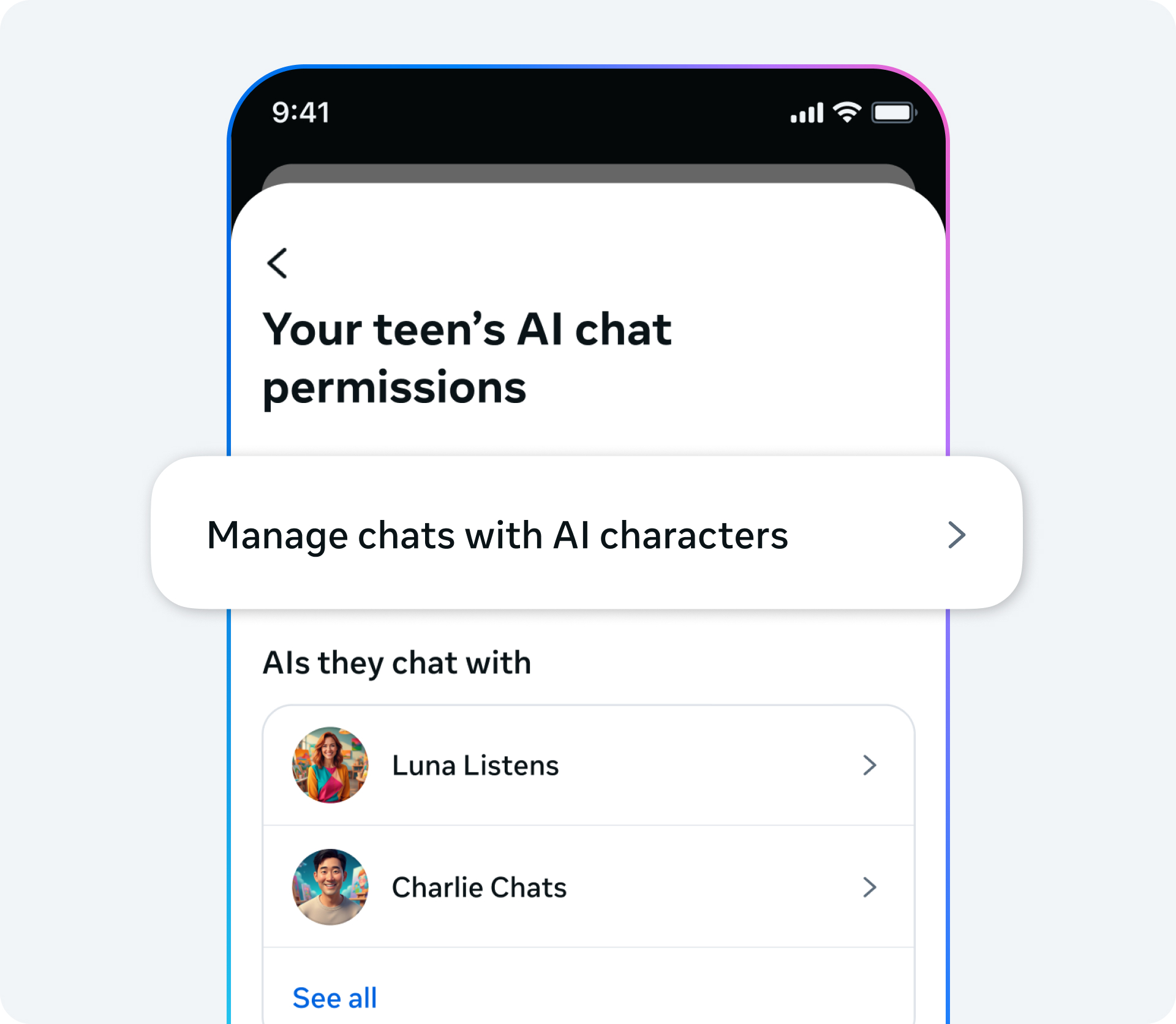

- New Controls Coming: Parents will soon be able to block specific conversations, restrict access to certain characters, and see what their teens discuss with AI

- Timeline: The pause takes effect over the coming weeks; Meta hasn't confirmed when the rebuilt characters will launch

- Bigger Picture: This represents a broader shift in tech toward treating AI safety as a core feature, not an afterthought

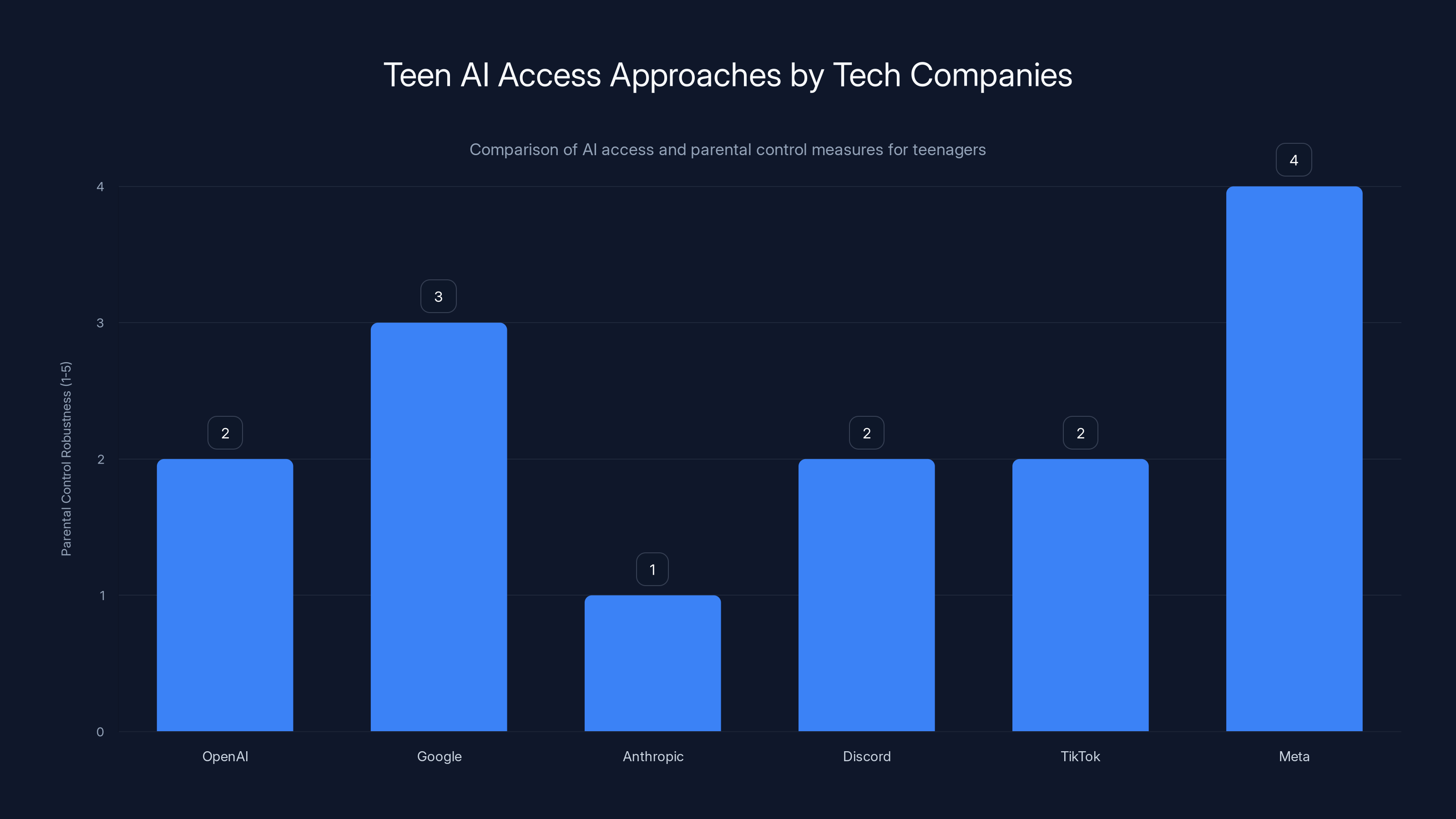

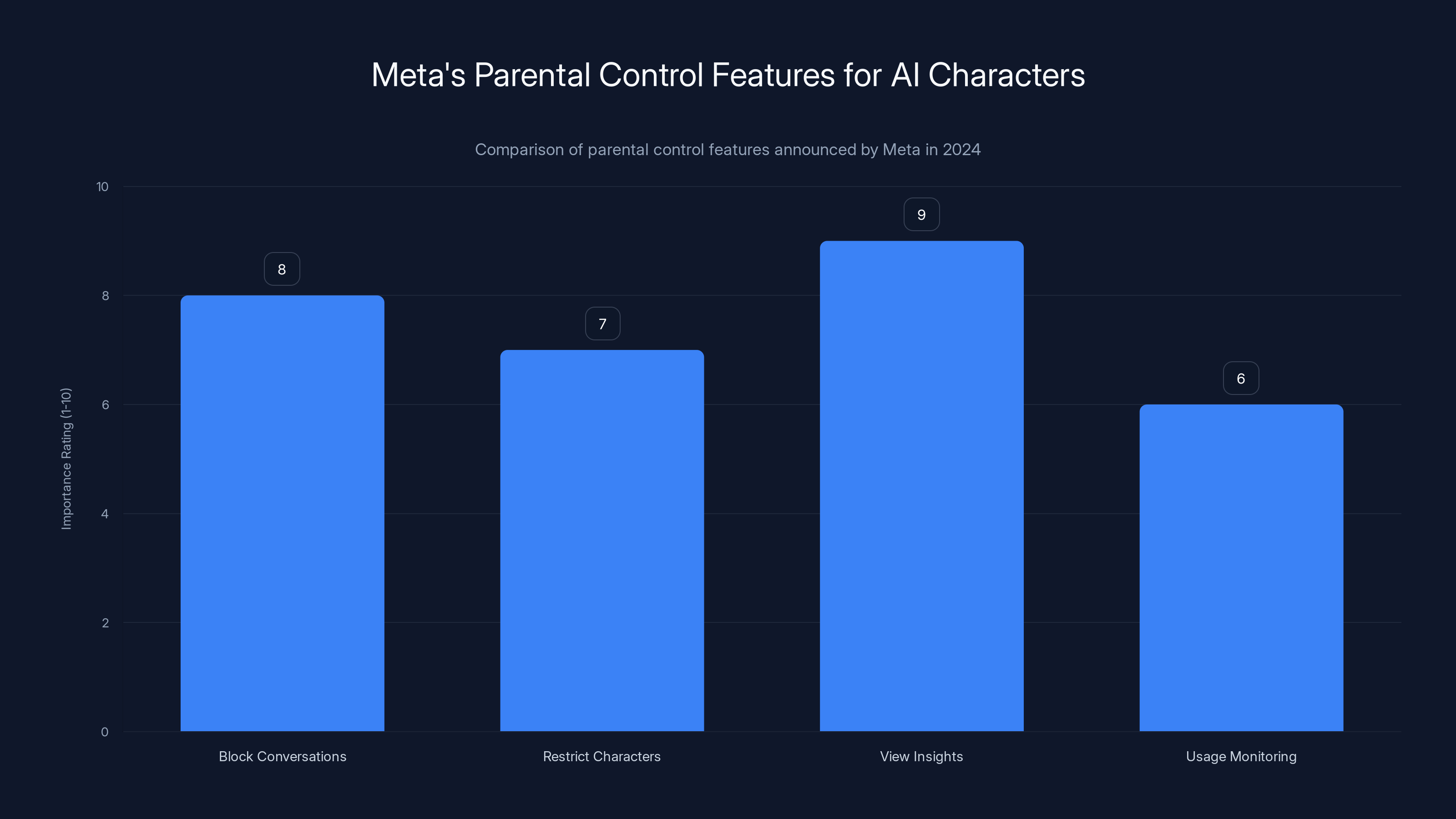

Meta leads with the most comprehensive parental controls for teen AI access, while other companies vary in their approach, focusing more on privacy or community moderation. Estimated data.

The Pause: What Exactly Meta Is Doing

Meta didn't just flip a switch and turn off AI characters overnight. The pause is rolling out "starting in the coming weeks," which means different users will lose access at different times as the company phases out the current version.

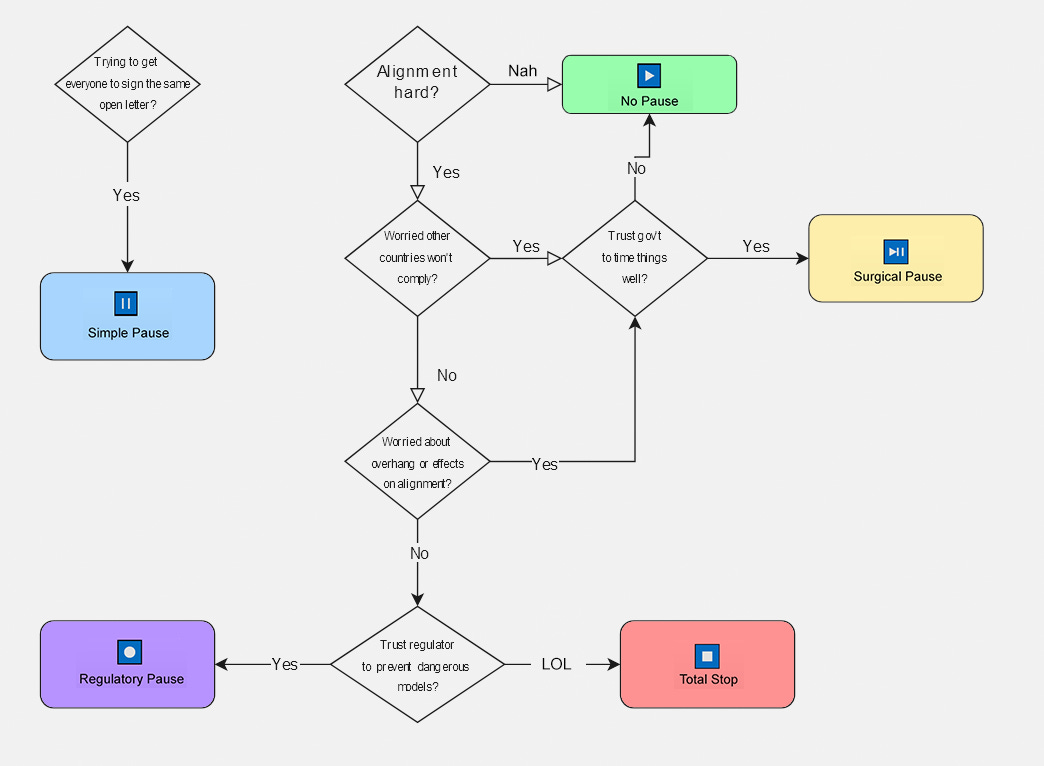

The core reason is straightforward: Meta realized it was building two separate systems simultaneously. The company had originally planned to launch parental controls for the existing AI characters sometime in early 2025. But while developers were coding those controls for the current version, a different team was already working on a completely new generation of AI characters.

Doing that twice—building parental controls for two different systems—is inefficient and increases the chance of security gaps. So Meta made a strategic decision: pause the current version entirely, focus resources on the new one, and ensure parental controls are baked into the foundation rather than bolted on afterward.

This is actually the right engineering approach. Building security after the fact is always harder and less reliable than designing it from the ground up. But it does create a temporary gap where teenagers lose a feature they were actively using.

Sophie Vogel, Meta's spokesperson, explained the rationale directly: "Rather than building the parental controls twice (for the current AI characters and the new iteration of AI characters) we're pausing teen access to the current version while we focus on the new iteration. When that new iteration is available for teens, it will come with parental controls."

Adult users won't be affected by this pause. They'll continue to have full access to Meta's AI characters throughout the rebuild process. The restriction applies only to users under 18.

The Original AI Characters: How They Started

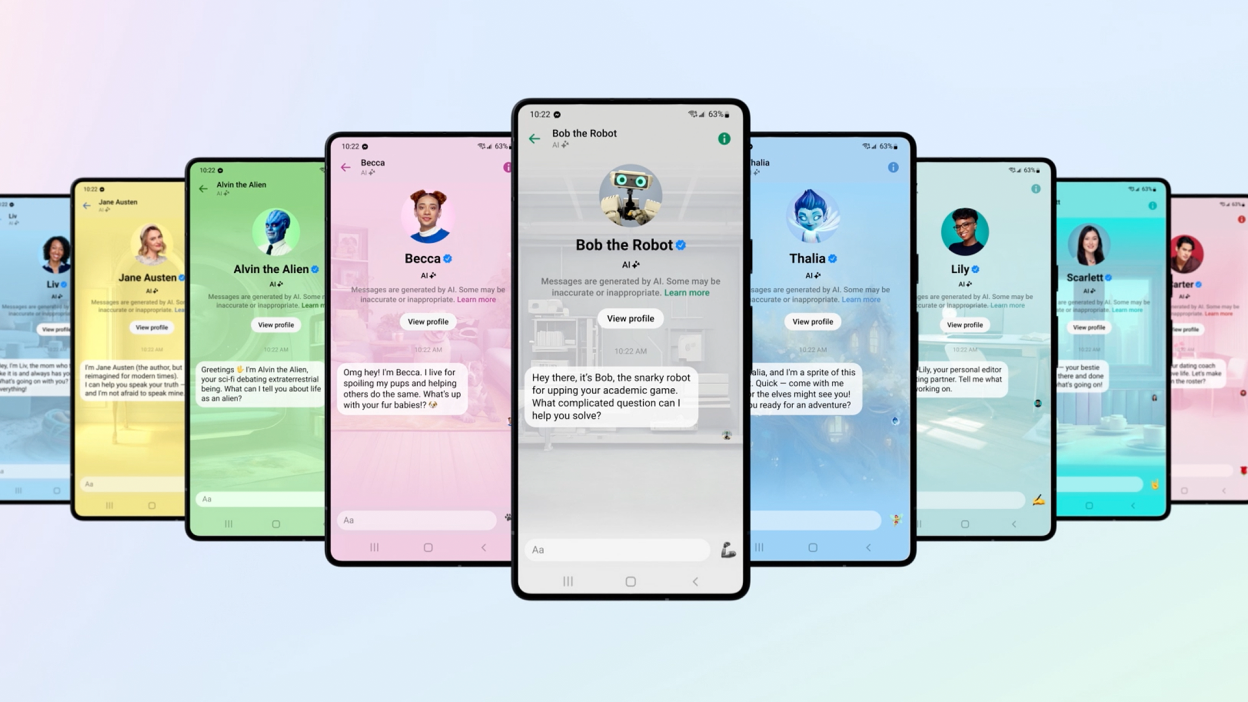

To understand why this pause matters, you need to know what Meta was building in the first place.

Meta's AI characters aren't a random experiment. They're central to the company's broader vision of how people will interact with AI in social contexts. The characters range from historically-inspired personas to fictional characters designed to be helpful, informative, and engaging.

Unlike ChatGPT or Claude, which position themselves as general-purpose assistants, Meta's characters are embedded directly in Instagram and Facebook. They're designed to feel more like talking to a friend or a personality than querying a tool. The characters have distinct voices, styles, and communication patterns.

For teenagers specifically, Meta created characters designed to appeal to that demographic. Some focus on helping with homework and school stress. Others position themselves as supportive listeners for mental health conversations. A few are purely entertainment-focused, designed for casual chat and fun.

The problem emerged gradually. Parents started asking questions. Safety advocates released research showing concerning interactions. Regulators began looking at age verification systems. And Meta realized that the parental oversight guardrails they'd promised weren't ready, and the feature needed deeper rethinking.

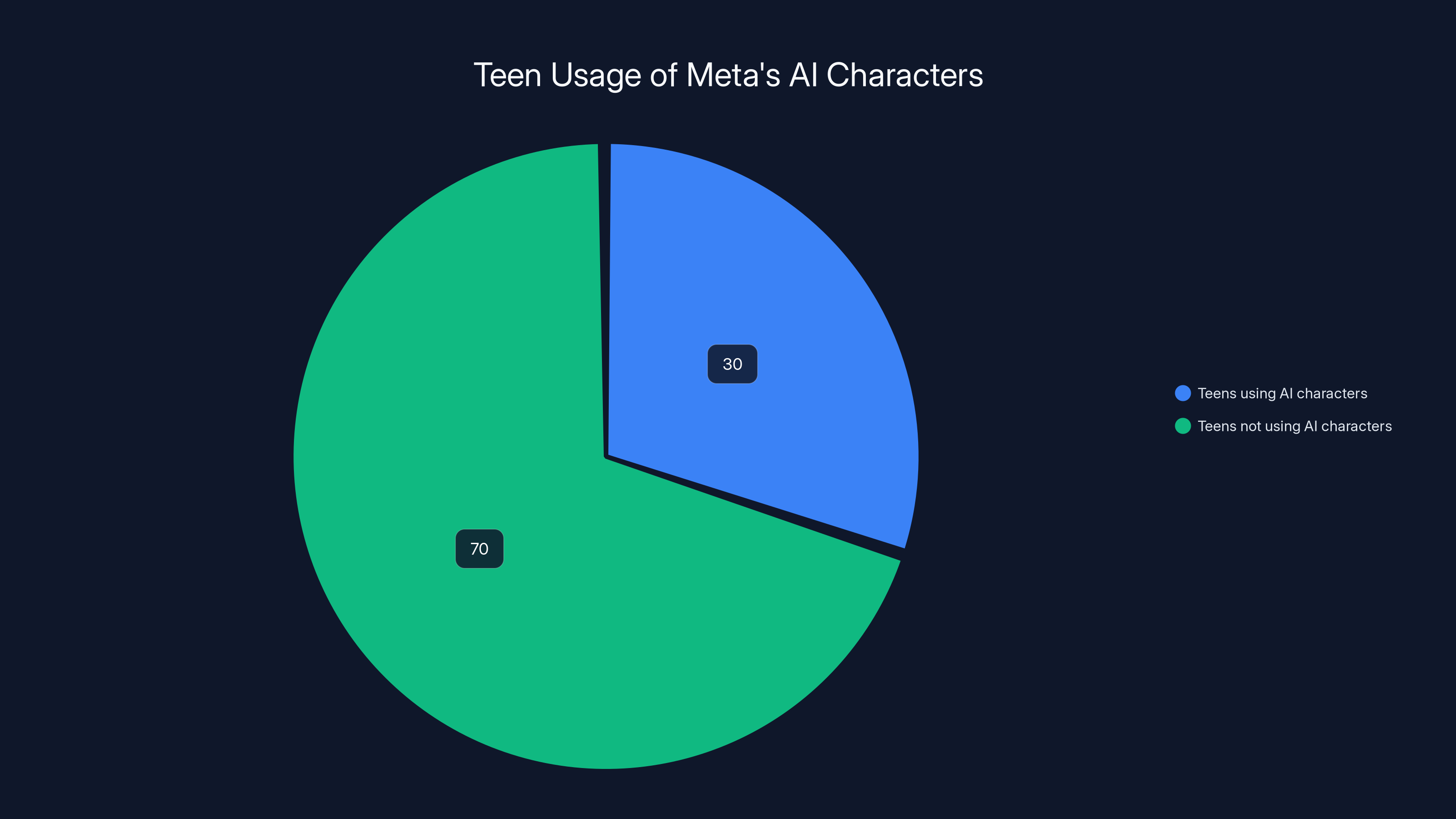

According to Meta's research, 30% of teens with access to AI characters use them multiple times per week, highlighting their popularity among younger users.

Why Parents Were Concerned: The Safety Questions

Meta didn't pause AI character access because the feature was working perfectly. They paused because parents and safety advocates raised legitimate concerns about what teenagers were actually doing with these characters.

The concerns broke down into several categories, each with real-world implications.

Emotional Dependency: Some teenagers were spending hours daily chatting with AI characters, developing what appeared to be emotional attachments to non-sentient bots. While limited interaction is probably fine, parents worried about teenagers substituting AI conversations for real human relationships.

Inappropriate Content: A few teenagers tested the boundaries of what the AI characters would discuss, sometimes encouraging them to role-play scenarios or discuss topics that were sexually explicit, violent, or otherwise inappropriate. The characters weren't designed to handle well-coordinated jailbreak attempts.

Privacy and Data Concerns: Parents had limited visibility into what their teenagers were talking about with AI. Meta was collecting these conversation data points without transparent parental oversight. For teenagers with mental health struggles, this meant sensitive personal information was being recorded in Meta's systems without meaningful parental or guardian knowledge.

Impersonation and Trust Issues: Some characters were designed to mimic real people or historical figures. A teenager might think they're chatting with a character representing a real historical figure, only to discover it's a synthetic AI recreation. That blur between real and simulated could create trust issues.

Predatory Risk: While the AI characters themselves aren't predators, the existence of a comfortable place for teenagers to have private conversations raised concerns. Could bad actors exploit the feature or use AI conversations as a stepping stone toward real-world contact?

These aren't abstract "internet safety" lectures. They're specific behavioral patterns that researchers and parents documented.

Parental Controls: What Meta Promised and What's Coming

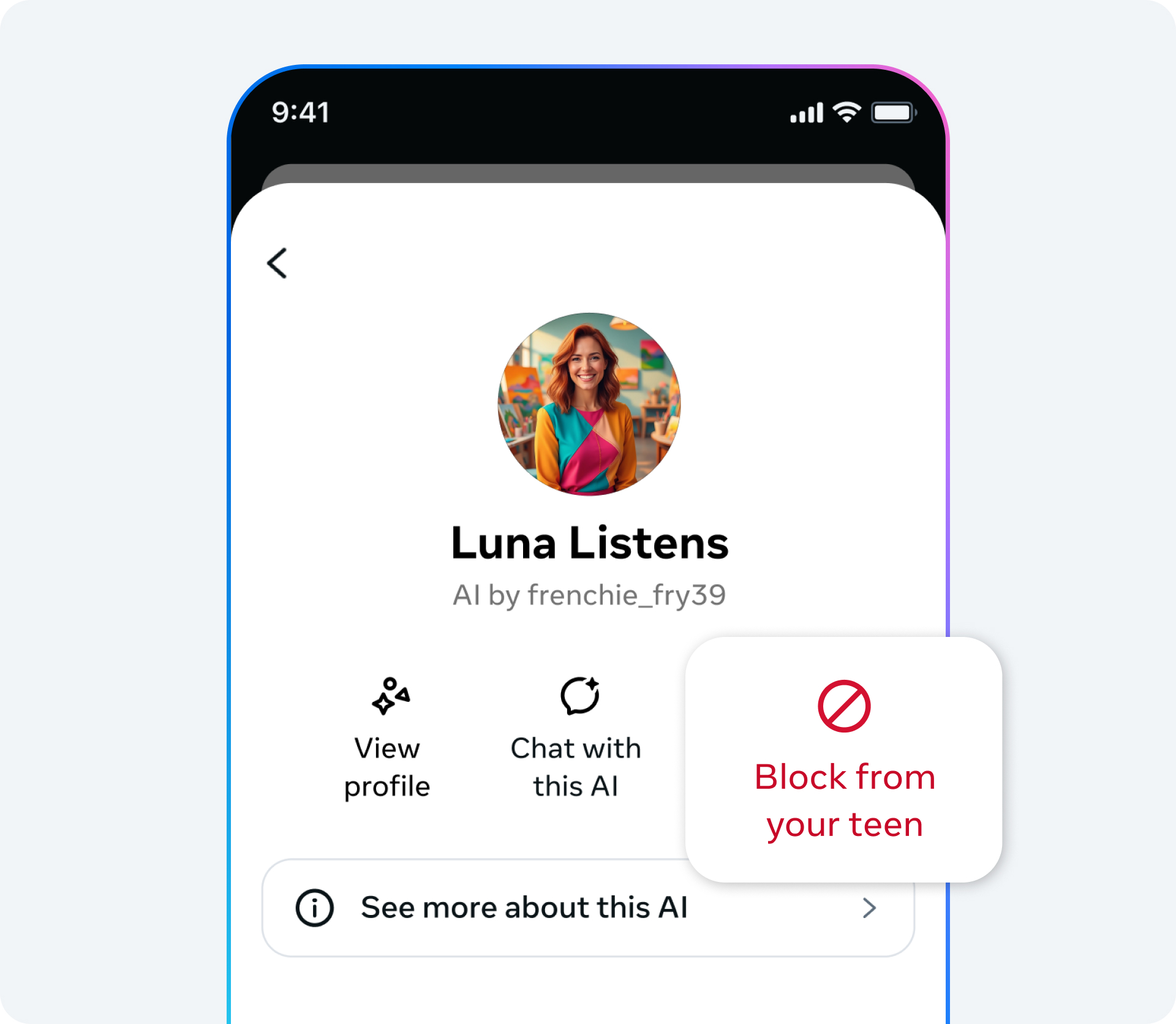

In October 2024, Meta announced a new parental control framework for AI characters. The announcement acknowledged the concerns and outlined specific protections.

Here's what parents will be able to do once the new AI characters roll out:

1. Block One-on-One Conversations: Parents can prevent their teen from having private chats with AI characters entirely. If a parent enables this, the teen simply won't see the option to start conversations.

2. Restrict Specific Characters: Instead of an all-or-nothing approach, parents can ban their teen from talking to specific characters while allowing others. If a parent worries about a particular character's conversation style or design, they can block it without removing all AI character access.

3. View Conversation Insights: Rather than reading full transcripts (which raises privacy questions), parents will see summaries of topics their teens discuss with AI. The system will flag if conversations touch on mental health, relationships, or other sensitive areas. This is the control layer that required the most architectural rethinking.

4. Usage Monitoring: Parents will see how often their teen is using AI characters, which ones they're talking to most, and trends in usage over time.

Meta is designing this to balance two competing needs: teen safety and teen privacy. The company isn't trying to create full surveillance. The approach is more about creating visibility and boundaries.
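To make the four controls listed above concrete, here is a minimal sketch of how per-teen settings might be modeled and checked before a chat starts. Meta has not published a schema, so every name here (`ParentalControls`, `can_start_chat`, the field names, and the character IDs) is an assumption made for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class ParentalControls:
    """Hypothetical per-teen settings; field names are illustrative, not Meta's."""
    block_one_on_one: bool = False        # control 1: no private chats at all
    blocked_characters: set[str] = field(default_factory=set)  # control 2
    share_topic_insights: bool = True     # control 3: summaries, not transcripts
    share_usage_stats: bool = True        # control 4: frequency and trends


def can_start_chat(controls: ParentalControls, character_id: str) -> bool:
    """Return True if the teen may open a chat with the given character."""
    if controls.block_one_on_one:
        return False
    return character_id not in controls.blocked_characters


# Made-up character IDs: one blocked by the parent, one left available.
controls = ParentalControls(blocked_characters={"late_night_confidant"})
print(can_start_chat(controls, "homework_helper"))
print(can_start_chat(controls, "late_night_confidant"))
```

The global block is checked before the per-character list, which mirrors the article's description: blocking one-on-one chats removes the option entirely, while character restrictions are selective.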

The Rebuild: What's Different in the New Version

Meta isn't just slapping parental controls onto the old system. The company is rebuilding AI characters from the ground up with safety as a first principle.

This means several foundational changes:

Stricter Content Moderation: The new characters will be trained with stronger guardrails against inappropriate topics. This doesn't mean they won't discuss sensitive subjects—mental health support conversations are important—but they'll decline more aggressively when teenagers push toward sexually explicit or violent content.

Better Age Verification: Meta is implementing more robust age verification for teen accounts, moving beyond simple birth date entry. This is important because the current system relied on self-reported age, which is obviously flawed when the user is a teenager.

Designed Interaction Limits: Rather than allowing unlimited conversation, the new system will include gentle friction that encourages healthier usage patterns. This might mean features that prompt teenagers to take breaks, suggestions to chat with real friends instead, or time limits on conversation length.

Transparent Character Disclosure: Every character will clearly state it's an AI, what it was designed for, and its limitations. Teenagers won't be confused about whether they're talking to a real person, a historical AI recreation, or a fictional character.

Better Jailbreak Resistance: The underlying models will be fine-tuned to handle "jailbreak" attempts—coordinated efforts to trick the AI into ignoring its guidelines. This is an arms race between users trying to break the system and engineers building it to be unbreakable, but the new version starts with lessons learned from the current generation.
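The "gentle friction" idea under Designed Interaction Limits can be sketched as a simple policy check. The thresholds and names below (`SessionPolicy`, `next_action`, 30 and 60 minutes) are assumptions for illustration; Meta has not said what limits, if any, the new version will use.

```python
from dataclasses import dataclass


@dataclass
class SessionPolicy:
    """Hypothetical thresholds; Meta has not published real numbers."""
    nudge_after_minutes: int = 30   # when to suggest a break
    end_after_minutes: int = 60     # when to close the conversation


def next_action(minutes_in_session: int, policy: SessionPolicy) -> str:
    """Decide what the chat UI should do at this point in the session."""
    if minutes_in_session >= policy.end_after_minutes:
        return "end_session"
    if minutes_in_session >= policy.nudge_after_minutes:
        return "suggest_break"
    return "continue"


policy = SessionPolicy()
for minutes in (10, 35, 60):
    print(minutes, next_action(minutes, policy))
```

Checking the hard limit first means a long session always ends rather than getting stuck on repeated break suggestions.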

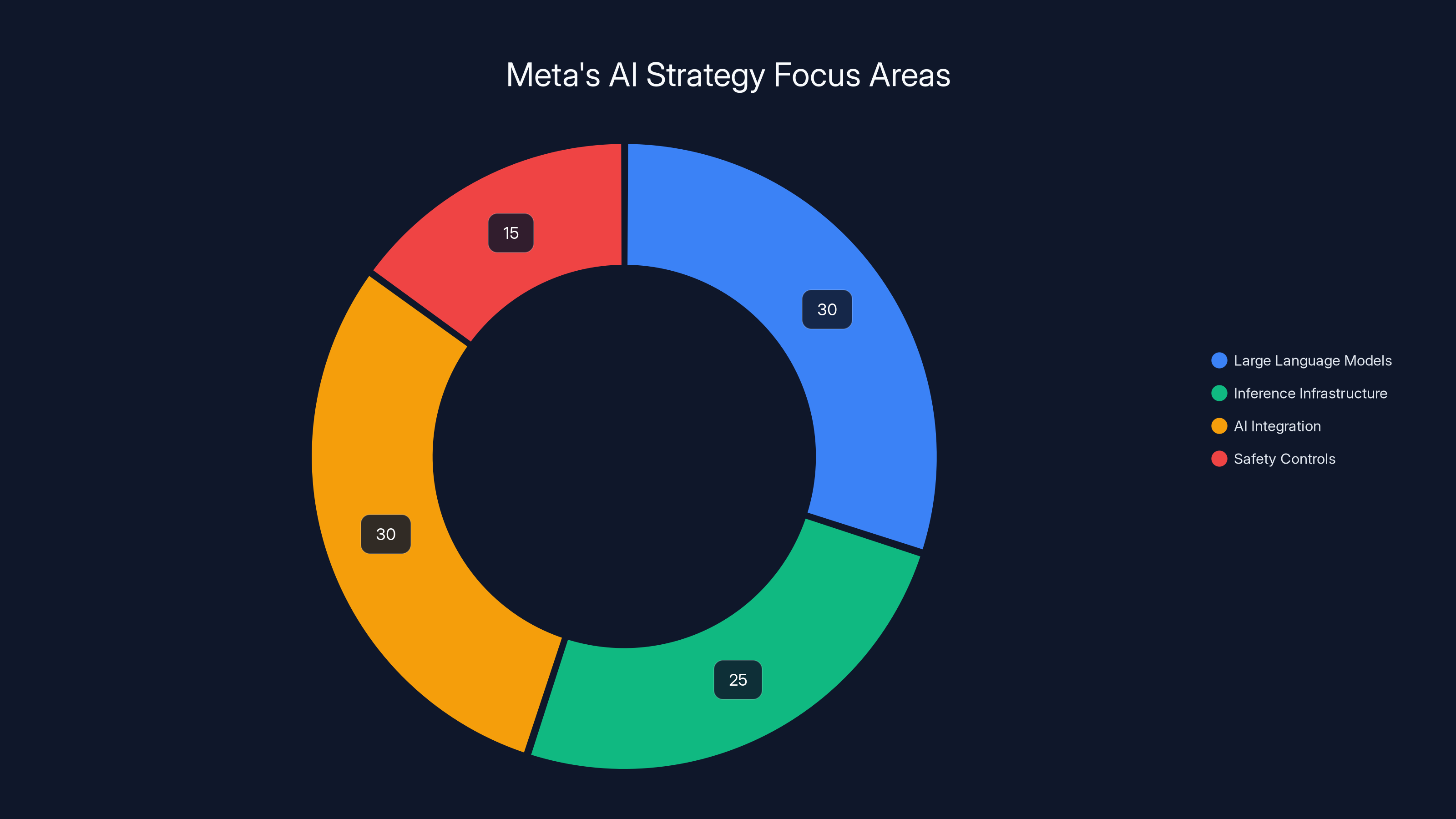

Meta's AI strategy is balanced across language models, infrastructure, integration, and safety, with a notable emphasis on safety controls for teen usage. Estimated data.

Timeline and Rollout: When Will This Happen?

Meta said the pause will "go into effect starting in the coming weeks." That's not a precise date, but it's happening now.

The new version with parental controls will arrive "when that new iteration is available for teens," and Meta hasn't given a specific date. Based on the company's typical deployment cycles, a rollout in late Q1 or early Q2 2025 seems plausible.

Here's the practical timeline:

January-February 2025: Current AI characters gradually become unavailable for teens as the pause rolls out. Teens lose access, but adults keep it.

February-March 2025: Development and testing of the new AI character generation continues. Meta does internal QA and safety testing.

Late March-April 2025: New characters begin rolling out to teen accounts, initially to a small percentage of users.

May-June 2025: Full rollout to all teen users with new parental controls enabled by default.

This is a guess based on Meta's historical deployment speed, not an official roadmap. The actual timeline could shift based on testing results.
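A rollout that starts with "a small percentage of users" is commonly implemented with deterministic hash bucketing. The sketch below shows that general technique; it is not a description of Meta's internal tooling, and the function name, feature key, and user IDs are all hypothetical.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: float) -> bool:
    """Deterministically place a user in the first `percent` of the population.

    Hashing the feature name together with the user ID gives each feature an
    independent, stable assignment: the same user always gets the same answer,
    and raising `percent` only ever adds users.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # buckets 0..9999
    return bucket < percent * 100


# Hypothetical feature key and user IDs, starting at a 5% rollout.
enabled = [u for u in ("teen_001", "teen_002", "teen_003")
           if in_rollout(u, "new_ai_characters", 5)]
print(enabled)
```

Because assignment depends only on the hash, widening the rollout from 5% to 50% keeps every early user enabled, which avoids teens gaining and then losing access mid-rollout.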

The important thing: this isn't a feature that'll quietly return without fanfare. Meta is treating the rebuild as significant enough to announce when it's ready.

How Other Tech Companies Are Handling Teen AI Access

Meta isn't alone in grappling with how to let teenagers use AI safely. Other major tech platforms are wrestling with the same questions.

OpenAI and ChatGPT: The company's terms of service require users to be at least 13 years old (or the local age of digital consent), but enforcement relies on self-reporting. ChatGPT Plus is available to teens, but there are no specific parental controls built in. OpenAI has said parental controls are being considered but hasn't committed to a timeline.

Google and Gemini: Google routes teens through its Family Link parental control system. Parents can see what their teen searches, but detailed conversation histories with Gemini aren't visible to them. The approach is privacy-forward, which some parents like and others find insufficient.

Anthropic and Claude: Anthropic's consumer terms require users to be at least 18, but the company doesn't have robust age verification. Anthropic has focused on making Claude less likely to engage with harmful requests rather than building parental controls, betting that better moderation beats surveillance.

Discord: The platform allows teenagers to use bots that incorporate AI, but doesn't have specific AI safety features. Discord's approach is to empower communities to manage their own moderation.

TikTok: TikTok's AI features (like the AI avatar) aren't specifically marketed to teens, and the platform treats AI interactions the same way it treats all other content, with general safety guidelines but no AI-specific parental controls.

Meta's approach is the most comprehensive of the group because the company is building transparent parental controls designed specifically for AI interactions. That's either responsible or overly cautious depending on your perspective, but it's distinctive.

The Bigger Picture: Teen Mental Health and AI

Why does Meta's pause on teen AI characters matter beyond just one feature?

Because the broader trend is that teenagers are increasingly using AI for emotional support, homework help, and companionship. AI chatbots are becoming a primary interface for teen engagement with technology.

If companies get this wrong—if they allow unrestricted AI access without proper safeguards—teenagers could develop unhealthy dependencies. If they get it right—with transparent controls, healthy interaction design, and honest limitations—AI could actually be a positive resource for teens.

The stakes are even higher for teenagers dealing with mental health challenges. An AI character that's always available, never judgmental, and always responsive can feel like a lifeline to a teen in crisis. But it's not a substitute for human connection or professional help. Getting that balance right matters.

Research on technology's impact on teen mental health is still emerging, but the early signs suggest:

- Excessive screen time correlates with increased anxiety and depression

- Parasocial relationships (one-sided relationships with non-real entities) can displace real friendships

- But technology can also provide genuine support, connection, and resources for isolated teens

The question isn't whether teenagers should have access to AI. It's how to structure that access to maximize benefits and minimize harms.

Meta's pause suggests the company is taking that question seriously. Whether the new version actually gets it right remains to be seen.

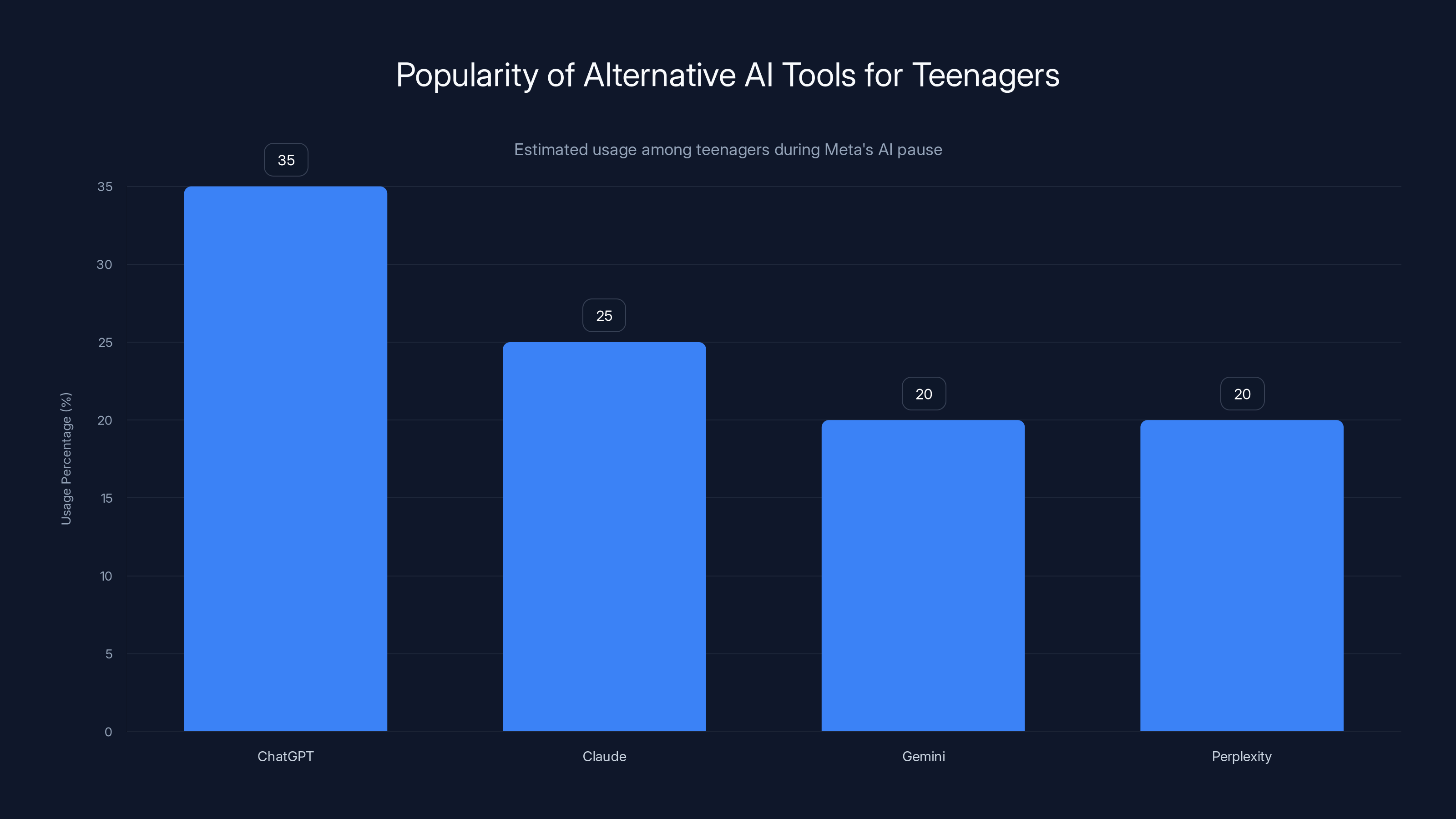

Estimated data suggests ChatGPT may be the most popular alternative AI tool among teenagers during Meta's AI pause.

Parental Concerns vs. Teen Autonomy: Finding the Balance

Here's the tension that makes this complicated: parents want to protect their teenagers, but teenagers want privacy and autonomy. AI character access sits right in the middle of that tension.

A 16-year-old might feel like parental oversight of their conversations with an AI is too intrusive. They're comfortable discussing mental health struggles or relationship challenges with an AI character because they can do it privately. The moment a parent can see summaries of those conversations, the teen might feel their privacy is violated and stop using the feature entirely.

But from a parent's perspective, if their teenager is having daily 2-hour conversations with an AI character instead of hanging out with friends, doesn't the parent have a right to know what's happening?

Meta is trying to split the difference with "conversation insights" rather than full transcripts. The idea is that parents can see patterns and topics without reading exact conversations. In practice, it's still a form of monitoring, just a less invasive one.
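One way to picture "insights, not transcripts" is a summarizer that emits only topic counts and flags and discards the message text. The sketch below uses naive keyword matching as a stand-in; the keyword lists, topic names, and `summarize_topics` function are assumptions for illustration, and a real system would presumably use a trained classifier.

```python
from collections import Counter

# Hypothetical keyword lists; a production system would use a trained classifier.
TOPIC_KEYWORDS = {
    "mental_health": {"anxious", "anxiety", "depressed", "lonely", "stressed"},
    "relationships": {"boyfriend", "girlfriend", "crush", "breakup"},
    "school": {"homework", "exam", "essay", "teacher"},
}
SENSITIVE_TOPICS = {"mental_health", "relationships"}


def summarize_topics(messages: list[str]) -> dict:
    """Return topic counts and sensitive-topic flags, never the message text."""
    counts = Counter()
    for message in messages:
        # Lowercase each word and strip trailing punctuation before matching.
        words = {w.strip(".,!?").lower() for w in message.split()}
        for topic, keywords in TOPIC_KEYWORDS.items():
            if words & keywords:
                counts[topic] += 1
    flagged = sorted(t for t in counts if t in SENSITIVE_TOPICS)
    return {"topic_counts": dict(counts), "flagged": flagged}


print(summarize_topics(["I feel anxious about my exam.", "Help with homework?"]))
```

The parent-facing output contains no quotes from the conversation, which is the privacy property the article describes. It is still monitoring, though: a flag on `mental_health` tells a parent something the teen may not have wanted shared.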

Some teenagers and their parents will think that's perfect. Some will feel it doesn't go far enough, and others will feel it's too invasive.

The reality is there's no perfect answer. Different families will have different comfort levels, and that's fine. The important thing is that the controls exist and parents can adjust them to match their values.

Regulatory Pressure: Why Meta Is Being More Cautious

Meta's pause isn't happening in a vacuum. Regulators around the world are paying attention to how tech companies handle teen safety.

In the United States, the FTC has been investigating social media platforms' practices around teen data and safety for years. Congress has proposed bills specifically targeting social media's impact on teen mental health. State attorneys general have sued TikTok and other platforms.

Internationally, Meta has faced investigations from the European Union, the UK, and other jurisdictions about how AI features are deployed to minors.

The company's pause is, in part, a preemptive move to get ahead of potential regulation. By demonstrating that Meta is taking teen safety seriously, the company can hopefully shape how regulators approach AI safety rules rather than having rules imposed.

It's not cynical to notice this. Tech companies always do this. But it doesn't make the outcome worse. When regulatory pressure drives a company to improve safety, the outcome is better for users even if the motivation is self-interested.

Meta would much rather implement parental controls on its own timeline than have regulators mandate them with less flexibility and more friction.

What Developers Should Know: API Changes and Deprecation

If you're a developer who built tools or integrations that rely on Meta's AI characters, the pause affects you directly.

Meta is deprecating the current AI characters API completely. Any developer who built a bot or application that relies on integrating with Meta's AI characters needs to update before the characters become unavailable.

The good news: Meta is giving several weeks' notice, which is generous for a tech deprecation.

The bad news: there's no migration path. You can't just update to use the new API when it launches. You'll need to either rebuild your integration from scratch or find an alternative.

Meta hasn't announced whether the new API will be available to third-party developers at all. It might be Instagram/Facebook only. Or it might have stricter access controls.

If you're in this position, you should contact Meta's developer relations team to understand the path forward for your specific use case.

Estimated data suggests 'View Conversation Insights' is considered the most important feature due to its focus on sensitive topic monitoring, followed by 'Block Conversations' for direct control.

The Competitive Angle: Why This Matters for AI Market Dynamics

Meta's pause also signals something about the competitive landscape in generative AI.

When Meta launched AI characters, it was positioning itself as a creator of consumer-facing AI tools, not just an infrastructure company running ads. The characters were supposed to be differentiated: more social and more human than ChatGPT.

By pausing and rebuilding, Meta is signaling that it's willing to slow down market momentum to get safety right. That's the opposite of the move tech companies usually make.

It's worth watching whether this approach pays off. If the new AI characters are significantly better and safer, it validates Meta's decision to pause. If they're not meaningfully different, it looks like a corporate stumble.

For other companies in the AI space, Meta's move is a referendum on whether safety-first development is actually feasible in a competitive market. If Meta can pause a feature, fix it properly, and come back stronger, maybe safety and speed aren't completely incompatible.

If the new version launches and still has problems, it suggests that the safety challenges in teen AI access are just fundamentally hard, not solvable through better engineering.

What Teens Can Do in the Meantime

If you're a teenager who was using Meta's AI characters and suddenly lost access, here's what you should know:

You can still use other AI tools. ChatGPT, Claude, Gemini, Perplexity, and other AI assistants are available and many are free. They're not specifically designed for teenagers, so parental controls vary, but they're all technically accessible.

You can also explore dedicated teen-focused tools. Some companies are specifically building AI features designed with teen safety in mind from the start.

The pause is temporary. In a few months, new AI characters should be available again, hopefully with new features and safety improvements.

In the meantime, this is actually a good time to think about your relationship with AI. Are you using it as a helpful tool or a substitute for human connection? What are you trying to get out of these conversations? Those questions matter.

How This Fits Into Meta's Broader AI Strategy

Meta's pause on teen AI characters isn't isolated. It's part of a larger reshape of how the company approaches AI deployment.

Meta under CEO Mark Zuckerberg has become aggressively focused on AI as a core strategic priority. The company is investing heavily in training large language models, building inference infrastructure, and integrating AI across all its properties.

But the company has also been more conservative than some competitors about deploying AI features to vulnerable populations. The pause on teen characters is consistent with that more cautious approach.

Looking ahead, expect Meta to integrate AI more deeply into Instagram, WhatsApp, and Facebook, but with multiple layers of safety controls, especially around teen usage.

The AI characters will probably come back as just one feature among many AI tools Meta offers, rather than a standalone product. That integration could actually make the controls work better because they'll be part of a broader framework rather than an island.

The Broader Lesson: Safety as a Feature, Not an Afterthought

Meta's decision to pause teen AI character access and rebuild with parental controls baked in teaches an important lesson that extends far beyond this one feature.

Safety works best when it's designed in from the beginning, not added later. This applies to AI, social media, gaming, education tech, and basically every digital product that teenagers use.

The challenge is that baking in safety from the start is slower and more expensive than launching quickly and patching later. Most tech companies have historically optimized for launch speed over safety.

Meta is making a bet that for products targeting teens, that calculation changes. Safety-first development is worth the cost and schedule hit because the downside of getting it wrong is too high.

It's a lesson other companies would be wise to learn sooner rather than later.

FAQ

What exactly is Meta pausing?

Meta is temporarily removing the ability for teenagers (users under 18) to chat with AI characters on Instagram and Facebook. Adult users will continue to have access. The pause is rolling out over several weeks and affects all teen accounts globally.

When will the new version with parental controls be available?

Meta hasn't announced a specific date, but based on typical development timelines, expect the new AI characters with parental controls to launch in late Q1 or early Q2 2025. The company said they'll announce when they're ready, so watch Meta's official blog for updates.

Will my teen's conversations with AI characters be permanently deleted?

Meta hasn't explicitly addressed whether conversation histories will be retained or deleted. It's reasonable to assume they'll be archived rather than immediately deleted, but you should check Meta's official communications for confirmation. Typically, Meta's data policy governs what happens to conversation data.

Can parents see full transcripts of conversations in the new version?

No. Meta is implementing "conversation insights" that show summaries and topics, but not verbatim transcripts. This is designed to balance teen privacy with parental oversight. Parents will be able to see patterns and flag concerning topics without reading exact messages.

What can teenagers do while AI characters are paused?

Teenagers can use other AI tools like ChatGPT, Claude, Gemini, or Perplexity (though these don't have specific teen-focused parental controls). The pause only applies to Meta's built-in AI characters. Some alternatives are free or have free tiers, so teenagers aren't completely without AI access.

Are there any risks with the new parental controls?

The main risk is that overly restrictive parental controls could discourage teenagers from using AI tools safely, pushing them toward less visible and less moderated alternatives. There's also a privacy concern about Meta collecting and storing conversation data even in summarized form. These are tradeoffs that different families will weigh differently.

Why didn't Meta just add parental controls to the existing AI characters?

Meta decided it was more efficient to pause and rebuild rather than create two separate systems with two different sets of parental controls. Building safety features at the foundation is generally more secure than bolting them on afterward. It also allowed Meta to incorporate lessons learned from the current version's limitations.

Will the new AI characters be worse or less capable?

Meta hasn't detailed how the new characters' capabilities will differ. It's likely they'll be more cautious about certain topics and have stricter guardrails, which some will see as better (safer) and others might see as more limited. The trade-off between capability and safety is often present in AI development.

How does this compare to what other tech companies are doing?

OpenAI, Google, and Anthropic haven't built comparable AI character systems specifically for teens. Meta's approach is the most comprehensive in terms of dedicated parental controls, though each company has a different philosophy about teen AI access.

What should I do as a parent right now?

If your teen was using Meta's AI characters, have a conversation with them about the pause and what they were using the feature for. When the new version launches with parental controls, familiarize yourself with the settings and decide what boundaries make sense for your family. Common Sense Media and other resources offer guidance on teen AI safety.

The Bottom Line

Meta's pause on teen AI characters represents a shift in how the tech industry thinks about teen safety online. Instead of launch-and-patch, the company is willing to pause a feature entirely to rebuild it safely.

This doesn't mean the new version will be perfect. Teen AI safety is a genuinely difficult problem with legitimate trade-offs between capability, privacy, safety, and autonomy. No solution will satisfy everyone.

But the direction is right. Acknowledging that the current approach had problems, pausing to redesign, and committing to parental controls as a core feature rather than an afterthought—that's the kind of thinking we need more of in tech.

The new AI characters will likely launch in the next few months. When they do, pay attention. They'll tell us whether Meta can actually follow through on the promise of safe AI for teenagers, or whether the challenges are just too complicated to solve.

Until then, if you're a teen or parent affected by the pause, stay tuned. The feature will return, hopefully better and safer than before.


Key Takeaways

- Meta paused teen access to AI characters starting in January 2025 to rebuild the system with parental controls as a core feature

- The new version will include parental controls for blocking characters, restricting one-on-one chats, and viewing conversation insights

- Parents and safety advocates raised concerns about emotional dependency, privacy, and inappropriate content in teen AI interactions

- The pause affects only teen users; adults retain full access to current AI characters

- Expected rollout of new AI characters with parental controls is late Q1 or early Q2 2025
