TikTok Faces Its Biggest Regulatory Challenge Yet: The EU's Addictive Design Investigation

Something strange happens to your Friday night. You open TikTok for ten minutes. Three hours later, you're still scrolling. Your thumbs are sore. Your eyes burn. And you can't remember a single video you watched.

That's not an accident.

In early 2025, the European Commission delivered preliminary findings that could fundamentally reshape how TikTok operates. Its preliminary view is that TikTok's "addictive design" breaches the Digital Services Act (DSA), and it specifically calls out infinite scroll, autoplay, push notifications, and the highly personalized recommendation system as mechanisms designed to keep users compulsively engaged.

This isn't just regulatory talk, and the Commission isn't being vague. It's citing neuroscience. It's pointing to specific design patterns. In effect, it's saying TikTok engineered psychological traps into its app, and that those traps are harmful.

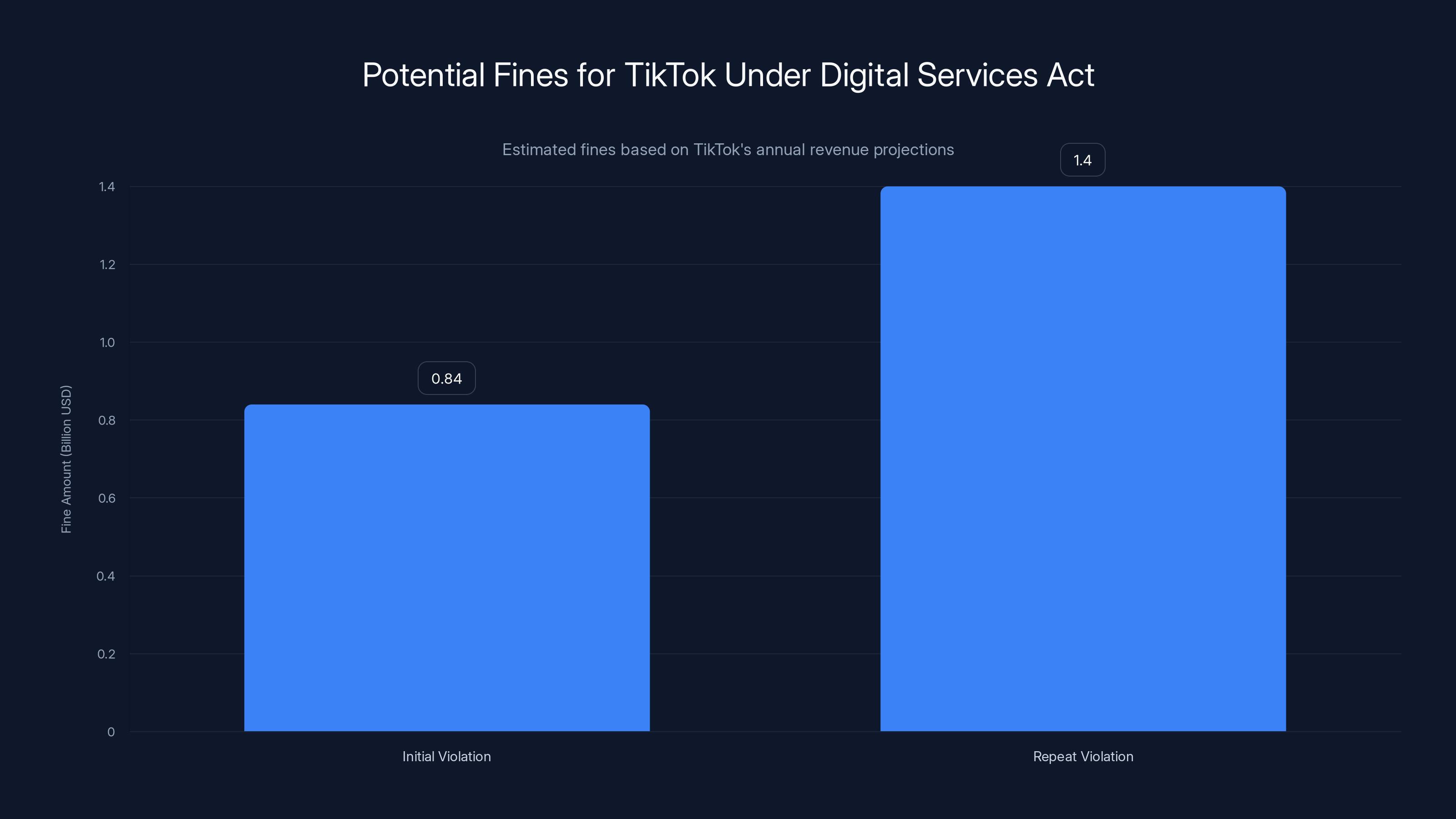

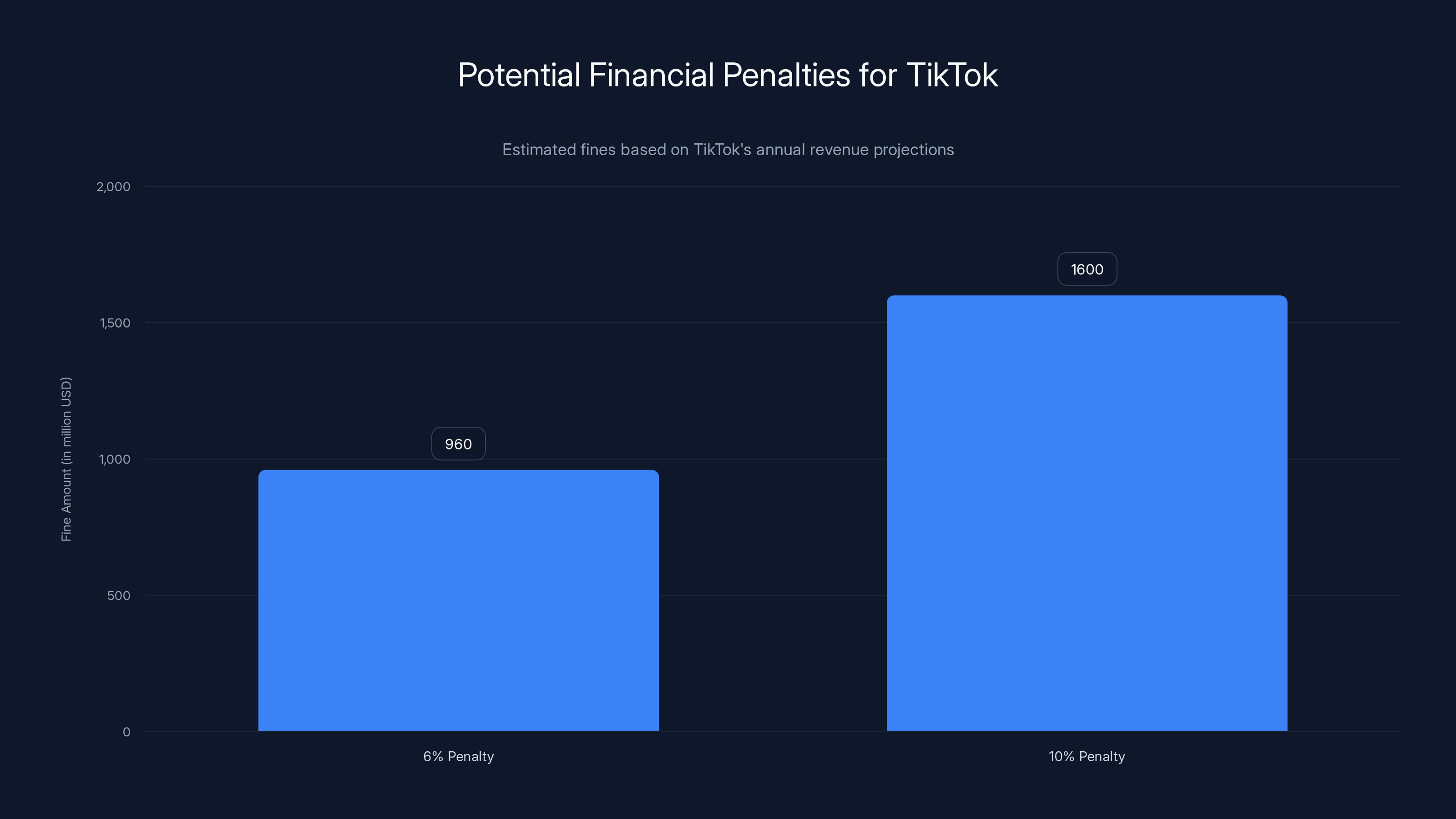

If the investigation concludes as the preliminary findings suggest, TikTok faces fines of up to 6% of its annual worldwide revenue, a sum that could run into billions. But the financial hit might be the least of it. The Commission is suggesting TikTok may need to "change the basic design of its service" to comply. That's not a minor tweak. That's a structural overhaul.

The stakes here extend beyond TikTok. This investigation sets a precedent for how regulators globally view social media design patterns. If the EU successfully forces changes, you can expect similar pressure from regulators in the UK, Australia, Canada, and potentially the United States.

Let's break down what's actually happening, why it matters, and what it might mean for the more than a billion people who use TikTok.

TL;DR

- The Core Issue: The European Commission preliminarily found that TikTok's infinite scroll, autoplay, push notifications, and recommendation algorithm create addictive patterns that harm mental health

- Regulatory Foundation: The alleged violations fall under the Digital Services Act, which requires very large platforms to assess and mitigate systemic risks, including those created by addictive design

- Potential Penalties: TikTok faces fines of up to 6% of annual worldwide revenue if the findings are confirmed

- Required Changes: TikTok may need to fundamentally redesign its core interface and limit infinite scroll functionality

- Broader Impact: This sets a global precedent for how regulators will scrutinize social media design patterns

- Timeline: The investigation began in February 2024; preliminary findings were released in early 2025, and the case is still ongoing


What Exactly Is the European Commission Accusing TikTok Of?

The European Commission's investigation doesn't focus on content moderation or data privacy in the traditional sense. Instead, it homes in on something more fundamental: design patterns that exploit human psychology.

Think about how TikTok's interface actually works. You're not paging through content like you would on a website. You're not clicking "next." Instead, you're scrolling vertically through an endless stream. New videos load automatically. The moment you finish watching one clip, the next one starts playing without you doing anything. Your feed learns what keeps you watching, sometimes showing you increasingly specific content to maximize engagement.

The Commission's preliminary findings specifically highlight four design mechanisms as problematic:

1. Infinite Scroll: The feed never stops. There's no bottom. No "You've reached the end of today's content" message. Just endless vertical scrolling, with new content loading continuously.

2. Autoplay: Videos begin playing automatically the moment they enter your viewport. You don't choose to play them. The app assumes you want to watch and starts the video anyway.

3. Push Notifications: TikTok sends notifications designed to pull you back into the app at strategic moments. "Your friend posted a new video." "Trending videos in your feed." These notifications interrupt your day with the specific goal of re-engagement.

4. Highly Personalized Recommendation Algorithm: TikTok's "For You Page" isn't random. It's engineered using sophisticated machine learning to show you precisely the type of content most likely to keep you watching. The algorithm learns your preferences, your behaviors, your vulnerabilities. It optimizes for watch time.

The Commission's analysis points to neuroscience research showing these patterns trigger compulsive behaviors. When content continuously rewards your scrolling with interesting videos, your brain enters what the Commission calls "autopilot mode." Your deliberate decision-making shuts down. You're no longer choosing to watch—you're compelled to watch.

This isn't theoretical criticism. The Commission cites actual peer-reviewed research on how variable reward schedules (where you don't know what you'll see next, but you know it might be interesting) create addictive patterns. It's the same mechanism behind slot machines. Your brain isn't wired to resist that pattern. Evolution didn't prepare us for algorithms.

TikTok's response has been dismissive. A TikTok spokesperson told the Financial Times that the Commission's findings are "categorically false and entirely meritless." The company argues that users can set screen-time limits and parental controls are available. But the Commission's position is clear: these options aren't enough, and they don't address the root problem.

The Digital Services Act: Europe's Regulatory Framework

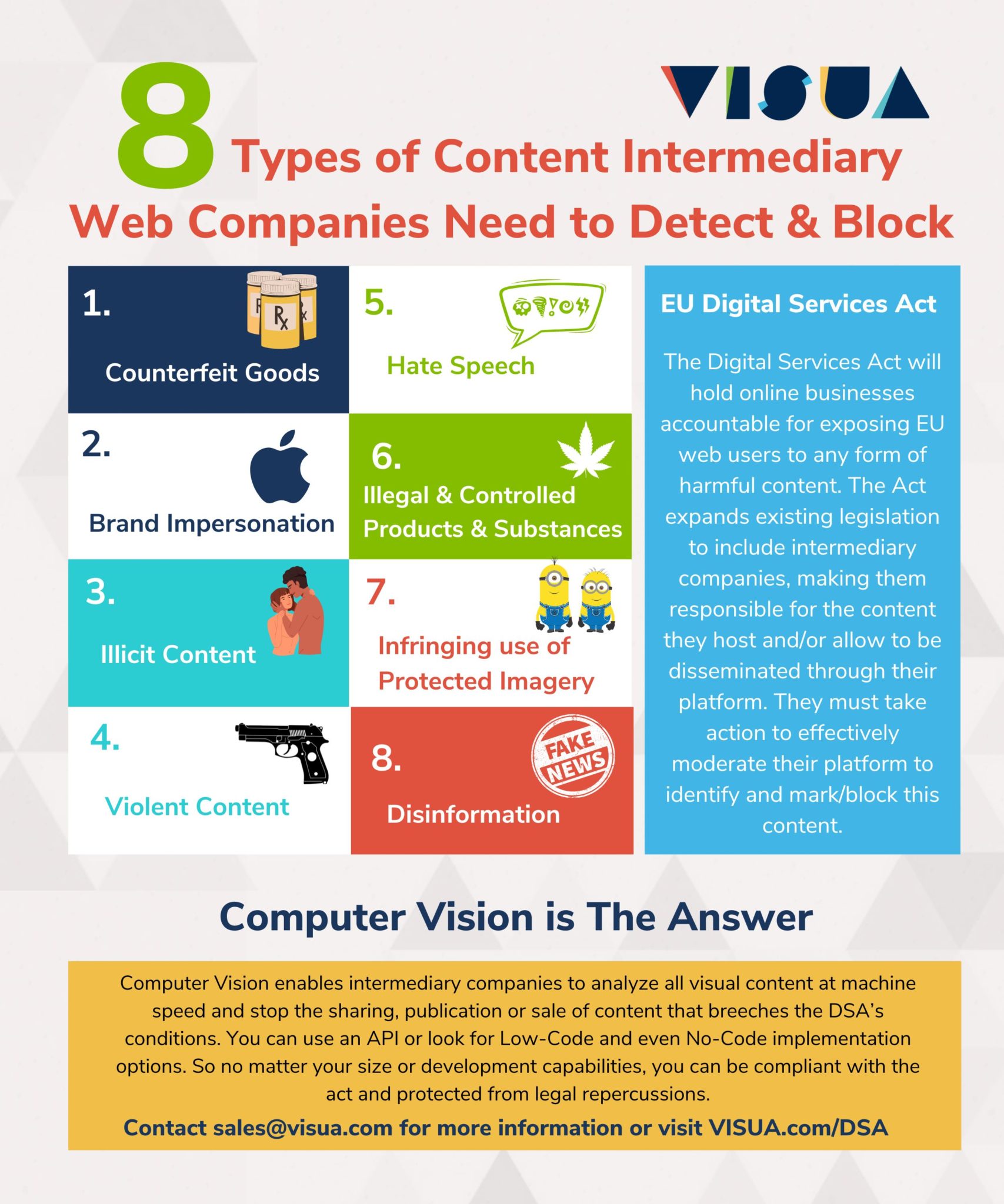

Understanding the EU's position requires understanding the Digital Services Act, which entered into force in November 2022 and has applied in full since February 2024. This isn't an anti-TikTok regulation. It's a comprehensive framework governing how digital platforms must operate across the European Union.

The DSA's strictest obligations apply to platforms with more than 45 million average monthly active users in the EU, which the Commission designates as "very large online platforms." TikTok has roughly 100 million European users. The law requires these platforms to identify and mitigate systemic risks to their users.

Design is central to how those risks are assessed. The DSA bans "dark patterns" on online platforms, and it requires very large platforms to assess and mitigate risks arising from the design of their services, including risks to minors and to users' physical and mental wellbeing. The legislation recognizes that social media companies optimize for engagement, and that engagement often conflicts with user wellbeing.

The EU's position is philosophically different from how the United States typically approaches this. America generally trusts market forces and user choice. The EU takes a different view: when platforms have such enormous power over how people spend their time, and when those platforms explicitly design features to maximize compulsion, regulation becomes necessary.

Article 38 of the DSA requires very large platforms that use recommender systems to offer, for each of them, at least one option that is not based on profiling as the GDPR defines it. This doesn't mean TikTok can't have a personalized recommendation algorithm, or even that the personalized algorithm can't be the default. It means users must be able to choose a feed that doesn't depend on tracking their behavior.
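To make the distinction concrete, here is a minimal Python sketch of a feed that can switch between a profiling-based ranking and a non-profiling alternative. Everything in it is hypothetical: the `Video` fields, the per-user `predicted_watch_seconds` map (standing in for a model trained on one user's behavioral history), and the choice of recency plus total views as the non-personal signals. It is not TikTok's implementation, and whether a given design counts as "not based on profiling" is ultimately a legal question.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List

@dataclass
class Video:
    video_id: str
    creator: str
    posted_at: datetime
    total_views: int  # aggregate popularity; says nothing about the individual viewer

def profiled_feed(videos: List[Video], predicted_watch_seconds: Dict[str, float]) -> List[Video]:
    # Ranks by per-user predictions derived from tracking this user's behavior.
    return sorted(videos, key=lambda v: predicted_watch_seconds[v.video_id], reverse=True)

def non_profiled_feed(videos: List[Video]) -> List[Video]:
    # Ranks using only non-personal signals: newest first, ties broken by total views.
    return sorted(videos, key=lambda v: (v.posted_at, v.total_views), reverse=True)

def build_feed(videos: List[Video], predicted_watch_seconds: Dict[str, float],
               use_profiling: bool) -> List[Video]:
    # The user's choice decides which ranking applies; the profiling-free
    # path never reads the per-user predictions.
    if use_profiling:
        return profiled_feed(videos, predicted_watch_seconds)
    return non_profiled_feed(videos)

videos = [
    Video("a", "alice", datetime(2025, 1, 1, 9), 500),
    Video("b", "bob", datetime(2025, 1, 1, 12), 100),
    Video("c", "carol", datetime(2025, 1, 1, 10), 900),
]
predictions = {"a": 40.0, "b": 5.0, "c": 12.0}  # hypothetical model output for one user

print([v.video_id for v in build_feed(videos, predictions, use_profiling=True)])   # ['a', 'c', 'b']
print([v.video_id for v in build_feed(videos, predictions, use_profiling=False)])  # ['b', 'c', 'a']
```

The non-profiling feed still works for every user; it simply can't tailor itself to any one of them.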

Moreover, the DSA explicitly addresses dark patterns. Article 25 prohibits online platforms from designing, organizing, or operating their interfaces in a way that deceives or manipulates users, or that otherwise materially distorts or impairs their ability to make free and informed decisions. Infinite scroll combined with autoplay could fall into this category if it's designed to push users into spending more time than they intend.

The European Commission interprets the DSA as requiring that platforms actively work to reduce addictive effects, particularly for young users. This means not just providing tools (like screen-time limits) but fundamentally changing how the platform operates.

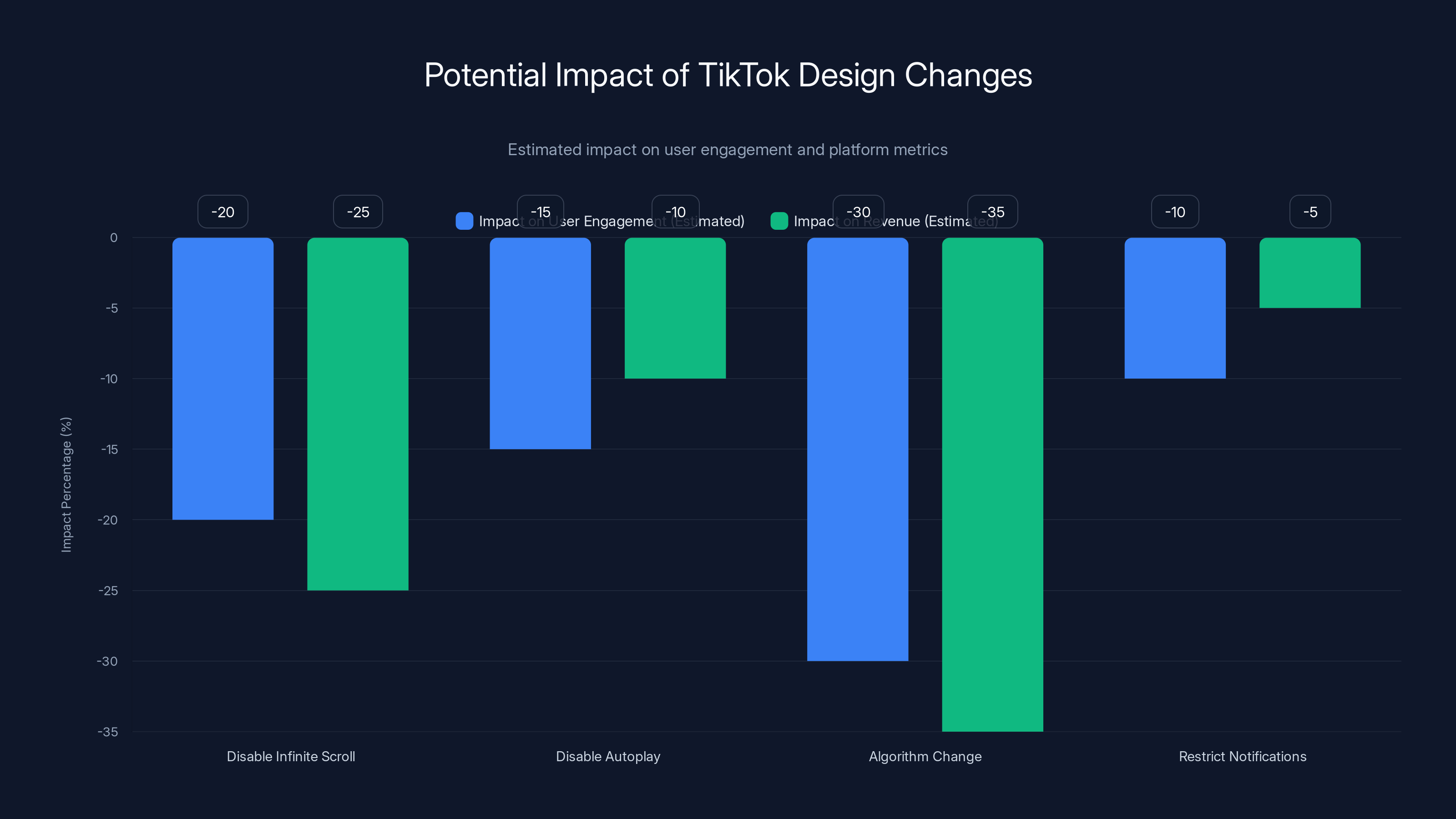

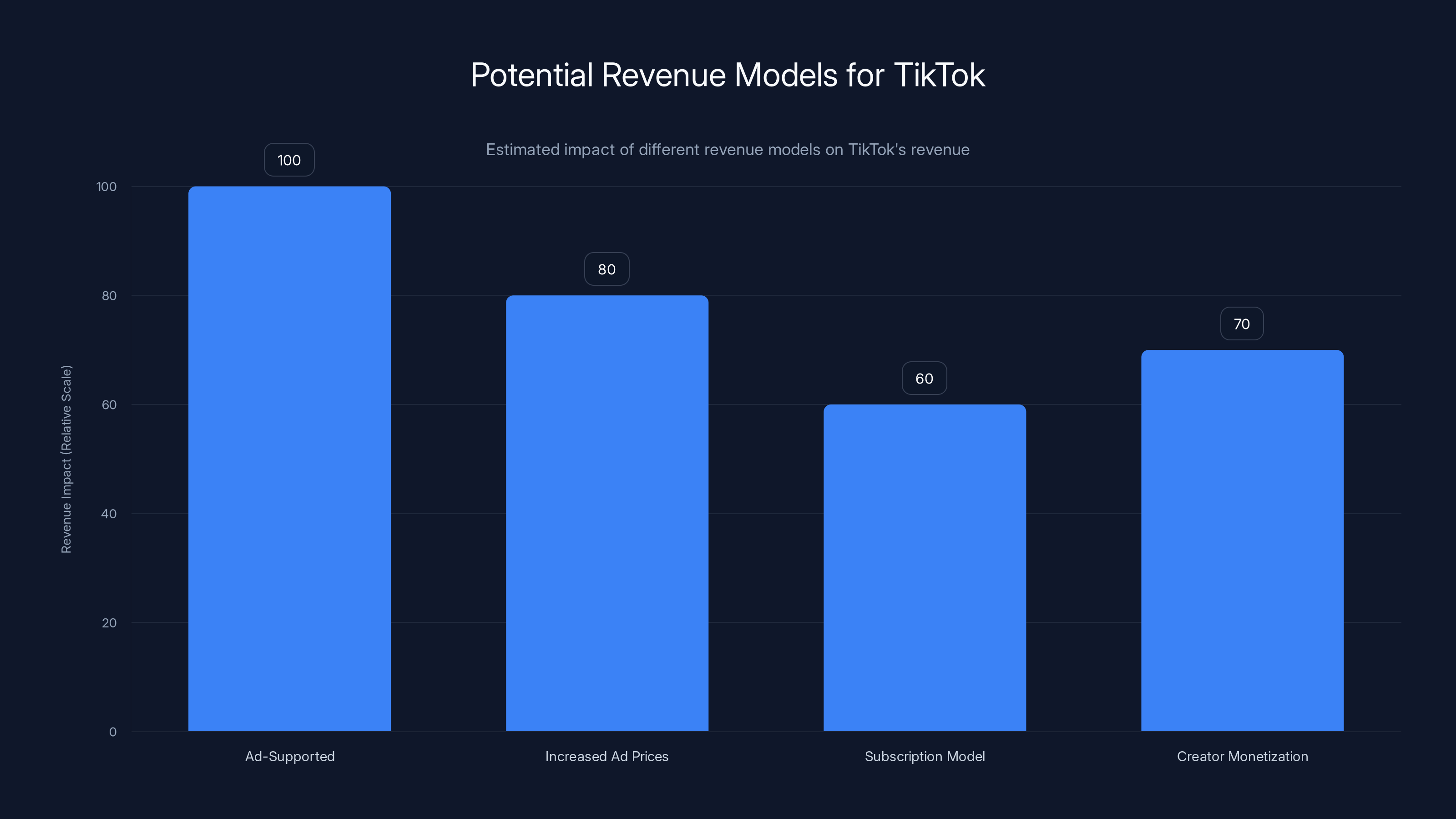

Estimated data suggests that changing TikTok's core design could significantly reduce user engagement and revenue, particularly if the recommendation algorithm is altered.

How TikTok's Algorithm Keeps You Scrolling Longer Than You Intend

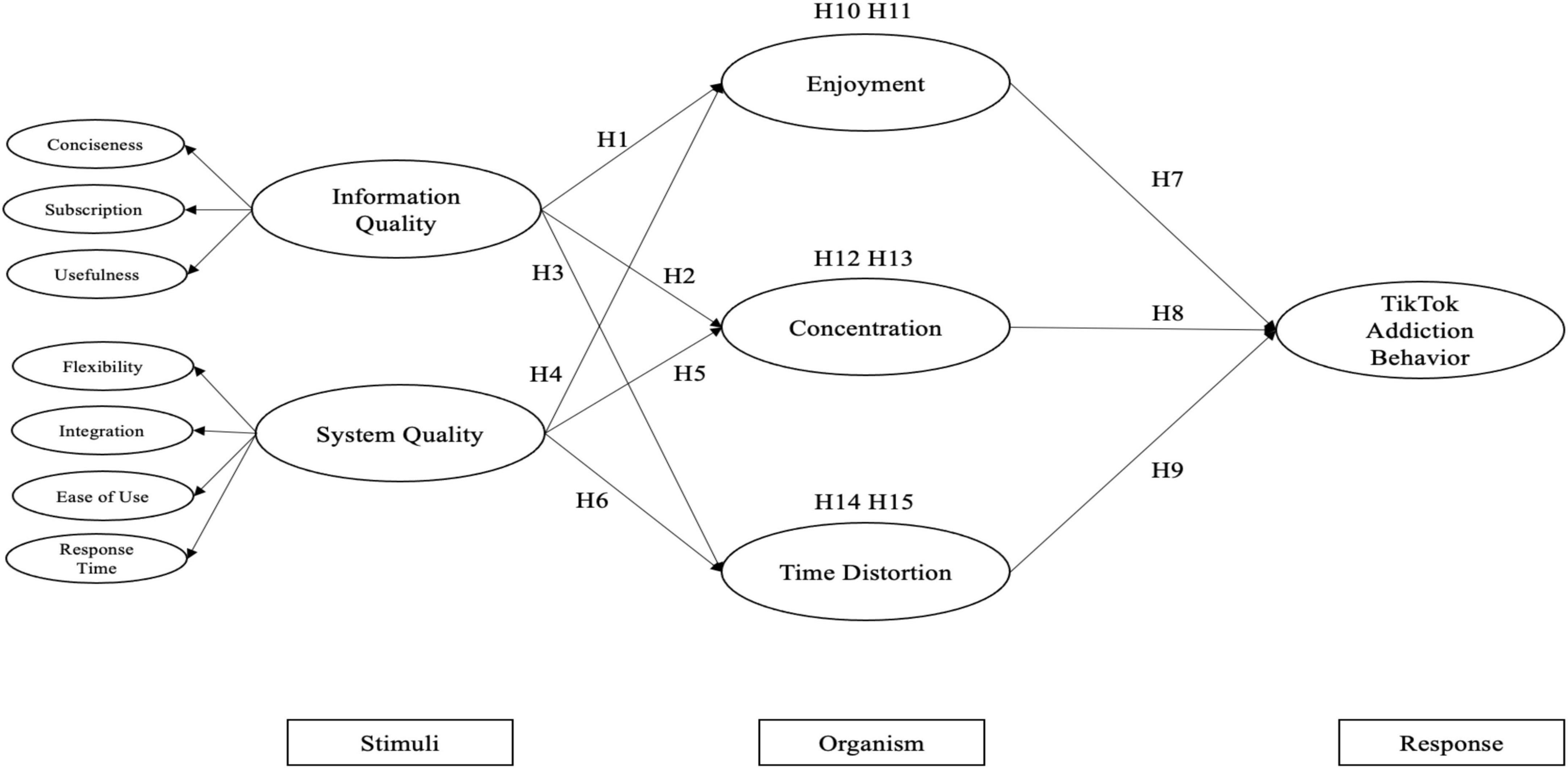

To understand why the Commission's findings matter, you need to understand how TikTok's recommendation algorithm actually works in practice.

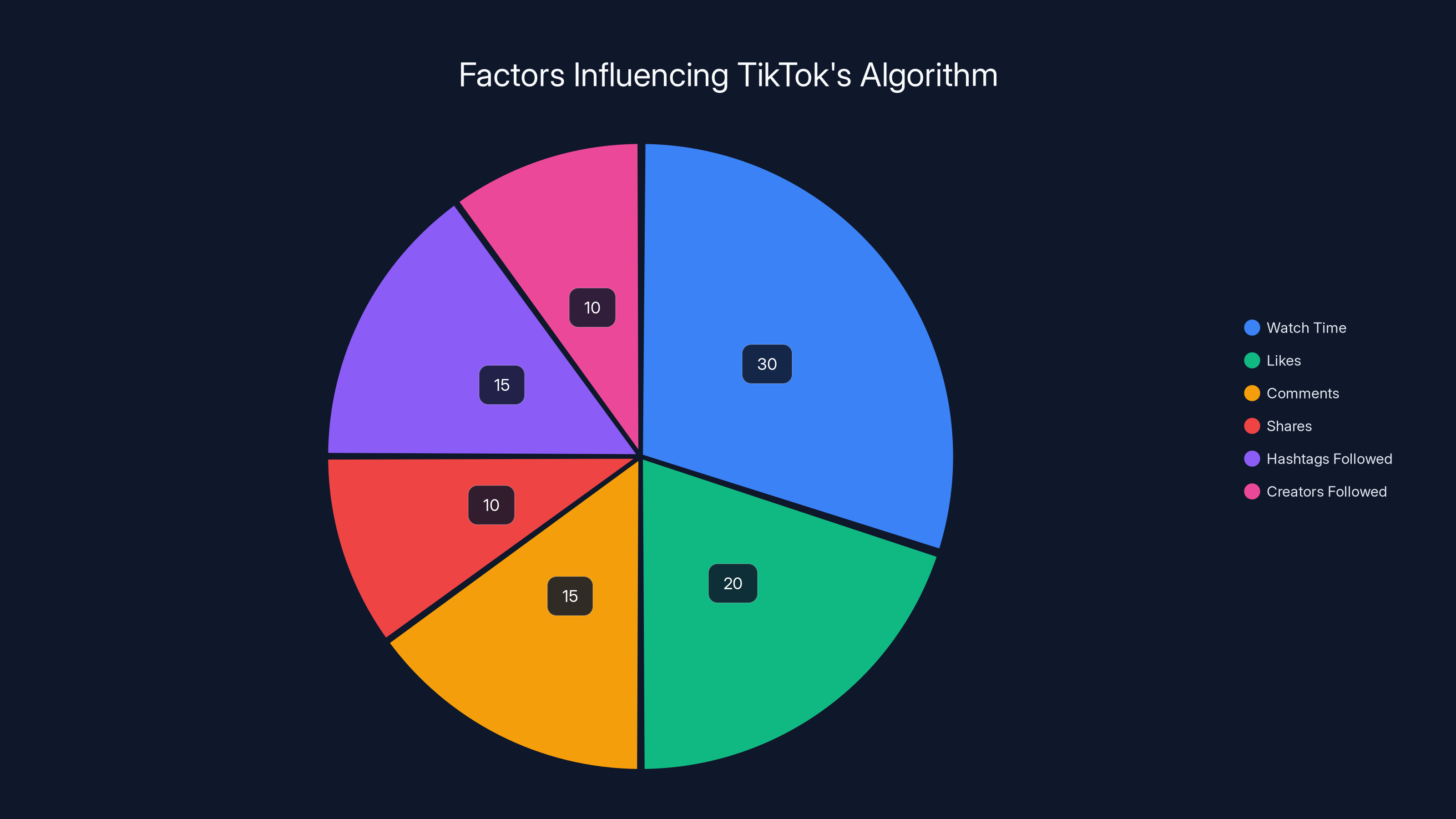

When you open TikTok, you're not seeing random videos from all TikTok creators. You're seeing videos specifically selected by an algorithm optimized for your engagement. That algorithm processes hundreds of signals: what you watched before, how long you watched it, what you liked, what you commented on, what you shared, what you scrolled past quickly, what hashtags you follow, what creators you follow, even metadata like the time of day you're active.

Machine learning models trained on this data predict which videos are most likely to keep you watching. The algorithm doesn't care about video quality. It doesn't care about accuracy or truthfulness. It cares about one metric: will this video make you keep scrolling?
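As a rough illustration of "optimizing for one metric," the sketch below ranks candidate videos purely by a weighted sum of predicted engagement signals. The signal names and weights are invented for the example (the only grounding is the claim that watch time dominates); real recommender systems are far more complex, and nothing here describes TikTok's actual model. Notice that no term in the score measures quality or accuracy.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Candidate:
    video_id: str
    expected_watch_seconds: float  # predicted watch time for this user
    p_like: float                  # predicted probability of a like
    p_share: float                 # predicted probability of a share

# Hypothetical weights that put watch time first.
WEIGHTS = {"watch": 1.0, "like": 10.0, "share": 20.0}

def engagement_score(c: Candidate) -> float:
    # Only predicted engagement enters the score; nothing measures quality or accuracy.
    return (WEIGHTS["watch"] * c.expected_watch_seconds
            + WEIGHTS["like"] * c.p_like
            + WEIGHTS["share"] * c.p_share)

def rank_for_engagement(candidates: List[Candidate], k: int) -> List[Candidate]:
    # Keep the k candidates with the highest predicted engagement.
    return sorted(candidates, key=engagement_score, reverse=True)[:k]

candidates = [
    Candidate("calm_tutorial", 20.0, 0.3, 0.05),  # score about 24
    Candidate("outrage_clip", 35.0, 0.2, 0.30),   # score about 43
    Candidate("friend_update", 8.0, 0.6, 0.10),   # score about 16
]
print([c.video_id for c in rank_for_engagement(candidates, 2)])  # ['outrage_clip', 'calm_tutorial']
```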

This creates a feedback loop. If you watch slightly conspiratorial content without immediately leaving the app, the algorithm notices. It starts showing you more conspiratorial content. Not because the algorithm is trying to radicalize you, but because the algorithm learned that conspiratorial content keeps you watching longer than standard news.
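The feedback loop can be reproduced with a toy epsilon-greedy recommender, a standard technique swapped in here for illustration rather than anything TikTok is known to use. The simulated user is hypothetical: they watch conspiratorial clips only about two seconds longer on average than news clips. The recommender has no intent of its own; it keeps showing whichever topic has the highest observed average watch time and explores at random 10% of the time.

```python
import random

# Hypothetical user: watches both topics, but lingers ~2 s longer on one of them.
TRUE_MEAN_WATCH = {"news": 10.0, "conspiratorial": 12.0}

def simulate(rounds: int = 1000, epsilon: float = 0.1, seed: int = 0) -> list:
    """Epsilon-greedy recommender that learns only from observed watch time."""
    rng = random.Random(seed)
    totals = {t: 0.0 for t in TRUE_MEAN_WATCH}
    counts = {t: 0 for t in TRUE_MEAN_WATCH}
    shown = []
    for _ in range(rounds):
        untried = [t for t in TRUE_MEAN_WATCH if counts[t] == 0]
        if untried:
            topic = untried[0]  # show each topic once to get a first estimate
        elif rng.random() < epsilon:
            topic = rng.choice(list(TRUE_MEAN_WATCH))  # occasional random exploration
        else:
            # Exploit: pick the topic with the highest average observed watch time.
            topic = max(TRUE_MEAN_WATCH, key=lambda t: totals[t] / counts[t])
        watch = max(0.0, rng.gauss(TRUE_MEAN_WATCH[topic], 3.0))  # noisy watch time
        totals[topic] += watch
        counts[topic] += 1
        shown.append(topic)
    return shown

def share(topics: list, name: str) -> float:
    return topics.count(name) / len(topics)

shown = simulate()
print(f"conspiratorial share, last 500 videos: {share(shown[-500:], 'conspiratorial'):.0%}")
```

A two-second difference in average watch time is enough for the feed to end up dominated by one topic, which is the dynamic the paragraph above describes.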

Similarly, if you're vulnerable to outrage, the algorithm learns this. It shows you more content designed to anger you. Angry users are engaged users. Engagement is what the algorithm optimizes for.

The Commission's concern is that this optimization creates a perverse incentive structure. TikTok's revenue depends on keeping users on the platform. The longer you watch, the more ads you see, the more data TikTok collects, the more valuable you become as a user. This incentive structure is fundamentally at odds with user wellbeing.

A 2023 study by researchers at the University of Vermont found that TikTok's algorithm disproportionately promotes content from smaller creators with extreme viewpoints, likely because such content generates higher engagement. The algorithm isn't intentionally promoting misinformation, but its optimization mechanism creates conditions where misinformation flourishes.

Another 2024 study found that teenagers who spend more than two hours a day on algorithm-driven apps like TikTok show measurably worse mental health outcomes than teenagers with moderate usage. The causation isn't certain (it's possible that teens with worse mental health seek out more TikTok use), but the correlation is hard to ignore.

TikTok argues that users can disable the For You Page and use the Following page instead, which shows videos only from creators they've chosen to follow. This is technically true. But studies show that more than 85% of TikTok's watch time comes from the For You Page. Most users never change this setting. The algorithm is the default, and the default is designed to maximize engagement.

The Specific Design Mechanisms That Trigger Compulsive Behavior

Infinite scroll sounds simple, but it's psychologically powerful. Let's break down why.

When content has a clear endpoint, you make a conscious decision to stop. A traditional website has pages. A physical newspaper has edges. You can see when you've reached the end. You actively decide whether to get more content. Your brain engages in deliberate decision-making.

Infinite scroll eliminates the endpoint. As you approach the bottom of your feed, new content loads automatically. You never encounter a stopping point that forces conscious reflection. "Do I want to keep watching?" becomes impossible to answer because there's always more content immediately available.
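The structural difference is easy to see in code. In this hypothetical Python sketch, an infinite feed is an iterator that never runs out, while a paged feed stops after each page and continues only if the user explicitly asks for more. The `hypothetical_recommend` function and the `wants_more` callback are stand-ins invented for the example, not any real app's API.

```python
from itertools import count, islice
from typing import Callable, Iterator

def hypothetical_recommend(position: int) -> str:
    # Stand-in for a recommender: the video ID at this feed position.
    return f"video-{position}"

def infinite_feed(recommend: Callable[[int], str]) -> Iterator[str]:
    # Infinite scroll: the iterator never runs out, so nothing in the feed
    # itself ever creates a natural stopping point.
    for position in count():
        yield recommend(position)

def paged_feed(recommend: Callable[[int], str], page_size: int,
               wants_more: Callable[[int], bool]) -> Iterator[str]:
    # Paged feed: each page ends, and the next one loads only if the user
    # makes an explicit choice (modeled by the wants_more callback).
    page = 0
    while True:
        for i in range(page_size):
            yield recommend(page * page_size + i)
        page += 1
        if not wants_more(page):
            return

# An infinite feed must be cut off from outside (here, after 30 items).
first_30 = list(islice(infinite_feed(hypothetical_recommend), 30))
# A paged feed ends on its own: this user decides to stop after two pages.
paged = list(paged_feed(hypothetical_recommend, page_size=10,
                        wants_more=lambda pages_seen: pages_seen < 2))
print(len(first_30), len(paged), paged[-1])  # 30 20 video-19
```

In the infinite version, the only way out is an external limit; in the paged version, stopping is the default and continuing is the decision.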

Coupled with autoplay, this becomes even more powerful. You're not choosing to start videos. They start automatically. You're passively consuming rather than actively selecting. This shift from active to passive consumption is crucial. Active consumption requires deliberate decision-making. Passive consumption happens without conscious effort.

Push notifications create external triggers to re-engage. You might put the phone down. You've "used up" your TikTok time for the moment. Then a notification arrives. "Your friend posted a new video." That notification reactivates your desire to open the app. It breaks your attempt at self-control by introducing an external stimulus designed to overcome your willpower.

The combination of these three mechanisms (infinite scroll + autoplay + notifications) creates what behavioral psychologists call a "visceral trigger" cycle. The app removes friction from continued consumption, then sends notifications to trigger re-engagement when you've left.

Research on behavioral addiction often uses the I-PACE model (Interaction of Person-Affect-Cognition-Execution), which maps how compulsive behaviors develop. Mapped loosely onto that framework, TikTok's design touches every element:

- Interaction: The app itself is designed for maximum engagement

- Person: The recommendation algorithm learns your specific vulnerabilities

- Affect: The content is emotionally stimulating

- Cognition: Infinite scroll prevents deliberate decision-making

- Execution: Autoplay and notifications make continuation effortless

When all these components align, addiction patterns emerge. This isn't unique to TikTok. YouTube has infinite autoplay. Instagram has infinite scroll. Twitter/X has algorithmic feeds. But TikTok combines all three mechanisms more aggressively than most competitors, and the Commission's investigation suggests this combination crosses a regulatory line.

Why the EU Cares More Than the United States About This

If you've watched regulatory hearings in the US Congress, you've probably noticed something. American lawmakers ask tech executives defensive questions. They often lack technical understanding. They rarely impose consequences.

The EU is different. European regulation often draws on the "precautionary principle": when there's reason to believe a product or practice might cause harm, regulation can restrict it even before harm is definitively proven. America's approach, by contrast, generally requires clear evidence of harm before restricting products.

This philosophical difference explains why the EU moved on the Digital Services Act while the US remained gridlocked. America was still debating whether social media actually harms teenagers. The EU decided the evidence was sufficient and moved forward with regulation.

There's also a historical context. Europe has been more skeptical of American tech companies' practices than America itself. The EU has repeatedly passed regulations that the US viewed as overreach: GDPR (privacy), copyright rules, data localization requirements. The DSA is consistent with this pattern.

Economically, the EU can also afford to push back against tech companies. The US economy depends on its tech giants in ways the EU economy doesn't, so American regulators face pressure that European regulators don't. When the EU threatens TikTok with a fine of 6% of annual revenue, TikTok has to listen: the EU market is too valuable to walk away from, even though losing it would not be existential for TikTok's business model. (Being removed from Apple's App Store, by contrast, would be existential.)

Additionally, European culture tends to be more skeptical of "move fast and break things" entrepreneurship. There's greater concern with social stability and individual protection. When a platform designed to maximize engagement creates mental health problems, particularly in teenagers, the European regulatory instinct is to restrict the platform. The American instinct is to educate users about responsible usage.

This isn't to say one approach is right and one is wrong. Each reflects different cultural values. But it explains why the EU moved first on addictive design. If American regulators want to avoid European-style regulation, they need to either demonstrate they can regulate effectively themselves or accept that their tech companies will face increasingly restrictive rules in European markets.


What TikTok Is Actually Fighting Against

TikTok's response to the investigation has been combative. The company argues that the Commission's findings misrepresent how their platform works and what their design choices mean.

TikTok's core argument: users can control their own experience. The platform offers screen-time management tools. Parents can set parental controls. Users can switch to the Following page if they don't want algorithmic recommendations. Notifications can be turned off. The responsibility for moderate usage lies with the user, not the platform.

This argument isn't unreasonable as a matter of abstract principle. But it runs up against the empirical reality the Commission is citing. When more than 85% of watch time still comes from the algorithmic For You Page, it doesn't matter much that an alternative exists. When most users never adjust their notification settings, offering the option doesn't prevent the harm. When screen-time warnings don't stop compulsive usage (because the brain isn't making deliberate decisions in autopilot mode), the feature becomes performative.

TikTok also argues that the Commission is picking on them unfairly. Instagram has infinite scroll. YouTube has autoplay. Facebook has algorithmic recommendations. Why is TikTok uniquely at fault?

The answer, based on the Commission's preliminary findings, is intensity. TikTok combines these features more aggressively than competitors. But there's also another reason: precedent. If the EU successfully regulates TikTok under the DSA, they'll likely move against other platforms next. TikTok is the test case.

TikTok is also arguing that some of its design choices aren't about addiction but about good user experience. Autoplaying videos reduces friction. Infinite scroll makes the interface feel faster. Notifications help creators reach audiences. From a pure product design perspective, these choices make sense. The question isn't whether they improve user experience; they do. The question is whether they improve it by exploiting psychology in ways that conflict with user wellbeing.

Finally, TikTok is fighting because the stakes are enormous. If they're forced to fundamentally redesign their platform, they lose their competitive advantage. TikTok's advantage isn't that it hosts short videos. Instagram Reels, YouTube Shorts, and many other platforms do that. TikTok's advantage is that its algorithm is better at keeping users engaged. If forced to weaken the algorithm, TikTok becomes just another short-form video platform.

The Scientific Evidence Behind "Addictive Design"

The European Commission isn't making up the concept of addictive design out of whole cloth. There's actually substantial research backing their concerns.

NYU psychologist Adam Alter has written extensively on behavioral addiction and digital products. He points out that addiction has specific characteristics: it produces temporary pleasure but long-term negative consequences, it requires increasingly larger doses to produce the same effect, it causes withdrawal when unavailable, and it persists despite the person wanting to stop. Social media exhibits all these characteristics for a significant portion of users.

The research on variable reward schedules, which underlies addictive design, goes back decades. Psychologist B. F. Skinner found that rewards delivered on unpredictable schedules produce more persistent behavior than rewards delivered on a fixed schedule. Slot machines use this principle. TikTok's algorithm uses it too. Because you don't know what the next video will be, but you know it might be interesting, you keep scrolling.
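The difference between predictable and unpredictable rewards can be shown with a small simulation. Both schedules below pay off on one scroll in five on average; the only difference is predictability. Treating "a great video" as the reward is an assumption for illustration, and the unpredictable schedule is modeled as an independent 1-in-5 chance on each scroll (strictly a random-ratio schedule, a common stand-in for Skinner's variable-ratio schedule).

```python
import random
from statistics import mean
from typing import List

def fixed_ratio(n_scrolls: int, ratio: int = 5) -> List[bool]:
    # A reward ("a great video") arrives on exactly every `ratio`-th scroll.
    return [(i + 1) % ratio == 0 for i in range(n_scrolls)]

def variable_ratio(n_scrolls: int, mean_ratio: int = 5, seed: int = 1) -> List[bool]:
    # Each scroll independently pays off with probability 1/mean_ratio:
    # the same average rate, but the timing of the next reward is unpredictable.
    rng = random.Random(seed)
    return [rng.random() < 1 / mean_ratio for _ in range(n_scrolls)]

def gaps_between_rewards(rewards: List[bool]) -> List[int]:
    # Number of scrolls it took to reach each reward.
    gaps, since_last = [], 0
    for hit in rewards:
        since_last += 1
        if hit:
            gaps.append(since_last)
            since_last = 0
    return gaps

fixed_gaps = gaps_between_rewards(fixed_ratio(10_000))
variable_gaps = gaps_between_rewards(variable_ratio(10_000))
print("fixed:    mean gap", mean(fixed_gaps), "| distinct gap lengths:", len(set(fixed_gaps)))
print("variable: mean gap", round(mean(variable_gaps), 2),
      "| distinct gap lengths:", len(set(variable_gaps)))
```

On the fixed schedule every gap is exactly five scrolls; on the variable one the average is about the same, but any given scroll might be the one that pays off.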

A 2018 study in Addictive Behaviors found that social media users with "addictive" usage patterns showed neural activation patterns similar to those with substance addiction when exposed to app notifications. Put bluntly, the brain's response to an app notification can resemble its response to a drug cue.

Internal research at Meta (Facebook's parent company), conducted around 2019 and 2020, suggested that Instagram worsened body-image issues and feelings of inadequacy for some teenage girls. The company did not publish the findings; they became public only when a whistleblower leaked them to the press in 2021. Critics argued the documents showed that Instagram knew it was harming teenage mental health and kept its engagement-driven design anyway because it maximized engagement and advertising revenue.

Another 2023 study in Nature Human Behaviour found that teenagers with heavy social media use showed measurably reduced gray matter in areas associated with reward sensitivity and impulse control. It's not certain whether heavy social media use causes this change or whether people with these brain characteristics gravitate toward social media, but the correlation is clear.

The American Psychological Association issued a health advisory on social media use in adolescence in 2023, warning about problematic, addiction-like use. The advisory noted that algorithmic feeds and infinite scroll are designed to maximize engagement in ways that conflict with healthy development.

So the Commission isn't wrong about the science. The evidence that these design patterns create compulsive behavior is substantial. The question is whether regulation is an appropriate response, or whether it crosses into paternalism.

The Financial Penalty: 6% of Annual Revenue

If the European Commission confirms its preliminary findings, TikTok could face a fine of up to 6% of its annual worldwide revenue. To understand what this means, let's look at actual numbers.
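Stated as a formula, the DSA's ceiling (Article 74) is a simple percentage of the provider's total worldwide annual turnover $T$ in the preceding financial year; the actual fine can be anything up to that ceiling. The example figure below is purely illustrative and is not an estimate of TikTok's revenue.

```latex
F_{\max} = 0.06 \times T,
\qquad \text{e.g. } T = 10 \text{ billion euros} \;\Rightarrow\; F_{\max} = 0.6 \text{ billion euros}
```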

TikTok's annual revenue is estimated at

A 6% penalty on a

However, a one-off fine isn't the DSA's only lever. If TikTok fails to comply with a decision, the Commission can also impose periodic penalty payments of up to 5% of its average daily worldwide turnover for each day the non-compliance continues.

Moreover, the EU has indicated it might pursue additional violations beyond addictive design. The preliminary findings from this investigation already noted violations related to advertising transparency and data availability to researchers. Each violation could trigger separate fines.

The precedent is also important. If the EU successfully penalizes TikTok under the DSA, other jurisdictions will likely follow. The UK is already implementing its Online Safety Act 2023. Australia is drafting comparable legislation. If TikTok faces fines in the EU, penalties across the EU, UK, and Australia could quickly add up to several billion dollars.

Finally, there's the non-financial penalty: the regulatory precedent. If the EU successfully forces TikTok to change its core design, every other platform knows they're next. Meta would face pressure to change Instagram. YouTube would face pressure to change autoplay and recommendations. This precedent could reshape the entire tech industry.

That's why TikTok is fighting so hard, despite the financial penalty being survivable. The threat isn't primarily about money. It's about control over their product design.

Estimated data shows that watch time is the most significant factor influencing TikTok's algorithm, followed by likes and comments. This highlights the algorithm's focus on engagement to keep users scrolling.

Could TikTok Actually Be Forced to Change Its Core Design?

This is the question that matters most. The Commission's preliminary findings suggest TikTok may need to "change the basic design of its service." What would that actually look like?

One possibility is mandating that infinite scroll be disabled by default. Users could enable it if they wanted, but the default experience would have stopping points. This would require active user choice to continue scrolling past certain content thresholds.

Another possibility is disabling autoplay by default and requiring users to manually play videos. This would require intentional action rather than passive consumption.
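Here is a minimal sketch of what "off by default" could mean in code, assuming a hypothetical `FeedSettings` object with a mandatory stopping point every `stop_every` videos. The names and the threshold are invented for illustration and are not drawn from the Commission's findings.

```python
from dataclasses import dataclass

@dataclass
class FeedSettings:
    # Hypothetical "off by default" settings; a user could opt back in.
    infinite_scroll: bool = False
    autoplay: bool = False
    stop_every: int = 20  # hypothetical number of videos between stopping points

class FeedSession:
    def __init__(self, settings: FeedSettings):
        self.settings = settings
        self.served_since_stop = 0

    def next_action(self, video_id: str) -> str:
        # With infinite scroll off, the feed pauses every `stop_every` videos and
        # serves nothing more (the caller retries the same video) until the
        # user explicitly chooses to continue.
        if (not self.settings.infinite_scroll
                and self.served_since_stop >= self.settings.stop_every):
            return "stopping_point"
        self.served_since_stop += 1
        # With autoplay off, the video is shown paused and waits for a tap.
        return f"play:{video_id}" if self.settings.autoplay else f"show_paused:{video_id}"

    def user_chose_to_continue(self) -> None:
        self.served_since_stop = 0

session = FeedSession(FeedSettings(stop_every=3))
print([session.next_action(f"v{i}") for i in range(4)])
# ['show_paused:v0', 'show_paused:v1', 'show_paused:v2', 'stopping_point']
session.user_chose_to_continue()
print(session.next_action("v3"))  # show_paused:v3
```

A user who opts back in with `FeedSettings(infinite_scroll=True, autoplay=True)` gets today's behavior: no stopping points, and every video plays on arrival.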

A third possibility is fundamentally changing the recommendation algorithm. Instead of showing you the videos most likely to keep you watching, the algorithm could prioritize other metrics: video quality, accuracy, creator quality, diversity of sources. This would materially reduce engagement but would serve user interests rather than platform engagement metrics.
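One way to express "other metrics" is to re-rank candidates on a blended score instead of engagement alone. This Python sketch uses greedy re-ranking with a penalty for repeating topics, a common diversity technique chosen here for illustration. The `quality` score, the weights, and the penalty are all hypothetical, and producing a trustworthy quality signal is the hard part this sketch glosses over.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Candidate:
    video_id: str
    topic: str
    predicted_engagement: float  # 0..1, what an engagement-only ranker maximizes
    quality: float               # 0..1, hypothetical quality/accuracy rating

def blended_score(c: Candidate, chosen: List[Candidate], w_engagement: float,
                  w_quality: float, repeat_penalty: float) -> float:
    # Mix engagement with quality, and subtract a penalty for each video
    # already placed in the feed on the same topic (to diversify sources).
    repeats = sum(1 for x in chosen if x.topic == c.topic)
    return w_engagement * c.predicted_engagement + w_quality * c.quality - repeat_penalty * repeats

def rerank(candidates: List[Candidate], k: int, w_engagement: float = 0.4,
           w_quality: float = 0.6, repeat_penalty: float = 0.3) -> List[Candidate]:
    # Greedy re-ranking: fill each slot with the best-scoring remaining candidate.
    remaining, chosen = list(candidates), []
    while remaining and len(chosen) < k:
        best = max(remaining, key=lambda c: blended_score(
            c, chosen, w_engagement, w_quality, repeat_penalty))
        chosen.append(best)
        remaining.remove(best)
    return chosen

candidates = [
    Candidate("A", "outrage", 0.90, 0.20),
    Candidate("B", "outrage", 0.85, 0.30),
    Candidate("C", "science", 0.50, 0.90),
    Candidate("D", "cooking", 0.60, 0.60),
    Candidate("E", "science", 0.45, 0.85),
]
by_engagement = sorted(candidates, key=lambda c: c.predicted_engagement, reverse=True)
print([c.video_id for c in by_engagement[:3]])      # ['A', 'B', 'D']
print([c.video_id for c in rerank(candidates, 3)])  # ['C', 'D', 'B']
```

With the topic penalty set to zero, the second science video (E) would take the second slot; the penalty is what lets the cooking video in.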

Notifications could be restricted to only user-initiated actions. You follow a creator, that creator posts, you're notified because you chose to follow. But the platform wouldn't send you notifications designed to pull you back in based on algorithmic predictions.
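Such a rule could be a simple filter applied before anything is sent. In this hypothetical sketch, each pending notification carries a `kind` label, and only posts from creators the user explicitly follows get through; algorithmically generated re-engagement pushes are dropped. The labels and data model are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Set

@dataclass
class PendingNotification:
    kind: str        # hypothetical labels: "creator_post" or "reengagement"
    creator_id: str
    text: str

def allowed(n: PendingNotification, follows: Set[str]) -> bool:
    # Deliver only what the user opted into by following the creator.
    return n.kind == "creator_post" and n.creator_id in follows

def filter_notifications(queue: List[PendingNotification],
                         follows: Set[str]) -> List[PendingNotification]:
    return [n for n in queue if allowed(n, follows)]

follows = {"alice"}
queue = [
    PendingNotification("creator_post", "alice", "alice posted a new video"),
    PendingNotification("creator_post", "bob", "bob posted a new video"),
    PendingNotification("reengagement", "trending", "Trending videos in your feed"),
]
delivered = filter_notifications(queue, follows)
print([n.text for n in delivered])  # ['alice posted a new video']
```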

The challenge is implementation. Some of these changes could be made relatively easily with software updates. Others would require fundamentally rethinking TikTok's entire revenue model, because TikTok's advertising revenue depends on engagement metrics that these restrictions would reduce.

It's also unclear whether these changes would actually solve the problem. If TikTok turns off infinite scroll but the recommendation algorithm is still highly personalized, users would still experience the compulsive behavior, just with more friction. The problem isn't infinite scroll alone. It's the combination of personalization, optimization for engagement, and features that minimize decision-making friction.

There's also a question of whether users actually want these restrictions. Surveys suggest that users appreciate TikTok's algorithm and prefer infinite scroll to paged content. Forcing a design they don't prefer could drive users to competitors.

The EU seems aware of this tension. They're not demanding TikTok operate at a loss or become unusable. They're demanding that TikTok balance engagement optimization with user wellbeing. How TikTok achieves that balance is ostensibly up to them. In practice, there's probably a narrow range of acceptable solutions.

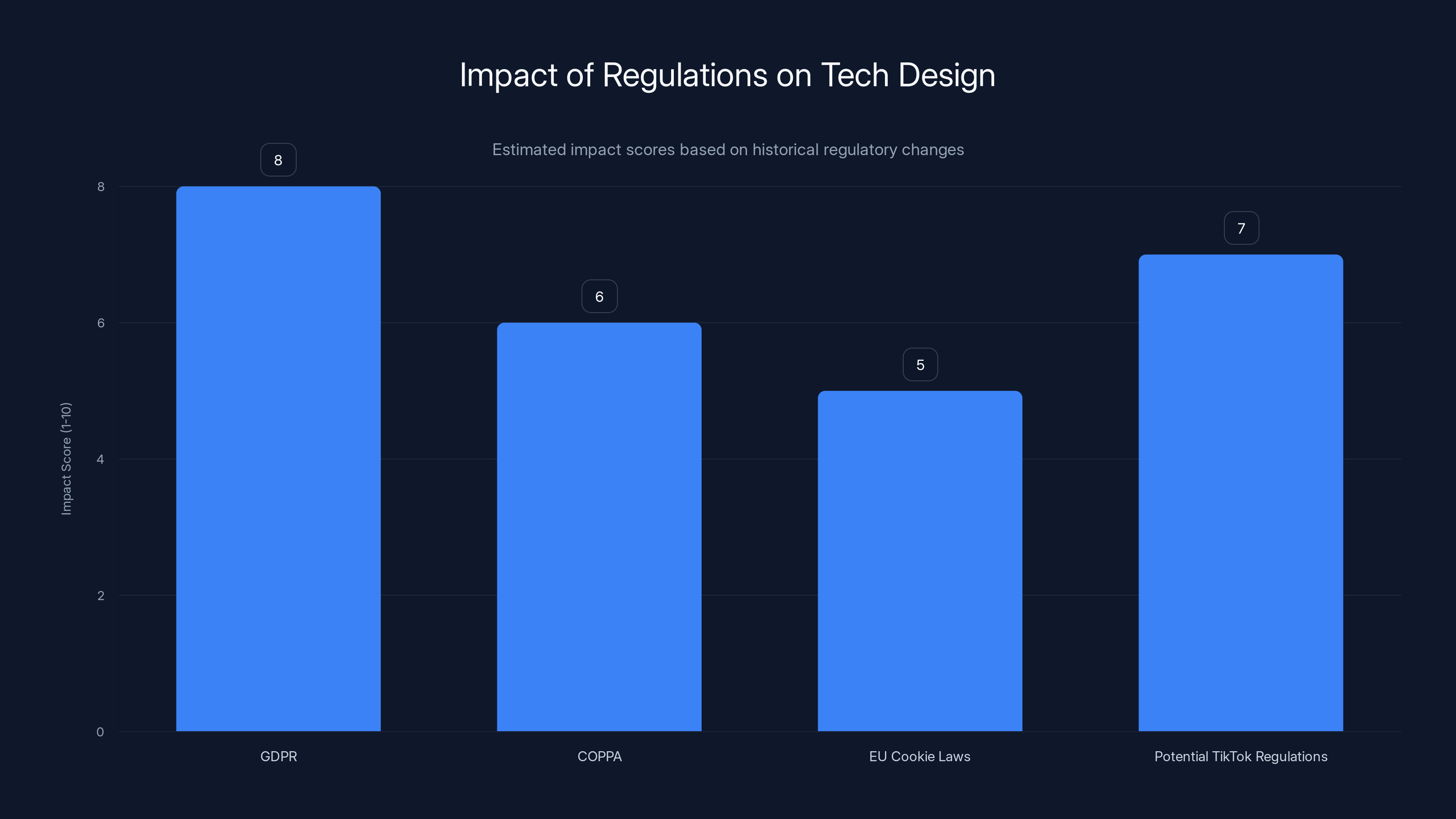

Precedent: How Other Regulations Have Shaped Tech Design

To predict what might happen with Tik Tok, look at how previous regulations changed tech companies' designs.

GDPR (the European General Data Protection Regulation) didn't ban data collection, but it required companies to ask permission first. This seems simple in theory. In practice, it changed how every tech company operates. Dark patterns in consent dialogs became an entire regulatory issue. Websites had to redesign their permission requests to comply with the law. The regulation didn't break the internet, but it did reshape incentives.

The COPPA rule in the United States restricts what companies can do with data from children under 13. YouTube already offered YouTube Kids, a separate app with restricted features and recommendations, and after a 2019 FTC settlement over COPPA violations it also disabled personalized ads and comments on children's videos on the main platform. This worked, sort of. YouTube Kids has less addictive design than the main platform, but it's also less engaging.

EU cookie laws, implemented around 2012, required websites to ask permission before storing cookies. You've all seen the "Accept Cookies" banners on every European website. These were regulatory compliance responses. They're not great user experiences, but they technically comply with the law. Tech companies found ways to comply while minimizing impact to their business models.

This pattern suggests that TikTok will likely find ways to technically comply with new regulations while minimizing impact to engagement metrics. If the EU requires a user-friendly way to disable infinite scroll, TikTok will probably make that option available but bury it in settings. If they're required to change the default recommendation algorithm, they'll probably create an alternative algorithm that's less engaging but technically complies.

The question is whether regulators will accept minimal compliance or demand substantive change. Based on the EU's track record with GDPR, they'll probably accept technical compliance initially, then push for more meaningful change when companies game the system.

This could take years. The GDPR was implemented in 2018. We're now in 2025, and companies are still refining compliance. TikTok's regulatory process could follow a similar timeline: preliminary findings now, formal findings in 2025, appeals and negotiations through 2026, final compliance deadline in 2027 or beyond.

The Global Regulatory Cascade

What happens in the EU rarely stays in the EU when it comes to tech regulation. Other countries pay attention.

The UK, which is implementing its Online Safety Act 2023, may impose similar restrictions on addictive design. The Act requires platforms to assess and reduce the risk of harm from their services, including risks arising from how those services are designed. Addictive design arguably falls into this category.

Australia is drafting its Digital Duty of Care laws, which would require digital platforms to demonstrate they're minimizing harms. Australian regulators could easily interpret this to require changes to infinite scroll, autoplay, and algorithmic recommendation systems.

Canada's Bill C-36, introduced in 2021 to tackle online hate speech, died before becoming law, but follow-up legislation has aimed wider. The proposed Online Harms Act (Bill C-63) would create a Digital Safety Commission that could be given authority over design-based harms such as addictive features.

Even in the United States, where tech regulation moves slowly, there's momentum. Senators Richard Blumenthal and Marsha Blackburn have championed the Kids Online Safety Act (KOSA), which would require platforms to mitigate harms to minors, including from design features such as infinite scroll and autoplay. It hasn't become law yet, but the political will is building.

The most likely scenario is that the EU moves first with Tik Tok penalties and design requirements. This creates precedent that other jurisdictions follow. Within five to ten years, every major jurisdiction will have similar rules. Tech companies will face a global regulatory environment that constrains addictive design features.

This could lead to either a global standard (all versions of TikTok have the same restrictions) or regional variations (different versions for different markets). Meta has already implemented regional variations for different regulations. TikTok would likely do the same.

Estimated data shows GDPR had the highest impact on tech design, while EU Cookie Laws had the least. TikTok regulations might have a significant impact, similar to GDPR.

What This Means for Creators and the Creator Economy

When people discuss the TikTok investigation, they focus on users and advertisers. But creators are equally affected.

Creators have built businesses on TikTok's algorithm. If the algorithm changes, creator revenues could suffer. Creators who built audiences by creating addictive content (shock value, outrage, conspiracy theories) would be hurt the most, since a reformed algorithm would de-prioritize this content.

Creators who focus on quality, originality, and providing actual value would potentially benefit. If TikTok's algorithm moves from "engagement optimization" to something more nuanced, creators of educational content, art, and other valuable content might see their work reach larger audiences.

There's also a question of earnings mechanics. TikTok pays creators through programs such as the Creator Fund (since replaced by the Creator Rewards Program), which pay out based on views and engagement. If engagement metrics change, creator earnings could change. A creator earning

Some creators have been advocating for exactly these changes. They've complained that TikTok's algorithm pushes them toward increasingly extreme content to maintain engagement. "The algorithm wants me to be more controversial," many creators have said in interviews. They'd welcome a change that rewards quality over addiction potential.

But creators who've built careers on the current system will view regulatory restrictions as threatening. Expect the creator community to be divided. Some will welcome change. Others will fight it.

The Deeper Question: Can Social Media Ever Be Non-Addictive?

This is the question that goes beyond Tik Tok specifically. Is it possible to build a social media platform that's engaging but not addictive? Or is engagement inherently addictive?

There's an argument that engagement and addiction are on a spectrum rather than distinct categories. Any system designed to be compelling will have some addictive properties. The question becomes about degree and intent.

One approach is transparency. If users understand exactly how the algorithm works and why they're being shown particular content, they can make more deliberate choices. But research suggests transparency alone isn't sufficient. Even when users understand how dark patterns work, the patterns still work.

Another approach is slower design. A platform could deliberately introduce friction. Make users think before scrolling. Require active choices rather than passive defaults. This would reduce engagement but might increase user satisfaction because users would feel they maintained control.
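To make the idea of friction concrete, here is a minimal sketch of a feed that pauses at periodic stopping points instead of scrolling forever. Everything in it is hypothetical: the `paged_feed` function, the batch size of 10, and the `wants_more` callback, which stands in for an explicit "keep watching?" prompt. It illustrates the design pattern, not how Tik Tok or any other app is actually built.

```python
from collections.abc import Callable, Iterable, Iterator


def paged_feed(
    items: Iterable[str],
    wants_more: Callable[[int], bool],
    batch_size: int = 10,
) -> Iterator[str]:
    """Yield feed items, pausing after each batch until the user opts in.

    After every `batch_size` items, `wants_more` is called with the number
    of items shown so far; the feed ends unless it returns True.
    """
    shown = 0
    for item in items:
        # At each batch boundary, continuing requires an active choice.
        if shown > 0 and shown % batch_size == 0 and not wants_more(shown):
            return
        yield item
        shown += 1


# Simulated user who agrees to one extra batch, then stops.
videos = (f"video-{i}" for i in range(100))
watched = list(paged_feed(videos, wants_more=lambda n: n < 20))
print(len(watched))  # 20
```

Ending the feed when the user declines, rather than quietly loading more, is the point of the pattern: continuing becomes an active choice instead of a passive default.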

A third approach is to align platform incentives with user wellbeing rather than engagement. Instead of maximizing watch time, maximize user satisfaction or learning or skill development. This would require a fundamental business model change. Advertising-based models naturally incentivize engagement. Subscription-based models create weaker incentives for compulsive usage.

Reddit and other platforms have tried community-moderated approaches where users have more control over their feeds. Bluesky (the decentralized Twitter alternative) explicitly tries to give users more algorithmic choice. These approaches reduce addictive potential but also reduce engagement.

The uncomfortable reality is that making social media less addictive probably means making it less appealing to some users. Some users will leave. Usage time will decrease. Advertising revenue will decline.

This is why tech companies haven't voluntarily implemented these changes. The business incentives point toward addictive design. Only regulation can change those incentives.

Why Teenagers Are the Focus

Throughout the investigation, the European Commission particularly emphasizes the harm to teenagers. This is deliberate. The DSA includes special protections for minors because teenagers are more vulnerable to addictive design than adults.

Adolescent brains are still developing, particularly the prefrontal cortex (responsible for judgment and impulse control). Teenagers have greater reward sensitivity and weaker impulse control than adults. They're developmentally more vulnerable to addictive patterns.

Research shows that teenagers who use social media for more than two to three hours daily show measurably worse outcomes: higher rates of depression, anxiety, sleep problems, and body image issues. The causation question remains unsettled (do social media platforms cause these problems, or do struggling teens seek social media?), but the association shows up consistently across studies.

Many jurisdictions around the world are now moving toward age restrictions on social media. Spain recently proposed banning kids under 16 from social media. Australia has gone further, passing a law in late 2024 that sets a minimum age of 16 for social media accounts. These measures stem from the recognition that teenagers are particularly vulnerable to these platforms.

The Commission's investigation specifically notes that Tik Tok has a young user base. A significant portion of Tik Tok users are teenagers, which means Tik Tok's addictive design potentially harms the most vulnerable population.

The EU's focus on teenagers is both justified scientifically and politically smart. It's easier to convince the public to regulate a platform if the argument centers on protecting children. Nobody wants to be "the person who protects Instagram's engagement metrics over children's mental health."

Tik Tok's parental controls are part of the issue. They assume that parents will actively restrict their children's usage, a level of parental engagement that's not always present. And parental controls limit how much teenagers use the app rather than changing how the app works. The Commission wants the app itself to be less harmful, not just kept away from kids.

Chart (estimated data): moving to a subscription model or raising ad prices could reduce Tik Tok's revenue compared with the current ad-supported model, while creator monetization might offer a moderate alternative.

What Competitors Should Be Worried About

This investigation is nominally about Tik Tok. But Instagram, You Tube, Snapchat, and other platforms should be nervous.

Instagram has the same core features: infinite scroll, autoplay, algorithmic recommendations, push notifications. You Tube has autoplay and algorithmic recommendations. If the EU successfully regulates Tik Tok based on addictive design, Instagram and You Tube are likely next.

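In fact, Meta is already under scrutiny for addictive design. The European Commission opened DSA proceedings against Facebook and Instagram in 2024 over concerns that include addictive design and risks to minors, and in the US dozens of state attorneys general have sued Meta, alleging that Instagram's features were built to hook young users. These actions are separate from the Tik Tok process, but they're moving in the same direction.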

You Tube is probably less vulnerable because You Tube has more diverse use cases. People use You Tube for learning, tutorials, and long-form content, not just scrolling. The platform serves functions beyond pure engagement. This might give You Tube some insulation from the "addictive design" criticism.

Tik Tok and Instagram, by contrast, are primarily designed for passive consumption and serve fewer utilitarian purposes. If a regulator concludes that one of them is addictive, it becomes hard for the other to argue that its near-identical design is not.

Snapchat is interesting because Snapchat added similar features to compete with Tik Tok, but Snapchat didn't fully commit to algorithmic recommendations. Snapchat's design is less optimized for engagement than Tik Tok's, which might mean less regulatory pressure but also less growth.

Smaller platforms like Be Real, Mastodon, and others that explicitly designed away from addictive features are probably safe from this investigation. But they'll also remain small because they can't match the engagement of addictive platforms.

This creates an interesting dilemma for the tech industry. Regulation that reduces addictive design will reduce engagement and growth. But regulation will also create a level playing field. All platforms will face the same restrictions. This might actually benefit established platforms that can survive lower growth rates.

The Long Investigation: Why Tik Tok Still Isn't Done Fighting

The DSA investigation that produced preliminary findings began in February 2024. We're now in early 2025. This has been roughly a year of investigation, and the process isn't close to finished.

Here's the timeline likely to unfold:

2025: Tik Tok will respond to the preliminary findings, submitting arguments and evidence in its defense, and the Commission will review them. This process typically takes 3-6 months.

Mid-2025 to late 2025: The European Commission will issue final findings. If the preliminary findings hold up, this will trigger formal penalties and a requirement to implement changes.

2025-2026: Tik Tok will file appeals and seek judicial review. European court processes move slowly. This could take another year or more.

2026-2027: Tik Tok will need to implement design changes. The specifics will likely be negotiated through a collaborative process with regulators.

2027+: Ongoing compliance monitoring. If Tik Tok doesn't actually implement changes, additional penalties and enforcement actions follow.

This is a multi-year process. Tik Tok isn't going to be forced into an overnight redesign. But it also isn't going to escape the investigation or the requirements.

During this time, Tik Tok can challenge the findings, argue for lighter restrictions, lobby European politicians, and attempt to negotiate favorable terms. The company has resources and political connections. They'll use both.

But the outcome seems increasingly likely: Tik Tok will face restrictions on its design. Whether those restrictions come through voluntary compliance or are forced by fines probably depends on how much Tik Tok wants to protect its European business. Tik Tok makes significant revenue in Europe, so complete non-compliance isn't realistic.

What This Precedent Means for All of Tech

We're potentially watching a turning point. For decades, tech companies designed features based on engagement optimization without regulatory constraint. The company that maximized engagement won. Market forces determined winners.

The Tik Tok investigation suggests that era is ending. Regulation will increasingly constrain how platforms can design their features. Companies will need to balance engagement with compliance.

This creates a new competitive dynamic. Instead of competing solely on engagement, companies might compete on compliance. "Our platform respects your attention" could become a differentiating factor.

It also means that business models based on addictive engagement will face headwinds. Ad-supported models that depend on high engagement will be under pressure. Subscription models, which align company incentives with user satisfaction rather than watch time, might become more viable.

For developers and designers working on social platforms, this means paying attention to regulations. A designer who wants to add infinite scroll now needs to consider whether that feature might trigger a regulatory investigation.

For users, this is complicated. Regulation might reduce addictive design, but it might also reduce engagement and features that users actually enjoy. The question becomes whether users want platforms optimized for engagement or platforms optimized for wellbeing. Those aren't the same thing.

The Business Model Question: Can Tik Tok Survive With Less Addictive Design?

Here's the financial reality. Tik Tok makes money from advertising. Advertisers pay for access to Tik Tok's users' attention. The more attention Tik Tok captures, the more it can charge advertisers.

If Tik Tok reduces addictive design, it will reduce average user engagement time. Lower engagement means fewer ad impressions per user. Fewer impressions means lower advertiser value.
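The chain from engagement to revenue is simple multiplication. The sketch below uses entirely hypothetical inputs (user count, minutes per user, ads per minute, and CPM, the price per thousand impressions) rather than Tik Tok's actual figures, which this article doesn't report. It only shows that, with everything else held fixed, ad revenue falls in proportion to time spent.

```python
def daily_ad_revenue(users: int, minutes_per_user: float,
                     ads_per_minute: float, cpm: float) -> float:
    """Estimate daily ad revenue from engagement.

    impressions = users * minutes per user * ads per minute
    revenue     = impressions / 1000 * CPM (price per thousand impressions)
    """
    impressions = users * minutes_per_user * ads_per_minute
    return impressions / 1000 * cpm


# Hypothetical inputs chosen only for illustration, not Tik Tok data.
baseline = daily_ad_revenue(users=1_000_000, minutes_per_user=90,
                            ads_per_minute=0.5, cpm=10.0)
reduced = daily_ad_revenue(users=1_000_000, minutes_per_user=60,
                           ads_per_minute=0.5, cpm=10.0)
print(baseline, reduced)                     # 450000.0 300000.0
print(f"{1 - reduced / baseline:.0%} drop")  # 33% drop
```

In this toy model, cutting daily time from 90 to 60 minutes removes a third of revenue, and restoring it would take a 50% higher CPM (15 instead of 10), a price increase advertisers may not accept.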

Tik Tok could compensate by raising advertising prices. But there are limits. Advertisers will switch to other platforms if costs rise too much, and a platform that captures less attention has less leverage to charge premium rates.

Alternatively, Tik Tok could shift to subscription revenue; it has reportedly tested a paid, ad-free tier in some markets. If Tik Tok moves to a subscription-based model, the incentive to maximize engagement decreases. Subscribers pay a flat fee, so more engagement doesn't increase revenue. The company could then optimize for user satisfaction rather than engagement.

But moving from ad-supported to subscription is risky. Most users won't pay for social media. Free services that make money from ads have always dominated. Switching models could drive users away.

Tik Tok could also try to monetize creators more aggressively, shifting from advertising to a creator ecosystem model. This would require taking a percentage of creator earnings or charging for creator tools. But creators are already frustrated with Tik Tok's revenue shares. Increasing monetization on creators could drive them to competitors.

The uncomfortable reality is that there might not be a path where Tik Tok reduces addictive design while maintaining current revenue levels. The company might need to accept lower revenue and slower growth as the cost of regulatory compliance.

For a private company owned by Byte Dance, this is survivable: Byte Dance is valuable regardless of Tik Tok's specific revenue. For a public company (which Tik Tok would be if it listed independently), declining revenue would pressure the stock price and invite activist investors demanding the company take shortcuts.

This might be one reason Tik Tok remains private and has resisted going public. Going public would expose it to capital markets pressure to maximize short-term profit, which conflicts with regulatory compliance that demands less aggressive engagement optimization.

Will Tik Tok Actually Comply, or Will It Fight?

Tik Tok has stated it will challenge the findings "through every means available." This suggests a fight, not compliance.

Tik Tok will argue that the Commission's findings misrepresent their design choices. They'll argue that other platforms have similar features but haven't faced the same findings. They'll argue that users choose to use Tik Tok and can choose to leave. They'll argue that the solution is education and parental control, not regulation.

These arguments have merit, but they're probably not going to convince regulators who've already decided that addictive design is a problem.

Tik Tok might also try to negotiate a settlement. Rather than fighting for total victory, they might accept some design restrictions in exchange for avoiding massive fines. This is how many large tech companies handle EU regulation.

Google negotiated antitrust settlements. Facebook agreed to privacy changes to settle FTC complaints. Companies generally prefer negotiated settlements to prolonged legal battles because the certainty of known penalties is better than the uncertainty of court decisions.

Tik Tok might eventually accept something like: "Infinite scroll is allowed but must be settable to default off. Autoplay is allowed but must be settable to default off. Users must explicitly consent to algorithmic recommendations." These changes would reduce engagement but wouldn't destroy the platform.
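A compromise like that is essentially a change of defaults plus a consent gate. Here is a minimal sketch under stated assumptions: `FeedSettings`, `rank_feed`, the feature names, and the video fields are all hypothetical, not any real Tik Tok API. Every engagement feature starts off, turns on only through an explicit user action, and ranking falls back to a non-personalized, newest-first order when consent is absent.

```python
from dataclasses import dataclass, field

# Hypothetical feature names; not taken from any real Tik Tok API.
FEATURES = ("infinite_scroll", "autoplay", "personalized_recommendations")


@dataclass
class FeedSettings:
    """Per-user settings in which every engagement feature starts off."""
    infinite_scroll: bool = False
    autoplay: bool = False
    personalized_recommendations: bool = False
    # Features the user explicitly switched on, kept as a consent record.
    consents: set[str] = field(default_factory=set)

    def opt_in(self, feature: str) -> None:
        """Turn a feature on only in response to an explicit user action."""
        if feature not in FEATURES:
            raise ValueError(f"unknown feature: {feature}")
        setattr(self, feature, True)
        self.consents.add(feature)


def rank_feed(videos: list[dict], settings: FeedSettings) -> list[dict]:
    """Rank by predicted interest only with consent; otherwise newest first."""
    if settings.personalized_recommendations:
        return sorted(videos, key=lambda v: v["predicted_interest"], reverse=True)
    return sorted(videos, key=lambda v: v["posted_at"], reverse=True)


videos = [
    {"id": "a", "posted_at": 1, "predicted_interest": 0.9},
    {"id": "b", "posted_at": 2, "predicted_interest": 0.1},
]
settings = FeedSettings()
print([v["id"] for v in rank_feed(videos, settings)])  # ['b', 'a']
settings.opt_in("personalized_recommendations")
print([v["id"] for v in rank_feed(videos, settings)])  # ['a', 'b']
```

Keeping a consent record alongside the flags is one way a platform could show that a feature was switched on by the user rather than by default.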

But this depends on whether regulators are willing to negotiate. The EU has shown willingness to negotiate in past cases. But the Commission also seems committed to the principle that addictive design is inappropriate regardless of user choice.

The Counterfactual: What If Tik Tok Doesn't Change?

What happens if Tik Tok simply refuses to comply? What if they ignore the fines and keep operating as-is?

The EU could escalate enforcement. They could demand that Apple and Google remove Tik Tok from their European app stores. This would effectively ban Tik Tok in Europe by making it impossible to download.

App store leverage is a powerful enforcement tool. When Apple threatened to pull Basecamp's HEY email app in 2020 (a dispute over Apple's own App Store rules, not EU law), Basecamp changed the app rather than lose its listing. Most companies comply when faced with app store removal.

Tik Tok could theoretically continue operating on the web and through existing installs, but new user acquisition would essentially stop. The platform would shrink in Europe as existing users drifted away and no new ones replaced them.

This would be a significant blow to Tik Tok but not an existential one. Tik Tok's user base is global, and Europe accounts for a minority of its more than one billion users. Losing Europe would hurt but not destroy the company.

However, losing Europe would set a precedent for other countries. If Tik Tok defies the EU, the UK, Australia, and Canada will feel emboldened to take similar action. Within a few years, Tik Tok could be effectively banned across much of the Western world.

At that point, it becomes existential. Tik Tok would be left with a shrinking set of markets; it is already banned in India and has never operated in mainland China, where Byte Dance runs Douyin instead. The company would become significantly less valuable.

So while Tik Tok might fight, total non-compliance probably isn't realistic. They'll either negotiate a settlement or eventually comply because the alternative is too costly.

FAQ

What is the Digital Services Act and how does it apply to Tik Tok?

The Digital Services Act is European Union legislation that became fully applicable in February 2024 (obligations for the largest platforms applied from August 2023), governing how digital platforms must operate across EU member states. Its strictest obligations apply to platforms with more than 45 million monthly active users in the EU, a category that includes Tik Tok, which has more than 100 million European users. The law requires these "very large online platforms" to identify and mitigate systemic risks to users, including risks from addictive design features, dark patterns, and algorithmic recommendations that cause harm.

How exactly does Tik Tok's algorithm create addictive behavior?

Tik Tok's algorithm combines three powerful psychological mechanisms: first, variable reward schedules (you don't know what the next video will be, but it might interest you), which create compulsive scrolling; second, personalization that learns your specific preferences and vulnerabilities, showing you increasingly targeted content; and third, design features like autoplay and infinite scroll that minimize friction and deliberate decision-making, shifting your brain into "autopilot mode" rather than active engagement. Some neuroimaging research suggests this combination triggers neural activation patterns similar to those seen in substance addiction.

What penalties could Tik Tok face if the European Commission confirms its preliminary findings?

Tik Tok could face fines up to 6% of its annual worldwide revenue if the Commission's preliminary findings are confirmed. Based on estimated revenue of

Could Tik Tok be forced to disable infinite scroll, autoplay, or algorithmic recommendations?

Yes, the European Commission's preliminary findings explicitly suggest that Tik Tok may need to implement meaningful restrictions on infinite scroll, autoplay functionality, and its algorithm's personalization. The company might be required to disable these features by default and require explicit user consent to enable them, or to fundamentally change how the algorithm prioritizes content. Tik Tok maintains that such changes would degrade user experience, but regulators counter that the current design conflicts with user wellbeing.

Why is the EU investigating social media addictive design when the United States isn't doing the same?

The EU operates under the "precautionary principle," which allows regulation when there's reasonable evidence of potential harm, rather than waiting for definitive proof as the US typically requires. Additionally, European culture and regulation historically prioritize individual protection and social stability over tech company innovation freedom. The EU also has less economic dependence on tech companies than the US, giving regulators more political freedom to impose restrictions. Finally, the EU has already demonstrated willingness to regulate tech companies through GDPR and other laws, making similar action on social media more likely.

Will Tik Tok have to change for all users worldwide, or just European users?

Historically, tech companies implement regional variations for different jurisdictions. If Tik Tok is forced to change in Europe, EU users will likely experience a different version of the platform than non-EU users. This creates technical challenges and costs for Tik Tok, but it's the precedent established by how companies handle GDPR and other regional regulations. However, if similar regulations spread to other major markets (UK, Australia, Canada), Tik Tok might eventually need to implement these restrictions more globally.

Could the EU's investigation set a precedent that affects other social media platforms like Instagram and You Tube?

Absolutely. Instagram and You Tube have similar features (infinite scroll, autoplay, algorithmic recommendations) that could be challenged under the same regulatory framework. The EU's investigation into Meta for addictive design is already underway. If Tik Tok is successfully regulated, other platforms will expect similar regulatory pressure. This could lead to a cascade of platform redesigns across the entire social media industry as each platform faces regulation in different jurisdictions.

Is there scientific evidence that social media design features are actually addictive?

Yes. A growing body of peer-reviewed research links infinite scroll, variable reward schedules, and personalized recommendations to addictive usage patterns. Studies have found that social media notifications can trigger neural activation similar to drug cues, that heavy social media use correlates with reduced gray matter in reward and impulse-control brain regions, and that users with addictive social media patterns share psychological characteristics with people who have behavioral addictions. The American Psychological Association's 2023 health advisory on adolescent social media use also flags problematic, addiction-like use as a particular concern for teens.

What would Tik Tok look like if it were forced to comply with the most restrictive possible interpretation of the regulation?

In an extreme compliance scenario, Tik Tok might require users to explicitly choose infinite scroll (with periodic stopping points by default), manually play videos rather than having autoplay enabled, opt into algorithmic recommendations rather than having them be default, and only receive notifications for actions the user explicitly requested (like follows or messages). These changes would substantially reduce engagement metrics but wouldn't necessarily make the platform unusable. More realistically, Tik Tok will likely reach a negotiated compromise somewhere between current design and maximum restriction.

Conclusion: The Beginning of the End of Addictive Design

We're witnessing a shift. For two decades, social media companies designed features based on a simple metric: engagement. More time on platform equals more valuable company. Engagement maximization became the dominant strategy.

The Tik Tok investigation represents the first serious regulatory challenge to this model. If the European Commission successfully forces design changes, the era of unregulated addictive design is ending.

This doesn't mean the investigation will destroy Tik Tok. The company is too valuable, too embedded in culture, too useful to billions of people. But it probably means Tik Tok will operate differently in Europe than elsewhere, and it almost certainly means other platforms will face similar pressure globally.

From a user perspective, this is complicated. Reducing addictive design might protect your mental health. Or it might make the platform less enjoyable. You might prefer infinite scroll to paginated content. You might like that your feed learns your preferences.

But the Commission's core argument is sound: when platform incentives are misaligned with user wellbeing, regulation becomes necessary. Companies won't voluntarily reduce engagement optimization because engagement optimization generates revenue. Only external pressure—regulatory pressure—changes corporate incentives.

The question going forward isn't whether regulation will happen. It will. The question is how it will be implemented, how serious it will be, and whether it'll actually improve user outcomes or just create compliance theater where platforms pretend to address the problem while maintaining engagement optimization through darker, more subtle patterns.

What's certain is that the days of platforms openly optimizing for compulsion without regulatory constraint are ending. The next chapter of social media will be written in the tension between user wellbeing and platform engagement. That tension will shape every product decision, every algorithm update, every design choice made by every social platform for the next decade.

Tik Tok is just the beginning.

Key Takeaways

- European Commission found TikTok's infinite scroll, autoplay, and personalized algorithm violate Digital Services Act through addictive design patterns

- TikTok faces potential fines up to 6% of annual worldwide revenue (1B+) and mandatory platform redesign requirements

- Neuroscience research suggests these design features can trigger compulsive behaviors comparable to addiction, particularly in teenagers

- This investigation sets global precedent likely to cascade into UK, Australia, Canada regulatory actions on all major platforms

- Compliance will require TikTok to fundamentally redesign core features, potentially reducing engagement and forcing business model changes
