Introduction: The AI Reckoning Nobody Wants to Talk About

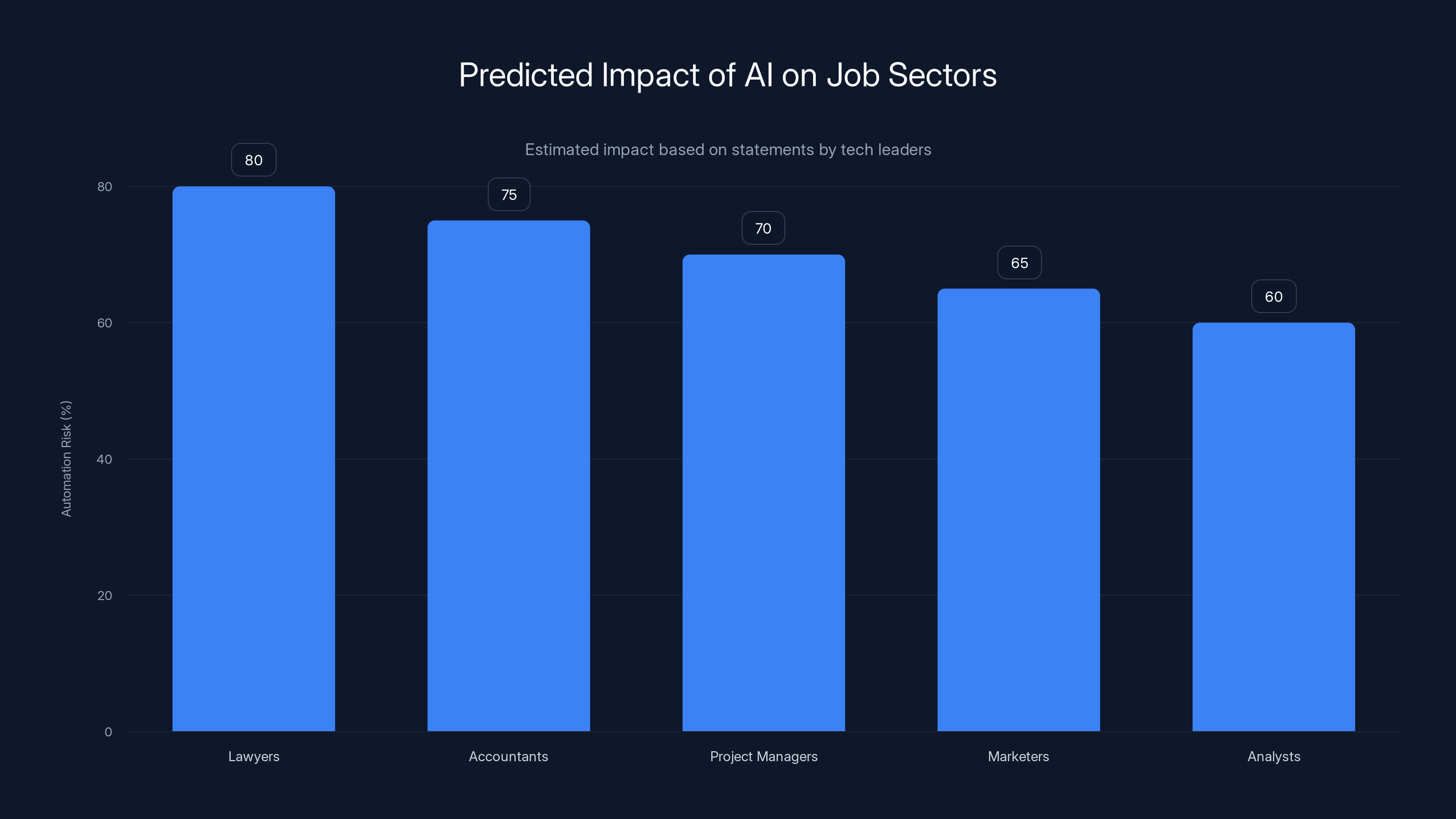

Last year, Microsoft's AI chief Mustafa Suleyman dropped a statement that made every office worker nervous. He said AI would reach human-level performance on most professional tasks—maybe all of them—within 12 to 18 months. Lawyers, accountants, project managers, marketers, analysts. Gone. Automated. Handled by digital minds that don't need coffee breaks or salary negotiations.

Sounds apocalyptic, right? Here's the thing: Suleyman's not alone in thinking this. Anthropic's Dario Amodei warned Congress that AI could wipe out half of all entry-level jobs in the next five years, potentially pushing unemployment to 20%. Even Elon Musk has said work might become optional. The consensus among tech leaders is growing darker by the week.

But here's what nobody's asking: Is this actually going to happen? Or are we watching billionaires make grandiose predictions that sound impressive in a YouTube interview but crumble under scrutiny?

I'll be honest—there's real change coming. AI is getting faster, smarter, and more capable every month. But the timeline? The scope? The "end of white-collar work"? That's where the narrative breaks down. Not because AI isn't dangerous or transformative. It absolutely is. But because disruption doesn't work the way tech CEOs describe it in their public statements.

Let's dig into what's actually happening, what's hype, and what white-collar workers actually need to worry about—because it's not the doomsday scenario you've been hearing.

TL;DR

- The Claim: Mustafa Suleyman says AI will automate most white-collar jobs in 12-18 months

- The Reality: AI is advancing rapidly, but automation timelines are historically 3-5x longer than predicted

- What's Actually Changing: AI will augment, not replace—at least for the next 3-5 years

- The Real Risk: Job displacement won't be universal; it'll hit specific roles (data entry, basic research, routine analysis) first

- Bottom Line: Adaptation matters more than panic; professionals who learn AI collaboration tools will outcompete those who don't

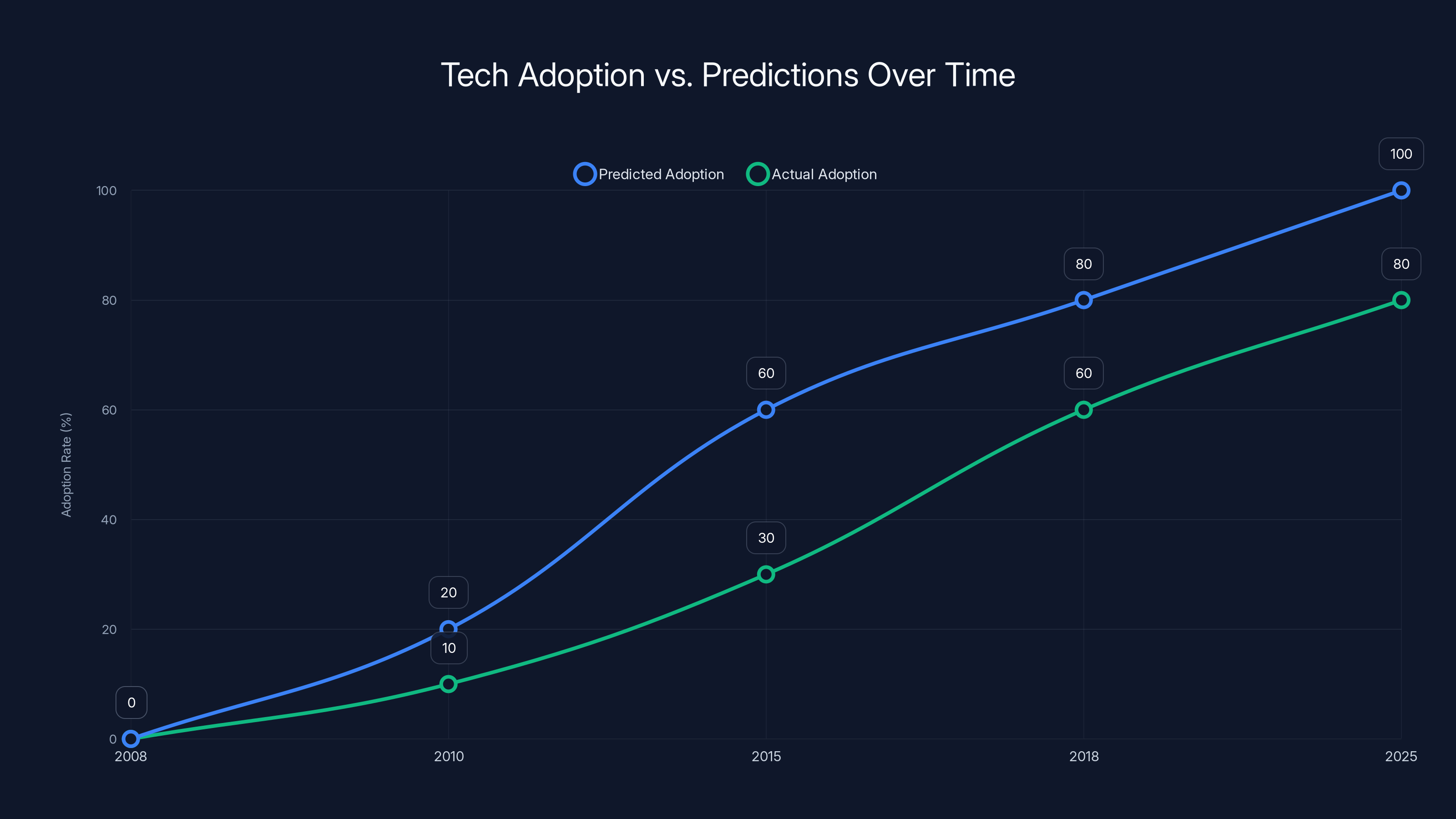

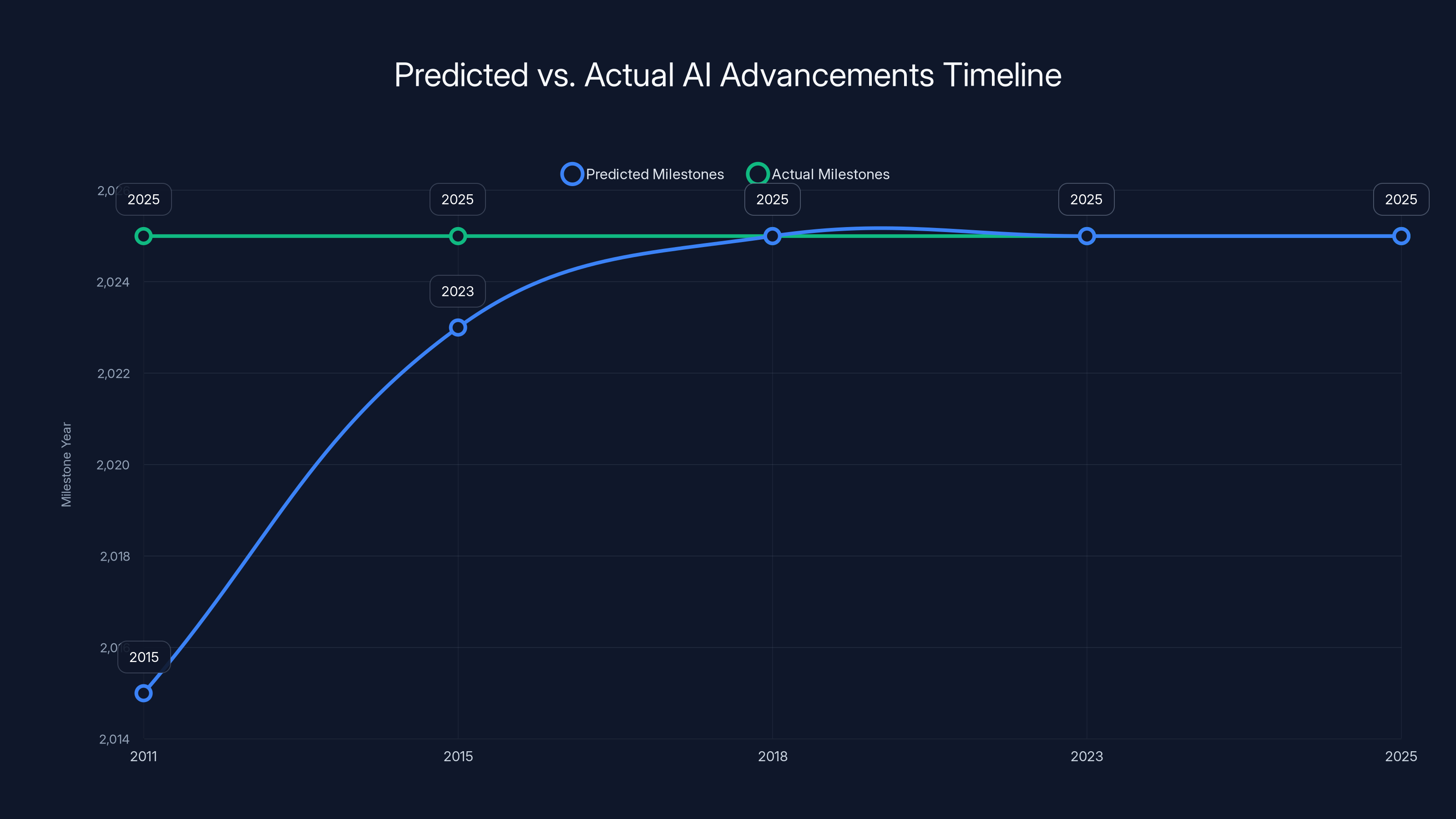

Estimated data shows that tech adoption consistently lags behind predictions, often taking 3-5 times longer due to real-world frictions.

What Suleyman Actually Said (And What It Means)

Mustafa Suleyman's statement wasn't casual speculation. He was explicit: "I think we're going to have a human-level performance on most, if not all, professional tasks." And the timeline: "within the next 12 to 18 months."

When he says "white-collar work," he's talking about sitting at a computer doing tasks that require education but not necessarily deep domain expertise. Lawyers reviewing contracts. Accountants reconciling books. Project managers updating timelines. Marketing strategists writing copy. These are the jobs he thinks are most vulnerable.

But here's what often gets lost in the headlines: Suleyman is also describing a specific version of AI, what he calls "artificial capable intelligence," a stepping stone toward AGI (Artificial General Intelligence). He isn't claiming that today's ChatGPT or Claude can do this. It's his prediction about where AI is heading.

The distinction matters because predictions about AI advancement have been notoriously off. In 2011, experts said we'd have driverless cars by 2015. It's 2025, and autonomous vehicles still can't operate reliably in rain. In 2018, people predicted AI would write most code by 2023. Yet software engineering jobs grew. The pattern is consistent: AI takes longer to displace humans than the people building it think.

Suleyman's track record on predictions is actually pretty mixed. He co-founded Inflection AI in 2022, positioning it as a competitor to OpenAI and Anthropic. By 2024, Inflection was losing the race, and Microsoft hired him. That's not a knock on Suleyman (he's clearly brilliant), but it shows that even AI leaders can misjudge what the market actually wants.

The Historical Pattern: Why Tech Predictions Always Overshoot

There's a consistent pattern in tech disruption that almost everyone ignores: adoption takes 3-5x longer than anyone predicts. This isn't because people are stupid. It's because real-world friction is invisible until you're living in it.

Look at cloud computing. Around 2008, analysts said on-premises servers would be obsolete by 2015. It's 2025, and plenty of enterprises still run critical systems on servers in their own data centers. Why? Regulatory compliance, vendor lock-in concerns, legacy integration nightmares, organizational inertia, and good old-fashioned fear of the unknown.

Or consider mobile banking. Predicted widespread by 2010, yet it took until 2018 before mobile transactions actually surpassed desktop in developed markets. Why? User trust, security concerns, friction in account verification, and the fact that older demographics held enormous wallet share.

Or robots in manufacturing. We've been talking about factory automation for 40 years. Industrial robots exist. They're incredibly capable. Yet humans still operate most production lines because robots can't adapt to slight variations in materials, can't troubleshoot unexpected problems, and cost hundreds of thousands to reprogram for a new product.

The pattern repeats: new technology shows promise, experts extrapolate, timelines compress, reality hits, adoption actually takes much longer than predicted. Yet each time, a new generation of tech leaders is shocked.

Why does this happen? Several reasons:

Organizational Resistance: Even when AI is objectively better at a task, companies don't instantly adopt it. They need to retrain staff, rethink workflows, deal with union concerns, manage client relationships, and handle the disruption of change. That's not exciting, but it's expensive and time-consuming.

Regulatory Friction: If AI is making decisions that affect people (hiring, loans, medical treatment), regulators will demand oversight. The EU's AI Act is already law, and similar regulations being drafted elsewhere will slow deployment further. Lawyers and compliance teams will slow things down intentionally.

Human Resistance: People who've spent 15 years mastering a job don't disappear when AI arrives. They push back. They find reasons why "AI doesn't understand the nuance of our work." Sometimes they're right. Often they're scared. But either way, the resistance is real.

Quality Uncertainty: AI is good, but it's not perfect. A lawyer using AI to draft contracts will still need to review everything. An accountant using AI to reconcile books will still need to check for errors. That overhead—the human-in-the-loop requirement—slows adoption dramatically.

Integration Nightmares: Companies don't work with one tool. They use 10 different systems. Making AI work across all of them, integrating data pipelines, connecting APIs, handling security—that's a project that takes months or years, not weeks.

Given this history, when Suleyman predicts full white-collar automation in 12-18 months, what we're really hearing is: "AI will be technically capable of this in 12-18 months." But capability ≠ deployment. Capability ≠ adoption. Capability ≠ the end of human workers.
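To make the capability-versus-deployment gap concrete, here is a back-of-the-envelope sketch that stretches Suleyman's 12-18 month window by the 3-5x slippage multiplier from the historical pattern described earlier. Treat the multiplier as this article's rough estimate, not a measured constant; the function name is just for illustration.

```python
# Back-of-the-envelope: stretch a predicted timeline by a historical slippage range.
# Assumption: the 3-5x multiplier is a rough estimate, not a measured constant.

def adjusted_timeline(predicted_months: tuple[int, int],
                      slippage: tuple[float, float]) -> tuple[float, float]:
    """Return (earliest, latest) months after applying the slippage range."""
    earliest = predicted_months[0] * slippage[0]  # optimistic prediction x smallest slip
    latest = predicted_months[1] * slippage[1]    # pessimistic prediction x largest slip
    return earliest, latest

low, high = adjusted_timeline((12, 18), (3.0, 5.0))
print(f"{low:.0f}-{high:.0f} months ({low / 12:.1f}-{high / 12:.1f} years)")
```

This prints `36-90 months (3.0-7.5 years)`. Even the optimistic end lands at about three years, which is why "technically capable in 12-18 months" and "widely deployed in 12-18 months" are very different claims.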

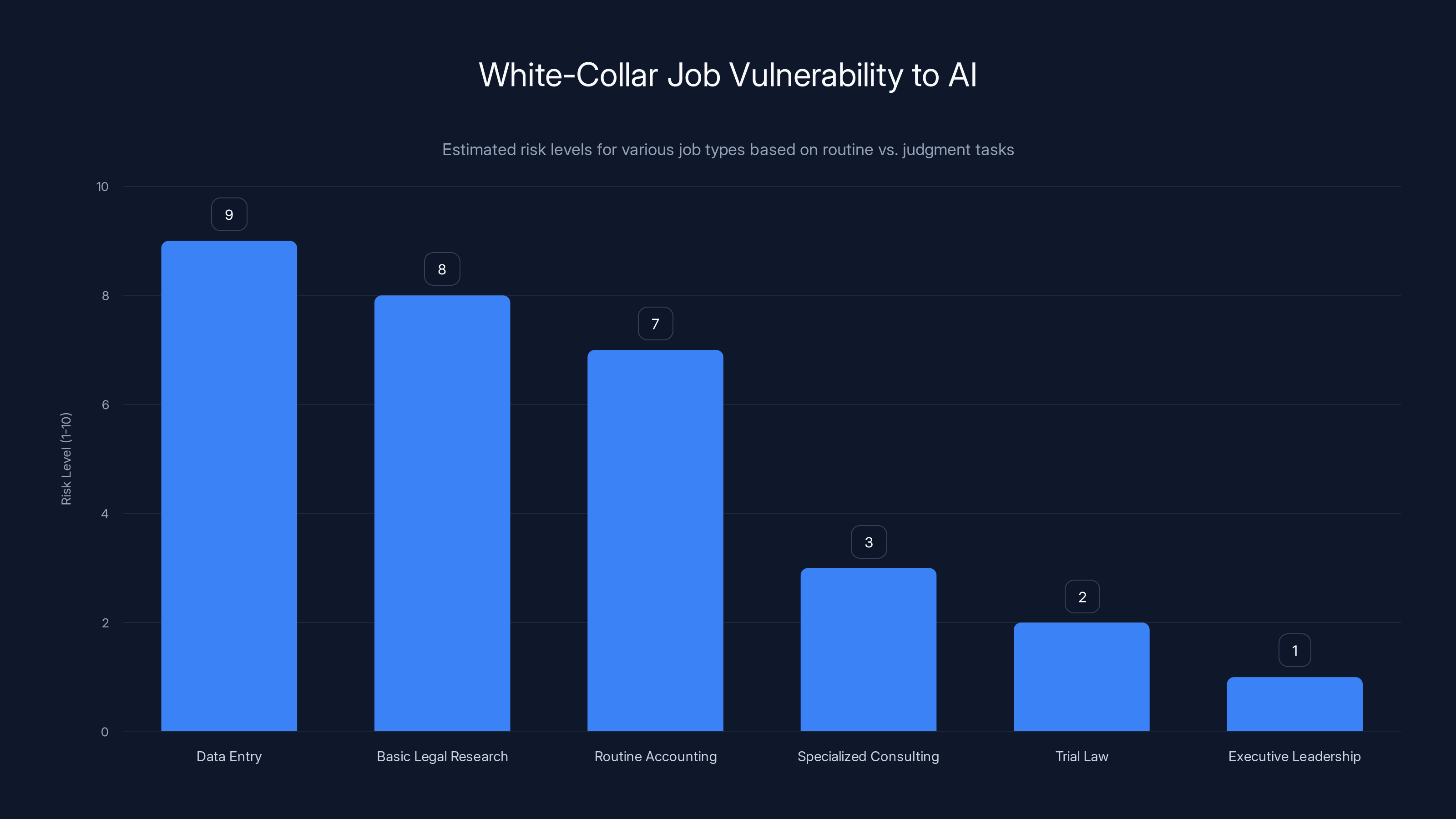

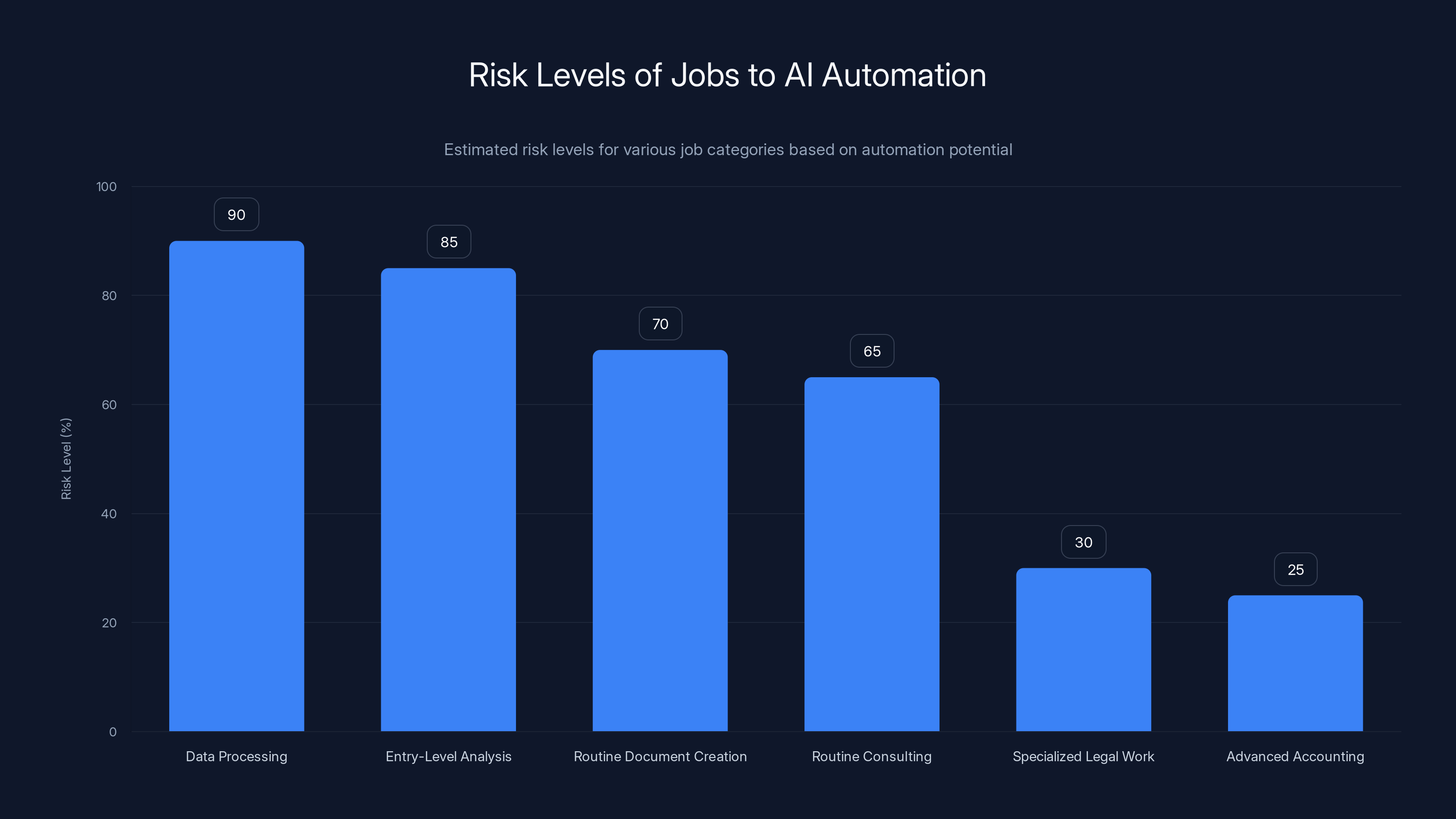

Entry-level positions with routine tasks face higher risk from AI, while roles requiring judgment and expertise, like trial law and executive leadership, are less vulnerable. Estimated data based on task nature.

What AI Can Actually Do Right Now (And What It Can't)

Let's be precise about AI's current capabilities, because the gap between "can do" and "will do" is enormous.

Where AI is Genuinely Strong:

Routine information retrieval and synthesis. Ask ChatGPT to summarize a 50-page report and extract key findings, and it'll do that well. Ask it to find relevant case law for a legal research task, and it'll find options (though a lawyer still needs to verify they're relevant and cited correctly).

Pattern recognition on structured data. AI can identify anomalies in financial records, spot potential fraud signatures, flag unusual inventory movements. But again, a human needs to decide what to do about it.

Content generation for templates. Writing a standard email? AI excels. Writing a social media post? Great. Writing marketing copy for a product you understand? Very solid. But writing something truly novel that requires deep expertise? Still shaky.

Code completion and routine programming. AI can finish your function for you, auto-complete variable names, suggest common patterns. It's like autocomplete on steroids. But asking AI to architect a system for 10 million concurrent users? That still needs a senior engineer thinking through trade-offs.

Where AI Still Struggles:

Novel problem-solving that requires domain expertise. An accountant's job isn't just about following rules. It's about understanding a client's specific financial situation, spotting opportunities, identifying risks that don't fit standard patterns, and making recommendations that account for edge cases. AI can help with the research phase, but the judgment call still requires a human with 10 years of experience.

Interpretation of ambiguous requirements. When a client says "we want our marketing to resonate better," what they actually mean might be: "we need to reach a younger demographic," or "our message is getting lost in the noise," or "our competitors are eating our lunch." A human marketer reads between the lines. AI reads the literal text.

Relationship management and negotiation. "I've worked with that vendor for 15 years, and I know how to handle them. They'll push back on price, but they'll move on delivery timeline. Here's how we structure the deal." That's based on relationship context that AI simply doesn't have.

Ethical judgment in gray areas. Should we fire this employee? Should we approve this risky project? Should we pivot the entire product strategy? These aren't questions with clear right answers. They require judgment that comes from experience, intuition, and accountability. An AI can present options. A human has to decide and live with consequences.

The Honest Assessment:

AI is becoming incredibly useful as a tool. It's not becoming a replacement for white-collar workers yet. Instead, it's shifting the nature of the work: less time on routine tasks (research, drafting, summarizing, calculating), more time on judgment calls that require expertise and accountability.

This is honestly good news if you're a professional. It means your job gets less soul-crushing, not less real. But it also means you need to get good at working with AI—fast.

The Jobs Most Vulnerable to AI Automation (And Why)

If we're being realistic, some white-collar jobs are definitely at higher risk than others. It's not all jobs equally. It's specific roles, specific functions.

Highest Risk: Data Processing and Entry-Level Analysis

Data entry jobs are already being eliminated. Companies are literally saving money by automating these roles right now, not in 12 months. Why? Because the task is fully routine, AI is already excellent at it, and there's no relationship component.

Same with basic data analysis. If your job is "run these reports, put numbers in a spreadsheet, send to the boss," AI can do that. Not perfectly, but well enough that a manager can quickly spot-check and publish.

Entry-level research roles are vulnerable too. Junior associate at a law firm doing legal research? Contract researcher gathering market data? Junior analyst preparing background on a company? These jobs might not disappear, but they'll require fewer people. One junior analyst plus AI might do the work that took three juniors five years ago.

Medium Risk: Routine Document Creation and Routine Consulting Work

Any job that's mostly about following a template and filling in variables. Basic tax preparation (not complex cases). Routine legal document generation (not litigation strategy). Standard business proposal writing. These are all getting easier to automate.

Routine consulting too—the work that's "we've solved this problem 500 times, let me apply the standard solution." If you're doing the same advice over and over, AI can start handling more of it. A partner still needs to review and customize, but the work gets distributed differently.

Lower Risk: Jobs Requiring Deep Domain Expertise, Judgment, or Relationships

Specialized legal work. Trial lawyers aren't getting replaced by AI because trials require real-time judgment, reading a jury, adapting strategy based on how arguments land. That's not in a rule book.

Advanced accounting and tax strategy. For someone trying to optimize a complex corporate structure across multiple jurisdictions? That needs a seasoned advisor who understands the client's specific situation, the regulatory landscape, and creative legal strategies. AI helps with research, but a human makes the call.

Client-facing roles that depend on relationship and trust. A successful consultant or account manager isn't replacing themselves by handing things over to AI. Their value is knowing the client, understanding politics, spotting what the client needs before they ask for it, and having accountability for recommendations.

Leadership and strategy roles. "What should our company do?" That's not an AI question. It's a human judgment call that requires context, risk tolerance, board relationships, and the willingness to make a call and live with the consequences.

The Real Pattern:

Jobs are vulnerable when they're routine, when there's no relationship component, when there's no accountability on the AI, and when the work is already documented in systems. The jobs that'll survive longest are ones requiring judgment, relationships, and accountability.

Which means the real disruption isn't "AI replaces workers." It's "AI handles routine tasks, humans handle judgment." But if routine tasks are most of your job, you're at risk.

The "Artificial Capable Intelligence" Concept: What Suleyman Actually Believes

Suleyman specifically mentioned something called "artificial capable intelligence," a term he coined. Understanding what he means matters because it's more precise than just "AI will replace workers."

His idea is that AI is progressing through stages:

Stage 1: Narrow Task Automation (where we are now). AI excels at specific, defined tasks. ChatGPT is phenomenal at answering questions. Midjourney is phenomenal at generating images. But each tool does one thing.

Stage 2: Artificial Capable Intelligence (next 12-18 months, according to Suleyman). An AI system that can do many different tasks competently, switching between them, understanding context, adapting to new situations. Less like a specialist consultant, more like a capable generalist employee who can handle multiple responsibilities.

Stage 3: Artificial General Intelligence (the far future). An AI that matches human-level intelligence across all domains. Can do anything a human can do. This is still theoretical and may not be possible.

Suleyman's 12-18 month prediction is specifically about Stage 2: capable AI, not just narrow AI.

Here's the critical thing: even if capable AI arrives in 12-18 months, deploying it across the entire white-collar workforce happens on a totally different timeline. You can have a technology and not use it at scale for years. It takes time to:

- Train people on how to use it

- Figure out liability and insurance implications

- Get regulatory approval

- Integrate with existing systems

- Build the organizational processes around it

- Deal with the political and social friction of massive job displacement

So Suleyman's probably right that the technology will be ready in 12-18 months. But actual deployment? That's 3-5 years minimum for early adopters, 10+ years for broad adoption.

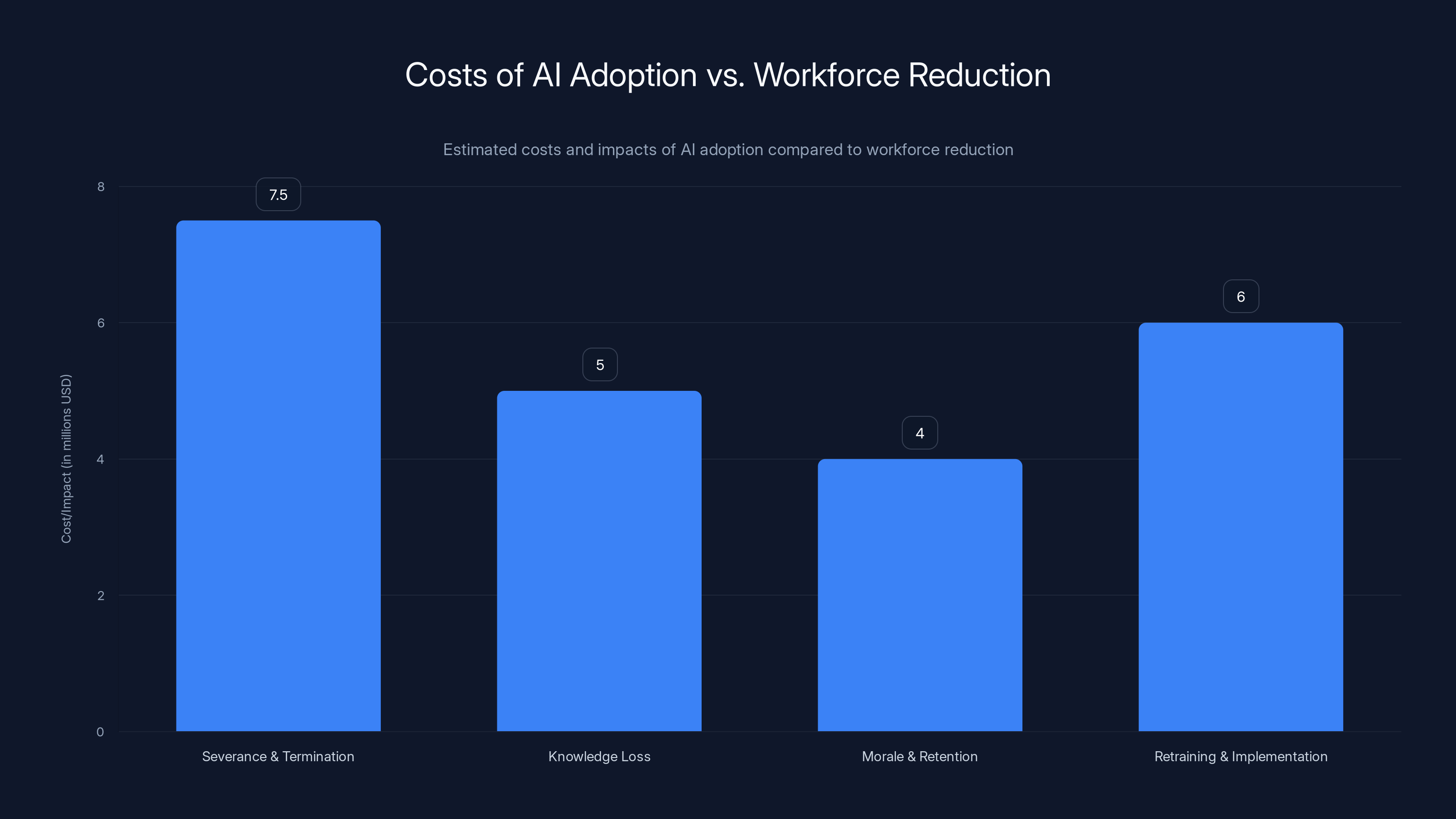

Estimated data shows that the costs of severance, knowledge loss, morale impact, and retraining can be significant, often outweighing immediate savings from workforce reductions.

Why Dario Amodei's 20% Unemployment Prediction Needs Context

Dario Amodei from Anthropic made a prediction that frankly spooked people more than Suleyman's. He said AI could push unemployment to 10-20% in the next one to five years, potentially wiping out half of all entry-level white-collar jobs.

Again, this is technically possible. But the context matters:

Amodei presented this to Congress as a warning, not a prediction. He was saying: "This is the risk if we don't prepare. This is what could happen if we treat AI deployment the same way we've treated other economic disruptions—with no planning, no retraining, no safety net."

That's different from "this will definitely happen."

Historical precedent: When ATMs were introduced, people predicted massive unemployment among bank tellers. Instead, US teller employment kept growing for decades after ATMs arrived, because:

- Banks needed fewer tellers per branch but opened more branches

- Teller work evolved to include customer service and sales

- The pace of ATM rollout was gradual enough that the job market adapted

Same with email killing letter carriers (didn't happen at the scale predicted), or e-commerce destroying retail (it did cause disruption, but retail employment stayed relatively stable until the pandemic hit).

The pattern: disruption happens, but usually at the edges first, and usually slower than predicted. Entry-level jobs are definitely at highest risk. But 20% unemployment in 1-5 years would require:

- AI deployment at massive scale across enterprises

- Deliberate decisions by companies to cut staff instead of augment with AI

- No new jobs created to replace the displaced workers

- No government intervention or retraining programs

- No massive cultural or regulatory backlash that slows adoption

One or two of these might happen. All of them? Very unlikely.

The more realistic scenario: 20% of entry-level job roles get disrupted over 3-5 years, but the labor market adapts, new roles emerge (someone has to supervise the AI, train it, debug it, handle exceptions), and unemployment rises somewhat but doesn't hit 20%.

Is that still disruptive? Absolutely. Is it apocalyptic? Less likely.

What "Human-Level Performance" Actually Means (And Where AI Still Falls Short)

When Suleyman says AI will achieve "human-level performance on most, if not all, professional tasks," what does that actually mean?

It's ambiguous, and the ambiguity matters.

One interpretation: AI can do specific tasks as well as an average human. It can draft a contract as well as a junior associate, or prepare a tax return as well as someone with 2 years of experience.

Another interpretation: AI can perform entire job roles without human oversight. An AI lawyer can handle a client's legal needs. An AI accountant can run a company's books.

These are very different thresholds. The first is probably achievable in 12-18 months. The second is much further away.

Here's the honest truth: AI is great at narrow tasks. Can it match human performance on "draft a business email"? Yes. On "understand this company's entire legal exposure and recommend strategy"? Not yet.

Think about your own job. How much of it is truly routine? How much requires you to combine ten different pieces of information, consider edge cases, make judgment calls, and account for things that aren't in any training data?

Most professional jobs are probably 40-60% routine work and 40-60% judgment/context work. AI is currently excellent at the routine part. It's getting better at the judgment part, but it's not there yet.

Human-level performance on tasks doesn't mean human-level performance on jobs.

The Real Disruption: Augmentation, Not Replacement

Here's what I actually think will happen, based on how technology actually gets adopted:

AI will augment white-collar workers far more than it replaces them. At least for the next 3-5 years.

What this looks like in practice:

Lawyers: Instead of "AI replaces lawyers," you get "one lawyer plus AI tools replaces two lawyers." The lawyer spends less time on legal research (AI does that), more time on strategy and client relationships. Some junior associate positions disappear. The partner role evolves. The market absorbs the shift over 5-7 years.

Accountants: Automated accounting software already exists and hasn't eliminated accountants. What it did: eliminate data entry clerks, shift accountant work toward tax strategy and advisory. AI will accelerate that. Less time on reconciliation (AI does it), more time on tax planning and business advice. Some accounting firms contract. Others grow because they can serve more clients with fewer people.

Marketing: AI tools for content generation already exist. What actually happens: marketers spend less time on copywriting, more time on strategy and data interpretation. The ones who learn to work with AI get more productive. The ones who resist become less competitive. Some junior copywriter roles dry up. The market shifts over 3-4 years.

Project Management: AI can generate status reports, flag risks, suggest schedule optimizations. A project manager using AI is more productive. Does that mean half as many PMs are needed? Maybe. But if you can oversee more projects with less overhead, companies tend to increase the number of projects they tackle, not just cut PMs.

Strategic Work: This is the last to be automated because it requires judgment. An AI can be an excellent analyst and suggest options. A human executive chooses the strategy and takes accountability. This trend might not change much in the next 10 years.

The net effect: white-collar work evolves from routine task execution toward judgment and strategy. The number of white-collar jobs doesn't collapse. The profile of the workers changes. Some people adapt well. Some don't. The market absorbs it gradually.

This is honestly better than if AI just replaced workers, because it means white-collar work becomes less soul-crushing and more interesting. But it also means you need to get good at AI collaboration—because everyone else is.

This line chart illustrates the gap between predicted and actual AI advancements, highlighting the consistent delay in achieving AI milestones.

The Skills That Will Actually Survive AI Disruption

If you're worried about job displacement, stop worrying and start building skills that AI can't automate.

Critical Thinking and Judgment: AI can present options. Humans choose. The professionals who survive are the ones who make good choices, account for complexity, and take accountability for outcomes. This is fundamentally human and will remain valuable.

Relationship and Influence: Business is personal. A consultant's value isn't just their expertise. It's their ability to listen, understand unstated needs, build trust, and influence decisions. That's all human. AI can help with the information gathering part, but the relationship part is irreplaceable.

Complex Problem-Solving Across Domains: When problems touch multiple disciplines—when a business problem involves law, finance, operations, and strategy—you need a human who understands all of them and can navigate the politics. AI is great at narrow expertise. Humans are better at connecting across domains.

Teaching and Knowledge Transfer: The irony: as AI gets smarter, people need better training on how to use it. The people who can teach others to work with AI effectively will be highly valued. This skill becomes more valuable as AI becomes more common.

Ethical and Regulatory Navigation: When decisions affect people—hiring, lending, treatment, content moderation—regulators want humans accountable. An AI can make the recommendation, but a human needs to approve it and take responsibility. The professionals who understand both the AI and the regulatory landscape will be needed.

Specialized Domain Expertise: If you deeply understand something that's hard to encode in AI (like surgery, or litigation strategy, or managing teams), your job is more secure. The moat is: "I know things that AI doesn't." The deeper your expertise, the harder it is for AI to replicate.

The Common Thread: The jobs that survive are the ones where human judgment, accountability, and relationships matter. Not because AI isn't smart enough, but because society won't let AI be the final decision-maker for things that affect humans.

If your job is 100% routine task execution, that's at risk. If your job involves any of the above? You're probably fine if you adapt.

What Companies Are Actually Doing (Not What They're Saying)

There's a big gap between the hype about AI automation and what companies are actually doing with AI right now.

What companies say: "We're deploying AI to transform our business."

What companies are actually doing: "We're using AI to help employees do their existing jobs faster."

Why the gap? Because actually replacing workers at scale is hard. You need to comply with labor laws on layoffs. You need to handle severance. You need to manage morale ("if the company fires people, are we next?"). You need to retrain remaining staff. You need to handle the PR ("we eliminated jobs with AI" plays poorly).

But you do need to handle rising costs. Salaries go up 3-4% annually. AI productivity tools cost $5-50/person/month and don't demand raises. So companies are adopting AI to maintain productivity with the same headcount, not to cut headcount.
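Here is the cost comparison as a quick sketch. The $80,000 salary, 3.5% raise, and $30/month seat price are hypothetical figures picked from the ranges in the paragraph above, not data from any company.

```python
# Compare one year's raise with one year of an AI tool seat.
# Assumptions (hypothetical): $80,000 salary, 3.5% raise, $30/month per seat.

def annual_raise_cost(salary: float, raise_rate: float) -> float:
    """Extra payroll cost per year from a single raise."""
    return salary * raise_rate

def annual_tool_cost(monthly_price: float) -> float:
    """Cost of one AI tool seat for twelve months."""
    return monthly_price * 12

raise_cost = annual_raise_cost(80_000, 0.035)
tool_cost = annual_tool_cost(30)
print(f"Raise: ${raise_cost:,.0f}/yr, tool seat: ${tool_cost:,.0f}/yr")
```

That prints `Raise: $2,800/yr, tool seat: $360/yr`. Even at the top of the $5-50 range ($600/yr), the seat costs a fraction of one raise, so it pays for itself if it buys even a modest productivity gain. That's the logic behind using AI to hold headcount steady rather than to cut it.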

This means:

Near-term (next 2-3 years): AI adoption increases productivity. Headcount stays relatively flat. Some hiring slows down. Some low-value jobs convert to machines. Overall employment doesn't collapse.

Medium-term (3-7 years): As AI becomes table stakes, companies that adopted early have a competitive advantage. They can undercut on price or over-deliver on quality. Competitors have to adopt or die. Employment consolidates—winners grow, losers shrink. Some job categories disappear. New ones emerge.

Long-term (7+ years): We're in uncharted territory. Society will have adapted, new regulations will exist, new job categories will have emerged, and we'll be worrying about something different.

The historical pattern: when technology disrupts an industry, it doesn't happen overnight. It takes 5-15 years. Some workers transition successfully. Some don't. New opportunities emerge. Society gradually adjusts. We look back and say, "Of course that happened," and can't imagine why we didn't see it coming.

The Regulatory and Political Headwinds

One thing tech leaders often underestimate: regulation and politics will slow AI deployment.

EU AI Act: Already passed. Restricts high-risk AI applications. Requires transparency, human oversight, documentation. This slows deployment.

US Executive Orders: Biden issued an AI safety executive order in 2023. It didn't ban AI, but it required federal agencies to evaluate risks before adoption. President Trump revoked it in January 2025, but state regulations are coming too.

Labor Politics: As AI eliminates jobs, there will be political pressure to tax AI adoption or slow deployment. Expect unions to push back. Expect politicians to grandstand about "protecting American jobs."

Liability Concerns: If AI makes a bad decision (fires the wrong person, denies a loan unfairly, provides wrong medical advice), who's liable? The company? The AI vendor? The algorithm designer? Until this is clarified, companies will move slowly.

Ethical Pressure: Companies care about brand reputation. If a company is perceived as using AI to ruthlessly cut jobs, it affects recruitment, client relationships, and PR. Most companies will use AI to augment, not replace, for reputation reasons if nothing else.

All of these slow deployment to probably 50-70% of what pure technical capability would allow.

Suleyman's 12-18 month prediction assumes technical capability. But deploying at scale? Regulatory approval, training, integration, and political friction could stretch that to 3-5 years easily.

Data processing and entry-level analysis jobs are at the highest risk of AI automation, while specialized legal work and advanced accounting face lower risks. Estimated data based on job functions.

What You Should Actually Do (Practical Steps)

Enough doom and gloom. Here's what you should actually do:

Immediately (Next Month):

Pick an AI tool and use it on your actual work. ChatGPT Plus, Claude, Runable: it doesn't matter which. Use it to:

- Summarize something you normally summarize manually

- Draft something you normally draft manually

- Analyze data you normally analyze manually

Spend 5-10 hours actually getting good at using it. Not playing with it. Actually integrating it into your workflow. You'll learn what AI is actually good at versus the hype.

Next 3 Months:

Map your job to routine versus judgment work. Look at your calendar for the past month. What did you spend time on that was routine? What required judgment?

For the routine stuff, figure out if AI or automation could handle 50% of it. If yes, learn those tools. If no, why not? (Sometimes the answer is: "the tools don't exist yet" or "our systems are too old." That's important information.)
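If your calendar exports to a spreadsheet, the routine-versus-judgment audit can be as simple as the sketch below. The task names and hours are made up, and the routine/judgment labels are ones you assign yourself; nothing here reads a real calendar API.

```python
# Tally a month of calendar time into routine vs. judgment work.
# Assumption: entries are hand-labeled; these tasks and hours are made up.
from collections import defaultdict

entries = [
    ("Weekly status report", "routine", 6.0),
    ("Client strategy call", "judgment", 4.0),
    ("Data cleanup in spreadsheets", "routine", 10.0),
    ("Vendor negotiation", "judgment", 3.0),
    ("Meeting notes and summaries", "routine", 5.0),
]

def hours_by_category(items):
    """Sum hours per label (e.g. 'routine', 'judgment')."""
    totals = defaultdict(float)
    for _task, category, hours in items:
        totals[category] += hours
    return dict(totals)

totals = hours_by_category(entries)
total_hours = sum(totals.values())
for category, hours in sorted(totals.items()):
    print(f"{category}: {hours:.1f} h ({hours / total_hours:.0%})")

# Half of the routine hours is the "could AI handle 50% of it?" target.
print(f"Target to automate: {totals['routine'] * 0.5:.1f} h/month")
```

With these sample entries it reports 25% judgment and 75% routine, and a target of 10.5 hours a month to hand off. The numbers matter less than the exercise: once the routine hours are visible, you can pick which ones to try an AI tool on first.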

Next 6 Months:

Start building skills in the categories I mentioned: judgment, relationships, complexity, teaching, ethics. If your job is pure routine, start looking at adjacent roles that involve more judgment. Have conversations with people in those roles. See if you can transition.

If you're in leadership, think about how AI affects your team. Which people will adapt easily? Which will struggle? Can you help them transition before the market forces it?

Next 1-2 Years:

Keep learning. The landscape shifts every six months or so: the tools get better, new tools emerge, and workflows evolve. Stay in the loop. Join online communities where people discuss AI tools for your profession. (There are probably Slack channels or Reddit communities specific to your field already.)

Most importantly: don't panic. Panic makes you reactive. Learning makes you proactive. Panic leads to poor decisions. Learning leads to better positioning.

Case Study: How AI Is Actually Playing Out in Specific Industries

Legal Services:

Large law firms are already using AI for contract review, document analysis, and legal research. Firms like Weil, Gotshal & Manges deployed AI tools for due diligence. What happened? Not massive job cuts. Instead, they took on more clients, charged slightly less (more efficient), and shifted junior associate work away from "document review" toward higher-value tasks.

Some contract review positions went away. But paralegal and junior associate demand stayed relatively stable because the firm grew.

Small law firms haven't adopted as heavily because the upfront cost and integration work don't make sense for a 10-person firm.

Prediction: Big legal will adopt AI heavily over 2-3 years. Litigation practices (harder to automate) will grow. Transactional practices (easier to automate) will consolidate. Some junior associate positions will disappear. Partner roles will evolve. But the number of lawyers won't collapse.

Financial Services and Accounting:

Deloitte is already using AI for audit and tax preparation. Large firms are consolidating—merging because AI allows them to do the same work with fewer people. This is real job loss, but it's structural consolidation, not replacement overnight.

Small accounting firms are adopting AI slower. Some will disappear. Some will specialize (high-complexity tax, or niche consulting). The overall number of accountants probably declines 10-15% over 5 years, not 50% in 18 months.

Customer Service:

This one's already happening. Chatbots handle routine inquiries. Human agents handle complex issues. Some customer service rep positions went away. But companies opened more complex support roles (handling escalations, training the AI, managing edge cases).

Net job loss? Maybe 20-30% over 3-5 years. But new roles grew too. The work shifted.

Software Development:

AI code completion tools (like GitHub Copilot) are becoming standard. Did this eliminate junior developers? No. It made good developers more productive. Some junior developer positions became more competitive (you need to be better to compete). Some expanded because companies could take on more projects.

Net effect: more senior developers, fewer junior developers, and a higher bar for entry into the field.

The Pattern:

In each case, AI is mostly being used to augment workers rather than replace them. Companies using it well get more productive. They tend to grow, not contract. Jobs shift rather than disappear outright. The transition takes 3-5 years, not 12-18 months.

The Economics of AI Adoption (Why Companies Don't Just Cut Everyone)

Here's an economic reality that rarely gets discussed: even when AI can do something cheaper, companies don't always choose to cut headcount.

Why? Because the costs of replacing people are enormous:

Severance and Termination: If you cut 1,000 employees, severance (even just 2-3 months of pay) might cost $5-10 million. Legal liability? Millions more.
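The severance figure is simple arithmetic, and it's worth checking against your own numbers. Here's a minimal sketch, assuming severance is a flat number of months of base salary and ignoring benefits, payroll taxes, and legal costs. The function names and the $80,000 salary are hypothetical illustrations, not figures from any real company:

```python
def severance_cost(headcount: int, annual_salary: float, months: float) -> float:
    """Total severance if every departing employee gets `months` of base pay.

    Assumption: severance is base salary only; benefits, taxes, and
    legal liability are deliberately left out.
    """
    return headcount * annual_salary * months / 12


def implied_annual_salary(total_cost: float, headcount: int, months: float) -> float:
    """Average annual salary implied by a total severance estimate."""
    return total_cost * 12 / (headcount * months)


# What average salaries does a $5-10 million range for 1,000 people imply?
low = implied_annual_salary(5_000_000, 1_000, 2)
high = implied_annual_salary(10_000_000, 1_000, 3)
print(f"Implied average salary: ${low:,.0f} to ${high:,.0f}")

# Hypothetical: the same cut at an $80,000 average salary.
for months in (2, 3):
    cost = severance_cost(1_000, 80_000, months)
    print(f"{months} months of pay: ${cost:,.0f}")
```

Under these assumptions, a $5-10 million range corresponds to average salaries of roughly $30,000 to $40,000. At a higher hypothetical salary of $80,000, 2-3 months of pay comes to about $13-20 million before any legal costs, which only strengthens the point.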

Knowledge Loss: When you cut people, you lose institutional knowledge. The person who left understood the client relationships, knew the weird workarounds in the system, and understood the politics. Replacing that is hard.

Morale and Retention: If you cut 20% of staff, the remaining 80% know they could be next. Retention suffers. People job hunt. Your best people leave first. New hiring becomes harder. Productivity drops.

Retraining and Implementation: Building and implementing an AI system that replaces people takes time and money. Integration with legacy systems, testing, training, rollout. The project probably takes 1-2 years and costs millions.

Alternative: Grow, Don't Shrink:

If AI makes your team 20% more productive, a smarter move is often to take on more work, grow revenue, and hire more people. You don't fire anyone. You just shift the work they do.

Or, let productivity gains become profit instead of payroll reductions. Your margins improve without cutting jobs.
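The grow and shrink options imply different numbers. A 20% productivity gain means the same team produces 1.2 times as much or, equivalently, the old output needs only 1/1.2 of the team: about a 16.7% reduction, not 20%. A minimal sketch; the function name and the 10-person team are hypothetical:

```python
def grow_or_shrink(team_size: int, productivity_gain: float) -> tuple[float, float]:
    """Compare two ways to use a productivity gain.

    Returns (output with the same team, headcount needed for the same output),
    both measured in "pre-AI worker" units.
    """
    factor = 1.0 + productivity_gain
    output_same_team = team_size * factor       # grow: keep everyone, do more work
    headcount_same_output = team_size / factor  # shrink: same work, fewer people
    return output_same_team, headcount_same_output


output, headcount = grow_or_shrink(10, 0.20)
print(f"Keep all 10 people: output equal to {output:.1f} pre-AI workers")
print(f"Hold output flat: about {headcount:.2f} people needed")
```

The gap between 20% and 16.7% is small, but it shows the trade-off: the same gain can appear as two extra workers' worth of output or as fewer than two jobs cut.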

This is why, historically, productivity gains from technology haven't led to mass unemployment. They've led to economic growth and new jobs. Computers and automation keep improving, yet employment stays relatively stable (or grows), because:

- Productivity gains lead to lower prices

- Lower prices increase demand

- Increased demand requires more workers (to make more stuff, provide more services, etc.)

So AI will probably follow the same pattern: companies get more productive, the market grows, employment shifts but doesn't collapse, and 20 years from now we wonder why we ever thought AI would cause massive unemployment.
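The price-demand chain in the list above has a hidden condition: it only adds jobs when demand responds strongly enough to lower prices. Here's a minimal sketch of a textbook toy model, assuming prices fall in proportion to productivity, demand has a constant price elasticity, and the labor needed equals output divided by productivity. Under those assumptions, employment scales as productivity raised to the power (elasticity - 1). The function name and parameter values are illustrative, not estimates for any real industry:

```python
def employment_multiplier(productivity_gain: float, demand_elasticity: float) -> float:
    """Factor by which employment changes in a toy constant-elasticity model.

    Hypothetical assumptions, for illustration only:
    - price equals unit labor cost, so price falls as 1 / productivity;
    - quantity demanded ~ price ** -demand_elasticity;
    - labor needed = quantity / productivity.
    Together these give employment ~ productivity ** (demand_elasticity - 1).
    """
    productivity = 1.0 + productivity_gain
    return productivity ** (demand_elasticity - 1.0)


# A 20% productivity gain under three different demand elasticities.
for elasticity in (0.5, 1.0, 1.5):
    m = employment_multiplier(0.20, elasticity)
    print(f"elasticity {elasticity}: employment x{m:.3f}")
```

With an elasticity of 1.5, a 20% productivity gain raises employment by about 9.5%; at exactly 1.0, employment is unchanged; at 0.5, it falls by about 8.7%. In this model, the optimistic pattern only holds where cheaper services unlock enough new demand.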

Estimated data based on tech leaders' predictions suggests high automation risk for various white-collar jobs, with lawyers facing the highest risk.

The Honest Case for Concern (Without the Doomsday Framing)

I don't want to be all "AI is fine, no worries." There are legitimate concerns.

Job Transitions Are Painful: Even if net employment stays stable, some people will lose their jobs. Transitioning to a new role sucks. Retraining takes time. Your paycheck might go down while you learn new skills. This is real hardship, even if it's not apocalyptic.

The Transition Might Be Faster Than We Can Handle: If AI adoption accelerates faster than expected, and companies do cut headcount aggressively, unemployment could spike 5-10% in certain regions or industries. That's painful even if it's not 20%.

Inequality Could Increase: High-skill workers (people who can work with AI) will be in demand and well-paid. Low-skill workers will face more competition and lower wages. This could exacerbate inequality. Politically and socially, that's a problem even if aggregate employment is fine.

Some Industries Will Contract: Some professions will genuinely shrink. Accounting might need 30% fewer people in 10 years. Entry-level positions in many fields will disappear. If you're in those fields, you need to plan.

Regulatory Risk: If governments panic about AI job displacement, they might introduce rules that slow innovation or economic growth. That could create secondary unemployment.

But none of this is "AI will replace most workers in 12 months." It's "AI will disrupt the labor market, people will struggle to adapt, we need better retraining programs, and some regions/industries will be hit harder than others."

That's a very different problem from the one Suleyman and Amodei are describing, and it calls for very different solutions.

Policy Solutions That Actually Matter

If disruption is coming (and it probably is, just slower than predicted), policy matters.

Better Retraining Programs: Community colleges and online platforms need better AI training. Government should fund it.

Income Support During Transitions: If someone's job is disrupted, they need support while retraining. Unemployment benefits should be longer and more generous for job displacement.

Education That Evolves Faster: K-12 and college education are designed for jobs that existed in 1995. We need education that prepares people for continuous learning and adaptation.

Tax Policy That Shares Gains: If AI productivity goes to corporate profits and executive bonuses, inequality increases and resentment grows. Tax policy should ensure that some gains go to displaced workers or public benefits.

Regulation That Enables, Not Blocks: Excessive regulation could slow beneficial AI adoption. But absent regulation, companies will over-deploy AI in risky ways (hiring decisions, medical decisions, criminal justice). Smart regulation balances both.

These are solvable problems. Countries that invest in them will manage AI transitions well. Countries that don't will face political backlash and social disruption.

The Bottom Line: Suleyman's Right About Capability, Wrong About Timeline

Mustafa Suleyman is probably right that AI will become capable of doing most professional tasks at a human level within 12-18 months. The technical progress is real. The systems are getting better every month.

But "capable of" is different from "deployed at scale." And "deployed at scale" is different from "the end of white-collar work."

Here's what I think actually happens:

12-18 months: AI systems reach the technical capability to handle most routine professional tasks without human oversight. Big headlines. Lots of fear.

18-36 months: Enterprise adoption accelerates. Companies start deploying AI more aggressively. Some job categories show measurable decline. The first meaningful labor market impact appears (unemployment in some fields ticks up). People start getting nervous.

3-5 years: AI is standard in most large enterprises. Job market has adapted. Some positions disappeared. New ones emerged. Employment has shifted but not collapsed. We've adjusted to the new normal.

5-10 years: We're living in the AI-augmented economy. People look back and say, "That was surprisingly smooth. Why were we so panicked?"

The people who do well: ones who learned to work with AI early, adapted their skills, and repositioned toward judgment-heavy work.

The people who struggle: ones who didn't adapt, clung to routine work, and got displaced without a backup plan.

But collectively? We'll figure it out. Humans usually do.

Conclusion: Preparation Beats Panic

AI is coming. It's going to be disruptive. White-collar work will change. Some jobs will disappear. Some will evolve. New ones will emerge.

But it's probably not happening in 12 months. It's probably happening over 3-7 years. And the people who prepare now will do better than people who panic or wait.

So here's what you actually do:

Don't panic. Panicked decisions are bad decisions.

Do learn. Spend an hour this week getting good at an AI tool. Figure out where it helps in your workflow. Understand what it's good and bad at.

Do think strategically. Are you in routine work? Start planning a shift toward judgment work. Are you a manager? Start thinking about how your team adapts.

Do stay informed. AI changes fast. What's true today might be outdated in six months. Follow the real experts (not the hype), read good analysis, stay in the conversation.

Do advocate for better policy. If you're worried about disruption, push for retraining programs, better unemployment benefits, education reform. Policy actually matters here.

Mustafa Suleyman might be right about the direction. But the timeline? That's where things get fuzzy. And in that fuzzy space is where the real work of adaptation happens.

The future isn't predetermined. It's shaped by choices we make now. Make smart ones.

FAQ

Will AI really replace most white-collar workers in 12-18 months?

Probably not in 12-18 months, though AI will likely become technically capable of it. History shows technology adoption takes 3-5x longer than early predictions suggest. Regulatory hurdles, integration challenges, organizational resistance, and the need for human oversight will extend the timeline significantly. Some routine jobs will be disrupted faster, but complete white-collar automation across industries will take 5-10+ years.

What does "human-level performance" on professional tasks actually mean?

It typically means AI can perform specific, defined tasks (like drafting a document or analyzing data) as well as an average human. This is different from AI replacing entire job roles, which requires handling judgment calls, managing relationships, and taking accountability for outcomes. AI might excel at individual tasks while still requiring human oversight for complete job functions.

Which white-collar jobs are most at risk from AI?

Entry-level positions involving routine data processing, basic analysis, and document generation are highest risk. Jobs requiring minimal judgment or relationships, like data entry, basic legal research, and routine accounting, are vulnerable. In contrast, roles requiring deep expertise, client relationships, ethical judgment, and accountability—like specialized consulting, trial law, or executive leadership—face lower near-term risk. The vulnerability depends more on job content (routine vs. judgment) than job title.

Should I be worried about losing my job to AI?

Worry is unproductive, but preparation is smart. Audit your job for routine versus judgment work. If more than 80% of your time is routine, start upskilling toward judgment-heavy roles now. If you're in judgment-heavy work already, focus on becoming better at working with AI tools—that's your competitive advantage. The people who adapt early will outcompete those who resist.

What skills will AI not be able to automate?

Judgment in gray areas, relationship management, complex cross-domain problem-solving, ethical decision-making with accountability, teaching others to work with AI, and deep specialized expertise are all difficult for AI to fully automate. These skills remain valuable because they involve human judgment, accountability, and context that AI struggles with. Professionals who build these skills alongside AI capabilities will be most valuable.

How long will it actually take for AI to disrupt the job market?

Based on historical technology adoption patterns, meaningful disruption likely takes 3-7 years for early adopters, with broader impact over 10-15 years. Some specific job categories will show decline faster (within 2-3 years), while overall employment adjusts more gradually. The transition timeline depends on regulatory environment, company adoption speed, worker adaptability, and whether policy supports retraining and transition assistance.

What can governments do to manage AI job displacement?

Effective policies include funding comprehensive retraining programs, extending unemployment benefits for displaced workers, modernizing education to emphasize continuous learning, implementing smart AI regulation that enables beneficial adoption while restricting harmful uses, and ensuring tax policy shares productivity gains broadly rather than concentrating them. Countries that invest in these transitions will manage disruption better than those that don't.

Is AI adoption actually happening in companies right now?

Yes, enterprise AI adoption is accelerating. Large companies in finance, law, consulting, and tech are actively deploying AI tools to augment worker productivity. However, most companies are using AI to increase output with the same workforce, not to cut headcount immediately. The typical pattern is: AI adoption leads to higher productivity, which leads to increased capacity and new opportunities, rather than layoffs—at least in the near term.

Key Takeaways

- AI will likely become technically capable of automating professional tasks in 12-18 months, but actual deployment will take 3-7 years due to regulatory, organizational, and integration friction

- Historical technology disruption patterns show adoption timelines are consistently 3-5x longer than expert predictions—cloud computing, ATMs, and mobile banking all followed this pattern

- Jobs vulnerable to AI are those with 60%+ routine task content; judgment-heavy work requiring expertise, relationships, and accountability will remain valuable for 10+ years

- Most companies are using AI to augment worker productivity rather than replace headcount, shifting work from routine tasks toward judgment and strategy

- Professional survival depends on building skills AI can't automate: critical thinking, relationship management, cross-domain problem-solving, ethical judgment, and teaching others to work with AI
