Introduction: The Environmental Reckoning for Artificial Intelligence

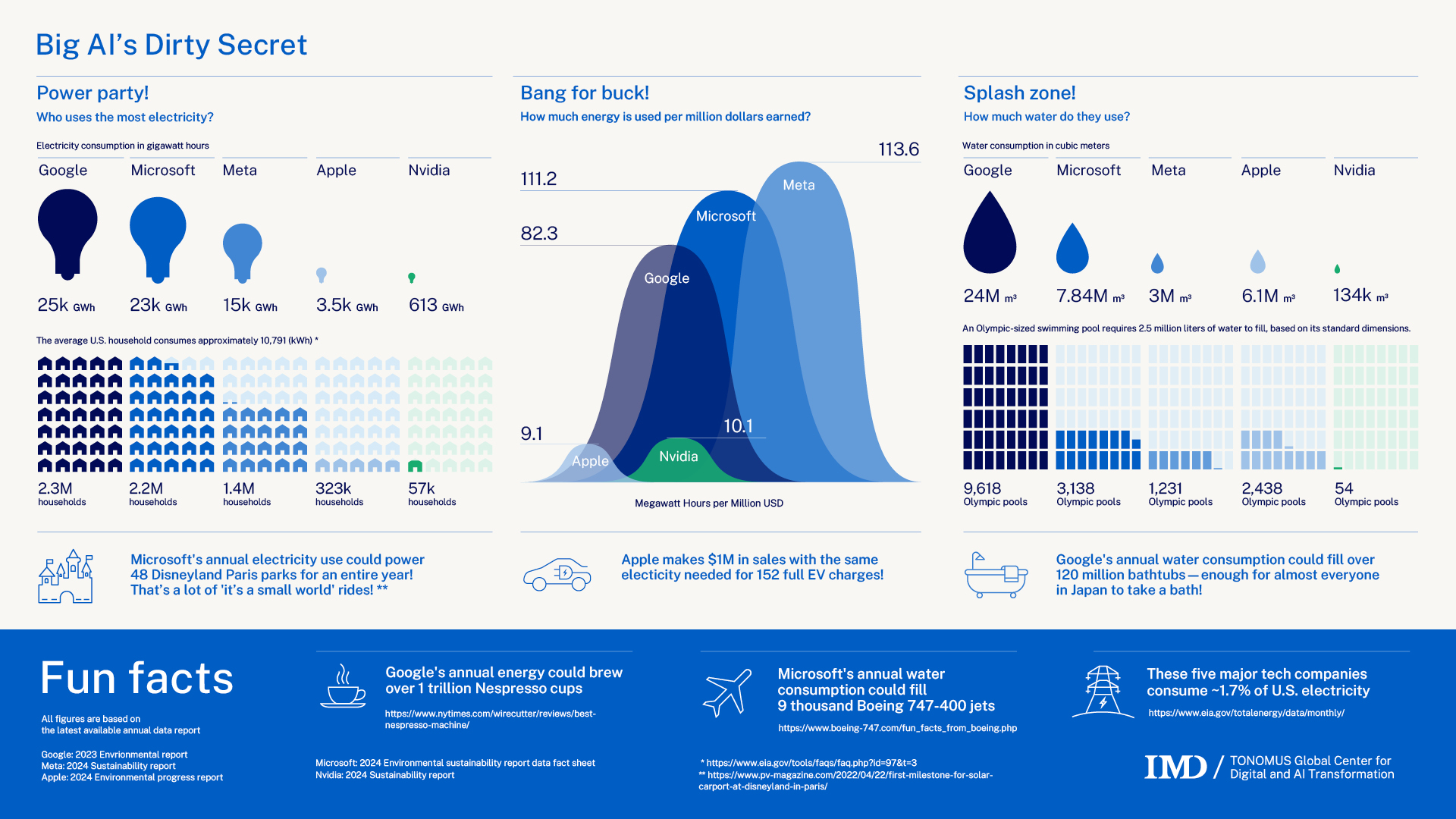

Last year, a viral chart claimed that training ChatGPT consumed enough water to fill Olympic swimming pools. The number was everywhere. Social media posts. News articles. Think tank reports. Then Sam Altman stepped in and called it "completely untrue."

Here's the thing, though. He was right to push back on the water numbers. But he also admitted something more important: AI's energy consumption is actually a serious problem. And it's only getting worse.

This matters because AI adoption is accelerating everywhere. Hospitals use it for diagnostics. Banks use it for fraud detection. Marketing teams use it to generate campaigns. Every query to ChatGPT, every image generated on Midjourney, every code completion from GitHub Copilot: it all burns electricity. Real electricity. From real power plants.

The environmental debate around AI has become polarized. One side claims the sky is falling. The other dismisses concerns entirely. The truth? It's complicated, but undeniably important.

This article breaks down what we actually know about AI's environmental footprint. We'll separate the hype from the hard data, explore where the real problems lie, and explain why energy consumption matters far more than water use in the conversation about AI sustainability. Whether you work in tech, policy, or just care about the planet, understanding AI's environmental impact is essential to making informed decisions about the tools we build and deploy.

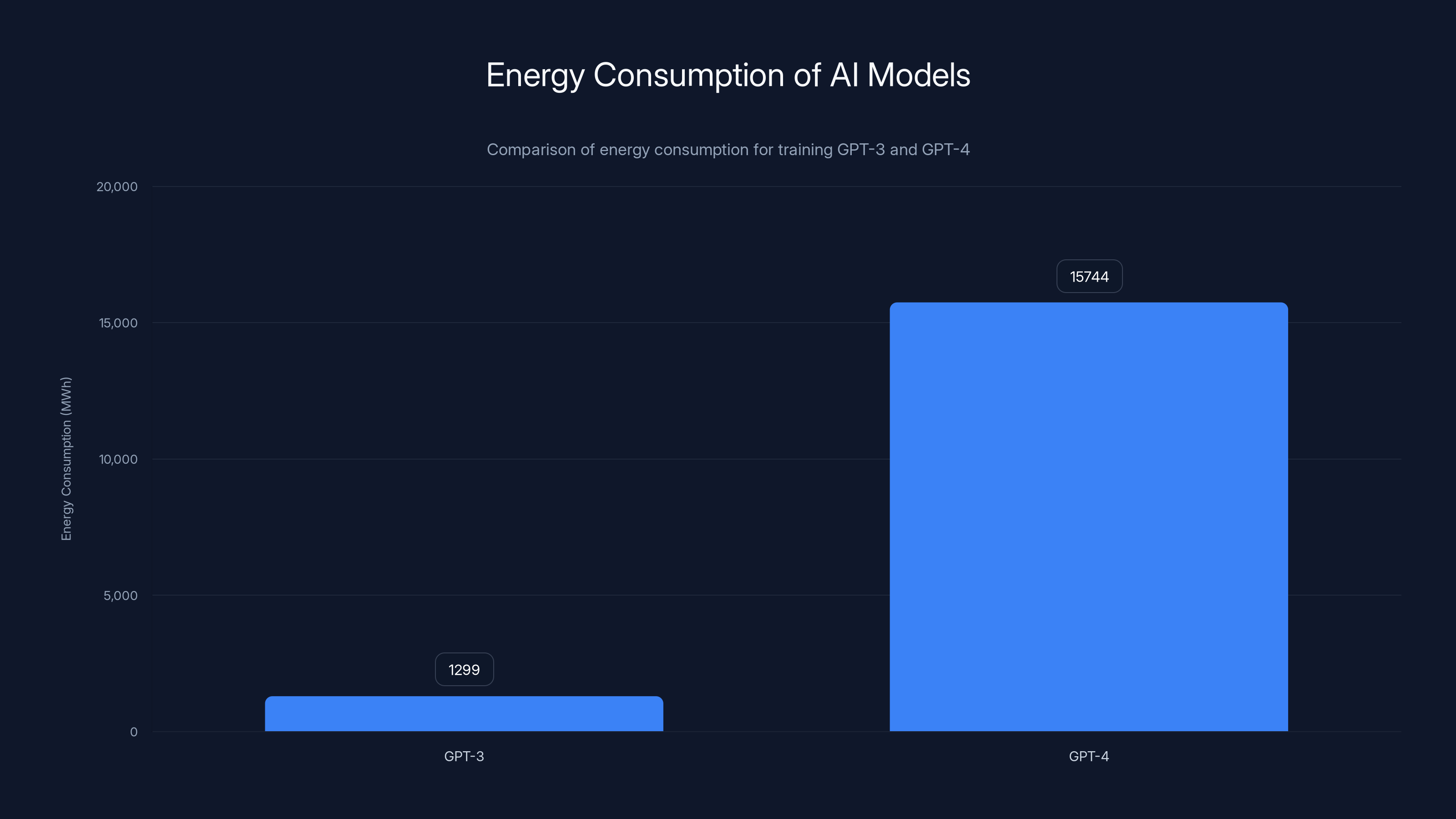

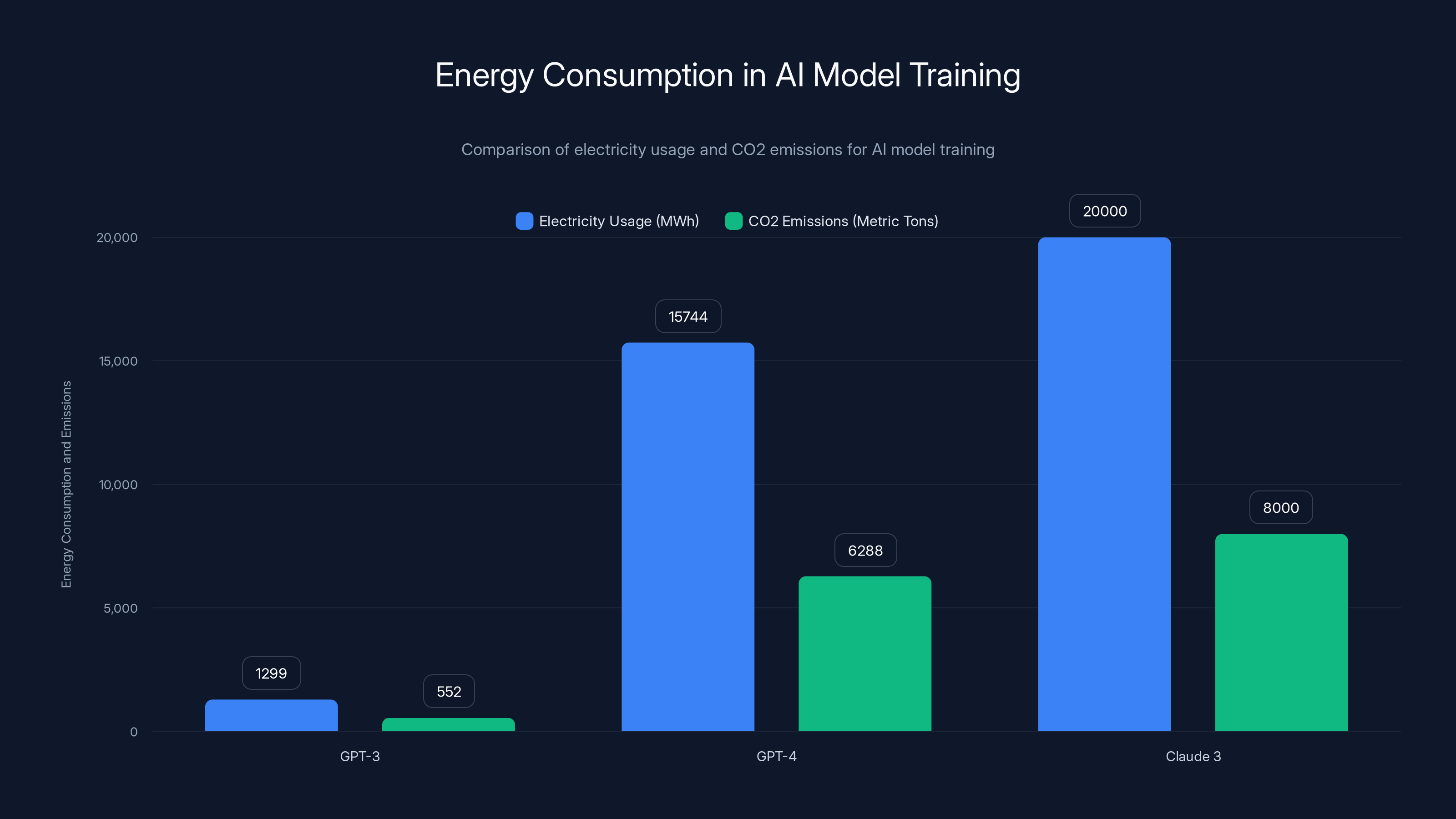

GPT-4's training consumed approximately 15,744 MWh, about 12 times more than GPT-3, highlighting the increasing energy demands of advanced AI models.

TL;DR

- Water claims were exaggerated: ChatGPT's training used far less water than viral claims suggested, though the exact numbers remain difficult to verify

- Energy consumption is the real issue: AI training and inference require massive amounts of electricity, with some estimates suggesting data centers account for 2-4% of global emissions

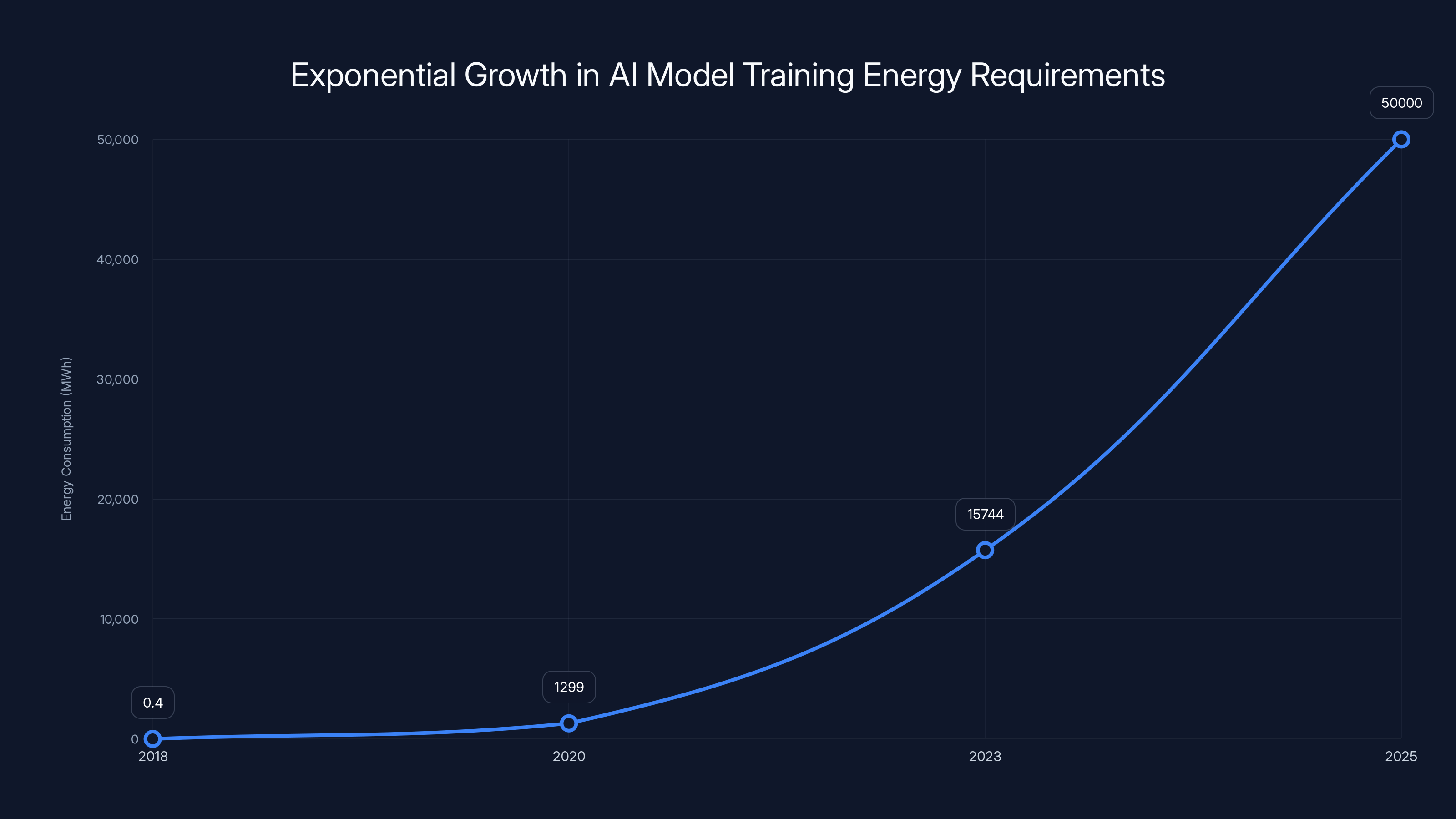

- The problem is scaling: As AI models get larger and more prevalent, energy demands grow exponentially, not linearly

- Renewable energy helps, but isn't a silver bullet: Data centers powered by renewables still consume scarce resources and compete with other grid demands

- Transparency matters most: Companies rarely disclose environmental costs, making it nearly impossible for users to understand their actual impact

- The future depends on efficiency innovations: Quantization, pruning, and better hardware architectures could reduce energy demands significantly

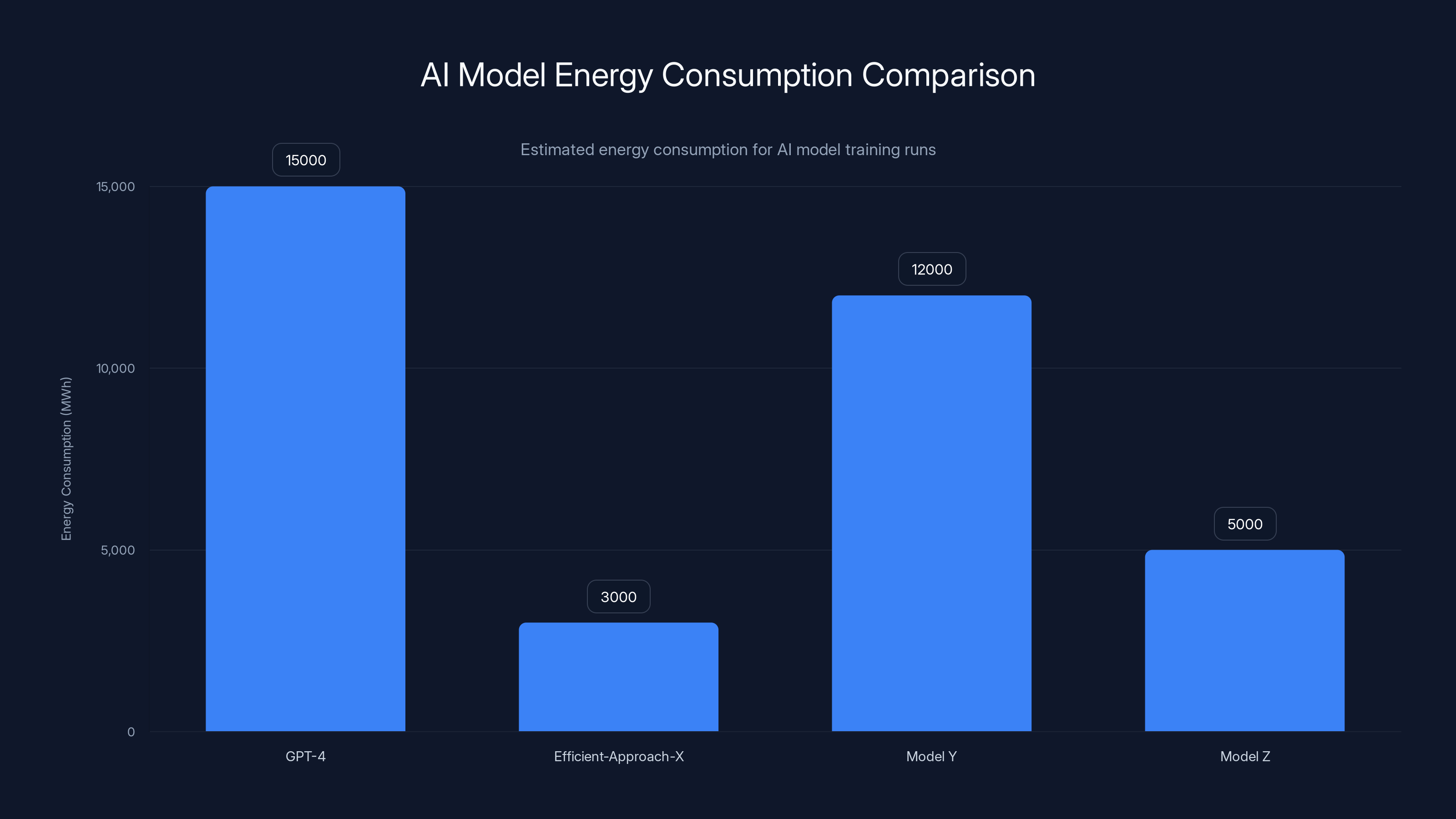

Estimated data shows GPT-4 consumes significantly more energy compared to Efficient-Approach-X, highlighting the potential for efficiency improvements in AI model training.

The Water Use Controversy: What Actually Happened

In 2023, researchers published a study estimating that training large language models like ChatGPT required significant water consumption. The headlines were dramatic: "ChatGPT's water footprint rivals a small city." "OpenAI's model drank millions of gallons." News outlets ran with it.

The methodology was straightforward: researchers estimated the cooling water needed to keep data center servers from overheating during model training. They looked at evaporative cooling systems, which use water as a heat exchange mechanism. Then they multiplied estimated energy usage by water consumption ratios from public data center literature.

The problem? Those estimates were based on assumptions about power consumption, cooling efficiency, and data center specifications that nobody actually verified with OpenAI. Different data centers have wildly different water efficiency profiles. Some use water-cooled systems. Others use air cooling. Some facilities recycle water; others don't. Without access to actual facility data, the researchers were working with educated guesses.

When Altman pushed back, saying the claims were "completely untrue," he had a point. The estimates were speculative. But his pushback created another problem: it implied water consumption wasn't worth discussing at all. That's where the nuance gets lost.

Here's what we actually know: OpenAI later shared some environmental impact data, but it was limited and opaque. They disclosed that GPT-4 training required approximately 15,744 MWh of energy, which translates to carbon emissions of roughly 6,288 metric tons of CO2. Converted, that's roughly equivalent to the annual emissions from 1,200 gasoline-powered cars.

But they didn't break down water consumption separately. Nobody did. That's the frustrating part. The water debate became a proxy for a larger problem: tech companies don't report environmental metrics consistently, making verification nearly impossible.

Energy Consumption: The Real Environmental Problem

Forget water for a moment. Energy is where the actual environmental issue lives.

Training a large language model requires running billions of parameters through trillions of data points. The computation is staggering. GPT-4 likely required somewhere in the range of 10-100 ExaFLOPS of computation (that's quintillions of floating-point operations). Sustaining that level of computation for weeks or months consumes enormous amounts of electricity.

Here's the scale:

- GPT-3 training: Approximately 1,299 MWh of electricity, generating roughly 552 metric tons of CO2

- GPT-4 training: Approximately 15,744 MWh of electricity, generating roughly 6,288 metric tons of CO2

- Claude 3 training: Estimated 20,000+ MWh based on publicly available information about model size and complexity

But training is only half the story. OpenAI trains models once, then deploys them. The real ongoing energy consumption comes from inference: every time someone asks ChatGPT a question, the model runs a computation.

Inference is less energy-intensive than training per query, but the cumulative impact is massive. ChatGPT now serves 200 million monthly active users. Even at a fraction of a cent worth of electricity per query, the aggregated consumption is enormous.

Researchers at MIT and other institutions have estimated that data centers collectively account for 2-4% of global greenhouse gas emissions. That's more than aviation, similar to the emissions from manufacturing. And that includes everything: email servers, streaming platforms, social media, storage—not just AI.

But AI is the growth engine. As models get larger and more capable, energy consumption doesn't scale linearly. It scales superlinearly. A model twice as large doesn't use twice as much energy; it might use three or four times as much, because larger models are typically trained on more data as well.

The carbon emissions from training a single cutting-edge model can equal what an average person produces in years. This raises an uncomfortable question: is the productivity gain worth the environmental cost? Nobody's seriously calculating that trade-off.

AI model training energy requirements have grown exponentially from 0.4 MWh in 2018 to an estimated 50,000 MWh by 2025. This highlights the increasing demand for computational resources in AI development.

Where Data Centers Get Their Power: The Carbon Question

Electricity isn't electricity. Its carbon footprint depends entirely on how it's generated.

A megawatt-hour from a coal power plant emits roughly 820-1,050 kg of CO2. The same megawatt-hour from a wind turbine? Essentially 0 kg at the point of generation, and only roughly 10-15 kg once manufacturing and maintenance are counted over its lifetime. Solar is somewhat higher, typically 30-40 kg per megawatt-hour over its lifetime.

This is where the environmental narrative gets complicated. Google, Microsoft, and OpenAI have all made commitments to operate data centers on renewable energy. Google claims its data centers now match 100% of their consumption with renewable energy purchases. Microsoft has committed to being carbon-negative by 2030.

These are real commitments. But they come with important caveats.

First, renewable energy isn't unlimited. Every megawatt of renewable energy powering a data center is a megawatt not available for other uses. Cities, homes, and businesses are competing for the same renewable energy sources. Building new solar and wind farms takes time, capital, and regulatory approval.

Second, renewable energy purchases don't mean renewable power supply. When Google says they match 100% renewable, they're buying renewable energy credits from power producers elsewhere. The electricity flowing into their physical data center might still come from natural gas or coal. They're shifting money around, not necessarily shifting electrons. Whether those purchases actually cause new renewable generation to be built is the question of "additionality," and it's genuinely contentious in climate science.

Third, manufacturing and infrastructure still have carbon costs. Building data centers requires concrete, steel, and rare earth elements. Manufacturing servers produces emissions. Installing solar panels and wind turbines requires resources. The full lifecycle carbon footprint is larger than just operational electricity.

Here's the math on renewable power:

If electricity usage is 15,000 MWh and grid carbon intensity is 200 kg CO2/MWh (a mix of renewables and natural gas), that's 3,000 metric tons of CO2. Add embodied carbon from infrastructure, and you're closer to 4,000+ metric tons for training a single model.

For context, that's equivalent to:

- Driving a car for 6 million miles

- Flying 800 people from New York to London

- Powering an average household for 400+ years
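The operational part of that calculation is a single multiplication: energy times grid carbon intensity. Here is a minimal sketch using the figures from the text. The function name is illustrative, and embodied carbon from infrastructure is deliberately left out.

```python
def training_emissions_tonnes(energy_mwh: float, intensity_kg_per_mwh: float) -> float:
    """Operational CO2 in metric tons: energy (MWh) x grid intensity (kg CO2/MWh)."""
    return energy_mwh * intensity_kg_per_mwh / 1000.0  # kg -> metric tons


# Figures from the text: 15,000 MWh on a 200 kg CO2/MWh grid.
mixed_grid = training_emissions_tonnes(15_000, 200)

# The same run on a coal-heavy grid, using the low end of the 820-1,050 kg range.
coal_grid = training_emissions_tonnes(15_000, 820)

print(f"mixed grid: {mixed_grid:,.0f} t CO2")
print(f"coal grid:  {coal_grid:,.0f} t CO2")
```

The same training run lands at very different totals depending on the grid, which is why location matters as much as model size.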

The Scaling Problem: Exponential Growth in AI Demand

We're at an inflection point. AI adoption is accelerating exponentially while model sizes and compute requirements are also growing exponentially.

Consider the numbers:

- 2018: OpenAI's GPT-2 training required approximately 0.4 MWh of energy

- 2020: GPT-3 training required 1,299 MWh (roughly 3,200x increase)

- 2023: GPT-4 training required 15,744 MWh (12x increase from GPT-3)

- 2025+: The next generation will likely require 50,000+ MWh based on observed trends
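The growth multiples in that list can be checked directly. This is a small sketch using only the estimates quoted above; the dictionary and function names are illustrative.

```python
# Training-energy estimates quoted in the list above, in MWh.
training_mwh = {"GPT-2": 0.4, "GPT-3": 1_299, "GPT-4": 15_744}


def growth_factor(earlier: str, later: str) -> float:
    """How many times more energy the later model used than the earlier one."""
    return training_mwh[later] / training_mwh[earlier]


gpt2_to_gpt3 = growth_factor("GPT-2", "GPT-3")
gpt3_to_gpt4 = growth_factor("GPT-3", "GPT-4")

print(f"GPT-2 -> GPT-3: about {gpt2_to_gpt3:,.0f}x")
print(f"GPT-3 -> GPT-4: about {gpt3_to_gpt4:.1f}x")
```

Note that the jump from 15,744 MWh to the projected 50,000 MWh is a much smaller multiple than either of the earlier steps, so the projection assumes growth slows considerably.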

This isn't just OpenAI. Anthropic is training Claude, which requires comparable compute. Google is training Gemini. Meta released Llama, which was trained on massive compute infrastructure. Microsoft is heavily invested in infrastructure for both Copilot and Azure AI services.

But it's not just about training the biggest models. It's about the proliferation of models. Companies are fine-tuning general-purpose models for specific tasks, creating thousands of specialized AI models. Each requires compute. Each produces emissions.

And inference demands are exploding. ChatGPT serves 200 million monthly active users. Microsoft's Copilot features are integrated into Windows, Office, and GitHub. Google is embedding AI into Search, Gmail, and Workspace. The cumulative inference energy is growing faster than anyone predicted.

Here's what should worry us: nobody knows the exact current global AI energy consumption. Different methods of calculation produce vastly different numbers. Estimates range from 200 TWh annually (equivalent to 0.5% of global electricity) to over 1,000 TWh annually (equivalent to 2.5% of global electricity).

The variance exists because:

- Training is episodic and one-time (hard to track)

- Inference is continuous and distributed across thousands of servers (harder to measure)

- Companies don't report detailed energy metrics (no standardization)

- Different counting methodologies produce different results

What's not in doubt: it's growing. Rapidly.

Plans at major cloud providers suggest aggressive expansion. Microsoft has announced plans to build massive new data centers. Google is acquiring geothermal energy rights to power new facilities. Amazon is expanding AWS infrastructure. This capital expenditure is enormous—tens of billions of dollars. And it's based on the assumption that AI demand will continue exploding.

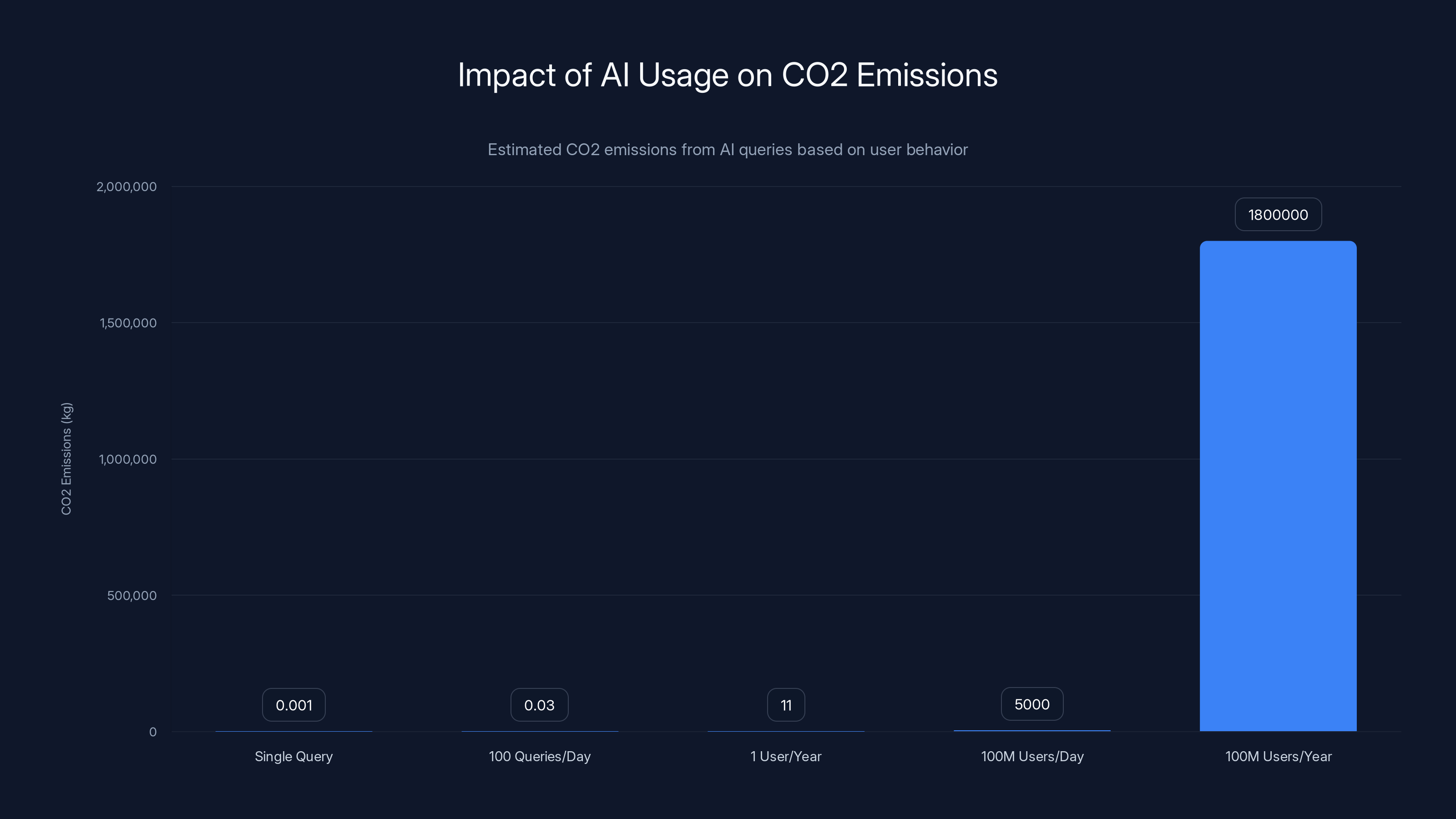

Individual AI usage can accumulate significant CO2 emissions, with 100 million users potentially generating 1.8 million metric tons annually. Estimated data.

Efficiency Innovations: Can We Do More With Less?

The good news: research into AI efficiency is advancing rapidly. If we can reduce the energy required per inference or per training run, we can decouple AI capabilities from environmental impact.

Quantization is one approach. Large language models use high-precision numerical representations (typically 32-bit floating-point). Quantization reduces this to lower precision (16-bit, 8-bit, or even 4-bit). The trade-off? Slightly reduced accuracy. But for many applications, the accuracy loss is imperceptible while energy savings are 2-4x.
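To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is one simple scheme among many, not how any particular production model is quantized, and the function names and sample weights are made up for illustration.

```python
from __future__ import annotations


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale factor; fall back to 1.0 if every weight is zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the stored integers."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.05, 0.0, 0.4]  # hypothetical weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("largest rounding error:", max_error)
```

Each stored value now needs one byte instead of four, and the rounding error per weight is bounded by half the scale factor. That bounded error is the "slightly reduced accuracy" trade-off described above.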

Pruning removes redundant parameters. Machine learning researchers have discovered that large neural networks contain significant redundancy. You can remove 50-90% of the parameters without meaningfully impacting performance. Smaller models require less energy to run.
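The simplest version of this is magnitude pruning: zero out the weights closest to zero and keep the rest. A minimal sketch follows, with hypothetical weights and an illustrative function name. Real pruning pipelines usually retrain afterward to recover accuracy, which is not shown here.

```python
from __future__ import annotations


def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the smallest-magnitude fraction of weights (unstructured pruning)."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


weights = [0.9, -0.02, 0.01, -0.7, 0.03, 0.5, -0.04, 0.002, 0.6, -0.05]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)
```

One caveat: zeroed weights only save energy when the runtime or hardware can skip them. Stored in a dense matrix, a pruned model does the same amount of arithmetic as before.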

Distillation trains a smaller "student" model to mimic a larger "teacher" model. The student learns the teacher's behavior while being more efficient. This is the kind of technique that lets a provider offer both a large flagship model and a small, fast one, in the way Anthropic offers Claude 3 Opus (powerful, expensive) and Claude 3 Haiku (fast, cheap).
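In practice, "mimic" usually means the student is trained to match the teacher's output probabilities, not just its top answer. Below is a minimal sketch of the classic soft-target distillation loss, assuming a temperature of 2.0 and made-up logits. It illustrates the general technique and is not a description of any specific company's training recipe.

```python
from __future__ import annotations

import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: list[float],
                      teacher_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]          # hypothetical teacher logits
good_student = [3.8, 1.1, 0.4]     # close to the teacher
poor_student = [0.5, 4.0, 1.0]     # disagrees with the teacher

print(distillation_loss(good_student, teacher))
print(distillation_loss(poor_student, teacher))
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge, so minimizing it pulls the small model toward the large one's behavior.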

Hardware innovations matter too. NVIDIA continues improving GPU efficiency. Google's TPU (Tensor Processing Unit) is custom-designed for machine learning, consuming less energy than general-purpose GPUs. Apple's neural engine chips are optimized for on-device inference, moving computation from power-hungry data centers to users' phones.

Here's the problem with all these innovations: they're not keeping pace with model growth. For every efficiency innovation, someone trains a larger model. The problem becomes a treadmill—we're always running faster just to stay in place.

Take GPT-3 versus GPT-4. Researchers likely applied some efficiency improvements during GPT-4 development. But the model is so much larger that energy consumption still increased 12x. That's not a success story for efficiency—it's an illustration of how quickly capability increases outpace efficiency gains.

Scope 3 Emissions: The Hidden Cost You're Not Tracking

Climate accounting has a framework called "Scopes." Scope 1 is direct emissions from facilities you own. Scope 2 is emissions from electricity you purchase. Scope 3 is everything else in your supply chain.

Most companies only report Scope 1 and Scope 2. They ignore Scope 3.

For AI, Scope 3 is enormous. It includes:

- Upstream emissions from fuel extraction and transmission losses for purchased electricity

- Emissions from manufacturing servers and data center infrastructure

- Emissions from the mining of rare earth elements for semiconductors

- Emissions from shipping hardware globally

- Emissions from supporting human workers at data centers

When researchers calculate AI's full carbon footprint, they typically include Scope 1 and Scope 2 only. Adding Scope 3 could easily increase the figure by 20-40%.

But here's what's worse: consumer-level AI usage is almost entirely off the books. When you use ChatGPT, the energy consumed and emissions produced get attributed to OpenAI's Scope 2. Not to your company. Not to your personal carbon footprint.

This creates a perverse incentive structure. Individual users have no motivation to minimize AI usage because they don't see the environmental cost. Corporations have no obligation to report AI-related emissions. The entire cost gets externalized to society.

Imagine if gasoline included carbon accounting at the pump. You'd see: "That gallon of gas costs $3.50 and produces 20 pounds of CO2." You'd make different choices. AI needs similar transparency.

Some companies are starting. Hugging Face released a carbon tracking tool for machine learning models. You can calculate the carbon cost of training or fine-tuning a model and choose lower-emission alternatives. But adoption is minimal. Most users don't know it exists.

GPT-4 training consumes significantly more electricity and generates more CO2 emissions compared to GPT-3. Claude 3 also has high energy demands. Estimated data for Claude 3 emissions.

Environmental Policies and AI Regulation: What's Actually Being Done

Policymakers are waking up to AI's environmental impact, but slowly.

The European Union's proposed AI Act includes environmental requirements, though they're vague. Data center operators must report energy consumption and carbon emissions. But the regulations don't limit how much energy AI systems can consume—they just require transparency.

The U.S. has no comprehensive federal policy on AI energy consumption. The White House released an AI Executive Order in 2023 that touched on environmental concerns, but it lacks enforcement mechanisms. Some states are pursuing their own policies. California requires large data centers to report water consumption, which indirectly impacts AI operators. But it's not AI-specific regulation.

China has aggressive data center efficiency standards. All new data centers must achieve a PUE (Power Usage Effectiveness) of 1.3 or lower, meaning that for every watt delivered to computing equipment, no more than 0.3 watts go to cooling and other overhead. It's the toughest standard globally. But even this doesn't limit absolute energy consumption for AI; it just improves efficiency within permitted parameters.

The challenge with regulation: defining what's "reasonable" energy consumption for AI is nearly impossible. How much electricity should training GPT-5 be allowed to consume? There's no technical answer. It depends on value judgments: Is the capability worth the environmental cost? Who gets to decide?
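PUE is just a ratio: total facility energy divided by the energy that reaches the computing equipment. A quick sketch with hypothetical meter readings (the function names are illustrative):

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(it_equipment_kwh: float, pue_value: float) -> float:
    """Energy spent on cooling, power conversion, lighting, and other overhead."""
    return it_equipment_kwh * (pue_value - 1.0)


# Hypothetical readings: 1,000 kWh reaches the servers, 1,300 kWh enters the building.
facility_pue = pue(total_facility_kwh=1_300, it_equipment_kwh=1_000)
overhead = overhead_kwh(1_000, facility_pue)

print(f"PUE: {facility_pue:.2f}")
print(f"Overhead: {overhead:.0f} kWh")
```

A PUE of 1.0 would mean zero overhead. Notice that the ratio says nothing about how much the servers themselves consume, which is why a PUE cap improves efficiency without limiting total demand.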

International coordination is mostly absent. There's no global standard for measuring AI carbon emissions. Different countries have different power grids with different carbon intensities. An AI data center in Iceland (powered by geothermal energy) has a vastly different impact than one in coal-heavy regions.

The result: companies can cherry-pick locations and calculation methods to make their environmental impact look better than it is.

Comparative Analysis: AI Energy Consumption Versus Other Industries

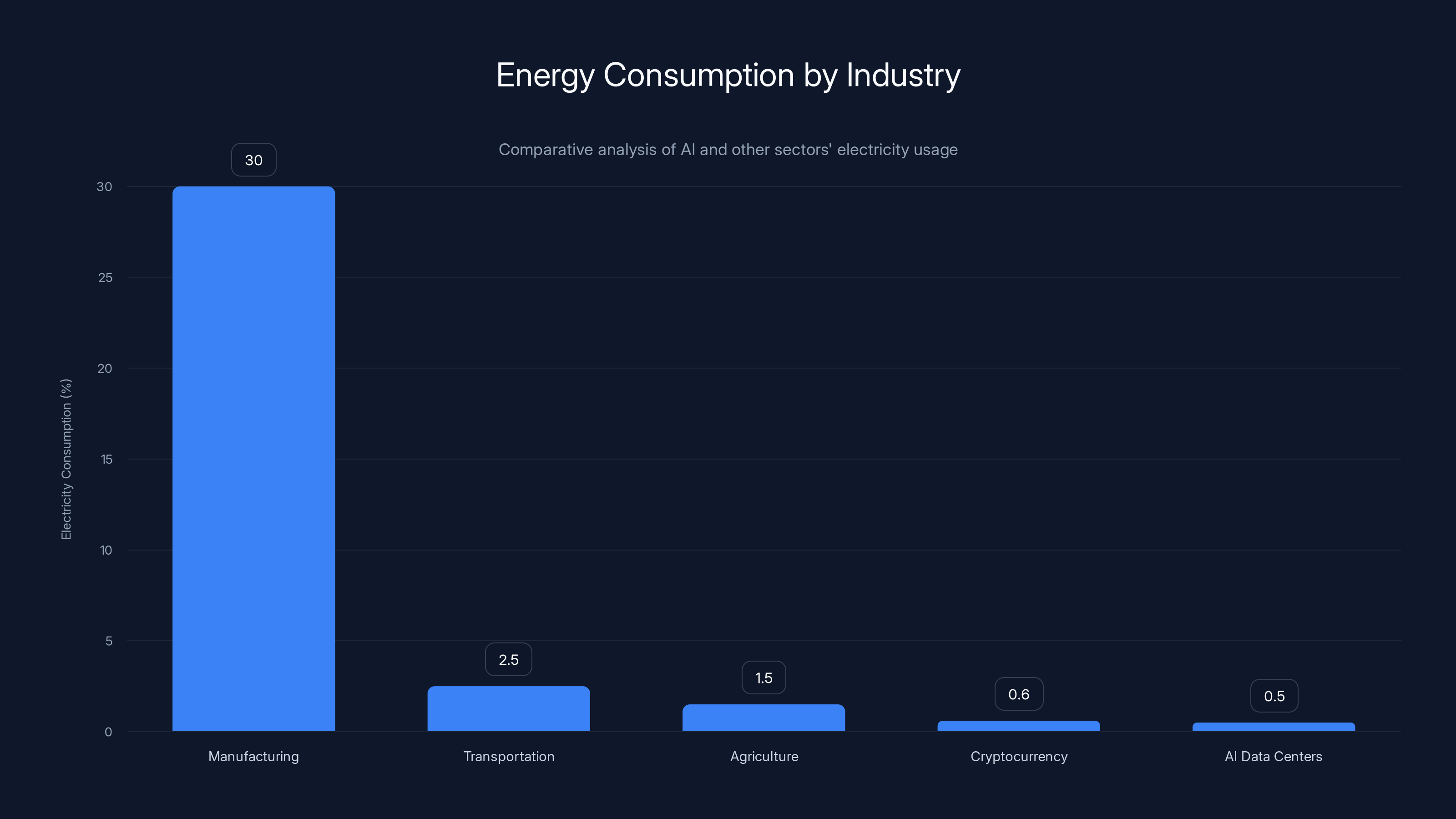

Context matters. How does AI's energy consumption compare to other sectors?

Manufacturing: The global manufacturing sector consumes roughly 30% of global electricity. AI data centers are a tiny fraction of that. But they're growing faster than almost any other sector.

Transportation: Aviation runs on jet fuel rather than grid electricity and produces roughly 2% of global CO2 emissions. AI might be on track to match or exceed aviation within a decade.

Agriculture: Modern agriculture is energy-intensive (fertilizers, machinery, irrigation). It consumes roughly 1-2% of global electricity. AI data centers are approaching this level.

Cryptocurrency: Bitcoin mining consumes an estimated 150+ TWh annually, roughly equivalent to the electricity consumption of Argentina. AI consumption is currently less, but growing faster.

The honest comparison: AI isn't the biggest environmental culprit today. But it's accelerating toward significance. And unlike many other energy-intensive industries, AI's energy consumption doesn't produce tangible physical goods. It produces information and capability.

This creates a philosophical question: Is the knowledge generated by AI valuable enough to justify the environmental cost? A nuclear power plant powering a hospital seems justified. A nuclear power plant powering a data center so people can generate AI-written emails? That's a harder moral calculus.

AI data centers currently consume less electricity than major sectors like manufacturing and transportation but are growing rapidly, potentially matching sectors like aviation and agriculture within a decade. Estimated data.

The User's Role: How Individual Choices Impact AI Emissions

There's a narrative that blames individual users. "Stop using AI tools and you'll reduce emissions." That's incomplete. Individual action matters, but systemic change matters more.

But individual choices do accumulate. Here's the math:

- A single ChatGPT query consumes roughly 0.003 kWh of energy

- That equals roughly 1 gram of CO2 (depending on grid carbon intensity)

- 30 queries per day = 30 grams of CO2

- A year of 30 daily queries = roughly 11 kg of CO2

For perspective, that's equivalent to driving a car 25 miles.

But millions of people use these tools. If 100 million people use AI tools daily with average queries of 50 per person (not unreasonable in 2025), that's 5 billion daily queries. At 1 gram per query, that's 5,000 metric tons of CO2 daily. Annually, it's roughly 1.8 million metric tons.
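The aggregate arithmetic is easy to reproduce. The sketch below uses the figures from this section; the function names are illustrative, and the 400 g CO2/kWh grid intensity in the second half is an assumed value chosen only to show how the per-query figure moves with the grid.

```python
GRAMS_PER_TONNE = 1_000_000


def annual_emissions_tonnes(users: int,
                            queries_per_user_per_day: float,
                            grams_co2_per_query: float,
                            days: int = 365) -> float:
    """Total yearly CO2 in metric tons for a population of users."""
    daily_grams = users * queries_per_user_per_day * grams_co2_per_query
    return daily_grams * days / GRAMS_PER_TONNE


def grams_per_query(kwh_per_query: float, grid_g_co2_per_kwh: float) -> float:
    """CO2 per query for a given grid carbon intensity."""
    return kwh_per_query * grid_g_co2_per_kwh


# Figures from the text: 100 million users, 50 queries each, 1 g CO2 per query.
daily = annual_emissions_tonnes(100_000_000, 50, 1.0, days=1)
annual = annual_emissions_tonnes(100_000_000, 50, 1.0)

# Assumed grid intensity of 400 g CO2/kWh (not from the article).
per_query = grams_per_query(0.003, 400)

print(f"daily:  {daily:,.0f} t CO2")
print(f"annual: {annual:,.0f} t CO2")
print(f"per query at 400 g/kWh: {per_query:.1f} g")
```

Change any one input, such as the grid intensity or the queries per user, and the annual total moves proportionally. That sensitivity is why published estimates vary so widely.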

That's meaningful. Not world-changing, but meaningful.

What users can actually do:

- Be intentional about AI usage: Don't use AI tools for tasks that don't require them. That $5 productivity gain isn't worth the environmental cost for every single email.

- Choose efficient models: If you're paying for API access, use cheaper, smaller models. Claude 3 Haiku costs 90% less than Opus and consumes a fraction of the energy.

- Batch requests: Instead of making 100 small queries, combine them into 10 larger queries. Fewer requests means lower total energy.

- Demand transparency: Ask AI companies about their energy consumption and carbon footprint. Market pressure drives change faster than regulation.

- Support policies: Vote for and advocate for politicians and policies that prioritize renewable energy and data center efficiency standards.

The Path Forward: What Needs to Change

We're at a crucial juncture. AI is becoming ubiquitous, but its environmental impact remains largely invisible. Three things need to happen:

First: Standardized Measurement and Transparency

Every major AI company should publish annual environmental impact reports, similar to how they publish security bulletins. Include:

- Total energy consumption (training + inference)

- Carbon emissions by data center location

- Renewable energy percentage (actual, not purchased credits)

- Embodied carbon from infrastructure

- Efficiency improvements over time

Without standardized measurement, we're arguing about shadows on a cave wall. Hugging Face, Anthropic, and smaller companies are starting this. It needs to become the norm, not the exception.

Second: Efficiency-First Development

The AI research community needs to prioritize efficiency alongside capability. Currently, the incentive structure rewards bigger, more capable models. Leaderboards track accuracy, not efficiency.

Imagine if model cards included: "GPT-4 achieves 85% accuracy on this benchmark while consuming 15,000 MWh per training run. Efficient-Approach-X achieves 82% accuracy with 3,000 MWh per training run. Choose based on your actual requirements."

This would encourage developers to use smaller, efficient models for tasks where they're sufficient. It would reward innovations in quantization, pruning, and distillation. It would shift the entire AI development trajectory.
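If model cards did carry energy figures, choosing a model could become a simple filter. Here is a sketch of that idea using the hypothetical numbers from the example above; the `ModelCard` class and `pick_most_efficient` function are invented for illustration and don't correspond to any existing model card format.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ModelCard:
    name: str
    accuracy: float       # benchmark accuracy, 0.0-1.0
    training_mwh: float   # energy per training run, in MWh


def pick_most_efficient(cards: list[ModelCard], min_accuracy: float) -> ModelCard:
    """Return the lowest-energy model that still meets the accuracy requirement."""
    eligible = [c for c in cards if c.accuracy >= min_accuracy]
    if not eligible:
        raise ValueError("no model meets the accuracy requirement")
    return min(eligible, key=lambda c: c.training_mwh)


# Hypothetical figures from the example in the text.
cards = [
    ModelCard("GPT-4", accuracy=0.85, training_mwh=15_000),
    ModelCard("Efficient-Approach-X", accuracy=0.82, training_mwh=3_000),
]

print(pick_most_efficient(cards, min_accuracy=0.80).name)
print(pick_most_efficient(cards, min_accuracy=0.84).name)
```

The point of the design is that accuracy becomes a threshold to clear, not a number to maximize. Once a model is good enough for the task, energy decides.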

Third: Regulatory Frameworks Based on Science

EU regulations are a start, but they're not aggressive enough. Governments should consider:

- Carbon pricing for AI compute (similar to carbon taxes on industries)

- Efficiency standards for data centers hosting AI workloads

- Mandatory disclosure of environmental impact before deploying AI systems

- Taxes on compute-intensive training runs that exceed efficiency thresholds

This might slow AI development in some areas. That's intentional. Not all AI applications deserve unlimited energy. Generating 100 variations of a marketing email doesn't. Developing medical diagnostic AI does.

Automation and Productivity: Do the Benefits Justify the Cost?

Here's the utilitarian argument: If AI saves knowledge workers 10 hours per week, and that translates to 4 billion additional productive hours annually across the workforce, isn't that worth the environmental cost?

Maybe. But we need honest accounting.

For tools like Runable, which automate document creation, presentation design, and report generation, the environmental calculation is different than for pure research models. Runable uses pre-trained models (mostly inference, not training) to help teams generate content faster. The energy per user is relatively modest compared to training a new model from scratch.

But even inference has cumulative cost. If Runable saves one person 5 hours per week, and that person would have otherwise been commuting to an office, there's a net environmental benefit. But if the person uses those 5 hours to generate more content (adding to overall internet traffic and energy consumption), the net benefit shrinks.

The honest answer: We don't know the net environmental impact of most AI tools because we don't have good baseline data. What would that person have done without AI? How does their alternative use of time impact overall emissions?

These are empirical questions that deserve research. Instead, companies deploy AI tools without any environmental accounting.

Investment Trends: Where Venture Capital Is Betting on AI Efficiency

Venture capital has noticed the efficiency opportunity. Billions are flowing into startups focused on AI optimization.

Hardware optimization companies are getting funded heavily. Cerebras designed a processor specifically for training AI models, claiming 10x faster training with lower energy. Graphcore builds specialized processors for machine learning. These companies are betting that custom silicon beats general-purpose GPUs for efficiency.

Model compression startups are emerging. Companies developing quantization and pruning techniques that reduce model size without accuracy loss are seeing investor interest. The value proposition is clear: do more with less compute.

Energy monitoring tools are being built. Startups are creating dashboards that let companies track their AI-related energy consumption and carbon footprint. It's unsexy work, but necessary for transparency.

Renewable energy + data center partnerships are forming. Companies are financing solar and wind farms specifically to power data centers. The economics work if you can lock in long-term power agreements.

The investment thesis makes sense: efficiency is profitable. Reducing energy consumption reduces operating costs. Companies will pay for tools that do that. But the investment still can't keep pace with the scale of the problem if model size continues growing exponentially.

Academic Research: What Researchers Are Actually Discovering

Universities and research labs are digging into AI's environmental impact with rigor missing from industry.

MIT researchers found that large language models exhibit severe performance degradation in low-precision environments, but with careful architecture design, you can recover most accuracy while using 80% less energy. The implication: we're wasting enormous amounts of compute through inefficient design choices.

UC Berkeley research into model pruning discovered that you can remove 90% of neural network parameters with minimal accuracy loss on many tasks. The paper showed that existing models are vastly over-parameterized for real-world use cases.

Stanford's foundation model report documented the environmental costs of training cutting-edge AI models and found that environmental cost reporting is nearly absent from papers describing new models. They advocate for mandatory environmental impact reporting as part of machine learning publishing standards.

The research consensus: significant efficiency gains are possible without sacrificing capability. We're not at the theoretical limit of efficiency. But the incentive structure doesn't reward pursuing it.

The Role of Data Centers in the Broader Grid

Data centers aren't isolated. They're part of the electrical grid, which means they compete with homes, hospitals, factories, and agriculture for available power.

In times of grid stress (extreme heat, extreme cold, maintenance), data centers add demand pressure that can raise electricity prices across the region. This makes power more expensive for everyone else.

Some regions are experiencing real grid impacts. Taiwan, which manufactures most of the world's AI chips and hosts many data centers, has experienced power shortages directly attributed to data center expansion. Ireland has restricted new data center construction in some regions due to grid capacity constraints.

This creates a policy tension. Data centers bring jobs and tax revenue. But they strain infrastructure and raise energy costs. Communities are starting to push back.

One solution being piloted: demand response programs. Data centers volunteer to reduce energy consumption during grid stress in exchange for lower electricity rates during normal times. This helps stabilize the grid. But it only works if there's flexibility in when data center workloads run.

For AI inference (which happens when users request it), there's limited flexibility. You can't tell someone "wait 3 hours for your response because the grid is stressed." For training and batch processing, there's much more flexibility. You can schedule training runs to avoid peak grid demand.
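That flexibility can be turned into a scheduling rule: given a forecast of grid load, run deferrable work in the quietest hours. A minimal sketch follows, assuming an hourly forecast is available. The forecast values and function name are hypothetical, and real demand response programs involve contracts and signals from the grid operator that aren't modeled here.

```python
from __future__ import annotations


def schedule_batch_hours(hourly_grid_load: list[float], hours_needed: int) -> list[int]:
    """Pick the hours with the lowest forecast grid load for deferrable work."""
    if hours_needed > len(hourly_grid_load):
        raise ValueError("not enough hours in the forecast")
    # Rank hours from least to most loaded, then keep the quietest ones.
    ranked = sorted(range(len(hourly_grid_load)), key=lambda h: hourly_grid_load[h])
    return sorted(ranked[:hours_needed])


# Hypothetical 24-hour forecast, as a percentage of peak grid load (hour 0 = midnight).
forecast = [60, 55, 50, 48, 47, 50, 58, 70, 80, 85, 88, 90,
            92, 93, 95, 97, 99, 100, 98, 94, 88, 80, 72, 65]

training_hours = schedule_batch_hours(forecast, hours_needed=6)
print("run training during hours:", training_hours)
```

With this forecast the training job lands in the early morning, well away from the evening peak. Inference traffic can't be moved this way, which is the asymmetry the paragraph above describes.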

Geopolitics and AI Energy Competition

Energy competition is becoming a geopolitical factor.

China is investing heavily in AI infrastructure and renewable energy simultaneously. The strategy: dominate AI research while also controlling the energy infrastructure that powers it. The US is following suit, but more reactively.

Whoever can produce AI with the lowest energy footprint has an economic advantage. This could drive genuine innovation in efficiency.

But there's also a race-to-the-bottom risk. Countries might relax efficiency standards or environmental regulations to attract data center investment. Poland and other Eastern European nations have offered incentives for data center construction, sometimes in regions with coal-heavy power grids.

The geopolitical risk: AI development could accelerate global climate emissions if energy-intensive development shifts to countries with less stringent environmental standards.

International agreements on AI energy efficiency would help level the playing field and prevent this kind of regulatory arbitrage. But global cooperation on AI is already difficult due to security concerns. Adding environmental standards makes it harder.

Conclusion: Separating Signal from Noise in the AI Environmental Debate

Let's return to where we started. Sam Altman was right that the water use claims were overstated and based on questionable assumptions. But his pushback inadvertently obscured a more important issue: AI's energy consumption really is significant and growing exponentially.

Here's what we know with confidence:

- Training large models requires enormous amounts of electricity (15,000+ MWh for cutting-edge models). The carbon footprint is measurable and substantial.

- Inference energy is harder to measure but potentially larger in aggregate because it happens continuously across millions of users. A single query might consume little energy, but billions of queries accumulate rapidly.

- Current efficiency is not at theoretical limits. Significant energy reductions are possible through quantization, pruning, distillation, and hardware innovations.

- The problem is accelerating. AI consumption is growing faster than efficiency improvements. Without intervention, AI could account for 5-10% of global electricity consumption within a decade.

- Transparency is nearly absent. Companies don't report energy consumption and carbon emissions consistently. Without data, we can't make informed decisions.

- Environmental costs are externalized. Users don't see the energy cost of their queries. Companies don't face financial penalties for high-energy models. Governments haven't regulated the sector. The result: perverse incentives.

What needs to happen:

Immediate: Mandatory, transparent reporting of energy consumption and carbon emissions. Every major AI company should publish an annual environmental impact report.

Medium-term: Efficiency standards for data centers. Regulatory frameworks that require cost-benefit analysis before deploying energy-intensive AI systems. Tax structures that make external environmental costs internal to corporate decision-making.

Long-term: Fundamental research into more efficient AI architectures. Innovation in hardware specifically designed for AI workloads. Integration of environmental impact into ML research publishing standards.

The stakes are real but not yet catastrophic. AI's contribution to global emissions is still in the single-digit percentages. But the trajectory is concerning. Without intervention, AI could become one of the largest sources of industrial emissions within 15 years.

This isn't an argument against AI. Responsibly developed and deployed AI can solve enormous problems: disease diagnosis, climate modeling, protein folding, materials science. The question isn't whether AI should exist. It's whether we're willing to demand environmental accountability from the people building and deploying it.

The water debate was a distraction. Energy is where the real conversation needs to happen.

FAQ

What is AI energy consumption?

AI energy consumption refers to the electricity required to train and run artificial intelligence models. Training involves processing massive datasets through neural networks to optimize billions of parameters, consuming enormous amounts of computational power for weeks or months. Inference is the ongoing energy required every time someone uses a trained model (like asking ChatGPT a question). Combined, these two processes consume thousands of megawatt-hours for large models, generating significant carbon emissions depending on the power grid's energy mix.

How much energy does training Chat GPT actually consume?

Widely cited estimates put GPT-4 training at approximately 15,744 MWh of electricity, generating roughly 6,288 metric tons of CO2. This is equivalent to the annual emissions from roughly 1,200 gasoline-powered vehicles. For comparison, GPT-3 required approximately 1,299 MWh, meaning GPT-4 consumed about 12 times more energy despite building on the same basic transformer design. The exact numbers are difficult to verify because OpenAI doesn't fully disclose all environmental metrics, and different calculation methods produce varying results.
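The arithmetic behind those comparisons is easy to check. The short sketch below only re-derives ratios from the figures quoted in this answer; it does not validate the underlying estimates, and the constant and function names are our own.

```python
# Figures quoted in this article (estimates, not audited disclosures).
GPT4_TRAINING_MWH = 15_744
GPT3_TRAINING_MWH = 1_299
GPT4_TRAINING_TCO2 = 6_288      # metric tons of CO2
EQUIVALENT_VEHICLES = 1_200     # gasoline-powered vehicles, annual emissions


def energy_ratio(new_mwh, old_mwh):
    """How many times more energy the newer training run used."""
    return new_mwh / old_mwh


def implied_carbon_intensity(tco2, mwh):
    """Metric tons of CO2 per MWh implied by the two totals."""
    return tco2 / mwh


def implied_tons_per_vehicle(tco2, vehicles):
    """Annual metric tons of CO2 per vehicle implied by the comparison."""
    return tco2 / vehicles


ratio = energy_ratio(GPT4_TRAINING_MWH, GPT3_TRAINING_MWH)
intensity = implied_carbon_intensity(GPT4_TRAINING_TCO2, GPT4_TRAINING_MWH)
per_vehicle = implied_tons_per_vehicle(GPT4_TRAINING_TCO2, EQUIVALENT_VEHICLES)

print(f"GPT-4 / GPT-3 energy ratio: {ratio:.1f}x")
print(f"Implied grid intensity: {intensity * 1000:.0f} kg CO2 per MWh")
print(f"Implied emissions per vehicle: {per_vehicle:.2f} t CO2 per year")
```

The ratio comes out at about 12.1x, and the two totals imply a grid intensity of roughly 400 kg of CO2 per MWh and about 5.2 metric tons of CO2 per vehicle per year. A different grid mix would change the emissions figure without changing the energy figure.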

Are the water use claims about AI really false?

The specific numbers in viral water consumption claims were based on estimates and assumptions that nobody verified with OpenAI, making them effectively unverifiable. However, that doesn't mean data centers use no water; they use billions of gallons daily for cooling. The real issue is that water consumption claims became a distraction from the more serious problem: energy consumption. Different data centers have vastly different water efficiency profiles depending on their cooling technologies, location, and operational practices, making blanket claims problematic. OpenAI's pushback was justified on the numbers, but the underlying concern about resource consumption remains valid.

What's the difference between training energy and inference energy?

Training energy is consumed once when teaching a model using millions of data examples. GPT-4 was trained once over several weeks, consuming roughly 15,744 MWh total. Inference energy is consumed every time someone uses that trained model: a smaller amount per query, but multiplied by billions of queries. ChatGPT serves 200 million monthly active users; if each uses the model even occasionally, the aggregate inference energy can far exceed training energy over a year. This is why inference efficiency and usage patterns matter far more than training efficiency for users' actual environmental impact.
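To see how quickly inference can catch up with training, here is a rough break-even sketch. The training total and user count come from this article; the queries per user and the energy per query are hypothetical placeholders chosen only for illustration, since no measured per-query figure is given here.

```python
TRAINING_MWH = 15_744            # GPT-4 training estimate quoted above
MONTHLY_USERS = 200_000_000      # monthly active users quoted above

# Hypothetical placeholders, not measured values.
QUERIES_PER_USER_PER_MONTH = 30
WH_PER_QUERY_SCENARIOS = [0.3, 3.0]


def monthly_inference_mwh(users, queries_per_user, wh_per_query):
    """Total inference energy per month, converted from Wh to MWh."""
    return users * queries_per_user * wh_per_query / 1_000_000


def months_to_match_training(training_mwh, monthly_mwh):
    """Months of inference needed to equal the one-time training energy."""
    return training_mwh / monthly_mwh


for wh in WH_PER_QUERY_SCENARIOS:
    monthly = monthly_inference_mwh(
        MONTHLY_USERS, QUERIES_PER_USER_PER_MONTH, wh
    )
    months = months_to_match_training(TRAINING_MWH, monthly)
    print(f"{wh} Wh/query: {monthly:,.0f} MWh/month, "
          f"break-even after {months:.1f} months")
```

With these placeholder inputs, inference matches the training total in under nine months at 0.3 Wh per query and in under one month at 3.0 Wh per query. The conclusion is sensitive to both assumptions, which is part of why consistent reporting matters.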

Can renewable energy solve AI's environmental problem?

Renewable energy significantly reduces the carbon intensity of data centers, and companies like Google and Microsoft have committed to operating on renewable energy. However, renewable energy is not unlimited. Every megawatt powering a data center is unavailable for other uses like homes and hospitals. Additionally, "renewable energy purchases" often involve buying credits from power producers elsewhere rather than guaranteeing actual renewable electricity flows to the facility. The real solution requires both renewable energy expansion and absolute energy consumption reduction through efficiency improvements.

How can individuals reduce their AI-related environmental impact?

Use AI tools intentionally rather than reflexively, choosing smaller efficient models when available (Claude 3 Haiku instead of Opus), batching multiple requests into fewer queries, and supporting companies that transparently report their environmental metrics. However, the most impactful action isn't individual behavior change—it's demanding systemic change. Vote for policies supporting renewable energy infrastructure, pressure companies for environmental transparency, and support research into AI efficiency innovations. Individual actions matter at scale but pale compared to the impact of regulatory frameworks and corporate incentive structures that reward efficiency.

What are quantization, pruning, and distillation in AI efficiency?

Quantization reduces the numerical precision of AI models from 32-bit to 16-bit or 8-bit representations, decreasing memory requirements and energy consumption by 2-4x with minimal accuracy loss. Pruning removes redundant parameters from neural networks, with research showing you can remove 50-90% of parameters while maintaining performance. Distillation trains a smaller "student" model to mimic a larger "teacher" model, capturing most of the original's capability at a fraction of the energy cost. All three techniques reduce energy consumption, but they haven't kept pace with model size growth, meaning larger models still consume more total energy despite efficiency improvements.
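For readers who want to see the first two ideas concretely, here is a toy sketch in plain Python. It assumes simple symmetric 8-bit quantization and magnitude-based pruning on a short list of made-up weights. Production frameworks use more sophisticated variants, and distillation is not shown because it requires a full training loop.

```python
def quantize_int8(weights):
    """Symmetric 8-bit quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest magnitude."""
    n_prune = int(len(weights) * fraction)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


# Made-up example weights, for illustration only.
weights = [0.82, -0.05, 0.31, -1.27, 0.002, 0.64, -0.09, 0.017]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
pruned = prune_by_magnitude(weights, 0.5)

print("int8 values:", quantized)
print("max round-trip error:", max_error)
print("pruned weights:", pruned)
```

Storing each weight in 8 bits instead of 32 cuts memory by 4x, and the round-trip error stays within half of one quantization step. Pruning half the weights here removes only the smallest values, which is the intuition behind removing parameters without losing much performance.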

Why don't AI companies report their environmental impact?

There's no industry standard for measuring or reporting AI environmental metrics, no regulatory requirement (yet) in most jurisdictions, and companies lack financial incentives since users don't see energy costs. Additionally, many companies treat environmental data as competitive information. Without standardized measurement methodologies, different calculation approaches produce vastly different results, making comparisons difficult. Hugging Face has built carbon tracking tools and advocates for transparency, but adoption remains minimal. Systemic change requires either regulation or market pressure creating competitive advantage for environmentally responsible companies.

Will AI's energy consumption continue growing exponentially?

Current trends suggest exponential growth is likely unless something changes. Model sizes continue increasing, inference demand grows with user adoption, and companies are expanding data center infrastructure with $100+ billion capital expenditures. However, efficiency innovations could decouple capability from energy consumption. Whether that happens depends on whether the research community prioritizes efficiency alongside capability, and whether regulation or market pressure incentivizes it. Without intervention, AI could account for 5-10% of global electricity within a decade, but aggressive efficiency improvements could keep it under 2-3% despite capabilities increasing dramatically.

What policies should governments implement around AI energy consumption?

Ideal policies would include mandatory environmental impact reporting (similar to financial disclosures), carbon pricing for compute-intensive training runs, efficiency standards for data centers, cost-benefit analysis requirements before deploying energy-intensive systems, and international agreements preventing regulatory arbitrage. The EU's AI Act is a beginning, but enforcement mechanisms are weak. More aggressive approaches might include taxes on compute consumption above efficiency thresholds, restrictions on training run duration or size, and priority access to renewable energy for high-value use cases. The challenge is defining what constitutes reasonable energy expenditure for different AI applications, which is partly technical and partly political.

The Bottom Line

AI's environmental impact is real, measurable, and growing. The water use debate was largely a distraction from the more important energy consumption issue. We've separated the signal from noise and established that significant efficiency improvements are possible without sacrificing capability—but the research and deployment incentive structures don't currently reward pursuing them.

The path forward requires three things: transparency (companies reporting actual environmental metrics), efficiency-first development (prioritizing energy alongside capability), and smart regulation (making environmental costs visible to decision-makers).

The good news: we can still course-correct. AI's share of global emissions is still manageable. But the window for preventive action is closing. If we want AI to be part of the solution to climate change (modeling climate systems, optimizing energy grids, discovering new materials), we need to get serious about AI's own environmental footprint right now. Not eventually. Now.

Key Takeaways

- Sam Altman's criticism of water use claims was technically justified but obscured the more serious energy consumption issue affecting AI's environmental impact

- Training GPT-4 consumed an estimated 15,744 MWh of electricity, producing roughly 6,288 metric tons of CO2, equivalent to the annual emissions from about 1,200 gasoline vehicles

- Inference energy consumption is harder to measure but potentially larger in aggregate than training due to billions of daily user queries

- Significant efficiency improvements through quantization, pruning, and distillation remain underexploited due to incentive structures favoring capability over efficiency

- Without intervention, AI could account for 5-10% of global electricity consumption within a decade, but transparent reporting and efficiency-first development could limit impact to 2-3%
