The Handhold That Never Happened: What a Symbolic Gesture Revealed About AI's Power Struggle

It was supposed to be a unified moment. Prime Minister Narendra Modi stood on stage at India's AI Impact Summit in New Delhi, orchestrating a show of solidarity. Tech executives from around the world were invited to join hands and raise them together, a symbolic gesture meant to underscore a shared commitment to advancing artificial intelligence for humanity's benefit.

Every executive complied. Every executive, that is, except two: Sam Altman of OpenAI and Dario Amodei of Anthropic.

They stood with their hands conspicuously apart, refusing to participate in what should have been a simple, nonpolitical gesture. For those paying close attention to the AI industry, that awkward moment said more than any press release could. It wasn't just about two CEOs avoiding a photo op. It was a public signal that the relationship between the industry's two most influential AI companies had fractured, at least for now.

This wasn't the first time Altman and Amodei had clashed publicly. In recent weeks, their companies had traded barbs on social media, with Altman specifically calling Anthropic "dishonest" and "authoritarian" in response to Anthropic's critique of OpenAI's advertising practices. "We would obviously never run ads in the way Anthropic depicts them," Altman wrote. "We are not stupid, and we know our users would reject that."

But the India summit moment was different. It was physical. It was visible. And it happened on the global stage, in front of Prime Minister Modi himself, at an event meant to celebrate cooperation and progress in AI. The symbolism was impossible to miss: the two CEOs of the companies leading the AI revolution couldn't even hold hands.

So what exactly is happening between these two companies? Why has the relationship deteriorated so publicly? And what does this rivalry mean for the future of artificial intelligence itself?

The answers are more complicated than a simple personality clash or business competition. They involve fundamentally different philosophies about how AI should be developed, deployed, and commercialized. They involve competing visions for what responsible AI looks like. And they involve billions of dollars in market value, investor capital, and the race to build the most capable AI systems the world has ever seen.

This is the story of a rivalry that's reshaping the entire AI industry, told through the lens of that awkward moment in New Delhi.

The Context: Two Companies, Two Philosophies

To understand why Altman and Amodei won't hold hands, you need to understand how differently they see the world.

OpenAI, under Sam Altman's leadership, has embraced a pragmatic approach to AI commercialization. The company launched ChatGPT as a free service, then introduced ChatGPT Plus at $20 per month. It has partnered with Microsoft, integrated with enterprise software, and aggressively pursued commercial applications of its technology. Altman has been vocal about the need for AI regulation, but he has also been clear that OpenAI intends to be the company that develops and deploys the most capable AI systems. That's not a bug in his vision; it's a feature. In his view, OpenAI should win because it can be trusted to develop AI the right way.

Anthropic, founded by former OpenAI executives including Dario Amodei and his sister Daniela, took a different path. The company positioned itself as the more careful, more conscientious alternative to OpenAI. Anthropic invested heavily in AI safety research and developed Constitutional AI, a technique designed to make language models more honest and less prone to harmful outputs. The company was slower to commercialize, focusing first on making sure its technology was safe and aligned with human values before scaling deployment. Anthropic's pitch to investors and the world: we're doing this right, even if it takes longer.

For years, the two companies could coexist. The AI market was big enough for both to grow without directly competing. But as both moved toward building the most capable AI systems possible, and as both sought massive capital to fund their research and infrastructure, the dynamic shifted. They started competing directly for the same resources, the same talent, and, increasingly, the same customers.

The tension that erupted publicly in recent weeks wasn't new. It had been building beneath the surface for months, maybe longer.

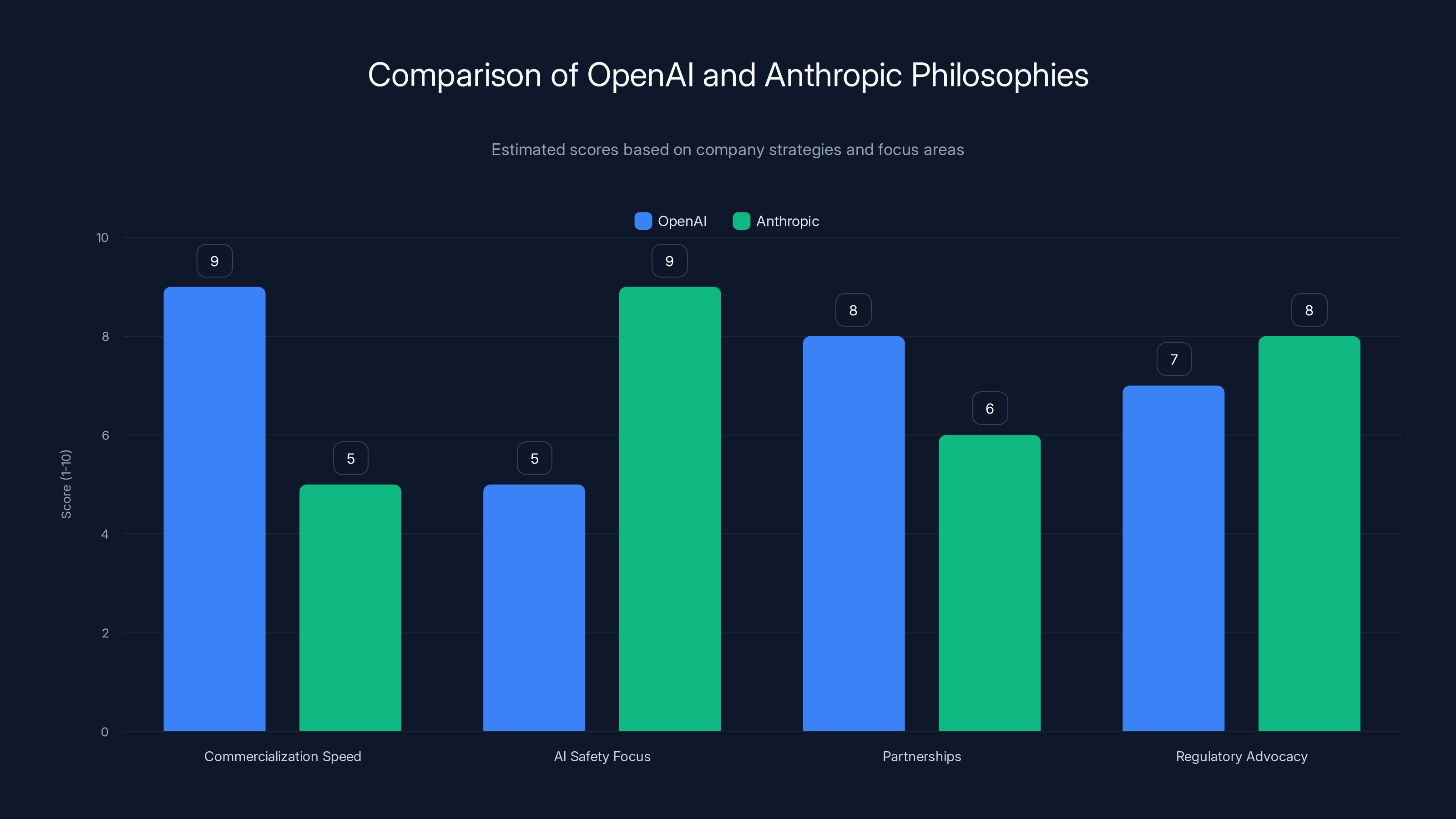

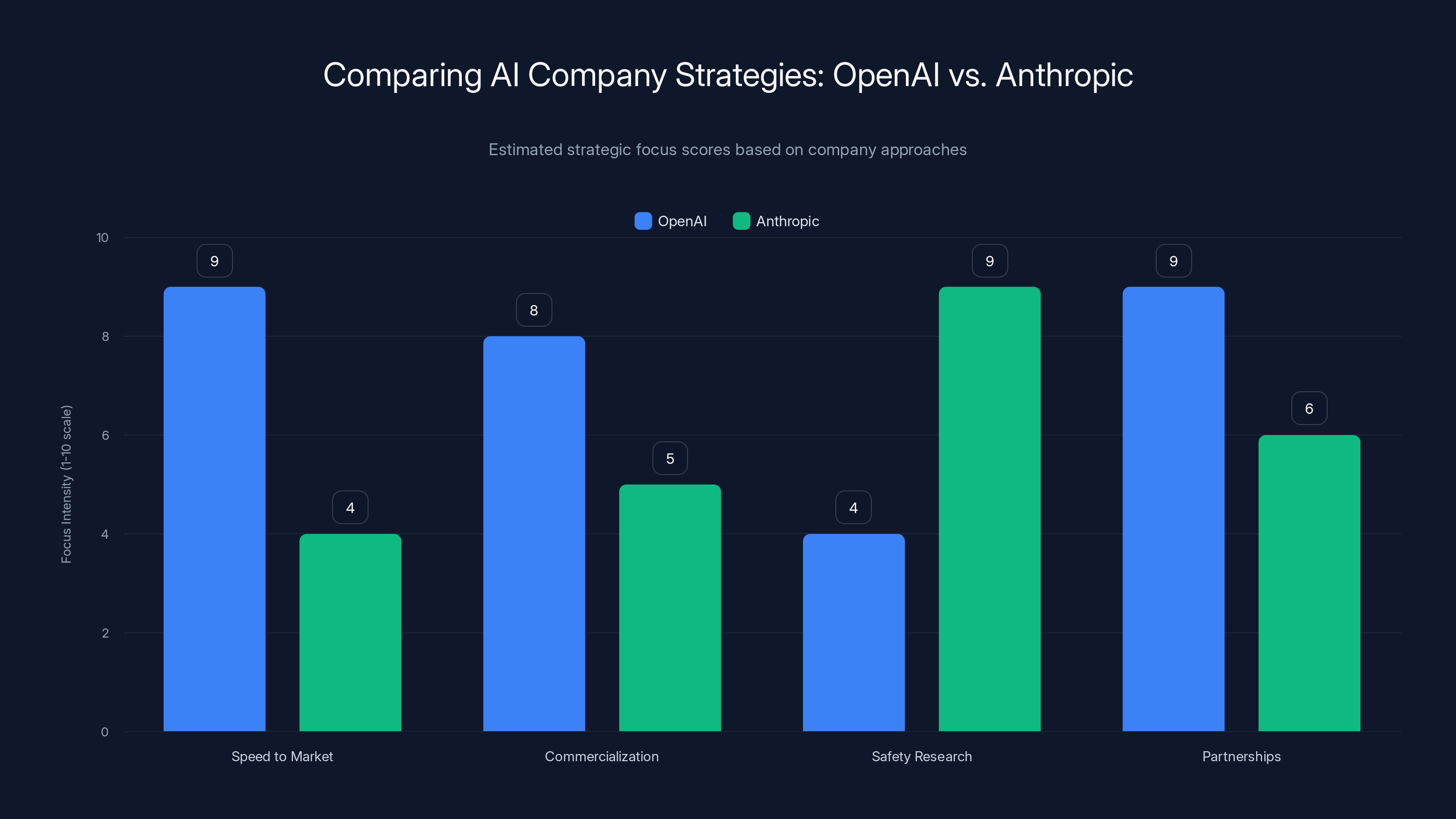

OpenAI scores higher in commercialization speed and partnerships, while Anthropic leads in AI safety focus. Both advocate for AI regulation. (Estimated data)

The Advertising Wars: Where It All Boiled Over

The immediate spark for the public conflict came from an unexpected direction: advertising.

Anthropic published a critique of OpenAI's advertising practices, specifically calling out how OpenAI was depicting AI capabilities in its marketing materials. According to Anthropic's analysis, OpenAI's ads were misleading about what ChatGPT could actually do. The ads suggested capabilities that the current versions of ChatGPT didn't reliably possess, setting users up for disappointment.

It was a fair criticism, the kind that you see in most competitive industries. Companies critique each other's claims all the time. But Altman's response was unusually harsh. He didn't just defend OpenAI's advertising. He questioned Anthropic's integrity.

"We would obviously never run ads in the way Anthropic depicts them," he wrote on X (formerly Twitter). "We are not stupid, and we know our users would reject that."

That line, "We are not stupid," was pointed. It suggested that if OpenAI were actually making the false claims Anthropic alleged, it would be a fundamental strategic blunder. But it also implied something about Anthropic itself. The subtext was clear: either Anthropic is lying about what we're doing, or they're stupid enough not to understand how the market works. Neither option was flattering for Anthropic.

Anthropic responded, and the back-and-forth continued. What might have been a technical disagreement in a previous era became a public relations battle, playing out on social media and in industry publications. Each company's supporters rallied to defend their side. Investors started paying attention. Employees started noticing the tension.

By the time the India summit rolled around, the relationship had cooled significantly. The handhold incident was the physical manifestation of a relationship that had already fractured in the digital realm.

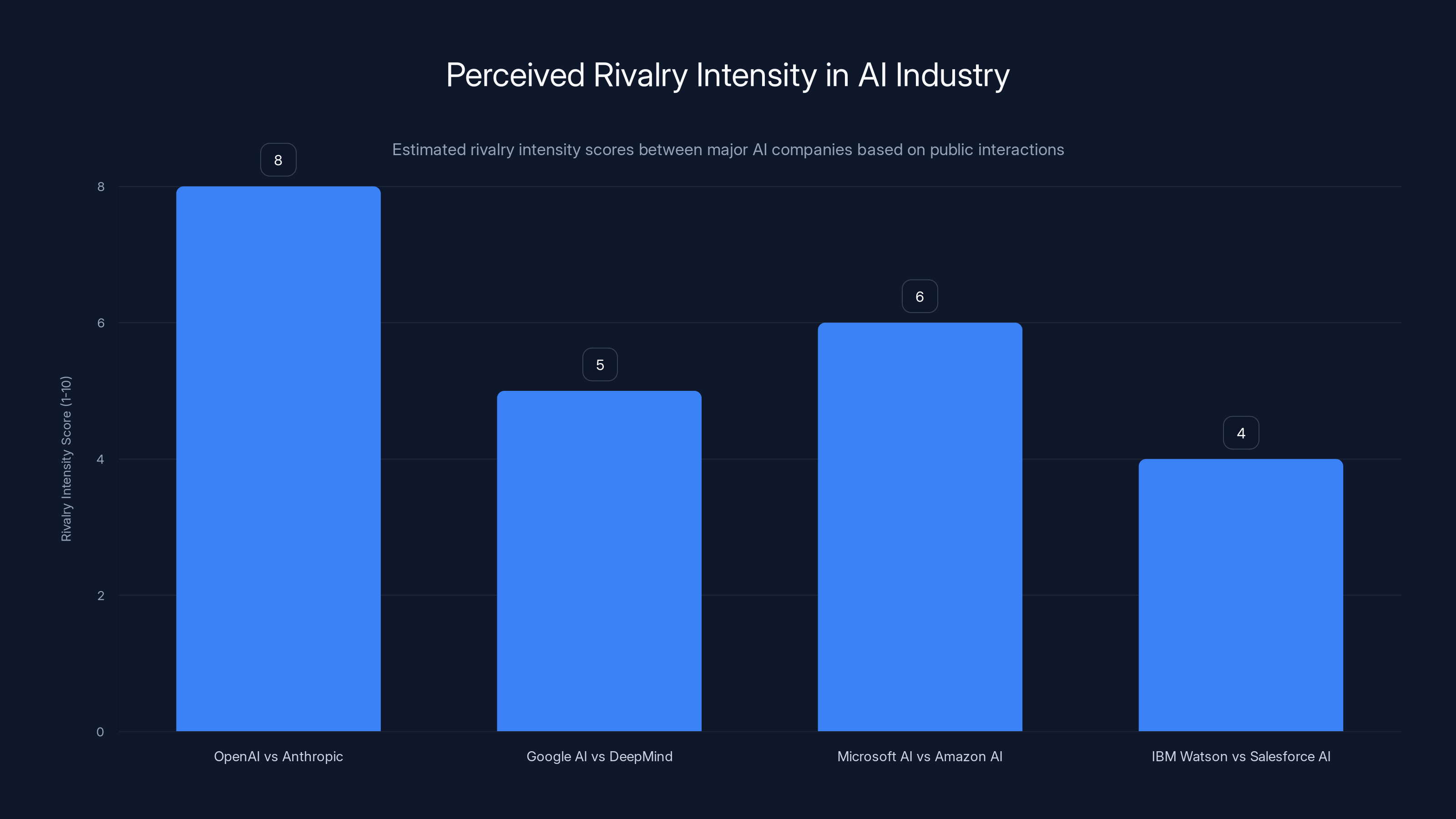

OpenAI and Anthropic exhibit the highest perceived rivalry intensity, reflecting public disputes and non-cooperation. Estimated data based on industry analysis.

India's AI Ambitions and Why This Moment Mattered

India wasn't a random choice for this summit. The country has become increasingly important to the global AI conversation, and both OpenAI and Anthropic know it.

India's technology sector is massive. The country is home to some of the world's largest IT services companies, including Infosys, TCS (Tata Consultancy Services), and Wipro. These are companies that serve enterprises globally, implementing technology solutions at scale. They're also companies that are investing heavily in AI, both for their own internal operations and to offer to clients.

Moreover, India has a massive population—1.4 billion people—and a growing middle class with increasing purchasing power. The Indian market for AI services could be enormous. This isn't just about helping India develop its own AI capabilities. It's about accessing the Indian market before competitors do.

Both OpenAI and Anthropic understand this. OpenAI announced at the summit that it was opening two new offices in India and partnering with TCS to deploy ChatGPT and other tools. Anthropic announced it had opened an office in India and was partnering with Infosys.

These aren't small announcements. They represent long-term commitments to the Indian market and recognition that India is going to be a crucial battleground for AI deployment in the coming years. When Prime Minister Modi called for the tech executives to join hands, he was essentially asking them to affirm their commitment to working together for India's benefit.

The fact that Altman and Amodei refused that gesture was particularly significant in this context. It suggested that the rivalry between their companies was now so fundamental that they couldn't even participate in a diplomatic show of unity on behalf of a nation they both wanted to do business with. It was an uncomfortable moment for everyone involved.

The Deeper Divide: Business Models and Market Strategy

Beyond the personal tensions, Altman and Amodei represent different strategic visions for how AI companies should operate.

OpenAI's strategy, under Altman, has been to move fast, commercialize aggressively, and let the market validate the technology. Get ChatGPT in the hands of hundreds of millions of users. Partner with major corporations like Microsoft. Integrate AI into productivity tools. Build a consumer base and an enterprise base simultaneously. The risks are that you move too fast, that you miss important safety considerations, or that you oversell capabilities. But the upside is massive: if you're the company that gets AI right and deploys it at scale, you win the entire market.

Anthropic has taken a more methodical approach. Develop the technology carefully. Research safety intensively. Make sure you understand the risks before scaling. Move into commercial applications more slowly and deliberately. The risks are that competitors like OpenAI move faster and dominate the market before Anthropic reaches scale. But the upside is that if something goes wrong with AI deployment at massive scale, Anthropic can claim to have taken the precautions that others didn't.

These aren't just different tactics. They're different bets about what the world needs from AI companies. They're different assumptions about what risk looks like and how to manage it.

For enterprises evaluating AI vendors, this matters. If you believe that moving quickly and iterating based on user feedback is the right approach, you'll prefer OpenAI. If you believe that thorough research and careful deployment are more important than speed, you'll prefer Anthropic. But you probably can't believe both of those things equally. The companies are operating under fundamentally different assumptions.

That's why the conflict is so intractable. It's not just about who has the better product or who's executing better. It's about different philosophies about how the world should change.

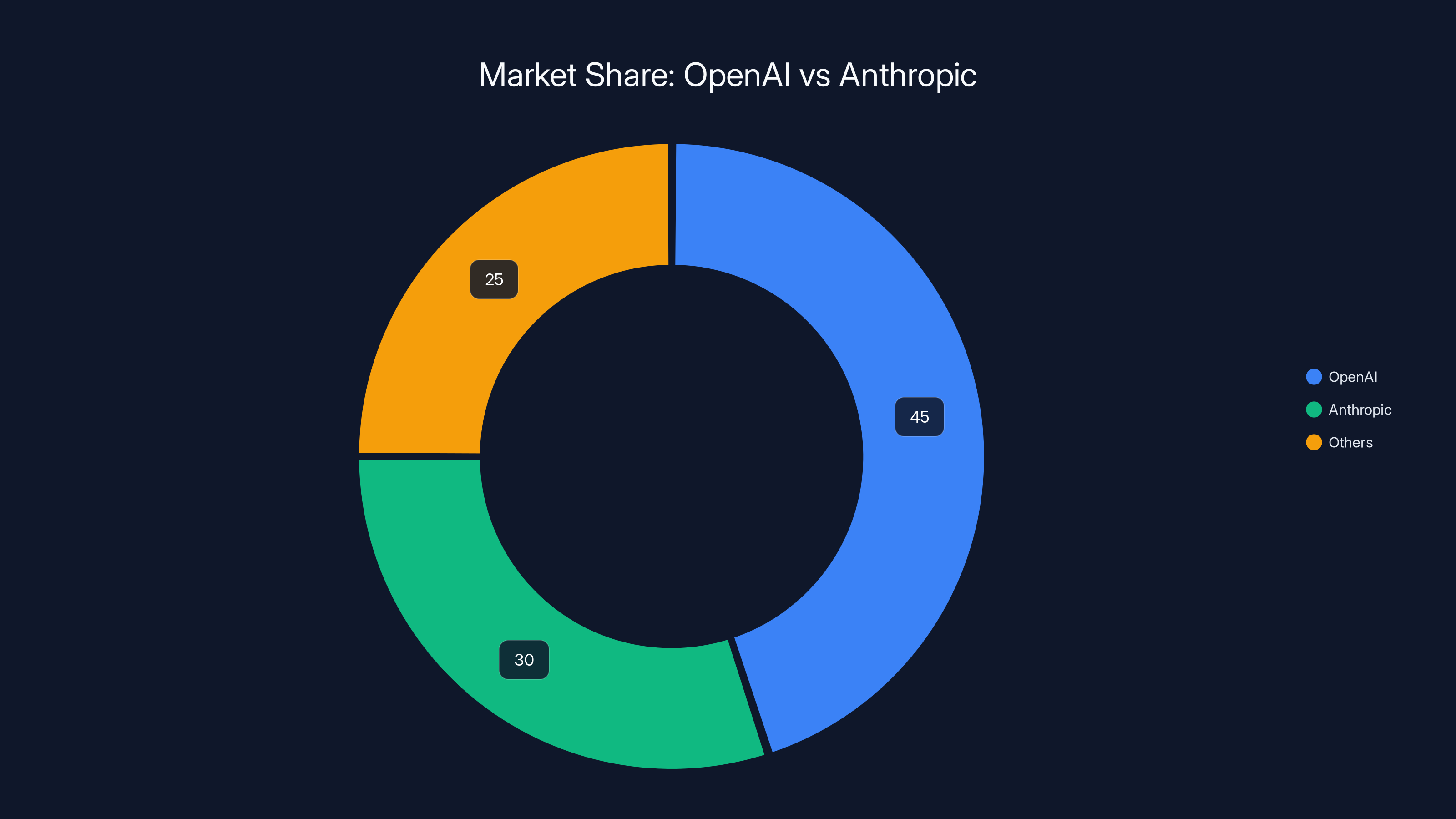

OpenAI holds an estimated 45% market share, focusing on speed and commercialization, while Anthropic captures 30% with a focus on safety and careful research. Estimated data.

The Capital Race and Competitive Pressure

One reason the tensions have escalated is that both companies are in an increasingly intense capital race.

Developing state-of-the-art AI systems is extraordinarily expensive. Training a large language model requires massive compute resources, expensive GPUs, and enormous energy expenditures. OpenAI spent billions on infrastructure in 2024 alone. Anthropic is raising capital at a furious pace to keep up. Other companies like Google, Meta, xAI, and Mistral are also investing heavily.

This capital race creates pressure to commercialize faster, to show investors that the technology has economic value, and to demonstrate market traction. It's harder to take the slow, methodical approach when you're burning hundreds of millions of dollars per year and investors want to see returns.

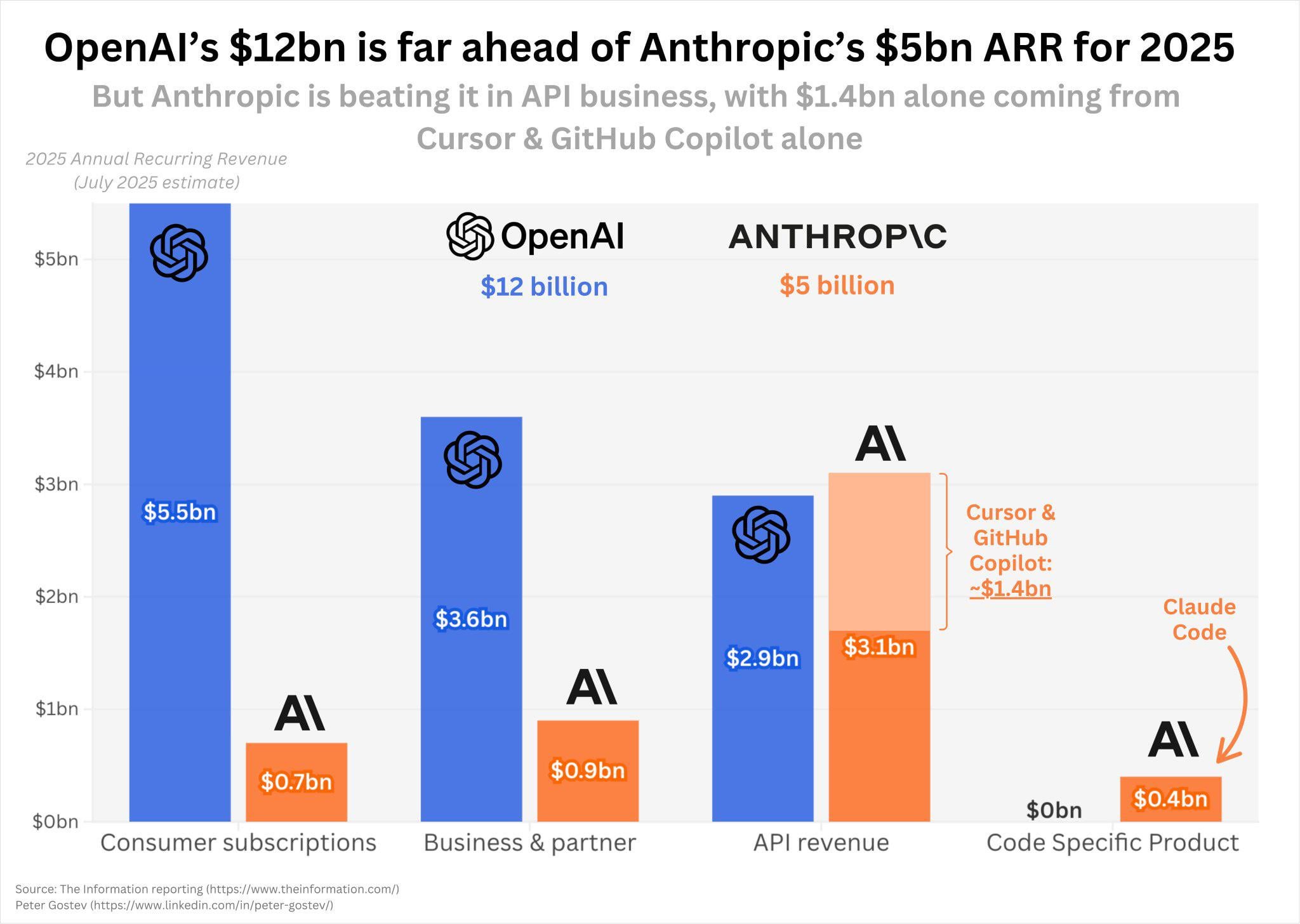

OpenAI, backed by Microsoft's capital and resources, can move faster on this front. Anthropic has raised significant capital, including investments from Amazon and Google, but it doesn't have Microsoft's deep pockets behind it. That creates an asymmetry in how aggressively each company can pursue commercialization and market expansion.

The India market, in this context, is particularly important. It's an opportunity to demonstrate global reach and to access a market where there's less entrenched competition from previous-generation tech companies. Whoever gets there first with a strong product and partnerships with local tech powerhouses will have a significant advantage.

Both companies knew this when they went to India. Both companies made significant announcements about their plans. And both companies' CEOs likely understood that being in the same room, at the same summit, would invite direct comparison and potential conflict.

The handhold refusal was almost predictable in that context. Two companies competing intensely, with different strategies and different backers, couldn't even pretend at diplomatic unity for a photo op.

The Anthropic Position: Safety First, Speed Second

Dario Amodei's perspective on why Anthropic is different from OpenAI has been consistent over time.

When Amodei left OpenAI to start Anthropic, it wasn't primarily because he had a different business model in mind. It was because he believed OpenAI wasn't prioritizing AI safety research enough. He thought the company was moving too fast toward building and deploying powerful AI systems without fully understanding the risks.

At Anthropic, Amodei has built a company culture around the belief that you can't separate capability development from safety research. They have to happen together. You can't build powerful systems and then bolt on safety measures afterward. Safety has to be baked into the development process from the beginning.

This philosophy has translated into specific research programs and technical approaches. Constitutional AI is one example. Mechanistic interpretability research is another—Anthropic is investing heavily in understanding how large language models actually work internally, which is foundational to making them safer.

But this approach also means Anthropic is slower to commercialize. It takes longer to develop products when you're also doing foundational research into safety. It means you might pass on market opportunities because you're not confident enough that you've mitigated the risks.

From Altman's perspective, this is overly cautious. The market is moving fast. Users want AI tools now, not in five years after perfect safety research is complete. Companies that move too slowly will be left behind. And actually, deploying widely and getting feedback from millions of users is itself a form of safety research—it tells you what goes wrong in the real world.

These aren't crazy perspectives. They're different bets about how to manage risk in a high-stakes domain. But they're incompatible bets. You can't pursue both strategies simultaneously at the same company. That's partly why Amodei left OpenAI to start a different company. It's why the two companies now compete. And it's probably why Amodei wasn't interested in holding hands with Altman at the India summit.

OpenAI emphasizes speed and partnerships, while Anthropic focuses on safety research. Estimated data reflects strategic priorities.

The OpenAI Position: Move Fast and Maintain Trust

Sam Altman's perspective is more pragmatic and market-oriented, though he's not indifferent to safety concerns.

Altman has been vocal about the need for AI regulation. He's testified before Congress. He's participated in international discussions about AI governance. But he's also been clear that he believes OpenAI is in the best position to develop AI safely, precisely because the company is moving fast, deploying widely, and getting real-world feedback.

In Altman's view, the safest approach to AI development is to ensure that the companies building the most powerful systems are trustworthy, well-capitalized, and able to implement safety measures at scale. OpenAI, backed by Microsoft and with billions in capital raised from investors, fits that profile. A startup that's slower to commercialize might have higher ideals about safety, but it doesn't have the resources to implement those ideals comprehensively.

Moreover, Altman believes that if OpenAI doesn't dominate the AI market, other companies will. Some of those companies might be less careful about safety. Some might be state-sponsored entities with different priorities. From this perspective, OpenAI winning the AI race isn't just good for OpenAI. It's good for humanity, because it ensures that the most powerful AI systems are being built by a company that, whatever its flaws, has transparency and safety as part of its stated mission.

This is a winning argument in many boardrooms and among many investors. It's why OpenAI has been able to raise capital so effectively. It's why partnerships with companies like Microsoft, Salesforce, and others have come relatively easily. The reasoning is simple: if we're going to have powerful AI systems deployed globally, they should come from OpenAI.

But Amodei and others at Anthropic see this as precisely the wrong reasoning. They believe that moving so fast without fully understanding the risks is itself a risk—a major one. They believe that taking time to understand how these systems work, how they can fail, and how to make them more robust is the responsible approach, even if it means moving slower than competitors.

There's no objective way to resolve this debate. It's a fundamental disagreement about how to manage risk in a domain where the risks are unprecedented and not fully understood.

The Ripple Effects: What This Rivalry Means for the Industry

The tensions between Altman and Amodei aren't just about two CEOs who don't get along. They're shaping the direction of the entire AI industry.

When OpenAI and Anthropic are competing publicly, with harsh words and refusals to cooperate, it sends signals to the rest of the industry. Other AI companies have to choose which approach to align with. Do you move fast like OpenAI, or carefully like Anthropic? Do you prioritize commercialization or safety research? Do you build partnerships or maintain independence?

This rivalry is also affecting how investors evaluate AI companies. Is the company that's moving faster and commercializing more aggressively the better investment? Or is the company that's taking time to get safety right the better long-term bet? Different investors are answering this question differently.

It's affecting how enterprises adopt AI. Companies using OpenAI tools are essentially betting on OpenAI's approach to safety and commercialization. Companies using Anthropic tools are betting on a different approach. Over time, as more companies make these choices, ecosystems will form around each company's technology and philosophy.

It's affecting talent in the industry. Researchers who prioritize safety and careful research are more likely to join Anthropic. Researchers who want to work on cutting-edge applications and move fast are more likely to join OpenAI or other fast-moving companies. The industry is slowly sorting itself according to these philosophical differences.

And it's affecting the broader conversation about AI governance and regulation. Regulators are watching this rivalry and trying to understand what the right approach is. Should they encourage companies to move fast and iterate? Or should they require companies to prove safety before scaling? The fact that the leading companies are operating under fundamentally different philosophies makes it harder for regulators to figure out what rules make sense.

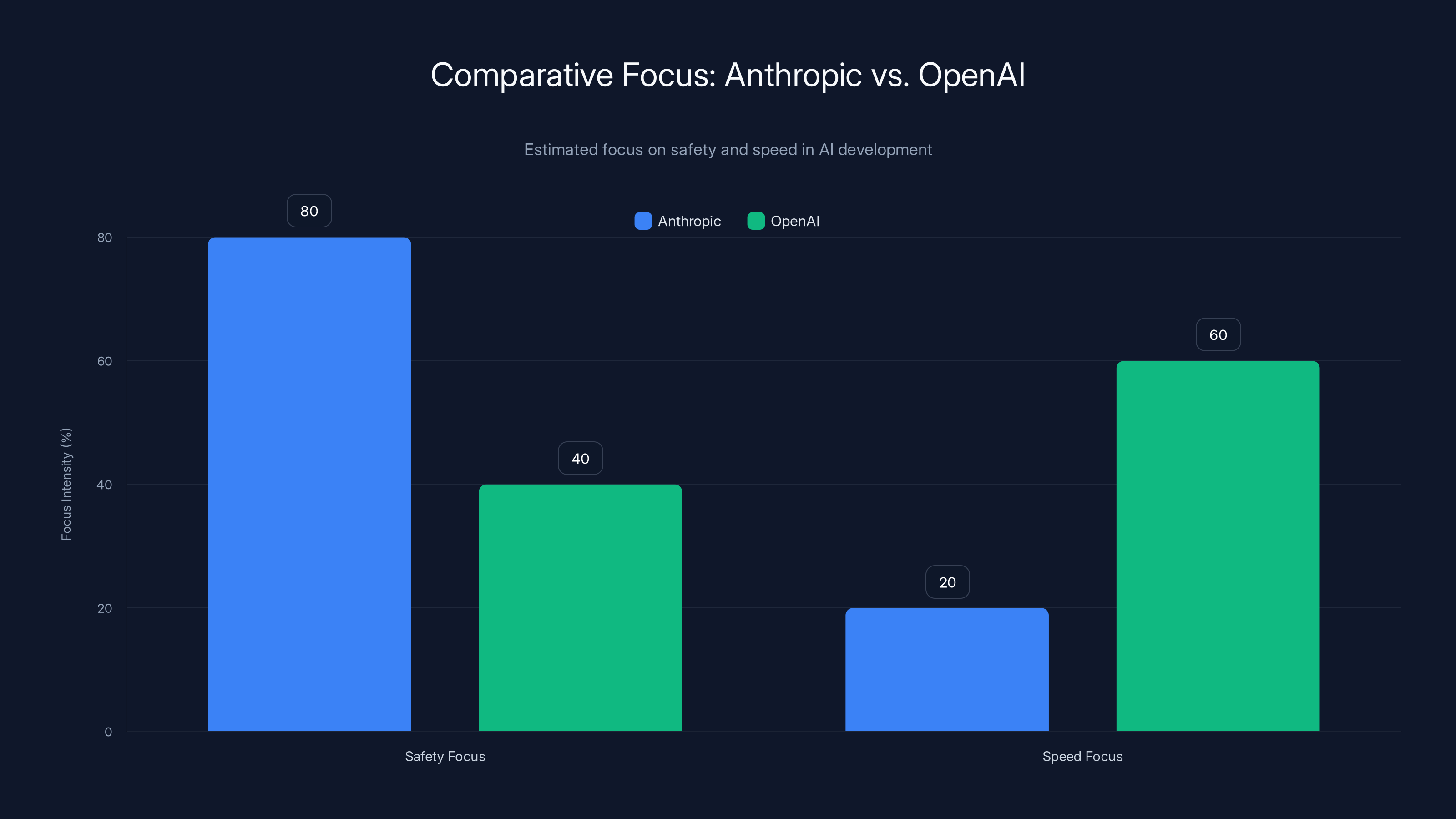

Anthropic prioritizes safety significantly more than speed, while OpenAI balances both but leans towards speed. Estimated data based on company philosophies.

The India Summit as a Microcosm

The India summit, in many ways, was a perfect setting for this conflict to become visible.

India is developing its own AI capabilities and trying to figure out how to implement AI in its society. The country is in a position to choose which approach to align with—the faster OpenAI approach or the more cautious Anthropic approach. When Prime Minister Modi asked the tech executives to join hands, he was essentially asking them to show that they could work together on behalf of India's interests.

The fact that Altman and Amodei refused that gesture was a statement: we can't pretend to be on the same team, even for diplomatic purposes. We disagree too fundamentally about how this should be done.

For India, this creates complexity. The country wants access to the latest AI technology and partnerships with the leading companies. But it's also trying to implement AI in a way that's responsible and aligned with Indian values. When the leading AI companies are openly hostile to each other, it makes that task harder. India has to figure out how to work with both companies, even though they're competing intensely and not even willing to hold hands symbolically.

From a PR perspective, the refusal to hold hands was a disaster for both companies. They looked petty, childish, and hostile. Prime Minister Modi looked awkward. The tech industry looked fractured and self-interested. In a moment meant to celebrate global cooperation on AI, the leading companies put their rivalry on full display.

But from a strategic perspective, the refusal was honest. It acknowledged that the rivalry is real, that the disagreements go deep, and that neither company is willing to pretend otherwise for the sake of a photo op. In some ways, that honesty is more valuable than fake cooperation would have been.

The Race for Capability and the Role of Competition

One argument in favor of the current rivalry is that competition drives progress.

When OpenAI released ChatGPT and captured public attention, Anthropic responded by developing Claude and increasing its research pace. When Anthropic published research on Constitutional AI and safety techniques, OpenAI had to pay attention and ensure it was doing equivalent or better safety work. The companies pushing each other forward means both are more likely to develop better, safer, more capable systems than either would alone.

Moreover, having competing approaches to AI development is itself valuable. If everyone followed OpenAI's approach, and it turned out to be wrong, the entire industry would suffer. If everyone followed Anthropic's approach, and it turned out that speed was more important than anyone realized, the industry would suffer. Having both companies pursuing different strategies means at least one is likely to be closer to right.

But competition can also be destructive. When companies waste energy on public disputes instead of focusing on product development, everyone loses. When rivalry makes it harder for companies to work together on shared problems like AI safety, everyone loses. When the leading companies are openly hostile, it makes it harder for the industry to build consensus on governance and regulation.

The India summit handhold moment captured this tension. It showed both the inevitability of competition and its costs.

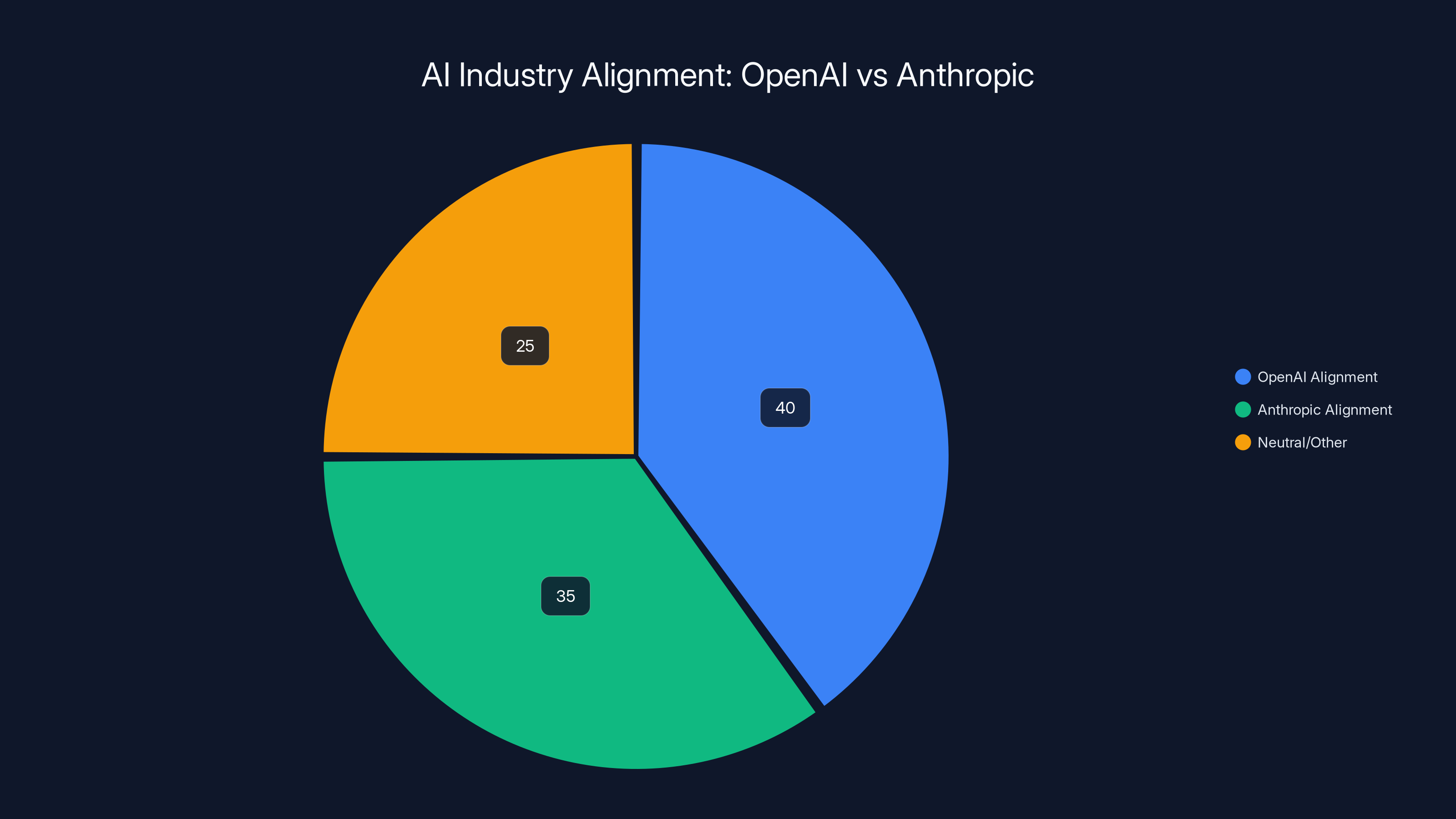

Estimated data suggests a nearly even split between companies aligning with OpenAI's fast-paced approach and Anthropic's safety-focused strategy, with a significant portion remaining neutral or aligned with other philosophies.

Looking Forward: Will This Rivalry Escalate or Stabilize?

The key question now is whether the Altman-Amodei rivalry will escalate or stabilize.

Escalation would mean more public disputes, more harsh rhetoric, and potentially legal conflicts if either company believes the other is misrepresenting it publicly or infringing its patents or other intellectual property. It would mean less cooperation on shared problems and more zero-sum competition. It would mean choosing different partners and platforms, making it harder for customers to use both companies' tools together.

Stabilization would mean that both companies accept the rivalry as a normal part of competition, they maintain professional boundaries, they compete hard but within industry norms, and they find ways to work on shared problems even while competing. It would mean occasional conflicts but not constant warfare.

Historically, technology industry rivalries have gone both ways. Microsoft and Apple competed intensely for decades but eventually found ways to coexist and even work together. Google and Yahoo had a bitter rivalry that faded as the market evolved. Amazon and eBay competed, but in adjacent spaces. Sometimes rivalries escalate into all-out conflict. Sometimes they stabilize into mature competition.

For Altman and Amodei, it probably depends on a few factors. Do one or both companies need access to the other's technology or partnerships? That tends to push toward stabilization. Are the capital markets rewarding conflict, or rewarding cooperation and maturity? If conflict is seen as indicating strong conviction and strategic clarity, it might continue. Are the respective boards and investors pushing for different strategies? If OpenAI's board is encouraging aggressive competition while Anthropic's board is encouraging cooperation, that asymmetry would create ongoing tension.

Most likely, the rivalry will continue but with less public drama. Both companies will focus more on product development and less on public disputes. There might be occasional moments of conflict, like the India summit, but not constant warfare. The companies will compete hard but with increasing professionalism.

The handhold moment, awkward as it was, might actually serve as a circuit breaker. It was so visibly awkward that both companies probably want to avoid similar incidents in the future. After you've refused to hold hands with your rival at a global summit, the bar for further drama is pretty high.

The Broader AI Industry Implications

Beyond the Altman-Amodei conflict, the rivalry between OpenAI and Anthropic is raising important questions for the entire AI industry.

First, is there room for multiple approaches to AI safety and development? Or is one approach clearly right and the other clearly wrong? The market will eventually answer this question. If OpenAI's fast-moving approach proves successful and safe, Anthropic's more cautious approach might look overcautious in retrospect. If Anthropic's approach prevents major AI failures or harms that OpenAI's approach would have caused, it will look wise. We won't know for years or decades.

Second, how should AI companies balance commercialization with research? This is a genuine tension, not a false one. You can't fund AI research without revenue. But commercialization pressure can compromise research integrity and lead to cutting corners. Both companies are trying to find the right balance, but they're landing in different places.

Third, what role should government and regulation play? OpenAI's approach relies more on the market, and on trust in major companies, to guide AI development safely. Anthropic's approach leans further toward the view that stronger regulation and oversight may be necessary. This disagreement is going to shape policy conversations in the coming years.

Fourth, how important is transparency in AI development? OpenAI has published some research but kept much of its work proprietary. Anthropic has been somewhat more open with safety research. This creates different incentives and different risk profiles.

These aren't questions with obvious right answers. They're questions that the entire AI industry is grappling with. The fact that OpenAI and Anthropic are representing different sides of these debates is actually valuable for the industry. It means we're not converging prematurely on one approach before we've fully explored alternatives.

The Human Element: Personality and Trust

Some of the tension between Altman and Amodei is undoubtedly personal.

Altman and Amodei have different personalities, different communication styles, and different ways of approaching problems. Altman is more of a visionary and communicator, comfortable talking to the press and making bold claims about the future. Amodei is more reserved, more focused on technical depth, and more cautious about making public claims.

These personality differences are often magnified in media coverage. The story of the visionary entrepreneur (Altman) competing against the careful scientist (Amodei) is simpler and more compelling than the actual reality. But these narratives can influence how people perceive the rivalry and whether they see it as inevitable or contingent on personality factors.

The reality is that even if Altman and Amodei had gotten along perfectly as people, their companies probably still would have ended up in competition. The market opportunities are too large, the capital available is too abundant, and the strategic visions are too different. These companies were probably always going to compete intensely.

But better personal relationships might have made the competition less public and less hostile. Companies whose leaders genuinely like each other tend to compete differently than companies whose leaders actively dislike each other. They're more likely to find ways to work together on non-competitive issues. They're less likely to make public attacks.

So while the personality factor isn't the root cause of the rivalry, it's definitely affecting how the rivalry plays out publicly.

The Global Implications: AI Sovereignty and Regional Strategies

The Altman-Amodei rivalry is also playing out in the context of broader questions about AI sovereignty and regional power.

China is developing its own AI capabilities through companies like Baidu, Alibaba, and ByteDance. The EU is trying to foster European AI companies such as Mistral. India is investing in its own AI research while also seeking partnerships with leading global companies. Japan, South Korea, and other countries are pursuing their own AI strategies.

In this context, neither OpenAI nor Anthropic can afford to be seen as purely American companies that don't respect local partnerships and strategies. Both companies need to build relationships in India, the EU, and other regions. But they're competing for these relationships, and the rivalry makes it harder to build them.

India's prime minister, by asking the executives to join hands, was essentially asking them to put aside their competition in favor of India's interests. The refusal to do so, while understandable from each company's strategic perspective, was problematic from India's perspective. It signaled that the companies' rivalry mattered more to them than India's priorities.

Over time, this might push India (and other regions) to invest more in developing local AI capabilities rather than relying on partnerships with OpenAI or Anthropic. That's arguably healthy in the long run, but in the short term, it means lost opportunities for all parties.

Lessons for Entrepreneurs and Investors

The Altman-Amodei rivalry offers several lessons for entrepreneurs and investors in the AI space and beyond.

First, strategic disagreements often lead to company splits and competition. When founding teams disagree on fundamental strategic issues, like how fast to move or how much to prioritize safety, the disagreement is often resolved by some founders leaving to start a competing company. This is normal and healthy. It's how the market explores different approaches to a problem.

Second, competition doesn't have to be hostile. The fact that OpenAI and Anthropic are competing doesn't mean they have to be enemies. Many competitive relationships in tech are professional even if they're intense. The India summit moment shows what happens when rivalry becomes personal and hostile.

Third, investors need to choose sides or hedge. If you're investing in AI, you're betting on one approach to safety and commercialization or the other (or both). OpenAI's approach and Anthropic's approach will have different outcomes. Understanding what you believe about these approaches should guide your investment decisions.

Fourth, public communications matter. How companies talk about their competitors affects perception, talent recruitment, and customer relationships. Altman's response to Anthropic's advertising critique, calling Anthropic "dishonest" and writing "We are not stupid," probably hurt OpenAI more than it hurt Anthropic. It made Altman sound defensive and insulting.

Fifth, geographic strategy matters. By opening offices in India and partnering with local companies, both OpenAI and Anthropic are making long-term bets on regional importance. But these bets are undermined if the companies can't cooperate at the diplomatic level. In the future, both companies might find it valuable to keep their relationship professional on the international stage, even while competing fiercely for customers.

The Path Forward: What Comes Next

So what happens next in the Altman-Amodei saga?

In the short term, both companies will probably focus more on execution and less on public rhetoric. The India summit was awkward enough that both companies likely want to avoid similar incidents. That doesn't mean the rivalry will cool, but it probably means the public displays of hostility will decrease.

In the medium term, one or both companies will likely make major technical breakthroughs or encounter major challenges. These will reshape the competitive landscape. If OpenAI releases a major new model that works flawlessly at scale, Anthropic will have to respond aggressively. If Anthropic's safety research helps it avoid a major AI incident of the kind OpenAI ends up facing, the balance of credibility will shift. These developments will matter more than the current rhetoric.

In the long term, the market will decide which approach was right. Will moving fast and iterating on user feedback produce safer, more capable AI systems than careful research and slower commercialization? We'll find out. History is littered with examples of fast-moving competitors who got it right and overcautious competitors who missed their moment. It's also full of examples of the opposite.

What seems clear is that both OpenAI and Anthropic will continue to exist, compete, and develop AI. Neither company looks likely to disappear. Both have too much capital, too much talent, and too much market traction. The rivalry will continue, but hopefully in a more professional form.

And the world will be better off for having two companies pursuing different approaches to AI development, even if those companies won't hold hands.

FAQ

What caused the rivalry between OpenAI and Anthropic?

The rivalry emerged from fundamental strategic differences about how to develop AI safely and responsibly. Dario Amodei and other researchers left OpenAI to start Anthropic because they believed OpenAI was moving too fast without sufficient focus on safety research. This philosophical disagreement has evolved into direct competition for capital, talent, and market share, with both companies now pursuing different approaches to commercialization and safety.

Why did Altman and Amodei refuse to hold hands at the India summit?

The refusal was a physical manifestation of the deteriorating relationship between the two executives and their companies. After weeks of public attacks, including Altman calling Anthropic "dishonest" and "authoritarian," holding hands would have seemed hypocritical. The refusal signaled that the rivalry had become too intense to feign diplomatic unity, even for a symbolic gesture at a global summit.

What's the difference between OpenAI's and Anthropic's approach to AI development?

OpenAI, led by Altman, prioritizes speed to market, commercialization, and real-world feedback from widespread deployment. The company believes the safest approach is for trustworthy companies to build powerful AI at scale and iterate based on user feedback. Anthropic prioritizes careful research, thorough safety analysis, and slower commercialization. The company believes you can't separate safety from capability development and that understanding how AI systems work internally is essential before scaling.

How is this rivalry affecting the broader AI industry?

The rivalry is forcing the entire industry to grapple with fundamental questions about safety, speed, commercialization, and regulation. It's creating two distinct camps within the AI industry: those who believe in moving fast like OpenAI and those who believe in moving carefully like Anthropic. This competition drives both companies forward but also creates industry fragmentation and makes it harder to build consensus on governance issues.

Which approach to AI development is likely to be right?

The market will ultimately answer this question through outcomes over the next 5-10 years. If OpenAI's approach leads to powerful, safe AI systems that provide clear benefits to society, it will be vindicated. If Anthropic's approach prevents major harms or produces more reliable systems, it will be vindicated. Both approaches involve real tradeoffs, and which one was right depends partly on risks and outcomes we can't fully predict yet.

Is the rivalry between Altman and Amodei personal or strategic?

It's primarily strategic, rooted in genuinely different beliefs about how AI should be developed. However, the rivalry has also become personal. Altman and Amodei have different communication styles and personality types. The strategic disagreement created competition, which then developed into personal animosity. The India summit handhold moment showed how far the relationship has deteriorated beyond ordinary business competition.

What are the implications for customers choosing between OpenAI and Anthropic?

Choosing between the companies means implicitly choosing which approach to AI development you believe in. If you choose OpenAI, you're betting that moving fast and iterating on feedback is the right approach. You're also betting that OpenAI can be trusted to manage risks appropriately at scale. If you choose Anthropic, you're betting that careful research and slower deployment are worth the pace penalty. Different customers will have different preferences based on their risk tolerance and use cases.

Will the rivalry between these companies escalate further?

Likely not dramatically. The India summit moment was awkward enough that both companies probably want to avoid similar public incidents. However, the underlying strategic competition will continue and probably intensify. Both companies will compete harder on product, on capital raising, and on talent recruitment. But the public hostility will probably decrease as both companies focus more on execution and less on rhetoric.

How does this rivalry affect AI regulation and governance?

The disagreement between the companies is making it harder for regulators to figure out what rules make sense. Should regulators encourage companies to move fast and iterate? Or should they require proof of safety before scaling? Because the leading companies operate under fundamentally different philosophies, there's no clear consensus on the right regulatory approach. This might actually be healthy: it prevents premature regulatory convergence on one approach before alternatives have been fully explored.

What can other AI companies learn from this rivalry?

Other AI companies can learn that strategic differences on fundamental issues often lead to competition and sometimes hostility. They can learn that maintaining professional relationships while competing intensely is valuable for everyone. They can learn that public attacks on competitors, while sometimes satisfying, usually backfire. And they can learn that the AI industry is at an inflection point where different approaches to development are still viable, but that window might not stay open forever. Eventually, one approach will probably dominate.

Conclusion: The Handhold That Defined an Era

When Prime Minister Modi extended the invitation to join hands, he was asking for something simple: a symbolic gesture of unity and shared commitment to advancing AI. It should have been easy. Instead, he got a stark illustration of how divided the AI industry has become.

Sam Altman and Dario Amodei didn't hold hands, not because they physically couldn't, but because they couldn't pretend to a unity they don't feel. The disagreement between them runs too deep. It's not only about ego or personality, though those factors matter. It's about fundamentally different visions for how AI should be developed, deployed, and commercialized.

Altman believes that the safest path to powerful AI is to move fast, deploy widely, and iterate based on real-world feedback. He believes that OpenAI is trustworthy enough to do this safely. He believes that if OpenAI doesn't develop and deploy the most powerful AI systems, less careful companies will.

Amodei believes that the safest path is to understand how AI systems actually work before scaling them. He believes that safety research and capability development must happen together. He believes that moving slowly and carefully is worth the cost of potentially missing some market opportunities.

Neither belief is obviously wrong. The world won't know for years or decades which approach was right. But in the meantime, the two executives have stopped pretending that they agree. The India summit moment made that explicit in a way that was uncomfortable for everyone involved.

What makes this moment historically significant isn't the awkwardness itself. It's what the awkwardness reveals about where we are in the AI revolution. We're at a point where different strategic approaches to AI are still viable. We're at a point where the leading companies can pursue genuinely different philosophies. We're at a point where the outcome of this competition is genuinely uncertain.

In five years, Altman's approach might look obviously right. In five years, Amodei's approach might look wise. Or they might both look partially right, having learned from each other. The market will decide.

But for now, the refusal to hold hands is honest in a way that faked unity wouldn't have been. It acknowledges that the AI industry is divided. It acknowledges that the leading companies don't agree on fundamental issues. It acknowledges that this competition matters.

Prime Minister Modi asked for unity. What he got was clarity about the fact that unity isn't possible right now. And while that's awkward, it's probably better than the alternative, which would have been pretending that agreement existed when it clearly doesn't.

The rivalry between Altman and Amodei will continue. The companies will compete. The approaches will be tested. The market will render its judgment. And somewhere down the line, when the dust settles, we'll know whether the refusal to hold hands was the moment when two leaders chose honesty over diplomatic performance.

That's what the India summit moment really means. Not that these guys don't like each other, though that's probably true. Not that they're unprofessional, though the moment looked unprofessional. But that the AI industry is too important, the stakes are too high, and the disagreements are too fundamental for anyone to pretend otherwise.

The handhold that never happened was a refusal to hide the truth.

Key Takeaways

- The refusal to hold hands at India's summit was a public manifestation of deep strategic disagreements about AI safety and commercialization speed

- OpenAI prioritizes fast deployment with real-world iteration, while Anthropic emphasizes careful research before scaling—fundamentally incompatible philosophies

- The rivalry has escalated from strategic competition to personal hostility, with Altman calling Anthropic 'dishonest' and the companies making increasingly harsh public statements

- India's strategic importance to both companies makes the diplomatic failure particularly significant, as both are betting heavily on market expansion there

- The competition is driving the entire AI industry to grapple with fundamental questions about how fast is too fast and what safety measures are sufficient before deployment at scale
