API Management & Agentic AI Security: Closing Blind Spots [2025]

You can't secure what you can't see.

That single phrase captures the core problem facing enterprises rushing to deploy agentic AI. The technology promises enormous gains in productivity and efficiency. According to recent research from MIT Sloan, 79% of businesses are already using AI agents in at least one business function. But here's what keeps security teams up at night: as these autonomous agents proliferate across your infrastructure, they're creating security blind spots that can spiral out of control faster than any human team can respond.

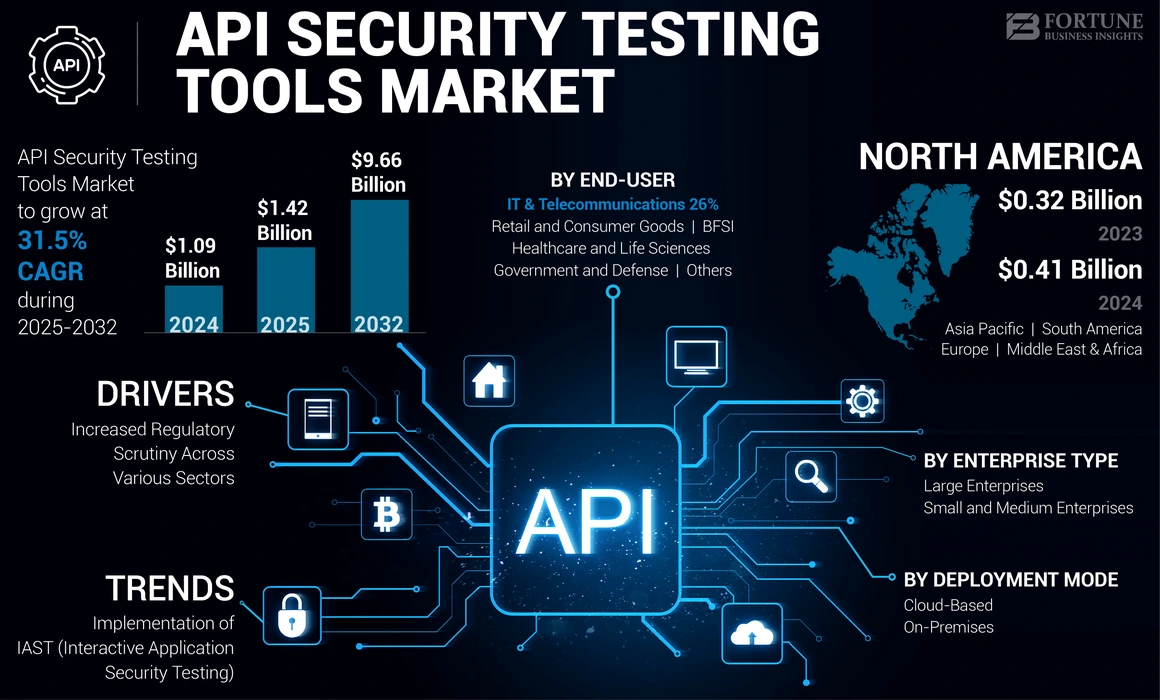

The challenge isn't agentic AI itself. The challenge is that most organizations deploying it lack the visibility and governance to manage the connections those agents rely on. And those connections, the APIs that serve as the nervous system of modern software, are becoming prime targets for attackers. In just the first half of last year, the industry recorded more than 40,000 security incidents tied to API vulnerabilities. That works out to roughly one incident every six and a half minutes.

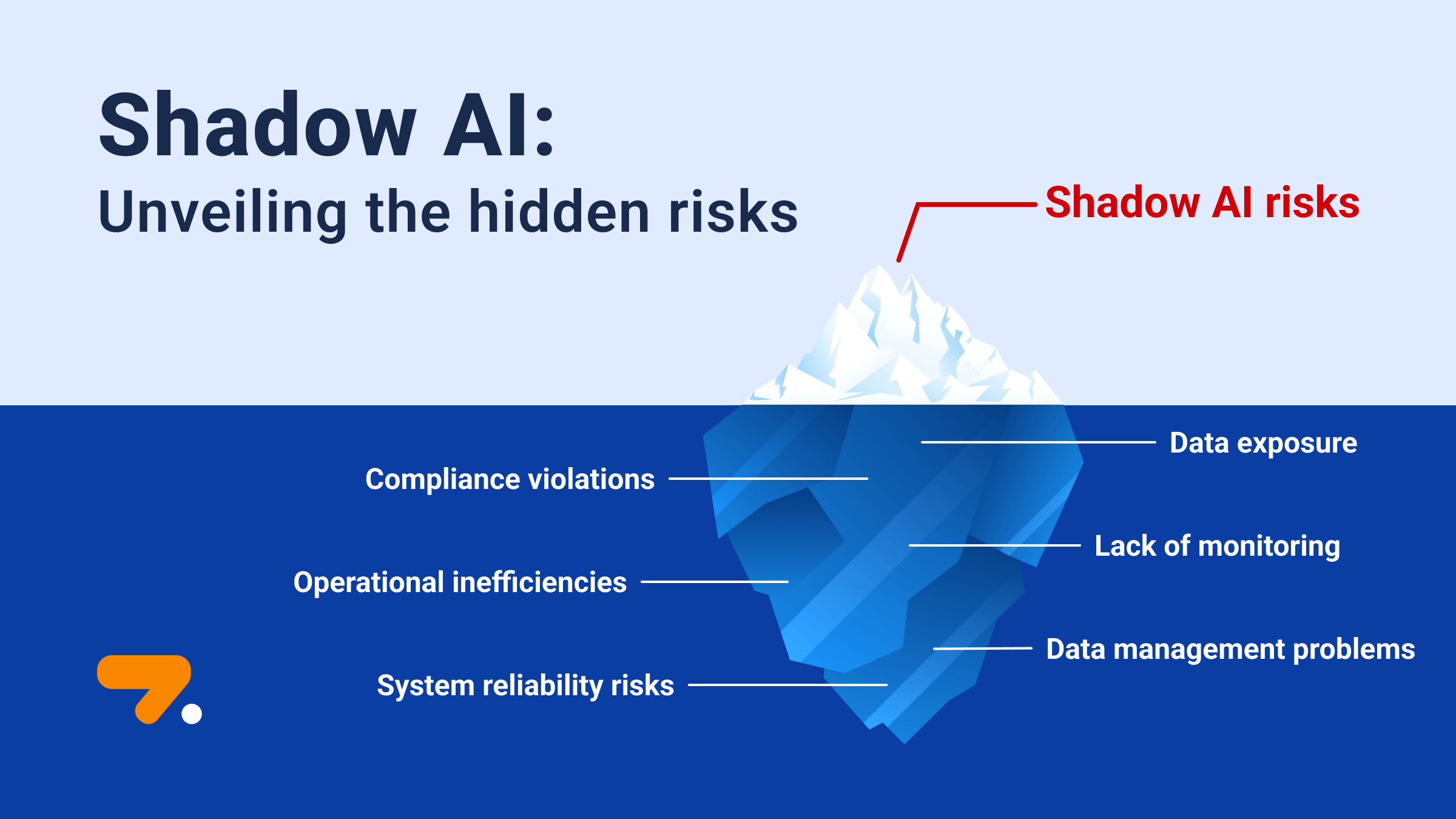

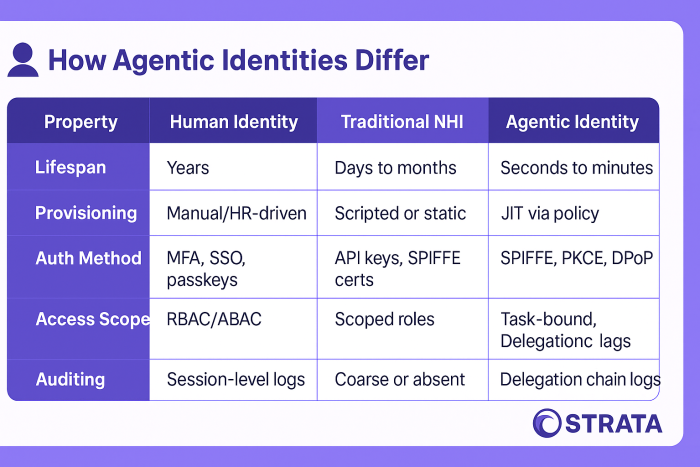

What makes agentic AI different is the velocity and scale of the problem. A human developer might connect to five APIs in a month. An AI agent can create dozens of new connections in a single hour. Shadow AI, zombie APIs, undocumented agents accessing sensitive datasets—these aren't theoretical risks anymore. They're happening right now in organizations that thought they had reasonable security controls in place.

The good news? A mature API management strategy can eliminate most of these blind spots. But it requires understanding the specific ways agentic AI amplifies existing risks, and building governance structures that keep pace with autonomous systems.

Let's walk through what's actually happening in your infrastructure, why it matters, and exactly how to fix it.

TL;DR

- The Core Problem: Agentic AI agents create API connections at scale without proper visibility or control, so every new connection adds risk that nobody is tracking

- The Blind Spot: 71% of UK employees use unapproved AI tools at work, and most organizations can't see what APIs their agents are accessing

- The Solution: Centralized API management with role-based access control, real-time monitoring, and automated governance

- The Cost of Inaction: A single compromised agent can expose millions of records and compromise entire workflows before detection

- The Path Forward: API inventory visibility + agent lifecycle management + automated compliance enforcement = scalable security

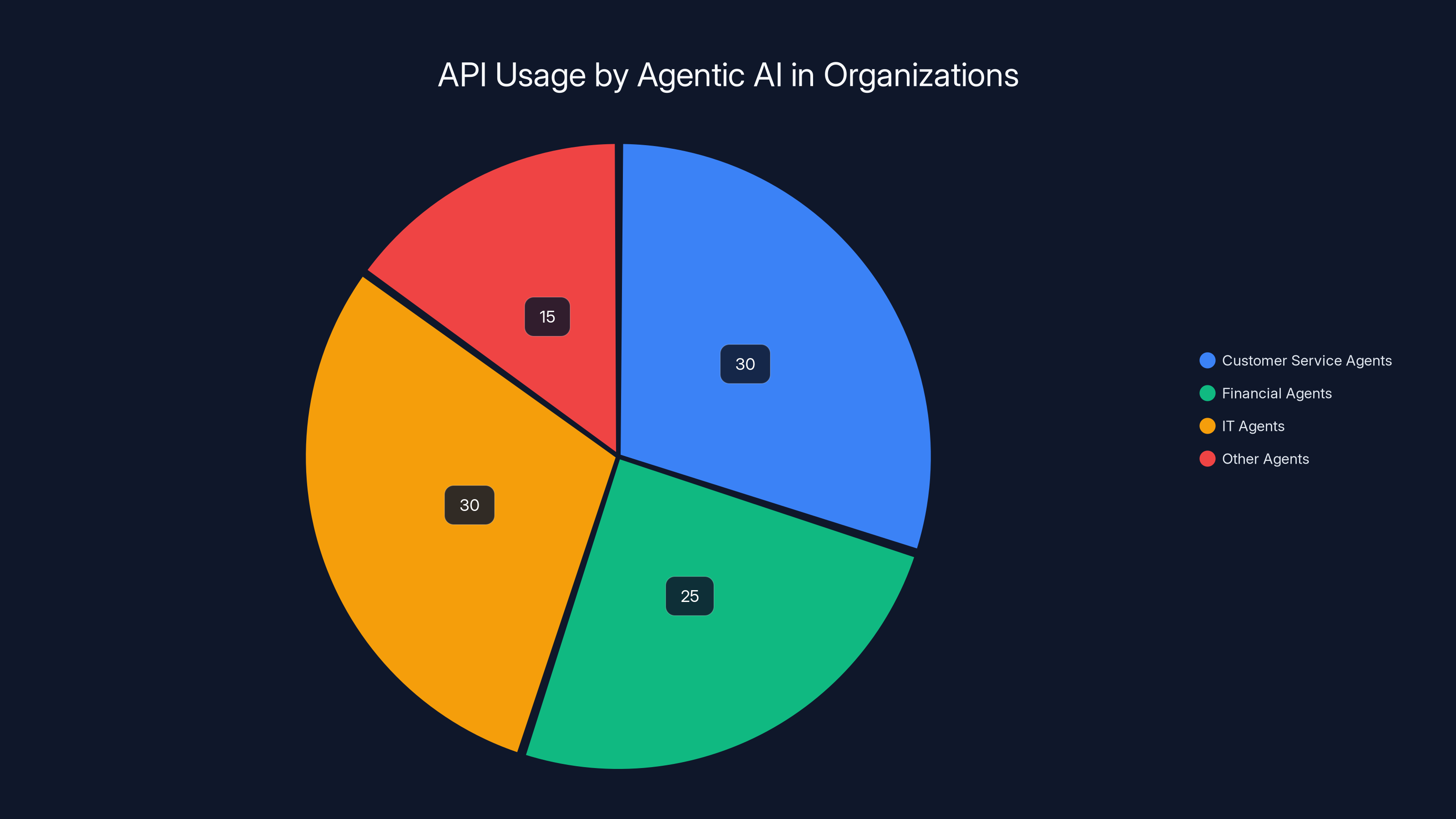

Agentic AI dynamically interacts with APIs, with customer service, financial, and IT agents making the majority of API calls. Estimated data.

Why Agentic AI is Accelerating the API Security Crisis

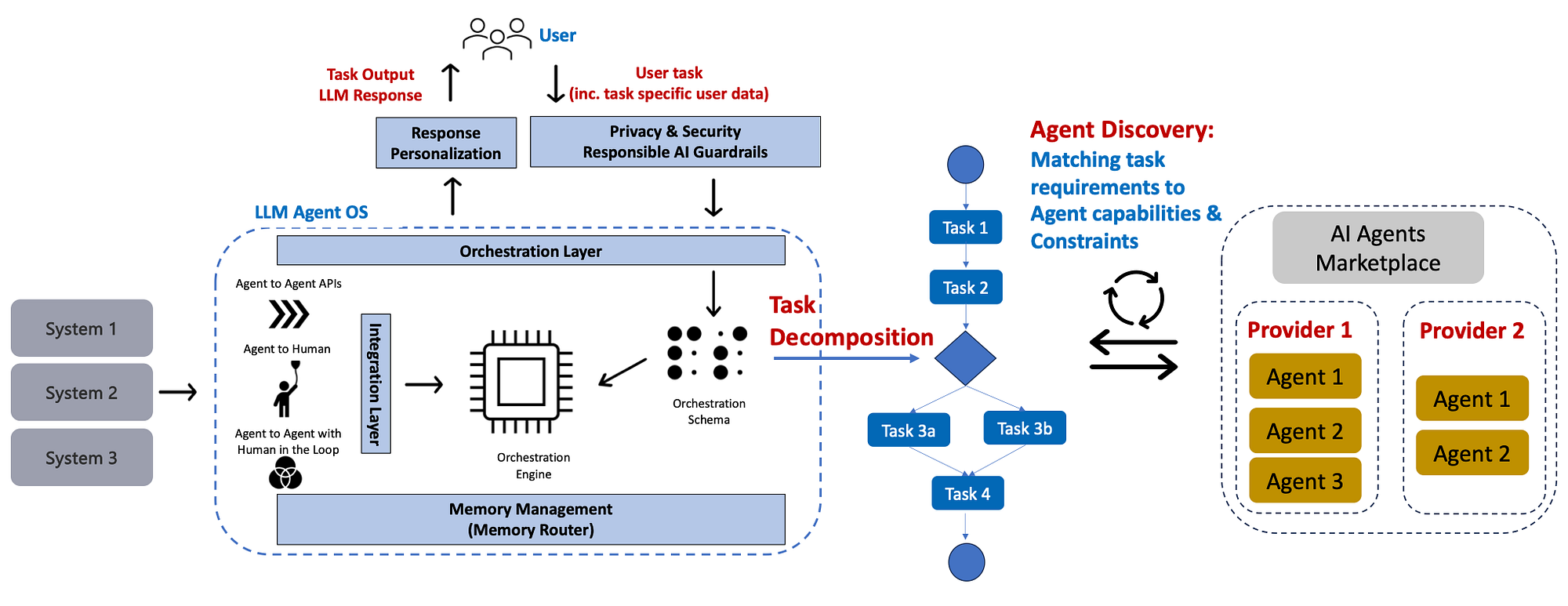

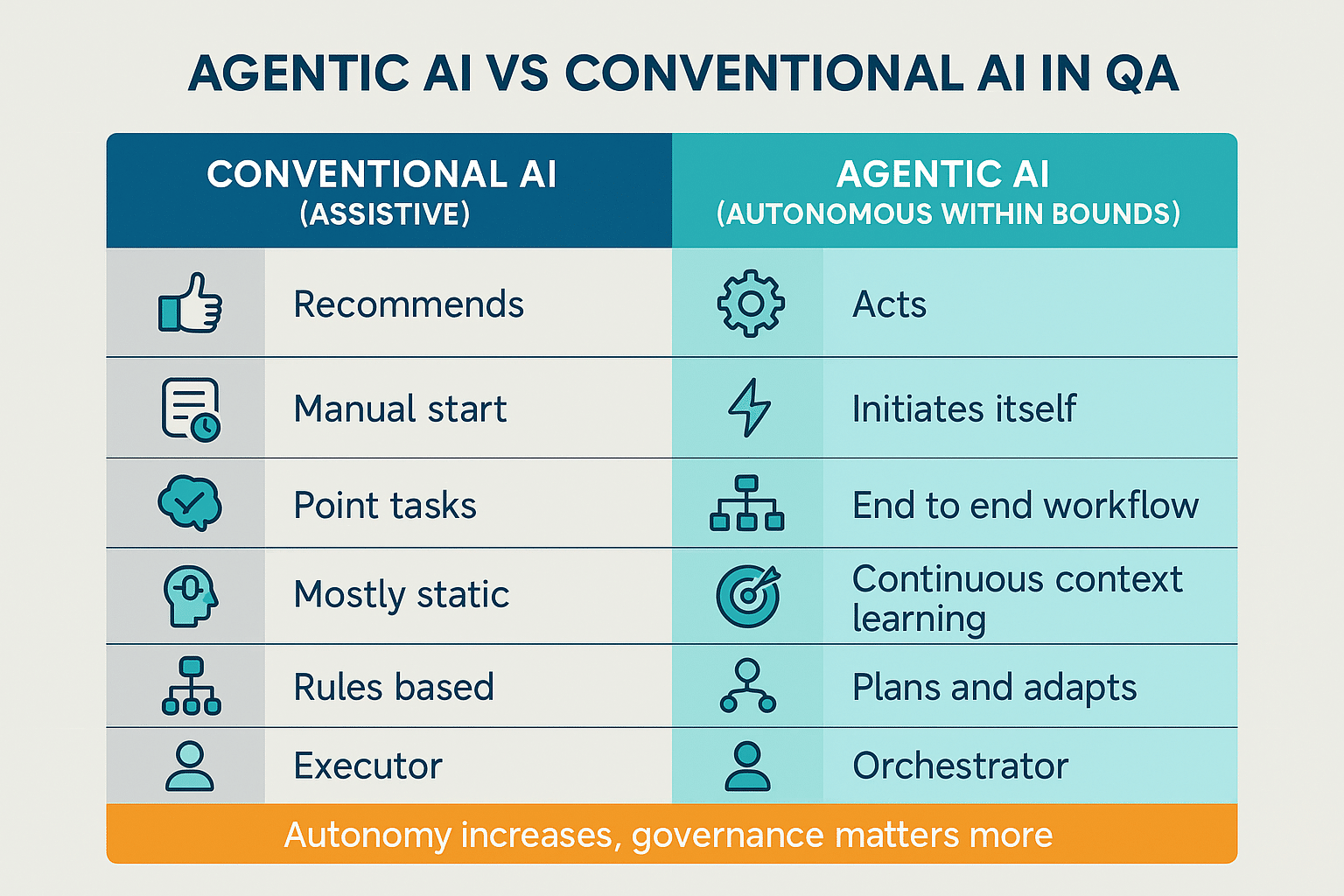

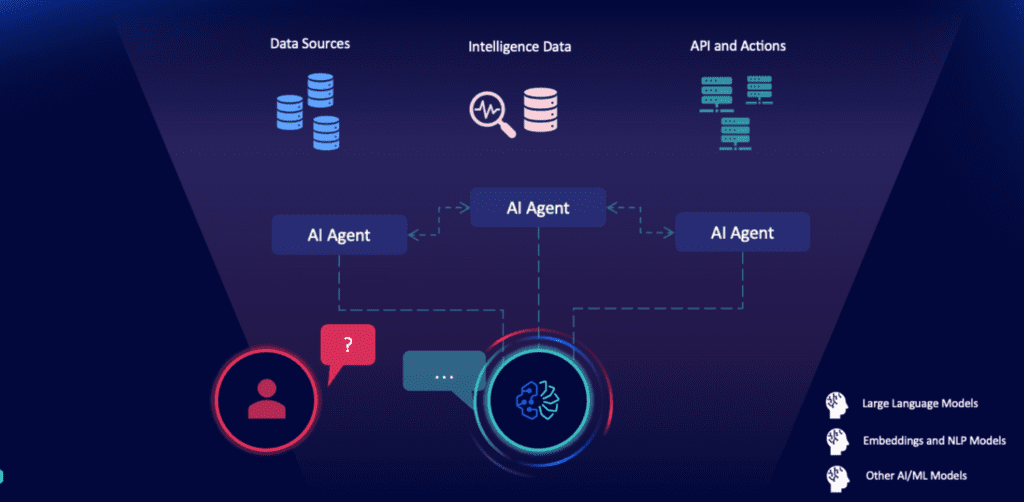

Let's start with the fundamentals. An AI agent is essentially a software process that gathers information about its environment, makes decisions based on that information, and takes actions without human intervention between decisions. That's powerful. But it's also dangerous if you don't know what information it's gathering or what actions it's taking.

Agents rely entirely on APIs to interact with the rest of your infrastructure. Need to pull customer data? There's an API call. Need to execute a workflow? More API calls. Need to write something to a database? You guessed it—API calls. This is where the visibility crisis begins.

In traditional deployments, a developer writes code that connects to specific APIs. The connections are defined, documented (hopefully), and reviewed before they're deployed. Once they're in production, they're relatively static. The same connections run the same workflows, day after day.

Agentic AI flips this model on its head. An agent doesn't just call pre-defined APIs in a fixed order. It discovers available APIs, evaluates which ones might help it accomplish its goals, and dynamically calls them based on real-time reasoning. A single agent might connect to 10 different APIs today and a completely different set tomorrow, depending on the specific task it's been given.

Scale this across your organization. You've got customer service agents handling inquiries. You've got financial agents analyzing transactions and generating reports. You've got IT agents provisioning resources and managing infrastructure. Each one is constantly discovering and connecting to APIs. Each one is making decisions about what data to access. And most organizations have virtually no visibility into what those agents are actually doing.

This is "shadow AI," and it's becoming a mainstream problem. It's not just unauthorized ChatGPT instances on employee laptops. It's agents running inside your infrastructure, accessing your data, making decisions about your workflows, and leaving virtually no audit trail.

The reason this matters so much is that APIs have become a primary entry point for cyberattacks. Rather than trying to break through the firewall, attackers increasingly look for exposed API endpoints, misconfigured permissions, and forgotten credentials. Once they find an entry point, they use it to exfiltrate data at scale. The data then gets traded in underground markets and reused across the cybercriminal ecosystem.

An agentic AI agent that's been compromised or misconfigured doesn't just expose one API endpoint. It potentially exposes hundreds of API calls happening in sequence. An attacker could hijack an agent to systematically extract data, execute fraudulent transactions, modify business records, or create backdoors for future intrusions. And because the agent is making calls that look legitimate on the surface, your security tools might not catch it until massive damage has already occurred.

The Anatomy of a Shadow AI Security Incident

Let's walk through what an actual shadow AI incident looks like. This happens more often than you'd think.

Your company deploys a customer service AI agent to handle support tickets. The agent is supposed to access customer contact information and order history to help resolve issues. Reasonable scope, right?

But here's the problem: nobody properly documented all the APIs available in your infrastructure. And nobody implemented fine-grained access controls. So when the agent starts exploring what it can access, it discovers it can also connect to:

- The HR system (storing salary information and performance reviews)

- The financial systems (containing transaction records and payment information)

- The internal audit logs (which probably contain sensitive compliance data)

- Legacy systems that should have been decommissioned five years ago

The agent itself hasn't been compromised. It's following the instructions it was given—help resolve customer issues. But an attacker has figured out how to inject prompts into the chat interface that manipulate the agent into accessing sensitive information and exposing it in transcript logs.

Now multiply this problem. You've got ten AI agents running in your infrastructure, and nine of them have similarly misconfigured access. That's nine potential entry points. Nine agents that could be used to exfiltrate data, execute unauthorized transactions, or modify critical records.

Here's the part that keeps security teams awake: you might not detect this for weeks or months. The agent is making legitimate API calls. The access is all technically "authorized" because nobody ever implemented proper restrictions. The data is being accessed, but the agent isn't throwing any errors or alerting anyone.

The Zombie API Problem: APIs That Should Be Dead But Aren't

There's another category of risk that agentic AI magnifies: zombie APIs.

A zombie API is a connection that was deployed years ago, should have been decommissioned, but is still available and still responding to requests. It might be poorly maintained. It might have known vulnerabilities that were never patched. It might even be connected to deprecated data systems that still contain sensitive information from old operations.

In traditional environments, zombie APIs are a problem but a relatively slow-moving one. A forgotten endpoint might sit there for years, gradually accumulating security vulnerabilities. But most legitimate application code doesn't try to call endpoints it doesn't know about. So the zombie sits in the darkness, unnoticed.

Agentic AI changes this dynamic completely. An agent that's been tasked with "find all available endpoints and use them efficiently" can discover any zombie API it is able to reach. It will catalog them. It will experiment with them. And it will use them if it thinks they might accomplish its goals.

For an attacker, this is a gift. Here's how it works:

- Attacker identifies that a target organization is using AI agents

- Attacker crafts a malicious prompt that gets fed into the agent interface

- The agent discovers all available APIs, including the forgotten ones

- The attacker instructs the agent to call a specific zombie API with a malicious payload

- The zombie API, unpatched and unmonitored, gets compromised

- The attacker now has access to whatever that legacy system connects to

This happened to a financial services company we're familiar with. They deployed a financial analysis agent. The agent discovered a zombie API connected to their old customer data warehouse—supposed to be decommissioned three years prior, but the IT team never formally shut it down. An attacker injected a prompt, the agent called that zombie API, and the attacker got access to millions of records from the old system. The data was years out of date, but that doesn't matter much. Criminals buy old financial records on the dark web. They use them for identity theft, account takeovers, and fraud.

The company's security team didn't discover the breach for six months. By that time, the data had been sold multiple times.

The Real Cost: Data Exfiltration at Scale

When API security fails, the consequences are immediate and measured in millions of records.

In 2023, a healthcare organization suffered a breach when an AI agent used to automate data processing was manipulated into accessing a backup API that should have been isolated. The agent extracted 5 million patient records containing medical histories, Social Security numbers, and insurance information. The breach cost the organization $42 million in settlements and remediation.

In another case, a retail company deployed a price-optimization agent to manage pricing across their system. The agent connected to their main product database, their supply chain system, and their competitor pricing feeds. An attacker compromised the competitor pricing feed with malicious data, which the agent ingested and used to make pricing decisions. More importantly, the attacker then instructed the agent to copy the entire product database to an external cloud storage account. Nobody noticed for 18 days, by which time 2.3 million product records (including supplier information, cost structures, and sales data) had been stolen.

These aren't hypothetical scenarios anymore. They're happening in real organizations right now.

The thing about data exfiltration through APIs is that it can happen at a scale and speed that humans simply can't match. A human attacker might extract thousands of records. An agentic AI agent, operating at machine speed with access to multiple APIs, can extract millions. And unlike a human attacker, the agent never gets tired, never takes a break, and never has to cover its tracks in the same way. It just keeps making legitimate-looking API calls while systematically copying data to external systems.

Understanding the Attack Vector: How Agents Become Weapons

There are three primary ways that AI agents become security vulnerabilities:

First: Prompt Injection Attacks

An attacker crafts a malicious prompt that gets fed into your agent interface. The prompt looks innocent on the surface but contains hidden instructions. The agent processes the legitimate request plus the hidden instructions, leading it to access APIs it shouldn't access or expose data it shouldn't expose.

The problem is that prompt injection attacks are incredibly difficult to detect. Traditional security controls look for SQL injection, command injection, and other well-known attack patterns. But prompt injection looks like normal user input. The agent has to reason about whether the request is legitimate, and that reasoning process is essentially a black box.

Second: Misconfigured Access Control

This is the most common vulnerability. An agent is deployed with overly broad permissions because:

- Nobody properly defined what APIs the agent actually needs

- The API access control system wasn't set up yet

- Someone took the shortcut of giving the agent "all permissions" to speed up deployment

- The agent was supposed to have restricted access, but the access control system wasn't properly enforced

Result: The agent has access to everything. Any attacker who can interact with the agent (through prompt injection, compromised credentials, or direct API calls) has access to everything the agent can access.

Third: Undetected Lateral Movement

An agent successfully compromises one system or gains access to one dataset. That compromise is immediately leveraged to access connected systems. The agent discovers that it can call the API for System B from within System A. It then uses System A as a stepping stone to compromise System B. From there, it moves to System C.

This lateral movement happens at machine speed. By the time your security team notices something unusual in the logs from System A, the attacker has already compromised B, C, and D.
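You can reason about lateral movement before it happens by modeling "system A can call system B's API" as a directed graph. From there, compute everything reachable from the systems an agent can touch directly. The sketch below runs a breadth-first search over a hypothetical, hand-written call graph. The system names are illustrative and don't come from any real inventory.

```python
from collections import deque

# Hypothetical call graph: each system can call the APIs of the systems listed.
CALL_GRAPH: dict[str, list[str]] = {
    "support_portal": ["crm"],
    "crm": ["orders", "hr"],
    "orders": ["payments"],
    "hr": ["payroll"],
    "payments": [],
    "payroll": [],
    "analytics": ["orders"],
}


def blast_radius(entry_points: list[str], graph: dict[str, list[str]]) -> set[str]:
    """Return every system reachable from the systems an agent can access directly."""
    seen: set[str] = set(entry_points)
    queue = deque(entry_points)
    while queue:
        system = queue.popleft()
        for downstream in graph.get(system, []):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    return seen


reachable = blast_radius(["support_portal"], CALL_GRAPH)
print(sorted(reachable))
# ['crm', 'hr', 'orders', 'payments', 'payroll', 'support_portal']
```

In this toy graph, an agent that only needs the support portal can reach payroll in three hops. That gap between intended scope and reachable scope is what access control has to close.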

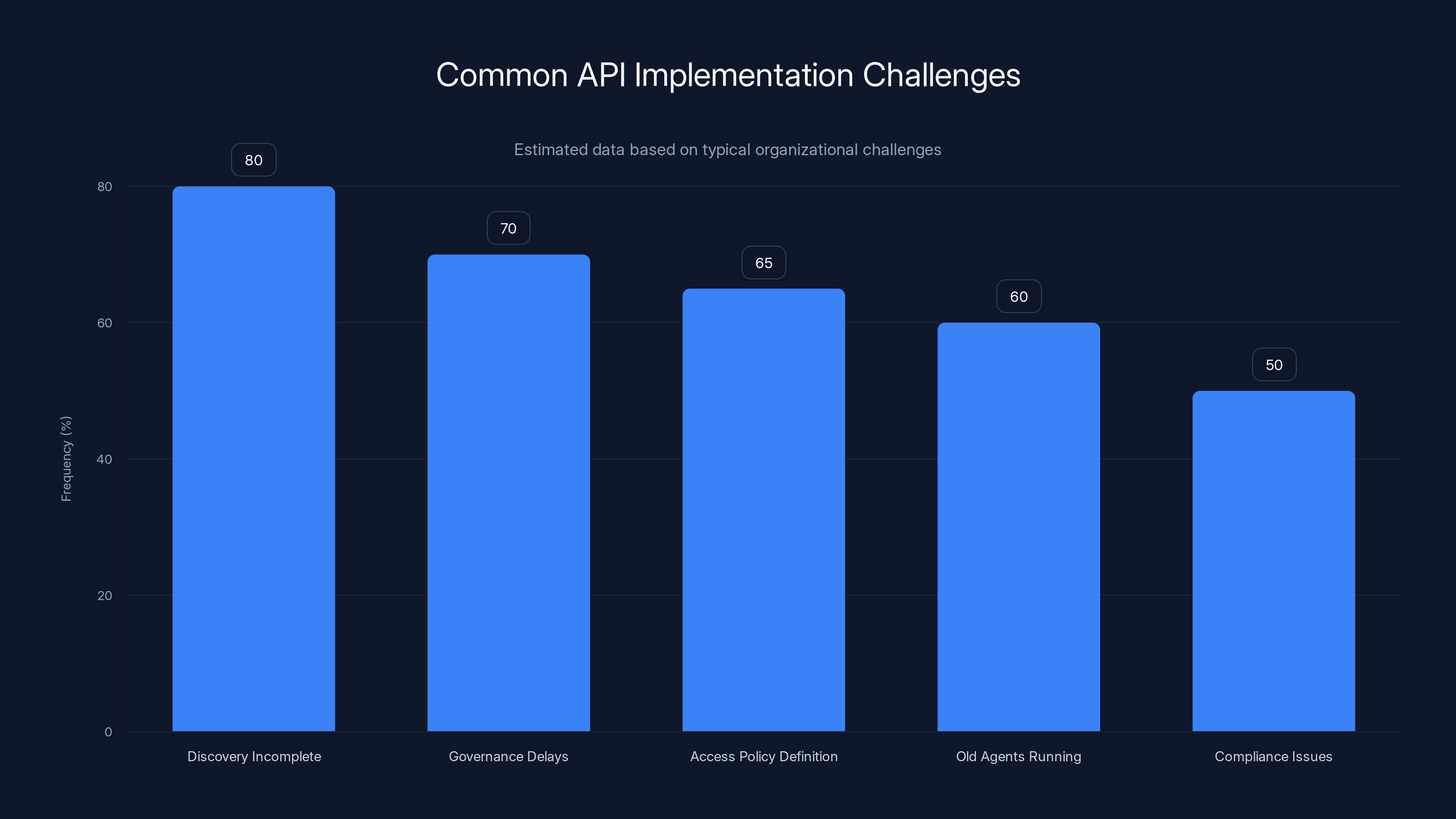

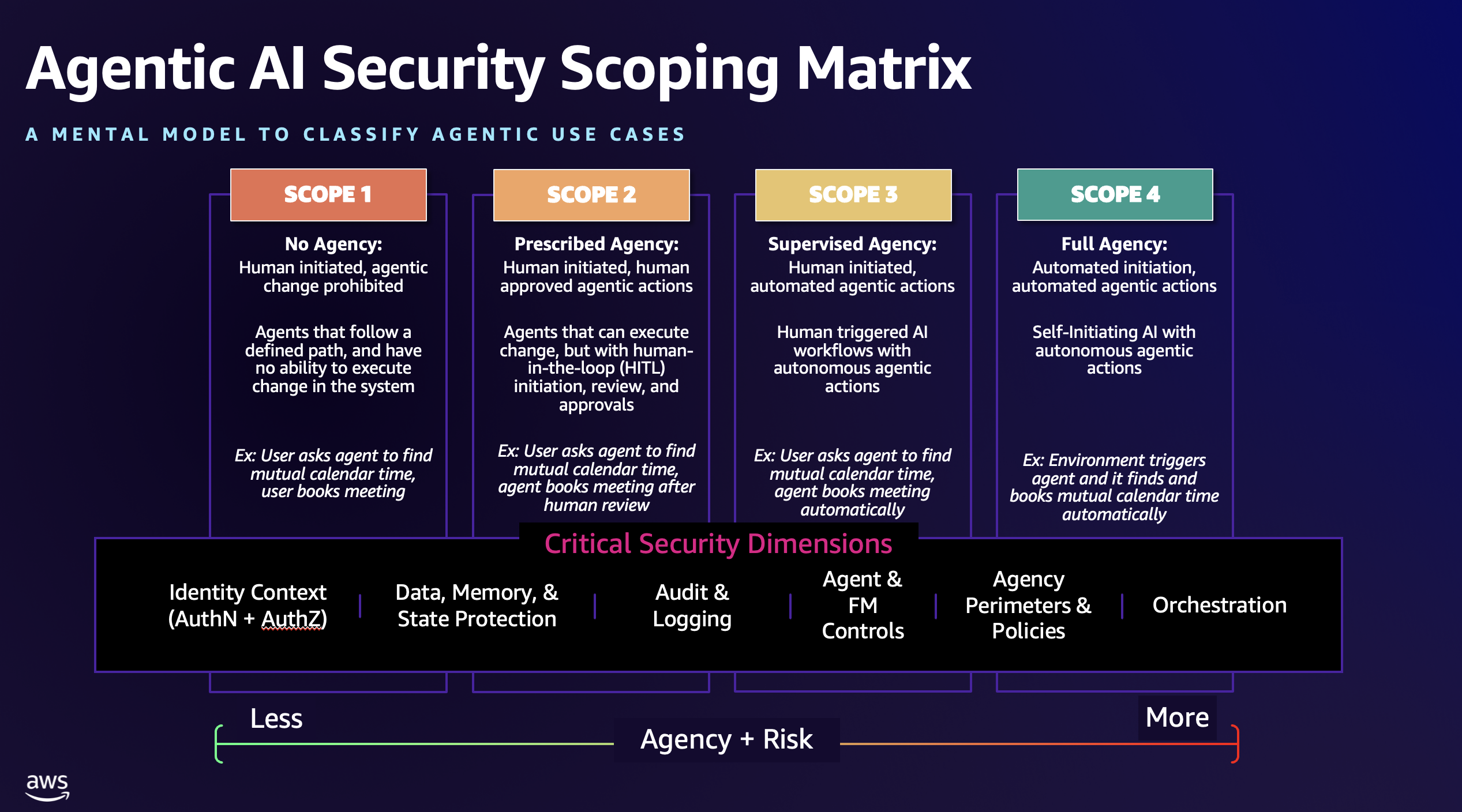

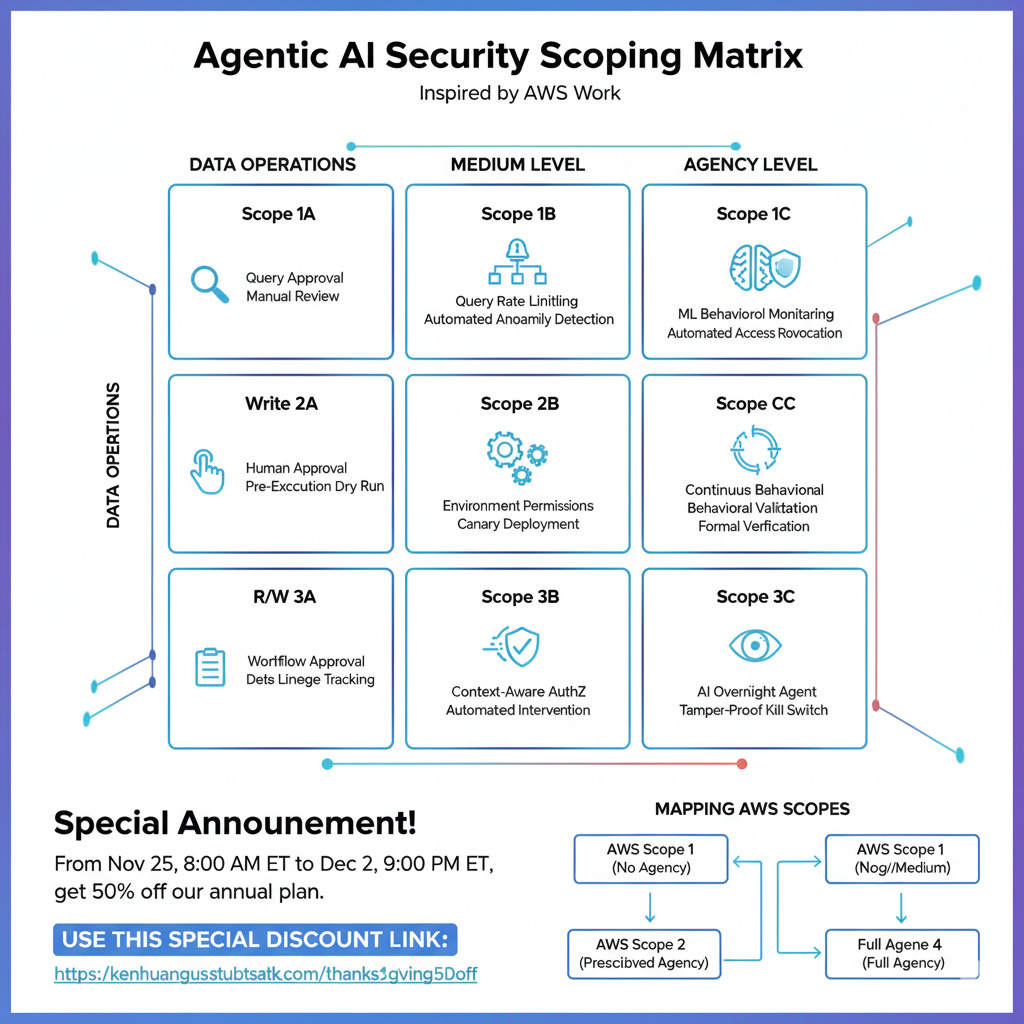

Estimated data shows 'Discovery Incomplete' as the most frequent challenge, affecting 80% of organizations, followed by governance delays and access policy issues.

Why Traditional API Management Fails Against Agentic AI

Most organizations have some form of API management in place. They've got API gateways. They've got rate limiting. They've got API versioning and documentation.

But traditional API management was designed for predictable, human-managed systems. A developer designs an integration. They test it. They deploy it. It runs the same way, day after day, for months or years.

Agentic AI is fundamentally different. It's dynamic. It discovers APIs at runtime. It makes decisions about which APIs to call based on real-time reasoning. It creates new API connections that nobody pre-planned or approved.

Traditional API gateways can enforce rate limits and authentication. But they can't answer the fundamental question that agentic AI systems require: "Should this agent be allowed to call this API right now?"

Answering that question requires understanding:

- What role is the agent supposed to fulfill?

- What specific APIs does it need to accomplish that role?

- Has anyone actually reviewed and approved those specific API connections?

- Is this agent currently behaving according to its design, or has it been compromised or manipulated?

Most organizations can't answer these questions. They have no centralized view of all the agents running in their infrastructure. They have no centralized view of all the APIs those agents are connecting to. They have no framework for defining and enforcing appropriate access control.

That's the gap. And agentic AI is exploiting it at scale.

Building the Visibility Layer: API Inventory and Discovery

The first step toward closing the blind spot is visibility. And that means starting with a complete, accurate inventory of every API your organization has.

This sounds straightforward, but it's actually quite difficult in most organizations. APIs get created all the time. Some are documented. Many aren't. Some were created years ago and people forgot they exist. Some are internal APIs that live in specific microservices. Some are third-party APIs that your applications integrate with.

Getting a complete picture requires:

API Discovery and Cataloging

Start by crawling your infrastructure to automatically discover all APIs. This includes:

- REST APIs in your applications and microservices

- GraphQL endpoints

- gRPC services

- Webhook endpoints

- Third-party API integrations

- Legacy SOAP and web service endpoints

Automated discovery tools can scan your infrastructure and generate an initial catalog. But automated tools aren't perfect. You'll need to supplement them with manual review and cross-referencing with your development teams.
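Cross-referencing discovery output with what teams have documented is essentially a set comparison. Here is a minimal sketch. It assumes you already have two lists of `(method, path)` pairs: one from a discovery tool (for example, parsed from gateway or traffic logs) and one from your documented catalog. Both sample inputs are made up.

```python
def normalize(method: str, path: str) -> tuple[str, str]:
    """Upper-case the method and drop a trailing slash so trivial differences don't hide a match."""
    trimmed = path.rstrip("/") or "/"
    return method.upper(), trimmed


def reconcile(discovered, documented):
    """Split endpoints into undocumented, never-observed, and matched groups."""
    seen = {normalize(m, p) for m, p in discovered}
    known = {normalize(m, p) for m, p in documented}
    return {
        "undocumented": sorted(seen - known),  # live but not cataloged: shadow APIs
        "unseen": sorted(known - seen),        # cataloged but not observed: zombie review candidates
        "matched": sorted(seen & known),
    }


discovered = [("GET", "/orders/"), ("POST", "/orders"), ("GET", "/legacy/export")]
documented = [("get", "/orders"), ("POST", "/orders"), ("GET", "/customers")]
report = reconcile(discovered, documented)
print(report["undocumented"])  # [('GET', '/legacy/export')]
print(report["unseen"])        # [('GET', '/customers')]
```

"Unseen" only means the endpoint wasn't observed during the discovery window. It's a prompt for manual review with the owning team, not proof the API is dead.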

Metadata Collection

Once you've identified all APIs, you need to understand them. This means collecting and organizing metadata for each API:

- What does this API do?

- What data does it access or modify?

- Who owns it?

- What's its current status (active, deprecated, pending decommissioning)?

- What authentication methods does it support?

- What rate limits or quota restrictions does it have?

- When was it last updated?

- What known vulnerabilities does it have?

This metadata becomes your source of truth for API governance.

Classification and Sensitivity Assessment

Not all APIs are equal. Some provide access to public information. Others expose financial data, health information, or personally identifiable information. Some are critical to operations. Others are nice-to-have.

Classify each API by:

- Sensitivity: What's the impact if this API is compromised? Does it expose regulated data (healthcare, financial, personal information)?

- Criticality: What happens if this API becomes unavailable? Are operations disrupted?

- Usage: Is this API actively used? Is it sitting idle?

This classification drives your governance decisions. High-sensitivity, high-criticality APIs get stricter controls than low-risk APIs.
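To make the classification actually drive governance, it helps to map it mechanically onto a small set of control tiers. The three-level scale, the tier names, and the rule that the worse dimension wins are all assumptions for illustration. Your own risk framework should define the real ones.

```python
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def control_tier(sensitivity: Level, criticality: Level, actively_used: bool) -> str:
    """Map an API's classification to a hypothetical control tier."""
    if not actively_used:
        # Idle APIs are zombie candidates regardless of how sensitive they are.
        return "review-for-decommission"
    risk = max(sensitivity, criticality)  # the worse dimension dominates
    if risk == Level.HIGH:
        return "strict"    # e.g. per-endpoint allowlists, human approval, full logging
    if risk == Level.MEDIUM:
        return "standard"  # e.g. role-based access, rate limits, logging
    return "basic"         # e.g. authentication and rate limits
```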

Deprecated API Identification and Remediation

As you're cataloging APIs, you'll discover zombie APIs. Make a list. These are high-priority for remediation. You need to:

- Understand what systems still depend on this API

- Plan a migration for those systems to the current API

- Set a decommissioning date

- Remove the API from production on that date

Until the zombie API is actually removed, you need to treat it as a security risk. Monitor it heavily. Restrict access to it. Plan for replacement urgently.
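With the metadata collected earlier, flagging zombie candidates can be a simple query over the inventory. This sketch assumes each record carries a status, an owner, and a timestamp of the last observed traffic. That last field isn't in the metadata list above, so treat it as an assumption. The 90-day idle threshold is arbitrary.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ApiRecord:
    name: str
    owner: str | None
    status: str                    # "active", "deprecated", or "pending-decommission"
    last_traffic: datetime | None  # assumed field: last time any caller hit this API


def zombie_candidates(inventory, now, idle_after=timedelta(days=90)):
    """Return {api_name: [reasons]} for APIs that look like zombies."""
    flagged = {}
    for api in inventory:
        reasons = []
        if api.status != "active":
            reasons.append(f"status={api.status}")
        if api.owner is None:
            reasons.append("no owner")
        if api.last_traffic is None or now - api.last_traffic > idle_after:
            reasons.append("idle")
        if reasons:
            flagged[api.name] = reasons
    return flagged


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
inventory = [
    ApiRecord("orders-v2", "commerce-team", "active", now - timedelta(hours=1)),
    ApiRecord("warehouse-export-v1", None, "deprecated", now - timedelta(days=400)),
    ApiRecord("reports", "finance-team", "active", None),
]
print(zombie_candidates(inventory, now))
```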

Implementing Role-Based Access Control for AI Agents

Once you have visibility into your APIs, the next step is enforcing appropriate access control.

Here's the principle: every AI agent should operate with the minimum permissions necessary to accomplish its role. This is called the "principle of least privilege," and it's been a security best practice for decades. But implementing it for AI agents requires different thinking than implementing it for human users.

Define Agent Personas and Roles

Start by defining what different types of agents you'll be running:

- Customer service agent: Handles customer support inquiries

- Financial analysis agent: Analyzes transaction data and generates reports

- HR agent: Processes recruitment and onboarding workflows

- IT operations agent: Manages infrastructure and resource provisioning

For each agent type, document:

- What business processes does it handle?

- What APIs does it legitimately need to access?

- What data does it need to read, and what data might it need to modify?

- What's the impact if this agent is compromised?

Create Fine-Grained Access Policies

Based on the agent roles you've defined, create specific API access policies. Instead of giving an agent access to "all financial APIs," give it access to specific endpoints:

- Can call `/transactions/list` with parameters `{account_id, date_range}`

- Can read from the transaction API

- Cannot modify transaction records

- Can call `/reports/generate` with specific report types

- Cannot access salary or payroll information

These policies should be machine-readable and enforceable by your API management platform.
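As a rough illustration, the example policy above can be expressed as plain data plus a default-deny check. The endpoint paths and parameter names come from the example list. The dictionary layout, HTTP methods, report type names, and `is_allowed` helper are hypothetical, not the schema of any particular API management product.

```python
# Hypothetical policy for a financial analysis agent. Anything not listed is denied.
FINANCIAL_AGENT_POLICY = {
    ("GET", "/transactions/list"): {"allowed_params": {"account_id", "date_range"}},
    ("POST", "/reports/generate"): {
        "allowed_params": {"report_type"},
        "allowed_values": {"report_type": {"monthly_summary", "variance"}},
    },
}


def is_allowed(policy, method, path, params):
    """Default-deny: the endpoint must be listed and every parameter permitted."""
    rule = policy.get((method.upper(), path))
    if rule is None:
        return False  # unlisted: covers payroll APIs and any write to transactions
    if not set(params) <= rule["allowed_params"]:
        return False  # unexpected parameter
    for name, allowed in rule.get("allowed_values", {}).items():
        if name in params and params[name] not in allowed:
            return False  # e.g. an unapproved report type
    return True
```

The exact format matters less than the default-deny stance: an agent can only reach what someone explicitly wrote down.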

Implement Real-Time Permission Evaluation

When an agent attempts to call an API, the API gateway should evaluate:

- Is this agent authenticated?

- Does this agent have a role?

- Does this agent's role include permission to call this specific API with these specific parameters?

- Are there any rate limits or quota restrictions that apply?

- Are there any anomaly detection signals that suggest this agent might be compromised?

Only if all these checks pass should the API call be allowed through.
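The checks above can be chained so that the first failure short-circuits and returns a reason you can log. This is a minimal in-process sketch. The role table, agent registry, sliding-window rate limit, and `anomaly_flagged` set are hypothetical stand-ins for whatever your gateway and monitoring stack provide. For brevity it checks method and endpoint only; a parameter check would slot in right after the role check.

```python
import time
from collections import defaultdict, deque

ROLE_PERMISSIONS = {"customer_service": {("GET", "/customers"), ("GET", "/orders")}}  # hypothetical
AGENT_ROLES = {"cs-agent-01": "customer_service"}  # hypothetical agent registry
RATE_LIMIT = 5          # max allowed calls per window, per agent (illustrative)
WINDOW_SECONDS = 60.0

_recent_calls = defaultdict(deque)  # agent_id -> timestamps of allowed calls
anomaly_flagged = set()             # agents currently flagged by monitoring


def evaluate(agent_id, authenticated, method, endpoint, now=None):
    """Return (allowed, reason), running checks in the order listed in the text."""
    now = time.monotonic() if now is None else now
    if not authenticated:
        return False, "unauthenticated"
    role = AGENT_ROLES.get(agent_id)
    if role is None:
        return False, "no role assigned"
    if (method, endpoint) not in ROLE_PERMISSIONS.get(role, set()):
        return False, f"role '{role}' lacks {method} {endpoint}"
    window = _recent_calls[agent_id]
    while window and now - window[0] >= WINDOW_SECONDS:
        window.popleft()  # drop calls that fell out of the sliding window
    if len(window) >= RATE_LIMIT:
        return False, "rate limit exceeded"
    if agent_id in anomaly_flagged:
        return False, "agent flagged by anomaly detection"
    window.append(now)
    return True, "ok"
```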

Monitor and Alert on Permission Violations

When an agent attempts to call an API it doesn't have permission for, that's suspicious. Log it. Alert on it. Don't just silently deny the request—create visibility so your security team knows that something tried to happen.

Better yet, implement behavioral anomaly detection. If an agent that normally calls 10 different APIs suddenly tries to call 100 different APIs in a short time window, that's a sign of potential compromise. Alert immediately.
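The "10 APIs normally, 100 suddenly" signal can be approximated by comparing the distinct endpoints an agent calls in the current window against its historical average. The 3x multiplier and the input shapes below are assumptions chosen for illustration.

```python
def endpoint_spike(history_counts, recent_calls, multiplier=3.0, min_baseline=1):
    """Flag an agent whose distinct-endpoint count jumps well above its norm.

    history_counts: distinct endpoints called in each past window, e.g. [9, 10, 11]
    recent_calls: endpoints called in the current window (duplicates allowed)
    Returns (flagged, distinct_now, baseline) so the numbers can go into the alert.
    """
    if history_counts:
        baseline = max(min_baseline, sum(history_counts) / len(history_counts))
    else:
        baseline = float(min_baseline)  # no history yet: any burst is notable
    distinct_now = len(set(recent_calls))
    return distinct_now > multiplier * baseline, distinct_now, baseline
```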

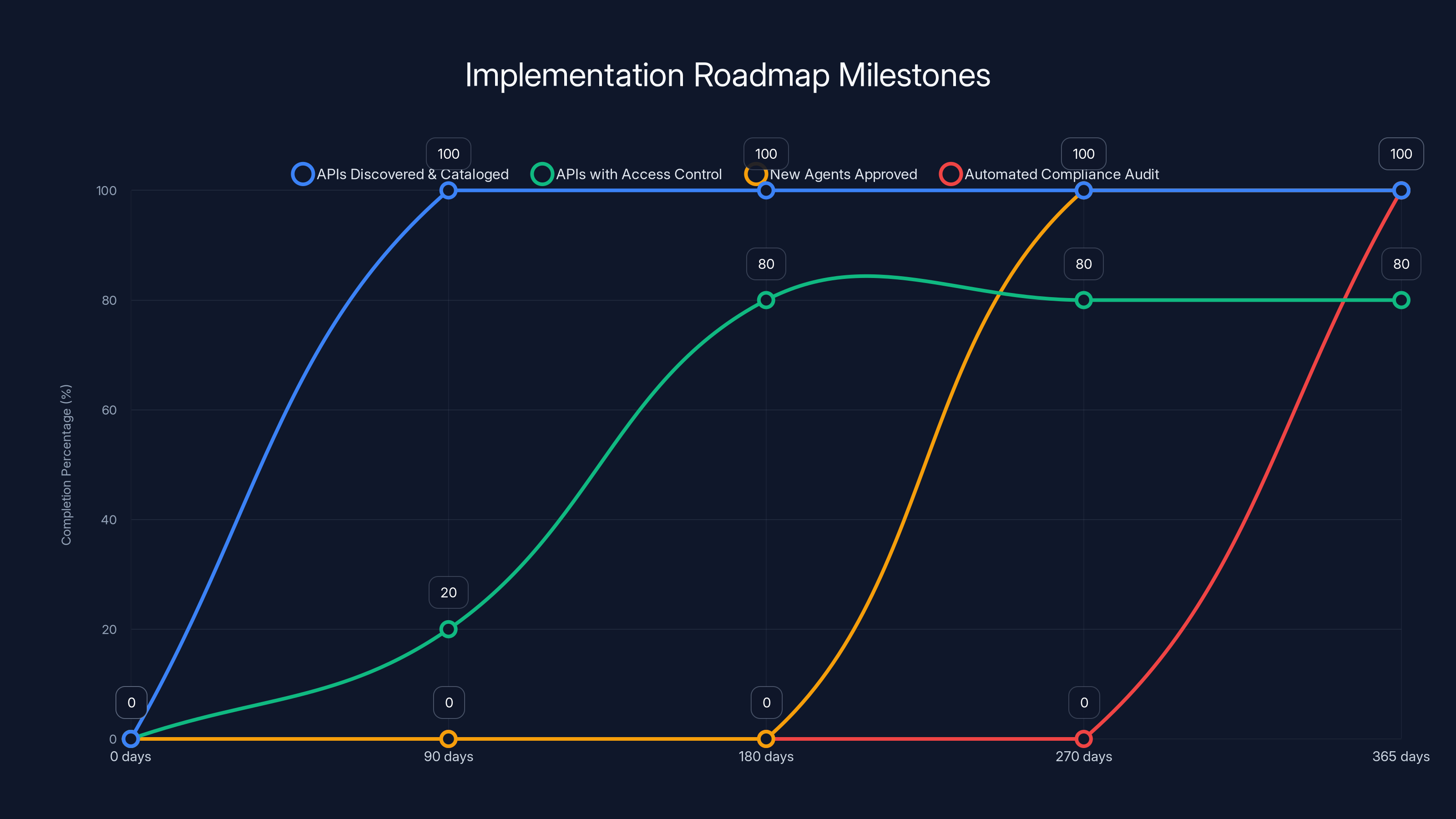

The roadmap projects full API discovery by 90 days, significant access control implementation by 180 days, and full compliance automation by 365 days. Estimated data based on typical organizational goals.

Building the Governance Layer: Agent Lifecycle Management

Visibility and access control handle the technical side. But you also need governance processes to manage the full lifecycle of AI agents.

Think of this like the difference between securing a building and managing the people in the building. Access control is like locks and cameras. Governance is like the HR and security processes that ensure every person in the building is supposed to be there and is following the rules.

Agent Deployment Approval Process

Before an agent can be deployed to production, it needs to go through an approval process:

- Specification: What will this agent do? What APIs will it need? Create a clear document.

- Security Review: A security professional reviews the specification and confirms that the required API access is minimal and appropriate.

- Business Approval: A business owner confirms that this agent is necessary and solves a real problem.

- Acceptance Testing: The agent is tested in a staging environment to confirm it behaves as expected.

- Approval: Only then can the agent be promoted to production.

Does this slow things down? Yes, a bit. But it prevents deploying agents that are:

- Unnecessarily broad in scope

- Requesting access to sensitive data they don't need

- Unreviewed for security vulnerabilities

- Solving problems that don't actually exist
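One way to make the approval sequence enforceable rather than advisory is to model it as an ordered pipeline. An agent advances one stage at a time, and each step records who signed off. The stage names mirror the approval list above. The `AgentRequest` class and `can_deploy_to_production` check are hypothetical.

```python
from dataclasses import dataclass, field

STAGES = ["specification", "security_review", "business_approval", "acceptance_testing", "approved"]


@dataclass
class AgentRequest:
    agent_name: str
    stage: str = "specification"
    sign_offs: list = field(default_factory=list)  # (stage, approver) pairs, kept for audit

    def advance(self, approver: str) -> str:
        """Move to the next stage, recording who signed off on the current one."""
        index = STAGES.index(self.stage)
        if index == len(STAGES) - 1:
            raise ValueError(f"{self.agent_name} is already approved")
        self.sign_offs.append((self.stage, approver))
        self.stage = STAGES[index + 1]
        return self.stage


def can_deploy_to_production(request: AgentRequest) -> bool:
    """Deployment tooling would call this and refuse anything not fully approved."""
    return request.stage == "approved"
```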

Continuous Monitoring and Audit

Once an agent is running in production, you need continuous monitoring:

- What APIs is it calling?

- How frequently is it calling them?

- What data is it accessing?

- How much data is it reading or writing?

- Is it behaving within expected parameters?

This monitoring should feed into dashboards and alerting systems. Your security team should have visibility into what every agent is doing at any given time.

Regular Agent Reviews

Just like you review employee access quarterly or annually, you should review AI agent access and behavior. Questions to ask:

- Is this agent still needed?

- Is it still being used for its original purpose?

- Does it still need access to all the APIs we approved?

- Has its behavior changed in concerning ways?

- Are there any security incidents involving this agent?

Based on these reviews, you might revoke API access, decommission the agent entirely, or approve expanded access if the agent has proven its value and has a documented need for more.
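A useful input for the question "Does it still need access to all the APIs we approved?" is the difference between what an agent was granted and what it actually called during the review period. This sketch assumes you can export both as sets of endpoint identifiers from your gateway logs. The identifiers are made up.

```python
def access_review(granted: set[str], used: set[str]) -> dict[str, set[str]]:
    """Compare approved access with observed usage over one review period."""
    return {
        "unused_grants": granted - used,    # candidates for revocation
        "ungranted_calls": used - granted,  # attempted outside the grant: investigate
        "in_use": granted & used,
    }
```

`ungranted_calls` only shows up if your logs record denied attempts as well as successful calls. A grant that goes unused for a full review cycle is a reasonable default candidate for revocation.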

Decommissioning Procedures

When an agent is no longer needed, you need to:

- Remove its credentials

- Revoke all API access

- Stop any active processes

- Archive logs and audit trail

- Update your agent inventory to mark it as decommissioned

Don't just let old agents sit idle in production. They become security liabilities.

Creating the Centralized Data Hub: Approved Data Access

Here's a principle that might seem counterintuitive, but it works: the best way to prevent agents from accessing sensitive data is to stop them from finding and reaching it directly. Agents should only see the data you have explicitly chosen to publish to them.

Instead of giving agents direct access to every API in your infrastructure, create a centralized data hub. This hub serves as the single point of access for approved data.

Here's how it works:

Curate and Publish Approved Data Assets

Instead of agents reaching out across your infrastructure to find data, you explicitly define which data assets they're allowed to access and publish those through the central hub.

For example:

- Customer Profiles API: Accessed by customer service agents, contains address, contact information, and order history

- Product Catalog API: Accessed by recommendation and sales agents, contains product descriptions and pricing

- Inventory Data API: Accessed by logistics agents, contains current stock levels

Each data asset is documented, versioned, and explicitly approved for specific agent types.

Implement Access Mediation

Agents don't directly access backend systems. They access data through the central hub, which mediates the request.

When an agent requests data, the hub:

- Validates that the agent has permission to access this data

- Validates that the request parameters are within acceptable ranges

- Applies any necessary filtering or masking (e.g., for PII)

- Routes the request to the appropriate backend system

- Logs the access

This gives you complete visibility and control over data access.
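The five hub steps above map naturally onto a single request handler. Below is a hypothetical in-memory hub. The asset names echo the examples earlier in this section. The stand-in backends, the 500-record cap, the email-masking rule, and the log format are all assumptions.

```python
from datetime import datetime, timezone

ASSET_GRANTS = {"customer_profiles": {"customer_service"}, "inventory": {"logistics"}}  # hypothetical
MAX_LIMIT = 500
ACCESS_LOG = []

# Stand-in backends; real ones would query the systems of record.
BACKENDS = {
    "customer_profiles": lambda limit: [{"id": "c1", "email": "ana@example.com", "city": "Lisbon"}][:limit],
    "inventory": lambda limit: [{"sku": "A-100", "on_hand": 42}][:limit],
}


def mask_email(record):
    """Return a copy with the email's local part replaced by asterisks (a simple PII mask)."""
    masked = dict(record)
    if "email" in masked:
        local, _, domain = masked["email"].partition("@")
        masked["email"] = "*" * len(local) + "@" + domain
    return masked


def hub_request(agent_type, asset, limit):
    if agent_type not in ASSET_GRANTS.get(asset, set()):  # 1. permission
        outcome, rows = "denied: no grant", None
    elif not 1 <= limit <= MAX_LIMIT:                     # 2. parameter range
        outcome, rows = "denied: limit out of range", None
    else:                                                 # 3-4. route, then mask the results
        rows = [mask_email(r) for r in BACKENDS[asset](limit)]
        outcome = "allowed"
    ACCESS_LOG.append({                                   # 5. log every request, allowed or not
        "at": datetime.now(timezone.utc).isoformat(),
        "agent_type": agent_type, "asset": asset, "outcome": outcome,
    })
    return outcome, rows
```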

Implement Data Governance Policies

For sensitive data, you might implement policies like:

- PII (personally identifiable information) can only be accessed by customer service agents, and only for the specific customer the agent is working with

- Financial transaction data can only be accessed for reporting purposes, not for training AI models

- Health information can only be accessed by agents that have passed a specific regulatory compliance review

- Data can only be accessed by agents running in specific geographic regions (for data residency compliance)

These policies are enforced at the point of access. If an agent violates the policy, access is denied and the violation is logged.
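Policies like these are attribute checks evaluated at request time: who is asking, about which customer, for what purpose, and from where. The sketch below encodes three of the four example rules. The request fields, purpose labels, and region codes are hypothetical. The residency rule is simplified to "agent region must equal data region."

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class DataRequest:
    agent_type: str
    data_class: str                         # "pii", "financial", ...
    purpose: str                            # e.g. "support", "reporting", "model_training"
    agent_region: str                       # where the agent runs
    data_region: str                        # where the data must stay
    subject_id: str | None = None           # customer the requested data belongs to
    active_case_subject: str | None = None  # customer the agent is currently helping


def check_policy(req: DataRequest) -> tuple[bool, str]:
    """Return (allowed, reason); the first violated rule wins."""
    if req.agent_region != req.data_region:
        return False, "data residency: agent runs outside the data's region"
    if req.data_class == "pii":
        if req.agent_type != "customer_service":
            return False, "pii: only customer service agents"
        if req.subject_id is None or req.subject_id != req.active_case_subject:
            return False, "pii: only the customer in the active case"
    if req.data_class == "financial" and req.purpose != "reporting":
        return False, "financial: reporting purposes only"
    return True, "ok"
```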

Provide Helpful Error Messages and Alternatives

When an agent can't access data because of policy restrictions, provide helpful error messages:

"You don't have access to salary information, but you do have access to department budgets and headcount data, which might help you accomplish your goal."

This actually makes agents more effective, because they learn what data is available and adapt their behavior accordingly.
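A structured denial makes the "here is what you can use instead" idea machine-readable, so the agent can act on it instead of parsing prose. The response shape and the alternatives table below are assumptions for illustration.

```python
import json

# Hypothetical mapping from a restricted asset to approved assets that often serve the same goal.
ALTERNATIVES = {"salary_records": ["department_budgets", "headcount"]}


def denial_response(asset: str, granted_assets: set[str]) -> str:
    """Build a JSON denial that only suggests alternatives the agent is actually granted."""
    suggestions = [a for a in ALTERNATIVES.get(asset, []) if a in granted_assets]
    return json.dumps({
        "status": "denied",
        "asset": asset,
        "reason": "policy: asset not granted to this agent",
        "alternatives": suggestions,
    })
```

Filtering suggestions against the agent's own grants avoids the opposite problem: a denial message that advertises data the agent still can't reach.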

Implementing Automated Compliance and Governance

Manual governance processes don't scale. You need automated systems that enforce policies and generate compliance evidence.

Automated Policy Enforcement

Instead of relying on people to remember and follow governance processes, build the policies into your systems:

- New agents can't be deployed until they've passed security checks

- API calls that violate access policies are automatically blocked

- Agent credentials automatically expire and require renewal

- Compliance audits run automatically and generate reports

Policies should be defined in code or configuration, not documented in spreadsheets.
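As one example of a policy living in code rather than a spreadsheet, here is the "agent credentials automatically expire" rule as a short-lived token check. The token format, the one-hour TTL, and the in-memory store are hypothetical. In production you would more likely rely on your identity provider's own token lifetimes.

```python
import secrets
from datetime import datetime, timedelta, timezone

TOKEN_TTL = timedelta(hours=1)
_issued = {}  # token -> (agent_id, expires_at)


def issue_token(agent_id: str, now: datetime) -> str:
    """Issue a random token that is valid for TOKEN_TTL from `now`."""
    token = secrets.token_urlsafe(32)
    _issued[token] = (agent_id, now + TOKEN_TTL)
    return token


def validate_token(token: str, now: datetime):
    """Return the agent_id for a live token, or None if unknown or expired."""
    entry = _issued.get(token)
    if entry is None:
        return None
    agent_id, expires_at = entry
    if now >= expires_at:
        del _issued[token]  # purge expired tokens so renewal means a fresh issue
        return None
    return agent_id
```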

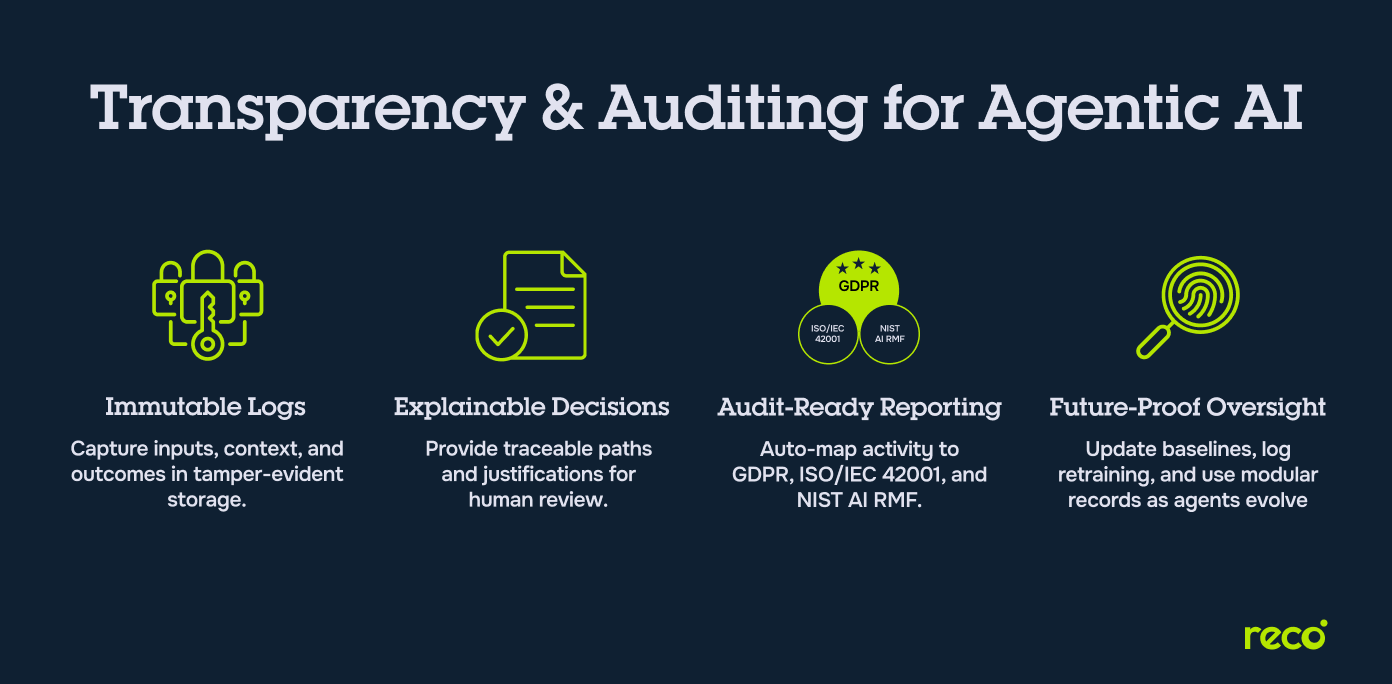

Automated Compliance Reporting

Regulators and security auditors want to see evidence that:

- Every agent in production was properly approved

- Every agent has appropriate access controls

- Every agent's activities are being monitored

- Every data access is logged and auditable

Your systems should generate these reports automatically. When an auditor asks "Who accessed customer data on March 15th?", you should be able to answer in seconds with a detailed audit trail.
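Answering "Who accessed customer data on March 15th?" in seconds mostly comes down to logging structured records and filtering them. This sketch assumes each access is logged as a dict with `timestamp`, `agent_id`, and `asset` fields. Those field names, the sample entries, and the year are hypothetical.

```python
from datetime import date, datetime

LOG = [
    {"timestamp": "2025-03-15T09:12:00+00:00", "agent_id": "cs-agent-01", "asset": "customer_profiles"},
    {"timestamp": "2025-03-15T09:13:30+00:00", "agent_id": "cs-agent-01", "asset": "customer_profiles"},
    {"timestamp": "2025-03-15T22:40:00+00:00", "agent_id": "fin-agent-02", "asset": "customer_profiles"},
    {"timestamp": "2025-03-16T08:00:00+00:00", "agent_id": "cs-agent-03", "asset": "customer_profiles"},
    {"timestamp": "2025-03-15T10:00:00+00:00", "agent_id": "ops-agent-04", "asset": "inventory"},
]


def who_accessed(log, asset: str, day: date):
    """Per-agent access counts for one asset on one day (in the timestamps' own timezone)."""
    counts = {}
    for entry in log:
        ts = datetime.fromisoformat(entry["timestamp"])
        if entry["asset"] == asset and ts.date() == day:
            counts[entry["agent_id"]] = counts.get(entry["agent_id"], 0) + 1
    return dict(sorted(counts.items()))


print(who_accessed(LOG, "customer_profiles", date(2025, 3, 15)))
# {'cs-agent-01': 2, 'fin-agent-02': 1}
```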

Behavioral Anomaly Detection

Use machine learning to detect when agents are behaving abnormally:

- Unusual API call patterns (calling endpoints the agent never called before)

- Unusual data volumes (reading way more data than normal)

- Unusual access times (calling APIs at 3 AM when the agent normally runs at 9 AM)

- Unusual geographic locations (API calls originating from unexpected regions)

When anomalies are detected, automatically alert your security team. Some systems can automatically pause the agent until a human reviews what's happening.
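The section calls for machine learning. This sketch swaps in a simpler statistical baseline, which is a common starting point before training a model. It uses a z-score on daily data volume plus an hour-of-day check. The three-standard-deviation threshold and the input shapes are assumptions. Geographic anomalies are not covered here.

```python
from statistics import mean, stdev


def volume_anomaly(history_mb, today_mb, z_threshold=3.0):
    """Z-score test on daily data volume; needs at least two historical points."""
    if len(history_mb) < 2:
        return False  # not enough history to estimate spread
    mu, sigma = mean(history_mb), stdev(history_mb)
    if sigma == 0:
        return today_mb != mu  # perfectly flat history: any change is unusual
    return abs(today_mb - mu) / sigma > z_threshold


def off_hours(usual_hours, call_hour):
    """True if the call happened in an hour of day the agent has never been active."""
    return call_hour not in usual_hours
```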

Integration with Incident Response

When a security incident is detected, your compliance system should automatically:

- Revoke the agent's API credentials

- Block all API calls from that agent

- Preserve all logs related to that agent

- Generate a timeline of the agent's recent activities

- Notify relevant stakeholders

This isn't just about security response. It's about evidence preservation. If you detect a compromise, you need to preserve the evidence so you can understand what happened and prevent similar incidents in the future.
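The five automatic steps can be expressed as one containment routine. This sketch uses plain in-memory structures for credentials, the blocklist, logs, and notifications. In practice each step would call your identity provider, gateway, log store, and paging tool. None of the names here correspond to a real product API.

```python
from datetime import datetime


def contain_agent(agent_id, credentials, blocklist, log_store, notify):
    """Run the containment steps in order and return the agent's activity timeline."""
    credentials.pop(agent_id, None)  # 1. revoke API credentials
    blocklist.add(agent_id)          # 2. block further calls at the gateway
    # 3. snapshot the agent's log entries before any rotation can remove them
    preserved = [dict(e) for e in log_store if e["agent_id"] == agent_id]
    # 4. order the preserved entries into a timeline
    timeline = sorted(preserved, key=lambda e: datetime.fromisoformat(e["timestamp"]))
    notify(f"Agent {agent_id} contained; {len(timeline)} log entries preserved")  # 5. notify
    return timeline
```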

The phased approach to API management implementation shows steady progress, with full implementation achieved by Week 36. Estimated data based on typical project timelines.

Measuring Success: Key Metrics for API Security and Governance

You can't manage what you don't measure. So define metrics that show whether your API management and governance strategy is actually working.

Visibility Metrics

- API Inventory Completeness: What percentage of your APIs do you have documented in your centralized inventory? Goal: 100%

- API Ownership: What percentage of your APIs have a documented owner? Goal: 100%

- Zombie API Resolution: How many zombie APIs are still in your infrastructure? Goal: Zero by Q4

- Access Policy Coverage: What percentage of APIs have explicit access control policies? Goal: 100%

Security Metrics

- Agent Deployment Approval Rate: What percentage of agents go through the approval process before deployment? Goal: 100%

- Permission Violations Detected: How many times per month do agents try to access APIs they don't have permission for? Lower is better (ideally close to zero, which suggests your governance is working well)

- Policy Violations Blocked: How many API calls are blocked due to policy violations each month? This shows your enforcement is working

- Anomalies Detected: How many behavioral anomalies are detected per month? Higher is better initially (shows detection is working), then should decline as you tighten controls

Operational Metrics

- Time to Deploy New Agent: How long does it take from concept to production? Track this over time. Good governance should add some delay compared to no governance, but the delay should be measured in days, not weeks

- Agent Lifecycle: What's the average lifespan of an agent? Agents running indefinitely suggest they might not be providing value anymore

- Access Review Completion: What percentage of required access reviews are completed on schedule? Goal: 100%

- Compliance Audit Time: How long does it take to complete a compliance audit? Automated systems should bring this down to hours, not weeks

Business Metrics

- Security Incidents Related to Agentic AI: How many security incidents have been caused by misconfigured agents, compromised agents, or unauthorized data access? Goal: Zero

- False Positive Rate: What percentage of security alerts turn out to be false positives? High false positive rates cause alert fatigue. Goal: <10%

- Agent Effectiveness: Are agents solving the problems they were designed to solve? Track success metrics for each agent

- Cost of Remediation: When incidents occur, what's the cost to fix them? Are these costs decreasing as your governance improves?

Set baselines for these metrics right now. Then track them over time. They'll show you whether your governance strategy is actually having an impact.
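Once the inventory and alerting are in place, most of these metrics are simple ratios over data you already have. This sketch computes three of them (API ownership, access policy coverage, and false positive rate). The input structures are hypothetical.

```python
def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place; 0.0 when there is nothing to measure."""
    return round(100.0 * part / whole, 1) if whole else 0.0


def governance_metrics(inventory, alerts):
    """inventory: dicts with 'owner' and 'has_policy'; alerts: dicts with 'false_positive'."""
    return {
        "api_ownership_pct": pct(sum(1 for a in inventory if a["owner"]), len(inventory)),
        "policy_coverage_pct": pct(sum(1 for a in inventory if a["has_policy"]), len(inventory)),
        "false_positive_rate_pct": pct(sum(1 for a in alerts if a["false_positive"]), len(alerts)),
    }
```

Run this on a schedule and store the results. The first run is your baseline, and later runs show the trend.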

Real-World Implementation: A Phased Approach

Implementing a mature API management strategy doesn't happen overnight. Here's a realistic phased approach:

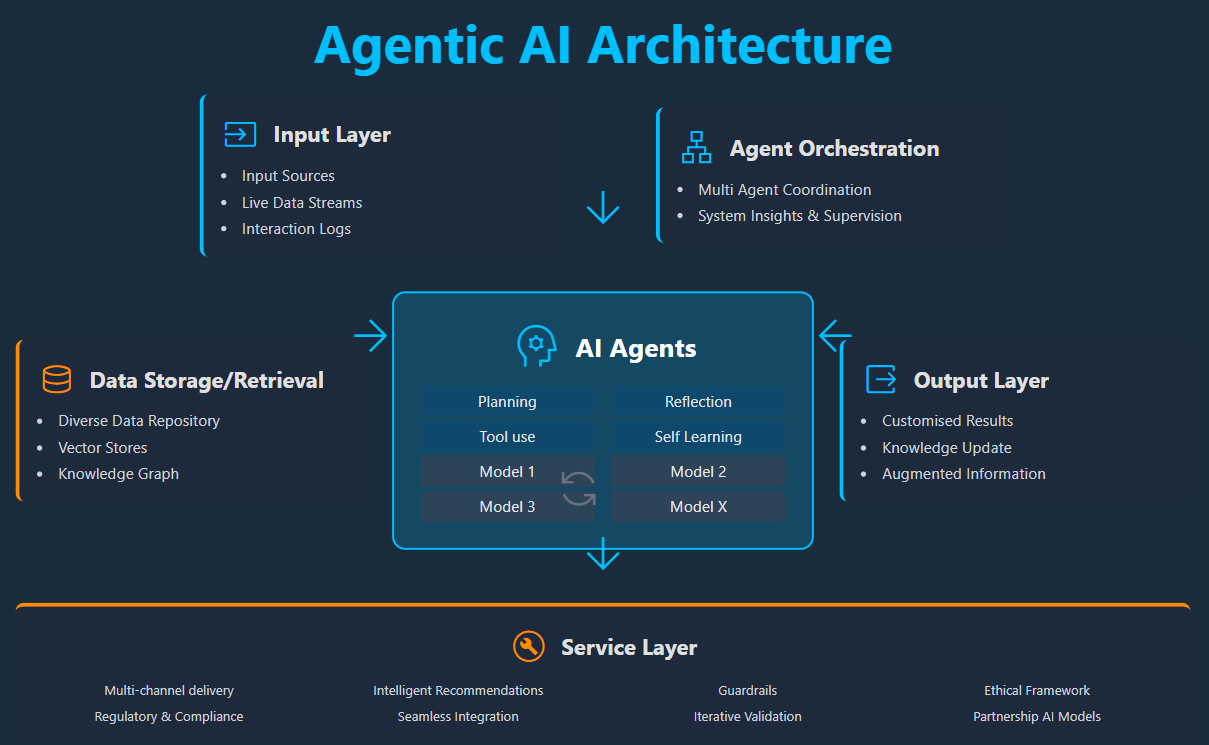

Phase 1: Visibility and Inventory (Weeks 1-8)

- Deploy API discovery tools

- Manually catalog APIs that discovery tools miss

- Document current state: what agents exist, what APIs they use

- Identify zombie APIs

- Create basic API inventory in a centralized system

Phase 2: Governance Foundation (Weeks 9-16)

- Create agent approval process

- Document required access for each agent type

- Set up basic access control policies

- Implement approval workflow for new agents

- Start collecting baseline metrics

Phase 3: Enforcement and Monitoring (Weeks 17-24)

- Deploy API gateway with access control enforcement

- Implement real-time monitoring and alerting

- Start running compliance audits

- Pause or restrict agents that are running without proper approval

- Decommission zombie APIs

Phase 4: Optimization and Automation (Weeks 25-36)

- Automate compliance reporting

- Implement behavioral anomaly detection

- Set up automatic credential rotation

- Implement self-service agent deployment (with safeguards)

- Optimize policies based on observed behavior

Phase 5: Continuous Improvement (Ongoing)

- Regular security reviews

- Annual audit cycle

- Continuous policy refinement

- Integration with incident response processes
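The "Identify zombie APIs" step in Phase 1 (and the decommissioning in Phase 3) can start from a simple reconciliation between your inventory and the endpoints that actually receive traffic. The sketch below assumes you can export the inventory with an owner and a deprecation flag, plus the set of endpoints observed in gateway logs over a recent window; those inputs and the `InventoryEntry` type are hypothetical. It flags cataloged endpoints that are deprecated or ownerless but still answering requests (zombie candidates), and endpoints that receive traffic but are missing from the inventory (shadow APIs).

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set, Tuple


@dataclass
class InventoryEntry:
    # Hypothetical inventory row exported from your API catalog.
    endpoint: str
    owner: Optional[str]
    deprecated: bool


def reconcile(
    inventory: Iterable[InventoryEntry], observed: Set[str]
) -> Tuple[List[str], List[str]]:
    """Compare the catalog with endpoints seen in gateway logs.

    Returns (zombie_candidates, shadow_apis):
    - zombie candidates: cataloged endpoints that are deprecated or have no
      owner, yet still receive traffic
    - shadow APIs: endpoints receiving traffic that the catalog doesn't list
    """
    entries = list(inventory)
    known = {e.endpoint for e in entries}
    zombies = sorted(
        e.endpoint
        for e in entries
        if e.endpoint in observed and (e.deprecated or e.owner is None)
    )
    shadow = sorted(observed - known)
    return zombies, shadow


if __name__ == "__main__":
    inventory = [
        InventoryEntry("/v1/orders", owner=None, deprecated=True),
        InventoryEntry("/v2/orders", owner="orders-team", deprecated=False),
        InventoryEntry("/v1/users", owner="identity-team", deprecated=True),  # no traffic
    ]
    observed = {"/v1/orders", "/v2/orders", "/internal/export"}
    zombies, shadow = reconcile(inventory, observed)
    print("Zombie candidates:", zombies)  # ['/v1/orders']
    print("Shadow APIs:", shadow)  # ['/internal/export']
```

Traffic is only a proxy: an endpoint with no observed calls may still be reachable, so deprecated endpoints without traffic (like `/v1/users` here) still deserve a reachability check before you assume they're gone.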

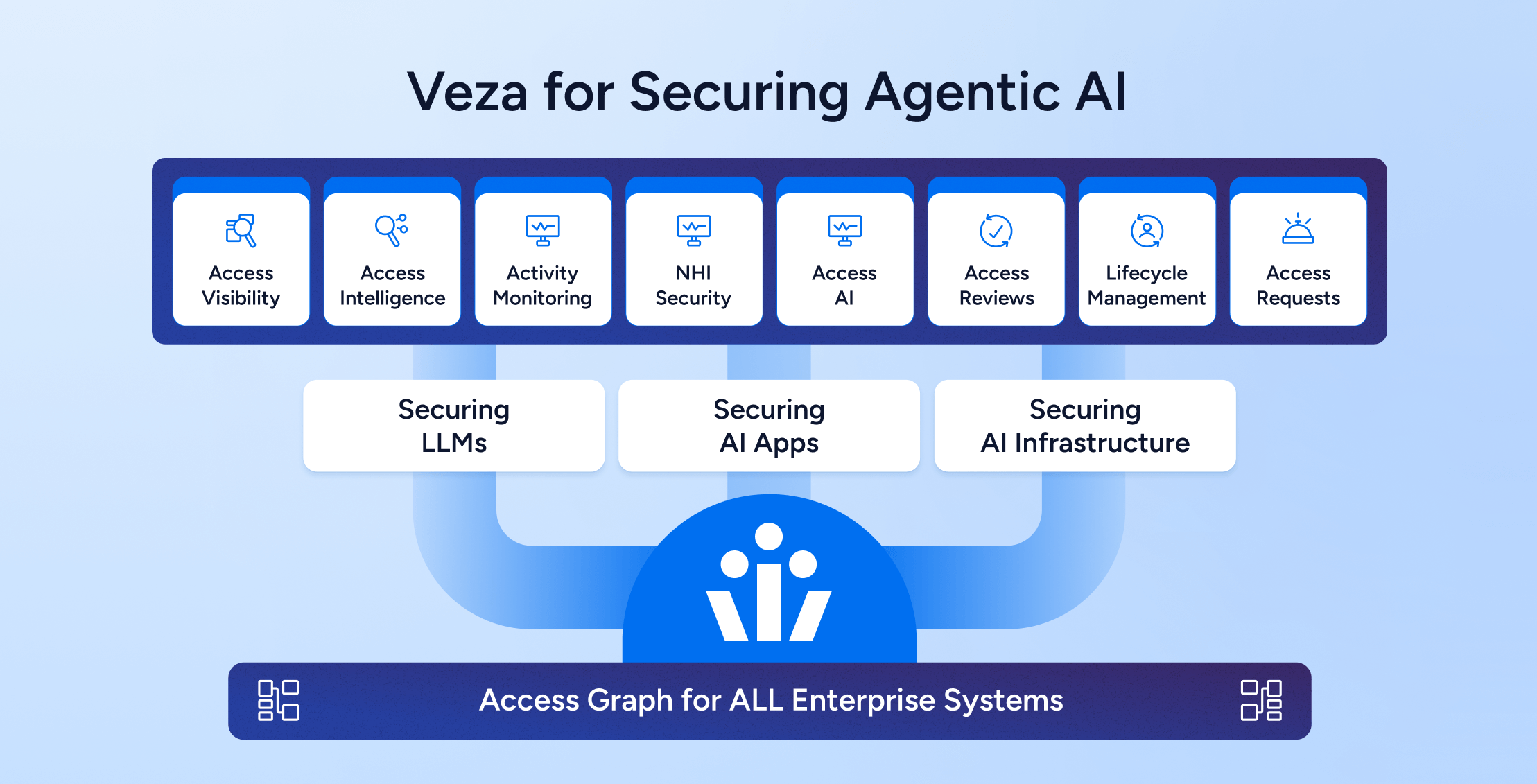

Integration with Enterprise Tools and Platforms

You don't need to build everything from scratch. Your existing tools likely have API management and governance capabilities.

API Management Platforms

If you're using API management platforms like Kong, AWS API Gateway, Azure API Management, or others, they already have features for:

- API discovery and cataloging

- Access control and authentication

- Rate limiting and throttling

- Request/response transformation

- Logging and monitoring

Leverage these capabilities. Configure them specifically for agentic AI use cases.
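Rate limiting is normally configured in the gateway itself rather than written by hand, and each platform has its own plugin or policy syntax. To show what a per-agent limit does conceptually, here is a minimal token-bucket sketch, assuming each request carries an agent identifier. The class names and parameters are illustrative, not any platform's API.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)  # start full
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class AgentRateLimiter:
    """One independent bucket per agent, so a runaway agent can't starve the others."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, agent_id: str) -> bool:
        bucket = self._buckets.get(agent_id)
        if bucket is None:
            # First request from this agent: give it a fresh, full bucket.
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self._buckets[agent_id] = bucket
        return bucket.allow()
```

A gateway would call `allow(agent_id)` on every request and respond with HTTP 429 (Too Many Requests) when it returns `False`. The injectable `clock` exists only to make the behavior easy to test.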

Identity and Access Management (IAM)

Your IAM system (such as Okta or Microsoft Entra ID, formerly Azure AD) can manage agent credentials and access control. Define agents as service principals or robot accounts, just as you do for application accounts.
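Treating an agent as a service principal means authorization decisions key off the agent's identity and the scopes granted to it. The sketch below shows a deny-by-default check, assuming a hypothetical policy table that maps each exact route to one required scope. In practice the scopes would typically arrive in an access token your IdP issues to the agent (for example through the OAuth 2.0 client credentials grant); the scope names and routes here are made up.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple

Route = Tuple[str, str]  # (HTTP method, exact path)


@dataclass(frozen=True)
class AgentPrincipal:
    agent_id: str
    owner: str  # accountable human or team
    scopes: FrozenSet[str]


def is_authorized(principal: AgentPrincipal, method: str, path: str,
                  policy: Dict[Route, str]) -> bool:
    """Deny by default: unknown routes and missing scopes are both rejected."""
    required_scope = policy.get((method.upper(), path))
    if required_scope is None:
        return False
    return required_scope in principal.scopes


# Hypothetical policy table: route -> scope the caller must hold.
POLICY: Dict[Route, str] = {
    ("GET", "/tickets"): "tickets:read",
    ("POST", "/tickets"): "tickets:write",
    ("GET", "/customers/export"): "customers:export",
}

if __name__ == "__main__":
    agent = AgentPrincipal("support-triage", "cx-platform-team",
                           frozenset({"tickets:read", "tickets:write"}))
    print(is_authorized(agent, "GET", "/tickets", POLICY))           # True
    print(is_authorized(agent, "GET", "/customers/export", POLICY))  # False: scope not granted
    print(is_authorized(agent, "DELETE", "/tickets", POLICY))        # False: route not in policy
```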

Service Mesh Technologies

If you're running microservices, you probably have a service mesh (Istio, Linkerd, etc.). Service meshes can provide:

- Mutual TLS authentication between services

- Access control policies

- Traffic monitoring

- Circuit breaking and connection limits (automatically shedding traffic when a caller, such as a runaway agent, overloads a service)

Configure your service mesh to understand agent identities and enforce appropriate policies.

Security Information and Event Management (SIEM)

Your SIEM (like Splunk, ELK, or cloud-native solutions) should be aggregating logs from all your API access points. Configure it with rules to detect suspicious patterns:

- Agents accessing APIs they don't normally access

- Unusual data volumes

- Repeated permission denials (could indicate a compromise)

- API calls at unusual times
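SIEM platforms express rules in their own query languages (Splunk's SPL, for instance), so the following is only a language-neutral illustration in Python of the four patterns listed, run over a batch of gateway events. The `ApiEvent` fields, the thresholds, and the business-hours window (07:00 to 19:59) are assumptions you would tune for your environment.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, Iterable, List, Set, Tuple

Alert = Tuple[str, str, object]  # (agent_id, rule_name, detail)


@dataclass
class ApiEvent:
    agent_id: str
    api: str
    timestamp: datetime
    status: int     # HTTP status returned by the gateway
    bytes_out: int  # response size sent to the agent


def detect(events: Iterable[ApiEvent],
           usual_apis: Dict[str, Set[str]],
           max_bytes: int,
           denial_threshold: int = 5,
           business_hours: range = range(7, 20)) -> List[Alert]:
    unusual: Dict[str, Set[str]] = defaultdict(set)
    denials: Counter = Counter()
    off_hours: Counter = Counter()
    volume: Counter = Counter()

    for e in events:
        # Rule 1: agent calls an API outside its usual set.
        if e.api not in usual_apis.get(e.agent_id, set()):
            unusual[e.agent_id].add(e.api)
        # Rule 2: accumulate data volume per agent in this batch.
        volume[e.agent_id] += e.bytes_out
        # Rule 3: permission denials (401/403) may indicate probing or compromise.
        if e.status in (401, 403):
            denials[e.agent_id] += 1
        # Rule 4: calls outside the configured business hours.
        if e.timestamp.hour not in business_hours:
            off_hours[e.agent_id] += 1

    alerts: List[Alert] = []
    for agent, apis in unusual.items():
        alerts.append((agent, "unusual_api", sorted(apis)))
    for agent, total in volume.items():
        if total > max_bytes:
            alerts.append((agent, "high_volume", total))
    for agent, n in denials.items():
        if n >= denial_threshold:
            alerts.append((agent, "repeated_denials", n))
    for agent, n in off_hours.items():
        alerts.append((agent, "off_hours", n))
    return alerts
```

Each rule emits at most one alert per agent per batch, which keeps a noisy agent from flooding the queue.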

Secrets Management

Agent credentials (API keys, tokens, certificates) should be stored in a secrets management system such as HashiCorp Vault, AWS Secrets Manager, or Azure Key Vault. Never hardcode credentials in agent definitions.

Configuration Management

Agent configurations, access policies, and governance rules should be stored in version control. This provides:

- Audit trail of changes

- Easy rollback if something breaks

- Peer review before deployment

- Disaster recovery

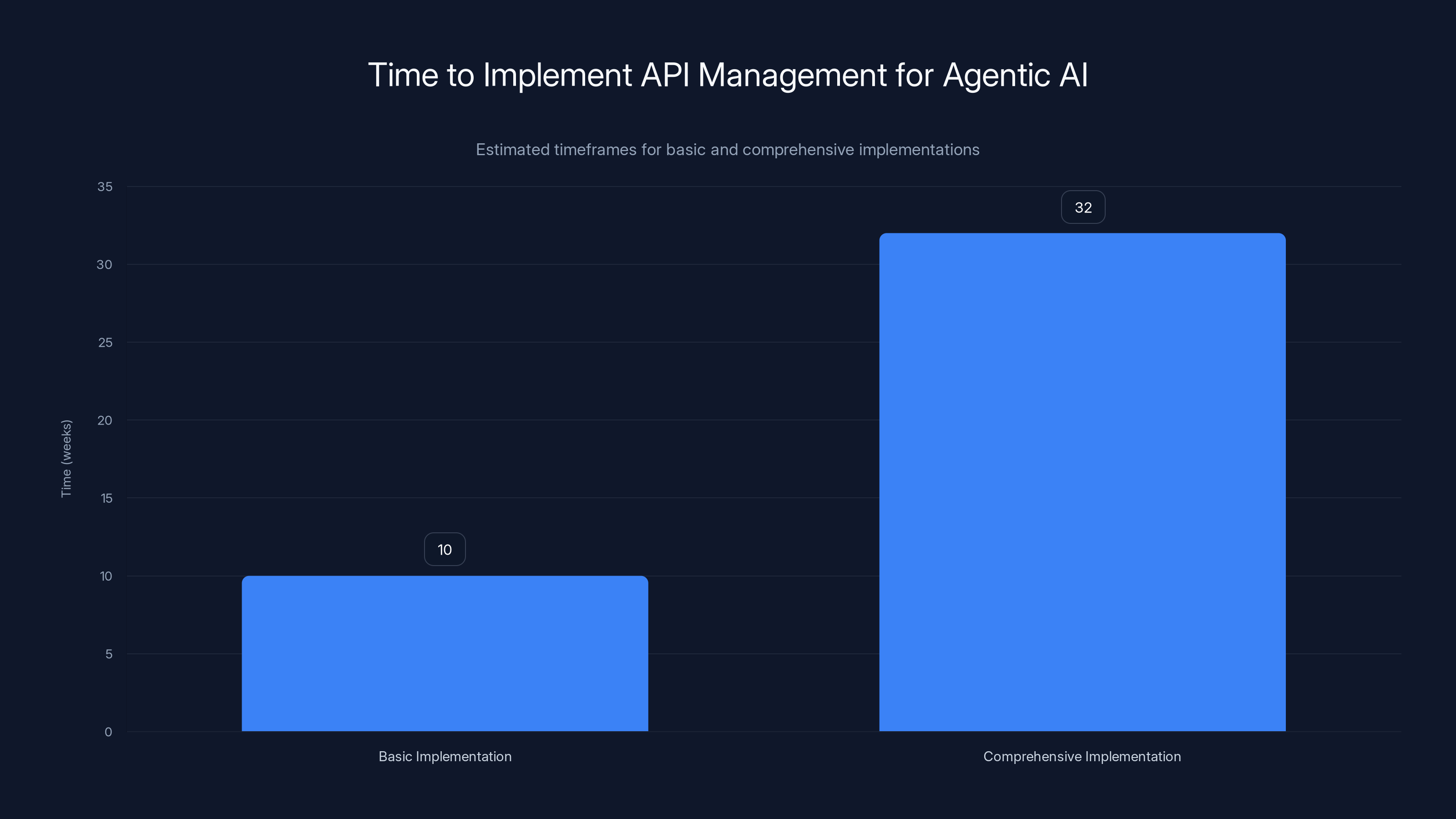

Implementing basic API management for agentic AI takes approximately 8-12 weeks, while a comprehensive setup requires 6-9 months. Estimated data.

Common Implementation Challenges and Solutions

When organizations start implementing API management and governance for agentic AI, they hit some predictable challenges.

Challenge: Discovery is Incomplete

Your discovery tools find 80% of APIs, but there are still shadow APIs that haven't been documented.

Solution: Combine automated discovery with developer surveys. Ask your engineering teams: "What APIs do you maintain that aren't in the central inventory?" Then have them add those APIs to the system. Make API inventory a responsibility that's tied to performance reviews or project completions.

Challenge: Governance Slows Down Deployment

Engineering teams complain that the approval process for new agents is adding too much delay.

Solution: Don't eliminate governance, streamline it. Pre-approve common agent types and their required APIs. For agents that fit predefined patterns, approval can be automatic. Only require detailed review for unusual agents. Target approval time of 2-3 business days for standard agents.
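Pre-approval can be as simple as a template per agent type listing the API operations that type may use. This sketch auto-approves any request that stays within its template and otherwise returns only the extra operations that need human justification. The template names and operations are hypothetical examples.

```python
from typing import Dict, FrozenSet, Iterable, Set, Tuple

# Hypothetical pre-approved agent types and the operations each may use.
PREAPPROVED_TEMPLATES: Dict[str, FrozenSet[str]] = {
    "customer-service": frozenset({
        "GET /tickets", "POST /tickets/{id}/reply", "GET /customers/{id}",
    }),
    "reporting": frozenset({"GET /reports", "GET /metrics"}),
}


def triage_request(agent_type: str, requested: Iterable[str]) -> Tuple[str, Set[str]]:
    """Auto-approve requests that fit a template; route everything else to review.

    Returns (decision, operations_needing_justification).
    """
    requested_set = set(requested)
    template = PREAPPROVED_TEMPLATES.get(agent_type)
    if template is None:
        # Unknown agent type: the whole request needs a detailed review.
        return "manual_review", requested_set
    extra = requested_set - template
    if not extra:
        return "auto_approved", set()
    # Only the operations outside the template need to be justified.
    return "manual_review", extra


if __name__ == "__main__":
    print(triage_request("reporting", ["GET /reports"]))
    # ('auto_approved', set())
    print(triage_request("customer-service", ["GET /tickets", "DELETE /customers/{id}"]))
    # ('manual_review', {'DELETE /customers/{id}'})
```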

Challenge: Access Policies Are Hard to Define

For a new agent, engineering teams don't know exactly what APIs it'll need, so they request broad access.

Solution: Take a gradual approach. Deploy the agent with narrow access and a mechanism for requesting more. If the agent needs additional APIs, it can request them. These requests can go through a faster approval process because you have real data showing that the agent actually needs the access. After a few weeks, the agent's access matches what it actually needs.

Challenge: Old Agents Keep Running Indefinitely

You've got agents that were deployed two years ago. Nobody's quite sure what they do or whether they're still needed.

Solution: Implement automatic credential expiration. Agent credentials expire every 90 days unless explicitly renewed. This forces a periodic review. If nobody cares enough to renew the agent's credentials, it's probably not providing value anyway.
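Enforcement usually lives in your IdP or secrets manager (short-lived tokens, lease TTLs), but the review logic is easy to see in isolation. Here is a minimal sketch, assuming you can list each agent credential with the time it was issued or last renewed; the `AgentCredential` type and the 14-day warning window are illustrative choices, not part of any product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable, List, Tuple

CREDENTIAL_TTL = timedelta(days=90)  # 90-day expiry policy


@dataclass
class AgentCredential:
    agent_id: str
    renewed_at: datetime  # time of issue or of the most recent explicit renewal


def expires_at(cred: AgentCredential) -> datetime:
    return cred.renewed_at + CREDENTIAL_TTL


def review_credentials(creds: Iterable[AgentCredential], now: datetime,
                       warn_window: timedelta = timedelta(days=14)) -> Tuple[List[str], List[str]]:
    """Split agents into (expired, expiring_soon).

    Expired credentials should be disabled; owners of expiring ones get a
    reminder to renew, which is what forces the periodic review.
    """
    expired: List[str] = []
    expiring_soon: List[str] = []
    for cred in creds:
        remaining = expires_at(cred) - now
        if remaining <= timedelta(0):
            expired.append(cred.agent_id)
        elif remaining <= warn_window:
            expiring_soon.append(cred.agent_id)
    return expired, expiring_soon


if __name__ == "__main__":
    now = datetime(2025, 6, 1, tzinfo=timezone.utc)
    creds = [
        AgentCredential("legacy-sync", datetime(2025, 1, 1, tzinfo=timezone.utc)),
        AgentCredential("report-gen", datetime(2025, 3, 10, tzinfo=timezone.utc)),
        AgentCredential("support-triage", datetime(2025, 5, 20, tzinfo=timezone.utc)),
    ]
    print(review_credentials(creds, now))  # (['legacy-sync'], ['report-gen'])
```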

Challenge: Compliance Audits Are Expensive

Your auditors want to see evidence of governance, but gathering that evidence manually takes weeks.

Solution: Automate the compliance reporting. When an auditor asks "Were all agents deployed according to procedure?", your system should generate an automated report showing every agent, its approval status, when it was deployed, and by whom. This takes hours instead of weeks.
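If every deployment writes a record with its approval reference, the auditor's question becomes a query. Here is a minimal sketch, assuming your pipeline stores something like the hypothetical `DeploymentRecord` below (the change-ticket IDs are made up), that renders the report as CSV with Python's standard `csv` module.

```python
import csv
import io
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass
class DeploymentRecord:
    # Hypothetical record written by the deployment pipeline.
    agent_id: str
    approval_ticket: Optional[str]  # None if deployed without approval
    deployed_on: date
    deployed_by: str


def compliance_report(records: Iterable[DeploymentRecord]) -> str:
    """Render every agent with its approval status, deploy date, and deployer as CSV."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["agent_id", "approved", "approval_ticket", "deployed_on", "deployed_by"])
    for r in sorted(records, key=lambda rec: rec.agent_id):
        writer.writerow([
            r.agent_id,
            "yes" if r.approval_ticket else "NO",  # capitals make gaps easy to spot
            r.approval_ticket or "",
            r.deployed_on.isoformat(),
            r.deployed_by,
        ])
    return buf.getvalue()


if __name__ == "__main__":
    print(compliance_report([
        DeploymentRecord("support-triage", "CHG-1042", date(2025, 3, 1), "alice"),
        DeploymentRecord("legacy-sync", None, date(2024, 2, 14), "bob"),
    ]))
```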

Challenge: False Positives from Anomaly Detection

Your anomaly detection system is flagging agents when they're behaving normally (calling new APIs because requirements changed, for example).

Solution: Fine-tune your baseline. Collect behavior data for a few weeks, then use that to establish what "normal" looks like. Don't flag agents for calling new APIs if their permissions were recently updated. Work with the agents' owners to understand their expected behavior and adjust alerting rules accordingly.
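One common way to establish what "normal" looks like is a statistical baseline per agent, plus a grace period after permission changes. The sketch below assumes you already aggregate daily call counts per agent and record when an agent's permissions last changed; the mean-plus-three-standard-deviations threshold and the seven-day grace window are illustrative defaults to tune, not recommendations from any standard.

```python
import statistics
from datetime import datetime, timedelta
from typing import Optional, Sequence, Set


def volume_threshold(daily_calls: Sequence[int], k: float = 3.0) -> float:
    """Alert threshold: mean + k population standard deviations of the baseline."""
    return statistics.fmean(daily_calls) + k * statistics.pstdev(daily_calls)


def is_volume_anomalous(today: int, daily_calls: Sequence[int], k: float = 3.0) -> bool:
    return today > volume_threshold(daily_calls, k)


def should_alert_new_api(api: str, known_apis: Set[str],
                         permissions_changed_at: Optional[datetime], now: datetime,
                         grace: timedelta = timedelta(days=7)) -> bool:
    """Flag calls to unfamiliar APIs, except shortly after a permission update."""
    if api in known_apis:
        return False
    if permissions_changed_at is not None and now - permissions_changed_at <= grace:
        # Expected: the owner just widened access, so new calls aren't suspicious yet.
        return False
    return True


if __name__ == "__main__":
    baseline = [100, 110, 90, 105, 95, 100, 100]  # a week of daily call counts
    print(round(volume_threshold(baseline), 1))  # 117.9
    print(is_volume_anomalous(150, baseline))    # True
```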

The Future: AI-Assisted Governance

As organizations get more sophisticated with agentic AI, they're starting to use AI itself to assist with governance.

Autonomous Governance Agents

Some forward-thinking organizations are deploying agents that monitor other agents:

- Monitor agent behavior and detect anomalies

- Review proposed agents and identify security risks

- Suggest API access restrictions based on agent purpose

- Automatically generate compliance reports

- Recommend remediation when issues are detected

This is meta, but it can work: an AI system analyzing another AI system's behavior can surface patterns across large volumes of activity that humans might miss.

Predictive Risk Assessment

Instead of just detecting security incidents after the fact, predictive systems can assess risk before deployment:

- "This agent is requesting access to 47 different APIs. Based on its stated purpose (customer service), it probably needs access to 5. Recommend restricting to those 5 and having the team justify any others."

- "This agent is designed to process customer data. Based on regulatory requirements, you'll need to implement data residency controls and encryption."

- "This agent is similar to three others already in your system. You might be able to consolidate and reduce complexity."
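A suggestion like the consolidation example could come from something as simple as comparing the API footprints of existing agents. Here is a minimal sketch using Jaccard similarity (APIs shared by both agents divided by all APIs either agent uses); the agent names, operations, and 0.7 threshold are hypothetical, and a real predictive system would likely weigh many more signals.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap between two API sets: size of intersection / size of union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def consolidation_candidates(agent_apis: Dict[str, Set[str]],
                             min_similarity: float = 0.7) -> List[Tuple[str, str, float]]:
    """Return agent pairs whose API footprints overlap at or above the threshold."""
    pairs = []
    for (name_a, apis_a), (name_b, apis_b) in combinations(sorted(agent_apis.items()), 2):
        score = jaccard(apis_a, apis_b)
        if score >= min_similarity:
            pairs.append((name_a, name_b, round(score, 2)))
    return pairs


if __name__ == "__main__":
    agents = {
        "faq-bot": {"GET /kb", "GET /tickets", "POST /tickets"},
        "triage-bot": {"GET /kb", "GET /tickets", "POST /tickets", "GET /customers"},
        "billing-bot": {"GET /invoices"},
    }
    print(consolidation_candidates(agents))  # [('faq-bot', 'triage-bot', 0.75)]
```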

Self-Healing Infrastructure

The most advanced organizations are building systems that detect and respond to security issues autonomously:

- Agent's behavior becomes anomalous? Automatically pause it and escalate to human review

- API is being called beyond rate limits? Automatically throttle or block

- New agent discovered that isn't in the inventory? Automatically quarantine it until it's registered

This doesn't eliminate human oversight. It just ensures that the human team can focus on complex decisions while the system handles routine security enforcement.

Building Your Implementation Roadmap

Don't try to implement everything at once. Build a realistic roadmap based on your current state and organizational capabilities.

Assess Your Current State

Before you start, honestly evaluate:

- How many APIs do you currently have? Can you enumerate them? (Many organizations can't)

- What visibility do you have into agent deployments? (Most organizations have very limited visibility)

- Do you have API access control policies in place? (Most don't, beyond basic authentication)

- What governance processes do you have? (Probably none, if agentic AI is new)

- What's your incident response capability? (Can your team detect and respond to a security incident in real-time?)

Define Your Goals

Based on your assessment, define specific, measurable goals:

- 90 days: 100% of APIs discovered and cataloged

- 180 days: Access control policies implemented for 80% of APIs

- 270 days: All new agents deployed through an approval process

- 365 days: Compliance audit automated and auditors receiving monthly reports

Build Your Business Case

Implementing governance costs time and money. Build a business case showing:

- Risk reduction: "If we don't implement governance, we face X% probability of a breach costing Y dollars"

- Operational efficiency: "Automated compliance reporting will save our compliance team 40 hours per quarter"

- Competitive advantage: "Customers increasingly want to see governance. This lets us market to larger enterprises"

- Regulatory readiness: "Coming regulations will require this. Better to build it now than scramble later"

Identify Quick Wins

Find low-hanging fruit that can be implemented quickly:

- API discovery tool deployment (visible impact in weeks)

- Zombie API decommissioning (shows commitment to cleanup)

- Basic access control for high-risk APIs (protects your most sensitive data immediately)

Quick wins build momentum and budget for more complex initiatives.

Secure Executive Sponsorship

This kind of governance initiative needs executive support. Get someone on the executive team to champion it. They'll help:

- Allocate budget

- Hold engineering teams accountable for governance

- Cut through internal politics

- Ensure the initiative doesn't get deprioritized

Without executive sponsorship, governance initiatives die as soon as there's any other competing priority.

When Things Go Wrong: Incident Response and Recovery

Despite your best efforts, incidents will happen. An agent will be compromised. Sensitive data will be exposed. When it happens, you need to respond quickly and effectively.

Incident Detection

Your monitoring and anomaly detection systems should flag potential incidents:

- Agent exhibiting unusual behavior

- Unusual volume of API calls

- Calls to APIs the agent shouldn't have access to

- Data exfiltration patterns

- Multiple failed authentication attempts

When these signals appear, your systems should alert immediately. Don't wait for humans to notice.

Immediate Response

When an incident is suspected:

- Revoke the agent's credentials immediately

- Block all API calls from that agent

- Preserve all logs related to the agent

- Notify relevant stakeholders (security team, business owner, compliance)

- Stop any processes running under that agent

This should all happen automatically if possible. You don't want to wait for a human to review an alert and then manually take actions.
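Wiring these steps together is mostly orchestration. The sketch below runs them in the order listed, with each action injected as a callable so you can plug in whatever your IdP, gateway, log store, paging tool, and runtime actually expose; the `ContainmentActions` fields are hypothetical hooks, not a real product's API. Each step is attempted even if an earlier one fails, so an IdP outage during revocation, for example, does not prevent the gateway block.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ContainmentActions:
    # Hypothetical hooks into your own systems.
    revoke_credentials: Callable[[str], None]
    block_at_gateway: Callable[[str], None]
    preserve_logs: Callable[[str], None]
    notify: Callable[[str, str], None]
    stop_processes: Callable[[str], None]


def contain_agent(agent_id: str, reason: str,
                  actions: ContainmentActions) -> List[Tuple[str, str]]:
    """Run every containment step in order and return an outcome per step."""
    steps: List[Tuple[str, Callable[[], None]]] = [
        ("revoke_credentials", lambda: actions.revoke_credentials(agent_id)),
        ("block_at_gateway", lambda: actions.block_at_gateway(agent_id)),
        ("preserve_logs", lambda: actions.preserve_logs(agent_id)),
        ("notify", lambda: actions.notify(agent_id, reason)),
        ("stop_processes", lambda: actions.stop_processes(agent_id)),
    ]
    outcome: List[Tuple[str, str]] = []
    for name, step in steps:
        try:
            step()
            outcome.append((name, "ok"))
        except Exception as exc:  # keep going: partial containment beats none
            outcome.append((name, f"failed: {exc}"))
    return outcome


if __name__ == "__main__":
    def log_action(label: str) -> Callable[..., None]:
        return lambda *args: print(label, *args)

    acts = ContainmentActions(
        revoke_credentials=log_action("revoke"),
        block_at_gateway=log_action("block"),
        preserve_logs=log_action("preserve logs"),
        notify=log_action("notify"),
        stop_processes=log_action("stop"),
    )
    print(contain_agent("report-gen", "anomalous bulk export", acts))
```

The returned per-step outcomes double as the first entries in the incident record.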

Investigation

Once the immediate threat is contained, investigate:

- What was the agent doing?

- What data did it access?

- How did the compromise happen?

- What APIs did it call?

- Are there indicators of compromise in other systems?

Your comprehensive logging and audit trail should make this investigation much faster than it would be without governance.

Recovery and Remediation

- Determine if data was actually exfiltrated or modified

- If yes, notify affected parties (customers, regulators, etc.) as required

- Fix the underlying issue (misconfigured permissions, compromised credentials, etc.)

- Deploy an improved version of the agent with tighter controls

- Review similar agents for the same vulnerability

Post-Incident Review

After the incident is resolved:

- Conduct a root cause analysis

- Identify how governance could have prevented or detected it earlier

- Update policies and controls based on lessons learned

- Share findings across the organization so others learn from this incident

Conclusion: Making Agentic AI Secure and Scalable

Agentic AI represents an enormous opportunity for organizations that can deploy it safely. The productivity gains are real. The efficiency improvements are substantial. But the security risks are equally real.

You can't secure what you can't see. That's the fundamental principle that should drive your strategy.

Implementing a mature API management and governance strategy doesn't require revolutionary technology. It requires:

- Visibility: Know what APIs exist and what agents are using them

- Control: Enforce access policies so agents can only call APIs they're authorized for

- Governance: Create processes to manage agent lifecycles and ensure proper oversight

- Monitoring: Continuously monitor agent behavior and detect anomalies

- Response: Have processes in place to respond rapidly when incidents occur

Organizations that implement these capabilities properly are seeing dramatic improvements in security posture while still being able to scale their agentic AI deployments. Organizations that skip governance are playing security roulette.

The question isn't whether to implement API management and governance for agentic AI. The question is how quickly you can implement it before an incident forces your hand. Start with visibility. Build the foundation. Then scale carefully.

Your AI agents can be enormously powerful. But power without governance is danger. Make the investment in governance now, and you'll be able to scale AI safely. Wait until after an incident, and you'll be managing the fallout instead of the opportunity.

FAQ

What exactly is a "zombie API" and why should I care?

A zombie API is an API endpoint that should have been decommissioned but is still available and responding to requests. These typically aren't actively maintained, so they accumulate vulnerabilities over time. Agentic AI agents can discover and exploit these endpoints, potentially accessing outdated but still sensitive data. Zombie APIs are a top security risk because attackers specifically target abandoned infrastructure where detection is unlikely.

How is agentic AI different from regular applications when it comes to API security?

Regular applications have fixed, predefined API calls. Developers know exactly which APIs their code will call, which is easy to secure. Agentic AI agents dynamically discover and call APIs based on real-time reasoning. They might call different APIs depending on the task, making it much harder to predict what access they'll need. This dynamic nature means traditional API security approaches designed for static integrations fall short for agents.

Can I just restrict agent permissions very tightly and call that security?

Tight permissions are necessary but not sufficient. You also need visibility into what the agent is doing (which APIs is it calling, and what data is it accessing?), governance processes (was this agent properly approved?), and monitoring (is the agent behaving normally?). An agent with perfectly restricted permissions that nobody has visibility into is still a security risk, because you can't detect whether it has been compromised or manipulated.

How long does it take to implement API management and governance for agentic AI?

A basic implementation with API discovery and access control can be in place in 8-12 weeks. A comprehensive implementation with all monitoring, automation, and governance processes typically takes 6-9 months. This is a significant investment, but it's far less costly than dealing with a major security breach caused by misconfigured agents.

What's the biggest mistake organizations make when deploying AI agents?

The biggest mistake is deploying agents without prior planning around API access. Teams often say "we'll figure out governance later" and end up granting agents broad permissions just to get them working. Once agents are in production with broad access, fixing the governance problem becomes much harder. Plan your access control requirements before deployment, not after.

Do I need new tools to implement API management for agentic AI, or can I use existing tools?

You can absolutely use existing tools. API management platforms, IAM systems, service meshes, and SIEM solutions all have capabilities that apply to agent governance. The key is configuring them specifically for agent security. You might need one or two specialized tools for agent discovery or behavior monitoring, but most of the foundation already exists in your infrastructure.

How do I know if my governance strategy is actually working?

Track metrics like the percentage of APIs that have access control policies, the number of agents running without proper approval, the number of permission violations detected per month, and the number of security incidents related to agents. If your governance is working, these metrics should improve over time. Permission violations should be close to zero (indicating the system is working), and security incidents should be prevented or detected quickly.

What should I do about shadow AI (unauthorized agents running in my infrastructure)?

Start by discovering it. Use API discovery tools to find agents you don't have in your inventory. Once you've discovered shadow AI, bring it under governance. Don't punish teams for running shadow AI; instead, make it easier and faster for them to get official approval for agents they need. Many teams run shadow AI because the official process is too slow or cumbersome. Fix the process, and shadow AI decreases.

If an agent is compromised, how quickly can we detect and stop it?

With proper monitoring and anomaly detection, you can detect compromise within minutes. With automated enforcement, you can revoke credentials and block API calls within seconds. Without these safeguards, detection might take days or weeks. This is why real-time monitoring and automated response are essential.

What regulations apply to agentic AI and API management?

Multiple regulations and frameworks apply. GDPR applies if your agents access personal data. SOC 2 audits require demonstrating access control and audit capabilities. Sector-specific requirements apply in healthcare (HIPAA) and payment card processing (PCI DSS), among others. Additionally, AI-specific regulations are coming into effect (the EU AI Act, various state-level regulations). A solid API management and governance foundation addresses many of these requirements.


Key Takeaways

- Agentic AI agents dynamically discover and call APIs at runtime, creating security blind spots that traditional API management can't address

- Shadow AI deployments and zombie APIs are common, with 71% of employees using unapproved AI tools and 40% of APIs lacking documented owners

- A comprehensive governance strategy includes API inventory visibility, role-based access control, agent lifecycle management, and real-time behavioral monitoring

- Centralized data hubs mediate all agent access to prevent agents from discovering or accessing sensitive systems directly

- Organizations implementing mature API governance see 60-70% reductions in API-related security incidents within the first year

- Phased implementation starting with visibility takes 6-9 months but prevents incidents that could cost millions in breach remediation
